SOCIAL

Twitter Unveils New ‘Manipulated Media’ Policy to Limit the Impact of Deepfakes

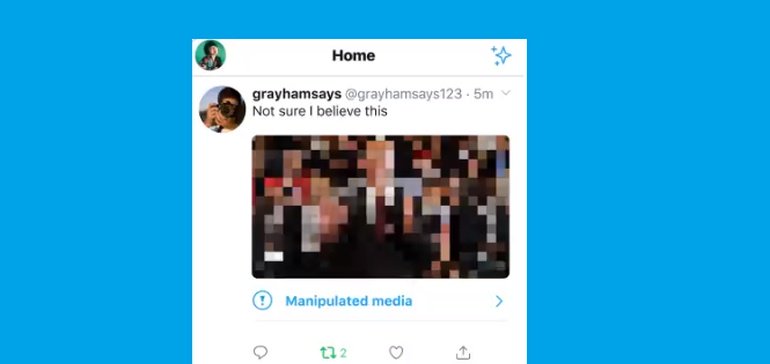

After working on its manipulated media policy over the last few months, Twitter has this week unveiled its official rule against the posting of deceptive manipulated content, while it’s also launching a new tag for detected edited material.

As per Twitter, its new, official rule on manipulated media usage is:

“You may not deceptively share synthetic or manipulated media that are likely to cause harm. In addition, we may label Tweets containing synthetic and manipulated media to help people understand their authenticity and to provide context.”

Twitter showcased its new label in a separate announcement tweet:

We know that some Tweets include manipulated photos or videos that can cause people harm. Today we’re introducing a new rule and a label that will address this and give people more context around these Tweets pic.twitter.com/P1ThCsirZ4

— Twitter Safety (@TwitterSafety) February 4, 2020

With deepfakes potentially on the rise as a tool for misinformation, Twitter’s approach seems like a good one, allowing for satirical or light-hearted uses of the evolving form, while implementing clear warnings on potentially damaging content.

Indeed, deepfakes have become a major focus for online platforms in recent times, with both Google and Facebook also launching new research initiatives to help them detect and act on such content. From a consumer perspective, deepfakes haven’t yet had a major impact (that we know of), but the increased activity from the major online players suggests that a new wave is coming. As the technology becomes easier to use and more accessible, it will inevitably be used as a tool for manipulating public opinion wherever possible.

And it’s easy to imagine it becoming a bigger issue. These days, people tend to believe what they choose to online: if a news story doesn’t align with your world view, you can dismiss it and find another source that does. That approach has been emboldened by world leaders who’ve increasingly dismissed press reports as ‘fake news’ in public. In this new media climate, you can bet that manipulated videos depicting what people want to believe will spread quickly, so it’s important for Twitter, and other platforms, to take proactive steps.

Hopefully, these new labels will at least slow any such momentum and prompt people to reconsider. It will likely be impossible to stop deepfakes from having any impact, but labeling them promptly could act as a significant deterrent.

SOCIAL

Snapchat Explores New Messaging Retention Feature: A Game-Changer or Risky Move?

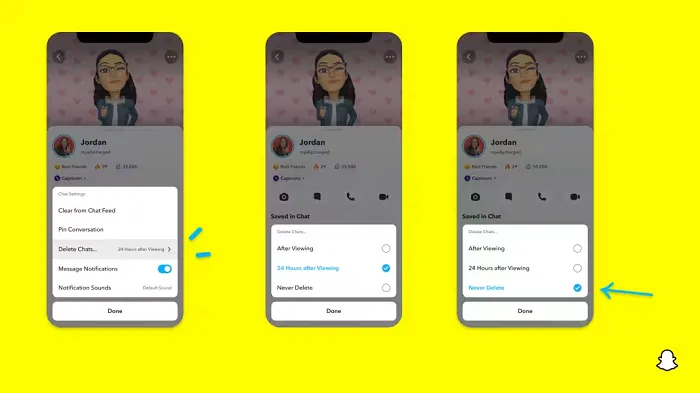

In a recent announcement, Snapchat revealed that it is testing an update that challenges its traditional design ethos: an option that lets users override the 24-hour auto-delete rule, a feature synonymous with Snapchat’s ephemeral messaging model.

The proposed change aims to introduce a “Never delete” option in messaging retention settings, aligning Snapchat more closely with conventional messaging apps. While this move may blur Snapchat’s distinctive selling point, Snap appears convinced of its necessity.

According to Snap, the decision stems from user feedback and a commitment to innovation based on user needs. The company aims to provide greater flexibility and control over conversations, catering to the preferences of its community.

Currently undergoing trials in select markets, the new feature empowers users to adjust retention settings on a conversation-by-conversation basis. Flexibility remains paramount, with participants able to modify settings within chats and receive in-chat notifications to ensure transparency.

Snapchat underscores that the default auto-delete feature will persist, reinforcing its design philosophy centered on ephemerality. However, with the app gaining traction as a primary messaging platform, the option offers users a means to preserve longer chat histories.

The update marks a pivotal moment for Snapchat, which is renowned for its disappearing-message premise, especially popular among younger demographics. That focus has been central to Snapchat’s identity, but the shift suggests a broader strategy aimed at diversifying its user base.

This strategy may appeal particularly to older demographics, potentially extending Snapchat’s relevance as users age. By emulating features of conventional messaging platforms, Snapchat seeks to enhance its appeal and broaden its reach.

Yet, the introduction of message retention poses questions about Snapchat’s uniqueness. While addressing user demands, the risk of diluting Snapchat’s distinctiveness looms large.

As Snapchat ventures into uncharted territory, the outcome of this experiment remains uncertain. Will message retention propel Snapchat to new heights, or will it compromise the platform’s uniqueness?

Only time will tell.

SOCIAL

Catering to a specific audience boosts your business, says accountant turned coach

While it is tempting to try to appeal to a broad audience, Sandra Parker, founder of the alcohol-free coaching service Just the Tonic, believes the best thing you can do for your business is focus on your niche. Here’s how she did just that.

When you run a business, it can be tempting to reach out to as many clients as possible. But that also risks making your marketing “too generic,” warns Sandra Parker, the founder of Just The Tonic Coaching.

“From the very start of my business, I knew exactly who I could help and who I couldn’t,” Parker told My Biggest Lessons.

Parker struggled with alcohol dependence as a young professional. Today, her business targets high-achieving individuals who face challenges similar to those she had early in her career.

“I understand their frustrations, I understand their fears, and I understand their coping mechanisms and the stories they’re telling themselves,” Parker said. “Because of that, I’m able to market very effectively, to speak in a language that they understand, and am able to reach them.”

“I believe that it’s really important that you know exactly who your customer or your client is, and you target them, and you resist the temptation to make your marketing too generic to try and reach everyone,” she explained.

“If you speak specifically to your target clients, you will reach them, and I believe that’s the way that you’re going to be more successful.”

Watch the video for more of Sandra Parker’s biggest lessons.

SOCIAL

Instagram Tests Live-Stream Games to Enhance Engagement

Instagram’s testing out some new options to help spice up your live-streams in the app, with some live broadcasters now able to select a game that they can play with viewers in-stream.

In example screens posted by Ahmed Ghanem, some creators now have the option to play either “This or That”, a question-and-answer prompt that they can share with their viewers, or “Trivia”, to generate more engagement within their IG live-streams.

That could be a simple way to spark more conversation and interaction, which could then lead into further engagement opportunities from your live audience.

Meta’s been exploring more ways to make live-streaming a bigger consideration for IG creators, with a view to live-streams potentially catching on with more users.

That includes the gradual expansion of its “Stars” live-stream donation program, giving more creators in more regions a means to accept donations from live-stream viewers, while back in December, Instagram also added some new options to make it easier to go live using third-party tools via desktop PCs.

Live-streaming has driven a major shift in China, where shopping live-streams, in particular, have created massive opportunities for streaming platforms. They haven’t caught on in the same way in Western regions, but as TikTok and YouTube push live-stream adoption, there is still a chance that they will become a much bigger element in future.

That’s why IG is also trying to keep pace by adding more ways for its creators to engage via streams. Live-stream games are another element of this push, and could make live-streams a better community-building, and potentially sales-driving, option.

We’ve asked Instagram for more information on this test, and we’ll update this post if/when we hear back.
