SOCIAL

Twitter Announces New Election Integrity Measures as We Head Into the Final Weeks of the US Presidential Campaign

With just 25 days to go until the US Presidential election, Twitter has announced a range of new measures designed to stop the spread of misinformation, including significant design changes that have been built into the tweet process, which should prompt users to think twice before amplifying certain messages.

The major functional change is that, from October 20th ‘through at least the end of Election week’, whenever a user in the US taps on the retweet option on any tweet, Twitter will open the ‘Quote Tweet’ composer by default, instead of giving users the option to choose between a ‘Retweet’ or a ‘Quote Tweet’.

As you can see here, the new sequence removes the pop-up option where you can choose to simply retweet a message.

You can still retweet as normal by not entering any text in the composer, but the revised design will ideally prompt more people to add their own thoughts, and/or re-assess exactly why it is that they’re retweeting each message.

Twitter announced the change by tapping into a trending meme format:

The process was spotted in testing by reverse-engineering expert Jane Manchun Wong earlier in the week, and as noted, it could add an extra, important level of friction in the tweet amplification process, which may prove effective in getting users to consider their actions more carefully.

A good example of this is Twitter’s recently introduced ‘read before retweet’ prompt, which calls on users to open a link before they share it.

That extra step has already had a significant impact, with Twitter reporting that users open articles 40% more often when shown these prompts.

Removing the straight retweet option may not seem like a major shift, and it may not be as upfront as these prompts. But the results here do show that such nudges can be effective in altering user behavior.

Some users will begin seeing this new process on the web version of Twitter from today.

In addition to this, and also running from October 20th until whenever Twitter deems necessary, Twitter will stop “liked by” and “followed by” recommendations, generated from the activity of people you follow, from appearing in your main feed.

As explained by Twitter:

“These recommendations can be a helpful way for people to see relevant conversations from outside of their network, but we are removing them because we don’t believe the “Like” button provides sufficient, thoughtful consideration prior to amplifying Tweets to people who don’t follow the author of the Tweet, or the relevant topic that the Tweet is about.”

This makes a lot of sense – showing tweets liked by those you follow in your feed is generally not overly beneficial either way, and it essentially turns tweet likes into random retweets, which may mean that the person who liked a tweet ends up sharing it with their followers unintentionally.

People ‘Like’ tweets for different reasons – sometimes to indicate agreement, sometimes to tag something to read later, etc. As such, the mechanism which re-shares your likes is not ideal either way, and at a time when Twitter is working to promote more thoughtful sharing, removing unintended amplification seems like an obvious step.

Twitter’s also narrowing down its Trends to only display those which include additional context.

Early last month, Twitter announced a new effort to include more context within its Trends listings, by providing a short explainer or an example tweet on each, which makes it clearer why, exactly, a term or entity might be trending at any given time.

For the election period, this will now be the default – which is good, because there are still many instances where, say, a celebrity’s name will be trending, and you have that moment where your heart skips a beat in fear for their life, or a random word will show up, like earlier this week, when ‘Fly’ briefly appeared on my Trends list.

As you can see in this example, all the trends listed on Twitter’s ‘For You’ discovery page will now include added context. Again, this will be in place for US users from October 20th until whenever Twitter deems fit.

In addition to this, Twitter has also added some new rules around election-related content, particularly in regard to claims of victory by candidates and voter intimidation at polls.

On election outcomes, Twitter says that it will not allow users, including candidates, to claim victory on its platform without added context until the outcome is officially determined.

“To determine the results of an election in the US, we require either an announcement from state election officials, or a public projection from at least two authoritative, national news outlets that make independent election calls. Tweets which include premature claims will be labeled and direct people to our official US election page.”

So Twitter will still leave these claims up; it will just label them and direct users to official updates. Facebook has taken a similar approach, though it also plans to display top-of-feed announcements on the progress of vote counts across both Facebook and Instagram, taking it a step further.

With US President Donald Trump repeatedly refusing to commit to a peaceful transfer of power if he loses the vote, there’s a real concern that he, or others, could use the mass reach of social media to falsely claim victory. That could lead to a difficult situation, even civil unrest, if there’s a dispute over the official counts, and none of the major social platforms want to play any part in facilitating such an outcome.

Really, Twitter should just remove any such claims, but by referring people to official information, it should help limit their impact while still enabling users to see what candidates are claiming.

That said, Twitter will remove threats of intimidation at polls, or efforts to dissuade people from voting:

“Tweets meant to incite interference with the election process or with the implementation of election results, such as through violent action, will be subject to removal. This covers all Congressional races and the Presidential Election.”

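So while premature victory claims will be labeled rather than removed, tweets that incite interference, including threats and intimidation at polls, can be taken down. Either way, Twitter is taking a tougher stance on such content from now on.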

But that’s not all – Twitter will also add another new prompt to stop people from sharing tweets that have been tagged as including misleading information.

“Starting next week, when people attempt to Retweet one of these Tweets with a misleading information label, they will see a prompt pointing them to credible information about the topic before they are able to amplify it.”

Again, this is another level of friction designed to prompt a re-think before a user amplifies such messages, and Twitter’s also adding new warnings and restrictions on any Tweets which have been slapped with a misleading information label from:

- US political figures

- US-based accounts with more than 100,000 followers

- Profiles that see significant engagement.

“People must tap through a warning to see these Tweets, and then will only be able to Quote Tweet; likes, Retweets and replies will be turned off, and these Tweets won’t be algorithmically recommended by Twitter.”

This is a key element, because high-profile users are the ones who are able to add significant credibility and amplification to false and misleading claims.

This was the case late last year when, during the UK election campaign, an image of a child forced to lie on the floor of an overcrowded hospital was circulated on social media. Unfounded rumors suggested that the image was faked, and those claims were then boosted by several celebrities, rapidly escalating the issue and turning it into a far more divisive, aggressive point of contention.

The case highlights the credence that high-profile users can unwittingly lend to such campaigns, getting them in front of many more users. In the US, actor Woody Harrelson has shared COVID-19 conspiracy theories, including the suggestion that 5G may be facilitating the virus’ spread. Harrelson has more than 2.2 million followers on Instagram alone.

Limiting questionable claims from these users makes a lot of sense with respect to slowing viral spread.

These are some significant, important measures from Twitter, which could go a long way towards addressing key election concerns, and potential misuse of its platform. Of course, Facebook is still seen as the key focus in this respect, but Twitter too has been a focus of various investigations into the rapid spread of misinformation, and it remains the social media platform of choice for President Trump.

But then again, most of these past investigations have highlighted Twitter bot armies as the key tool for boosting misinformation via tweet. Twitter has made efforts to address this – back in April, the platform removed 20,000 fake accounts linked to the governments of Serbia, Saudi Arabia, Egypt, Honduras and Indonesia as part of its ongoing efforts to combat misuse, while it’s also questioned the validity of such studies in measuring the impact of bot activity on its network.

Seemingly, Twitter has addressed at least some elements in this respect. How effective those measures have been, we’ll have to wait and see, but bots still loom as a significant amplification concern amid these other preventative measures.


Snapchat Explores New Messaging Retention Feature: A Game-Changer or Risky Move?

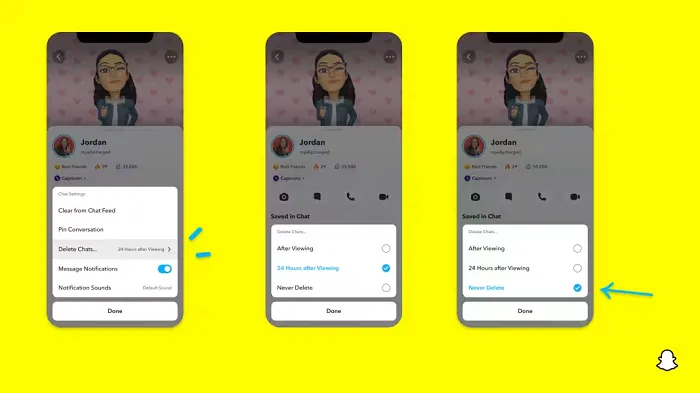

In a recent announcement, Snapchat revealed a groundbreaking update that challenges its traditional design ethos. The platform is experimenting with an option that allows users to defy the 24-hour auto-delete rule, a feature synonymous with Snapchat’s ephemeral messaging model.

The proposed change aims to introduce a “Never delete” option in messaging retention settings, aligning Snapchat more closely with conventional messaging apps. While this move may blur Snapchat’s distinctive selling point, Snap appears convinced of its necessity.

According to Snap, the decision stems from user feedback and a commitment to innovation based on user needs. The company aims to provide greater flexibility and control over conversations, catering to the preferences of its community.

Currently undergoing trials in select markets, the new feature empowers users to adjust retention settings on a conversation-by-conversation basis. Flexibility remains paramount, with participants able to modify settings within chats and receive in-chat notifications to ensure transparency.

Snapchat underscores that the default auto-delete feature will persist, reinforcing its design philosophy centered on ephemerality. However, with the app gaining traction as a primary messaging platform, the option offers users a means to preserve longer chat histories.

The update marks a pivotal moment for Snapchat, renowned for its disappearing-message premise, which is especially popular among younger demographics. Retaining this focus has been central to Snapchat’s identity, but the shift suggests a broader strategy aimed at diversifying its user base.

This strategy may appeal particularly to older demographics, potentially extending Snapchat’s relevance as users age. By emulating features of conventional messaging platforms, Snapchat seeks to enhance its appeal and broaden its reach.

Yet, the introduction of message retention poses questions about Snapchat’s uniqueness. While addressing user demands, the risk of diluting Snapchat’s distinctiveness looms large.

As Snapchat ventures into uncharted territory, the outcome of this experiment remains uncertain. Will message retention propel Snapchat to new heights, or will it compromise the platform’s uniqueness?

Only time will tell.


Catering to a specific audience boosts your business, says accountant turned coach

While it is tempting to try to appeal to a broad audience, the founder of alcohol-free coaching service Just the Tonic, Sandra Parker, believes the best thing you can do for your business is focus on your niche. Here’s how she did just that.

When running a business, reaching out to as many clients as possible can be tempting. But it also risks making your marketing “too generic,” warns Sandra Parker, the founder of Just The Tonic Coaching.

“From the very start of my business, I knew exactly who I could help and who I couldn’t,” Parker told My Biggest Lessons.

Parker struggled with alcohol dependence as a young professional. Today, her business targets high-achieving individuals who face challenges similar to those she had early in her career.

“I understand their frustrations, I understand their fears, and I understand their coping mechanisms and the stories they’re telling themselves,” Parker said. “Because of that, I’m able to market very effectively, to speak in a language that they understand, and am able to reach them.”

“I believe that it’s really important that you know exactly who your customer or your client is, and you target them, and you resist the temptation to make your marketing too generic to try and reach everyone,” she explained.

“If you speak specifically to your target clients, you will reach them, and I believe that’s the way that you’re going to be more successful.”

Watch the video for more of Sandra Parker’s biggest lessons.


Instagram Tests Live-Stream Games to Enhance Engagement

Instagram’s testing out some new options to help spice up your live-streams in the app, with some live broadcasters now able to select a game that they can play with viewers in-stream.

As you can see in these example screens, posted by Ahmed Ghanem, some creators now have the option to play either “This or That”, a question and answer prompt that you can share with your viewers, or “Trivia”, to generate more engagement within your IG live-streams.

That could be a simple way to spark more conversation and interaction, which could then lead into further engagement opportunities from your live audience.

Meta’s been exploring more ways to make live-streaming a bigger consideration for IG creators, with a view to live-streams potentially catching on with more users.

That includes the gradual expansion of its “Stars” live-stream donation program, giving more creators in more regions a means to accept donations from live-stream viewers, while back in December, Instagram also added some new options to make it easier to go live using third-party tools via desktop PCs.

Live streaming has been a major shift in China, where shopping live-streams, in particular, have led to massive opportunities for streaming platforms. They haven’t caught on in the same way in Western regions, but as TikTok and YouTube look to push live-stream adoption, there is still a chance that they will become a much bigger element in future.

Which is why IG is also trying to keep pace, and add more ways for its creators to engage via streams. Live-stream games are another element of this push, and could make live-streams a better community-building, and potentially sales-driving, option.

We’ve asked Instagram for more information on this test, and we’ll update this post if/when we hear back.
