SEO

Is Google headed towards a continuous “real-time” algorithm?

30-second summary:

- The present reality is that Google presses the button and updates its algorithm, which in turn can update site rankings

- What if we are entering a world where it is less of Google pressing a button and more of the algorithm automatically updating rankings in “real-time”?

- Advisory Board member and Wix’s Head of SEO Branding, Mordy Oberstein, shares his data observations and insights

If you’ve been doing SEO even for a short while, chances are you’re familiar with a Google algorithm update. Every so often, whether we like it or not, Google presses the button and updates its algorithm, which in turn can update our rankings. The key phrase here is “presses the button.”

But, what if we are entering a world where it’s less of Google pressing a button and more of the algorithm automatically updating rankings in “real-time”? What would that world look like and who would it benefit?

What do we mean by continuous real-time algorithm updates?

It is obvious that technology is constantly evolving, but what needs to be made clear is that this applies to Google’s algorithm as well. As the technology available to Google improves, the search engine can do things like better understand content and assess websites. However, this technology needs to be integrated into the algorithm. In other words, as new technology becomes available to Google or as the current technology improves (we might refer to this as machine learning “getting smarter”), Google needs to “make them a part” of its algorithms in order to utilize these advancements.

Take MUM for example. Google has started to use aspects of MUM in the algorithm. However, (at the time of writing) MUM is not fully implemented. As time goes on and based on Google’s previous announcements, MUM is almost certainly going to be applied to additional algorithmic tasks.

Of course, once Google introduces new technology or has refined its current capabilities it will likely want to reassess rankings. If Google is better at understanding content or assessing site quality, wouldn’t it want to apply these capabilities to the rankings? When it does so, Google “presses the button” and releases an algorithm update.

So, say one of Google’s current machine-learning properties has evolved. It’s taken the input over time and has been refined – it’s “smarter” for lack of a better word. Google may elect to “reintroduce” this refined machine learning property into the algorithm and reassess the pages being ranked accordingly.

These updates are specific and purposeful. Google is “pushing the button.” This is most clearly seen when Google announces something like a core update or product review update or even a spam update.

In fact, perhaps nothing better concretizes what I’ve been saying here than what Google said about its spam updates:

“While Google’s automated systems to detect search spam are constantly operating, we occasionally make notable improvements to how they work…. From time to time, we improve that system to make it better at spotting spam and to help ensure it catches new types of spam.”

In other words, Google was able to develop an improvement to a current machine learning property and released an update so that this improvement could be applied to ranking pages.

If this process is “manual” (to use a crude word), what then would continuous “real-time” updates be? Let’s take Google’s Product Review Updates. Initially released in April of 2021, Google’s Product Review Updates aim at weeding out product review pages that are thin, unhelpful, and (if we’re going to call a spade a spade) exist essentially to earn affiliate revenue.

To do this, Google is using machine learning in a specific way, looking at specific criteria. With each iteration of the update (such as those in December 2021 and March 2022), these machine learning apparatuses have the opportunity to recalibrate and refine. Meaning, they can potentially become more effective over time as the machine “learns,” which is kind of the point when it comes to machine learning.

What I theorize, at this point, is that as these machine learning properties refine themselves, rank fluctuates accordingly. Meaning, Google allows machine learning properties to “recalibrate” and impact the rankings. Google then reviews and analyzes and sees if the changes are to its liking.

We may know this process as unconfirmed algorithm updates (for the record I am 100% not saying that all unconfirmed updates are as such). It’s why I believe there is such a strong tendency towards rank reversals in between official algorithm updates.

It’s quite common that the SERP will see a noticeable increase in rank fluctuations that can impact a page’s rankings only to see those rankings reverse back to their original position with the next wave of rank fluctuations (whether that be a few days later or weeks later). In fact, this process can repeat itself multiple times. The net effect is a given page seeing rank changes followed by reversals or a series of reversals.

A series of rank reversals impacting almost all pages ranking between position 5 and 20 that align with across-the-board heightened rank fluctuations
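The reversal pattern described above can be made concrete with a small sketch. It assumes a hypothetical list of daily positions for a single page, and the `min_move` and `tolerance` thresholds are illustrative choices, not values taken from Google or from any rank tracker.

```python
def count_reversals(positions, min_move=3, tolerance=1):
    """Count moves of at least `min_move` positions that later return
    to within `tolerance` of the position held before the move.

    `positions` is a hypothetical list of daily rankings for one page.
    """
    reversals = 0
    baseline = positions[0]  # last "settled" position
    displaced = False        # True while the page sits away from baseline
    for pos in positions[1:]:
        if not displaced:
            if abs(pos - baseline) >= min_move:
                displaced = True
            else:
                baseline = pos  # small drift: treat as the new settled position
        elif abs(pos - baseline) <= tolerance:
            reversals += 1      # the page came back to where it started
            displaced = False
            baseline = pos
    return reversals


# Made-up series: the page drops from 8 to 14-15, returns, then repeats.
daily_positions = [8, 8, 14, 15, 8, 9, 13, 9]
reversal_count = count_reversals(daily_positions)
```

A page that moves and stays at its new position never registers as a reversal in this sketch, which matches the distinction drawn here between temporary fluctuations and lasting changes.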

This trend, as I see it, is Google allowing its machine learning properties to evolve or recalibrate (or however you’d like to describe it) in real-time. Meaning, no one is pushing a button over at Google but rather the algorithm is adjusting to the continuous “real-time” recalibration of the machine learning properties.

It’s this dynamic that I am referring to when I question if we are heading toward “real-time” or “continuous” algorithmic rank adjustments.

What would a continuous real-time Google algorithm mean?

So what? What if Google adopted a continuous real-time model? What would the practical implications be?

In a nutshell, it would mean that rank volatility would be far more of a constant. Instead of waiting for Google to push the button on an algorithm update in order for rank to be significantly impacted, this would simply be the norm. The algorithm would be constantly evaluating pages and sites “on its own” and making adjustments to rank closer to real-time.

Another implication would be not having to wait for the next update for restoration. While not a hard-and-fast rule, if you are significantly impacted by an official Google update, such as a core update, you generally won’t see rank restoration occur until the release of the next version of the update, whereupon your pages will be evaluated. In a real-time scenario, pages are constantly being evaluated, much the way links are with Penguin 4.0, which was released in 2016. To me, this would be a major change to the current “SERP ecosystem.”

I would even argue that, to an extent, we already have a continuous “real-time” algorithm. In fact, it is simply a fact that we at least partially have a real-time Google algorithm. As mentioned, in 2016 Google released Penguin 4.0, which removed the need to wait for another version of the update, as this specific algorithm evaluates pages on a constant basis.

However, outside of Penguin, what do I mean when I say that, to an extent, we already have a continuous real-time algorithm?

The case for real-time algorithm adjustments

The constant “real-time” rank adjustments that occur in the ecosystem are so significant that they have redefined the volatility landscape.

Per Semrush data I pulled, there was a 58% increase in the number of days that reflected high rank volatility in 2021 as compared to 2020. Similarly, there was a 59% increase in the number of days that reflected either high or very high levels of rank volatility.

Simply put, there is a significant increase in the number of instances that reflect elevated levels of rank volatility. After studying these trends and looking at the ranking patterns, I believe the aforementioned rank reversals are the cause. Meaning, a large portion of the increased instances in rank volatility are coming from what I believe to be machine learning continually recalibrating in “real-time,” thereby producing unprecedented levels of rank reversals.

Supporting this is the fact that, along with the increased instances of rank volatility, we did not see increases in how drastic the rank movement is. Meaning, there are more instances of rank volatility but the degree of volatility did not increase.

In fact, there was a decrease in how dramatic the average rank movement was in 2021 relative to 2020!

Why? Again, I chalk this up to the recalibration of machine learning properties and their “real-time” impact on rankings. In other words, we’re starting to see more micro-movements that align with the natural evolution of Google’s machine-learning properties.

When a machine learning property is refined as its intake/learning advances, you’re unlikely to see enormous swings in the rankings. Rather, you will see a refinement in the rankings that align with refinement in the machine learning itself.

Hence, the rank movement we’re seeing, as a rule, is far more constant yet not as drastic.
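The "more frequent but less drastic" pattern can be illustrated with a rough sketch. The daily scores below are made up, the `HIGH` threshold is a hypothetical cutoff, and averaging the score on high-volatility days is only an assumed proxy for how dramatic the movement is. None of this reproduces the Semrush data or methodology.

```python
from statistics import mean


def high_volatility_days(scores, threshold):
    """Number of days whose volatility score meets the threshold."""
    return sum(1 for score in scores if score >= threshold)


def percent_change(old, new):
    """Percentage change from `old` to `new`."""
    return (new - old) / old * 100


# Made-up daily volatility scores for two periods (illustration only).
year_a = [2.1, 6.3, 3.0, 2.4, 7.1, 2.2, 2.0, 2.5]
year_b = [5.2, 5.6, 2.9, 5.1, 3.1, 5.4, 2.8, 5.0]

HIGH = 5.0  # hypothetical cutoff for a "high volatility" day

days_a = high_volatility_days(year_a, HIGH)
days_b = high_volatility_days(year_b, HIGH)
increase_in_days = percent_change(days_a, days_b)

# Average score on the high-volatility days only, as a proxy for severity.
severity_a = mean(s for s in year_a if s >= HIGH)
severity_b = mean(s for s in year_b if s >= HIGH)
```

In this toy data the second period has more high-volatility days while the average score on those days is lower, which is the shape of the trend described above.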

The final step towards continuous real-time algorithm updates

While much of the ranking movement that occurs is continuous in that it is not dependent on specific algorithmic refreshes, we’re not fully there yet. As I mentioned, much of the rank volatility is a series of reversing rank positions. Changes to these ranking patterns, again, are often not solidified until the rollout of an official Google update, most commonly, an official core algorithm update.

Until the longer-lasting ranking patterns are set without the need to “press the button” we don’t have a full-on continuous or “real-time” Google algorithm.

However, I have to wonder if the trend is not heading toward that. For starters, Google’s Helpful Content Update (HCU) does function in real-time.

Per Google:

“Our classifier for this update runs continuously, allowing it to monitor newly-launched sites and existing ones. As it determines that the unhelpful content has not returned in the long-term, the classification will no longer apply.”

How is this so? The same as what we’ve been saying all along here – Google has allowed its machine learning to have the autonomy it would need to be “real-time” or as Google calls it, “continuous”:

“This classifier process is entirely automated, using a machine-learning model.”

For the record, continuous does not mean ever-changing. In the case of the HCU, there’s a logical validation period before restoration. Should we ever see a “truly” continuous real-time algorithm, this may apply in various ways as well. I don’t want to imply that, should we ever see a “real-time” algorithm, there will be a ranking response the second you make a change to a page.

At the same time, the “traditional” officially “button-pushed” algorithm update has become less impactful over time. In a study I conducted back in late 2021, I noticed that Semrush data indicated that since 2018’s Medic Update, the core updates being released were becoming significantly less impactful.

Data indicates that Google’s core updates are presenting less rank volatility overall as time goes on

Subsequently, this trend has continued. Per my analysis of the September 2022 Core Update, there was a noticeable drop-off in the volatility seen relative to the May 2022 Core Update.

Rank volatility change was far less dramatic during the September 2022 Core Update relative to the May 2022 Core Update

It’s a dual convergence. Google’s core update releases seem to be less impactful overall (obviously, individual sites can get slammed just as hard) while at the same time its latest update (the HCU) is continuous.

To me, it all points towards Google looking to abandon the traditional algorithm update release model in favor of a more continuous construct. (Further evidence could be in how the release of official updates has changed. If you look back at the various outlets covering these updates, the data will show you that the roll-out now tends to be slower with fewer days of increased volatility and, again, with less overall impact).

The question is, why would Google want to go to a more continuous real-time model?

Why a continuous real-time Google algorithm is beneficial

A real-time continuous algorithm? Why would Google want that? It’s pretty simple, I think. Having an update that continuously refreshes rankings to reward the appropriate pages and sites is a win for Google (again, I don’t mean instant content revision or optimization resulting in instant rank change).

Which is more beneficial to Google’s users? A continuous-like updating of the best results or periodic updates that can take months to present change?

The idea of Google continuously analyzing and updating in a more real-time scenario is simply better for users. How does it help a user looking for the best result to have rankings that reset periodically with each new iteration of an official algorithm update?

Wouldn’t it be better for users if a site, upon seeing its rankings slip, made changes that resulted in some great content, and instead of waiting months to have it rank well, users could access it on the SERP far sooner?

Continuous algorithmic implementation means that Google can get better content in front of users far faster.

It’s also better for websites. Do you really enjoy implementing a change in response to ranking loss and then having to wait perhaps months for restoration?

Also, the fact that Google would so heavily rely on machine learning and trust the adjustments it was making only happens if Google is confident in its ability to understand content, relevancy, authority, etc. SEOs and site owners should want this. It means that Google could rely less on secondary signals and more directly on the primary commodity, content and its relevance, trustworthiness, etc.

Google being able to more directly assess content, pages, and domains overall is healthy for the web. It also opens the door for niche sites and sites that are not massive super-authorities (think the Amazons and WebMDs of the world).

Google’s better understanding of content creates more parity. Google moving towards a more real-time model would be a manifestation of that better understanding.

A new way of thinking about Google updates

A continuous real-time algorithm would intrinsically change the way we would have to think about Google updates. It would, to a greater or lesser extent, make tracking updates as we now know them essentially obsolete. It would change the way we look at SEO weather tools in that, instead of looking for specific moments of increased rank volatility, we’d pay more attention to overall trends over an extended period of time.

Based on the ranking trends we already discussed, I’d argue that, to a certain extent, that time has already come. We’re already living in an environment where rankings fluctuate far more than they used to, and that, to an extent, has redefined what stable rankings mean in many situations.

To both conclude and put things simply, edging closer to a continuous real-time algorithm is part and parcel of a new era in ranking organically on Google’s SERP.

Mordy Oberstein is Head of SEO Branding at Wix. Mordy can be found on Twitter @MordyOberstein.



Measuring Content Impact Across The Customer Journey

Understanding the impact of your content at every touchpoint of the customer journey is essential – but that’s easier said than done. From attracting potential leads to nurturing them into loyal customers, there are many touchpoints to look into.

So how do you identify and take advantage of these opportunities for growth?

Watch this on-demand webinar and learn a comprehensive approach for measuring the value of your content initiatives, so you can optimize resource allocation for maximum impact.

You’ll learn:

- Fresh methods for measuring your content’s impact.

- Fascinating insights using first-touch attribution, and how it differs from the usual last-touch perspective.

- Ways to persuade decision-makers to invest in more content by showcasing its value convincingly.

With Bill Franklin and Oliver Tani of DAC Group, we unravel the nuances of attribution modeling, emphasizing the significance of layering first-touch and last-touch attribution within your measurement strategy.

Check out these insights to help you craft compelling content tailored to each stage, using an approach rooted in first-hand experience to ensure your content resonates.

Whether you’re a seasoned marketer or new to content measurement, this webinar promises valuable insights and actionable tactics to elevate your SEO game and optimize your content initiatives for success.

View the slides below or check out the full webinar for all the details.


How to Find and Use Competitor Keywords

Competitor keywords are the keywords your rivals rank for in Google’s search results. They may rank organically or pay for Google Ads to rank in the paid results.

Knowing your competitors’ keywords is the easiest form of keyword research. If your competitors rank for or target particular keywords, it might be worth it for you to target them, too.

There is no way to see your competitors’ keywords without a tool like Ahrefs, which has a database of keywords and the sites that rank for them. As far as we know, Ahrefs has the biggest database of these keywords.

How to find all the keywords your competitor ranks for

- Go to Ahrefs’ Site Explorer

- Enter your competitor’s domain

- Go to the Organic keywords report

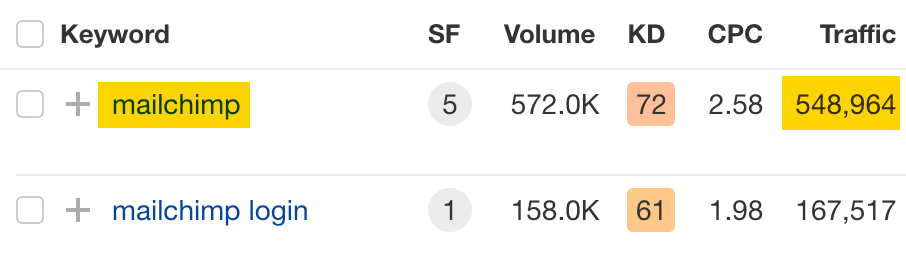

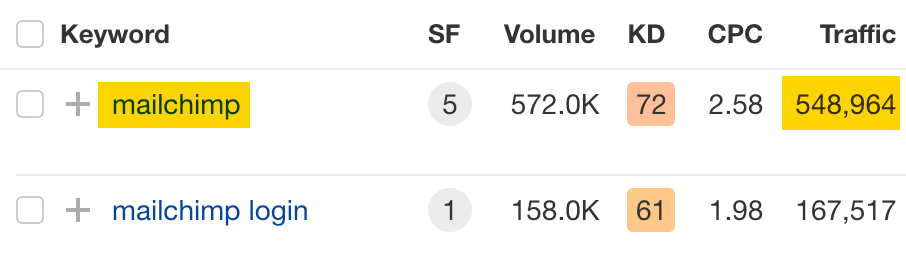

The report is sorted by traffic to show you the keywords sending your competitor the most visits. For example, Mailchimp gets most of its organic traffic from the keyword “mailchimp.”

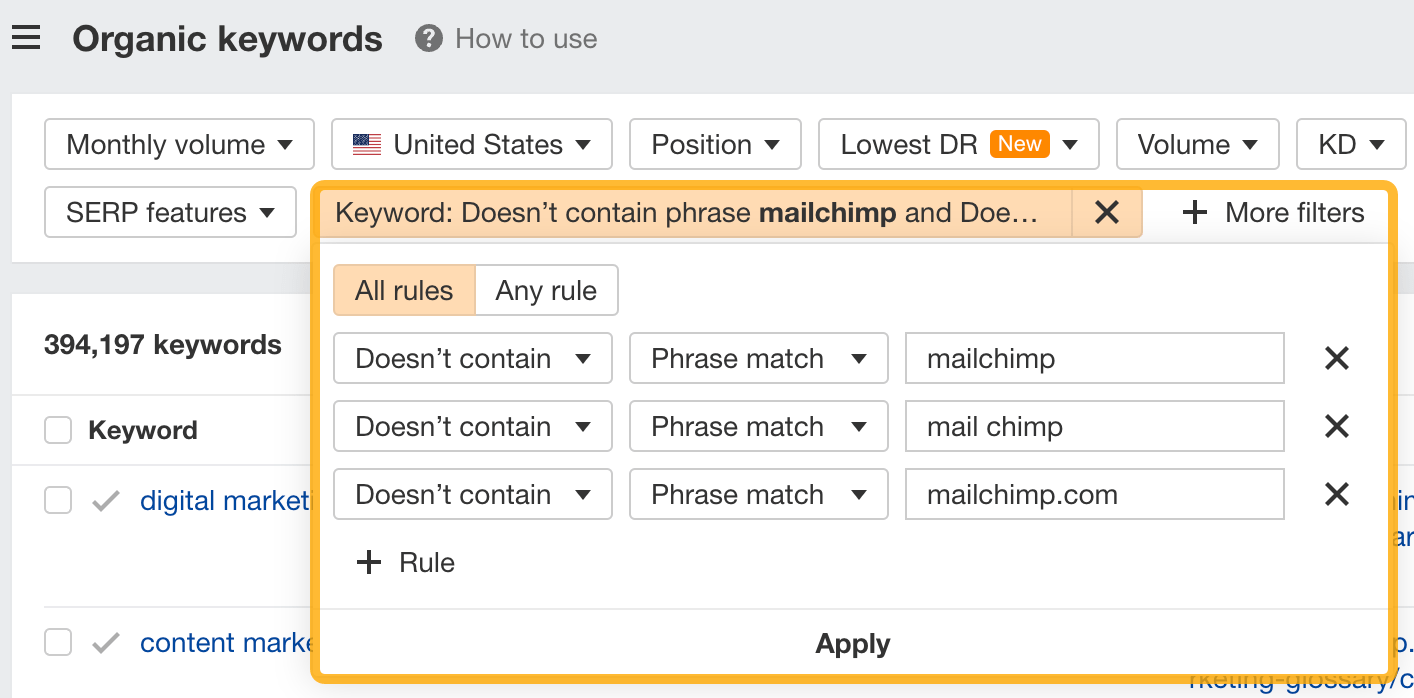

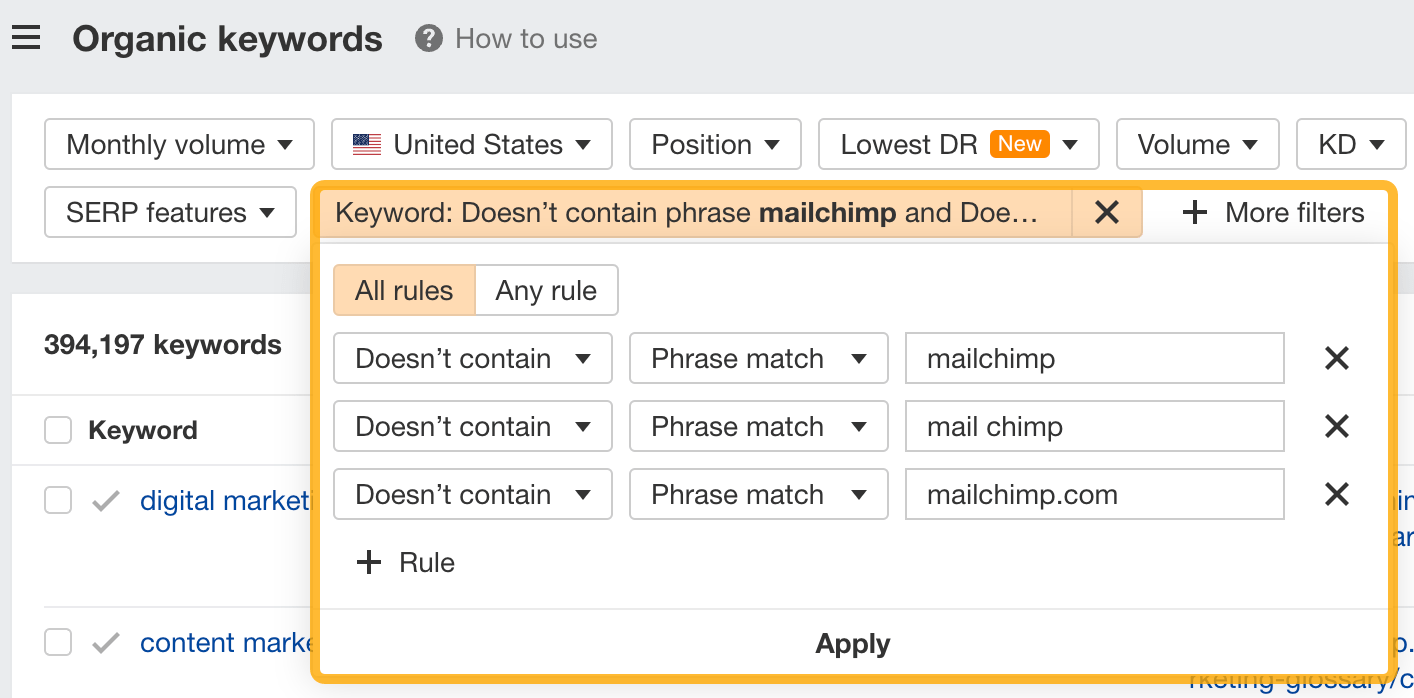

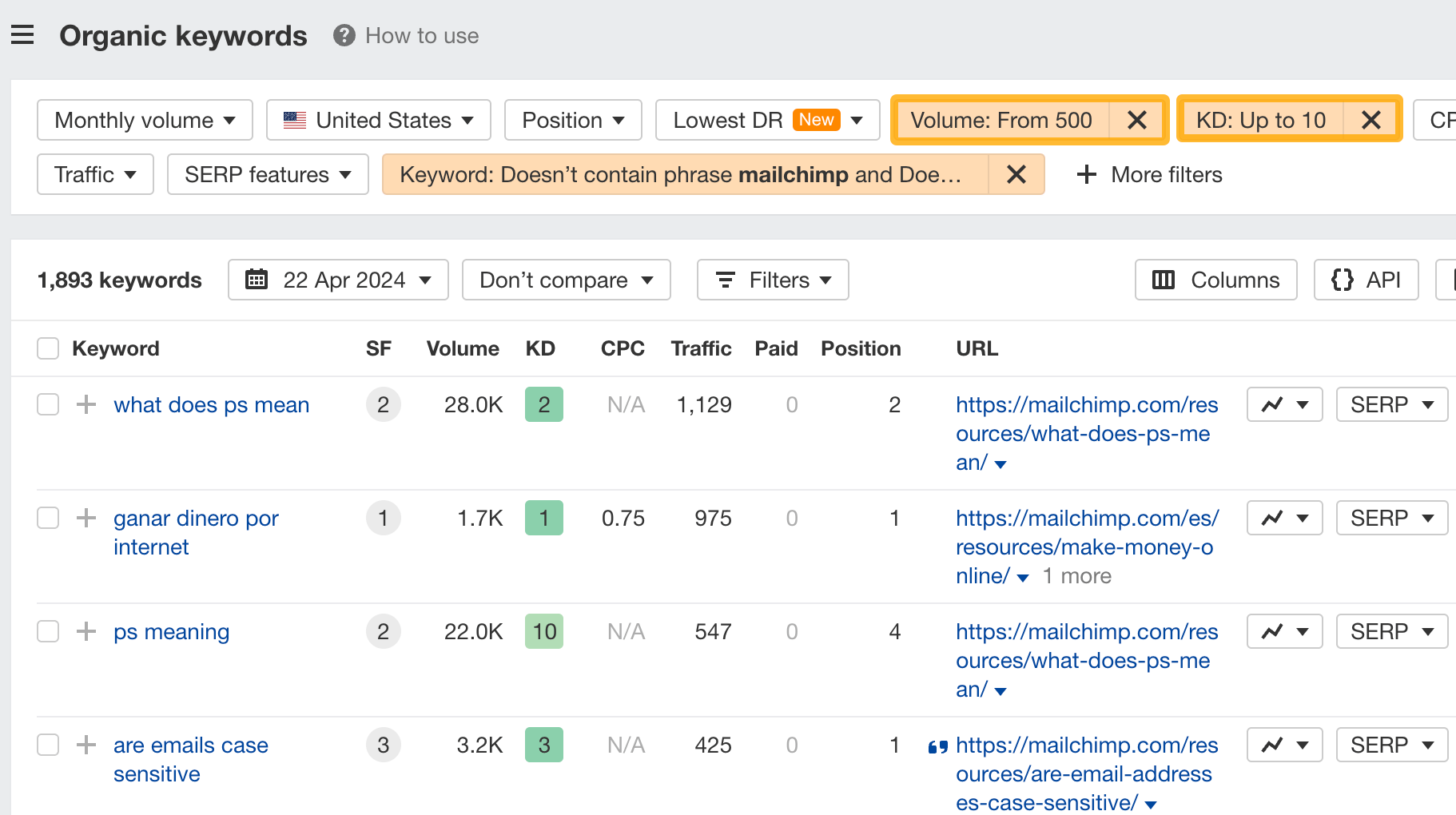

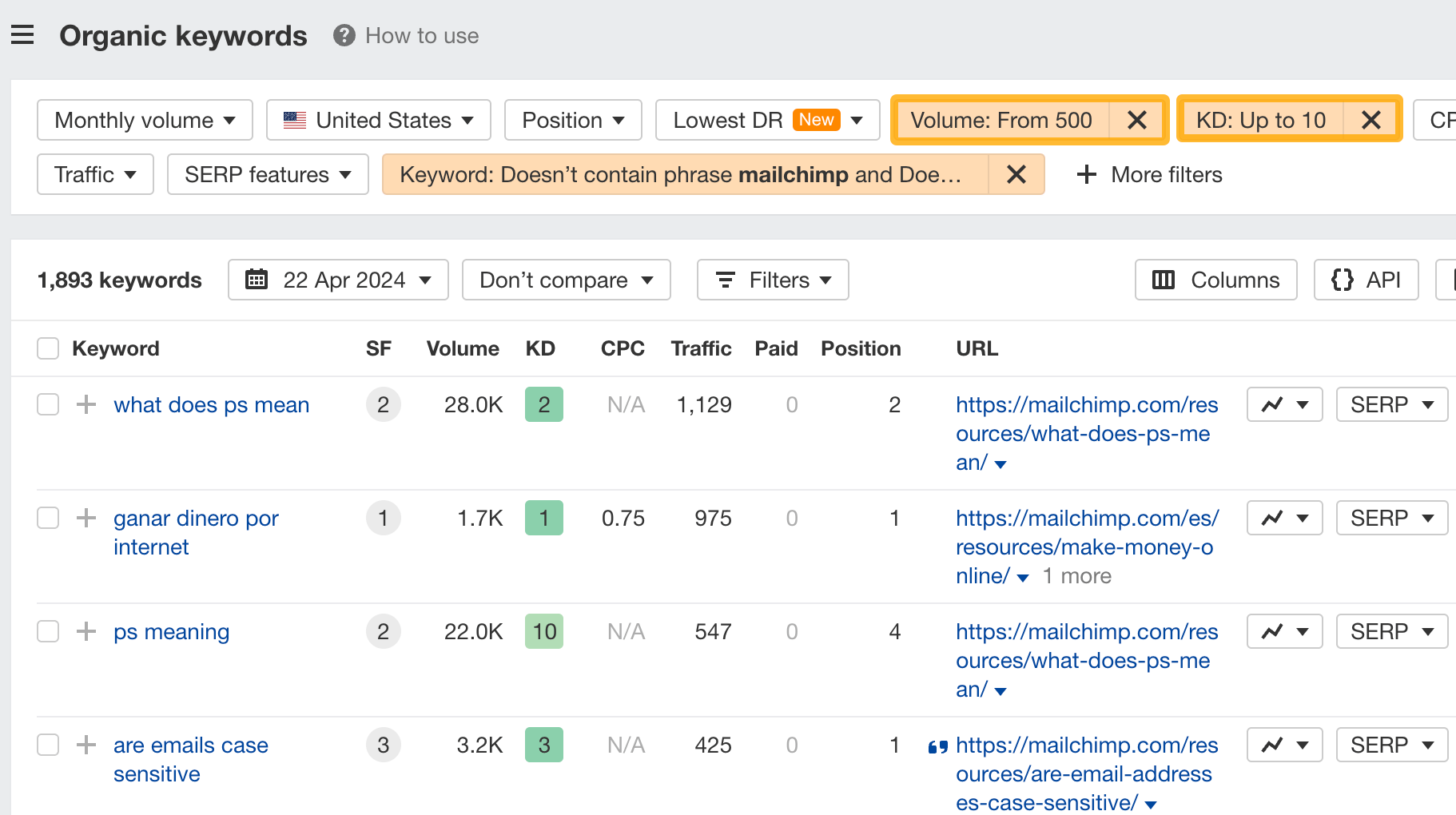

Since you’re unlikely to rank for your competitor’s brand, you might want to exclude branded keywords from the report. You can do this by adding a Keyword > Doesn’t contain filter. In this example, we’ll filter out keywords containing “mailchimp” or any potential misspellings:

If you’re a new brand competing with one that’s established, you might also want to look for popular low-difficulty keywords. You can do this by setting the Volume filter to a minimum of 500 and the KD filter to a maximum of 10.
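The same filtering logic can be applied to an exported keyword list outside the tool. The sketch below assumes each row is a dictionary with hypothetical `keyword`, `volume`, and `kd` fields. These names are illustrative and are not the column names of an actual Ahrefs export.

```python
def filter_keywords(rows, brand_terms, min_volume=500, max_kd=10):
    """Keep non-branded keywords with enough volume and low difficulty.

    `rows` is a list of dicts with hypothetical keys
    "keyword", "volume", and "kd".
    """
    kept = []
    for row in rows:
        text = row["keyword"].lower()
        # Skip anything containing the brand name or a known misspelling.
        if any(term in text for term in brand_terms):
            continue
        if row["volume"] >= min_volume and row["kd"] <= max_kd:
            kept.append(row)
    return kept


# Made-up rows for illustration.
sample_rows = [
    {"keyword": "mailchimp login", "volume": 90000, "kd": 40},
    {"keyword": "email subject lines", "volume": 1200, "kd": 8},
    {"keyword": "newsletter ideas", "volume": 300, "kd": 5},
    {"keyword": "email marketing", "volume": 20000, "kd": 85},
]
low_hanging = filter_keywords(sample_rows, brand_terms=["mailchimp", "mail chimp"])
```

Only "email subject lines" survives here: the first row is branded, the third falls below the volume floor, and the fourth is too difficult.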

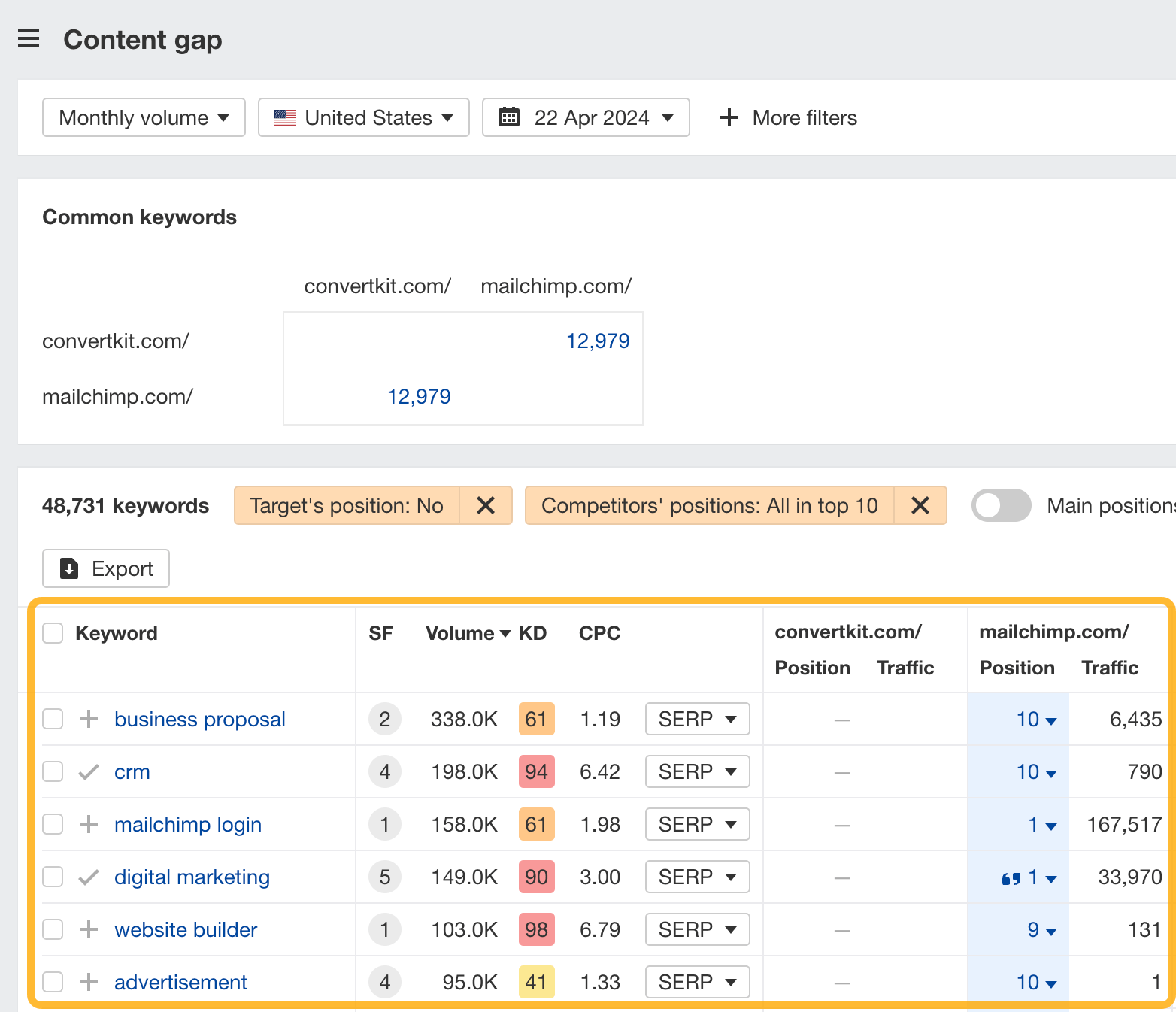

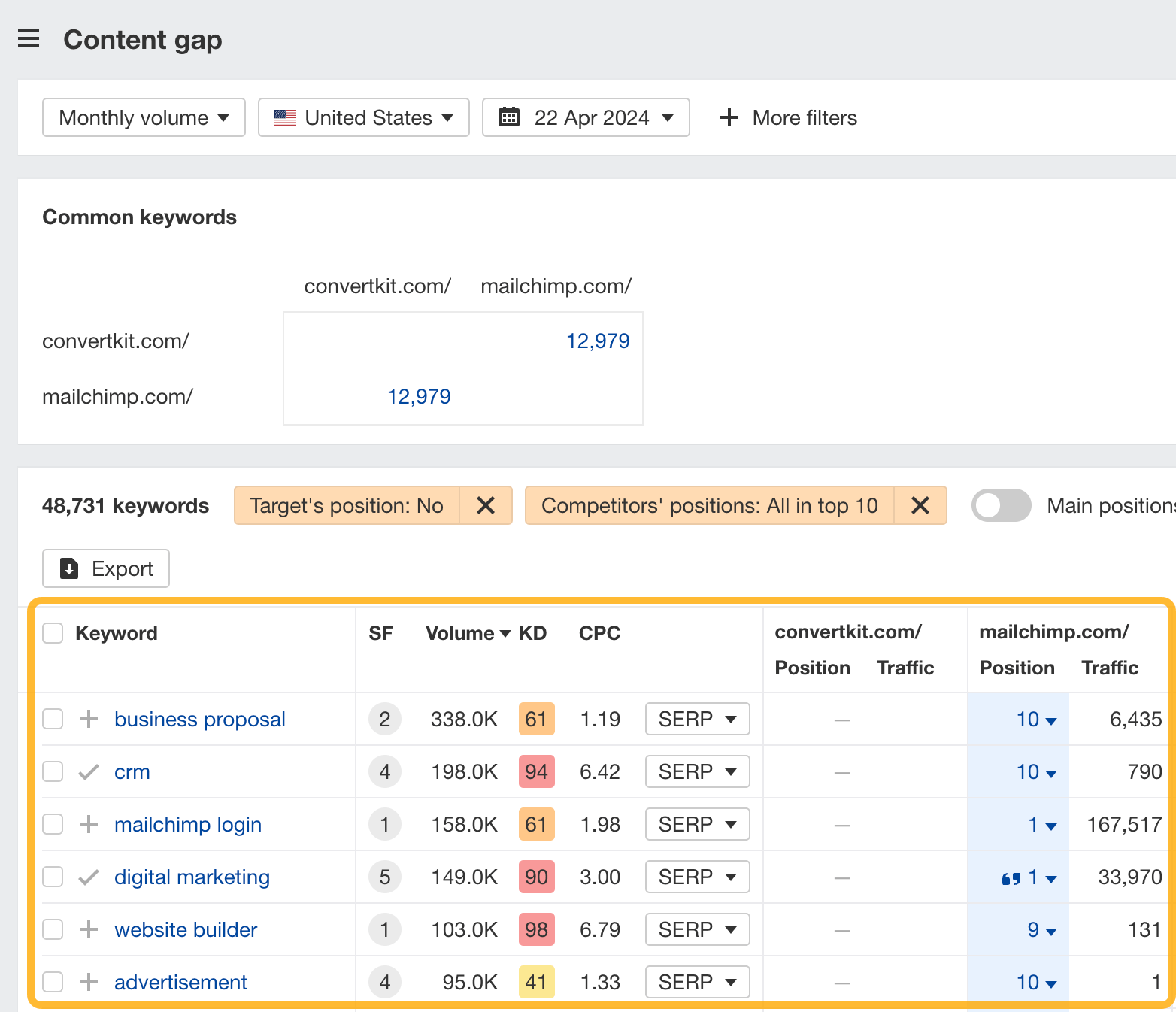

How to find keywords your competitor ranks for, but you don’t

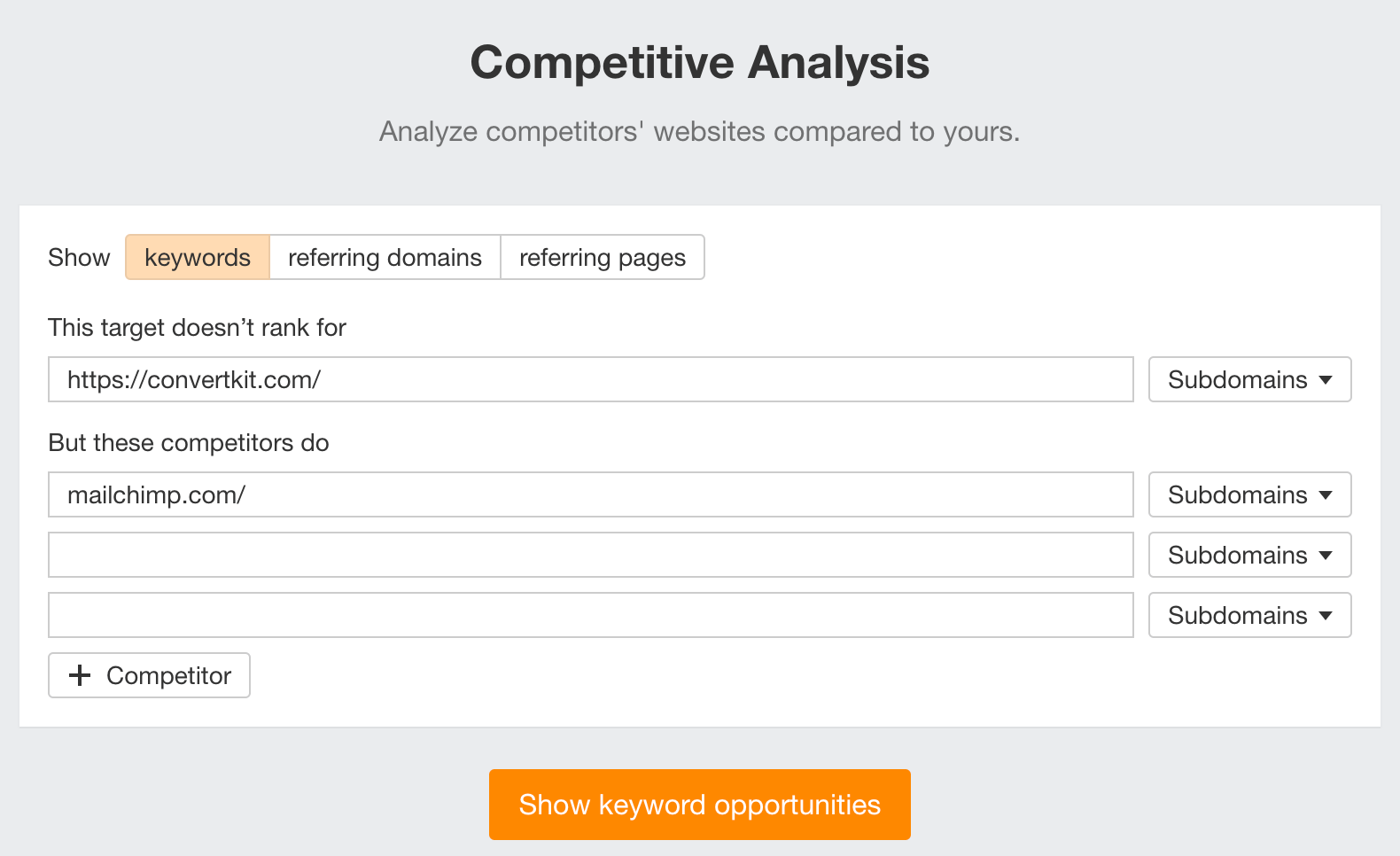

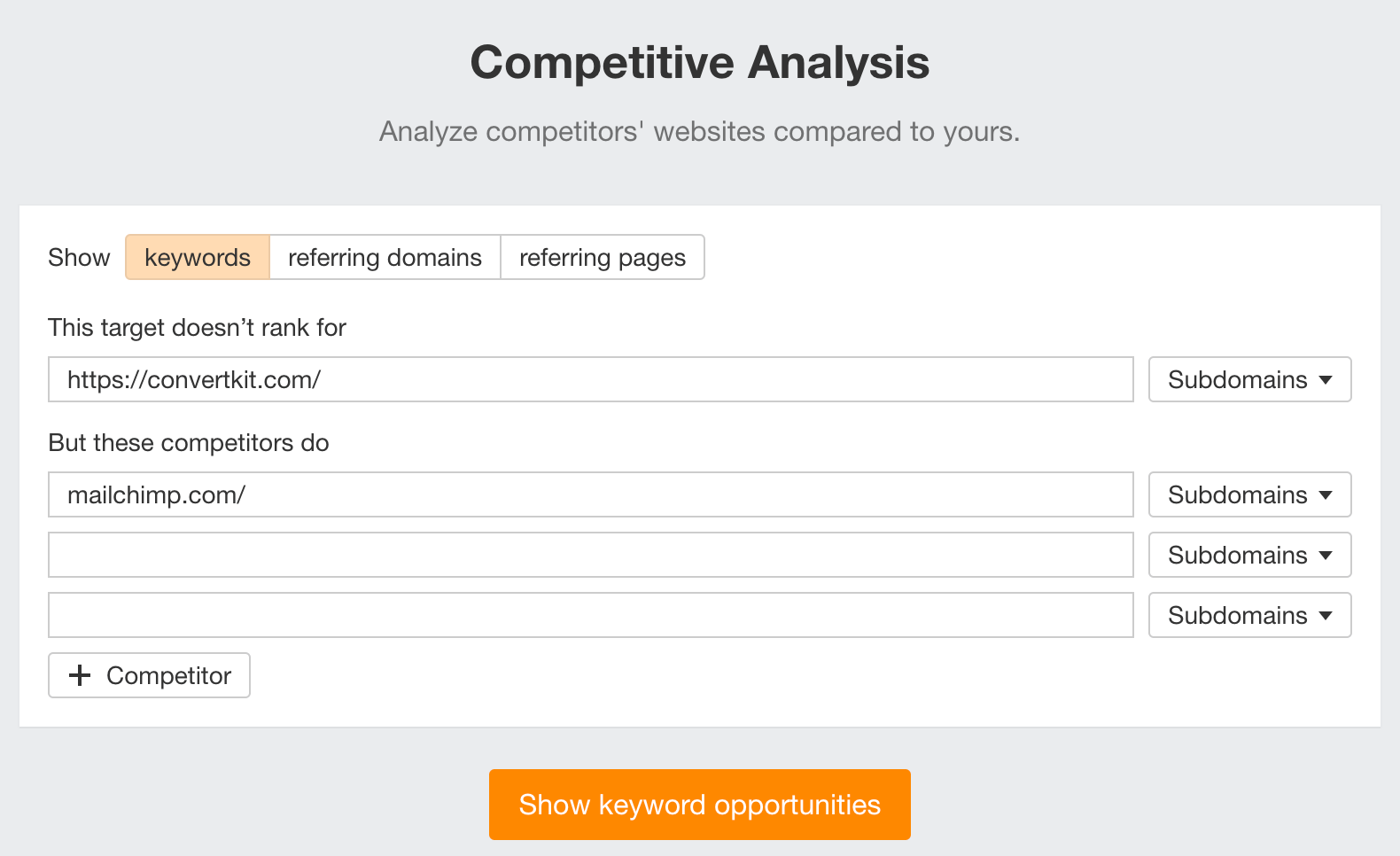

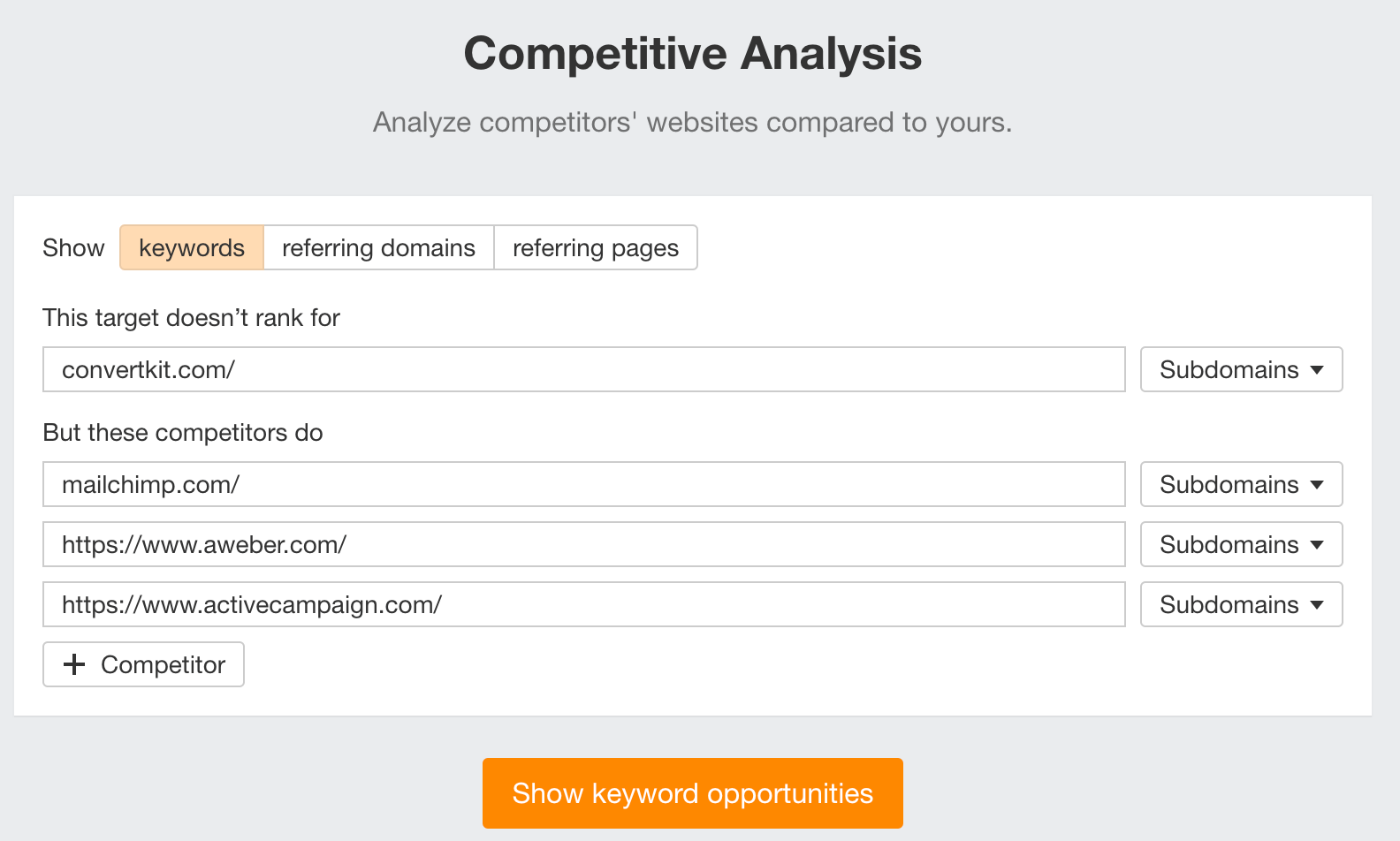

- Go to Competitive Analysis

- Enter your domain in the This target doesn’t rank for section

- Enter your competitor’s domain in the But these competitors do section

Hit “Show keyword opportunities,” and you’ll see all the keywords your competitor ranks for, but you don’t.

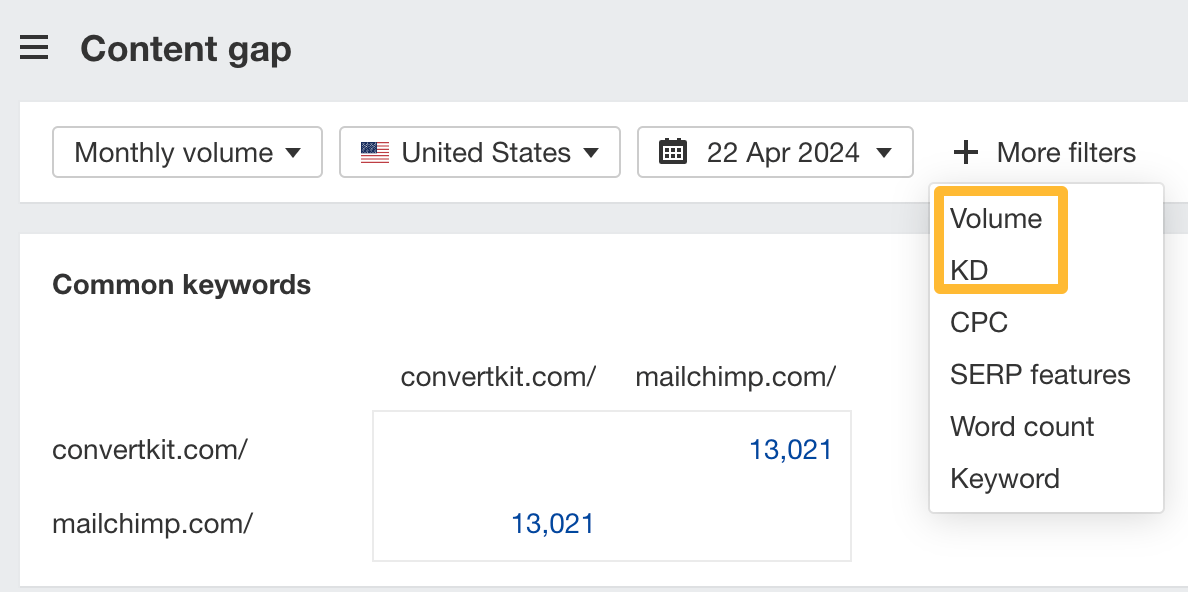

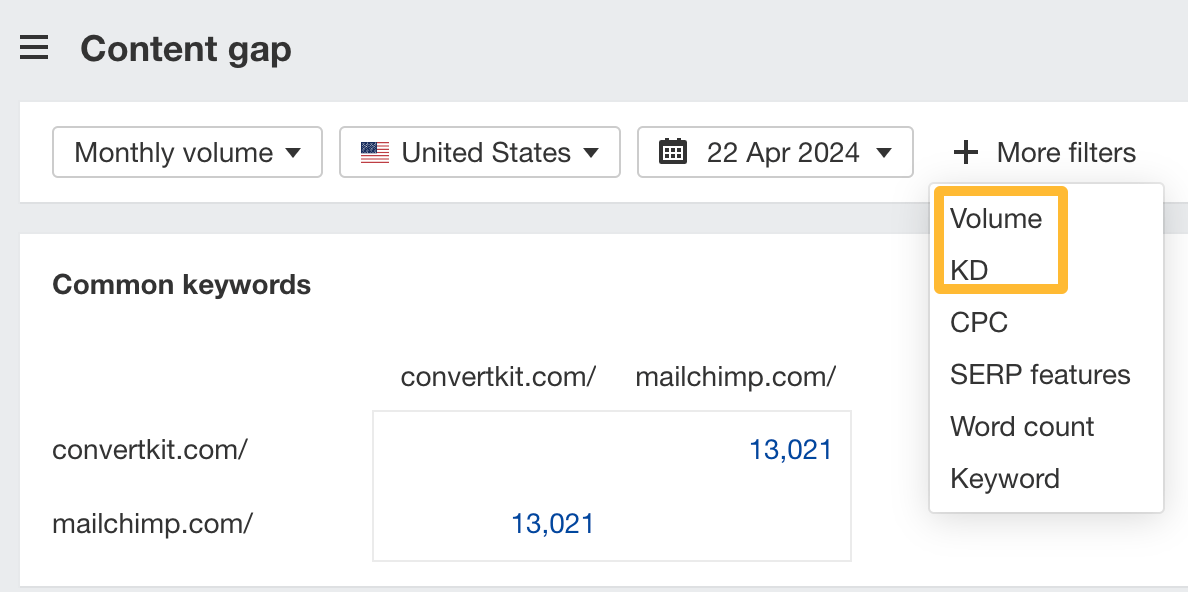

You can also add a Volume and KD filter to find popular, low-difficulty keywords in this report.

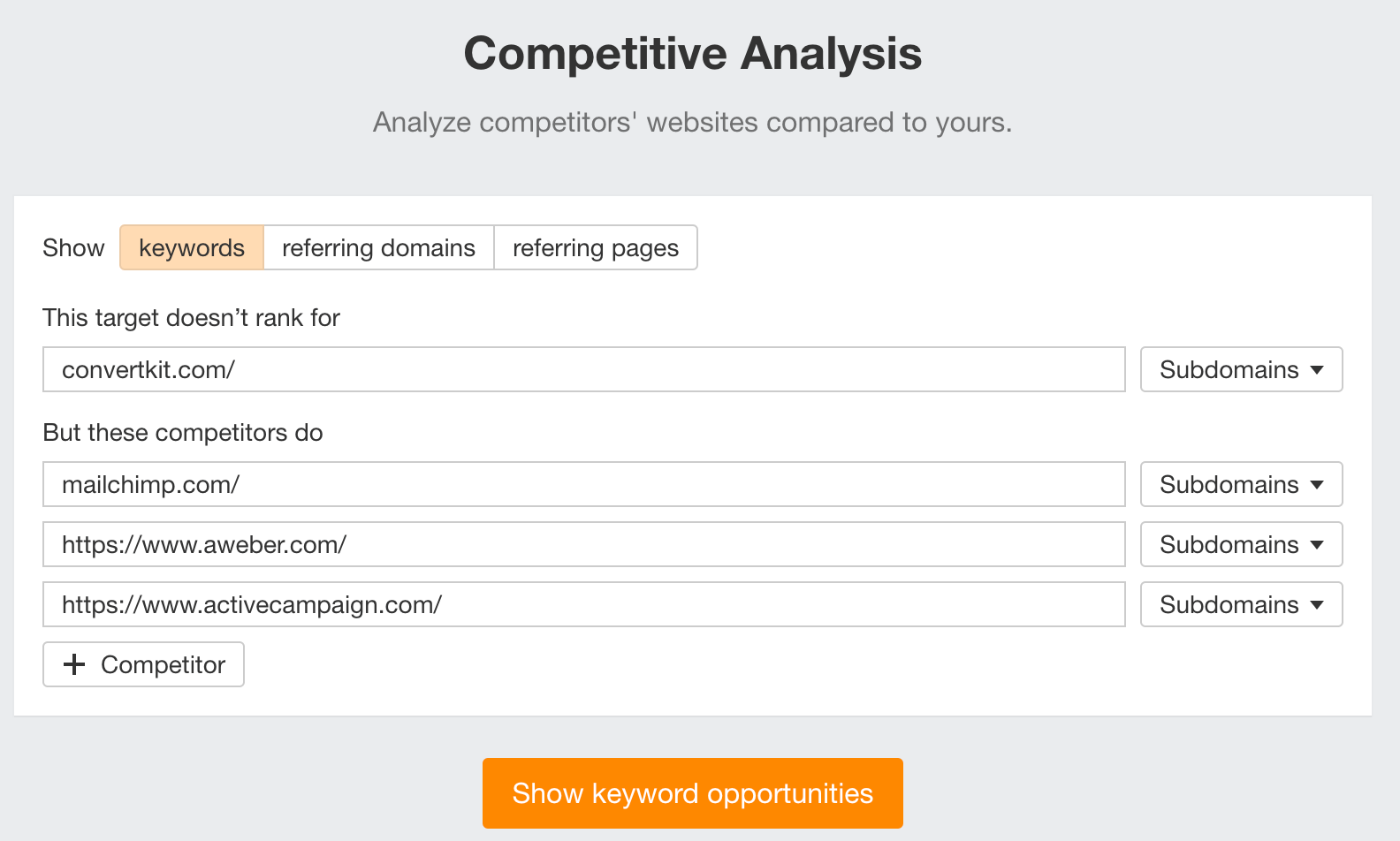

How to find keywords multiple competitors rank for, but you don’t

- Go to Competitive Analysis

- Enter your domain in the This target doesn’t rank for section

- Enter the domains of multiple competitors in the But these competitors do section

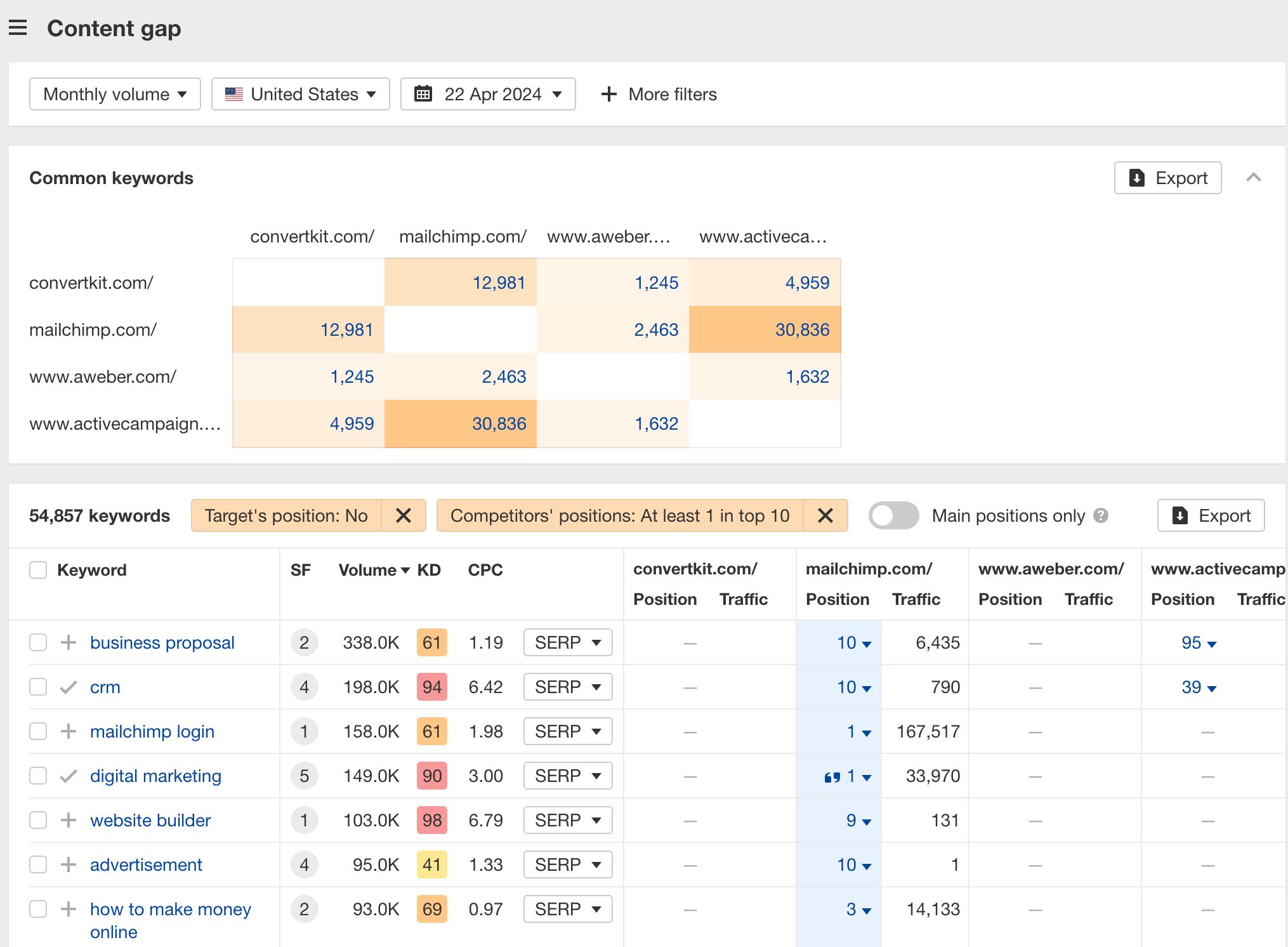

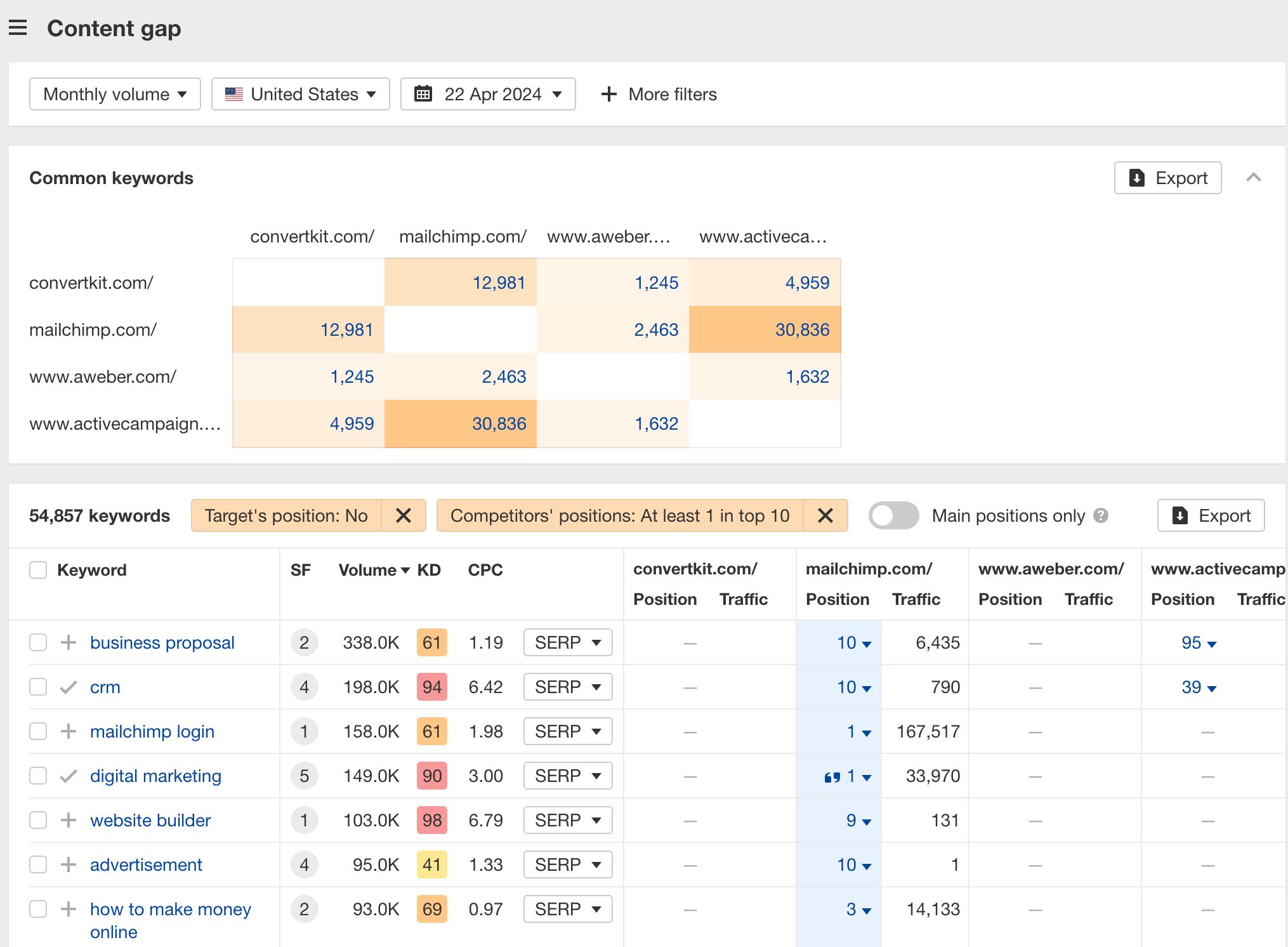

You’ll see all the keywords that at least one of these competitors ranks for, but you don’t.

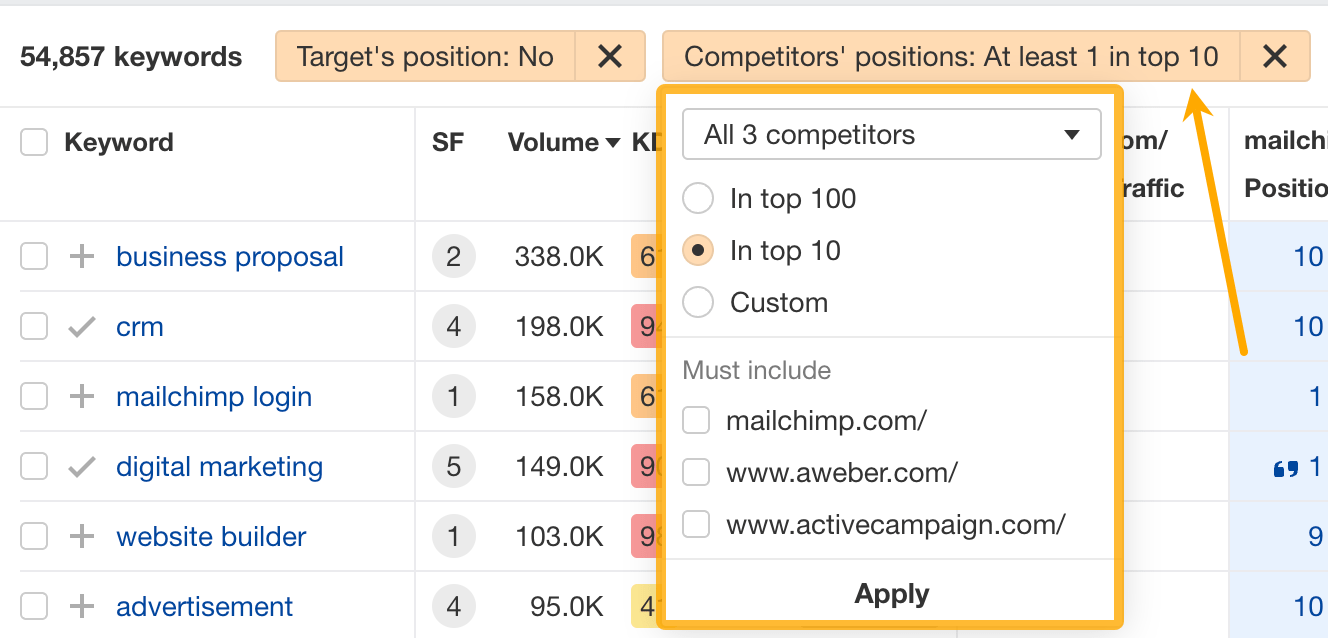

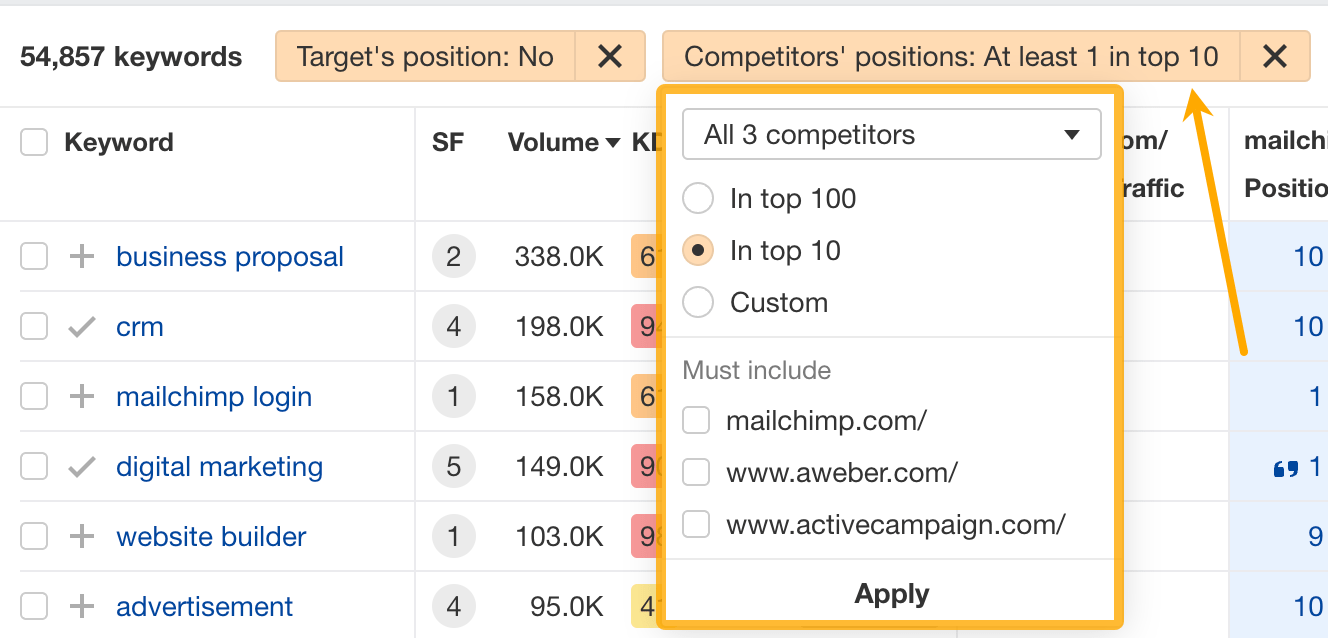

You can also narrow the list down to keywords that all competitors rank for. Click on the Competitors’ positions filter and choose All 3 competitors:

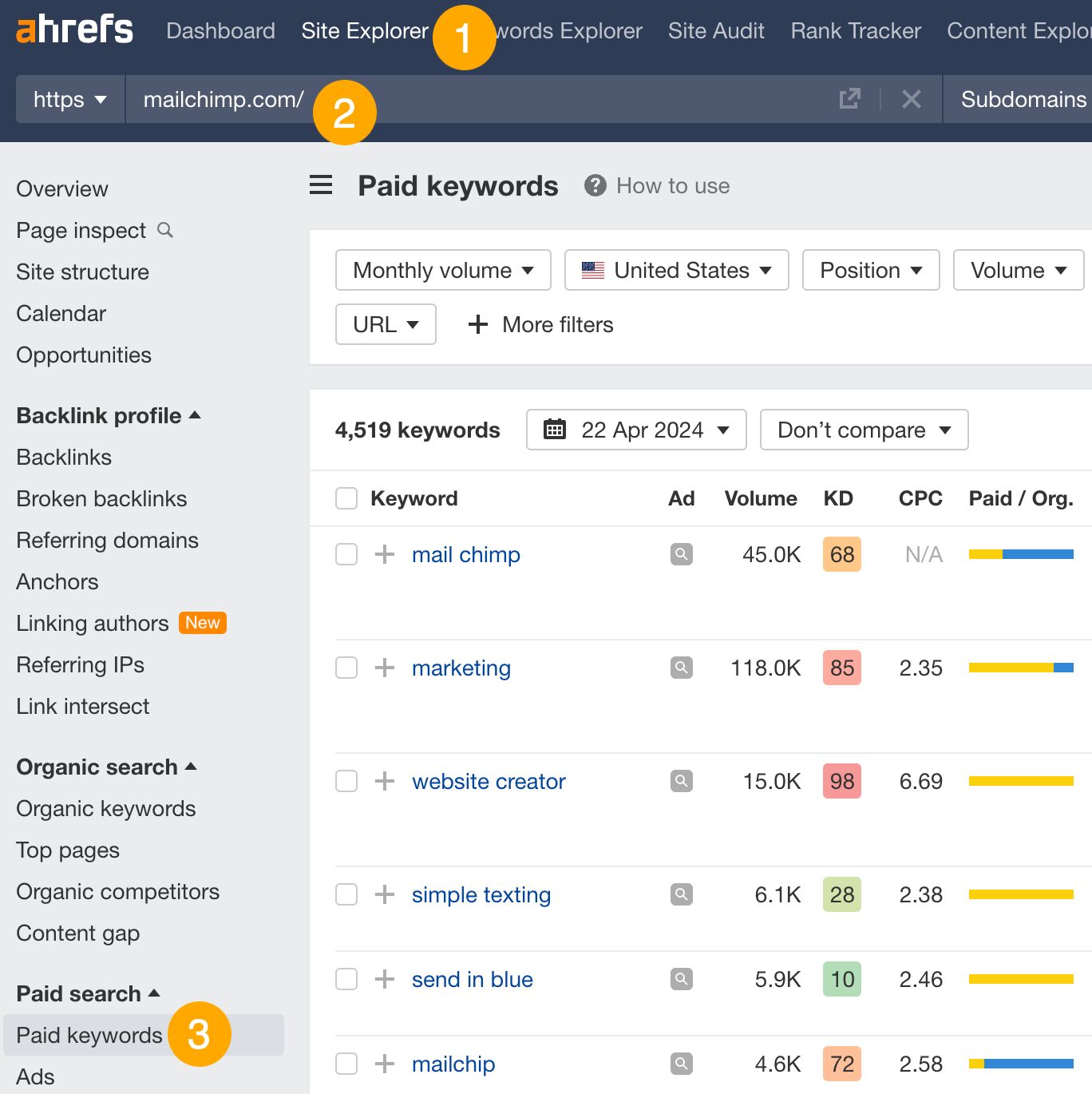

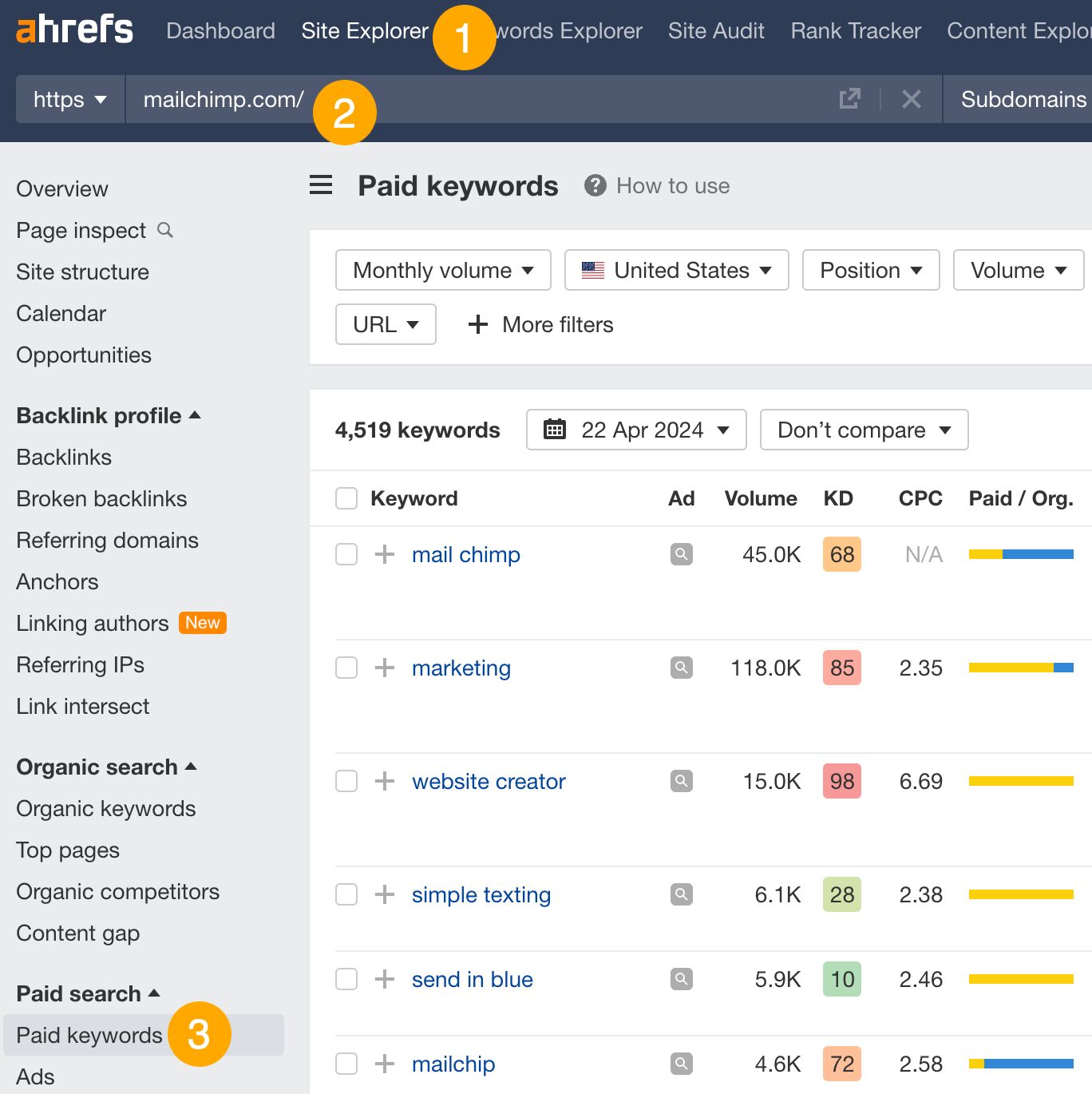
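Conceptually, both views of the report are set operations on keyword lists. The sketch below assumes you already have each site's ranking keywords as plain lists. The function name, the `require_all` flag, and the sample domains are hypothetical and do not describe how Ahrefs computes the report.

```python
def keyword_gap(own_keywords, competitor_keywords, require_all=False):
    """Keywords competitors rank for that you do not.

    `competitor_keywords` maps a competitor name to its keyword list.
    With require_all=False, a keyword counts if at least one competitor
    ranks for it; with require_all=True, every competitor must rank for it.
    """
    keyword_sets = [set(words) for words in competitor_keywords.values()]
    if not keyword_sets:
        return set()
    if require_all:
        pool = set.intersection(*keyword_sets)
    else:
        pool = set.union(*keyword_sets)
    return pool - set(own_keywords)


# Made-up keyword lists for illustration.
mine = ["email templates"]
rivals = {
    "rival-a.example": ["email templates", "newsletter ideas", "drip campaign"],
    "rival-b.example": ["newsletter ideas", "drip campaign"],
    "rival-c.example": ["drip campaign", "email automation"],
}
any_gap = keyword_gap(mine, rivals)
all_gap = keyword_gap(mine, rivals, require_all=True)
```

The "at least one competitor" view is a union minus your own keywords, and the "all competitors" view is an intersection minus your own keywords, which is why the second list is always the shorter of the two.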

- Go to Ahrefs’ Site Explorer

- Enter your competitor’s domain

- Go to the Paid keywords report

This report shows you the keywords your competitors are targeting via Google Ads.

Since your competitor is paying for traffic from these keywords, it may indicate that they’re profitable for them—and could be for you, too.

You know what keywords your competitors are ranking for or bidding on. But what do you do with them? There are basically three options.

1. Create pages to target these keywords

You can only rank for keywords if you have content about them. So, the most straightforward thing you can do for competitors’ keywords you want to rank for is to create pages to target them.

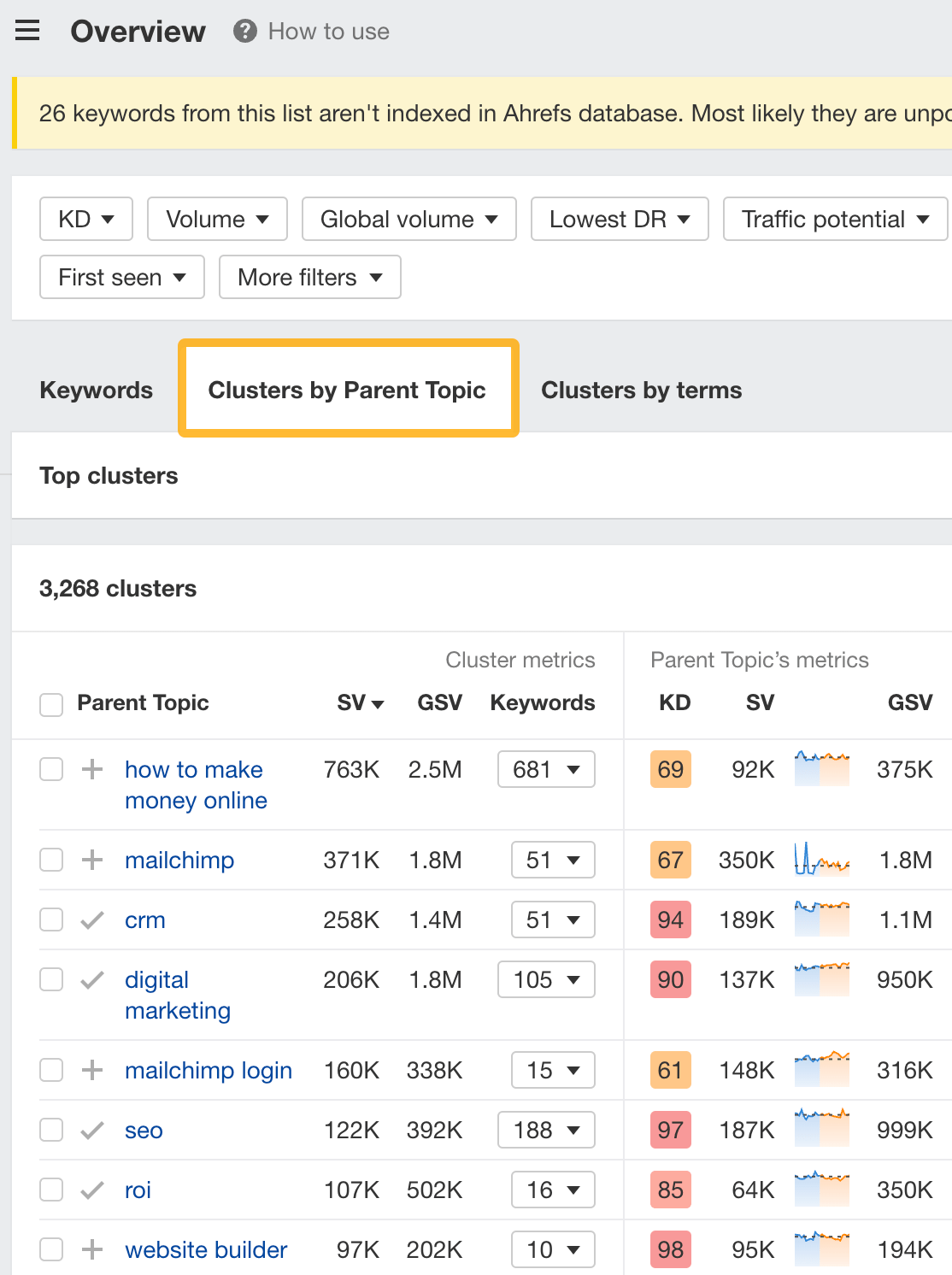

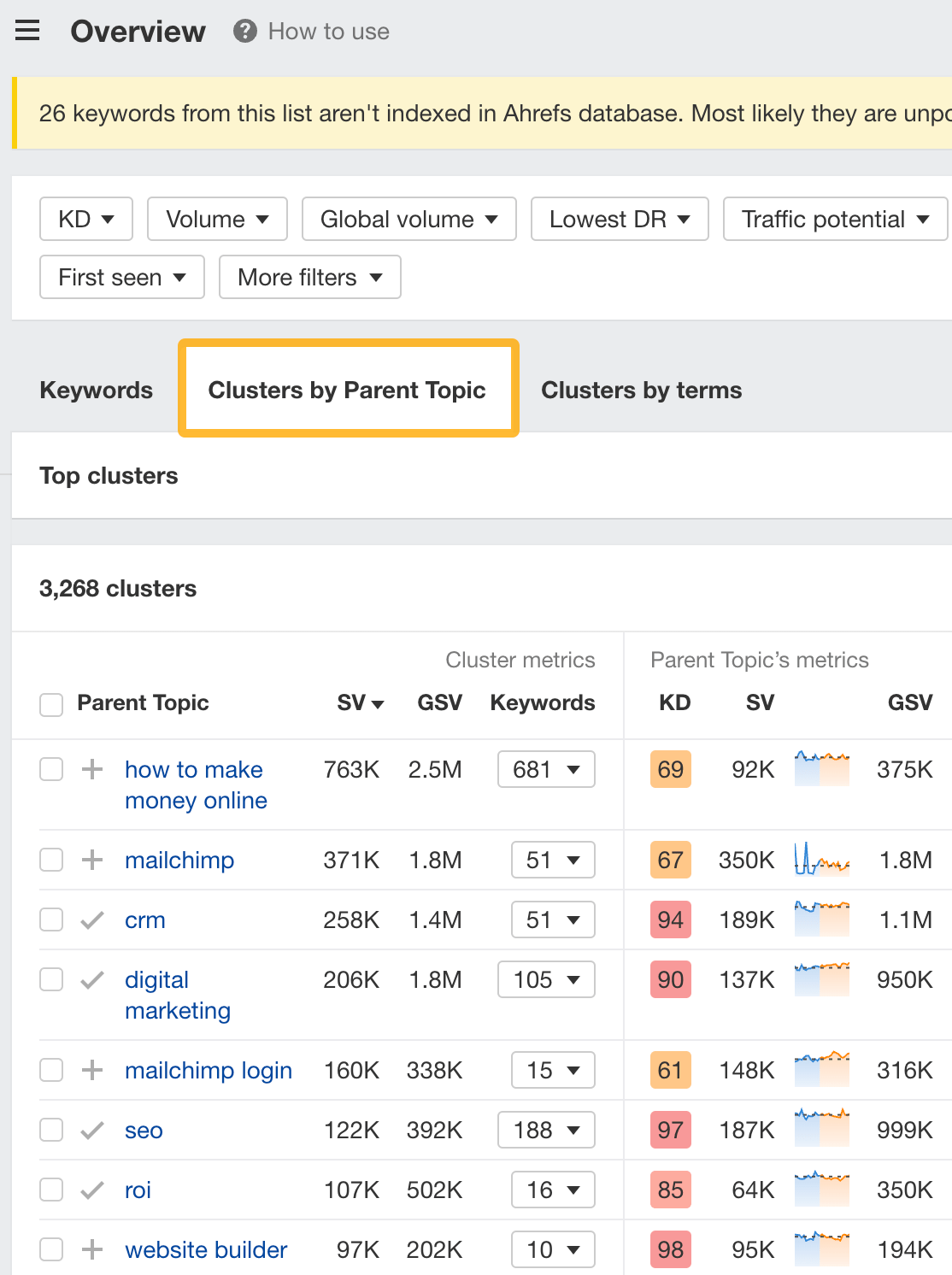

However, before you do this, it’s worth clustering your competitor’s keywords by Parent Topic. This will group keywords that mean the same or similar things so you can target them all with one page.

Here’s how to do that:

- Export your competitor’s keywords, either from the Organic Keywords or Content Gap report

- Paste them into Keywords Explorer

- Click the “Clusters by Parent Topic” tab

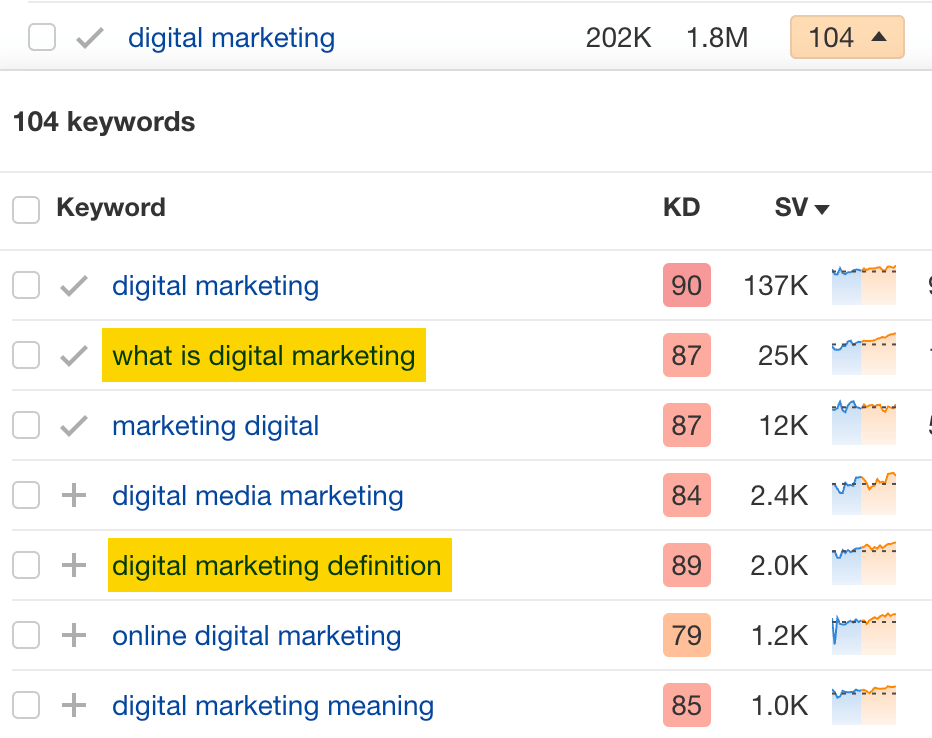

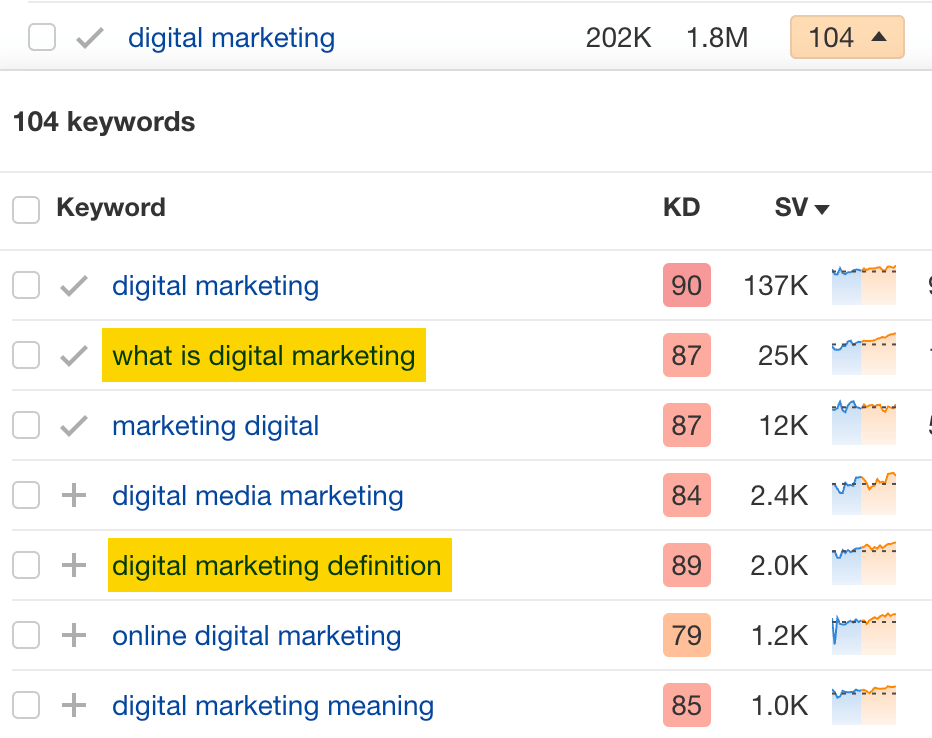

For example, Mailchimp ranks for keywords like “what is digital marketing” and “digital marketing definition.” These and many others get clustered under the Parent Topic of “digital marketing” because people searching for them are all looking for the same thing: a definition of digital marketing. You only need to create one page to potentially rank for all these keywords.
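If you work with exported data, the grouping step amounts to bucketing keywords by their topic label. The sketch below assumes each row carries a hypothetical `parent_topic` field that has already been assigned. It does not attempt to reproduce how the tool decides which Parent Topic a keyword belongs to.

```python
from collections import defaultdict


def cluster_by_parent_topic(rows):
    """Group keywords under their (already assigned) parent topic.

    `rows` is a list of dicts with hypothetical keys
    "keyword" and "parent_topic".
    """
    clusters = defaultdict(list)
    for row in rows:
        clusters[row["parent_topic"]].append(row["keyword"])
    return dict(clusters)


# Made-up rows for illustration.
exported = [
    {"keyword": "what is digital marketing", "parent_topic": "digital marketing"},
    {"keyword": "digital marketing definition", "parent_topic": "digital marketing"},
    {"keyword": "press release example", "parent_topic": "press release template"},
]
clusters = cluster_by_parent_topic(exported)
```

Each key in `clusters` is then a candidate for a single page that targets every keyword listed under it.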

2. Optimize existing content by filling subtopics

You don’t always need to create new content to rank for competitors’ keywords. Sometimes, you can optimize the content you already have to rank for them.

How do you know which keywords you can do this for? Try this:

- Export your competitor’s keywords

- Paste them into Keywords Explorer

- Click the “Clusters by Parent Topic” tab

- Look for Parent Topics you already have content about

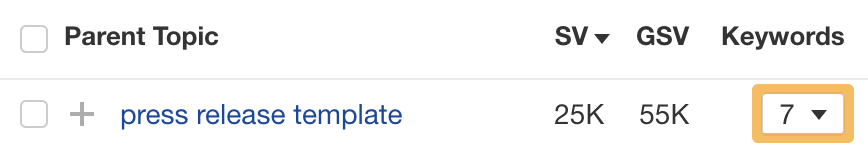

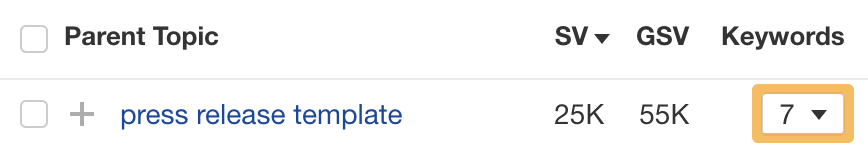

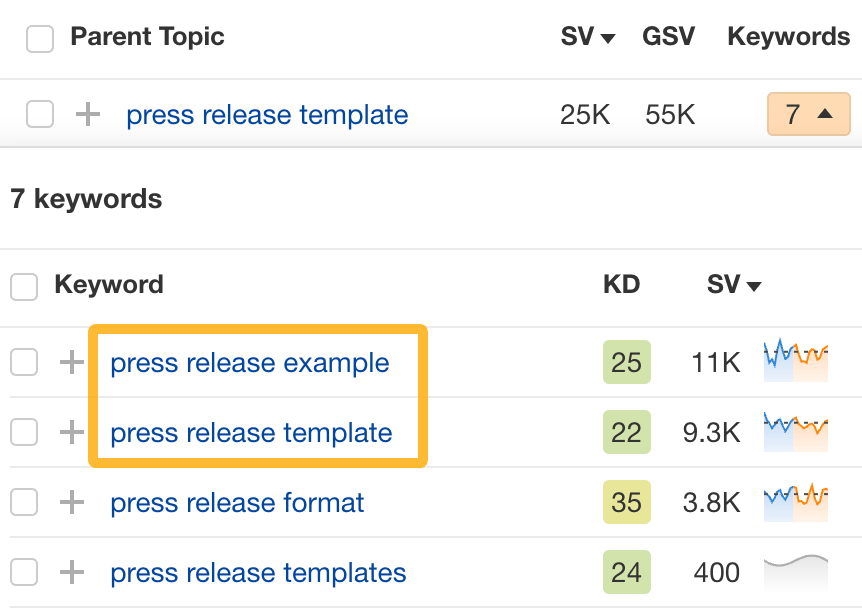

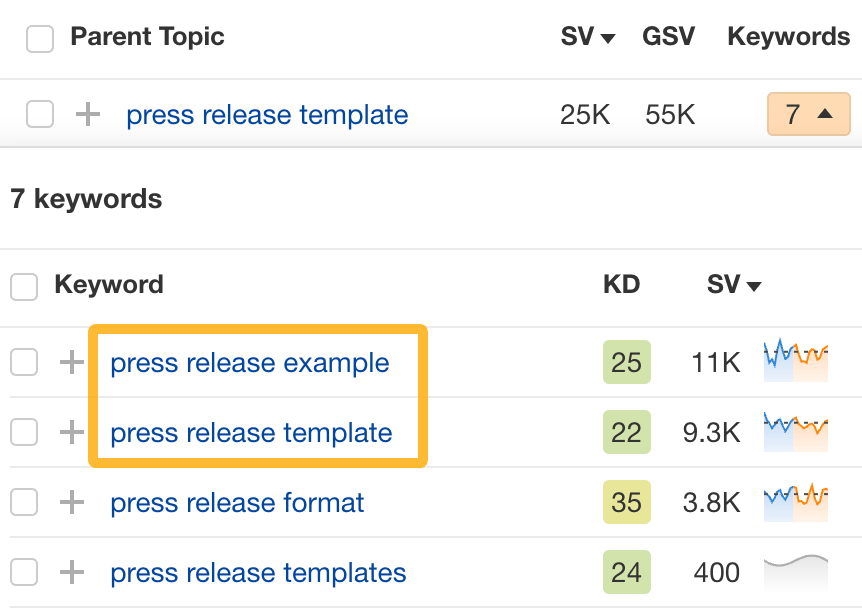

For example, if we analyze our competitor, we can see that seven keywords they rank for fall under the Parent Topic of “press release template.”

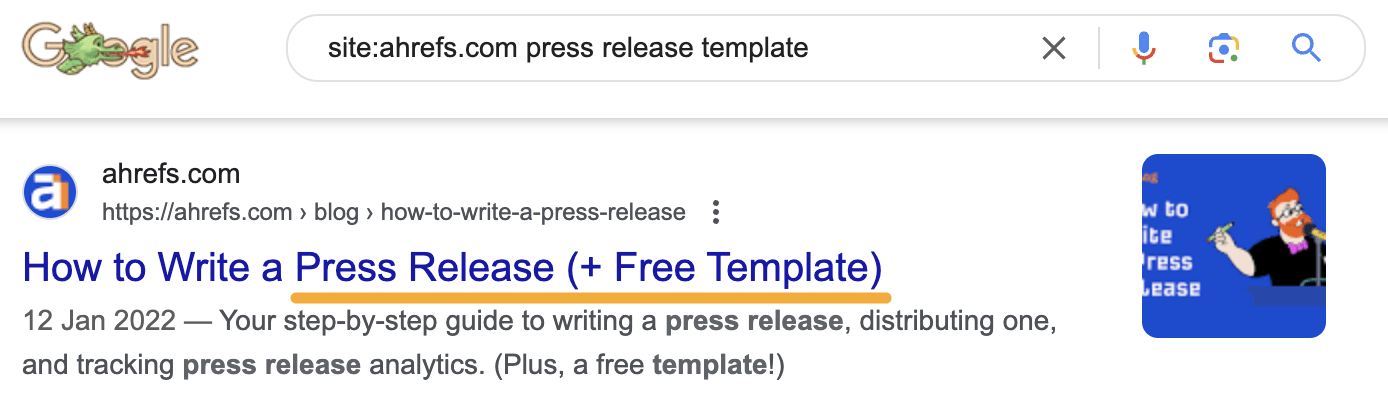

If we search our site, we see that we already have a page about this topic.

If we click the caret and check the keywords in the cluster, we see keywords like “press release example” and “press release format.”

To rank for the keywords in the cluster, we can probably optimize the page we already have by adding sections about the subtopics of “press release examples” and “press release format.”

3. Target these keywords with Google Ads

Paid keywords are the simplest—look through the report and see if there are any relevant keywords you might want to target, too.

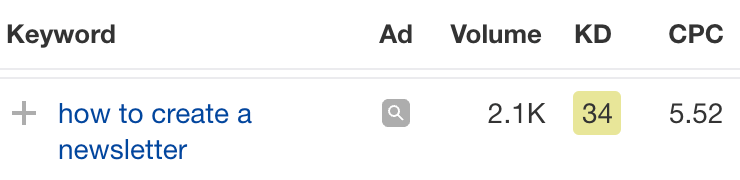

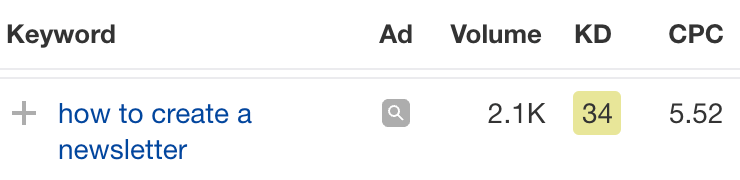

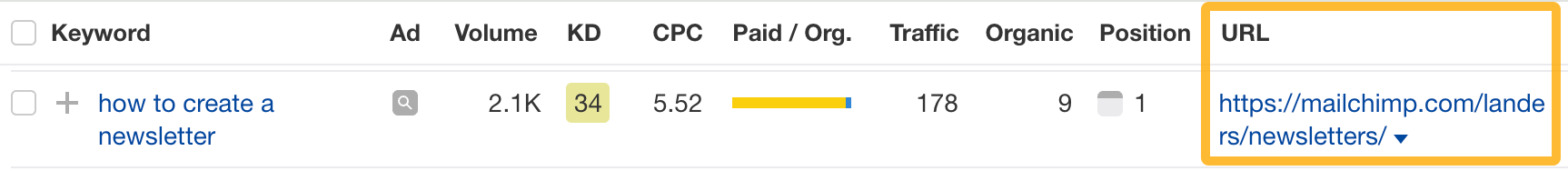

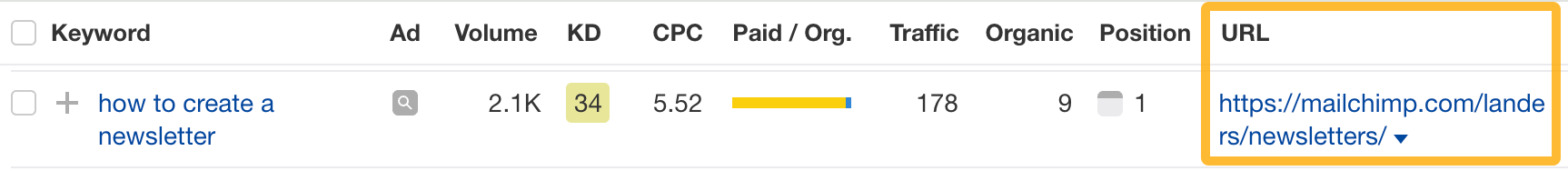

For example, Mailchimp is bidding for the keyword “how to create a newsletter.”

If you’re ConvertKit, you may also want to target this keyword since it’s relevant.

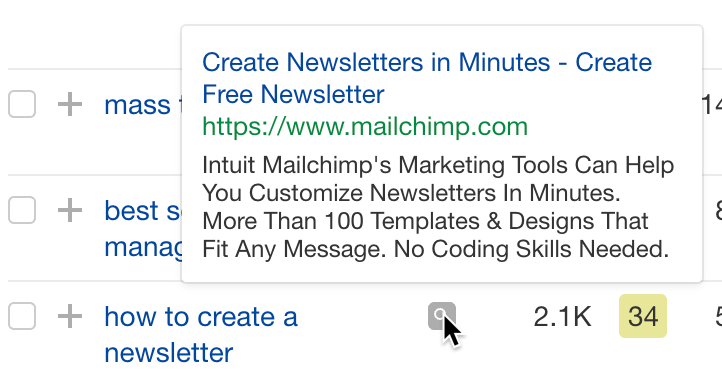

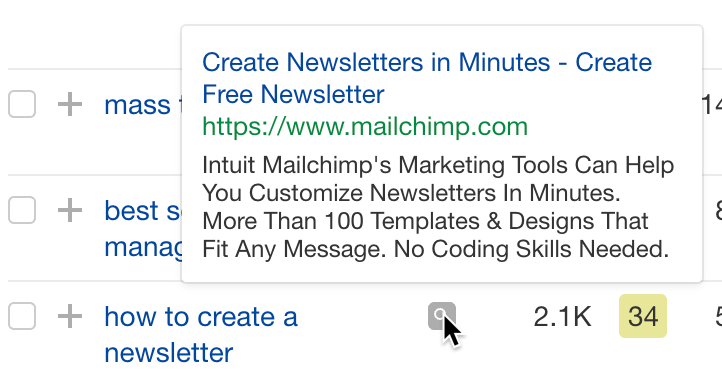

If you decide to target the same keyword via Google Ads, you can hover over the magnifying glass to see the ads your competitor is using.

You can also see the landing page your competitor directs ad traffic to under the URL column.



Google Confirms Links Are Not That Important

Google’s Gary Illyes confirmed at a recent search marketing conference that Google needs very few links, adding to the growing body of evidence that publishers need to focus on other factors. Gary tweeted confirmation that he did indeed say those words.

Background Of Links For Ranking

In the late 1990s, links were discovered to be a good signal for search engines to use for validating how authoritative a website is. Soon after, Google discovered that anchor text could be used to provide semantic signals about what a webpage was about.

One of the most important research papers was Authoritative Sources in a Hyperlinked Environment by Jon M. Kleinberg, published around 1998 (link to research paper at the end of the article). The main observation of this research paper is that there were too many web pages and no objective way to filter search results for quality in order to rank web pages for a subjective idea of relevance.

The author of the research paper discovered that links could be used as an objective filter for authoritativeness.

Kleinberg wrote:

“To provide effective search methods under these conditions, one needs a way to filter, from among a huge collection of relevant pages, a small set of the most “authoritative” or ‘definitive’ ones.”

This is the most influential research paper on links because it kick-started more research on ways to use links not just as an authority metric but also as a subjective metric for relevance.

Objective is something factual. Subjective is something that’s closer to an opinion. The founders of Google discovered how to use the subjective opinions of the Internet as a relevance metric for what to rank in the search results.

What Larry Page and Sergey Brin discovered and shared in their research paper (The Anatomy of a Large-Scale Hypertextual Web Search Engine – link at end of this article) was that it was possible to harness the power of anchor text to determine the subjective opinion of relevance from actual humans. It was essentially crowdsourcing the opinions of millions of websites, expressed through the link structure between webpages.

What Did Gary Illyes Say About Links In 2024?

At a recent search conference in Bulgaria, Google’s Gary Illyes made a comment about how Google doesn’t really need that many links and how Google has made links less important.

Patrick Stox tweeted about what he heard at the search conference:

“‘We need very few links to rank pages… Over the years we’ve made links less important.’ @methode #serpconf2024”

Google’s Gary Illyes tweeted a confirmation of that statement:

“I shouldn’t have said that… I definitely shouldn’t have said that”

Why Links Matter Less

The initial state of anchor text when Google first used links for ranking purposes was absolutely non-spammy, which is why it was so useful. Hyperlinks were primarily used as a way to send traffic from one website to another website.

But by 2004 or 2005, Google was using statistical analysis to detect manipulated links. Around 2004, “powered-by” links in website footers stopped passing anchor text value. By 2006, links close to the word “advertising” stopped passing link value, and links from directories stopped passing ranking value. By 2012, Google deployed a massive link algorithm called Penguin that destroyed the rankings of likely millions of websites, many of which were using guest posting.

The link signal eventually became so bad that Google decided in 2019 to selectively use nofollow links for ranking purposes. Google’s Gary Illyes confirmed that the change to nofollow was made because of the link signal.

Google Explicitly Confirms That Links Matter Less

In 2023, Google’s Gary Illyes shared at PubCon Austin that links were not even in the top three ranking factors. Then in March 2024, coinciding with the March 2024 Core Algorithm Update, Google updated its spam policies documentation to downplay the importance of links for ranking purposes.

The documentation previously said:

“Google uses links as an important factor in determining the relevancy of web pages.”

The passage that mentioned links was updated to remove the word “important.”

Links are now listed as just another factor:

“Google uses links as a factor in determining the relevancy of web pages.”

At the beginning of April, Google’s John Mueller advised that there are more useful SEO activities to engage in than links.

Mueller explained:

“There are more important things for websites nowadays, and over-focusing on links will often result in you wasting your time doing things that don’t make your website better overall”

Finally, Gary Illyes explicitly said that Google needs very few links to rank webpages and confirmed it.

I shouldn’t have said that… I definitely shouldn’t have said that

— Gary 鯨理/경리 Illyes (so official, trust me) (@methode) April 19, 2024

Why Google Doesn’t Need Links

The reason why Google doesn’t need many links is likely the extent of AI and natural language understanding that Google uses in its algorithms. Google must be highly confident in its algorithm to be able to explicitly say that it doesn’t need them.

Way back when Google implemented nofollow into the algorithm, there were many link builders selling comment spam links who continued to lie that comment spam still worked. As someone who started link building at the very beginning of modern SEO (I was the moderator of the link building forum at the #1 SEO forum of that time), I can say with confidence that links stopped playing much of a role in rankings several years ago, which is why I stopped about five or six years ago.

Read the research papers

Authoritative Sources in a Hyperlinked Environment – Jon M. Kleinberg (PDF)

The Anatomy of a Large-Scale Hypertextual Web Search Engine

Featured Image by Shutterstock/RYO Alexandre
