SEO

Seven Free Open Source GPT Models Released

Silicon Valley AI company Cerebras released seven open source GPT models to provide an alternative to the tightly controlled and proprietary systems available today.

The royalty-free, open source GPT models, including their weights and training recipe, have been released under the highly permissive Apache 2.0 license by Cerebras, a Silicon Valley-based AI infrastructure company.

To a certain extent, the seven GPT models are a proof of concept for the Cerebras Andromeda AI supercomputer.

The Cerebras infrastructure allows its customers, such as Jasper AI Copywriter, to quickly train their own custom language models.

A Cerebras blog post about the hardware technology noted:

“We trained all Cerebras-GPT models on a 16x CS-2 Cerebras Wafer-Scale Cluster called Andromeda.

The cluster enabled all experiments to be completed quickly, without the traditional distributed systems engineering and model parallel tuning needed on GPU clusters.

Most importantly, it enabled our researchers to focus on the design of the ML instead of the distributed system. We believe the capability to easily train large models is a key enabler for the broad community, so we have made the Cerebras Wafer-Scale Cluster available on the cloud through the Cerebras AI Model Studio.”

Cerebras GPT Models and Transparency

Cerebras cites the concentration of AI technology ownership in just a few companies as a reason for creating the seven open source GPT models.

OpenAI, Meta and DeepMind keep a large amount of information about their systems private and tightly controlled, which limits innovation to whatever the three corporations decide others can do with their data.

Is a closed-source system best for innovation in AI? Or is open source the future?

Cerebras writes:

“For LLMs to be an open and accessible technology, we believe it’s important to have access to state-of-the-art models that are open, reproducible, and royalty free for both research and commercial applications.

To that end, we have trained a family of transformer models using the latest techniques and open datasets that we call Cerebras-GPT.

These models are the first family of GPT models trained using the Chinchilla formula and released via the Apache 2.0 license.”

These seven models have been released on Hugging Face and GitHub to encourage more research through open access to AI technology.

These models were trained with Cerebras’ Andromeda AI supercomputer, a process that only took weeks to accomplish.

Cerebras-GPT is fully open and transparent, unlike the latest models from OpenAI (GPT-4), DeepMind (Chinchilla) and Meta (OPT).

OpenAI and DeepMind do not offer licenses to use GPT-4 or Chinchilla, and Meta's OPT is available only under a non-commercial license.

OpenAI has disclosed nothing about GPT-4's training data. Did they use Common Crawl data? Did they scrape the Internet and create their own dataset?

OpenAI is keeping this information (and more) secret, which is in contrast to the Cerebras-GPT approach that is fully transparent.

The following is all open and transparent:

- Model architecture

- Training data

- Model weights

- Checkpoints

- Compute-optimal training status (yes)

- License to use: Apache 2.0 License

The seven models come in 111M, 256M, 590M, 1.3B, 2.7B, 6.7B, and 13B parameter sizes.

Cerebras announced:

“In a first among AI hardware companies, Cerebras researchers trained, on the Andromeda AI supercomputer, a series of seven GPT models with 111M, 256M, 590M, 1.3B, 2.7B, 6.7B, and 13B parameters.

Typically a multi-month undertaking, this work was completed in a few weeks thanks to the incredible speed of the Cerebras CS-2 systems that make up Andromeda, and the ability of Cerebras’ weight streaming architecture to eliminate the pain of distributed compute.

These results demonstrate that Cerebras’ systems can train the largest and most complex AI workloads today.

This is the first time a suite of GPT models, trained using state-of-the-art training efficiency techniques, has been made public.

These models are trained to the highest accuracy for a given compute budget (i.e. training efficient using the Chinchilla recipe) so they have lower training time, lower training cost, and use less energy than any existing public models.”
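To make "compute-optimal" a little more concrete: the Chinchilla work is commonly summarized as a rule of thumb of roughly 20 training tokens per model parameter, and training compute for dense transformers is often approximated as C ≈ 6·N·D FLOPs for N parameters and D tokens. The sketch below applies those two general approximations to the seven model sizes listed above. The 20:1 ratio and the 6·N·D formula are assumptions drawn from that rule of thumb, not figures taken from the Cerebras announcement.

```python
# Rough Chinchilla-style budget sketch (assumptions, not Cerebras' published numbers):
#   training tokens ≈ 20 × parameters
#   training FLOPs  ≈ 6 × parameters × tokens
TOKENS_PER_PARAM = 20  # commonly cited Chinchilla rule of thumb (assumption)

# Parameter counts as listed in the announcement
MODEL_SIZES = {
    "111M": 111e6,
    "256M": 256e6,
    "590M": 590e6,
    "1.3B": 1.3e9,
    "2.7B": 2.7e9,
    "6.7B": 6.7e9,
    "13B": 13e9,
}


def chinchilla_budget(params: float, tokens_per_param: float = TOKENS_PER_PARAM) -> tuple[float, float]:
    """Return (approximate training tokens, approximate training FLOPs) for a model size."""
    tokens = tokens_per_param * params
    flops = 6 * params * tokens  # standard 6·N·D approximation for dense transformers
    return tokens, flops


if __name__ == "__main__":
    for name, params in MODEL_SIZES.items():
        tokens, flops = chinchilla_budget(params)
        print(f"{name:>5}: ~{tokens / 1e9:6.1f}B tokens, ~{flops:.2e} FLOPs")
```

Under these assumptions the 13B model works out to roughly 260B training tokens. The point of the recipe is that each model gets "just enough" data for its size rather than being trained far past (or short of) the compute-optimal point.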

Open Source AI

The Mozilla Foundation, maker of the open source Firefox browser, has started a company called Mozilla.ai to build open source GPT and recommender systems that are trustworthy and respect privacy.

Databricks also recently released an open source GPT clone called Dolly, which aims to democratize "the magic of ChatGPT."

In addition to the seven Cerebras-GPT models, another company, Nomic AI, released GPT4All, an open source GPT that can run on a laptop.

Today we’re releasing GPT4All, an assistant-style chatbot distilled from 430k GPT-3.5-Turbo outputs that you can run on your laptop.

— Nomic AI (@nomic_ai) March 28, 2023

The open source AI movement is at a nascent stage but is gaining momentum.

GPT technology is driving massive changes across industries, and it's possible, maybe inevitable, that open source contributions will change the face of the industries driving that change.

If the open source movement keeps advancing at this pace, we may be on the cusp of witnessing a shift in AI innovation that keeps it from concentrating in the hands of a few corporations.

Read the official announcement:

Cerebras Systems Releases Seven New GPT Models Trained on CS-2 Wafer-Scale Systems

Featured image by Shutterstock/Merkushev Vasiliy


HARO Has Been Dead for a While

I know nothing about the new tool, Connectively. I haven’t tried it. But after trying to use HARO recently, I can’t say I’m surprised or saddened by its death. It’s been a walking corpse for a while.

I used HARO way back in the day to build links. It worked. But a couple of months ago, I experienced the platform from the other side when I decided to try to source some “expert” insights for our posts.

After just a few minutes of work, I got hundreds of pitches:

So, I grabbed a cup of coffee and began to work through them. It didn’t take long before I lost the will to live. Every other pitch seemed like nothing more than lazy AI-generated nonsense from someone who definitely wasn’t an expert.

Here’s one of them:

Seriously. Who writes like that? I’m a self-confessed dullard (any fellow Dull Men’s Club members here?), and even I’m not that dull…

I don’t think I looked through more than 30-40 of the responses. I just couldn’t bring myself to do it. It felt like having a conversation with ChatGPT… and not a very good one!

Despite only reviewing a few dozen of the many pitches I received, one stood out to me:

Believe it or not, this response came from a past client of mine who runs an SEO agency in the UK. Given how knowledgeable and experienced he is (he actually taught me a lot about SEO back in the day when I used to hassle him with questions on Skype), this pitch rang alarm bells for two reasons:

- I truly doubt he spends his time replying to HARO queries

- I know for a fact he’s no fan of Neil Patel (sorry, Neil, but I’m sure you’re aware of your reputation at this point!)

So… I decided to confront him 😉

Here’s what he said:

Shocker.

I pressed him for more details:

I’m getting a really good deal and paying per link rather than the typical £xxxx per month for X number of pitches. […] The responses as you’ve seen are not ideal but that’s a risk I’m prepared to take as realistically I dont have the time to do it myself. He’s not native english, but I have had to have a word with him a few times about clearly using AI. On the low cost ones I don’t care but on authority sites it needs to be more refined.

I think this pretty much sums up the state of HARO before its death. Most “pitches” were just AI answers from SEOs trying to build links for their clients.

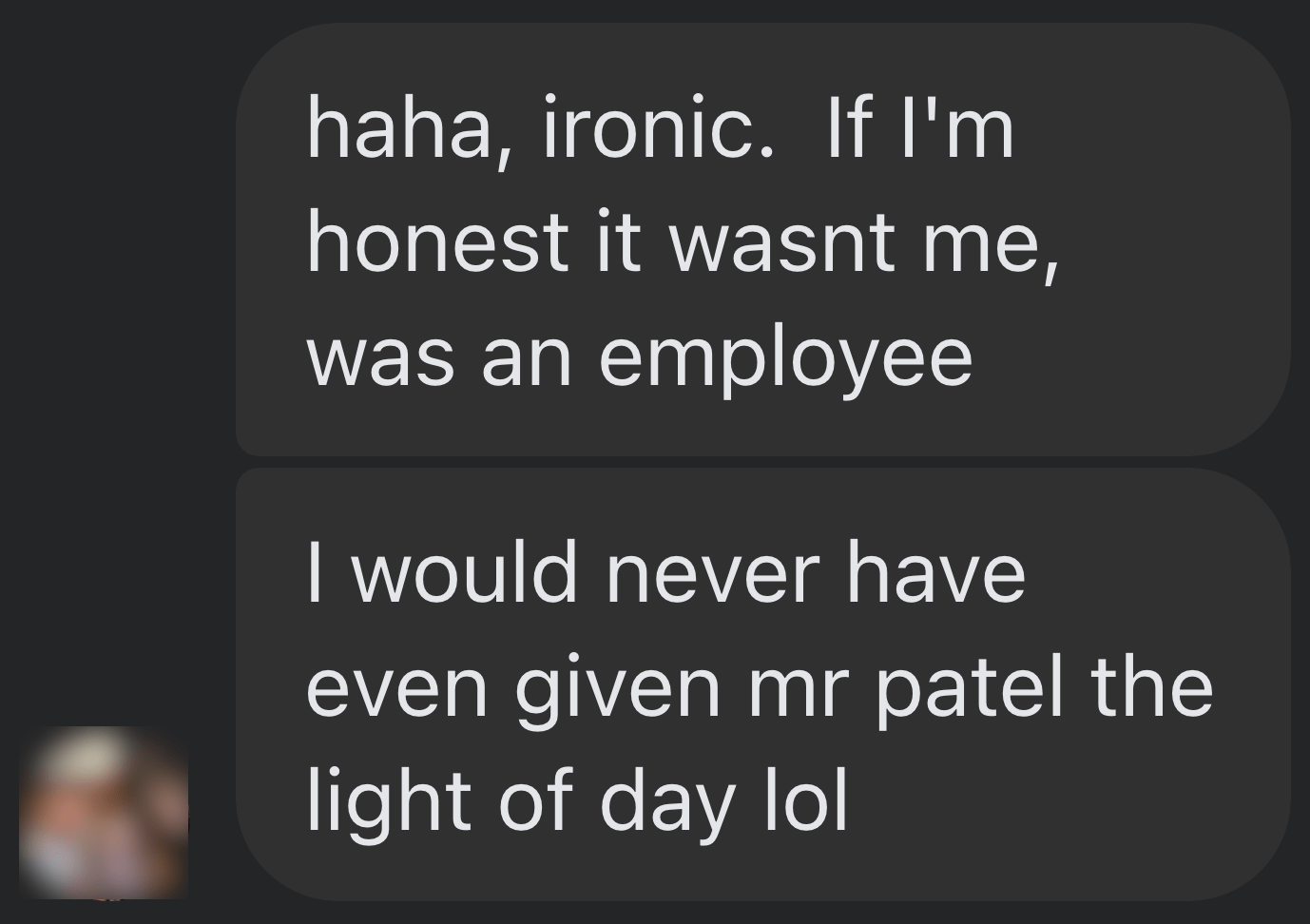

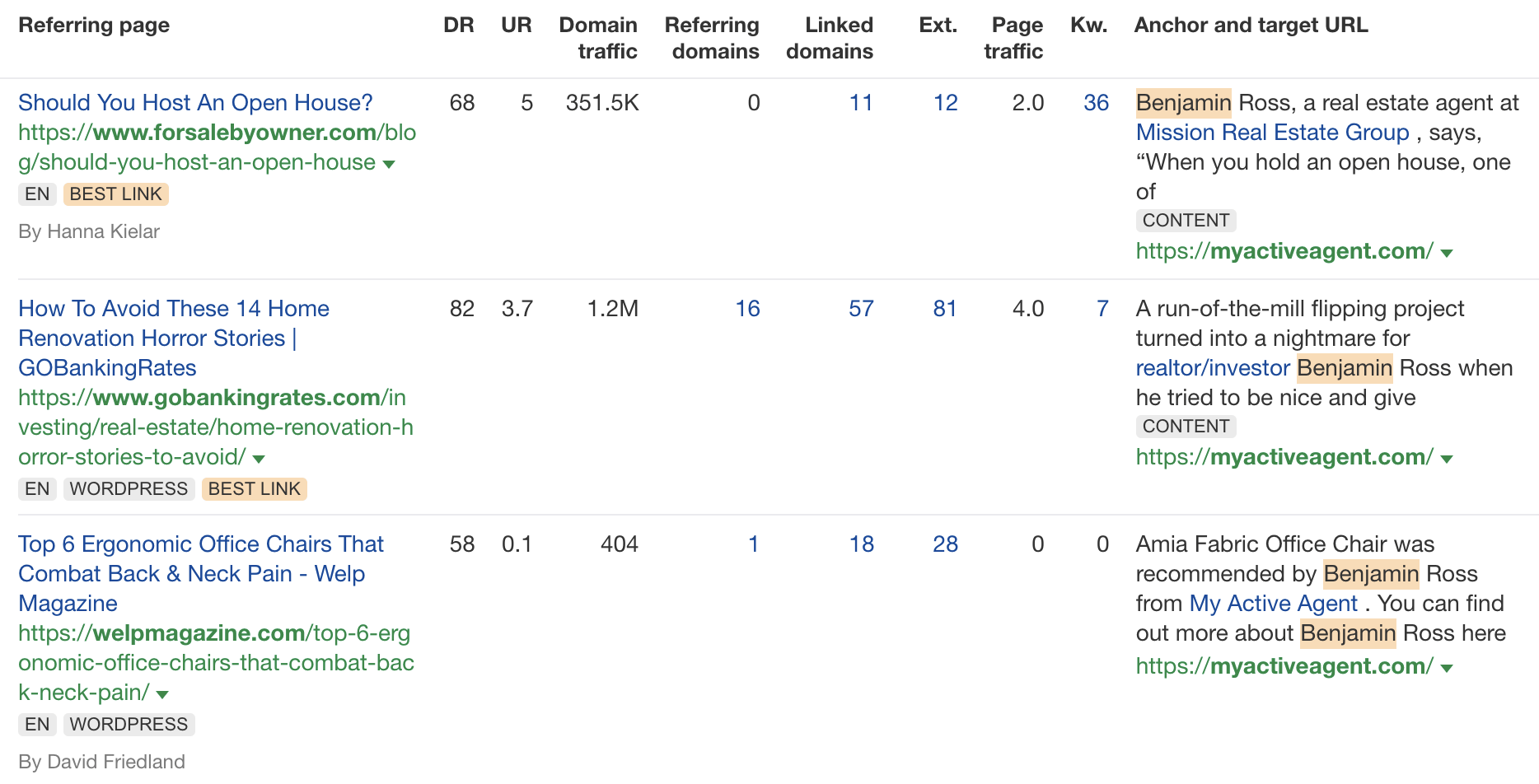

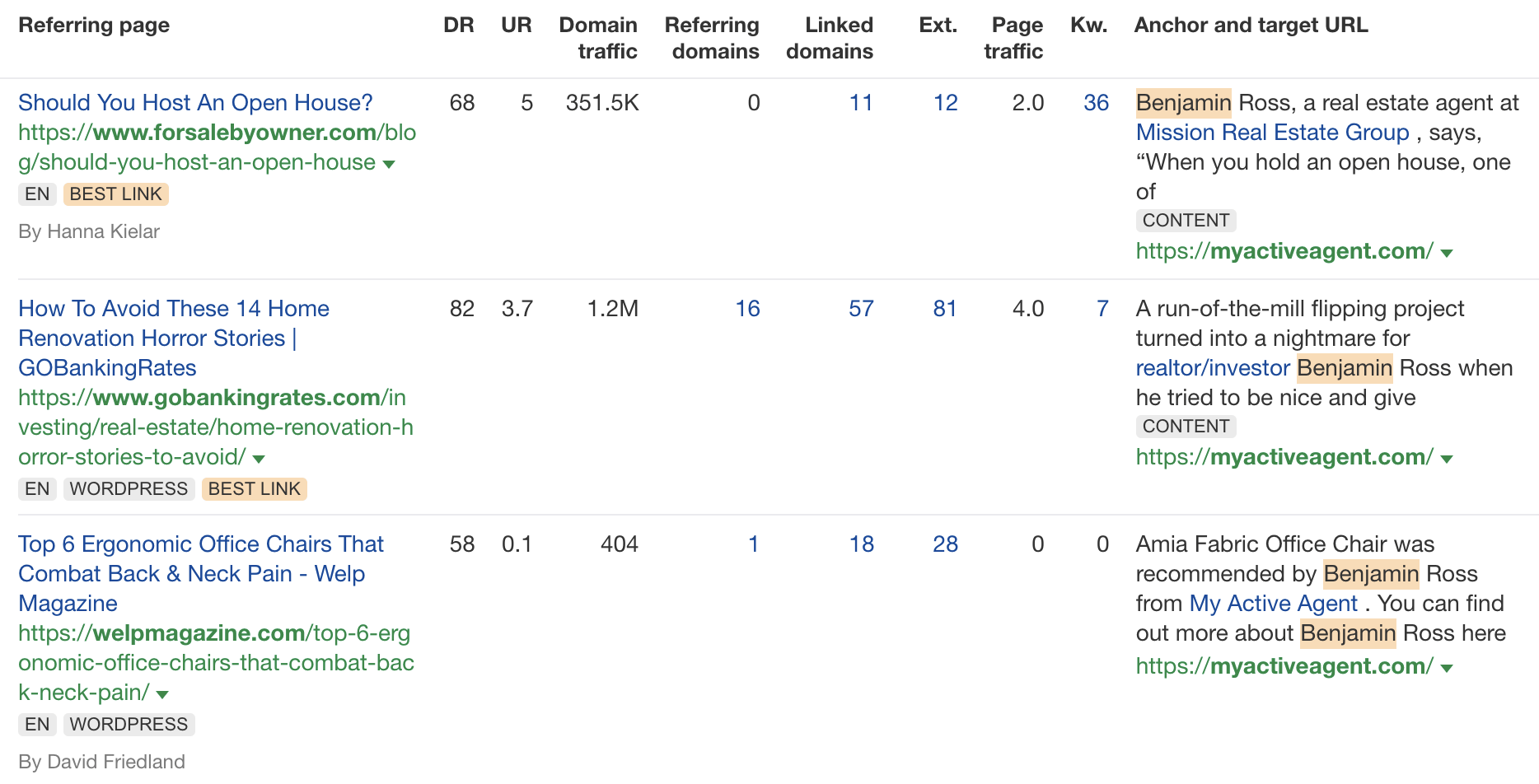

Don’t get me wrong. I’m not throwing shade here. I know that good links are hard to come by, so you have to do what works. And the reality is that HARO did work. Just look at the example below. You can tell from the anchor and surrounding text in Ahrefs that these links were almost certainly built with HARO:

But this was the problem. HARO worked so well back in the day that it was only a matter of time before spammers and the #scale crew ruined it for everyone. That’s what happened, and now HARO is no more. So…

If you’re a link builder, I think it’s time to admit that HARO link building is dead and move on.

No tactic works well forever. It’s the law of sh**ty clickthroughs. This is why you don’t see SEOs having huge success with tactics like broken link building anymore. They’ve moved on to more innovative tactics or, dare I say it, are just buying links.

Sidenote.

Talking of buying links, here’s something to ponder: if Connectively charges for pitches, are links built through those pitches technically paid? If so, do they violate Google’s spam policies? It’s a murky old world this SEO lark, eh?

If you’re a journalist, Connectively might be worth a shot. But with experts being charged for pitches, you probably won’t get as many responses. That might be a good thing. You might get less spam. Or you might just get spammed by SEOs with deep pockets. The jury’s out for now.

My advice? Look for alternative methods like finding and reaching out to experts directly. You can easily use tools like Content Explorer to find folks who’ve written lots of content about the topic and are likely to be experts.

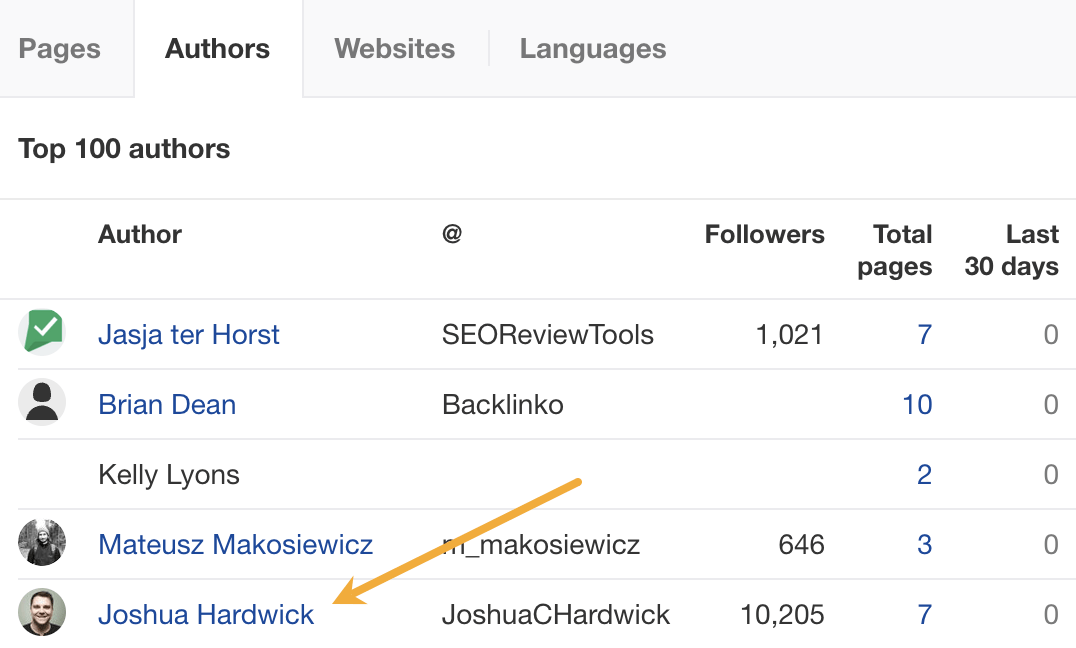

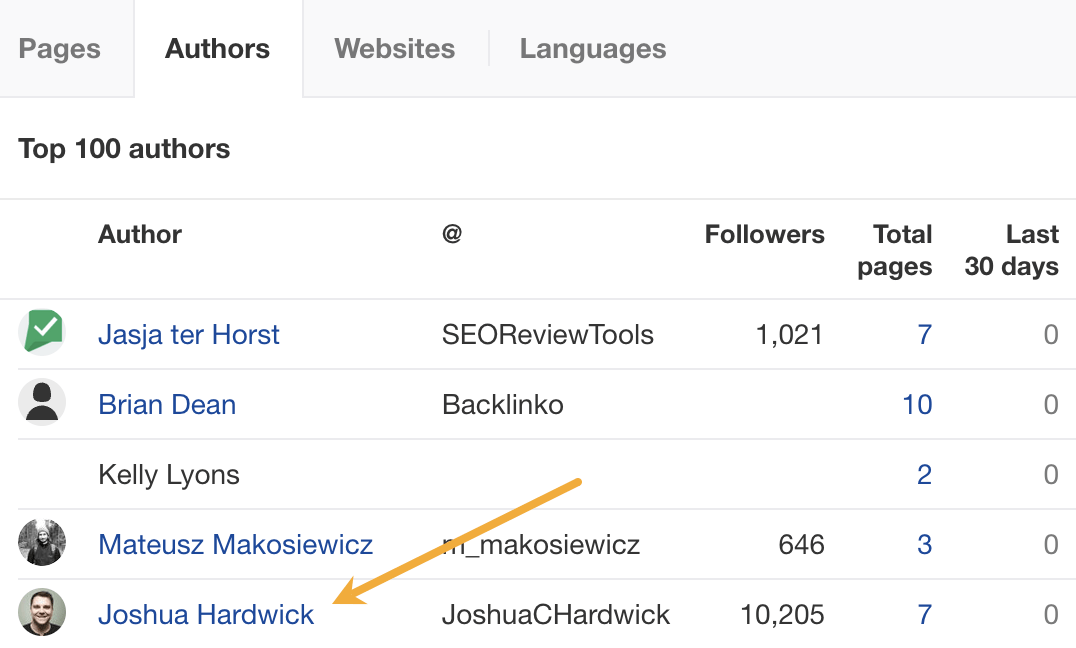

For example, if you look for content with “backlinks” in the title and go to the Authors tab, you might see a familiar name. 😉

I don’t know if I’d call myself an expert, but I’d be happy to give you a quote if you reached out on social media or emailed me (here’s how to find my email address).

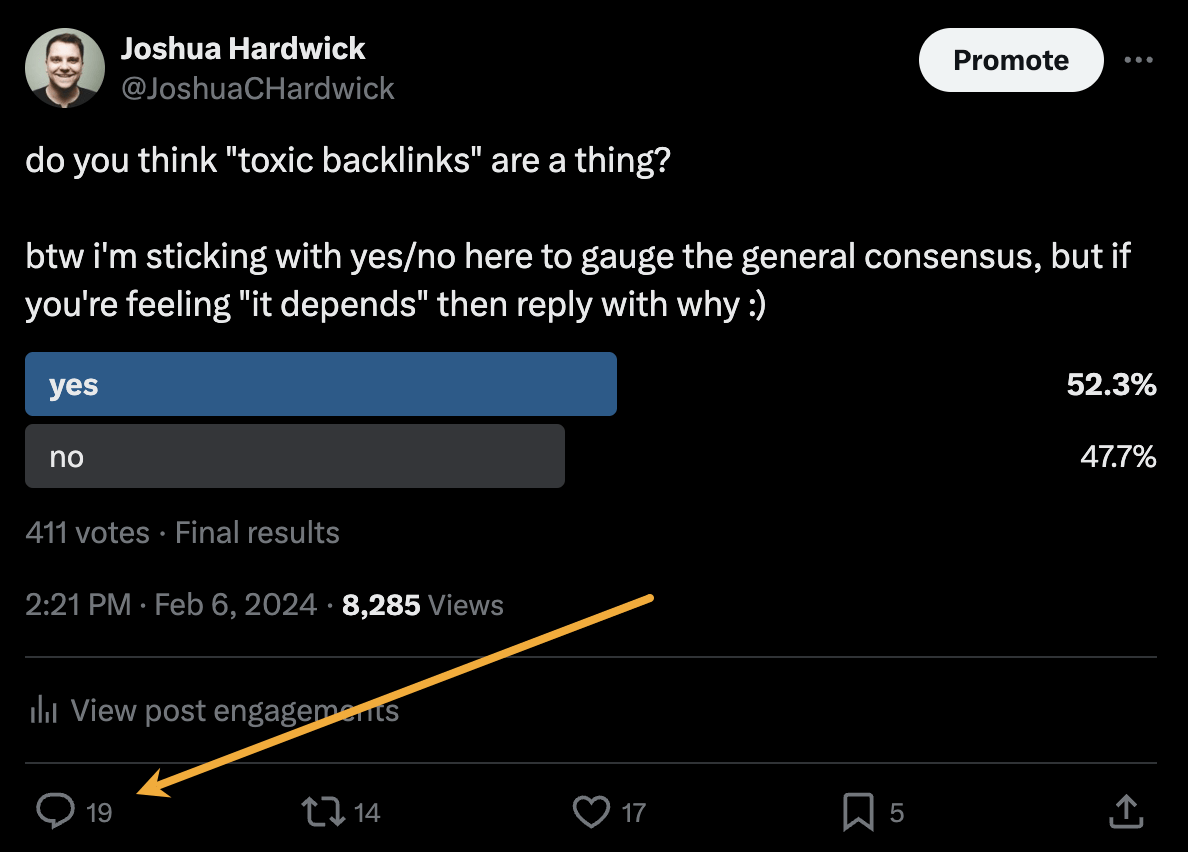

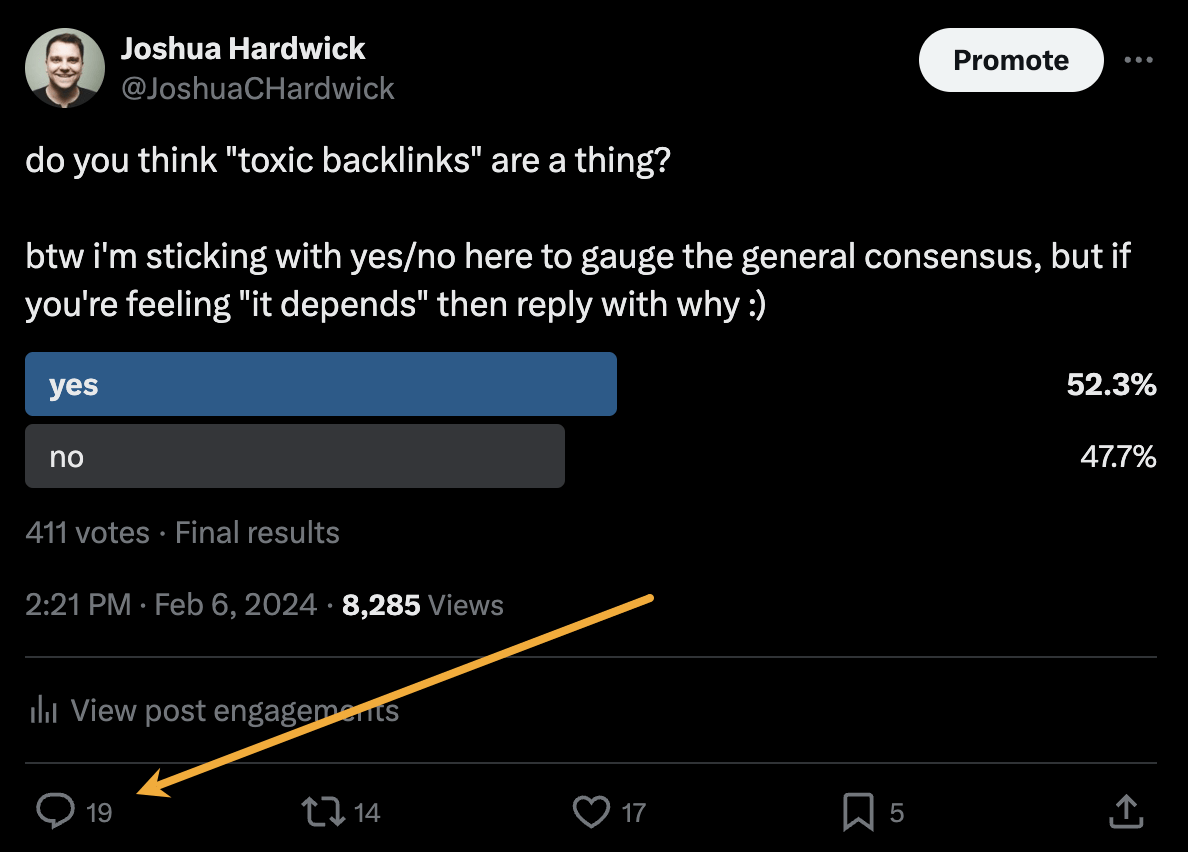

Alternatively, you can bait your audience into giving you their insights on social media. I did this recently with a poll on X and included many of the responses in my guide to toxic backlinks.

Either of these options is quicker than using HARO because you don’t have to sift through hundreds of responses looking for a needle in a haystack. If you disagree with me and still love HARO, feel free to tell me why on X 😉


Google Clarifies Vacation Rental Structured Data

Google’s structured data documentation for vacation rentals was recently updated to call for more specific data, in a change that is more of a clarification than a change in requirements. The change was made without any formal announcement or notation in the developer pages changelog.

Vacation Rentals Structured Data

These specific structured data types make vacation rental information eligible for rich results that are specific to these kinds of rentals. However, they are not available to all websites: vacation rental owners are required to be connected to a Google Technical Account Manager and have access to the Google Hotel Center platform.

VacationRental Structured Data Type Definitions

The primary changes were made to the structured data property type definitions where Google defines what the required and recommended property types are.

The changes to the documentation are in the section governing the Recommended properties and represent a clarification of the recommendations rather than a change in what Google requires.


Address Schema.org property

This is a subtle change, but it’s important because the recommendation now calls for more precise data.

This is what was recommended before:

"streetAddress": "1600 Amphitheatre Pkwy."

This is what it now recommends:

"streetAddress": "1600 Amphitheatre Pkwy, Unit 6E"
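For context, here is a minimal sketch that emits the address portion of a VacationRental JSON-LD block in the newly recommended form, with the unit number folded into streetAddress. The listing name, city, region, postal code, and country values are illustrative placeholders, and the other properties Google requires for VacationRental are deliberately omitted, so this is not a complete, eligible markup block.

```python
import json

# Hypothetical listing data; only the address-related fields are shown.
# Other properties Google requires for VacationRental are omitted here.
listing = {
    "@context": "https://schema.org",
    "@type": "VacationRental",
    "name": "Example rental",  # placeholder name
    "address": {
        "@type": "PostalAddress",
        # Unit/apartment number included in streetAddress, per the updated recommendation
        "streetAddress": "1600 Amphitheatre Pkwy, Unit 6E",
        "addressLocality": "Mountain View",  # illustrative city
        "addressRegion": "CA",               # illustrative state/region
        "postalCode": "94043",               # illustrative postal code
        "addressCountry": "US",              # illustrative country code
    },
}

# Serialize with straight quotes; curly quotes would make the JSON invalid.
json_ld = json.dumps(listing, indent=2)
print(f'<script type="application/ld+json">\n{json_ld}\n</script>')
```

Generating the block with a JSON serializer, rather than typing it by hand, also avoids the curly-quote problem that easily creeps in when snippets are copied from formatted text.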

Address Property Change Description

The most substantial change is to the description of the "address" property, which is now more descriptive and precise about what is recommended.

The description before the change:

PostalAddress

Information about the street address of the listing. Include all properties that apply to your country.

The description after the change:

PostalAddress

The full, physical location of the vacation rental.

Provide the street address, city, state or region, and postal code for the vacation rental. If applicable, provide the unit or apartment number.

Note that P.O. boxes or other mailing-only addresses are not considered full, physical addresses.
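The new description reads almost like a checklist, so a site could run a simple pre-publish check over its listing data. Below is a rough sketch, assuming addresses are stored as schema.org PostalAddress-style dicts. The function name and the P.O. box pattern are hypothetical, and a regex heuristic like this will not catch every mailing-only address format.

```python
import re

# Fields the updated description asks for: street address, city,
# state or region, and postal code (schema.org PostalAddress names).
REQUIRED_FIELDS = ("streetAddress", "addressLocality", "addressRegion", "postalCode")

# Rough, hypothetical heuristic for P.O. boxes; real data may need more patterns.
PO_BOX_PATTERN = re.compile(r"\bP\.?\s*O\.?\s*Box\b|\bPost\s+Office\s+Box\b", re.IGNORECASE)


def address_problems(address: dict) -> list[str]:
    """Return human-readable problems found in a PostalAddress-style dict."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS if not address.get(field)]
    street = address.get("streetAddress") or ""  # tolerate missing or None values
    if PO_BOX_PATTERN.search(street):
        problems.append("streetAddress looks like a P.O. box, not a physical location")
    return problems


good = {
    "streetAddress": "1600 Amphitheatre Pkwy, Unit 6E",
    "addressLocality": "Mountain View",
    "addressRegion": "CA",
    "postalCode": "94043",
}
bad = {"streetAddress": "P.O. Box 123", "postalCode": "94043"}

print(address_problems(good))  # → []
print(address_problems(bad))
```

The check cannot tell whether a unit number "applies" to a given property, so that part of the guidance still needs a human decision.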

The same change is repeated in the section for the address.streetAddress property.

This is what it recommended before:

address.streetAddress Text

The full street address of your vacation listing.

And this is what it recommends now:

address.streetAddress Text

The full street address of your vacation listing, including the unit or apartment number if applicable.

Clarification And Not A Change

Although these updates don’t represent a change in Google’s guidance, they are nonetheless important because they offer clearer guidance with less ambiguity about what is recommended.

Read the updated structured data guidance:

Vacation rental (VacationRental) structured data

Featured Image by Shutterstock/New Africa


Google On Hyphens In Domain Names

Google’s John Mueller answered a question on Reddit about why people don’t use hyphens in domain names and whether there is something concerning about them that the asker was missing.

Domain Names With Hyphens For SEO

I’ve been working online for 25 years, and I remember when using hyphens in domains was something affiliates did for SEO, back when Google was still influenced by keywords in the domain, the URL, and basically anywhere on the webpage. It wasn’t something everyone did; it was mainly popular with some affiliate marketers.

Another reason for choosing domain names with keywords in them was that site visitors tended to convert at a higher rate because the keywords essentially prequalified the site visitor. I know from experience how useful two-keyword domains (and one-word domain names) are for conversions, as long as they don’t have hyphens in them.

Another reason hyphenated domain names fell out of favor is that they look untrustworthy, which can work against conversion rates because trustworthiness is an important factor for conversions.

Lastly, hyphenated domain names look tacky. Why go with tacky when a brandable domain is easier for building trust and conversions?

Domain Name Question Asked On Reddit

This is the question asked on Reddit:

“Why don’t people use a lot of domains with hyphens? Is there something concerning about it? I understand when you tell it out loud people make miss hyphen in search.”

And this is Mueller’s response:

“It used to be that domain names with a lot of hyphens were considered (by users? or by SEOs assuming users would? it’s been a while) to be less serious – since they could imply that you weren’t able to get the domain name with fewer hyphens. Nowadays there are a lot of top-level-domains so it’s less of a thing.

My main recommendation is to pick something for the long run (assuming that’s what you’re aiming for), and not to be overly keyword focused (because life is too short to box yourself into a corner – make good things, course-correct over time, don’t let a domain-name limit what you do online). The web is full of awkward, keyword-focused short-lived low-effort takes made for SEO — make something truly awesome that people will ask for by name. If that takes a hyphen in the name – go for it.”

Pick A Domain Name That Can Grow

Mueller is right about picking a domain name that won’t lock your site into one topic. When a site grows in popularity, the natural growth path is to expand the range of topics the site covers. But that’s hard to do when the domain is locked into one rigid keyword phrase. That’s also one of the downsides of picking a “Best + keyword + reviews” domain: those domains can’t grow bigger, and they look tacky.

That’s why I’ve always recommended brandable domains that are memorable and encourage trust in some way.

Read the post on Reddit:

Read Mueller’s response here.

Featured Image by Shutterstock/Benny Marty
