SEO

What Are Core Web Vitals & How Can You Improve Them?

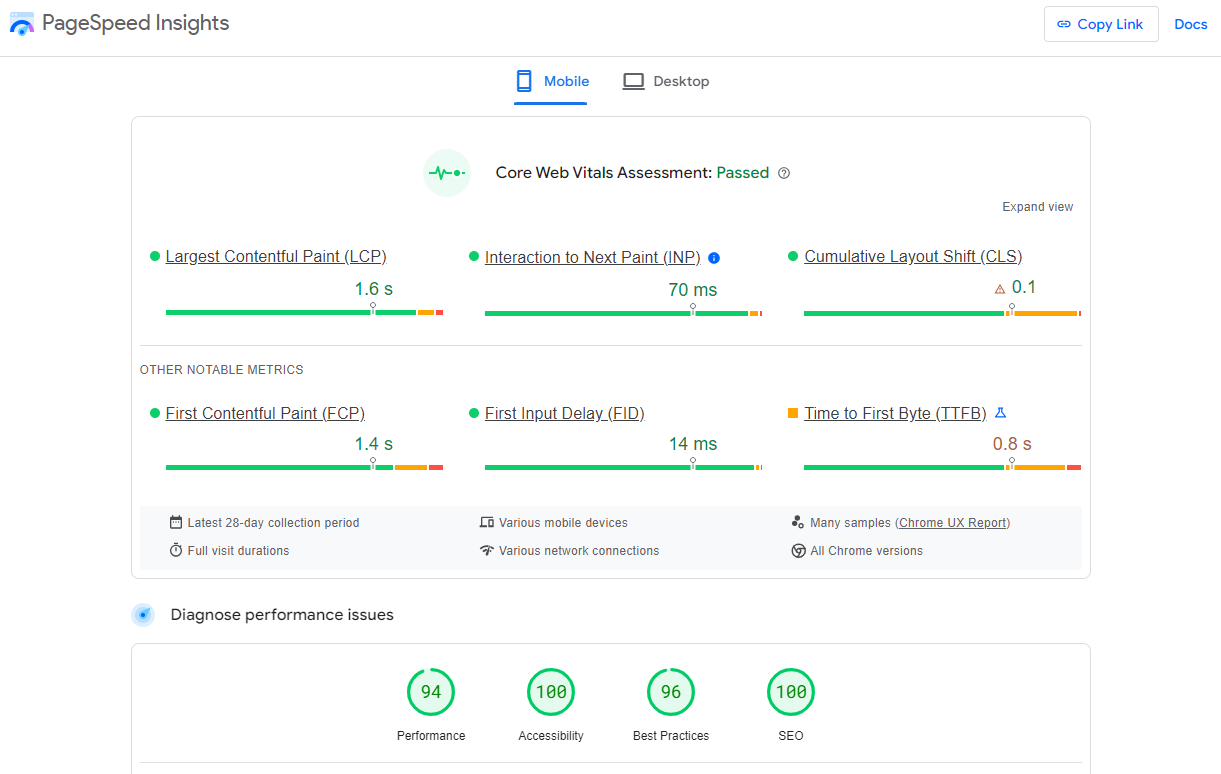

Core Web Vitals are speed metrics that are part of Google’s Page Experience signals used to measure user experience. The metrics measure visual load with Largest Contentful Paint (LCP), visual stability with Cumulative Layout Shift (CLS), and interactivity with First Input Delay (FID).

Mobile page experience and the included Core Web Vital metrics have officially been used for ranking pages since May 2021. Desktop signals have also been used as of February 2022.

The easiest way to see the metrics for your site is with the Core Web Vitals report in Google Search Console. With the report, you can easily see if your pages are categorized as “poor URLs,” “URLs need improvement,” or “good URLs.”

The thresholds for each category are as follows:

| Metric | Good | Needs improvement | Poor |
|---|---|---|---|
| LCP | <=2.5s | <=4s | >4s |
| FID | <=100ms | <=300ms | >300ms |
| CLS | <=0.1 | <=0.25 | >0.25 |
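To make the thresholds concrete, here is a minimal sketch that rates a single metric value against the table above. The function and constant names are made up for illustration; LCP and FID are assumed to be passed in milliseconds.

```javascript
// Upper bounds from the table: [limit for "good", limit for "needs improvement"].
const THRESHOLDS = {
  LCP: [2500, 4000], // milliseconds
  FID: [100, 300],   // milliseconds
  CLS: [0.1, 0.25],  // unitless score
};

// Returns "good", "needs improvement", or "poor" for one metric value.
function rateMetric(metric, value) {
  const bounds = THRESHOLDS[metric];
  if (!bounds) throw new Error(`Unknown metric: ${metric}`);
  const [goodLimit, needsImprovementLimit] = bounds;
  if (value <= goodLimit) return "good";
  if (value <= needsImprovementLimit) return "needs improvement";
  return "poor";
}
```

For example, `rateMetric("LCP", 3200)` lands in "needs improvement" because 3.2 seconds is above 2.5 seconds but not above 4 seconds.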

And here’s how the report looks:

If you click into one of these reports, you get a better breakdown of the issues with categorization and the number of URLs impacted.

Clicking into one of the issues gives you a breakdown of page groups that are impacted. This grouping makes a lot of sense because most of the changes to improve Core Web Vitals are made to a particular page template that impacts many pages. You make the changes once in the template, and the issue is fixed across the pages in the group.

Now that you know what pages are impacted, here’s some more information about Core Web Vitals and how you can get your pages to pass the checks:

Fact 1: The metrics are split between desktop and mobile. Mobile signals are used for mobile rankings, and desktop signals are used for desktop rankings.

Fact 2: The data comes from the Chrome User Experience Report (CrUX), which records data from opted-in Chrome users. The metrics are assessed at the 75th percentile of users. So if 70% of your users are in the “good” category and 5% are in the “needs improvement” category, then your page will still be judged as “needs improvement,” because the 75th-percentile user falls in that second group.
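The 70%/5% example can be checked with a few lines of code. This is only a sketch: the sample values are invented, and the nearest-rank percentile method is an assumption, since the exact calculation CrUX uses is not described here.

```javascript
// Nearest-rank percentile: the smallest sample with at least p% of samples at or below it.
function percentile(samples, p) {
  if (samples.length === 0) throw new Error("No samples");
  const sorted = [...samples].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(rank, 1) - 1];
}

// 100 hypothetical LCP samples in ms: 70 good, 5 needs improvement, 25 poor.
const lcpSamples = [
  ...Array(70).fill(2000),
  ...Array(5).fill(3000),
  ...Array(25).fill(5000),
];

const p75 = percentile(lcpSamples, 75); // 3000
const passes = p75 <= 2500;             // false: the page is not rated "good"
```

Even though most visits were fast, the 75th visitor in sorted order had a 3-second LCP, so that is the value the page is judged on.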

Fact 3: The metrics are assessed for each page. But if there isn’t enough data, Google Webmaster Trends Analyst John Mueller states that signals from sections of a site or the overall site may be used. In our Core Web Vitals data study, we looked at over 42 million pages and found that only 11.4% of the pages had metrics associated with them.

Fact 4: With the addition of these new metrics, Accelerated Mobile Pages (AMP) was removed as a requirement from the Top Stories feature on mobile. Since new stories won’t actually have data on the speed metrics, it’s likely the metrics from a larger category of pages or even the entire domain may be used.

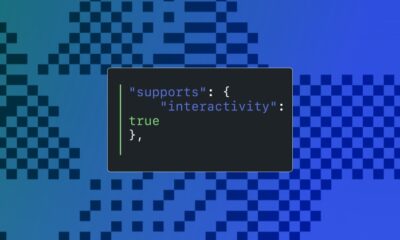

Fact 5: In Single Page Applications, a couple of metrics (FID and LCP) aren’t measured across page transitions. There are a couple of proposed changes, including the App History API and potentially a change in the metric used to measure interactivity that would be called “Responsiveness.”

Fact 6: The metrics may change over time, and the thresholds may as well. Google has already changed the metrics used for measuring speed in its tools over the years, as well as its thresholds for what is considered fast or not.

Core Web Vitals have already changed, and there are more proposed changes to the metrics. I wouldn’t be surprised if page size was added. You can pass the current metrics by prioritizing assets and still have an extremely large page. It’s a pretty big miss, in my opinion.

There are over 200 ranking factors, many of which don’t carry much weight. When talking about Core Web Vitals, Google reps have referred to these as tiny ranking factors or even tiebreakers. I don’t expect much, if any, improvement in rankings from improving Core Web Vitals. Still, they are a factor, and this tweet from John shows how the boost may work.

Think of it like this. Graphic not to scale. pic.twitter.com/6lLUYNM53A

— 🐐 John 🐐 (@JohnMu) May 21, 2021

There have been ranking factors targeting speed metrics for many years. So I wasn’t expecting much, if any, impact to be visible when the mobile page experience update rolled out. Unfortunately, there were also a couple of Google core updates during the time frame for the Page Experience update, which makes determining the impact too messy to draw a conclusion.

There are a couple of studies that found some positive correlation between passing Core Web Vitals and better rankings, but I personally look at these results with skepticism. It’s like saying a site that focuses on SEO tends to rank better. If a site is already working on Core Web Vitals, it likely has done a lot of other things right as well. And people did work on them, as you can see in the chart below from our data study.

Let’s look at each of the Core Web Vitals in more detail.

Here are the three current components of Core Web Vitals and what they measure:

- Largest Contentful Paint (LCP) – Visual load

- Cumulative Layout Shift (CLS) – Visual stability

- First Input Delay (FID) – Interactivity

Note there are additional Web Vitals that serve as proxy measures or supplemental metrics but are not used in the ranking calculations. The Web Vitals metrics for visual load include Time to First Byte (TTFB) and First Contentful Paint (FCP). Total Blocking Time (TBT) and Time to Interactive (TTI) help to measure interactivity.

Largest Contentful Paint

LCP measures how long it takes for the single largest visible element in the viewport to load.

The largest element is usually going to be a featured image or maybe the <h1> tag. But it could also be any of these:

- <img> element

- <image> element inside an <svg> element

- Image inside a <video> element

- Background image loaded with the url() function

- Blocks of text

<svg> and <video> may be added in the future.

How to see LCP

In PageSpeed Insights, the LCP element will be specified in the “Diagnostics” section. Also, notice there is a tab to select LCP that will only show issues related to LCP.

In Chrome DevTools, follow these steps:

- Performance > check “Screenshots”

- Click “Start profiling and reload page”

- LCP is on the timing graph

- Click the node; this is the element for LCP

Optimizing LCP

As we saw in PageSpeed Insights, there are a lot of issues that need to be solved, making LCP the hardest metric to improve, in my opinion. In our study, I noticed that most sites didn’t seem to improve their LCP over time.

Here are a few concepts to keep in mind and some ways you can improve LCP.

1. Smaller is faster

If you can get rid of any files or reduce their sizes, then your page will load faster. This means you may want to delete any files not being used or parts of the code that aren’t used.

How you go about this will depend a lot on your setup, but the process is usually referred to as tree shaking. This is commonly done via some kind of automated process. But in some systems, this step may not be worth the effort.

There’s also compression, which makes the file sizes smaller. Pretty much every file type used to build your website can be compressed, including CSS, JavaScript, images, and HTML.

2. Closer is faster

Information takes time to travel. The further you are from a server, the longer it takes for the data to be transferred. Unless you serve a small geographical area, having a Content Delivery Network (CDN) is a good idea.

CDNs give you a way to connect and serve your site that’s closer to users. It’s like having copies of your server in different locations around the world.

3. Use the same server if possible

When you first connect to a server, there’s a process that navigates the web and establishes a secure connection between you and the server. This takes some time, and each new connection you need to make adds additional delay while it goes through the same process. If you host your resources on the same server, you can eliminate those extra delays.

If you can’t use the same server, you may want to use preconnect or dns-prefetch to start connections earlier. A browser will typically wait for the HTML to finish downloading before starting a connection. But with preconnect or dns-prefetch, it starts earlier than it normally would. Do note that dns-prefetch has better browser support than preconnect.
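As a rough illustration, the two hints are just `<link>` tags in the page head. The helper below builds them as strings; the function name and the example origin are hypothetical, and pairing both hints for the same origin is one common pattern rather than a requirement.

```javascript
// Builds <link> tags that ask the browser to set up a connection early.
// dns-prefetch is emitted too, as a fallback where preconnect is unsupported.
function resourceHintTags(origin, { crossorigin = false } = {}) {
  // The crossorigin attribute is typically needed for font requests.
  const attr = crossorigin ? " crossorigin" : "";
  return [
    `<link rel="preconnect" href="${origin}"${attr}>`,
    `<link rel="dns-prefetch" href="${origin}">`,
  ];
}

// Example with a made-up CDN origin.
const hintTags = resourceHintTags("https://cdn.example.com");
```

The resulting tags belong near the top of the `<head>` so the browser sees them before it discovers the resources themselves.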

4. Cache what you can

When you cache resources, they’re downloaded for the first page view but don’t need to be downloaded for subsequent page views. With the resources already available, additional page loads will be much faster. Check out how few files are downloaded in the second page load in the waterfall charts below.

First load of the page:

Second load of the page:
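Caching behavior is usually controlled with the Cache-Control response header. The sketch below shows one possible policy, not a recommendation for every site: the function name, the list of file extensions, and the assumption that static file names change whenever their contents change are all illustrative.

```javascript
// Picks a Cache-Control value by file type (a hypothetical policy).
function cacheControlFor(path) {
  const staticAsset = /\.(css|js|woff2?|png|jpe?g|webp|avif|svg)$/i;
  if (staticAsset.test(path)) {
    // Assumes fingerprinted file names (e.g. app.3f9a1c.js), so a cached copy
    // can be reused for up to a year without asking the server again.
    return "public, max-age=31536000, immutable";
  }
  // HTML and everything else: may be stored, but revalidated before reuse.
  return "no-cache";
}
```

With a policy like this, a repeat visit only needs to revalidate the HTML, while stylesheets, scripts, fonts, and images come straight from the browser cache.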

5. Prioritization of resources

To pass the LCP check, you should prioritize how your resources are loaded in the critical rendering path. What I mean by that is you want to rearrange the order in which the resources are downloaded and processed. You should first load the resources needed to get the content users see immediately, then load the rest.

Many sites can get to a passing time for LCP by just adding some preload statements for things like the main image, as well as necessary stylesheets and fonts. Let’s look at how to optimize the various resource types.

Images Early

If you don’t need the image, the most impactful solution is to simply get rid of it. If you must have the image, I suggest optimizing the size and quality to keep it as small as possible.

On top of that, you may want to preload the image. This starts the download of that image a little earlier, so it displays a little earlier. A preload statement for a responsive image looks like this:

<link rel="preload" as="image" href="cat.jpg" imagesrcset="cat_400px.jpg 400w, cat_800px.jpg 800w, cat_1600px.jpg 1600w">

Images Late

You should lazy load any images that you don’t need immediately. This loads images later in the process or when a user is close to seeing them. You can use loading="lazy" like this:

<img src="cat.jpg" alt="cat" loading="lazy">

CSS Early

We already talked about removing unused CSS and minifying the CSS you have. The other major thing you should do is inline critical CSS. This takes the part of the CSS needed to render the content users see immediately and places it directly in the HTML. When the HTML is downloaded, all the CSS needed to load what users see is already available.

CSS Late

With any extra CSS that isn’t critical, you’ll want to apply it later in the process. You can go ahead and start downloading the CSS with a preload statement but not apply the CSS until later with an onload event. This looks like:

<link rel="preload" href="https://ahrefs.com/blog/core-web-vitals/stylesheet.css" as="style" onload="this.rel='stylesheet'">

Fonts

Here are a few options, ranked from good to best in my view:

Good: Preload your fonts. Even better if you use the same server to get rid of the connection.

Better: Use font-display: optional. This can be paired with a preload statement. This is going to give your font a small window of time to load. If the font doesn’t make it in time, the initial page load will simply show a default font. Your custom font will then be cached and show up on subsequent page loads.

Best: Just use a system font. There’s nothing to load—so no delays.

JavaScript Early

We already talked about removing unused JavaScript and minifying what you have. If you’re using a JavaScript framework, then you may want to prerender or server-side render (SSR) the page.

Your other option is to get the JavaScript that’s needed early to the browser sooner. As with CSS, you can inline portions of the code within the HTML, or you can preload the JavaScript files so that you get them earlier. This should only be done for assets needed to load the content above the fold or if some functionality depends on this JavaScript.

JavaScript Late

Any JavaScript you don’t need immediately should be loaded later. There are two main ways to do that—defer and async attributes. These attributes can be added to your script tags.

Usually, a script being downloaded blocks the parser while downloading and executing. Async will let the parsing and downloading occur at the same time but still block parsing during the script execution. Defer will not block parsing during the download and only execute after the HTML has finished parsing.

Which should you use? For anything that you want earlier or that has dependencies, I’ll lean toward async. For instance, I tend to use async on analytics tags so that more users are recorded. You’ll want to defer anything that is not needed until later or doesn’t have dependencies. The attributes are pretty easy to add. Check out these examples:

Normal:

<script src="https://www.domain.com/file.js"></script>

Async:

<script src="https://www.domain.com/file.js" async></script>

Defer:

<script src="https://www.domain.com/file.js" defer></script>

Misc

There are a few other technologies that you may want to look at to help with performance. These include Speculative Prerendering, Early Hints, Signed Exchanges, and HTTP/3.


Cumulative Layout Shift

CLS measures how elements move around or how stable the page layout is. It takes into account the size of the content and the distance it moves. Google has already updated how CLS is measured. Previously, it would continue to measure even after the initial page load. But now it’s restricted to a five-second time frame where the most shifting occurs.
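The windowing described above can be sketched in code. This assumes Google’s published “session window” definition as I understand it: shifts are grouped while they arrive less than one second apart, a group is capped at five seconds, and the page’s CLS is the largest group total. The entry shape (startTime in milliseconds, value as the shift score) mirrors browser layout-shift entries, the function name is made up, and the sketch ignores the rule that shifts right after user input are excluded.

```javascript
// Returns the largest session-window total for a list of layout shifts.
// Entries are assumed to be sorted by startTime (milliseconds).
function computeCls(shifts) {
  let largestWindow = 0;
  let windowTotal = 0;
  let windowStart = 0;
  let previousTime = 0;
  let windowOpen = false;

  for (const { startTime, value } of shifts) {
    const sameWindow =
      windowOpen &&
      startTime - previousTime < 1000 && // less than 1s since the last shift
      startTime - windowStart < 5000;    // window capped at 5s
    if (sameWindow) {
      windowTotal += value;
    } else {
      windowStart = startTime;
      windowTotal = value;
      windowOpen = true;
    }
    previousTime = startTime;
    largestWindow = Math.max(largestWindow, windowTotal);
  }
  return largestWindow;
}

// Two bursts of made-up shifts: the second burst is the worse one.
const exampleShifts = [
  { startTime: 100, value: 0.05 },
  { startTime: 600, value: 0.04 },
  { startTime: 3000, value: 0.2 },
  { startTime: 3500, value: 0.1 },
];
const exampleCls = computeCls(exampleShifts); // about 0.3
```

Here the first burst totals 0.09 and the second totals about 0.3, so the page would be rated on the second burst alone rather than on the sum of every shift.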

It can be annoying if you try to click something on a page that shifts and you end up clicking on something you don’t intend to. It happens to me all the time. I click on one thing and, suddenly, I’m clicking on an ad and am now not even on the same website. As a user, I find that frustrating.

Common causes of CLS include:

- Images without dimensions.

- Ads, embeds, and iframes without dimensions.

- Injecting content with JavaScript.

- Applying fonts or styles late in the load.

How to see CLS

In PageSpeed Insights, if you select CLS, you can see all the related issues. The main one to pay attention to here is “Avoid large layout shifts.”

You can also use WebPageTest. In Filmstrip View, use the following options:

- Highlight Layout Shifts

- Thumbnail Size: Huge

- Thumbnail Interval: 0.1 secs

Notice how our font restyles between 5.1 secs and 5.2 secs, shifting the layout as our custom font is applied.

Smashing Magazine also had an interesting technique where it outlined everything with a 3px solid red line and recorded a video of the page loading to identify where layout shifts were happening.

Optimizing CLS

In most cases, to optimize CLS, you’re going to be working on issues related to images, fonts or, possibly, injected content. Let’s look at each case.

Images

For images, what you need to do is reserve the space so that there’s no shift and the image simply fills that space. This can mean setting the height and width of images by specifying them within the <img> tag like this:

<img src="cat.jpg" width="640" height="360" alt="cat with string" />

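For responsive images, you need to use a srcset like this (the larger file name is a placeholder):

<img width="1000" height="1000" src="https://ahrefs.com/blog/core-web-vitals/puppy-1000.jpg" srcset="https://ahrefs.com/blog/core-web-vitals/puppy-1000.jpg 1000w, https://ahrefs.com/blog/core-web-vitals/puppy-2000.jpg 2000w" alt="Puppy with balloons" />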

And reserve the max space needed for any dynamic content like ads.

Fonts

For fonts, the goal is to get the font on the screen as fast as possible and to not swap it with another font. When a font is loaded or changed, you end up with a noticeable shift like a Flash of Invisible Text (FOIT) or Flash of Unstyled Text (FOUT).

If you can use a system font, do that. There’s nothing to load, so there are no delays or changes that will cause a shift.

If you have to use a custom font, the current best method for minimizing CLS is to combine <link rel="preload"> (which is going to try to grab your font as soon as possible) and font-display: optional (which is going to give your font a small window of time to load). If the font doesn’t make it in time, the initial page load will simply show a default font. Your custom font will then be cached and show up on subsequent page loads.

Injected content

When content is dynamically inserted above existing content, this causes a layout shift. If you’re going to do this, reserve enough space for it ahead of time.


First Input Delay

FID is the time from when a user interacts with your page to when the page responds. You can also think of it as responsiveness.

Example interactions:

- Clicking on a link or button

- Inputting text into a blank field

- Selecting a drop-down menu

- Clicking a checkbox

Some events like scrolling or zooming are not counted.

It can be frustrating trying to click something, and nothing happens on the page.

Not all users will interact with a page, so the page may not have an FID value. This is also why lab test tools won’t have the value because they’re not interacting with the page. What you may want to look at for lab tests is Total Blocking Time (TBT). In PageSpeed Insights, you can use the TBT tab to see related issues.

What causes the delay?

JavaScript competing for the main thread. There’s just one main thread, and JavaScript competes to run tasks on it. Think of it like JavaScript having to take turns to run.

While a task is running, a page can’t respond to user input. This is the delay that is felt. The longer the task, the longer the delay experienced by the user. The breaks between tasks are the opportunities that the page has to switch to the user input task and respond to what they wanted to do.
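Total Blocking Time puts a number on this. In Lighthouse, only the part of each main-thread task beyond 50 ms counts as blocking, and TBT is the sum of those parts. A minimal sketch, with a made-up function name and invented task durations:

```javascript
// Tasks longer than this are considered "long tasks".
const LONG_TASK_THRESHOLD_MS = 50;

// Sums the blocking portion (time beyond 50 ms) of each task.
function totalBlockingTime(taskDurationsMs) {
  return taskDurationsMs.reduce(
    (total, duration) => total + Math.max(0, duration - LONG_TASK_THRESHOLD_MS),
    0
  );
}

// Four hypothetical tasks: only the 120 ms and 250 ms tasks block.
const exampleTbt = totalBlockingTime([30, 120, 50, 250]); // 70 + 200 = 270
```

This is why one 250 ms task hurts more than five 50 ms tasks: the short tasks leave gaps where the browser can respond to input.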

Optimizing FID

Most pages pass FID checks. But if you need to work on FID, there are just a few items you can work on. If you can reduce the amount of JavaScript running, then do that.

If you’re on a JavaScript framework, there’s a lot of JavaScript needed for the page to load. That JavaScript can take a while to process in the browser, and that can cause delays. If you use prerendering or server-side rendering (SSR), you shift this burden from the browser to the server.

Another option is to break up the JavaScript so that it runs for less time. You take those long tasks that delay response to user input and break them into smaller tasks that block for less time. Code splitting helps here by breaking the JavaScript into smaller chunks that are loaded and run only when needed.
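Here is a rough sketch of the “smaller tasks” idea for work you control yourself. It processes a list in batches and hands control back to the browser between batches using a zero-delay timer. The function names and the default batch size are made up, and this is one simple way to yield, not the only one.

```javascript
// Splits a list into batches of at most chunkSize items.
function splitIntoChunks(items, chunkSize) {
  if (chunkSize < 1) throw new Error("chunkSize must be at least 1");
  const chunks = [];
  for (let i = 0; i < items.length; i += chunkSize) {
    chunks.push(items.slice(i, i + chunkSize));
  }
  return chunks;
}

// Ends the current task so pending user input can be handled first.
function yieldToMainThread() {
  return new Promise((resolve) => setTimeout(resolve, 0));
}

// Runs handleItem on every item, one batch per task instead of one long task.
async function processInChunks(items, handleItem, chunkSize = 100) {
  for (const chunk of splitIntoChunks(items, chunkSize)) {
    for (const item of chunk) handleItem(item);
    await yieldToMainThread();
  }
}
```

A call such as `processInChunks(rows, renderRow, 50)` (with your own `rows` and `renderRow`) would do the same total work, but a click that arrives mid-way only has to wait for the current batch.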

There’s also the option of moving some of the JavaScript to a service worker. I did mention that JavaScript competes for the one main thread in the browser, but this is sort of a workaround that gives it another place to run.

There are some trade-offs as far as caching goes. And the service worker can’t access the DOM, so it can’t do any updates or changes. If you’re going to move JavaScript to a service worker, you really need to have a developer that knows what to do.


There are many tools you can use for testing and monitoring. Generally, you want to see the actual field data, which is what you’ll be measured on. But the lab data is more useful for testing.

The difference between lab and field data is that field data looks at real users, network conditions, devices, caching, etc. But lab data is consistently tested based on the same conditions to make the test results repeatable.

Many of these tools use Lighthouse as the base for their lab tests. The exception is WebPageTest, although you can also run Lighthouse tests with it as well. The field data comes from CrUX.

Field Data

There are some additional tools you can use to gather your own Real User Monitoring (RUM) data that provide more immediate feedback on how speed improvements impact your actual users (rather than just relying on lab tests).

Lab Data

PageSpeed Insights is great to check one page at a time. But if you want both lab data and field data at scale, the easiest way to get that is through the API. You can connect to it easily with Ahrefs Webmaster Tools (free) or Ahrefs’ Site Audit and get reports detailing your performance.
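If you want to call the PageSpeed Insights API yourself, a request URL can be put together like this. The endpoint and the url, strategy, and key query parameters are from version 5 of the API as I know it, so check them against the current API reference; the helper name is made up.

```javascript
const PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed";

// Builds a PageSpeed Insights request URL for one page.
// strategy is "mobile" or "desktop"; apiKey is optional for light use.
function buildPsiUrl(pageUrl, strategy = "mobile", apiKey) {
  const url = new URL(PSI_ENDPOINT);
  url.searchParams.set("url", pageUrl);
  url.searchParams.set("strategy", strategy);
  if (apiKey) url.searchParams.set("key", apiKey);
  return url.toString();
}

const exampleRequest = buildPsiUrl("https://example.com/");
```

Fetching that URL returns JSON containing both the Lighthouse lab results and, when enough CrUX data exists, the field data for the page.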

Note that the Core Web Vitals data shown will be determined by the user-agent you select for your crawl during the setup.

I also like the report in GSC because you can see the field data for many pages at once. But the data is a bit delayed and on a 28-day rolling average, so changes may take some time to show up in the report.

Another thing that may be useful is you can find the scoring weights for Lighthouse at any point in time and see the historical changes. This can give you some idea of why your scores have changed and what Google may be weighting more over time.

Final thoughts

I don’t think Core Web Vitals have much impact on SEO and, unless you are extremely slow, I generally won’t prioritize fixing them. If you want to argue for Core Web Vitals improvements, I think that’s hard to do for SEO.

However, you can make a case for it for user experience. Or as I mentioned in my page speed article, improvements should help you record more data in your analytics, which “feels” like an increase. You may also be able to make a case for more conversions, as there are a lot of studies out there that show this (but it also may be a result of recording more data).

Here’s another key point: work with your developers; they are the experts here. Page speed can be extremely complex. If you’re on your own, you may need to rely on a plugin or service (e.g., WP Rocket or Autoptimize) to handle this.

Things will get easier as new technologies are rolled out and many of the platforms like your CMS, your CDN, or even your browser take on some of the optimization tasks. My prediction is that within a few years, most sites won’t even have to worry much because most of the optimizations will already be handled.

Many of the platforms are already rolling out or working on things that will help you.

Already, WordPress is preloading the first image and is putting together a team to work on Core Web Vitals. Cloudflare has already rolled out many things that will make your site faster, such as Early Hints, Signed Exchanges, and HTTP/3. I expect this trend to continue until site owners don’t even have to worry about working on this anymore.

As always, message me on Twitter if you have any questions.


2024 WordPress Vulnerability Report Shows Errors Sites Keep Making

WordPress security scanner WPScan’s 2024 WordPress vulnerability report calls attention to WordPress vulnerability trends and suggests the kinds of things website publishers (and SEOs) should be looking out for.

Among the report’s key findings: just over 20% of vulnerabilities were rated as high or critical threats, while medium-severity threats made up the majority at 67% of reported vulnerabilities. Many people treat medium-level vulnerabilities as if they were low-level threats. That’s a mistake, because they are not low level and deserve attention.

The WPScan report advised:

“While severity doesn’t translate directly to the risk of exploitation, it’s an important guideline for website owners to make an educated decision about when to disable or update the extension.”

WordPress Vulnerability Severity Distribution

Critical level vulnerabilities, the highest level of threat, represented only 2.38% of vulnerabilities, which is essentially good news for WordPress publishers. Yet as mentioned earlier, when combined with the percentage of high level threats (17.68%), the number of concerning vulnerabilities rises to just over 20%.

Here are the percentages by severity ratings:

- Critical 2.38%

- Low 12.83%

- High 17.68%

- Medium 67.12%

Authenticated Versus Unauthenticated

Authenticated vulnerabilities are those that require an attacker to first attain user credentials and their accompanying permission levels in order to exploit a particular vulnerability. Exploits that require subscriber-level authentication are the most exploitable of the authenticated exploits and those that require administrator level access present the least risk (although not always a low risk for a variety of reasons).

Unauthenticated attacks are generally the easiest to exploit because anyone can launch an attack without having to first acquire a user credential.

The WPScan vulnerability report found that about 22% of reported vulnerabilities required subscriber-level authentication or none at all, making them the most exploitable. At the other end of the exploitability scale, vulnerabilities requiring admin permission levels accounted for 30.71% of reported vulnerabilities.

Permission Levels Required For Exploits

Vulnerabilities requiring administrator-level credentials represented the highest percentage of exploits, followed by Cross Site Request Forgery (CSRF) with 24.74% of vulnerabilities. This is interesting because CSRF is an attack that uses social engineering to get a victim to click a link, through which the user’s permission levels are acquired. WordPress publishers should be aware of this risk, because all it takes is for an admin-level user to follow a link that then enables the hacker to assume admin-level privileges on the WordPress website.

The following are the percentages of exploits, ordered by the role necessary to launch an attack.

Ascending Order Of User Roles For Vulnerabilities

- Author 2.19%

- Subscriber 10.4%

- Unauthenticated 12.35%

- Contributor 19.62%

- CSRF 24.74%

- Admin 30.71%
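The list above can be tallied to see how the shares combine. This small sketch only adds up the figures already listed; the variable and function names are made up.

```javascript
// Share of reported vulnerabilities by required role, in percent (from the list above).
const shareByRole = {
  Author: 2.19,
  Subscriber: 10.4,
  Unauthenticated: 12.35,
  Contributor: 19.62,
  CSRF: 24.74,
  Admin: 30.71,
};

// Adds the shares for the given roles, rounded to two decimals.
function combinedShare(roles) {
  const total = roles.reduce((sum, role) => sum + shareByRole[role], 0);
  return Number(total.toFixed(2));
}

const easiestToExploit = combinedShare(["Unauthenticated", "Subscriber"]); // 22.75
const allRoles = combinedShare(Object.keys(shareByRole)); // 100.01 (rounding in the source figures)
```

The unauthenticated and subscriber-level shares together come to 22.75%, which is the group the text above describes as the most exploitable.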

Most Common Vulnerability Types Requiring Minimal Authentication

Broken Access Control in the context of WordPress refers to a security failure that can allow an attacker without necessary permission credentials to gain access to higher credential permissions.

In the section of the report that looks at the occurrences and vulnerabilities underlying unauthenticated or subscriber level vulnerabilities reported (Occurrence vs Vulnerability on Unauthenticated or Subscriber+ reports), WPScan breaks down the percentages for each vulnerability type that is most common for exploits that are the easiest to launch (because they require minimal to no user credential authentication).

The WPScan threat report noted that Broken Access Control represents a whopping 84.99% followed by SQL injection (20.64%).

The Open Worldwide Application Security Project (OWASP) defines Broken Access Control as:

“Access control, sometimes called authorization, is how a web application grants access to content and functions to some users and not others. These checks are performed after authentication, and govern what ‘authorized’ users are allowed to do.

Access control sounds like a simple problem but is insidiously difficult to implement correctly. A web application’s access control model is closely tied to the content and functions that the site provides. In addition, the users may fall into a number of groups or roles with different abilities or privileges.”

SQL injection, at 20.64%, represents the second most prevalent type of vulnerability. WPScan referred to it as both “high severity and risk” in the context of vulnerabilities requiring minimal authentication levels, because attackers can access and/or tamper with the database, which is the heart of every WordPress website.

These are the percentages:

- Broken Access Control 84.99%

- SQL Injection 20.64%

- Cross-Site Scripting 9.4%

- Unauthenticated Arbitrary File Upload 5.28%

- Sensitive Data Disclosure 4.59%

- Insecure Direct Object Reference (IDOR) 3.67%

- Remote Code Execution 2.52%

- Other 14.45%

Vulnerabilities In The WordPress Core Itself

The overwhelming majority of vulnerabilities were reported in third-party plugins and themes. However, a total of 13 vulnerabilities were reported in the WordPress core itself in 2023. Only one of the thirteen was rated as a high-severity threat, which is the second-highest level under the Common Vulnerability Scoring System (CVSS); Critical is the highest.

The WordPress core platform itself is held to the highest standards and benefits from a worldwide community that is vigilant in discovering and patching vulnerabilities.

Website Security Should Be Considered As Technical SEO

Site audits don’t normally cover website security, but in my opinion every responsible audit should at least talk about security headers. As I’ve been saying for years, website security quickly becomes an SEO issue once a website’s rankings start disappearing from the search engine results pages (SERPs) due to being compromised by a vulnerability. That’s why it’s critical to be proactive about website security.

According to the WPScan report, the main points of entry for hacked websites were leaked credentials and weak passwords. Ensuring strong password standards plus two-factor authentication is an important part of every website’s security stance.

Using security headers is another way to help protect against Cross-Site Scripting and other kinds of vulnerabilities.
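As an illustration of what security headers look like in practice, the sketch below sets a common starting set on a response object. The header names are standard, but the values are examples only (the Content-Security-Policy shown is deliberately strict and would need tuning for a real WordPress site), and the function name is made up. It assumes a response object with a `setHeader(name, value)` method, as in Node’s built-in HTTP server.

```javascript
// Example header values; adjust for the site before using anything like this.
const SECURITY_HEADERS = {
  "Content-Security-Policy": "default-src 'self'", // hypothetical strict policy
  "Strict-Transport-Security": "max-age=31536000; includeSubDomains",
  "X-Content-Type-Options": "nosniff",
  "X-Frame-Options": "SAMEORIGIN",
  "Referrer-Policy": "strict-origin-when-cross-origin",
};

// Applies every header to a response that exposes setHeader(name, value).
function applySecurityHeaders(response) {
  for (const [name, value] of Object.entries(SECURITY_HEADERS)) {
    response.setHeader(name, value);
  }
  return response;
}
```

On most WordPress sites these headers would be set in the web server or CDN configuration, or through a plugin, rather than in application code like this.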

Lastly, a WordPress firewall and website hardening are also useful proactive approaches to website security. I once added a forum to a brand new website I created and it was immediately under attack within minutes. Believe it or not, virtually every website worldwide is under attack 24 hours a day by bots scanning for vulnerabilities.

Read the WPScan Report:

WPScan 2024 Website Threat Report



An In-Depth Guide And Best Practices For Mobile SEO

Over the years, search engines have encouraged businesses to improve the mobile experience on their websites. More than 60% of web traffic comes from mobile, and depending on the industry, mobile traffic can reach up to 90%.

Since Google has completed its switch to mobile-first indexing, the question is no longer “if” your website should be optimized for mobile, but how well it is adapted to meet these criteria. A new challenge has emerged for SEO professionals with the introduction of Interaction to Next Paint (INP), which replaced First Input Delay (FID) on March 12, 2024.

Thus, understanding mobile SEO’s latest advancements, especially with the shift to INP, is crucial. This guide offers practical steps to optimize your site effectively for today’s mobile-focused SEO requirements.

What Is Mobile SEO And Why Is It Important?

The goal of mobile SEO is to optimize your website to attain better visibility in search engine results specifically tailored for mobile devices.

This form of SEO not only aims to boost search engine rankings, but also prioritizes enhancing mobile user experience through both content and technology.

While, in many ways, mobile SEO and traditional SEO share similar practices, additional steps related to site rendering and content are required to meet the needs of mobile users and the speed requirements of mobile devices.

Does this need to be a priority for your website? How urgent is it?

Consider this: 58% of the world’s web traffic comes from mobile devices.

If you aren’t focused on mobile users, there is a good chance you’re missing out on a tremendous amount of traffic.

Mobile-First Indexing

Additionally, as of 2023, Google has switched its crawlers to a mobile-first indexing priority.

This means that the mobile experience of your site is critical to maintaining efficient indexing, which is the step before ranking algorithms come into play.


How Much Of Your Traffic Is From Mobile?

How much traffic potential you have with mobile users can depend on various factors, including your industry (B2B sites might attract primarily desktop users, for example) and the search intent your content addresses (users might prefer desktop for larger purchases, for example).

Regardless of where your industry and the search intent of your users might be, the future will demand that you optimize your site experience for mobile devices.

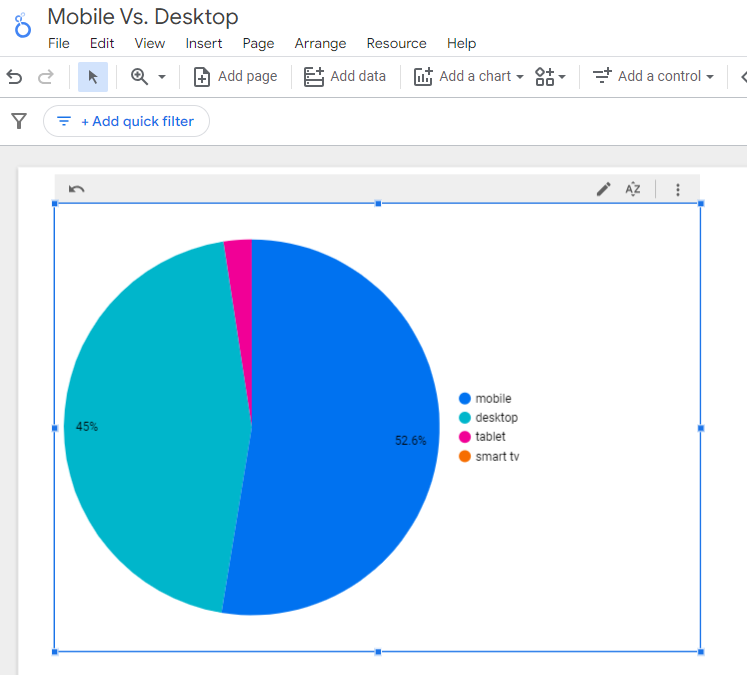

How can you assess your current mix of mobile vs. desktop users?

An easy way to see what percentage of your users is on mobile is to go into Google Analytics 4.

- Click Reports in the left column.

- Click on the Insights icon on the right side of the screen.

- Scroll down to Suggested Questions and click on it.

- Click on Technology.

- Click on Top Device model by Users.

- Then click on Top Device category by Users under Related Results.

- The breakdown of Top Device category will match the date range selected at the top of GA4.

You can also set up a report in Looker Studio.

- Add your site to the Data source.

- Add Device category to the Dimension field.

- Add 30-day active users to the Metric field.

- Click on Chart to select the view that works best for you.

Screenshot from Looker Studio, March 2024

You can add more Dimensions to really dig into the data to see which pages attract which type of users, what the mobile-to-desktop mix is by country, which search engines send the most mobile users, and so much more.
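Once you have the device breakdown in front of you, the mobile share is simple arithmetic. Here is a minimal sketch in TypeScript, assuming you have copied the numbers out of GA4 or Looker Studio by hand. The `DeviceRow` shape, the `deviceShare` helper, and the sample figures are all hypothetical and are not part of any Google API.

```typescript
// Hypothetical shape for one row of a "Device category by Users" breakdown.
interface DeviceRow {
  deviceCategory: string; // e.g. "mobile", "desktop", "tablet"
  activeUsers: number;
}

// Returns the percentage (0-100) of users that belong to one device category.
function deviceShare(rows: DeviceRow[], category: string): number {
  let total = 0;
  let matched = 0;
  for (const row of rows) {
    total += row.activeUsers;
    if (row.deviceCategory === category) {
      matched += row.activeUsers;
    }
  }
  // Avoid dividing by zero when the report is empty.
  return total === 0 ? 0 : (matched * 100) / total;
}

// Made-up numbers, for illustration only.
const sampleRows: DeviceRow[] = [
  { deviceCategory: "mobile", activeUsers: 5800 },
  { deviceCategory: "desktop", activeUsers: 3900 },
  { deviceCategory: "tablet", activeUsers: 300 },
];

console.log(deviceShare(sampleRows, "mobile").toFixed(1) + "%");
```

Running the same calculation per country or per landing page is a quick way to spot where mobile users dominate.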

Read more: Why Mobile And Desktop Rankings Are Different

How To Check If Your Site Is Mobile-Friendly

Now that you know how to build a report on mobile and desktop usage, you need to figure out if your site is optimized for mobile traffic.

While Google removed the mobile-friendly testing tool from Google Search Console in December 2023, there are still a number of useful tools for evaluating your site for mobile users.

Bing still has a mobile-friendly testing tool that will tell you the following:

- Viewport is configured correctly.

- Page content fits device width.

- Text on the page is readable.

- Links and tap targets are sufficiently large and touch-friendly.

- Any other issues detected.

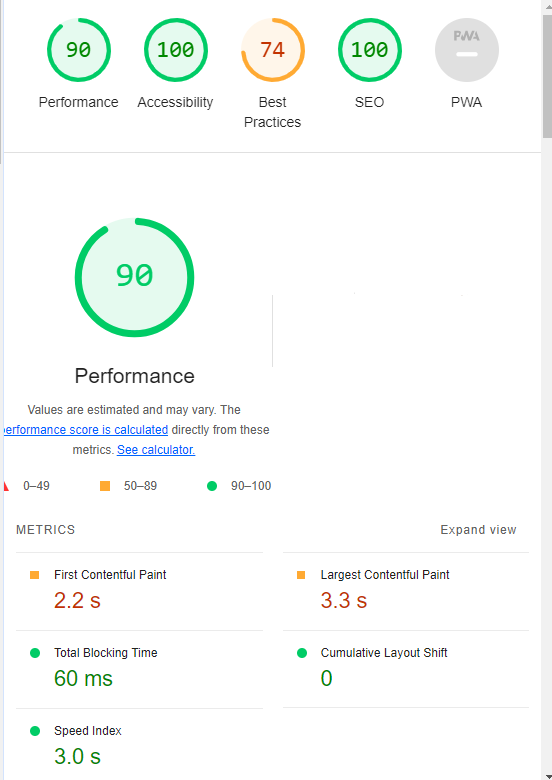

Google’s Lighthouse Chrome extension provides you with an evaluation of your site’s performance across several factors, including load times, accessibility, and SEO.

To use it, install the Lighthouse Chrome extension, then:

- Go to your website in your browser.

- Click on the orange lighthouse icon in your browser’s address bar.

- Click Generate Report.

- A new tab will open and display your scores once the evaluation is complete.

Screenshot from Lighthouse, March 2024

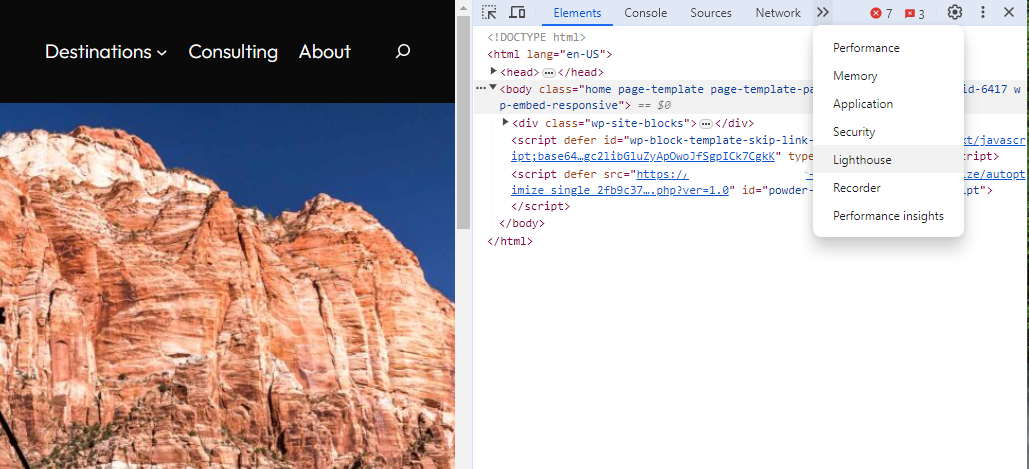

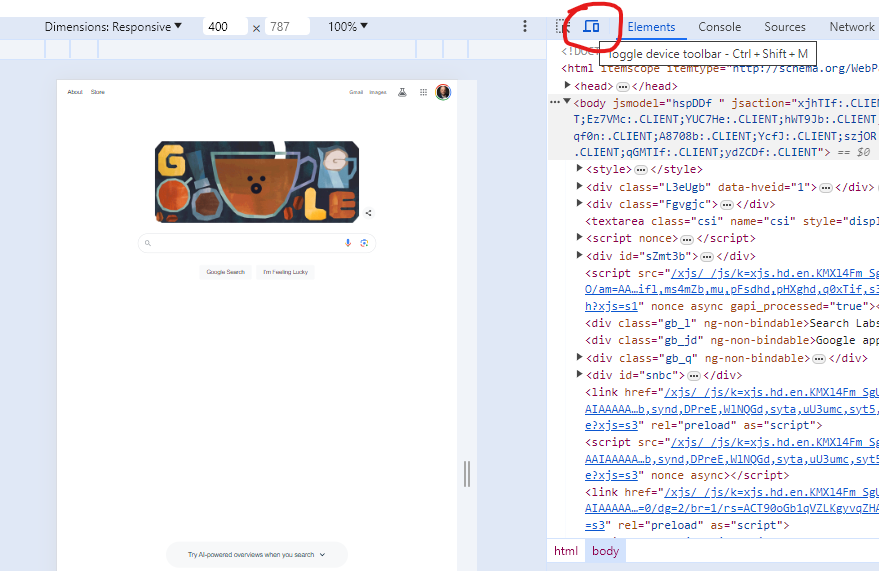

You can also use the Lighthouse report in Developer Tools in Chrome.

- Simply click on the three dots next to the address bar.

- Select “More Tools.”

- Select Developer Tools.

- Click on the Lighthouse tab.

- Choose “Mobile” and click the “Analyze page load” button.

Screenshot from Lighthouse, March 2024

Another option that Google offers is the PageSpeed Insights (PSI) tool. Simply add your URL into the field and click Analyze.

PSI will integrate any Core Web Vitals scores into the resulting view so you can see what your users are experiencing when they come to your site.

Screenshot from PageSpeed Insights, March 2024

Other tools, like WebPageTest.org, will graphically display the processes and load times for everything it takes to display your webpages.

With this information, you can see which processes block the loading of your pages, which ones take the longest to load, and how this affects your overall page load times.

You can also emulate the mobile experience by using Developer Tools in Chrome, which allows you to switch back and forth between a desktop and mobile experience.

Screenshot from Google Chrome Developer Tools, March 2024

Lastly, use your own mobile device to load and navigate your website:

- Does it take forever to load?

- Are you able to navigate your site to find the most important information?

- Is it easy to add something to cart?

- Can you read the text?

Read more: Google PageSpeed Insights Reports: A Technical Guide

How To Optimize Your Site Mobile-First

With all these tools, keep an eye on the Performance and Accessibility scores, as these directly affect mobile users.

Expand each section within the PageSpeed Insights report to see what elements are affecting your score.

These sections can give your developers their marching orders for optimizing the mobile experience.

While mobile speeds for cellular networks have steadily improved around the world (the average speed in the U.S. has jumped to 27.06 Mbps from 11.14 Mbps in just eight years), speed and usability for mobile users are at a premium.

Read more: Top 7 SEO Benefits Of Responsive Web Design

Best Practices For Mobile Optimization

Unlike traditional SEO, which can focus heavily on ensuring that you are using the language of your users as it relates to the intersection of your products/services and their needs, mobile SEO can seem heavily weighted toward technical SEO.

While you still need to be focused on matching your content with the needs of the user, mobile search optimization will require the aid of your developers and designers to be fully effective.

Below are several key factors in mobile SEO to keep in mind as you’re optimizing your site.

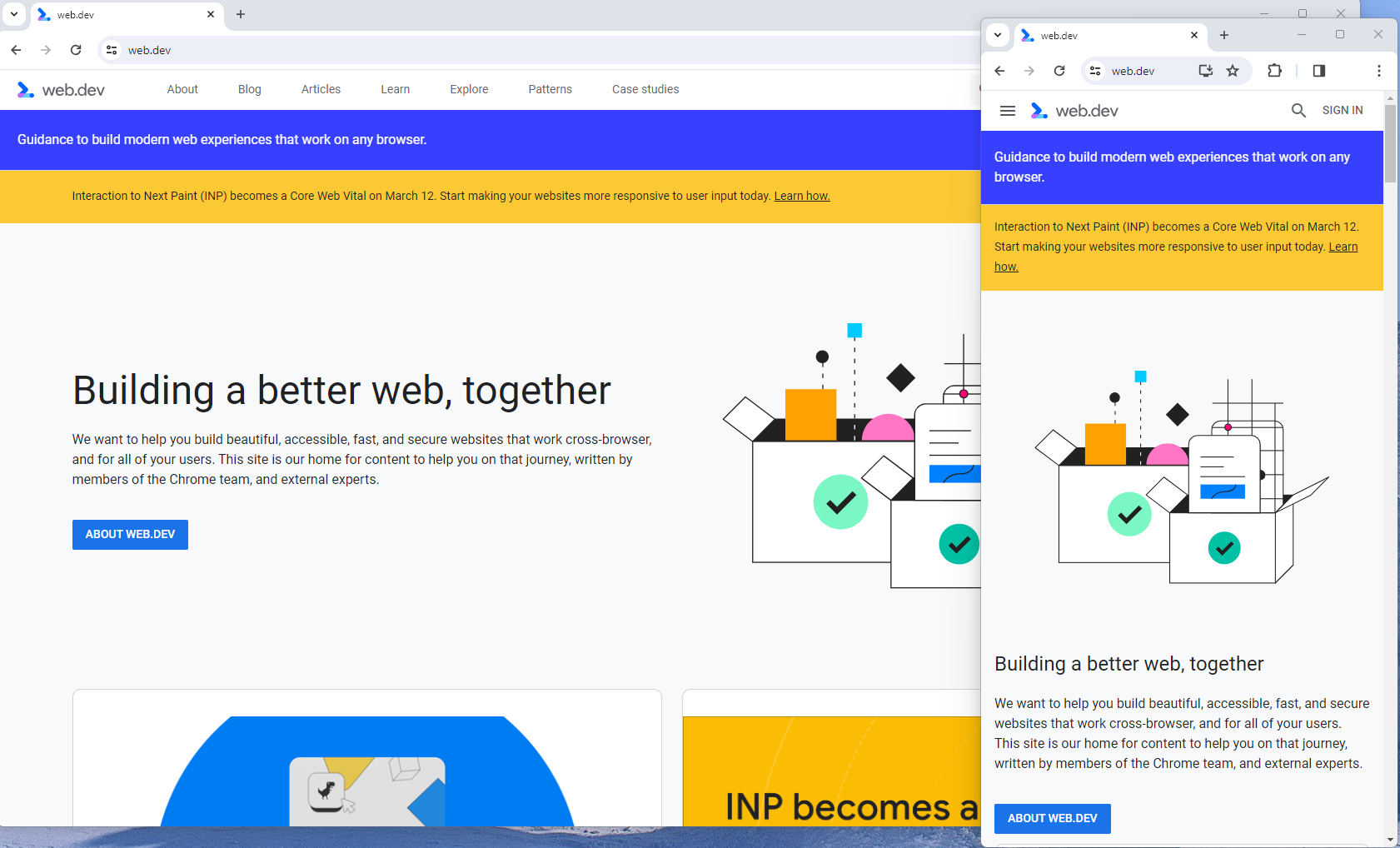

Site Rendering

How your site responds to different devices is one of the most important elements in mobile SEO.

The two most common approaches to this are responsive design and dynamic serving.

Responsive design is the most common of the two options.

Using your site’s cascading style sheets (CSS) and flexible layouts, as well as responsive content delivery networks (CDN) and modern image file types, responsive design allows your site to adjust to a variety of screen sizes, orientations, and resolutions.

With responsive design, elements on the page adjust in size and location based on the size of the screen.

You can simply resize the window of your desktop browser and see how this works.

Screenshot from web.dev, March 2024

This is the approach that Google recommends.

Adaptive design, also known as dynamic serving, consists of multiple fixed layouts that are dynamically served to the user based on their device.

Sites can have a separate layout for desktop, smartphone, and tablet users. Each design can be modified to remove functionality that may not make sense for certain device types.

This is a less efficient approach, but it does give sites more control over what each device sees.

Two other options, which will not be covered here, are:

- Progressive Web Apps (PWA), which can seamlessly integrate into a mobile app.

- Separate mobile site/URL (which is no longer recommended).

Read more: An Introduction To Rendering For SEO

Interaction to Next Paint (INP)

Google has introduced Interaction to Next Paint (INP) as a more comprehensive measure of user experience, succeeding First Input Delay. FID measures the time from when a user first interacts with your page (e.g., clicking a link, tapping a button) to the time when the browser is actually able to begin processing event handlers in response to that interaction. INP, on the other hand, broadens the scope by measuring the responsiveness of a website throughout the entire lifespan of a page, not just the first interaction.

Note that actions such as hovering and scrolling do not influence INP. However, keyboard-driven scrolling or navigation counts as keystrokes, which may trigger events that INP measures. The scrolling that results from the interaction is not itself measured.

Scrolling may indirectly affect INP, for example, when users scroll through content and additional content is lazy-loaded from an API. While the act of scrolling itself isn’t included in the INP calculation, the processing needed to load the additional content can create contention on the main thread, thereby increasing interaction latency and adversely affecting the INP score.

What qualifies as an optimal INP score?

- An INP under 200ms indicates good responsiveness.

- Between 200ms and 500ms needs improvement.

- Over 500ms means the page has poor responsiveness.
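The thresholds above can be expressed as a small helper, which is handy if you collect INP values from your own monitoring and want to bucket them. This is only a sketch: `rateInp` and `InpRating` are hypothetical names, and treating exactly 200ms as “good” and exactly 500ms as “needs improvement” is an assumption about how the boundaries are handled.

```typescript
type InpRating = "good" | "needs improvement" | "poor";

// Buckets an INP value (in milliseconds) using the thresholds listed above.
// Assumption: the boundary values 200 and 500 fall into the better bucket.
function rateInp(inpMs: number): InpRating {
  if (inpMs <= 200) {
    return "good";
  }
  if (inpMs <= 500) {
    return "needs improvement";
  }
  return "poor";
}

console.log(rateInp(150)); // good
console.log(rateInp(350)); // needs improvement
console.log(rateInp(800)); // poor
```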

These are common issues that cause poor INP scores:

- Long JavaScript Tasks: Heavy JavaScript execution can block the main thread, delaying the browser’s ability to respond to user interactions. To avoid this, break long JavaScript tasks into smaller chunks using the Scheduler API.

- Large DOM (HTML) Size: A large DOM (starting from 1,500 elements) can severely impact a website’s interactive performance. Every additional DOM element increases the work required to render pages and respond to user interactions.

- Inefficient Event Callbacks: Event handlers that execute lengthy or complex operations can significantly affect INP scores. Poorly optimized callbacks attached to user interactions, like clicks, key presses, or taps, can block the main thread, delaying the browser’s ability to render visual feedback promptly. For example, this happens when handlers perform heavy computations or initiate synchronous network requests on click.
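To make the first point concrete, here is a minimal sketch of breaking a long task into chunks and yielding to the main thread between them. It assumes `scheduler.yield()` is available in the browser and falls back to `setTimeout` where it is not. The helper names (`chunk`, `yieldToMain`, `processInChunks`) and the chunk size are hypothetical choices you would tune for your own workload.

```typescript
// Splits an array into fixed-size chunks.
function chunk<T>(items: T[], size: number): T[][] {
  if (size < 1) {
    throw new RangeError("size must be at least 1");
  }
  const chunks: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    chunks.push(items.slice(i, i + size));
  }
  return chunks;
}

// Gives the browser a chance to handle pending input and paint.
// Assumption: scheduler.yield() exists only in some browsers, so we
// fall back to a zero-delay timeout everywhere else.
function yieldToMain(): Promise<void> {
  const sched = (globalThis as any).scheduler;
  if (sched && typeof sched.yield === "function") {
    return sched.yield();
  }
  return new Promise<void>((resolve) => setTimeout(resolve, 0));
}

// Processes items a chunk at a time, yielding between chunks so that
// no single task blocks the main thread for long.
async function processInChunks<T>(
  items: T[],
  size: number,
  handle: (item: T) => void
): Promise<void> {
  for (const part of chunk(items, size)) {
    for (const item of part) {
      handle(item);
    }
    await yieldToMain();
  }
}
```

A click handler could then call `processInChunks(rows, 50, renderRow)` instead of looping over every row in one go, where `rows` and `renderRow` stand in for your own data and rendering function.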

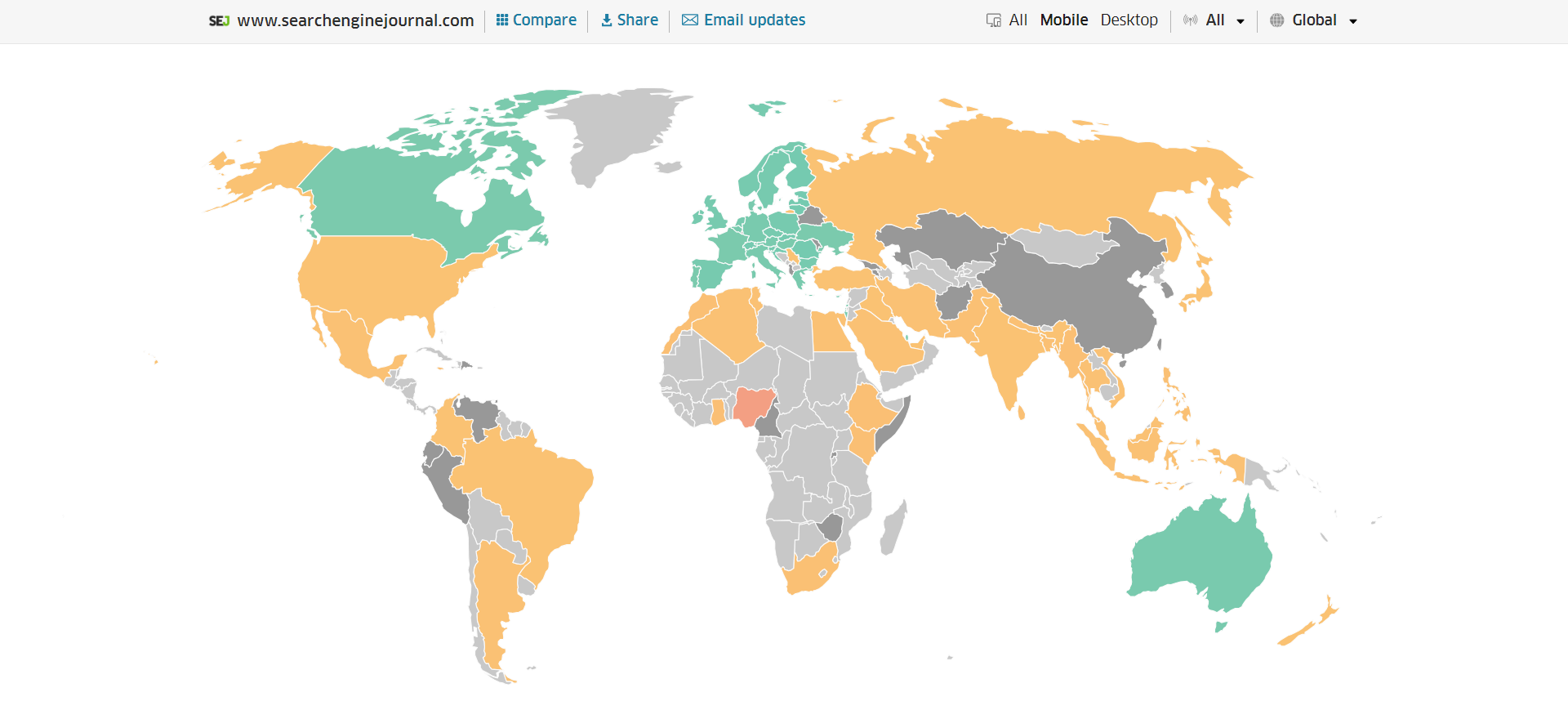

You can troubleshoot INP issues using free and paid tools.

As a good starting point, I recommend checking your INP scores by geography via treo.sh, which gives you high-level insight into where you struggle most.

INP scores by Geos

Read more: How To Improve Interaction To Next Paint (INP)

Image Optimization

Images add a lot of value to the content on your site and can greatly affect the user experience.

From page speeds to image quality, you could adversely affect the user experience if you haven’t optimized your images.

This is especially true for the mobile experience. Images need to adjust to smaller screens, varying resolutions, and screen orientation.

- Use responsive images

- Implement lazy loading

- Compress your images (use WebP)

- Add your images to your sitemap
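As a rough illustration of the first two items, the sketch below builds an `<img>` tag with a `srcset` and native lazy loading. The `?w=` resizing parameter is a hypothetical URL scheme (many image CDNs offer something similar, but yours may differ), and the helper names are made up for this example. It also assumes the inputs are trusted, since it does no HTML escaping.

```typescript
// Builds a srcset string such as "/img/a.webp?w=480 480w, /img/a.webp?w=960 960w".
// Assumption: the server resizes images based on a "?w=" query parameter.
function buildSrcset(baseUrl: string, widths: number[]): string {
  return widths.map((w) => `${baseUrl}?w=${w} ${w}w`).join(", ");
}

// Returns an <img> tag that lets the browser pick the best size
// and defers loading until the image is near the viewport.
function responsiveImg(baseUrl: string, alt: string, widths: number[]): string {
  const largest = Math.max(...widths);
  return (
    `<img src="${baseUrl}?w=${largest}" ` +
    `srcset="${buildSrcset(baseUrl, widths)}" ` +
    `sizes="100vw" loading="lazy" alt="${alt}">`
  );
}

console.log(responsiveImg("/img/rice-cooker.webp", "Rice cooker", [480, 960, 1440]));
```

One caveat: lazy loading is usually best reserved for images below the fold, because deferring the main hero image can delay LCP.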

Optimizing images is an entire science, and I advise you to read our comprehensive guide on image SEO to learn how to implement these recommendations.

Avoid Intrusive Interstitials

Google rarely uses concrete language to state that something is a ranking factor or will result in a penalty, so you know it means business about intrusive interstitials in the mobile experience.

Intrusive interstitials are basically pop-ups on a page that prevent the user from seeing content on the page.

John Mueller, Google’s Senior Search Analyst, stated that they are specifically interested in the first interaction a user has after clicking on a search result.

Not all pop-ups are considered bad. Interstitial types that are considered “intrusive” by Google include:

- Pop-ups that cover most or all of the page content.

- Non-responsive interstitials or pop-ups that are impossible for mobile users to close.

- Pop-ups that are not triggered by a user action, such as a scroll or a click.

Read more: 7 Tips To Keep Pop-Ups From Harming Your SEO

Structured Data

Most of the tips provided in this guide so far are focused on usability and speed and have an additive effect, but there are changes that can directly influence how your site appears in mobile search results.

Search engine results pages (SERPs) haven’t been the “10 blue links” in a very long time.

They now reflect the diversity of search intent, showing a variety of different sections to meet the needs of users. Local Pack, shopping listing ads, video content, and more dominate the mobile search experience.

As a result, it’s more important than ever to provide structured data markup to the search engines, so they can display rich results for users.

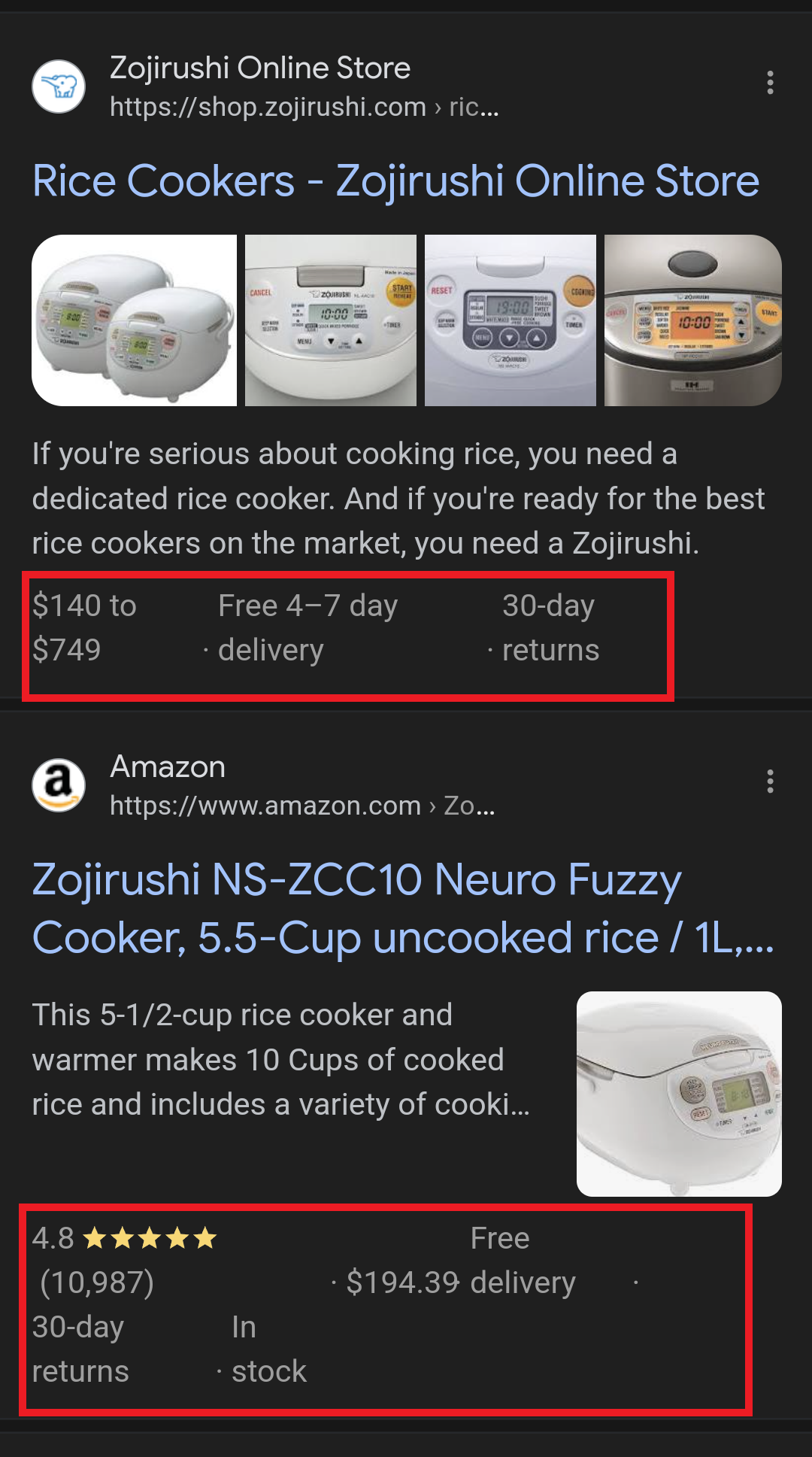

In this example, you can see that both Zojirushi and Amazon have included structured data for their rice cookers, and Google is displaying rich results for both.

Screenshot from search for [Japanese rice cookers], Google, March 2024

Adding structured data markup to your site can influence how well your site shows up for local searches and product-related searches.

Using JSON-LD, you can mark up the business, product, and services data on your pages in Schema markup.
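For a sense of what that markup looks like, here is a minimal sketch that generates a JSON-LD `Product` snippet. The `ProductInfo` shape, the `productJsonLd` helper, and the sample values are hypothetical. A real page would typically include more properties (images, reviews, brand), and the values are assumed to be trusted because the sketch does no escaping.

```typescript
// Hypothetical subset of product data you might have in your CMS.
interface ProductInfo {
  name: string;
  description: string;
  price: string;
  currency: string;
}

// Returns a <script> tag containing schema.org Product markup as JSON-LD.
function productJsonLd(p: ProductInfo): string {
  const data = {
    "@context": "https://schema.org",
    "@type": "Product",
    name: p.name,
    description: p.description,
    offers: {
      "@type": "Offer",
      price: p.price,
      priceCurrency: p.currency,
      availability: "https://schema.org/InStock",
    },
  };
  return `<script type="application/ld+json">${JSON.stringify(data)}</script>`;
}

// Made-up product, for illustration only.
console.log(
  productJsonLd({
    name: "Example Rice Cooker",
    description: "A 5.5-cup rice cooker.",
    price: "199.99",
    currency: "USD",
  })
);
```

You can paste the generated snippet into Google’s Rich Results Test to check whether it is eligible for rich results.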

If you use WordPress as the content management system for your site, there are several plugins available that will automatically mark up your content with structured data.

Read more: What Structured Data To Use And Where To Use It?

Content Style

When you think about your mobile users and the screens on their devices, this can greatly influence how you write your content.

Rather than long, detailed paragraphs, mobile users prefer a concise writing style.

Each key point in your content should be a single line of text that easily fits on a mobile screen.

Your font sizes should adjust to the screen’s resolution to avoid eye strain for your users.

If possible, allow for a dark or dim mode for your site to further reduce eye strain.

Headers should be concise and address the searcher’s intent. Rather than lengthy section headers, keep it simple.

Finally, make sure that your text renders in a font size that’s readable.

Read more: 10 Tips For Creating Mobile-Friendly Content

Tap Targets

As important as text size, the tap targets on your pages should be sized and laid out appropriately.

Tap targets include navigation elements, links, form fields, and buttons like “Add to Cart” buttons.

Targets smaller than 48 pixels by 48 pixels and targets that overlap or are overlapped by other page elements will be called out in the Lighthouse report.
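The two checks described above, minimum size and overlap, can be sketched as follows. This is a simplified illustration and not Lighthouse’s actual algorithm. The `Rect` shape and the function names are hypothetical, and the rectangles stand in for what you might get from `getBoundingClientRect()` in a browser.

```typescript
// Position and size of a tap target, in CSS pixels.
interface Rect {
  x: number;
  y: number;
  width: number;
  height: number;
}

// Minimum size taken from the 48 by 48 pixel guideline mentioned above.
const MIN_TAP_SIZE = 48;

function isLargeEnough(r: Rect): boolean {
  return r.width >= MIN_TAP_SIZE && r.height >= MIN_TAP_SIZE;
}

// True when two rectangles share any area (touching edges do not count).
function overlaps(a: Rect, b: Rect): boolean {
  return (
    a.x < b.x + b.width &&
    b.x < a.x + a.width &&
    a.y < b.y + b.height &&
    b.y < a.y + a.height
  );
}

// Returns the indexes of targets that are too small or overlap another target.
function auditTapTargets(targets: Rect[]): number[] {
  const failing: number[] = [];
  for (let i = 0; i < targets.length; i++) {
    let bad = !isLargeEnough(targets[i]);
    for (let j = 0; j < targets.length && !bad; j++) {
      if (i !== j && overlaps(targets[i], targets[j])) {
        bad = true;
      }
    }
    if (bad) {
      failing.push(i);
    }
  }
  return failing;
}
```

For example, a 30 by 30 pixel icon button would be flagged for size, while two 50 by 50 pixel buttons placed 20 pixels apart would be flagged for overlapping.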

Tap targets are essential to the mobile user experience, especially for ecommerce websites, so optimizing them is vital to the health of your online business.

Read more: Google’s Lighthouse SEO Audit Tool Now Measures Tap Target Spacing

Prioritizing These Tips

If you have delayed making your site mobile-friendly until now, this guide may feel overwhelming. As a result, you may not know what to prioritize first.

As with so many other optimizations in SEO, it’s important to understand which changes will have the greatest impact, and this is just as true for mobile SEO.

Think of SEO as a framework in which your site’s technical aspects are the foundation of your content. Without a solid foundation, even the best content may struggle to rank.

- Responsive or Dynamic Rendering: If your site requires the user to zoom and scroll right or left to read the content on your pages, no number of other optimizations can help you. This should be first on your list.

- Content Style: Rethink how your users will consume your content online. Avoid very long paragraphs. “Brevity is the soul of wit,” to quote Shakespeare.

- Image Optimization: Begin migrating your images to next-gen image formats and optimize your content delivery network for speed and responsiveness.

- Tap Targets: A site that prevents users from navigating or converting into sales won’t be in business long. Make navigation, links, and buttons usable for them.

- Structured Data: While this element ranks last in priority on this list, rich results can improve your chances of receiving traffic from a search engine, so add this to your to-do list once you’ve completed the other optimizations.

Summary

From How Search Works, “Google’s mission is to organize the world’s information and make it universally accessible and useful.”

If Google’s primary mission is focused on making all the world’s information accessible and useful, then you know they will prefer surfacing sites that align with that vision.

Since a growing percentage of users are on mobile devices, you can read the mission statement as if the word “everywhere” were added to the end.

Are you missing out on traffic from mobile devices because of a poor mobile experience?

If you hope to remain relevant, make mobile SEO a priority now.

Featured Image: Paulo Bobita/Search Engine Journal

SEO

HARO Has Been Dead for a While

I know nothing about the new tool. I haven’t tried it. But after trying to use HARO recently, I can’t say I’m surprised or saddened by its death. It’s been a walking corpse for a while.

I used HARO way back in the day to build links. It worked. But a couple of months ago, I experienced the platform from the other side when I decided to try to source some “expert” insights for our posts.

After just a few minutes of work, I got hundreds of pitches:

So, I grabbed a cup of coffee and began to work through them. It didn’t take long before I lost the will to live. Every other pitch seemed like nothing more than lazy AI-generated nonsense from someone who definitely wasn’t an expert.

Here’s one of them:

Seriously. Who writes like that? I’m a self-confessed dullard (any fellow Dull Men’s Club members here?), and even I’m not that dull…

I don’t think I looked through more than 30-40 of the responses. I just couldn’t bring myself to do it. It felt like having a conversation with ChatGPT… and not a very good one!

Despite only reviewing a few dozen of the many pitches I received, one stood out to me:

Believe it or not, this response came from a past client of mine who runs an SEO agency in the UK. Given how knowledgeable and experienced he is (he actually taught me a lot about SEO back in the day when I used to hassle him with questions on Skype), this pitch rang alarm bells for two reasons:

- I truly doubt he spends his time replying to HARO queries

- I know for a fact he’s no fan of Neil Patel (sorry, Neil, but I’m sure you’re aware of your reputation at this point!)

So… I decided to confront him 😉

Here’s what he said:

Shocker.

I pressed him for more details:

I’m getting a really good deal and paying per link rather than the typical £xxxx per month for X number of pitches. […] The responses as you’ve seen are not ideal but that’s a risk I’m prepared to take as realistically I dont have the time to do it myself. He’s not native english, but I have had to have a word with him a few times about clearly using AI. On the low cost ones I don’t care but on authority sites it needs to be more refined.

I think this pretty much sums up the state of HARO before its death. Most “pitches” were just AI answers from SEOs trying to build links for their clients.

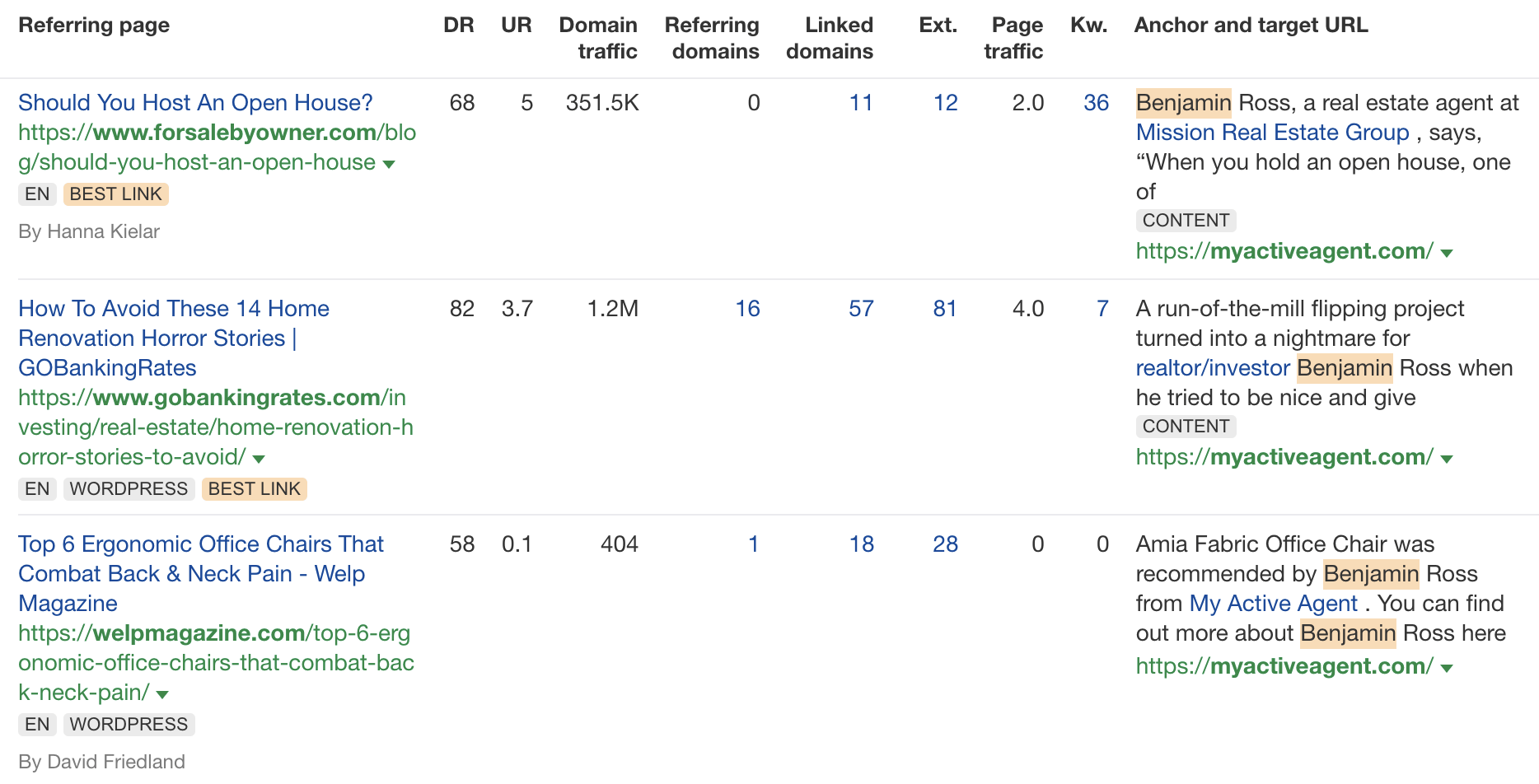

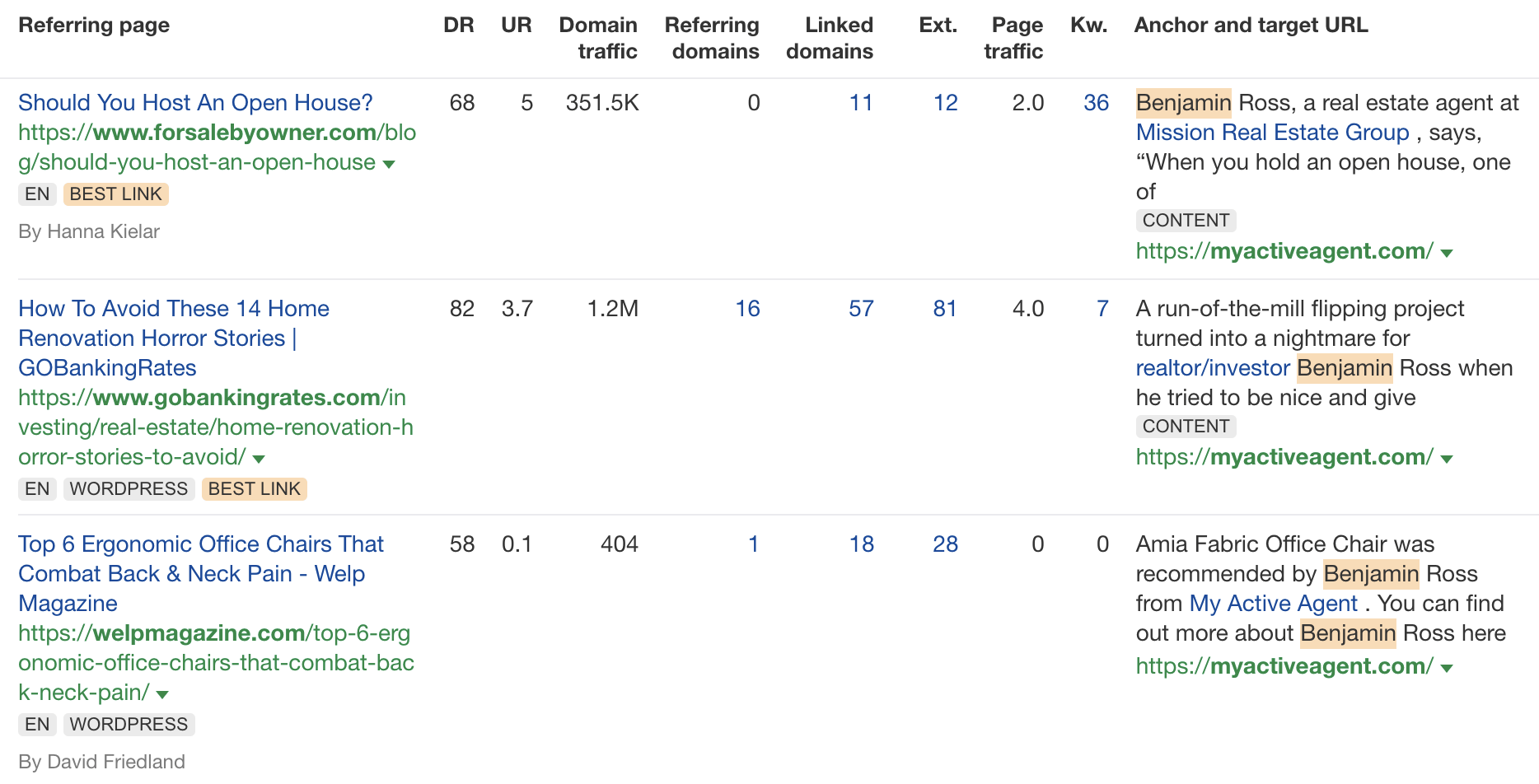

Don’t get me wrong. I’m not throwing shade here. I know that good links are hard to come by, so you have to do what works. And the reality is that HARO did work. Just look at the example below. You can tell from the anchor and surrounding text in Ahrefs that these links were almost certainly built with HARO:

But this was the problem. HARO worked so well back in the day that it was only a matter of time before spammers and the #scale crew ruined it for everyone. That’s what happened, and now HARO is no more. So…

If you’re a link builder, I think it’s time to admit that HARO link building is dead and move on.

No tactic works well forever. It’s the law of sh**ty clickthroughs. This is why you don’t see SEOs having huge success with tactics like broken link building anymore. They’ve moved on to more innovative tactics or, dare I say it, are just buying links.

Sidenote.

Talking of buying links, here’s something to ponder: if Connectively charges for pitches, are links built through those pitches technically paid? If so, do they violate Google’s spam policies? It’s a murky old world this SEO lark, eh?

If you’re a journalist, Connectively might be worth a shot. But with experts being charged for pitches, you probably won’t get as many responses. That might be a good thing. You might get less spam. Or you might just get spammed by SEOs with deep pockets. The jury’s out for now.

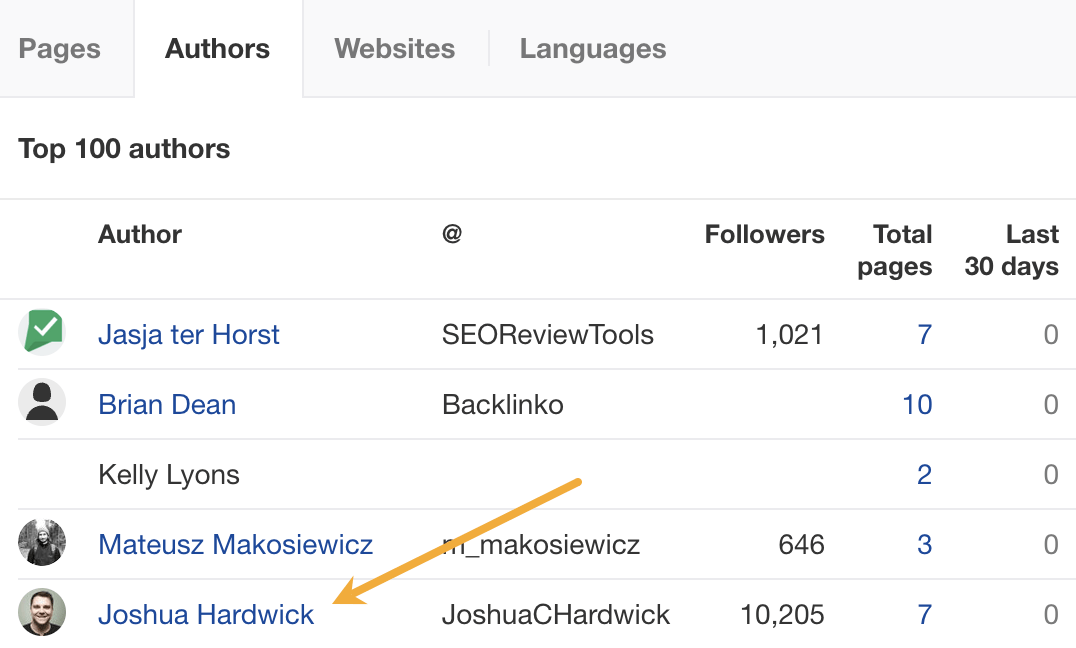

My advice? Look for alternative methods like finding and reaching out to experts directly. You can easily use tools like Content Explorer to find folks who’ve written lots of content about the topic and are likely to be experts.

For example, if you look for content with “backlinks” in the title and go to the Authors tab, you might see a familiar name. 😉

I don’t know if I’d call myself an expert, but I’d be happy to give you a quote if you reached out on social media or emailed me (here’s how to find my email address).

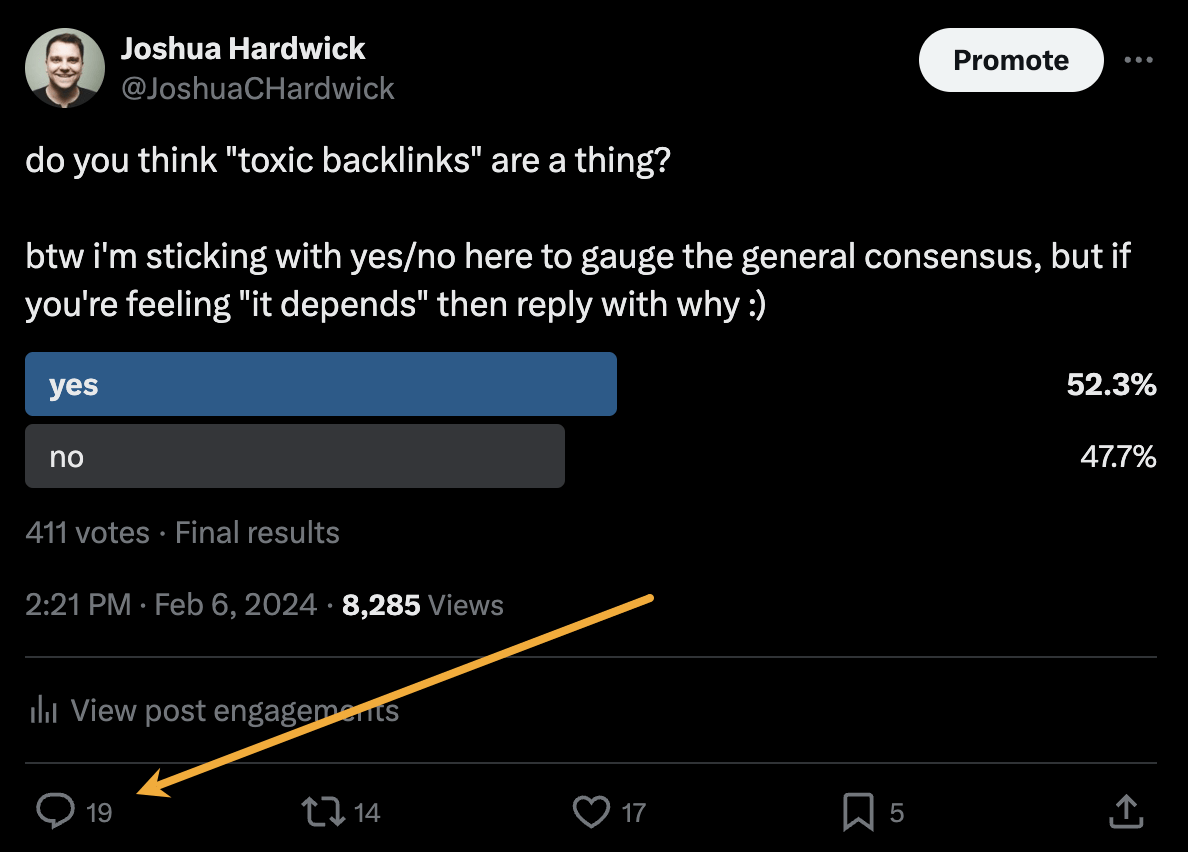

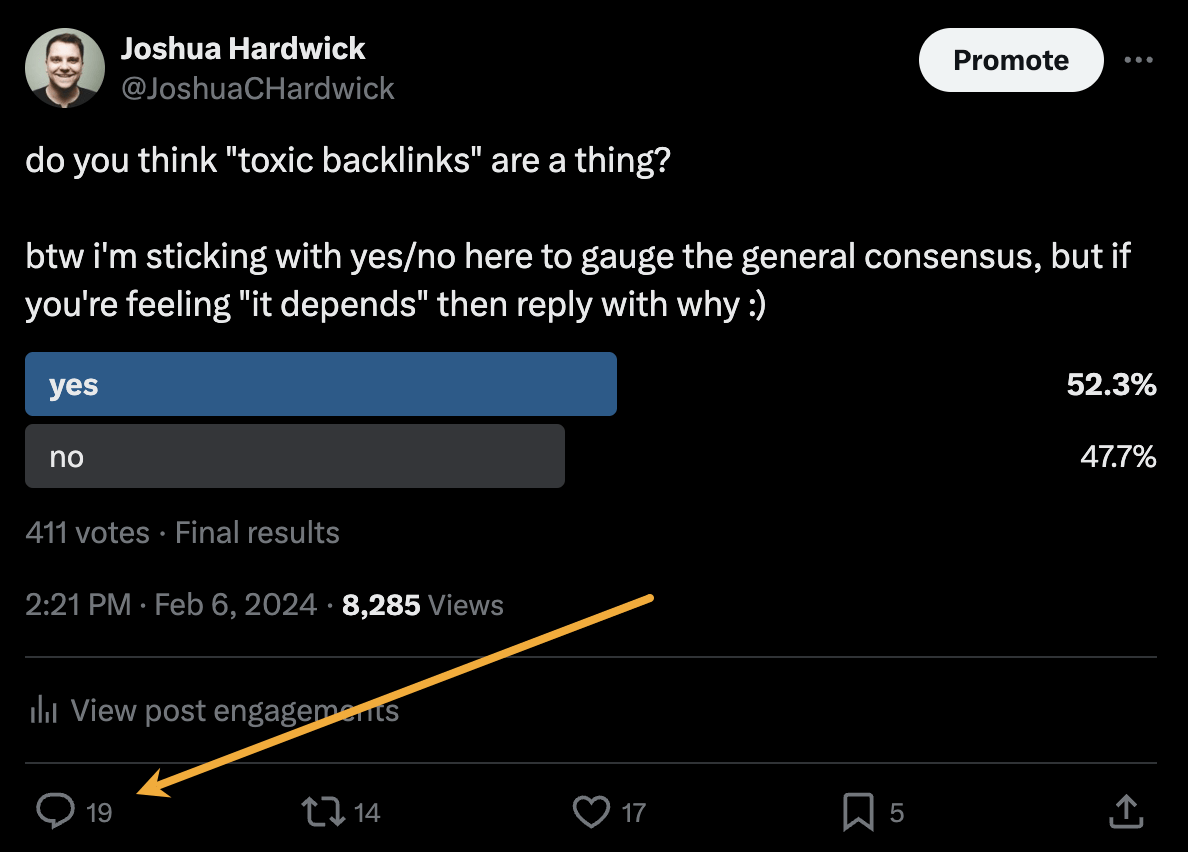

Alternatively, you can bait your audience into giving you their insights on social media. I did this recently with a poll on X and included many of the responses in my guide to toxic backlinks.

Either of these options is quicker than using HARO because you don’t have to sift through hundreds of responses looking for a needle in a haystack. If you disagree with me and still love HARO, feel free to tell me why on X 😉
