SOCIAL

WhatsApp Adds New Privacy Tools, Including Online Activity Controls and the Ability to Silently Leave Group Chats

Amid ongoing concerns about how it can be used to organize criminal activity, due to its default encryption process, WhatsApp has announced some additional privacy features, providing more assurance and control for users.

First off, WhatsApp’s giving users more control over how others see them in the app, with the option to switch off online activity markers, or restrict those signals to certain users.

As shown here, you’ll soon be able to decide who can see when you’re online in the app – ‘Everyone’, ‘Contacts’, ‘My Contacts Except’ or ‘Nobody’.

That’ll provide more capacity to avoid unwanted interactions by hiding your active status, which could be of significant value for users who want to go about their interactions in their own time and space.

WhatsApp’s also adding a new option to leave groups silently, so you can skip out of a group chat without alerting all group members.

As you can see, group admins will still know you’ve left the chat, but there won’t be a ‘John Doe has left the discussion’ notification for all users in the thread.

In addition to this, WhatsApp is also extending the time window for deleting your messages from your chats.

Rethinking your message? Now you’ll have a little over 2 days to delete your messages from your chats after you hit send.

— WhatsApp (@WhatsApp) August 8, 2022

And finally, WhatsApp’s also rolling out screenshot blocking for ‘View Once’ messages:

“View Once is already an incredibly popular way to share photos or media that don’t need to have a permanent digital record. Now we’re enabling screenshot blocking for View Once messages for an added layer of protection. We’re testing this feature now and are excited to roll it out to users soon.”

That could facilitate even more private sharing on WhatsApp, which may lead to more questionable material being shared, if that’s what people want. That specific aspect, though, has also been the focus of various authorities, in various regions, who have called on Meta to enable a level of messaging access for law enforcement, in order to avoid its apps being used for illegal activity, which is currently shielded by its privacy measures.

Recently, the UK National Cyber Security Centre published a research paper that proposed a new automated scanning process for WhatsApp, and other messaging tools, which would better facilitate the detection of illegal exchanges, while still maintaining privacy for users. The European Union has also proposed new legislation that would put more onus on Meta itself to detect and report any such activity within its platforms.

Thus far, Meta has resisted all calls to add in ‘back door’ access, or anything like it, arguing that the trade-off between all users’ privacy, and catching out the small percentage of criminal activity, is simply too great to consider.

As explained by WhatsApp chief Will Cathcart in response to the UK proposal:

“What’s being proposed is that we – either directly or indirectly through software – read everyone’s messages. I don’t think people want that.”

Indeed, Meta is actually still in the process of rolling out end-to-end encryption in all of its messaging tools, with both Messenger and Instagram Direct getting enhanced security features, in order to bring them into line with WhatsApp.

The next stage, then, will be to integrate all of its messaging platforms into a single back-end, facilitating cross-platform chat – though Meta has delayed the full implementation of this due to ongoing regulatory questions and concerns.

And there is valid concern here. An inarguable side effect of the connective capacity of social media is that while social platforms and messaging apps enable everyone to ‘find their tribe’, those tribes are not always wholesome communities of knitting enthusiasts and TV show fans.

Sometimes, those tribes are dangerous, even criminal. And with encryption hiding any such exchanges from everyone, there’s no telling just how significant this could be, and what types of activity WhatsApp could be facilitating through its circuits.

But as Cathcart notes, the alternative is that all of WhatsApp’s 2 billion active users lose their privacy, due to the actions of a likely few.

It’s a difficult argument, which looks set to carry on for some time yet.

SOCIAL

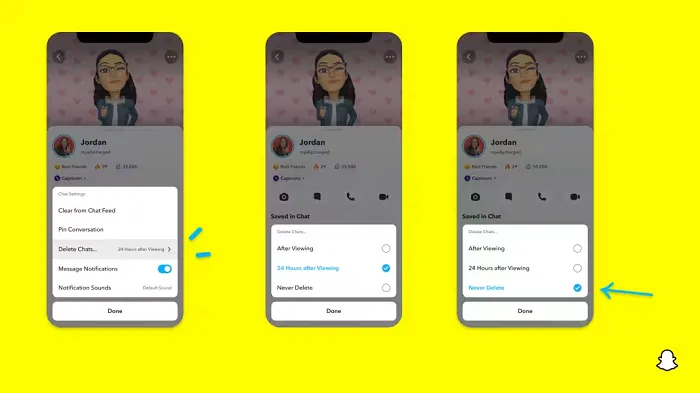

Snapchat Explores New Messaging Retention Feature: A Game-Changer or Risky Move?

In a recent announcement, Snapchat revealed a groundbreaking update that challenges its traditional design ethos. The platform is experimenting with an option that allows users to defy the 24-hour auto-delete rule, a feature synonymous with Snapchat’s ephemeral messaging model.

The proposed change aims to introduce a “Never delete” option in messaging retention settings, aligning Snapchat more closely with conventional messaging apps. While this move may blur Snapchat’s distinctive selling point, Snap appears convinced of its necessity.

According to Snap, the decision stems from user feedback and a commitment to innovation based on user needs. The company aims to provide greater flexibility and control over conversations, catering to the preferences of its community.

Currently undergoing trials in select markets, the new feature empowers users to adjust retention settings on a conversation-by-conversation basis. Flexibility remains paramount, with participants able to modify settings within chats and receive in-chat notifications to ensure transparency.

Snapchat underscores that the default auto-delete feature will persist, reinforcing its design philosophy centered on ephemerality. However, with the app gaining traction as a primary messaging platform, the option offers users a means to preserve longer chat histories.

The update marks a pivotal moment for Snapchat, renowned for its disappearing message premise, especially popular among younger demographics. Retaining this focus has been pivotal to Snapchat’s identity, but the shift suggests a broader strategy aimed at diversifying its user base.

This strategy may appeal particularly to older demographics, potentially extending Snapchat’s relevance as users age. By emulating features of conventional messaging platforms, Snapchat seeks to enhance its appeal and broaden its reach.

Yet, the introduction of message retention poses questions about Snapchat’s uniqueness. While addressing user demands, the risk of diluting Snapchat’s distinctiveness looms large.

As Snapchat ventures into uncharted territory, the outcome of this experiment remains uncertain. Will message retention propel Snapchat to new heights, or will it compromise the platform’s uniqueness?

Only time will tell.

SOCIAL

Catering to a specific audience boosts your business, says accountant turned coach

While it is tempting to try to appeal to a broad audience, the founder of alcohol-free coaching service Just the Tonic, Sandra Parker, believes the best thing you can do for your business is focus on your niche. Here’s how she did just that.

When running a business, reaching out to as many clients as possible can be tempting. But it also risks making your marketing “too generic,” warns Sandra Parker, the founder of Just The Tonic Coaching.

“From the very start of my business, I knew exactly who I could help and who I couldn’t,” Parker told My Biggest Lessons.

Parker struggled with alcohol dependence as a young professional. Today, her business targets high-achieving individuals who face challenges similar to those she had early in her career.

“I understand their frustrations, I understand their fears, and I understand their coping mechanisms and the stories they’re telling themselves,” Parker said. “Because of that, I’m able to market very effectively, to speak in a language that they understand, and am able to reach them.”

“I believe that it’s really important that you know exactly who your customer or your client is, and you target them, and you resist the temptation to make your marketing too generic to try and reach everyone,” she explained.

“If you speak specifically to your target clients, you will reach them, and I believe that’s the way that you’re going to be more successful.”

Watch the video for more of Sandra Parker’s biggest lessons.

SOCIAL

Instagram Tests Live-Stream Games to Enhance Engagement

Instagram’s testing out some new options to help spice up your live-streams in the app, with some live broadcasters now able to select a game that they can play with viewers in-stream.

As you can see in these example screens, posted by Ahmed Ghanem, some creators now have the option to play either “This or That”, a question and answer prompt that you can share with your viewers, or “Trivia”, to generate more engagement within your IG live-streams.

That could be a simple way to spark more conversation and interaction, which could then lead into further engagement opportunities from your live audience.

Meta’s been exploring more ways to make live-streaming a bigger consideration for IG creators, with a view to live-streams potentially catching on with more users.

That includes the gradual expansion of its “Stars” live-stream donation program, giving more creators in more regions a means to accept donations from live-stream viewers, while back in December, Instagram also added some new options to make it easier to go live using third-party tools via desktop PCs.

Live streaming has been a major shift in China, where shopping live-streams, in particular, have led to massive opportunities for streaming platforms. They haven’t caught on in the same way in Western regions, but as TikTok and YouTube look to push live-stream adoption, there is still a chance that they will become a much bigger element in future.

Which is why IG is also trying to stay in touch, and add more ways for its creators to engage via streams. Live-stream games are another element within this, which could make live-streams a better community-building, and potentially sales-driving, option.

We’ve asked Instagram for more information on this test, and we’ll update this post if/when we hear back.
