Facebook’s Stance on Political Ads Once Again Highlights a Common Flaw in its Policy Approach

There’s an old Saturday Night Live sketch, starring Norm Macdonald, called ‘Bible Challenge’, in which the competition is based on honesty: each contestant confesses to their knowledge of the Bible, and wins points based on whether they say they knew the answer or not (apologies for the low-quality clip).

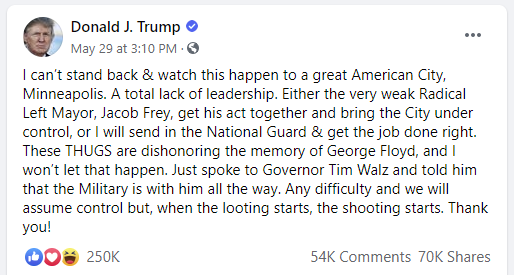

This came to mind when contemplating Facebook’s current approach to political ads and posted commentary from elected officials. Facebook’s stance once again came under scrutiny this week after Twitter decided to add a fact-check warning to two tweets from US President Donald Trump. That prompted Trump to call for changes to the laws which protect social platforms from liability for the content that users post on their platforms – if the platforms are going to edit people’s posts, then the current regulations should no longer apply, according to the Trump administration.

If any change is made to these laws, that would impact Facebook as well, and arguably even more so, given The Social Network has more than 10x as many daily active users. So where does Facebook stand on the matter?

As you would expect, Facebook says that any such change would have an adverse impact.

“Repealing or limiting Section 230 […] will restrict more speech online, not less. By exposing companies to potential liability for everything that billions of people around the world say, this would penalize companies that choose to allow controversial speech and encourage platforms to censor anything that might offend anyone.”

But Facebook has also stood by its decision not to take action on the same statements from President Trump that Twitter has, which Trump has also re-posted on his Facebook Page.

As per Facebook CEO Mark Zuckerberg:

“I disagree strongly with how the President spoke about this, but I believe people should be able to see this for themselves, because ultimately, accountability for those in positions of power can only happen when their speech is scrutinized out in the open.”

So Facebook is still sticking to its guns, and will not subject posts from politicians to fact checks.

Is that the right approach, or does it create a dangerous situation where influential leaders can say whatever they want, unchecked, unhindered, and able to reach a very large audience?

The answer is not simple – for better or worse, Facebook’s approach does make some sense.

Facebook’s stance, which I don’t think it has communicated well, is that these people are elected officials, the leaders that the voting public has chosen. Therefore, we have a right to hear what they have to say, good or bad, true or not. As they are the leaders chosen by the majority, it should be up to the people to then judge their public actions, which, in part, is based on what they say. If anything, social platforms merely add transparency, and for every post and comment, the voters can judge for themselves how they feel, and make a more informed decision on their support (or not).

It’s not an illogical position, but it once again highlights a common flaw in Facebook’s policy approach: Facebook errs on the side of optimism and assumes the best in people, while overlooking the potential negatives.

That’s what led to the Cambridge Analytica situation – Facebook gave various academic groups access to vast collections of user data, under the promise that none of them would use such insight for any purpose beyond their stated research demands. Of course, at least one of them did, and in retrospect, it seems overly optimistic of Facebook to have assumed that nobody would be tempted to misuse its powerful audience insights for such purposes. But Facebook didn’t have any systems or processes in place to stop this; it just assumed nothing would go wrong. Until it did.

The same thing happened with Facebook’s SDK – Facebook gave developers full access to user insights, under the provision that they would only gather people’s personal information if they needed it. Many apps ended up sucking in huge amounts of people’s personal data, and not only on the users of their apps, but also their friends and family, who were connected to them through Facebook’s expanded network.

The developers shouldn’t have been able to access so much data, and Facebook has since implemented significant restrictions on that access. But Facebook, again, didn’t consider the potential negatives of this process – it seemingly just hoped that developers wouldn’t misuse its tools.

At best, Facebook failed to consider the potential for misuse in both cases. Which brings us back to its stance on political ads.

As noted, Facebook’s approach does make some sense – these are the people that we have elected, and we have a right to hear whatever they have to say. The flaw, however, is in how Facebook assumes that will play out.

As explained by Zuckerberg last October:

“I believe in giving people a voice, because at the end of the day, I believe in people. And as long as enough of us keep fighting for this, I believe that more people’s voices will eventually help us work through these issues together and write a new chapter in our history — where from all of our individual voices and perspectives, we can bring the world closer together.”

Again, the principle is that the people can judge for themselves, but that also assumes that people will have the capacity to separate truth from fiction on their own, and that leaders won’t share outright lies or misinformation to challenge that. Which, as we’ve seen repeatedly, is not the case.

As an example, back in March, Brazilian President Jair Bolsonaro urged cities to remain open as normal amid the COVID-19 outbreak, saying that:

“We must return to normality – the few states and city halls should abandon their scorched-earth policies.”

Bolsonaro has constantly downplayed the pandemic, labeling it ‘a little flu’ and ‘nothing to be afraid of’.

This is an elected official, so according to Facebook’s approach, the Brazilian people should be free to decide if these statements are true or not. But in this case, that’s an extremely dangerous approach.

Many people will take Bolsonaro’s advice based on his word alone, which could lead to them heading out, against official health advice. Almost 29,000 Brazilians have been killed by COVID-19 thus far, while the WHO has said that the nation is now the new epicenter for the pandemic. As such, Bolsonaro’s statement, in retrospect, is fairly dangerous, and the fact that the President gave that advice has lent significant weight to a counter-narrative that has almost undoubtedly led to more deaths.

The risks in this case would outweigh the transparency benefits.

But that’s basically Facebook’s approach, which brings me back to that SNL sketch from years ago. The flaw in Facebook’s stance on political content is that it won’t fact-check such content because it trusts that those involved simply won’t gratuitously misuse their capacity.

Norm Macdonald’s character wins ‘Bible Challenge’ not because he is the most honest, but because he’s the opposite – yet the rules of the game don’t account for his behavior.

In principle, the idea of not implementing fact checks on posts from political leaders makes some sense. But in practice, it might just make it easier for the most unscrupulous candidates to win.