Federal Court Approves Facebook’s $5 Billion Privacy Agreement with the FTC Over Cambridge Analytica

Facebook is looking to move on from the Cambridge Analytica scandal: a federal court this week approved the agreement the company reached with the FTC last July, under which it will pay a $5 billion fine and implement strict new data privacy measures.

As explained by Facebook’s Chief Privacy Officer Michel Protti:

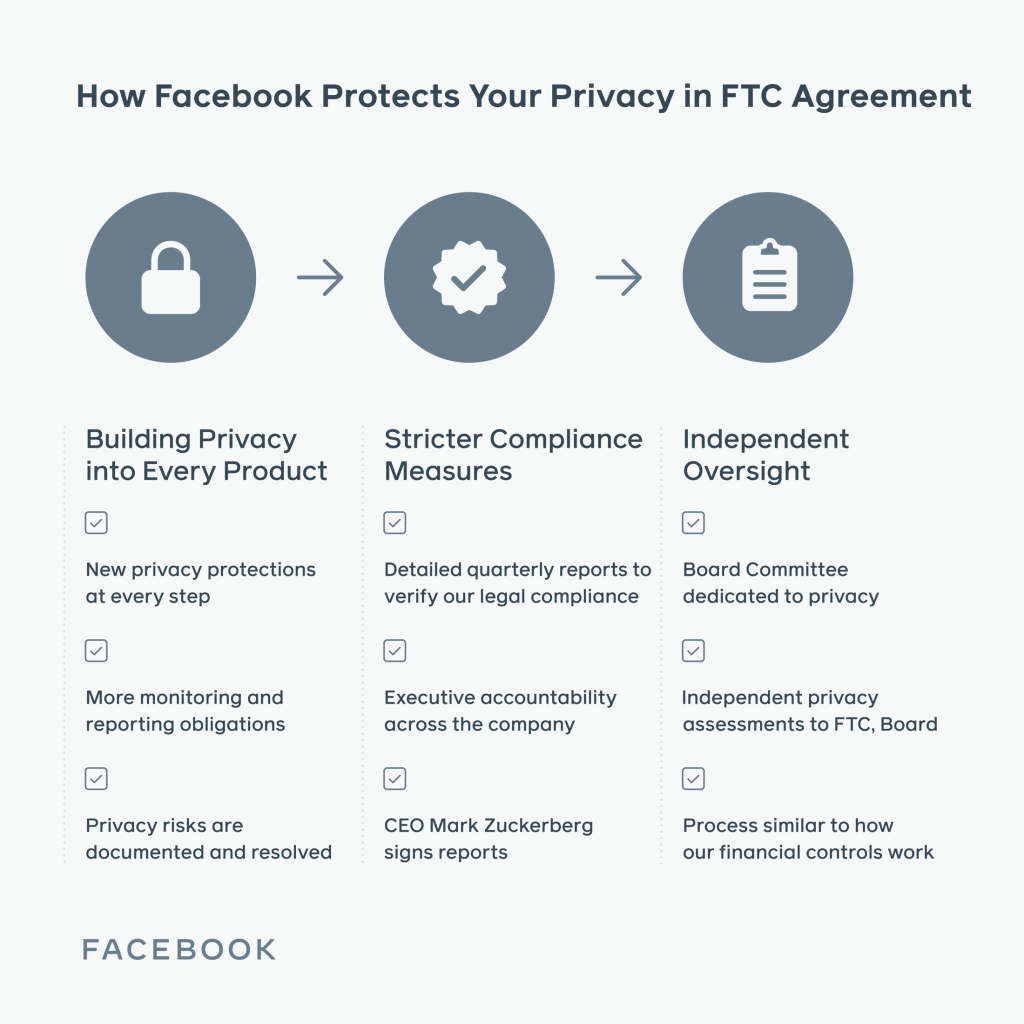

“On Thursday, a federal court officially approved the agreement we reached with the Federal Trade Commission (FTC) last July. This concludes the FTC’s investigation that began after the events surrounding Cambridge Analytica in 2018. […] With this agreement now in place, executive leaders at the company, including our CEO, will now certify our compliance with it quarterly and annually to the FTC. We are also creating a new Privacy Committee on our Board of Directors that will be comprised solely of independent directors, and we’ll work with a third-party, independent assessor who will regularly and directly report to the Privacy Committee on our privacy program compliance.”

As noted, the agreement was first announced last July, along with a record fine for breaches stemming from the way Cambridge Analytica accessed and utilized Facebook audience data for targeted political campaigning.

The FTC reiterated the significance of the fine at the time:

“The $5 billion penalty against Facebook is the largest ever imposed on any company for violating consumers’ privacy and almost 20 times greater than the largest privacy or data security penalty ever imposed worldwide. It is one of the largest penalties ever assessed by the U.S. government for any violation.”

After a long investigation into its processes, Facebook agreed that it had failed in its duty to protect user data, and has since committed to improving its systems in line with the FTC agreement.

Those improvements, thus far, have included:

The agreement will now go into full effect, with Facebook being held to these rulings on various fronts, including additional reporting and transparency requirements, along with the appointment of its Privacy Committee.

Facebook has already been working towards implementing this strategy, so the federal court’s approval is largely a formality in this respect.

As noted by Protti:

“This agreement has been a catalyst for changing the culture of our company. We’ve changed the process by which we onboard every new employee at Facebook to make sure they think about their role through a privacy lens, design with privacy in mind from the beginning and work proactively to identify potential privacy risks so that mitigations can be implemented.”

Indeed, Facebook has made its data collection processes more transparent, and has provided simplified privacy tools to help people control what data Facebook and partner businesses are able to access. Facebook has also significantly limited access to its API, restricting third-party connection to its graph, including for academic organizations, which is how Cambridge Analytica first gained access to its information.

Facebook has certainly improved its systems, but it’s also worth noting that it is still taking in a lot of data. Facebook is still gathering info on your every action across Facebook, Instagram, WhatsApp and Messenger, and it still uses that information to fuel its all-powerful ad targeting. At some point, there will be another significant controversy around Facebook’s data usage practices, because data is where Facebook wins, where it beats out everyone else, and what enables it to provide some of the best ad targeting options available.

But next time, Facebook will be able to point to its agreements and show regulators that its users actually agreed to this, which is where regulatory and enforcement action reaches its limit. Yes, you can force Facebook to make its users more aware of how their data is being used. But if the users themselves choose to accept those processes, and agree to Facebook’s data usage policies, then they’re largely on their own.

And most people do accept them. No one reads the fine print, and people sign up to social platforms because their friends are on them. So what if Facebook uses some of your data for targeted ads? It’s no problem, right?

The scope of potential data usage is difficult to outline in this respect. In isolation, one person’s data doesn’t mean a lot, but when you’re dealing with data collected from 2.5 billion people, that data set can provide significant insight.

Letting it get into the wrong hands is something that Facebook is now increasingly wary of, but Facebook still has it, and its old data sets are still out there. Others can also still utilize Facebook’s systems to target ads in a wide range of ways.

Nevertheless, in a legal sense, Facebook is now closing the book on the Cambridge Analytica debacle.

You can read more about Facebook’s final agreement here.
