
Instagram’s Testing a New, Full-Screen Main Feed of Feed Posts, Stories and Reels Content

It’s been on the horizon for a while, given the evolving usage trends in the app. And now it looks a step closer to reality, with Instagram testing a new, fully integrated home feed that would do away with the top Stories bar and present everything in an immersive, full-screen, swipeable UI.

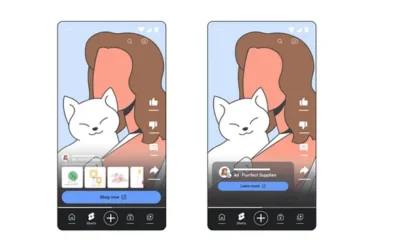

As you can see in this example, posted by app researcher Alessandro Paluzzi, the experimental Instagram feed would include regular Feed posts, Stories and Reels all within a single flow.

Stories would be presented with a frame bar at the bottom of the display, indicating that you can swipe left to see the other frames, while videos have a progress bar instead.

It’s a more intuitive, and more logical, way to present Instagram content, which would also align with evolving, TikTok-led usage trends. The update would also enable algorithmic improvements based on your response to each specific post, as opposed to the current format, which presents content in different ways and often shows more than one post on screen at a time.

This is where TikTok has been able to gain its most significant advantage. Because all TikTok clips are displayed one at a time, in full-screen, everything you do while viewing a clip can be used as a measure of your response to that specific content. If you tap ‘Like’ on a clip, watch it all the way through, let it play twice, or swipe back to it again, every response is specific to that video. That gives TikTok an edge in determining the specific elements of interest in each clip, which it can then align with your profile to improve your feed recommendations.

That’s why TikTok’s feed is so addictive. And while Instagram Reels are presented in the same way, Instagram hasn’t yet been able to crack the algorithm as effectively as TikTok, whose approach fuels its more immersive, more addictive ‘For You’ content stream.

This new presentation style could help to change that, and would be a big step in moving into line with the broader TikTok trend, which shows no sign of slowing as yet. And given that Reels is now the largest contributor to engagement growth on Instagram, and users spend more time with Stories than they do with their main feed, it makes perfect sense.

Again, I’ve been predicting that this would happen for the last two years – and really, the only surprise here is that it’s taken IG this long to actually move to live testing of the format.

Which, it’s important to note, hasn’t begun just yet. This is a back-end prototype at present, and it may never see the light of day. But it probably will, and given the state of its development, as shown here, I’d expect to see it soon, giving users a whole new way to engage with all of Instagram’s different content formats, while also aligning with the platform’s stated push on video content.

Indeed, back in December, Instagram chief Adam Mosseri said that video would be a key focus for IG in 2022.

“We’re going to double-down on our focus on video and consolidate all of our video formats around Reels”

This seems like the logical next step on this front, and another repositioning in Instagram’s face-off against TikTok, aimed at countering TikTok’s rising dominance in the space.
