New Report Shows That 74% of People Don’t Believe Tech Platforms Will Be Able to Stop Political Manipulation

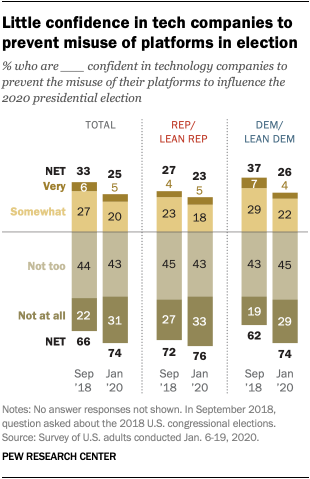

In what will likely come as little surprise, a new study from Pew Research has shown that 74% of Americans have little to no confidence that tech companies, including Facebook, Twitter and Google, will be able to prevent the misuse of their platforms to influence the outcome of the 2020 presidential election.

As you can see here, trust levels are similar across the political divide this time around, which differs slightly from the same survey in 2018.

As per Pew:

“Confidence in technology companies to prevent the misuse of their platforms is even lower than it was in the weeks before the 2018 midterm elections, when about two-thirds of adults had little confidence these companies would prevent election influence on their platforms.”

Again, that’s not a major surprise. Another survey published by Pew earlier this month also found that both Republicans and Democrats “register far more distrust than trust of social media sites as sources for political and election news”, with 59% of respondents specifically noting that they do not trust the news content they see on Facebook.

So people already don’t trust the news they’re seeing on social platforms. Given this, it makes sense that they also have little faith that those platforms can prevent manipulation. And while each platform has implemented new measures to better protect users and weed out “inauthentic” actions, the data suggests that it’s not enough.

It’s worth noting too that this latest survey was conducted between January 6th and January 19th, 2020, and incorporates responses from 12,638 people.

But while users don’t currently have a lot of faith, they do believe that technology companies should do more to stop the spread of misinformation.

As you can see here, 78% of respondents agree that tech companies “have a responsibility to prevent the misuse of their platforms to influence the 2020 Presidential election”.

So, to recap, people don’t trust the news they’re seeing on digital platforms, and have little faith that the situation will improve, even though they feel that the providers have a responsibility to improve it.

The bigger question then is “does that matter?”

I don’t mean that in a moralistic sense; of course it matters that people are potentially being manipulated. I mean in terms of practical impact: will people, for example, stop getting their news content from Facebook and other platforms because of the lack of trust they expressed in their responses?

Do you want to know the answer?

Historical evidence shows that people won’t stop using Facebook as a result of these trends. They probably should, right? If people believe that they may well be manipulated by social media news coverage, maybe it’d be better to get off these apps and stop getting their news coverage from them. But that won’t happen.

Case in point: in yet another report, Pew’s researchers found that in 2016, the year of the last Presidential election, 62% of Americans got at least some of their news content from social media. Then came 2018, after all the discussion around foreign interference and manipulation, and amid all the coverage of social media misuse by political activists. After all that, guess what happened?

More people now get more of their news through social media. So while it’s one thing for people to say ‘we don’t trust what we see’, it’s another to actually get them to act on it and stop using social channels as a news source.

Because that’s hard to do. More than just content, social platforms provide engagement, and the dopamine rush of likes and shares. That can be addictive – so while people don’t necessarily agree with what they’re seeing online, they do like to engage with it, they like to argue against it, to virtue signal in the comments. If you’re looking for the reason why we’re so divided along political lines these days, look to the engagement that people see in disagreement, the allure of the battle which few can resist.

Sure, I might dislike my uncle’s views on climate change, for example, which he regularly shares on Facebook. But you can bet that in quiet moments, I’m going to check in on his posts. Because it’s addictive, the anger and outrage, like poking a wound to feel that little twinge of pain. It solidifies you in your beliefs, and when you finally feel the need to respond and call him out, there’s a rush in that engagement.

It’s not surprising that people distrust Facebook as a news source in this sense. But they’re still going there for the fight. And I would argue that Facebook is okay with that, as opposed to feeling any significant need to play referee and quell disagreement.

So while this new survey doesn’t reveal any amazing insights, it is interesting to note what it suggests, in terms of broader behavioral trends, and what that means for civic discussion and engagement.