Facebook May Soon Enable Users to Share their Instagram Reels to Facebook Watch

As TikTok continues to grow, Facebook continues to look for new ways to limit its expansion, and stop users from migrating away from its own apps.

A key weapon for Facebook on this front is scale. It may not be able to compete with TikTok in terms of fun, nor with the personalization of TikTok’s algorithm. But Facebook can offer creators more reach, by showcasing their Reels clips to Instagram’s billion-plus users.

And it can actually provide significantly more reach potential than just that.

Back in December, Facebook began testing a new option which would enable Reels creators to also share their Reels clips into the Facebook News Feed and to Facebook Watch, facilitating a potentially huge expansion of Reels exposure.

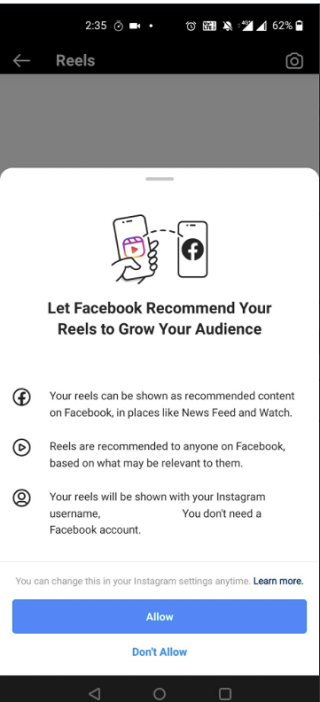

And now, it seems that Facebook’s advancing on this front, with a new, more polished prompt spotted in testing.

As you can see in this new, full-screen explainer, posted by user @VarunBanur (and shared by social media expert Matt Navarra), Facebook is now prompting some Reels creators to share their Reels clips to Facebook, where they can be recommended to any of Facebook’s 2.8 billion users, greatly expanding reach.

That could prove to be a powerful lure for Reels creators – though interestingly, this test currently appears to be running in India, where Instagram initially launched Reels as a replacement for the banned TikTok.

TikTok reportedly had around 200 million Indian users at the time of its banning in the nation, and Instagram swooped in to launch Instagram Reels within days of TikTok’s removal, seeking to capitalize on this newly orphaned audience. Instagram says that Reels has seen steady growth in the Indian market, and adding an option to share Reels clips to Facebook would expand its audience potential, likely putting its reach on par with YouTube, which is also trying to gather up former TikTok users in India via its own ‘Shorts’ option.

Given this, the Facebook integration may not necessarily be about beating out TikTok as such, since TikTok isn’t even present in the test region. But still, it would provide Facebook with another powerful lure for Reels, which could help fend off competitors while also potentially boosting the revenue potential of its short video option.

Right now, the option is not functional, so it hasn’t yet reached the full live testing phase. But it’s an interesting consideration – by providing more audience potential, Facebook could look to win over TikTok creators by giving them more opportunity to build an audience and generate income from their efforts.

TikTok has yet to establish a solid structure for creators to make money from the platform – but with projections that the app will reach a billion users in 2021, it’s evolving fast, and it likely won’t take long for TikTok to establish a more sustainable revenue-generation process.

Which probably means that Facebook needs to work quickly to win more creators over. There’s no word on if or when this new option might go live, or be released to more regions. But it once again underlines the rising influence of TikTok, and how it’s spooked The Social Network with its rapid growth.
