
Facebook Outlines New Measures to Better Detect and Remove Child Exploitation Material

Facebook has announced some new measures to better detect and remove content that exploits children, including updated warnings, improved automated alerts and new reporting tools.

Facebook says that it recently conducted a study of all the child exploitative content it had previously detected and reported to authorities, in order to determine the reasons behind such sharing, with a view to improving its processes. Its findings showed that many instances of such sharing were not malicious in intent, yet the damage caused is the same, and the content still poses a significant risk.

Based on this, it has now improved its policies, and added new, variable alerts to deter such behavior.

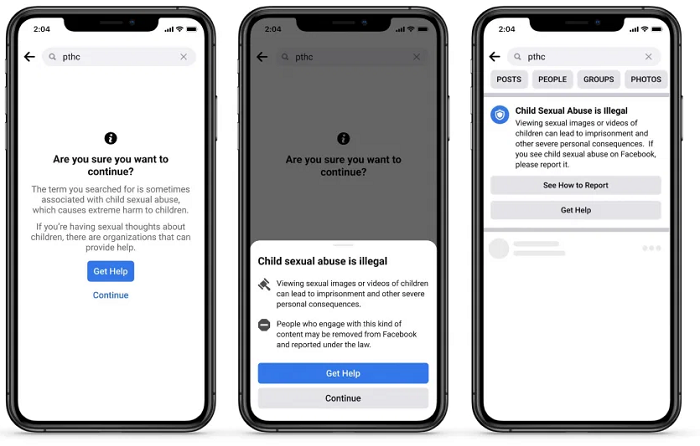

The first new alert is a pop-up that will be shown to people who search for terms commonly associated with child exploitation.

As explained by Facebook:

“The pop-up offers ways to get help from offender diversion organizations and shares information about the consequences of viewing illegal content.”

This is designed to address incidents where users may not be aware that the content they’re sharing is illegal, and could pose a risk to the child or children involved.

The second alert type is more serious, informing people who have shared child exploitative content about the harm it can cause, while also explicitly outlining Facebook’s policies on, and penalties for, such sharing.

“We share this safety alert in addition to removing the content, banking it and reporting it to NCMEC. Accounts that promote this content will be removed. We are using insights from this safety alert to help us identify behavioral signals of those who might be at risk of sharing this material, so we can also educate them on why it is harmful and encourage them not to share it on any surface – public or private.”

Those insights could be critical in developing the next advance in its detection and deterrent tools, while the alerts also provide clear and definitive warnings to current offenders.

Facebook has also updated its child safety policies in order to clarify its rules and enforcement around not only the material itself, but also contextual engagement:

“We will remove Facebook profiles, Pages, groups and Instagram accounts that are dedicated to sharing otherwise innocent images of children with captions, hashtags or comments containing inappropriate signs of affection or commentary about the children depicted in the image. We’ve always removed content that explicitly sexualizes children, but content that isn’t explicit and doesn’t depict child nudity is harder to define. Under this new policy, while the images alone may not break our rules, the accompanying text can help us better determine whether the content is sexualizing children and if the associated profile, Page, group or account should be removed.”

Facebook has also improved its user reporting flow for such violations, and these reports will now be prioritized for review.

This is one of the most critical areas of focus for Facebook. With almost 3 billion users, it’s inevitable that there will be criminal elements looking to use and abuse its systems for their own purposes, and Facebook needs to ensure that it’s doing all it can to detect predatory activity and protect younger people from it.

On a related front, Facebook has come under significant scrutiny in recent times over its plan to offer message encryption by default across all of its messaging apps, which child welfare advocates say will enable exploitation rings to use its tools beyond the reach of authorities. Various government representatives have joined calls to block Facebook from shifting to encryption models, or to have the company work with law enforcement to provide ‘back door’ access as an alternative. That could end up being another court challenge for Facebook to contend with in the coming months.

Last year, the National Center for Missing and Exploited Children (NCMEC) reported that Facebook was responsible for 94% of the 69 million child sex abuse images reported by US technology companies. The figures underline the need for increased action on this front, and while these new measures from Facebook are critically important, it’s clear that more needs to be done to address the concerns around message encryption and its impact on the capacity to detect offenders.
