Facebook Provides New Resources to Help Gaming Creators Manage their Communities

As Facebook pushes further into gaming, and game streaming in particular, The Social Network is also being forced to address trolling and other types of anti-social behavior which have, unfortunately, become common in some corners of the community.

Some of that is due to varying levels of tolerance within different groups, and the unclear boundaries between them.

As explained by Facebook:

“While our Community Standards protect against the most egregious harms like hate speech and terrorism, sometimes all it takes is one person being rude, mean or simply disruptive to ruin a conversation for everyone. And what may be considered competitive banter in one streaming community, might be considered toxic in another.”

To address the latter point, Facebook has worked with the Fair Play Alliance, a coalition of game companies working to encourage healthy communities in online gaming, to establish a new set of rules that creators and moderators can apply to set clearer guidelines around acceptable behavior within their gaming streams and discussions.

“People form communities over a shared love of gaming, but we know some groups of people, like women, can be targets of negative, hurtful stereotypes – so, rules like “Be Accepting” and “Respect Boundaries” can help maintain a positive environment for everyone, regardless of race, ethnicity, sexual orientation, gender identity or ability. Similarly, “Don’t Criticize” can help newer players feel welcome. The rules will promote inclusion and respect to help people feel safe sharing their voice.”

The rules are not compulsory, but gaming creators who want to establish more definitive parameters within their communities will be able to access them via a new “Chat Rules” button in the streamer dashboard before going live.

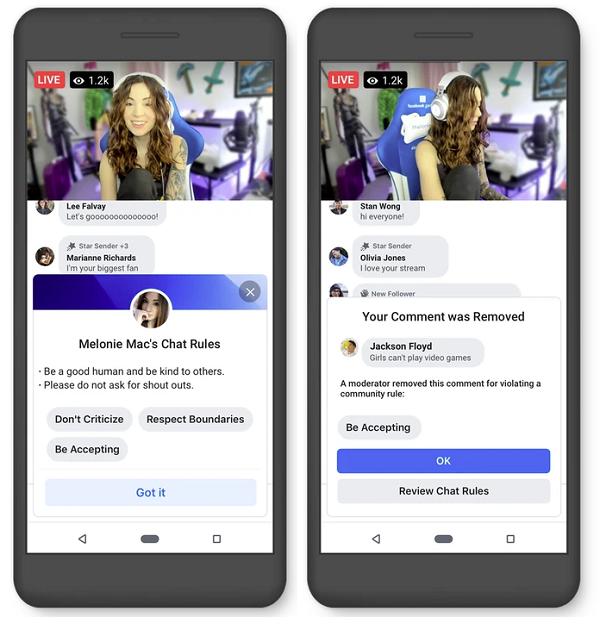

As displayed here, once a streamer taps on ‘Chat Rules’, they’ll be able to select from a list of gaming-specific rules for their community. They’ll also be able to add a custom description about their stream, in order to set clearer expectations around the types of conversations they want to facilitate.

“Once a creator selects rules from the Chat Rules section of the streamer dashboard, fans will be asked to accept the rules before they’re allowed to leave a comment.”

You can see this in action in the above screenshot on the left.

In addition to this, Facebook has also improved its comment removal process, so removed comments will disappear from the stream more quickly, while moderators will now also have access to a new moderation dashboard within streams, where they’ll be able to give fans feedback as to why their content was removed.

The new tools are being made available to a selected group of game streamers to begin with, before an expanded roll-out in future.

As noted, Facebook has been making a bigger push into gaming of late, with dedicated game streamer programs and revenue-sharing options to incentivize participation.

And those efforts are paying off – earlier this month, CNBC reported that Facebook Gaming saw its live-stream gaming market share jump from 3.1% in 2018 to 8.5% in 2019.

Twitch is still the clear leader, but with Microsoft and Google offering big deals for top creators, and Facebook gaining more traction while offering the largest potential reach, Twitch will have its work cut out to maintain its place.

And with the global gaming market set to grow to $196 billion by 2022, you can see why the big players are working hard to refine and improve their offerings. As we noted recently, you may not watch gaming content yourself, and you may not be interested in gaming live-streams, or gaming culture more generally. But the influence of gaming is massive, and as younger users who’ve grown up with functions like live-streaming move into adulthood, and into more viable spending demographics, you can expect the focus on gaming to evolve in step.