Twitter Moves to the Next Stage of Testing with its ‘Birdwatch’ Crowdsourced Fact-Checking Program

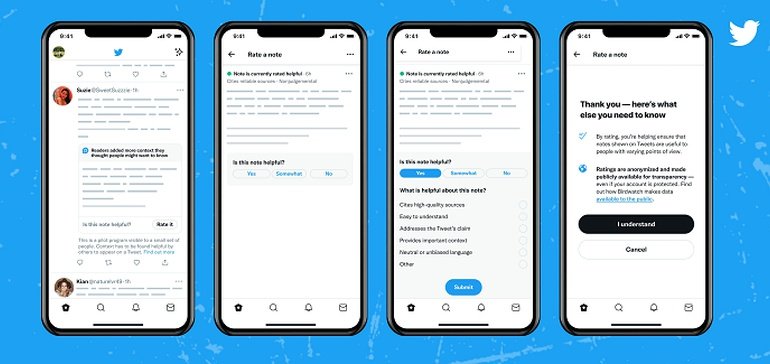

After a year of testing, Twitter has announced some new updates to its Birdwatch crowdsourced fact-checking program, which enables Twitter users to add notes to Tweets that they believe contain misleading information.

As you can see, Birdwatch lets users attach notes to tweets, which can provide more context to future readers. That could be problematic, in that people could use it as a tool to silence dissenting opinions, but Birdwatch notes don’t limit a tweet’s reach or performance. They merely provide more context to those that seek it. And if a lot of people are saying that a claim is false, it probably is. Twitter’s also working with official fact-checking groups and journalists to add more credibility to the notes.

And now, Twitter’s looking to take Birdwatch to the next stage:

“Starting today, a small (and randomized) group of people on Twitter in the US will see Birdwatch notes directly on some Tweets. They’ll also be able to rate notes, providing input that will help improve Birdwatch’s ability to add context that is helpful to people from different points of view.”

As you can see in these example screenshots, some users will now see Birdwatch notes displayed upfront on tweets in their timeline, and they’ll also be prompted to rate that supplemental information, which will help Twitter gauge how useful each note is.

That’ll no doubt raise the ire of free speech activists, who already feel that social platforms are over-stepping the mark on fact-checking, but it could be a simple, valuable way to facilitate crowd-sourced fact-checking, while also reducing the impact of questionable claims.

But again, it could also be problematic. You can imagine that some groups will ‘brigade’ these reports if they can, in order to counter claims they don’t like or agree with – though Twitter does have some additional qualifiers for its displayed notes.

“To appear on a Tweet, notes first need to be rated helpful by enough Birdwatch contributors from different perspectives. Difference in perspectives is determined by how people have rated notes in the past, not based on demographics.”

So there is a weighting of some sort applied to Birdwatch ratings, which could reduce bias, at least to some degree.
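Twitter hasn’t spelled out the exact mechanics here, but the general idea can be illustrated with a rough sketch. In the example below, every name and threshold is an assumption made for illustration (`agreement`, `should_display`, `min_helpful`, `max_agreement`), and it is not Twitter’s actual algorithm. It treats two contributors as having different perspectives when their past ratings on the same notes have mostly disagreed, and it only surfaces a note once enough people have rated it helpful and at least two of them differ in that way.

```python
from itertools import combinations


def agreement(history_a, history_b):
    """Fraction of co-rated notes on which two raters gave the same rating.

    Each history is a hypothetical dict of {note_id: rated_helpful (bool)}.
    Returns None when the two raters have no notes in common.
    """
    shared = history_a.keys() & history_b.keys()
    if not shared:
        return None  # no overlap, so no evidence either way
    same = sum(history_a[n] == history_b[n] for n in shared)
    return same / len(shared)


def should_display(helpful_raters, histories, min_helpful=3, max_agreement=0.5):
    """Decide whether a note is shown on a tweet (illustrative rule only).

    helpful_raters: contributors who rated this note helpful.
    histories: past ratings per contributor, used to infer perspective.
    min_helpful and max_agreement are assumed thresholds, not Twitter's.
    """
    if len(helpful_raters) < min_helpful:
        return False
    # Require at least one pair of helpful raters who usually disagree.
    for a, b in combinations(helpful_raters, 2):
        score = agreement(histories[a], histories[b])
        if score is not None and score <= max_agreement:
            return True
    return False


histories = {
    "alice": {"n1": True, "n2": False, "n3": True},
    "bob": {"n1": True, "n2": False, "n3": True},
    "carol": {"n1": False, "n2": True, "n3": True},
}

print(should_display(["alice", "bob", "carol"], histories))
print(should_display(["alice", "bob"], histories, min_helpful=2))
```

In this toy data, alice and bob have always rated notes the same way, so their agreement alone would not be enough to display a note. Carol has disagreed with both of them on most notes, so a note that all three find helpful would clear the bar. A rule like this is also why brigading by a single like-minded group would, in theory, be harder to pull off.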

But the process is still a work in progress, which is why Twitter’s taking its time, and only launching this new update to a small group to begin with.

As explained by Twitter’s GM of Consumer Services Kayvon Beykpour:

“An open and community-driven program like this is extremely ambitious (we look to Wikipedia as a source of inspiration here), and ultimately only effective if it’s able to result in high quality and informative content consistently, at scale, and through self-correcting incentives. Everything we’ve learned so far makes us feel even more encouraged by the potential for impact as Birdwatch scales.”

Indeed, there have been some encouraging signs for the program thus far, with Twitter reporting that those who’ve viewed Birdwatch notes are 20-40% less likely to agree with the substance of a potentially misleading Tweet, while the majority of users who’ve seen them have found the Birdwatch notes to be helpful.

Twitter’s still working through the full details, and it has made various changes to the process, including ensuring diversity among Birdwatch participants and adding Birdwatch aliases so people can make reports without fear of being identified and targeted for their comments.

It’s an ambitious program, and it’s still too early to say whether Birdwatch will prove valuable, but the concept has merit. It uses a Reddit-style crowd-sourcing approach to counter questionable claims, without Twitter having to introduce Reddit-style downvotes.

And it could prove to be a valuable addition to the platform’s broader efforts to detect misleading content. Despite the test running for a year, however, it’s still really early days, and it seems right for Twitter to take a cautious approach with this next stage.
