WhatsApp Adds Encryption for Chat Back-Ups, Closing a Loophole in its Privacy Systems

Facebook’s looking to expand WhatsApp’s message privacy options even further, by giving users the option to encrypt their message back-ups as well, adding another layer of security to their private WhatsApp communications.

Right now, all WhatsApp messages are end-to-end encrypted by default, which has become a key value proposition for the app amid rising concerns about digital data trails and maintaining privacy.

Soon, that will be extended to your data history as well – as explained by WhatsApp:

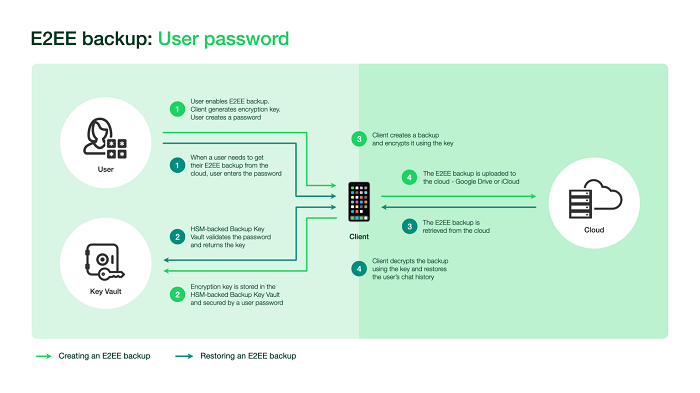

“People can already back up their WhatsApp message history via cloud-based services like Google Drive and iCloud. WhatsApp does not have access to these backups, and they are secured by the individual cloud-based storage services. But now, if people choose to enable end-to-end encrypted (E2EE) backups, neither WhatsApp nor the backup service provider will be able to access their backup or their backup encryption key.”

The measure will provide extra assurance for WhatsApp users, which is likely important given the perceptual hit the platform took earlier this year when it announced an update to its privacy policy. That change, which allows some additional data sharing between WhatsApp and parent company Facebook, was perceived by many to be a watering down of WhatsApp’s fundamental approach to individual privacy, and as a result, many users switched to alternative messaging platforms in order to get away from the prying eyes of Zuckerberg and his cohorts.

The update wasn’t a breach of WhatsApp’s long-standing data privacy approach, and only related to communications between individuals and businesses in WhatsApp, and subsequent outreach targeting as a result. But still, the backlash was significant enough for WhatsApp to delay the change to better explain, and for Facebook execs to go on a PR push to stem the tide of users looking to abandon the platform.

We don’t know how big an impact the controversy actually had on WhatsApp usage, but WhatsApp could definitely use a new feature like this to reinforce its privacy stance, and to underline to its users that nobody can access their private messages, not even those within WhatsApp itself.

Functionally, being able to encrypt your message back-ups probably doesn’t add much for regular users. But then again, as noted by TechCrunch, gaining access to WhatsApp chat data via third-party workarounds has thus far been one of the main ways for government and law enforcement agencies to peer into the WhatsApp network.

“Tapping these unencrypted WhatsApp chat backups on Google and Apple servers is one of the widely known ways law enforcement agencies across the globe have for years been able to access WhatsApp chats of suspect individuals.”

In other words, the current back-up options, which rely on third-party providers, reduce the overall security of WhatsApp chats, a loophole that Facebook is now closing up. That will also undoubtedly raise the hackles of the various organizations that have voiced their opposition to Facebook further locking down its messaging systems.

Back in October 2019, representatives from the US, UK and Australia co-signed an open letter to Facebook which called on the company to abandon its full messaging encryption plans, arguing that it would:

“…put our citizens and societies at risk by severely eroding capacity to detect and respond to illegal content and activity, such as child sexual exploitation and abuse, terrorism, and foreign adversaries’ attempts to undermine democratic values and institutions, preventing the prosecution of offenders and safeguarding of victims.”

The governments of each nation called for Facebook to provide, at the least, ‘backdoor access’ for official investigations, which Facebook has repeatedly refused.

That refusal is what’s pushed authorities to seek out alternate means, like tapping into third-party back-ups. With Facebook now moving to cut that off as well, we could see a new ramp-up of opposition to Facebook’s plans, and renewed calls for limits on the same.

A key focus of the concern on this front is the potential for such options to shield child traffickers, with the National Society for the Prevention of Cruelty to Children arguing that any move to further restrict law enforcement access to such communications increases the potential for use of these platforms among perpetrator groups.

As per NSPCC chief executive Peter Wanless:

“Private messaging is at the front line of child sexual abuse, but the current debate around end-to-end encryption risks leaving children unprotected where there is most harm.”

This is the most compelling, and most important, argument against the move at present. By providing full encryption across all of its messaging apps, Facebook will essentially hide all communications by predators and others who would seek to use such systems for child exploitation, which could then lead to an expansion of such activity.

Yet, at the same time, the broader push for increased online privacy continues to gain momentum, with people seeking options to protect their private communications from outside monitoring.

It’s a complex balance, and there’s compelling logic on both sides, but either way, it seems that Facebook is pushing ahead, with the company also repeatedly noting that it’s moving to integrate all of its messaging tools (Messenger, Instagram Direct and WhatsApp) and add more encryption options across the board.

There’s no definitive right answer here, but it is interesting to note the ongoing debate, which could eventually force Facebook to reverse course, or change its approach, if regulators in one of its major usage regions decide to mount a more definitive pushback.
