6 Things You Can Do to Compete With Big Sites

Zillow, Trulia, Redfin. These names appear in almost every conversation about buying, selling, or renting property. This is not because people are particularly interested in these platforms but because they’ve become the default starting point for most property searches.

The best illustration of this is that out of over 4.5M keywords that Zillow ranks for, bringing them an estimated 32.7M visits from search, the top keyword is “zillow”. And did you know that’s a more popular search term than “houses for sale” or even “apartments”?

You might think there’s really nothing left for realtors and agencies. But here’s the twist: their niche focus is their secret weapon. These local experts can outshine the big names, proving that sometimes, being small is the biggest advantage.

This is where SEO comes in. SEO (search engine optimization) for real estate involves strategies to boost your visibility in Google’s organic search results. This visibility brings free, consistent traffic that grows as you create more optimized content.

The opportunity for boutique, small, and medium real estate businesses lies in four key areas:

- Hyperlocal keyword targeting.

- Long-tail keywords with high intent.

- Local link building.

- Exceptional customer service that fuels positive reviews, boosting your local search rankings.

In other words, you need to do SEO better where it counts.

In this article, I’ll share strategies and tips from SEO experts in the real estate sector, along with insights from high-performing niche sites. Our focus is exclusively on SEO, so we won’t cover search ads or listing your business on aggregators, as you’re likely already doing those.

SEO for real estate faces a few specific challenges. It’s good to know them to understand how to shape your strategy.

Big sites dominate the share of voice. National real estate portals and aggregators often outrank smaller agencies. They’ve got tons of backlinks, tons of well-ranking pages fueled by inventory from practically every possible source, and they are well-optimized for Google. It just so happens that all of that is called authority, which Google likes to promote in search engine result pages (SERPs).

A huge challenge is figuring out where big competitors leave content gaps and missed keyword opportunities. Big real estate platforms dominate the market, so you need to dig deep into what they aren’t addressing.

Both local and national competition. Big sites will appear in both national and local search results. Moreover, chances are on the local level, you’ll be competing with local players who already started investing in SEO.

Our biggest SEO challenge is standing out in local searches amidst fierce competition because we are battling local real estate investors and also national companies.

Real estate SEO is incredibly local. Unlike other industries, where a broad audience can be targeted, real estate businesses must rank well in specific cities or neighborhoods. This means you’re not just competing with the big-name RE platforms but also with other local agencies, making it even harder to stand out.

Serving both sides of the market. As a real estate agent, you’re practically a one-person marketplace serving both sellers and buyers.

Each agent has different ambitions, so they need to ensure their SEO strategy aligns with their overall business goals.

Many topics within real estate will count as Your Money or Your Life (YMYL). In recent years, Google has recognized that certain subjects, including real estate, require higher standards of trustworthiness.

Any content should go through multiple fact checks before publication, and each data point should be well sourced with an external link where possible as this will aid authority.

Now, let’s see what we can do about those challenges.

A well-optimized Google Business Profile (GBP) is crucial to outrank aggregators and local competition. As you probably already know, this free listing appears in Google Search and Maps.

I won’t go into the basics of GBP profiles. I’m sure most of you already have one, and if not, you can get up to speed with our full guide for beginners.

There are two things I’d like to emphasize here.

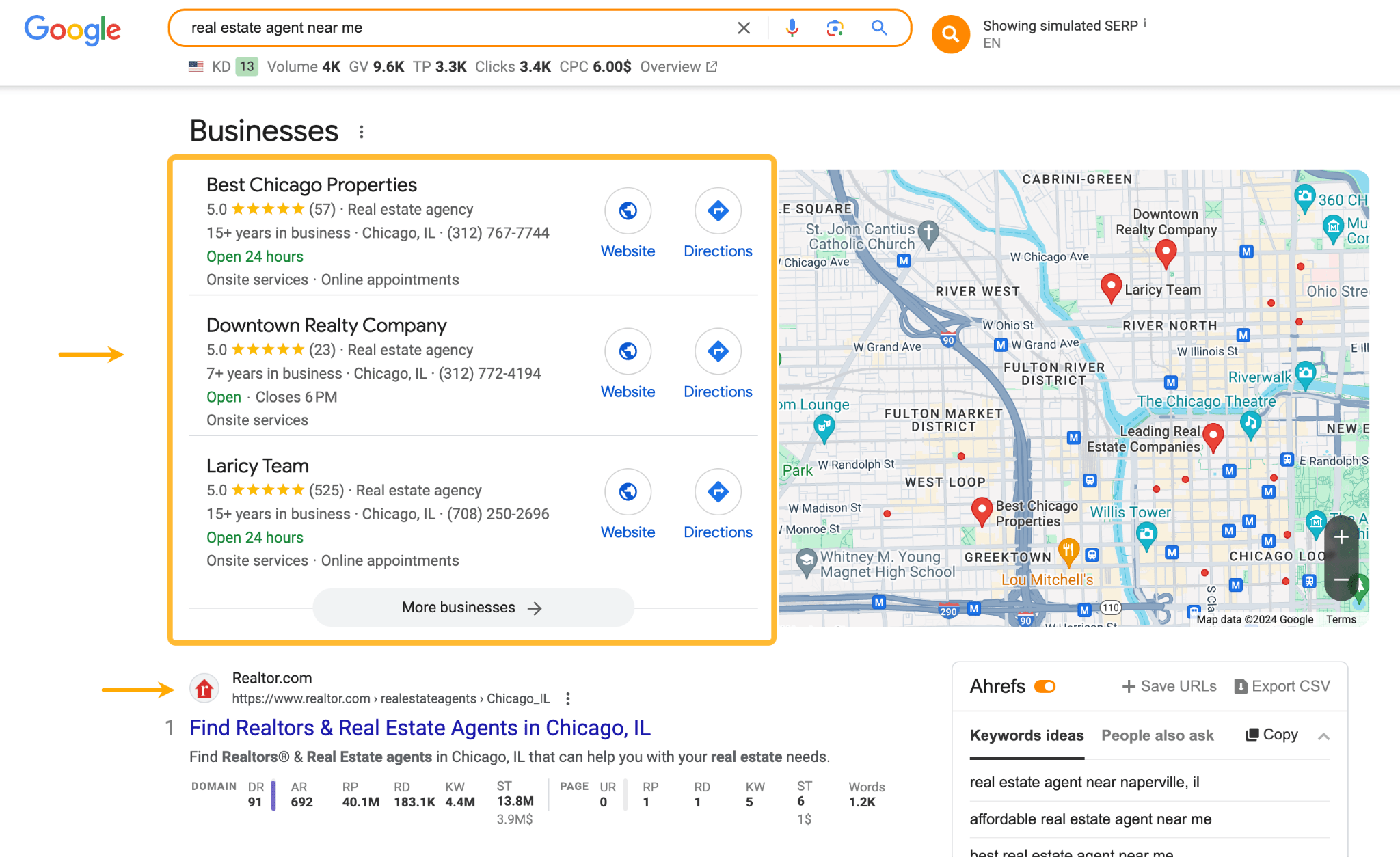

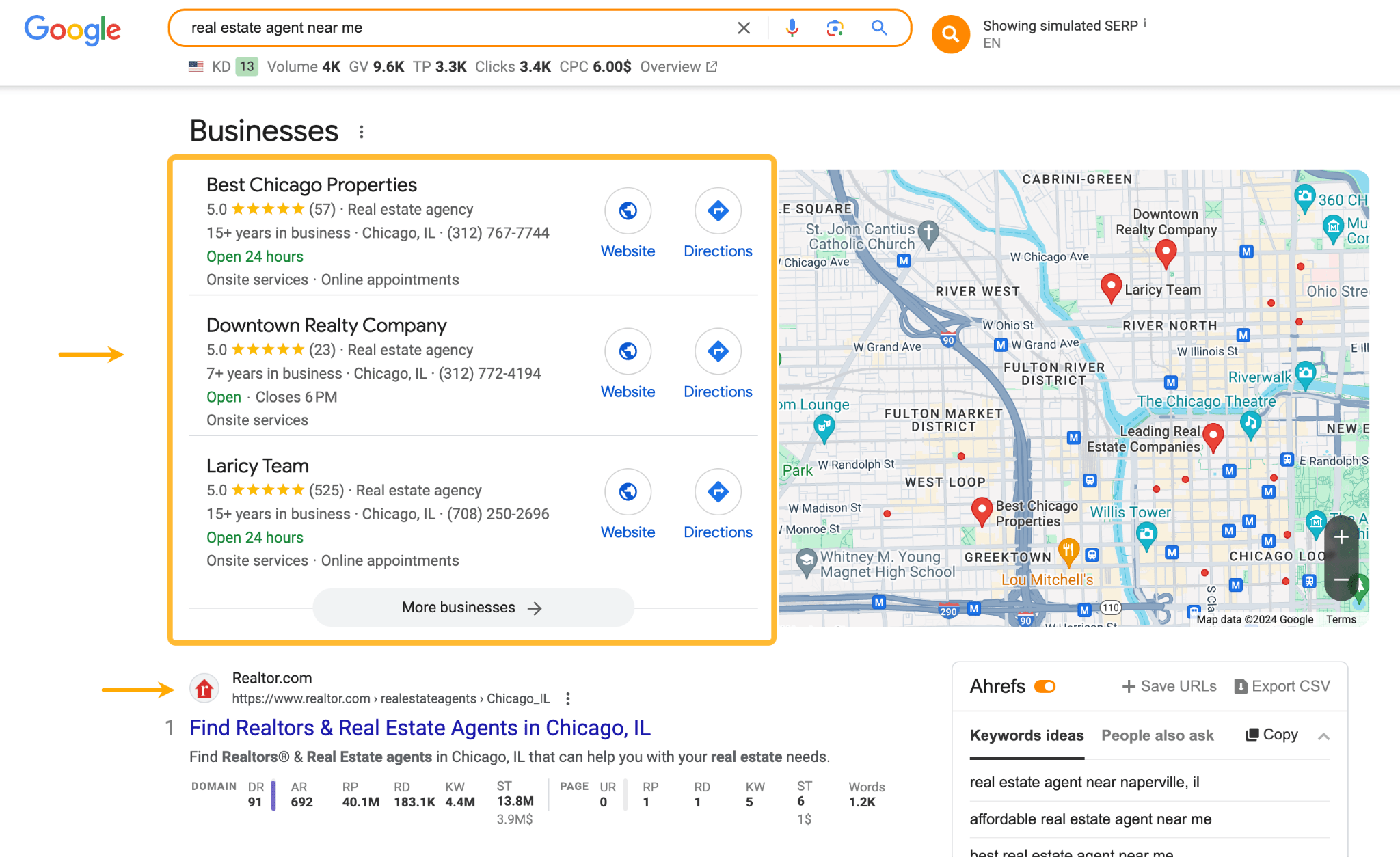

First, a GBP is one of your best bets to outrank both big sites and local competitors. I can’t even cite a specific expert here because they’ve all said the same thing. That’s because the so-called map pack featuring GBPs often shows on top of regular organic results.

A GBP is your answer to big brands. They have the marketing budgets, the authority on Google, and brand awareness. A GBP gives you a strong local presence backed by reviews and the effort you take to make the profile stand out.

You have to keep in mind that the profile is not just something that people will see only once, and only if they find it through Google. Even if they discover you in other ways, they will circle back to the GBP to see if you can be trusted.

Secondly, there are a few things that can make or break a GBP:

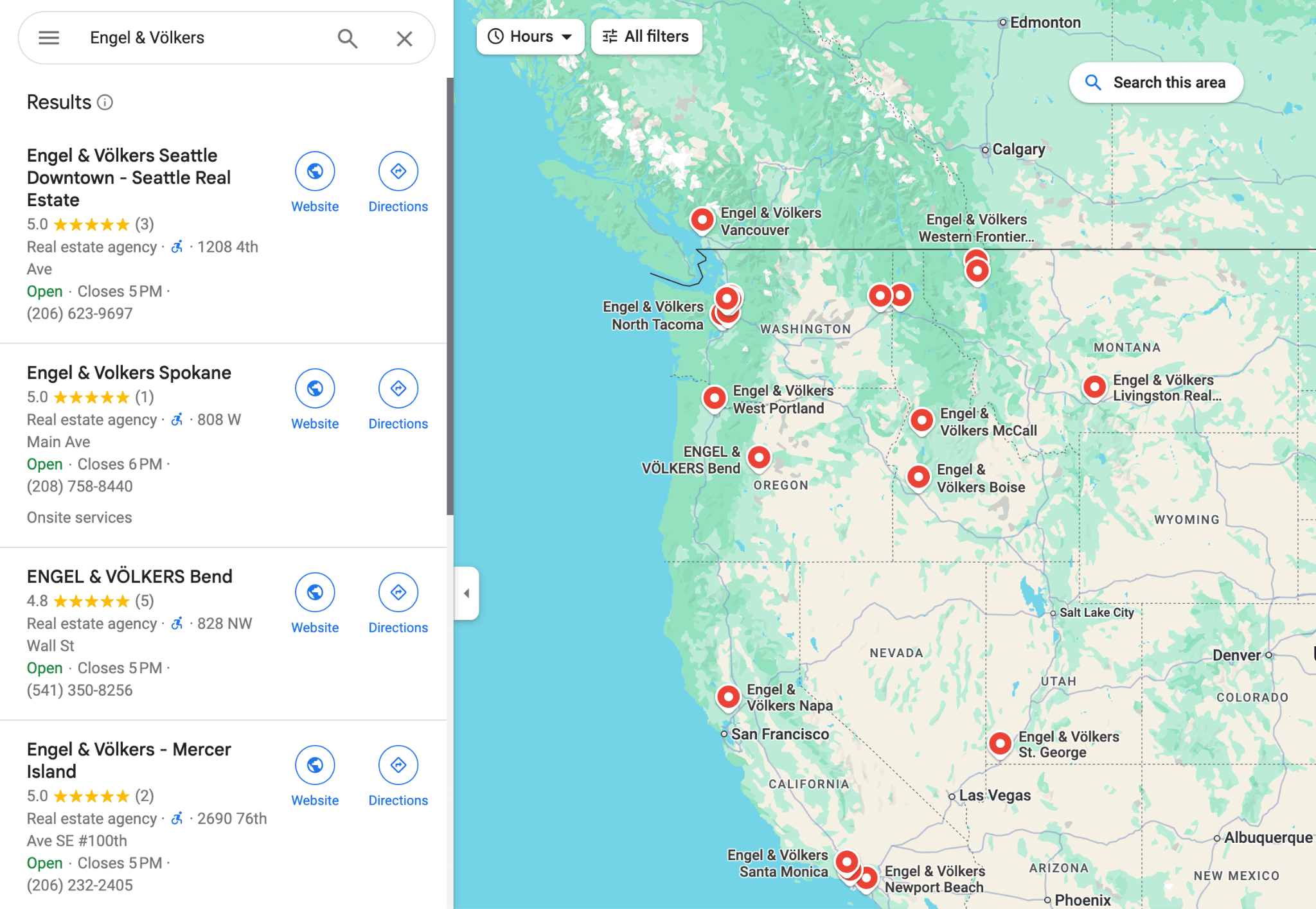

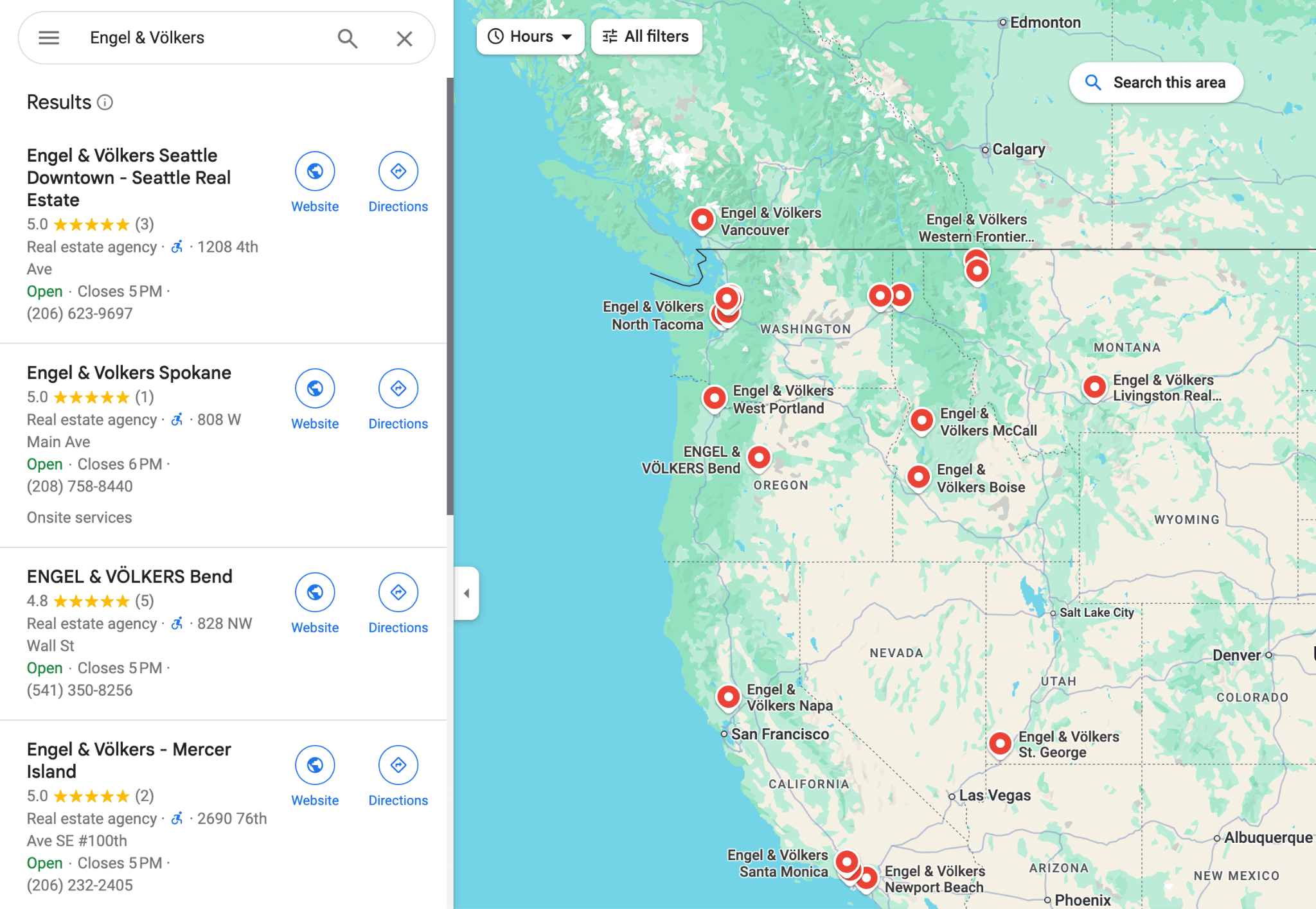

- Listing each branch separately.

- Giving people reasons to leave a positive review.

- Showing who you are and how you work in the photos feed.

Make sure you list branches separately. This is important because Google ranks GBPs based on the distance of the searcher or the location used in the query to the business (among a few other things). So if you want to be visible in all of the cities or neighborhoods where you have a physical address, make sure to list them separately.

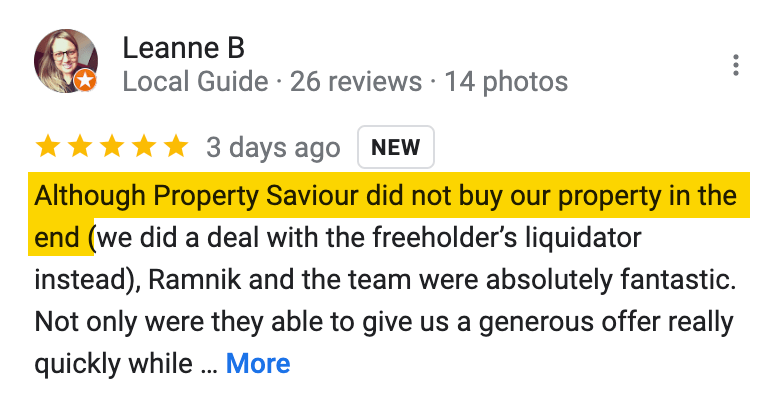

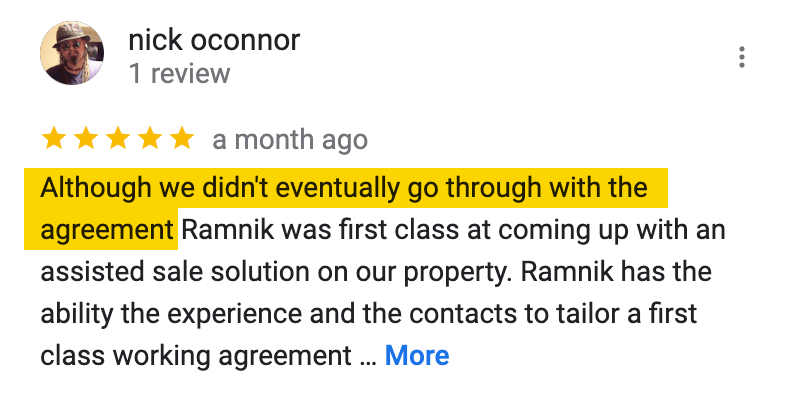

Reviews are one of the most impactful ranking factors for GBPs. Virtually everything about them counts: how many there are, what the overall rating is, whether they’re fresh, whether you respond to them, and so on. Google pretty much reads them just as a potential client would.

Obviously, the goal is to get as many positive reviews as possible. But here’s the tip: not all of them need to come from actual real estate transactions. You can receive excellent reviews by just being helpful.

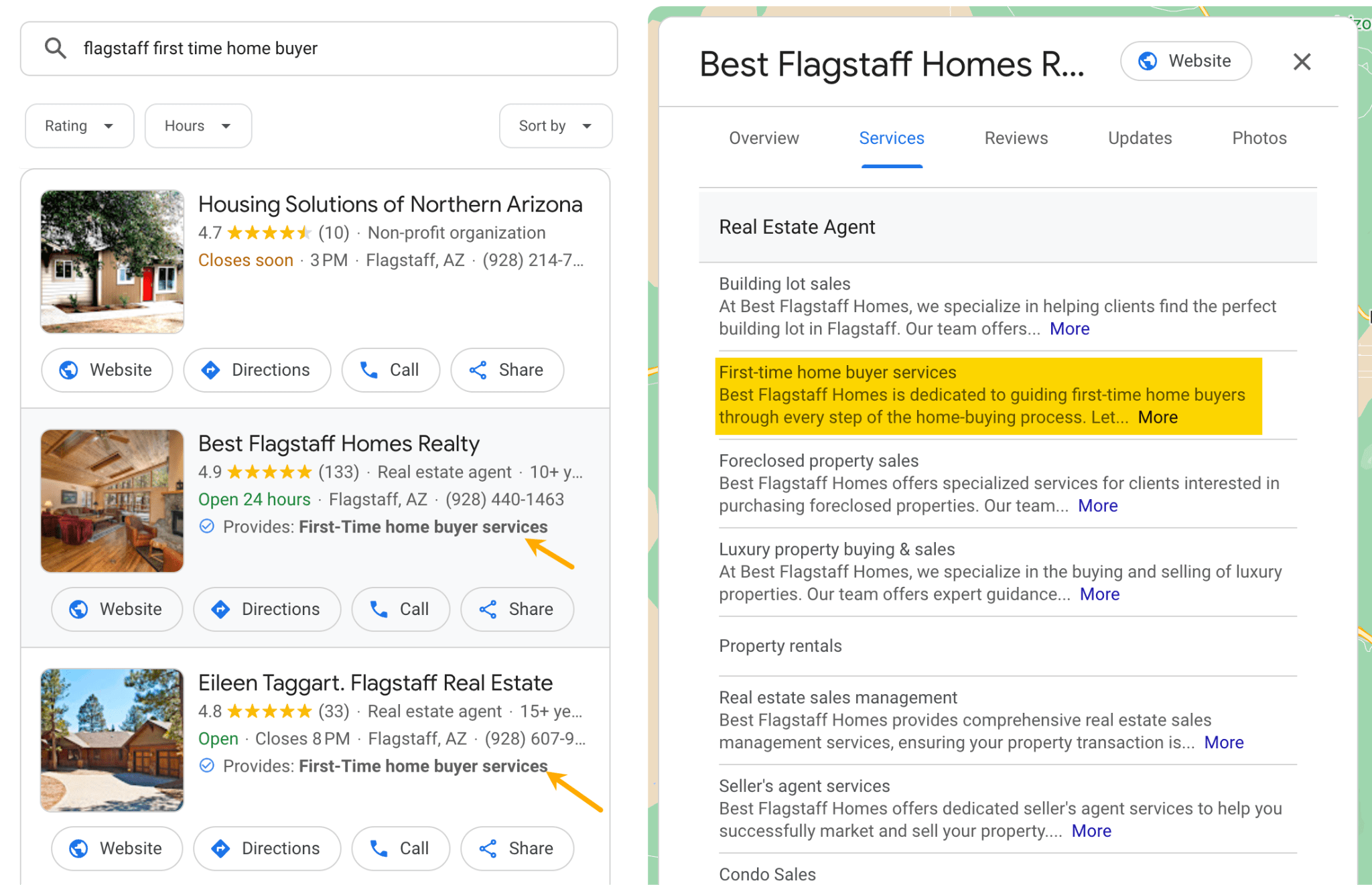

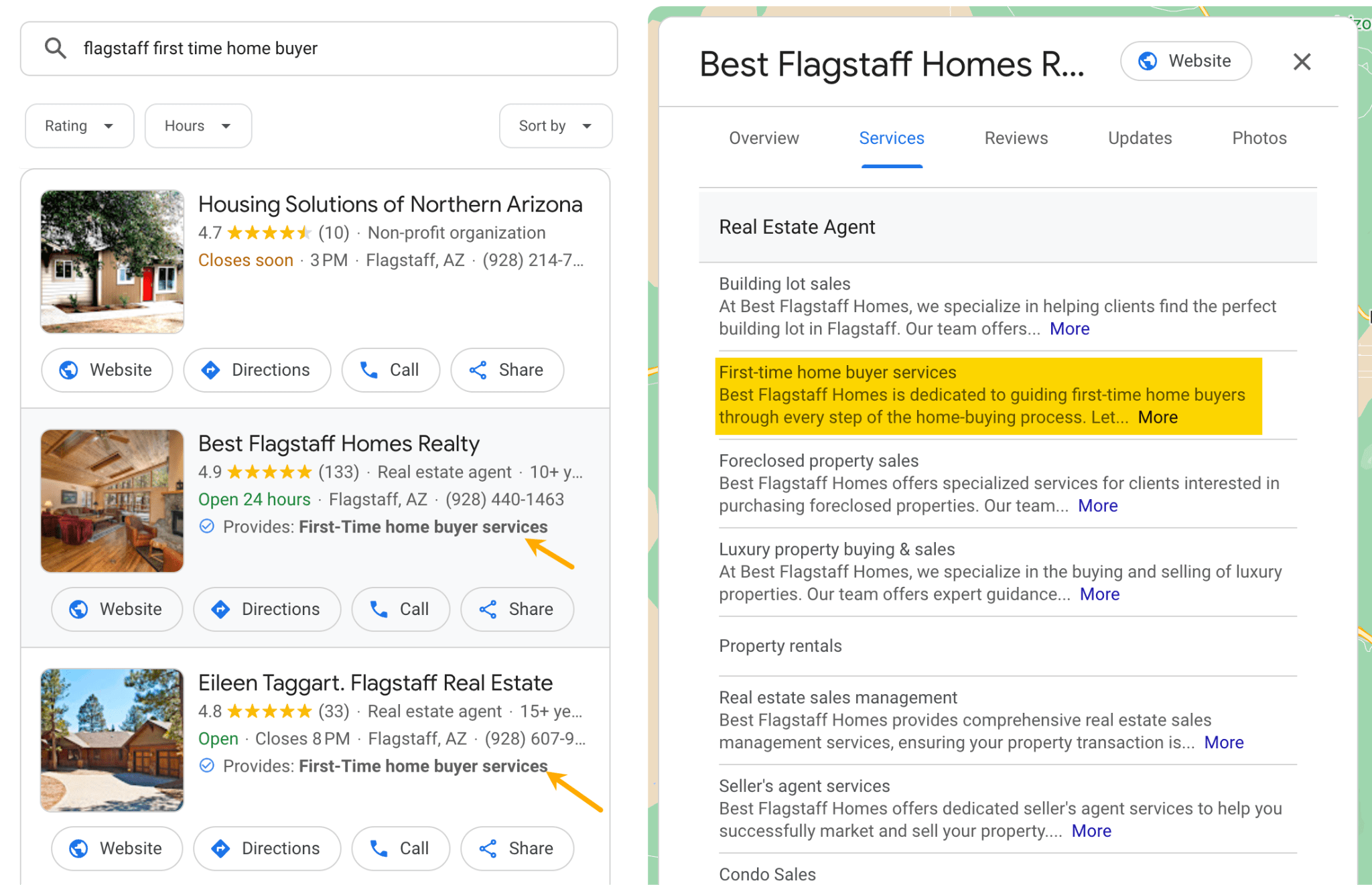

Next, list ALL your services. By listing all services, you increase your chances of appearing in a wider range of relevant searches. Example below:

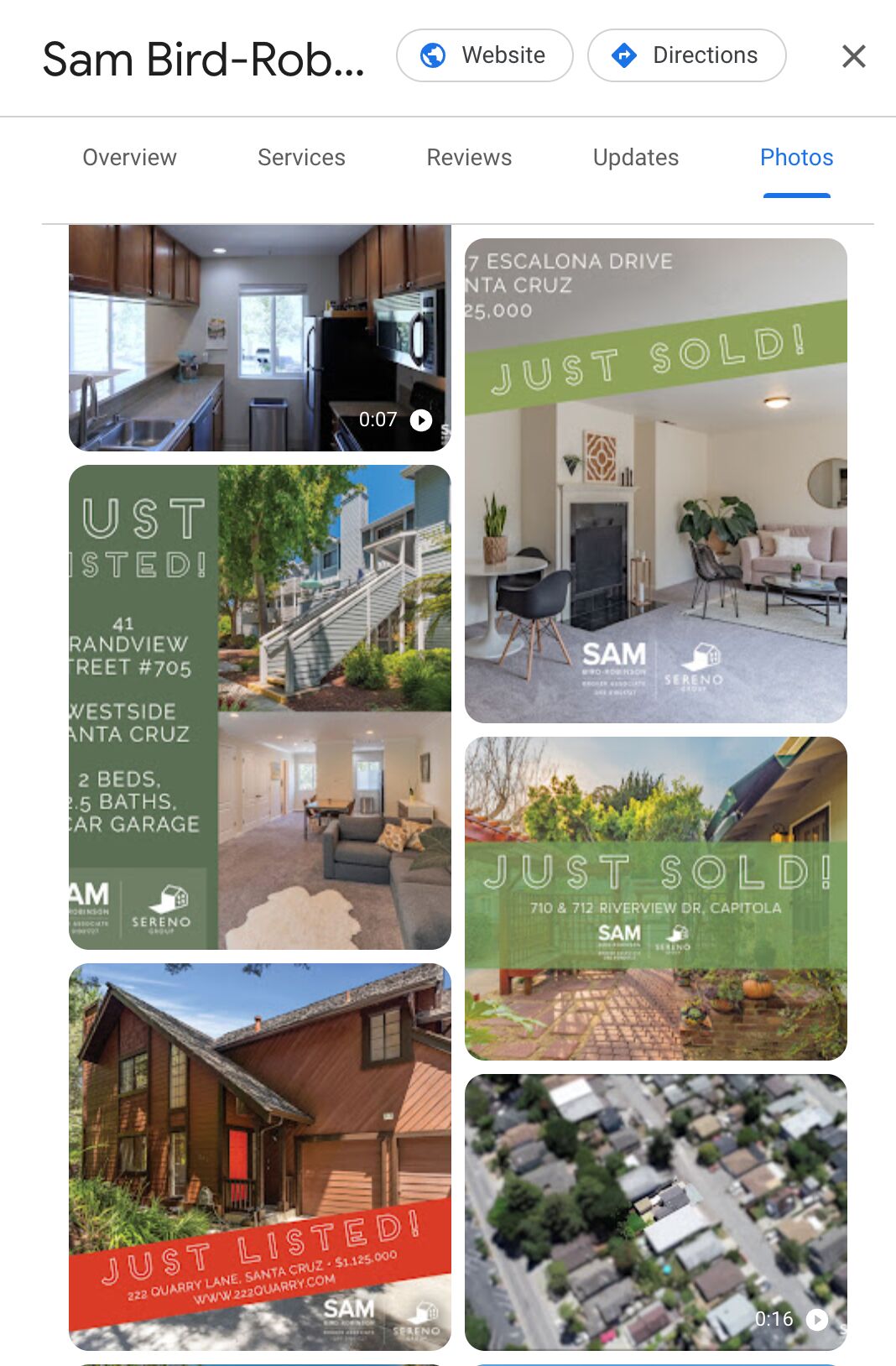

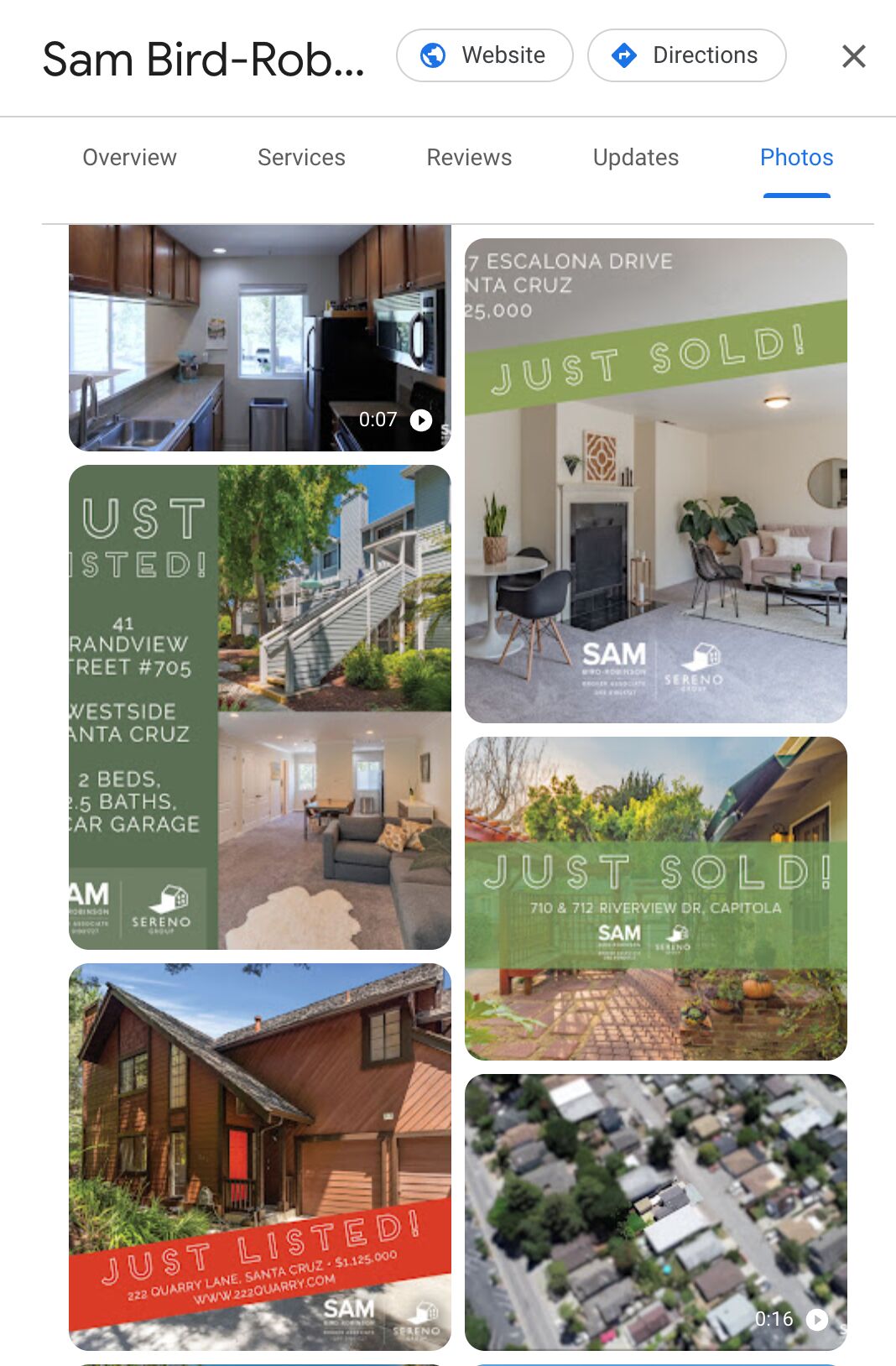

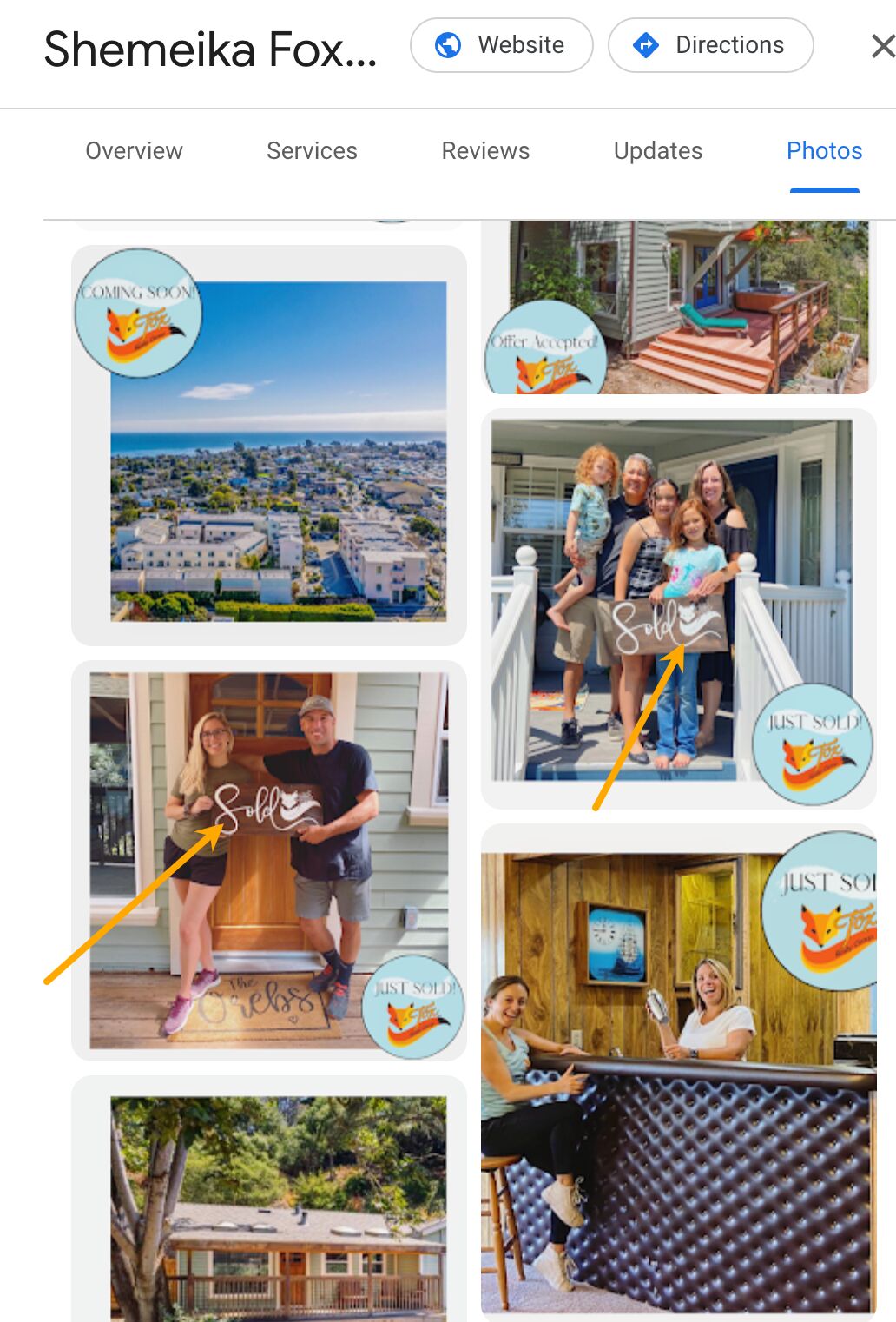

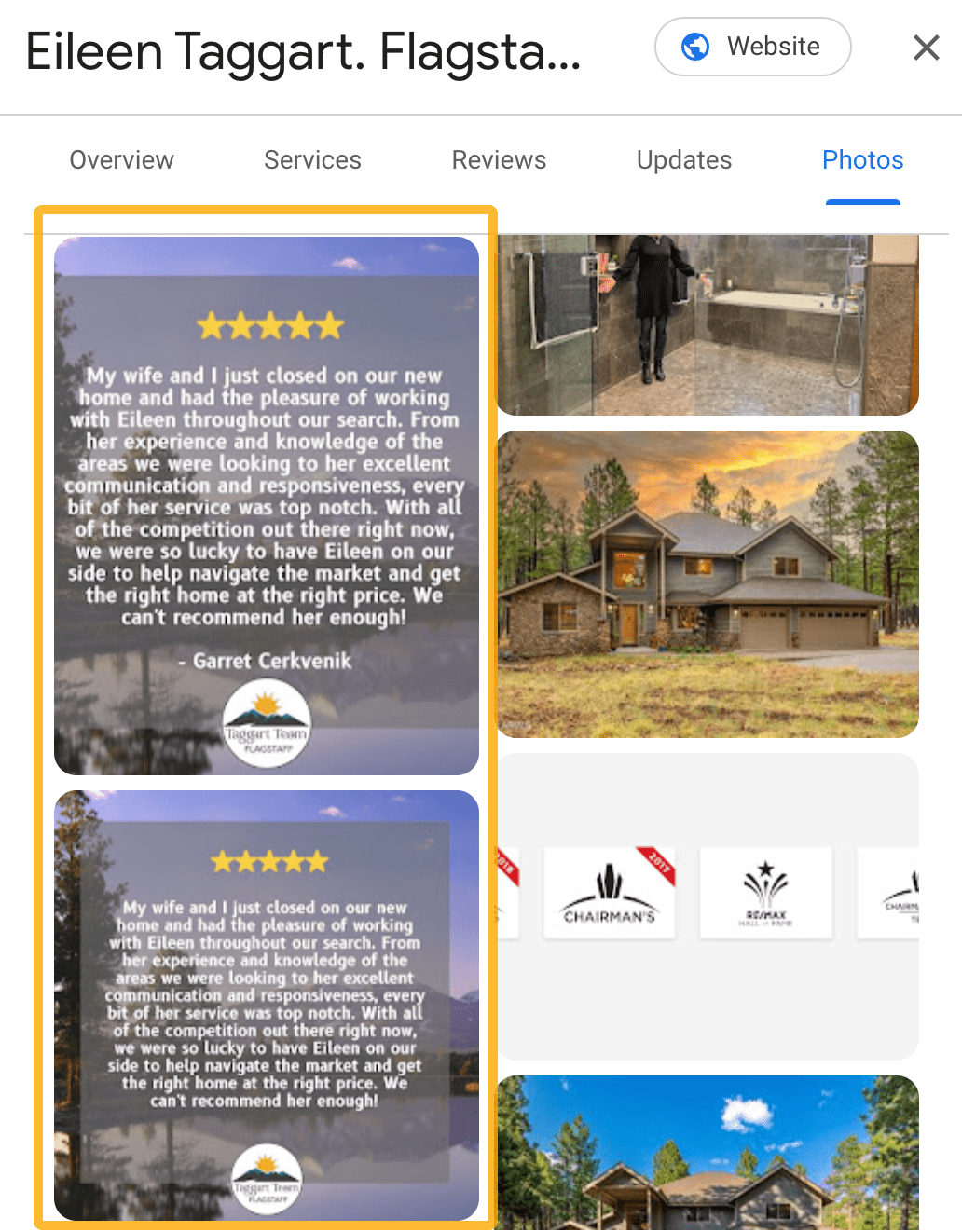

Finally, consider adding photos of your team and client interactions. This isn’t about Google rankings; it’s all about how people think. Most GBPs just show normal real estate photos, so photos of your team and happy customers help new clients feel like they know who they’re trusting with their biggest assets. Just make sure to ask for your clients’ permission first.

I’ve gathered a few examples of photo feeds that stood out in my research. Photos like these draw attention yet don’t require much effort to make.

Keywords are the words and phrases that people type into search engines to find what they’re looking for. In SEO, you use keywords as topics for your content so that when someone uses the keywords, they can find your content.

The keyword strategy should focus on niching down if you’re a small or medium-sized real estate business (or you’re working for such a client). Keywords with high search volume are usually harder to rank for. Plus, these big keywords often relate to the national market, not your local area. They’re less likely to bring you leads from nearby customers.

Use the niche market to your advantage and focus on using long-tail keywords with low to medium competition. Rather than looking to target broader terms like “real estate” or “investment property UK”, target more specific phrases like “luxury homes in Manchester” or “affordable property in York”.

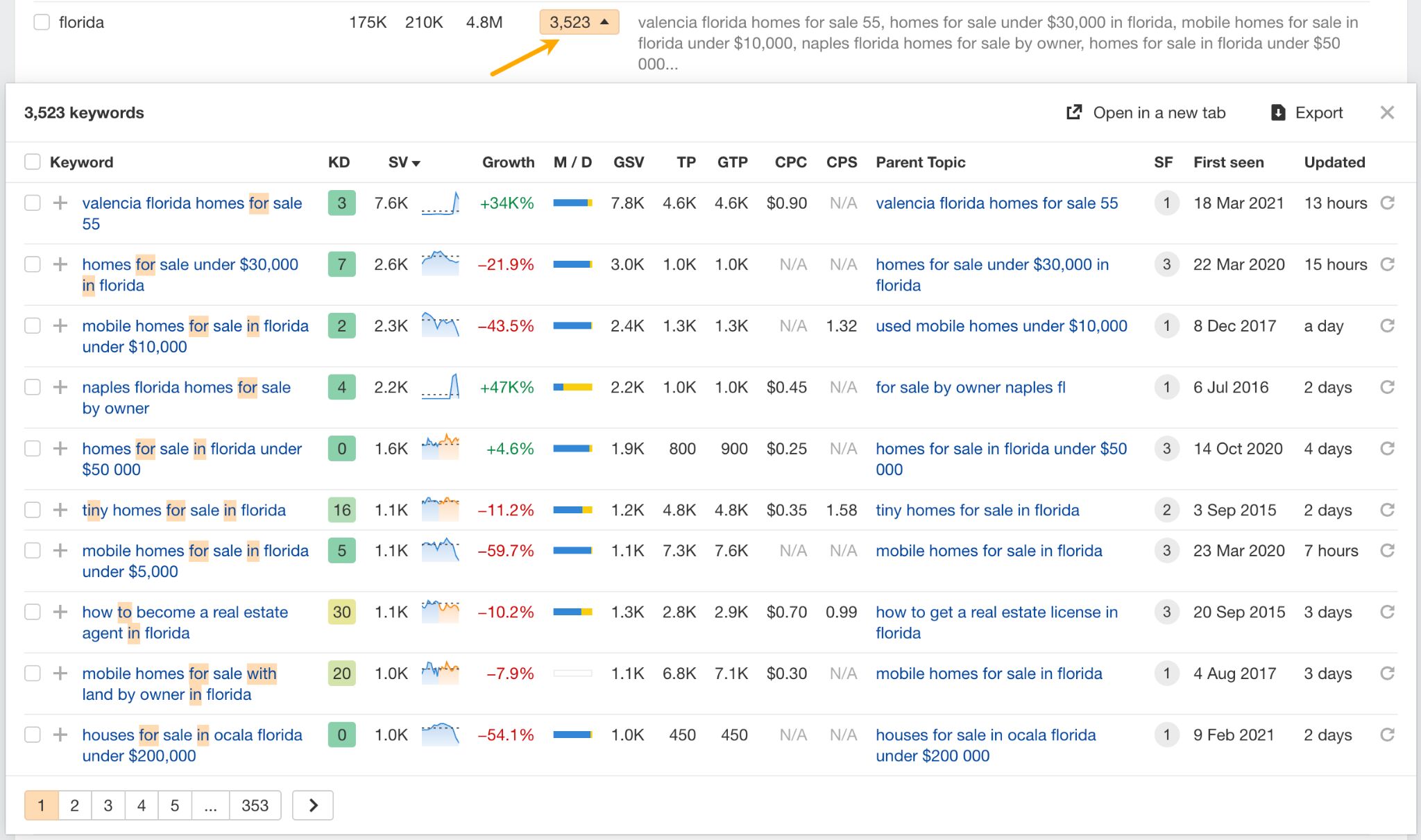

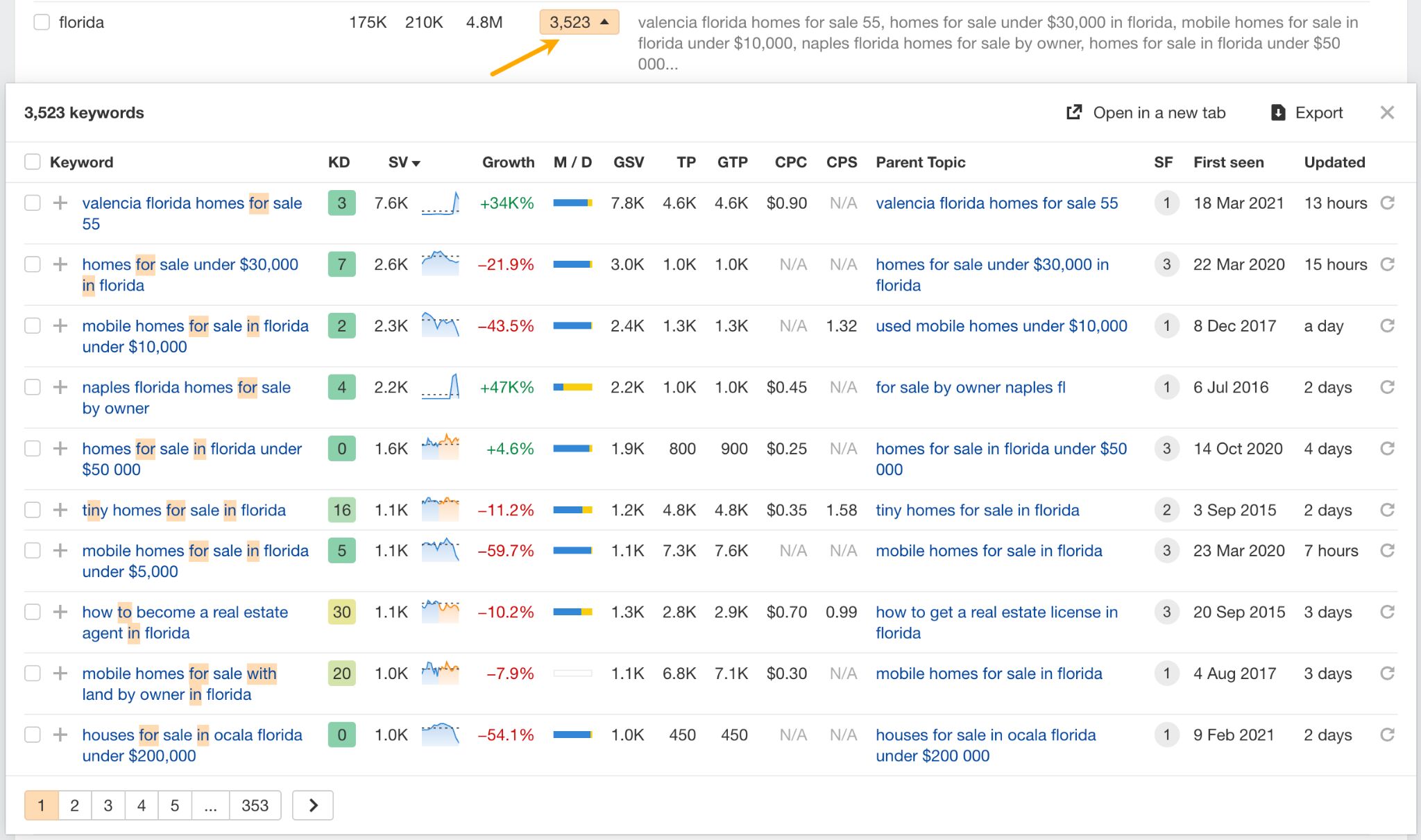

Tools like Ahrefs’ Keywords Explorer show what words people use when looking for real estate to buy or an agent to help them sell. Let’s look at how you can use this tool to find the best types of keywords.

Local and hyperlocal real estate keywords

Local and hyperlocal keywords are search terms that are highly specific to a particular geographic location or small community. These keywords typically include:

- Neighborhood names.

- Street names.

- Local landmarks.

- Local attractions.

- Zip codes or postal codes.

- Specific districts within a city.

- Names of local businesses or institutions.

- City comparison (e.g., Portland vs. Austin).

Rather than targeting neighborhood key terms alone, you should also hit landmarks, popular streets, and more. Build expertise and authority through neighborhood-specific landing pages with unique local content. You sacrifice some volume, but you attract highly qualified traffic and increase your chances of showing up at the top of the right search results pages.

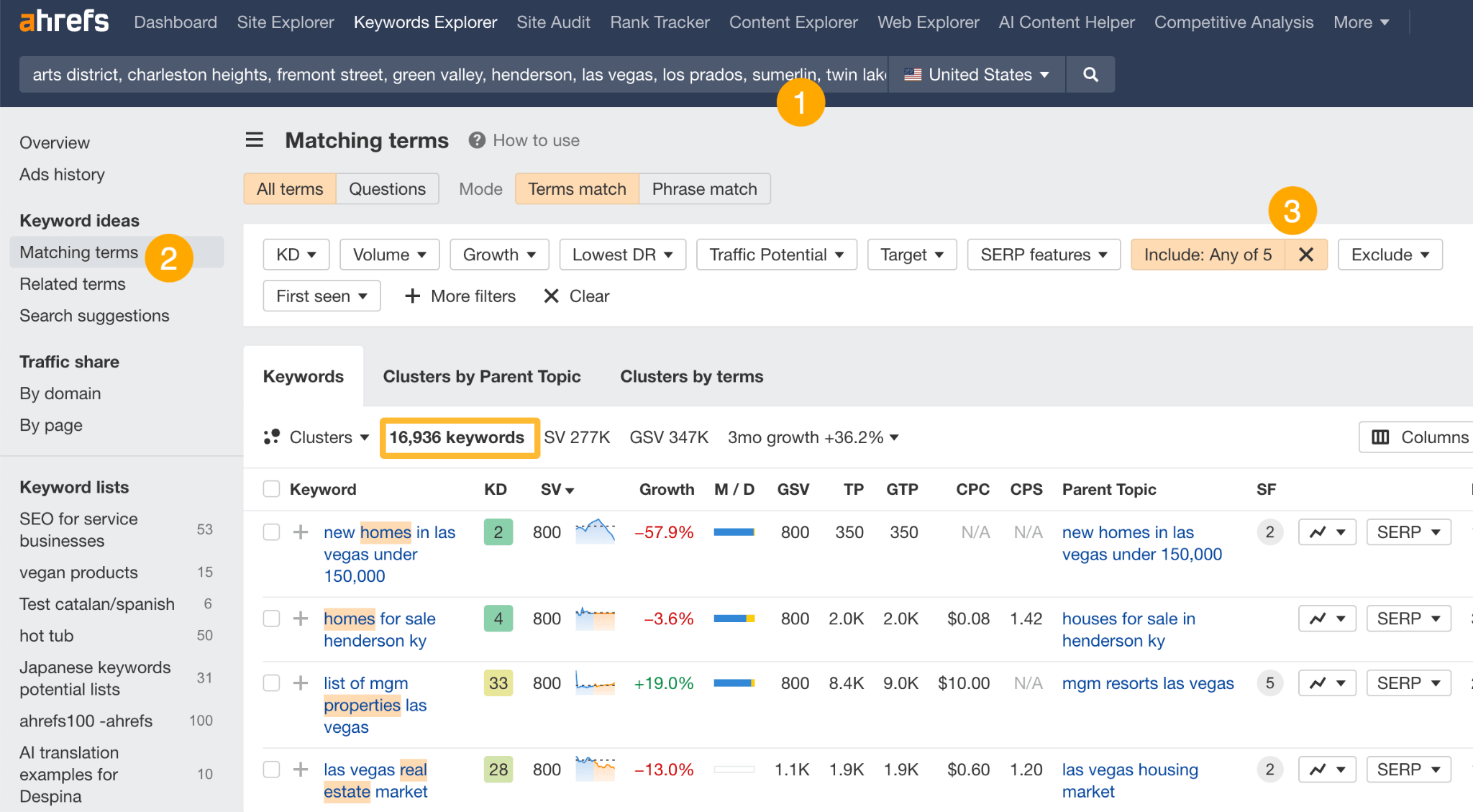

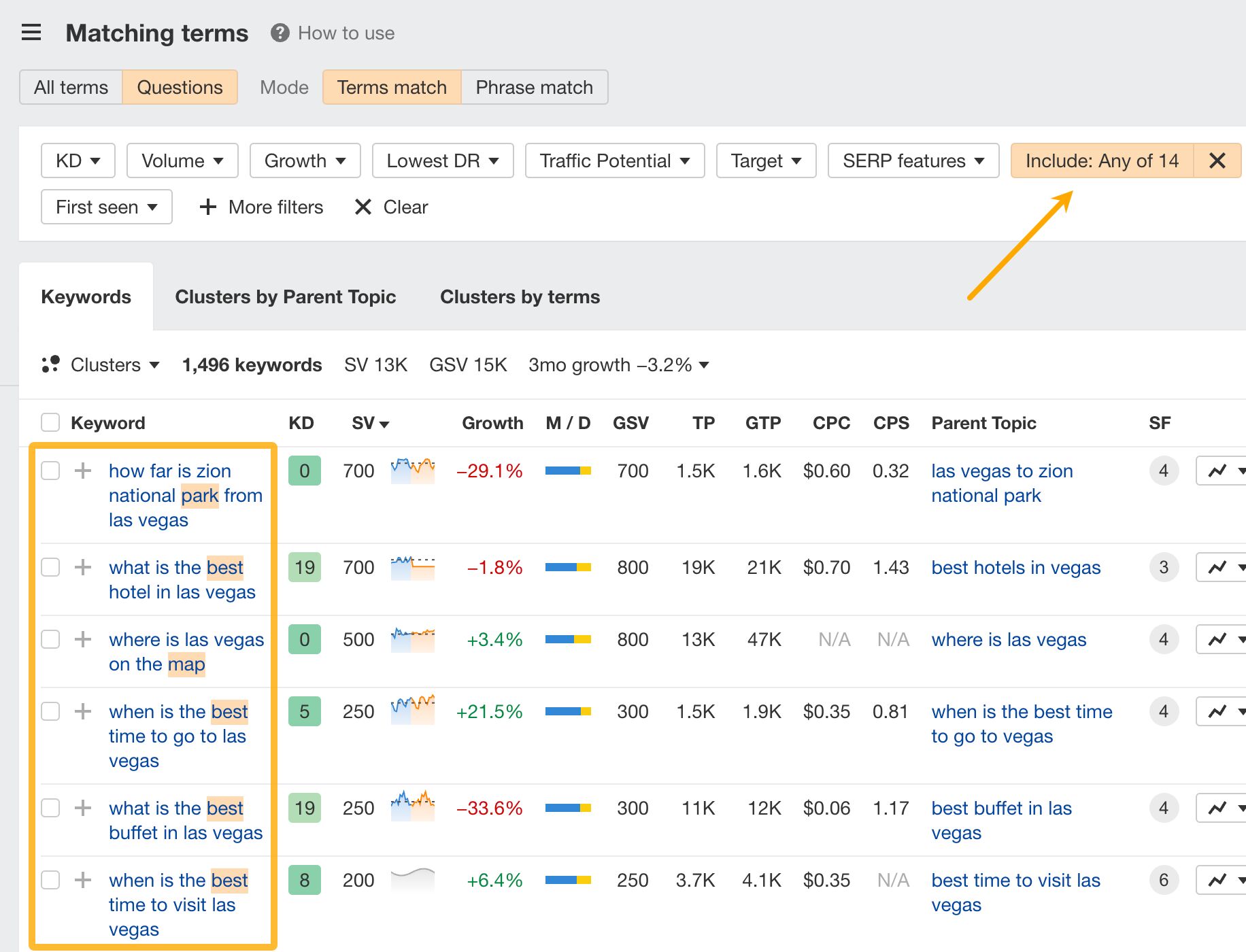

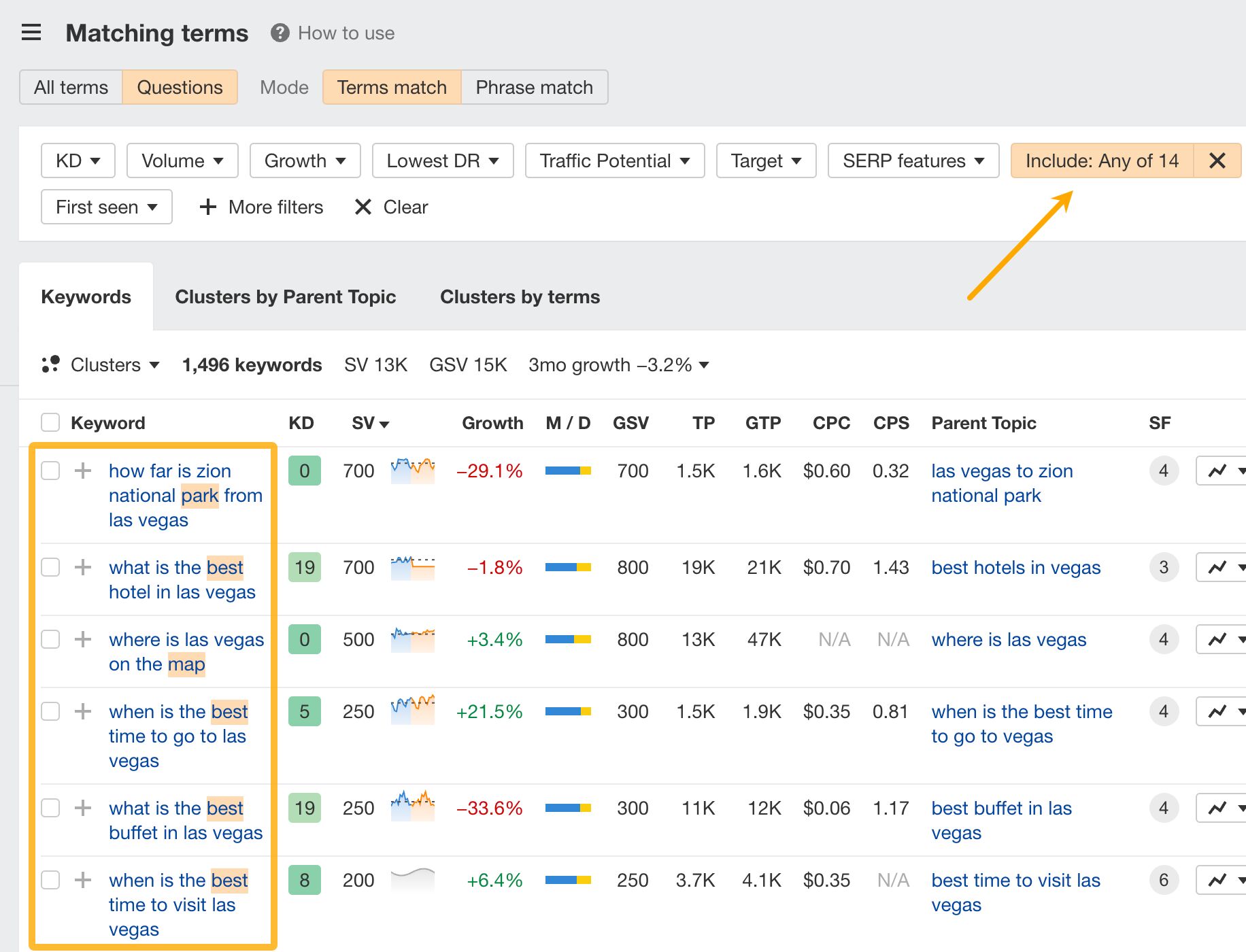

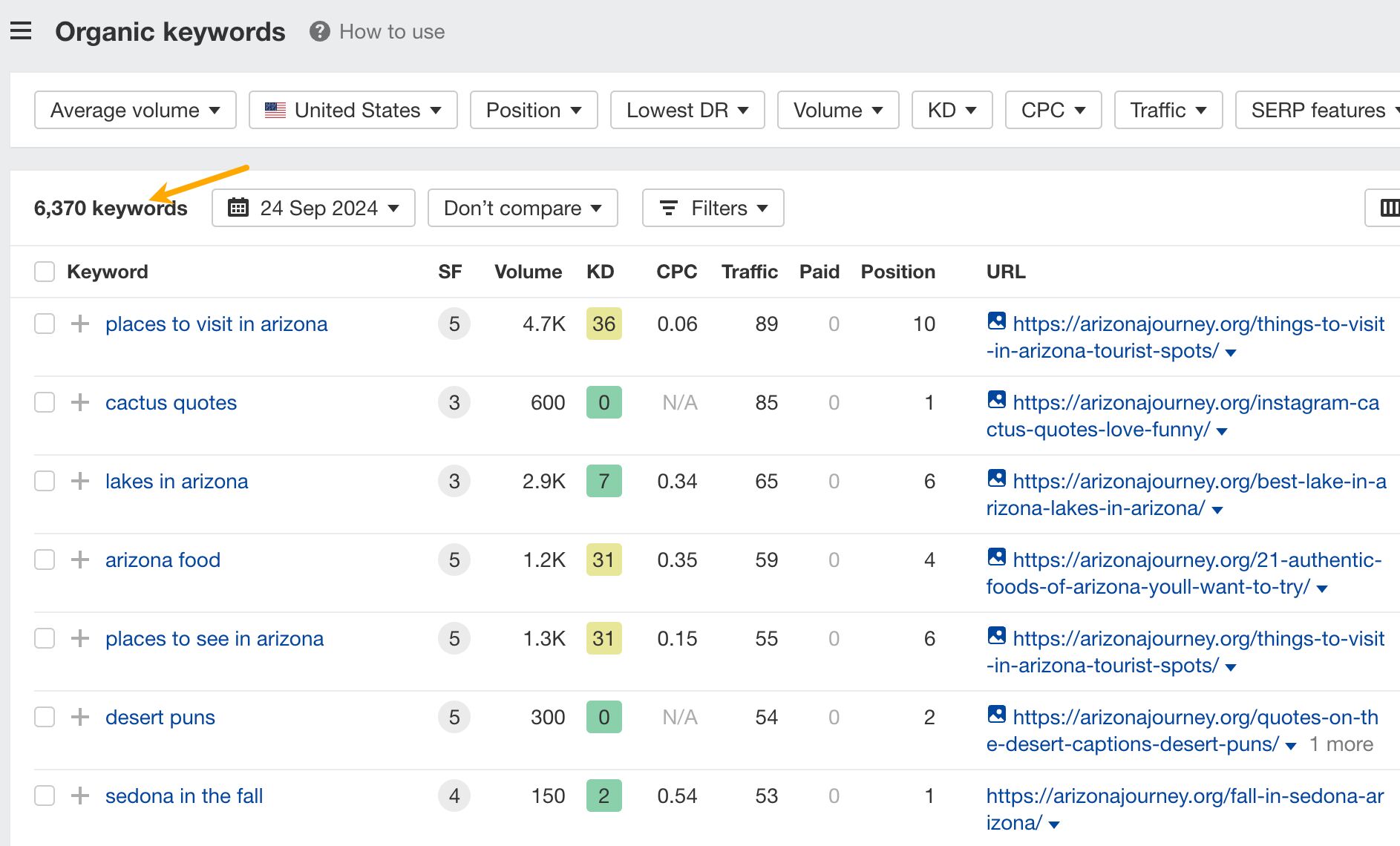

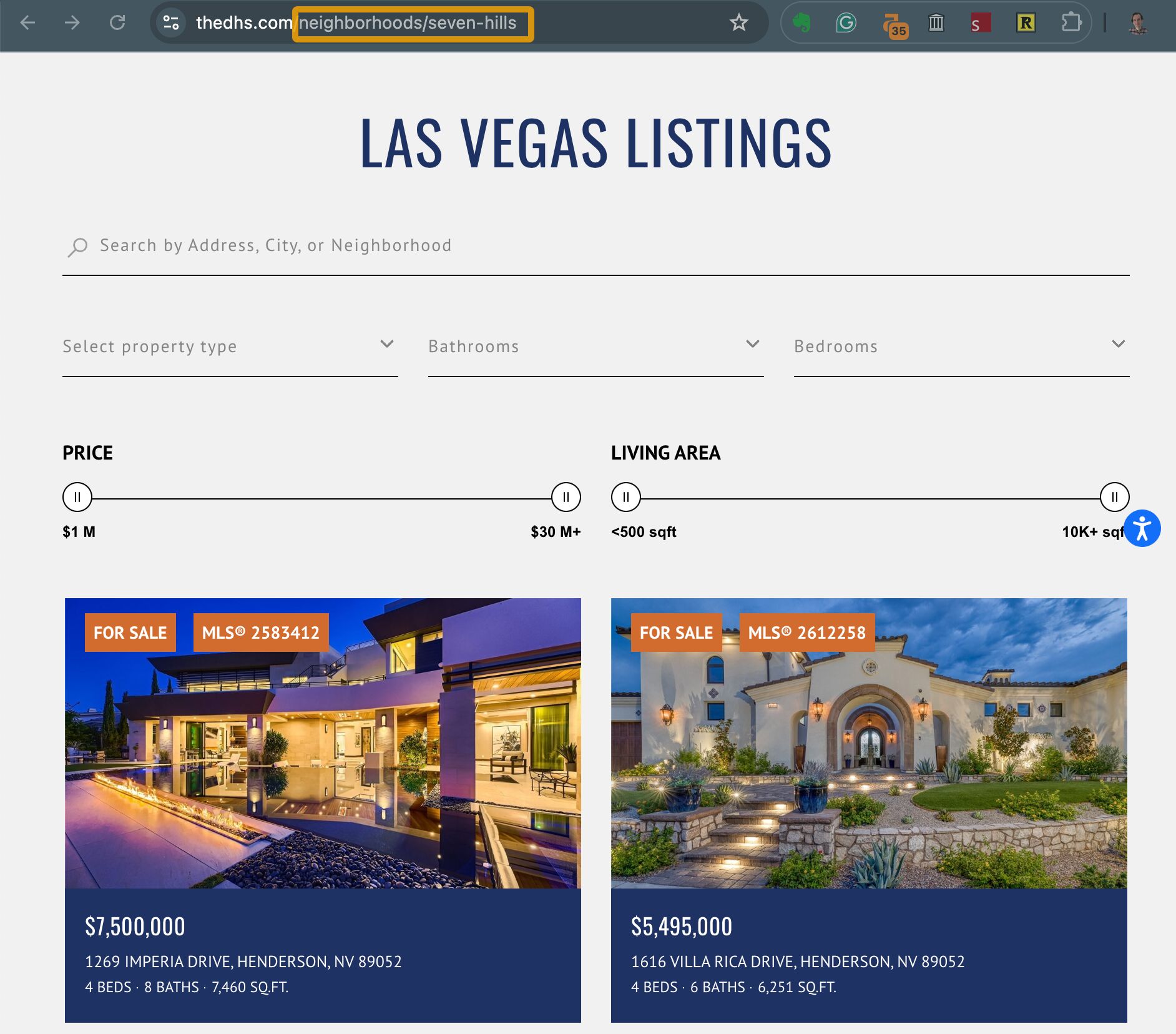

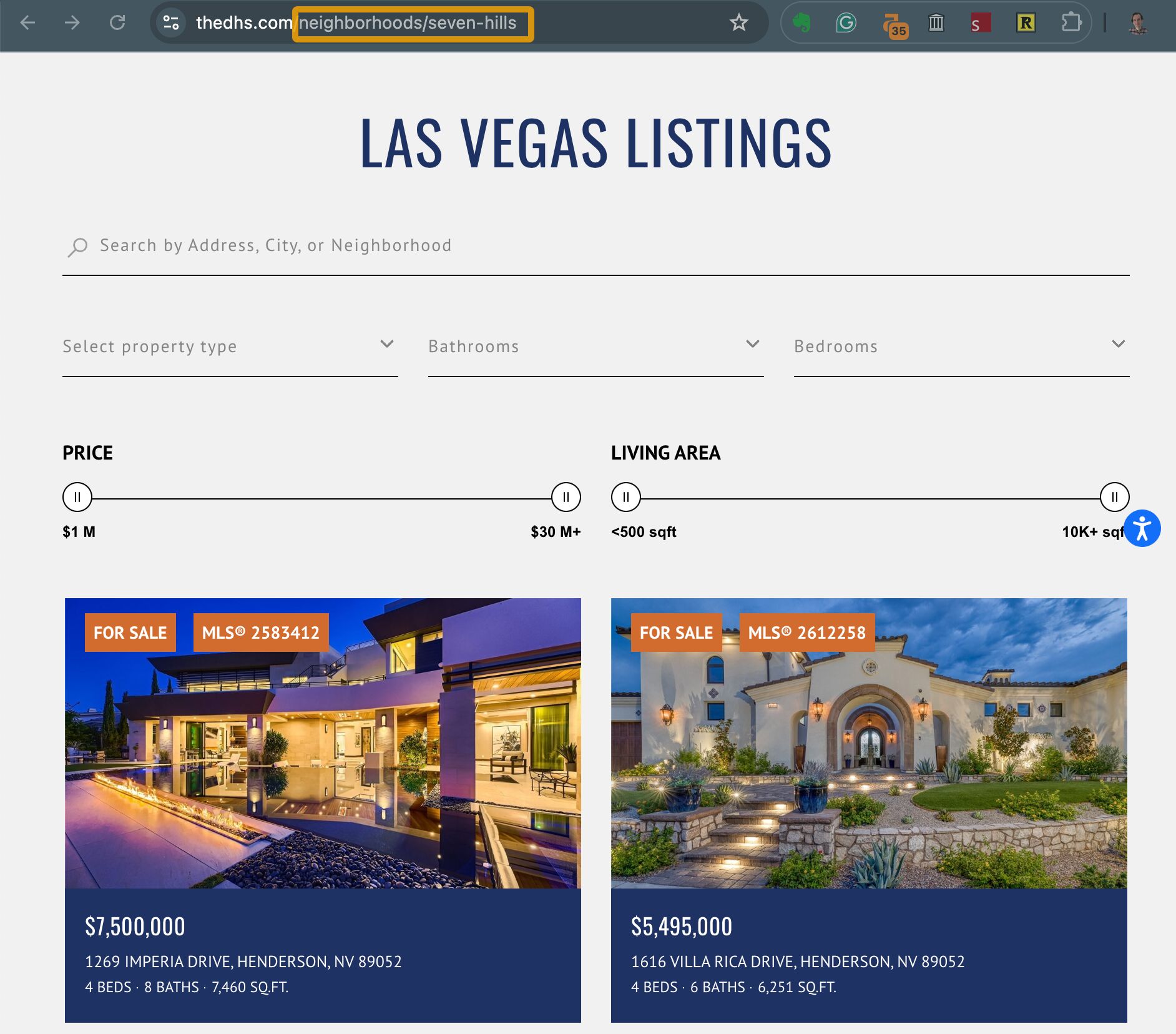

To find your keywords in Keywords Explorer:

- Type in broad terms related to the area where you operate. For example, in Las Vegas that could be “las vegas, arts district, charleston heights, fremont street, green valley, henderson, los prados, summerlin, twin lakes, unlv”.

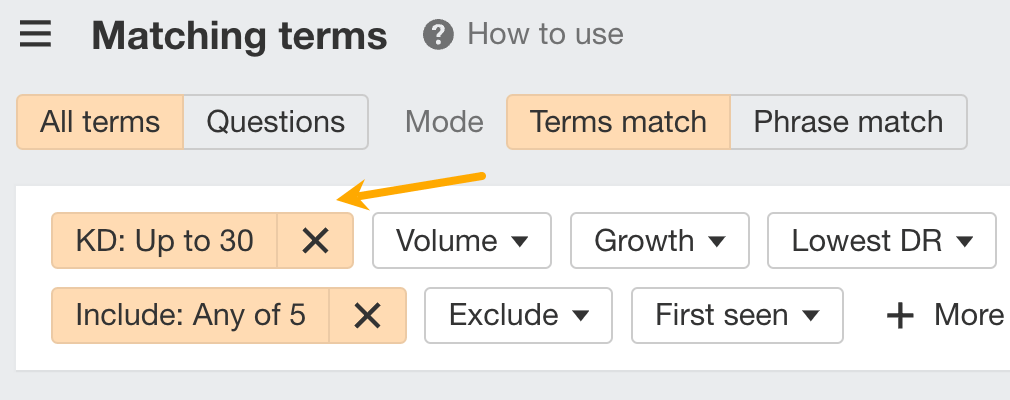

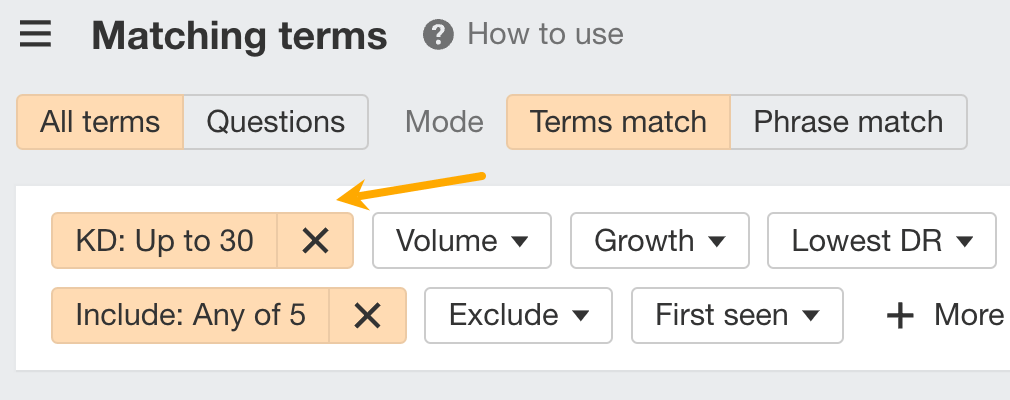

- Go to the Matching terms report.

- In the Include filter, add the types of real estate you offer, for example “real estate, house, condo, homes, properties”. Make sure to use the Any mode.

From that point, you can use additional filters to refine results. For example, to find low to medium-difficulty keywords set the KD filter to Max 30.

As you browse through the keywords, add them to a list.
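If you export keyword ideas to work on them outside the tool, the same filtering logic can be sketched in a few lines of Python. This is only an approximation under stated assumptions: the `Keyword` class, the `filter_keywords` helper, and the sample numbers are all hypothetical, and the plain substring matching here is a simplification rather than a description of how Keywords Explorer matches terms.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    volume: int  # estimated monthly searches (made-up numbers below)
    kd: int      # keyword difficulty on a 0-100 scale


def filter_keywords(rows, include_any, max_kd=30):
    """Keep rows that contain at least one include term and have KD <= max_kd."""
    terms = [term.lower() for term in include_any]
    return [
        row for row in rows
        if row.kd <= max_kd and any(term in row.phrase.lower() for term in terms)
    ]


# Hypothetical sample data, not real search metrics.
sample = [
    Keyword("summerlin homes for sale", 900, 22),
    Keyword("las vegas real estate", 12000, 71),
    Keyword("fremont street hotels", 3000, 18),
    Keyword("green valley condo for rent", 150, 9),
]

# "Any" mode: a phrase qualifies if it contains any one of these terms.
property_types = ["real estate", "house", "condo", "homes", "properties"]
shortlist = filter_keywords(sample, property_types, max_kd=30)

for kw in shortlist:
    print(kw.phrase, kw.volume, kw.kd)
```

The broad, high-difficulty term and the phrase with no property type drop out, leaving the local, lower-difficulty phrases for your list.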

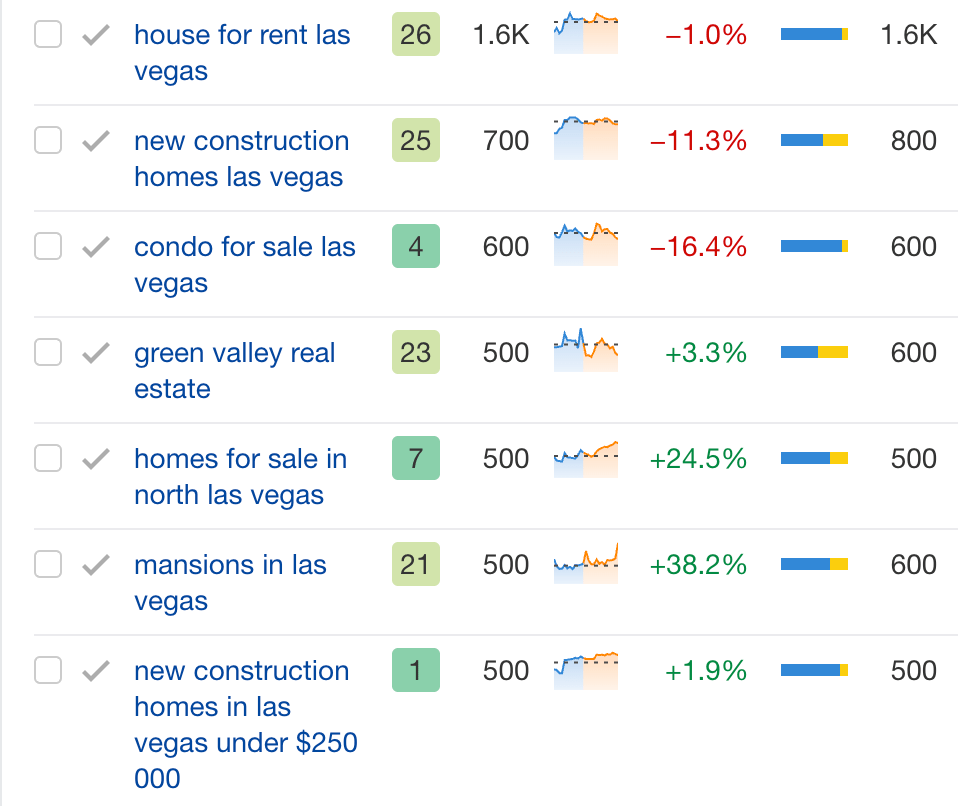

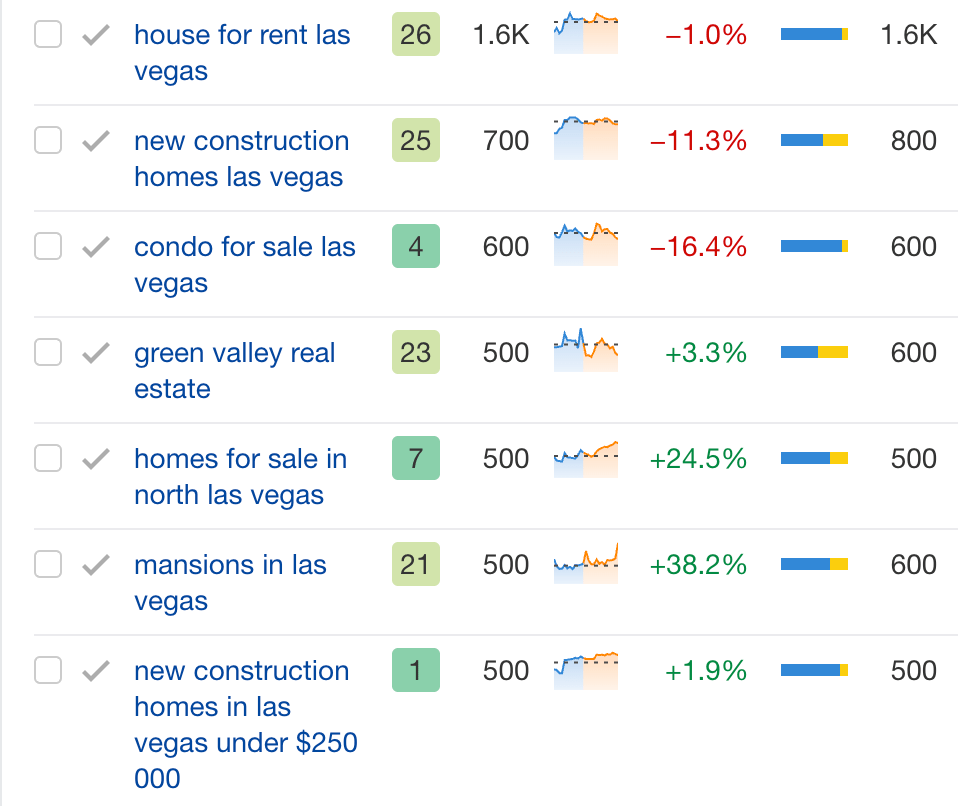

Here are some examples I found:

Questions and real estate buying/selling terminology

Answers to popular questions and terminology allow you to attract customers seeking information first, show off your relevant listings, and get people to contact you for more details.

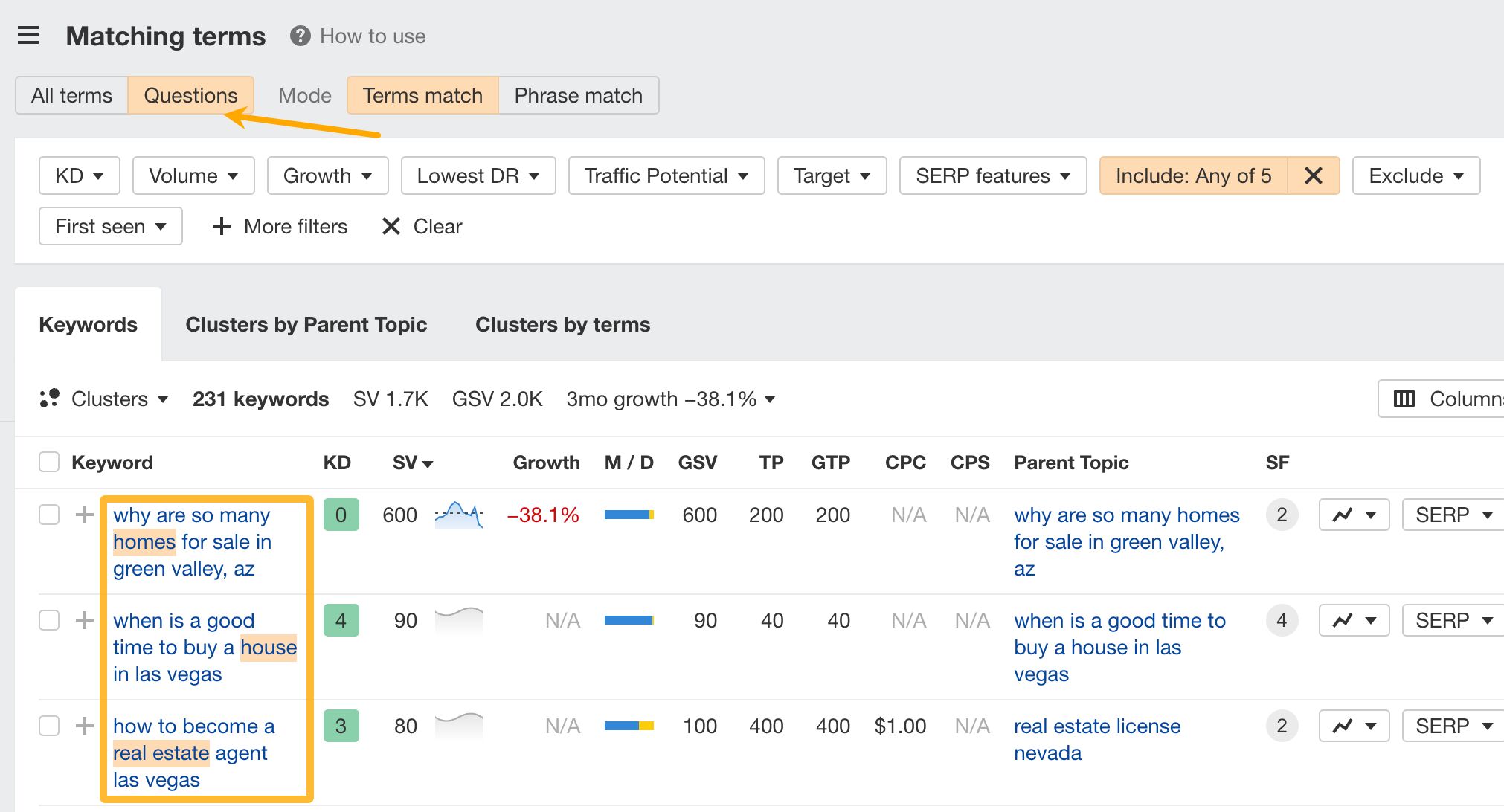

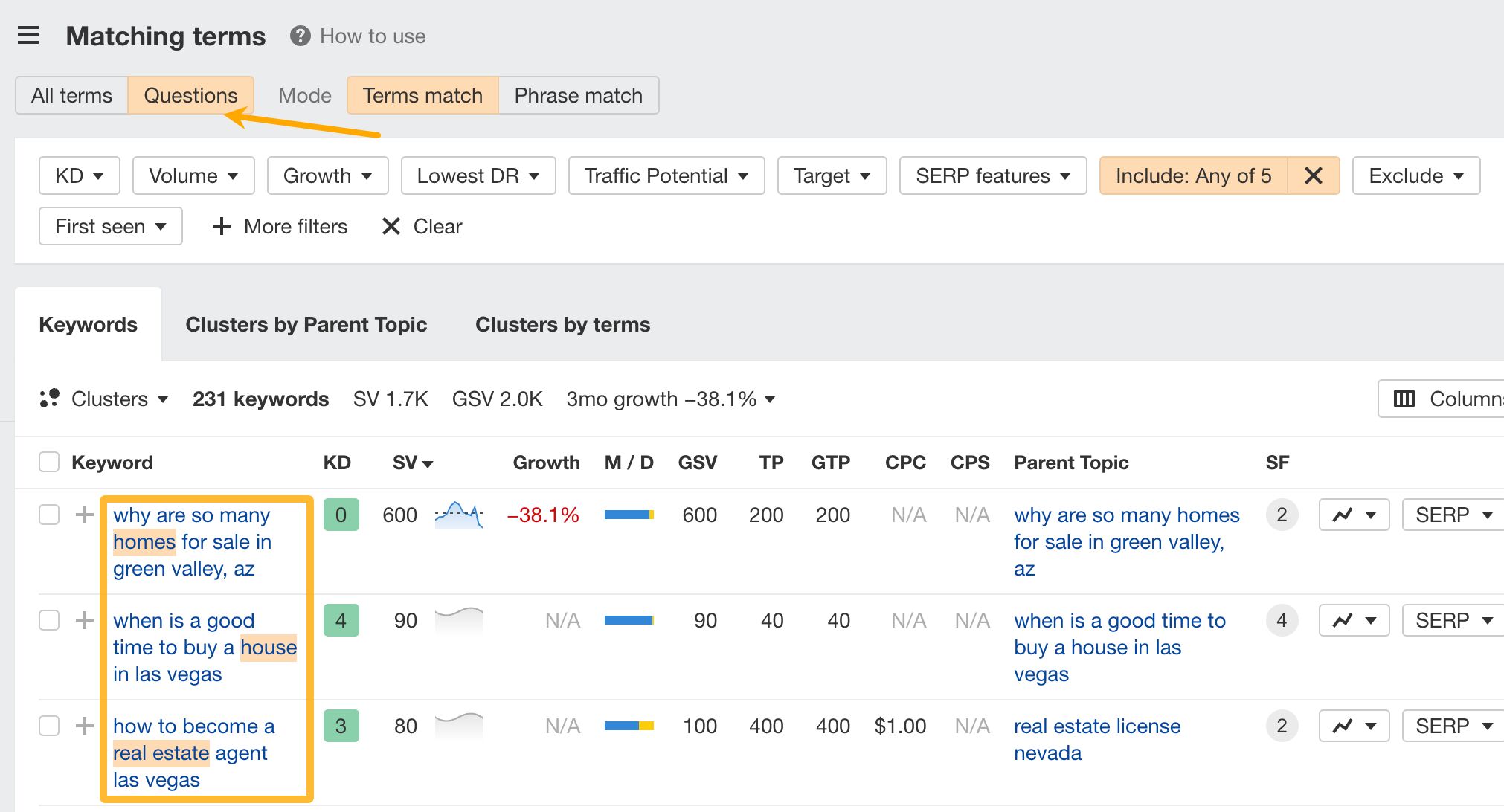

You can use these same seed keywords to find questions that buyers and sellers are asking. All you need to do is use the Questions tab:

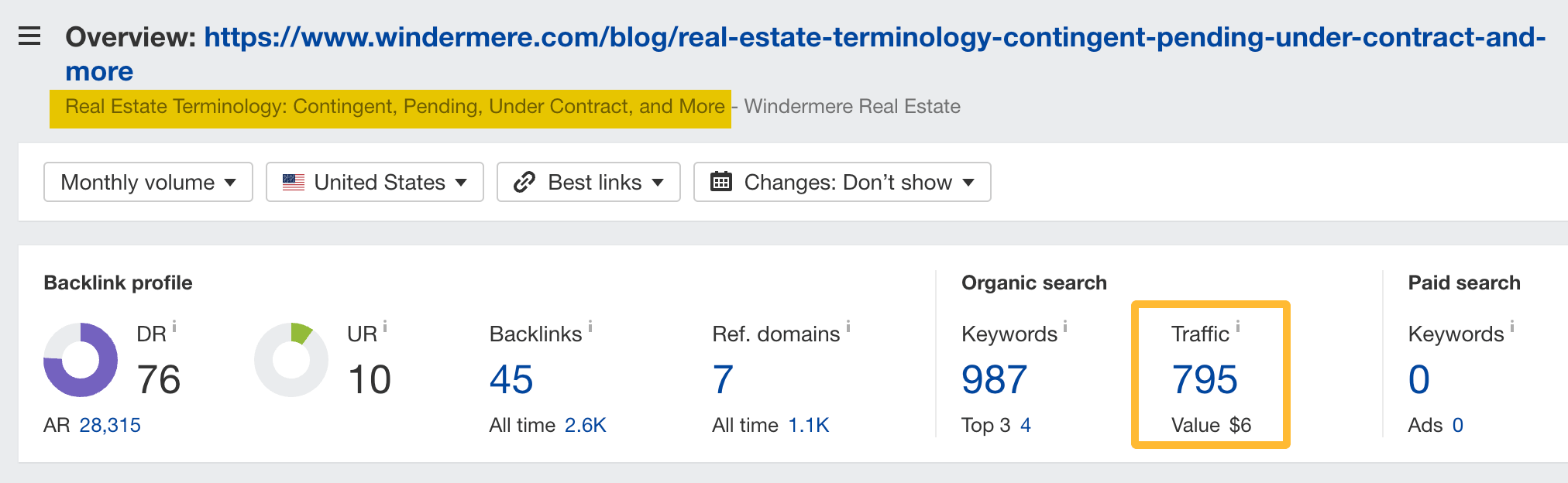

For example, here’s a page explaining some of the basic terms. It generates an estimated 795 organic visits each month.

Local guides

These keywords include a geographic location and offer insights about the local area, like neighborhoods, restaurants, bars, attractions, or real estate market trends.

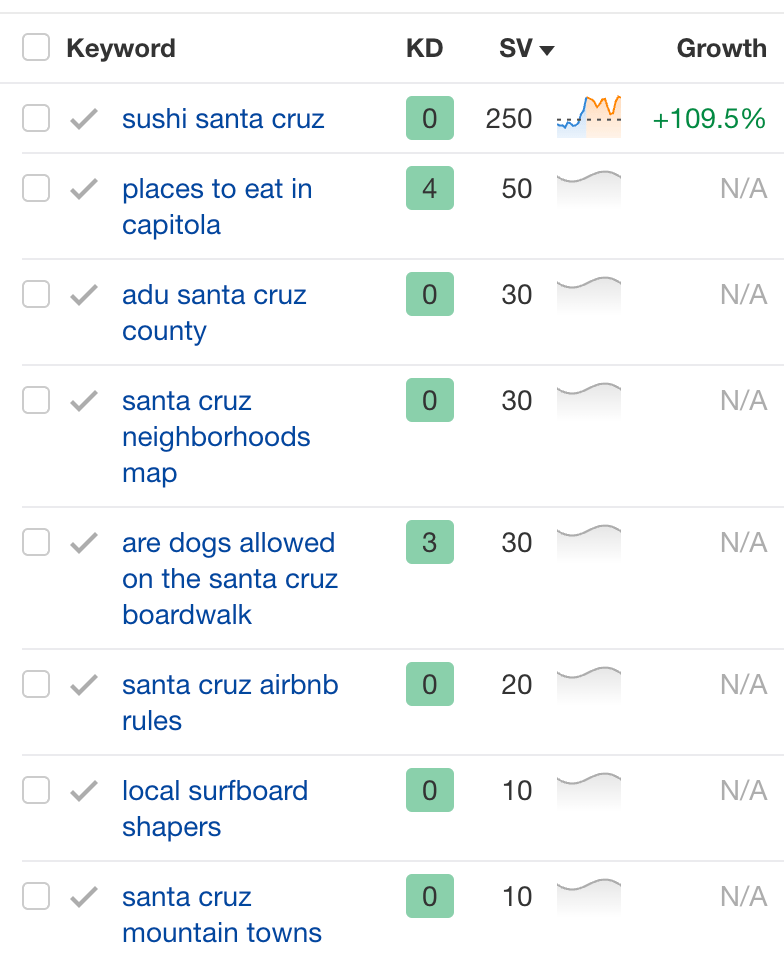

For instance, Live Love Santa Cruz, a boutique real estate business, targets various keywords related to local services and attractions. I’ve listed some keywords where the site ranks in the top 10: sushi, beaches, surfboard services; you get the idea. It’s practically a local guide’s blog attached to a real estate business.

To find these keywords, you can again use the standard set of locations and these modifier keywords: “best, things, top-rated, event*, guide, list, tips, map, information, resource, transportation, park*, recreation, shopping”. You can add your own or ask AI to expand this list.

Sidenote.

The asterisk acts as a wildcard for modifier keywords. It will automatically include all the words that start with “park.”
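To make the wildcard behavior concrete, here is a minimal Python sketch of prefix matching on whole words. It is an illustration only: `modifier_to_regex` and `matches_any` are hypothetical helpers, and the exact matching rules inside Keywords Explorer may differ from this regex-based approximation.

```python
import re


def modifier_to_regex(modifier):
    """Turn a modifier such as 'park*' into a whole-word, case-insensitive pattern."""
    if modifier.endswith("*"):
        # A trailing asterisk means "this prefix plus any word characters".
        body = re.escape(modifier[:-1]) + r"\w*"
    else:
        body = re.escape(modifier)
    return re.compile(r"\b" + body + r"\b", re.IGNORECASE)


def matches_any(phrase, modifiers):
    """Return True if the phrase contains at least one of the modifiers."""
    return any(modifier_to_regex(m).search(phrase) for m in modifiers)


modifiers = ["best", "guide", "park*", "event*"]
phrases = [
    "parks in henderson",
    "summerlin parking rules",
    "las vegas events this weekend",
    "sparkling pools las vegas",
]

matched = [p for p in phrases if matches_any(p, modifiers)]
print(matched)
```

In this sketch, “parks” and “parking” both match “park*”, while “sparkling” does not, because the pattern only matches at the start of a word.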

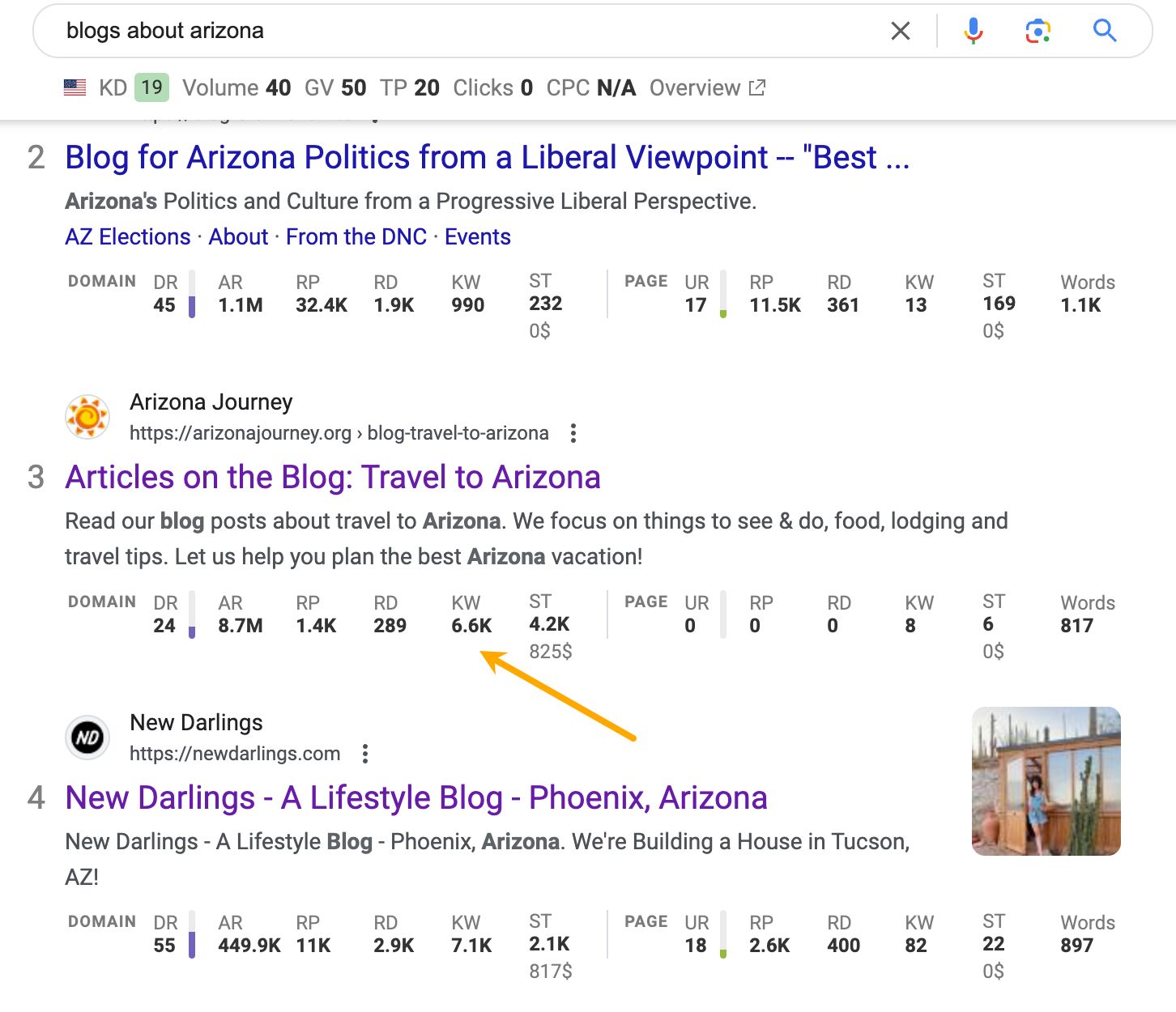

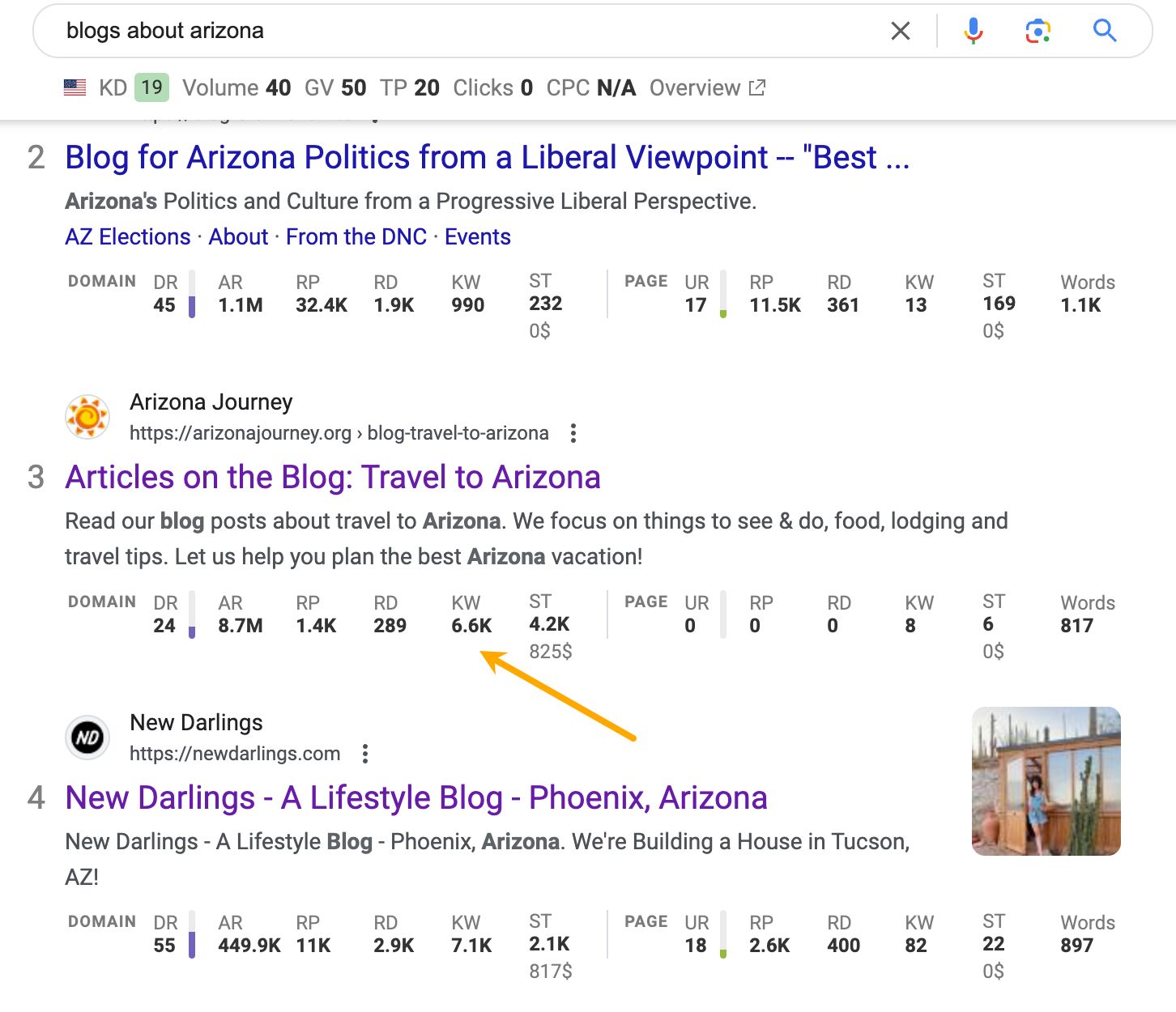

Since these keywords can have irregular structures, it’s a good idea to use competitive keyword research.

To do this, type in “blogs about [local area]” or “[local area] blogs” in Google. If you’re an Ahrefs user, you can use the toolbar to reveal SEO data for each site. Choose sites with the most traffic (ST) and click the KW link.

This will show you the keywords the site ranks for — your new source of content inspiration.

Unique features and buying scenarios

Brandy Hastings from SmartSites and Ally Dyck from seoplus+ mentioned a specific subset of keywords: properties with unique features and specific buying scenarios. For instance “pet-friendly apartments in [suburb]” or “townhomes for sale with low HOA fees”. These keywords typically have low search volume, but they’re high in intent.

Here are some of the ideas you can use for keyword modifiers: “for, near, with, buyer, close to, invest, relocate, retire, in”. Use them with seed keywords related to the type of realty you offer.

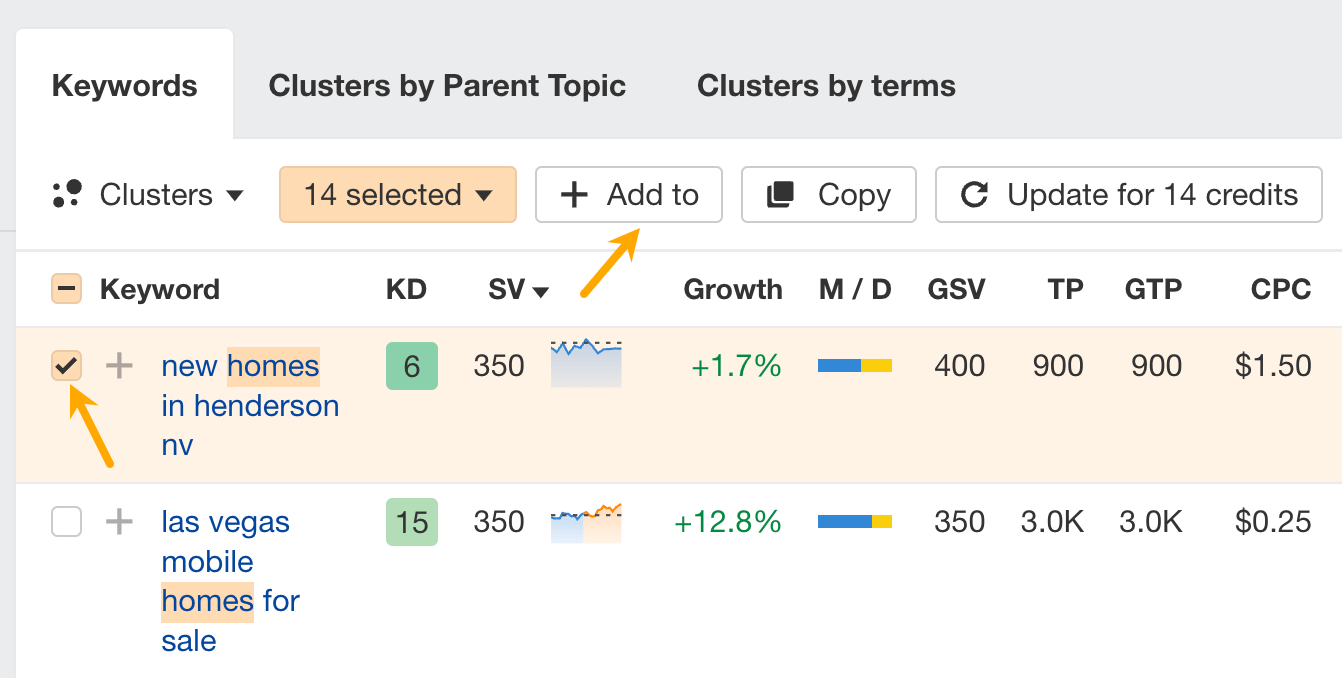

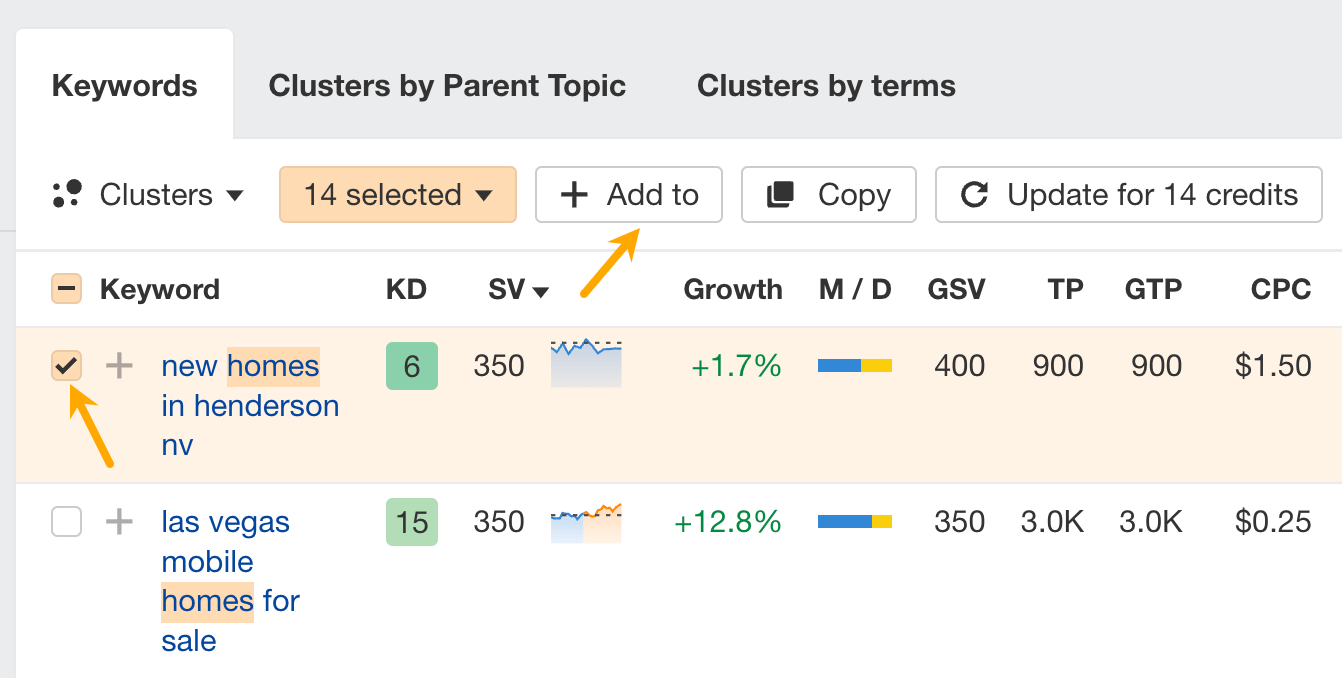

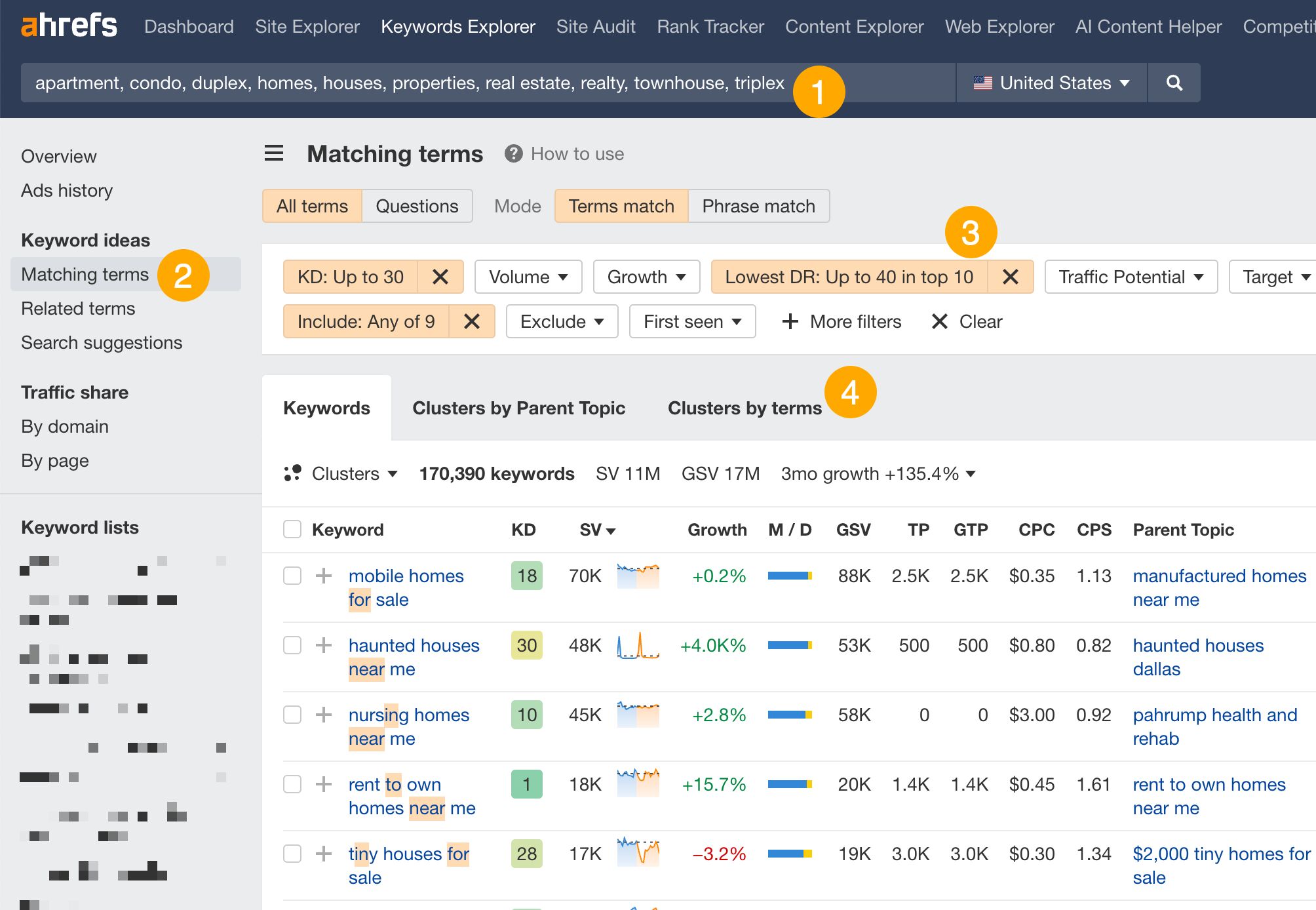

- Type in types of real estate as seed keywords.

- Open Matching terms report.

- Set the following filters. Include: add the modifier words mentioned above. Optional filters for finding easier keywords: KD up to 30, Lowest DR Up to 40 in top 10.

- Open the Cluster by terms tab.

From there, look for the areas you serve and browse keyword ideas.
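If you prefer to sort exported keyword ideas by the areas you serve yourself, a rough stand-in for that browsing step could look like the sketch below. The `cluster_by_area` function, the area names, and the sample phrases are hypothetical, and grouping by a simple substring match is an assumption, not how the Cluster by terms tab works internally.

```python
from collections import defaultdict


def cluster_by_area(keywords, areas):
    """Group keyword phrases under the first served area they mention."""
    clusters = defaultdict(list)
    for keyword in keywords:
        lowered = keyword.lower()
        for area in areas:
            if area.lower() in lowered:
                clusters[area].append(keyword)
                break
        else:
            # No served area was mentioned in this phrase.
            clusters["(no area)"].append(keyword)
    return dict(clusters)


# Hypothetical keyword ideas built from property types plus modifiers.
ideas = [
    "pet-friendly apartments in henderson",
    "condos near unlv",
    "townhomes for sale with low hoa fees",
    "homes for sale in henderson with pool",
]

clusters = cluster_by_area(ideas, areas=["henderson", "unlv"])
for area, phrases in clusters.items():
    print(area, phrases)
```

Phrases that land under “(no area)” are not necessarily useless; they may still suggest feature-based pages you can localize yourself.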

Don’t expect to get leads from every organic visit — it’s a very important thing to understand with this source of traffic.

Real estate decisions take time, and users are often at different stages of their journey. Your goal should be to engage visitors and guide them toward taking the logical next step rather than pushing for an immediate conversion.

I like to think holistically about the different stages someone may be at as they’re researching an area or neighborhood. Informational guides can be really useful for the earlier stages as people are just learning about a place and determining if it’s a good fit. Things like neighborhood overviews, school profiles, guides to local amenities. Then as people start narrowing down their search, more transactional pages optimized for queries like “homes for sale in Neighborhood X” can be effective.

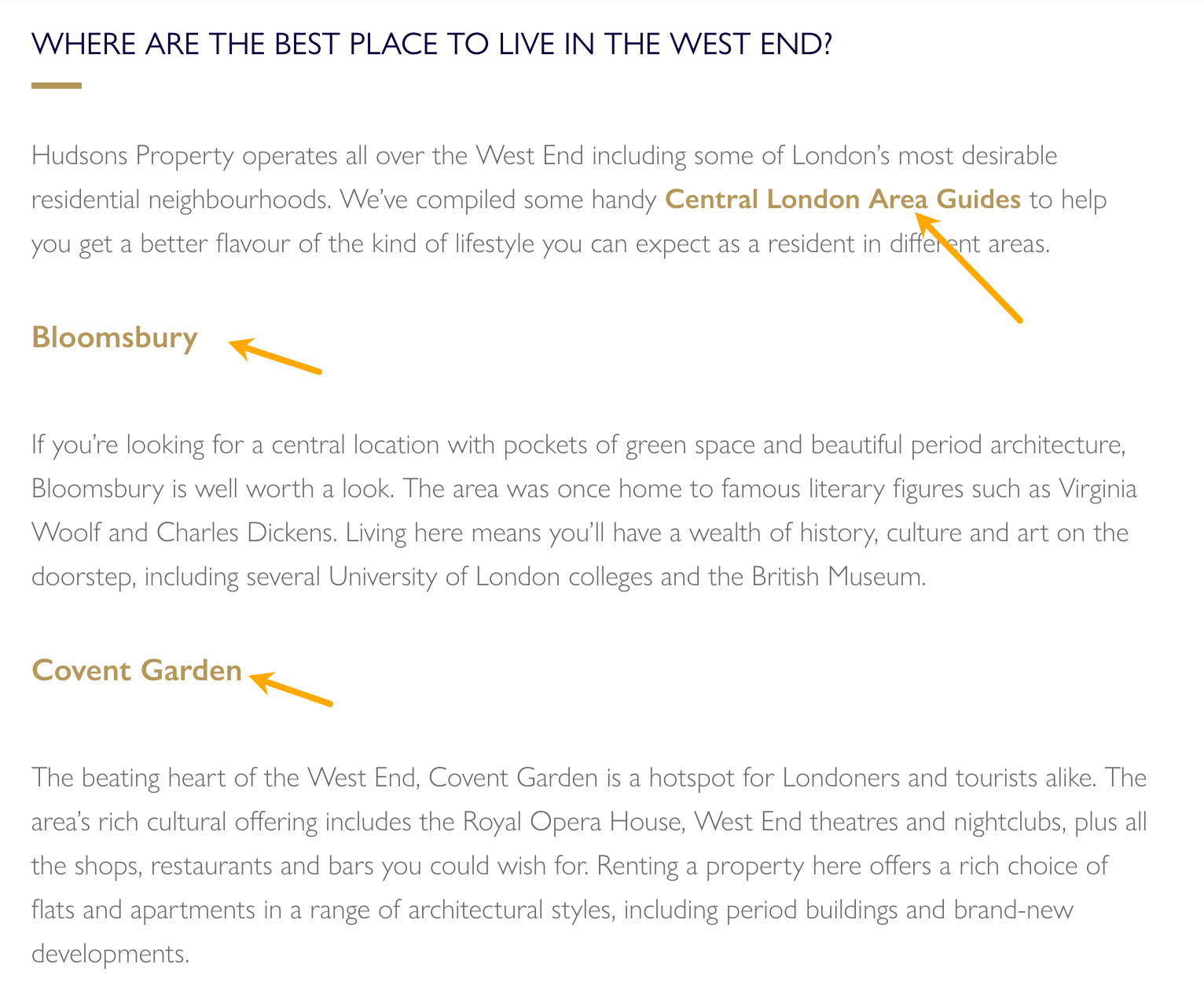

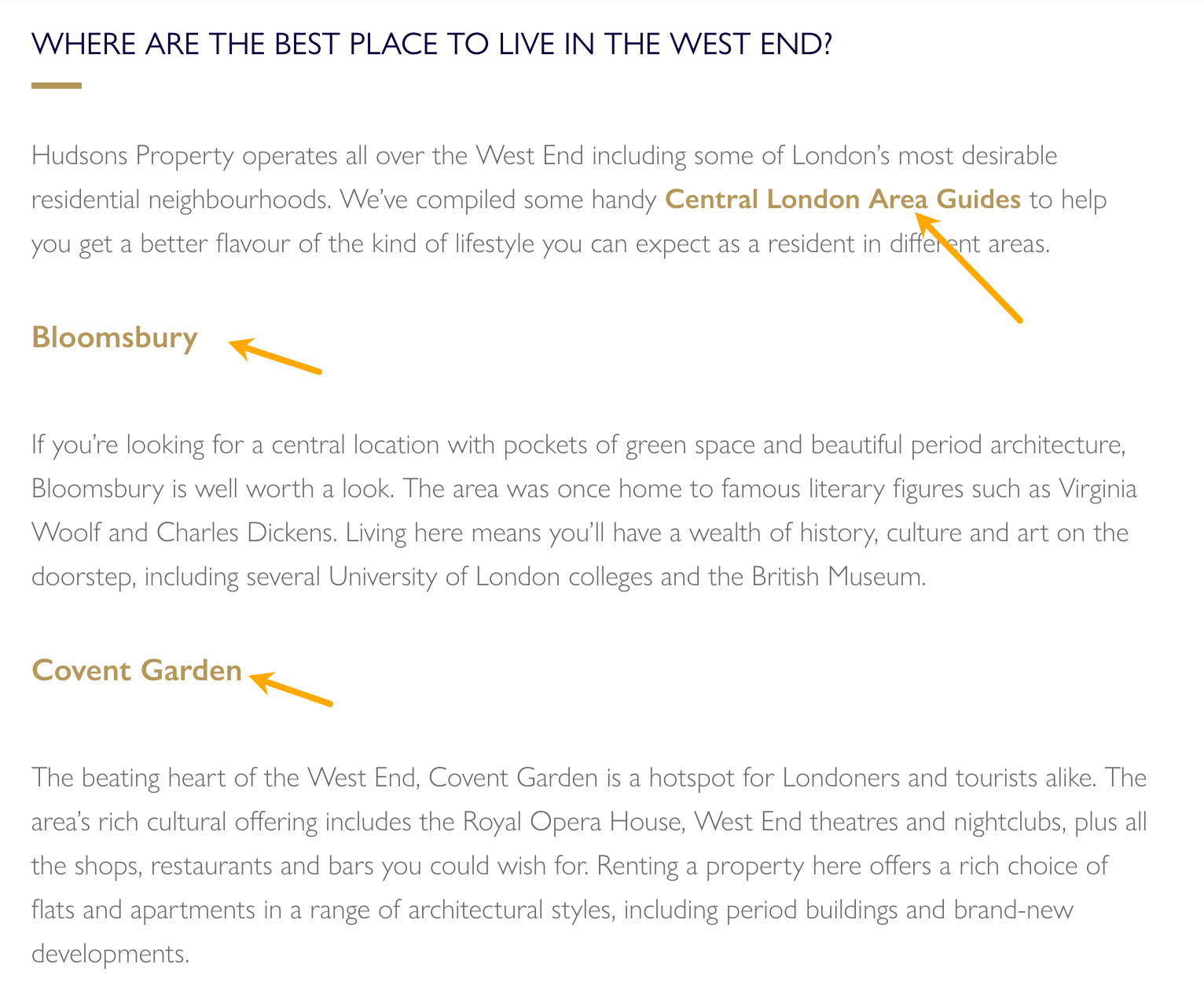

Here’s a simple example of this concept. One of the pages that generates the most traffic for Hudsons Property is a guide to renting and buying a home in London. Each guide links to other relevant content on the site, including London areas.

The visitor can learn not only how to buy or rent but also where. The area guides take them a step further in their buyer’s journey, providing a form to inquire about real estate options.

And that is the whole idea. Each page needs to deliver a logical next step for the visitor to get in touch.

Here are some other ways real estate sites try to engage visitors.

Highlight selected real estate in a neighborhood guide. Even if someone is not ready to buy yet, pictures of nice homes will likely draw them in.

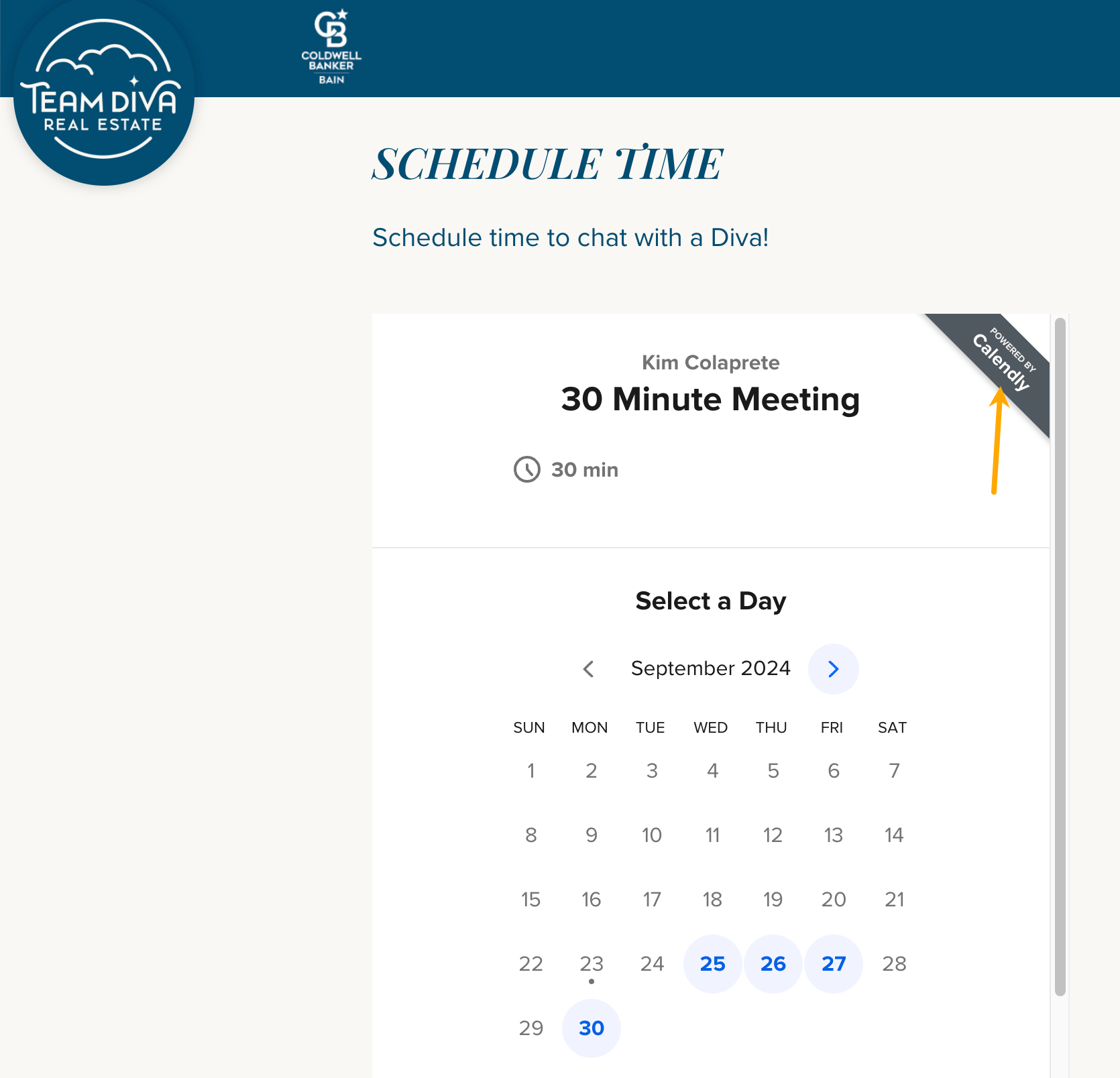

Get Calendly and integrate it with your site. This will give people a quick and easy way to contact you, without back-and-forth with setting up meeting dates.

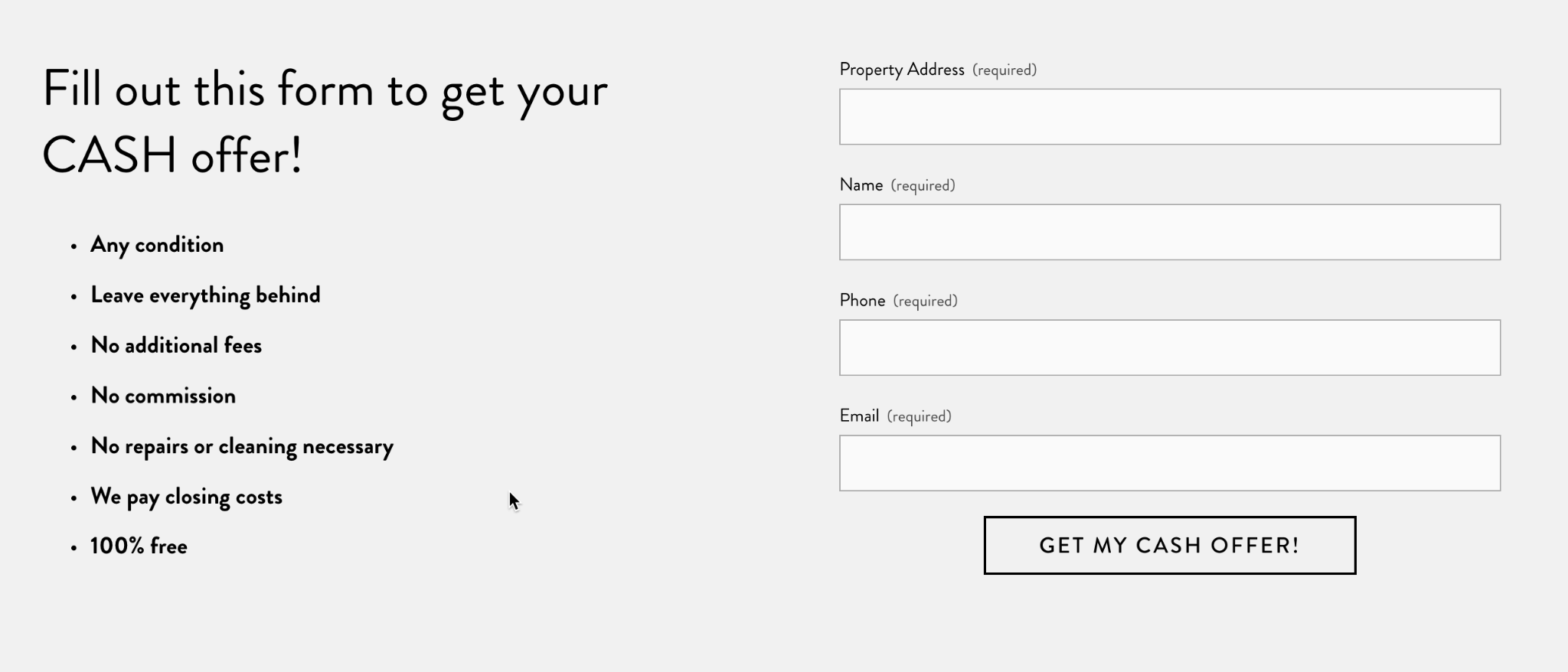

Encourage contact by making a special offer, such as a free valuation.

Keep main contact options visible at all times. You can include them in a floating menu bar. Simple yet effective. It reduces the time to find contact details and demonstrates your openness.

Within seconds, visitors form lasting impressions about your credibility and professionalism. If they feel something is off, they will leave.

Establishing trust isn’t just about appealing to human psychology — it’s also a critical factor in Google’s ranking algorithm, built into the EEAT concept.

EEAT is how Google’s systems are trained to determine a page’s credibility. The acronym stands for Experience, Expertise, Authoritativeness, and Trustworthiness, with the last element being the most important.

It basically means that a website exhibiting strong EEAT signals is more likely to rank well in search results because Google aims to provide users with credible and reliable information.

Here are some ways you can cater to potential customers and Google.

Getting a TLS certificate is an absolute must. This protects sensitive information, like login credentials and personal details, from being intercepted by malicious actors. It also displays a padlock icon in the browser’s address bar, visually signaling to users that the website is secure.

On Dana Fitzpatrick’s site, I found these few hundred pixels that clearly establish this realtor’s credibility. It features impressive performance data, a compelling testimonial, and a series of recognitions highlighting her experience.

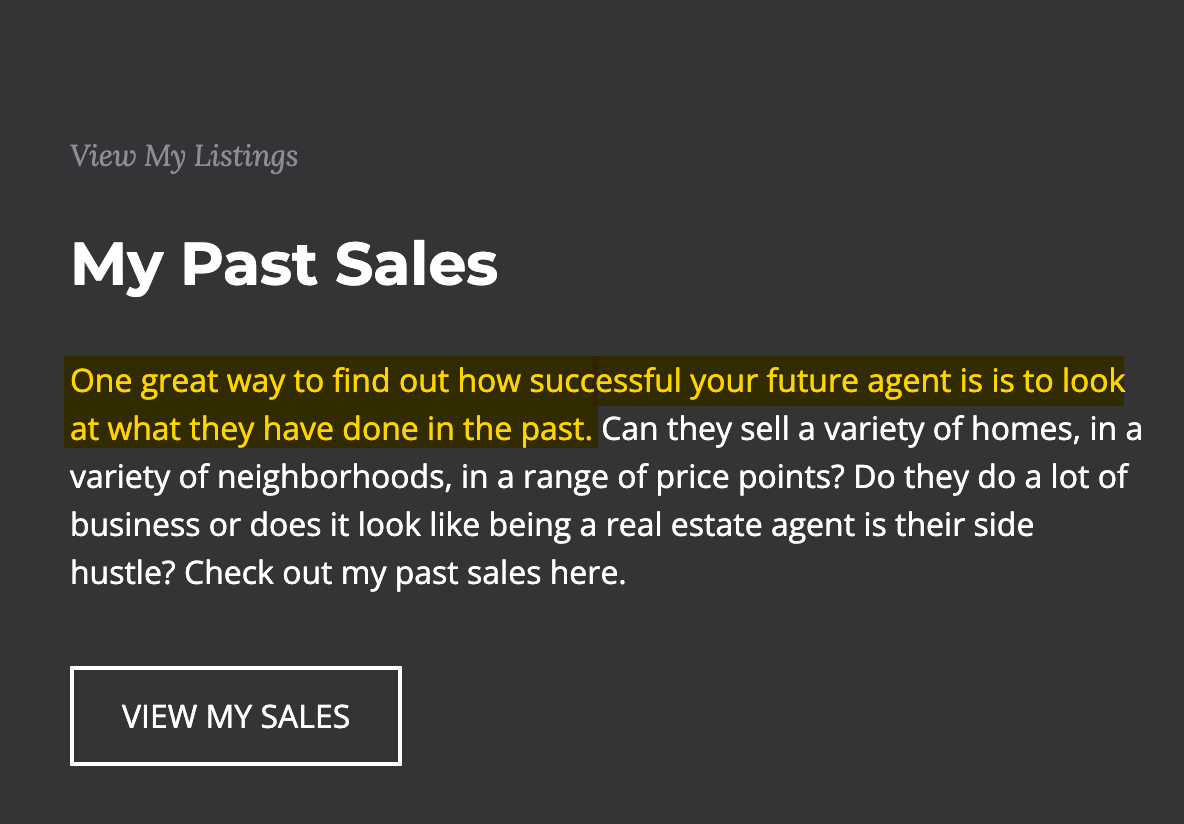

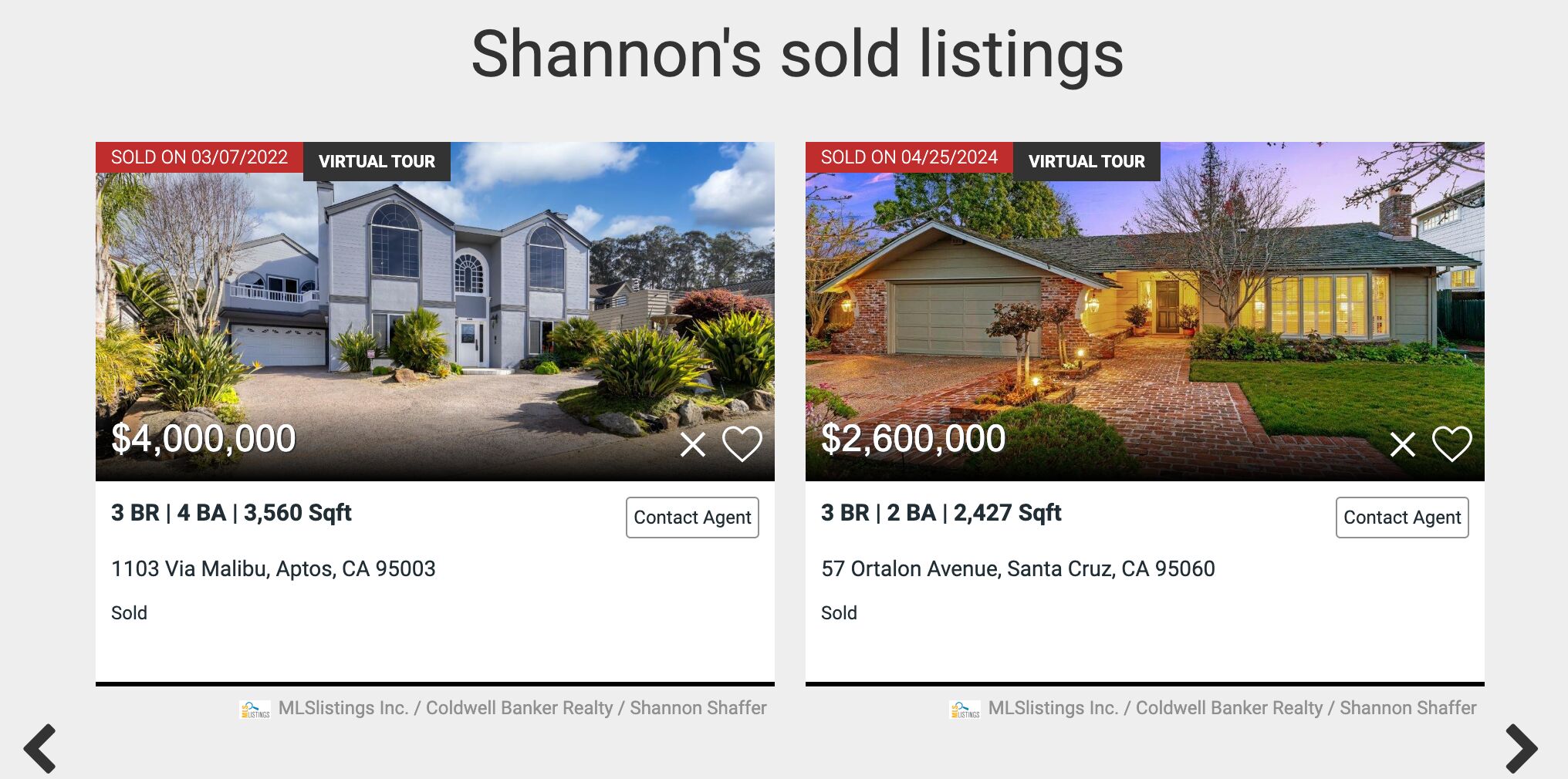

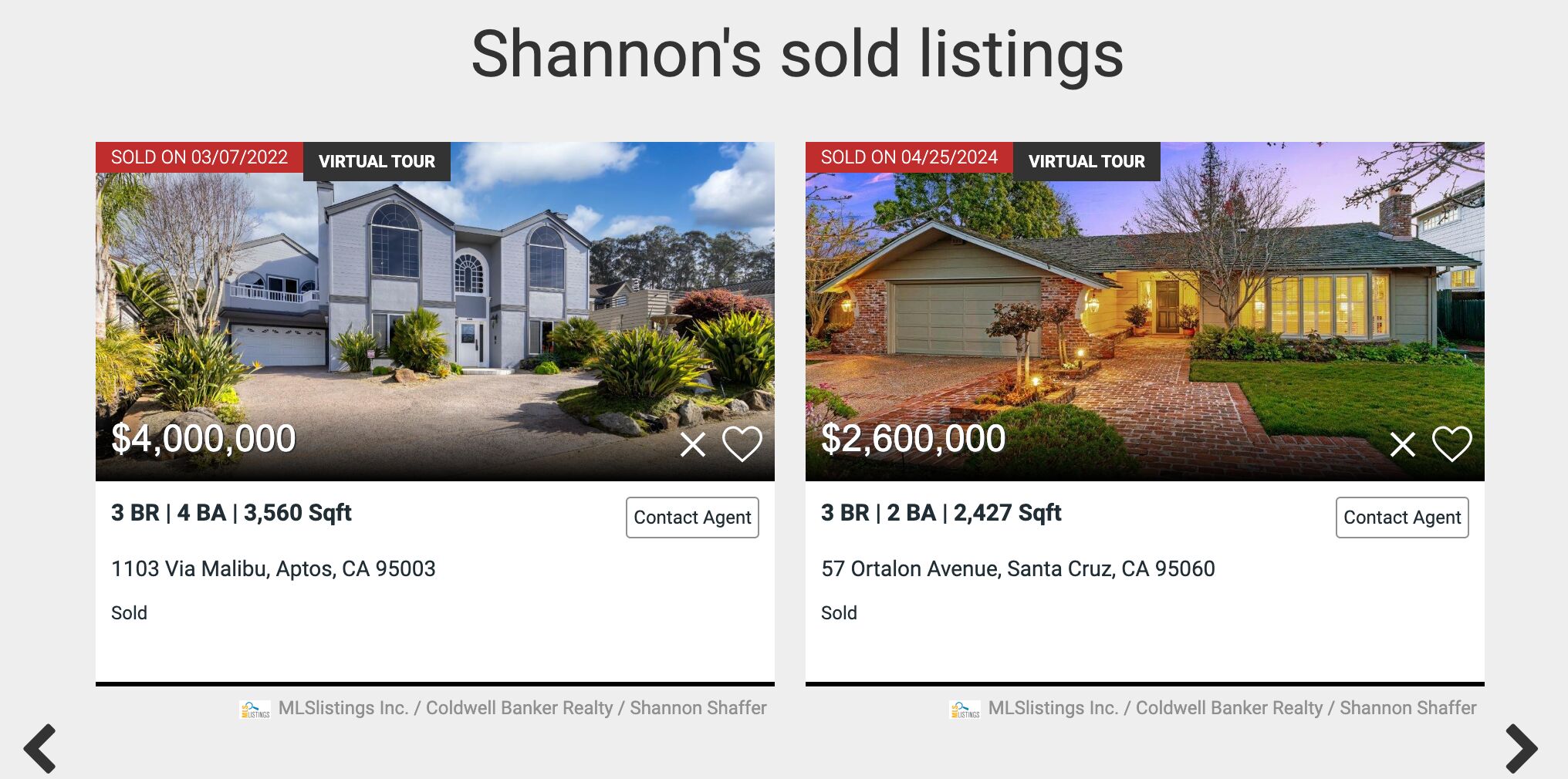

On Nathan Sherman’s site, I found this:

And I couldn’t agree more: these sold properties act as strong testimonials. They’re not just a list of past transactions; they’re a visual showcase of an agency’s success story.

Moreover, they catch your eye, because not every agency keeps their sold properties in a visible spot on their site. I know it caught my attention when I first saw this after looking at dozens of real estate sites.

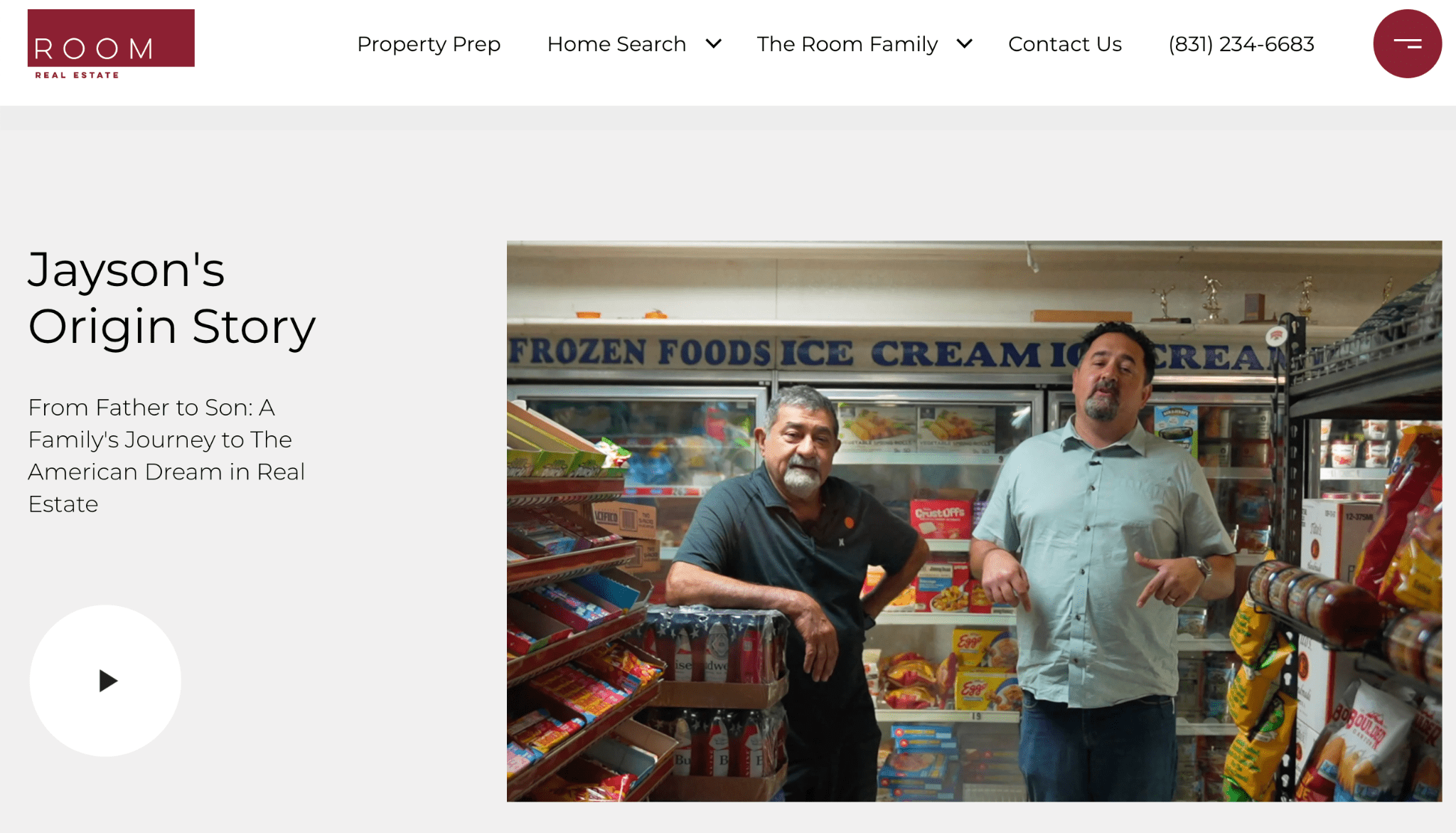

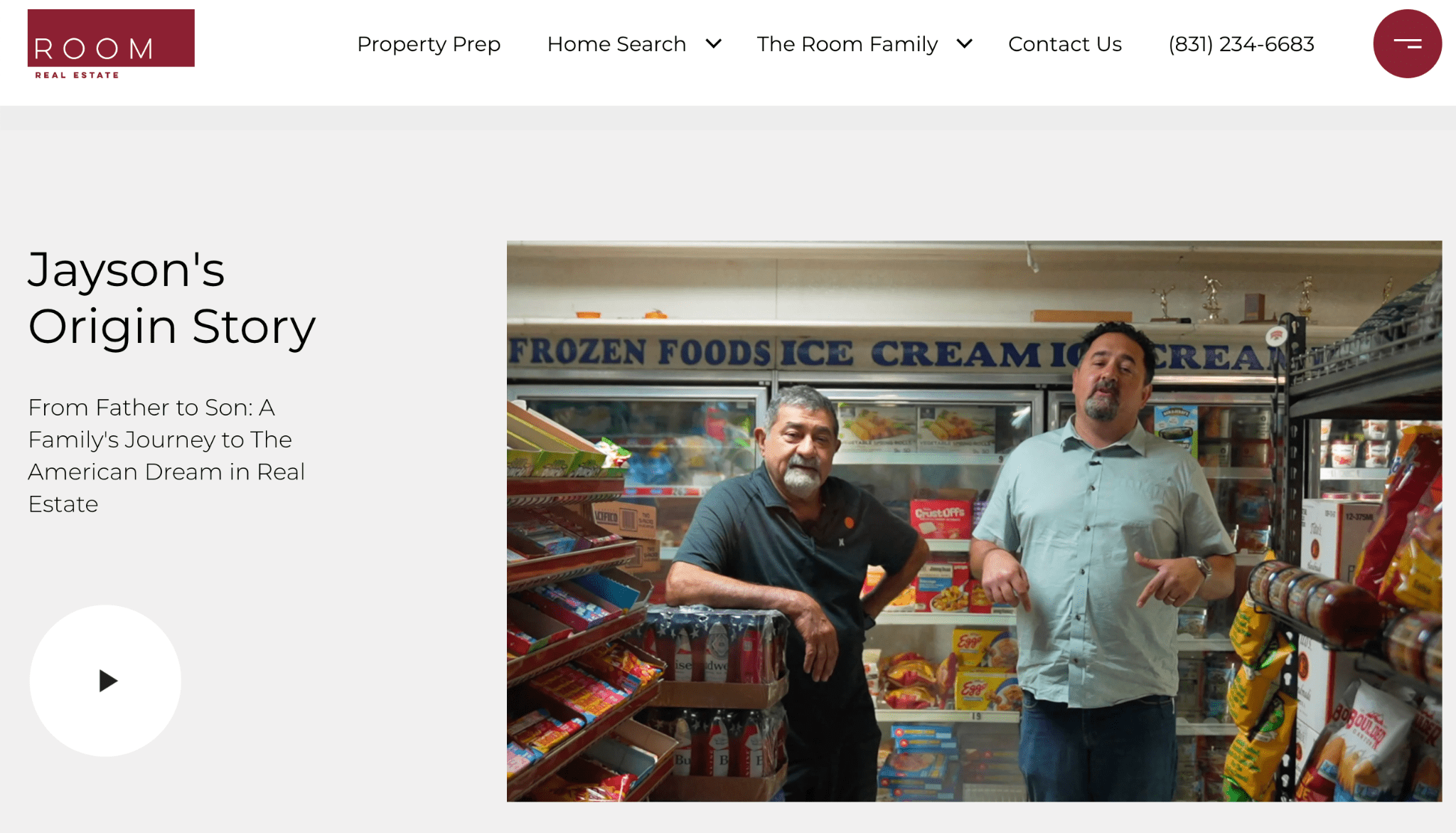

Here’s a real estate business that went the extra mile, although with very simple means. Room Real Estate captured the family business spirit in a short video. This video introduces the visitor to two generations who have worked hard for their success.

There’s going to be a lot of visual content on your site, so make sure the images are compressed and the code is optimized. This will keep your site fast which, again, matters both to visitors and Google.

Real estate websites often rely heavily on images and virtual tours, which can slow down site speed if not optimized properly. In one case, we improved a client’s site speed by compressing images and restructuring their code, which led to a significant boost in their search rankings.

Mobile-friendliness is a ranking factor and a must-have if you want your visitors to stick around. Photos of houses and apartments look better on a big screen, but many of your visitors will use a smartphone instead.

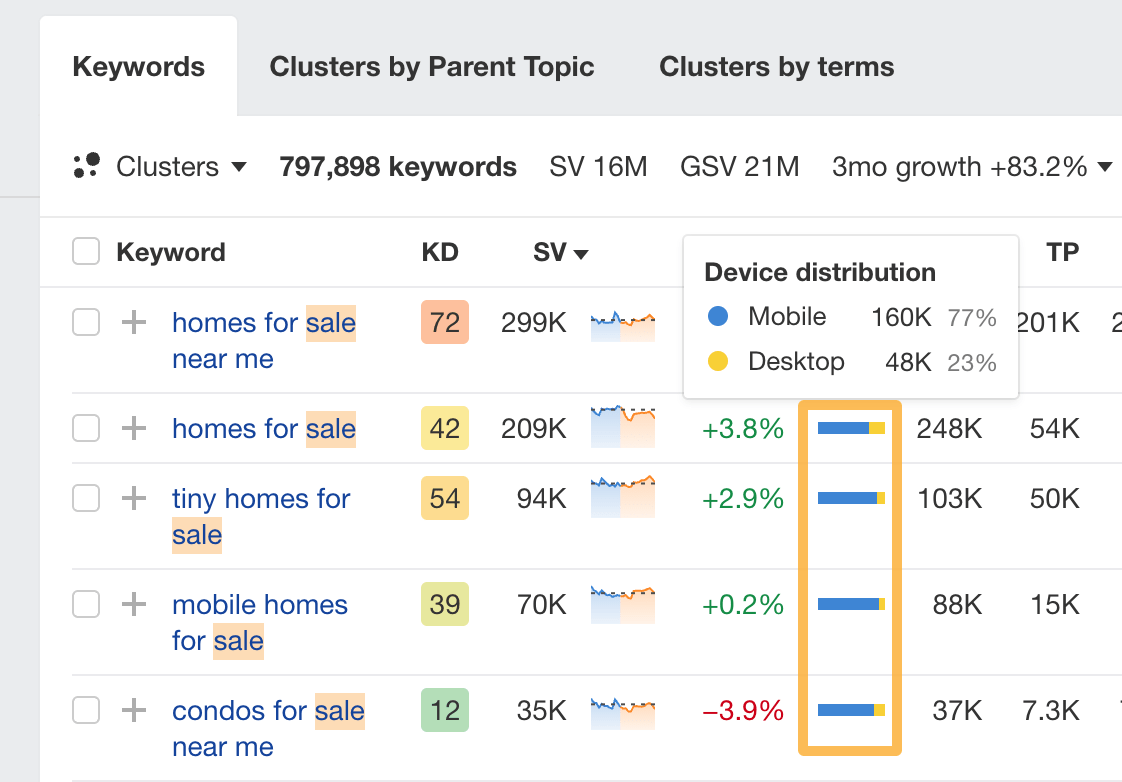

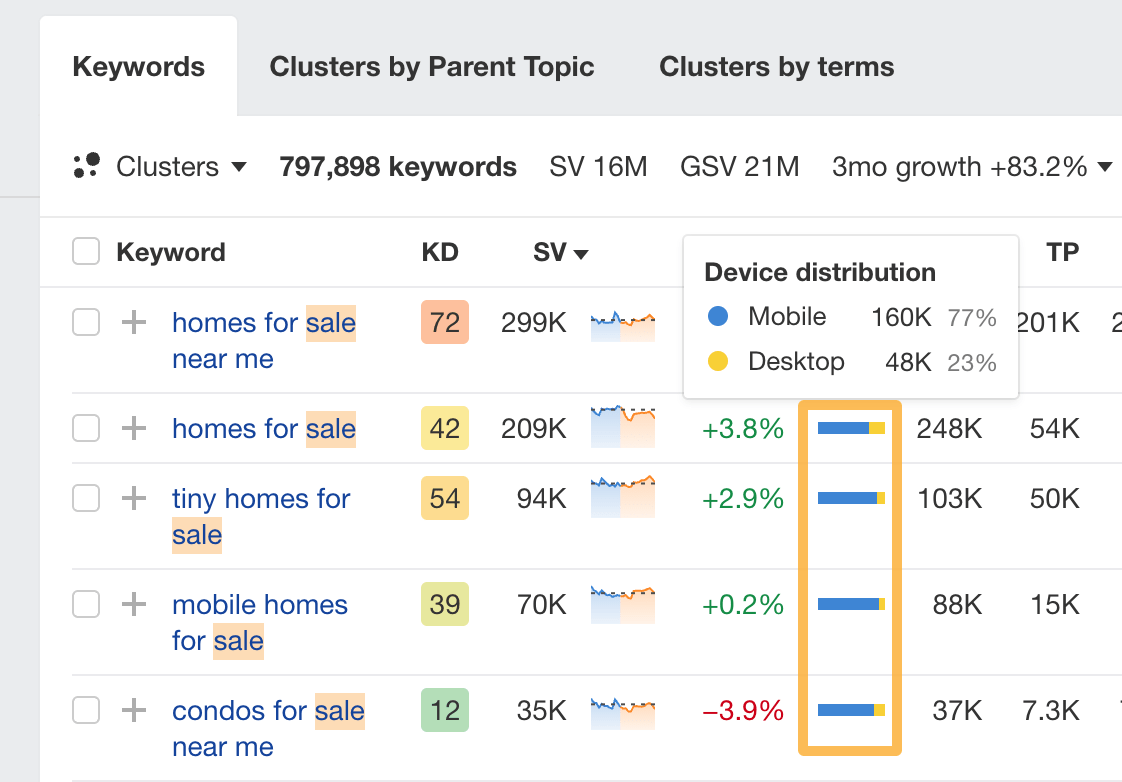

To illustrate, here’s how the mobile vs. desktop distribution looks for most real estate-related keywords I’ve seen so far.

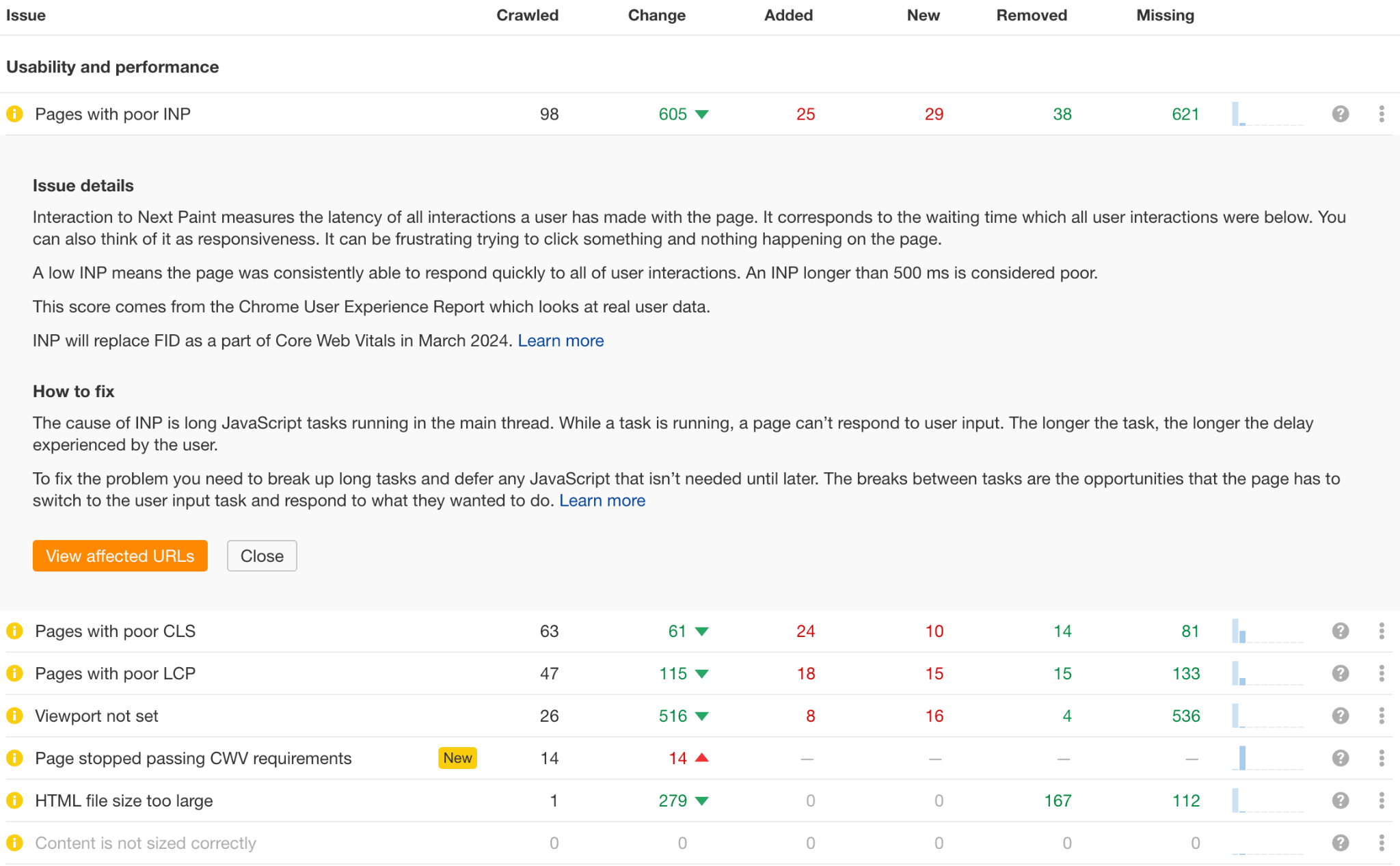

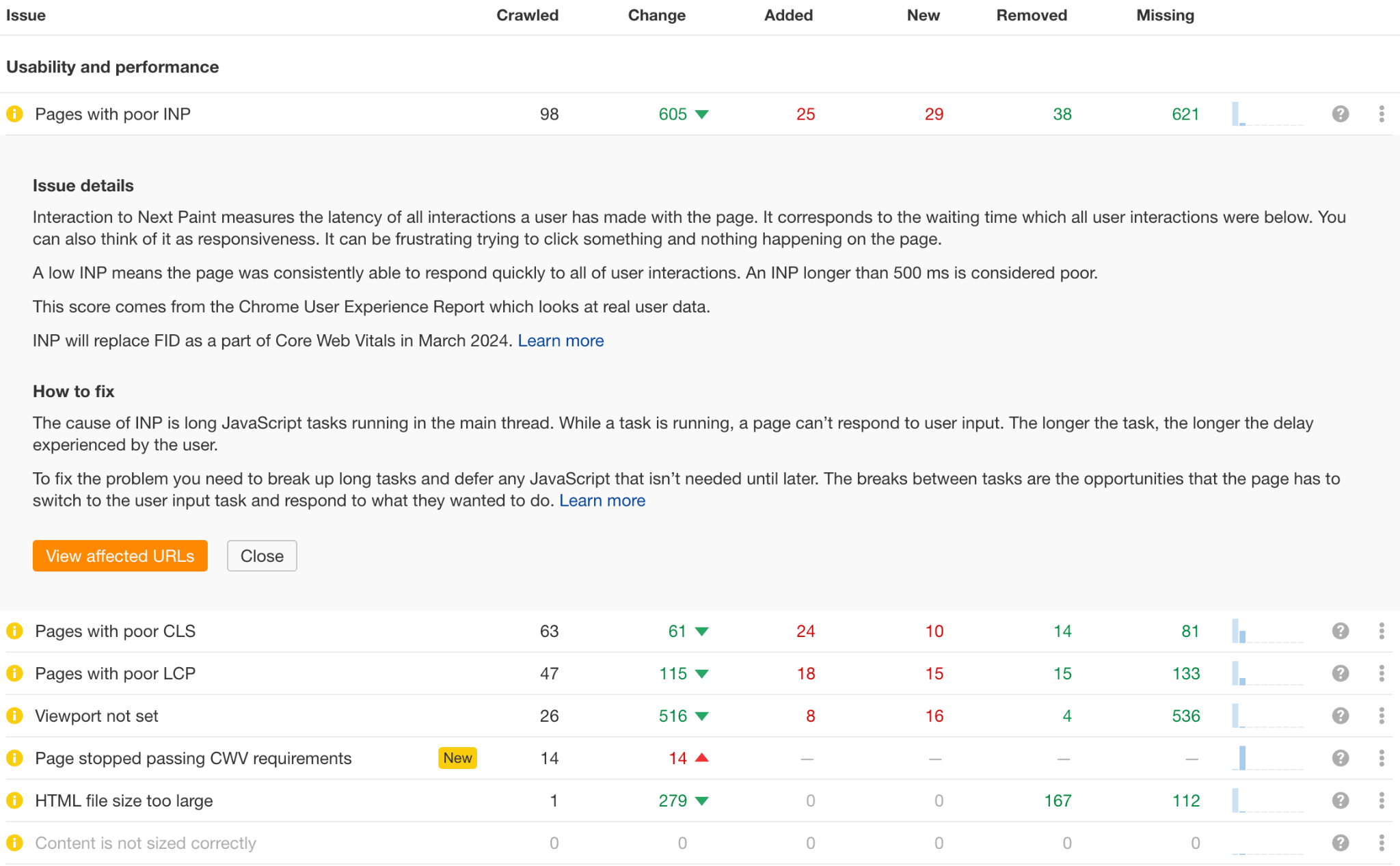

It’s very easy to test your site for these factors. You can use free tools such as our Webmaster Tools. The tool will show you affected pages, tips on how to fix them, and whether the changes you implemented worked.

That said, fixing those things will require some technical skills. So unless you’re a realtor by day and a web developer by night, you might want to get someone to help you.

Backlinks, also known as inbound or incoming links, are links from one website to another. Search engines like Google see these links as votes of trust.

Link building is one of the pillars of SEO as more backlinks from unique domains can improve your search rankings.

There are many tactics to get backlinks, so you need to choose wisely. Based on expert opinions and an analysis of high-performing real estate sites, here’s where you can get quality backlinks:

- Directories.

- Press.

- Podcasts, shows, and public speaking.

- Local organizations, schools, and events.

- Your terminology and data pages.

Let’s look at them in more detail.

1. Directories

Directories are organized listings of websites, typically categorized by topic, industry, or location. For example, Circa is a niche directory for old house listings. They also feature agents and brokers.

Getting your site on a directory is pretty simple. Depending on where you’re listing, you might just add your info yourself, fill out a form and wait for approval, or “pay to play”.

A quick search of online directories or business listings will give you enough sites to keep you busy for a few hours (for example this list from HubSpot). On top of that, I’d recommend you also check out our advanced guide to this type of link building and find some hidden gems.

2. Press

Backlinks from the press come from providing journalists and bloggers a reason to mention you, and therefore, link to you.

For instance, you can offer expertise like Michael Bondi.

Or get your listings featured like Berkshire Property Agents.

You will find lots of requests from journalists on HARO, Help a B2B Writer, and similar sites.

Consider reaching out to local press outlets with real estate-focused story ideas. For example, you could propose an article exploring ‘Why there’s a surge of homes for sale in [specific area]’. Alternatively, offer your expertise to journalists working on real estate-related pieces.

If you have a bit more budget, you can hire a PR or link building agency to seek out the right opportunities.

3. Podcasts, shows and public speaking

These events often list speakers or participants on their websites, providing an opportunity for valuable backlinks from reputable sources.

Whenever you get a chance to appear on a show, conference, or lecture, ask for a link back to your site.

4. Local organizations, schools and events

Local organizations, schools, and events often link to sponsors, businesses that are involved in charities or community initiatives, and helpful resources.

These backlinks might need a bit more effort but the benefits of networking will likely surpass SEO. Here are a few ideas to try:

- Join local business associations and chambers of commerce.

- Reach out to local schools and offer to participate in career days or provide educational resources about real estate.

- Sponsor local sports teams or cultural events.

- Volunteer for community service projects or organize charity events.

- Create and share valuable content about the local real estate market, homebuying tips, or neighborhood guides.

- Offer free workshops or seminars on real estate topics for community members.

- Partner with local non-profits for fundraising initiatives.

- Offer internship opportunities to local students interested in real estate.

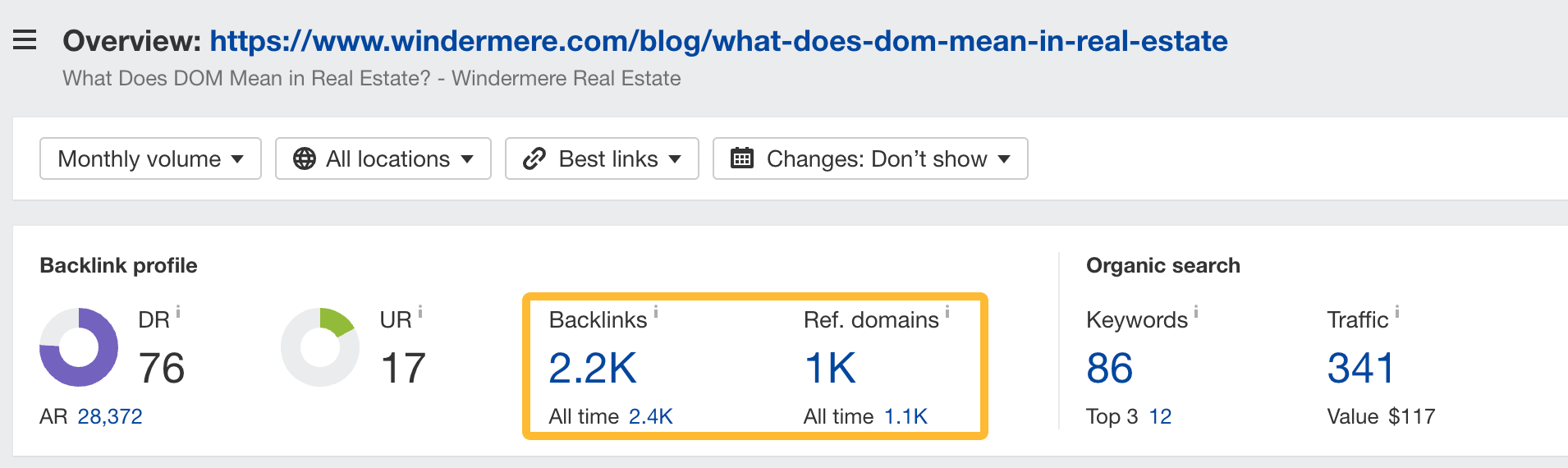

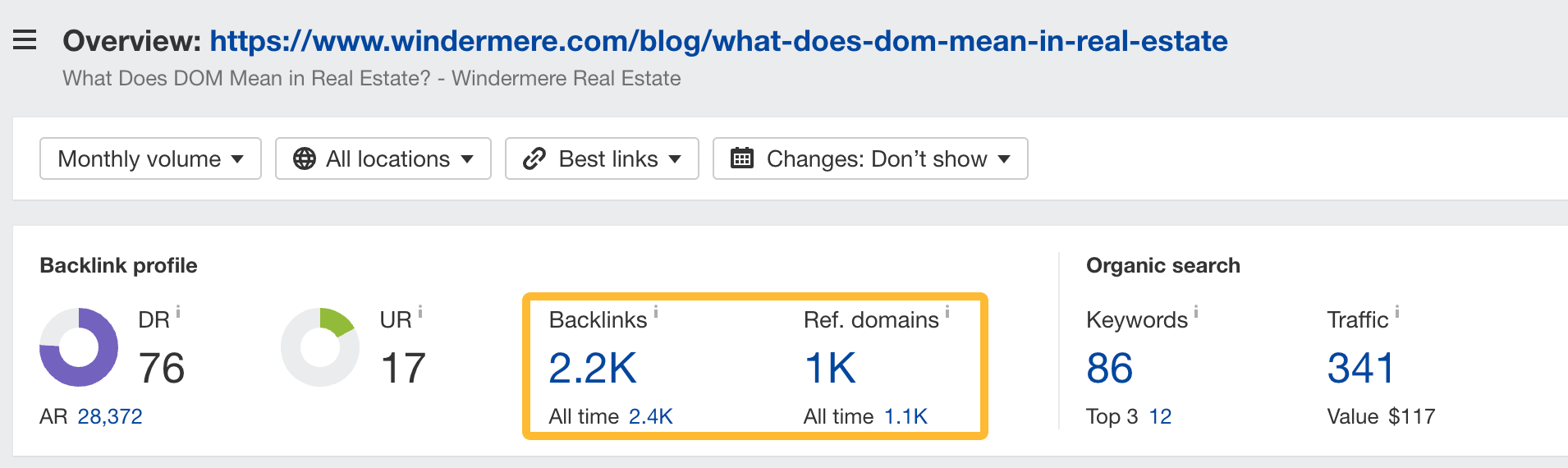

5. Terminology and data pages

Citing data and facts is one of the most popular reasons to link. Become the source, and you might earn lots of links this way passively.

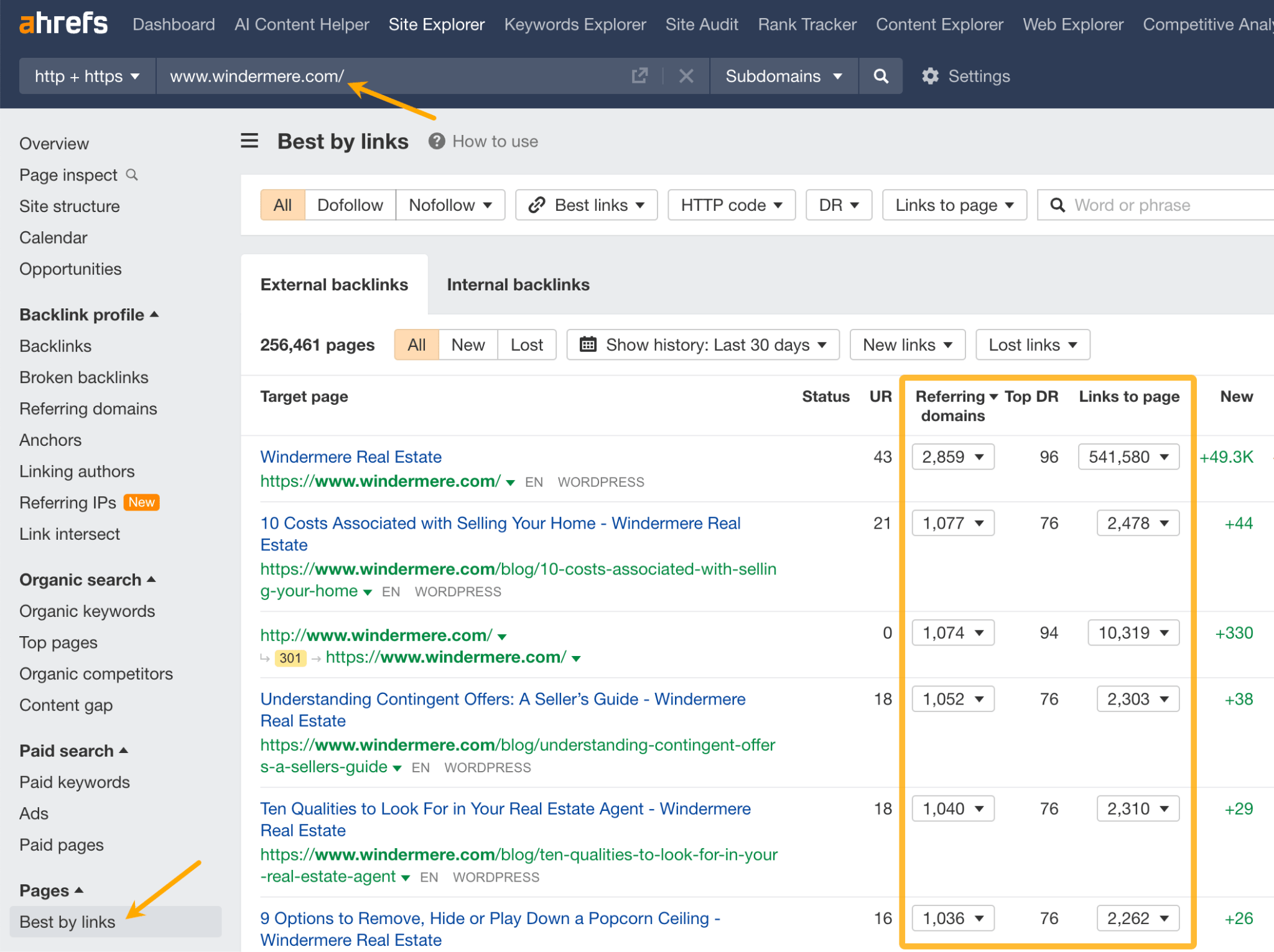

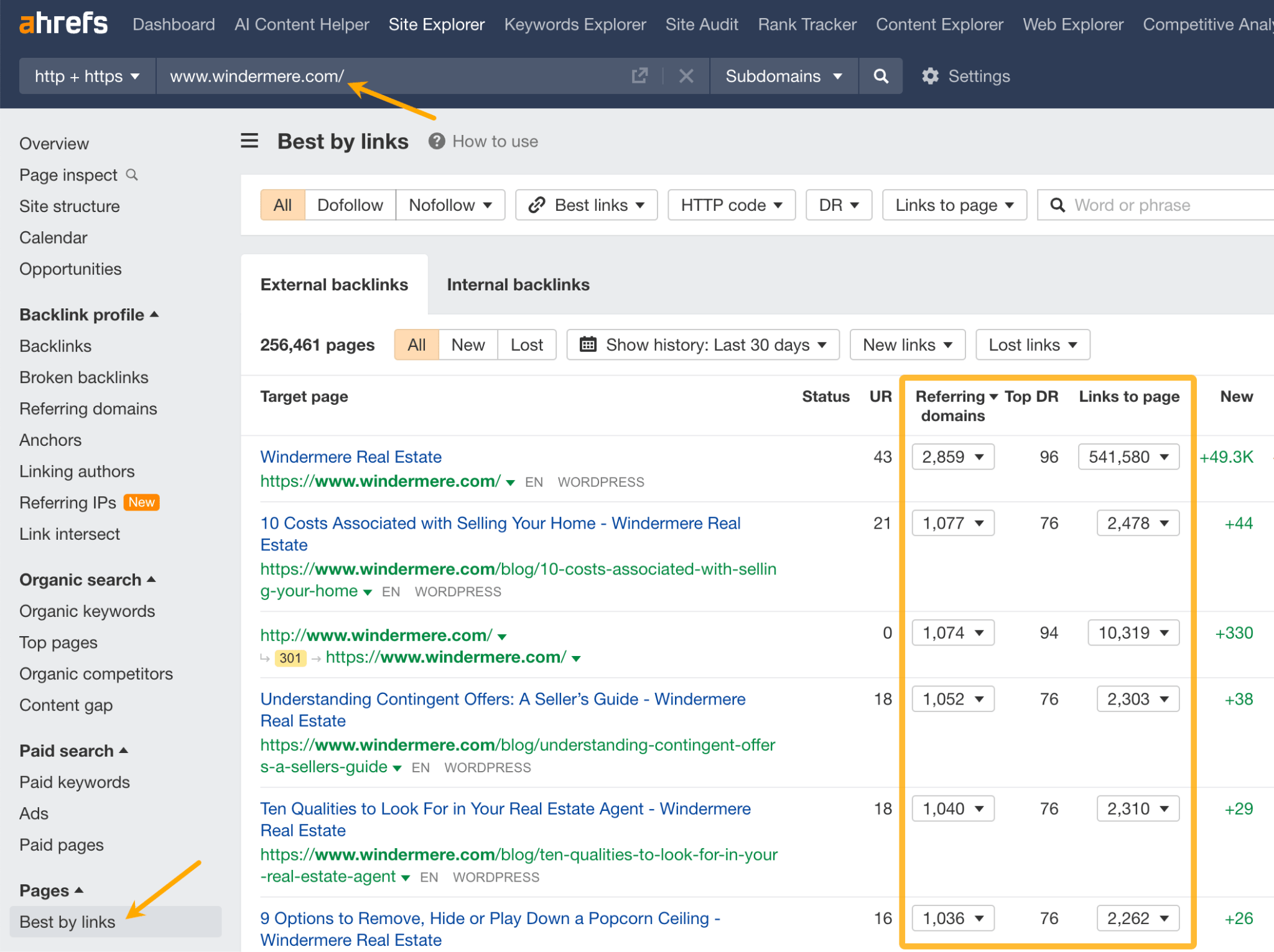

To get an idea of what kind of resources earn links in real estate, you can look at competitors’ sites in Ahrefs’ Site Explorer. Just paste their domain and go to the Best by links report.

Tip

Before investing time in link building, I strongly encourage you to read our beginner’s guide. Learn how to tell good links from links that are less likely to give you a boost, and which practices could possibly hurt your site.

Final thoughts

I’d like to leave you with two more tips.

I’ve seen many realtors create video content for YouTube, including virtual property tours and neighborhood showcases. However, just a few of my sources mentioned this strategy. For inspiration, check out Brad McCallum’s channel. To find keywords for YouTube SEO, you can use tools like vidIQ.

Finally, I want to quickly discuss your KPIs. Since the buyer’s journey in this business can be quite long, it’s a good idea to track the correlation between SEO metrics and closed deals. To crunch the numbers, simply ask ChatGPT.

We measure the ROI of SEO from the number of quality leads that are generated by our website and then correlate them with closed deals, giving a clear picture of how organic search is contributing to our bottom line.
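If you would rather run the numbers yourself, the correlation can be sketched in plain Python. This is a minimal example under assumptions: the monthly figures are made up, the `pearson` helper is hypothetical, and the one-month lag is just one way to account for a long buyer’s journey, not a recommended setting.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least two points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / sqrt(var_x * var_y)


# Made-up monthly figures for illustration only.
organic_leads = [4, 6, 5, 9, 11, 12]
closed_deals = [1, 1, 2, 2, 3, 3]

# Deals often close well after the first visit, so also compare
# each month's leads with the deals closed one month later.
same_month = pearson(organic_leads, closed_deals)
one_month_lag = pearson(organic_leads[:-1], closed_deals[1:])

print(round(same_month, 2), round(one_month_lag, 2))
```

A value close to 1 suggests that leads and deals move together, but with only a handful of months of data it is a hint rather than proof of cause and effect.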

Got questions or comments? Send me a message on LinkedIn.