
Google’s Strategies For Dealing With Content Decay

In the latest episode of the Search Off The Record podcast, Google Search Relations team members John Mueller and Lizzi Sassman discussed how to deal with “content decay” on websites.

Every site accumulates outdated content over time, and Google has outlined strategies that go beyond deleting old pages.

While removing stale content is sometimes necessary, Google recommends taking an intentional, format-specific approach to tackling content decay.

Archiving vs. Transitional Guides

Google advises against immediately removing content that becomes obsolete, such as materials referencing discontinued products or services.

Removing content too soon could confuse readers and lead to a poor experience, Sassman explains:

“So, if I’m trying to find out like what happened, I almost need that first thing to know. Like, ‘What happened to you?’ And, otherwise, it feels almost like an error. Like, ‘Did I click a wrong link or they redirect to the wrong thing?’”

Sassman says you can avoid confusion by providing transitional “explainer” pages during deprecation periods.

A temporary transition guide tells readers that the content is outdated and steers them toward updated resources.

Sassman continues:

“That could be like an intermediary step where maybe you don’t do that forever, but you do it during the transition period where, for like six months, you have them go funnel them to the explanation, and then after that, all right, call it a day. Like enough people know about it. Enough time has passed. We can just redirect right to the thing and people aren’t as confused anymore.”
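The two-phase redirect Sassman describes can be sketched as a small rule. This is a minimal illustration, not something Google published: the function name `redirect_target`, the URLs, and the 180-day window are hypothetical, and the window only approximates the "like six months" figure from the quote.

```python
from datetime import date, timedelta

# Assumption: roughly six months, matching the example in the quote.
TRANSITION_PERIOD = timedelta(days=180)


def redirect_target(
    deprecated_on: date,
    today: date,
    explainer_url: str,
    replacement_url: str,
) -> str:
    """Return where a retired URL should redirect on a given day.

    During the transition period, visitors go to the explainer page.
    Once the period has passed, they go straight to the replacement.
    """
    if today < deprecated_on + TRANSITION_PERIOD:
        return explainer_url
    return replacement_url


# Hypothetical usage: a page retired on 1 January 2024.
early = redirect_target(date(2024, 1, 1), date(2024, 3, 1), "/what-changed", "/new-guide")
late = redirect_target(date(2024, 1, 1), date(2024, 7, 15), "/what-changed", "/new-guide")
```

In this sketch `early` resolves to the explainer and `late` to the replacement. In practice the result would feed whatever redirect mechanism your server or CMS provides.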

When To Update Vs. When To Write New Content

For reference guides and other content that provides authoritative overviews, Google suggests updating information to maintain accuracy and relevance.

However, for archival purposes, major updates may warrant creating a new piece instead of editing the original.

Sassman explains:

“I still want to retain the original piece of content as it was, in case we need to look back or refer to it, and to change it or rehabilitate it into a new thing would almost be worth republishing as a new blog post if we had that much additional things to say about it.”

Remove Potentially Harmful Content

Google recommends removing pages when the outdated information could be harmful.

Sassman says she arrived at this conclusion when deciding what to do with a documentation page for a deprecated structured data feature:

“I think something that we deleted recently was the “How to Structure Data” documentation page, which I thought we should just get rid of it… it almost felt like that’s going to be more confusing to leave it up for a period of time.

And actually it would be negative if people are still adding markup, thinking they’re going to get something. So what we ended up doing was just delete the page and redirect to the changelog entry so that, if people clicked “How To Structure Data” still, if there was a link somewhere, they could still find out what happened to that feature.”

Internal Auditing Processes

To keep your content current, Google advises implementing a system for auditing aging content and flagging it for review.

Sassman says her team uses automated reminders for pages that haven’t been reviewed within a set period:

“Oh, so we have a little robot to come and remind us, ‘Hey, you should come investigate this documentation page. It’s been x amount of time. Please come and look at it again to make sure that all of your links are still up to date, that it’s still fresh.’”
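A reminder like this could be built on a simple staleness check. The sketch below is an assumption about how such a check might look, not a description of Google's internal tool: the `Page` record, the `pages_due_for_review` function, and the one-year default are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List


@dataclass
class Page:
    """Hypothetical record of a page and when someone last reviewed it."""
    url: str
    last_reviewed: date


def pages_due_for_review(
    pages: List[Page],
    today: date,
    max_age: timedelta = timedelta(days=365),  # assumed review interval
) -> List[Page]:
    """Return pages not reviewed within max_age, oldest review first."""
    due = [p for p in pages if today - p.last_reviewed > max_age]
    return sorted(due, key=lambda p: p.last_reviewed)


# Hypothetical inventory; a real one might come from a CMS export.
inventory = [
    Page("/docs/setup", date(2022, 5, 1)),
    Page("/docs/faq", date(2024, 2, 10)),
    Page("/docs/pricing", date(2021, 11, 20)),
]
stale = pages_due_for_review(inventory, today=date(2024, 6, 1))
```

Here `stale` contains `/docs/pricing` followed by `/docs/setup`. Running a check like this on a schedule and sending the result to the page owners would approximate the reminder described in the quote.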

Context Is Key

Google’s tips for dealing with content decay center on giving readers context about outdated materials.

You want to prevent visitors from stumbling across obsolete pages with no indication that the information is out of date.

Additional Google-recommended tactics include:

- Prominent banners or notices clarifying a page’s dated nature

- Listing original publish dates

- Providing inline annotations explaining how older references or screenshots may be obsolete
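The first two tactics in the list above can be combined into a notice generated from a page's publish date. This is a rough sketch under stated assumptions: the `dated_notice` function, the two-year threshold, and the banner wording are hypothetical and should be adapted to your own site.

```python
from datetime import date
from typing import Optional


def dated_notice(
    published: date,
    today: date,
    stale_after_days: int = 730,  # assumed threshold of about two years
) -> Optional[str]:
    """Return banner text for an old page, or None if the page is recent."""
    age_days = (today - published).days
    if age_days < stale_after_days:
        return None
    return (
        f"This page was published on {published.isoformat()} "
        "and may contain outdated information."
    )


# Hypothetical usage for one old and one recent page.
old_banner = dated_notice(date(2020, 1, 15), today=date(2024, 1, 15))
new_banner = dated_notice(date(2023, 12, 1), today=date(2024, 1, 15))
```

A template could render the returned text at the top of the page whenever it is not `None`.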

How This Can Help You

Following Google’s recommendations for tackling content decay can benefit you in several ways:

- Improved user experience: By providing clear explanations, transition guides, and redirects, you can ensure that visitors don’t encounter confusing or broken pages.

- Maintained trust and credibility: Removing potentially harmful or inaccurate content and keeping your information up-to-date demonstrates your commitment to providing reliable and trustworthy resources.

- Better SEO: Regularly auditing and updating your pages may help your website’s search visibility, since readers and search engines are less likely to land on stale or misleading pages.

- Preserved history: By creating new content instead of editing older pieces, you can maintain a historical record of your website’s evolution.

- Streamlined content management: Implementing internal auditing processes makes it easier to identify and address outdated or problematic pages.

By proactively tackling content decay, you can keep your website a valuable resource, improve SEO, and maintain an organized content library.

Listen to the full episode of Google’s podcast below:

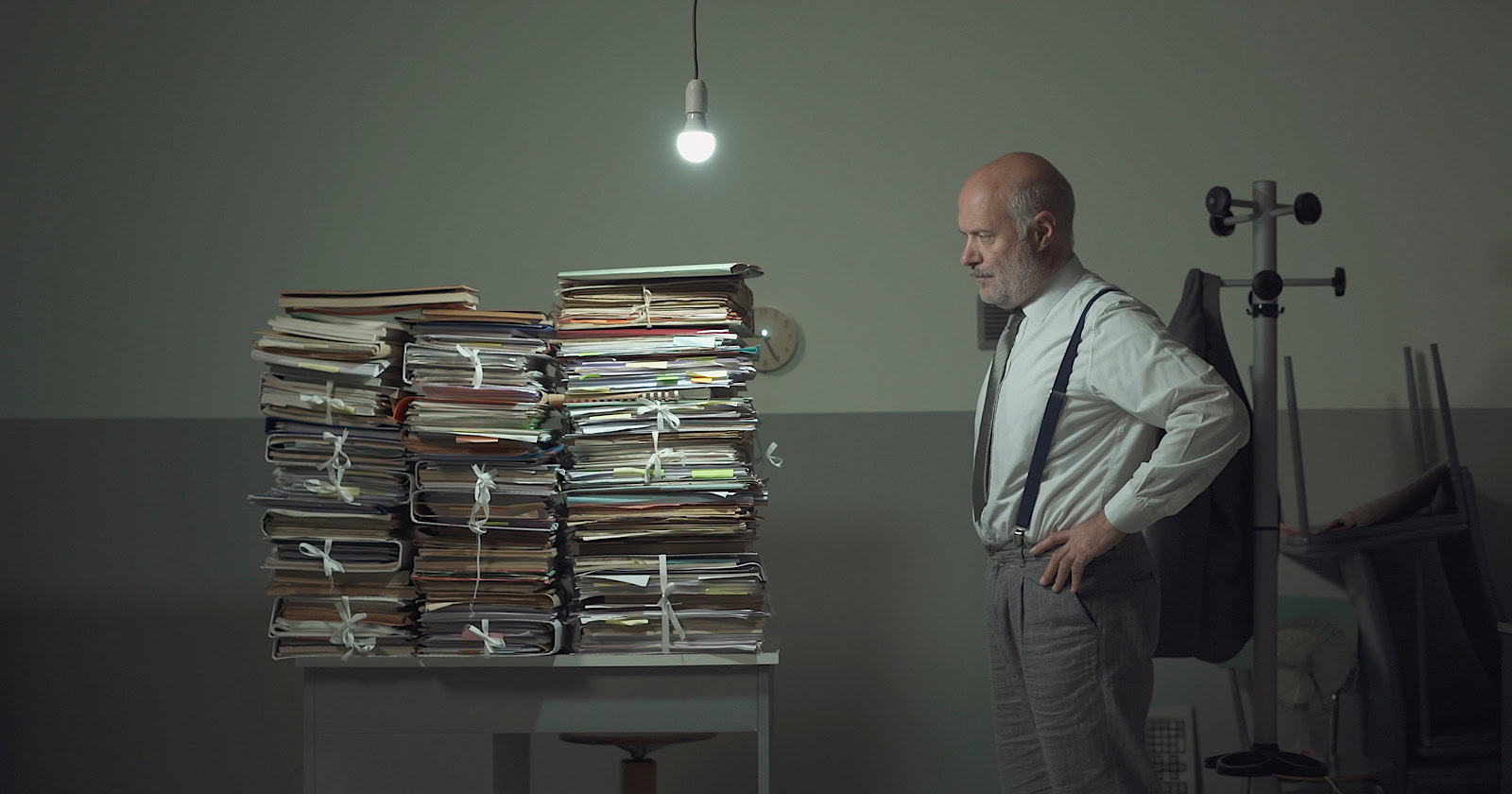

Featured Image: Stokkete/Shutterstock