Headless SEO Explained + 6 Best Practices

Put simply, a headless content management system (CMS) separates a website’s content from its design and code.

It functions differently from a traditional CMS, like WordPress, and therefore requires different considerations for SEO as well. While general SEO best practices and rules remain the same, how you go about implementing them will differ in a headless setup.

This is a beginner-friendly guide covering everything you need to know about headless CMS SEO, including:

- How headless SEO is different from regular SEO

- The benefits of using a headless CMS

- Headless SEO best practices

But first, let’s make sure we’re on the same page about the ins and outs of what a headless CMS is and how its differences may affect your SEO strategy.

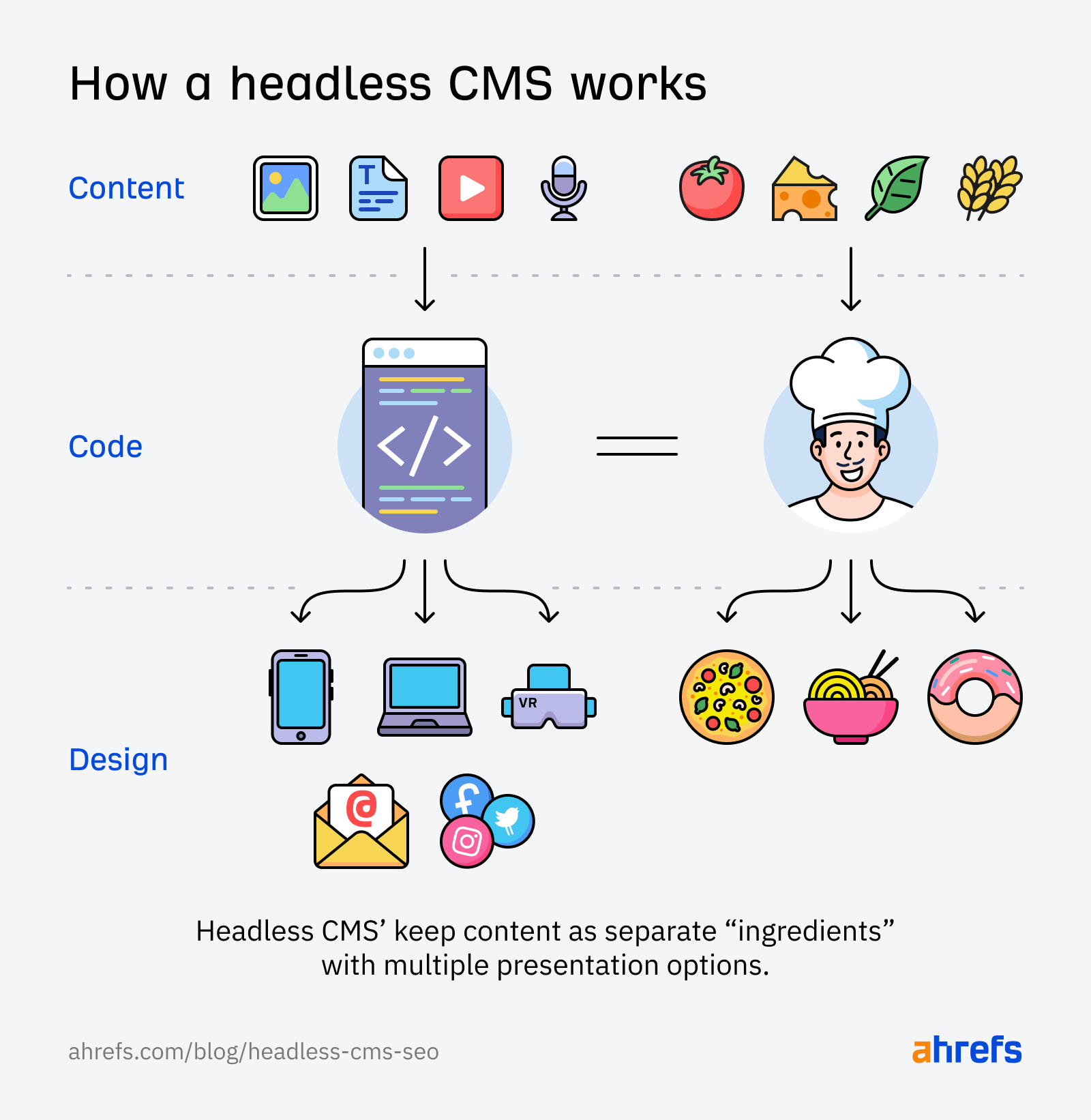

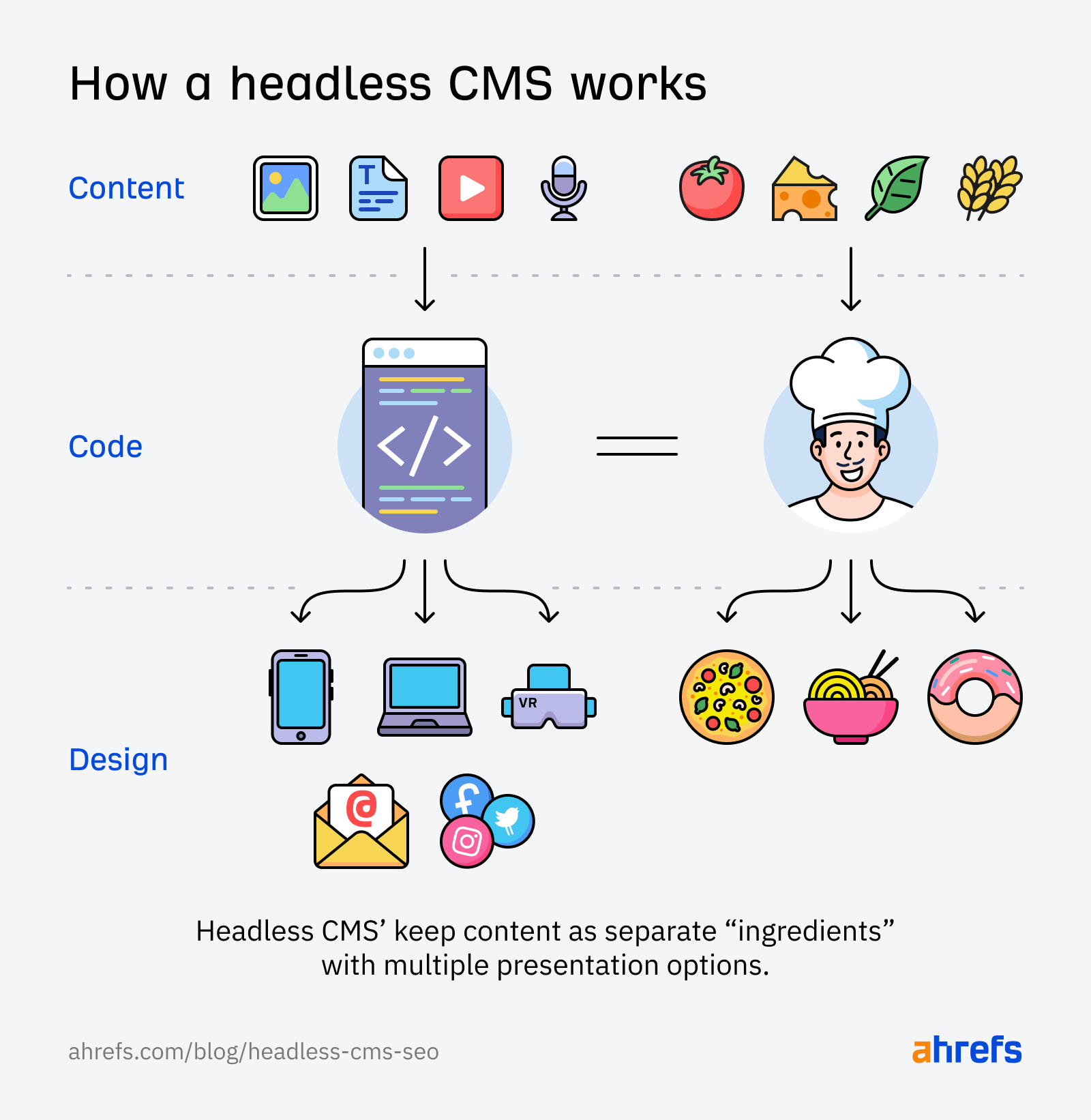

In a traditional CMS, all your content, code, and design live in one place. While you can design a responsive layout for some devices, the content cannot be displayed separately from the website itself.

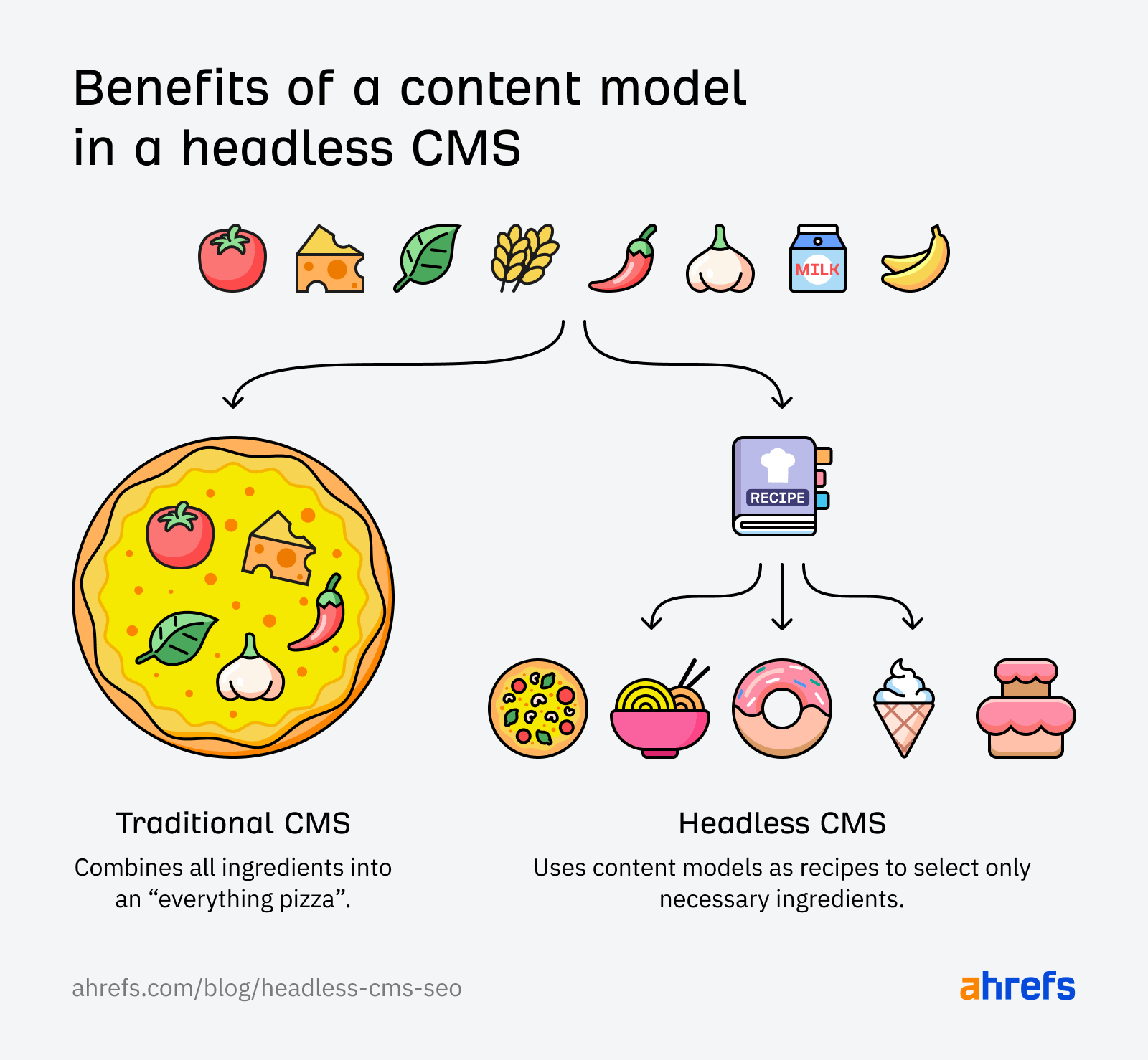

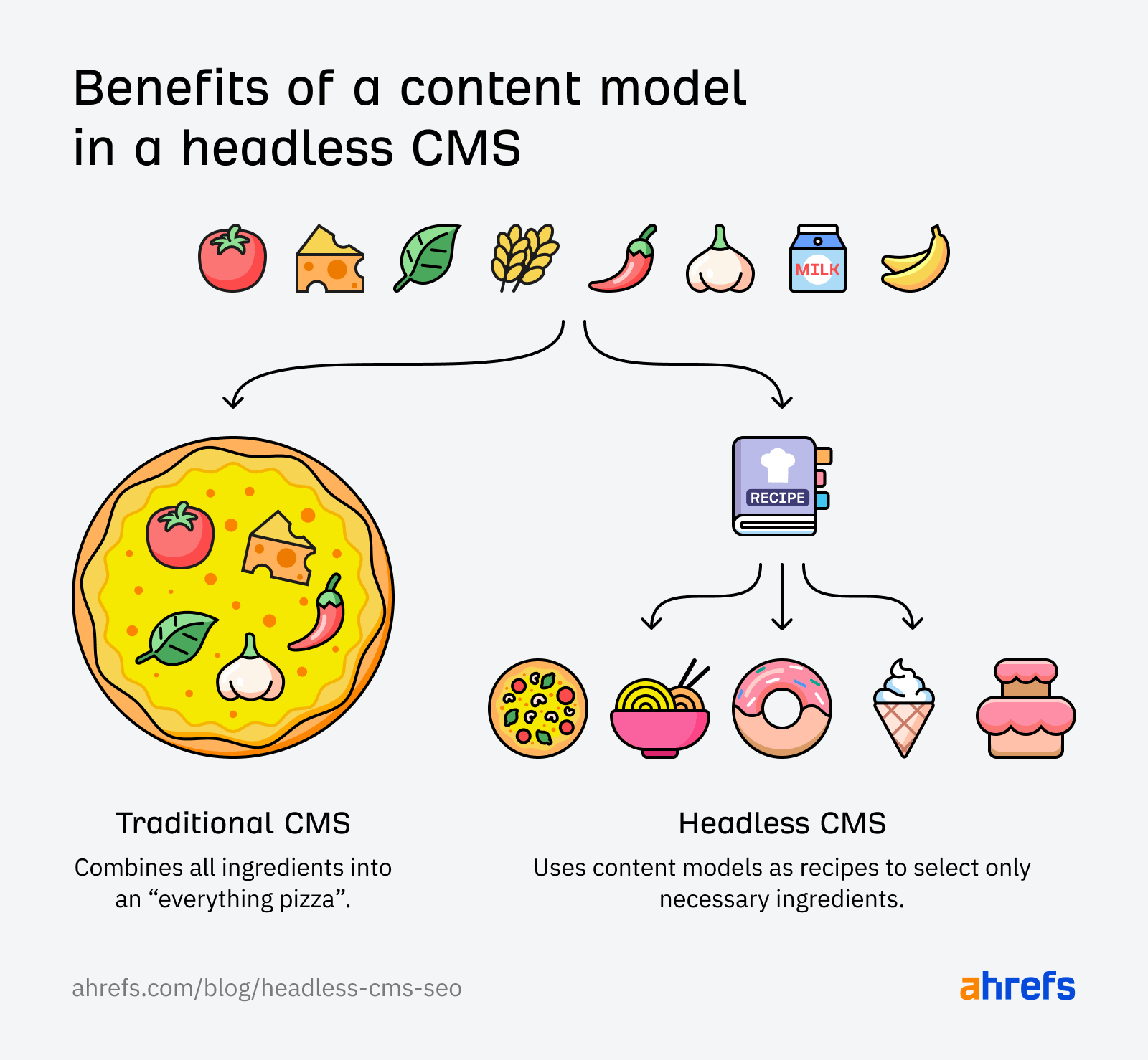

Like a pizza, you can’t easily separate all the ingredients. It’s an all-or-nothing deal.

A headless CMS, however, separates your content, code, and design so you can create content once and distribute it across different channels and devices easily.

For example, a headless eCommerce site can pull its pricing and inventory from two different systems and then push these to the website or other applications independently of any other content.

A headless CMS lets your content distribution become greater than the sum of its parts in a way that a traditional CMS can’t match.

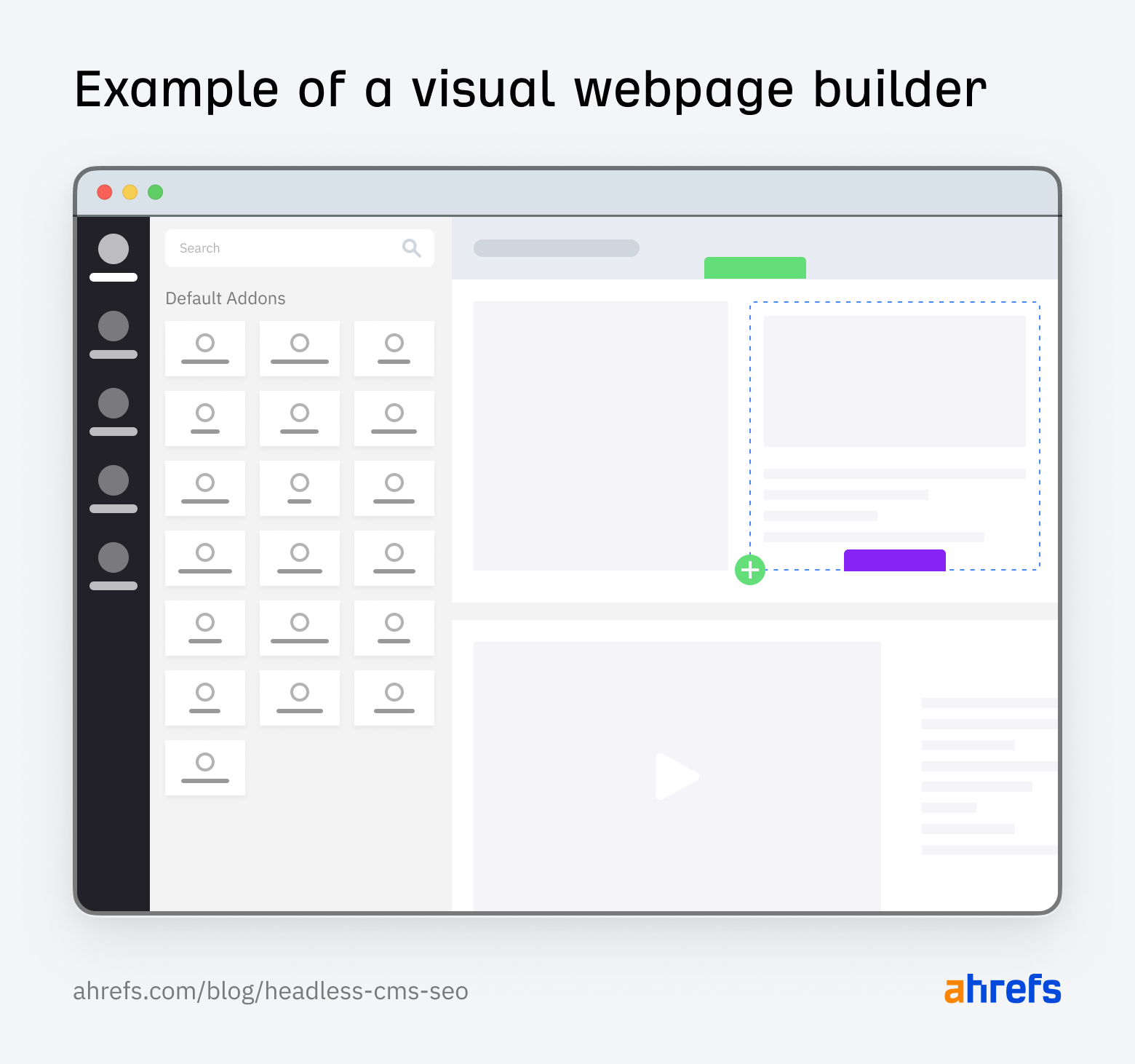

For example, when you build a webpage using a traditional CMS, you’ll often use a visual drag-and-drop editor that looks a bit like this:

How you enter content and images here will closely represent what your website visitors see.

Now, let’s say you want to distribute the content you’ve added here through a different device or channel, like a VR headset or an electronic billboard.

With a traditional website CMS, you simply can’t do that. You would need to recreate your content and adapt it to the platform you’re delivering it on.

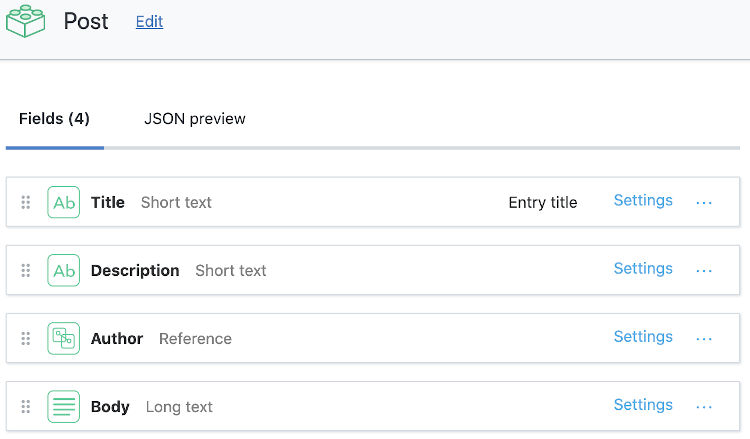

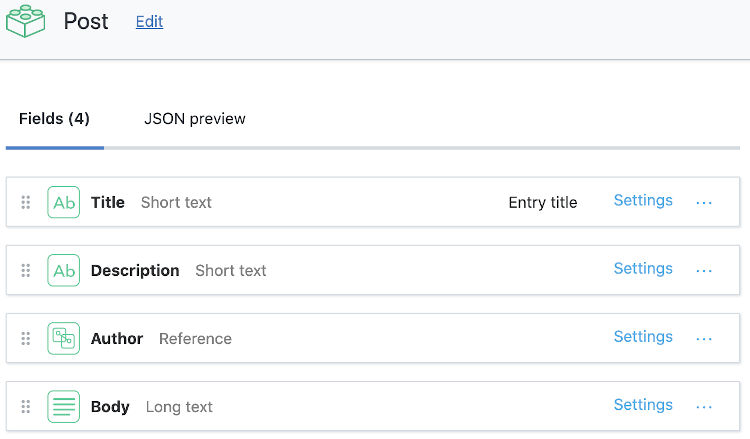

But, with a headless CMS, you don’t have such a limitation. That’s because how you organize and arrange your content within the CMS is completely different. It looks a bit like this:

Instead of adding content and images based on how you want it to look, you simply enter the content as a collection of separate “ingredients.” These ingredients can then be dynamically distributed and designed to match the needs of each different channel and device.
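To make the “ingredients” idea a bit more concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `entry` fields, the example URL, and the two render functions are made up for illustration and don’t come from any particular headless CMS. The point is only that the content is stored once, without layout, and each channel decides how to present it.

```python
from html import escape

# Hypothetical content entry: plain fields with no layout information.
entry = {
    "title": "Sunny two-bedroom apartment",
    "body": "Close to the park and public transport.",
    "image_url": "https://example.com/apartment.jpg",
}


def render_web(entry: dict) -> str:
    """Render the entry as an HTML fragment for a website."""
    title = escape(entry["title"])
    return (
        f"<article><h1>{title}</h1>"
        f'<img src="{escape(entry["image_url"])}" alt="{title}">'
        f"<p>{escape(entry['body'])}</p></article>"
    )


def render_billboard(entry: dict) -> str:
    """Render the same entry as one line of text for an electronic billboard."""
    return entry["title"].upper()


print(render_web(entry))
print(render_billboard(entry))
```

Both functions read the same entry, so updating the title in one place changes what the website and the billboard show.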

Headless SEO is the practice of optimizing your headless CMS so that it meets search engine optimization best practices and gives your content the best chance of ranking for relevant keywords.

Since content can be distributed across other channels, beyond the website, headless SEO offers a more flexible approach towards optimizing content no matter where it’s viewed.

If the tagline for a headless CMS is “create content once, distribute it everywhere,” then the tagline for headless SEO would be “optimize everything, everywhere, all at once.”

There are a few key differences between doing SEO for a headless CMS vs traditional SEO.

1. You’ll have greater control and flexibility

Have you ever wanted to customize some element of your technical SEO setup but found that a CMS wouldn’t let you? Yeah, it’s a common gripe and happens more frequently than SEO pros would like.

With headless SEO, you get to custom-design your CMS to be exactly how you want it to be.

- Want a specific SEO-friendly URL structure? Easy peasy!

- Want a custom robots.txt or sitemap file? Coming right up!

- Want specific schema templates for different types of content? You got it!

It is (quite literally) a case of “ask and you shall receive.”

Any optimization that you can dream of, a headless CMS can achieve, but only if you ask your developer to create it and guide them on how you want it done.

The caveat with this is that you’ll be 100% responsible for everything to do with your SEO setup. You’ll need to think about things you may not normally have to worry about when using a traditional CMS, like:

- Adding validation rules to prevent mistakes

- Adding customized logic to canonicals

- Architecting faceted navigation systems

- Defining pagination preferences

- And more
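As a rough idea of what the first two items could look like in practice, here is a small Python sketch. The field names (`meta_title`, `meta_description`, `canonical_override`, `slug`) and the length limits are assumptions chosen for illustration, not rules from any search engine or CMS. Your own validation rules and canonical logic will depend on your setup.

```python
# Hypothetical limits; pick values that suit your own guidelines.
MAX_TITLE_LENGTH = 60
MAX_DESCRIPTION_LENGTH = 160


def validate_seo_fields(entry: dict) -> list[str]:
    """Return a list of problems found in an entry's SEO fields."""
    errors = []
    title = entry.get("meta_title", "").strip()
    description = entry.get("meta_description", "").strip()
    if not title:
        errors.append("meta_title is required")
    elif len(title) > MAX_TITLE_LENGTH:
        errors.append(f"meta_title is longer than {MAX_TITLE_LENGTH} characters")
    if len(description) > MAX_DESCRIPTION_LENGTH:
        errors.append(
            f"meta_description is longer than {MAX_DESCRIPTION_LENGTH} characters"
        )
    return errors


def canonical_url(entry: dict, base_url: str) -> str:
    """Use an editor-supplied canonical if present, else build one from the slug."""
    override = entry.get("canonical_override")
    if override:
        return override
    return f"{base_url.rstrip('/')}/{entry['slug'].strip('/')}"
```

A check like `validate_seo_fields` could run before an entry is published, so mistakes are caught in the CMS rather than on the live site.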

Tip

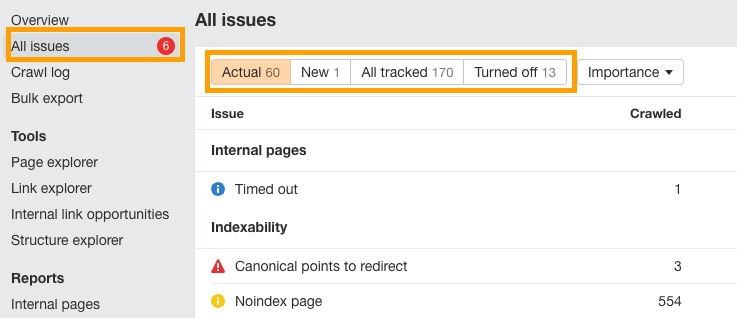

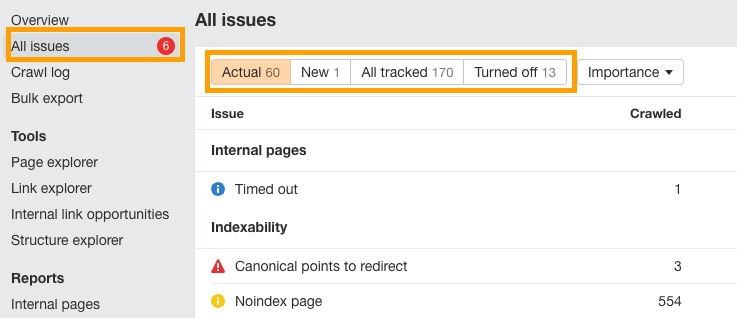

You can use Ahrefs’ Site Audit tool to check for over 170 technical SEO issues and educate your devs about them, so they don’t accidentally make mistakes with your headless SEO implementation.

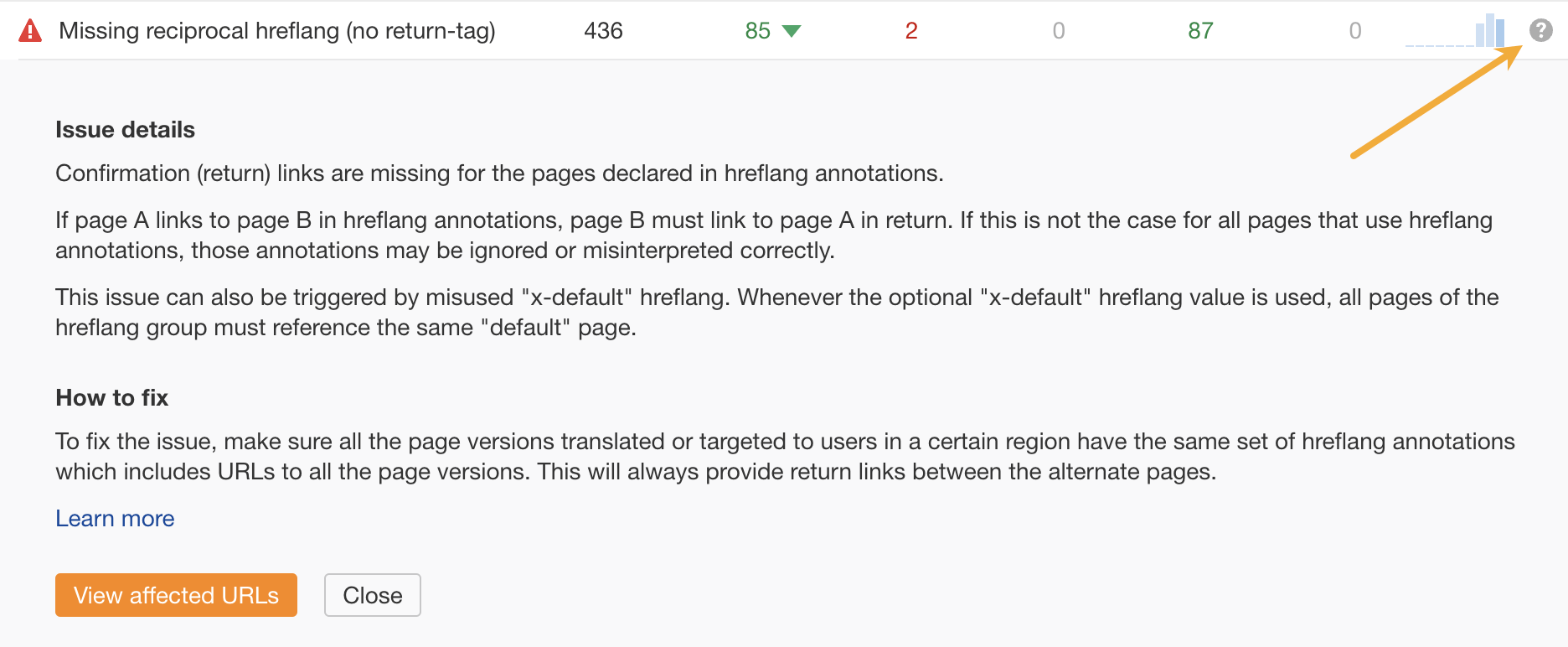

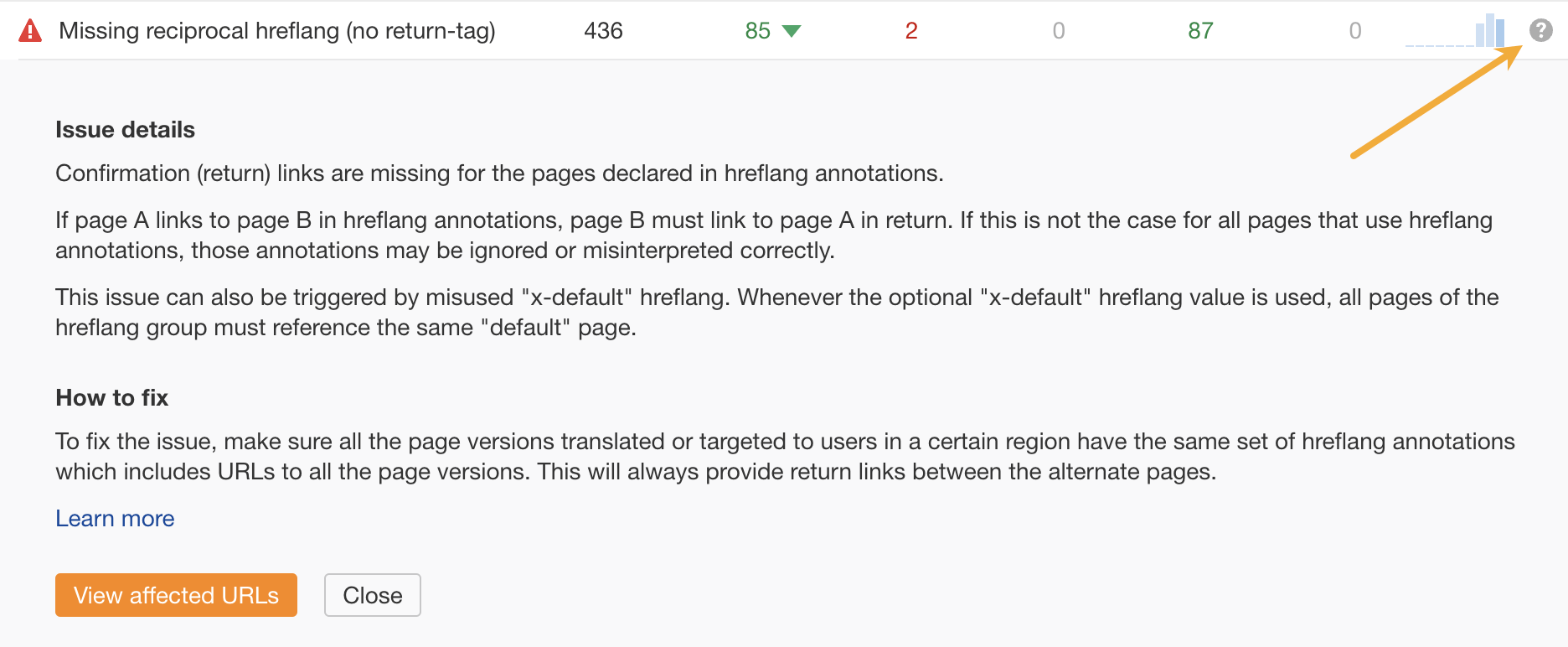

Make sure to schedule automated audits so you can monitor these issues over time. If you’re unsure how to direct your developers to fix any of them, click the “?” next to each issue to see a description and some advice.

2. You’ll need to optimize content, code & design separately

Perhaps the most difficult thing to adapt to with headless SEO is that you’ll need to optimize content, code, and design independently of each other.

- Content Optimization: Mainly occurs through a process called “content modeling.” Content models break your content down into various file formats and blocks which can be optimized individually. More on this in a moment.

- Technical Optimization: Technical SEO is implemented separately from on-page SEO. Writers can upload content without bogging down page speed or other performance metrics. And developers can deploy updates without halting publishing activities (unlike with some traditional CMSs).

- Design Optimization: Instead of trying to squeeze technical and SEO requirements into your design process, you can focus 100% on designing optimal user experiences for each device and channel that your content will appear on.

There’s a lot more planning and architecting involved when it comes to headless SEO and you’ll need to work closely with developers to make sure your optimizations are implemented as you want them to be.

3. You need to create and optimize content models instead of pages

If you’re used to using a CMS like WordPress, then you’re also used to optimizing complete pages and posts for the most part.

But with a headless CMS, you’ll need to build and optimize content models instead of pages. What’s a content model, you ask?

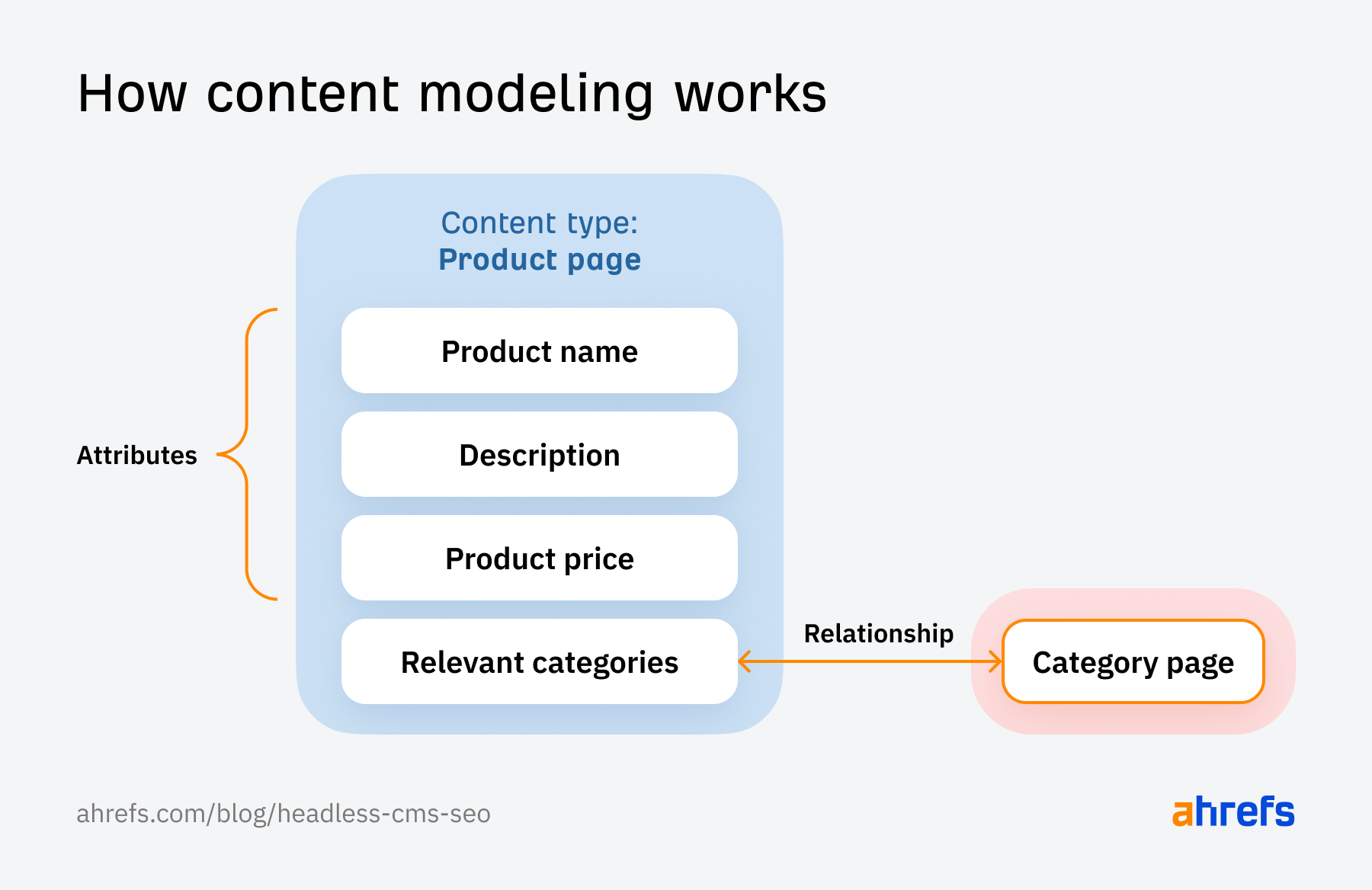

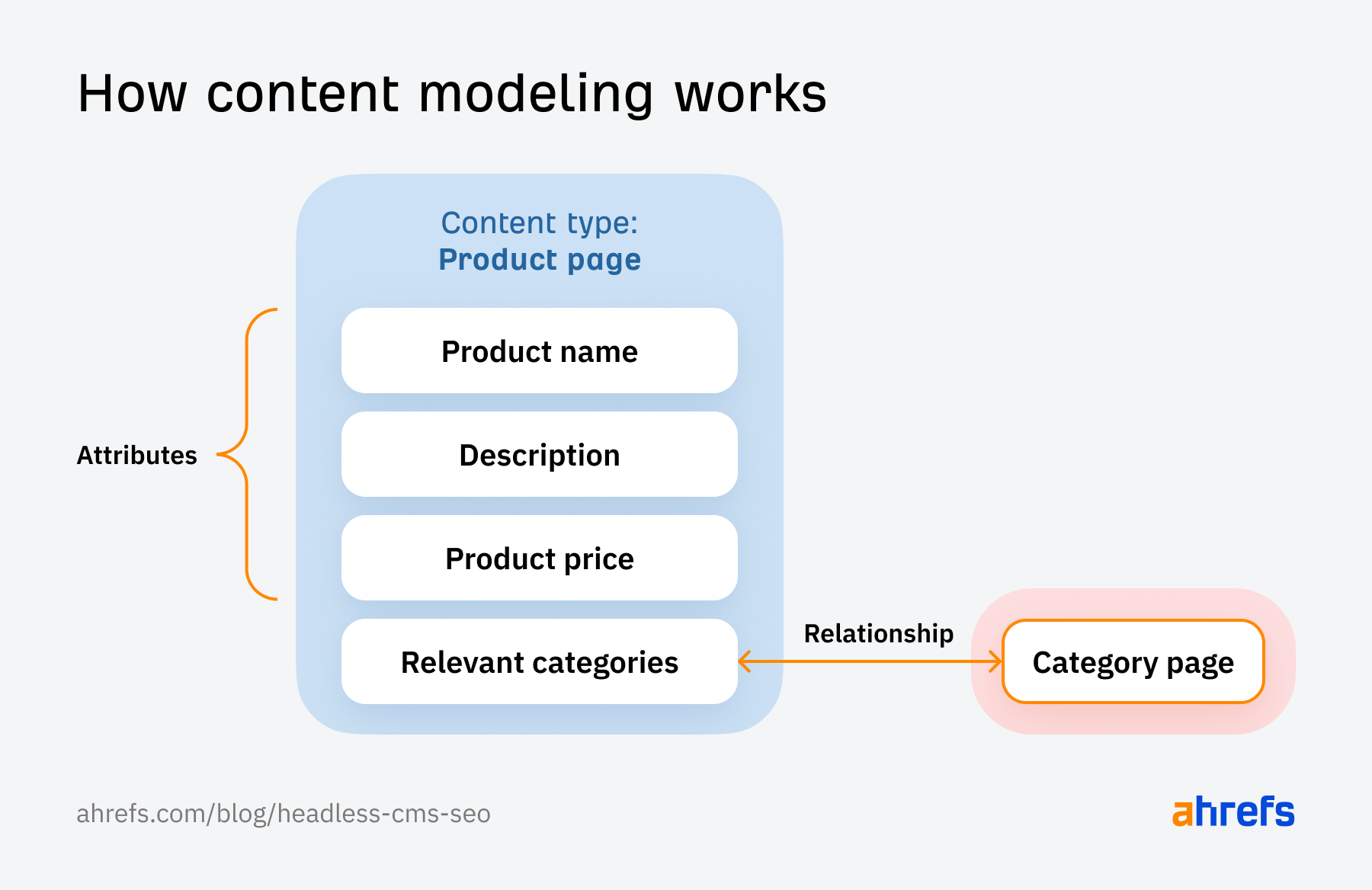

Content modeling structures and organizes your content in a way that APIs can then distribute to any kind of interface. Not only can you define the attributes each type of content will feature, but you can also create relationships between different types of content.

Think of a content model as the recipe needed to instruct your code on where it should send various types of content.

Like any good recipe, a content model will gather the correct ingredients (your content blocks) and organize them in a way that will deliver a specific outcome (a complete piece of content adapted to the interface it’s displayed on).

Without a recipe, you end up with an “everything” pizza that combines all ingredients, always—even if it doesn’t make sense to include them. For instance, on WordPress, the mobile, tablet, and desktop views of a website are all different slices of the “everything” pizza, but on a headless CMS, they’re entirely different meals.

The great thing about content modeling for SEO is that you can create a field or attribute for absolutely anything you want.

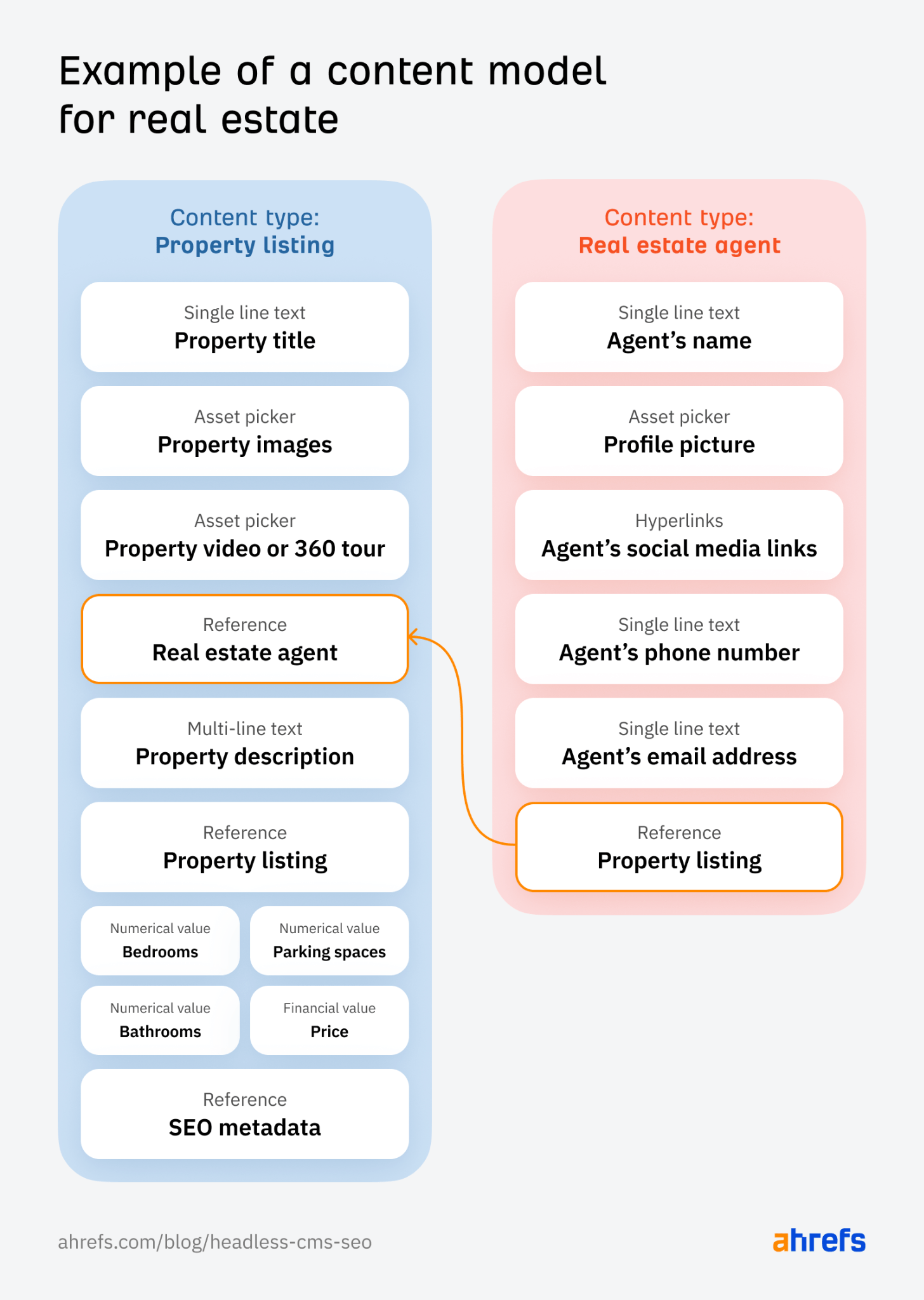

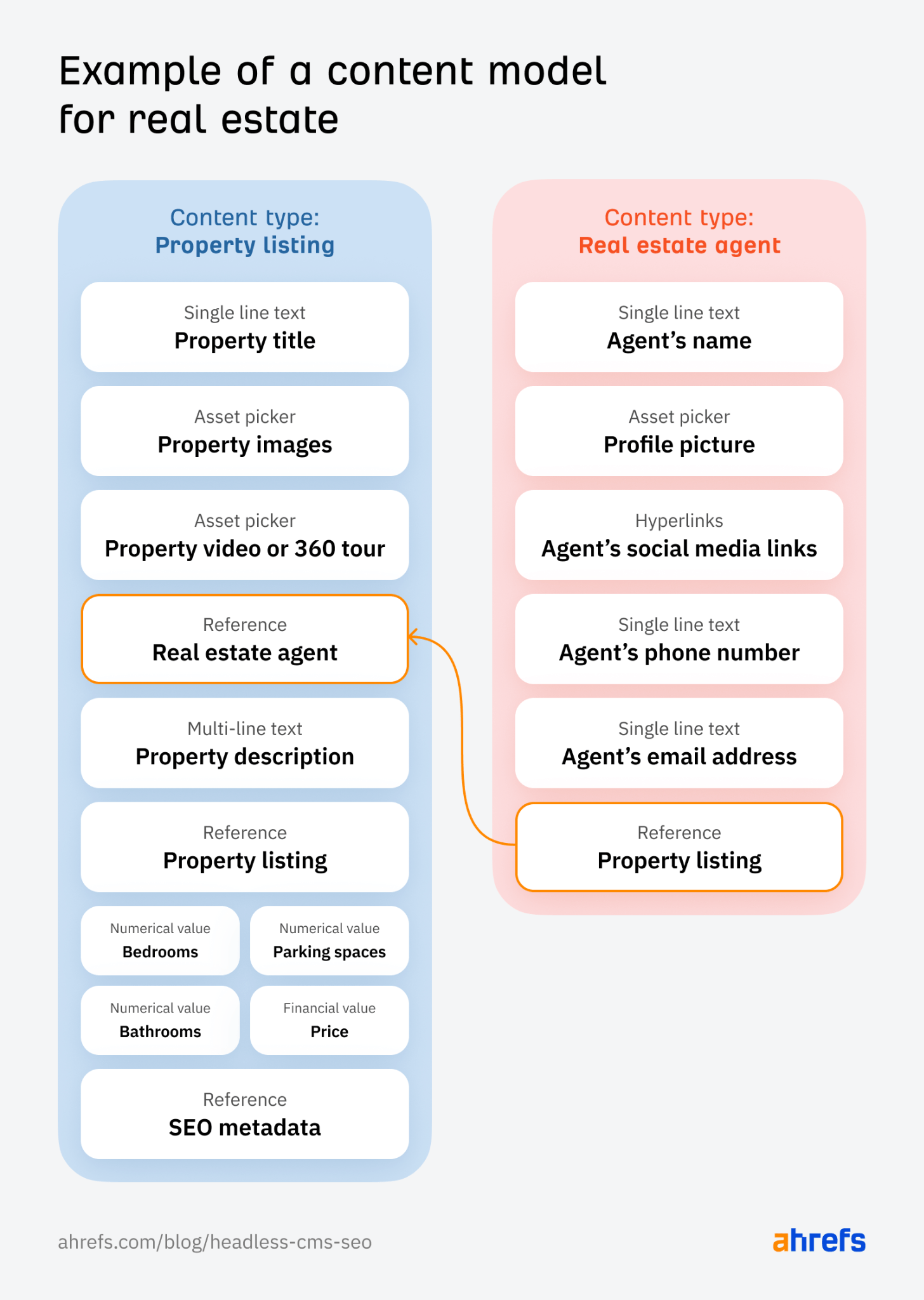

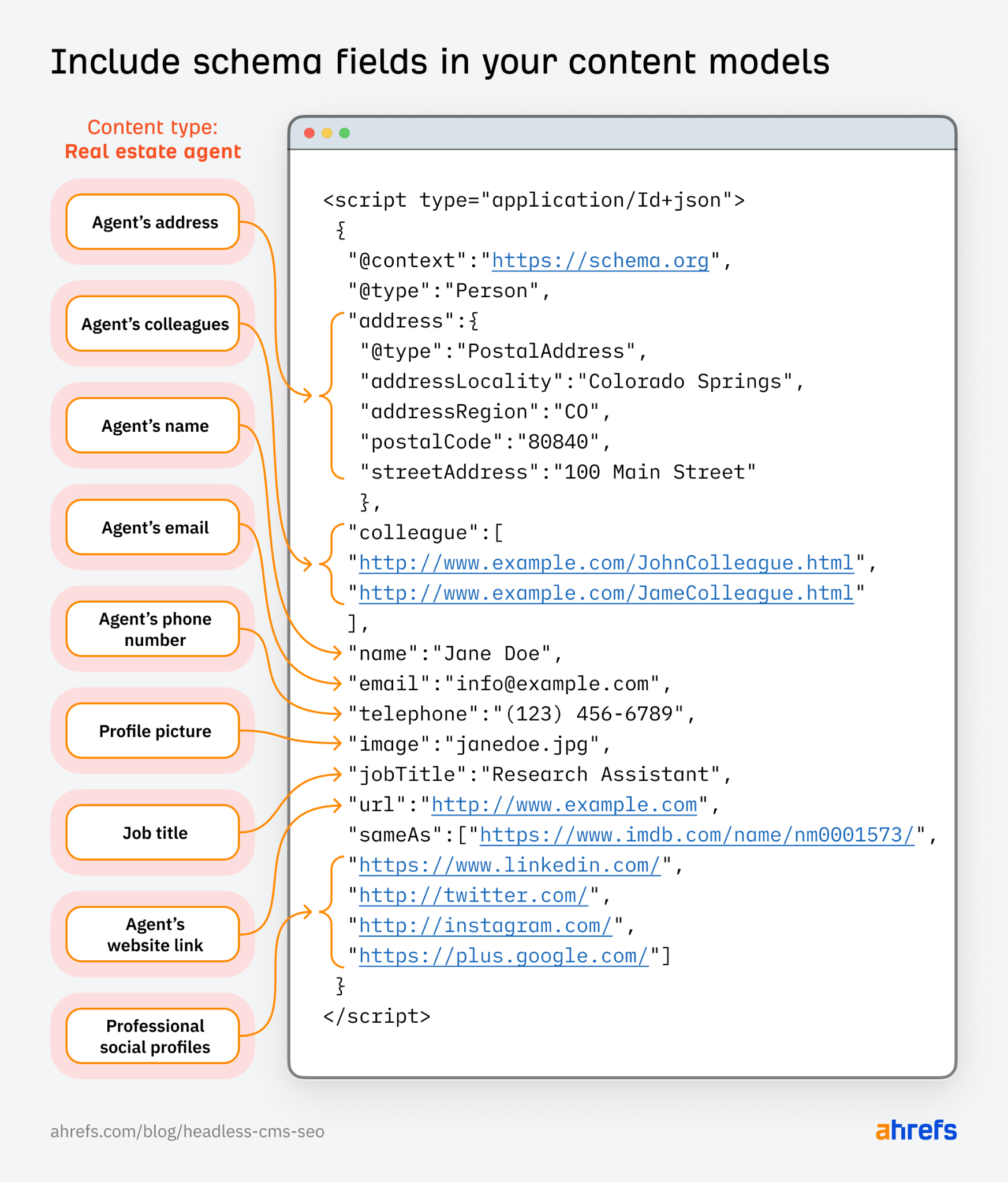

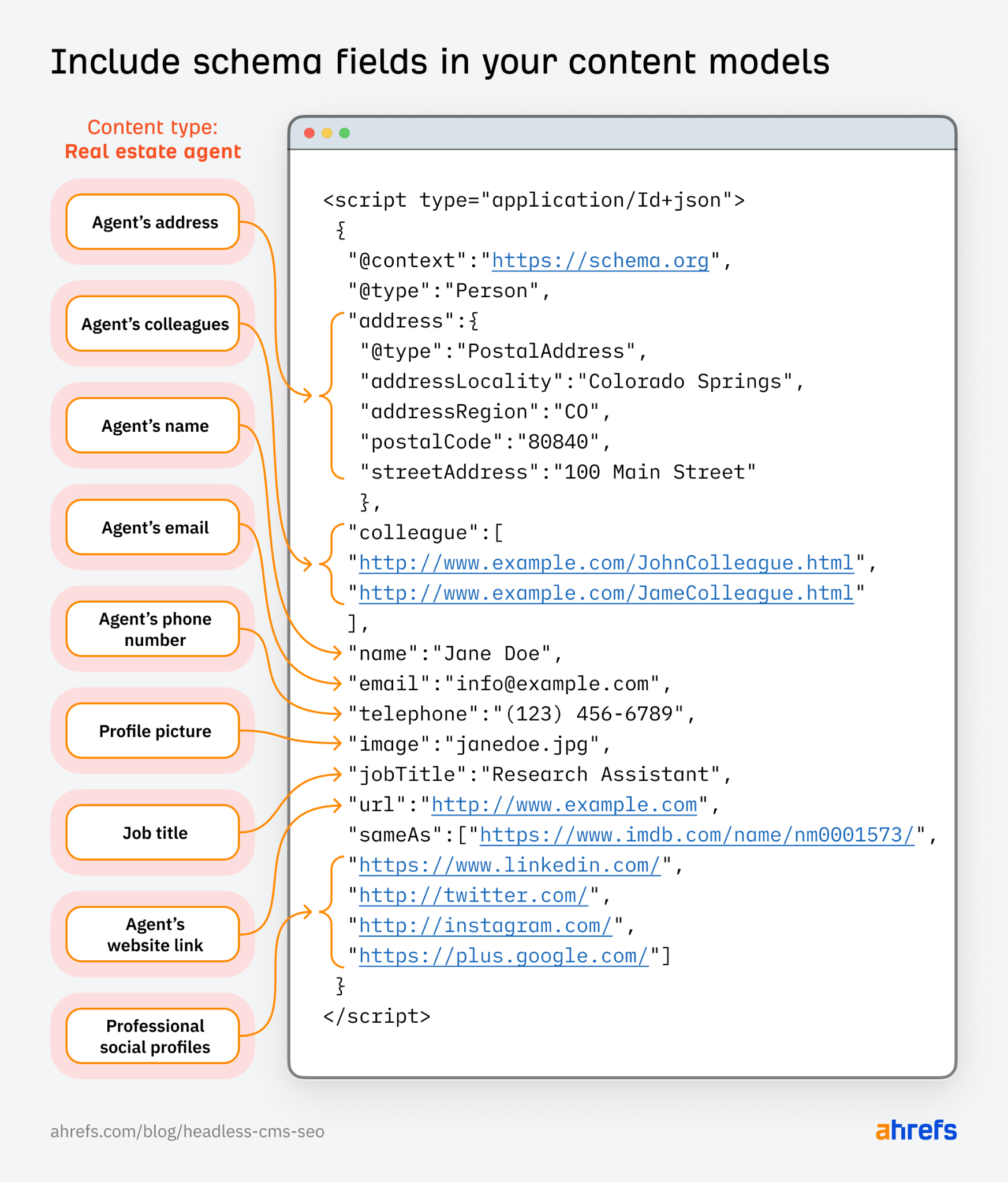

For example, if you have a real estate business, then you’ll need to include property listings and information about your agents in your content model. And, you’ll need to consider all the attributes you’ll need for each of these types of content.

Here’s an example of what that may look like:

Things like the “property title” or the “agent’s name” are the attributes that fit each type of content best. You’ll need to think about all the attributes needed for every content type you add to your headless CMS.

Now, just because you have two different types of content, it doesn’t mean they can’t show on the same page. They can indeed.

Notice how the property listing includes a reference to the agent managing that property? This reference connects the two different content types to each other and allows every property listing to display information about the relevant agent.
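If it helps to see this as data, here is a minimal Python sketch of two content types joined by a reference. The class names, fields, and sample values are all hypothetical. A real headless CMS would define these in its own schema format, but the relationship works the same way: the listing stores the agent’s ID, not a copy of the agent’s details.

```python
from dataclasses import dataclass


@dataclass
class Agent:
    id: str
    name: str
    phone: str


@dataclass
class PropertyListing:
    id: str
    title: str
    bedrooms: int
    agent_id: str  # a reference to an Agent, not a copy of the agent's details


# Hypothetical sample data.
agents = {"agent-1": Agent(id="agent-1", name="Jane Doe", phone="555-0100")}
listing = PropertyListing(
    id="prop-1", title="Lakefront house", bedrooms=3, agent_id="agent-1"
)


def listing_with_agent(listing: PropertyListing, agents: dict) -> dict:
    """Follow the reference so a page can show listing and agent together."""
    agent = agents[listing.agent_id]
    return {
        "title": listing.title,
        "bedrooms": listing.bedrooms,
        "agent_name": agent.name,
        "agent_phone": agent.phone,
    }


print(listing_with_agent(listing, agents))
```

Because the agent lives in one place, updating their phone number once updates every listing that references them.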

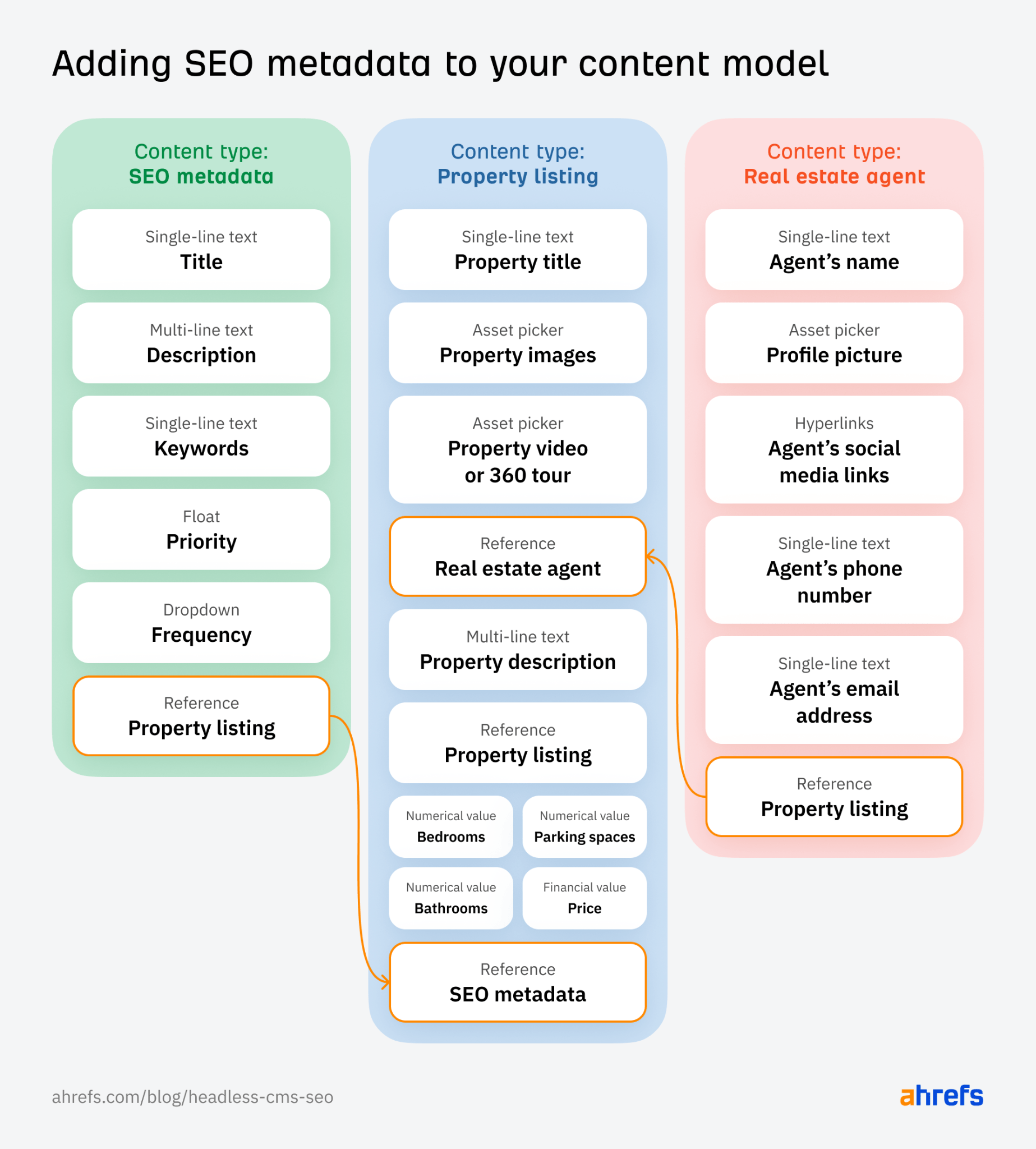

When it comes to headless SEO, you’ll need to include a similar sort of reference for content types that require SEO metadata or particular types of schema markup, like so:

Doing this allows your website to include all the relevant on-page optimizations you need. But then you can also choose not to load this metadata for platforms and channels where SEO is not a priority, like in a mobile app.
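Here is one way that could be sketched in Python. The `seo_ref` field, the channel names, and the sample entries are assumptions made up for this example. The idea is simply that the SEO metadata is a separate, referenced entry, and the delivery code decides per channel whether to include it.

```python
# Hypothetical SEO metadata entries, stored separately from the content.
seo_entries = {
    "seo-1": {
        "meta_title": "Lakefront house for sale",
        "meta_description": "Three-bedroom lakefront house with a private dock.",
    }
}

# Hypothetical content entry that points at its SEO metadata by reference.
entry = {
    "title": "Lakefront house",
    "body": "Three bedrooms, two bathrooms, private dock.",
    "seo_ref": "seo-1",
}


def build_payload(entry: dict, seo_entries: dict, channel: str) -> dict:
    """Build what a channel receives; only the website gets SEO metadata."""
    payload = {"title": entry["title"], "body": entry["body"]}
    if channel == "web":
        payload["seo"] = seo_entries[entry["seo_ref"]]
    return payload


print(build_payload(entry, seo_entries, "web"))
print(build_payload(entry, seo_entries, "mobile_app"))
```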

Here are the biggest benefits of using a headless CMS:

- Publish content across channels more easily, reaching more people in the process

- Lighter and more versatile website

- Remove bottlenecks between dev and content teams

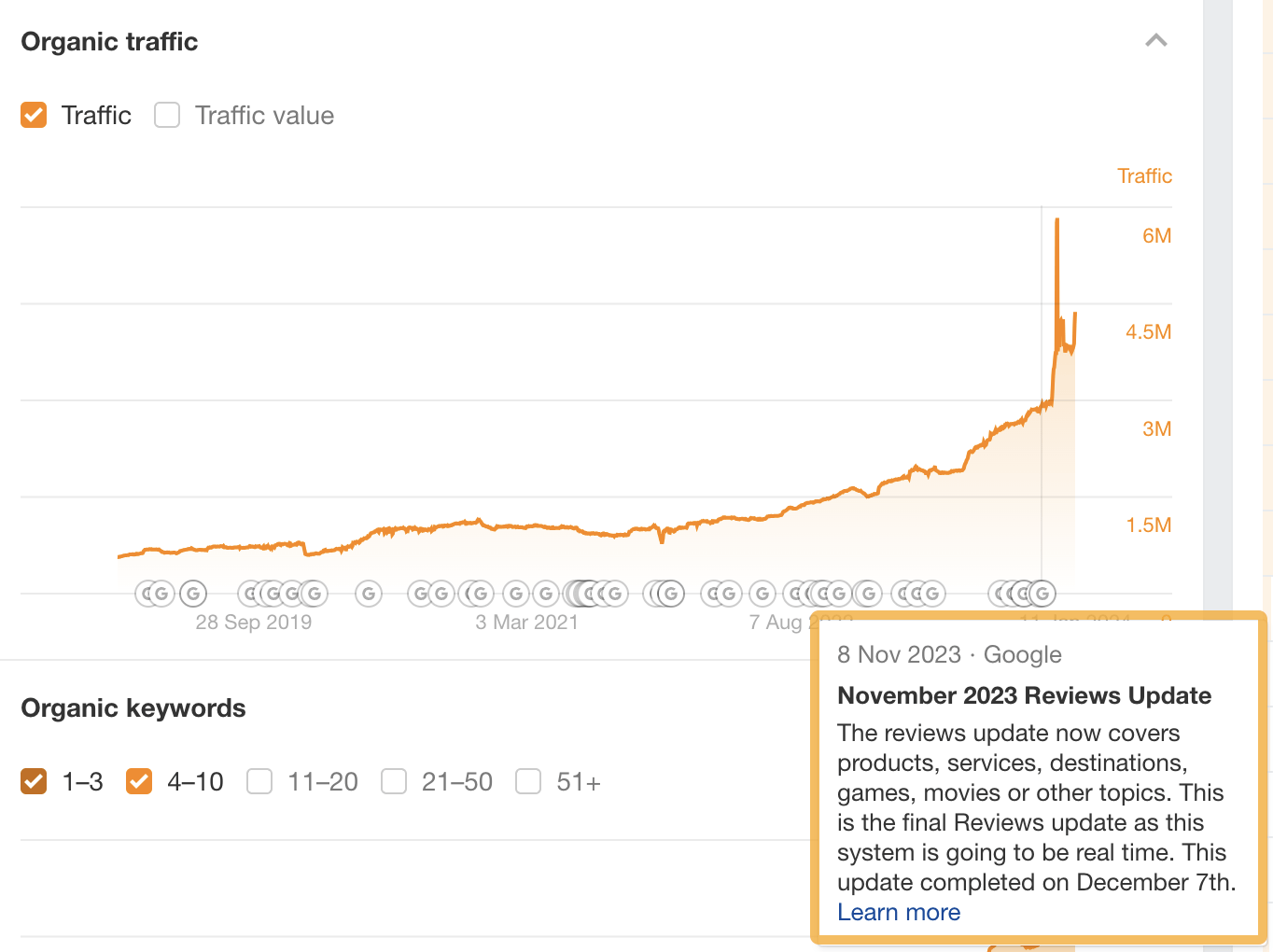

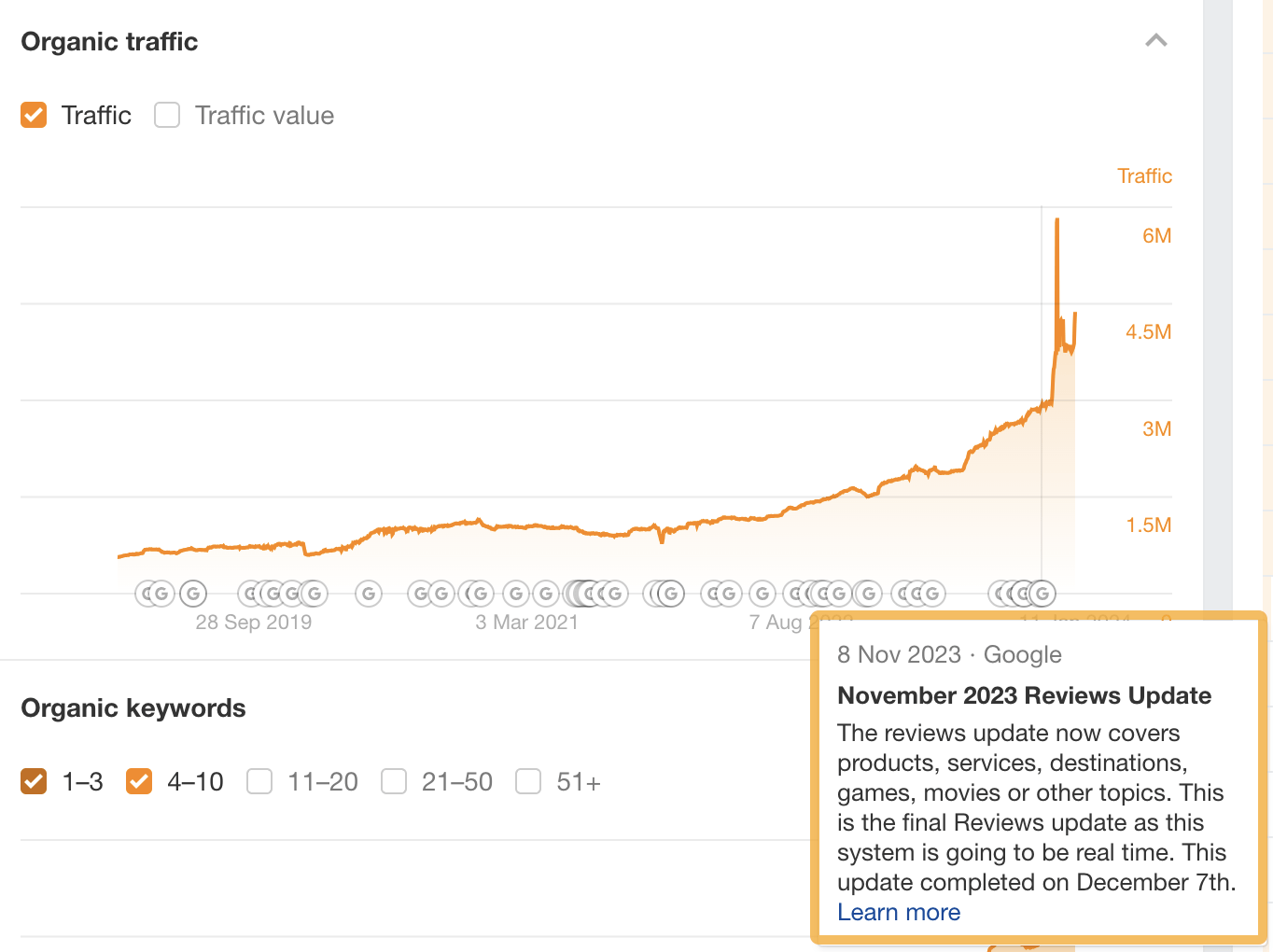

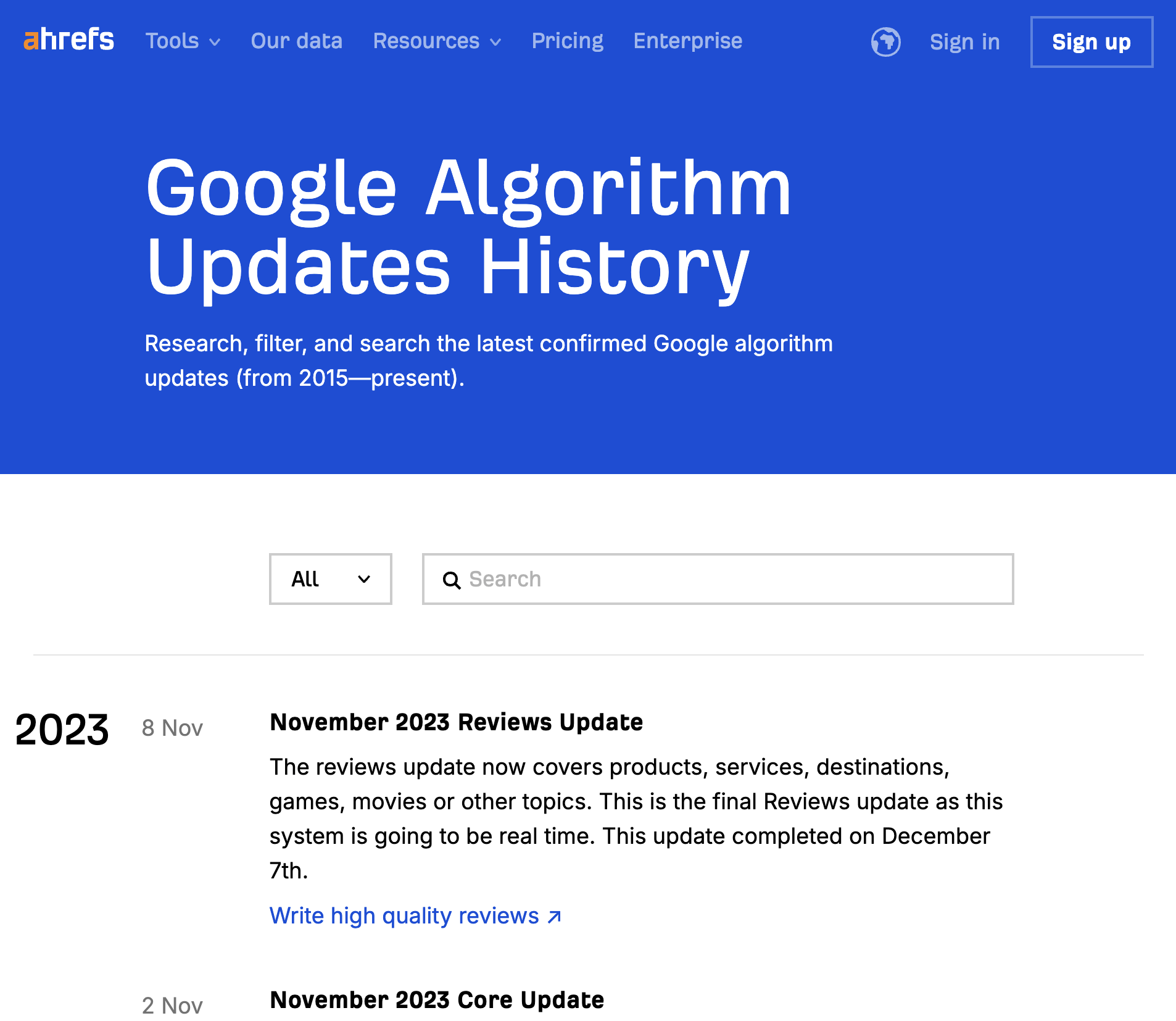

For example, at Ahrefs, we use a headless framework to show content about Google algorithm updates in two places: the organic traffic chart in Site Explorer…

… and our page listing Google algorithm updates (that anyone can view):

Each time a new Google update is released, we simply add information about it in our headless CMS and it gets pushed to both locations. Even better, as there’s no need to involve developers, our content team can move fast and keep everything updated with ease.

But we’re not the only ones benefitting from a headless approach. My buddy Dion Lovrecich at Extra Strength (a marketing agency here in Australia) recently implemented it for a client and had this to say:

Deploying headless SEO took our client’s content process from an internal fiasco to big productivity wins.

Until we moved to headless, the engineering team would halt any front-end work when content was being updated. This is no longer required.

We’re also able to more easily adapt and update content (very important in the fast-moving legal landscape), segment SEO requirements for different types of content formats (like blogs, videos, and images), and publish relevant content faster—all thanks to headless approach.

Despite the many benefits that headless systems offer, there are also some disadvantages to consider.

For example, these systems are more complex than traditional CMS solutions. They require a lot of resources to build and maintain. It’s also difficult for non-technical teams to get started with headless due to the integrative nature and the various APIs that may need to be connected.

Even with developer support, you’ll need a greater technical skill set so you can brief developers correctly and minimize mistakes in your headless SEO setup.

These limitations make it challenging for smaller businesses and non-technical teams to successfully work in a headless environment. That being said, there are many emerging solutions that make headless sites easier to build and optimize and it’s likely we’ll see smaller organizations begin to adopt such technologies in the future.

Headless SEO best practices typically follow the same rules as any SEO strategy. You should still create valuable content that meets search intent, deliver optimal user experiences, and ensure search engines are crawling a lean, optimized website.

However, headless SEO also requires a greater degree of competency and knowledge when it comes to some technical and on-page SEO implementations, which we’ve outlined for you below.

1. Brief your devs on technical SEO best practices

This may sound boring but you really can’t succeed with headless SEO unless your devs understand what they need to implement. And that comes down to how well you communicate with them.

For example, instead of instructing them to simply “add a sitemap,” get specific. “I need an XML sitemap that updates dynamically on a daily basis and only includes indexable, canonical URLs with a 200 status code.”
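To show how specific that brief really is, here is a minimal Python sketch of the filtering it describes. The `pages` list and its fields (`status`, `indexable`, `canonical`) are hypothetical stand-ins for whatever your CMS or crawler exposes, and the daily regeneration would be handled by a scheduled job that isn’t shown here.

```python
import xml.etree.ElementTree as ET

# Hypothetical page records; a real setup would pull these from the CMS.
pages = [
    {"url": "https://example.com/", "status": 200, "indexable": True,
     "canonical": "https://example.com/"},
    {"url": "https://example.com/old", "status": 301, "indexable": True,
     "canonical": "https://example.com/old"},
    {"url": "https://example.com/draft", "status": 200, "indexable": False,
     "canonical": "https://example.com/draft"},
    {"url": "https://example.com/listings?sort=price", "status": 200,
     "indexable": True, "canonical": "https://example.com/listings"},
]


def sitemap_urls(pages: list) -> list:
    """Keep only indexable, self-canonical URLs that return a 200 status."""
    return [
        page["url"]
        for page in pages
        if page["status"] == 200
        and page["indexable"]
        and page["canonical"] == page["url"]
    ]


def build_sitemap(pages: list) -> str:
    """Serialize the filtered URLs as an XML sitemap string."""
    urlset = ET.Element(
        "urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9"
    )
    for url in sitemap_urls(pages):
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


print(build_sitemap(pages))
```

Each condition in `sitemap_urls` maps to one phrase in the brief, which is exactly why spelling those phrases out for your devs matters.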

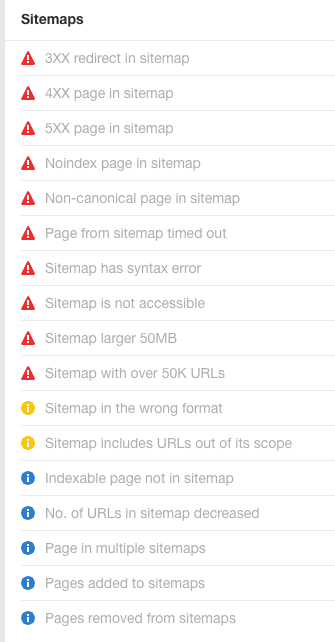

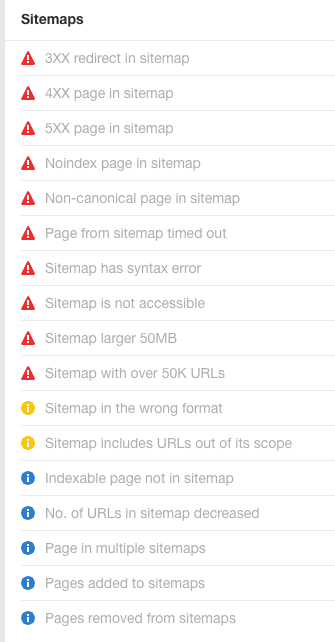

Then, you can leverage Ahrefs’ Site Audit to keep track of how developers are implementing your requests. Set up a regular audit schedule so you can keep tabs on critical errors across a list of over 170 technical issues.

For example, here are all the ones for sitemaps that you can use to audit the implementation of the above instructions:

2. Use keyword insights to create your content models

The best place to start content modeling is by doing keyword research. Not only can you uncover the dominant search intents your content will need to meet, you can also get really cool insights on attributes to include in your content models.

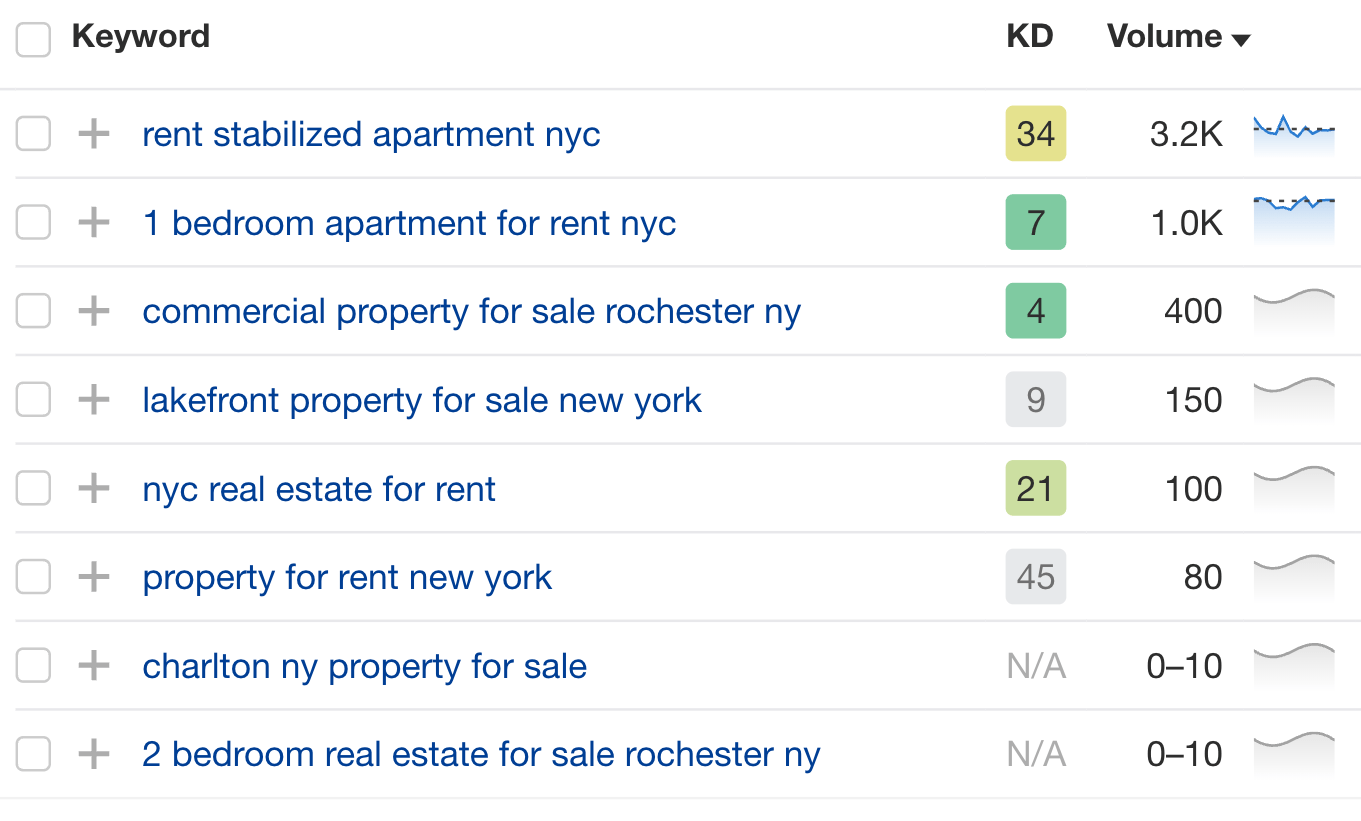

For example, let’s say you’re a real estate agent in New York. Using Ahrefs’ Keywords Explorer, you see that there are a number of specific, medium- to long-tail keywords people search for:

From these, we can:

- Infer search intent, like if people want to buy or rent.

- Discover attributes people care most about, like the number of bedrooms or a lakefront location.

- Map out suburbs with a lot of interest, like Rochester or Charlton.

- Consider categories for different property types, like apartments or commercial properties.

With these insights, we can then create the following in our content model:

- Categories based on a purchase or rental intent

- Property listings with meaningful attributes included

- Categories and tags based on location or property types

- A dynamic map with filters for specific attributes

And this is just a starting point! Headless SEO is endlessly customizable, and keyword data will help you home in on what matters most to your audience.

3. Map out your taxonomies like tags and categories

Taxonomies help name, describe, and classify your content so you can easily find it and so it appears dynamically in the right places.

When it comes to headless SEO, you will need to create a detailed plan so the right content shows up at the right time and is optimized correctly for the device or channel it’s being viewed on.

Tags and categories are common examples of taxonomy structures you can use to organize your content.

For example, a real estate agent might create categories based on:

- Location: e.g., New York, Los Angeles, San Francisco

- Property types: e.g., apartment, house, villa, multiplex

- Intent: e.g., to buy, to rent, to sell

Furthermore, you can create tags that align with the attributes of each property.

Instead of simply tagging a page or post, however, you’ll be tagging all content types in your headless CMS. This means you may need to consider taxonomies that won’t display on the front-end view layer.

For instance, you can categorize your content based on things like the type of file it is (image, video, text) or the device it’s best viewed on (mobile, desktop, VR headset).

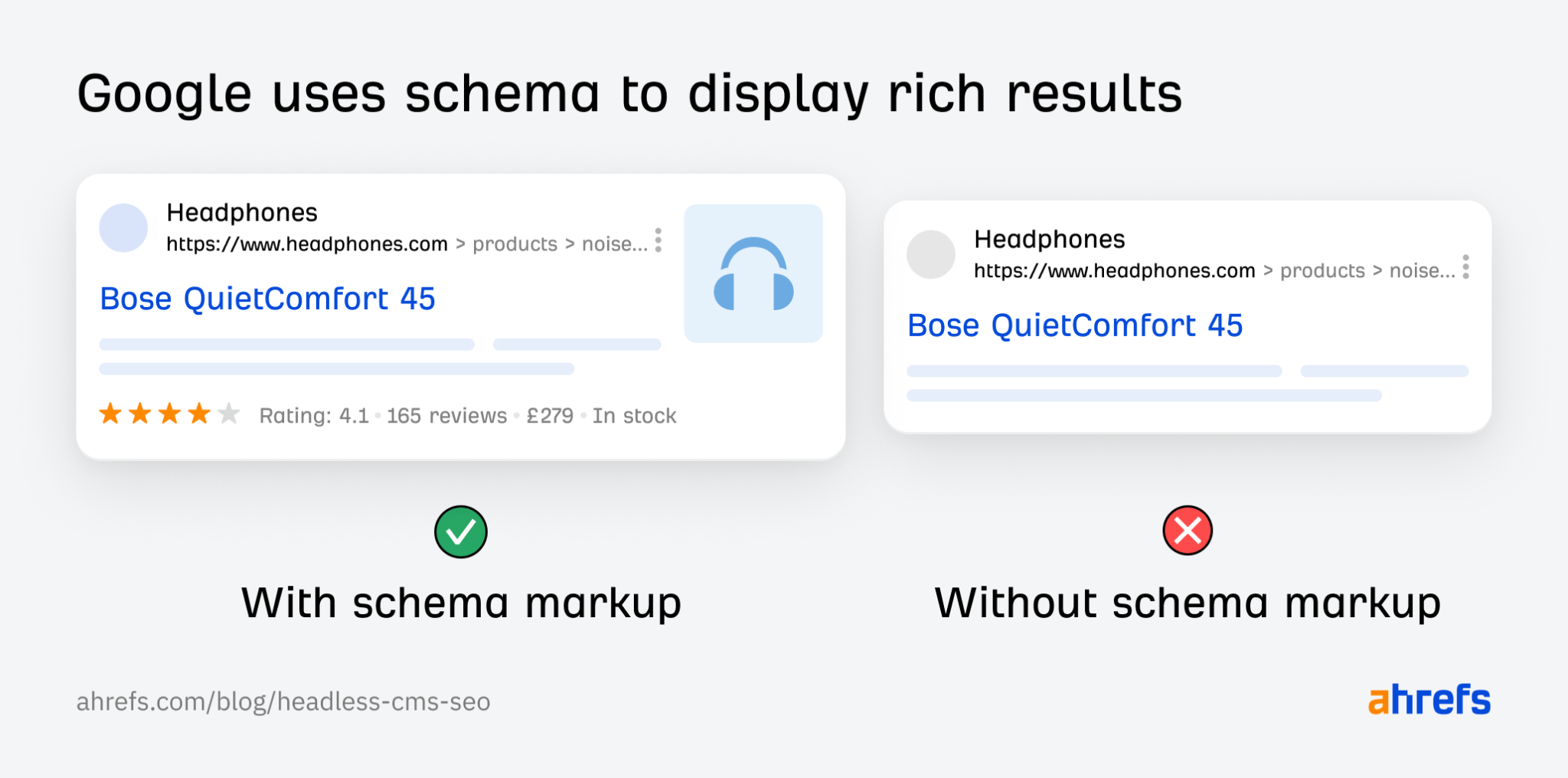

4. Add separate fields for schema markup

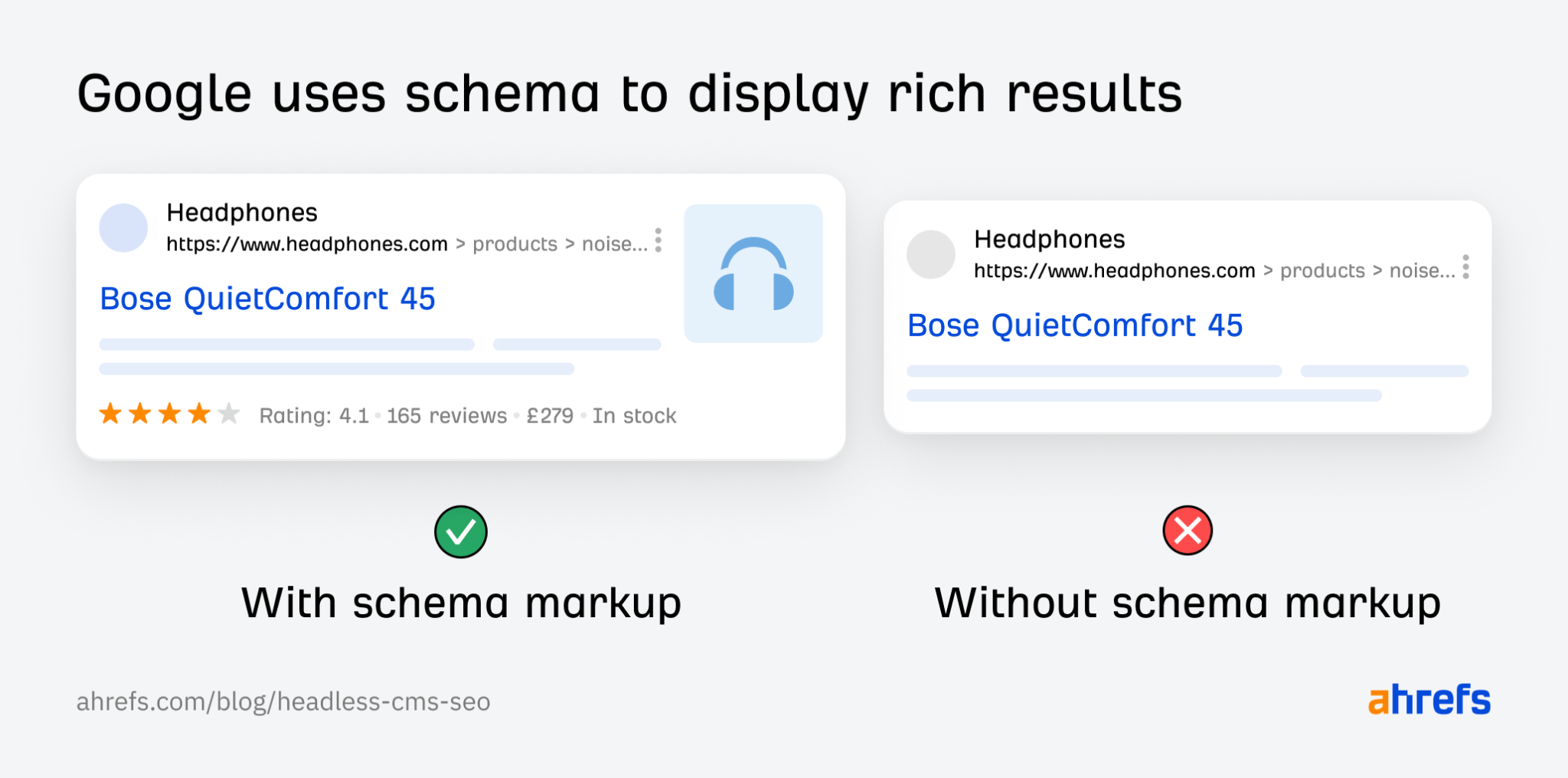

Schema markup helps search engines better understand content. Many search engines use schema markup to enhance the interface and display content in a more visually rich manner.

The great thing about headless SEO is that your content models can easily be converted into rich schema markup.

For example, if you create a content type for your real estate agents, you can align the attributes with those required for detailed person schema. Then, schema can be created programmatically and included everywhere those fields display (even if they’re not on your website).
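As a minimal sketch of the programmatic approach, the Python function below turns an agent entry into schema.org `Person` markup in JSON-LD. The agent field names (`name`, `job_title`, `phone`, `profile_url`) and the sample values are hypothetical. The output properties (`name`, `jobTitle`, `telephone`, `url`) are standard schema.org `Person` properties, though which ones you include is up to you.

```python
import json

# Hypothetical agent entry from the content model.
agent = {
    "name": "Jane Doe",
    "job_title": "Real Estate Agent",
    "phone": "555-0100",
    "profile_url": "https://example.com/agents/jane-doe",
}


def agent_to_person_schema(agent: dict) -> str:
    """Map content model fields to schema.org Person markup as JSON-LD."""
    schema = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": agent["name"],
        "jobTitle": agent["job_title"],
        "telephone": agent["phone"],
        "url": agent["profile_url"],
    }
    return json.dumps(schema, indent=2)


# On a website, this string would go inside a
# <script type="application/ld+json"> tag.
print(agent_to_person_schema(agent))
```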

You can also request that your devs create a field for inputting custom schema instead. This can be done for each URL or for each content component in your content model, and you can set rules for delivering it in a single script on the front end.

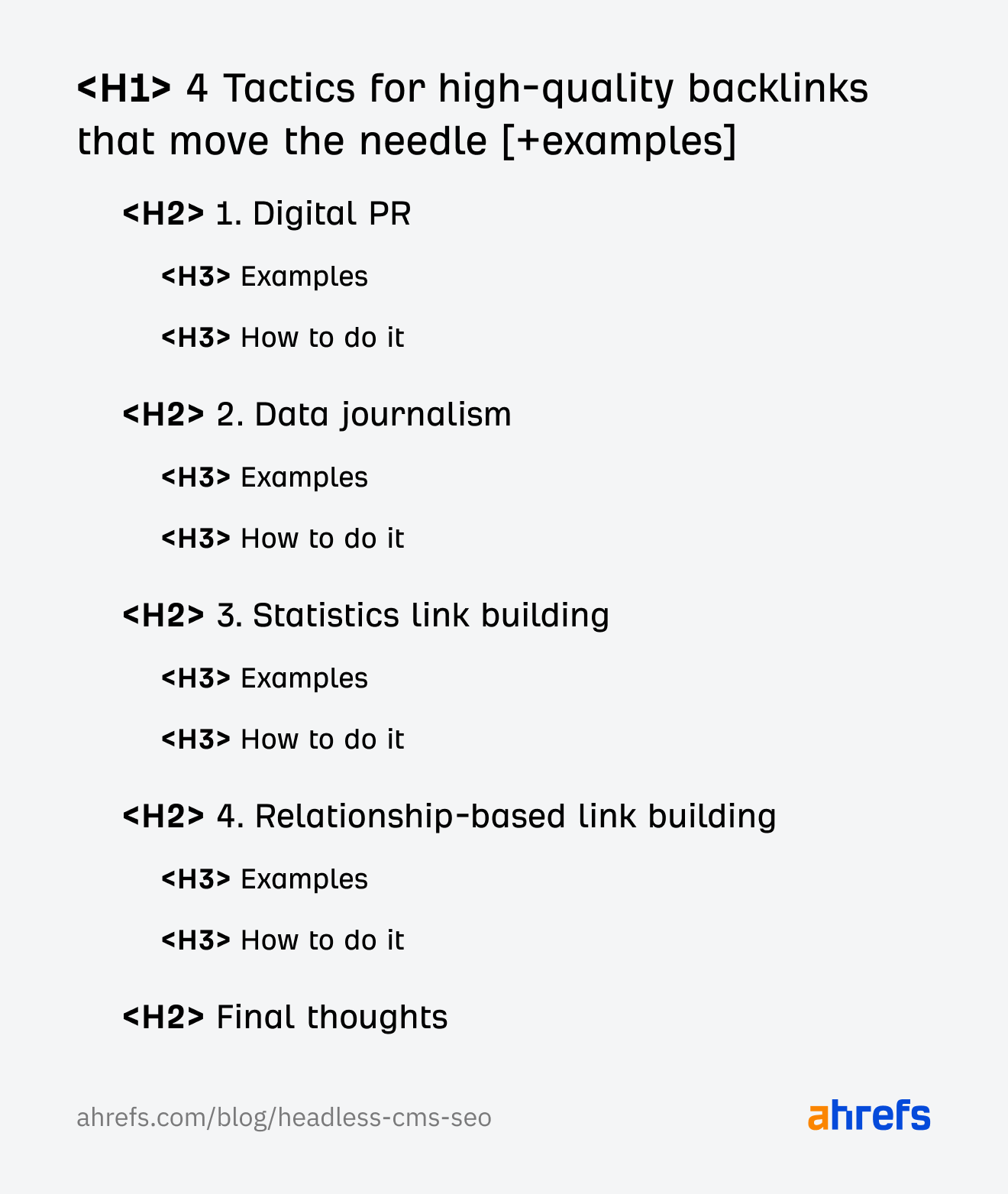

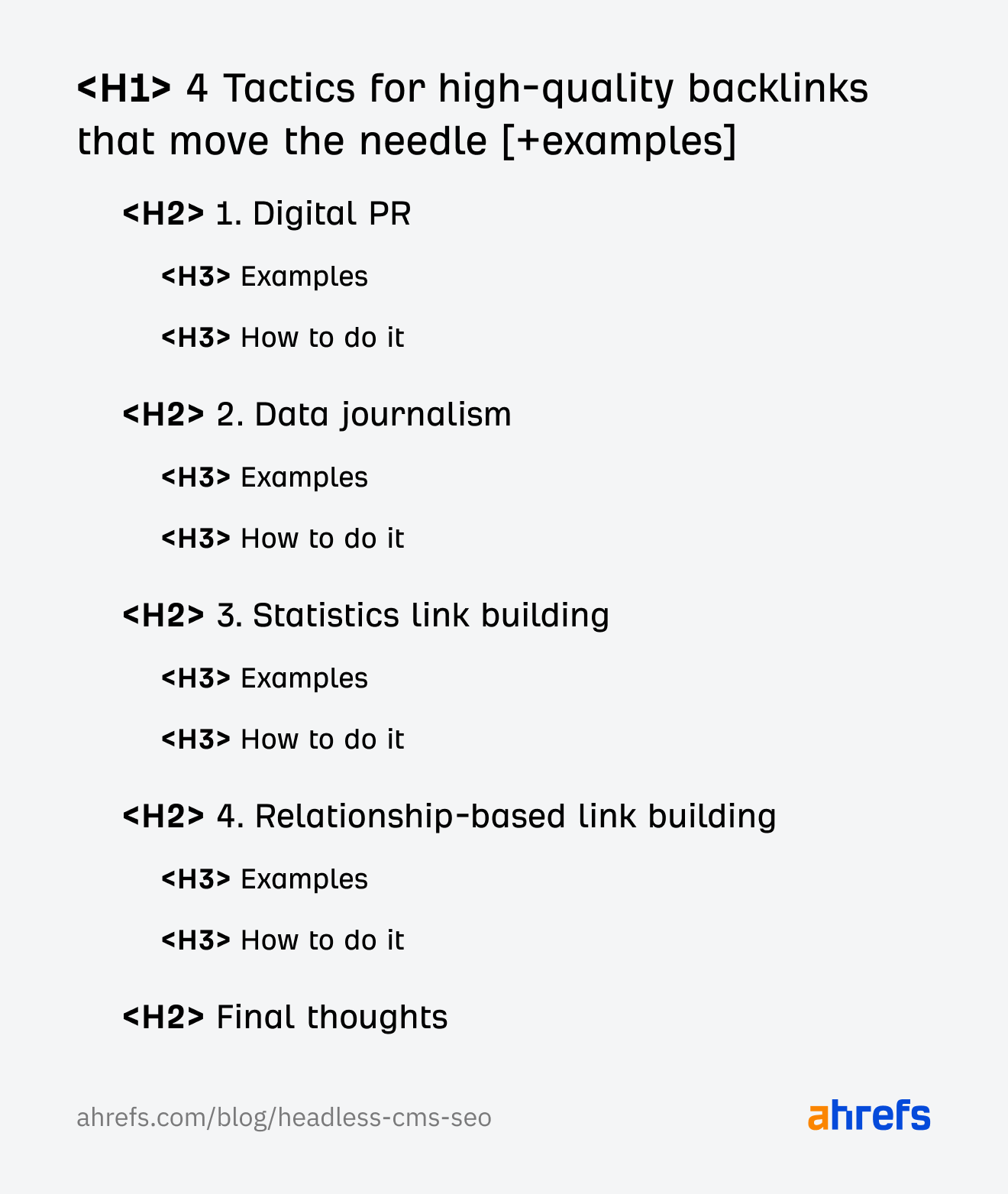

5. Think about heading hierarchy and integrate it into your model

Heading hierarchy relates to the relationship between main headings and sub-headings in your content. Your content should follow SEO and accessibility best practices for headings, but with a headless CMS, it can be difficult to track the heading hierarchy for each page.

As a standard rule, only use one H1 tag and reserve it for the main title of the page. You can do this in the view layer of the website by denoting the field for the main title to be tagged as an H1 in the HTML code.

You can default to H2 or H3 headings for the remaining sub-headings. However, it is important your designers only allocate heading tags to content that is part of the main body.

Designers can often add heading tags for things they want to look visually similar, even if those elements aren’t part of the main content, so make sure you instruct them not to get carried away!
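Since the hierarchy is hard to track by eye, one option is to check the rendered HTML automatically. The Python sketch below uses only the standard library and flags two things: pages without exactly one H1, and headings that skip a level (say, an H2 followed directly by an H4). The skipped-level check is an assumption on our part, based on common accessibility guidance, so adjust it to match your own rules.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in the order they appear."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list:
    """Return a list of heading hierarchy problems found in the HTML."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels

    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"heading level jumps from h{previous} to h{current}")
    return issues


print(heading_issues("<h1>Title</h1><h2>Section</h2><h4>Detail</h4>"))
```

A check like this could run against each rendered template, which catches stray heading tags before they reach the live site.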

6. Use references for internal links

When it comes to internal links in a headless SEO strategy, consider adding references instead of full URLs. A reference operates in the background of your CMS and dynamically connects pieces of content to each other.

If you change a URL down the track, every reference to it will update automatically on your live site. This can save your team hours of time that would otherwise be wasted finding and fixing broken links, all without them having to touch a single piece of code.
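Here is a minimal Python sketch of how that can work. The `ref:` link format, the entry IDs, and the slugs are all hypothetical. Each CMS has its own way of storing references, but the principle is the same: the content stores an ID, and the URL is looked up when the page is rendered.

```python
import re

# Hypothetical entries keyed by ID; the slug is the only place a URL lives.
entries = {"prop-1": {"slug": "/listings/lakefront-house"}}

# The body stores a reference to the entry, not a hard-coded URL.
body = 'See our <a href="ref:prop-1">lakefront house</a>.'


def resolve_links(body: str, entries: dict) -> str:
    """Swap each ref:<id> placeholder for the entry's current slug."""
    return re.sub(
        r"ref:([\w-]+)",
        lambda match: entries[match.group(1)]["slug"],
        body,
    )


print(resolve_links(body, entries))

# Change the URL once in the CMS; the stored body never needs editing.
entries["prop-1"]["slug"] = "/homes/lakefront-house"
print(resolve_links(body, entries))
```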

Final thoughts

There are some clear advantages to using a headless CMS for SEO. But SEO is not the only factor to consider, and the choice of CMS is often outside the SEO team’s control.

It’s worth pitching headless SEO to the decision-makers in your organization if:

- You need a more flexible approach to content deliverability.

- You’d like total control over every on-page and technical SEO element.

- You’d like to unlock omnichannel marketing capabilities.

- You need a more scalable solution for content publishing.

- You’d like to deliver better user experiences on your front-end.

- You’d like to better segment content by locale or language.

Have any questions? Got a cool headless SEO use case to share? Reach out on LinkedIn and let me know.