
Results-Driven SEO Project Management: From Chaos to Cash

Making a profit in SEO relies on good project management. That means doing things that get results rather than just drowning yourself in endless tasks.

Below, I’ll walk you through a 7-step process to do exactly that.

Having clear goals keeps your team unified toward a specific direction. For example, if your boss allocates $5,000/month for the SEO project, you need to translate this into meaningful results and milestones you can report on.

A goal you can easily set is to increase the website’s organic traffic value. This is a metric unique to Ahrefs that estimates a dollar value of SEO traffic.

If you invest $5,000/month in SEO for six months, you could aim to increase your website’s organic traffic value by $30,000 ($5,000 ✕ 6 months).

This isn’t the most accurate method because traffic value doesn’t necessarily correlate with real-world revenues, but it works as an easy starting point for setting targets.
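The rule of thumb above is just multiplication, but writing it as a tiny helper makes the assumption explicit. This is a minimal Python sketch. The function name is hypothetical, and the result is a target for Ahrefs' traffic value estimate, not a revenue forecast.

```python
def traffic_value_target(monthly_budget: float, months: int) -> float:
    """Return the organic traffic value increase to aim for.

    Assumes the rule of thumb from the text: aim to grow traffic value by
    at least the total amount spent on SEO over the project.
    """
    if monthly_budget < 0:
        raise ValueError("monthly_budget must be non-negative")
    if months <= 0:
        raise ValueError("months must be positive")
    return monthly_budget * months


# $5,000/month for six months, as in the example above.
target = traffic_value_target(5_000, 6)
print(f"Target traffic value increase: ${target:,.0f}")  # Target traffic value increase: $30,000
```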

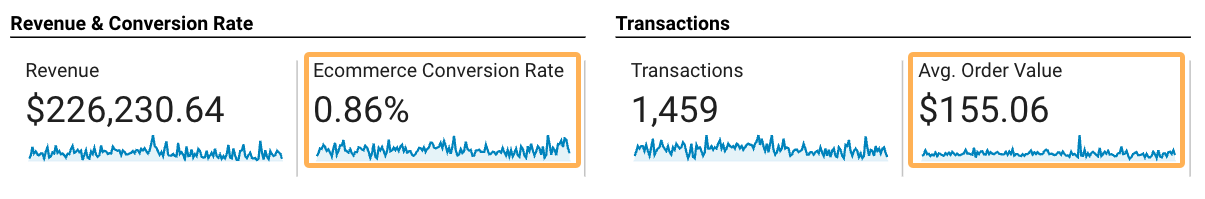

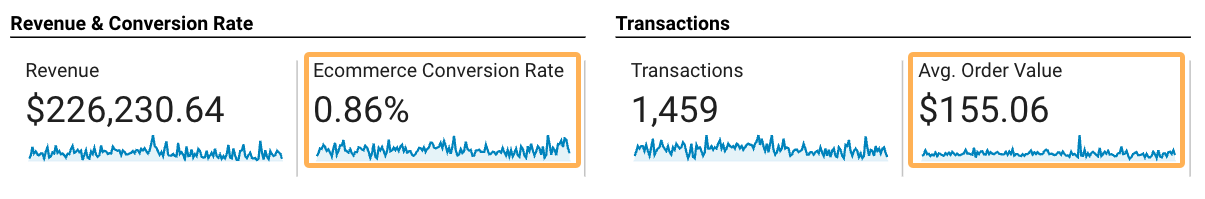

A better solution is to use conversion data and average order or deal value to set goals around delivering a return on investment. You can find these metrics in your analytics tool, like Google Analytics, if conversion tracking is set up:

Sidenote.

If you don’t have access to conversion metrics like this, to be conservative, use 1% as a ballpark conversion rate and the cheapest product or service price for the average order value.

Using these metrics, you can calculate the number of sales needed to break even on the SEO campaign.

$$\text{number of monthly sales to break even} = \frac{\text{monthly SEO cost}}{\text{average order value}}$$

Since this project’s monthly SEO cost is $5,000, we’ll need organic traffic to generate an extra 32.25 sales per month for the duration of the project.
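The break-even formula translates directly into code. In this sketch, the $155 average order value is a hypothetical input picked because it lands close to the 32.25 figure above; the text doesn't state the actual value, so substitute the one from your analytics tool. Rounding up with `math.ceil` is an optional extra, since real sales come in whole numbers.

```python
import math


def monthly_sales_to_break_even(monthly_seo_cost: float, average_order_value: float) -> float:
    """Sales per month needed for organic revenue to cover the SEO spend."""
    if average_order_value <= 0:
        raise ValueError("average_order_value must be positive")
    return monthly_seo_cost / average_order_value


# Hypothetical average order value; swap in your own figure.
sales = monthly_sales_to_break_even(5_000, 155)
print(f"{sales:.2f} sales/month")             # 32.26 sales/month
print(math.ceil(sales), "whole sales/month")  # 33 whole sales/month
```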

Here’s the formula to discover roughly how much traffic or projected organic sessions you’ll need:

$$\text{projected organic sessions} = \frac{\text{transactions needed to break even}}{\text{conversion rate}}$$

So in this example, we divide 32.25 transactions by the conversion rate of 0.86% to learn that we need at least 3,750 organic monthly sessions to break even. Of course, not all traffic is created equal, so keep that in mind going forward.
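The sessions formula is equally short in code. The main trap is units: the conversion rate has to be a fraction, so 0.86% becomes 0.0086. This is a minimal sketch with a hypothetical function name; the 1% default mirrors the conservative ballpark from the sidenote. Note that the second call only swaps the conversion rate. Under the sidenote's approach, you would also recompute the transactions using your cheapest product or service price.

```python
def sessions_to_break_even(transactions_needed: float, conversion_rate: float = 0.01) -> float:
    """Organic sessions per month needed to produce `transactions_needed` sales.

    `conversion_rate` is a fraction, so 0.86% must be passed as 0.0086.
    The 1% default mirrors the conservative ballpark in the sidenote.
    """
    if not 0 < conversion_rate <= 1:
        raise ValueError("conversion_rate must be a fraction between 0 and 1")
    return transactions_needed / conversion_rate


print(round(sessions_to_break_even(32.25, 0.0086)))  # 3750
print(round(sessions_to_break_even(32.25)))          # 3225, using the 1% fallback rate
```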

So far, so good! (Save this number; we’ll need it in a moment.)

In many cases, the timeline will be decided for you by your boss or client. For instance, if you take on a client with a six-month contract, that’s the timeframe in which you generally have to deliver results.

The question at this stage is whether it’s possible to reach your performance goal in that time.

Truthfully, there’s no way to know for sure, but you can look to your competitors for an idea.

Sure, you have no idea what their SEO budgets are (they could be spending 10x what you are), but if you see multiple competitors of a similar caliber getting similar results over a similar timeframe, that’s a good sign.

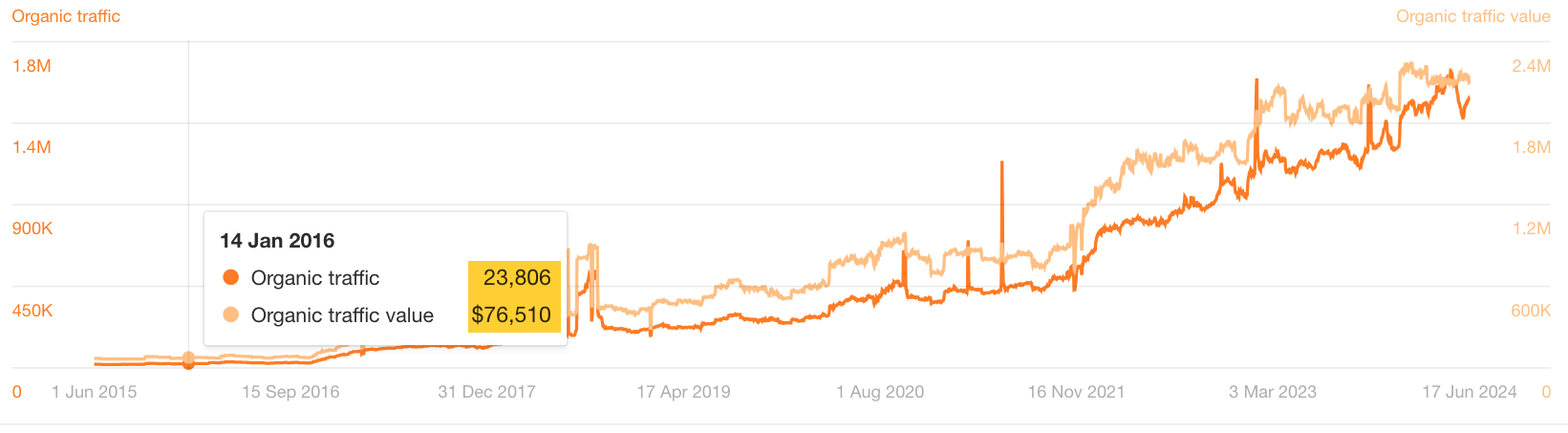

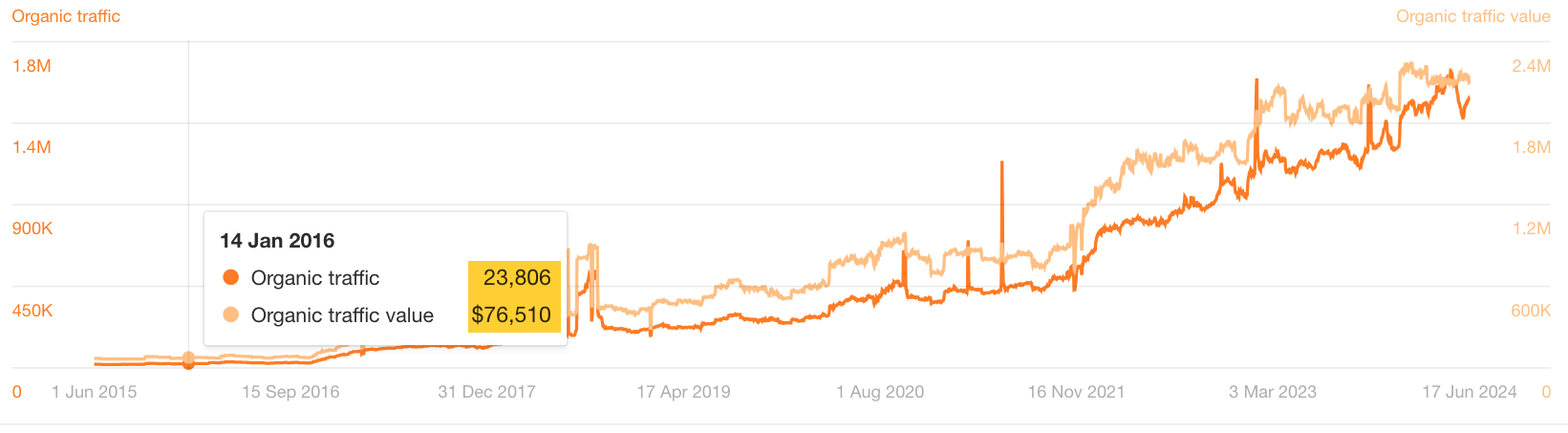

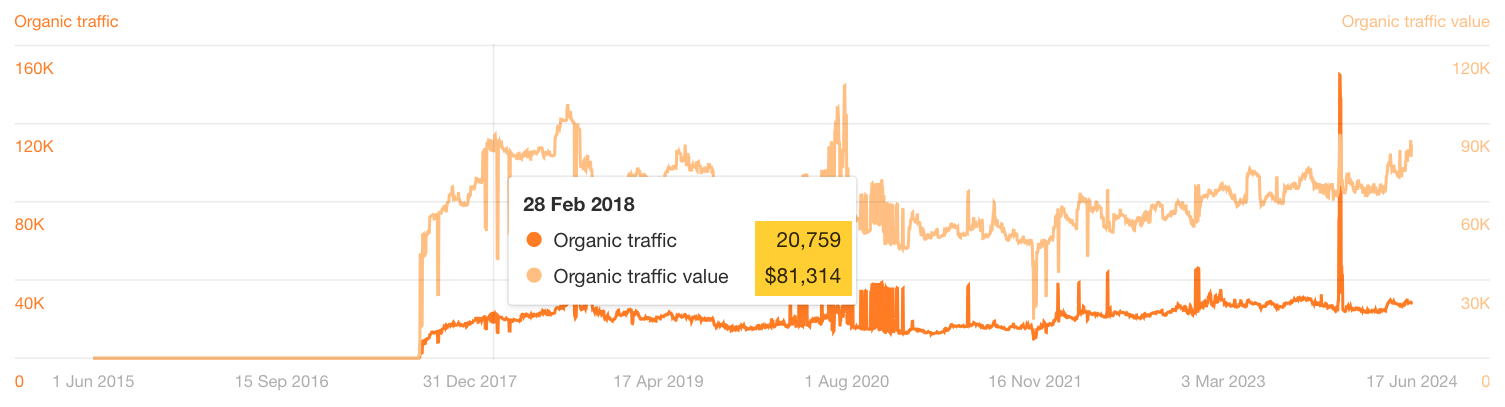

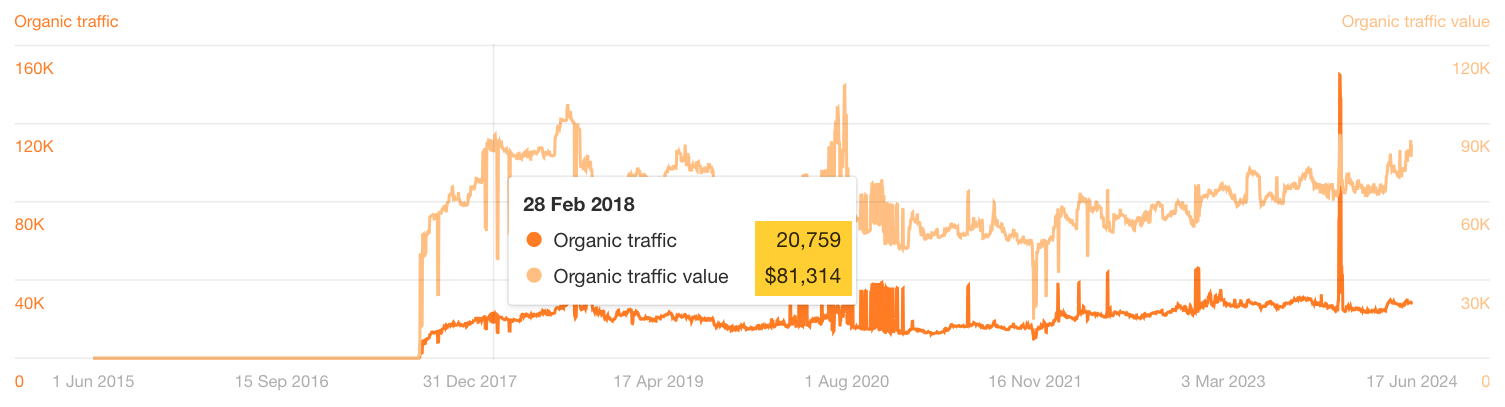

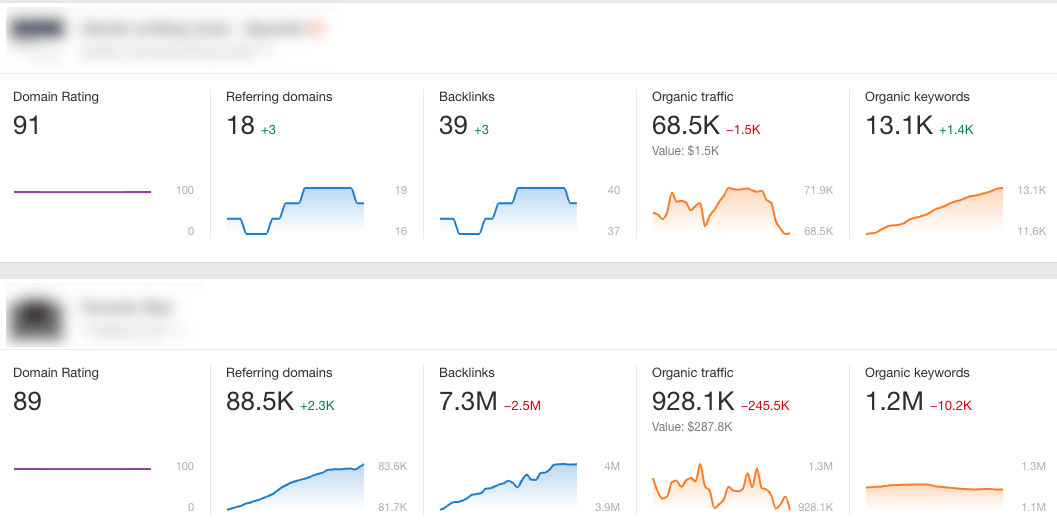

For instance, in its first six months of SEO, Webflow reached just shy of 24,000 monthly organic visits, with a traffic value of $76,510 (according to Ahrefs).

Duda’s first-year performance is fairly close to Webflow’s, too.

So, if these are your competitors and your target is to reach a traffic value of $30,000 in six months or to increase monthly traffic by 3,750 sessions, it certainly seems achievable.

If you don’t see competitors hitting your target in your timeframe like this, you’ll need to rethink your goals and communicate them to key stakeholders. Communication is critical for setting the right expectations with your boss or clients.
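The competitor sanity check can be expressed as a simple rule: count how many comparable competitors reached your target within your timeframe. This is a hedged sketch. The `min_matches=2` threshold is an assumed reading of "multiple competitors", and apart from Webflow's traffic value quoted above, the benchmark numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class CompetitorBenchmark:
    name: str
    months_of_seo: int    # how long the competitor had been investing in SEO
    traffic_value: float  # organic traffic value reached by then (Ahrefs estimate)


def target_looks_achievable(benchmarks, target_value, timeframe_months, min_matches=2):
    """Return (verdict, names of competitors that hit the target in the timeframe).

    "Multiple competitors" is interpreted here as at least `min_matches`.
    """
    matches = [
        b.name
        for b in benchmarks
        if b.months_of_seo <= timeframe_months and b.traffic_value >= target_value
    ]
    return len(matches) >= min_matches, matches


benchmarks = [
    CompetitorBenchmark("Webflow", 6, 76_510),       # figure quoted above
    CompetitorBenchmark("Competitor B", 6, 41_000),  # hypothetical
    CompetitorBenchmark("Competitor C", 6, 18_000),  # hypothetical
]
print(target_looks_achievable(benchmarks, 30_000, 6))  # (True, ['Webflow', 'Competitor B'])
```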

Now that you’ve set an achievable goal for the project timeline, the next step is to plan what tasks actually need to be done to get you there.

You’ll need to spend some time on strategic tasks to help you determine the correct implementations for the project.

Don’t be tempted to skip this part!

If you don’t spend enough time on strategic tasks like competitor analysis, keyword research, and auditing the current website, you risk heading in the wrong direction, and no amount of action will make up for that.

But don’t overdo it, either. You need to balance strategy with implementation to get results.

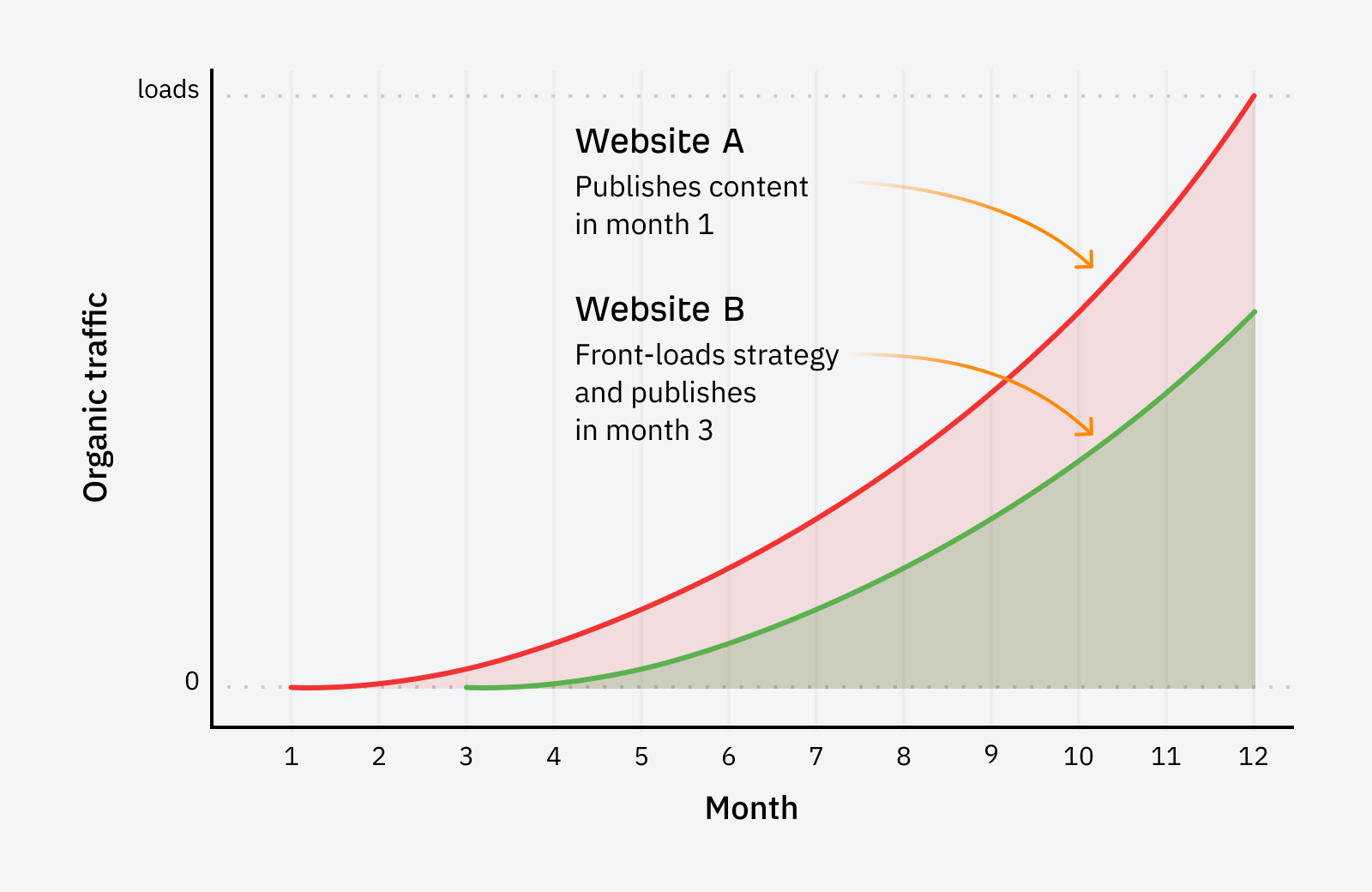

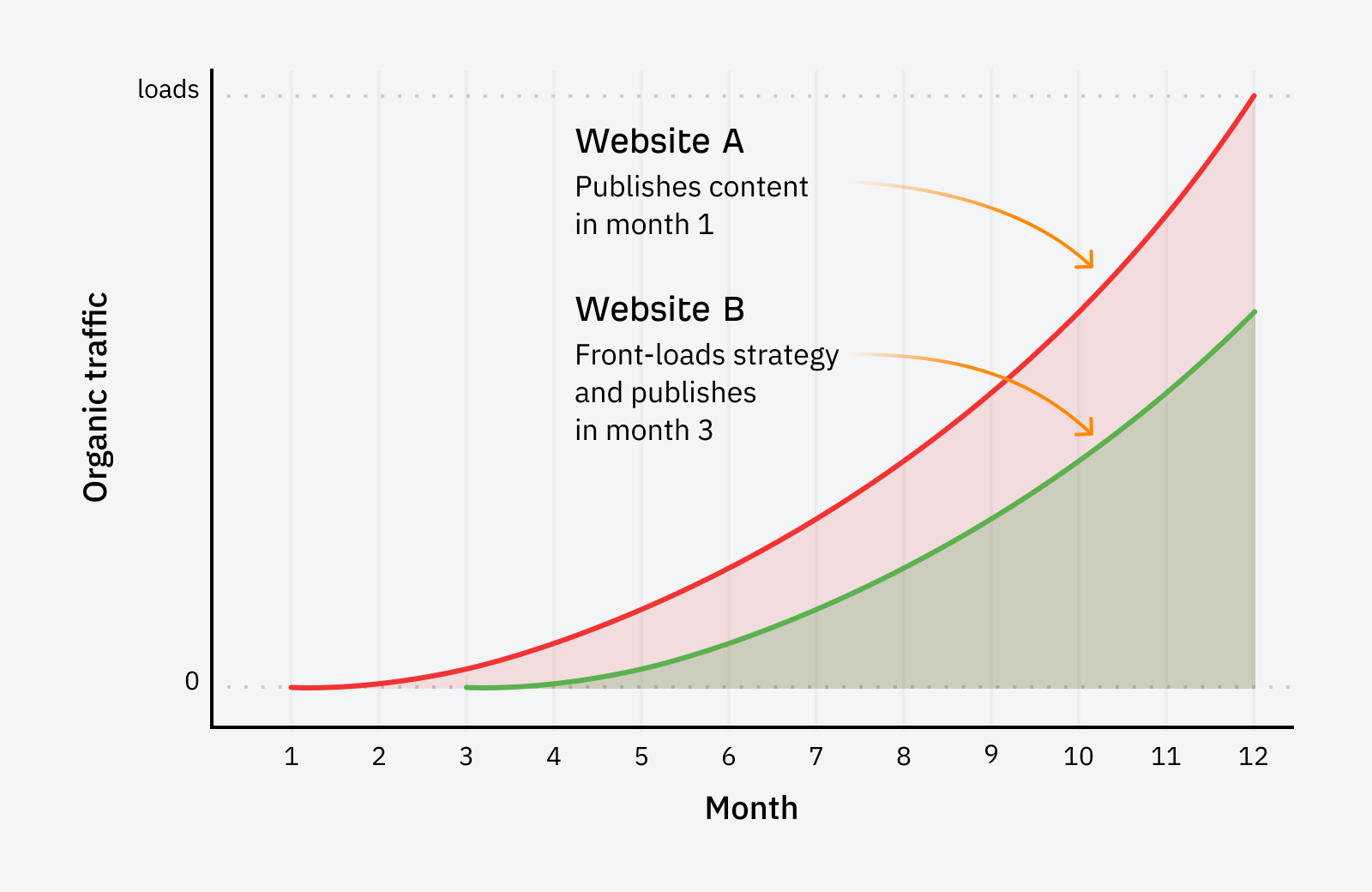

For example, there’s generally a notable difference in performance between a project that spends one month on strategy and publishes content ASAP compared to a project that front-loads strategic tasks and implements content a few months later.

I recommend spending ⅙ of the project timeline on strategy and ⅚ on implementation for the best balance.
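Applied to a concrete contract length, the ⅙ / ⅚ split works out as follows. This minimal sketch uses Python's `fractions.Fraction` so the split stays exact; the function name is hypothetical.

```python
from fractions import Fraction


def split_timeline(total_months: int, strategy_share: Fraction = Fraction(1, 6)) -> tuple[Fraction, Fraction]:
    """Split a project timeline into (strategy, implementation) months."""
    strategy = total_months * strategy_share
    return strategy, total_months - strategy


for months in (6, 12):
    strategy, implementation = split_timeline(months)
    print(f"{months}-month project: {strategy} month(s) strategy, {implementation} month(s) implementation")
# 6-month project: 1 month(s) strategy, 5 month(s) implementation
# 12-month project: 2 month(s) strategy, 10 month(s) implementation
```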

As for what specific tasks you can plan, there are many things you could focus on here. The right things for your website will vary depending on your available skills and resources, plus what’s working best in your industry… but here’s where I’d start, given that the target is to increase traffic.

a) Fill content gaps

Start by finding pages that deliver traffic to competitors that your website doesn’t have.

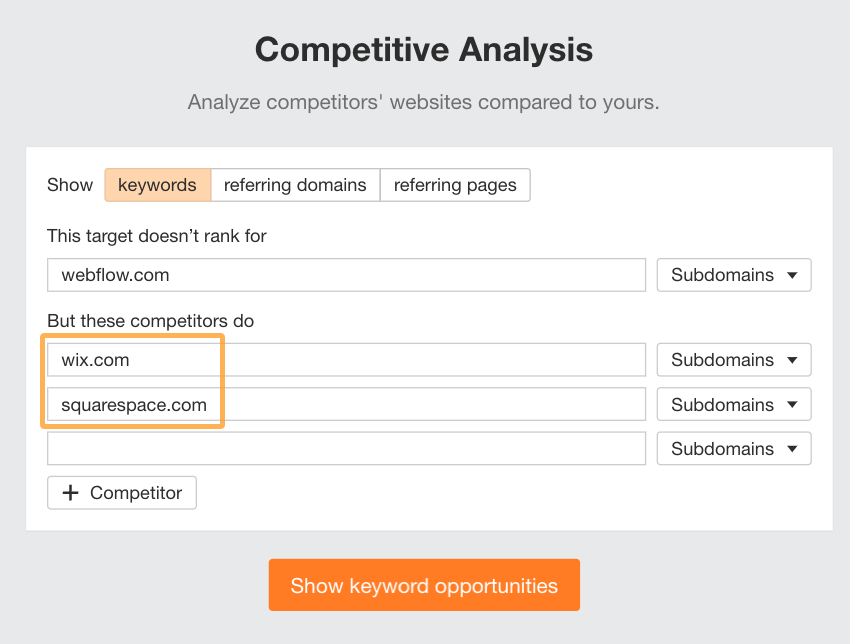

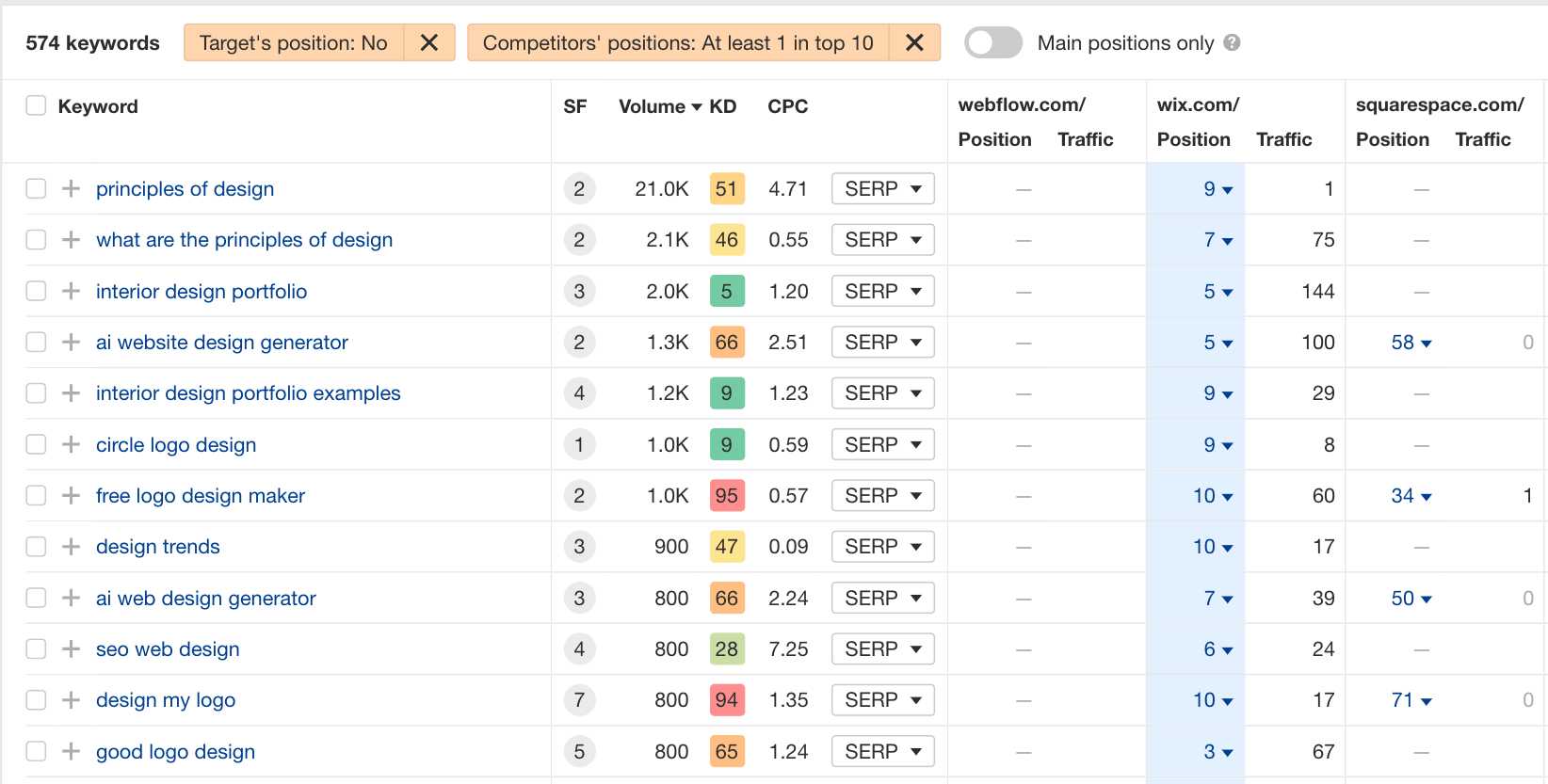

Using Ahrefs’ Competitive Analysis tool, make sure you select the “keywords” tab and then enter your website along with a handful of your top competitors, like so:

Then check out the results to find topics your competitors have written about that you haven’t. Make sure you qualify the topics according to what has business value for you.

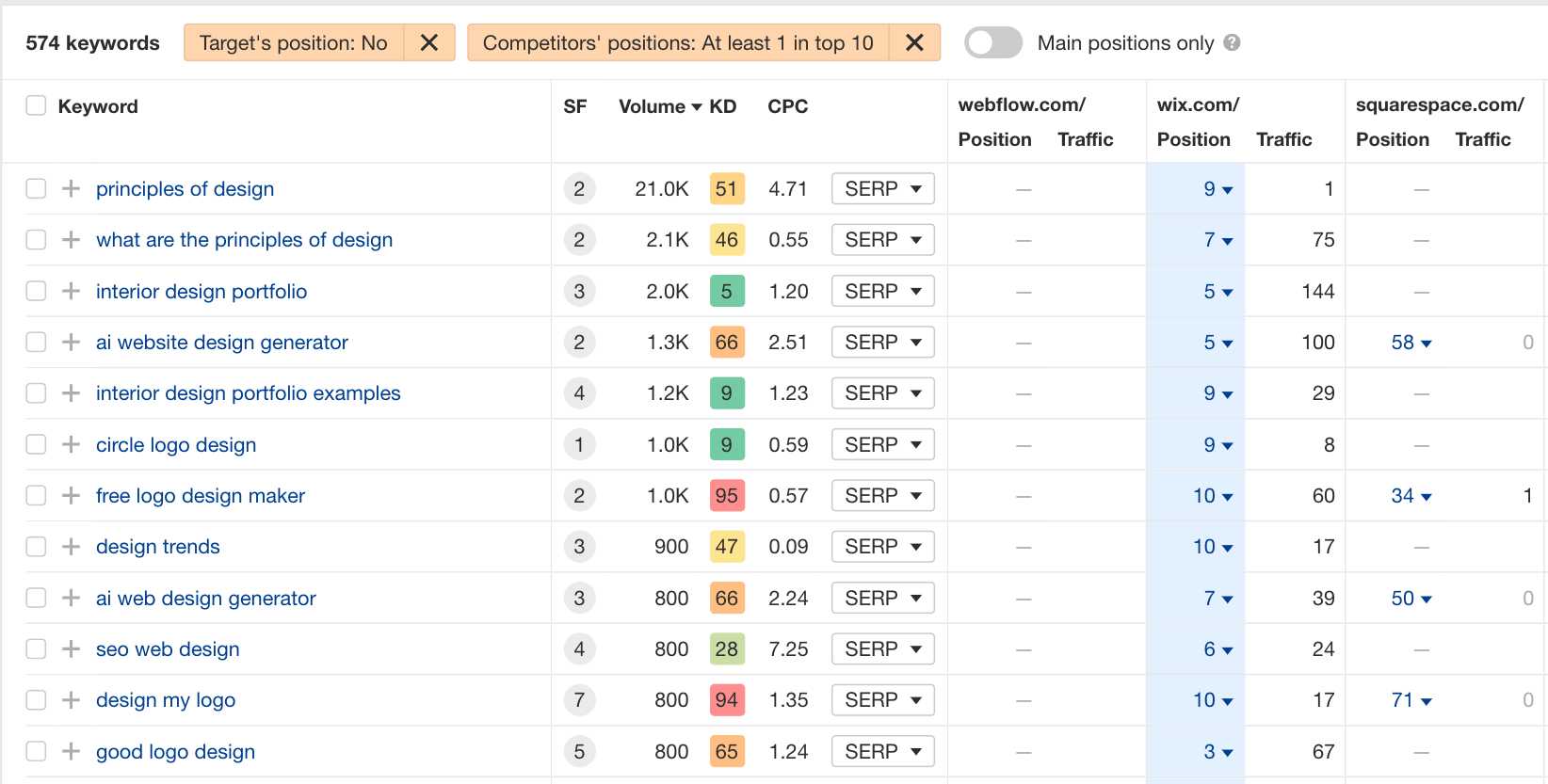

For instance, let’s look at design-related keywords that Wix or Squarespace rank for but Webflow doesn’t.

Many of these keywords hold very little business value for a company like Webflow, like any related to logo makers and generators. However, keywords related to design trends and principles might be topics Webflow can consider for its blog, since designers make up a core part of its audience.

For topics that have business value, create new content targeting these keywords.

There can be a lot of data to sift through here, so I recommend my content gap analysis template for a faster and smoother process 😉

b) Boost authority of top pages

This task is about identifying which of your content is already performing well and sending more internal links and backlinks to those pages.

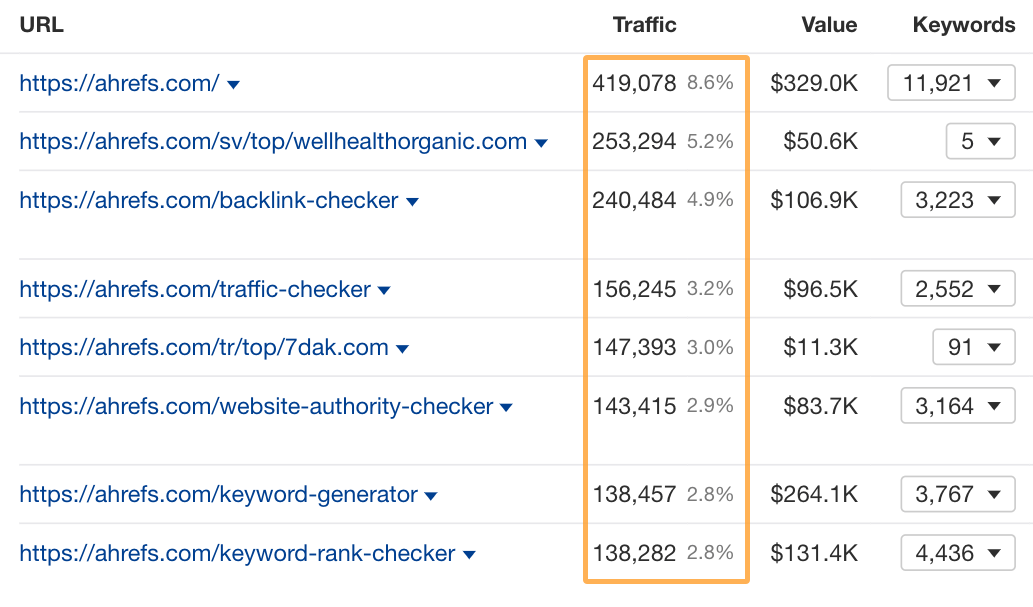

You can find the best pages to promote by using the Top Pages report in Site Explorer. Here you’ll see which pages on your site get the most traffic:

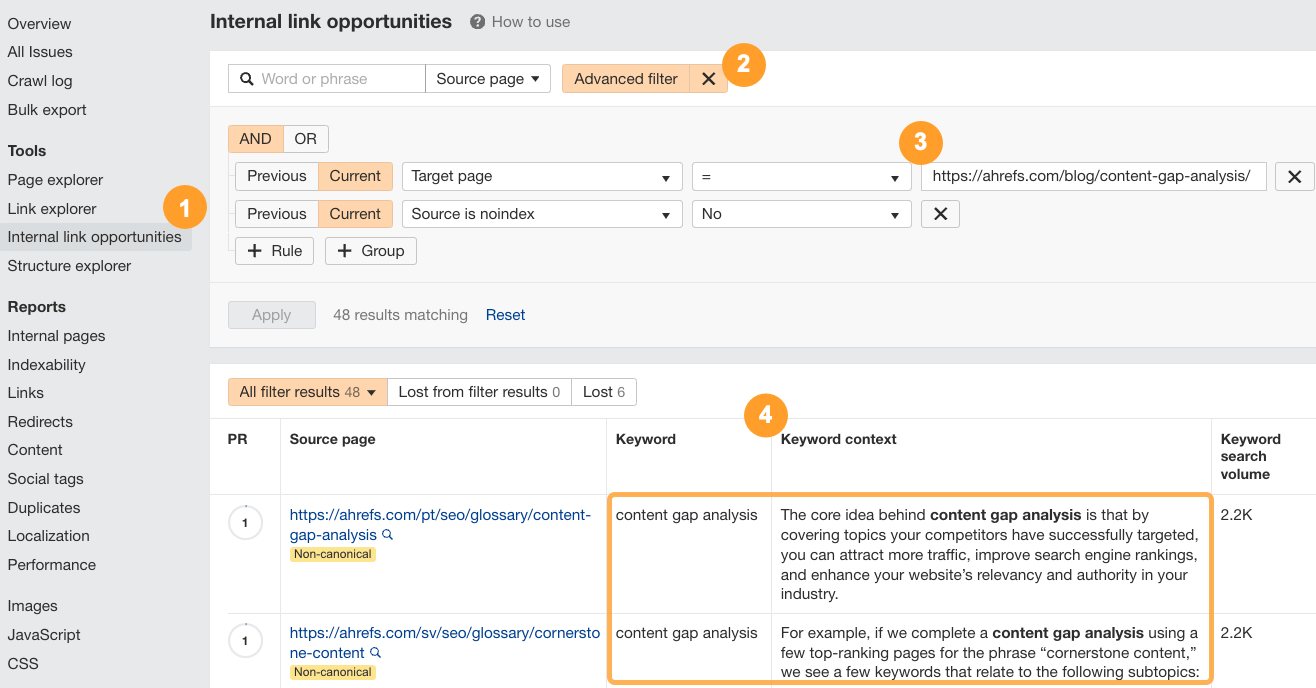

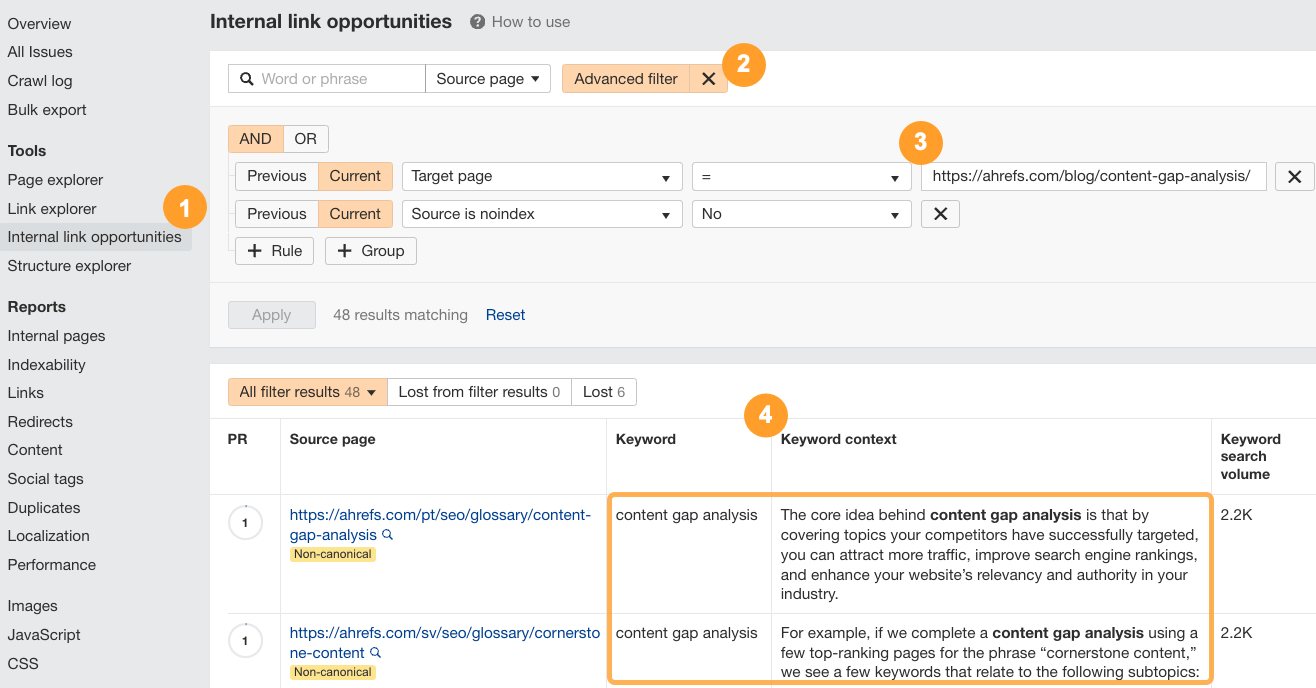

Then, navigate to the Internal Link Opportunities report in Site Audit. You can set an advanced filter to narrow down the opportunities to the pages you care most about. Check out the suggested anchor text and keyword contexts and implement all the internal links that make sense in your content.
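The two steps above (pick your best-performing pages, then narrow internal link opportunities to those targets) can be sketched as a filter. The `Page` and `LinkOpportunity` shapes are assumptions about what a report export might contain, not the actual Ahrefs export format, and the URLs are hypothetical.

```python
from typing import NamedTuple


class Page(NamedTuple):
    url: str
    organic_traffic: int


class LinkOpportunity(NamedTuple):
    source_url: str  # page that would get the new internal link
    target_url: str  # page the link would point to
    anchor_text: str


def top_pages(pages, n=3):
    """URLs of the n pages with the most organic traffic."""
    ranked = sorted(pages, key=lambda p: p.organic_traffic, reverse=True)
    return [p.url for p in ranked[:n]]


def opportunities_for(target_urls, opportunities):
    """Keep only opportunities that point at one of the target URLs."""
    wanted = set(target_urls)
    return [o for o in opportunities if o.target_url in wanted]


pages = [
    Page("/blog/seo-basics/", 12_000),
    Page("/blog/link-building/", 8_500),
    Page("/blog/keyword-research/", 9_800),
    Page("/blog/old-announcement/", 150),
]
opportunities = [
    LinkOpportunity("/blog/old-announcement/", "/blog/seo-basics/", "SEO basics"),
    LinkOpportunity("/blog/link-building/", "/blog/old-announcement/", "announcement"),
    LinkOpportunity("/blog/link-building/", "/blog/keyword-research/", "keyword research"),
]
for o in opportunities_for(top_pages(pages, n=2), opportunities):
    print(f"{o.source_url} -> {o.target_url} ({o.anchor_text!r})")
```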

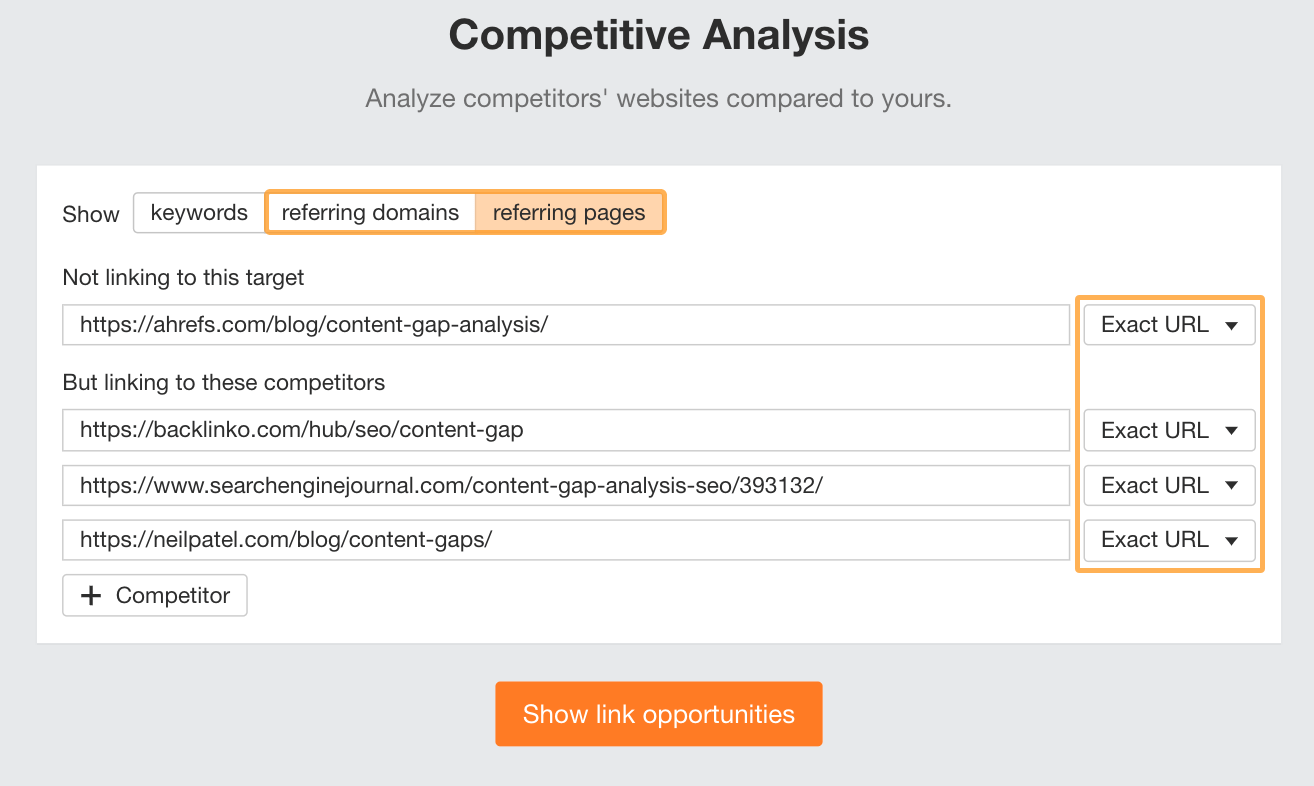

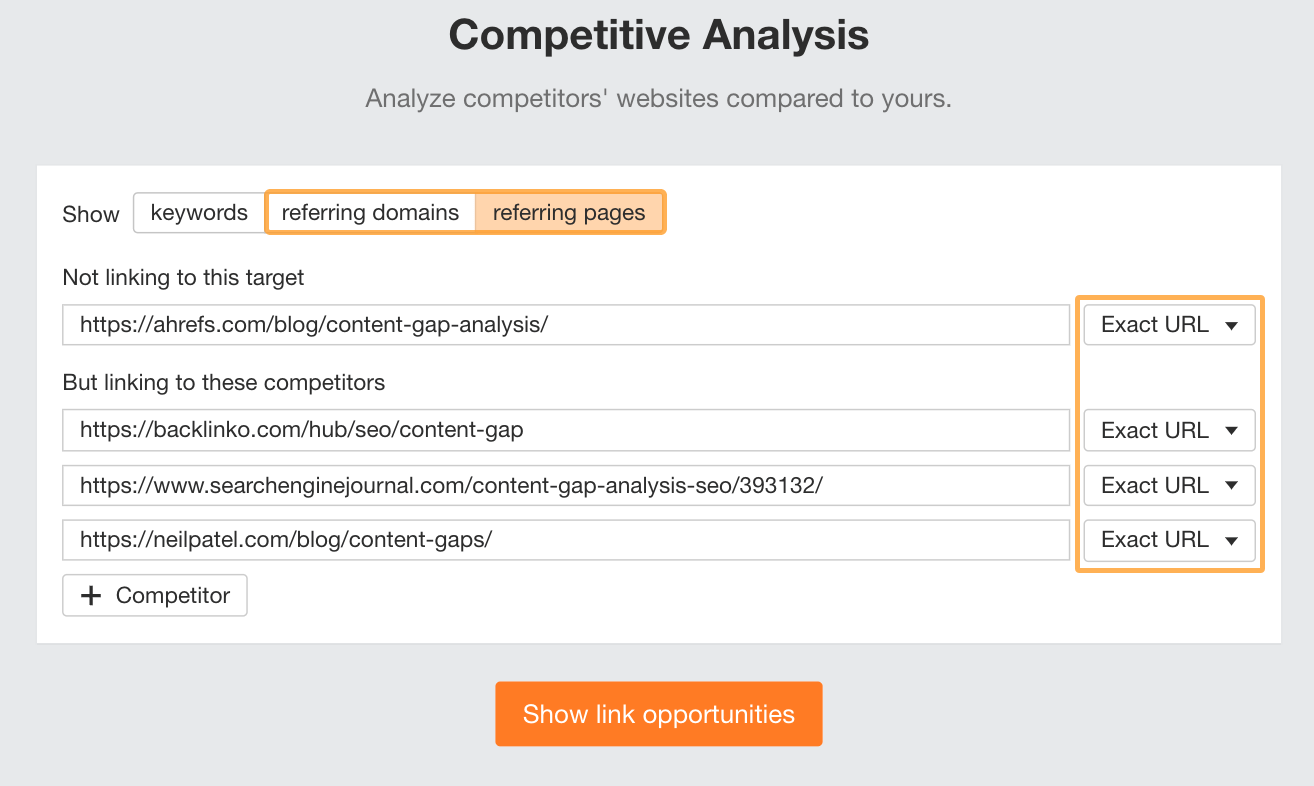

You should also build backlinks to these pages. You can use the Competitive Analysis report again, but this time, set it to referring domains or pages.

Sidenote.

Setting it to referring domains will give you a list of websites you can add to an outreach list. Setting it to referring pages will give you the exact URLs where the links to your competitors are. These links can be included in outreach messages to make them more customized.

Also, instead of using the homepage, add the exact page you want to link to and compare it to your competitors’ pages on the same topic. Make sure you set all pages to “exact URL” to get the page-level (instead of website-level) backlink data.

There are many different backlinking techniques you can consider implementing. Check out our video on how to get your first 100 links if you’re unsure where to start:

c) Update content with low-hanging fruit opportunities

For an established website with a decent amount of existing content, you can also look for opportunities to quickly update existing content and boost performance with little effort.

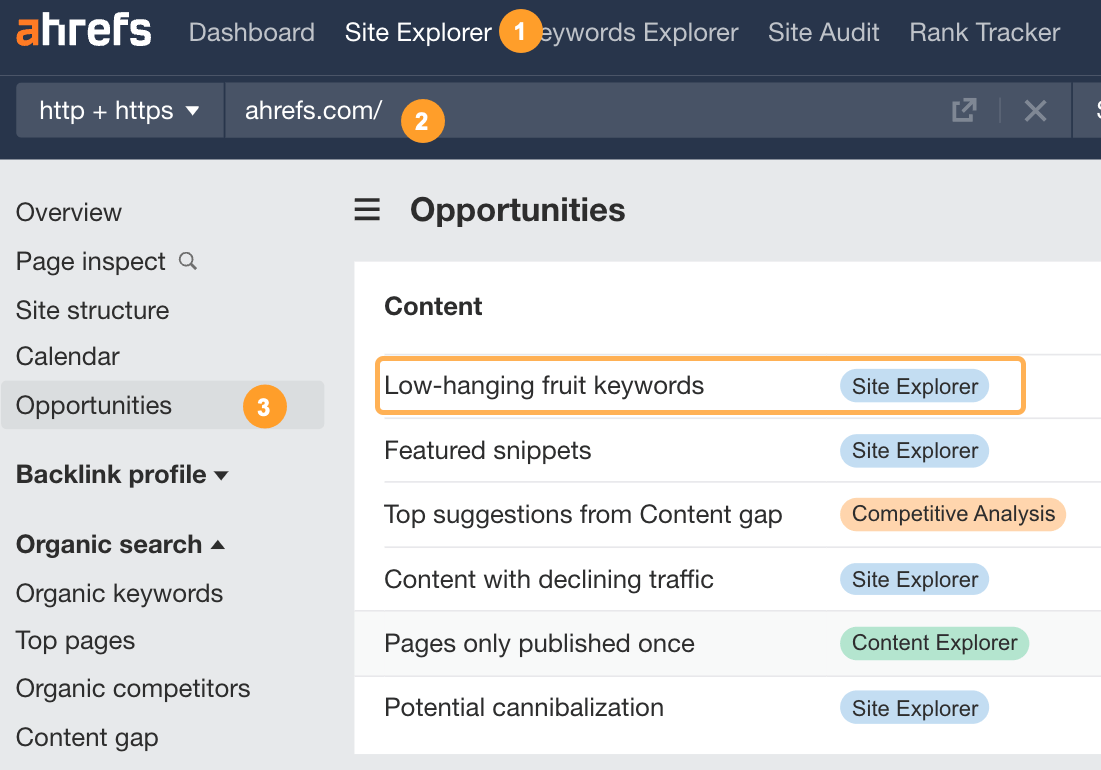

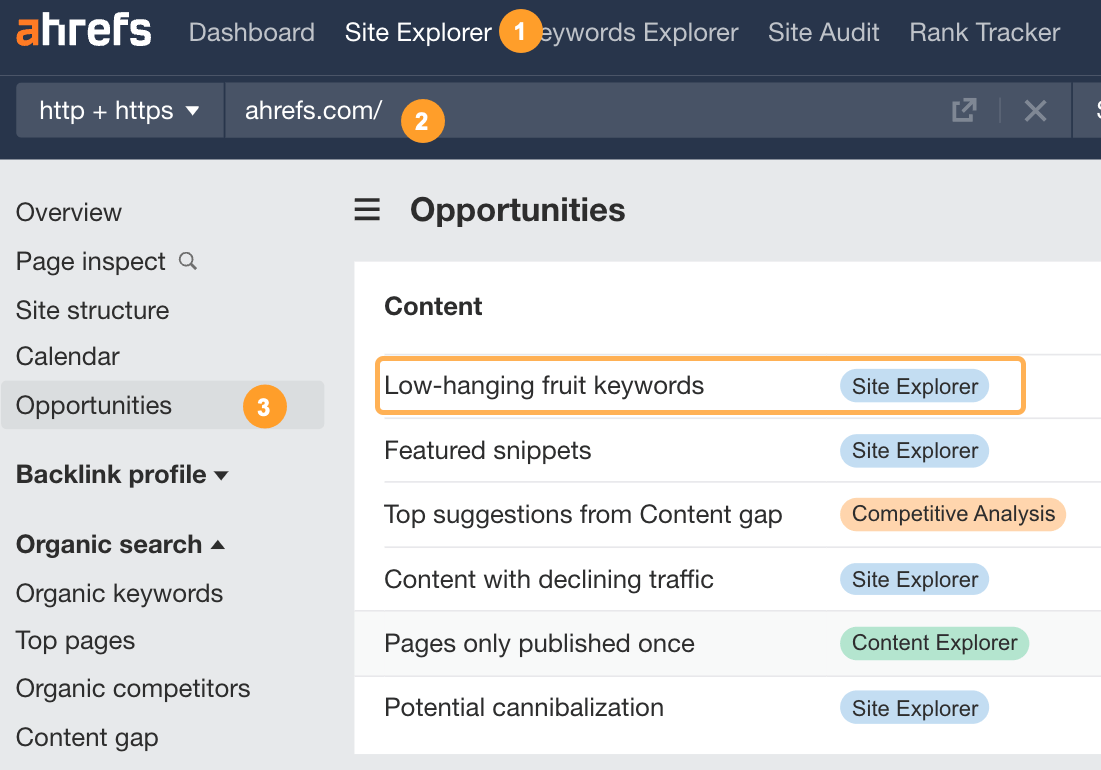

In Ahrefs’ Site Explorer, check out your pages that are already ranking in positions 4-15 by using Opportunities > Low-Hanging Fruit Keywords:

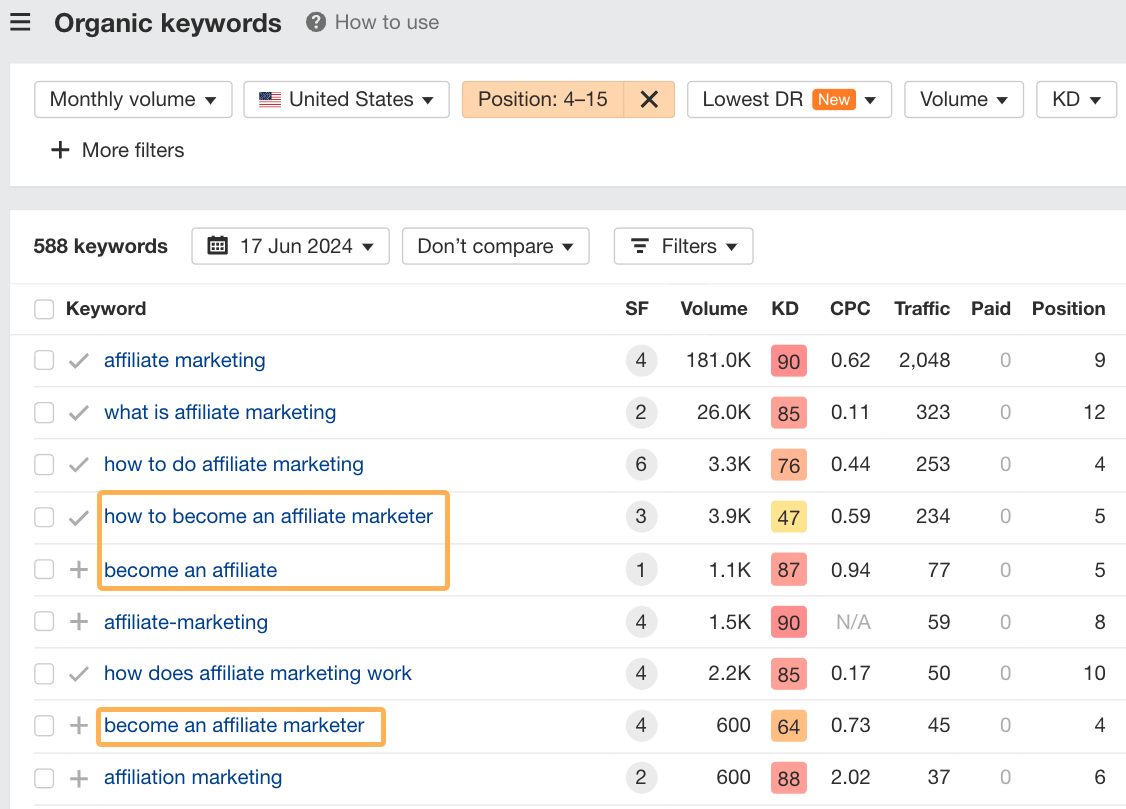

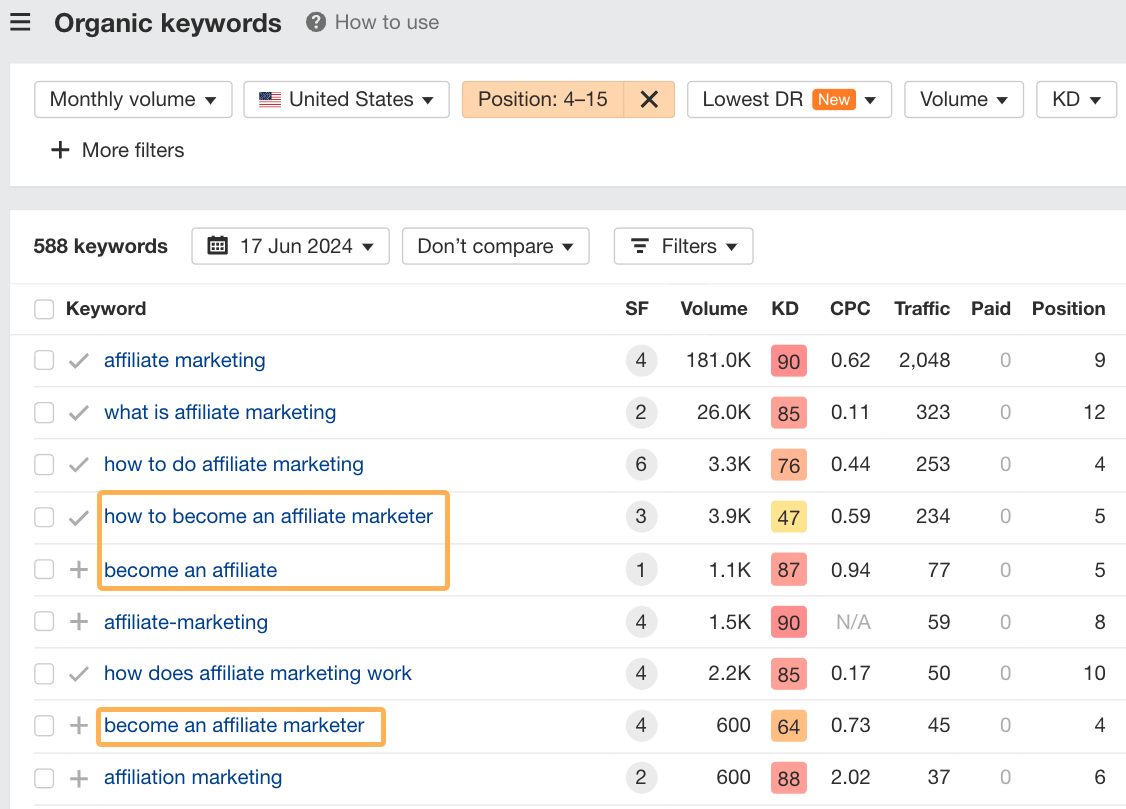

Find pages with many keywords in this range and try to close topic gaps on those pages. For example, let’s take our post on affiliate marketing and look at its low-hanging fruit opportunities.

We could isolate similar keywords that don’t already have a dedicated section in our article, like the following about becoming an affiliate.

These are already hovering around the middle of page one on Google. With a small, dedicated section about this topic, we can likely improve rankings for these keywords with minimal effort.
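The filter behind this step is easy to reproduce on an exported rankings list: keep keywords in positions 4 to 15 and group them by page, so pages with several near-miss keywords rise to the top. This is a sketch that assumes rows of `(url, keyword, position)`; the `min_keywords` cut-off and the sample keywords are hypothetical.

```python
from collections import defaultdict


def low_hanging_fruit(rankings, low=4, high=15, min_keywords=2):
    """Map each page URL to its keywords ranking between `low` and `high` (inclusive).

    Only pages with at least `min_keywords` such keywords are kept, most first.
    """
    by_page = defaultdict(list)
    for url, keyword, position in rankings:
        if low <= position <= high:
            by_page[url].append(keyword)
    ranked = sorted(by_page.items(), key=lambda item: len(item[1]), reverse=True)
    return {url: keywords for url, keywords in ranked if len(keywords) >= min_keywords}


rankings = [
    ("/blog/affiliate-marketing/", "affiliate marketing", 2),  # already in the top 3
    ("/blog/affiliate-marketing/", "how to become an affiliate", 7),
    ("/blog/affiliate-marketing/", "become an affiliate marketer", 9),
    ("/blog/affiliate-marketing/", "how to be an affiliate", 12),
    ("/blog/seo-tips/", "seo tips for beginners", 5),
]
print(low_hanging_fruit(rankings))
```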

Most tasks aren’t a one-time thing. For example, you’ll probably create or update multiple pieces of content during an SEO project.

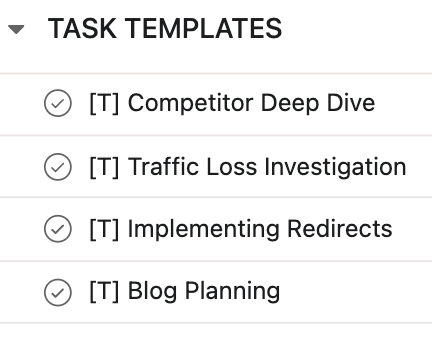

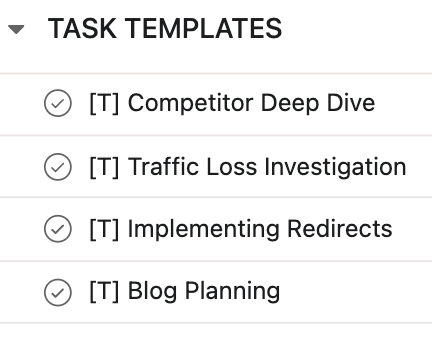

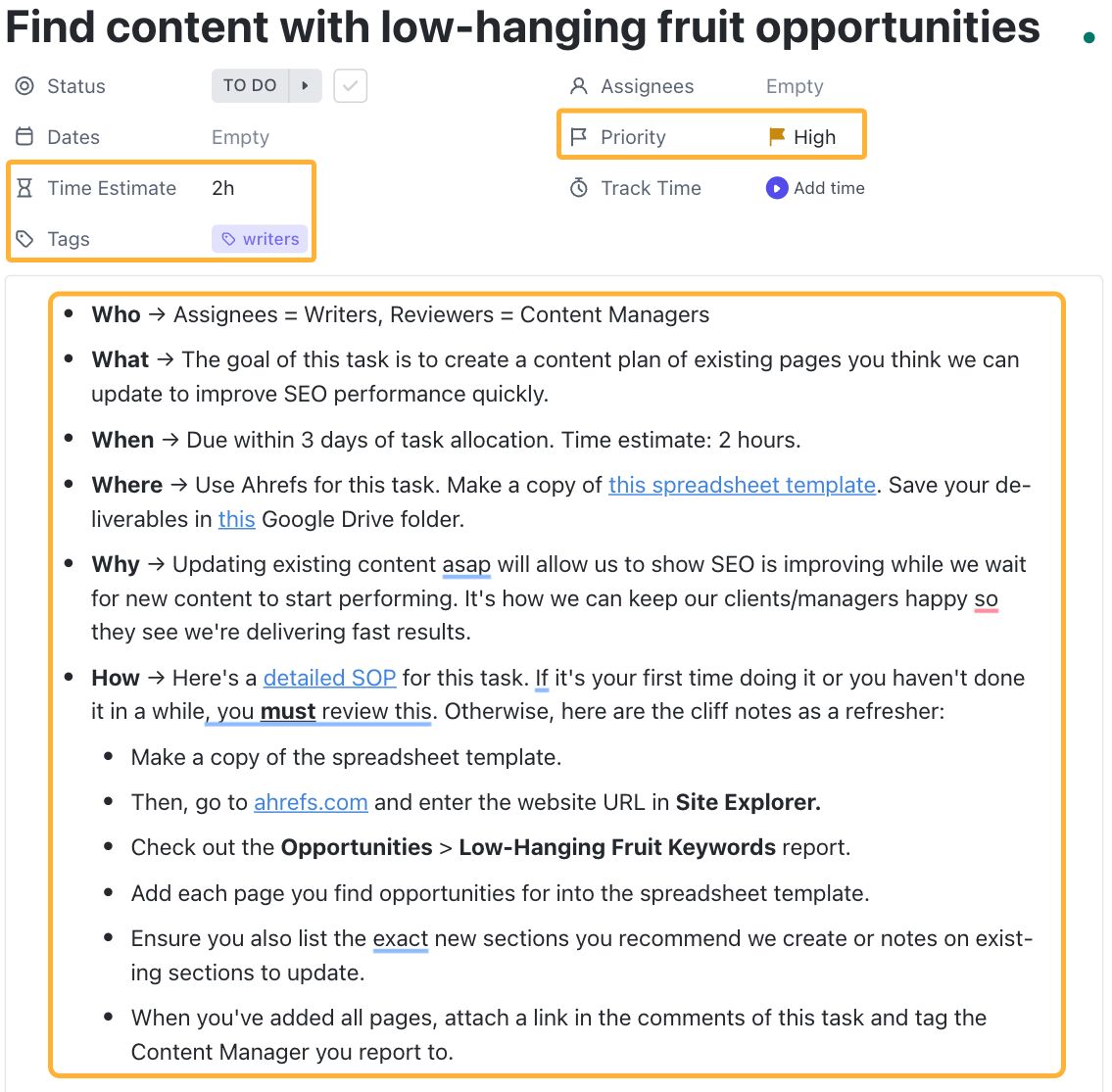

So, the next step is to create a library of repeatable task templates that you can duplicate in your project.

If you don’t do this and just assume your team knows what to do, it can cause chaos, and there’s a high chance your project won’t succeed.

Here’s what you should add to each task template:

- Who → assignees, reviewers, watchers, key stakeholders

- What → what’s the goal of the task + what exactly needs to be done

- When → dates to start and finish a task, estimated hours to complete

- Where → what tools should be used, where should deliverables be added, where can templates/relevant info be found

- Why → connect the task to a strategic objective

- How → SOP or process outlined in a clear and detailed brief
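If your project management tool supports custom fields, the who/what/when/where/why/how checklist maps neatly onto a structured record. Here's a minimal Python sketch of one. The field names are illustrative, not any tool's schema, and `missing_fields` is a hypothetical helper for spotting gaps before a template goes into your library.

```python
from dataclasses import dataclass, field, fields


@dataclass
class TaskTemplate:
    name: str
    # Who: roles rather than named people until the task is assigned
    assignee_role: str
    reviewer_role: str
    watchers: list[str] = field(default_factory=list)
    # What: the goal and what exactly needs to be done
    goal: str = ""
    instructions: str = ""
    # When: effort estimate and a relative due date
    estimated_hours: float = 0.0
    due_days_after_assignment: int = 0
    # Where: tools and locations for deliverables and reference material
    tools: list[str] = field(default_factory=list)
    deliverable_location: str = ""
    # Why: the strategic objective this task supports
    objective: str = ""
    # How: link to the SOP or detailed brief
    sop_url: str = ""

    def missing_fields(self) -> list[str]:
        """Names of text or list fields that are still empty."""
        return [f.name for f in fields(self) if getattr(self, f.name) in ("", [])]


template = TaskTemplate(
    name="Publish new blog post",
    assignee_role="Writer",
    reviewer_role="Senior SEO",
    watchers=["Account Manager"],
    goal="Target a content-gap keyword with business value",
    instructions="Follow the brief and cover every subtopic listed",
    estimated_hours=6,
    due_days_after_assignment=7,
    tools=["Ahrefs", "Google Docs"],
    deliverable_location="Shared drive > Drafts",
    objective="Grow organic sessions toward the break-even target",
)
print(template.missing_fields())  # ['sop_url']
```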

Obviously, the exact details for some of these will need to be filled in on a task-by-task basis as you duplicate them into your project. For example, instead of assigning the template tasks to a specific person, indicate the role that is responsible for the task until you’re ready to assign it to someone.

The same goes for due dates. In the template, instead of adding exact due dates, indicate an estimated length of time each task should take and a general rule for when the task is due after it’s been assigned.

Not every project will need every task, so the idea is to pull in what’s required as needed and have the bulk of the info pre-filled to reduce the time it takes to brief the task.
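Duplicating a template into a live project then comes down to two substitutions: swap the role for a person, and turn the relative due date into a calendar date. This is a sketch under stated assumptions: the deliberately minimal `TaskTemplate`, the team mapping, and the library keys are all hypothetical, and due dates here count calendar days, not business days.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class TaskTemplate:
    name: str
    role: str                       # role responsible, e.g. "Writer"
    estimated_hours: float
    due_days_after_assignment: int  # general rule for when the task is due


@dataclass
class ProjectTask:
    name: str
    assignee: str
    estimated_hours: float
    due_on: date


def instantiate(template: TaskTemplate, team: dict[str, str], assigned_on: date) -> ProjectTask:
    """Copy a template into a project task for whoever fills the template's role."""
    return ProjectTask(
        name=template.name,
        assignee=team[template.role],
        estimated_hours=template.estimated_hours,
        due_on=assigned_on + timedelta(days=template.due_days_after_assignment),
    )


library = {
    "content_update": TaskTemplate("Update post with low-hanging fruit keywords", "Writer", 3, 5),
    "internal_links": TaskTemplate("Add internal links to top pages", "SEO Specialist", 2, 3),
}
team = {"Writer": "Sam", "SEO Specialist": "Priya"}

# Pull in only the templates this project needs.
tasks = [instantiate(library[key], team, date(2024, 3, 4)) for key in ["content_update"]]
print(tasks[0].assignee, tasks[0].due_on)  # Sam 2024-03-09
```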

With your tasks set and templates created, it’s now time to start doing.

This is where things can often fall apart unless you distribute responsibility and ownership of tasks and processes throughout your team.

SEO project management doesn’t fail because there aren’t SOPs and processes in place. It fails because the people executing the processes aren’t given ownership of them.

Here are 3 reasons why this can happen:

- Without clear ownership, all team members rely on you for approvals before they can complete a task or start another. It slows everything down, and very little gets done efficiently.

- A “not my job” mentality can take root in your team. Unless team members take ownership of their tasks, you will be responsible for micromanaging everything to ensure your team is doing what it’s supposed to.

- The people best placed to decide upon and update processes aren’t the ones doing so. They’re just doing whatever “management” tells them to do even if they see a better way.

You can solve the first two problems by clearly identifying who is responsible for specific tasks and processes and allowing them to get on with those tasks without having to run every tiny thing through you.

You can solve the third problem by letting the people on the ground decide how their tasks are done and giving them responsibility for updating SOPs and relevant task templates. This again frees up your time and attention to focus on strategy, not micromanaging.

PRO TIP

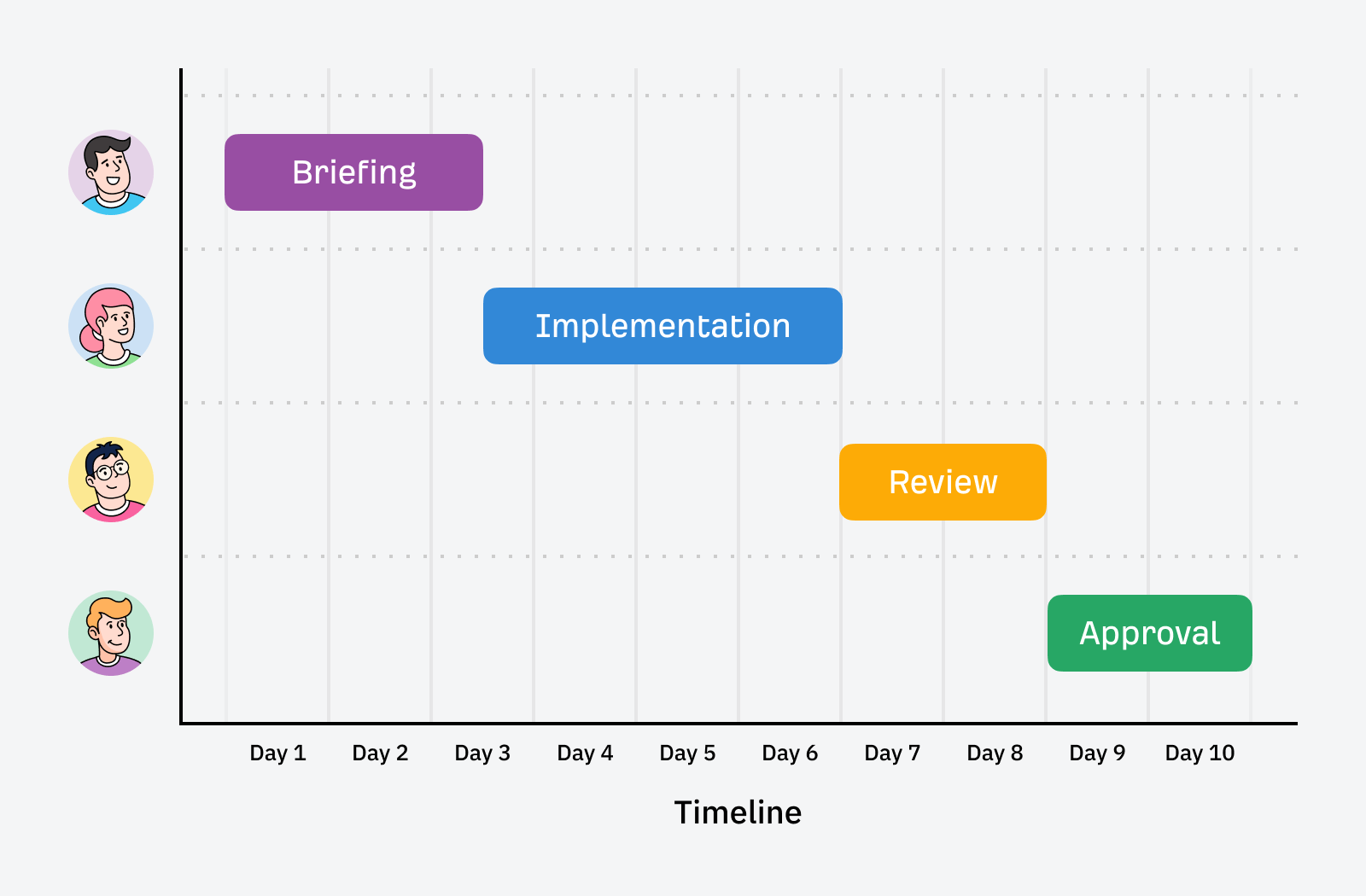

It also helps to break up bigger tasks into sub-components when multiple people are involved, like:

- Briefing → SEO Strategist or Account Manager

- Implementation → often, a non-SEO professional like a writer, developer, or designer

- Review → Senior SEO

- Final approval → Client

For the love of all things good, please don’t manage SEO projects via email. It’s horrible.

Invest in setting up a proper project management tool to scale with you. Consider your needs before you start planning all your tasks and projects.

There’s no tool that’s best for everyone, but I recommend you check out Asana, ClickUp, or Monday to get you started.

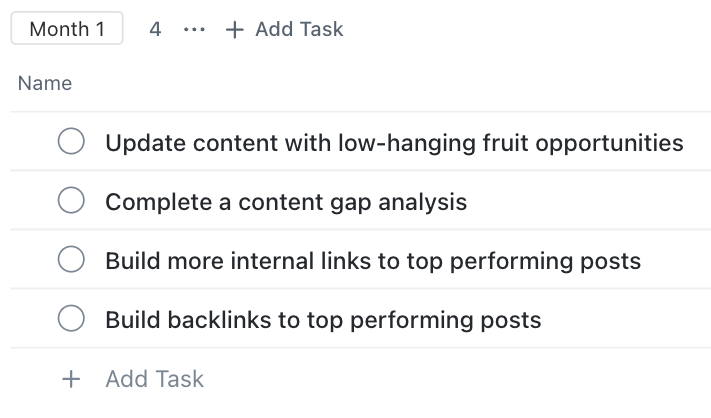

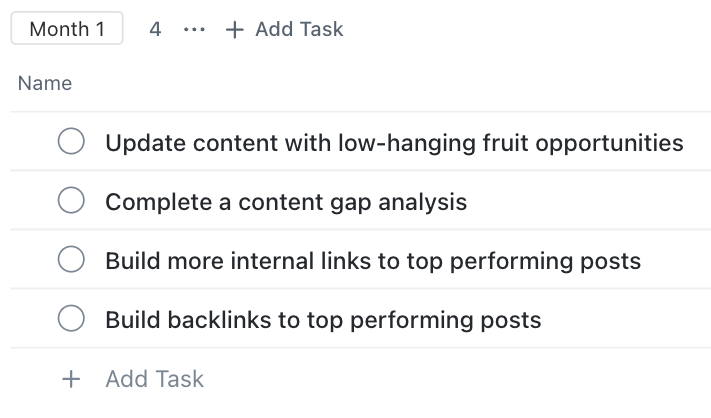

In any of these tools, you can easily set up separate projects and task templates. For example, here’s a basic setup of the first month’s tasks you can consider in ClickUp:

Within each task, you can pre-fill certain fields and add a description, like so:

This is where you can add your brief, relevant links, and the essential details needed to turn the task into a template. Of course, there are nuances of how this works between different project management tools, but the basic idea remains the same.

It’s worth spending time setting up your tasks and templates correctly so you can save time down the track as your project or team grows.

The last piece of this framework is tracking resources spent and results achieved.

Tracking resources

The easiest way to track resources is to create custom fields in your project management tool that measure specific resources allocated for each task. Some tools also let you build out reports to see how your resource allocation is going across different time frames, teams, or projects.

The types of resources you might consider tracking include:

- Cost of the task

- Planned time allocated

- Actual time spent

- Cost of tools required to do the task

- Credits the task is worth (if you use a credit system)

- Sprint points (if you work in sprints)

For more in-depth insights on where your resources are going, consider tagging tasks according to whether they’re strategic, implementation, or administrative. This way, you can quickly and easily spot imbalances like investing too much in tasks that don’t contribute to results.
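Once tasks carry a category tag plus planned and actual hours, spotting imbalances is a small aggregation. This sketch assumes tasks exported as dictionaries with hypothetical keys (`category`, `planned_hours`, `actual_hours`); real custom-field names will depend on your tool, and the sample tasks are made up.

```python
from collections import defaultdict


def summarize(tasks):
    """Return (share of actual hours per category, list of (task, hours over plan))."""
    hours = defaultdict(float)
    overruns = []
    for task in tasks:
        hours[task["category"]] += task["actual_hours"]
        over = task["actual_hours"] - task["planned_hours"]
        if over > 0:
            overruns.append((task["name"], over))
    total = sum(hours.values())
    shares = {category: h / total for category, h in hours.items()} if total else {}
    return shares, overruns


tasks = [
    {"name": "Keyword research", "category": "strategic", "planned_hours": 10, "actual_hours": 12},
    {"name": "Write new post", "category": "implementation", "planned_hours": 8, "actual_hours": 8},
    {"name": "Client reporting", "category": "administrative", "planned_hours": 2, "actual_hours": 6},
    {"name": "Link outreach", "category": "implementation", "planned_hours": 10, "actual_hours": 14},
]
shares, overruns = summarize(tasks)
for category, share in sorted(shares.items()):
    print(f"{category}: {share:.0%}")
print("Over plan:", overruns)
```

Here, administrative work takes a modest share of total hours but ran three times over plan, which is exactly the kind of imbalance worth investigating.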

Tracking results

Measuring your results requires going beyond your project management tool and using a combination of your analytics software and an SEO tool like Ahrefs.

When you start working on a new campaign, make sure you record a benchmark of the existing performance of the website. Then, keep regular tabs on the metrics that matter for the project and performance milestones you’ve achieved along the way.
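Recording a benchmark pays off because progress then becomes a simple comparison. This sketch assumes you've noted baseline and current values for a few metrics by hand. The metric names and numbers are hypothetical, and the targets reuse the 3,750-session and $30,000 examples from earlier in this guide.

```python
def progress_report(
    baseline: dict[str, float],
    current: dict[str, float],
    targets: dict[str, float],
) -> dict[str, float]:
    """Fraction of each target increase achieved since the benchmark was recorded."""
    return {
        metric: (current[metric] - baseline[metric]) / target
        for metric, target in targets.items()
        if metric in baseline and metric in current
    }


baseline = {"organic_sessions": 2_000, "traffic_value": 4_500}  # recorded at kickoff
month_3 = {"organic_sessions": 3_900, "traffic_value": 16_500}  # hypothetical check-in
targets = {"organic_sessions": 3_750, "traffic_value": 30_000}  # increases to hit by month 6

for metric, progress in progress_report(baseline, month_3, targets).items():
    print(f"{metric}: {progress:.0%} of target")
# organic_sessions: 51% of target
# traffic_value: 40% of target
```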

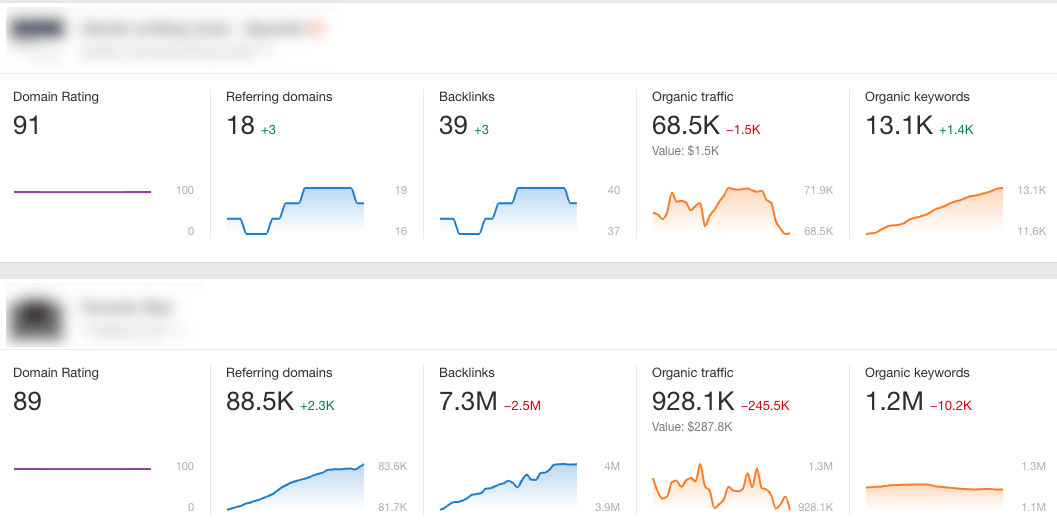

For example, you can use Ahrefs Webmaster Tools to monitor performance across your entire portfolio for free.

The dashboard allows you to quickly see how performance is trending for key SEO metrics across all projects you’ve added:

Key Takeaways

Results-driven SEO project management starts with the end in mind and works backward. It doesn’t assume you’ll see performance improvements just because you’re doing lots of stuff.

Instead, it is very intentional about figuring out exactly what needs to be done and linking those actions to realistic and achievable outcomes.

In the words of Mads Singers:

The starting point is figuring out how to deliver a return on investment. This is the most important thing. Then it’s about giving your team ownership and control over the tasks related to their roles.

Once these foundations are in place, only then is it about documenting processes. But it shouldn’t be a business owner or manager who does the documentation. Processes should be owned by the people doing the work and who can keep SOPs current.

The process shared above allows you to do all of this and more. If you have any questions about your SEO project management goals or processes, feel free to contact me on LinkedIn anytime.