Throughout Europe, strict rules govern how traditional media operates during elections. Often that means imposing a period of silence so that voters can reflect on their choices without undue influence. In France, for example, no polls are allowed to be published on the day of an election.

There are, however, very few laws governing what social media companies do in relation to elections. This is a problem now that political parties campaign on these platforms as a matter of course.

So this year, the European Commission intends to introduce regulations for political adverts that will apply across the countries of the EU.

To understand why such action is being considered, we can look to recent concerning practices during election cycles in the UK and US.

As more people consume their news online, and as advertising revenues move online, social media poses a greater threat to fair and transparent elections.

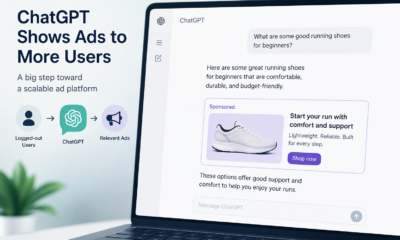

The largest social media networks are for-profit companies. They offer marketing services to other businesses wanting to direct advertising towards network users who are a good match for their products.

To facilitate this, social media companies gather and store behavioural data on our activities – what we click on, what makes us hit the like button, the comments we leave.

Knowing these things for each person gives these companies a detailed understanding of their users. That’s ideal for identifying which user segments will be most receptive to a certain message or ad.

The user marketplace

Social media companies generally use an in-house artificial intelligence bidding system that operates in real time for each page presented to a user. Businesses compete for customer access by signalling how much they are willing to pay to place an ad, and the algorithm chooses what will appear on the page, and where.
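As a rough illustration of the bidding idea, here is a minimal sketch in Python. It is not any platform's actual system: the `Bid` fields, the `relevance` score and the rule of ranking by bid amount multiplied by relevance are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Bid:
    advertiser: str   # hypothetical advertiser name
    amount: float     # the most the advertiser will pay for this page view
    relevance: float  # assumed platform estimate (0 to 1) of fit with the user


def run_auction(bids: list[Bid], slots: int = 1) -> list[Bid]:
    """Rank bids by amount weighted by relevance; the best bids fill the slots in order."""
    ranked = sorted(bids, key=lambda b: b.amount * b.relevance, reverse=True)
    return ranked[:slots]


bids = [
    Bid("shoe_shop", amount=2.00, relevance=0.30),
    Bid("campaign_group", amount=1.50, relevance=0.90),
    Bid("travel_agent", amount=1.00, relevance=0.50),
]
winners = run_auction(bids, slots=2)
print([b.advertiser for b in winners])  # ['campaign_group', 'shoe_shop']
```

In this sketch the highest bidder does not take the top slot, because the behavioural profile suggests another ad is a better match for this user. That is one way the data a platform holds could shape what each person sees.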

This inventive model was originally conceived by Google and has radically changed the world of marketing. Because the model depends on gathering data about each person’s behaviour on the platforms for marketing purposes, it has been described as surveillance capitalism.

All this is significant enough when we are being marketed products, but using such information in the context of election campaigning is even more questionable.

A new level of AI, surveillance and business cooperation was achieved when Facebook began providing services to companies involved in political campaigning. Of particular concern was the targeting of custom audiences in the 2016 Brexit referendum and the US presidential election of the same year.

To this day, it is unclear how these activities affected those votes. We do know that companies worked together to gather voter information and perform their own behavioural analytics on the segments of interest using, among other things, efficient computer-generated personality judgments based on inappropriately harvested Facebook profiles. Facebook then delivered persuasive materials to those users at specific times.

Information that Facebook provided to a British parliamentary inquiry shows that many of the numerous Brexit ads sent to users were misleading and relied on debatable half-truths.

In the US, the Federal Trade Commission imposed an extraordinary US$5 billion (€4.6 billion) fine on Facebook for misleading users and allowing profiles to be shared with business app developers.

In 2018, Facebook CEO Mark Zuckerberg said: “I’ve been working to understand exactly what happened and how to make sure this doesn’t happen again. The good news is that the most important actions to prevent this from happening again today we have already taken years ago. But we also made mistakes, there’s more to do, and we need to step up and do it.”

However, the EU is clearly not content with a pledge from Facebook not to let this happen again and plans to take a more heavy-handed approach than it has in the past.

My own work in this area argues that business projects such as election influencing, which combine advanced AI with behavioural analytics, can be considered artificial people at work and should be regulated in the same way as any human seeking to influence elections.

The European approach

There is currently no usable, shared definition of a political advertisement. The EU, therefore, needs to provide a definition that does not infringe on freedom of expression but enables the market to be properly regulated.

With this in mind, we can expect the law to make reference to there being a link between payment and the use or creation of a post. That will help separate ads from personal opinions shared on social media.

Once a political ad has been identified, legislation will require it to be clearly labelled as relating to a specific election or referendum. The name of the sponsor will have to be clear, as will the amount spent on the ad.

A key issue with the US and UK scandals was that amplification techniques had been used to position political ads on Facebook where they could be most effective.

This meant using potentially sensitive information about a person, such as ethnic origin, psychological profiling, religious beliefs or sexual orientation, to sort them into groups to be targeted. This will not be allowed in EU countries unless people give their explicit permission.
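To make the consent rule concrete, here is a minimal sketch in Python of how such a check might look. The category names are taken from the examples above and are not a legal list. The function name and the idea of storing consent per category are assumptions for illustration, not a description of the proposed law or of any platform's code.

```python
# Hypothetical category labels based on the examples in the text; not a legal list.
SENSITIVE_CATEGORIES = {
    "ethnic_origin",
    "psychological_profile",
    "religious_beliefs",
    "sexual_orientation",
}


def targeting_allowed(criteria: set[str], consented: set[str]) -> bool:
    """Allow targeting only if every sensitive criterion has explicit consent."""
    sensitive_used = criteria & SENSITIVE_CATEGORIES
    return sensitive_used <= consented


print(targeting_allowed({"age_band", "region"}, consented=set()))           # True
print(targeting_allowed({"region", "religious_beliefs"}, consented=set()))  # False
print(targeting_allowed({"religious_beliefs"}, consented={"religious_beliefs"}))  # True
```

The sketch makes refusal the default: a sensitive criterion blocks targeting unless the person has explicitly agreed to its use.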

In the past, political ads have been delivered to individuals in their own private spaces, and so have not been open to public examination. The new European legislation will aim to put all political ads in an open repository, where they will be subject to public scrutiny and regulation.

The European Commission wants to see these regulations come into force before the European elections of 2024. Getting the regulations exactly right will be challenging, and the Commission is in the final stages of discussion on the matter. Regulation of political ads will come in some form or another, making it easier to hold social media companies to account.