Meta Releases New ‘Widely Viewed Content’ Report for Facebook, Which Continues to be a Baffling Overview

Safe to say that Meta’s efforts to refute the idea that Facebook amplifies divisive political content are not going exactly as it would have hoped.

As a quick recap, last year Facebook published its first ever ‘Widely Viewed Content’ report, which it launched largely in response to a Twitter account created by New York Times journalist Kevin Roose that highlights the most popular Facebook posts every day, based on listings from Facebook’s own CrowdTangle monitoring platform.

The top-performing link posts by U.S. Facebook pages in the last 24 hours are from:

1. Occupy Democrats

2. Ben Shapiro

3. Occupy Democrats

4. Ben Shapiro

5. Ben Shapiro

6. Bloomberg

7. Occupy Democrats

8. People

9. VOA Burmese News

10. Fox News

Source: Facebook’s Top 10 (@FacebooksTop10), March 1, 2022

The listings are regularly dominated by right-wing spokespeople and Pages, which gives the impression that Facebook amplifies this type of content specifically, via its algorithms.

Understandably, Facebook was unhappy with this characterization, so first, it disbanded the CrowdTangle team after a dispute over what content the app should display. Then it launched its own, more favorable report, based on what it considers more indicative data, which it then vowed to share each quarter moving forward as a transparency measure.

That sounds good, as it’s great when we have more insight into what’s actually happening. Yet the actual report doesn’t really clarify or refute all that much.

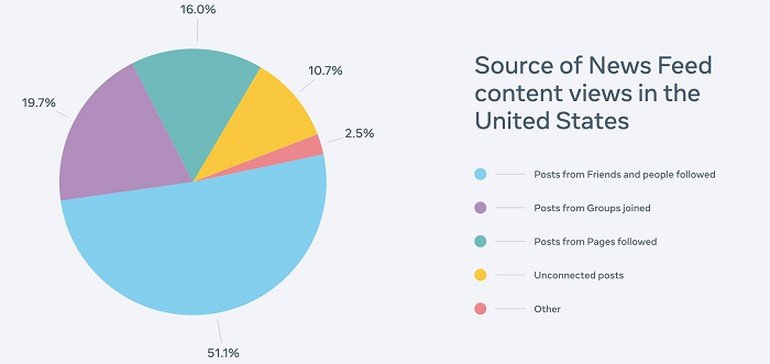

For example, Facebook includes this chart in each of the Widely Viewed Content reports, to show that news content really isn’t that big of a deal in the app.

So posts from friends and family are the most prominent – which doesn’t really tell you much, because those posts could, of course, be shares of content from news pages, or opinions on the news of the day, based on publisher content.

Which is the real focus of the report – in the first Widely Viewed Content report, Meta showed that it wasn’t actually news content that was getting the most traction in the app, but really, it was spam, junk and recipes that were seeing the most exposure.

Meta’s latest Widely Viewed Content report, released today, shows similar results – with one particularly notable exception:

Note the issue here?

The first listed Page here, the most viewed Facebook Page for the quarter, in the report that Meta is using to show that its platform isn’t a negative influence, has actually been banned by Meta itself for violating its Community Standards.

That’s not a great look. And the rest of the listings in the report, once again, highlight that spam, junk and random pages (a tyre lettering company, letters to Santa via UPS) also gained major traction throughout the period.

Really, this latest report further underlines concerns with Facebook’s distribution, as a Page that it’s identified as sharing questionable posts, for whatever reason (Meta won’t clarify the details), has gained huge traction in the app, before Meta eventually shut it down.

It’s also worth noting that this report covers a three-month period (in this case, October 1, 2021 to December 31, 2021), which means that news content is probably less likely to be listed anyway, as the news cycle changes quickly, and major news stories generally only gain traction for a day or so.

You could argue, then, that if the same right-wing news outlets that are regularly highlighted in Roose’s Daily Top 10 list are actually indicative of Facebook sharing trends, then they’d show up in this list.

But for one, many of these Facebook Pages share YouTube links, and we don’t have the context on the specifics of this referral traffic (with YouTube being the top domain source), while it’s also questionable as to how many users actually click on the links shared by each Page.

Often, the headline is enough to spark outrage and debate, with the comment sections going crazy with responses, without users actually reading the post.

If somebody shares a post with a divisive headline, is its capacity for division diminished if people don’t actually click through to read it?

Basically, there are a lot of gaps in the logic Meta’s using here, which leaves a lot of room for interpretation. And really, it’s impossible to argue that Facebook’s algorithm doesn’t incentivize divisive, argumentative posts, because its system does indeed look to fuel engagement, and keep users interacting as a means to keep them in the app.

What fuels engagement online? Emotionally-charged posts, with anger and joy being among the most highly shareable emotions. As any social media marketer knows, trigger these responses in your audience and you’ll generate engagement, because more emotional pull means more comments, more reactions – and in Facebook’s case, more reach, because the algorithm will give your content more exposure based on that activity.

It makes sense, then, that Facebook has helped to fuel a whole industry of emotion-charged takes, in the battle for audience attention – and the subsequent ad dollars that this increased exposure can bring.

People have often pointed to social media, in general, as the key element that’s sparked more societal division, and there is an argument for that as well, in terms of having more exposure to everyone’s thoughts on every issue. But the algorithmic incentive, the dopamine rush of Likes and comments, the buzz of notifications: all of these elements play into the more partisan media landscape, and the impetus to share increasingly incendiary takes.

Take the biggest issue of the day, come up with the worst take you can on it. Then press ‘Post’. Like it or not, that’s now an effective strategy in many cases, and honestly, it’s pretty ridiculous the lengths that Meta continues to go to in order to try and suggest that this isn’t the case.

Either way, that is the direction that Meta has taken, and its Widely Viewed Content reports continue to show, essentially, that the time people spend on Facebook is mostly spent on mindless junk.

But mindless rubbish is better than divisive misinformation, right? That’s better.

Right?

Honestly, I don’t know, but I do know that this report is doing Meta no favors in terms of overall perception.

You can view Meta’s ‘Widely Viewed Content’ report for Q4 2021 here.
