
Meta Shares Insights into its Reporting and Safety Tools in New Video Series

Social media has become a key connective tool for many people, and at times the only way to stay in touch with family and friends (especially, say, during a pandemic, when we’re all locked in place).

But with social media use comes an inherent level of risk. This was highlighted last year when a report shared as part of the ‘Facebook Files’ leak underlined the mental health dangers of Instagram use, for teen girls specifically. With unrealistic beauty standards on show, combined with criticism and other behaviors that can make the experience feel unsafe, Instagram in particular can be a dangerous place to spend too much time. That's why Meta has provided a range of safety tools and controls to help.

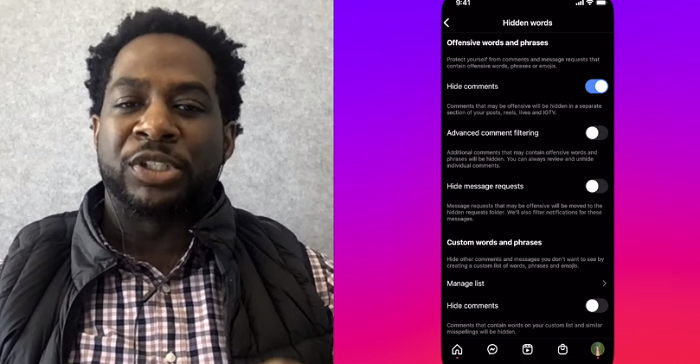

That’s where this new series comes in. Highlighting the various controls and options you have at your disposal, Meta has published a range of video clips in which platform experts explain how you can, and why you should, use its safety controls to avoid harmful behaviors in its apps.

The video series began with this look at Instagram specifically, and the tools you can use to manage your IG experience.

Meta has followed that up with a video on its reporting tools, including in-depth insight into how reporting works in its apps.

The latest video covers bullying and harassment on Facebook, and how Meta is looking to manage and mitigate harm in its main app.

If you’re trying to get a better understanding of how Meta is approaching these issues, and what you can do to limit harm, it’s worth taking the time to go through each of these clips. They provide a clear overview of Meta’s various approaches, and the best actions to take in different circumstances.

For parents and teachers in particular, these could be helpful, as they offer more internal insight into Meta’s approaches and processes, which could help you decide how best to manage your own approach.

Meta will be publishing more videos in the series in the coming weeks.

