
5 Automated And AI-Driven Workflows To Scale Enterprise SEO

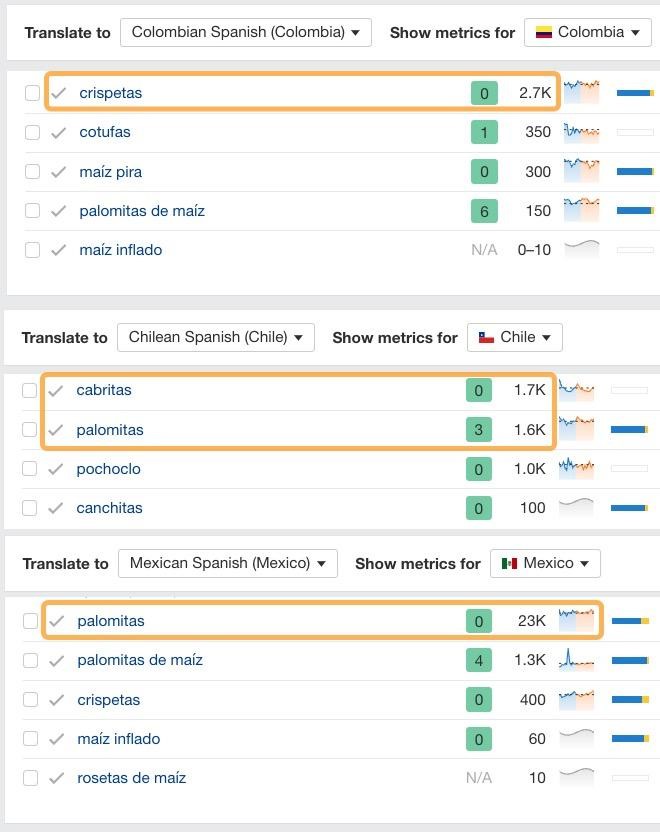

That’s where Ahrefs’ in-built AI translator may be a better fit for your project, solving both problems in one go:

GIF from Ahrefs Keywords Explorer, July 2024

It offers automatic translations for 40+ languages and dialects in 180+ countries, with more coming soon.

However, the biggest benefit is that you’ll get a handful of alternative translations to select from, giving you greater insight into the nuances of how people search in local markets.

For example, there are over a dozen ways to say ‘popcorn’ across all Spanish-speaking countries and dialects. The AI translator is able to detect the most popular variation in each country.

Screenshot from Ahrefs Keywords Explorer, July 2024

This, my friends, is quality international SEO on steroids.

2. Identify The Dominant Search Intent Of Any Keyword

Search intent is the internal motivator that leads someone to look for something online. It’s the reason why they’re looking and the expectations they have about what they’d like to find.

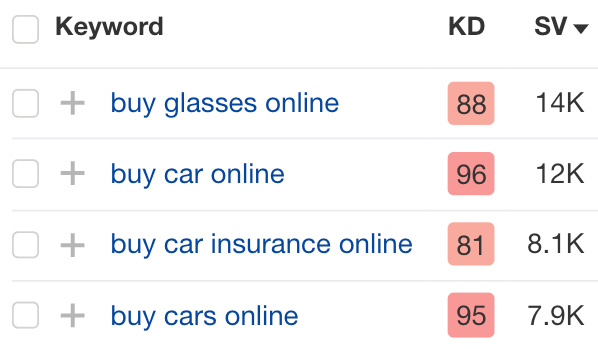

The intent behind many keywords is often obvious. For example, it’s not rocket science to infer that people expect to purchase a product when searching any of these terms:

Screenshot from Ahrefs Keywords Explorer, July 2024

However, there are many keywords where the intent isn’t quite so clear-cut.

For instance, take the keyword “waterbed.” We could try to guess its intent, or we could use AI to analyze the top-ranking pages and give us a breakdown of the type of content most users seem to be looking for.

GIF from Ahrefs Keywords Explorer, July 2024

For this particular keyword, 89% of results skew toward purchase intent. So, it makes sense to create or optimize a product page for this term.

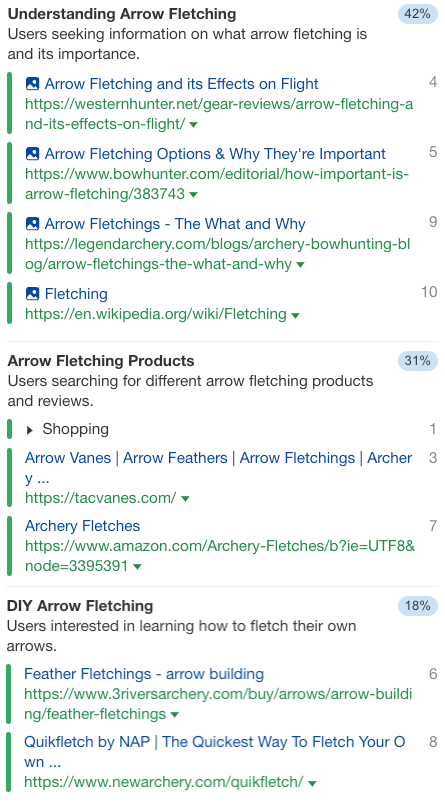

For the keyword “arrow fletchings,” there is a mix of different types of content ranking, like informational posts, product pages, and how-to guides.

Screenshot from Ahrefs Identify Intents, July 2024

If your brand or product lends itself to one of the popular content types, you could plan that type of content in your content calendar.

Or, you could use the data here to outline a piece of content that covers all the dominant intents in a similar proportion to what’s already ranking:

- ~40% providing information and answers to common questions.

- ~30% providing information on fletching products and where to buy them.

- ~20% providing a process for a reader to make their own fletchings.

- And so on.
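As a rough sketch of how that proportional outline could be turned into a plan, the snippet below splits a target word count across intents according to their share of the ranking content. The function name, the 2,000-word target, and the 10% "other" bucket standing in for "and so on" are illustrative assumptions, not figures from the tool.

```python
def allocate_word_budget(intent_shares, total_words):
    """Split a word budget across intents in proportion to their share."""
    total_share = sum(intent_shares.values())
    return {
        intent: round(total_words * share / total_share)
        for intent, share in intent_shares.items()
    }


# Approximate shares from the "arrow fletchings" example.
# "other" is a hypothetical catch-all for the remaining intents.
shares = {"informational": 40, "product": 30, "how_to": 20, "other": 10}

budget = allocate_word_budget(shares, total_words=2000)
print(budget)
# {'informational': 800, 'product': 600, 'how_to': 400, 'other': 200}
```

Dividing by the sum of the shares, rather than assuming they add up to 100, keeps the split proportional even when the percentages are approximate.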

For enterprises, the value of outsourcing this to AI is simple. If you guess and get it wrong, you’ll have to allocate your limited SEO funds toward fixing the mistake instead of working on new content.

It’s better to have data confirming the intent of a keyword before you publish, so you don’t ship content with an intent mismatch, let alone roll it out across multiple websites or languages!

3. Easily Identify Missing Topics Within Your Content

Topical gap analysis is very important in modern SEO. We’ve evolved well beyond the times when simply adding keywords to your content was enough to make it rank.

However, it’s not always quick or easy to identify missing topics within your content. Generative AI can help plug gaps beyond what most content-scoring tools can identify.

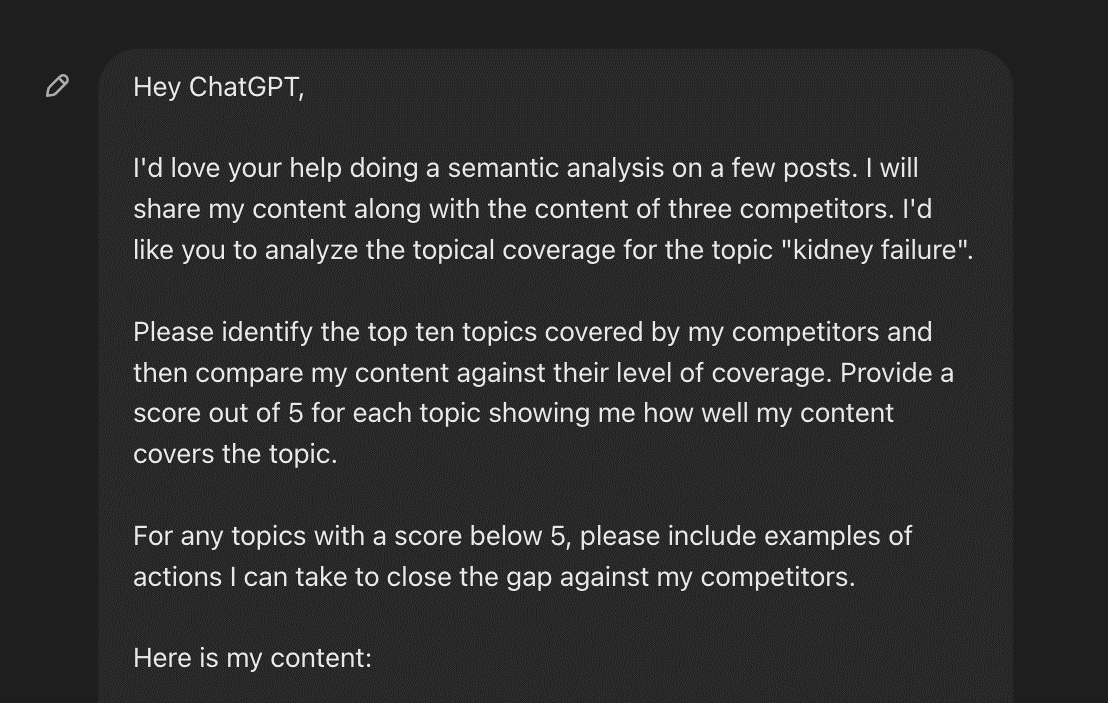

For example, ChatGPT can analyze your text against competitors’ to find missing topics you can include. You could prompt it to do something like the following:

Screenshot from ChatGPT, July 2024

SIDENOTE. You’ll need to add your content and competitors’ content to complete the prompt.

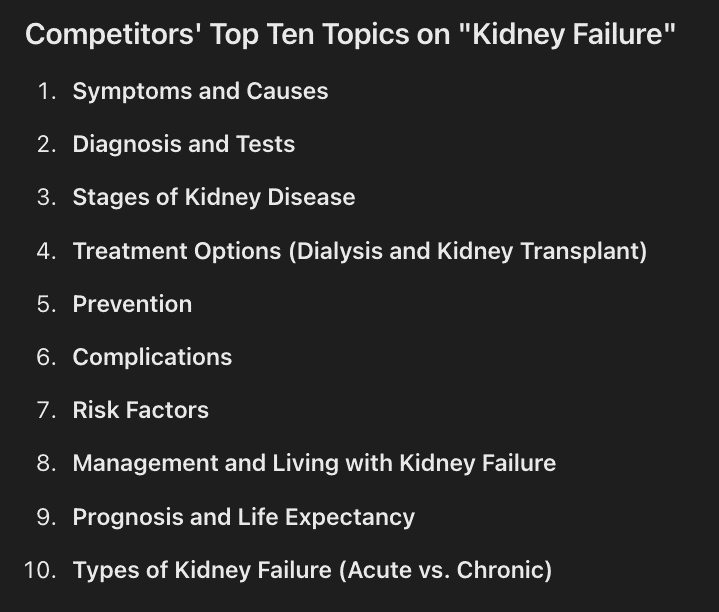

Here’s an example of the list of topics it identifies:

Screenshot from ChatGPT, July 2024

And the scores and analysis it can provide for your content:

Screenshot from ChatGPT, July 2024

This goes well beyond adding words and entities, like what most content scoring tools suggest.

The scores on many of these tools can easily be manipulated: the more you add certain terms, the higher the score, even if your content doesn’t do a good job of covering the topic conceptually.

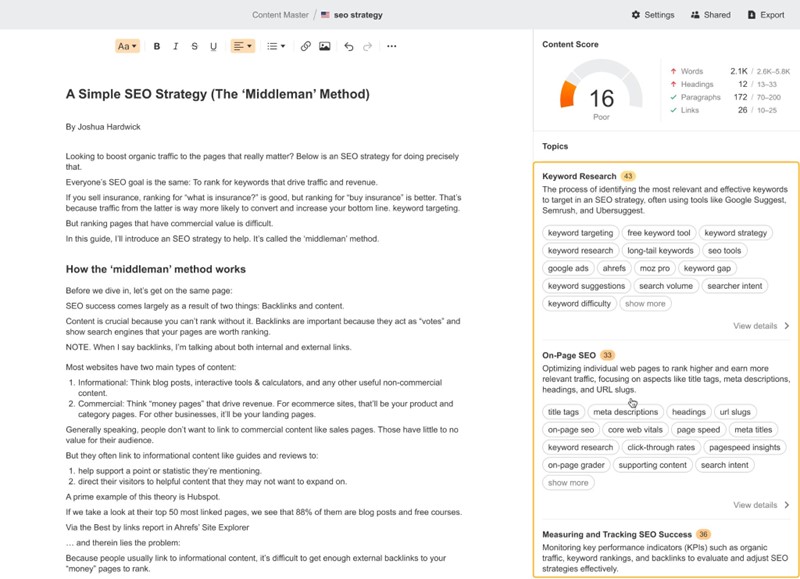

If you want the detailed analysis offered by ChatGPT but available in bulk and near-instantly… then good news. We’re working on Content Master, a content grading solution that automates topic gap analysis.

I can’t reveal too much about this yet, but it has a big USP compared to most existing content optimization tools: its content score is based on topic coverage—not just keywords.

Screenshot from Ahrefs Content Master, July 2024

You can’t just lazily copy and paste related keywords or entities into the content to improve the score.

If you rely on a pool of freelancers to create content at scale for your enterprise company, this tool will provide you with peace of mind that they aren’t taking any shortcuts.

4. Update Search Engines With Changes On Your Website As They Happen

Have you ever made a critical change on your website, but search engines haven’t picked up on it for ages? There’s now a fix for that.

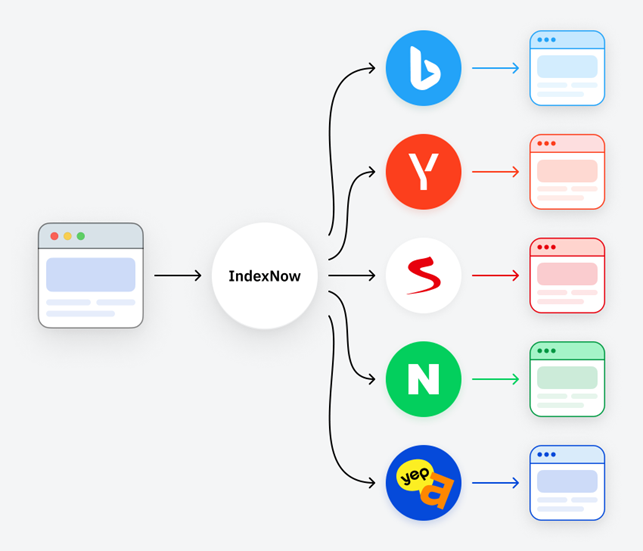

If you aren’t already aware of IndexNow, it’s time to check it out.

It tells participating search engines when a change, any change, has been made on a website. If you add, update, remove, or redirect pages, participating search engines can pick up on the changes faster.

Not all search engines have adopted it yet, and Google is among those that haven’t. However, Microsoft Bing, Yandex, Naver, Seznam.cz, and Yep all have. Once one partner is pinged, the information is shared with the other partners, which makes it very valuable for international organizations.

Most content management systems and delivery networks already use IndexNow and will ping search engines automatically for you. However, since many enterprise websites are built on custom ERP platforms or tech stacks, it’s worth looking into whether this is happening for the website you’re managing or not.

You could partner with the dev team to implement the free IndexNow API. Ask them to try these steps as shared by Bing if your website tech stack doesn’t already use IndexNow:

- Get your free IndexNow API key

- Place the key in your site’s root directory as a .txt file

- Submit your changed URLs, with your key as a URL parameter

- Track URL discoveries by search engines
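For the dev team, the submission step can be sketched in a few lines. This is a minimal example, assuming the shared `api.indexnow.org` endpoint and the JSON POST format described in the public IndexNow documentation. The host, key, and URL below are placeholders, and the function names are made up for illustration.

```python
import json
import urllib.request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Build (but do not send) a POST request listing changed URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file sits in the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


def submit(request):
    """Send the request and return the HTTP status code."""
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Placeholder values: swap in your own host, key, and changed URLs.
request = build_indexnow_request(
    host="www.example.com",
    key="your-indexnow-key",
    urls=["https://www.example.com/updated-page"],
)
# status = submit(request)  # uncomment to actually ping IndexNow
```

Building the request separately from sending it lets the team log or review the payload before anything is pinged, which is useful when submissions are triggered automatically by a publishing pipeline.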

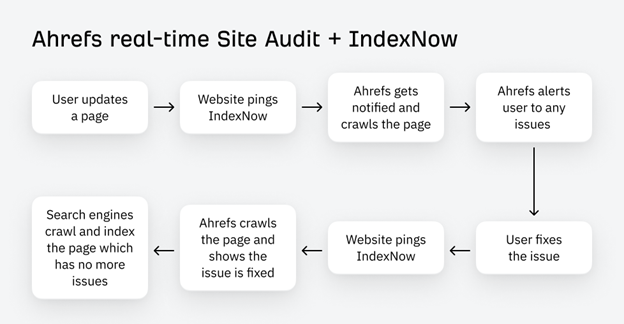

You could also use Ahrefs instead of involving developers. You can easily connect your IndexNow API directly within Site Audit and configure your desired settings.

Here’s a quick snapshot of how IndexNow works with Ahrefs:

In short, it’s an actual real-time monitoring and alerting system, a dream come true for technical SEOs worldwide. Check out Patrick Stox’s update for all the details.

Paired with our always-on crawler, you can trust that search engines will be notified automatically of any changes you want, whatever you’re changing. It’s the indexing shortcut you’ve been looking for.

5. Automatically Fix Common Technical SEO Issues

Creative SEO professionals get stuff done with or without support from other departments. Unfortunately, in many enterprise organizations, relationships between the SEO team and devs can be tenuous, affecting how many technical fixes are implemented on a website.

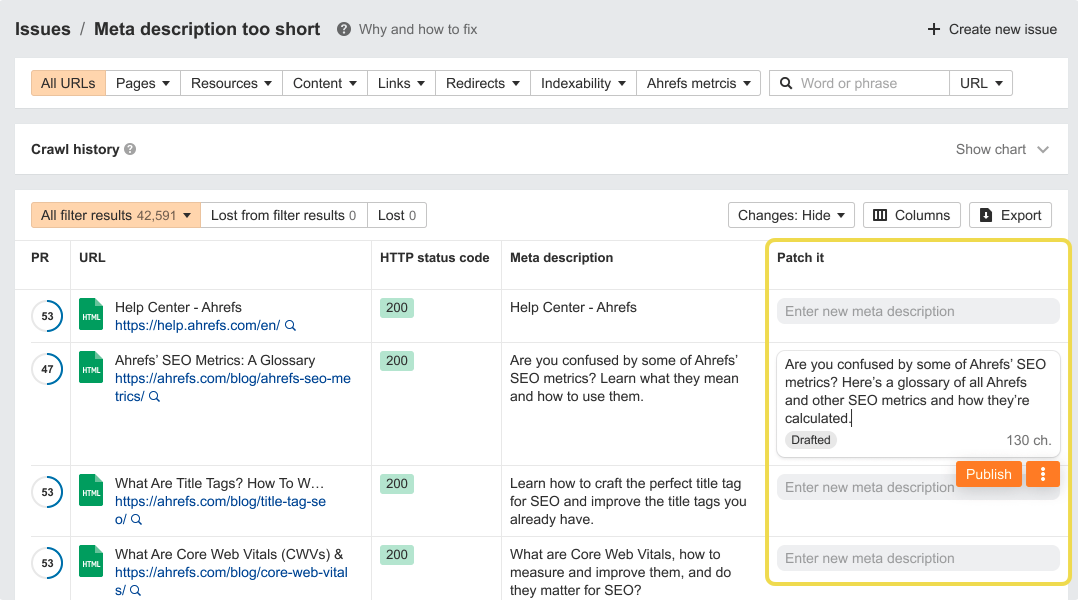

If you’re a savvy in-house SEO, you’ll love this new enterprise feature we’re about to drop. It’s called Patches.

It’s designed to automatically fix common technical issues with the click of a button. You will be able to launch these fixes directly from our platform using Cloudflare Workers or JavaScript snippets.

Picture this:

- You run a technical SEO crawl.

- You identify key issues to fix across one page, a subset of pages, or all affected pages.

- With the click of a button, you fix the issue across your selected pages.

- Then you instantly re-crawl these pages to check the fixes are working as expected.

For example, you can make page-level fixes for pesky issues like re-writing page titles, descriptions, and headings:

Screenshot from Ahrefs Site Audit, July 2024

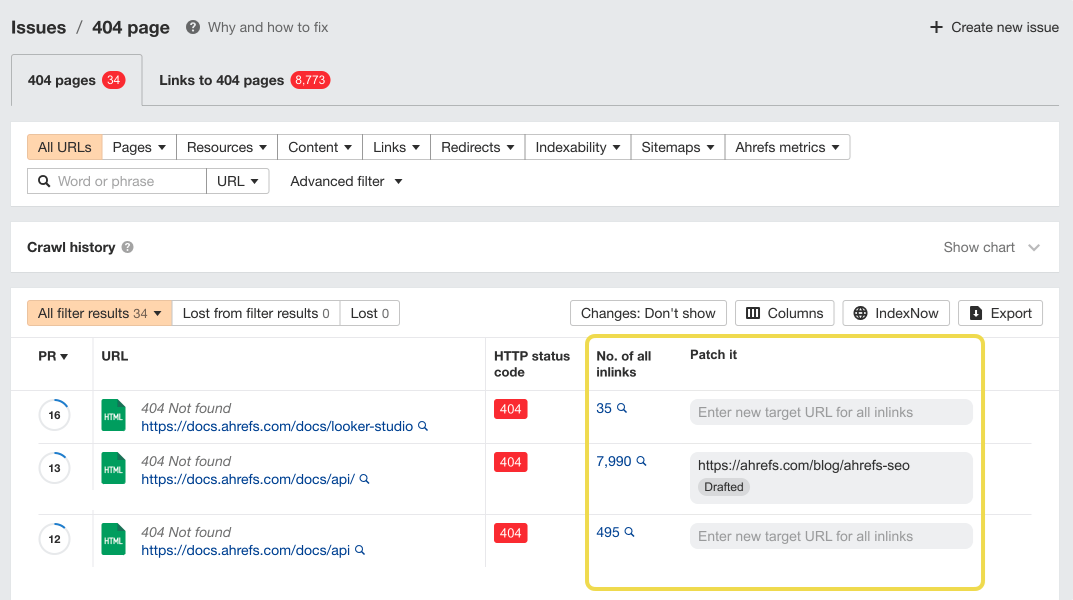

You can also make site-wide fixes. For example, fixing internal links to broken pages can be challenging without support from developers on large sites. With Patches, you’ll be able to roll out automatic fixes for issues like this yourself:

Screenshot from Ahrefs Site Audit, July 2024

As we grow this tool, we plan to automate over 95% of technical fixes via JavaScript snippets or Cloudflare Workers, so you don’t have to rely on developers as much as you may right now. We’re also integrating AI to help you speed up fiddly fixes even more.

Get More Buy-In For Enterprise SEO With These Workflows

Now, as exciting and helpful as these workflows may be for you, the key is to get your boss and your boss’ boss on board.

If you’re ever having trouble getting buy-in for SEO projects or budgets for new initiatives, try using the cost savings you can pass on as leverage.

For instance, you can show how, usually, three engineers would dedicate five sprints to fixing a particular issue, costing the company illions of dollars—millions, billions, bajillions, whatever it is. But with your proposed solution, you can reduce costs and free up the engineers’ time to work on high-value tasks.

You can also share the Ultimate Enterprise SEO Playbook with them. It’s designed to show executives how your team is strategically valuable and can solve many other challenges within the organization.
