8 Types of Bad Links to Avoid

Have you ever wondered why your website isn’t ranking despite having quality content? Well, it could be because of the links you’ve been building.

In the world of SEO, links are a crucial ranking factor. With that comes a temptation for many website owners and marketers to resort to manipulative tactics to acquire links, which can lead to link spam.

This harmful practice can result in penalties from search engines, losing you both traffic and revenue. To avoid being penalized by search engines, it’s crucial to be familiar with the various types of bad links that fall under the umbrella of link spam.

In this article, we’ll provide you with the lowdown on link spam and eight types of bad links you should avoid at all costs.

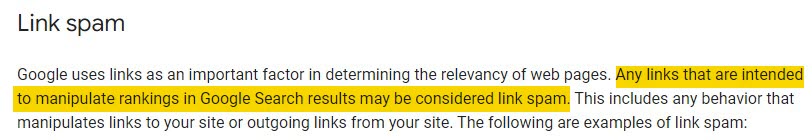

Bad links are those that violate Google’s spam policies. In Google’s words, if the links are intended to manipulate rankings in Google Search results, they may be considered link spam.

Bad links mainly fall into two categories: (1) spammy, waste-of-time links and (2) those that are potentially dangerous to your site.

Bad links can trigger a Google penalty, which means a significant drop in rankings and organic traffic. Your site may even be removed from the search results altogether:

“Sites that violate our policies may rank lower in results or not appear in results at all.”

But even if Google doesn’t penalize you, bad links are often a waste of time and money because many have no positive impact on rankings or traffic.

When to take action

Google has consistently said it’s very good at identifying and discounting spam links algorithmically. Therefore, you do not need to worry about finding and removing every single bad link.

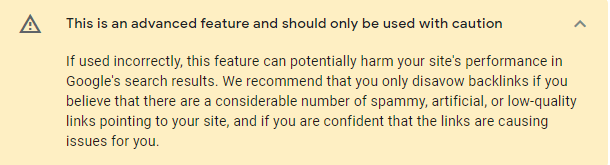

Google’s disavow tool allows you to discount links to avoid link-based penalties. But this tool can be incredibly damaging to your site, especially if you do not know what you are doing.

You shouldn’t disavow links haphazardly because you risk disavowing links that are actually helping you to rank. Just because you think a link is spammy doesn’t mean Google does.

With that in mind, there are only two instances where you should worry about bad links:

- If you have received a manual action against your site for unnatural links.

- If you know (or strongly suspect) a site has previously participated in shady link building.

Recently purchased a site or taken over the SEO of a site for a new client? If you know (or strongly suspect) those previously responsible actively participated in shady link building—such as PBNs—then it’s time to clean house.

So what types of links are considered link spam?

From Google’s definition of link spam, it could be argued that any link you land from link building is effectively link spam. After all, you are actively looking to acquire backlinks to improve your rankings in the search engine results pages (SERPs).

So doesn’t that mean you are trying to manipulate rankings? 65% of SEOs agree.

But this is probably not what Google means. In fact, Google legend John Mueller has previously praised quality link acquisition methods like digital PR, stating they are just as crucial as technical SEO.

The examples Google gives of link spam are all things that most of us would consider crafty tactics that make the web a worse place. These are the tactics Google doesn’t want to see and the ones you should avoid.

Let’s look at some examples:

1. PBNs

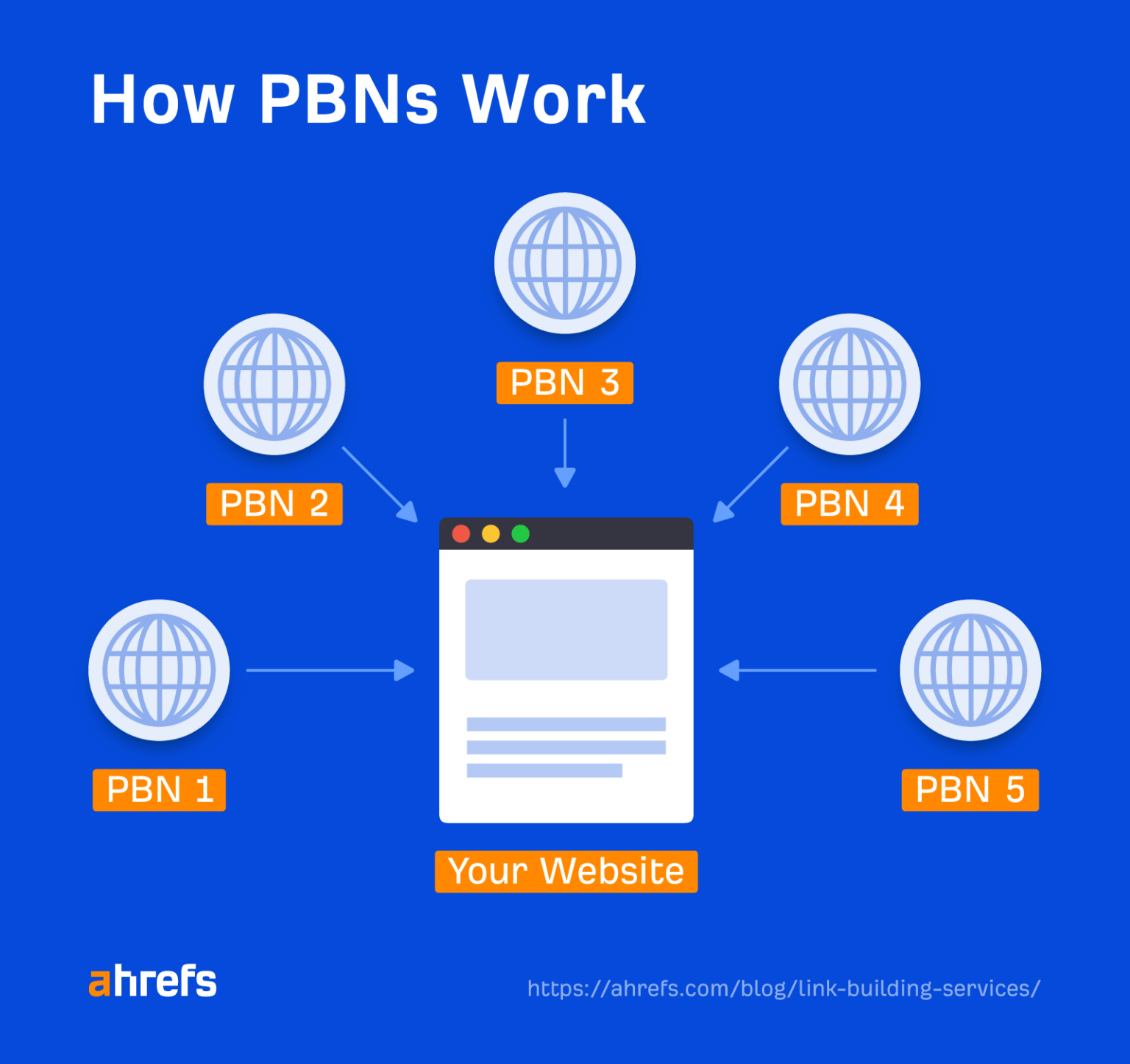

Private blog networks (PBNs) are made up of many websites you own linked together to manipulate search engine rankings.

These link networks can be massive and involve building multiple topically relevant sites solely to link to one another. They’re designed to give the impression that links have been “earned” to create trust signals artificially.

Why should you avoid these types of links?

If Google doesn’t catch you, then yes, PBNs can work and move the needle. The problem is that it’s hard to build an “undetectable” PBN unless you’re an experienced spammer.

A truly sophisticated PBN needs to look and act like a real website, with genuine content and regular posting. It also needs to have different ownership, domain, and hosting providers to avoid being detected.

Quite honestly, if you are going to put that much work in, you may as well spend that time and effort on quality link building instead.

How to check if you have any

PBNs can be difficult to detect. However, if you suspect a website you just took over previously used PBNs to build links, then there’ll likely be some key giveaways.

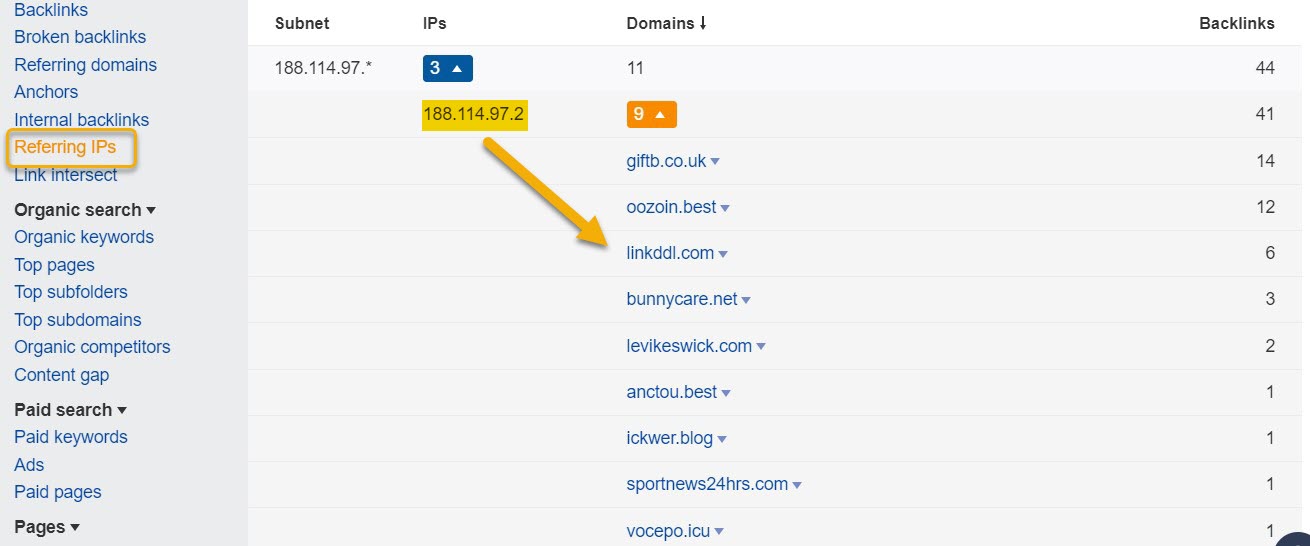

One big one is websites that are all hosted on the same network, meaning they are all coming from the same IP address.

With Ahrefs’ Site Explorer, you can find if multiple sites are linking to you from the same IP address with the Referring IPs report.

It’s important to say that multiple sites sharing an IP address doesn’t, on its own, confirm that they are part of a PBN. They may simply be using the same hosting service (the examples above are all using Cloudflare).

However, if the websites also have these:

- Little to no organic traffic

- Little content/no keywords

- Links to each other

- Spammy appearance and/or default themes

Then they can be key indicators of a PBN.
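
If you have exported referring domains together with their IP addresses, the shared-IP check can be roughed out in a few lines of Python. This is only a sketch: the sample rows, the two-column layout, and the function name `domains_by_shared_ip` are assumptions for illustration, not the format of any particular export.

```python
from collections import defaultdict

# Hypothetical sample data: (referring domain, IP address) pairs.
# The two-column layout is an assumption, not a real export format.
referring = [
    ("blog-one.example", "203.0.113.10"),
    ("blog-two.example", "203.0.113.10"),
    ("blog-three.example", "203.0.113.10"),
    ("news-site.example", "198.51.100.7"),
]


def domains_by_shared_ip(rows, min_domains=2):
    """Return {ip: sorted domains} for IPs used by at least min_domains domains."""
    groups = defaultdict(set)
    for domain, ip in rows:
        groups[ip].add(domain.lower())
    return {ip: sorted(d) for ip, d in groups.items() if len(d) >= min_domains}


shared = domains_by_shared_ip(referring)
for ip, domains in shared.items():
    print(ip, "->", ", ".join(domains))
```

A shared IP is only a starting point. Each group still needs a manual look for the other signs listed above before you treat it as a PBN.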

2. Paid links

Paid links are where you “buy or sell links for ranking purposes.” It’s important to mention that this doesn’t just include buying links for money. Exchanging links for goods or services or even buying a bloke a pint in return for a link all qualify as paid links.

However, not all paid links are a problem as long as they have the right attributes. Google even states:

“Google does understand that buying and selling links is a normal part of the economy of the web for advertising and sponsorship purposes.”

However, any link that falls under those examples should have either a “nofollow” or “sponsored” attribute.
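
If you sell advertising or sponsorships on your own site, you can roughly audit a page for links that lack a qualifying `rel` value. The sketch below uses only Python’s standard library. The class name `RelAuditor` and the sample HTML are made up for illustration, and it flags every link without “nofollow” or “sponsored”, so you still have to decide which of those links are actually paid.

```python
from html.parser import HTMLParser


class RelAuditor(HTMLParser):
    """Collect hrefs of links whose rel lacks both "nofollow" and "sponsored"."""

    QUALIFYING = {"nofollow", "sponsored"}

    def __init__(self):
        super().__init__()
        self.unqualified = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        href = attr.get("href")
        if not href:
            return
        # rel is a space-separated list of tokens, e.g. rel="sponsored noopener".
        rel_tokens = set((attr.get("rel") or "").lower().split())
        if not rel_tokens & self.QUALIFYING:
            self.unqualified.append(href)


# Hypothetical page fragment used only to demonstrate the check.
sample_html = """
<p>Thanks to <a href="https://sponsor.example/" rel="sponsored">our sponsor</a>
and <a href="https://partner.example/" rel="noopener">our partner</a>.</p>
"""

auditor = RelAuditor()
auditor.feed(sample_html)
print(auditor.unqualified)
```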

Why should you avoid these types of links?

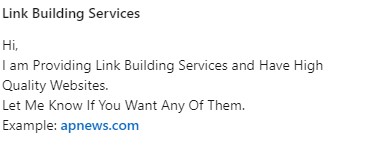

If you buy links from a site or one of the many “dear sir club” link builders, you’re going to get very little value from those links.

The reason is they will sell these to anyone. This makes the site an absolute spam fest and completely dilutes any link equity you could acquire. It also means these links are worthless at best and, at worst, could land you with a penalty.

How to check if you have any

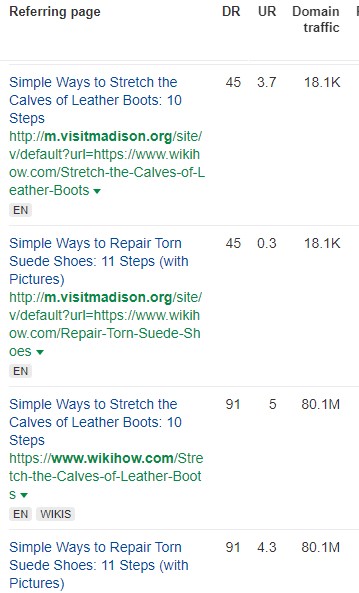

From my experience, it’s often easy to identify these links based on the anchor text. Most paid links will use exact match anchors and, on top of that, they are usually from low-quality sites with poor content.

Using Site Explorer, you can see all of the links to your site, including the anchor text.

Of course, not every link with an exact match anchor will be a paid link. But you can get an idea of the quality from the URL of the linking site and the domain traffic. If these aren’t looking good, you can click through and check out the page directly.
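
As a quick first pass, you could measure how much of your anchor text is an exact match for the keywords you target. The snippet below is a minimal sketch. The function name `exact_match_share`, the sample anchors, and the keyword list are all hypothetical.

```python
def exact_match_share(anchors, target_keywords):
    """Return the fraction of anchors that exactly match a target keyword."""
    targets = {k.lower().strip() for k in target_keywords}
    normalized = [a.lower().strip() for a in anchors]
    if not normalized:
        return 0.0
    hits = sum(1 for a in normalized if a in targets)
    return hits / len(normalized)


# Hypothetical anchors, as they might appear in an exported backlink list.
anchors = [
    "we buy houses",
    "Acme Realty",
    "https://acme.example",
    "We Buy Houses",
    "click here",
]

share = exact_match_share(anchors, ["we buy houses"])
print(f"{share:.0%} of anchors are exact match")
```

A high share doesn’t prove anything by itself. It only tells you which links are worth clicking through to check.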

You don’t necessarily need to worry about removing these but, rather, avoid acquiring paid links in the first place.

3. Hacked links

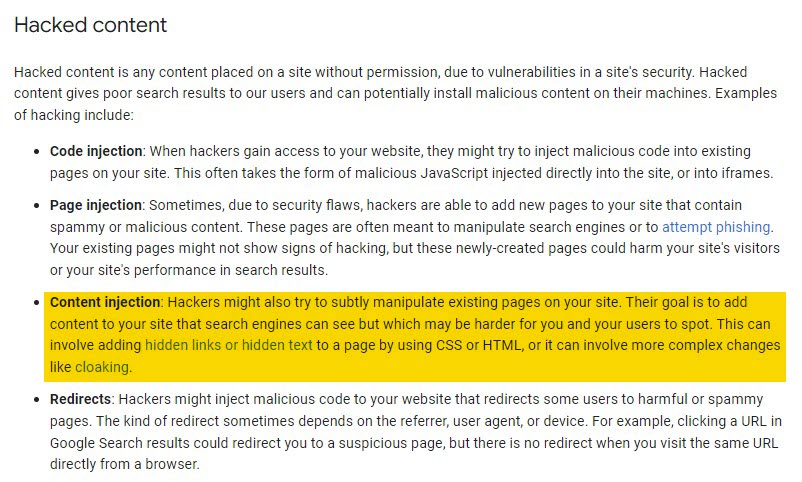

Hacked links involve someone gaining access to a site and inserting content into existing pages. This means that search engines can see it. But as this is done within an existing page, it is often harder for website owners to detect.

Why should you avoid these types of links?

Hacked links are completely unethical. Yet unfortunately, they still exist. These don’t fall specifically under link spam but under Google’s general spam policies.

If you have links pointing to your site that were placed by hacking, the owner of the hacked website can report your site to Google and to your domain and hosting provider, resulting in your site being taken down.

Hacking is also illegal in many countries, including the U.S. (under the Computer Fraud and Abuse Act) and in the U.K. (Computer Misuse Act 1990).

Adding hacked links could also be considered “hacking for notoriety” (breaking in so that you appear to be an authority on a particular topic), which is illegal in most cases.

How to check if you have any

There are instances of website owners paying link building agencies for guest posting services, only to find out that the links they acquired were in fact hacked once the hacked website has reported them.

Unfortunately, there aren’t many ways to ensure that a link to your site was placed knowingly by the referring site. The best course of action is to be proactive and ensure that you either take charge of all link building activities yourself or use a reputable link building provider.

4. Hidden links

Hidden links are another tactic that falls under Google’s general spam policies and are, again, totally unethical.

Links can be hidden in many different ways:

- Using white text on a white background

- Hiding text behind an image

- Using CSS to position text off-screen

- Setting the font size or opacity to 0

- Hiding a link by only linking one small character (for example, a hyphen in the middle of a paragraph)
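
Some of the techniques above leave traces in a link’s inline `style` attribute, which makes a rough automated check possible. The sketch below is an assumption-laden illustration, not a complete detector: it only inspects inline styles on the `<a>` tag itself, so it will miss links hidden through external stylesheets, parent elements, matching text and background colors, or images. The pattern list and the class name `HiddenLinkFinder` are hypothetical.

```python
import re
from html.parser import HTMLParser

# Inline-style patterns for a few hiding techniques (assumed, not exhaustive).
HIDING_PATTERNS = [
    re.compile(r"font-size\s*:\s*0(?![.\d])"),             # font size of 0
    re.compile(r"opacity\s*:\s*0(?![.\d])"),               # fully transparent
    re.compile(r"display\s*:\s*none"),
    re.compile(r"visibility\s*:\s*hidden"),
    re.compile(r"(left|top|text-indent)\s*:\s*-\d{3,}"),   # pushed off-screen
]


class HiddenLinkFinder(HTMLParser):
    """Collect hrefs of <a> tags whose inline style matches a hiding pattern."""

    def __init__(self):
        super().__init__()
        self.suspicious = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr = dict(attrs)
        style = (attr.get("style") or "").lower()
        if any(p.search(style) for p in HIDING_PATTERNS):
            self.suspicious.append(attr.get("href"))


# Hypothetical page fragment used only to demonstrate the check.
sample_html = """
<p>Visible <a href="https://ok.example/">link</a>.
<a href="https://hidden-one.example/" style="font-size:0">we buy houses</a>
<a href="https://hidden-two.example/" style="position:absolute; left:-9999px">seo</a>
<a href="https://fine.example/" style="opacity:0.8">faded</a></p>
"""

finder = HiddenLinkFinder()
finder.feed(sample_html)
print(finder.suspicious)
```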

Why should you avoid these types of links?

Like hacked links, these can be placed without the owner of either the linking site or the linked-to site knowing about them, yet they can land both sites with a Google penalty.

Unfortunately, I have personally worked with clients who have had this happen to them. In this instance, a link building agency they had previously worked with had access to their site and placed hidden links to all of its other clients’ websites, using it like a PBN.

How to check if you have any

These links can be difficult to detect unless you already have a suspicion that they exist.

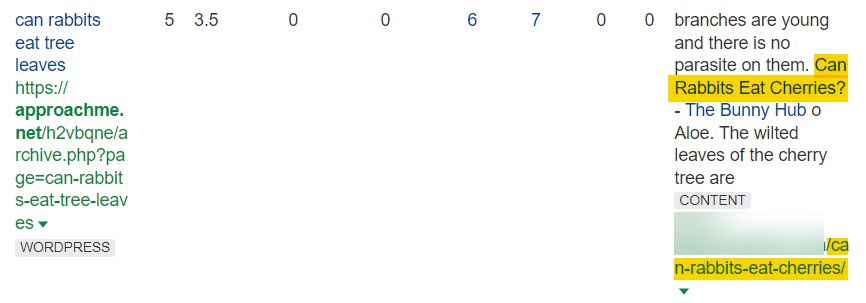

I’ve found that one of the best ways to identify these links is by looking at the anchors and surrounding text.

Usually, the link will have a very specific anchor, e.g., “we buy houses” for a realtor site. However, it will be surrounded by other random, specific anchors and will usually come from a referring page that has no relevance to your own.

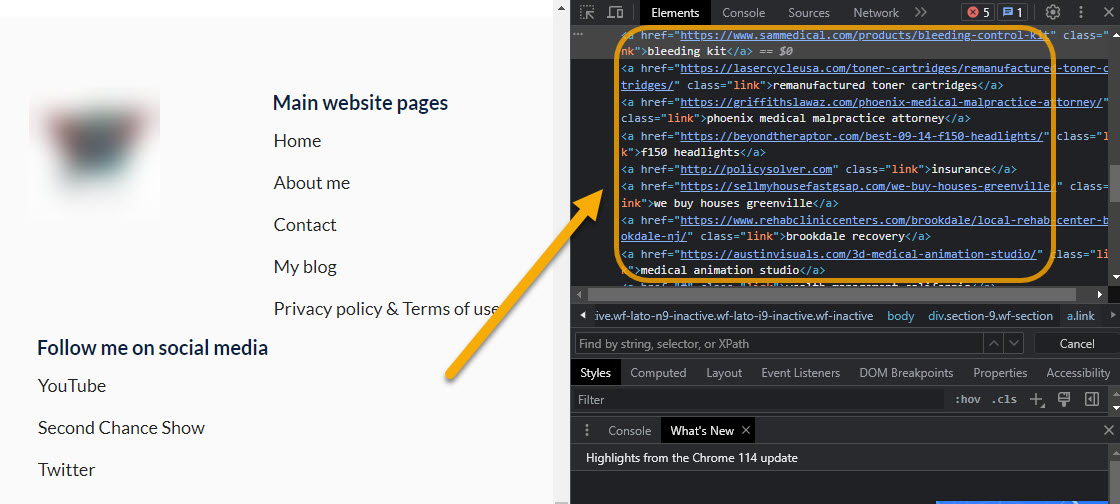

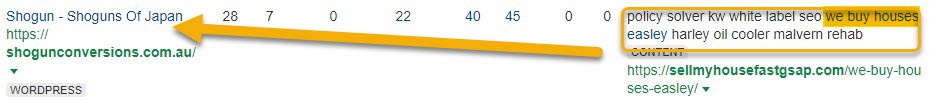

Here is an example from the Backlinks report in Site Explorer:

The above image shows a link with the anchor “we buy houses easley.” However, this is clearly surrounded by other keywords, which are also most likely anchor texts to other sites (policy solver, kw white label seo, etc.).

These links also come from a website about Japanese shoguns with no topical relevance.

This is enough to cause suspicion, so we should click through to the website and check if we can clearly see the links.

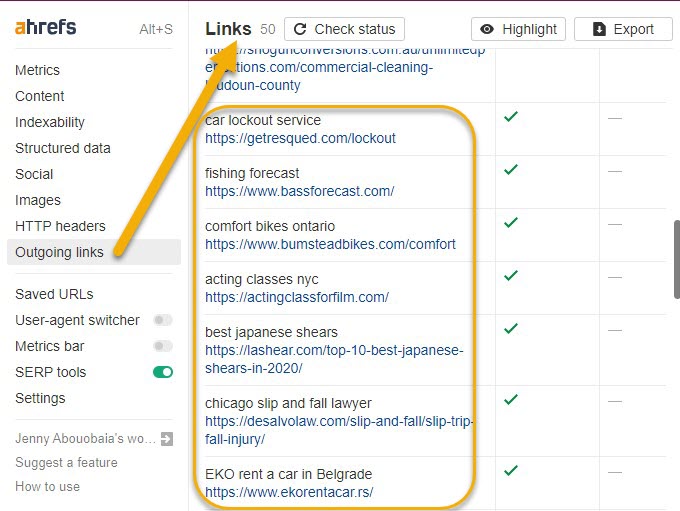

In this case, no external links are visible on the page. However, with Ahrefs’ SEO Toolbar, you can see there are more than 50 similar outgoing links hidden on the page.

5. Link exchanges

A link exchange, or reciprocal linking, is when two sites agree to link to each other. Although link exchanges generally aren’t against Google’s guidelines, “excessive link exchanges” and “partner pages exclusively for the sake of cross-linking” are.

Why should you avoid these types of links?

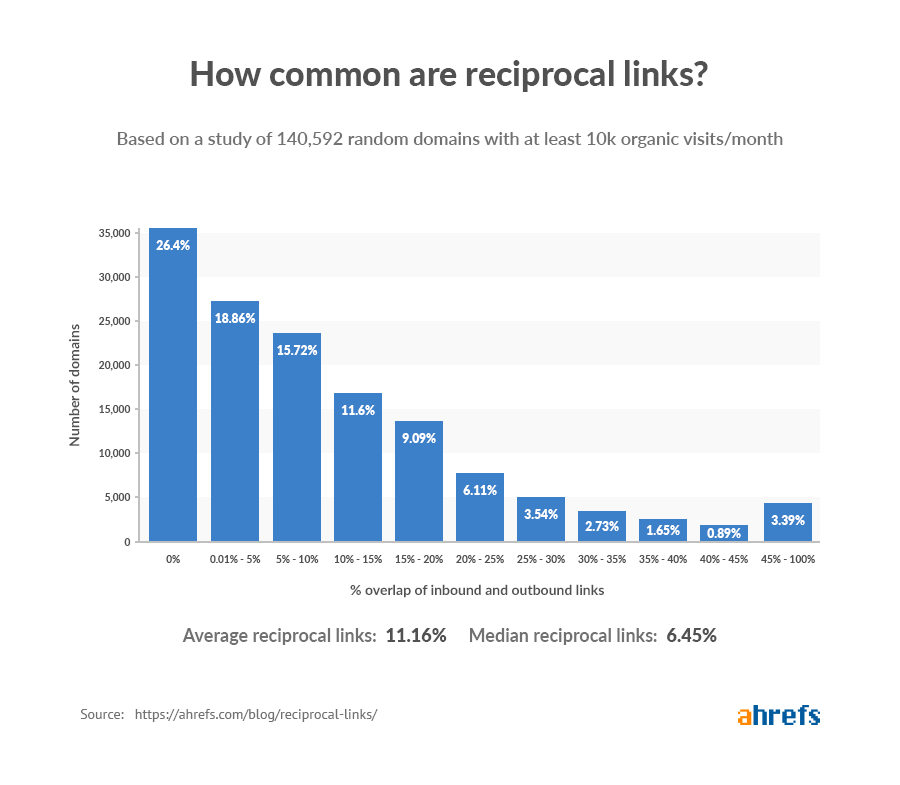

Reciprocal link building happens naturally on the web all of the time (study). After all, it makes sense that you talk about other sites in your niche, and they talk about you.

Linking out is also a great way to get the attention of other high-quality sites in your niche. If they return the favor by linking back in the future, then that’s definitely going to help your site.

But if you are going around contacting hundreds of sites and asking to exchange links, then that’s eventually going to cause you some harm.

Therefore, there is a fine line with this one. You must tread carefully not to be perceived as participating in any link exchange schemes.

How to check if you have any

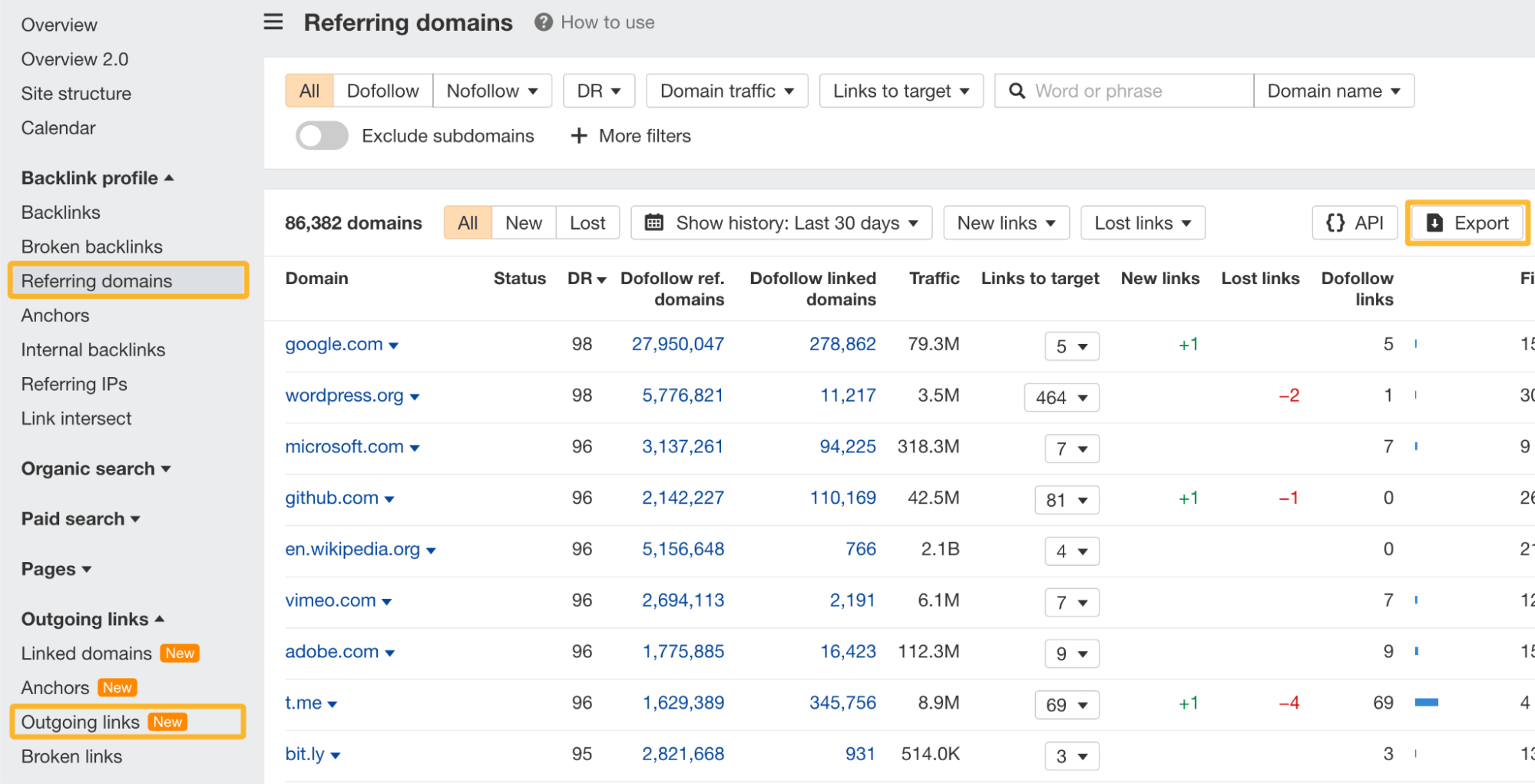

With the help of Site Explorer and Google Sheets, you can see which domains both link to your site and receive links from it.

In Site Explorer, head over to Backlinks profile > Referring domains and export all of the data. Then head over to Outgoing links > Outgoing links report and, again, export the data.

Add all of the exported data into a Google Sheet and look for duplicate domains. You can use a Google add-on like Remove Duplicates. This will highlight any duplicate domains so you can see which domains you have links both to and from.
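
If you would rather script the comparison than use a spreadsheet, the same duplicate check is a set intersection. The sketch below assumes you have already loaded the two exports into plain Python lists. The sample values and the helper name `to_domain` are made up for illustration, and real exports will have their own column layouts.

```python
from urllib.parse import urlparse


def to_domain(value):
    """Reduce a URL or bare hostname to a lowercase host without a leading 'www.'."""
    value = value.strip().lower()
    # Prefix bare hostnames with "//" so urlparse treats them as a network location.
    host = urlparse(value if "//" in value else "//" + value).hostname or ""
    return host[4:] if host.startswith("www.") else host


# Hypothetical data standing in for the two exports.
referring_domains = ["partner.example", "www.blog.example", "news.example"]
outgoing_links = [
    "https://blog.example/post",
    "https://partner.example/",
    "https://other.example/x",
]

# Domains that appear on both sides link to you and are linked from you.
reciprocal = sorted(
    {to_domain(d) for d in referring_domains}
    & {to_domain(u) for u in outgoing_links}
)
print(reciprocal)
```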

Note that the key here is excessive link exchanges. Having a handful of reciprocal links isn’t going to send out red flags. However, if you have 30 or 40 (or more) reciprocal links to a single site, you may need to look at dialing those down.

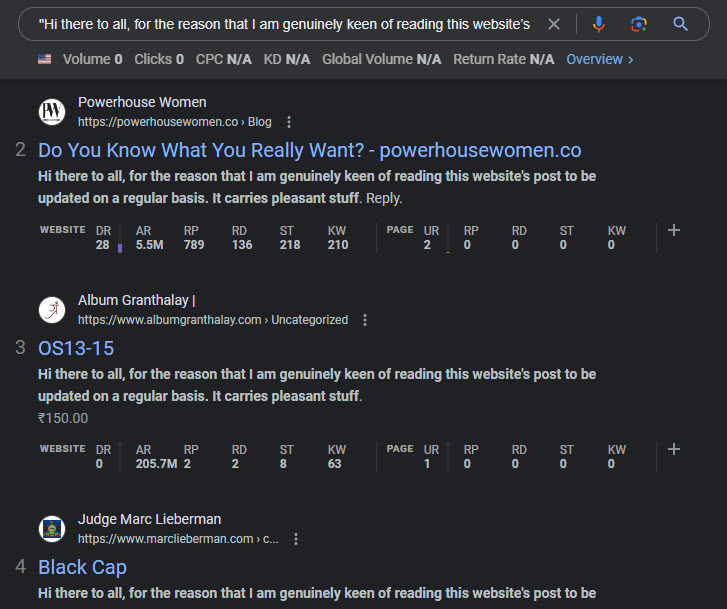

6. Automated link building

Automated link building is where you use tools to build links at scale to your website with the touch of a button.

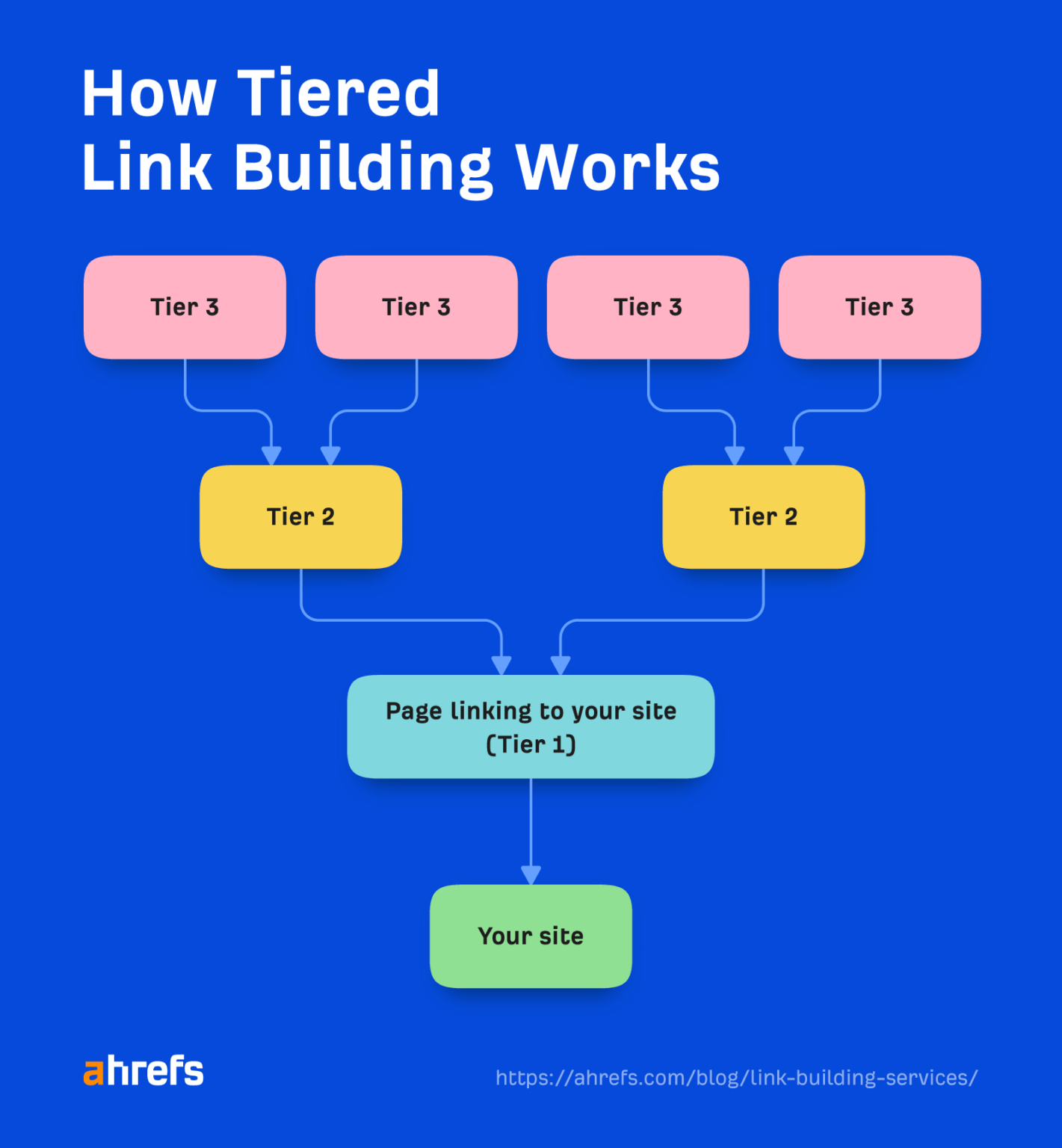

Usually, these are in the form of tiered links. This means that you build links directly to your site (Tier 1) and then build additional links to the sites linking to yours (Tier 2, Tier 3, etc.).

Mostly, these automated programs build spammy links from poor-quality web 2.0 blog sites (yes, they’re really still a thing) and pile on some social signals for good measure.

Why should you avoid these types of links?

Not only will the links these tools build do absolutely nothing for your site, but they are also clearly defined under Google’s link spam policy. If detected, these will likely land you with a penalty.

Often, these types of links are offered as very cheap link building services on platforms like Fiverr, usually advertised as things like “foundation links.” You should avoid these services at all costs, as they are even more risky when you are not in control of the automation process.

How to check if you have any

These are often easy to spot because there is usually a mass of different referring domains linking to your site, all with the same (or very similar) titles and anchor text.
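
That footprint is simple to surface in a script: group backlinks by normalized anchor text and count how many distinct domains use each one. The example below is a rough sketch with made-up data. The function name `repeated_anchors` and the threshold of three domains are arbitrary assumptions.

```python
from collections import defaultdict


def repeated_anchors(rows, min_domains=3):
    """Return {anchor: domain count} for anchors used by at least min_domains domains."""
    domains = defaultdict(set)
    for domain, anchor in rows:
        # Lowercase and collapse whitespace so near-identical anchors group together.
        key = " ".join(anchor.lower().split())
        domains[key].add(domain.lower())
    return {a: len(d) for a, d in domains.items() if len(d) >= min_domains}


# Hypothetical (referring domain, anchor text) pairs.
backlinks = [
    ("web20-a.example", "Best Cheap Widgets"),
    ("web20-b.example", "best cheap widgets"),
    ("web20-c.example", "best  cheap widgets"),
    ("magazine.example", "Acme's widget guide"),
]

flagged = repeated_anchors(backlinks)
print(flagged)
```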

Again, these aren’t really something to worry about removing. These spammy links are easily identified and ignored by Google. They are simply better to avoid in the first place, as they won’t do anything for your site.

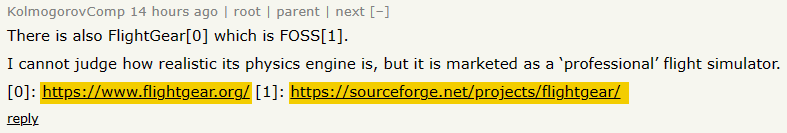

7. Forum and comment spam

Forum spamming is another link building tactic where someone posts their website’s link in forums. Comment spam is where a person (or bot) leaves an irrelevant comment on a website with a link.

Why should you avoid these types of links?

There are two ways to spam forums. First, you can simply create multiple profiles and put your link in there. Secondly, you can place links in actual forum posts, either in the signature or in the body of the post.

The only legitimate use of a link in a forum is to point someone to something genuinely useful as part of a discussion.

If you run a WordPress blog, you’re probably already familiar with comment spam:

Comment spam blasts rely on the fact that:

- Many sites allow comments to be posted without moderation.

- Others just simply let spam comments slip through the net.

And that happens a lot…

Multiple sites with the same comment leave a pretty clear footprint of suspicious links for Google to pick up on and happily penalize.

Both forum and comment spam violate Google’s quality guidelines and can lead to a penalty.

How to check if you have any

In honesty, I wouldn’t even waste time checking these. If you have links from comment spam, Google will likely automatically discount them. It’s more important to avoid acquiring them in the first place.

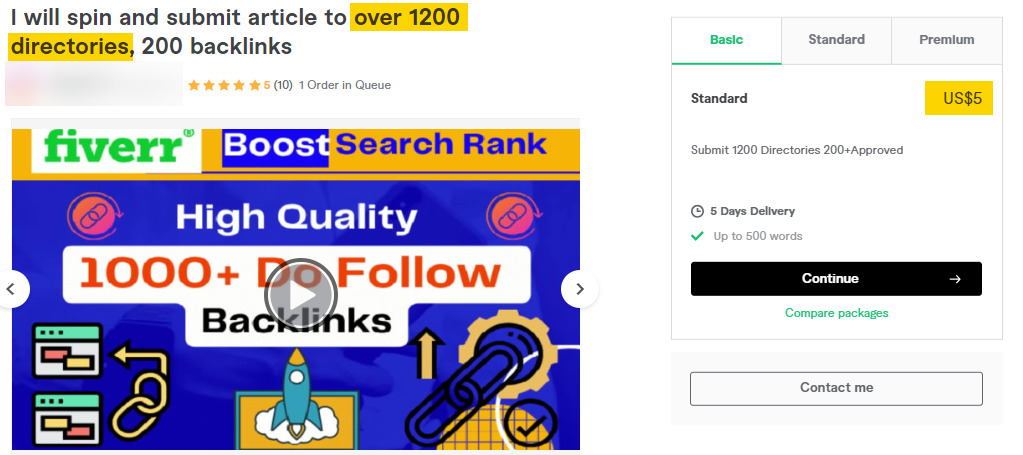

8. Low-quality directories

Creating profiles on high-quality directory sites like Crunchbase is a great way to build an online presence for your business. However, when you start building these at scale on low-quality sites, it becomes spammy.

Why should you avoid these types of links?

It’s easy to fall into a trap with this one, especially for new business owners. Of course, you want to get your name out there, but this is all about quality over quantity. That’s why it is especially important to avoid link building services that do this at scale.

This kind of low-quality, bulk-directory submission hasn’t worked for at least 15 years. These days, a directory blast such as this is likely to get your site penalized.

So stick to well-known directory sites and avoid low-quality ones that will do nothing for your business and simply waste your time.

How to check if you have any

You can usually spot directory links pretty quickly in Ahrefs’ free backlink checker, as the title of the referring page will most likely include your business name.

Again, I wouldn’t worry too much about checking to see if you have these; simply avoid them in the first place.

Final thoughts

A healthy backlink profile with high-quality links is an incredibly important aspect of SEO. Ensuring you use white-hat link building techniques and avoid actively acquiring bad links is key to preventing you from being penalized by Google.

But it’s also important not to be too reactive when dealing with link spam. Basically, if you’ve already received a manual action, then you need to perform a link audit and use the disavow tool to disavow any suspect links. But if you haven’t, it’s better to be proactive and try to avoid bad backlinks in the first place.

If you want to learn how to build awesome links the right way, check out our advanced link building course.

Got questions? Ping me on Twitter.
