Redirects for SEO: A Simple (But Complete) Guide

Most website owners have to deal with redirects at one point or another. Redirects help keep things accessible for users and search engines when you rebrand, merge multiple websites, delete a page, or simply move a page to a new location.

However, the world of redirects is a murky one, as different types of redirects exist for different scenarios. So it’s important to understand the differences between them.

Redirects are a way to forward users (and bots) to a URL other than the one they requested.

There are two reasons why you should use redirects when moving content:

- Better user experience for visitors – You don’t want visitors to get hit with a “page not found” warning when they’re trying to access a page that’s moved. Redirects solve this problem by seamlessly sending visitors to the content’s new location.

- Help search engines understand your site – Redirects tell search engines where content has moved and whether the move is permanent or temporary. This affects whether and how the pages appear in their search results.

You should use redirects when you move content from one URL to another and, occasionally, when you delete content. Let’s take a quick look at a few common scenarios where you’ll want to use them.

When moving domains

If you’re rebranding and moving from one domain to another, you’ll need to permanently redirect all the pages on the old domain to their locations on the new domain.

When merging websites

If you’re merging multiple websites into one, you’ll need to permanently redirect old URLs to new URLs.

When switching to HTTPS

If you’re switching from HTTP to HTTPS (strongly recommended), you’ll need to permanently redirect every unsecure (HTTP) page and resource to its secure (HTTPS) location.

When running a promotion

If you’re running a temporary promotion and want to send visitors from, say, domain.com/laptops to domain.com/laptops-black-friday-deals, you’ll need to use a temporary redirect.

When deleting pages

If you’re removing content from your site, you should permanently redirect its URL to a relevant, similar page where possible. This helps to ensure that any backlinks to the old page still count for SEO purposes. It also ensures that any bookmarks or internal links still work.
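The scenarios above all reduce to the same three facts per URL: where it used to live, where it lives now, and whether the move is permanent. Here is a minimal sketch of such a redirect map in Python. Only the /laptops promotion comes from the example above; the other path and the `resolve` helper are hypothetical.

```python
from typing import Dict, NamedTuple, Optional, Tuple


class Redirect(NamedTuple):
    target: str
    permanent: bool  # True -> 301 (permanent), False -> 302 (temporary)


# Hypothetical rules; only the /laptops promotion comes from the text above.
REDIRECTS: Dict[str, Redirect] = {
    "/laptops": Redirect("/laptops-black-friday-deals", permanent=False),
    "/old-pricing": Redirect("/pricing", permanent=True),
}


def resolve(path: str) -> Optional[Tuple[int, str]]:
    """Return (status code, target) for a redirected path, or None if it has no rule."""
    rule = REDIRECTS.get(path)
    if rule is None:
        return None
    return (301 if rule.permanent else 302, rule.target)
```

Keeping the permanent/temporary flag next to each rule makes it harder to leave a promotion redirect in place as a 301 by accident.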

Redirects are split into two groups: server-side redirects and client-side redirects. Each group contains a number of redirects that search engines view as either temporary or permanent. So you’ll need to use the right redirect for the task at hand to avoid potential SEO issues.

Server-side redirects

A server-side redirect is one where the server decides where to redirect the user or search engine when a page is requested. It does this by returning a 3XX HTTP status code.
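To make that concrete, here is a minimal sketch of a server-side redirect as a Python WSGI application. The `MOVED` mapping, the paths, and port 8000 are assumptions for illustration. In practice, redirects are usually configured in the web server or CMS rather than written by hand.

```python
from wsgiref.simple_server import make_server

# Hypothetical mapping of moved paths to their new locations.
MOVED = {"/old-page": "/new-page"}


def app(environ, start_response):
    """Answer moved paths with a 301 and a Location header, everything else with 200."""
    path = environ.get("PATH_INFO", "/")
    target = MOVED.get(path)
    if target is not None:
        # The 3XX status code plus the Location header is the whole redirect.
        start_response("301 Moved Permanently", [("Location", target)])
        return []
    start_response("200 OK", [("Content-Type", "text/plain; charset=utf-8")])
    return [b"OK\n"]


def serve(port: int = 8000) -> None:
    """Run the app locally until interrupted (the port is an arbitrary choice)."""
    with make_server("127.0.0.1", port, app) as server:
        server.serve_forever()
```

Changing the status line to "302 Found" would turn the same rule into a temporary redirect. Nothing else about the response needs to change.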

If you’re doing SEO, you’ll be using server-side redirects most of the time, as client-side redirects (we’ll discuss those shortly) have a few drawbacks and tend to be more suitable for quite specific and rare use cases.

Here are the 3XX redirects every SEO should know:

301 redirect

A 301 redirect forwards users to the new URL and tells search engines that the resource has permanently moved. When confronted with a 301 redirect, search engines typically drop the old redirected URL from their index in favor of the new URL. They also transfer PageRank (authority) to the new URL.

302 redirect

A 302 redirect forwards users to the new URL and tells search engines that the resource has temporarily moved. When confronted with a 302 redirect, search engines keep the old URL indexed even though it’s redirected. However, if you leave the 302 redirect in place for a long time, search engines will likely start treating it like a 301 redirect and index the new URL instead.

Like 301s, 302s transfer PageRank. The difference is the transfer happens “backward.” In other words, the “new” URL’s PageRank transfers backward to the old URL (unless search engines are treating it like a 301).

303 redirect

A 303 redirect forwards the user to a resource similar to the one requested and is a temporary form of redirect. It’s typically used for things like preventing form resubmissions when a user hits the “back” button in their browser. You won’t typically use 303 redirects for SEO purposes. If you do, search engines may treat them as either a 301 or 302.

307 redirect

A 307 redirect is the same as a 302 redirect, except it retains the HTTP method (POST, GET) of the original request when performing the redirect.

308 redirect

A 308 redirect is the same as a 301 redirect, except it retains the HTTP method of the original request when performing the redirect. Google says it treats 308 redirects the same as 301 redirects, but most SEOs still use 301 redirects.

As Google’s John Mueller (@JohnMu) put it on Twitter on May 10, 2018: “If you use it like a 301 we’ll treat it as such.”
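The five status codes above differ along two axes: whether the move is permanent, and whether the client is required to keep the original HTTP method. A small lookup table can summarize this. The sketch below uses Python's standard `http.HTTPStatus` enum; the `REDIRECT_TRAITS` table and the `describe` helper are illustrative names, not part of any library.

```python
from http import HTTPStatus

# (permanent?, original HTTP method guaranteed to be kept?)
# For 301 and 302, clients may switch a POST to GET; for 303, they switch to GET.
REDIRECT_TRAITS = {
    HTTPStatus.MOVED_PERMANENTLY: (True, False),   # 301
    HTTPStatus.FOUND: (False, False),              # 302
    HTTPStatus.SEE_OTHER: (False, False),          # 303
    HTTPStatus.TEMPORARY_REDIRECT: (False, True),  # 307
    HTTPStatus.PERMANENT_REDIRECT: (True, True),   # 308
}


def describe(code: int) -> str:
    """Summarize a redirect status code in one line."""
    status = HTTPStatus(code)
    permanent, keeps_method = REDIRECT_TRAITS[status]
    kind = "permanent" if permanent else "temporary"
    method = "method preserved" if keeps_method else "method may change to GET"
    return f"{status.value} {status.phrase}: {kind}, {method}"
```

For example, `describe(308)` reports a permanent redirect that preserves the method. That is the only respect in which it differs from a 301.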

Client-side redirects

A client-side redirect is one where the browser decides where to redirect the user. You generally shouldn’t use it unless you don’t have another option.

307 redirect

A 307 redirect commonly occurs client-side when a site uses HSTS. This is because HSTS tells the client’s browser that the server only accepts secure (HTTPS) connections and to perform an internal 307 redirect if asked to request unsecure (HTTP) resources from the site in the future.

Meta refresh redirect

A meta refresh redirect tells the browser to redirect the user after a set number of seconds. Google understands it and will typically treat it the same as a 301 redirect. However, when asked about meta redirects with delays on Twitter, Google’s John Mueller said, “If you want it treated like a redirect, it makes sense to have it act like a redirect.”

Either way, Google doesn’t recommend using them, as they can be confusing for the user and aren’t supported by all browsers. Google recommends using a server-side 301 redirect instead.
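If you want to spot meta refresh redirects in your own HTML, here is a rough sketch using Python's built-in `html.parser`. The `find_meta_refresh` helper is a hypothetical name. It only handles the common `content="5; url=..."` form, so treat it as a starting point rather than a complete parser.

```python
from html.parser import HTMLParser
from typing import Optional, Tuple


class MetaRefreshFinder(HTMLParser):
    """Record the first <meta http-equiv="refresh"> tag found in a page."""

    def __init__(self) -> None:
        super().__init__()
        self.refresh: Optional[Tuple[float, Optional[str]]] = None

    def handle_starttag(self, tag, attrs):
        if tag != "meta" or self.refresh is not None:
            return
        attr = dict(attrs)
        if (attr.get("http-equiv") or "").lower() != "refresh":
            return
        # content looks like "5; url=https://example.com/new"
        delay, _, rest = (attr.get("content") or "").partition(";")
        rest = rest.strip()
        url = None
        if rest.lower().startswith("url="):
            url = rest[4:].strip().strip("'\"")
        try:
            self.refresh = (float(delay.strip() or 0), url)
        except ValueError:
            pass  # malformed delay: ignore this tag


def find_meta_refresh(html: str) -> Optional[Tuple[float, Optional[str]]]:
    """Return (delay in seconds, target URL) or None if no meta refresh is present."""
    finder = MetaRefreshFinder()
    finder.feed(html)
    return finder.refresh
```

A result with a delay of 0 is an instant redirect. A longer delay is the case the Mueller quote above is about.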

JavaScript redirect

A JavaScript redirect, as you probably guessed, uses JavaScript to instruct the browser to redirect the user to a different URL. Some people believe a JS redirect causes issues for search engines because they have to render the page to see the redirect. It’s true that rendering is required, but this isn’t usually an issue for Google because it now renders pages quickly. (There could still be issues with other search engines, though.) All in all, it’s still better to use a 3XX redirect where possible, but a JS redirect is typically fine if that’s your only option.

Redirects can get complicated. To help you along, here are a few best practices to keep in mind if you’re involved in SEO.

Redirect HTTP to HTTPS

Everyone should be using HTTPS at this stage. It gives your site an extra layer of security, and it’s a small Google ranking factor.

There are a couple of ways to check that your site is properly redirecting from HTTP to HTTPS. The first is to install and activate Ahrefs’ SEO Toolbar, then try to navigate to the HTTP version of your homepage. It should redirect, and you should see a 301 response code on the toolbar.

The problem with this method is you may see a 307 if your site uses HSTS. So here’s another method:

- Go to Ahrefs’ Site Audit

- Click + New Project

- Click Add manually

- Change the Scope to HTTP

- Enter your domain

You should see the “Not crawlable” error for both the www and non-www versions of your homepage, along with the “301 moved permanently” notification.

If there isn’t a redirect in place or you’re using a type of redirect other than 301 or 308, it’s probably worth asking your developer to switch to 301.

TIP

Whichever method you use, it’s worth repeating it for a few pages so that you can be confident proper redirects are in place across your site.
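If you'd rather script this check, the sketch below requests the plain-HTTP URL with Python's `http.client`, which doesn't follow redirects, and then tests whether the response is a permanent redirect to HTTPS. Both function names are hypothetical. A script like this doesn't apply HSTS, so it reports what the server returns rather than the browser's internal 307.

```python
import http.client
from typing import Optional, Tuple
from urllib.parse import urlsplit


def fetch_redirect(host: str, path: str = "/") -> Tuple[int, Optional[str]]:
    """Request http://host/path without following redirects.

    Returns the status code and the Location header (None if absent).
    Uses HEAD to avoid downloading a body; some servers answer HEAD
    differently from GET, so switch the method if results look odd.
    """
    conn = http.client.HTTPConnection(host, timeout=10)
    try:
        conn.request("HEAD", path)
        response = conn.getresponse()
        return response.status, response.getheader("Location")
    finally:
        conn.close()


def is_permanent_https_redirect(status: int, location: Optional[str]) -> bool:
    """True if the response is a 301 or 308 pointing at an https:// URL."""
    if status not in (301, 308) or not location:
        return False
    return urlsplit(location).scheme == "https"
```

Calling `is_permanent_https_redirect(*fetch_redirect("example.com"))` for the www and non-www hosts, plus a handful of inner pages, covers the same ground as the manual checks above.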

Use HSTS (to create 307 redirects)

Implementing HSTS (HTTP Strict Transport Security) on your server stops people from accessing non-secure (HTTP) content on your site. It does this by telling browsers that your server only accepts secure connections and that they should do an internal 307 redirect to the HTTPS version of any HTTP resource they’re asked to access.

This isn’t a substitute for 301 or 302 redirects, and it’s not strictly necessary if those are properly set up on your site. However, we argue that it’s best practice these days—even if just to speed things up a bit for users.

Learn more: Strict-Transport-Security — Mozilla

TIP

After implementing HSTS, consider submitting your site to the HSTS preload list. This enables HSTS for everyone trying to visit your website—even if they haven’t visited it before.

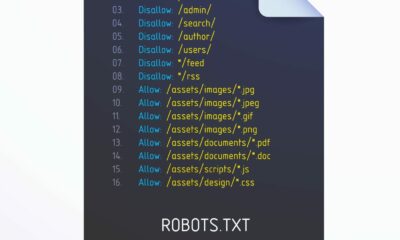
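HSTS is switched on by a single response header, Strict-Transport-Security, sent over HTTPS. Here is a small sketch that builds the header value in Python. The `hsts_header` helper and its one-year default `max_age` are assumptions for illustration; check the preload list's current submission requirements before enabling `preload`.

```python
from typing import Tuple


def hsts_header(
    max_age: int = 31536000,  # one year, in seconds (an assumed default)
    include_subdomains: bool = True,
    preload: bool = False,
) -> Tuple[str, str]:
    """Build the (name, value) pair for a Strict-Transport-Security header."""
    directives = [f"max-age={max_age}"]
    if include_subdomains:
        directives.append("includeSubDomains")
    if preload:
        directives.append("preload")
    return ("Strict-Transport-Security", "; ".join(directives))
```

Browsers only honor this header on HTTPS responses, which is one reason the HTTP to HTTPS redirect still has to exist alongside it.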

Avoid meta refresh redirects

Meta refresh redirects aren’t ideal, so it’s worth checking your site for these and replacing them with either a 301 or 302 redirect. You can do this easily enough with a free Ahrefs Webmaster Tools account. Just crawl your site with Site Audit and look for the “meta refresh redirect” error.

If you then click the error and hit “View affected URLs,” you’ll see the URLs with meta refresh redirects.

Redirect deleted pages to relevant working alternatives (where possible)

Redirecting URLs makes sense when you move content, but it also often makes sense to redirect when you delete content. This is because seeing a “404 not found” error isn’t ideal when a user tries to access a deleted page. It’s often more user friendly to redirect them to a relevant working alternative.

For example, we recently revamped our blog category pages. During the process, we deleted a few categories, including “Outreach & Content Promotion.” Rather than leave this as a 404, we redirected it to our “Link Building” category, as it’s a closely related working alternative.

You can’t do this every time, as there isn’t always a relevant alternative. But if there is, doing so also has the benefit of preserving and transferring PageRank (authority) from the redirected page to the alternative resource.

Most sites will already have some dead or deleted pages that return a 404 status code. To find these, sign up for a free Ahrefs Webmaster Tools account, crawl your site with Site Audit, go to the Internal pages report, then look for the “4XX page” error.

TIP

Enable “backlinks” as a source when setting up your crawl. This will allow Site Audit to find deleted pages with backlinks, even if there are no internal links to the pages on your site.

To see the affected pages, click the error and hit “View affected URLs.” If you see a lot of URLs, click the “Manage columns” button, add the “Referring domains” column, then sort by referring domains in descending order. You can then tackle the 404s with the most backlinks first.

Avoid long redirect chains

Redirect chains are when multiple redirects take place between a requested resource and its final destination.

Google’s official documentation says that it follows up to 10 redirect hops, so any redirect chains shorter than that aren’t really a problem for SEO.

“Googlebot follows up to 10 redirect hops. If the crawler doesn’t receive content within 10 hops, Search Console will show a redirect error in the site’s Index Coverage report.”

However, long chains still slow things down for users, so it’s best to avoid them if possible.
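To see how hops add up, here is a minimal sketch that walks a chain through an in-memory map of redirects and gives up at the 10-hop limit quoted above. The `trace_chain` helper and the dictionary format (source URL to target URL) are assumptions for illustration.

```python
from typing import Dict, List

MAX_HOPS = 10  # the hop limit quoted above for Googlebot


def trace_chain(redirects: Dict[str, str], start: str, max_hops: int = MAX_HOPS) -> List[str]:
    """Return every URL visited: the starting URL first, the final destination last.

    The number of hops is len(result) - 1. Raises RuntimeError if the
    chain is still redirecting after max_hops hops.
    """
    chain = [start]
    while chain[-1] in redirects:
        if len(chain) - 1 >= max_hops:
            raise RuntimeError(f"gave up after {max_hops} hops from {start}")
        chain.append(redirects[chain[-1]])
    return chain
```

With `{"/a": "/b", "/b": "/c"}`, tracing from /a visits three URLs in two hops. Each hop is a separate round trip for the user, which is where the slowdown comes from.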

You can find long redirect chains for free using Ahrefs Webmaster Tools:

- Crawl your site with Site Audit

- Go to the Redirects report

- Click the Issues tab

- Look for the “Redirect chain too long” error

Click the issue and hit “View affected URLs” to see URLs that begin a redirect chain and all the URLs in the chain.

Avoid redirect loops

Redirect loops are infinite loops of redirects that occur when a URL redirects to itself or when a URL in a redirect chain redirects back to a URL earlier in the chain.

They’re problematic for two reasons:

- For users – They cut off access to an intended resource and trigger a “too many redirects” error in the browser.

- For search engines – They “trap” crawlers and waste the crawl budget.
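Both kinds of loop described above (a URL redirecting to itself, or back to an earlier URL in the chain) can be detected by remembering which URLs have already been visited. Here is a minimal sketch, assuming the redirects are available as a simple source-to-target dictionary. `find_loop` is a hypothetical helper name.

```python
from typing import Dict, List, Optional


def find_loop(redirects: Dict[str, str], start: str) -> Optional[List[str]]:
    """Return the URLs that form a loop reachable from start, or None if the chain ends."""
    seen: List[str] = []
    url = start
    while url in redirects:
        if url in seen:
            # Everything from the first visit of this URL onward is the loop.
            return seen[seen.index(url):]
        seen.append(url)
        url = redirects[url]
    return None
```

For `{"/a": "/b", "/b": "/c", "/c": "/b"}`, starting at /a returns the loop between /b and /c, while /a itself is only the entry point.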

The simplest way to find redirect loops is to crawl your site with a tool like Ahrefs’ Site Audit. You can do this for free with an Ahrefs Webmaster Tools account.

- Crawl your site with Site Audit

- Go to the Redirects report

- Click the Issues tab

- Look for the “Redirect loop” error

If you then click the error and click “View affected URLs,” you’ll see a list of URLs that redirect, as well as all URLs in the chain.

The best way to fix a redirect loop depends on whether the last URL in the chain (before the loop) is the intended final destination.

If it is, remove the redirect from the final URL. Then make sure the resource is accessible and returns a 200 status code.

If it isn’t, change the looping redirect to the intended final destination.

In both cases, it’s good practice to swap out any internal links to remaining redirects for direct links to the final URL.
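Once a loop is fixed, pointing links at the final URL means knowing where each redirected URL ultimately ends up. The sketch below collapses a source-to-target dictionary so that every source maps straight to its final destination, and refuses to continue if a loop is still present. The `flatten` helper is a hypothetical name, and the dictionary format is an assumption.

```python
from typing import Dict


def flatten(redirects: Dict[str, str]) -> Dict[str, str]:
    """Map every redirected URL directly to its final destination.

    Raises ValueError if any chain loops back on itself.
    """
    flat: Dict[str, str] = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flat[source] = target
    return flat
```

The resulting map can be used to rewrite internal links, and the same map also removes intermediate hops if you apply it to the redirect rules themselves.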

Final thoughts

Redirects for SEO are pretty straightforward. You’ll be using server-side 301 and 302 redirects most of the time, depending on whether the redirect is permanent or temporary. However, there are some nuances to the way Google treats 301s and 302s, so it’s worth reading up on them if you’re facing issues.

Got questions? Ping me on Twitter.
