
How I Beat Google’s Core Update by Changing the Game

Google released a major update. They typically don’t announce their updates, but when they do, you know it is going to be big.

And that’s what happened with the most recent update that they announced.

A lot of people saw their traffic drop. And of course, at the same time, people saw their traffic increase because when one site goes down in rankings another site moves up to take its spot.

Can you guess what happened to my traffic?

Well, based on the title of the post you are probably going to guess that it went up.

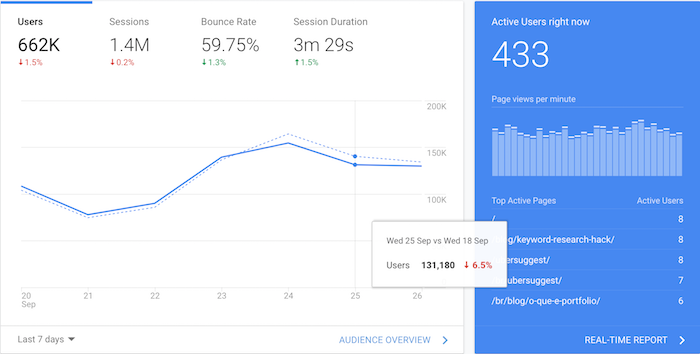

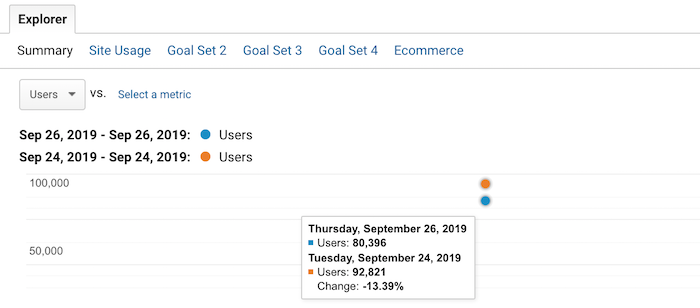

Now, let’s see what happened to my search traffic.

My overall traffic has already dipped by roughly 6%. When you look at my organic traffic, you can see that it has dropped by 13.39%.

I know what you are thinking… how did you beat Google’s core update when your traffic went down?

What if I told you that I saw this coming and came up with a solution and contingency strategy in case my organic search traffic ever dropped?

But before I go into that, let me first break down how it all started and then I will get into how I beat Google’s core update.

A new trend

I’ve been doing SEO for a long time… roughly 18 years now.

When I first started, Google algorithm updates still sucked but they were much simpler. For example, you could get hit hard if you built spammy links or if your content was super thin and provided no value.

Over the years, their algorithm has gotten much more complex. Nowadays, it isn’t about whether you are breaking the rules or not. Today, it is about optimizing for user experience and doing what’s best for your visitors.

But that in and of itself is never very clear. How do you know that what you are doing is better for a visitor than your competition?

Honestly, you can never be 100% sure. The only one who actually knows is Google. And it depends on whoever they assign to code or adjust their algorithm.

Years ago, I started to notice a new trend with my search traffic.

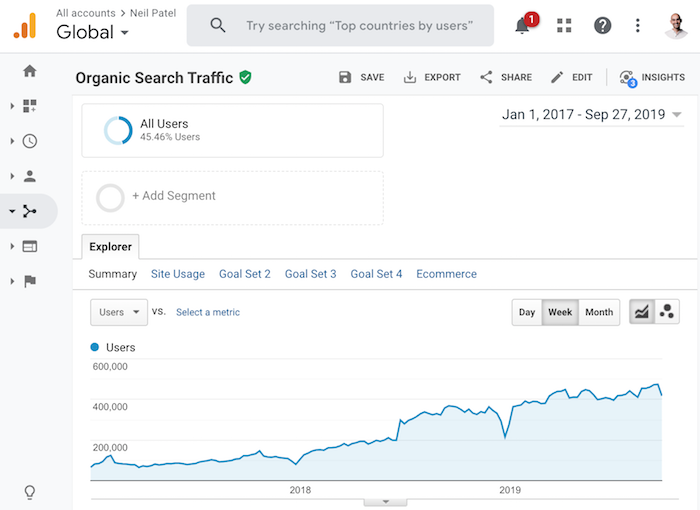

Look at the graph above, do you see the trend?

And no, my traffic doesn’t just climb up and to the right. There are a lot of dips in there. But, of course, my rankings eventually started to continually climb because I figured out how to adapt to algorithm updates.

On a side note, if you aren’t sure how to adapt to the latest algorithm update, read this. It will teach you how to recover your traffic… assuming you saw a dip. Or if you need extra help, check out my ad agency.

In many cases after an algorithm update, Google continues to fine-tune and tweak the algorithm. And if you saw a dip when you shouldn’t have, you’ll eventually start recovering.

But even then, there was one big issue. Back in 2017, I started to feel like I no longer had control as an SEO, which I had never felt in all of the previous years. I could no longer guarantee my success, even if I did everything correctly.

Now, I am not trying to blame Google… they didn’t do anything wrong. Overall, their algorithm is great and relevant. If it wasn’t, I wouldn’t be using them.

And just like you and me, Google isn’t perfect. They continually adjust and aim to improve. That’s why they do over 3,200 algorithm updates in a year.

But still, even though I love Google, I didn’t like the feeling of being helpless. Because I knew if my traffic took a drastic dip, I would lose a ton of money.

I need that traffic, not only to drive new revenue but, more importantly, to pay my team members. The concept of not being able to pay my team in any given month is scary, especially when your business is bootstrapped.

So what did I do?

I took matters into my own hands

Although I love SEO, and I think I’m pretty decent at it based on my traffic and my track record, I knew I had to come up with another solution that could provide me with sustainable traffic that could still generate leads for my business.

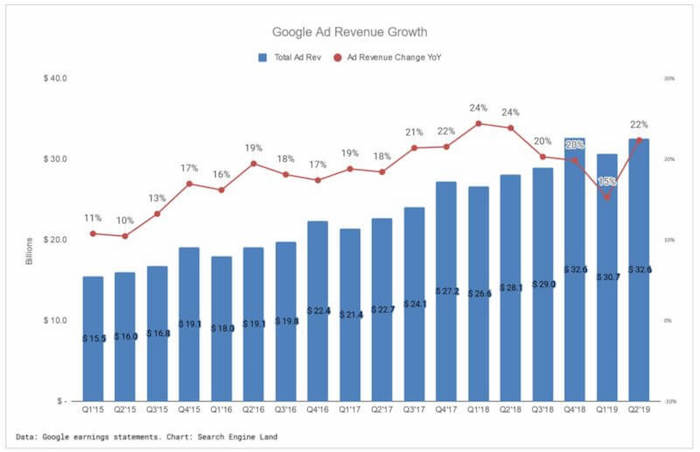

In addition to that, I wanted to find something that wasn’t “paid,” as I was bootstrapping. Just like how SEO was starting to have more ups and downs compared to what I’ve seen in my 18-year career, I knew the cost of paid ads would continually rise.

Just look at Google’s ad revenue. They have some ups and downs every quarter but the overall trend is up and to the right.

In other words, advertising will continually get more expensive over time.

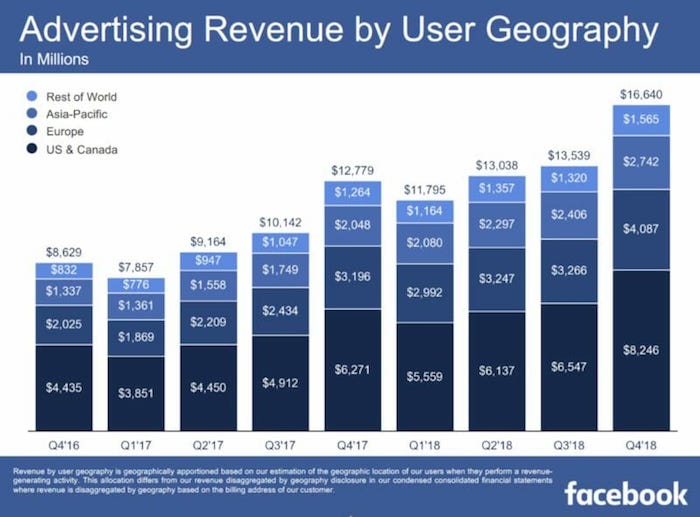

And it’s not just Google either. Facebook Ads keep getting more expensive as well.

I didn’t want to rely on a channel that would cost me more next year and the year after because it could get so expensive that I may not be able to profitably leverage it in the future.

So, what did I do?

I went on a hunt to figure out a way to get direct, referral, and organic traffic that didn’t rely on any algorithm updates. (I will explain what I mean by organic traffic in a bit.)

I went on my mission

With the help of my buddy, Andrew Dumont, I went searching for websites that continually received good traffic even after algorithm updates.

Here were the criteria that we were looking for:

- Sites that weren’t reliant on Google traffic

- Sites that didn’t need to continually produce more content to get more traffic

- Sites that weren’t popular due to social media traffic (we both saw social traffic dying)

- Sites that didn’t leverage paid ads in the past or present

- Sites that didn’t leverage marketing

In essence, we were looking for sites that were popular because people naturally liked them. Our intentions at first weren’t to necessarily buy any of these sites. Instead, we were trying to figure out how to naturally become popular so we could replicate it.

Do you know what we figured out?

I’ll give you a hint.

Think of it this way: Google doesn’t get the majority of their traffic from SEO. And Facebook doesn’t get their traffic because they rank everywhere on Google or because people share Facebook.com on the social web.

Do you know how they are naturally popular?

It comes down to building a good product.

That was my aha! moment. Why continually crank out thousands of pieces of content, which isn’t scalable and is a pain as you eventually have to update your old content, when I could just build a product?

That’s when Andrew and I stumbled upon Ubersuggest.

Now the Ubersuggest you see today isn’t what it looked like in February 2017 when I bought it.

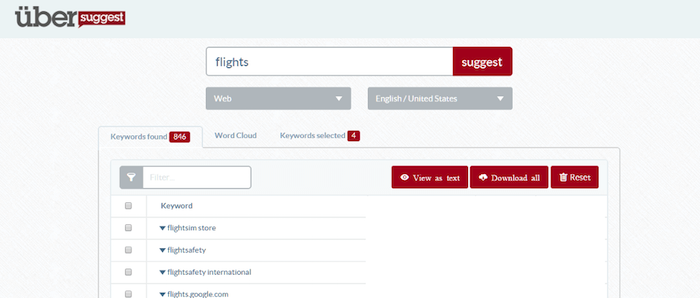

It used to be a simple tool that just showed you Google Suggest results based on any query.

Before I took it over, it was generating 117,425 unique visitors per month and had 38,700 backlinks from 8,490 referring domains.

All of this was natural. The original founder didn’t do any marketing. He just built a product and it naturally spread.

The tool did, however, have roughly 43% of its traffic coming from organic search. Now, can you guess what keyword it was?

The term was “Ubersuggest”.

In other words, its organic traffic mainly came from its own brand, which isn’t really reliant on SEO or affected by Google algorithm updates. That’s also what I meant when I talked about organic traffic that wasn’t reliant on Google.

Now since then I’ve gone a bit crazy with Ubersuggest and released loads of new features… from daily rank tracking to a domain analysis and site audit report to a content ideas report and backlinks report.

In other words, I’ve been making it a robust SEO tool that has everything you need and is easy to use.

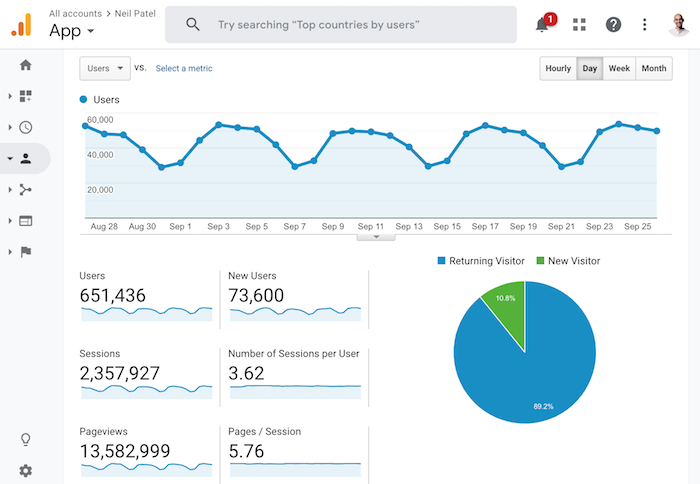

It’s been so effective that the traffic on Ubersuggest went from 117,425 unique visitors to a whopping 651,436 unique visitors who generate 2,357,927 visits and 13,582,999 pageviews per month.

Best of all, the users are sticky, meaning the average Ubersuggest user spends over 26 minutes on the application each month. This means that they are engaged and are likely to convert into customers.
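To put those numbers in perspective, here is a quick back-of-the-envelope calculation in Python. It only uses the monthly figures quoted above. The variable names are my own, and because the "before" visit and pageview counts aren't given, only visitor growth can be compared.

```python
# Monthly figures quoted in the post.
visitors_before = 117_425     # unique visitors before the takeover
visitors_now = 651_436        # unique visitors today
visits_now = 2_357_927
pageviews_now = 13_582_999

# How many times larger the audience is now.
growth_multiple = visitors_now / visitors_before

# Rough engagement ratios for the current traffic.
visits_per_visitor = visits_now / visitors_now
pageviews_per_visit = pageviews_now / visits_now

print(f"Visitor growth: {growth_multiple:.2f}x")
print(f"Visits per visitor: {visits_per_visitor:.2f}")
print(f"Pageviews per visit: {pageviews_per_visit:.2f}")
```

That works out to roughly 5.5 times the visitors, about 3.6 visits per visitor each month, and nearly 5.8 pageviews per visit.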

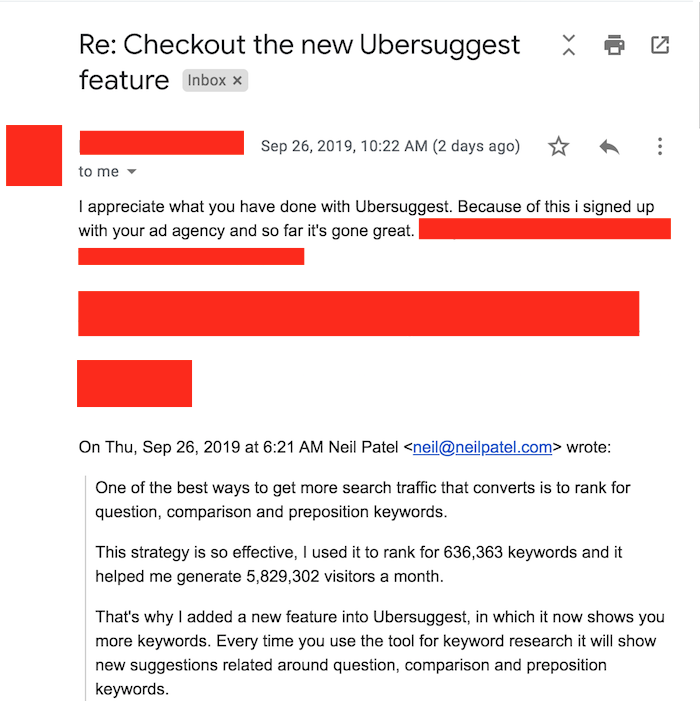

As I get more aggressive with my Ubersuggest funnel and start collecting leads from it, I expect to receive many more emails like that.

And over the years, I expect the traffic to continually grow.

Best of all, do you know what happens to the traffic on Ubersuggest when my site gets hit by a Google algorithm update or when my content stops going viral on Facebook?

It continually goes up and to the right.

Now, unless you dump a ton of money and time into replicating what I am doing with Ubersuggest, but for your industry, you won’t generate the results I am generating.

As my mom says, I’m kind of crazy…

But that doesn’t mean you can’t do well on a budget.

Back in 2013, I did a test where I released a tool on my old blog Quick Sprout. It was an SEO tool that wasn’t too great and honestly, I probably spent too much money on it.

Here were the stats for the first 4 days of releasing the tool:

- Day #1: 8,462 people ran 10,766 URLs

- Day #2: 5,685 people ran 7,241 URLs

- Day #3: 1,758 people ran 2,264 URLs

- Day #4: 1,842 people ran 2,291 URLs

Even after the launch traffic died down, 1,000+ people per day still used the tool. And, over time, it actually went up to over 2,000.

It was at that point in my career that I realized people love tools.
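If you want to sanity-check launch numbers like these for your own tool, a few lines of Python will do. This is just a sketch using the four days listed above. The variable names are hypothetical, and the daily "people" counts may include repeat users, so treat the total as person-days rather than unique people.

```python
# (day, people, urls) for the first 4 days, as listed in the post.
launch_days = [
    (1, 8_462, 10_766),
    (2, 5_685, 7_241),
    (3, 1_758, 2_264),
    (4, 1_842, 2_291),
]

# How many URLs each person ran on average, per day.
for day, people, urls in launch_days:
    print(f"Day {day}: {urls / people:.2f} URLs per person")

# Totals across the launch window (person-days, not unique people).
total_people = sum(people for _, people, _ in launch_days)
total_urls = sum(urls for _, _, urls in launch_days)
urls_per_person = total_urls / total_people

# Share of day-1 usage that was still there on day 4.
day4_vs_day1 = launch_days[-1][1] / launch_days[0][1]

print(f"Total: {total_people:,} people ran {total_urls:,} URLs")
print(f"Day 4 usage as a share of day 1: {day4_vs_day1:.0%}")
```

Each person ran a little over one URL on average, and day 4 held on to roughly a fifth of the day-1 usage.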

I know what you are thinking though… how do you do this on a budget, right?

How to build tools without hiring developers or spending lots of money

What’s silly is that there are tools that already exist for every industry. I wish I had known this before I built my first tool on Quick Sprout back in the day.

You don’t have to create something new or hire some expensive developers. You can just use an existing tool on the market.

And if you want to go crazy like me, you can start adding multiple tools to your site… just like how I have an A/B testing calculator.

So how do you add tools without breaking the bank?

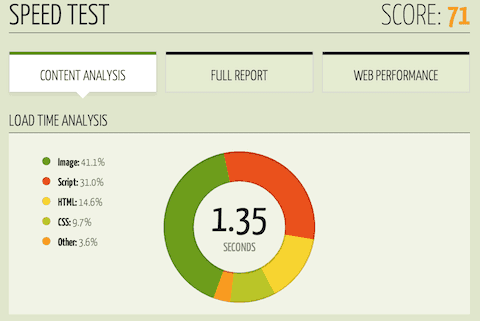

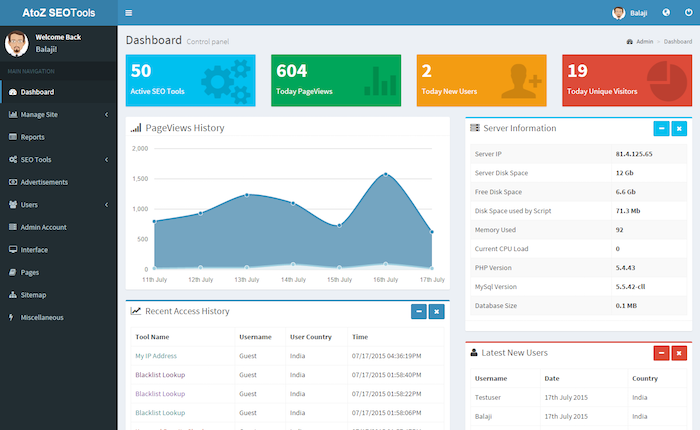

You buy them from sites like Code Canyon. From $2 to $50, you can find tools on just about anything. For example, if I wanted an SEO tool, Code Canyon has a ton to choose from. Just look at this one.

Not a bad looking tool that you can have on your website for just $40. You don’t have to pay monthly fees and you don’t need a developer… it’s easy to install and it doesn’t cost much in the grand scheme of things.

And here is the crazy thing: The $40 SEO tool has more features than the Quick Sprout one I built, has a better overall design, and it is 0.1% of the cost.

If only I had known that before I built it years ago. :/
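The custom build cost isn't stated here, but you can back into it from the two numbers given. The sketch below takes the 0.1% figure literally, which is an assumption since it may be a rough estimate, and the variable names are my own.

```python
from fractions import Fraction

off_the_shelf_price = 40          # dollars, from the post
cost_share = Fraction(1, 1000)    # 0.1% of the custom build cost, from the post

# If $40 is 0.1% of the custom cost, the custom cost is $40 / 0.001.
# This is an inference, not a figure stated in the post.
implied_custom_cost = off_the_shelf_price / cost_share

print(f"Implied custom build cost: ${int(implied_custom_cost):,}")
```

Fraction is used instead of a float so the division stays exact.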

Look, there are tools out there for every industry. From mortgage calculators to calorie counters to a parking spot finder and even video games that you can add to your site and make your own.

In other words, you don’t have to build something from scratch. There are tools for every industry that already exist and you can buy them for pennies on the dollar.

Conclusion

I love SEO and always will. Heck, even though many SEOs hate how Google does algorithm updates, that doesn’t bother me either… I love Google and they have built a great product.

But if you want to continually do well, you can’t rely on one marketing channel. You need to take an omnichannel approach and leverage as many as possible.

That way, when one goes down, you are still generating traffic.

Now if you want to do really well, think about most of the large companies out there. You don’t build a billion-dollar business from SEO, paid ads, or any other form of marketing. You first need to build an amazing product or service.

So, consider adding tools to your site. The data shows it is more effective than content marketing and it is more scalable.

Sure, you probably won’t achieve the results I achieved with Ubersuggest, but you can achieve the results I had with Quick Sprout. And you can achieve better results than what you are currently getting from content marketing.

What do you think? Are you going to add tools to your site?

PS: If you aren’t sure what type of tool you should add to your site, leave a comment and I will see if I can give you any ideas. 🙂
