
My Top 8 Tactics Shared By 107 SEOs

I asked the SEO community and a few experts to share advanced SEO tactics that are working for them.

I received 107 replies and had the opportunity to explore where people draw the line between basic and advanced skills in our industry.

Here’s everything people considered advanced, ranked based on the frequency of mentions.

If you’re wondering how to progress in your SEO career, these are the sorts of skills you’ll need to develop.

Here are my 8 personal favorites and how to get started with them.

Learning non-SEO skills that integrate with SEO can help advance your career in a few ways:

- They improve your communication with non-SEO teams (who you often rely on to get things done).

- They elevate your SEO skills beyond the basics of “keywords, content, and links”.

- They accelerate results, so your boss or clients see faster returns on investment.

In particular, user experience and conversion optimization tie in with SEO quite nicely.

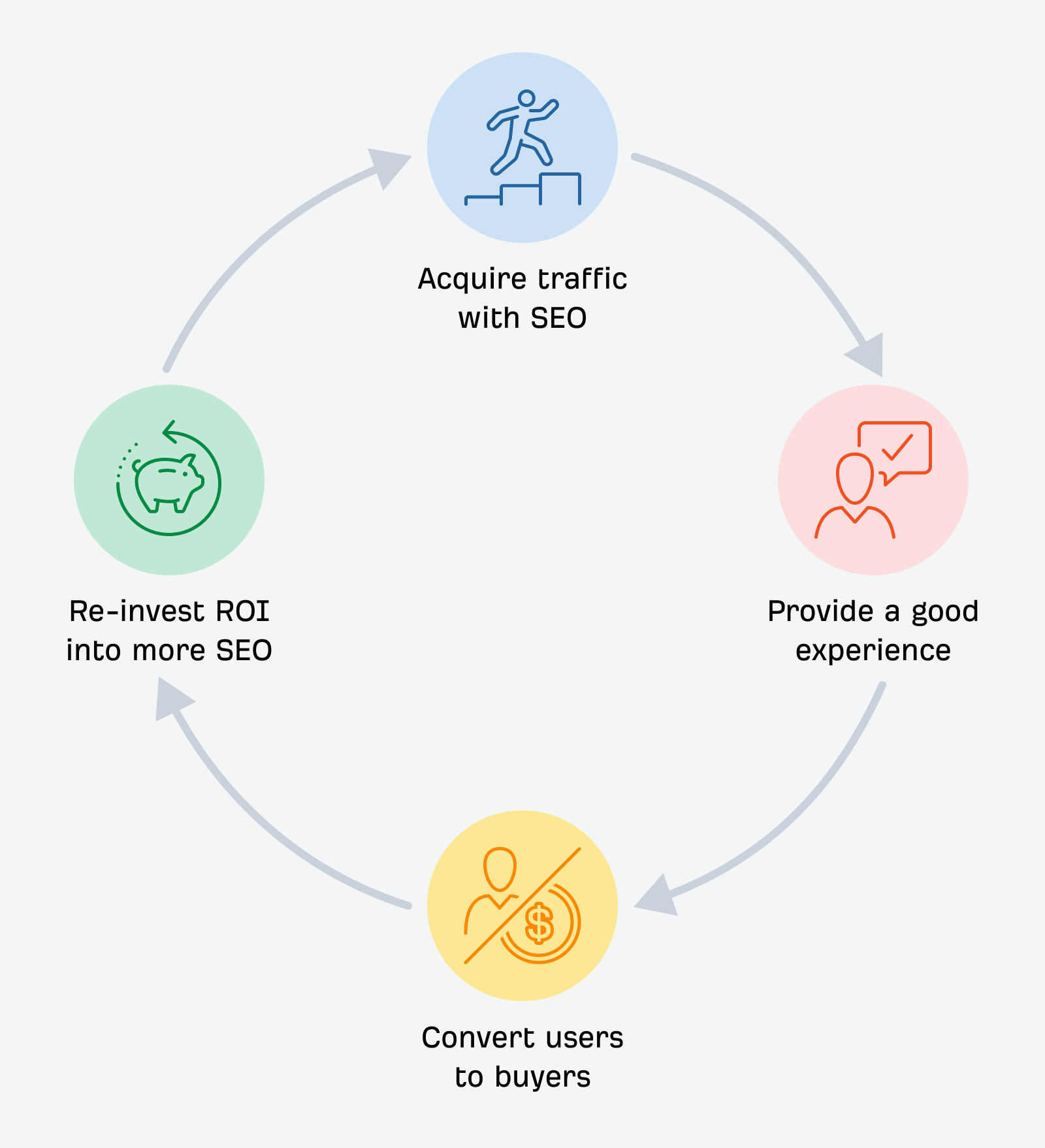

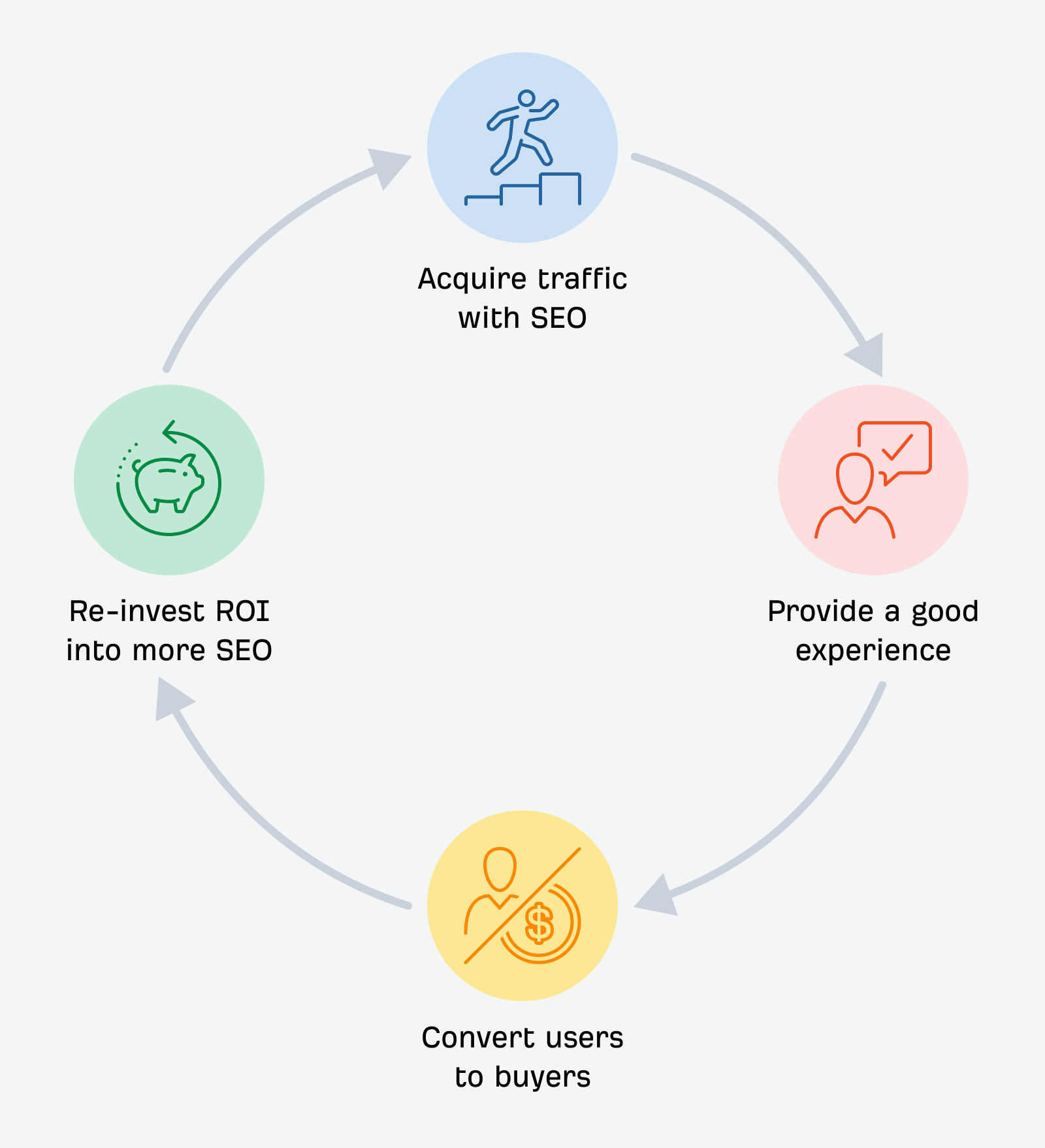

While SEO is primarily a traffic acquisition channel, it’s useless to a business if the traffic you acquire doesn’t convert.

To understand the full journey searchers take, I recommend learning about SXO. It’s short for search experience optimization and focuses on a searcher’s journey from the moment they search until they convert.

You can also get more granular and look at specific user experience elements on your website that could be impacting your SEO performance. For example, Cyrus Shepard has seen big improvements in his clients’ SEO campaigns by working on UX elements like:

- Reducing ad density, particularly fixed-video ads

- Removing browser notifications

- Simplifying navigation

- Improving contact information visibility

- Clarifying site identity (logo + tagline)

Either way, combining SEO with UX and CRO can yield big gains, so it’s a worthwhile skill to learn.

Whenever I think of integrating SEO with paid ads, I can’t help picturing the Old El Paso girl.

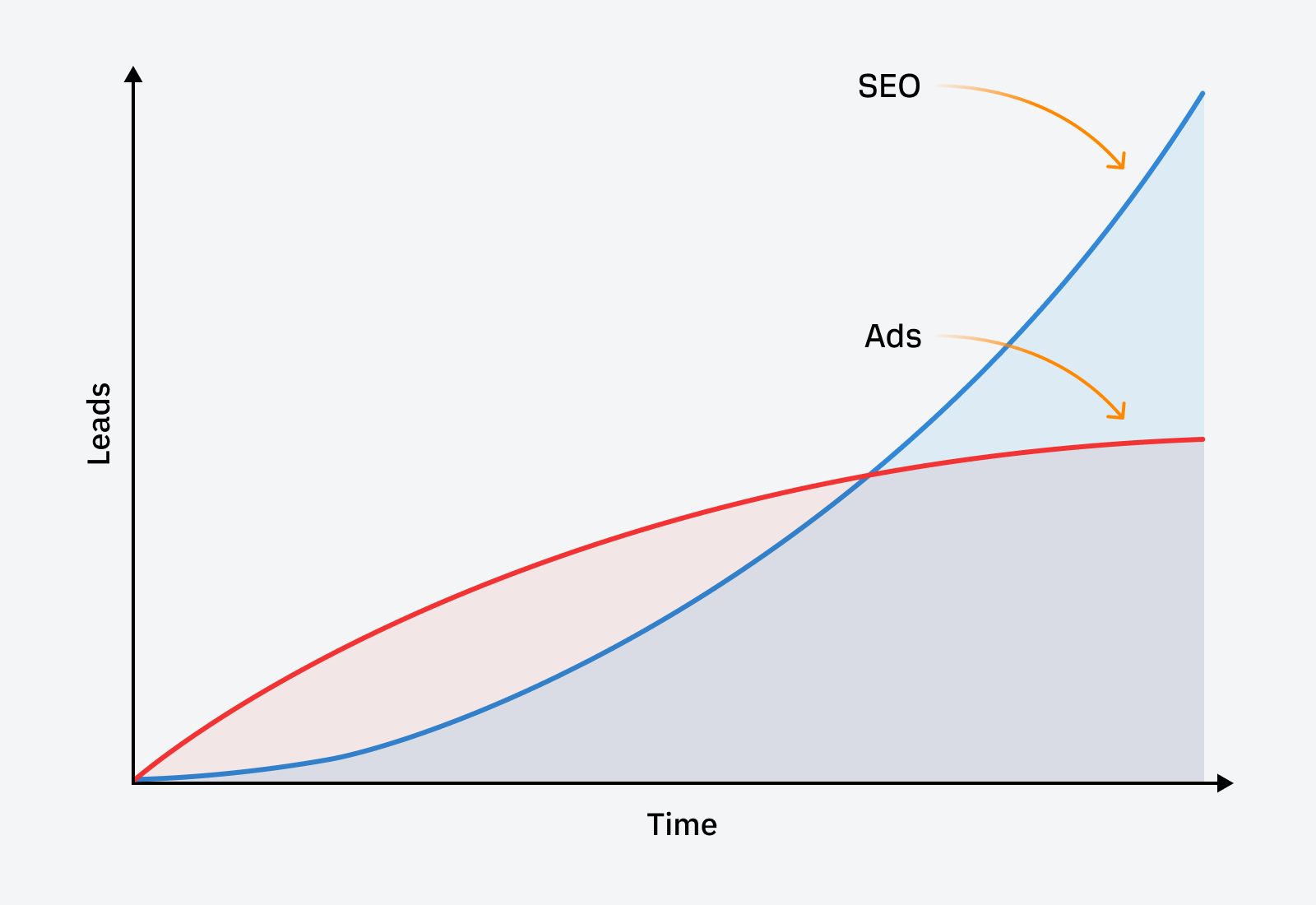

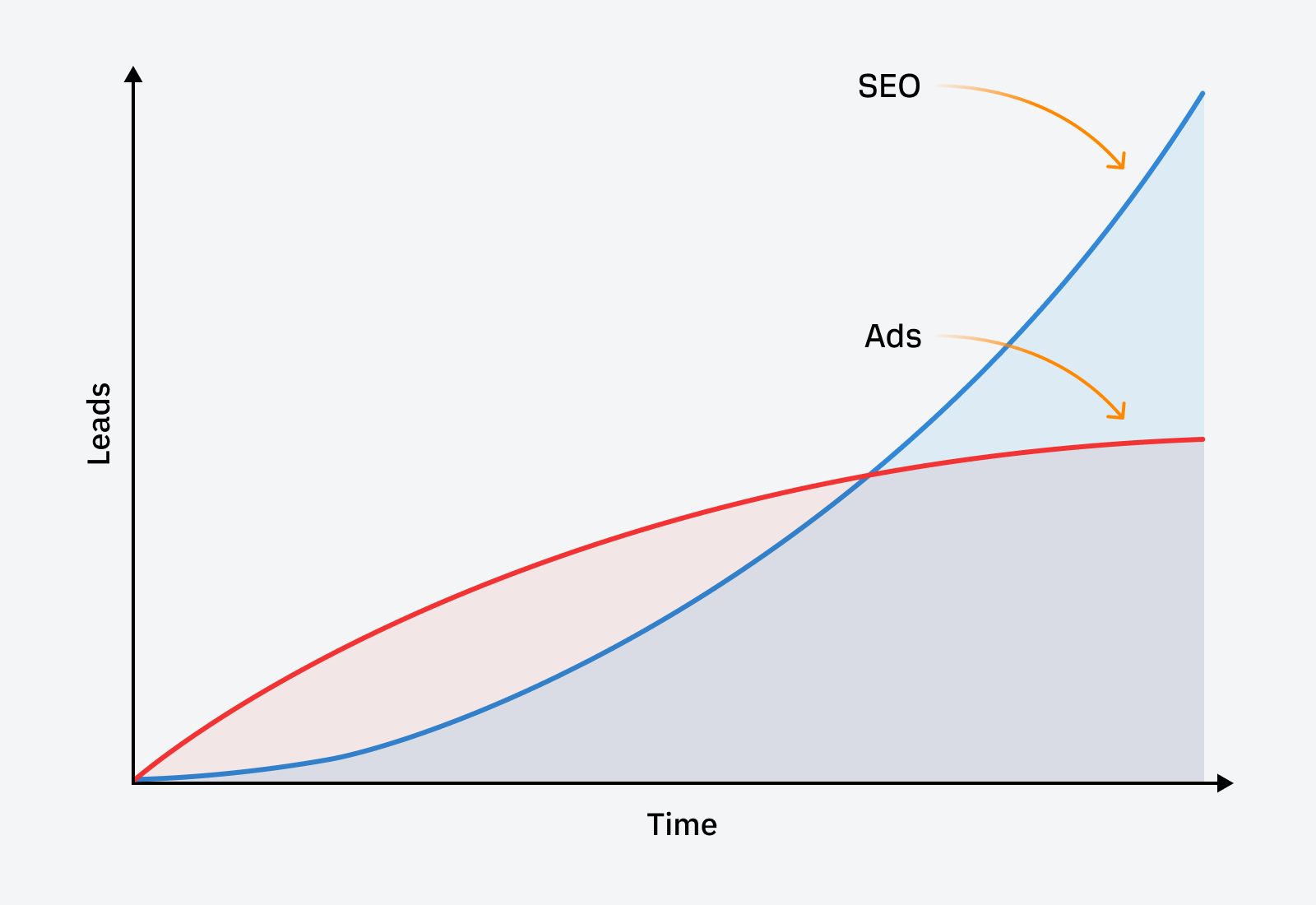

Blending paid ads with SEO is an essential strategy for businesses to generate more leads and a faster return on investment. They’re complementary channels, and you can use one to overcome the shortcomings of the other.

For example, local businesses can generate many leads directly from Google with:

- Local service ads

- Pay-per-click ads

- Local and map pack SEO

- Traditional SEO

With the help of paid ads, they can earn phone calls within the first few weeks of the campaign instead of waiting months for SEO to kick in.

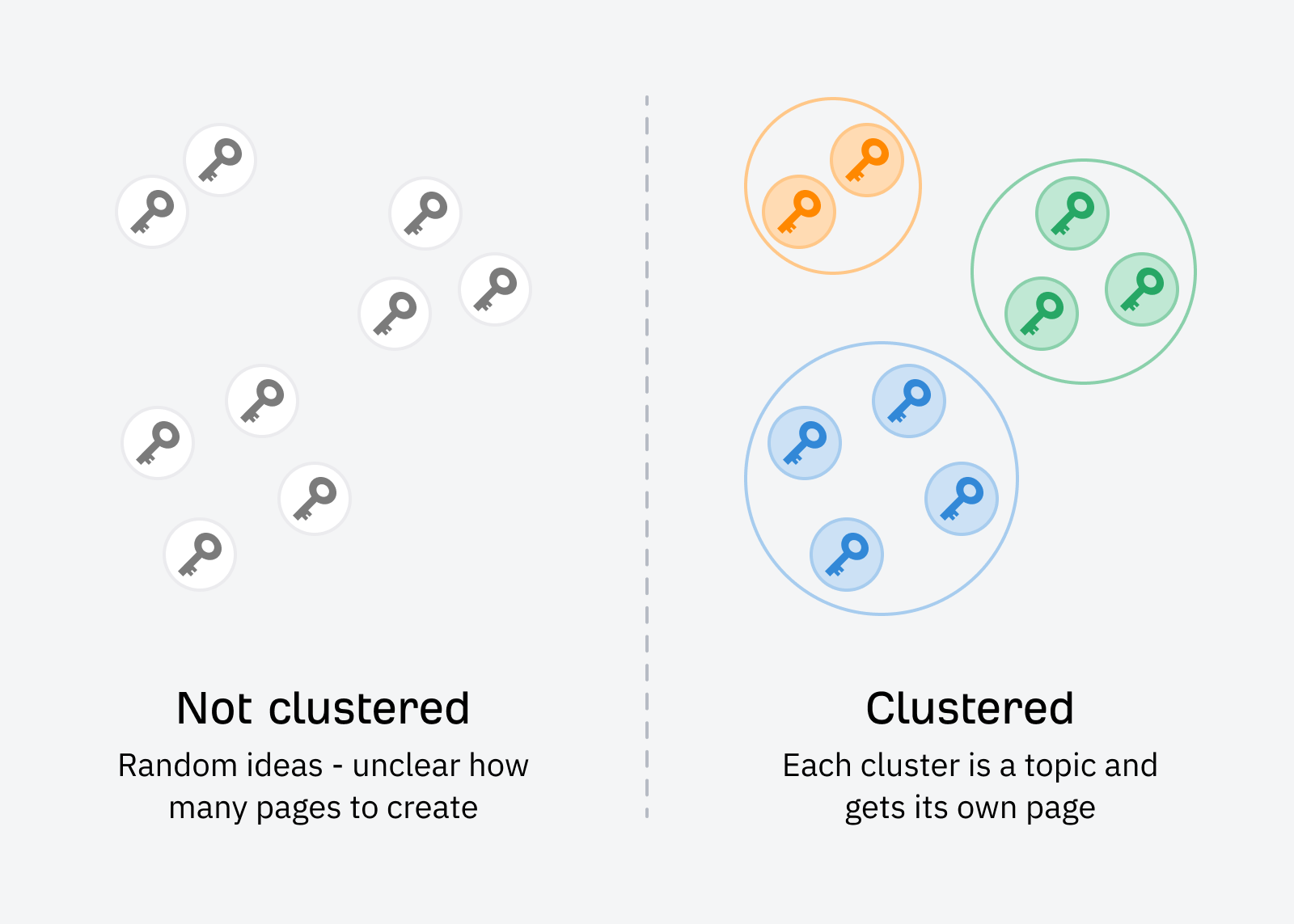

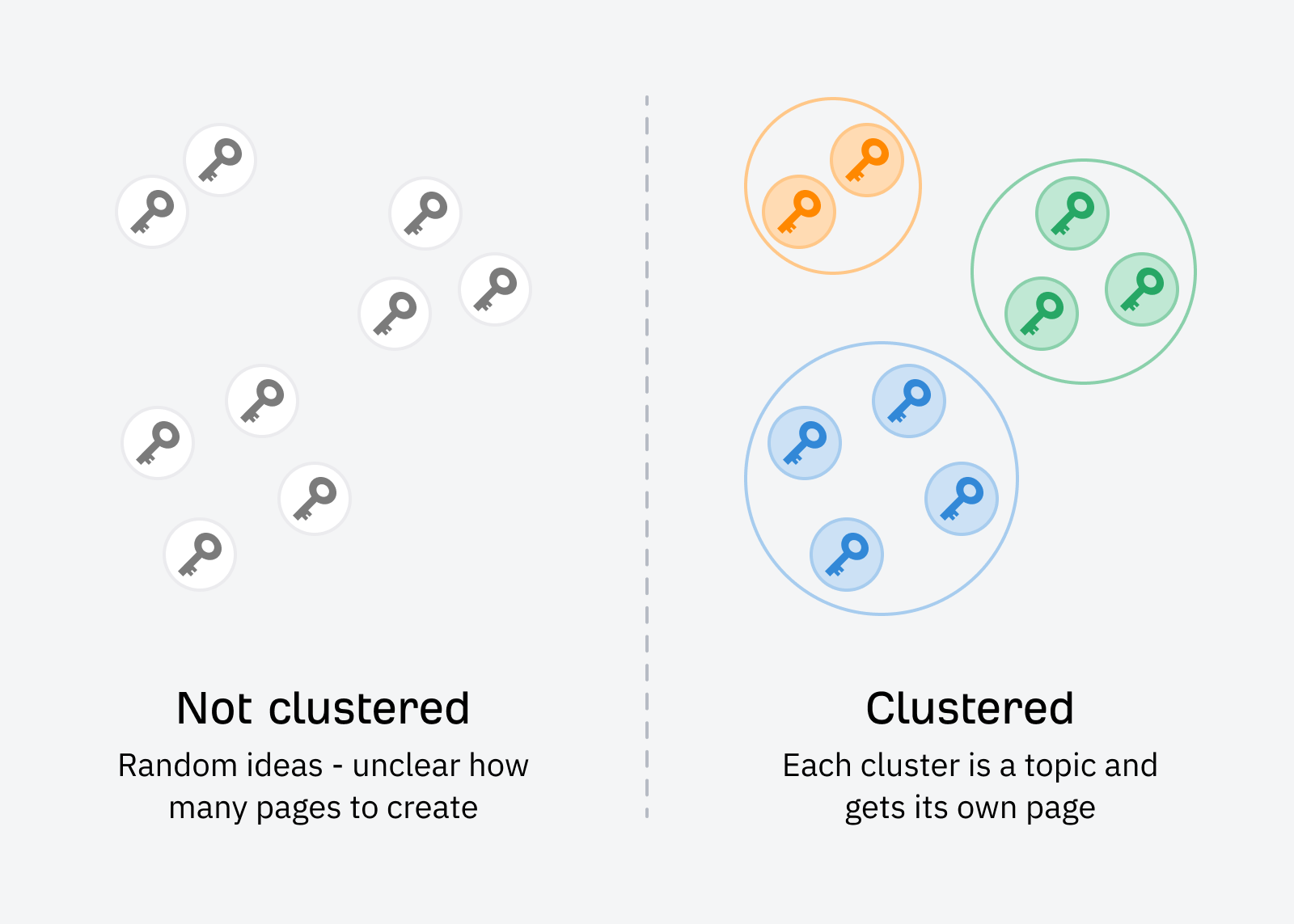

Topic clustering is a core aspect of SEO. However, most people don’t consider it an “advanced” tactic on its own, as it’s become a staple of creating strategic content for SEO nowadays.

If you’re new to topic clustering, it involves:

- Gathering a list of keywords

- Grouping the ones with similar intent together

- Creating content to target each group

- Interlinking between each post
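The four steps above can be sketched in a few lines of Python. This is a minimal illustration, assuming you have already labelled each keyword with a parent topic by hand or in a keyword tool. The keywords, the topic labels, and the `group_by_topic` and `interlink_plan` helpers are all hypothetical.

```python
from collections import defaultdict
from itertools import permutations

# Hypothetical keyword list: (keyword, parent topic) pairs that were
# labelled by hand or exported from a keyword tool.
keywords = [
    ("how to brew espresso", "espresso brewing"),
    ("espresso brewing guide", "espresso brewing"),
    ("best espresso beans", "espresso beans"),
    ("espresso beans for beginners", "espresso beans"),
    ("espresso grind size", "espresso brewing"),
]


def group_by_topic(pairs):
    """Group keywords that share a parent topic (one post per group)."""
    clusters = defaultdict(list)
    for keyword, topic in pairs:
        clusters[topic].append(keyword)
    return dict(clusters)


def interlink_plan(topics):
    """List every (source post, target post) pair so each post links to the others."""
    return list(permutations(sorted(topics), 2))


clusters = group_by_topic(keywords)
links = interlink_plan(clusters)

for topic, grouped in clusters.items():
    print(f"{topic}: {grouped}")
for source, target in links:
    print(f"link from '{source}' post to '{target}' post")
```

Each dictionary key becomes one piece of content, and the link plan is simply every ordered pair of posts in the cluster.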

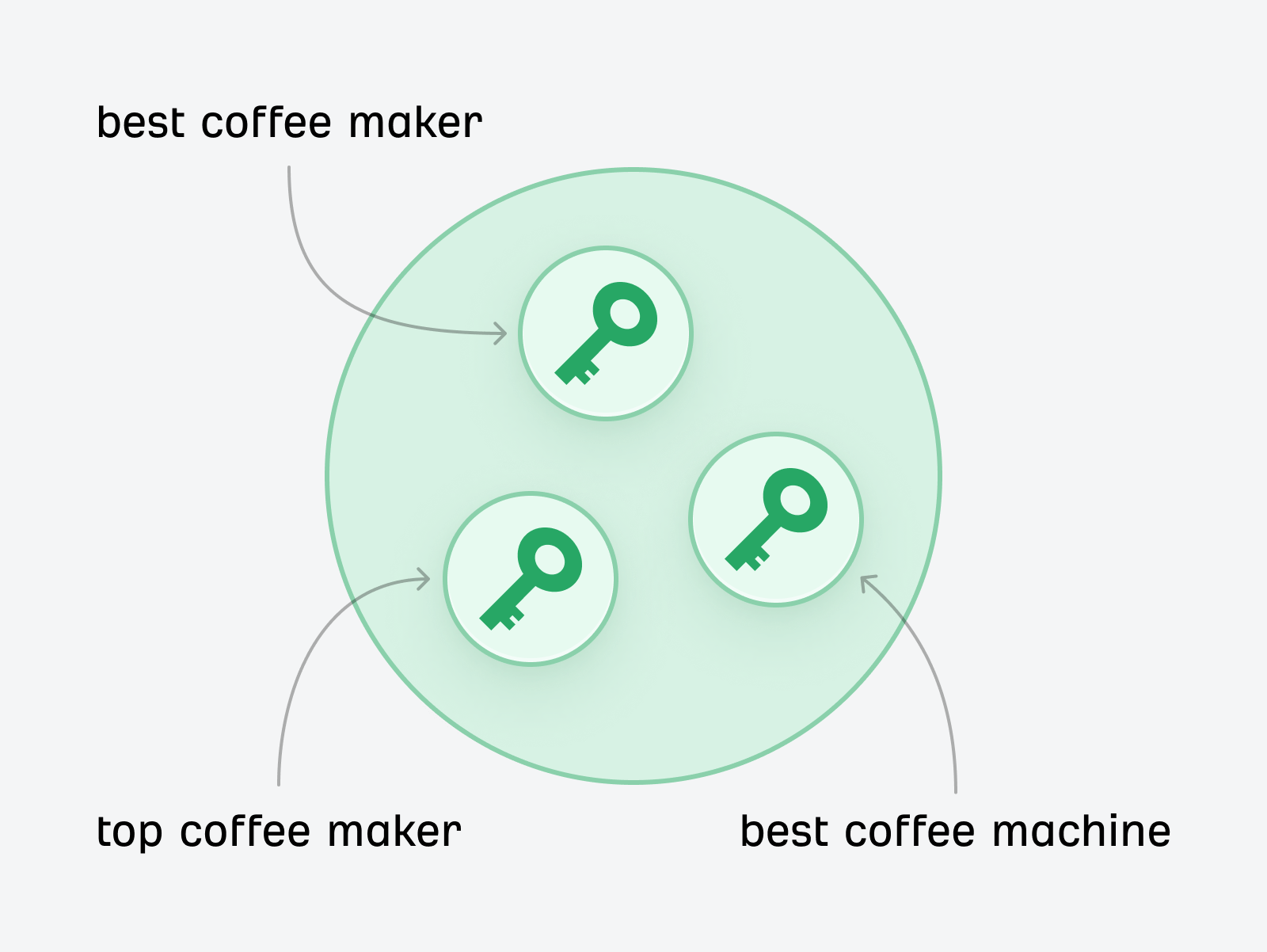

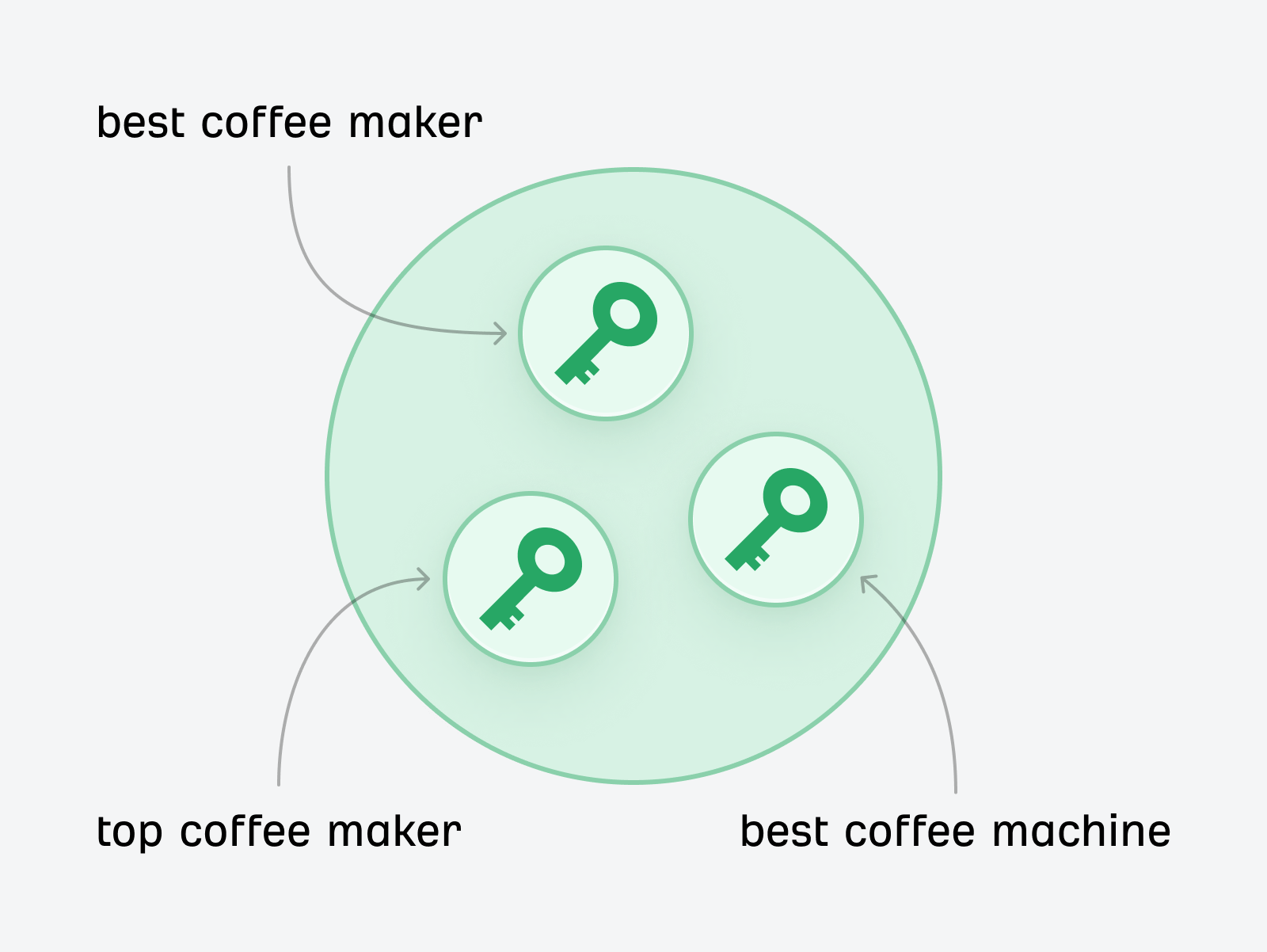

Here’s an example of what a cluster might look like:

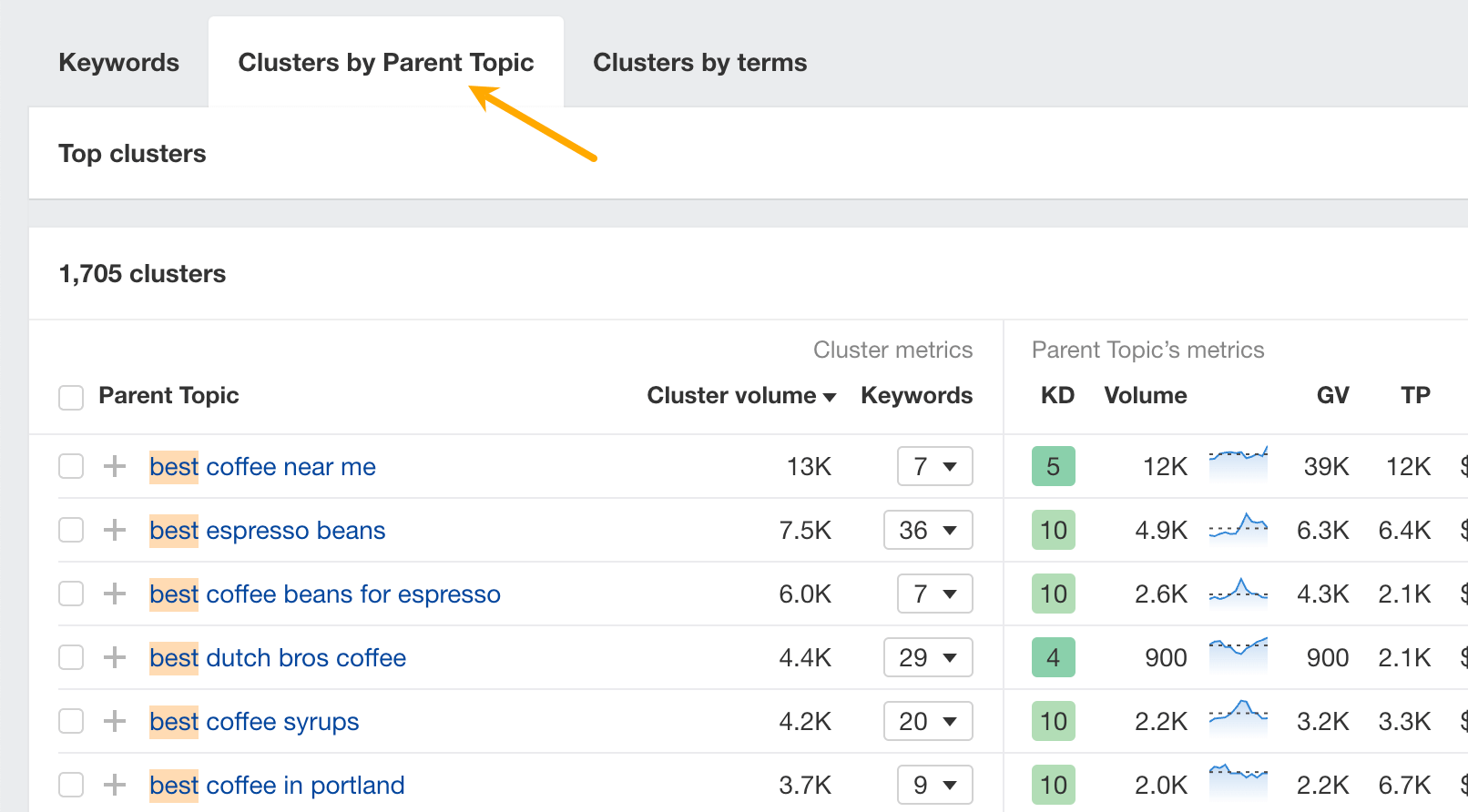

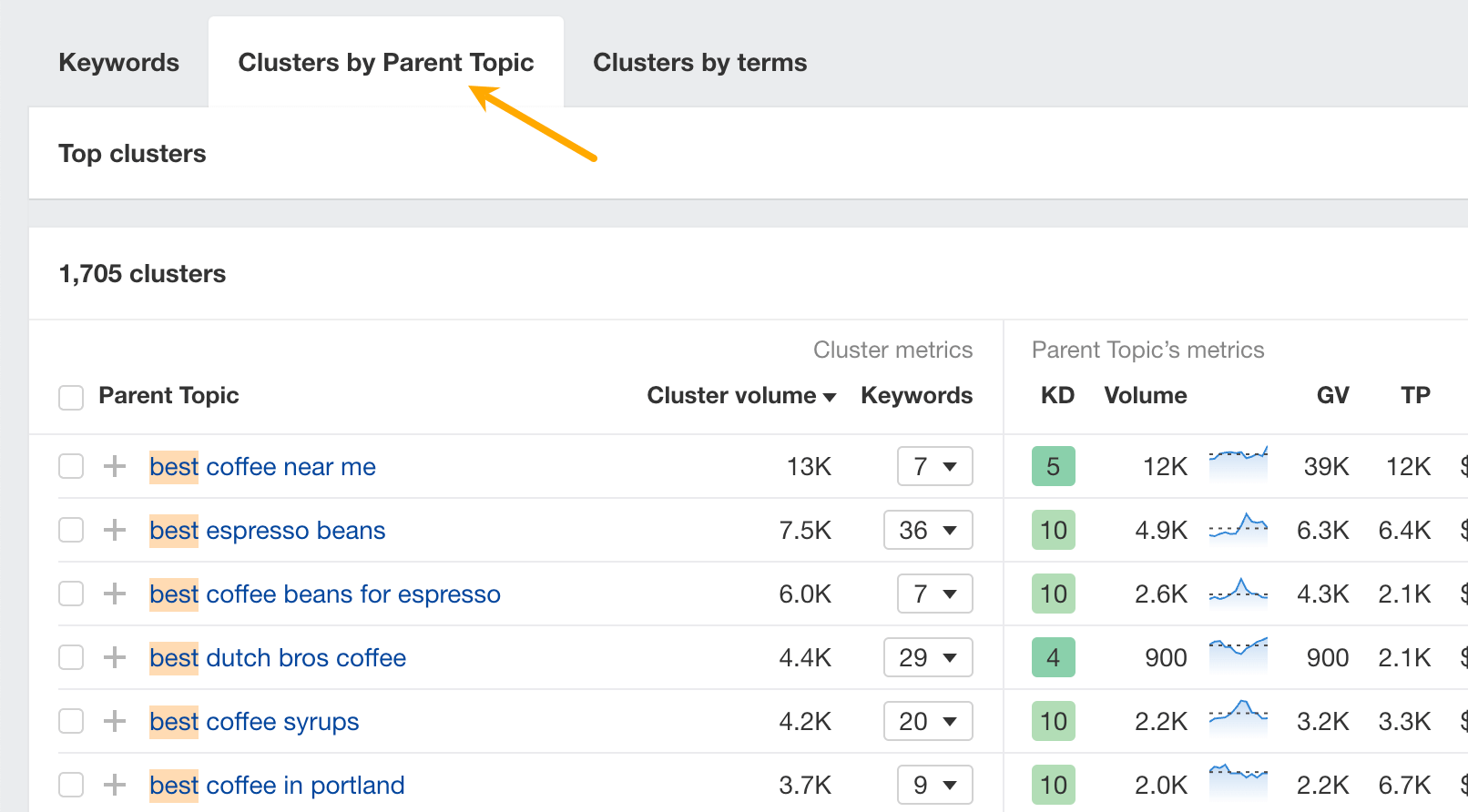

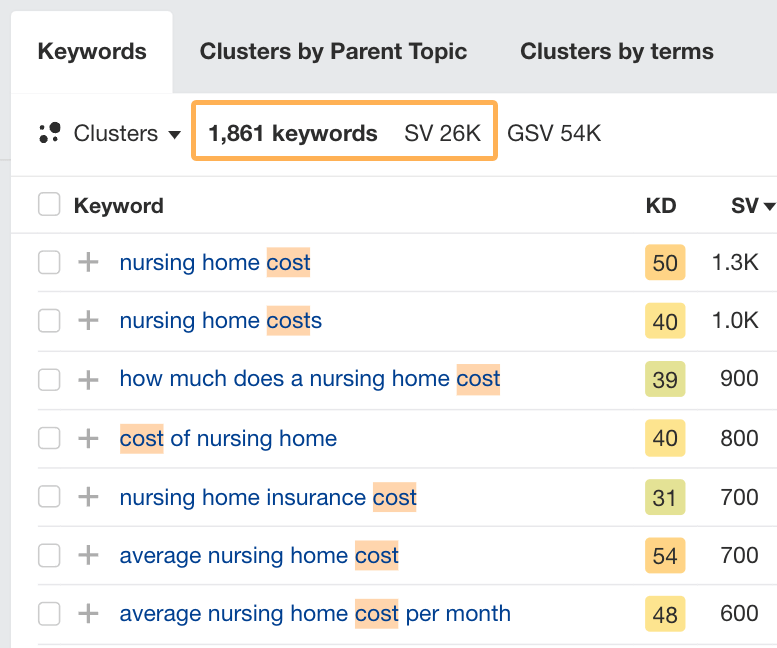

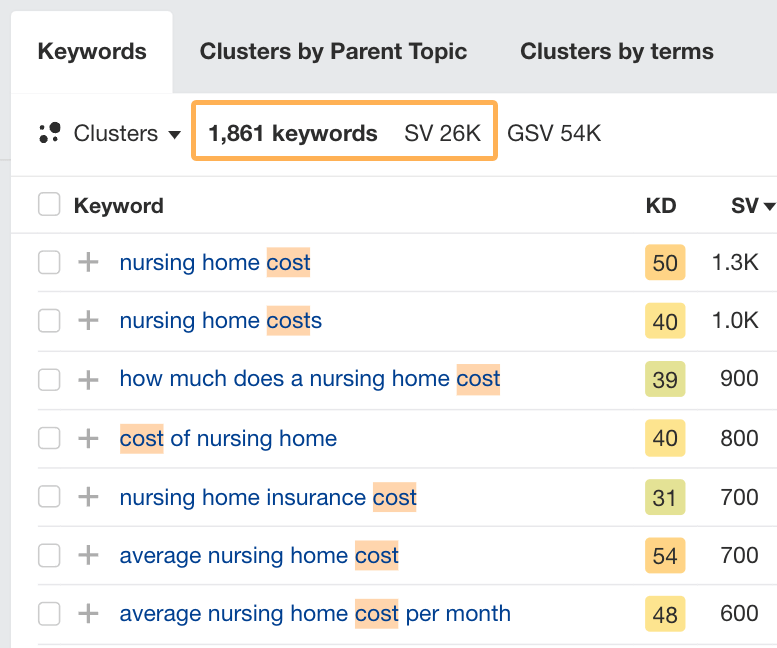

You can easily spot potential clusters for your topic using Ahrefs’ clustering reports in Keywords Explorer.

Enter your main keyword, and then in the Matching terms report, check out clusters by parent topic or terms.

This technique allows your content to rank for a variety of keywords and to improve your website’s coverage of a topic. It can also help your brand be perceived as an authority on the topic.

Advanced keyword clustering

If you want to take things up a notch or two, you can also create your own intent identification and clustering model using a combination of SEO APIs and machine learning models.

This is not for the faint of heart, but it was widely acknowledged as an advanced SEO tactic that people use to get results.

For instance, you can pull a keyword list using the Ahrefs API, use a large language model to identify each keyword’s intent, and then use a model like BERT or a custom-trained clustering model to create your keyword clusters automatically.
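To make the shape of that pipeline concrete, here is a rough, dependency-free sketch. It is not the method any of the experts use: a hand-written rule table stands in for the large language model, and token overlap (Jaccard similarity) stands in for BERT-style embeddings. The keyword list, the `INTENT_RULES` table, and the 0.3 threshold are assumptions chosen for illustration.

```python
import re

# Assumption: simple trigger words stand in for an LLM intent classifier.
INTENT_RULES = [
    ("transactional", {"buy", "price", "cheap", "deal", "order"}),
    ("commercial", {"best", "top", "review", "vs", "compare"}),
    ("informational", {"how", "what", "why", "guide", "tutorial"}),
]
STOPWORDS = {"a", "an", "the", "to", "for", "of", "in", "is"}


def tokens(keyword):
    """Lowercase word set for a keyword, minus stopwords."""
    return {t for t in re.findall(r"[a-z0-9]+", keyword.lower()) if t not in STOPWORDS}


def classify_intent(keyword):
    """Return the first intent whose trigger words appear in the keyword."""
    words = tokens(keyword)
    for intent, triggers in INTENT_RULES:
        if words & triggers:
            return intent
    return "unknown"


def jaccard(a, b):
    """Token overlap between two sets, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_keywords(keywords, threshold=0.3):
    """Greedy clustering: join the first cluster with the same intent and enough overlap."""
    clusters = []
    for kw in keywords:
        intent, toks = classify_intent(kw), tokens(kw)
        for cluster in clusters:
            if cluster["intent"] == intent and jaccard(toks, cluster["seed"]) >= threshold:
                cluster["keywords"].append(kw)
                break
        else:
            clusters.append({"intent": intent, "seed": toks, "keywords": [kw]})
    return clusters


# Hypothetical keyword list (in practice this would come from an SEO API export).
sample = [
    "how to clean running shoes",
    "how to wash running shoes",
    "best running shoes",
    "best trail running shoes review",
    "buy running shoes online",
]
result = cluster_keywords(sample)
for cluster in result:
    print(cluster["intent"], cluster["keywords"])
```

Swapping `classify_intent` for an LLM call and `jaccard` for cosine similarity over embeddings keeps the same overall structure.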

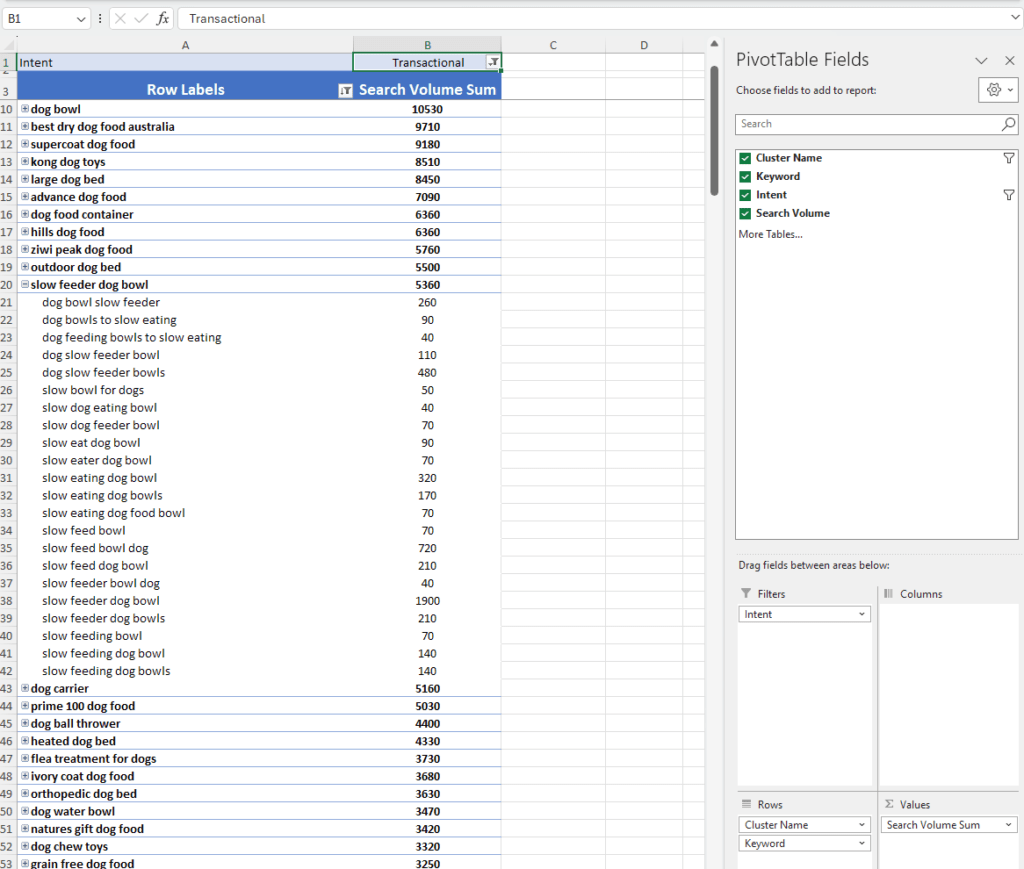

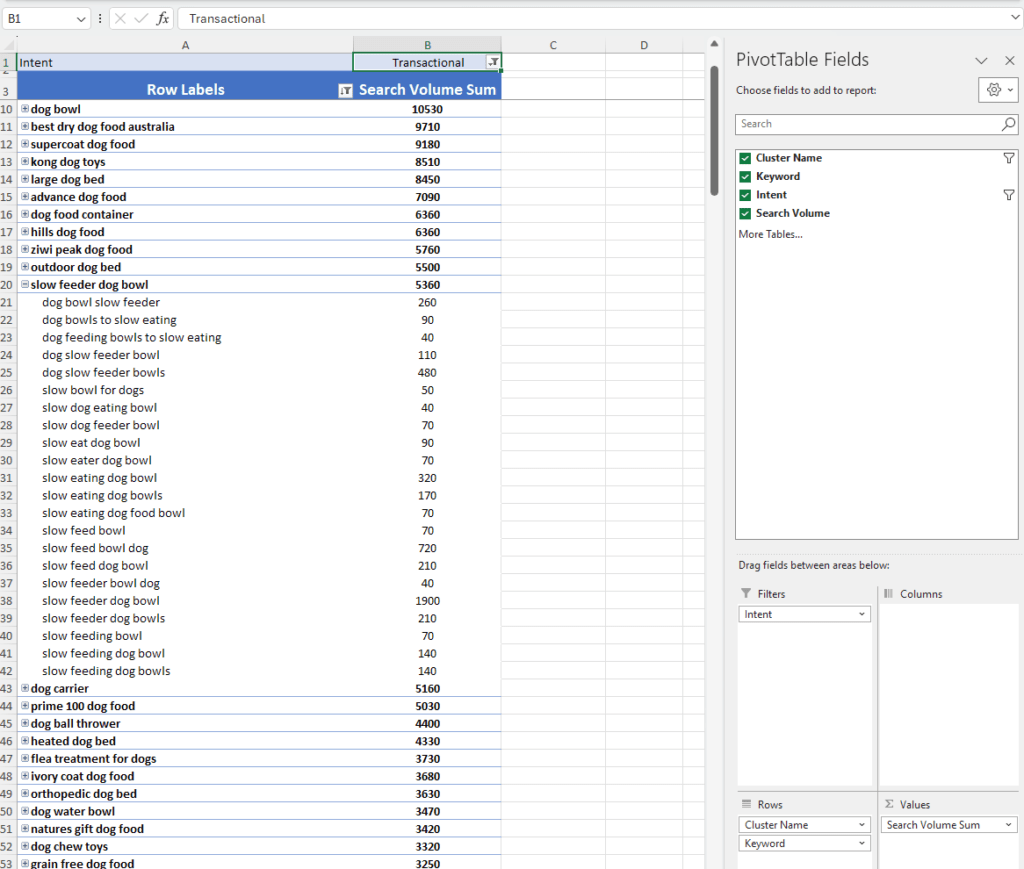

Many SEO experts, including Patrick Stox, Nik Ranger, Sally Mills, and others, are seeing success with similar methods. Here’s an example of how Sally has implemented this:

If you’re an in-house SEO or working on a large website, you need to find ways of automating tasks like topic clustering for a huge keyword list, especially if you’re working with a limited budget.

SEO is data-heavy. Without data analysis skills, creating comprehensive strategies that generate results for clients can be very challenging.

My take is that data analysis is essential for any type of data-driven marketing these days. However, to develop more advanced skills, it’s not so much a matter of looking at more data as it is about developing your mindset to find more interesting insights.

| Basic Insights | Advanced Insights |
|---|---|
| 53% of website visitors bounce | People who download X are 73% more likely to convert |
| Organic traffic grew by 231% | We’ve reduced time to conversion by doing Y |
| Our top-performing content is X | Based on YoY performance, we forecast 150% growth by doing Z |
| We’re ranking #1 for these keywords | |
| Our blog gets Y traffic from Google | |

The difference is that the basic insights on the left are readily available in your analytics software. You don’t have to look too hard to find these insights. Nor do you have to think too deeply about them.

They’re also not particularly helpful or actionable. When you share them with clients or managers, they often don’t know what the numbers mean or what decisions to make from them.

However, the insights on the right connect specific actions to their results and can be used to make a case for increasing the budget of an SEO project. They help non-SEO stakeholders see the steps you took to achieve a result and why investing in doing more of what worked makes sense.
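An insight like “people who download X are more likely to convert” comes down to comparing conversion rates between two groups of visitors. Here is a minimal sketch of that arithmetic. The visitor records, the field names, and the `relative_lift` helper are hypothetical, and a real analysis would also need to check sample size and causality.

```python
def conversion_rate(visitors):
    """Share of visitors in the group who converted."""
    if not visitors:
        return 0.0
    return sum(1 for v in visitors if v["converted"]) / len(visitors)


def relative_lift(visitors, flag):
    """How much more likely visitors with `flag` are to convert than those without."""
    with_flag = [v for v in visitors if v[flag]]
    without_flag = [v for v in visitors if not v[flag]]
    baseline = conversion_rate(without_flag)
    if baseline == 0:
        return None  # lift is undefined without a baseline
    return conversion_rate(with_flag) / baseline - 1


# Hypothetical data: 10 visitors downloaded the guide (4 converted),
# 20 did not download it (4 converted).
visitors = (
    [{"downloaded": True, "converted": True}] * 4
    + [{"downloaded": True, "converted": False}] * 6
    + [{"downloaded": False, "converted": True}] * 4
    + [{"downloaded": False, "converted": False}] * 16
)

lift = relative_lift(visitors, "downloaded")
print(f"Downloaders are {lift:.0%} more likely to convert")
```

With these made-up numbers, the downloaders convert at 40% versus a 20% baseline, which is the kind of statement a stakeholder can act on.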


The type of data you gather to create your SEO strategy can also be the difference between doing basic SEO vs advanced SEO.

For example, here are some different data sources to consider:

| Common Data Sources | Advanced Data Sources |
|---|---|
| Google Analytics | CRM for customer data |
| Google Search Console | Sales pipeline software for sales data |
| An SEO platform like Ahrefs | Accounting software for revenue data |
| | Internal dashboards for product data |

Don’t get me wrong; you can still make an advanced SEO strategy using the data sources on the left.

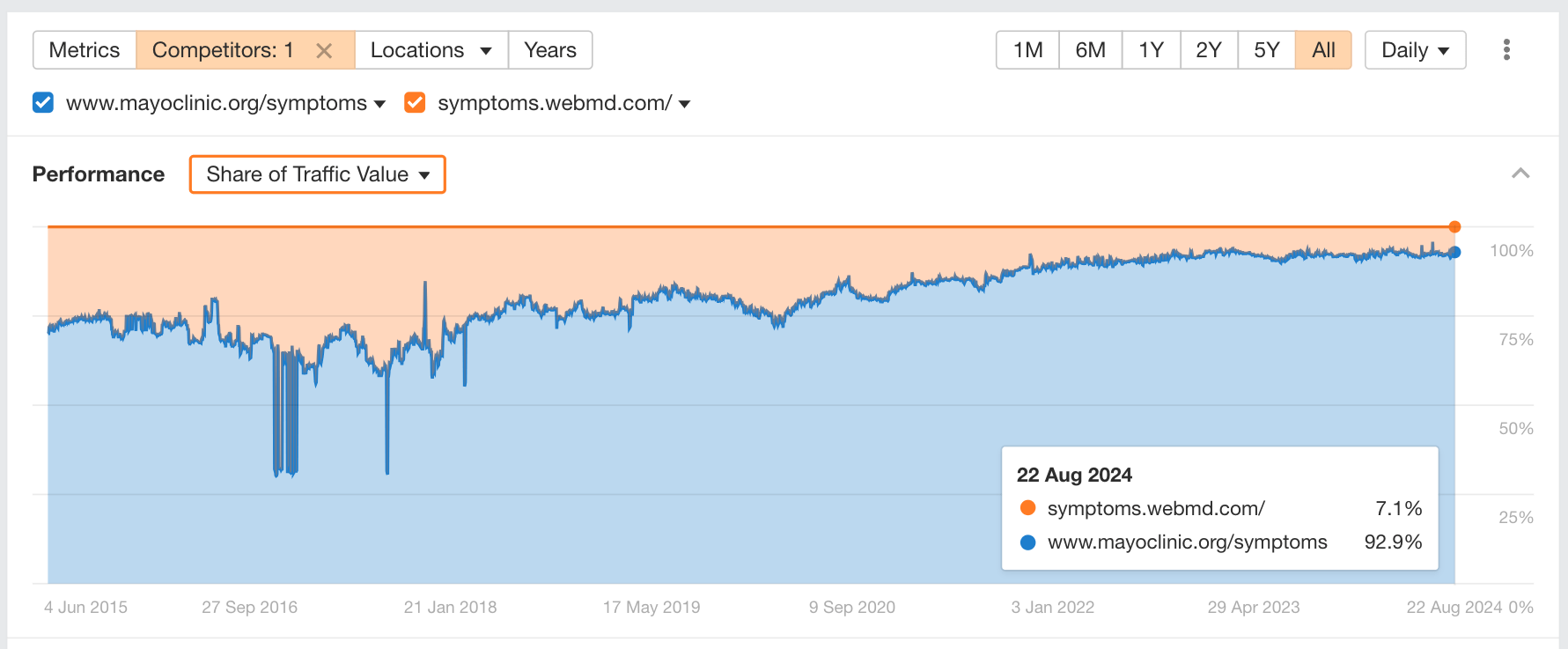

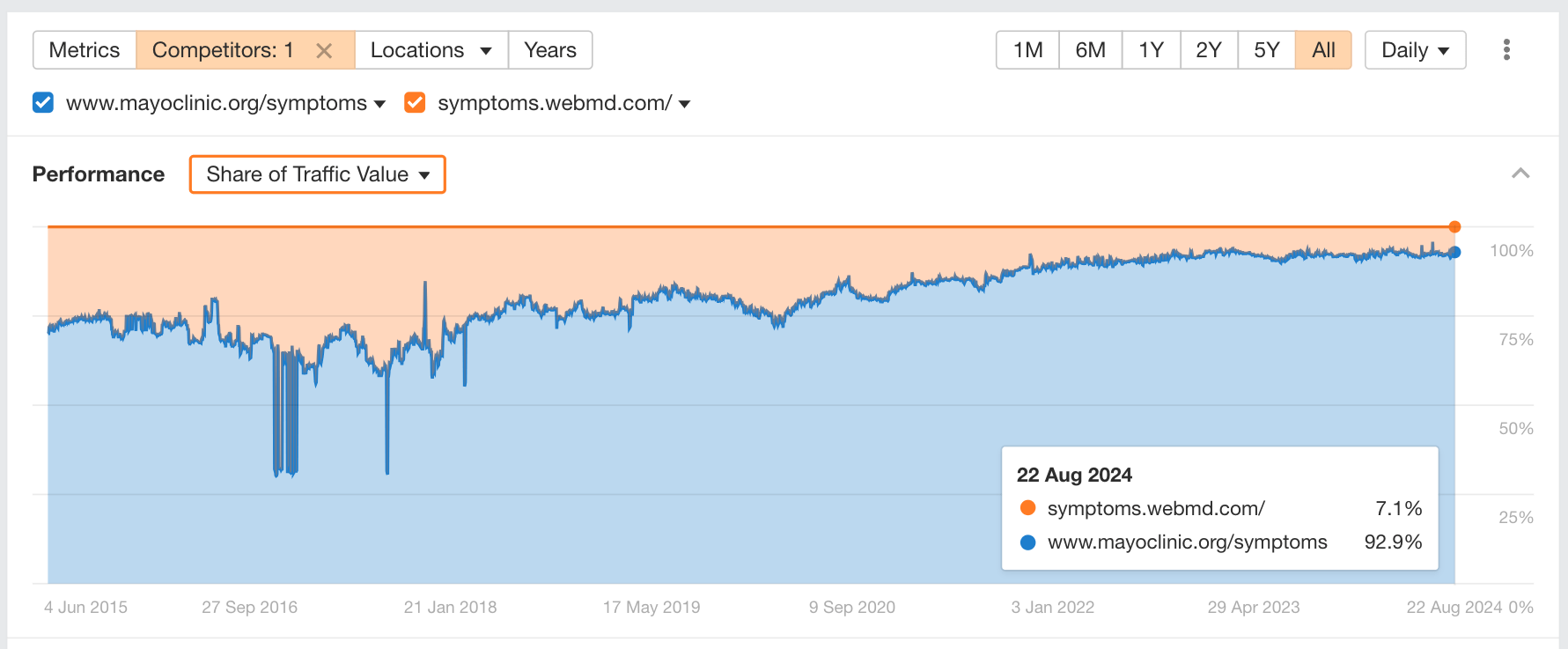

For example, with Ahrefs, you can use metrics like Share of Traffic Value to see where your competitors are gaining market share.

The analysis that would follow is not basic by any means.

But you can also go in the wrong direction if you don’t incorporate a brand’s internal data into your strategy.

For example, many SEO professionals don’t think much of a brand’s unique selling points. Maybe they include these in landing page copy, but that’s it.

However, advanced practitioners recognize product data and USPs as a goldmine for SEO. It’s how we’re able to:

- Do strategic keyword and intent analysis

- Target meaningful keywords with low competition

- Validate keyword opportunities with product or brand managers

- Ensure the SEO strategy aligns with the brand’s product-market fit

It’s critical to understand your product USP and do strategic keyword research. It’s how you’ll find low-competition, meaningful queries that are equally relevant from a business standpoint. This seems easy and rather “basic,” but it is not, and it’s definitely not the type of task I would leave with a junior SEO.

For example, here’s how I implemented this for one of my aged care clients.

This particular brand had invested millions into a state-of-the-art facility that offered hotel-like quality of service and care. It was important to them that their residents maintained a sense of independence and dignity while also receiving the best care available in their city.

When you hear that, you probably don’t think that their services are affordable or low-cost, right?

But here’s the thing. By gathering data from government sources, I was able to identify that this facility offered rooms that were 50% larger and 33% cheaper on average than other facilities in their city.
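The comparison itself is simple arithmetic once the data is collected: take the average across competing facilities and measure how far the client sits from it. The sketch below uses invented placeholder numbers, not the client’s figures or the government data, and the `pct_difference` helper is hypothetical.

```python
from statistics import mean


def pct_difference(value, baseline):
    """Percentage difference of `value` relative to `baseline`."""
    return (value - baseline) / baseline * 100


# Invented placeholder figures for other facilities in the same city.
competitor_room_sizes_m2 = [20, 24, 22, 22]
competitor_daily_prices = [250, 270, 230, 250]

# Invented placeholder figures for the client.
client_room_size_m2 = 27.5
client_daily_price = 200

size_diff = pct_difference(client_room_size_m2, mean(competitor_room_sizes_m2))
price_diff = pct_difference(client_daily_price, mean(competitor_daily_prices))

print(f"Room size vs city average: {size_diff:+.0f}%")
print(f"Price vs city average: {price_diff:+.0f}%")
```

A positive size difference paired with a negative price difference is the pattern that points to a price-led value proposition.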

Uncovering this value proposition opened up a lot of potential for SEO, especially since price is a common concern for my client’s customers.

The data gathered allowed the client to consider a price-related content strategy that we would have otherwise ignored.

They had the potential to:

- Answer common pricing questions

- Overcome price-based objections before people book a tour

- Correct people’s assumptions that the service is likely out of their budget

- Position themselves based on the unique value they offer

- Increase the number of tours booked because price assumptions were no longer a blocker

Not gonna lie; when I first saw people’s responses that using ChatGPT = advanced SEO, I had a couple of “what on earth?!” moments.

However, when I asked a few SEO experts and speakers in Ahrefs Evolve’s lineup, I uncovered some pretty cool advanced use cases of AI and machine learning for SEO.

In addition to Sally’s clustering method above, here are some other examples.

Automate multi-lingual keyword translation

One of my favorite use cases of AI for SEO is for translating keywords to fast-track international SEO.

Ahrefs’ new AI keyword translator makes this process quick, smooth, and easy by:

- Automatically translating your entire list of keywords with one click

- Preserving local lingo and the nuances of each dialect

- Allowing you to see search metrics for each translated variation

- Helping you discover multiple translation options for each keyword

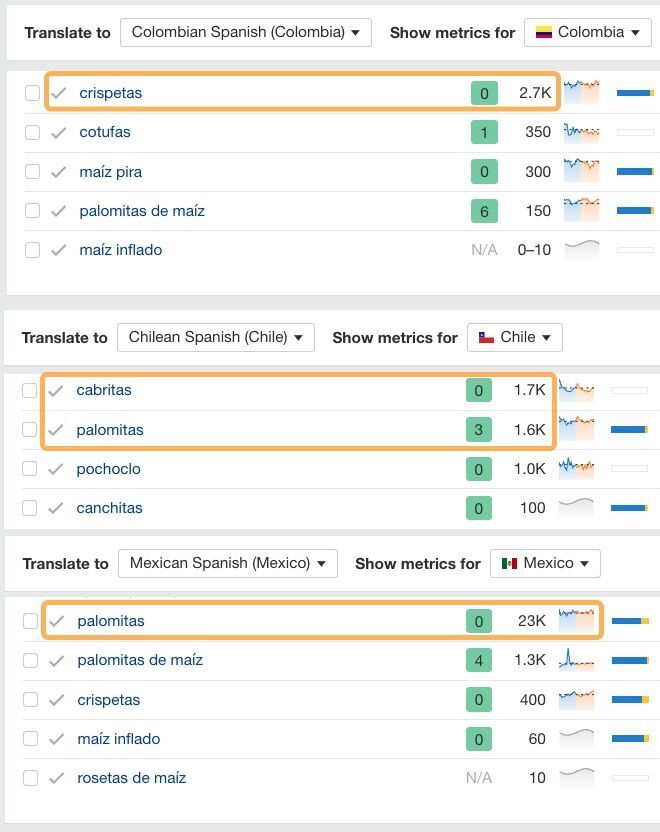

For example, there are dozens of ways to say “popcorn” in Spanish dialects.

Instead of offering a single option, our translator shows you multiple variations to consider while also localizing these variations to your target region!

Since international SEO was one of the top “advanced” SEO skills mentioned, any way you can make the process easier and faster is a bonus.

AI can also help you squeeze more juice out of a tight budget by streamlining your workflow and saving hours on tasks that would otherwise be very time-intensive.

Automate redirect mapping with AI

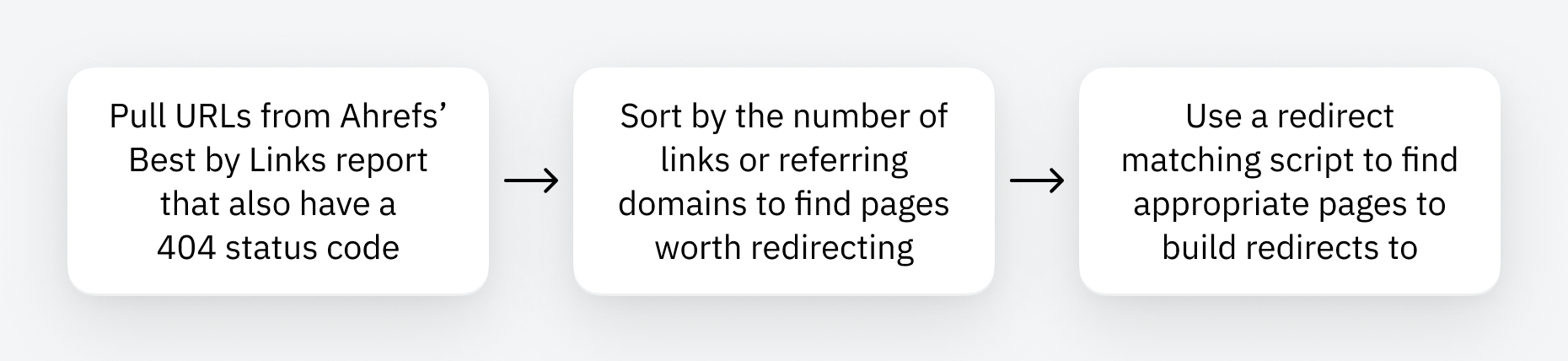

I love Patrick’s approach to automating redirects for large sites. In a nutshell, the process looks like this:

If this use case sounds interesting, feel free to check out the exact redirect-matching script he uses. Once configured, it automatically runs through the above process for you.
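To give a feel for what automated redirect matching involves, here is a deliberately simple sketch. It is not Patrick’s script and does not use AI: it swaps in plain fuzzy string matching on URL paths via Python’s standard `difflib` module. The URLs, the `map_redirects` helper, and the 0.6 cutoff are assumptions for illustration.

```python
from difflib import get_close_matches
from urllib.parse import urlparse


def map_redirects(old_urls, new_urls, cutoff=0.6):
    """Suggest a redirect target for each old URL based on path similarity."""
    new_by_path = {urlparse(url).path.strip("/"): url for url in new_urls}
    mapping = {}
    for old in old_urls:
        old_path = urlparse(old).path.strip("/")
        # Best single candidate whose similarity ratio is at least `cutoff`.
        match = get_close_matches(old_path, list(new_by_path), n=1, cutoff=cutoff)
        mapping[old] = new_by_path[match[0]] if match else None
    return mapping


# Hypothetical URLs from a site migration.
old_urls = [
    "https://old.example.com/blog/seo-tips-2020",
    "https://old.example.com/about",
    "https://old.example.com/xyz123",
]
new_urls = [
    "https://example.com/blog/seo-tips",
    "https://example.com/pricing",
    "https://example.com/about-us",
]

redirects = map_redirects(old_urls, new_urls)
for old, new in redirects.items():
    print(old, "->", new or "NO MATCH (review manually)")
```

Path matching breaks down when slugs change completely, which is where comparing titles or page content becomes useful. Unmatched URLs are returned as `None` so they can be reviewed by hand.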

Internal link optimization, en masse

Internal linking is an area folks are automating with AI in different ways.

For instance, Kashif Riaz uses Gemini + ChatGPT to read his sitemap and develop contextually relevant anchor texts for each URL that he can use for internal links.

As a simple process, you could:

- Give ChatGPT a URL

- Ask it for relevant phrases that would be good as internal link anchors

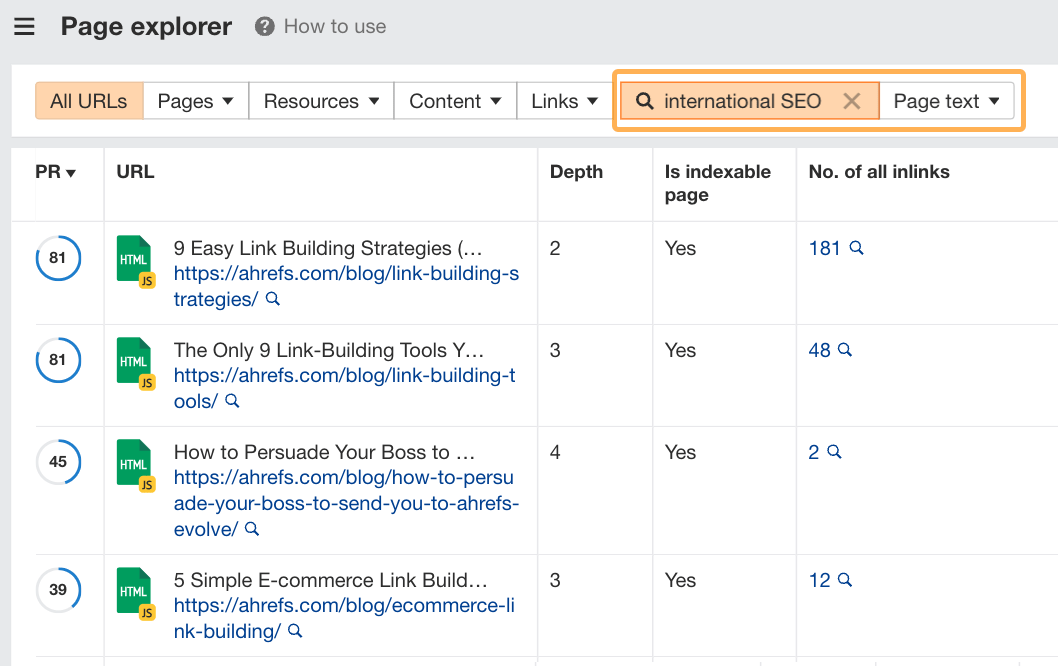

- Search Ahrefs’ Page Explorer for pages that include these phrases

Page Explorer is a report within Site Audit. You can search for any phrase in your website’s page text and get a list of pages that include it.
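If you have your page text in a crawl export, the phrase search step can be approximated locally. The sketch below is an assumption-heavy stand-in, not how Page Explorer works: the `pages` dictionary, the URLs, and the `find_link_opportunities` helper are hypothetical.

```python
import re


def find_link_opportunities(pages, target_url, anchor_phrases):
    """Find pages (other than the target) whose text mentions an anchor phrase."""
    opportunities = []
    for url, text in pages.items():
        if url == target_url:
            continue  # a page should not link to itself
        for phrase in anchor_phrases:
            pattern = r"\b" + re.escape(phrase) + r"\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                opportunities.append((url, phrase))
    return opportunities


# Hypothetical crawl export: URL -> page text.
pages = {
    "/blog/keyword-research": "Keyword research starts with seed keywords.",
    "/blog/link-building": "Good content earns backlinks, and keyword research helps you plan it.",
    "/blog/technical-seo": "Crawl budget matters for large sites.",
}

found = find_link_opportunities(
    pages,
    target_url="/blog/keyword-research",
    anchor_phrases=["keyword research", "seed keywords"],
)
for source, phrase in found:
    print(f"{source}: link the phrase '{phrase}' to /blog/keyword-research")
```

Each result is a page that already mentions the phrase, so adding the link usually needs no rewriting.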

It’s a great way to jumpstart internal linking for a new site that isn’t already ranking for keywords.

If you’re working on a site with URLs like example.com/363863852itwiy (ew, I feel for you), you can also try a more advanced approach.

Nik Ranger blew my mind when I first saw her present on how she and the team at Dejan SEO are using machine learning to automate internal linking at a large scale.

For instance, their innovative process:

- Creates a link graph of an existing website

- Pairs the link graph with Search Console data

- Uses vector embeddings to incorporate a page’s title, headings, and content into recommendations

- Recommends internal links and anchor text

The model they’ve built can recommend internal links even if the URLs don’t contain any words or contextual clues. Normally, finding appropriate internal links on sites with these issues is incredibly challenging, but clever uses of AI make it a lot easier!
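The core idea of recommending links from content rather than from URLs can be shown with a toy example. This is not the Dejan SEO model: word-count vectors stand in for learned embeddings, the Search Console step is left out, and the pages, the link graph, and the `recommend_links` helper are all hypothetical.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy 'embedding': a word-count vector (a stand-in for a learned embedding)."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def recommend_links(pages, existing_links, min_score=0.3):
    """Suggest (source, target, score) links between similar pages not yet linked."""
    vectors = {url: embed(text) for url, text in pages.items()}
    recommendations = []
    for source in pages:
        for target in pages:
            if source == target or (source, target) in existing_links:
                continue
            score = cosine(vectors[source], vectors[target])
            if score >= min_score:
                recommendations.append((source, target, round(score, 2)))
    return sorted(recommendations, key=lambda rec: rec[2], reverse=True)


# Hypothetical pages with opaque URLs: only the content carries meaning.
pages = {
    "/p/363863852": "oat milk recipes and oat milk nutrition",
    "/p/918273645": "oat milk nutrition facts",
    "/p/555000111": "garden hose repair",
}
# Existing link graph as (source, target) pairs.
existing_links = {("/p/363863852", "/p/918273645")}

recs = recommend_links(pages, existing_links)
for source, target, score in recs:
    print(f"{source} -> {target} (similarity {score})")
```

Because the comparison uses page content, the recommendation works even though the URLs contain no words at all.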

As large language models improve their contextual understanding of language, we’ll likely see more innovative use cases for SEO like this.

Quality content was mentioned quite a lot overall.

I struggled to see how creating quality content is an “advanced” strategy. To me, it’s an essential cornerstone of modern SEO, and you can’t get results without it.

However, I really liked the approaches some folks are taking to increase the revenue generation potential of their content strategies. This is what I think separates a basic content plan from an advanced one.

For example, the following content strategies were mentioned in the context of delivering results:

- Product-led content (which we covered above)

- Programmatic SEO (of the non-spammy variety)

- Bottom-of-funnel content (to increase revenue)

- Mid-funnel content (to get an edge over competitors)

I think [advanced SEO] is coming up with site-wide SEO strategies that move the needle – like creating new sections, doing programmatic content for BOFU keywords, doing product-led SEO on a site-wide level.

Incorporating other channels, like social media and Reddit, was mentioned by a number of people.

This one surprised me as something people consider “advanced” SEO. Mainly because, for a long time, Google has been the dominant platform SEO folks focus on. But I’m all for this shift. I think it’s been a long time coming.

Using Reddit for keyword research

I really liked Andy Chadwick’s process for using Reddit to find golden keyword opportunities. His process is based on the premise that people ask for the same thing in many different ways.

He uses this redundancy to his advantage by:

- Identifying information gaps he can easily close

- Finding low-competition keywords that are overlooked by competitors

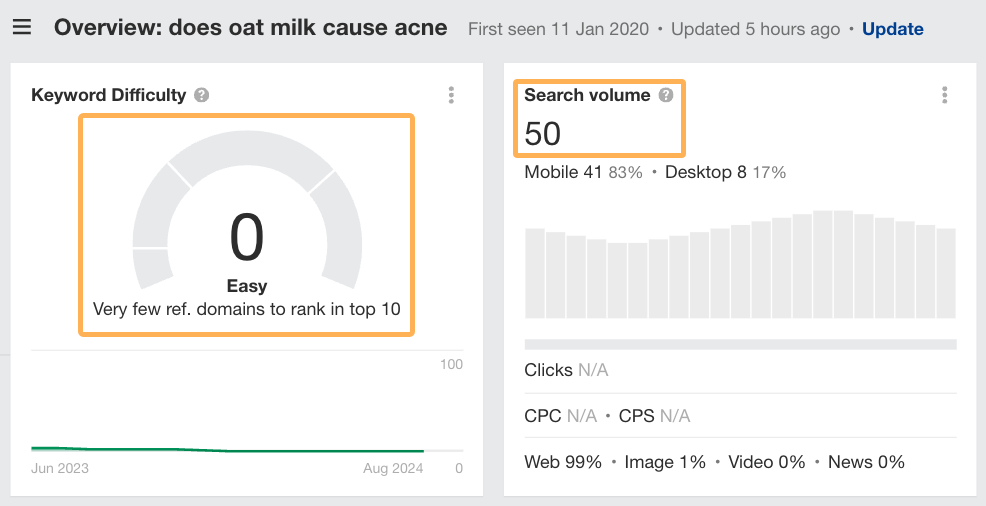

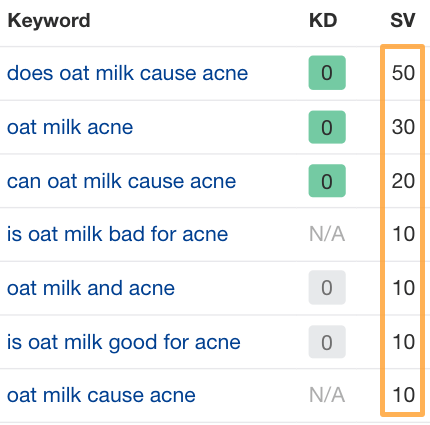

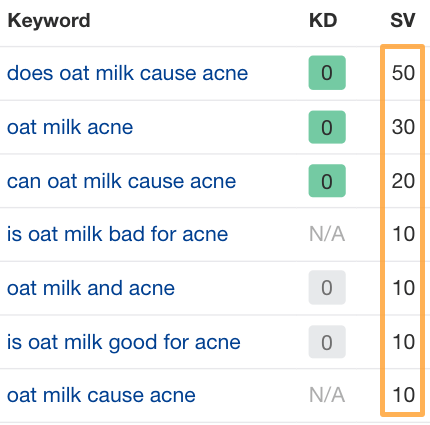

For instance, he shares a great example of the keyword “does oat milk cause acne”…

At first glance, it has a seemingly low search volume.

However, this is deceptive. People search for answers to this question in a bunch of different ways, and the sum of all their searches yields a much higher monthly search volume.
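The arithmetic behind this is just a sum across phrasings. In the sketch below, every search volume is an invented placeholder rather than real keyword data, and the variant phrasings and the `topic_demand` helper are hypothetical.

```python
# Invented placeholder volumes for different phrasings of one question.
variant_volumes = {
    "does oat milk cause acne": 150,
    "oat milk acne": 400,
    "can oat milk cause breakouts": 90,
    "is oat milk bad for your skin": 250,
}


def topic_demand(volumes, head_keyword):
    """Compare the head keyword's volume with the total across all phrasings."""
    total = sum(volumes.values())
    head = volumes[head_keyword]
    return {
        "head_volume": head,
        "total_volume": total,
        "multiplier": round(total / head, 1),
    }


demand = topic_demand(variant_volumes, "does oat milk cause acne")
print(demand)
```

The multiplier shows how much demand is hidden if you only look at a single phrasing.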

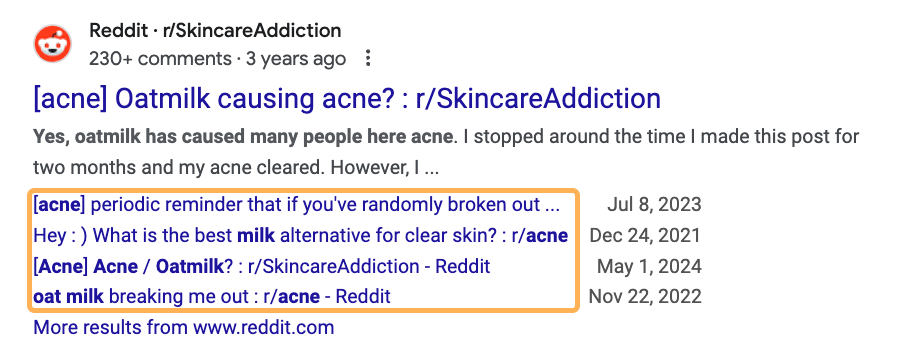

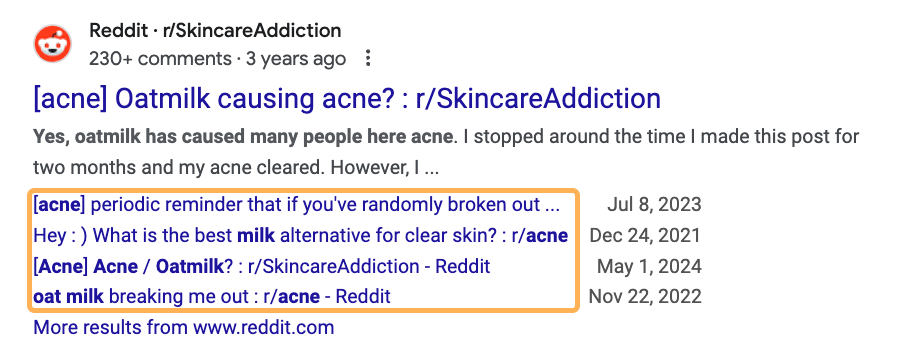

Not to mention that Reddit ranks in the top three positions, with many people asking the same question in different ways:

What’s more fascinating, though, is that there’s no competition for it.

With a difficulty score of 0, it shouldn’t be too difficult for a new skincare brand to rank for this topic above more established competitors. It can also join the conversations on Reddit and provide a clear and trustworthy answer to plug this information gap.

This strategy, rolled out across dozens of similar low-competition topics, can become an easy way for new or emerging brands to enter a competitive market.

Using social media for SEO

You can also use other social channels to help your SEO efforts. At Ahrefs, we use multiple channels, like YouTube, LinkedIn, Facebook, and X, to reach our audience.

I reached out to Sam, the VP of Marketing here at Ahrefs. He also runs our YouTube channel, and he shared some tips you can try out if you want to expand your SEO skills by integrating social media.

As a simple process, you can start by:

- Finding video keywords that relate to your existing blog content

- Repurposing your blog content into videos

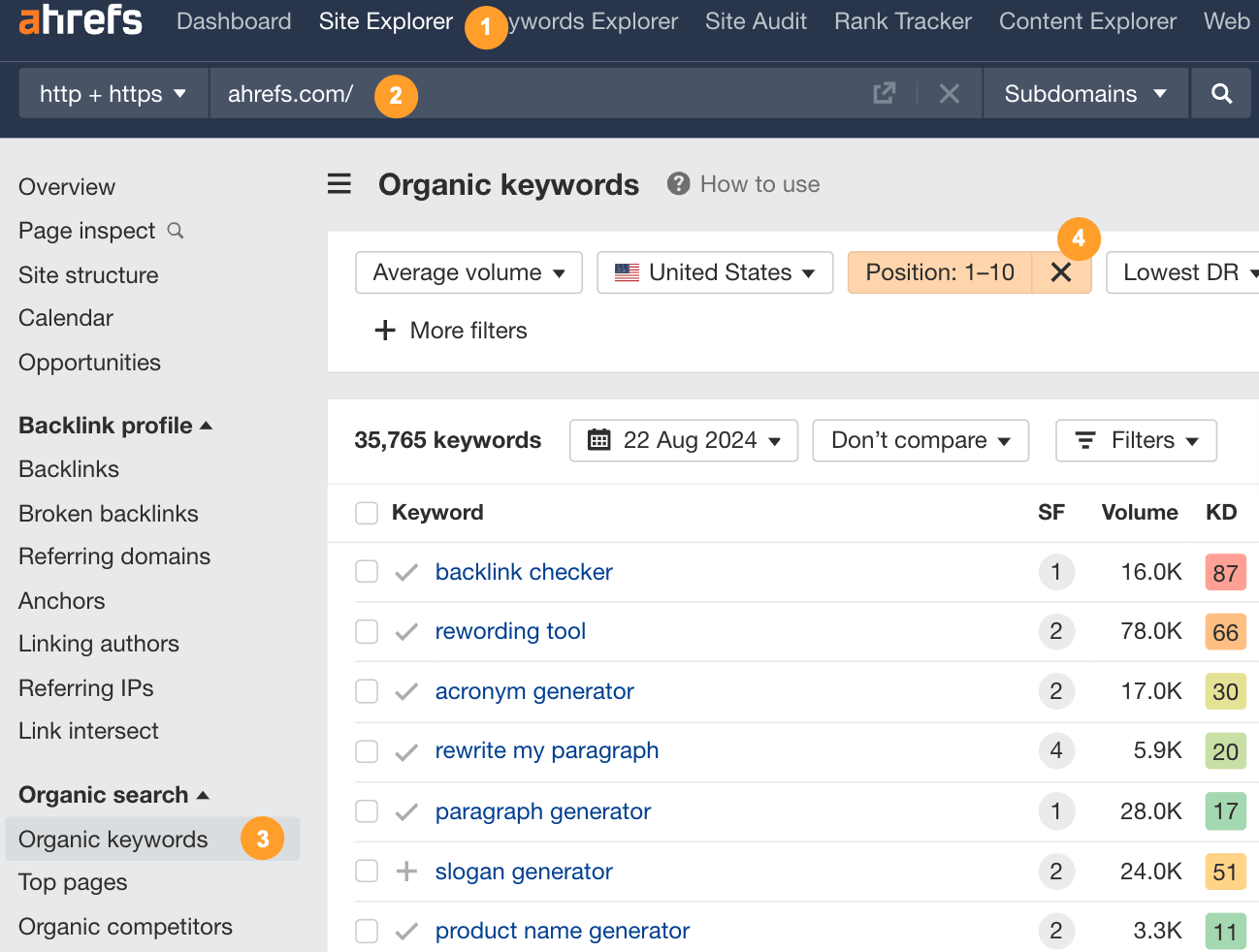

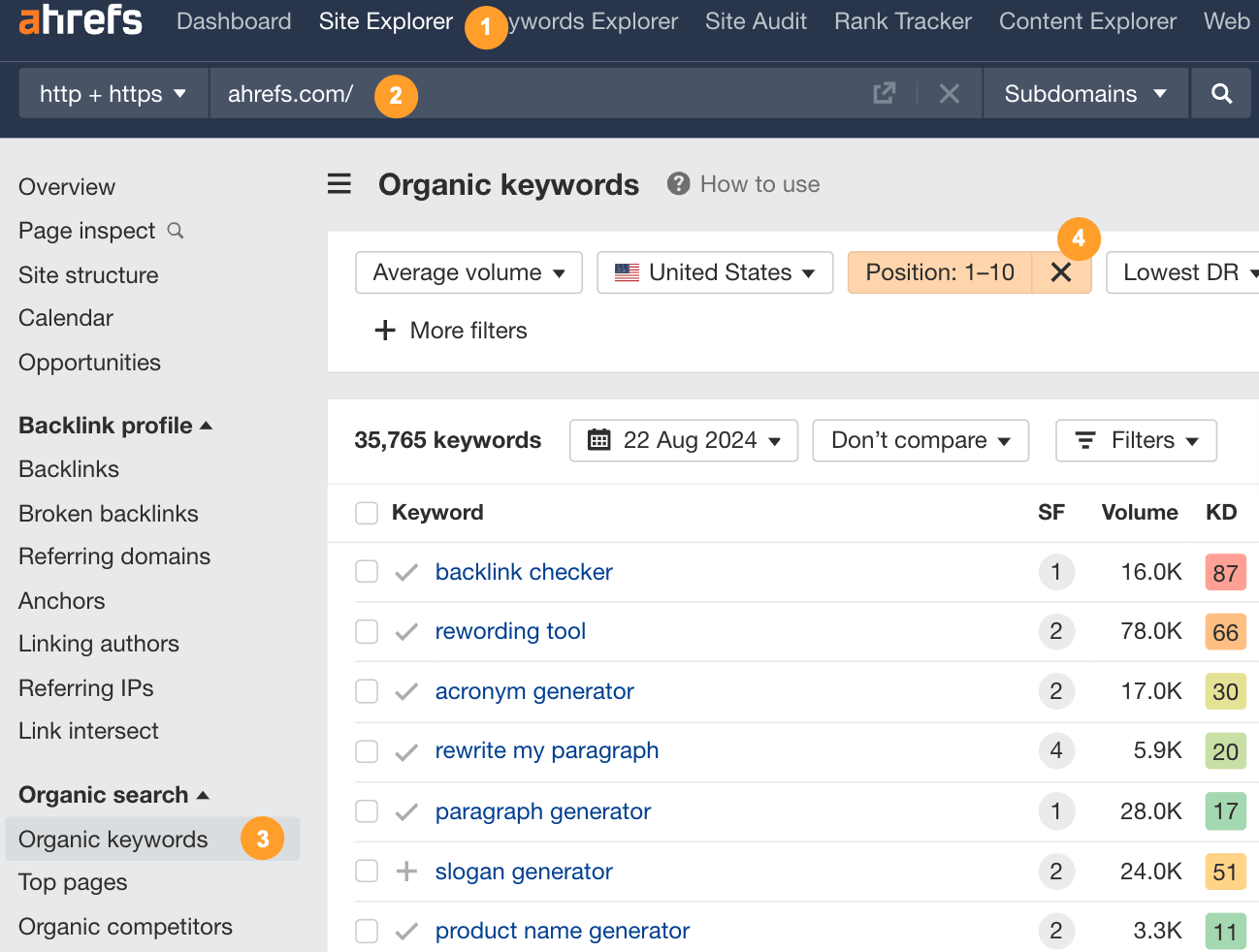

For instance, check out your website’s organic keywords in Ahrefs Site Explorer and filter for keywords in the top 10 positions only.

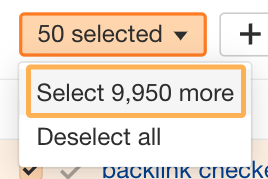

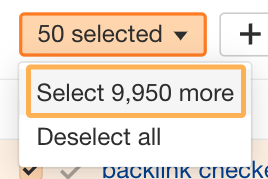

Remove any branded keywords and then copy the top 10,000 keywords:

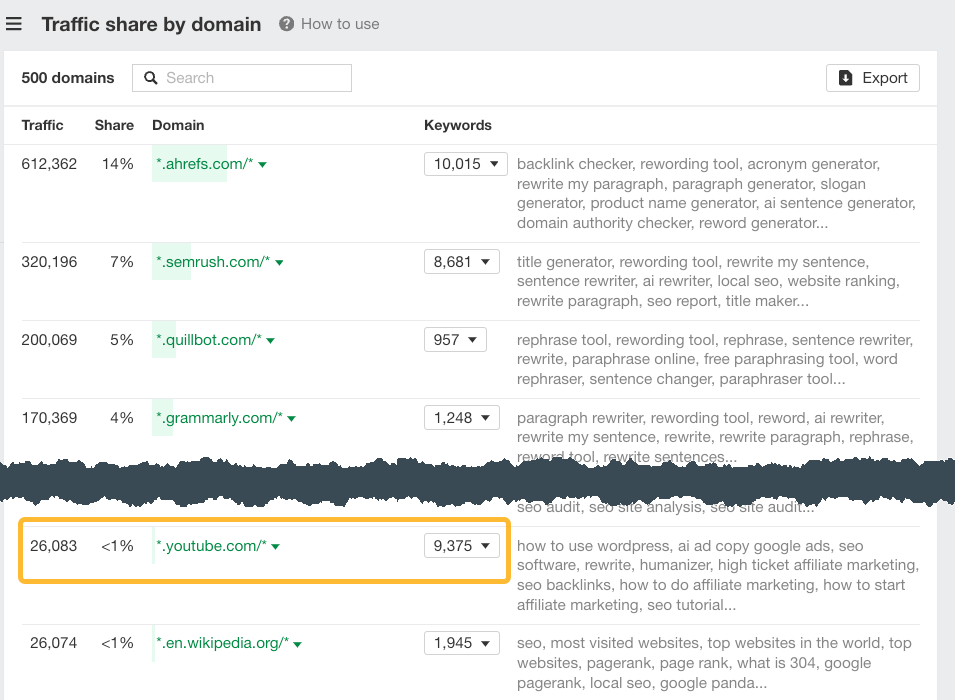

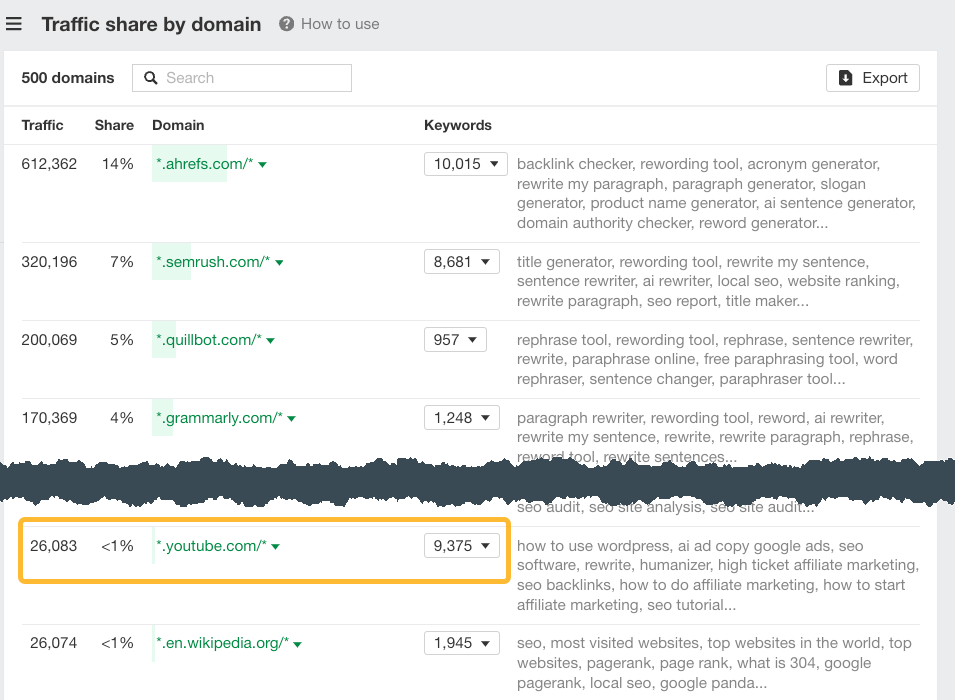

Pop these into Keywords Explorer and check out the Traffic share by domain report for an idea of how well YouTube ranks for keywords in this list:

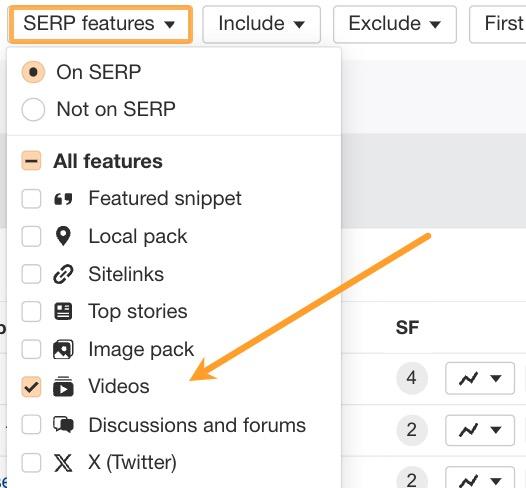

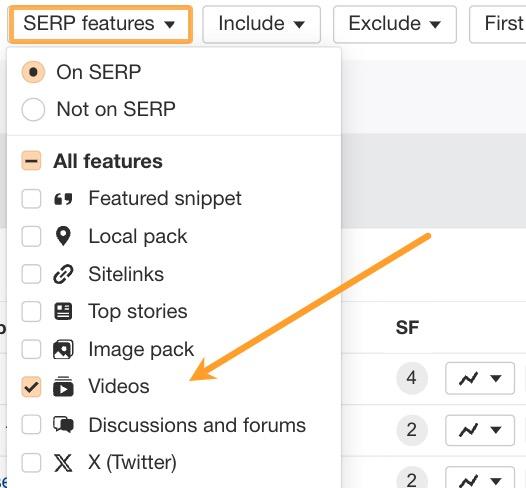

You can also use the SERP feature filter and include only keywords that contain a video on the SERP:

Doing this gives you a feel for how video-friendly a particular topic is. You can also create a list of exact keywords to target with a video strategy.
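If you export the keyword list, the same three filters (top 10 positions, no branded terms, video on the SERP) are easy to reproduce in a script. This is a rough sketch: the field names, the sample rows, the brand term, and the `video_candidates` helper are hypothetical and do not reflect the actual Ahrefs export format.

```python
# Hypothetical brand terms to exclude.
BRAND_TERMS = {"acme"}

# Hypothetical export rows: keyword, current ranking position, SERP features.
rows = [
    {"keyword": "acme login", "position": 1, "serp_features": ["sitelinks"]},
    {"keyword": "how to tie a tie", "position": 4, "serp_features": ["video", "people also ask"]},
    {"keyword": "tie length guide", "position": 8, "serp_features": ["featured snippet"]},
    {"keyword": "bow tie tutorial", "position": 14, "serp_features": ["video"]},
]


def video_candidates(rows, brand_terms, max_position=10):
    """Keep non-branded keywords ranking in the top positions with a video on the SERP."""
    picked = []
    for row in rows:
        words = set(row["keyword"].lower().split())
        if row["position"] > max_position:
            continue  # not ranking in the top positions
        if words & brand_terms:
            continue  # branded keyword
        if "video" not in row["serp_features"]:
            continue  # no video result on this SERP
        picked.append(row["keyword"])
    return picked


candidates = video_candidates(rows, BRAND_TERMS)
print(candidates)
```

The output is the shortlist of topics where you already rank and where searchers are also shown video results.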

This method has helped Ahrefs get loads of high-retention views from search. We also pair this with native YouTube optimization to increase our organic visibility within the YouTube ecosystem.

But, when it comes to creating social media content from blogs, it’s important that you don’t just re-word the blog post. You’ve got to match the content to the platform to retain your audience’s attention.

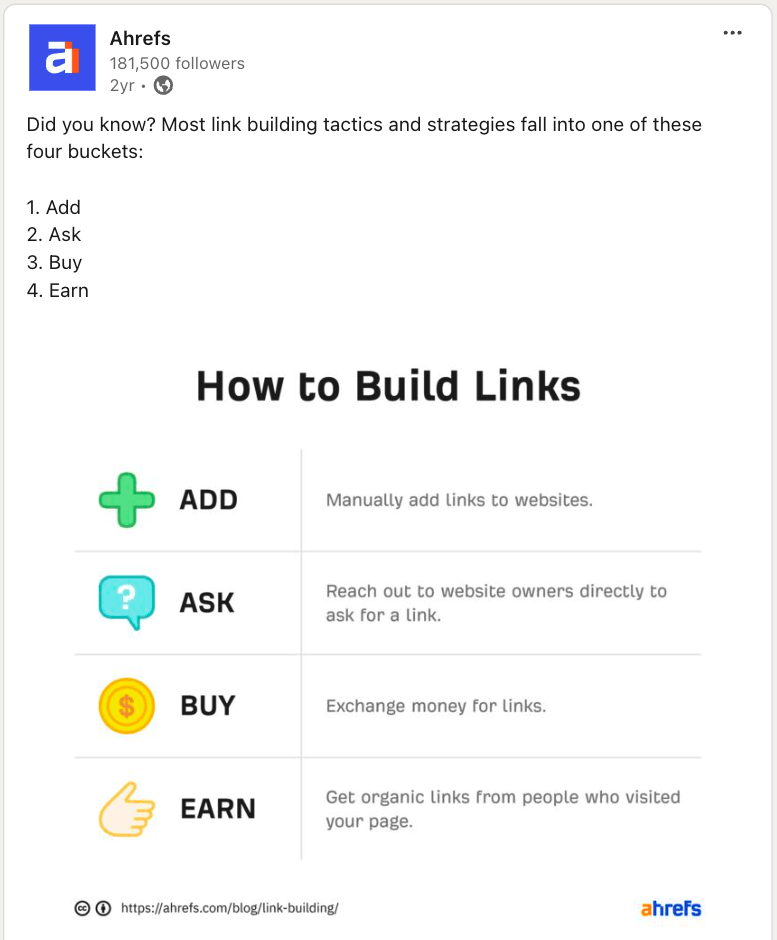

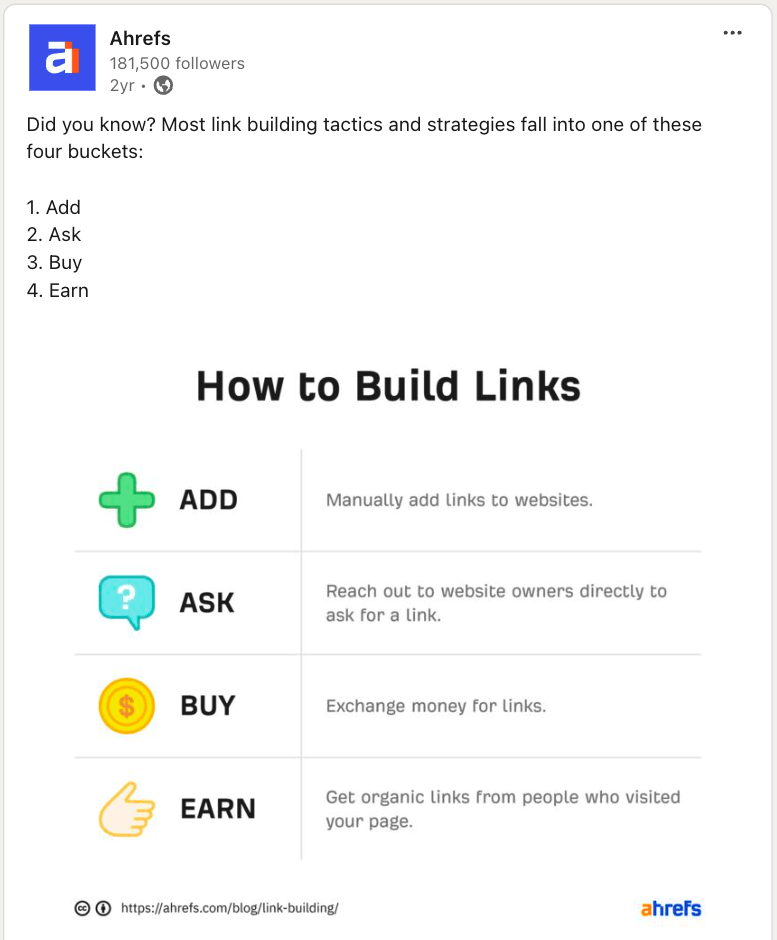

For example, we’ve published a handful of long-form blog posts on link building strategies and tactics. Each covers different angles, like:

However, Sam has also created a video but has selected an angle that’s a better fit for audiences on YouTube:

And, we’ve also published many social posts about it, adapting the content to fit the native audience of each platform, like this short and sweet LinkedIn post:

When integrating social media into your strategy, the end goal will often be about more than just rankings.

In most cases, converting someone, or at least leading them closer to a conversion, is more important.


Key takeaways

Advanced SEO means different things to different people. However, one thing’s clear.

Those who go beyond the basics are the ones who not only deliver better results to clients but also unlock more opportunities to grow in their careers.

If you’d like to learn more advanced SEO tips from experts, come to Ahrefs Evolve 😉

Most of the experts mentioned in this post will be there sharing their latest and greatest advice.

Having said that, it’s also important to remember the basics. Never cut corners because you’re chasing some new “advanced” tactic. Rather, add advanced skills on top of the foundation of the tried-and-true fundamentals.

I’m not a fan of tactics, but I am all about consistently doing the basics – doing keyword research, creating solid content, making sure they are SEO friendly, and working hard to acquire links. Doing this alone has lasted us decades in SEO, avoiding all the Google penalties along the way!

If you’ve got thoughts to share or advanced techniques working well for you right now, reach out on LinkedIn.
