The 6 Biggest SEO Challenges You’ll Face in 2024

Seen any stressed-out SEOs recently? If so, that’s because they’ve got their work cut out this year.

Between navigating Google’s never-ending algorithm updates, fighting off competitors, and getting buy-in for projects, there are many significant SEO challenges to consider.

So, which ones should you focus on? Here are the six biggest ones I think you should pay close attention to.

Make no mistake—Google’s algorithm updates can make or break your site.

Core updates, spam updates, helpful content updates—you name it, they can all impact your site’s performance.

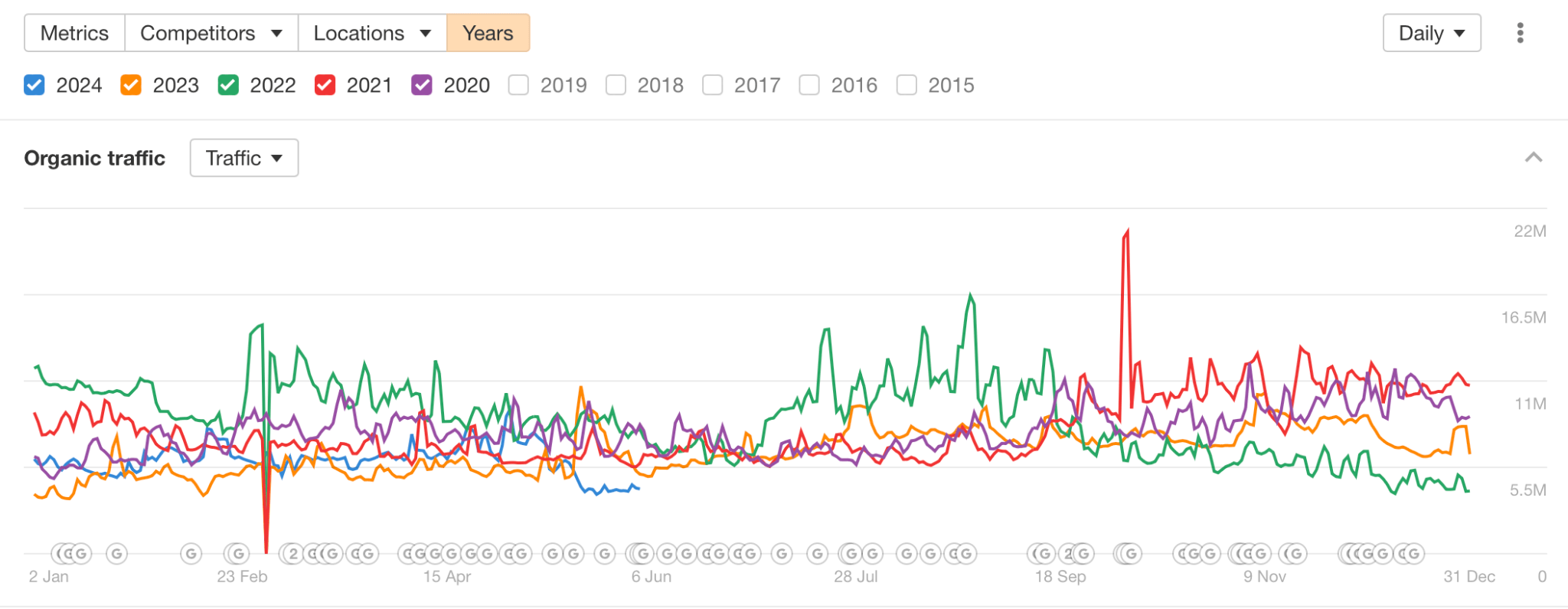

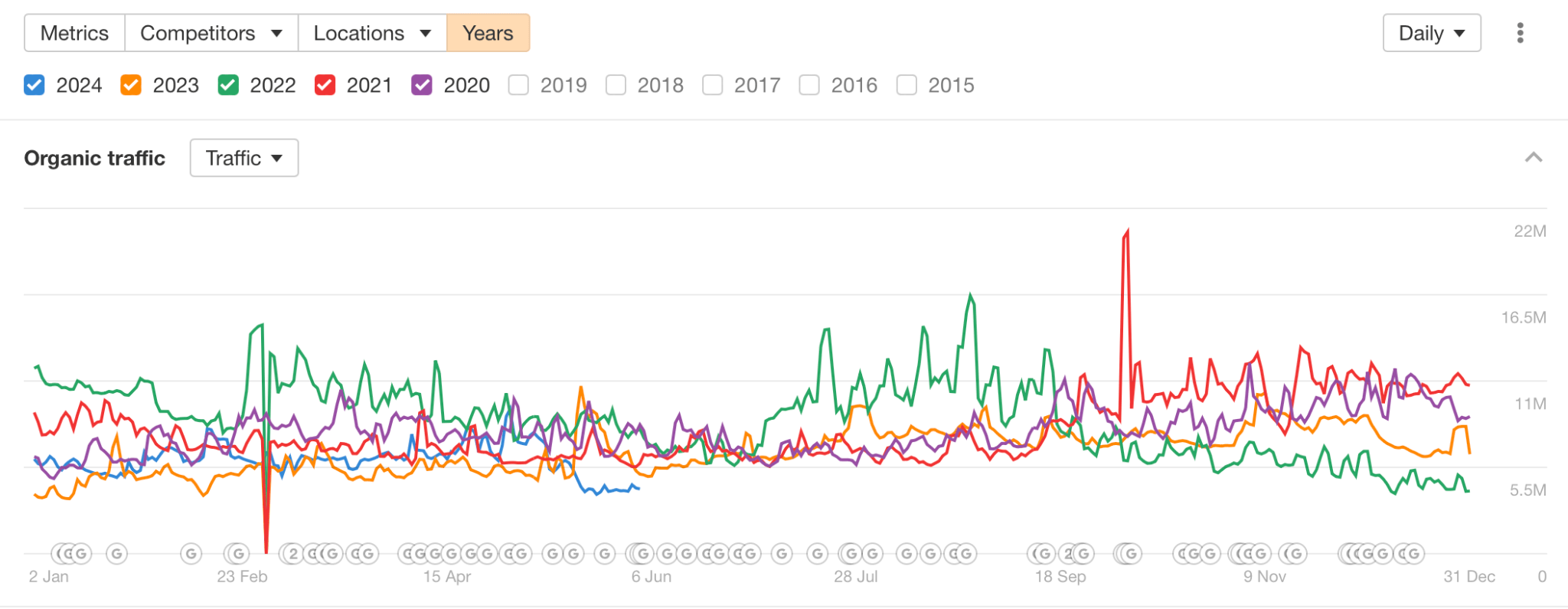

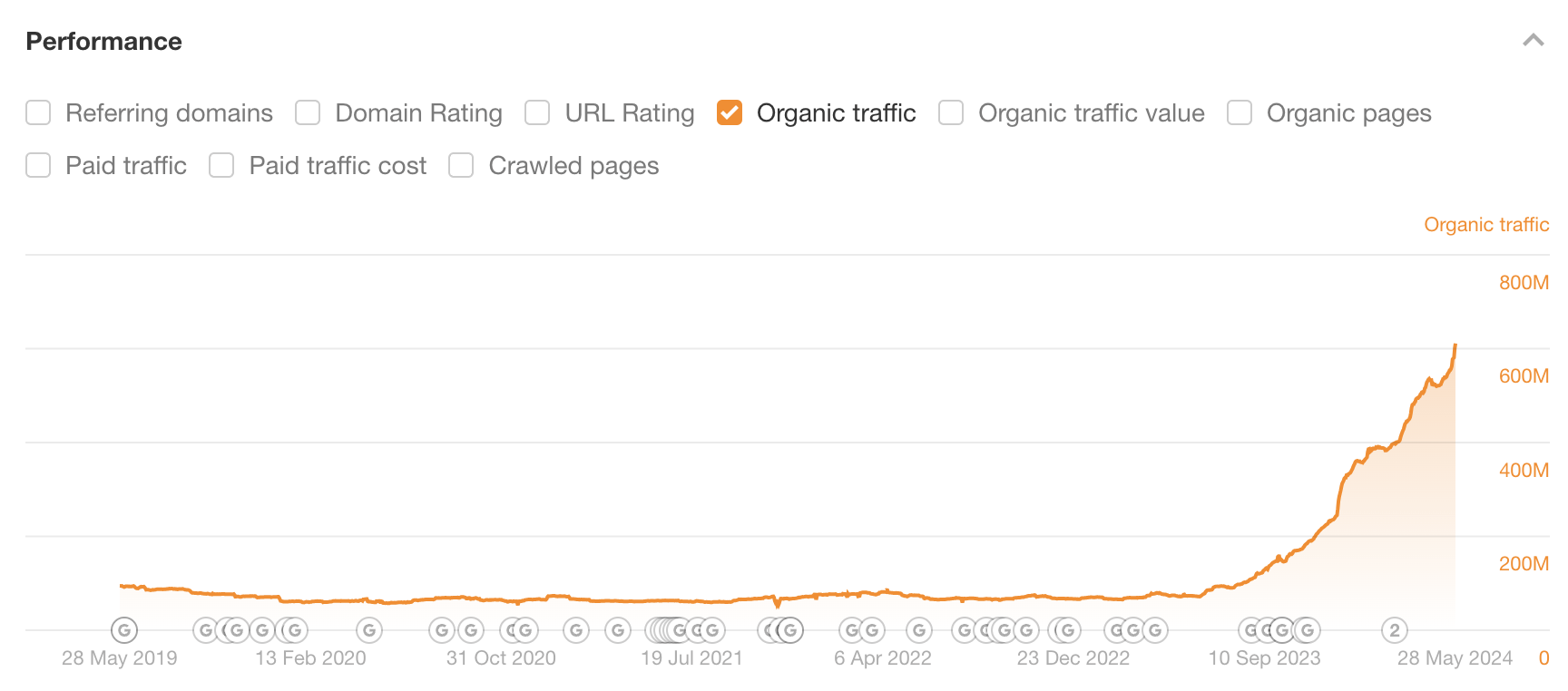

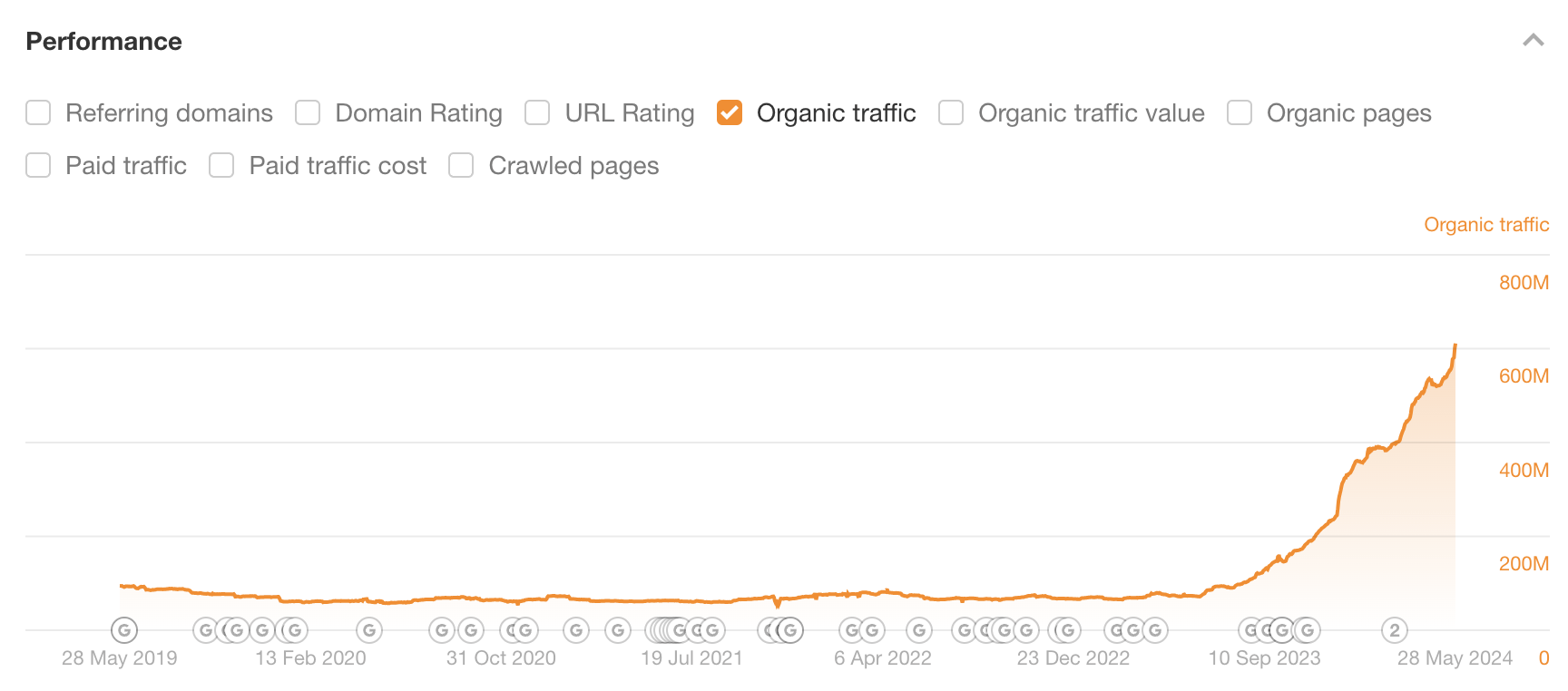

As we can see below, the frequency of Google updates has increased in recent years, meaning that the likelihood of being impacted by a Google update has also increased.

How to deal with it:

Recovering from a Google update isn’t easy—and sometimes, websites that get hit by updates may never fully recover.

For the reasons outlined above, most businesses try to stay on the right side of Google and avoid incurring Google’s wrath.

SEOs do this by following Google’s Search Essentials and SEO best practices, and by avoiding risky black hat SEO tactics. But sadly, even if you think you’ve done this, there is no guarantee that you won’t get hit.

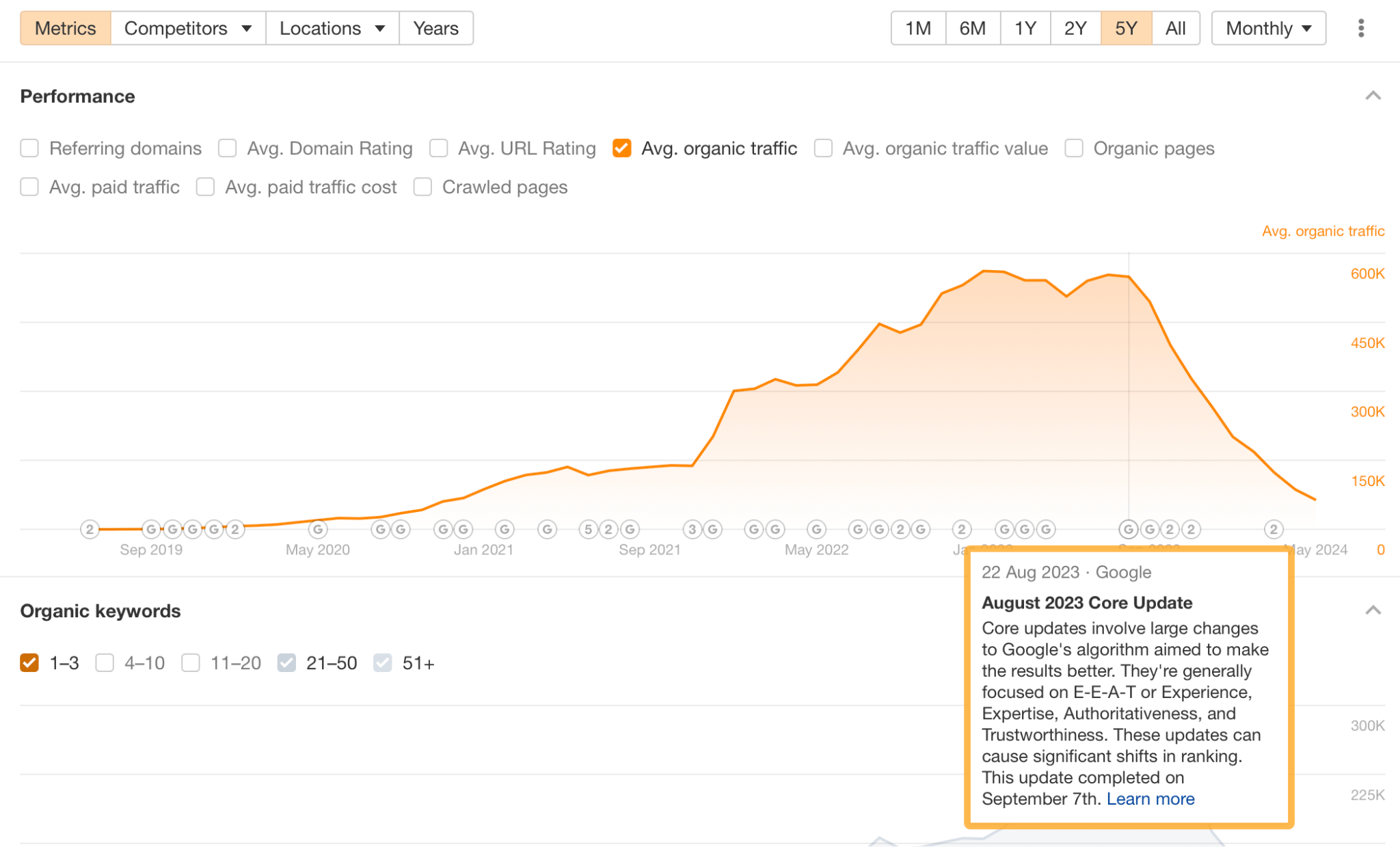

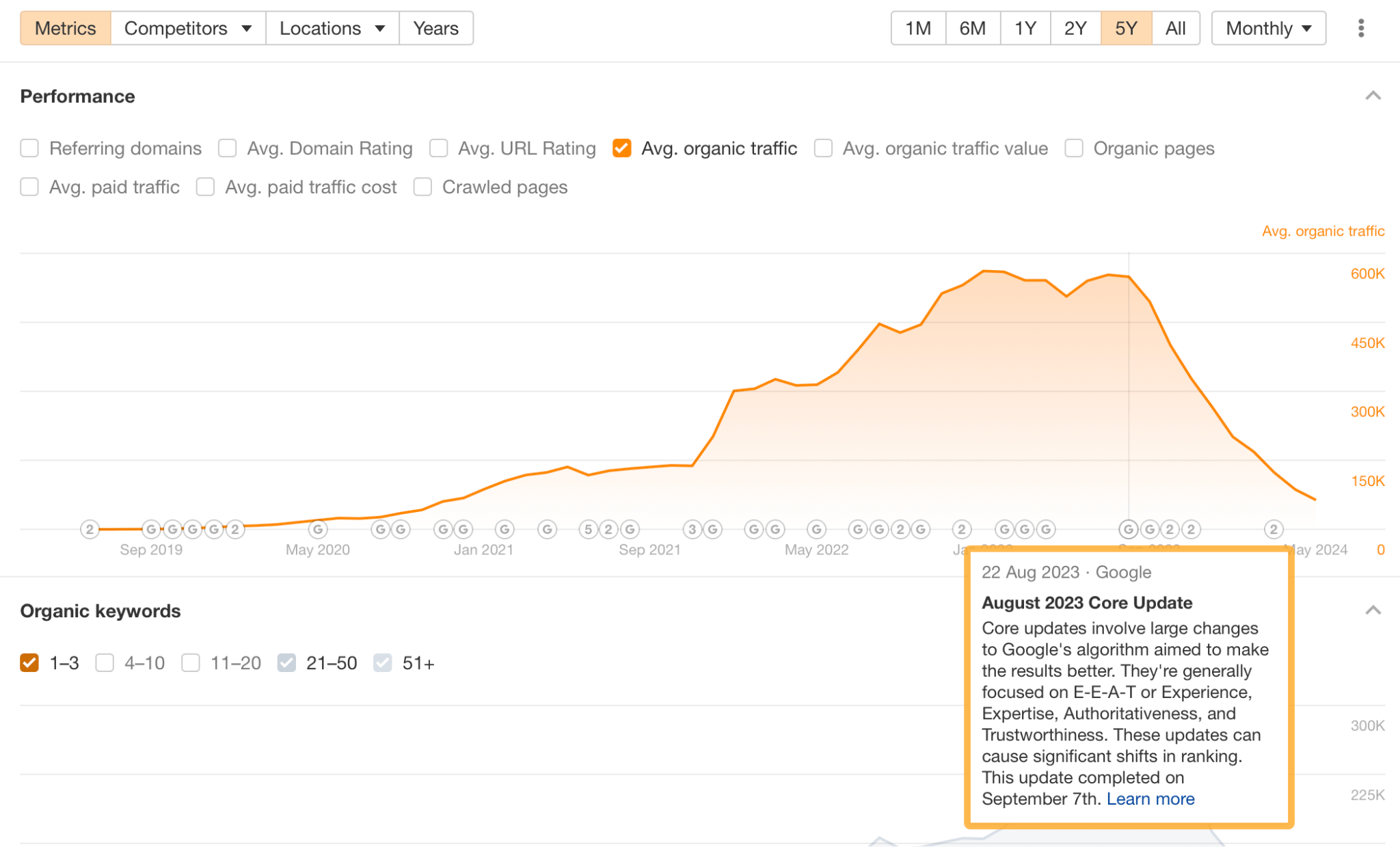

If you suspect a website has been impacted by a Google update, the fastest way to check is to plug the domain into Ahrefs’ Site Explorer.

Here’s an example of a website likely affected by Google’s August 2023 Core Update. The traffic drop started on the update’s start date.

From this screen, you can see if a drop in traffic correlates with a Google update. If there is a strong correlation, then that update may have hit the site. To remedy it, you will need to understand the update and take action accordingly.
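If you’d rather sanity-check the correlation yourself, here’s a minimal Python sketch. It assumes you have exported daily organic traffic into a date-to-visits mapping; the function name, the 14-day window, the update date, and the traffic numbers are all made up for illustration and don’t come from any Ahrefs export format.

```python
from datetime import date, timedelta
from statistics import mean


def traffic_change_around(daily_traffic, update_start, window_days=14):
    """Relative change in mean daily traffic after an update starts.

    daily_traffic: dict mapping date -> organic visits (hypothetical export).
    Returns e.g. -0.3 for a 30% drop versus the window before the update.
    """
    window = timedelta(days=window_days)
    before = [v for d, v in daily_traffic.items()
              if update_start - window <= d < update_start]
    after = [v for d, v in daily_traffic.items()
             if update_start <= d < update_start + window]
    if not before or not after:
        raise ValueError("not enough data around the update date")
    baseline = mean(before)
    if baseline == 0:
        raise ValueError("no traffic before the update to compare against")
    return (mean(after) - baseline) / baseline


# Placeholder date and made-up traffic; look up the real start date
# of the update you are investigating.
update_start = date(2023, 8, 22)
traffic = {
    update_start + timedelta(days=i): (1000 if i < 0 else 700)
    for i in range(-14, 14)
}
change = traffic_change_around(traffic, update_start)
print(f"Change after update: {change:.0%}")
```

A sharp drop that starts on the update’s start date is a hint, not proof. Seasonality, tracking changes, or a site migration can produce the same pattern.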

Follow SEO best practices

It’s important your website follows SEO best practices so you can understand why it has been affected and determine what you need to do to fix things.

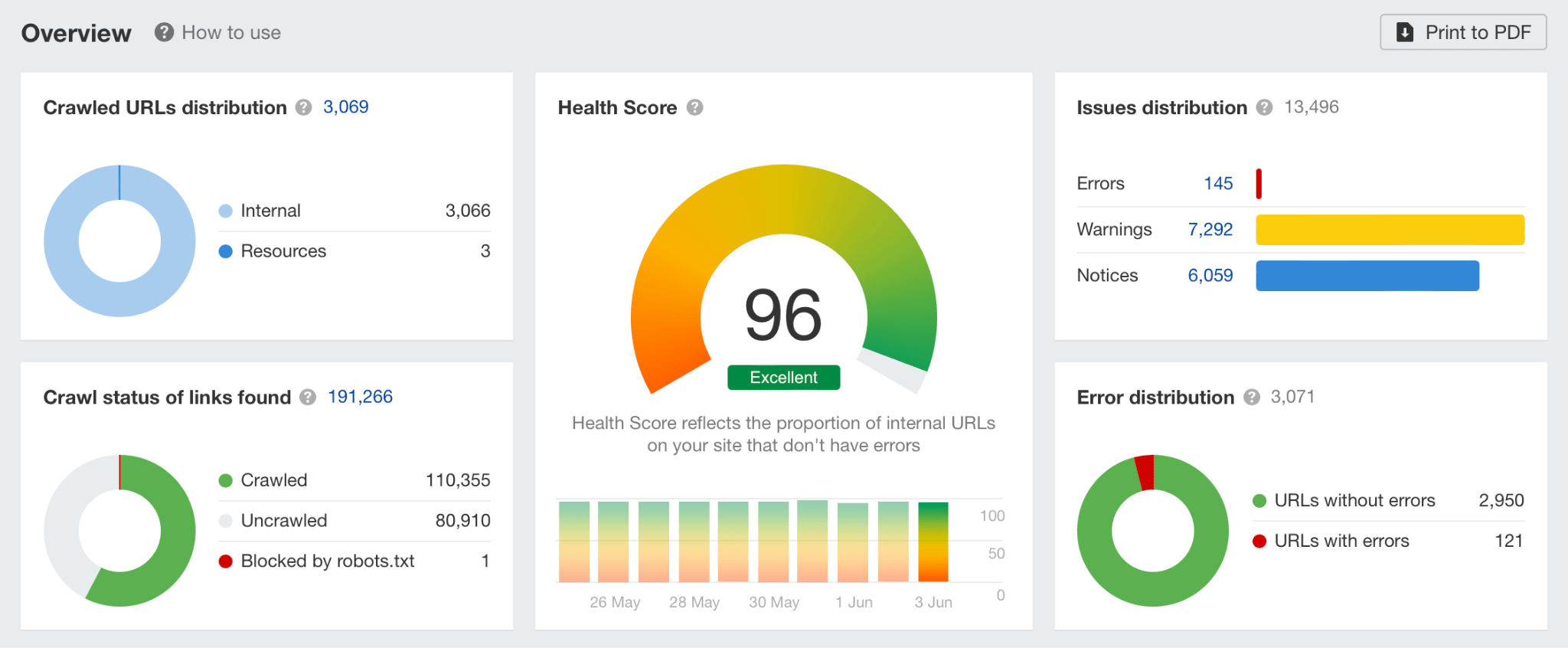

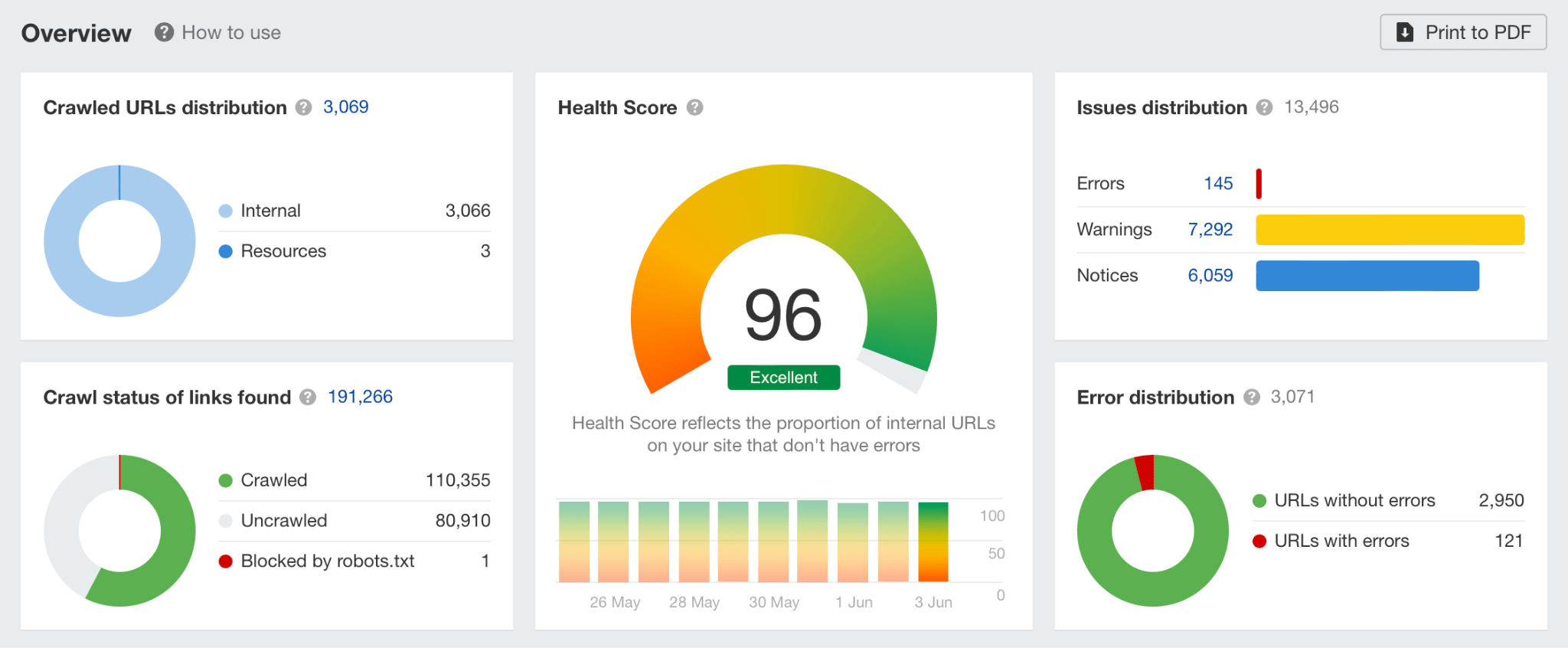

For example, you might have missed significant technical SEO issues impacting your website’s traffic. To rule this out, it’s worth using Site Audit to run a technical crawl of your website.

Monitor the latest SEO news

In addition to following best practices, it’s a good idea to monitor the latest SEO news. You can do this through various social media channels like X or LinkedIn, but I find the two websites below to be some of the most reliable sources of SEO news.

Even if you escape Google’s updates unscathed, you’ve still got to deal with your competitors vying to steal your top-ranking keywords from right under your nose.

This may sound grim, but it’s a mistake to underestimate them. Most of the time, they’ll be trying to improve their website’s SEO just as much as you are.

And these days, your competitors will:

How to deal with it:

If you want to stay ahead of your competitors, you need to do these two things:

Spy on your competitors and monitor their strategy

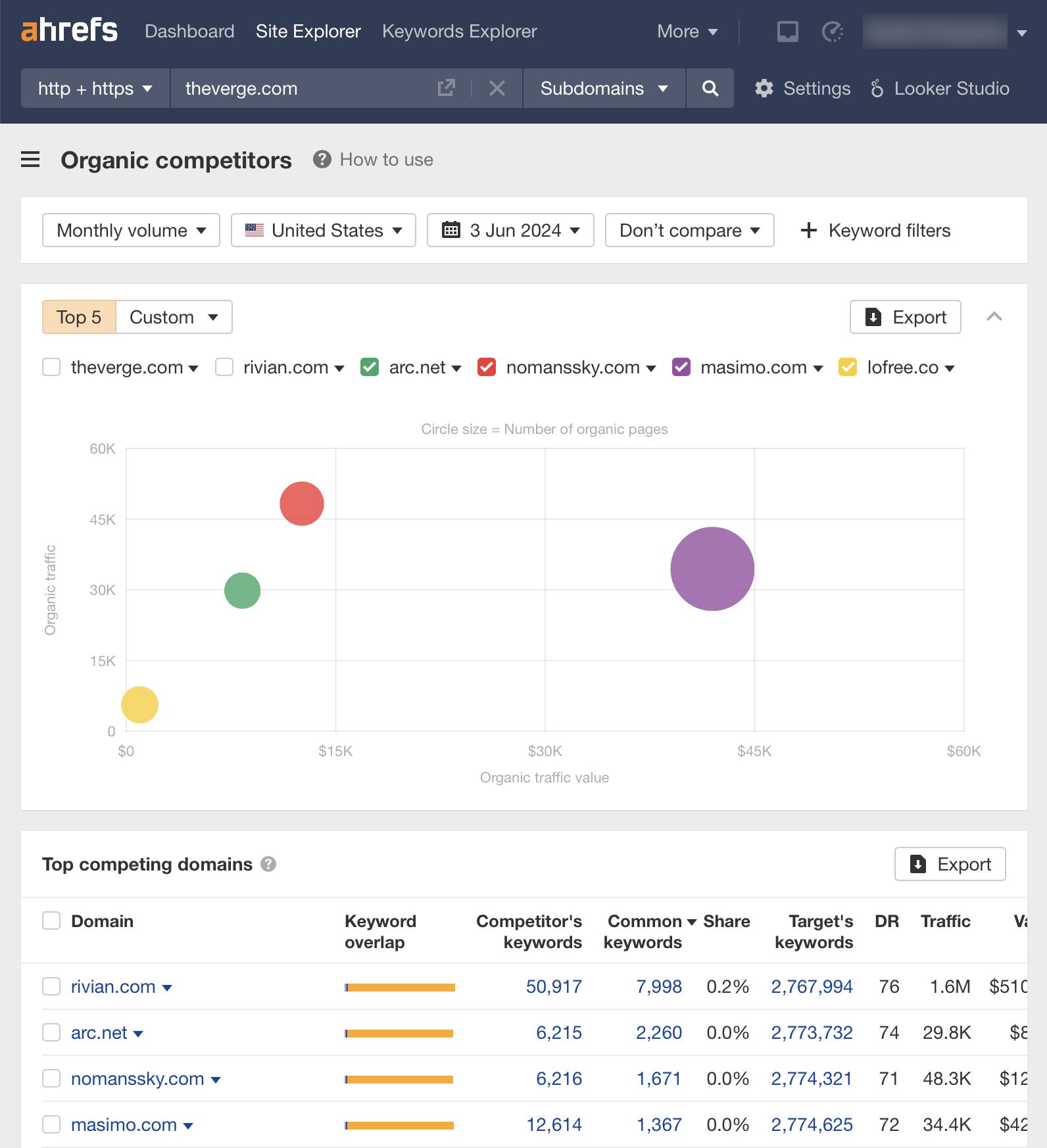

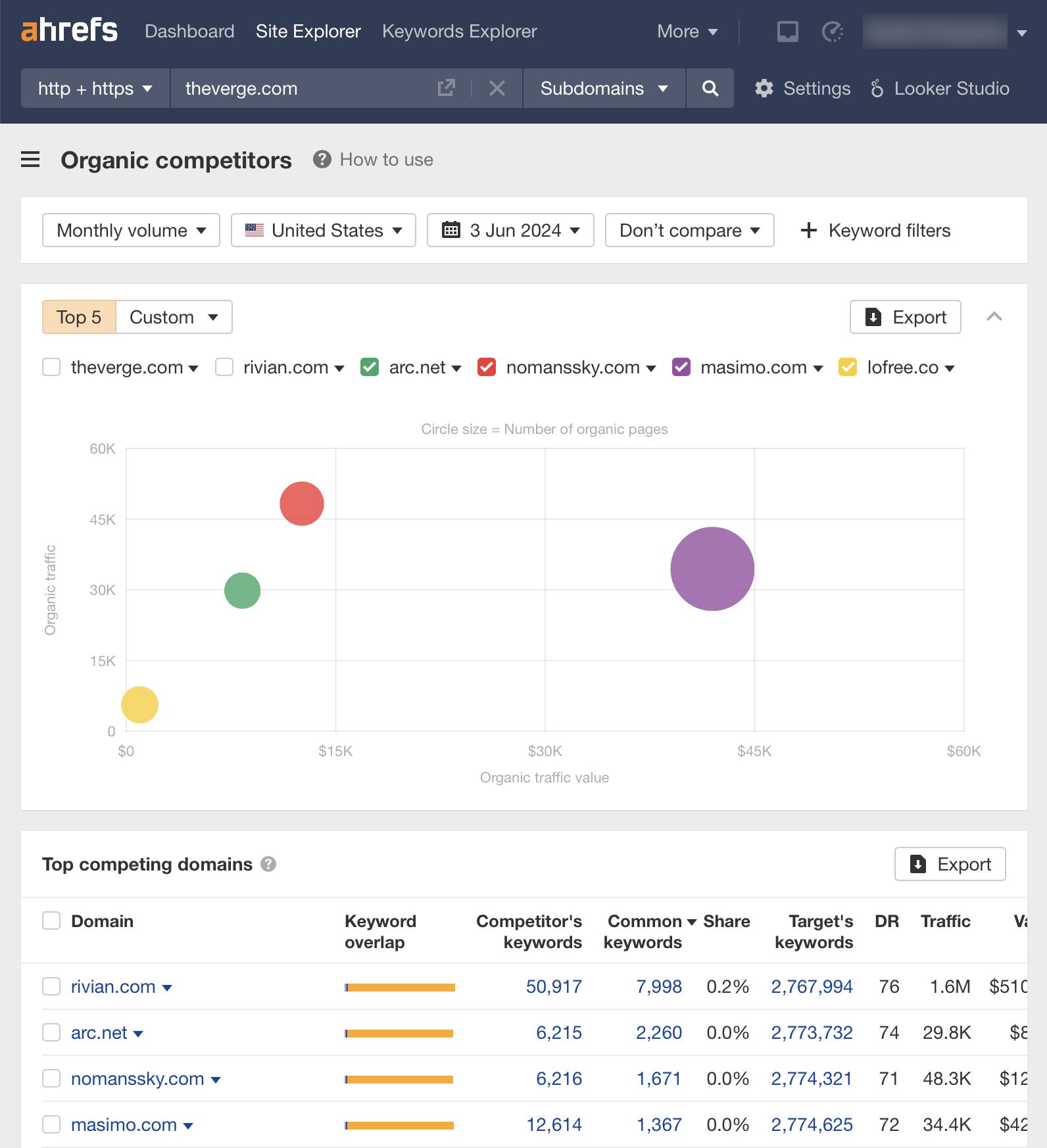

OK, so you don’t have to be James Bond, but by using a tool like Ahrefs’ Site Explorer and our Google Looker Studio Integration (GLS), you can extract valuable information and keep tabs on your competitors, giving you a competitive advantage in the SERPs.

Using a tool like Site Explorer, you can use the Organic Competitors report to understand the competitor landscape:

You can check out their Organic traffic performance across the years:

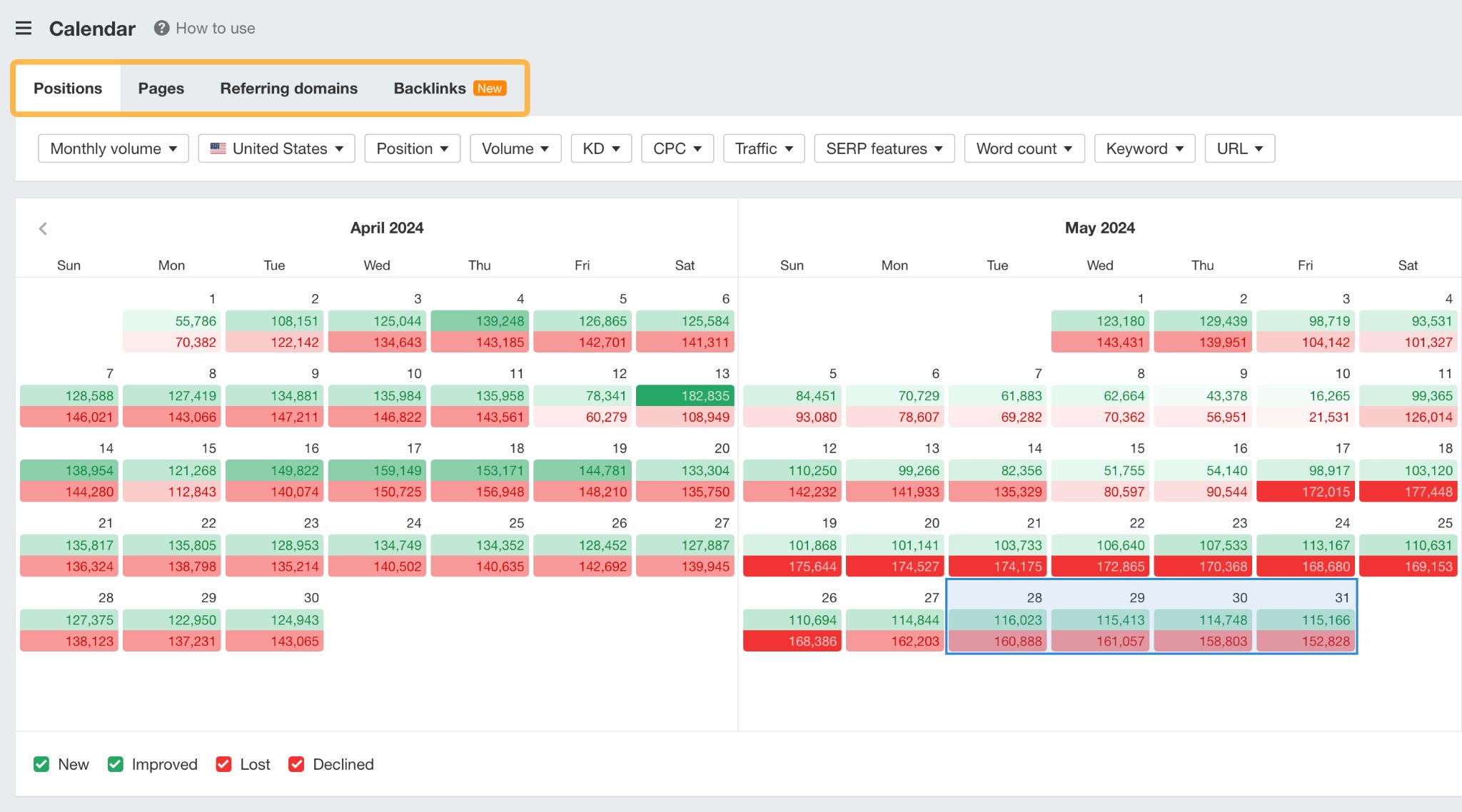

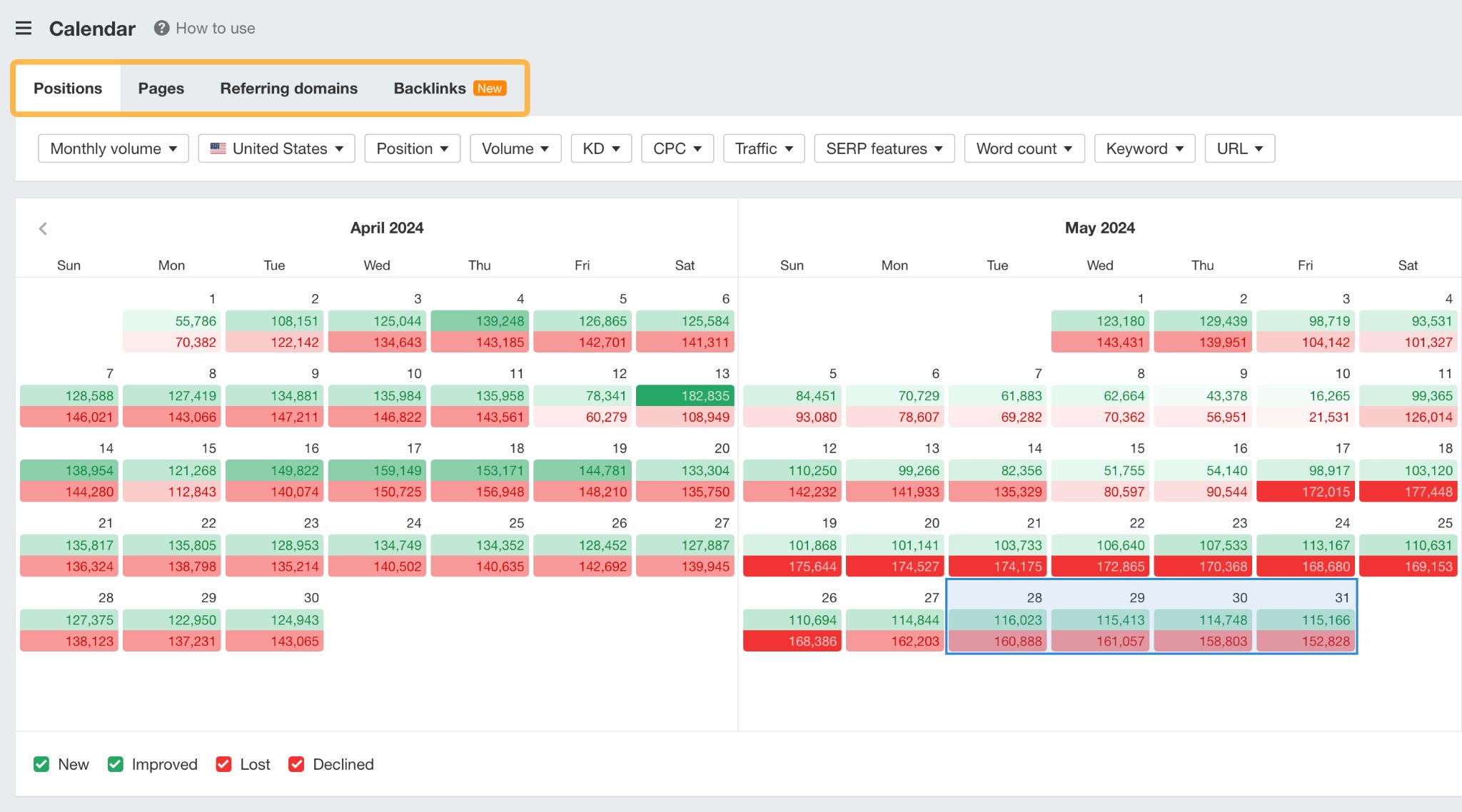

You can use Calendar to see on which days changes in Positions, Pages, Referring domains, and Backlinks occurred:

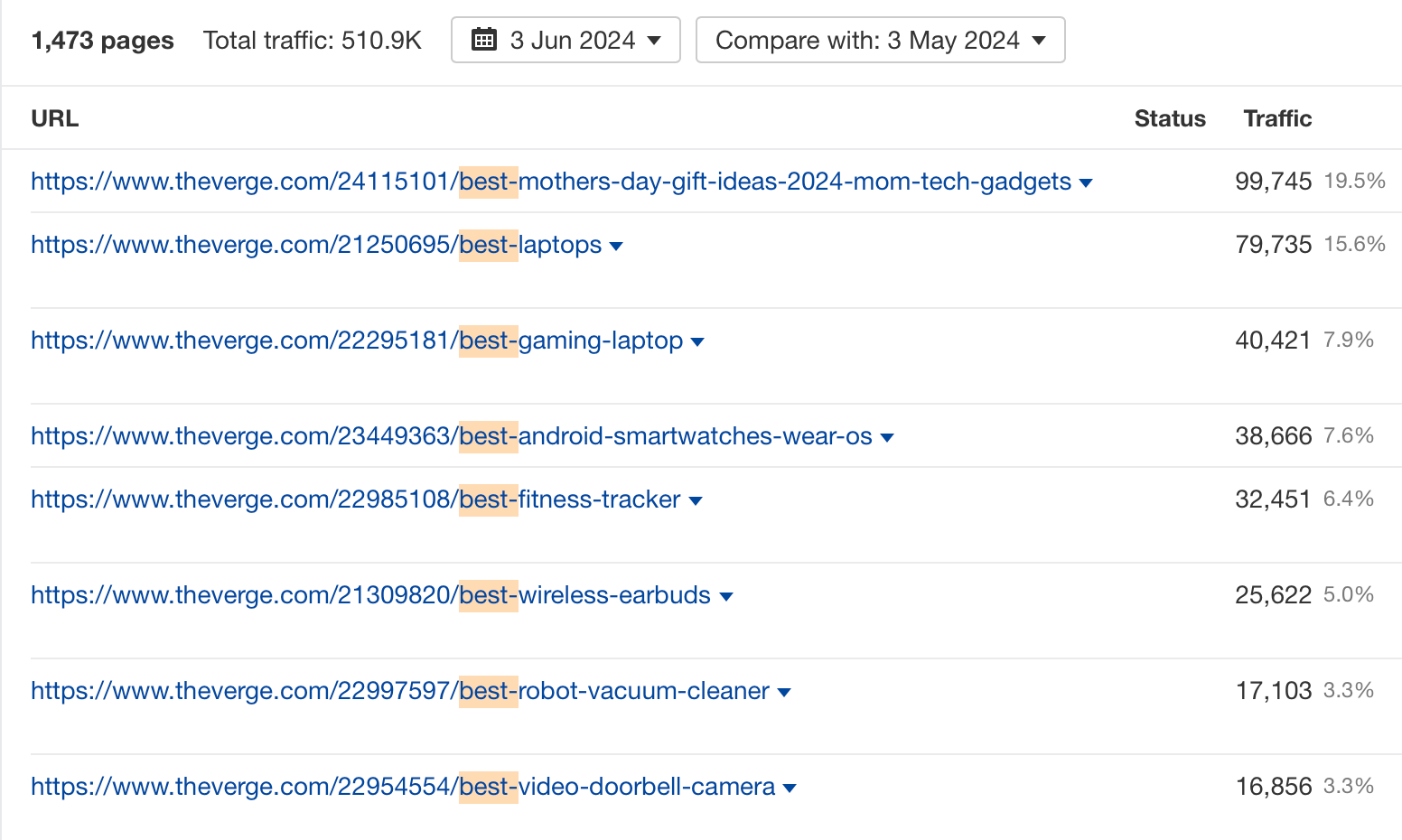

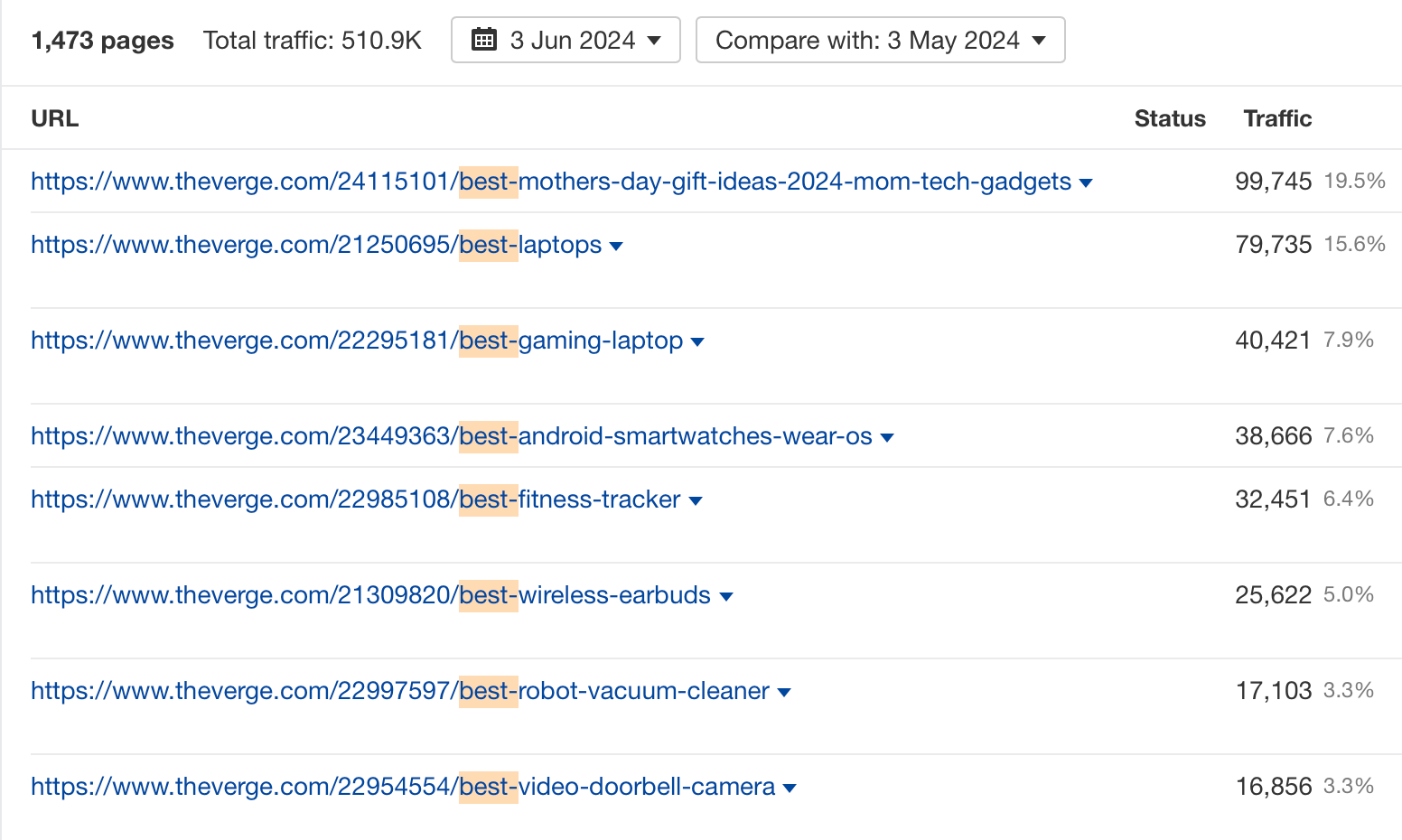

You can see their Top pages’ organic traffic and Organic keywords:

And much, much more.

If you want to monitor your most important competitors more closely, you can even create a dashboard using Ahrefs’ GLS integration.

Acquire links and create content that your competitors can’t recreate easily

Once you’ve done enough spying, it’s time to take action.

Links and content are the bread and butter for many SEOs. But a lot of the time, the links acquired and the content created just aren’t that great.

So, to stand the best chance of maintaining your rankings, you need to work on getting high-quality backlinks and producing high-quality content that your competitors can’t easily recreate.

It’s easy to say this, but what does it mean in practice?

The best way to do this is to create deep content.

At Ahrefs, we do this by running surveys, getting quotes from industry experts, running data studies, creating unique illustrations or diagrams, and generally fine-tuning our content until it is the best it can be.

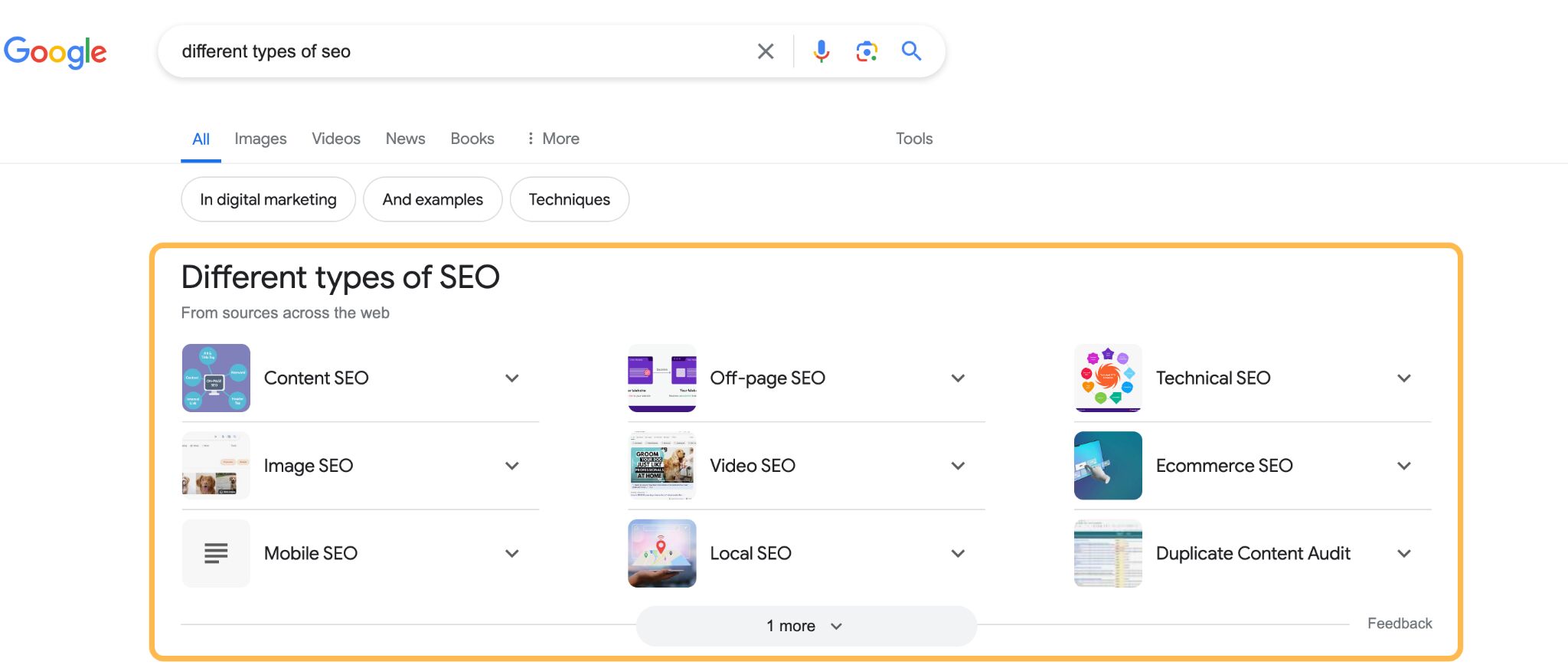

As if battling your competitors wasn’t enough, you must also compete against Google for clicks.

As Google not-so-subtly transitions from a search engine to an answer engine, it’s becoming more common for it to supply the answer to search queries—rather than the search results themselves.

The result is that even the once top-performing organic search websites have a lower click-through rate (CTR) because they’re further down the page—or not on the first page.

Whether you like it or not, Google is reducing traffic to your website through two mechanisms:

- AI overviews – Where Google generates an answer based on sources on the internet

- Zero-click searches – Where Google shows the answer in the search results

With AI overviews, we can see that the traditional organic search results are not visible.

And with zero-click searches, Google supplies the answer directly in the SERP, so the user doesn’t have to click anything unless they want to know more.

These features have one thing in common: They are pushing the organic results further down the page.

Even when links are included in AI Overviews, Kevin Indig’s AI Overviews traffic impact study suggests that they will reduce organic clicks.

In the example below, shared by Aleyda, we can see that ranking organically in the number one position doesn’t mean much when Ads and an AI Overview appear above you. And when the AI Overview answer contains no links, it just perpetuates the zero-click model in the AI Overview format.

How to deal with it:

You can’t control how Google changes the SERPs, but you can do two things:

Make your website the best it can be

If you focus on this, your website will naturally become more authoritative over time. This isn’t a guarantee that your website will be included in the AI Overview, but it’s better than doing nothing.

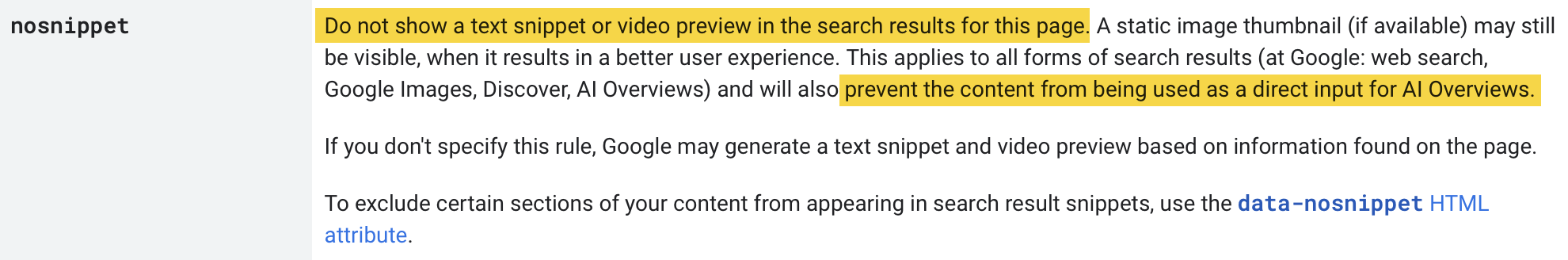

Prevent Google from showing your website in an AI Overview

If you want to be excluded from Google’s AI Overviews, Google says you can add the nosnippet rule to prevent your content from appearing in them.
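In practice, nosnippet is usually set with a robots meta tag such as `<meta name="robots" content="nosnippet">` in the page’s head. Here’s a rough Python sketch for checking whether a page’s HTML already carries it. The class and function names are my own, and it only looks at meta tags, not at the X-Robots-Tag HTTP header.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> / "googlebot" tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        name = (attr.get("name") or "").lower()
        if name in ("robots", "googlebot"):
            content = attr.get("content") or ""
            # Directives are comma-separated, e.g. "noindex, nosnippet".
            self.directives.update(
                part.strip().lower()
                for part in content.split(",")
                if part.strip()
            )


def has_nosnippet(html):
    """True if the HTML contains a robots meta tag with nosnippet."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "nosnippet" in parser.directives


page = '<html><head><meta name="robots" content="nosnippet"></head></html>'
print(has_nosnippet(page))
```

Bear in mind that nosnippet also removes the regular text snippet from your search listing, so it’s a trade-off rather than a free opt-out.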

One of the reasons marketers gravitated towards Google in the early days was that it was relatively easy to set up a website and get traffic.

Recently, there have been a few high-profile examples of smaller websites that have been impacted by Google:

Apart from the algorithmic changes, I think there are two reasons for this:

- Large authoritative websites with bigger budgets and SEO teams are more likely to rank well in today’s Google

- User-generated content sites like Reddit and Quora have been given huge traffic boosts from Google, which has pushed smaller sites that used to rank for these types of keyword queries out of the SERPs

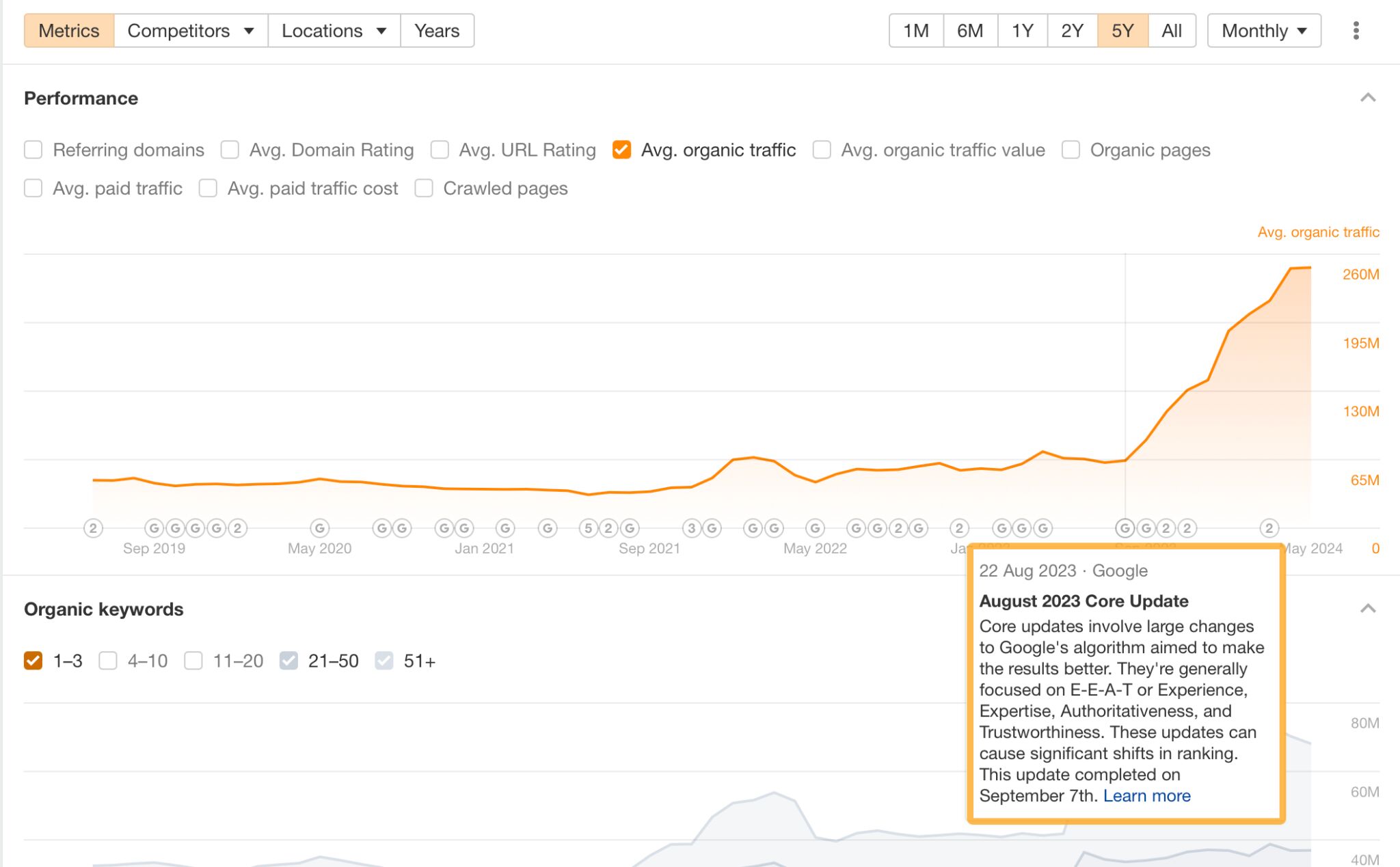

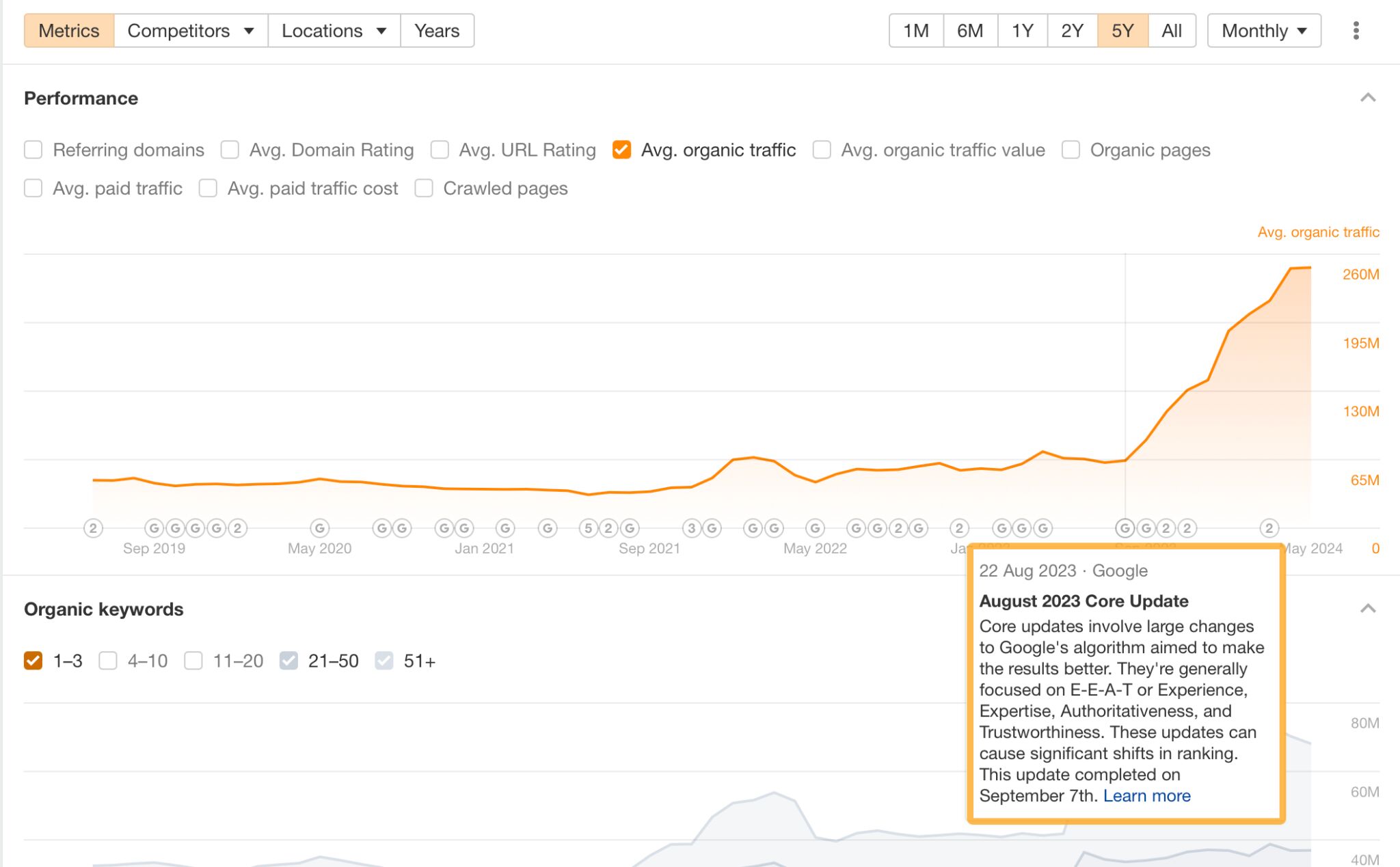

Here’s Reddit’s traffic increase over the last year:

And here’s Quora’s traffic increase:

How to deal with it:

There are three key ways I would deal with this issue in 2024:

- Focus on targeting less competitive keywords using keyword research

- Build more links to become more authoritative

- Create deep content

Focus on targeting the right keywords using keyword research

Knowing which keywords to target is really important for smaller websites. Sadly, you can’t just write about a big term like “SEO” and expect to rank for it in Google.

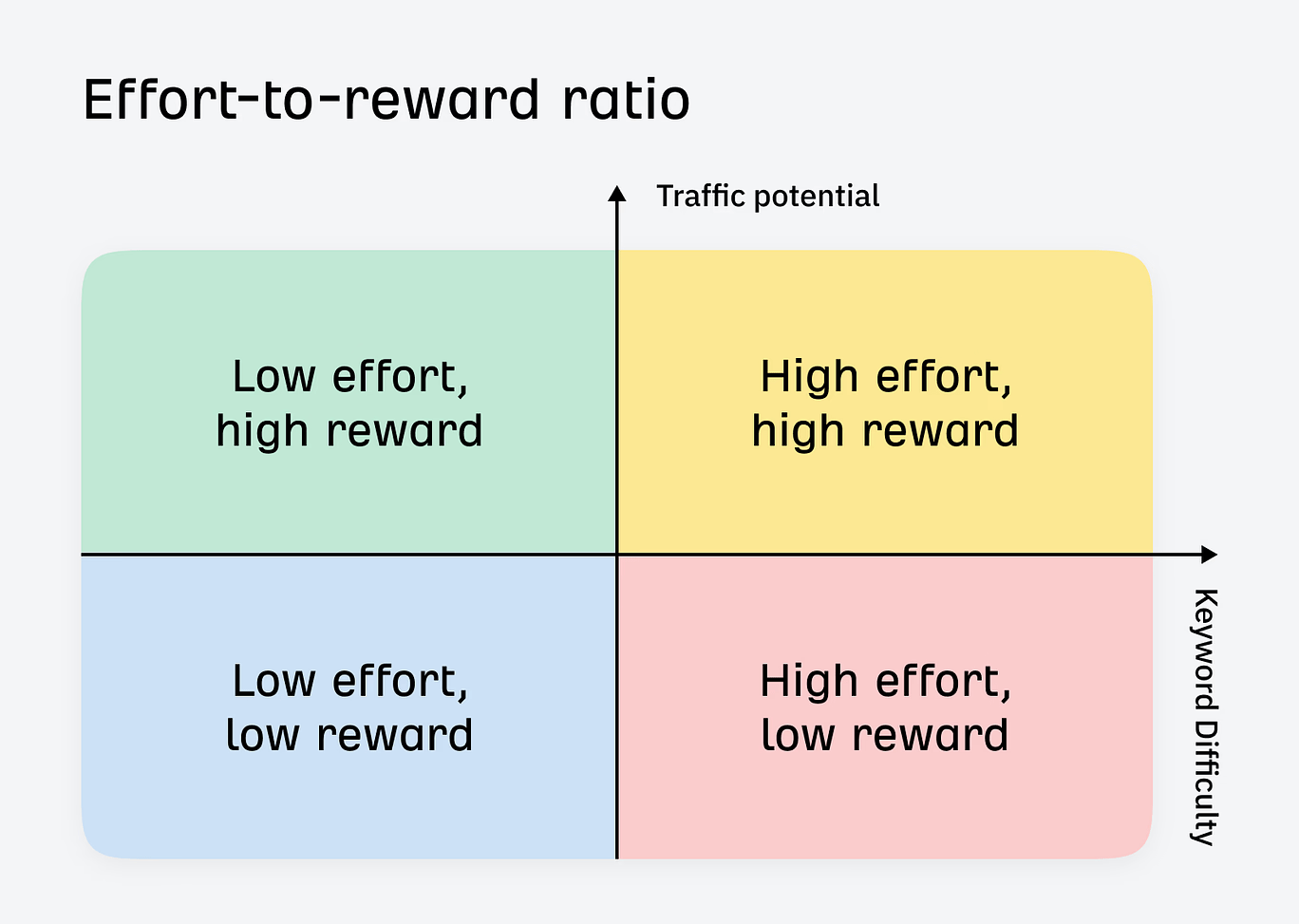

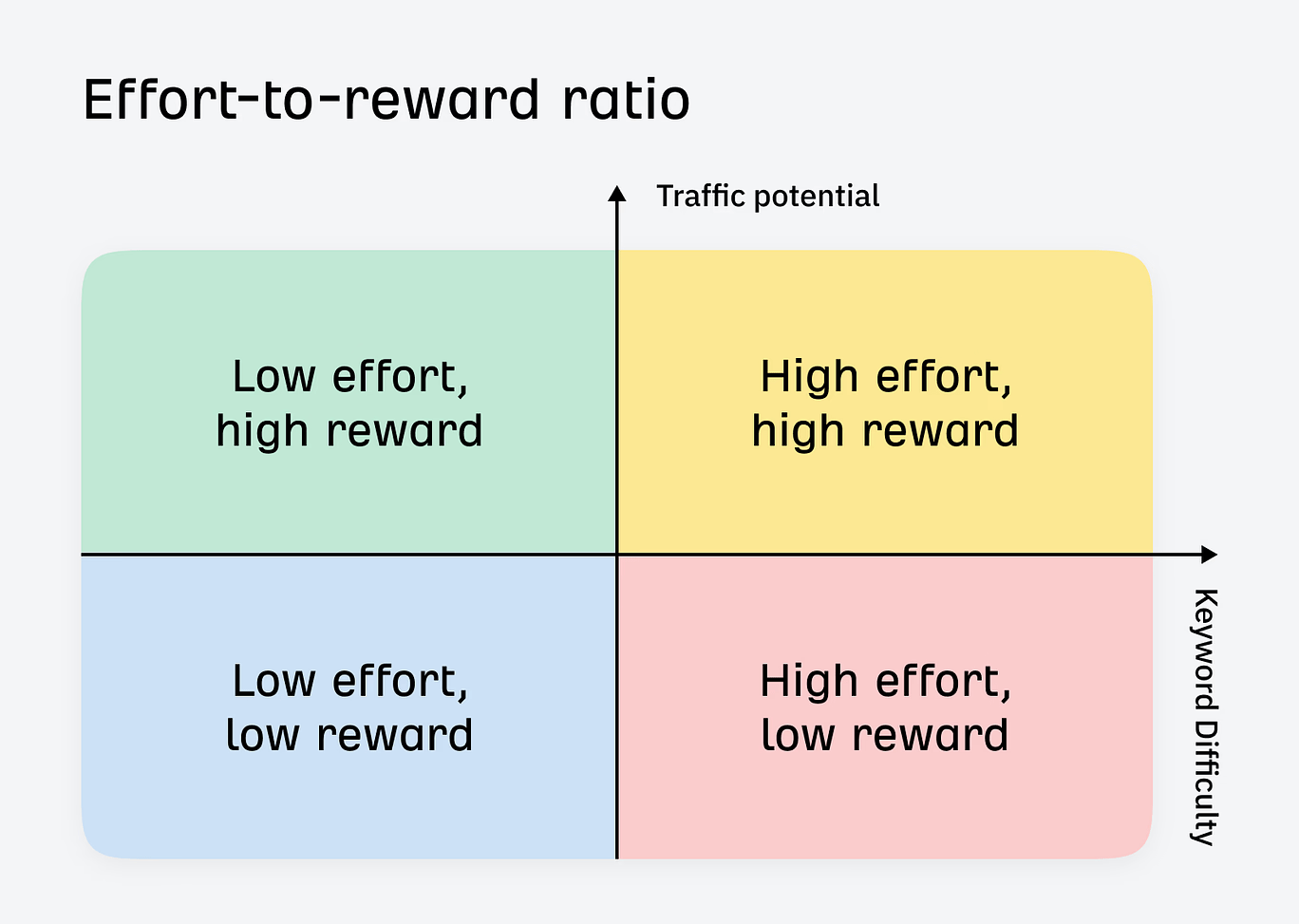

Use a tool like Keywords Explorer to do a SERP analysis for each keyword you want to target. Use the effort-to-reward ratio to ensure you are picking the right keyword battles:

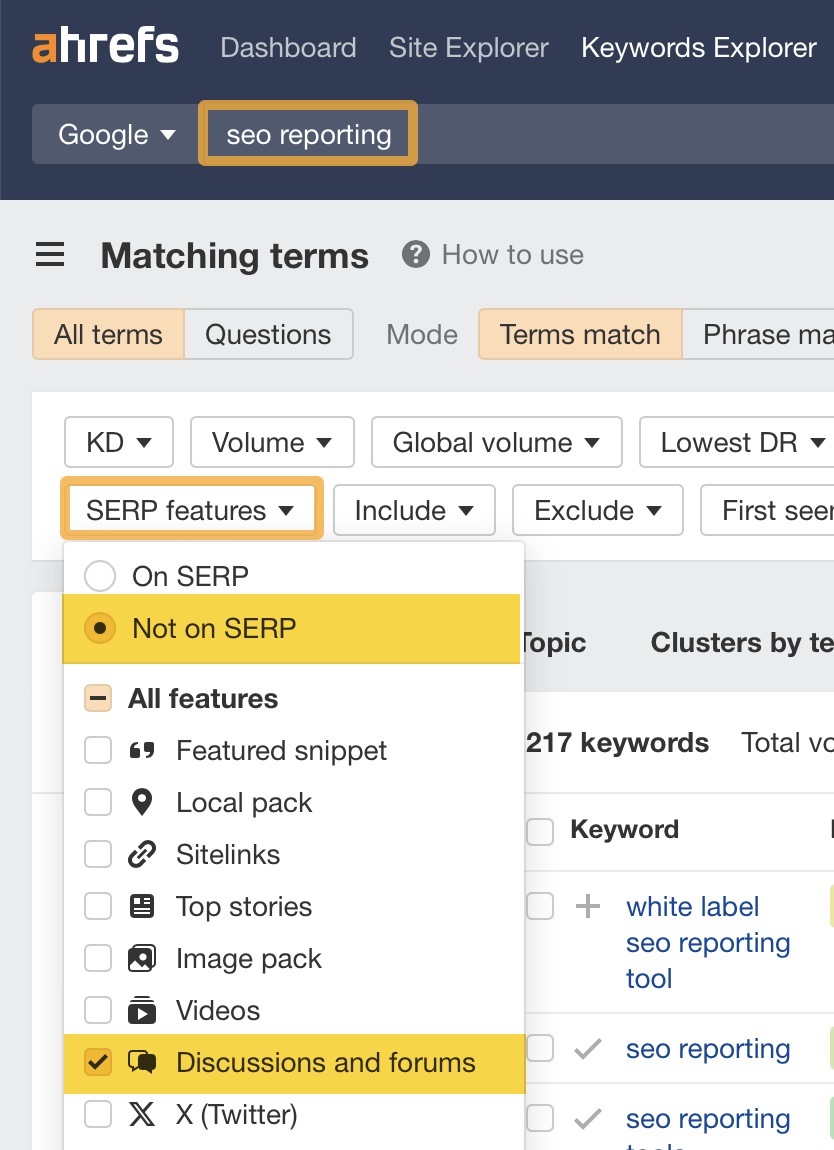

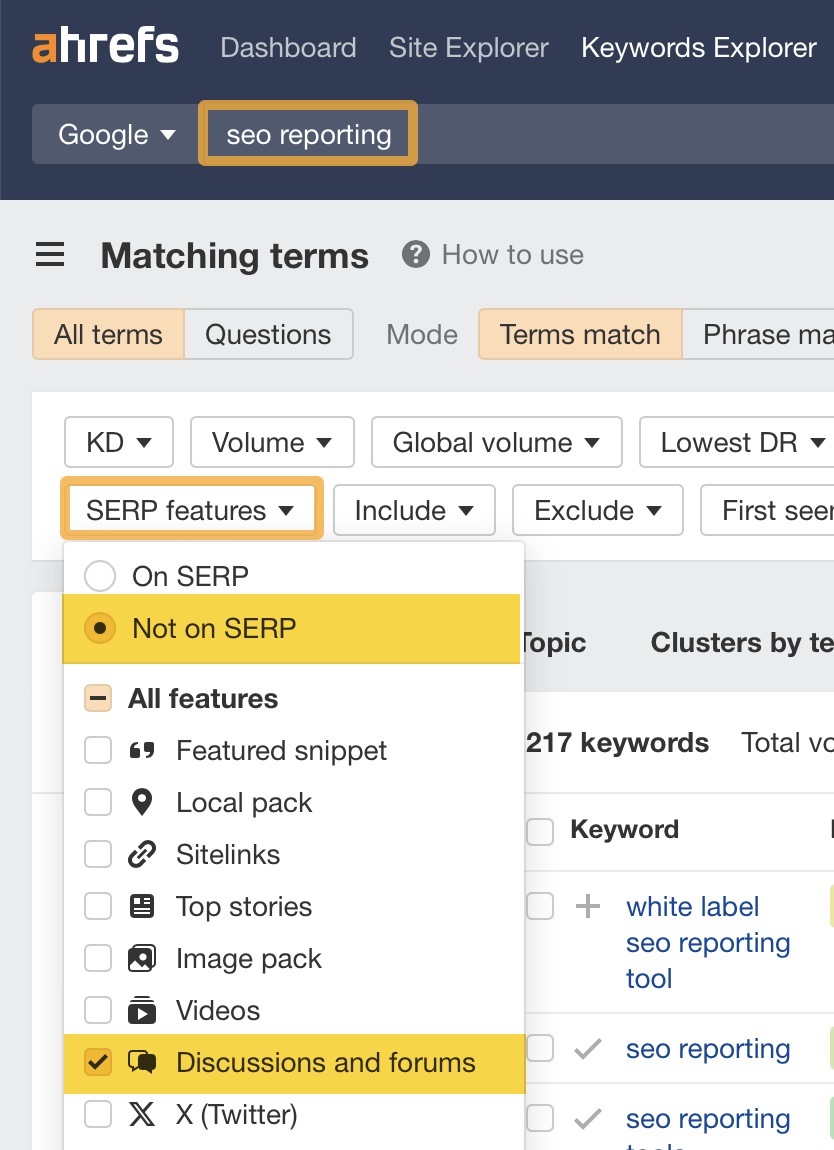
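One way to think about effort-to-reward is as a simple score. The sketch below is my own simplification, not an Ahrefs formula: it treats traffic potential as the reward and a 0–100 difficulty score as the effort, skips anything above a difficulty ceiling you think your site can realistically compete at, and ranks the rest. All keywords and numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    term: str
    traffic_potential: int  # estimated monthly visits (illustrative)
    difficulty: int         # 0-100 difficulty score (illustrative)


def reward_per_effort(kw):
    """Traffic potential per point of difficulty (+1 avoids dividing by zero)."""
    return kw.traffic_potential / (kw.difficulty + 1)


def pick_battles(keywords, max_difficulty):
    """Drop keywords that are out of reach, then rank by reward per effort."""
    reachable = [kw for kw in keywords if kw.difficulty <= max_difficulty]
    return sorted(reachable, key=reward_per_effort, reverse=True)


candidates = [
    Keyword("seo", 100_000, 95),
    Keyword("seo audit checklist", 3_000, 20),
    Keyword("what is a canonical tag", 1_500, 10),
]
shortlist = pick_battles(candidates, max_difficulty=30)
for kw in shortlist:
    print(kw.term, round(reward_per_effort(kw), 1))
```

The difficulty ceiling matters: without it, a huge head term like “SEO” can still top the list on raw ratio even though a smaller site has little chance of ranking for it.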

If you’re concerned about Reddit, Quora, or other UGC sites stealing your clicks, you can also use Keywords Explorer to target SERPs where these websites aren’t present.

To do this:

- Enter your keyword in the search bar and head to the matching terms report

- Click on the SERP features drop-down box

- Select Not on SERP and select Discussions and forums

This method can help you find SERPs where these types of sites are not present.
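If you work from an exported keyword list instead, the same filter is easy to reproduce. This sketch assumes each row is a dict with a `serp_features` list; that field name and the sample rows are hypothetical, so adapt them to whatever your export actually contains.

```python
def without_serp_feature(rows, feature="Discussions and forums"):
    """Keep only keywords whose SERP does not include the given feature."""
    wanted = feature.casefold()
    return [
        row for row in rows
        if wanted not in {f.casefold() for f in row["serp_features"]}
    ]


# Made-up rows standing in for a keyword export.
rows = [
    {"keyword": "best running shoes", "serp_features": ["Discussions and forums", "Image pack"]},
    {"keyword": "running shoe size chart", "serp_features": ["Featured snippet"]},
    {"keyword": "how to lace running shoes", "serp_features": []},
]
targets = without_serp_feature(rows)
print([row["keyword"] for row in targets])
```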

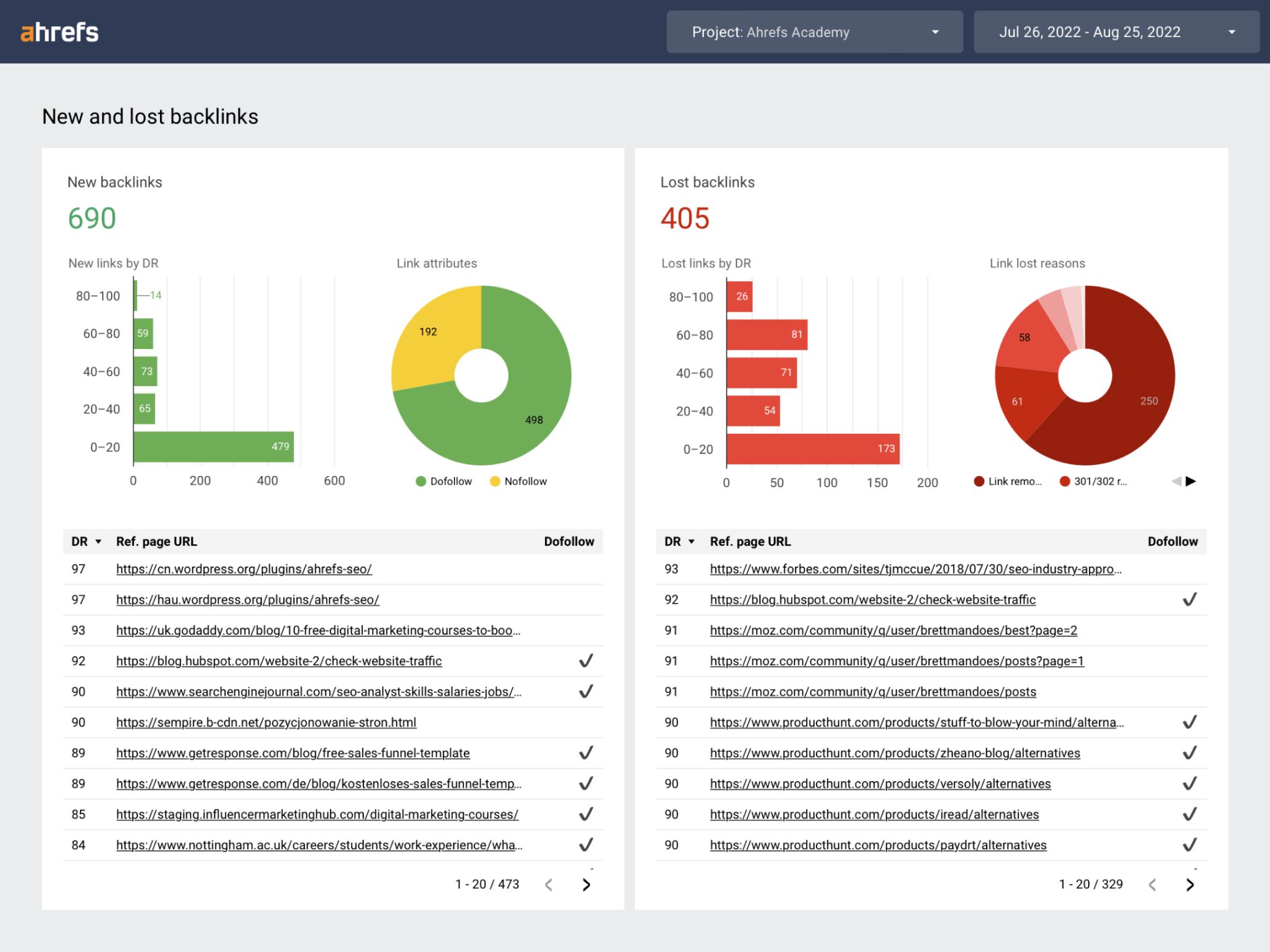

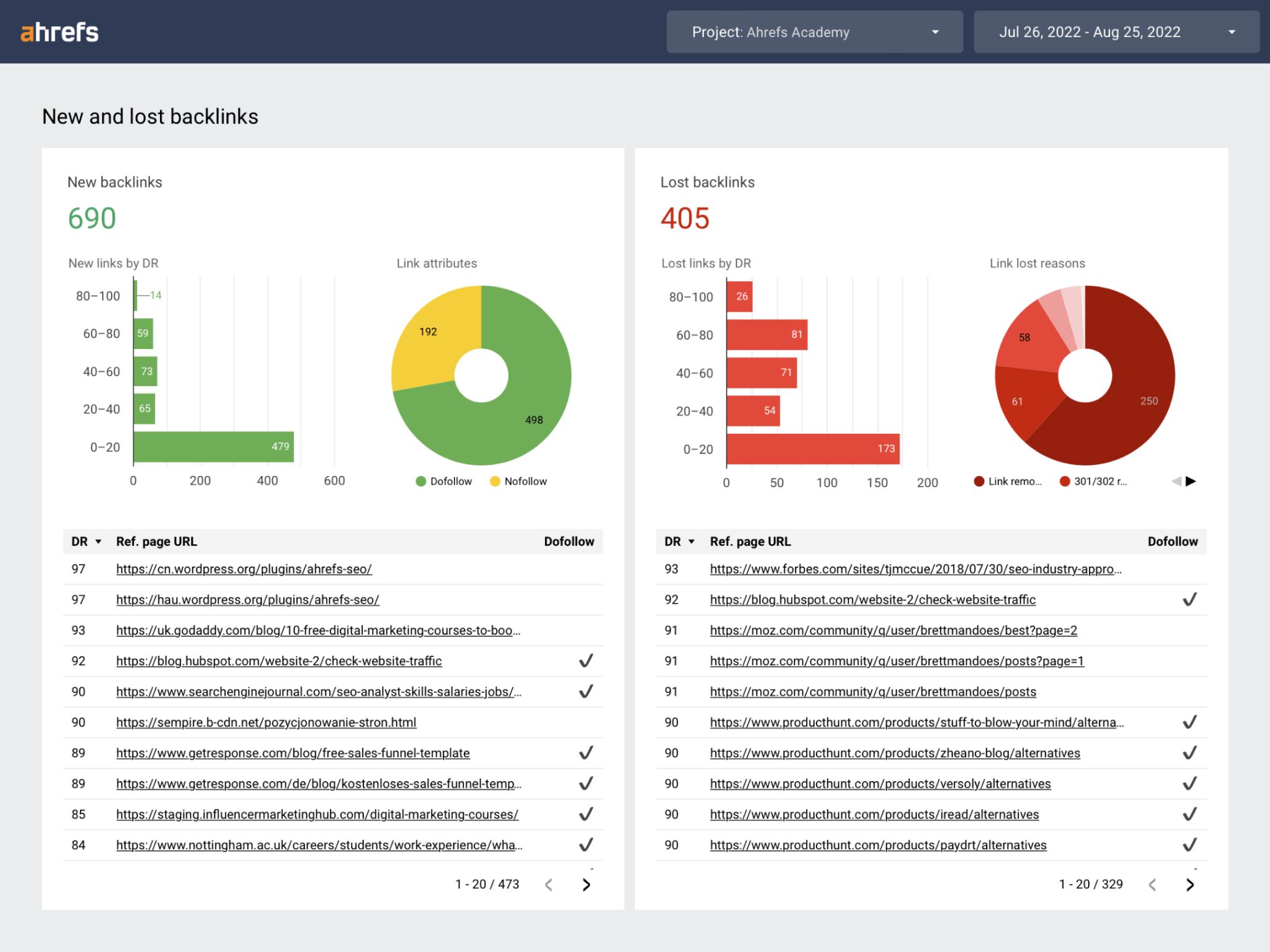

Build more links to become more authoritative

Another approach you could take is to double down on the SEO basics and start building more high-quality backlinks.

Write deep content

Most SEOs are not churning out 500-word blog posts and hoping for the best; equally, the content they’re creating is often not deep or the best it can possibly be.

This is often due to time constraints, budget, and inclination. But to be competitive in the AI era, deep content is exactly what you should be creating.

As your website grows, the challenge of maintaining the performance of your content portfolio gets increasingly difficult.

And what may have been an “absolute banger” of an article in 2020 might not be such a great article now—so you’ll need to update it to keep the clicks rolling in.

So how can you ensure that your content is the best it can be?

How to deal with it:

Here’s the process I use:

Steal this content updating framework

- Identify content that needs an update by completing a site-wide content audit or using Page Inspect for single pages

- Prioritize it based on estimated business value and Traffic potential

- Identify what needs to be improved and update the content

- Monitor the rankings of the content you update using a Portfolio

- Rinse and repeat

And here’s a practical example of this in action:

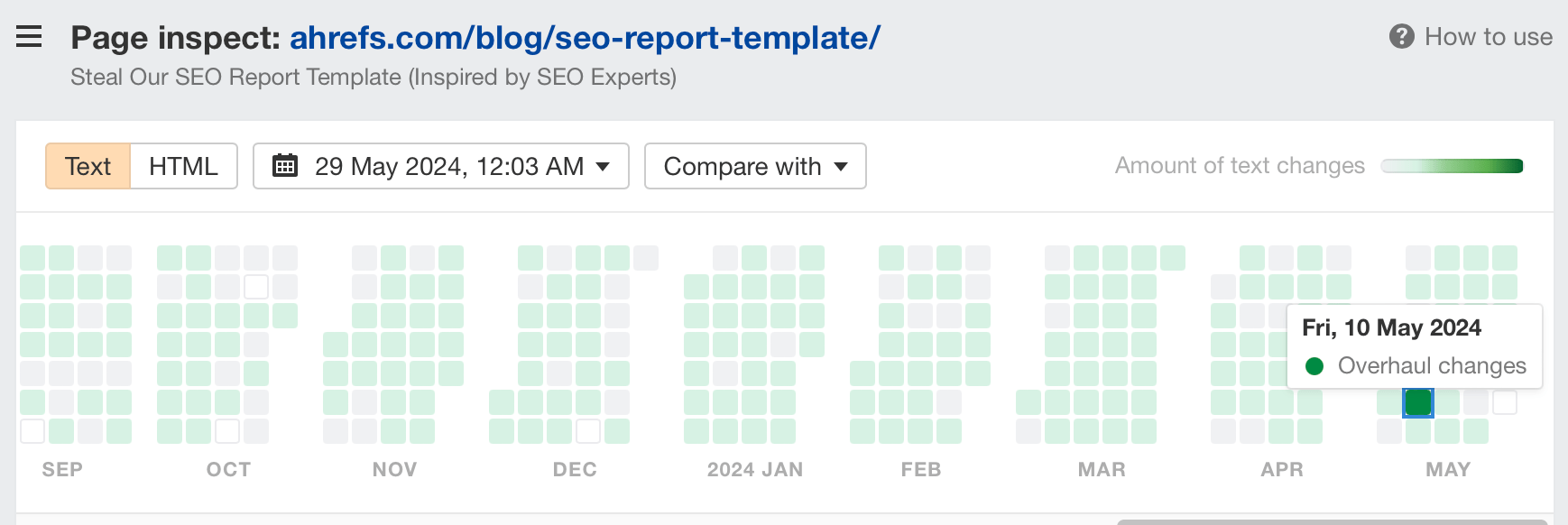

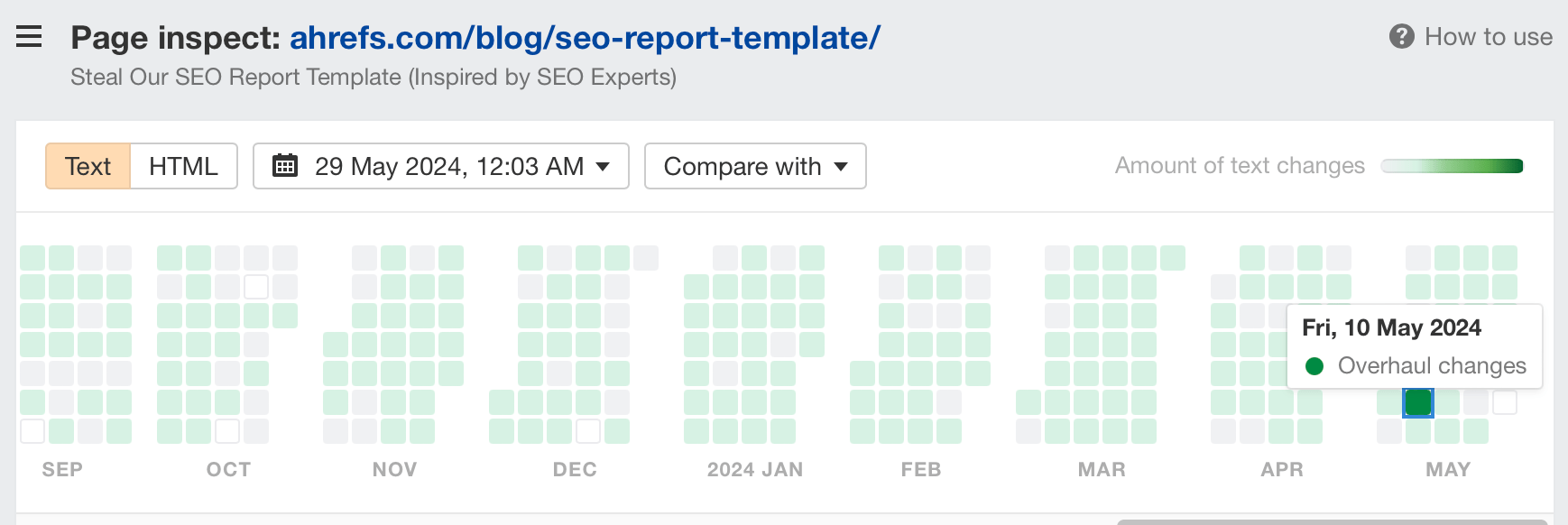

Use Page Inspect with Overview to identify pages that need updating

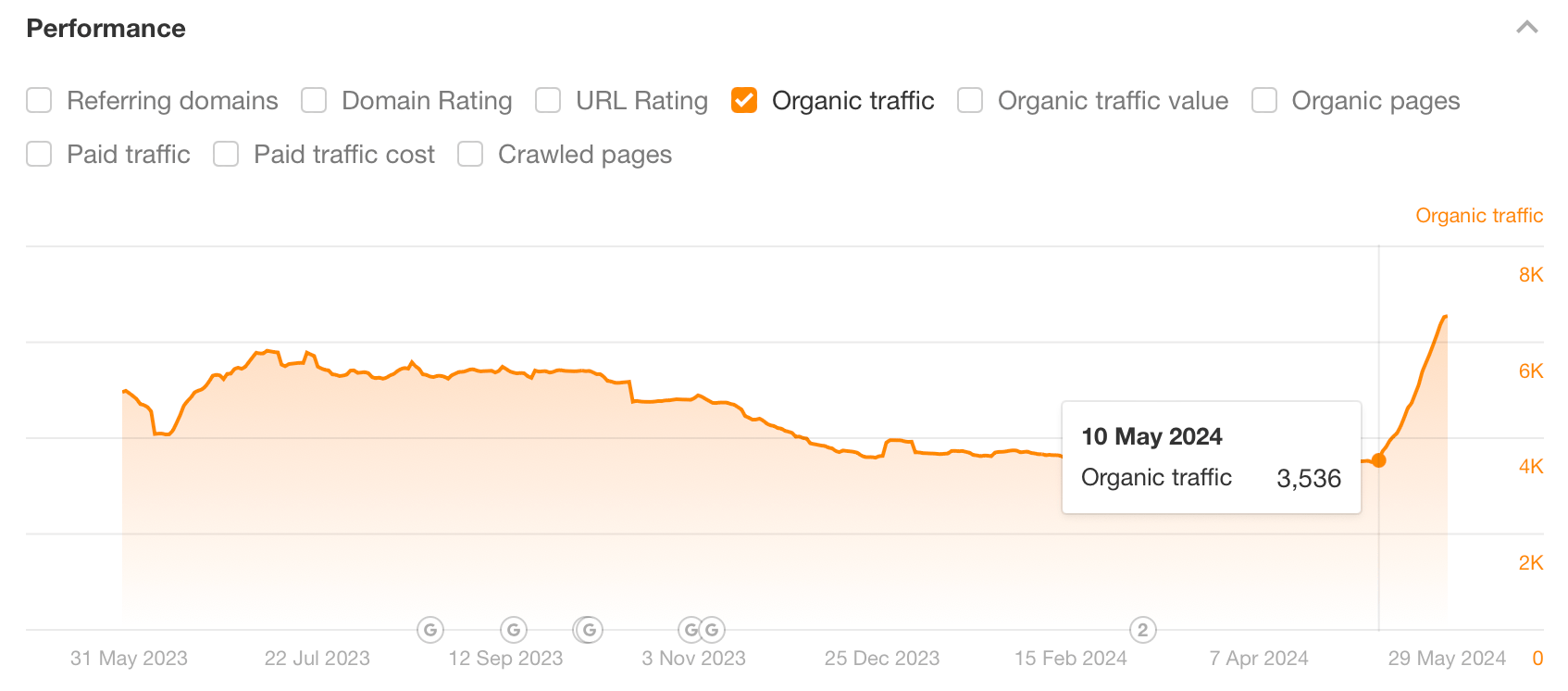

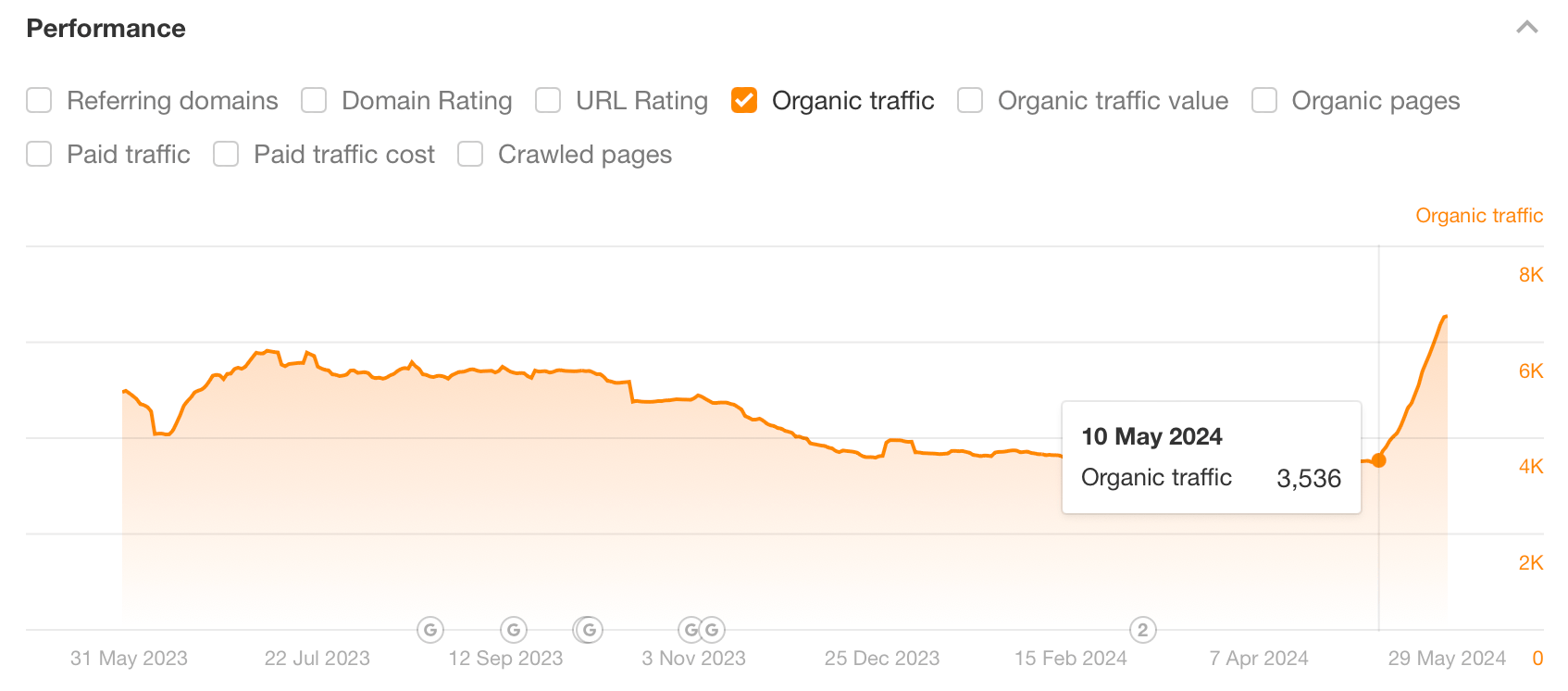

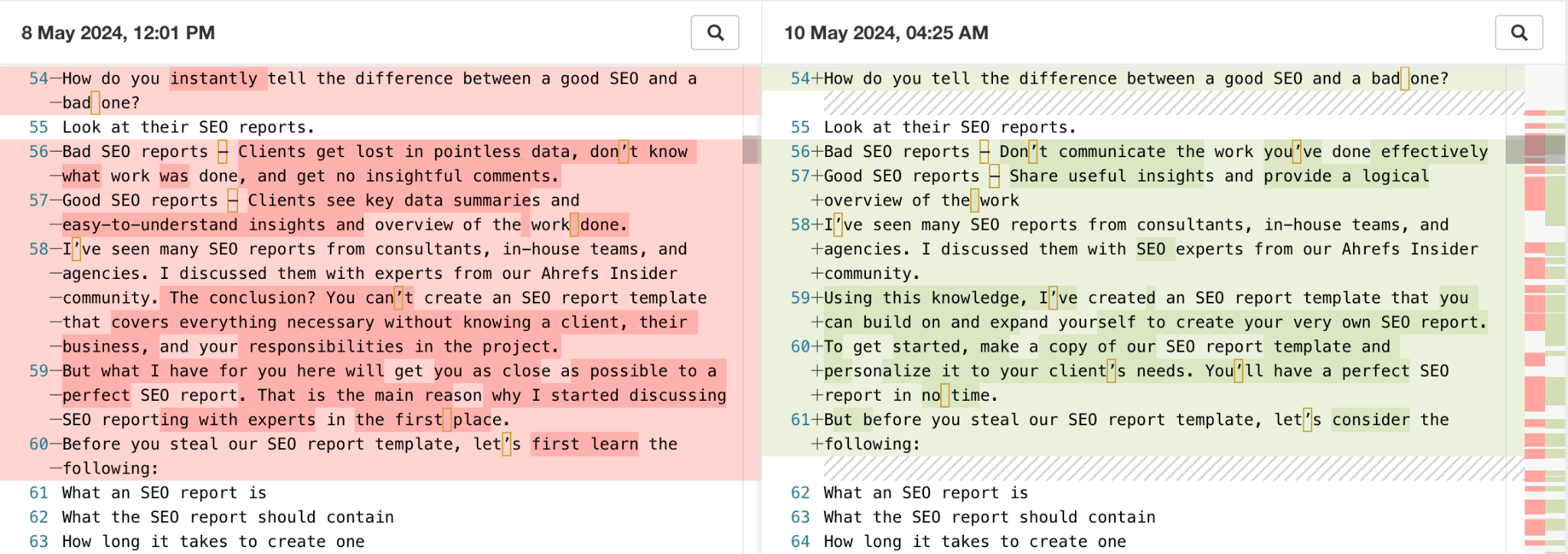

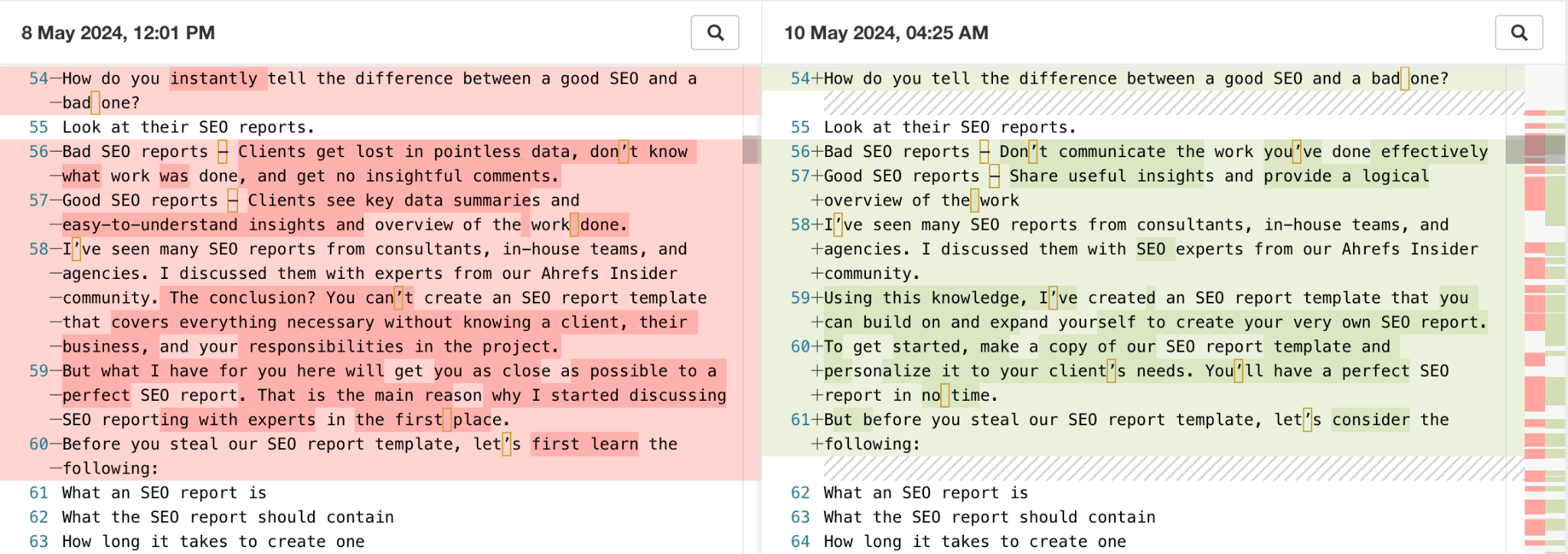

Here’s an example of an older article Michal Pecánek wrote that I recently updated. Using Page Inspect, we can pinpoint the exact date of the update, May 10, 2024, with no other major changes in the last year.

According to Ahrefs, this update almost doubled the page’s organic traffic, underlining the value of updating old content. Before the update, the content had reached its lowest performance ever.

So, what changed to casually double the traffic? Clicking on Page Inspect gives us our answer.

I was focused on achieving three aims with this update:

- Keeping Michal’s original framework for the post intact

- Making the content as concise and readable as it can be

- Refreshing the template (the main draw of the post) and explaining how to use the updated version in a beginner-friendly way to match the search intent

Getting buy-in for SEO projects has never been easy compared to other channels. Unfortunately, this meme perfectly describes my early days of agency life.

SEO is not an easy sell—either internally or externally to clients.

With companies hiring fewer SEO roles this year, the appetite for risk seems lower than in previous years.

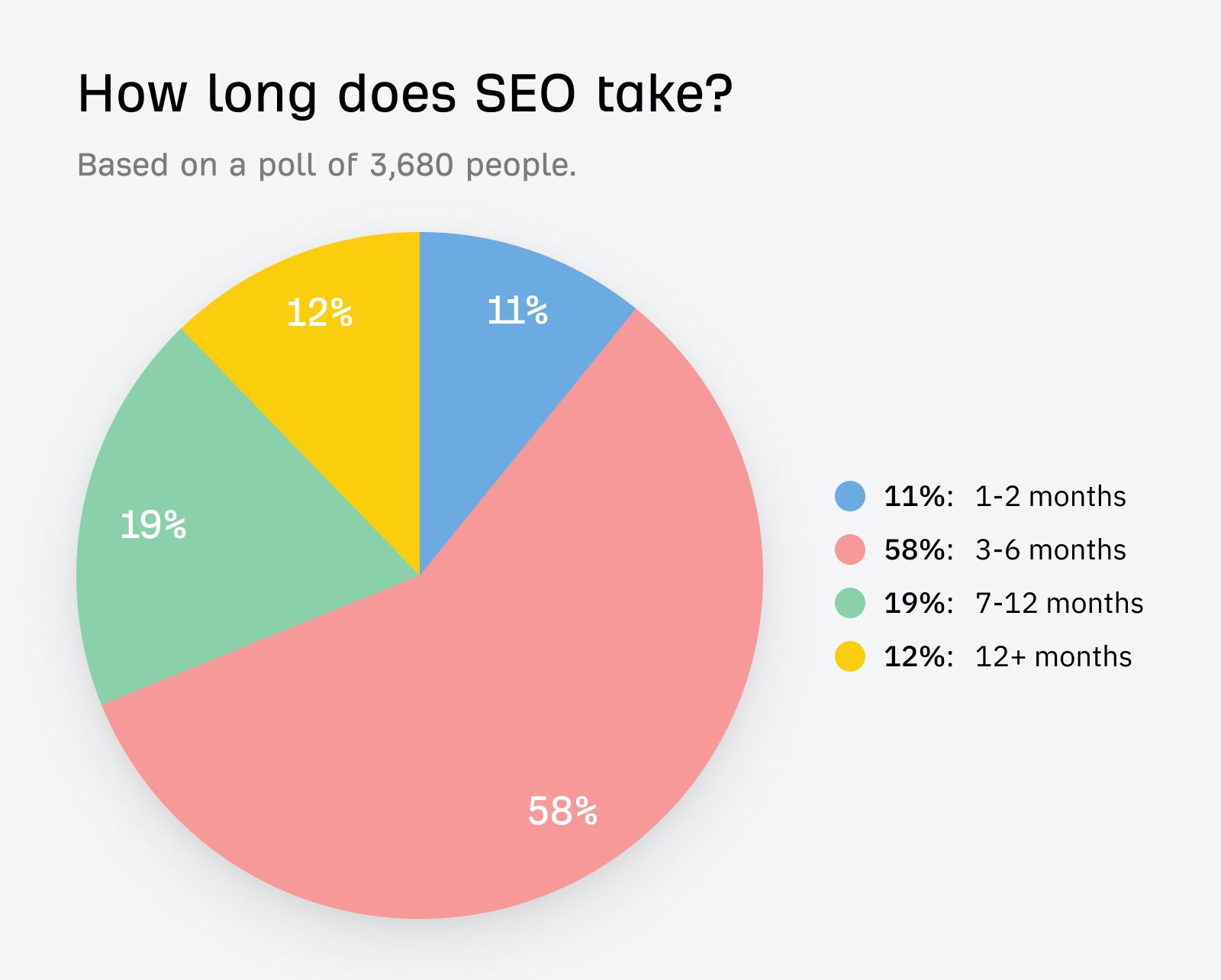

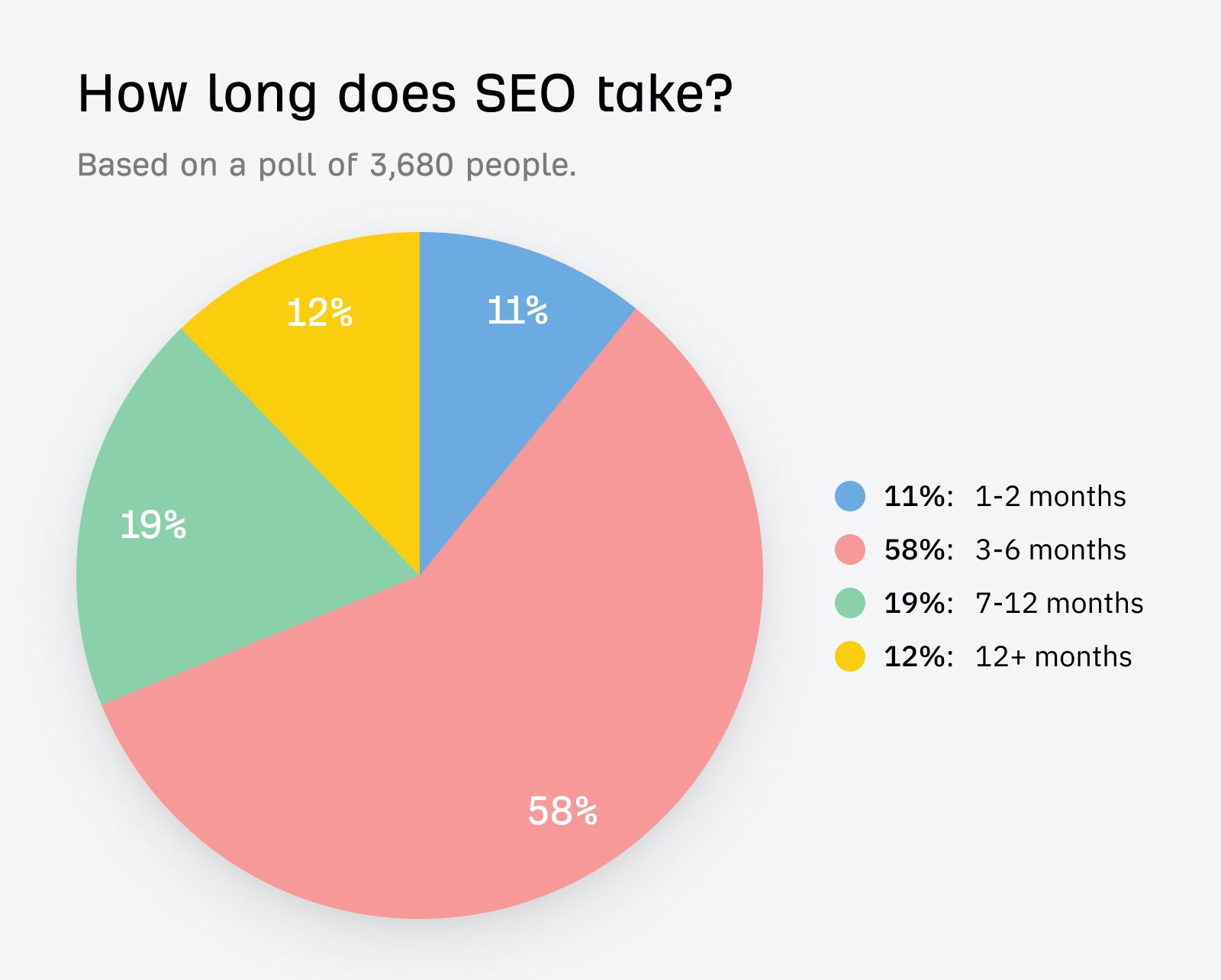

SEO can also be slow to show results, which makes getting buy-in for projects harder than it is for other channels.

How to deal with it:

My colleague Despina Gavoyannis has written a fantastic article about how to get SEO buy-in. Here is a summary of her top tips:

- Find key influencers and decision-makers within the organization, starting with cross-functional teams before approaching executives. (And don’t forget the people who’ll actually implement your changes—developers.)

- Adapt your language and communicate the benefits of SEO initiatives in terms that resonate with different stakeholders’ priorities.

- Highlight the opportunity costs of not investing in SEO by using metrics like Ahrefs’ traffic value to show the potential traffic and revenue you’re missing out on.

- Collaborate cross-functionally by showing how SEO can support other teams’ goals, e.g. helping the editorial team create content that ranks for commercial queries.

And perhaps most important of all: build better business cases and SEO opportunity forecasts.

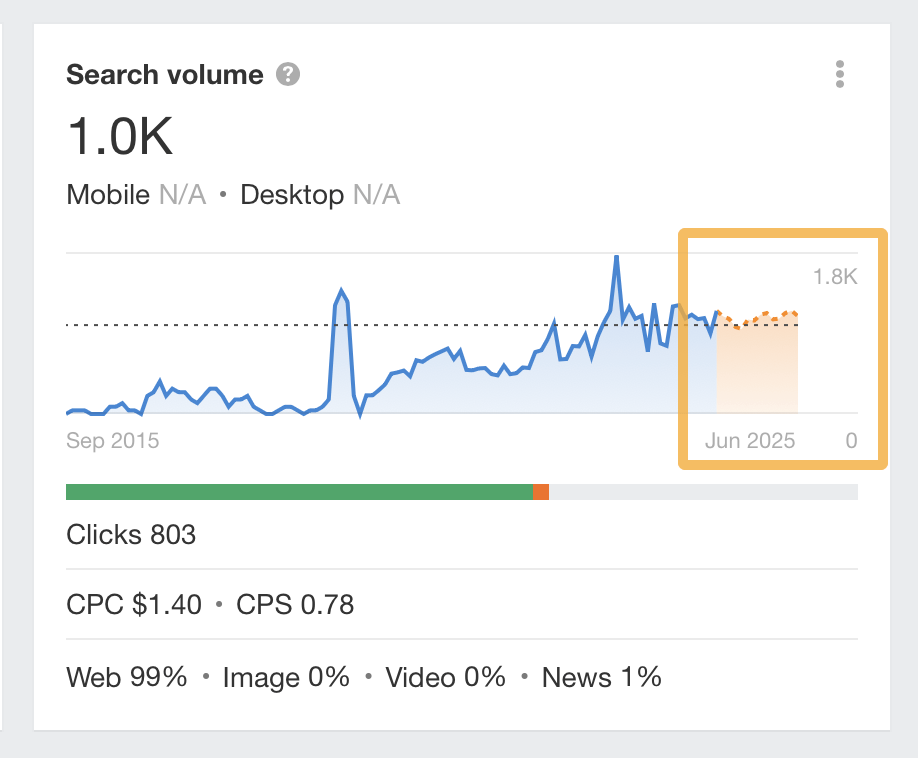

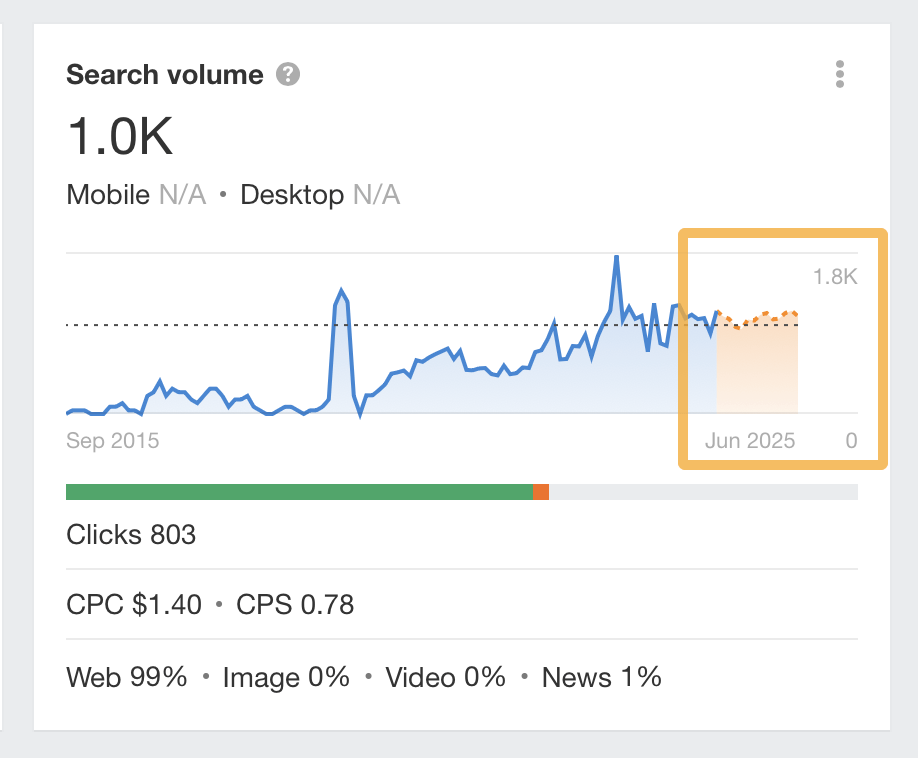

If you just want to show the short-term trend for a keyword, you can use Keywords Explorer:

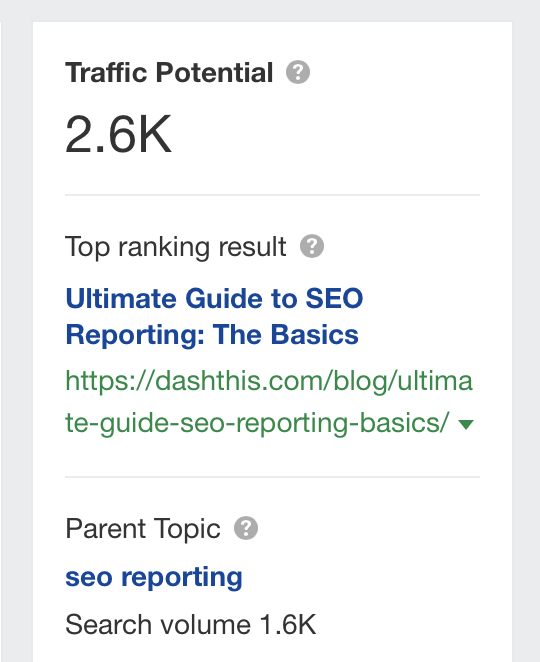

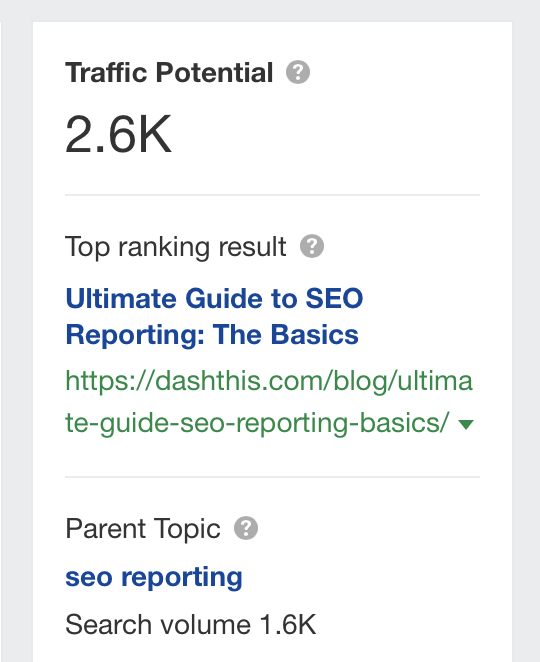

If you want to show the Traffic potential of a particular keyword, you can use our Traffic potential metric in SERP overview to gauge this:

And if you want to go the whole hog, you can create an SEO forecast. You can use a third-party tool to create a forecast, but I recommend you use Patrick Stox’s SEO forecasting guide.
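To give a feel for what a basic forecast involves, here’s a back-of-the-envelope Python sketch. It is not the method from Patrick’s guide. It simply multiplies search volume by an assumed click-through rate for a ranking position, and the CTR values below are placeholders I made up, so swap in figures from your own data before showing this to anyone.

```python
# Placeholder CTRs by ranking position. These are NOT measured values.
ASSUMED_CTR = {1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05,
               6: 0.04, 7: 0.03, 8: 0.02, 9: 0.02, 10: 0.01}


def estimate_monthly_clicks(search_volume, position, ctr_by_position=ASSUMED_CTR):
    """Estimated clicks per month at a given position (0 if off page one)."""
    return search_volume * ctr_by_position.get(position, 0.0)


def forecast_uplift(search_volume, current_position, target_position,
                    ctr_by_position=ASSUMED_CTR):
    """Extra monthly clicks expected from moving to the target position."""
    current = estimate_monthly_clicks(search_volume, current_position, ctr_by_position)
    target = estimate_monthly_clicks(search_volume, target_position, ctr_by_position)
    return target - current


uplift = forecast_uplift(search_volume=10_000, current_position=8, target_position=3)
print(f"Estimated extra clicks per month: {uplift:.0f}")
```

Multiply the uplift by your average value per visit and you have a rough revenue figure to put in front of stakeholders, with the assumptions stated clearly alongside it.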

Final thoughts

Of all the SEO challenges mentioned above, the one keeping SEOs awake at night is AI.

It’s swept through our industry like a hurricane, presenting SEOs with many new challenges. The SERPs are changing, competitors are using AI tools, and the bar for creating basic content has been lowered, all thanks to AI.

If you want to stay competitive, you need to arm yourself with the best SEO tools and search data on the market—and for me, that always starts with Ahrefs.

Got questions? Ping me on X.
