
What’s Wrong with the Algorithms?

Social media algorithms have become a source of concern because of their role in spreading misinformation, creating echo chambers, and deepening political polarization.

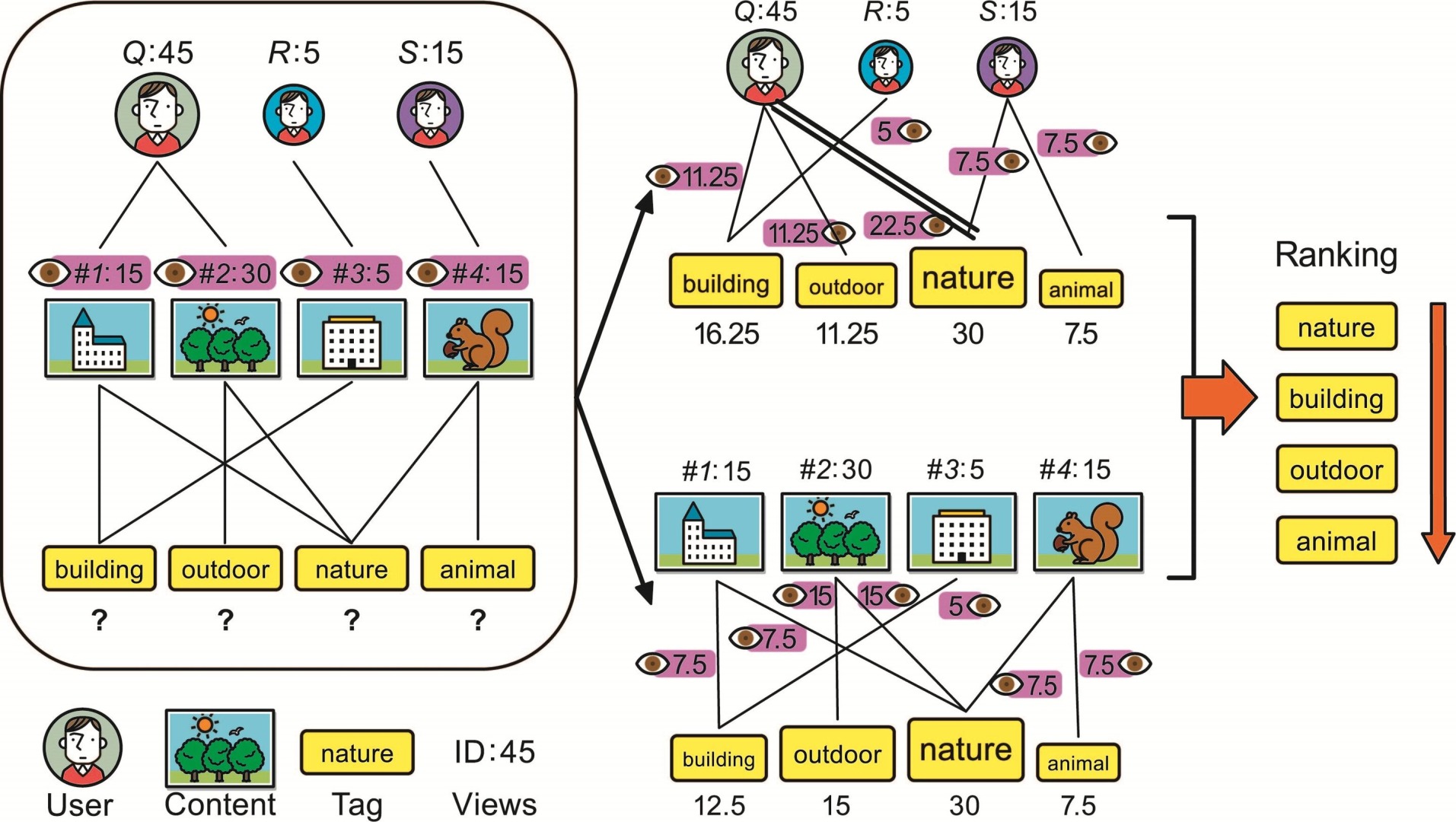

The main purpose of social media algorithms is to personalize and optimize user experience on platforms such as Facebook, Twitter, and YouTube.

Most social media algorithms sort, filter, and prioritize content based on a user’s individual preferences and behaviors. In recent years, they have come under scrutiny for contributing to the spread of misinformation, the formation of echo chambers, and political polarization.

Facebook’s news feed algorithm has been criticized for spreading misinformation, creating echo chambers, and reinforcing political polarization. In 2016, the algorithm was found to have played a role in the spread of false information related to the U.S. Presidential election, including the promotion of fake news stories and propaganda. Facebook has since made changes to its algorithm to reduce the spread of misinformation, but concerns about bias and polarization persist.

Twitter’s trending topics algorithm has also been criticized for perpetuating bias and spreading misinformation. In 2016, it was revealed that the algorithm was prioritizing trending topics based on popularity, rather than accuracy or relevance. This led to the promotion of false and misleading information, including conspiracy theories and propaganda. Twitter has since made changes to its algorithm to reduce the spread of misinformation and improve the quality of public discourse.

YouTube’s recommendation algorithm has been criticized for spreading conspiracy theories and promoting extremist content. In 2019, it was revealed that the algorithm was recommending conspiracy theory videos related to the moon landing, 9/11, and other historical events. Additionally, the algorithm was found to be promoting extremist content, including white nationalist propaganda and hate speech. YouTube has since made changes to its algorithm to reduce the spread of misinformation and extremist content, but concerns about bias and polarization persist.

In this article, we’ll examine the problems with social media algorithms, including their impact on society, and some possible solutions.

1. Spread of Misinformation

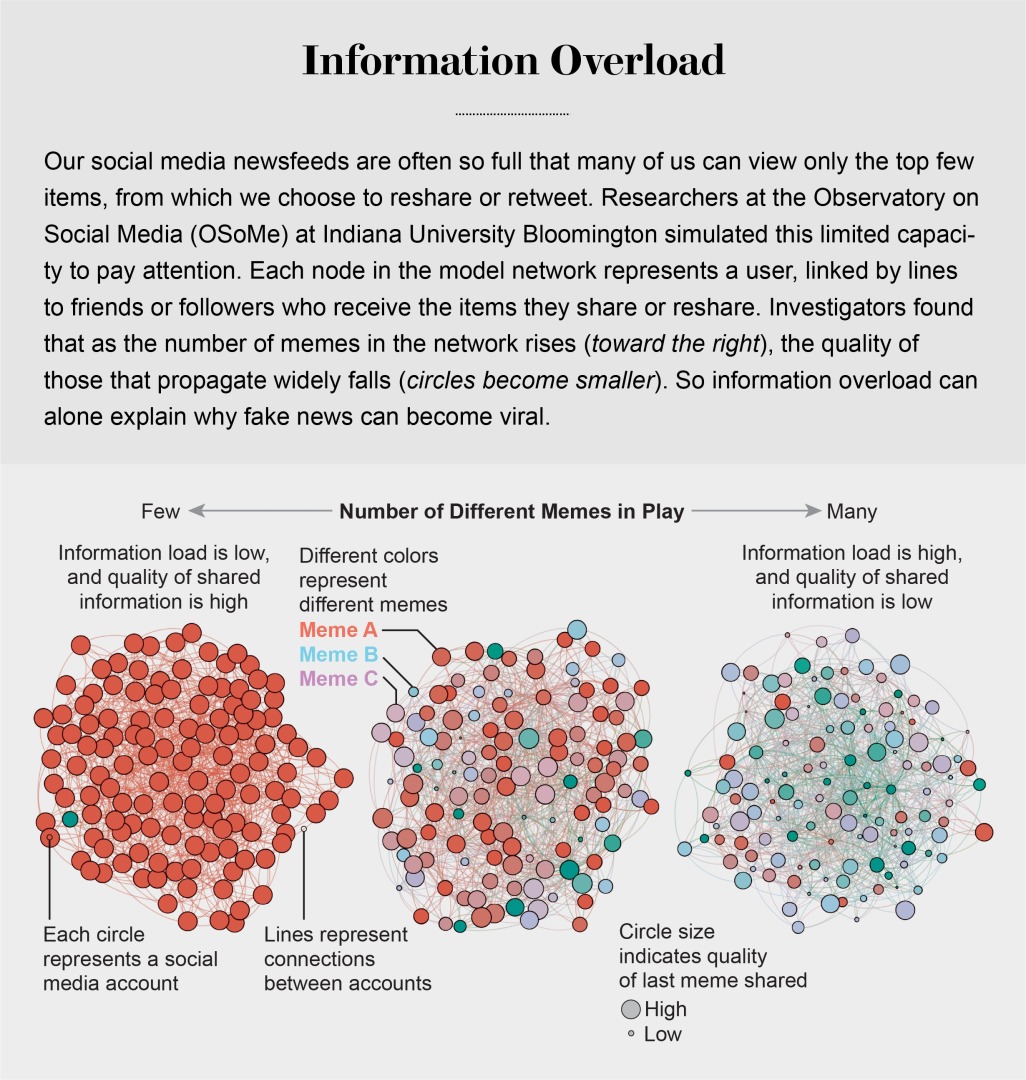

One of the biggest problems with social media algorithms is their tendency to spread misinformation. This can occur when algorithms prioritize sensational or controversial content, regardless of its accuracy, in order to keep users engaged and on the platform longer. The resulting false or misleading information can have serious consequences for public health, national security, and democracy.
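To make the mechanism concrete, here is a minimal Python sketch of engagement-first ranking. It is an illustration only and not any platform's actual system: the `Post` fields, the scores, and the `accuracy_weight` parameter are all assumptions invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    predicted_engagement: float  # hypothetical score in [0, 1]
    accuracy: float              # hypothetical fact-check score in [0, 1]


def rank_by_engagement(posts):
    """Order posts by predicted engagement alone; accuracy is ignored."""
    return sorted(posts, key=lambda p: p.predicted_engagement, reverse=True)


def rank_with_accuracy(posts, accuracy_weight=0.5):
    """Blend engagement with accuracy so unreliable posts are demoted."""
    def score(p):
        return ((1 - accuracy_weight) * p.predicted_engagement
                + accuracy_weight * p.accuracy)
    return sorted(posts, key=score, reverse=True)


# Made-up example data, not real measurements.
posts = [
    Post("Shocking miracle cure", predicted_engagement=0.9, accuracy=0.1),
    Post("Health agency guidance", predicted_engagement=0.4, accuracy=0.95),
    Post("Local weather update", predicted_engagement=0.3, accuracy=0.9),
]

print([p.title for p in rank_by_engagement(posts)])
print([p.title for p in rank_with_accuracy(posts)])
```

With engagement as the only signal, the sensational post lands at the top of the feed. Once accuracy carries half the weight, it drops to the bottom. Real ranking systems are far more complex, but the trade-off is the same.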

2. Echo Chambers and Political Polarization

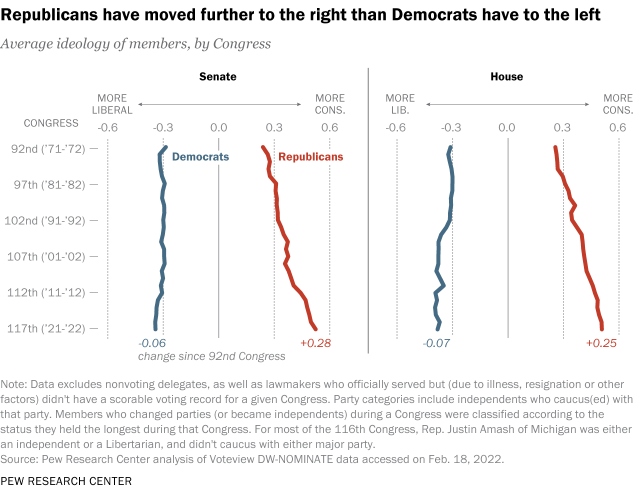

Another issue with social media algorithms is that they can create echo chambers and reinforce political polarization. This happens when algorithms show users only content that aligns with their existing beliefs and values, and filter out information that challenges those beliefs. As a result, users can become trapped in a self-reinforcing bubble of misinformation and propaganda, leading to a further division of society and a decline in the quality of public discourse.
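The filtering effect can be sketched in a few lines of Python. This is a toy model under stated assumptions: each item gets a hypothetical "lean" score between -1 and 1, and the `personalized_feed` function simply keeps the items closest to the user's own lean. No real platform is claimed to work exactly this way.

```python
def personalized_feed(user_lean, item_leans, k=3):
    """Return the k items whose lean is closest to the user's own lean."""
    return sorted(item_leans, key=lambda lean: abs(lean - user_lean))[:k]


def viewpoint_spread(leans):
    """Distance between the most extreme viewpoints in a set of items."""
    return max(leans) - min(leans)


# Hypothetical catalog covering a wide range of viewpoints.
item_leans = [-0.9, -0.6, -0.2, 0.0, 0.3, 0.7, 0.8, 0.9]

feed = personalized_feed(user_lean=0.8, item_leans=item_leans)

print("Catalog spread:", viewpoint_spread(item_leans))
print("Feed spread:", viewpoint_spread(feed))
```

The catalog spans almost the whole range of viewpoints, yet the user's feed contains only the three items nearest to what they already believe. Everything that would challenge those beliefs has been filtered out.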

3. Bias in Algorithm Design and Data Collection

There are also concerns about bias in the design and implementation of social media algorithms. The data used to train these algorithms is often collected from users in a biased manner, which can perpetuate existing inequalities and reinforce existing power structures. Additionally, the designers and developers of these algorithms may hold their own biases, which can be reflected in the algorithms they create. This can result in discriminatory outcomes and perpetuate social injustices.

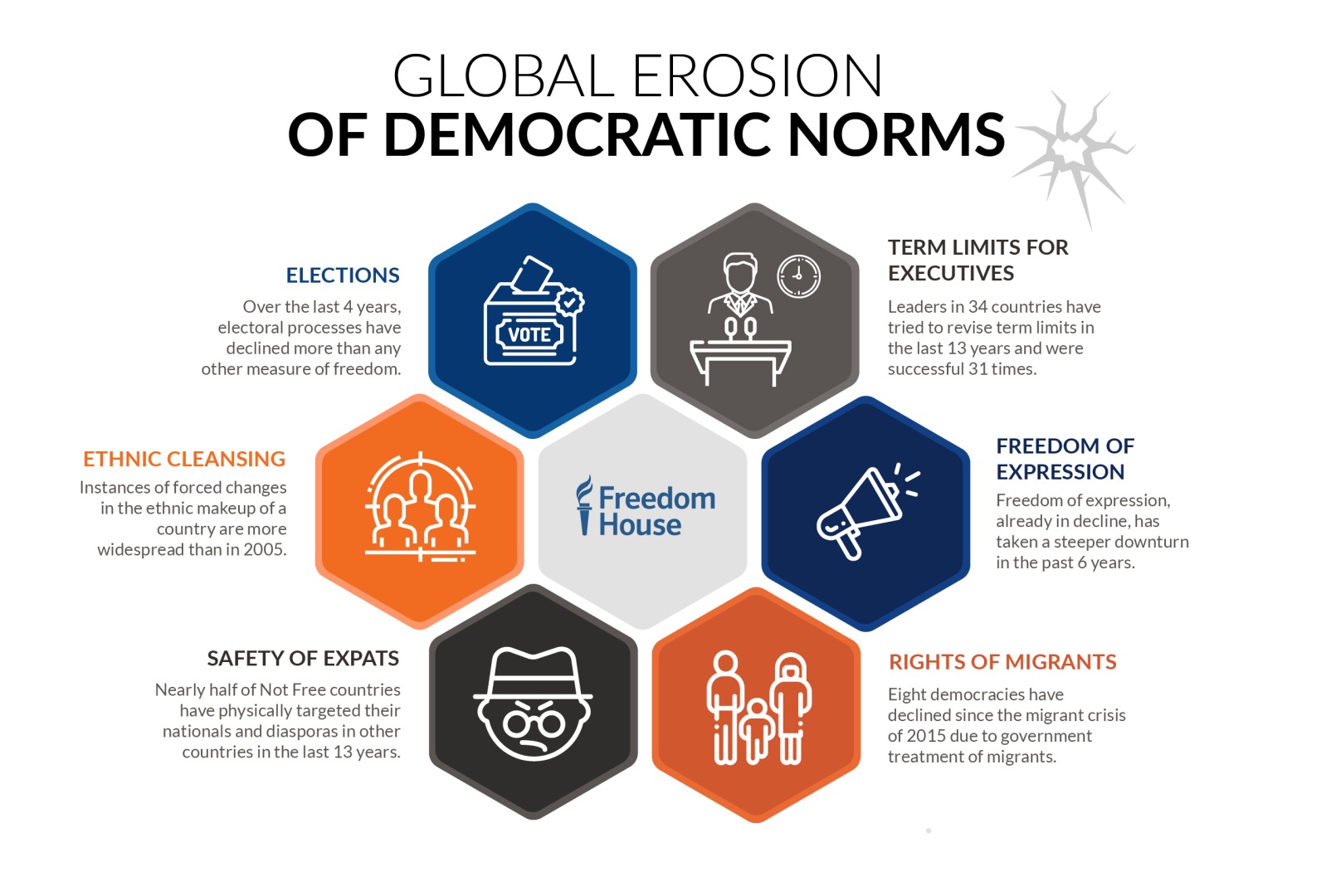

4. Democracy in Retreat

Social media algorithms are vulnerable to manipulation and can spread false or misleading information, which can be used to manipulate public opinion and undermine democratic institutions. The dominance of a few large social media companies has led to a concentration of power in the hands of a small number of organizations, which can undermine the diversity and competitiveness of the marketplace of ideas, a key principle of democratic societies.

How to Improve Social Media Algorithms?

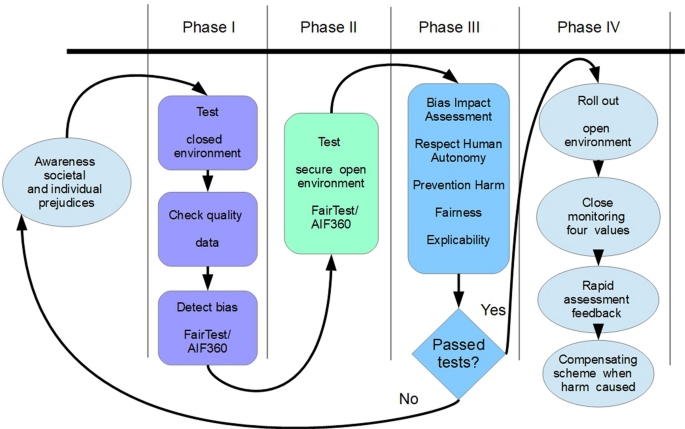

Governments and regulatory bodies have a role to play in holding technology companies accountable for the algorithms they create and their impact on society. This could involve enforcing laws and regulations to prevent the spread of misinformation and extremist content, and holding companies responsible for their algorithms’ biases.

Several measures could improve social media algorithms and reduce the harm they do to democracy. These include:

- Increased transparency and accountability: Social media companies should be more transparent about their algorithms and data practices, and they should be held accountable for the impact of their algorithms on society. This can include regular audits and public reporting on algorithmic biases and their impact on society.

- Regulation and standards: Governments can play a role in ensuring that social media algorithms are designed and operated in a way that is consistent with democratic values and principles. This can include setting standards for algorithmic transparency, accountability, and fairness, and enforcing penalties for violations of these standards.

- Diversification of ownership: Encouraging a more diverse and competitive landscape of social media companies can reduce the concentration of power in the hands of a few dominant players and promote innovation and diversity in the marketplace of ideas.

- User education and awareness: Social media users can be educated and empowered to make informed decisions about their usage of social media, including recognizing and avoiding disinformation and biased content.

- Encouragement of responsible content creation: Social media companies can work to encourage the creation of high-quality and responsible content by prioritizing accurate information and rewarding creators who produce this content.

- Collaboration between industry, government, and civil society: Addressing the challenges posed by social media algorithms will require collaboration between social media companies, governments, and civil society organizations. This collaboration can involve the sharing of data and best practices, the development of common standards and regulations, and the implementation of public education and awareness programs.
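As one illustration of what a regular audit might measure, the Python sketch below compares how often each group of creators appears in a feed with how often it appears in the pool of eligible content. The record format, the `group` field, and the data are hypothetical, and a real audit would need much richer metrics than this single ratio.

```python
from collections import Counter


def exposure_share(records, key="group"):
    """Fraction of records that belong to each group."""
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def exposure_ratio(candidates, shown, key="group"):
    """Share of the feed divided by share of the candidate pool, per group.

    A ratio above 1 means the group is over-represented in the feed;
    a ratio below 1 means it is under-represented.
    """
    pool = exposure_share(candidates, key)
    feed = exposure_share(shown, key)
    return {group: feed.get(group, 0.0) / pool[group] for group in pool}


# Made-up audit data: eight eligible posts, four of which were shown.
candidates = [{"group": "A"}] * 4 + [{"group": "B"}] * 4
shown = [{"group": "A"}] * 3 + [{"group": "B"}] * 1

ratios = exposure_ratio(candidates, shown)
print(ratios)
```

In this example both groups supply half of the eligible content, but group A takes three quarters of the feed. Publishing figures of this kind on a regular schedule is one way a platform could report on algorithmic bias.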

Conclusion

Social media companies have the power to censor and suppress speech, which can undermine the right to free expression and the democratic principle of an open and inclusive public discourse. It is crucial for technology companies and policymakers to address these issues and work to reduce the potential for harm from these algorithms.

Social media platforms need to actively encourage and facilitate community participation in the development and improvement of their algorithms. This would involve setting up forums for discussion and collaboration, providing documentation and support for developers, and engaging with the community to address their concerns and ideas.

In order to ensure that the algorithms are fair and unbiased, tech companies need to be transparent about the data they collect and use to train their algorithms. This would involve releasing the data sets used to train the algorithms, along with information about how the data was collected, what it represents, and any limitations or biases it may contain.
