Core Web Vitals: What Next?

The author’s views are entirely his or her own (excluding the unlikely event of hypnosis) and may not always reflect the views of Moz.

The promised page experience updates from Google that caused such a stir last year are far from being done, so Tom takes a look at where we are now and what happens next for the algorithm’s infamous Core Web Vitals.

Video Transcription

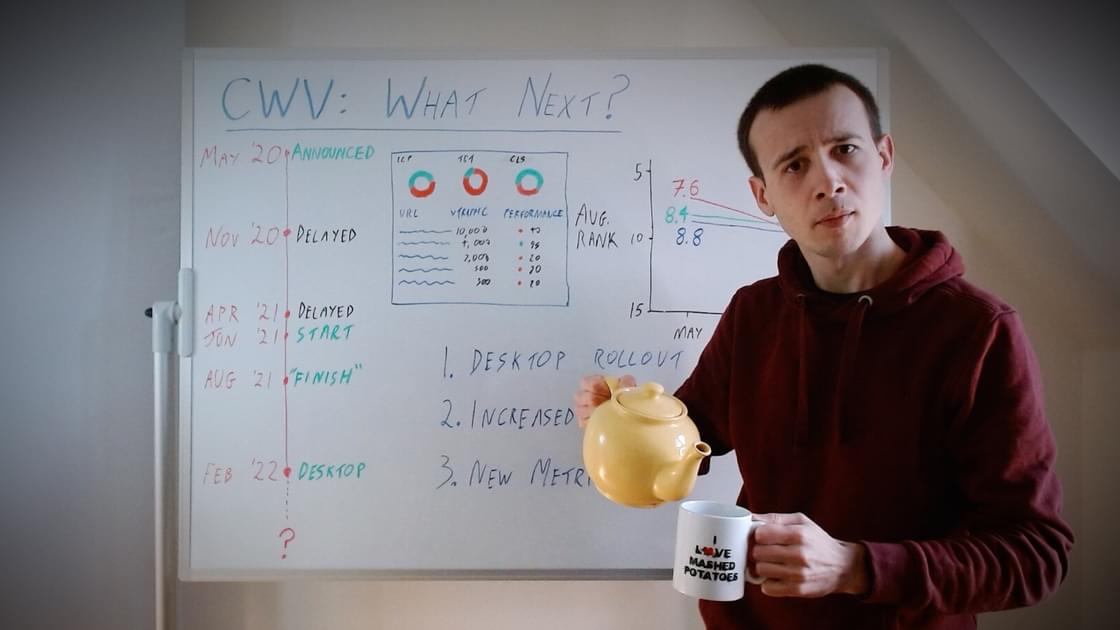

Happy Friday, Moz fans, and welcome to another Whiteboard Friday. This week’s video is about Core Web Vitals. Before you immediately pause the video, press Back, or something like that: no, we haven’t got stuck in time. This isn’t a video from 2020. This is looking forwards. I’m not going to cover what the basic metrics are or how they work, that kind of thing, in this video. There is a very good Whiteboard Friday from about a year ago from Cyrus about all of those things, which hopefully will be linked below. What this video is going to be looking at is where we are now and what happens next, because the page experience updates from Google are very much not done. They are still coming. This is still ongoing. This is probably going to get more important over time, not less, even though the hype has kind of subsided a little bit.

Historical context

So, firstly, I want to look at some of the historical context in terms of how we got where we are. So I’ve got this timeline on this side of the board. You can see in May 2020, which is nearly two years ago now, Google first announced this. This is an extraordinarily long time really in SEO and Google update terms. But they announced it, and then we had these two delays and it felt like it was taking forever. I think there are some important implications here. My theory (I’ve written about this before and again, hopefully, that will also be linked below) is that the reason for the delays was that too few pages would have been getting a boost if they had rolled out when they originally intended to, partly because too few sites had actually improved their performance and partly because Google is getting data from Chrome, the Chrome User Experience Report or CrUX data. It’s from real users using Chrome.

For lots of pages for a long time, including now really, they didn’t really have a good enough sample size to draw conclusions. The coverage is not incredible. So because of that, initially when they had even less data, they were in an even worse position to roll out something. They don’t want to make a change to their algorithm that rewards a small number of pages disproportionately, because that would just distort their results. It will make their results worse for users, which is not what they’re aiming for with their own metrics.

So because of these delays, we were sort of held up until June last year. But what I’ve just explained, this system of only having enough sample size for more heavily visited pages, this is important for webmasters, not just Google. We’ll come back to it later when we talk about what’s going to happen next I think, but this is why whenever we display Core Web Vitals data in Moz Pro and whenever we talk about it publicly, we encourage you to look at your highest traffic or most important pages or your highest ranking pages, that kind of thing, rather than just looking at your slowest pages or something like that. You need to prioritize and triage. So we encourage you to sort by traffic and look at that alongside performance or something like that.
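As a rough illustration of that triage, here is a minimal Python sketch. The `Page` record, its field names, and the sample numbers are all hypothetical and are not taken from Moz Pro or any real export; the only point is to list failing pages by traffic rather than by how slow they are.

```python
from dataclasses import dataclass


@dataclass
class Page:
    # Hypothetical record; field names are assumptions for illustration only.
    url: str
    monthly_visits: int
    passes_all_thresholds: bool


def triage(pages, limit=10):
    """Return pages failing Core Web Vitals, highest traffic first."""
    failing = [p for p in pages if not p.passes_all_thresholds]
    return sorted(failing, key=lambda p: p.monthly_visits, reverse=True)[:limit]


# Made-up sample data.
pages = [
    Page("/pricing", 12000, False),
    Page("/blog/old-post", 40, False),
    Page("/", 50000, True),
    Page("/features", 8000, False),
]

for page in triage(pages):
    print(page.url, page.monthly_visits)
```

In this made-up data the rarely visited blog post comes last in the list, however slow it is, because pages with real traffic are the ones most likely to have enough CrUX data to matter.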

So anyway, June 2021, we did start having this rollout, and it was all rolled out within two or three months. But it wasn’t quite what we expected or what we were told to expect.

What happened after the rollout?

In the initial FAQ and the initial documentation from Google, they talked about sites getting a boost if they passed a certain threshold for all three of the new metrics they were introducing. Although they kind of started to become more ambiguous about that over time, that definitely isn’t what happened with the rollout.

So we track this with MozCast data. So between the start and the end of when Google said they were rolling it out, we looked at the pages ranking top 20 in MozCast that had passes for zero, one, two, or three of the metrics against the thresholds that Google published.
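To make the grouping concrete, here is a minimal Python sketch of counting how many thresholds a page passes and averaging rank per group. It is not the MozCast pipeline. The threshold values are the "good" limits Google published for the three metrics (LCP 2.5 seconds, FID 100 milliseconds, CLS 0.1); the function names, dictionary keys, and sample rankings are assumptions made up for illustration.

```python
# Google's published "good" thresholds for the three original metrics.
# The dictionary keys are hypothetical names chosen for this sketch.
GOOD_THRESHOLDS = {"lcp_seconds": 2.5, "fid_ms": 100, "cls": 0.1}


def thresholds_passed(metrics):
    """Count how many of the three metrics are at or under the 'good' limit."""
    return sum(1 for name, limit in GOOD_THRESHOLDS.items() if metrics[name] <= limit)


def average_rank_by_passes(results):
    """Group (rank, metrics) pairs by number of passes and average the ranks."""
    buckets = {}
    for rank, metrics in results:
        buckets.setdefault(thresholds_passed(metrics), []).append(rank)
    return {n: sum(ranks) / len(ranks) for n, ranks in sorted(buckets.items())}


# Made-up sample of ranking pages that have field data.
results = [
    (2, {"lcp_seconds": 2.1, "fid_ms": 40, "cls": 0.05}),
    (4, {"lcp_seconds": 2.4, "fid_ms": 80, "cls": 0.25}),
    (9, {"lcp_seconds": 3.8, "fid_ms": 90, "cls": 0.30}),
    (15, {"lcp_seconds": 5.2, "fid_ms": 300, "cls": 0.40}),
    (17, {"lcp_seconds": 4.9, "fid_ms": 250, "cls": 0.35}),
]

print(average_rank_by_passes(results))
```

Running the same grouping at the start and at the end of the rollout window, and comparing the averages per group, gives the kind of before-and-after view described here.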

Now one thing that’s worth noticing about this chart, before you even look at it any more closely, is that all of these lines trend downwards, and that’s because of what I was talking about with the sample sizes increasing, with Google getting data on more pages over time. So as they got more pages, they started incorporating more low traffic or in other words low ranking pages into the CrUX data, and that meant that the average rank of a page that has CrUX data will go down, because when we first started looking at this, even though this is top 20 rankings for competitive keywords, only about 30% of them even had CrUX data in the first place. It’s gone up a lot since then. So it now includes more low ranking pages. So that’s why there’s this sort of general downwards shift.
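This coverage effect can be shown with a few lines of arithmetic. The sketch below uses made-up rank lists, not MozCast data: no individual page changes position, yet the average rank of pages with CrUX data gets worse simply because lower-ranking pages join the sample.

```python
def mean(values):
    """Arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


# Hypothetical ranks of the pages that had CrUX data early on.
early_ranks = [1, 2, 3, 4, 5, 6]

# Hypothetical lower-ranking pages that gain CrUX data as coverage grows.
newly_covered = [12, 15, 18, 20]

before = mean(early_ranks)
after = mean(early_ranks + newly_covered)

# A larger number means a worse (lower) average ranking position.
print(f"average rank before: {before}, after: {after}")
```

Any real ranking change has to be read against this background shift, which is why the comparison between groups matters more than the absolute movement of any one line.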

So the thing to notice here is the pages passing all three thresholds, these are the ones that Google said were going to get a big boost, and these went down by 0.2, which is about the same as the pages that were passing one or two thresholds. So I’m going to go out on a limb and say that that was just the general shift caused by incorporating more pages into CrUX data.

The really noticeable thing was the pages that passed zero thresholds. They went down by 1.1 positions. So instead of it being pass all three and get a boost, it’s more like pass zero and get a penalty. Or you could rephrase that positively and say the exact same thing as pass one and get a boost, relative to these ones that are falling off the cliff and dropping over one ranking position.

So there was a big impact it seems from the rollout, but not necessarily the one that we were told to expect, which is interesting. I suspect that’s because Google perhaps was more confident about the data on the sites performing very badly than about the data on the sites performing very well.

What happens next?

Desktop rollout

Now, in terms of what happens next, I think this is relevant because in February and March, probably as you’re watching this video, Google have said they’re going to be rolling out this same page experience update on desktop. So we assume it will work the same way. So what you’ve seen here on smartphone only, this will be replicated on desktop at the start of this year. So you’ll probably see something very similar with very poorly performing sites. If you’re watching this video now, sites like that probably have little or no time to get this fixed before they see a ranking drop, which, if that’s one of your competitors, could be good news.

But I don’t think it will stop there. There are two other things I expect to happen.

Increased impact

So one is you might remember with HTTPS updates and particularly with Mobilegeddon, we expected this really big seismic change. But what actually happened was when the update rolled out, it was very toned down. Not much noticeable shifted. But then, over time, Google sort of quietly turned up the wick. These days, we would all expect a very mobile-unfriendly site to perform very poorly in search, even though the initial impact of that algorithm update was very minor. I think something similar will happen here. The slower sites will feel a bigger and bigger penalty gradually building. I don’t mean like a manual penalty, but a bigger disadvantage gradually building over time, until in a few years’ time we would all intuitively understand that a site that doesn’t pass three thresholds or something is going to perform horribly.

New metrics

The last change I’m expecting to see, which Google hinted about initially, is new metrics. So they initially said that they would probably update this annually. You can already see on web.dev that Google is talking about a couple of new metrics. Those are smoothness and responsiveness. So smoothness is to do with the sort of frames per second of animations on the page. So when you’re scrolling up and down the page, is it more like a slideshow or a sort of fluid video? Then responsiveness is how quickly the page responds to your interactions. So one of the current metrics is already first input delay, but, as it says in the name, that’s only the first input. So I’m expecting this to care more about things that happen further into your browsing experience on that page.
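To see why a first-input-only metric can miss problems, here is a minimal Python sketch. It is not the definition of Google’s proposed responsiveness metric, which was not finalized at the time of this video. The function names and the delay values are hypothetical, and taking the worst delay is just one simple way to look past the first interaction.

```python
FID_GOOD_MS = 100  # Google's published "good" limit for first input delay


def first_input_delay(delays_ms):
    """Delay of the first interaction only, which is all that FID looks at."""
    return delays_ms[0]


def worst_interaction_delay(delays_ms):
    """Largest delay across the whole visit (an assumed stand-in measure)."""
    return max(delays_ms)


# Made-up delays, in milliseconds, for four interactions during one page visit.
delays = [40, 35, 420, 60]

fid = first_input_delay(delays)
worst = worst_interaction_delay(delays)

print(f"first input delay: {fid} ms, passes FID: {fid <= FID_GOOD_MS}")
print(f"worst later delay: {worst} ms")
```

In this made-up visit the page passes first input delay comfortably, yet the third interaction takes far longer, which is the kind of experience a whole-visit responsiveness metric would be expected to catch.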

So these are things I think you have to think about going forwards through 2022 and beyond for Core Web Vitals. I think the main lesson to take away is you don’t want to over-focus on the three metrics we have now, because if you just leave your page that’s currently having a terrible user experience but somehow sort of wiggling its way through these three metrics, that’s only going to punish you in the long run. It will be like the old-school link builders that are just constantly getting penalized as they find their way around every new update rather than finding a more sustainable technique. I think you have to do the same. You have to aim for a genuinely good user experience or this isn’t going to work out for you.

Anyway, that’s all for today. Hope the rest of your Friday is enjoyable. See you next time.
