10 Best Google Optimize Alternatives for 2023

Google Optimize, Google's freemium A/B testing and analytics tool, is being sunset.

Google Optimize and its larger enterprise version, Optimize 360, will no longer be available after September 30, 2023, and users suddenly find themselves in the market for a new testing and analytics tool.

To help you transition to an alternative tool, we've compiled the 10 best Google Optimize alternatives, with an overview of each to help you make the best decision for your needs.

What Are Google Optimize & Optimize 360?

Google Optimize is a web analytics and testing tool, first launched on June 1, 2012, as Google Website Optimizer.

It allows webmasters to experiment with their web pages and test variants to see how they perform against specified objectives.

It consists of two main elements:

- The Editor – A Chrome plugin that lets you change visual HTML elements for testing, including buttons, calls to action (CTAs), and page structure. It applies JavaScript customized to the rules of your experiment and works with a variety of devices.

- The Reporting Suite – Using data from your linked Google Analytics account, it provides you with tangible data about these experiments.

Combined, these allow webmasters to run A/B tests of new content, designs, and layouts on a subset of visitors.
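Testing on a subset of visitors generally comes down to assigning each visitor to a bucket in a repeatable way. The sketch below illustrates one common approach, hash-based bucketing. It is an assumption for illustration, not a description of how Google Optimize works internally; the function name `assign_variant`, the `traffic_share` parameter, and the example IDs are all hypothetical.

```python
import hashlib


def assign_variant(visitor_id: str, experiment_id: str, traffic_share: float = 0.2) -> str:
    """Return 'excluded', 'control', or 'variant' for a visitor (illustrative only)."""
    # Hash the experiment and visitor IDs together so the same visitor
    # always lands in the same bucket for a given experiment.
    digest = hashlib.sha256(f"{experiment_id}:{visitor_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex digits to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    # Only the chosen share of traffic takes part in the experiment.
    if bucket >= traffic_share:
        return "excluded"
    # Split enrolled visitors evenly between the original page and the variant.
    return "control" if bucket < traffic_share / 2 else "variant"


if __name__ == "__main__":
    # Hypothetical visitor and experiment IDs.
    print(assign_variant("visitor-123", "homepage-cta-test"))
```

Because the assignment depends only on the IDs, a returning visitor keeps seeing the same version without any stored state.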

Metrics provide insight into what’s working, allowing website owners to make educated decisions about their pages.

Prior to the advent of tools like this, designers were forced to rely on instinct and feel to determine what works best.

The free version, Optimize, allows users to run up to 5 experiments simultaneously, while the paid version, Optimize 360, lets you run more than 100 at a time.

Why Is Google Optimize Being Sunset?

Users of Optimize have been critical of the platform’s features, particularly on the free version, which limits the number of tests, goals, and variables, as well as the runtime of experiments.

The free version also lacks dedicated customer support and has been accused of inflating visitor count.

Additionally, to ensure visitors don’t receive a momentary glimpse of the original page, it requires the installation of an anti-flicker snippet, which can impact loading time.

These problems, as well as a general lack of features and services required by customers for experimentation, led to Google’s decision to end Optimize and Optimize 360.

Instead, it has begun investing in third-party integrations for Google Analytics 4 that will provide better, more effective solutions for version testing and improving user experiences.

The 10 Best Google Optimize Alternatives

If you’re looking for something that offers the functionality of Google Optimize and Optimize 360, here are some alternatives you should consider:

1. Convert Experiences

A fast and flicker-free versioning tool, Convert Experiences offers advanced testing options, including A/B, split, multivariate, and multipage testing.

Users can target a highly specific audience using more than 40 filters, and collision prevention stops visitors from being exposed to more than one experiment at a time.
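Collision prevention can be pictured as a mutual-exclusion rule: each visitor is eligible for at most one experiment in a group. The sketch below shows one simple way to do that with a hash. It is an assumption for illustration and not Convert's actual implementation; `pick_experiment` and the experiment names are hypothetical.

```python
import hashlib
from typing import Optional, Sequence


def pick_experiment(visitor_id: str, experiments: Sequence[str]) -> Optional[str]:
    """Choose at most one experiment for a visitor (illustrative only)."""
    if not experiments:
        return None
    # Sort so the result does not depend on the order experiments are passed in.
    ordered = sorted(experiments)
    digest = hashlib.sha256(visitor_id.encode("utf-8")).hexdigest()
    # The hash picks a single slot, so a visitor never enters two experiments.
    index = int(digest[:8], 16) % len(ordered)
    return ordered[index]


if __name__ == "__main__":
    # Hypothetical experiments running at the same time.
    running = ["pricing-page-test", "checkout-button-test", "hero-image-test"]
    print(pick_experiment("visitor-123", running))
```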

Partial Features List:

- Over 90 integrations, including Google Analytics.

- A/B testing.

- Visual editor.

- Code editor.

- Traffic allocation.

- Preview mode.

- Device targeting.

- Cross-domain testing.

Price: $99-$1,599/month.

2. VWO Testing

Visual Website Optimizer, or VWO Testing, allows users to create and test user experiences without the need for deep technical knowledge.

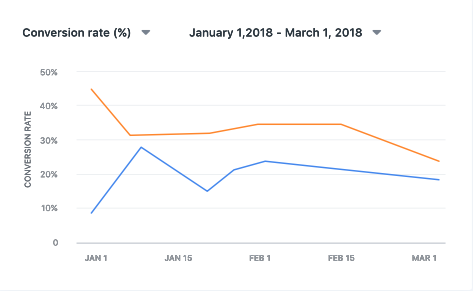

Screenshot from VWO Testing, February 2023

Using templated widgets and a point-and-click visual editor (a code editor is also included), this testing tool is designed to make prototyping and testing quick and easy.

Partial Features List:

- Extensive integrations, including Google Analytics and Adobe Analytics.

- Bayesian-powered statistics.

- Segmented results.

- Parallel load time.

- Visitor heatmaps and recordings.

- Test results recording and archiving.

- Kanban boards.

- Full-funnel tracking.

Price: Free limited version, paid versions start at $356/month.
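For readers unfamiliar with the "Bayesian-powered statistics" item in the list above, the general idea is to estimate the probability that one version truly converts better than another. The sketch below is a minimal, generic example using a uniform Beta(1, 1) prior and Monte Carlo sampling. It is an assumption for illustration, not VWO's actual statistics engine; the function name and the sample numbers are hypothetical.

```python
import random


def probability_b_beats_a(conv_a: int, visitors_a: int,
                          conv_b: int, visitors_b: int,
                          draws: int = 20000, seed: int = 0) -> float:
    """Estimate P(rate_B > rate_A) from conversion counts (illustrative only)."""
    rng = random.Random(seed)  # fixed seed keeps the estimate reproducible
    wins = 0
    for _ in range(draws):
        # Posterior for each version: Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + visitors_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + visitors_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


if __name__ == "__main__":
    # Hypothetical results: 50 of 1,000 visitors convert on A, 80 of 1,000 on B.
    print(probability_b_beats_a(50, 1000, 80, 1000))
```

A result close to 1 suggests the variant is very likely the better performer, while a result near 0.5 means the data cannot yet separate the two versions.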

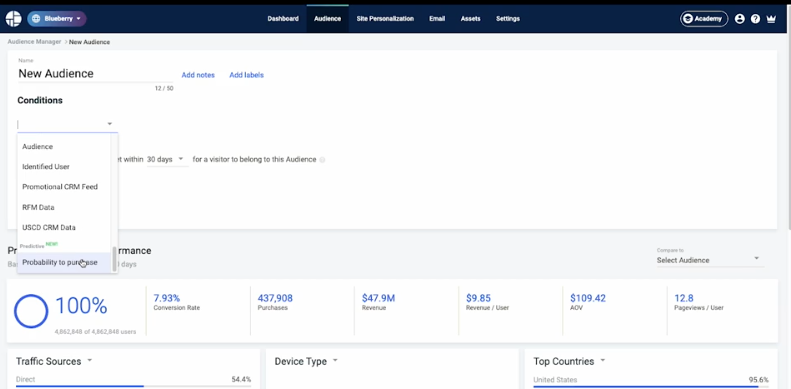

3. Kameleoon

Intended for enterprise-level organizations, Kameleoon offers full stack and feature experimentation in one optimization solution.

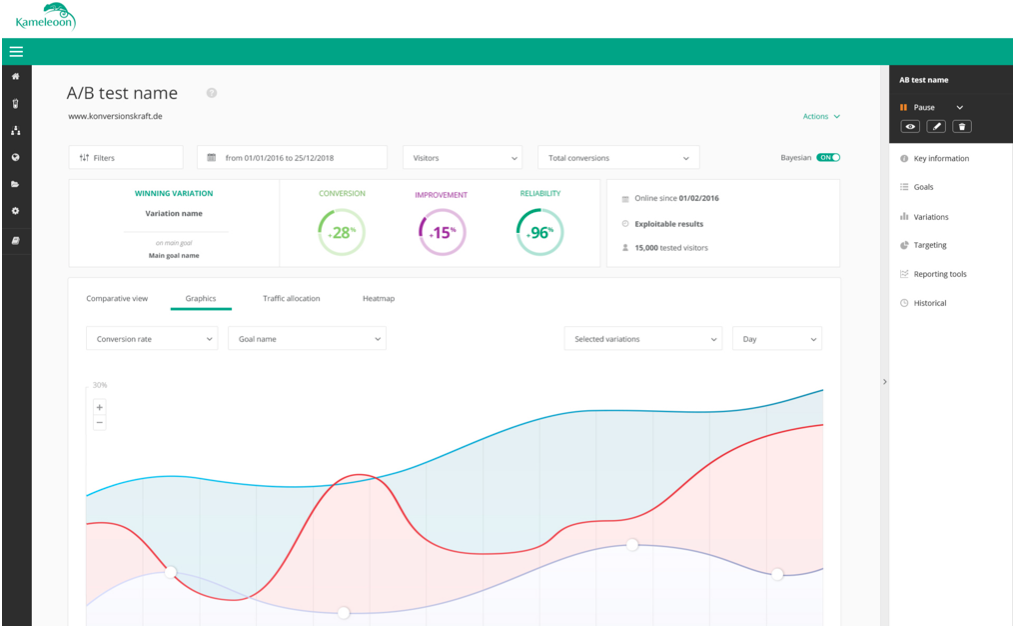

Screenshot from Kameleoon, February 2023

Providing more in-depth experimentation into both user experiences and server-side changes, it allows your web team to view all statistical data in one location.

HIPAA-compliant, it also includes extensive privacy features and a machine-learning model to help predict visitor intent and accurately segment your audience.

Partial Features List:

- More than 30 integrations, including Adobe and Google.

- Data privacy, security, and consent management tools.

- Visual and code editors.

- Dynamic traffic allocation.

- 45+ native audience segmentation criteria.

- KPI tracking.

Price: Quote based.

4. Zoho PageSense

Designed to help you understand what’s working on your website, as well as how your visitors are interacting with your site, Zoho PageSense has a range of tools for tracking, analysis, optimization, and personalization.

Screenshot from Zoho PageSense, February 2023

In addition to content optimization, it allows you to analyze the effectiveness of pop-ups, forms, and dropdowns.

Unlike other options, it does not provide an advanced code editor, and tests are limited to 10 projects with 50 total goals.

Partial Features List:

- Numerous integrations, including Google Ads and Analytics.

- Funnel analysis tools.

- Visual heatmaps.

- A/B and split URL testing.

- Polling functionality.

- Personalization options.

Price: $12-$780/month, depending on functionality and monthly visitors.

5. AB Tasty

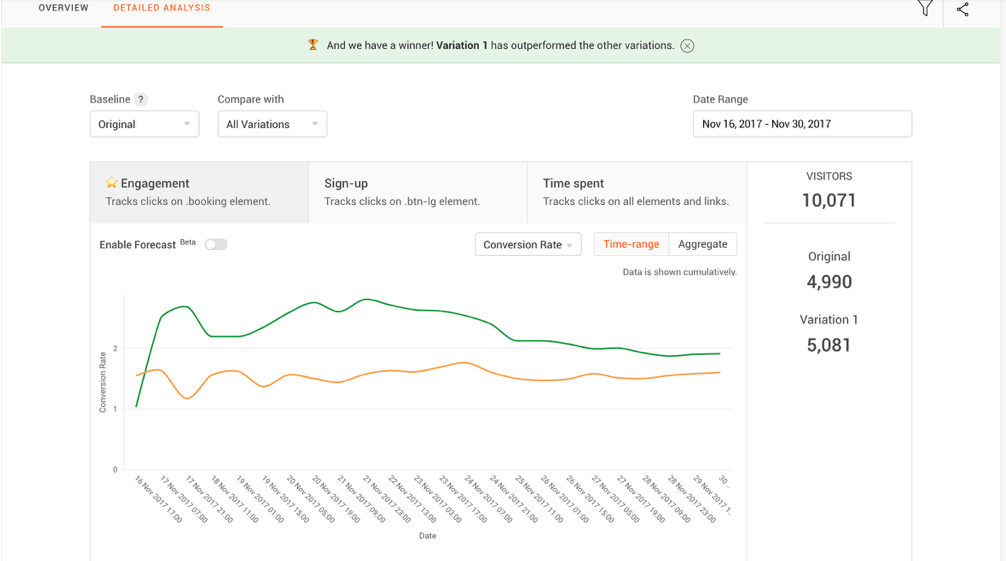

Another platform designed to integrate marketing and growth with back-end developments and products, AB Tasty provides a range of tools for experimentation.

Screenshot from AB Tasty, February 2023

The marketing functionality, which is what most people looking for a Google Optimize replacement will be interested in, is a user-friendly, low-code way to experiment with various changes and optimize a website.

Partial Features List:

- Third-party integrations.

- A/B and multivariate testing.

- Traffic allocation.

- AI-personalization.

- Data-driven segmentation.

- Granular campaign triggers.

Price: Quote based.

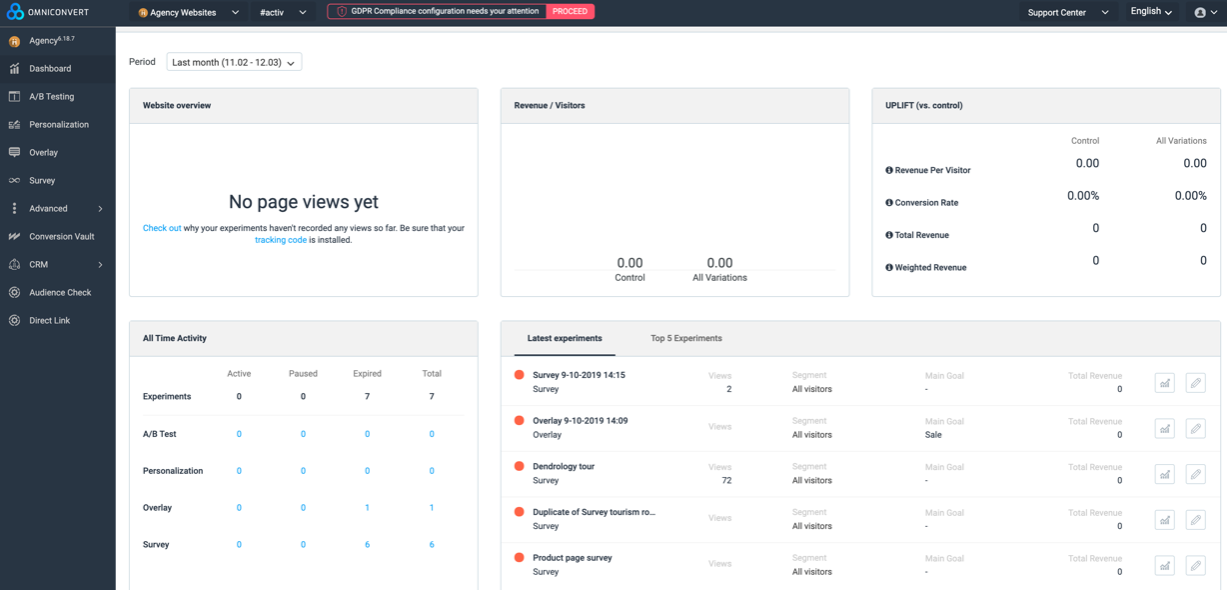

6. Omniconvert Explore

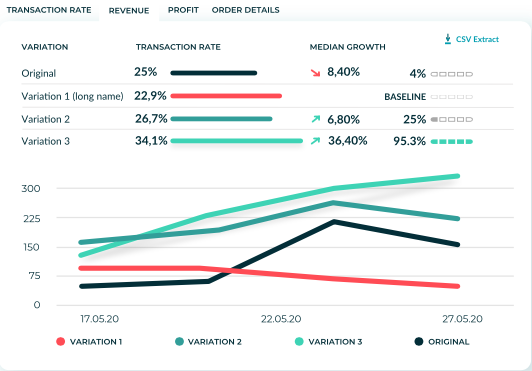

Designed specifically for retailers and ecommerce sites, Omniconvert Explore offers a variety of personalization and segmentation tools to help you optimize your site for more sales.

Screenshot from Omniconvert Explore, February 2023

It’s used by more than 10,000 companies and includes more than 100 overlay templates, as well as a helpful experiment debugger.

Partial Features List:

- A/B testing.

- Web personalization.

- Survey tools.

- CDN cache bypass.

- CSS and JavaScript editor.

- API access.

- Bayesian & Frequentist statistics.

Price: $390-$12,430+/month. Custom pricing is available.

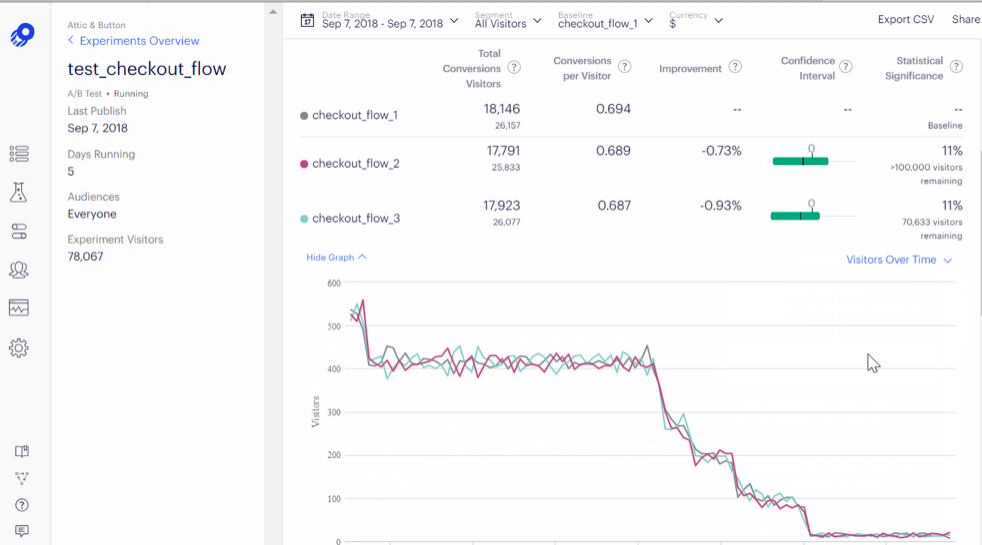

7. Optimizely

Providing three products running the gamut of testing from planning to monetization, Optimizely is intended to optimize every customer touchpoint.

Screenshot from Optimizely, February 2023

Optimizely Experiment provides the same functionality as Google Optimize – and then some – allowing you to take a scientific approach to visitor experiences across channels and devices, with data that refreshes every 90 seconds.

Partial Features List:

- Low and no-code experiment options.

- Content management functionality.

- Headless API.

- Real-time segmentation and validated assumptions at scale.

- Experiment lifecycle management.

- Feature flagging and rollouts.

- Collaboration tools.

Price: Quote based.

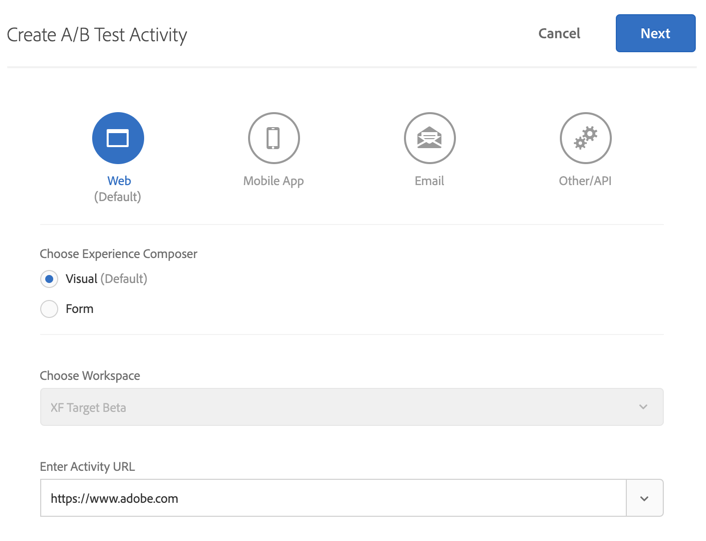

8. Adobe Target

The creator of Acrobat and InDesign also offers an audience-targeting experimentation tool in the form of Adobe Target.

Using machine learning algorithms, it provides AI-powered user experience testing while offering personalization and automation at scale.

Screenshot from Adobe Target, February 2023

Generally used by enterprise-level organizations, it seamlessly interfaces with Adobe’s analytics tools – but is only available as part of the company’s Marketing Cloud.

Partial Features List:

- Intuitive interface.

- A/B and multivariate testing.

- Automated omnichannel personalization.

- AI-assisted experience targeting.

- Simultaneous execution of multiple tests.

Price: Quote based.

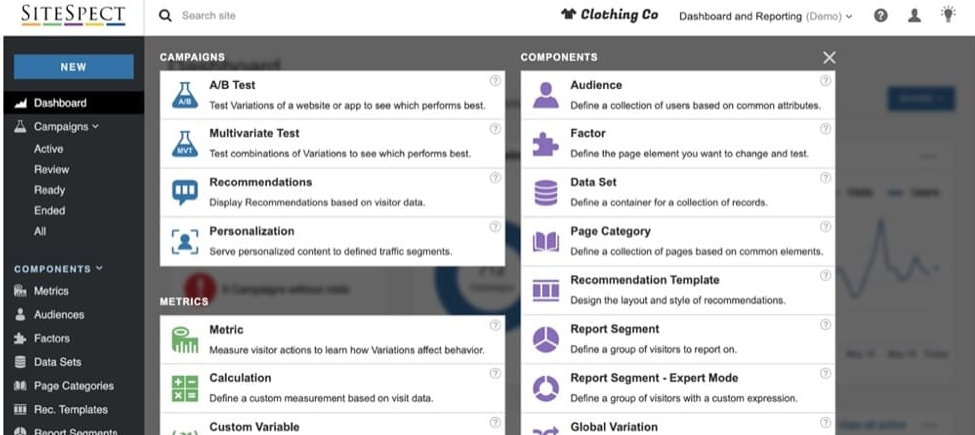

9. SiteSpect

SiteSpect is an A/B testing and experimentation tool that bills itself as the pioneer in the field.

Geared toward enterprise-level organizations with high site traffic, it has tools for marketers, product managers, developers, and network operations.

Screenshot from SiteSpect, February 2023

All of these tools serve to improve conversion rates and raise revenue.

Partial Features List:

- Third-party integrations.

- A/B and multivariate testing.

- AI-powered behavioral and contextual targeting.

- Omnichannel and device targeting.

- High-volume personalization tools.

- Visual editor.

- Flicker-free.

- SEO-friendly.

Price: Quote based.

10. DynamicYield Experience OS

Another tool geared toward high-volume sites, DynamicYield Experience OS allows you to algorithmically match content, offers, and products to individual visitors to your website.

Screenshot from DynamicYield Experience OS, February 2023

Working across teams, it is designed to allow you to offer a wide array of seamless experiences and adjust your personalization program to your specific field and KPIs.

Partial Features List:

- Agnostic platform designed to be future-proof.

- A/B testing.

- Extensive personalization across channels and touchpoints.

- Customizable to your needs.

- Predictive test improvement via machine learning.

- Client- and server-side tools.

- Extensive support and resources.

Price: Quote based.

It’s Time To Move On From Google Optimize

Google Optimize was a useful tool, but with its sunset date already in sight, you can’t wait until the last minute to decide on its replacement.

And while dozens of options are available for A/B testing, not all of them will suit your unique needs.

Before you decide, take careful stock of how you’re currently using Google Optimize or Optimize 360. Look at the features you’re using the most, as well as what increased functionality you would enjoy.

Then carefully evaluate your options to find the best testing tools for you.

Luckily, most platforms listed here offer some form of trial or demo, so you can get a first-hand look at what they’re offering and how they work before pulling the trigger on a long-term deal.

Don’t be afraid to explore your options, including platforms that are not listed here.

No one knows your requirements better than you, but by carefully doing your homework and evaluating your needs, you can find the best tool for increasing visitor satisfaction and boosting your results.

Featured Image: VectorMine/Shutterstock
