7 Insights Into How Google Ranks Websites via @sejournal, @martinibuster

Google’s algorithm is built around understanding content and search queries and making the answers accessible to users in the most convenient manner.

These seven insights show how to develop a winning SEO and content strategy by leveraging what we know about Google’s algorithms.

The following are insights developed by studying patents and research papers published by Google itself.

Insight 1: Follow the Correct Intent

There are some content writing systems that mine the top-ranked websites and provide content writing and keyword suggestions based on the analysis of the top ten to top thirty webpages.

Some people who have used the software have told me that the information isn’t always helpful. That’s not surprising, because mining all of the top-ranked webpages in any given search results page (SERP) produces a noisy data set that is inaccurate and of limited usefulness.

One of the issues with identifying user intent is that almost every query contains multiple user intents.

Google solves this problem by showing links to webpages about the most popular user intents first.

For example, in a research study about automatically classifying YouTube channels (PDF), the researchers discuss the role of user intent in determining which results to show first.

In the quote below, the word “entity” refers to what you would normally think of as a noun (a person, a place, or a thing):

“A mapping from names to entities has been built by analyzing Google Search logs, and, in particular, by analyzing the web queries people are using to get to the Wikipedia article for a given entity…

For instance, this table maps the name Jaguar to the entity Jaguar car with a probability of around 45 % and to the entity Jaguar animal with a probability of around 35%.”

In plain English, that means researchers discovered that 45% of people who search for Jaguar are looking for information about the automobile and 35% are looking for information about the animal.

That’s user intent that is segmented by popularity.
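To make the idea concrete, here is a minimal sketch of how a name-to-entity probability table could be derived from click logs. It is not the researchers' actual method. The `click_log` data, the `intent_distribution` function, and the "other" bucket are all hypothetical, with counts invented so the shares match the 45% and 35% figures in the quote.

```python
from collections import Counter, defaultdict

# Hypothetical click log: (query typed, entity whose page the searcher reached).
# The counts are invented so the shares match the 45% / 35% figures quoted above.
click_log = (
    [("jaguar", "Jaguar car")] * 45
    + [("jaguar", "Jaguar animal")] * 35
    + [("jaguar", "other")] * 20
)


def intent_distribution(log):
    """Map each query to its entities, ordered from most to least popular."""
    counts = defaultdict(Counter)
    for query, entity in log:
        counts[query.lower()][entity] += 1

    distribution = {}
    for query, entity_counts in counts.items():
        total = sum(entity_counts.values())
        distribution[query] = [
            (entity, clicks / total) for entity, clicks in entity_counts.most_common()
        ]
    return distribution


# Print each entity with its share of the "jaguar" clicks.
for entity, probability in intent_distribution(click_log)["jaguar"]:
    print(f"{entity}: {probability:.0%}")
```

The first entry in each list is the most popular intent, which is the one the article suggests Google tends to serve first.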

The takeaway here is that the top-ranked pages reveal the popular intent. If your content is about selling a product and the top-ranked pages are about how to make that product, then the popular user intent for that keyword may be how to make the product, not where to buy it.

That insight may mean that new content is needed to target the latent “how to make” question behind that search query.

Insight 2: Link Ecosystem Has Changed

Blogging was at an all-time high twelve years ago. Many people were going online to churn out content and link out to interesting websites.

Aside from the recipe niche, that is no longer the case and that may be affecting the link signal that Google uses for ranking purposes. This is super important to think about.

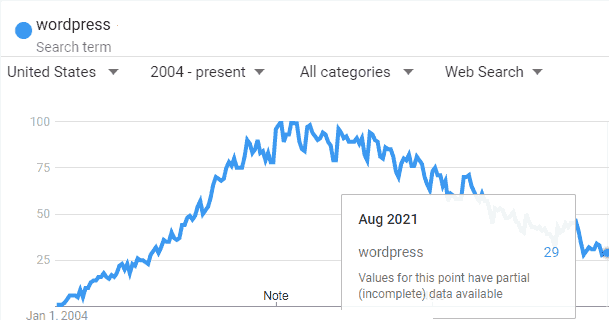

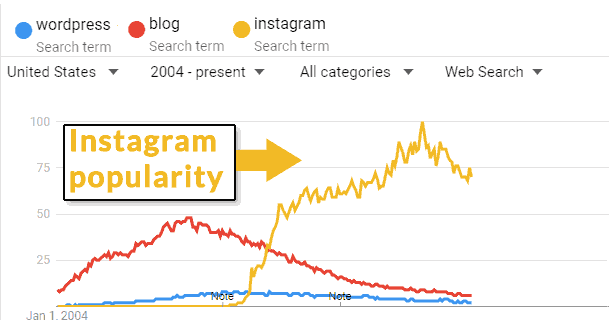

Fewer People Searching for WordPress

There are fewer and fewer people searching for WordPress every year. This indicates that WordPress is declining in popularity in the general population.

The search volume for the keyword “WordPress” has declined by 71% since September 2011.

Screenshot from Google Trends, September 2021
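A percentage decline like the one above is simple arithmetic on two Google Trends index values. The sketch below shows the calculation. The index values 100 and 29 are hypothetical, chosen only so the result matches the 71% figure, and `percent_decline` is an illustrative helper, not part of any Google tool.

```python
def percent_decline(start_value, end_value):
    """Return the drop from start_value to end_value as a percentage of start_value."""
    if start_value <= 0:
        raise ValueError("start_value must be positive")
    return (start_value - end_value) * 100 / start_value


# Hypothetical Trends index values (Trends scales interest from 0 to 100).
sept_2011 = 100
sept_2021 = 29

print(f"{percent_decline(sept_2011, sept_2021):.0f}% decline")
```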

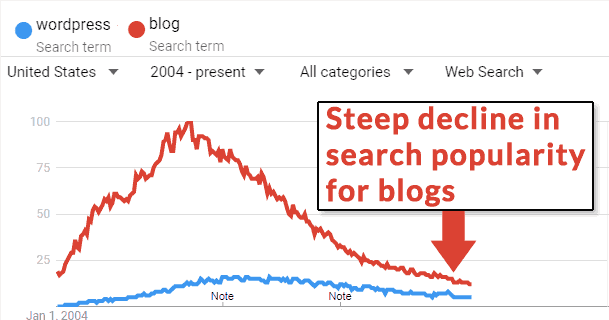

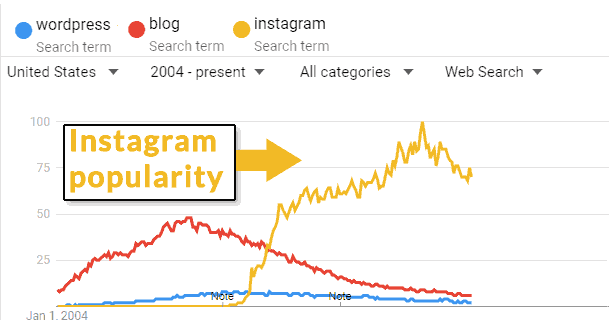

Fewer People Searching for Blogs

It’s not just WordPress usage that is going down. There are also fewer people searching for blogs, with a pattern that mirrors the decline in searches for WordPress.

Screenshot from Google Trends, September 2021

The Link Ecosystem in Decline

There may be many reasons why blogging has declined in popularity.

It could be social media, or it could be that the introduction of the iPhone and Android changed how the public interacts online.

Screenshot from Google Trends, September 2021

The Link Ecosystem Has Declined

One thing that is indisputable is that fewer people are blogging and the link ecosystem has suffered a strong decline. What caused it is beside the point.

Gary Illyes of Google confirmed that the motivation for turning the nofollow link attribute directive into a hint was so that Google can use those links for ranking purposes.

“Yes. They had been missing important data that links had, due to nofollow. They can provide better search results now that they consider rel=nofollowed links into consideration.”

It’s not unreasonable to think that Google began using nofollow links for ranking purposes because fewer natural links are being generated.

With fewer links being naturally generated, the decline is highly likely to affect how websites are ranked, and Google is likely to become increasingly selective about the links it uses.

Today, it is increasingly clear that link strategies that rely on blog links are more easily detected as spam since fewer people are creating blogs.

The takeaway here is that when creating a link building strategy, it’s important to be aware that the link ecosystem is in decline.

That means that freely given natural links are also in decline.

Link strategies must be more creative in terms of identifying who is left linking to websites and understanding why they are linking to websites.

Takeaway About Links

The time for being selective about getting links from so-called “authority” sites is long past.

Get what you can get as long as it is natural and freely given by any relevant website.

Insight 3: Link Drought Link Building Strategy

Because fewer natural links are being freely given, it’s time to rethink the race to obtain the right anchor text and massive amounts of links.

While a freely given link with relevant anchor text is useful, it’s rarely going to happen naturally.

So maybe it’s time to move away from traditional link building focused on anchor text and guest posting (which today means paid links).

Instead, it may be useful to cultivate links from news and magazines, relevant organizations, and some educational organizations.

Now more than ever it’s time to focus on outreach regardless of whether the outreach results in links. Just take the traffic.

Insight 4: Search Results Show What People Want to See

Ever walk down a supermarket cereal aisle and note how many sugar-laden kinds of cereal line the shelves? That’s user satisfaction in action. People expect to see sugar bomb cereals in their cereal aisle and supermarkets satisfy that user intent.

I often look at the Fruit Loops in the cereal aisle and think, “Who eats that stuff?” Apparently, a lot of people do. That’s why the box is on the supermarket shelf: people expect to see it there.

Google is doing the same thing as the supermarket. Google is showing the results that are most likely to satisfy users, just like that cereal aisle.

Sometimes, that means showing newbie 101 level answers. Sometimes that means showing something incredibly racist and sad.

For example, in 2009, Google had to apologize for showing an image of Michelle Obama that was altered to resemble a monkey every time someone searched on her name.

Why did Google show that result? Because most people searching on the name Michelle Obama were the kind of people who were satisfied seeing an image of her that resembled a monkey.

Click-through rates and other metrics of user satisfaction indicated that’s what people wanted to see. So Google’s user intent algorithm gave it to them.

Remember those sugar-laden cereals in the supermarket? That’s what those kinds of results are. It’s what I refer to as a “Fruit Loops algorithm,” a popularity-based algorithm that gives users what they expect to see.

Satisfying user intent is what Google means when they talk about showing relevant results. In the old days, it meant showing webpages that contained the keywords that a user typed. Now it means showing the webpage that most users expect to see.

Essentially, the search results pages are similar to the cereal aisle at your supermarket. That’s not a criticism, it’s an observation.

I think it’s useful to think of the search results as a supermarket aisle and to consider what kind of “cereal” is most popular. It may influence your content strategy in a positive way.

Insight 5: Expand the Range of Content

Google’s search results are biased to show the content that users expect to see.

This is why Google shows YouTube videos in the search results. It’s what people want to see.

It’s why Google shows featured snippets: they are what satisfies most people who search on mobile phones today.

It’s not entirely accurate to complain that Google’s search results favor YouTube videos. People find video content useful, particularly for the how-to type of content. That’s why Google shows it.

It’s a bias in the search results, yes. But it’s a reflection of the users’ bias, not Google’s bias.

So if the user has a bias that favors YouTube videos, what should your online strategy response be?

Write more content and build links to it? Or is the proper response to shift to the kind of content users want, in this case, video?

So if you see the search results are favoring a certain kind of content, pivot to producing that kind of content.

Learn to read the room in terms of what users want by paying close attention to what Google is ranking.

Insight 6: Drops in Ranking and NLP

Drops in ranking can sometimes be explained by a shift in how Google interprets what users mean when they search for something.

Google is increasingly using Natural Language Processing (NLP) algorithms, which influence what Google believes users want when they search for something.

For example, I witnessed a near rewrite of what kind of content ranked at the top in a certain niche. Informational content zipped to the top, while commercial content dropped to the bottom of the top 10.

There was nothing wrong with the commercial sites that dropped. What changed was how Google understood user intent.

Trying to “fix” the commercial sites by adding more links, disavowing links, or adding more keywords to the page is unlikely to help the rankings.

Fixing something that isn’t broken never helps.

That’s why sometimes, it’s a good idea to study the search results first when diagnosing why a site lost ranking.

There might not be anything to fix. But there may be changes worth considering.

If your site has dropped in rankings, review what Google is ranking.

If the kinds of sites still ranking feature different content (focus, topic, etc.), then the reason your site dropped may not be that something is wrong.

It may be about something that needs changing.
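One way to review what Google is ranking is to label the top 10 results by intent before and after the drop and compare the mix. The sketch below assumes you have labeled each result by hand. The labels, the two snapshots, and the function names are hypothetical, and the snapshots loosely mirror the informational-over-commercial shift described above.

```python
from collections import Counter


def intent_share(serp_labels):
    """Return the fraction of results carrying each intent label."""
    counts = Counter(serp_labels)
    total = len(serp_labels)
    return {label: count / total for label, count in counts.items()}


def dominant_intent(serp_labels):
    """Return the intent label that appears most often in the results."""
    return Counter(serp_labels).most_common(1)[0][0]


def intent_shifted(before, after):
    """True when the most common intent differs between two snapshots."""
    return dominant_intent(before) != dominant_intent(after)


# Hypothetical hand-labeled snapshots of the same top 10, position 1 first.
before = ["commercial"] * 7 + ["informational"] * 3
after = ["informational"] * 7 + ["commercial"] * 3

print("Before:", intent_share(before))
print("After: ", intent_share(after))
print("Dominant intent changed:", intent_shifted(before, after))
```

If the dominant intent changed and your page serves the old one, that points to content that needs changing, not a site that needs fixing.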

Insight 7: Click Data Helps Determine User Intent

This is why I use the phrase “Fruit Loops Algo” to refer to Google’s user-intent-focused algorithm. It’s not meant as a slur. It’s meant to illustrate the reality of how Google’s search engine works.

Many people want Fruit Loops and Captain Crunch breakfast cereals. The supermarkets respond by giving consumers what they want.

Search algorithms can operate in a similar manner.

A Better Definition of Relevance

What you’re looking at in the search results is not keyword relevance to search terms. It’s relevance to what most users expect to see.

Sometimes that is expressed in how many links a site receives.

But I’m fairly confident that one of the ways user intent is understood is by click log data.

Here’s a patent filed by Google that discusses using click data to understand user intent: Modifying Search Result Ranking Based on Implicit User Feedback.

“Internet search engines aim to identify documents or other items that are relevant to a user’s needs and to present the documents or items in a manner that is most useful to the user. Such activity often involves a fair amount of mind-reading—inferring from various clues what the user wants.

…user reactions to particular search results or search result lists may be gauged, so that results on which users often click will receive a higher ranking. The general assumption under such an approach is that searching users are often the best judges of relevance, so that if they select a particular search result, it is likely to be relevant, or at least more relevant than the presented alternatives.”
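The general idea in that excerpt, that results users click more often receive a higher ranking, can be illustrated with a small sketch. This is not the patent's formula or Google's implementation. The `Result` fields, the smoothing priors, the `click_weight` parameter, and the example URLs are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Result:
    url: str
    base_score: float  # hypothetical relevance score before click feedback
    impressions: int   # times the result was shown
    clicks: int        # times the result was clicked


def smoothed_ctr(clicks, impressions, prior_clicks=1.0, prior_impressions=10.0):
    """Click-through rate with a small prior so rarely shown results are not over-rewarded."""
    return (clicks + prior_clicks) / (impressions + prior_impressions)


def rerank(results, click_weight=1.0):
    """Order results by base score boosted in proportion to their smoothed CTR."""
    def adjusted_score(result):
        ctr = smoothed_ctr(result.clicks, result.impressions)
        return result.base_score * (1.0 + click_weight * ctr)

    return sorted(results, key=adjusted_score, reverse=True)


results = [
    Result("https://example.com/a", base_score=0.80, impressions=1000, clicks=50),
    Result("https://example.com/b", base_score=0.75, impressions=1000, clicks=400),
]

for result in rerank(results):
    print(result.url)
```

In this example, page b starts with the lower base score but moves ahead of page a because searchers select it far more often.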

Understanding user intent is so important that Google and other search engines have developed eye-tracking and viewport time technologies to measure where on a search result mobile users are lingering. This helps to measure user satisfaction and understand user intent for mobile users.

Is Google or the User Biased Toward Brands?

Some people believe that Google has a big brand bias. But that’s not it at all.

If you consider this in light of what we know about Google’s algorithm and how it tries to satisfy user intent, then you will understand that if Google shows a big brand it’s because that is what users expect to see.

If you want to change that situation then you must create a campaign to build awareness for your site so that users begin to expect to see your site at the top.

Yes, links play a role in that. But other factors such as what users type into search engines also play a role.

Someone once argued that Google should show results about the river when someone typed Amazon into Google. But that is unreasonable if what most people expect to see is Amazon the shopping site.

Again, Google is not matching keywords in that search query. Google is identifying the user intent and showing users what they want to see.

Key Takeaways

Understand the Search Results

The 10 links are not ordered by which page has the best on-page SEO or the most links. Those 10 links are ordered by user intent.

Write for User Intent

Understand what users want to accomplish and make that the focus of the content. Too often publishers write content focused on keywords, what some refer to as “semantically rich” content.

In 2015 I published an article about User Experience Marketing in which I proposed that focusing on user intent will put you in line with how Google ranks websites.

• What user intent is the content satisfying?

• What task or goal is the content helping the site visitor accomplish?

Understand Content Popularity

Content popularity is about writing content that can be understood by the widest audience possible. That means paying attention to the minimum grade level necessary for understanding your content.

If the grade level is high, this means your content may be too difficult for some users to understand.

I am not saying that Google prefers sites that a sixth-grader can understand. I am only stating that if you want to make your site easily understood by search engines and the most users, then paying attention to the difficulty of your content may be useful.
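If you want a rough number for the grade level of your content, one common option is the Flesch-Kincaid grade level formula. The article does not name a specific formula, so that choice is an assumption. The sketch below also uses a simple vowel-group heuristic to count syllables, so treat the scores as approximate and most useful for comparing one draft against another.

```python
import re


def count_syllables(word):
    """Rough heuristic: count vowel groups and drop a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text):
    """Estimate the U.S. school grade level needed to read the text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")

    syllables = sum(count_syllables(word) for word in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59


simple = "The cat sat on the mat. The dog ran to the park."
dense = (
    "Comprehensive algorithmic interpretation necessitates "
    "considerable computational sophistication."
)

print(f"Simple text grade: {flesch_kincaid_grade(simple):.1f}")
print(f"Dense text grade:  {flesch_kincaid_grade(dense):.1f}")
```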

Google is not a keyword-matching search engine. Google is arguably a User Intent Matching Engine. Knowing and understanding this will improve everyone’s SEO.

There is profound insight in understanding this and adapting your search marketing strategy to it.

Use What Is Known About Google’s Ranking Algorithms

Google publishes an astonishing amount of information about the algorithms used to rank websites. There are also many research papers describing technology that Google has not confirmed or denied using.

One can level up their SEO and marketing success by knowing what algorithms Google has admitted to using and what kinds of algorithms have been researched.

Featured image: Master1305/Shutterstock
