7 Ways SEMs Can Leverage AI Tools

With the arrival of widely available generative AI like ChatGPT and Bard, there is a whole slew of additional things PPC account managers can now automate.

Whereas most of the previous waves of automation helped with math in the form of bidding or pattern discovery in the form of targeting, the latest wave is focused on generating text, which can help with writing ads.

But generative AI can do much more than simply suggest a few additional headlines for responsive search ads (RSAs), so here I’ll share examples of how to use GPT to set up and optimize Google Ads.

How To Access Generative AI

The most widely discussed way of trying GPT is through ChatGPT, which is accessible at chat.openai.com.

But while this may be the quickest way to try generative AI, you’ll probably want a more scalable solution once you start using it to build and optimize PPC campaigns. This is where add-ons for spreadsheets come in handy.

One of my favorites is GPT for Sheets and Docs, an add-on for Google Sheets and Google Docs.

After you install it, you can add GPT formulas to cells in Google Sheets by typing formulas in the form of ‘=GPT(A2,B2)’.

So rather than working with a single prompt at a time in a chat interface, you can run the same prompt at scale on many cells.
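If you prefer a script to a spreadsheet, the same idea can be sketched in Python. This is only an illustration: `run_prompt_on_rows` and `fake_complete` are hypothetical names, the way the prompt and the cell value are joined is my assumption, and the stand-in function would need to be swapped for a real model call.

```python
from typing import Callable, List


def run_prompt_on_rows(prompt: str, inputs: List[str], complete: Callable[[str], str]) -> List[str]:
    """Mimic filling =GPT(A2,B2) down a column: same prompt, one input per row."""
    responses = []
    for value in inputs:
        # Assumption: the prompt and the cell value are simply joined with a space.
        full_prompt = f"{prompt} {value}".strip()
        responses.append(complete(full_prompt))
    return responses


def fake_complete(full_prompt: str) -> str:
    """Hypothetical stand-in for a real model call; it only reports the prompt length."""
    return f"[{len(full_prompt)} chars sent]"


rows = ["amazon ppc tools", "amazon ads automation"]
for answer in run_prompt_on_rows("find 5 keywords similar to these:", rows, fake_complete):
    print(answer)
```

Passing the model call in as a function keeps the loop easy to test before any API key is involved.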

You’ll need an API key to use this add-on, and you can get one from OpenAI.

Now we’re ready to start using GPT to help us with PPC work.

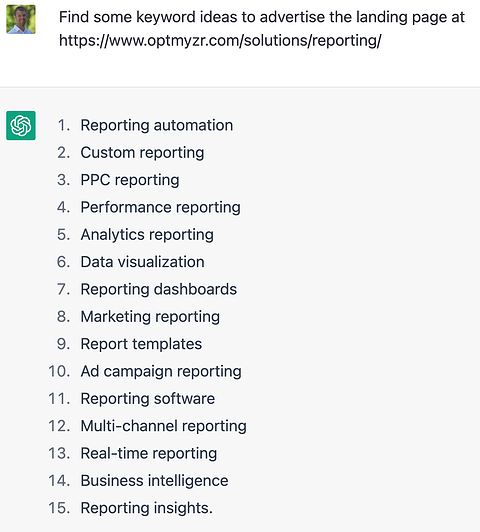

1. How To Use ChatGPT For Keyword Discovery

While Google’s Keyword Planner is a great tool for finding new keywords, ChatGPT provides a good alternative. You can prompt it with the landing page you want keywords for and get a few suggestions.

Screenshot from ChatGPT, April 2023

To make the keyword suggestions a bit more relevant, you can ask ChatGPT to include a particular term in each one:

Screenshot from ChatGPT, April 2023

Once ad groups are up and running, you might want to find some additional related keywords to target.

So rather than starting with a landing page URL, we’ll prompt ChatGPT to find keywords similar to existing ones.

In this example, I’ll show how that was done in Google Sheets.

| Prompt | Inputs | GPT Response |
| --- | --- | --- |
| find 5 keywords similar to these: | "amazon ppc tools", "optimize amazon ppc", "amazon advertising software", "amazon ads automation", "amazon ppc automation", "amazon ppc software", "amazon ppc management tools", "amazon ppc automation software", "amazon ads software", "amazon sponsored ads management", "amazon ppc management software" | 1. Amazon PPC Optimization 2. Amazon Advertising Strategies 3. Amazon Ads Optimization 4. Amazon PPC Strategies 5. Amazon Sponsored Ads Automation |

The first column contains the prompt, the second column the list of existing keywords, and the third column the formula that gets the response from GPT: =GPT(A2,B2).

The beauty of using GPT in a sheet is that it’s quite easy to just change the keywords in the second column while using the same prompt and formula to generate the ChatGPT response.
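The response comes back as one numbered string, so it usually needs a little cleanup before the keywords can go into an account. Here is a minimal sketch of that step, assuming the response always uses the "1. ... 2. ..." pattern shown in the table. The helper names are hypothetical, and a keyword that itself contains something like "10. " would be split incorrectly.

```python
import re
from typing import List


def parse_numbered_list(response: str) -> List[str]:
    """Split text like '1. Foo 2. Bar' into ['Foo', 'Bar']."""
    # Split on item markers such as "1." or "12." at the start or after whitespace.
    parts = re.split(r"(?:^|\s+)\d+\.\s+", response.strip())
    return [part.strip() for part in parts if part.strip()]


def drop_existing(suggestions: List[str], existing: List[str]) -> List[str]:
    """Remove suggestions that already exist as keywords, ignoring case."""
    known = {keyword.lower() for keyword in existing}
    return [s for s in suggestions if s.lower() not in known]


response = (
    "1. Amazon PPC Optimization 2. Amazon Advertising Strategies "
    "3. Amazon Ads Optimization 4. Amazon PPC Strategies "
    "5. Amazon Sponsored Ads Automation"
)
existing = ["amazon ppc tools", "optimize amazon ppc", "amazon ads automation"]
for keyword in drop_existing(parse_numbered_list(response), existing):
    print(keyword.lower())
```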

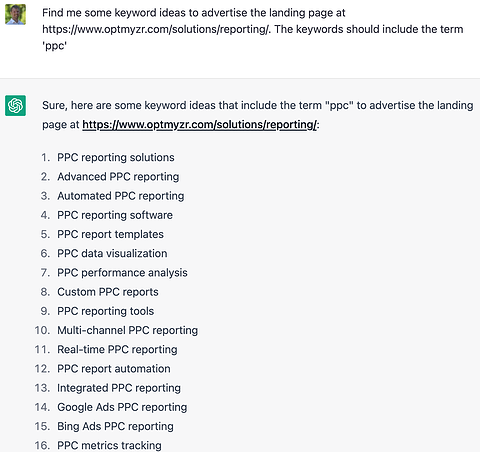

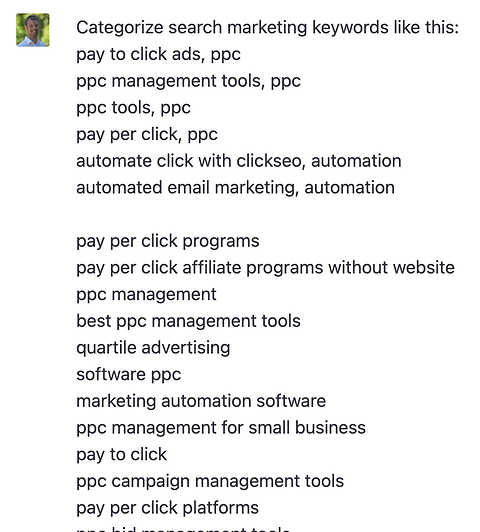

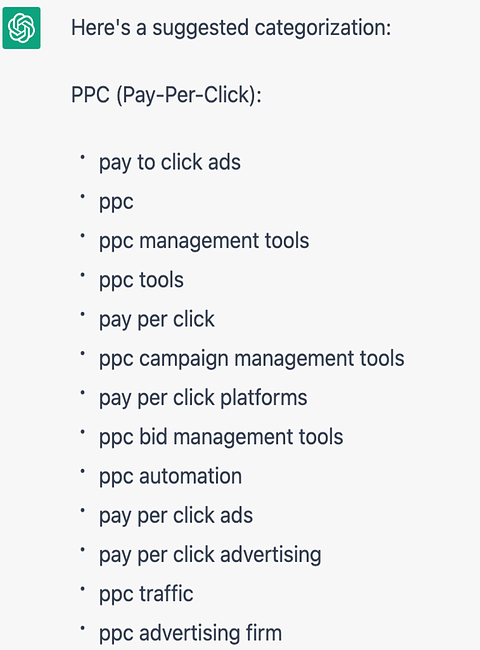

2. How To Use GPT For Keyword Classification

But what happens when a list of suggested keywords, whether from ChatGPT or another tool, gets too long?

We all know that Google rewards relevance with a higher Quality Score. So we should split the list of keywords into smaller groups of related terms.

Turns out ChatGPT is quite good at grouping words by relevance.

In my first attempt, I tried to help ChatGPT understand what might be a good categorization, so I added a category name after the first few keywords in my prompt. But I found there was no need to explain categorization, and issuing the same prompt without examples yielded equally good results.

Screenshot from ChatGPT, April 2023

In this prompt for ChatGPT, we provided examples of how to categorize keywords:

Screenshot from ChatGPT, April 2023

In this prompt, we didn’t provide examples for classification, but the quality of the response remained the same:

Screenshot from ChatGPT, April 2023

This output could be more useful if presented in a table, so read on to the tips and tricks section of this post to learn how to ask ChatGPT for that.

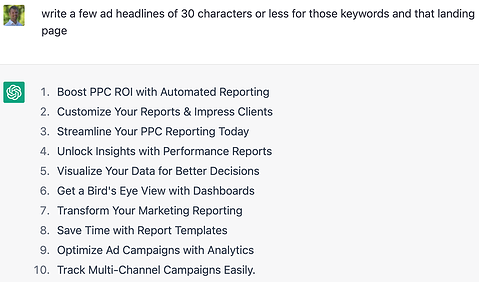

3. How To Use GPT To Create Ads

With a solid set of grouped keywords and an associated landing page from my site, all I’m really missing to set up ad groups are headlines and descriptions for the RSAs.

So I asked ChatGPT for help with writing some ads:

Screenshot from ChatGPT, April 2023

ChatGPT ad headline suggestions can far exceed the requested character limits.

The response to this prompt illustrates a known limitation of ChatGPT: it’s not good at math, and the headlines it suggested tended to be too long.

ChatGPT isn’t good at math because it works by predicting what text would logically appear next in a sequence. It might know that “1+1=” is usually followed by “2,” but it doesn’t do the math to know this. It looks for common sequences.
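Because the model can’t be relied on to count, it is safer to check lengths yourself. The sketch below sorts suggestions against the RSA limits of 30 characters for headlines and 90 for descriptions. The sample headlines are hypothetical, and `len()` is a simplification that assumes plain Latin text.

```python
from typing import List, Tuple

HEADLINE_LIMIT = 30      # Google Ads RSA headline character limit
DESCRIPTION_LIMIT = 90   # Google Ads RSA description character limit


def split_by_length(texts: List[str], limit: int) -> Tuple[List[str], List[str]]:
    """Return (texts that fit, texts that are too long) for a character limit."""
    fits = [t for t in texts if len(t) <= limit]
    too_long = [t for t in texts if len(t) > limit]
    return fits, too_long


# Hypothetical headlines, not taken from the screenshots.
suggestions = [
    "Automate Your Amazon PPC",
    "Powerful Amazon PPC Management Software",
]
fits, too_long = split_by_length(suggestions, HEADLINE_LIMIT)
print("OK:", fits)
print("Too long:", [(t, len(t)) for t in too_long])
```

Anything in the second list can be sent back to the model with a request to shorten it, or trimmed by hand.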

This is a known issue and seems to be getting addressed. In my most recent experiments this week, ChatGPT is now writing strings that are shorter and more likely to fit into the limited ad space provided by Google for headlines:

Screenshot from ChatGPT, April 2023

When looking for additional ad text variations, providing the current assets helps ChatGPT do better.

This is because GPT is really good at completing text – so providing examples in the prompt leads to better suggestions, because they will follow the same pattern as the examples.
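One way to put this into practice is to assemble the prompt from the assets already in the ad. The wording of the prompt and the `build_variation_prompt` helper are my own assumptions, shown only to illustrate the pattern of leading with examples.

```python
from typing import List


def build_variation_prompt(existing_headlines: List[str], count: int = 5, limit: int = 30) -> str:
    """Build a prompt that lists current headlines as examples to imitate."""
    examples = "\n".join(f"- {headline}" for headline in existing_headlines)
    return (
        f"Here are the current ad headlines:\n{examples}\n\n"
        f"Write {count} more headlines in the same style, "
        f"each {limit} characters or fewer."
    )


# Hypothetical existing assets.
print(build_variation_prompt(["Automate Your Amazon PPC", "Save Time On Amazon Ads"]))
```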

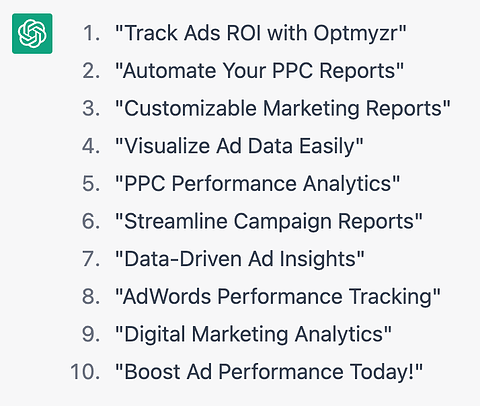

4. How To Use GPT For Search Terms Optimization

Once the ad groups are running, they’ll start to collect data, like what search terms the ads showed for.

Now we can use this data for optimization. The problem with search terms is that there can be a lot of them, and manually working your way through them can be tedious and time-consuming.

So I asked ChatGPT if it could take all my search terms for an ad group and rank them by relevance.

Since it already understands the concept of relevance, I didn’t need to explain, and got the following result:

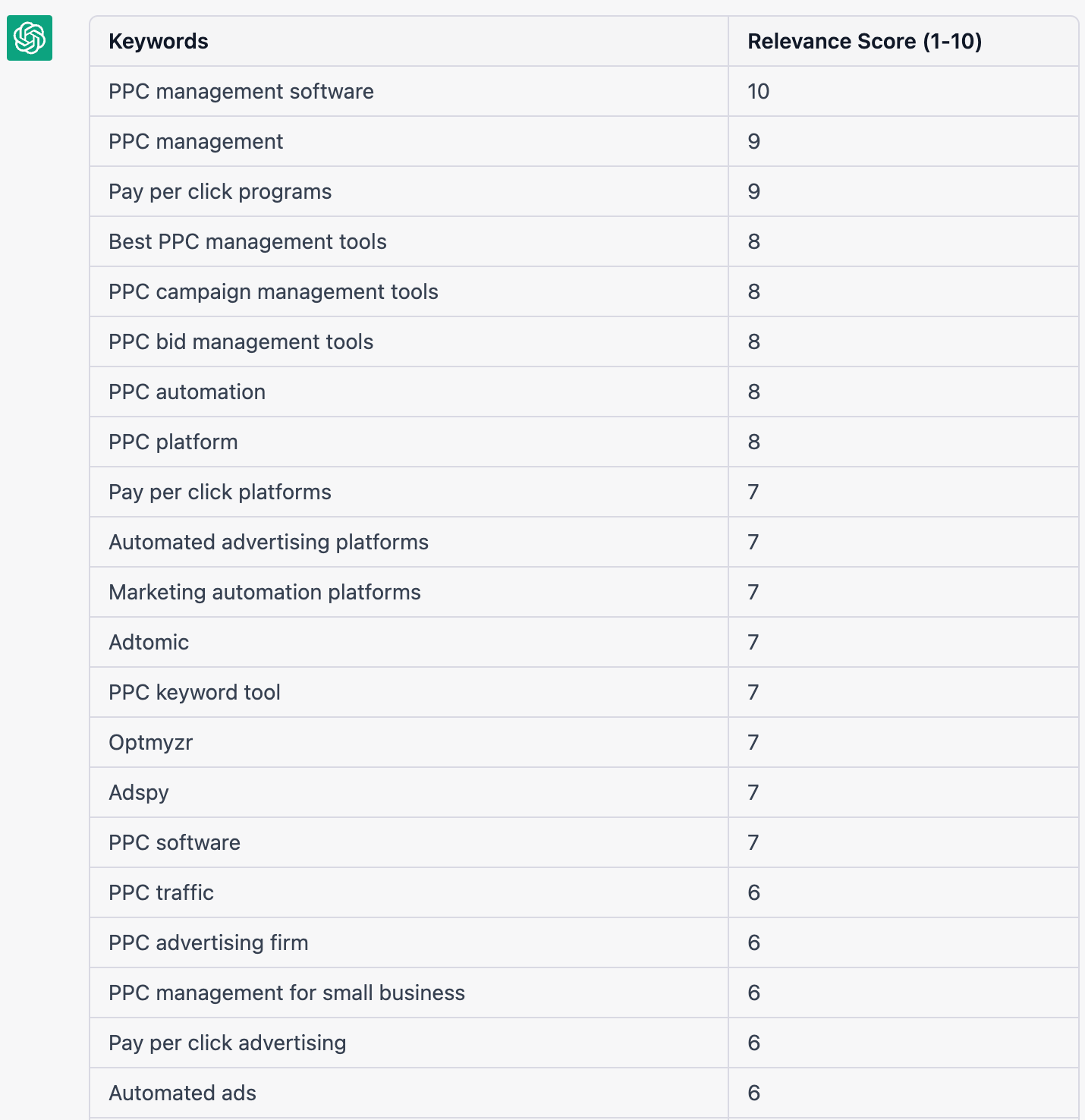

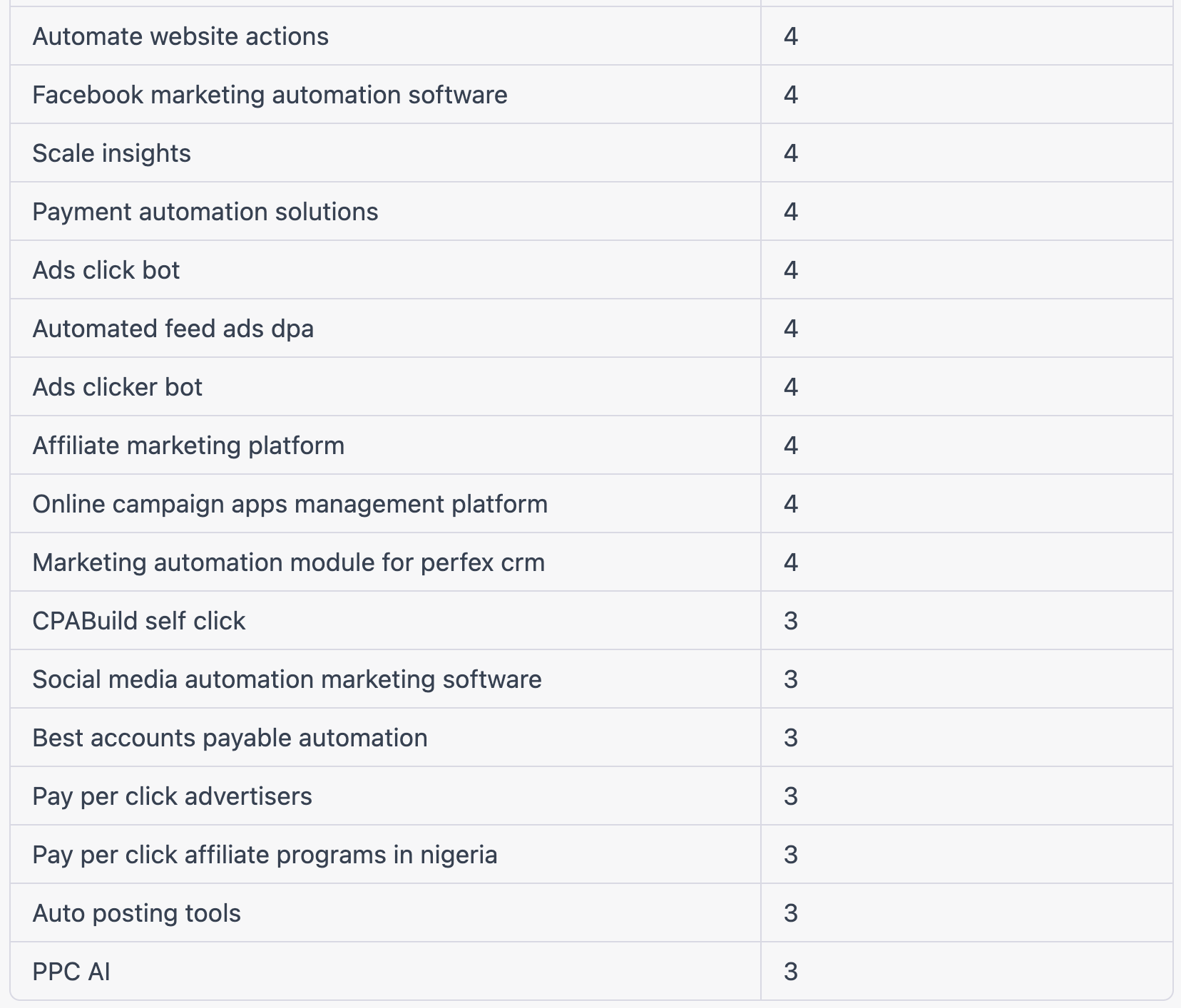

Screenshot from ChatGPT, April 2023

Some search terms ChatGPT considers more relevant to a company selling PPC management software:

Screenshot from ChatGPT, April 2023

Some search terms ChatGPT considers less relevant to a company selling PPC management software:

Screenshot from ChatGPT, April 2023

I found this extremely helpful when researching negative keyword ideas. I could focus my attention near the bottom of the relevance list, where the terms were indeed more likely to be less relevant to what the landing page offered.

This is by no means a perfect solution, but it helps prioritize things for busy marketers.
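Focusing on the bottom of the ranked list is easy to script once the terms are in order. This sketch assumes the list is already sorted from most to least relevant. The 20% cutoff and the sample terms are arbitrary choices of mine, and the output is a review list, not a set of negatives to add blindly.

```python
from typing import List


def negative_keyword_candidates(ranked_terms: List[str], bottom_fraction: float = 0.2) -> List[str]:
    """Return the least relevant slice of a list ordered most-to-least relevant."""
    if not ranked_terms:
        return []
    # Always review at least one term, even for very short lists.
    count = max(1, int(len(ranked_terms) * bottom_fraction))
    return ranked_terms[-count:]


# Hypothetical ranked search terms, most relevant first.
ranked = [
    "ppc management software",
    "google ads automation tool",
    "ppc reporting tool",
    "what is ppc",
    "free marketing courses",
]
print(negative_keyword_candidates(ranked))
```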

5. How To Use GPT For Shopping Feed Optimization

So far, I have covered a fairly traditional example of keyword advertising.

But could GPT also be used for shopping ads that are based on a product feed?

Instead of optimizing keywords, shopping advertisers need to optimize the feed, and that often means filling in missing data or coming up with new suggestions for product titles and descriptions.

GPT understands semantics and relationships and knows that “Nespresso” is a brand of kitchen appliances.

With no need for you to define brands and product categories, you can give GPT a product detail page URL in a Google Sheet and ask it to fill in a few blanks as I did below:

| Prompt | Inputs | GPT Response |
| --- | --- | --- |
| what’s the brand of the product on the page | https://www.qvc.com/Nespresso-Vertuo-Next-Premium-Coffee-%26-Espresso-Maker-wFrother.product.K82175.html?sc=TSV&TZ=EST | Nespresso |
| what’s the product on the page | https://www.qvc.com/Nespresso-Vertuo-Next-Premium-Coffee-%26-Espresso-Maker-wFrother.product.K82175.html?sc=TSV&TZ=EST | Nespresso Vertuo Next Premium Coffee & Espresso Maker with Frother |
| what’s the category of the product on the page | https://www.qvc.com/Nespresso-Vertuo-Next-Premium-Coffee-%26-Espresso-Maker-wFrother.product.K82175.html?sc=TSV&TZ=EST | Kitchen Appliances |

As you can see, with just a landing page as input and some simple prompts, GPT is very capable of explaining what the product on the page is.

Advertisers can deploy GPT on their Merchant Center feed data to optimize their PPC ads.
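For a whole feed, the same three prompts can be applied only where a value is missing. The sketch below is a rough outline under several assumptions: feed rows are dictionaries with hypothetical `link`, `brand`, `title`, and `category` keys, and `complete` is a placeholder for whatever model call you use.

```python
from typing import Callable, Dict, List

# Prompts taken from the sheet; the attribute names on the left are assumptions.
PROMPTS = {
    "brand": "what's the brand of the product on the page",
    "title": "what's the product on the page",
    "category": "what's the category of the product on the page",
}


def fill_missing_attributes(
    rows: List[Dict[str, str]], complete: Callable[[str], str]
) -> List[Dict[str, str]]:
    """Ask the model only for attributes that are blank in each feed row."""
    filled = []
    for row in rows:
        updated = dict(row)  # copy so the original feed row is untouched
        for attribute, prompt in PROMPTS.items():
            if not updated.get(attribute):
                updated[attribute] = complete(f"{prompt} {row['link']}")
        filled.append(updated)
    return filled


# Hypothetical feed row and a stand-in for the model call.
feed = [{"link": "https://example.com/product-1", "brand": "Nespresso", "title": ""}]
print(fill_missing_attributes(feed, lambda prompt: "(model answer)"))
```

Existing values are left alone, so the model is only used to fill gaps and its answers can be reviewed before they go into the feed.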

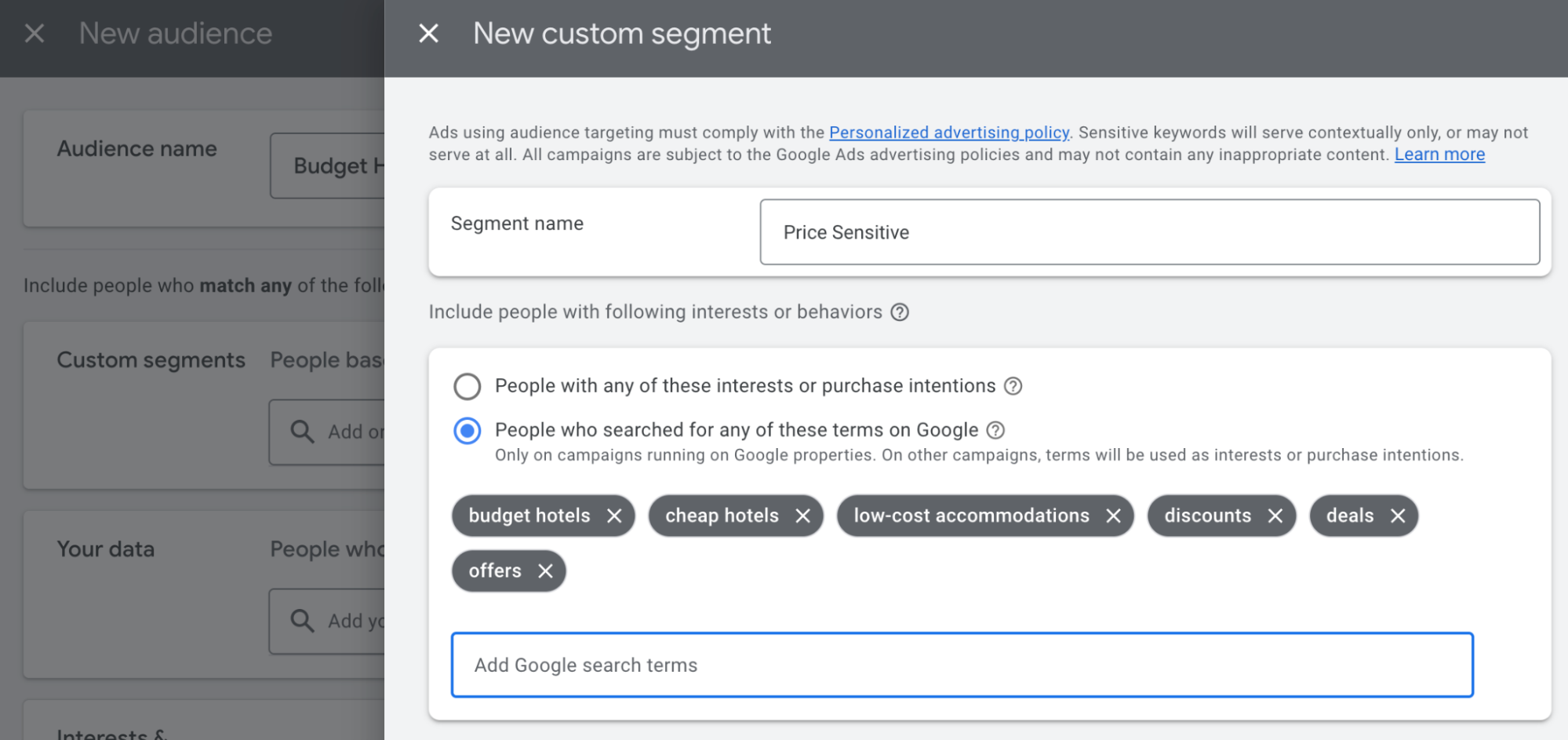

6. How To Use GPT For Building PPC Audiences

Another important PPC targeting lever is audiences. For example, Performance Max campaigns and search campaigns can be optimized by attaching audiences to them.

So next, I tried using GPT for developing audiences. Advertisers should focus on their own first-party audience data, but there are ways GPT can be used when first-party data is not available.

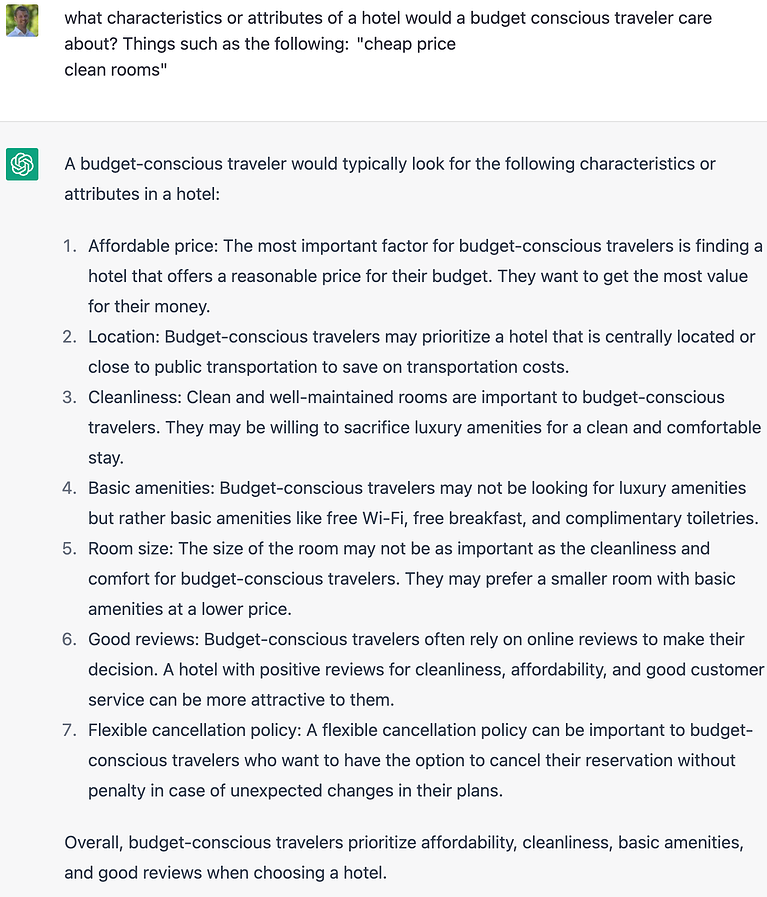

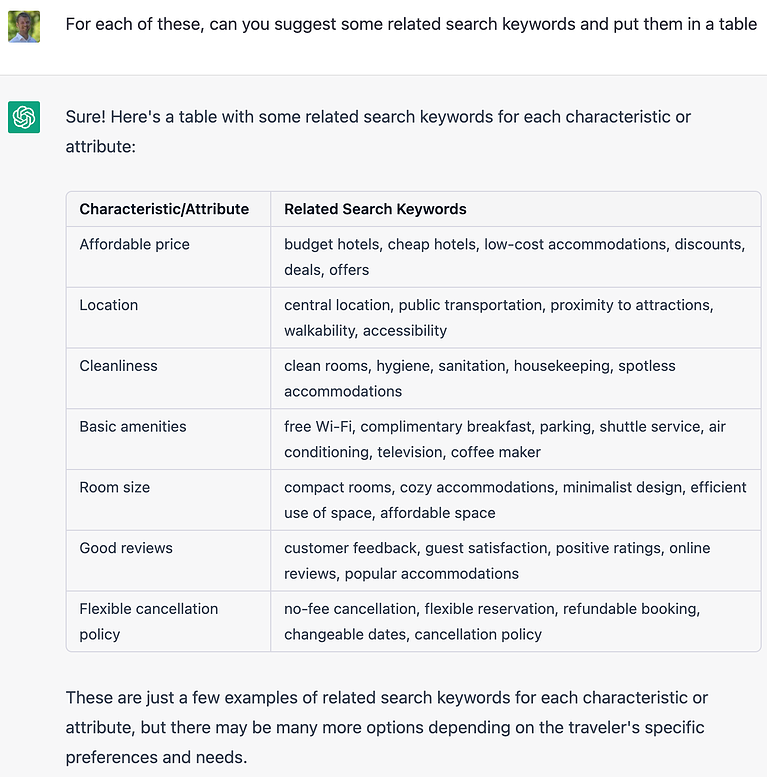

Here I used ChatGPT as I might use a research assistant.

I asked it what things a certain type of consumer might care about, and then I asked it to suggest some keywords those consumers might use if they were searching for something.

The keywords suggested by ChatGPT can then be used to create a custom audience segment in Google Ads.

Screenshot from ChatGPT, April 2023

Use ChatGPT as a research assistant to generate ideas about qualities of your business a prospect might care about:

Screenshot from ChatGPT, April 2023

Using ChatGPT, follow up those qualities with a request for related keywords a prospect might search for.

Screenshot from ChatGPT, April 2023

Use the suggested keywords to create a custom audience segment in Google Ads.

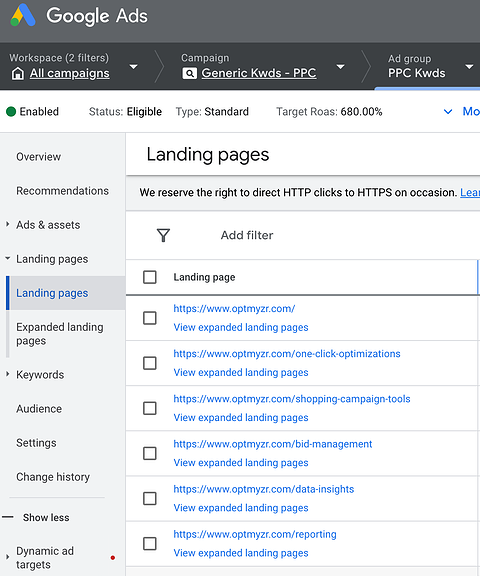

7. How To Use ChatGPT To Optimize Landing Page Relevance

As more campaigns become automated by ad engines, one thing advertisers seem to struggle with is understanding why ads show up for seemingly irrelevant search terms.

Dynamic search ads (DSAs) and Performance Max campaigns use a site’s webpages to determine relevant search terms that should trigger an ad.

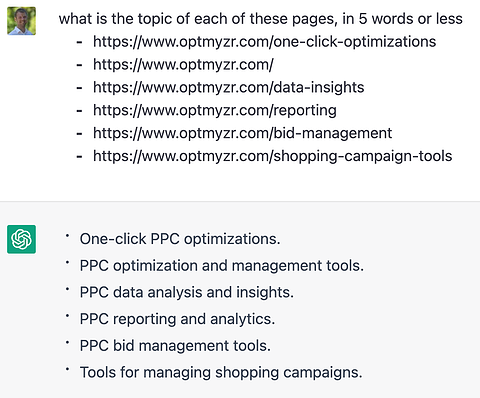

So I asked ChatGPT to tell me what it thought the pages on my site were about. If some of the answers seemed too loosely related to what I’m selling, this could give me ideas for how to optimize those pages with more text related to our core business.

Use the Google Ads landing page report to grab landing pages to analyze with GPT:

Screenshot from Google Ads, April 2023

GPT shows the topic it believes each of the landing pages is about:

Screenshot from ChatGPT, April 2023

Here’s an example where ChatGPT said my landing page was about a topic that seems quite broad:

- Prompt: what is the topic of this page, in 5 words or less?

- https://www.optmyzr.com/solutions/integrations/

- Response: Integrations Solutions

With that information, I can tweak my landing page to be more PPC focused, or I could exclude that page from automated campaigns, for example, by using the URL exclusion feature of Performance Max campaigns.
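With many landing pages, a quick script can flag the topics that never mention the core business. This is a crude sketch: the list of core terms is my assumption, the second page is hypothetical, and plain substring matching will produce false positives (for example, "ads" inside "downloads").

```python
from typing import Dict, List


def pages_missing_core_terms(page_topics: Dict[str, str], core_terms: List[str]) -> List[str]:
    """Return URLs whose model-reported topic mentions none of the core terms."""
    flagged = []
    for url, topic in page_topics.items():
        topic_lower = topic.lower()
        if not any(term.lower() in topic_lower for term in core_terms):
            flagged.append(url)
    return flagged


topics = {
    "https://www.optmyzr.com/solutions/integrations/": "Integrations Solutions",
    "https://example.com/ppc-software/": "PPC Management Software",  # hypothetical page
}
print(pages_missing_core_terms(topics, ["ppc", "ads"]))
```

The flagged URLs are candidates for either a copy rewrite or a URL exclusion.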

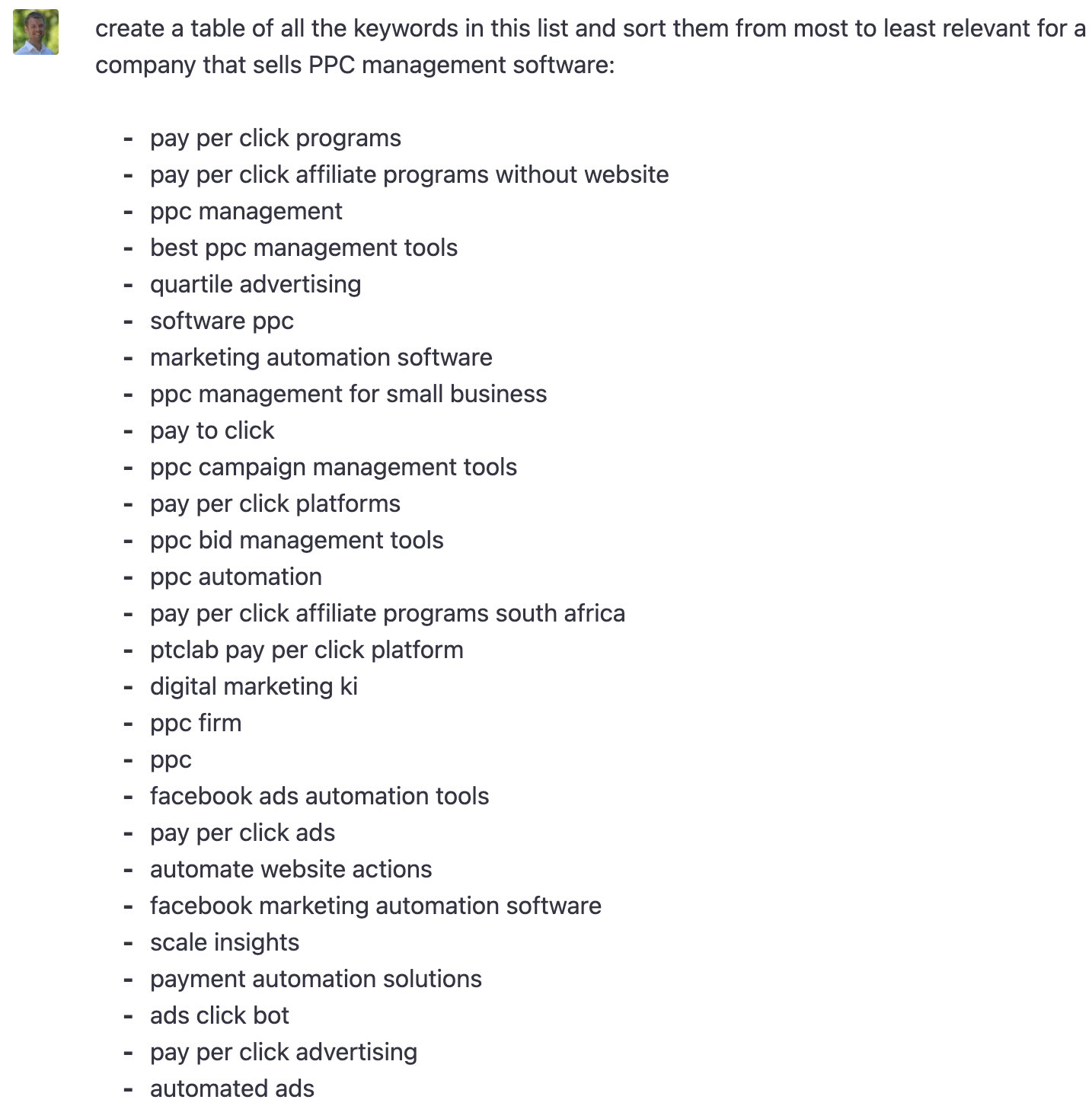

ChatGPT Tips And Tricks

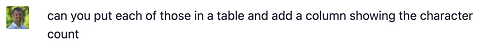

As you may have noticed, GPT’s responses take a variety of forms, from paragraphs to bullet points, code, and tabular data. You can request the type of response you want rather than leave it to chance.

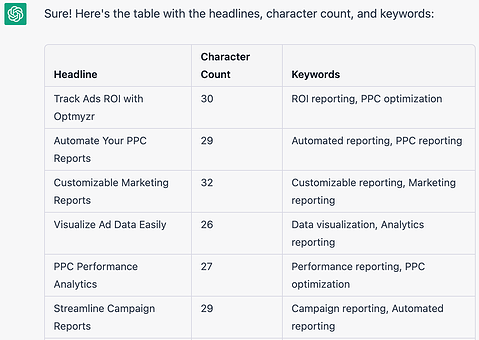

For example, after I asked ChatGPT for headline suggestions and got a list of headlines, I followed up with this prompt:

Screenshot from ChatGPT, April 2023

And it responded with this:

Screenshot from ChatGPT, April 2023

GPT responses can be requested in a table format, making it easier to work with the output.

Note, again, that it’s still bad at math, so the numbers can’t be trusted (and this was also a problem in tests of GPT-4) – but it is convenient that you can so easily work with data in tables.
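Since the numbers in a generated table can’t be trusted, it is worth recomputing them. The sketch below assumes the table pairs each headline with a character count in a two-column markdown layout. The table itself is hypothetical, with one count deliberately wrong.

```python
from typing import List, Tuple


def recount_table(markdown_table: str) -> List[Tuple[str, int, int]]:
    """Return (headline, claimed count, actual count) for each data row of a two-column table."""
    results = []
    for line in markdown_table.strip().splitlines():
        cells = [cell.strip() for cell in line.strip().strip("|").split("|")]
        # Skip the header, the separator row, and anything without a numeric count.
        if len(cells) != 2 or not cells[1].isdigit():
            continue
        headline, claimed = cells[0], int(cells[1])
        results.append((headline, claimed, len(headline)))
    return results


# Hypothetical model output; the second count is deliberately wrong.
table = """
| Headline | Characters |
| --- | --- |
| Automate Your Amazon PPC | 24 |
| Save Time On Amazon Ads | 20 |
"""
for headline, claimed, actual in recount_table(table):
    flag = "" if claimed == actual else "  <- mismatch"
    print(f"{headline}: claimed {claimed}, actual {actual}{flag}")
```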

Conclusion

Generative AI tools like GPT and Bard are opening up a slew of new automation opportunities for PPC advertisers.

Try some of these examples on your own accounts, but be vigilant and monitor what the machines are doing for correctness and applicability to your business goals.

Human oversight through techniques like automation layering is a form of PPC insurance that becomes more relevant every day, as AI like GPT shifts what it means to be a PPC marketer.

Featured Image: Cast Of Thousands/Shutterstock