A Guide To Linkable Assets For Effective Link Building

Not all content is created equal, and not every blog post earns external links.

Getting inbound links for every blog article is unbelievably time-consuming due to the amount of outreach required to secure links to just one article or page.

Instead of building a link building program solely around outreach, you can create a linkable content asset that earns links on its own and improves your outreach response rates.

This article will help you get started creating linkable content so you can build a natural link profile in less time.

Let’s start by defining a linkable asset.

What Is A Linkable Asset?

Linkable content assets refer to high-quality content pieces created to attract backlinks from other websites, either via promotional efforts or link building outreach strategies.

These assets can be presented in various formats, such as infographics, written articles, videos, online tools, or downloadable documents.

An optimal linkable asset is a thorough, long-form resource that surpasses top-ranking content in the same niche. Your content should provide value, be unique, and cater to the interests of your target audience.

To send traffic to your website efficiently, links must direct users to a specific webpage. Therefore, the asset should either be an HTML page or be embedded within one.

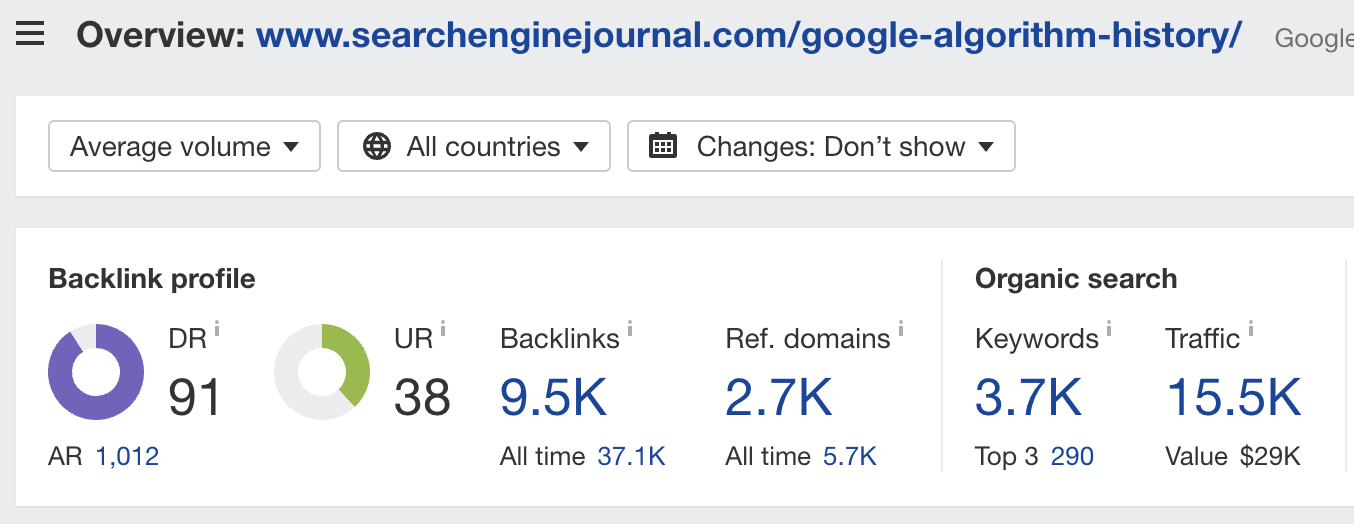

There is a wide variety of linkable assets. One example is the History of Google Algorithm Updates, published by Search Engine Journal (SEJ).

Based on data from Ahrefs, this page has attracted nearly 3,000 referring domains.

Screenshot from Ahrefs, May 2023

Well-established brands tend to receive more links compared to new or lesser-known sites.

SEJ has built a solid reputation for producing high-quality SEO content, and its trusted name in the field has played a significant role in the success of this particular asset.

The efficacy of a linkable asset often depends on a site’s demonstrated experience, expertise, authoritativeness, and trustworthiness (E-E-A-T) within its field.

Enhancing or establishing E-E-A-T can increase the appeal of your content to publishers. Now, let’s briefly delve into the concept of E-E-A-T.

Applications Of E-E-A-T In Linkable Content

Google’s Search Quality Rater Guidelines introduced the concept of E-E-A-T, which human quality raters use to assess content quality.

E-E-A-T has become increasingly significant in SEO, content marketing, and link building. To optimize a linkable asset, ensure it demonstrates each of these elements:

- Expertise: Showcase the author’s knowledge and understanding of the topic through education, experience, or publications.

- Experience: Highlight the author’s firsthand knowledge of the subject via personal experience, interviews, or research.

- Authority: Establish the author’s reputation and credibility through credentials, affiliations, or the quality of their work.

- Trustworthiness: Demonstrate the author’s honesty and integrity through transparency, neutrality, or avoiding conflicts of interest.

While the Quality Rater Guidelines and E-E-A-T don’t reveal the algorithm’s workings, Google claims that raters who follow these guidelines achieve similar results. Optimizing an article for E-E-A-T can improve its Google ranking and shareability.

Pro tip: Address questions real people ask in your niche to exhibit expertise and experience.

The emergence of generative AI, such as ChatGPT, has raised questions about demonstrating E-E-A-T in AI-generated content. Next, let’s explore generative AI in content creation.

Harnessing Generative AI For Content Ideation

Generative AI is revolutionizing how content marketers conduct research, develop ideas and create content. Tools like ChatGPT, Google Bard, and Bing Chat can significantly reduce research time and provide compelling content topics.

As AI content generation is still emerging, search engine and publisher policies on AI-produced content continue to evolve.

Incorporating input from subject matter experts (SMEs), personal experiences, case studies, and in-depth topic exploration can ensure that AI-generated content remains authentic and avoids plagiarism.

How To Get Content Ideas With ChatGPT

Select a topic or niche, then prompt ChatGPT with “Act as HubSpot’s blog idea generator and provide a list of 20 unique long-form content ideas for [topic/keyword].”

Choose relevant, unique, and searchable ideas, and refine them by comparing them to top-ranking content on Google.

How To Create An Outline With ChatGPT

Prompt ChatGPT with “Act as [industry blog or person] and create a comprehensive outline for an article ‘A Guide To Linkable Assets For Effective Link Building’, using contemporary content marketing techniques.”

An editor will then refine the AI-generated outline.

Use Bard to identify questions to address in the content with the prompt “What are common questions around ‘[topic|keyword]’.”

Update the outline to include the questions and answers from Bard, and modify the ChatGPT-generated sections from an experienced expert’s perspective, deciding which sections to include or remove.

Pro tip: Use tools like ZeroGPT or ChatGPT AI Classifier to ensure your content doesn’t seem too robotic.
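The prompts quoted in this section lend themselves to simple templating when you want to reuse them across many topics. The following is a minimal Python sketch, not a prescribed workflow: the prompt wording comes from the examples above, while the function name `build_prompts` and its parameters are hypothetical. It only builds the prompt strings; pasting them into ChatGPT or Bard is left to you.

```python
# Prompt wording is taken from the article's examples.
# The constant names, function name, and parameters are hypothetical.
IDEA_PROMPT = (
    "Act as HubSpot's blog idea generator and provide a list of 20 unique "
    "long-form content ideas for {topic}."
)
OUTLINE_PROMPT = (
    "Act as {persona} and create a comprehensive outline for an article "
    "'{title}', using contemporary content marketing techniques."
)
QUESTIONS_PROMPT = "What are common questions around '{topic}'."


def build_prompts(topic: str, persona: str, title: str) -> dict[str, str]:
    """Fill the three prompt templates for one topic."""
    return {
        "ideas": IDEA_PROMPT.format(topic=topic),
        "outline": OUTLINE_PROMPT.format(persona=persona, title=title),
        "questions": QUESTIONS_PROMPT.format(topic=topic),
    }


prompts = build_prompts(
    topic="link building",
    persona="an SEO industry blog",
    title="A Guide To Linkable Assets For Effective Link Building",
)
for name, prompt in prompts.items():
    print(f"{name}: {prompt}")
```

Keeping the templates as named constants makes it easy for an editor to adjust the wording in one place before generating prompts for a new batch of topics.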

When To Use ChatGPT Vs. Other Generative AI

Bing Chat & Google Bard can access their respective search results and current content, while ChatGPT receives monthly updates and lacks internet access.

Utilize Bing Chat & Bard for research and rely on ChatGPT for titles, outlines, and process lists.

Generative AI has the potential to enhance topic selection and outlines for linkable assets and even address some downsides of linkable content assets.

Next, let’s examine the pros and cons of linkable content assets.

Pros And Cons Of Linkable Assets

While high-quality linkable assets can generate substantial links and traffic, this link-building strategy has drawbacks.

Pros

- Scalable link acquisition: Using informational content allows link opportunities across numerous topical areas.

- Enhanced organic visibility: Long-form informative articles can attract thousands of monthly visits across various keywords.

- Relationship building: Linkable content assets help establish relationships with influencers or industry experts, fostering collaboration and trust.

- Long-term value: Relevant and valuable content assets can continue to attract backlinks and traffic over time.

- Brand exposure and credibility: Linkable assets can boost your brand’s reputation as an industry expert, increasing trust and credibility.

Cons

- Links not targeting ideal pages: Links typically target the asset, but a commercial page may be preferable for improving ranking.

- Time and resource-intensive: Creating high-quality linkable assets can be challenging for smaller businesses or teams due to the required time, effort, and resources.

- No guarantee of backlinks: High-quality content does not guarantee backlinks or SEO rewards.

- Difficult to measure success: Assessing the impact of linkable assets on SEO can be challenging, as it might take time for search engines to recognize and reward acquired backlinks.

- Competition: Increasing competition for backlinks and organic visibility makes it harder to stand out.

Using Linkable Assets For Link Building

Content can be tailored for specific link-building techniques or audiences, or created for a general niche.

Technique-first

This approach focuses on creating content for a specific link-building technique and audience.

Identify sites, forums, bloggers, or influencers interested in a particular topic, create content that appeals to them, and distribute it through email outreach or promotion to earn links.

Examples of technique-first methods include the Skyscraper technique, ranking statistical articles, niche forums, competitor link building, and guest posting.

Content-first

This strategy involves creating content for an audience or niche, then finding links for that piece. Use existing content or create new content that appeals to an expert audience.

Ideation techniques can involve a subject matter expert (SME) focus group or answering questions without solid answers online.

Unique distribution approaches for content-first include Help a Reporter Out (HARO) distribution, emailing an existing list of relationships, targeted social ads, and content syndication through press releases.

Next, let’s explore some examples of assets that you can recreate.

Types Of Assets

Creating content to secure links has been a major focus for link builders since Brian Dean published an article about the Skyscraper technique in 2015, and for many even before that.

However, many content types work well for securing links; the options seem limitless.

The following is a narrowed-down list of asset types that can improve outreach response rates and earn links under the right circumstances.

Statistical Roundup Lists

This type of article aggregates statistics from reputable studies and organizes them into categories so they are easy to search.

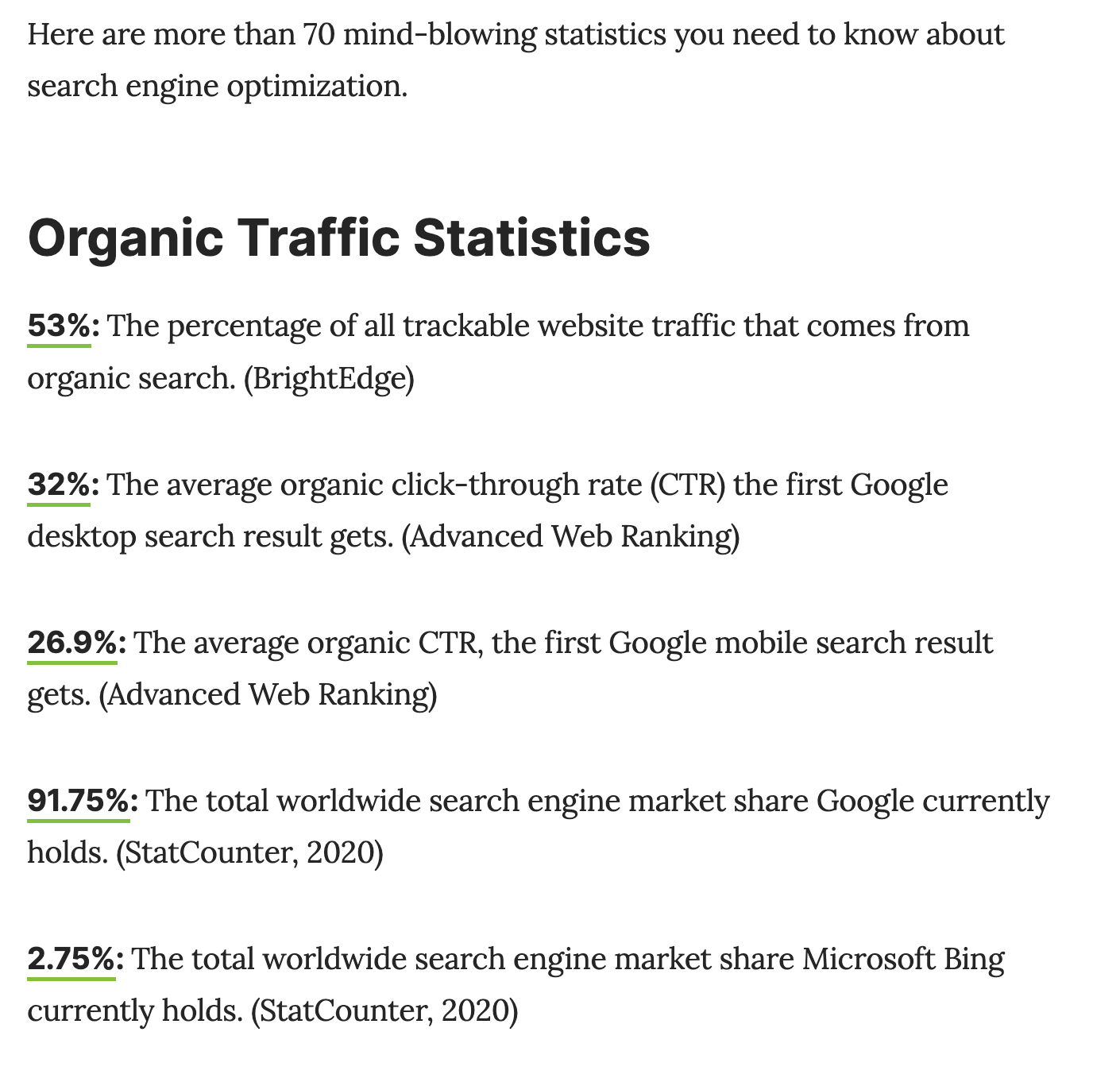

SEJ has a great example of this kind of linkable asset in its roundup, 71 Mind-Blowing Search Engine Optimization Stats.

These are organized into organic traffic, spending, local search, users & search behavior, link building (my favorite topic), Google search, and SEO vs. other marketing channels.
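The organizing step behind a roundup like this can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the record fields (`category`, `text`, `source`) and both function names are hypothetical, and the sample records are placeholders rather than real statistics.

```python
from collections import defaultdict


def group_by_category(stats):
    """Group stat records by category, keeping first-seen category order."""
    grouped = defaultdict(list)
    for stat in stats:
        grouped[stat["category"]].append(stat)
    return dict(grouped)


def render_roundup(grouped):
    """Render a plain-text roundup: a heading per category, one line per stat."""
    lines = []
    for category, items in grouped.items():
        lines.append(category)
        for item in items:
            lines.append(f"- {item['text']} (Source: {item['source']})")
        lines.append("")
    return "\n".join(lines).rstrip()


# Placeholder records only; replace with statistics from studies you have verified.
stats = [
    {"category": "Organic traffic", "text": "<statistic 1>", "source": "<study A>"},
    {"category": "Link building", "text": "<statistic 2>", "source": "<study B>"},
    {"category": "Organic traffic", "text": "<statistic 3>", "source": "<study C>"},
]

print(render_roundup(group_by_category(stats)))
```

Storing the source alongside each statistic keeps attribution attached when items are regrouped, which matters for an asset whose value rests on reputable studies.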

Unique Research Study

These assets are unique studies with accompanying methodology and insights published in a blog article or a general webpage.

These assets typically involve running surveys, analyzing company data, or compiling & analyzing data from resources like Google Research, data.gov, or Kaggle.

Ahrefs publishes a lot of data. One example is its study claiming that “90% of content gets no traffic on Google.”

This asset has charts from the study embedded into the article, making both the images and the article linkable.

Listicles Of Companies, Tools, Or People

The term “listicle” is a portmanteau of the words “list” and “article.”

These articles typically feature a bulleted or numbered list, with each item accompanied by a brief description, explanation, or commentary.

The format of a listicle is easy to skim to find the most relevant information quickly. However, a listicle can oversimplify a complex topic.

The great thing about these articles is that they can be created in almost any industry.

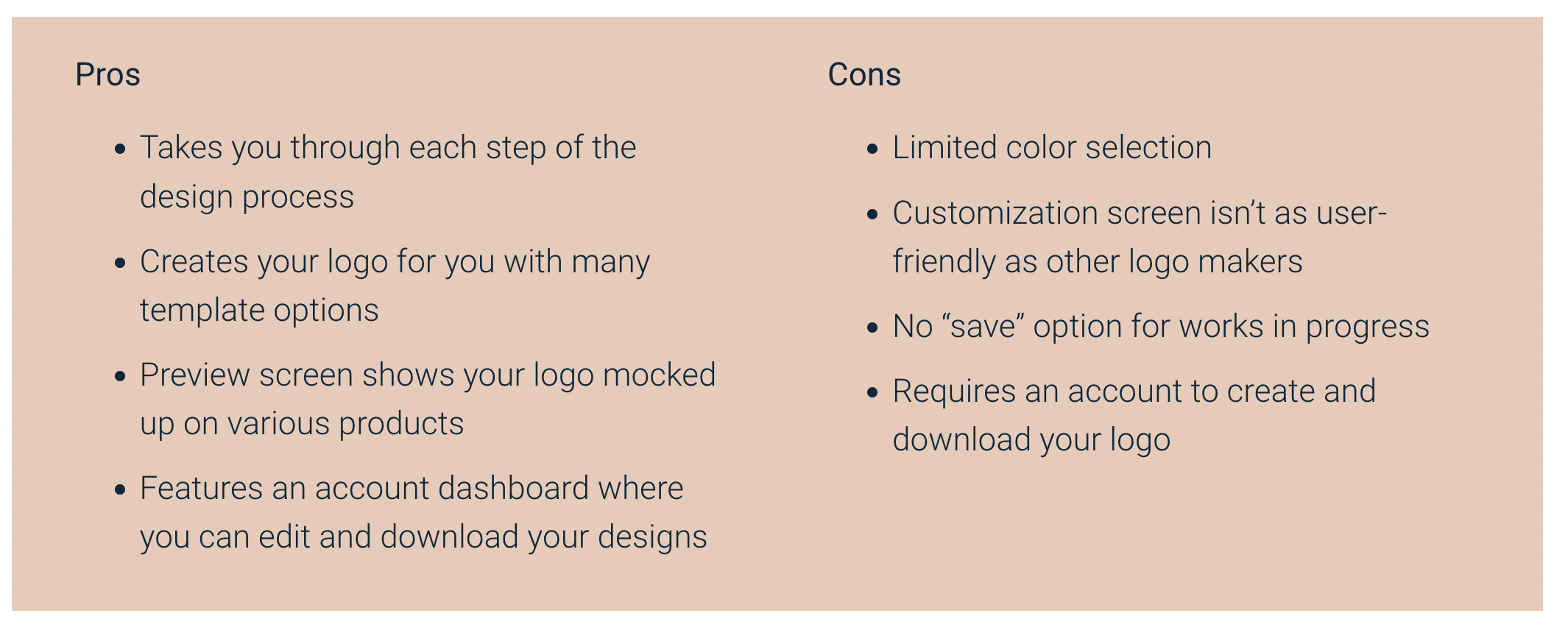

The article 12 Free Logo Makers You Can Try Right Now, from the promotional printing company Quality Logo Products, shows that this technique can be used in any niche.

This article provides examples of potential logos along with the pros & cons for each logo maker.

Image from qualitylogoproducts.com, May 2023

With any listicle, showing examples of using the tool or items is a way to demonstrate experience and build stronger trust with the audience.

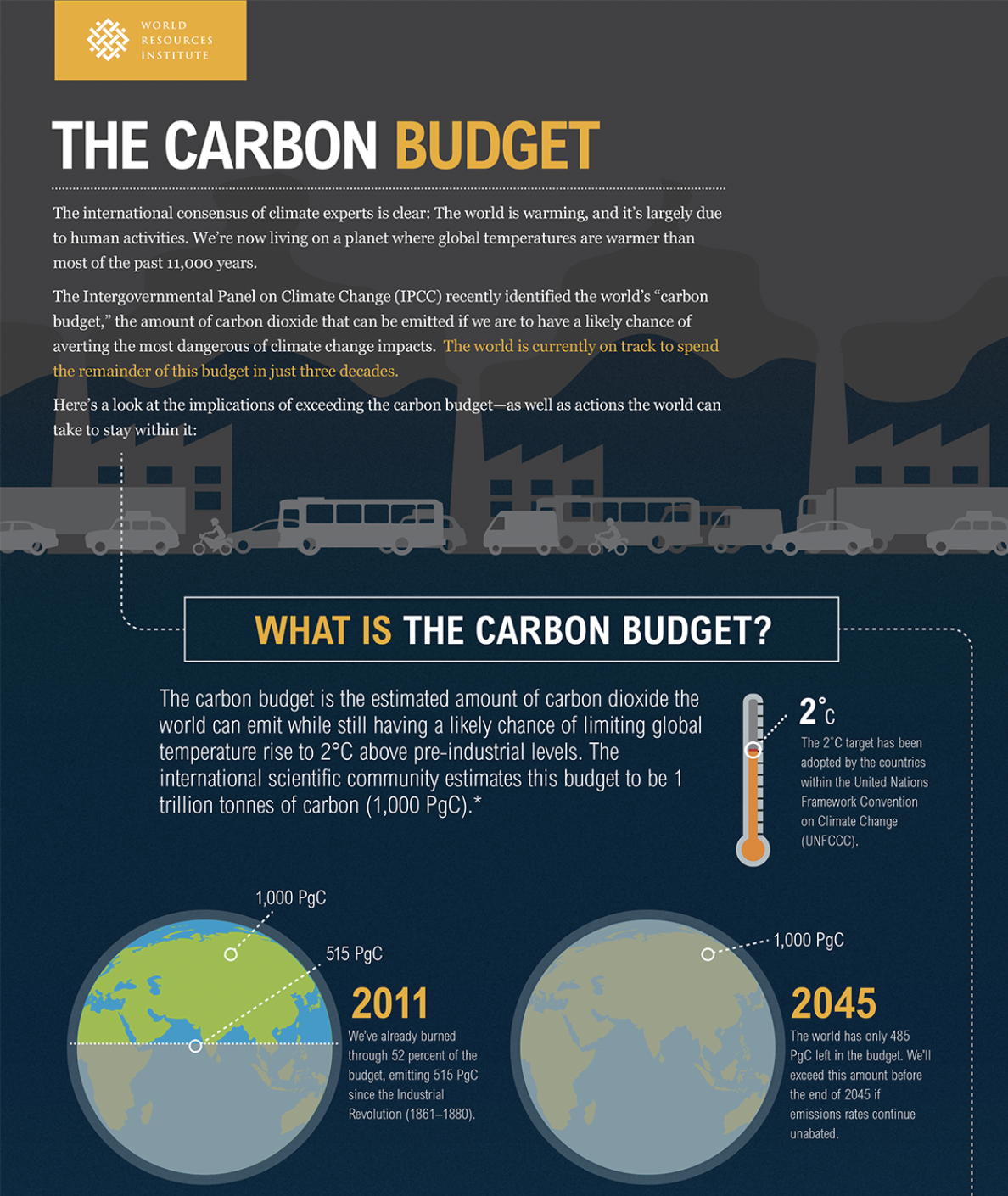

Informative Infographics

An infographic is a visual representation of information, data, or knowledge designed to convey complex information quickly and clearly.

Infographics often use a combination of charts, graphs, icons, illustrations, and text to present information in a visually appealing way.

The carbon budget infographic by World Resources Institute uses a graphic of the earth to explain how much of the carbon budget (i.e., the amount of carbon the earth can produce before the temperature rises by 2 degrees) will be used by 2045.

Image from World Resources Institute, May 2023

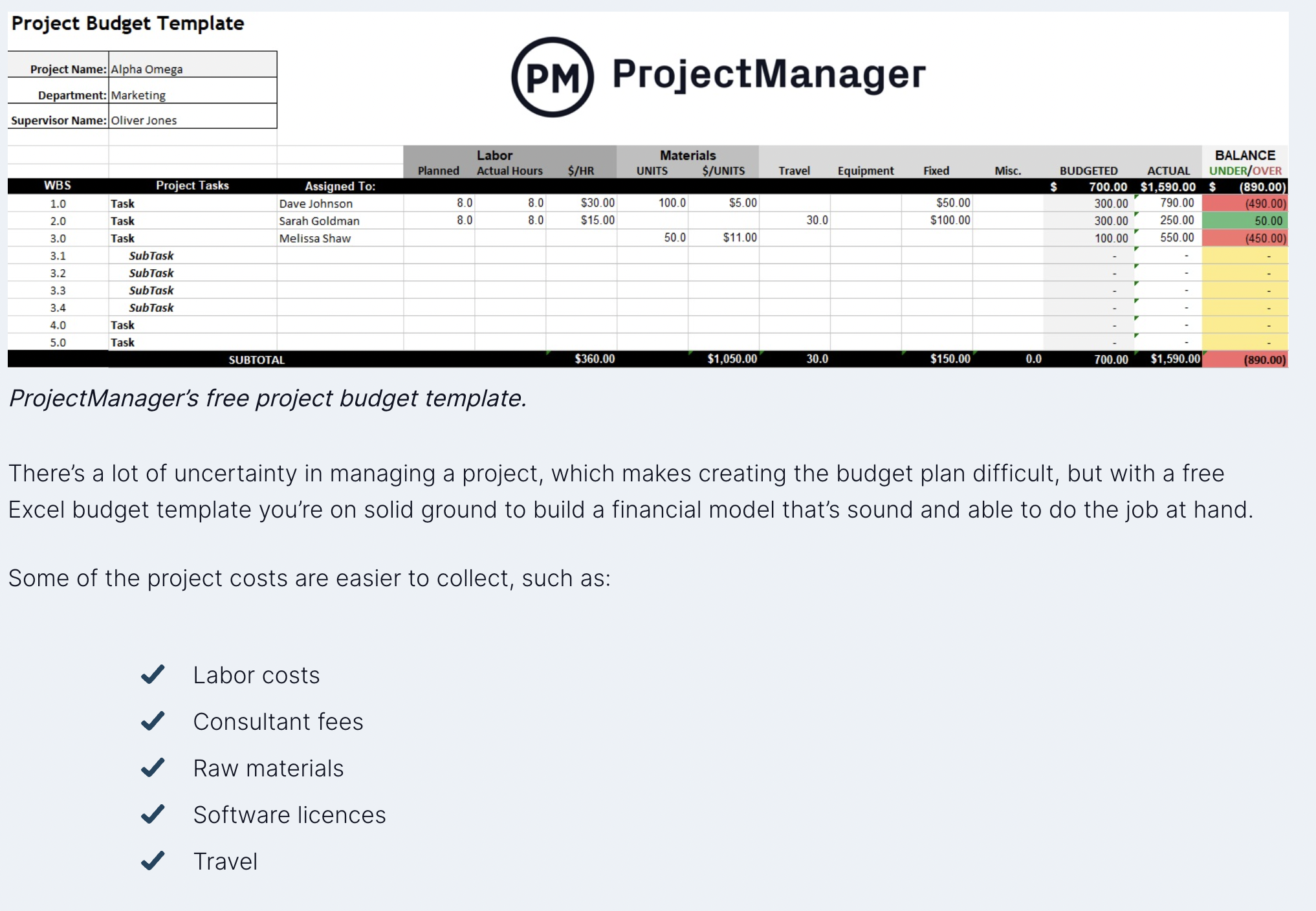

Excel & Google Sheet Templates

Templates are simple ways to organize a project with many moving parts. Even if the templates are not perfect for the project, they can serve as a source of inspiration for spreadsheet organization.

Theoretically, a template can be used for anything from home organization to advanced project planning. The terms with the highest search volume tend to focus on project management.

An example is “Excel budget templates,” which has up to 100,000 average monthly searches.

This technique is very popular with B2B SaaS and project management (PM) software but can be used in any niche. ProjectManager is one of the PM sites producing Excel budgeting templates.

This example is simple but has secured links from over 100 referring domains.

Screenshot from ProjectManager, May 2023

More Asset Types

Planning Tools

A planner is a web app that simplifies planning a specific task. The task can be a simple consumer task or a business planning task.

Calculators As Content

An online calculator can take the shape of an investment calculator, a home improvement calculator, or even a depth calculator.
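As a rough idea of how little logic sits behind such an asset, here is a minimal Python sketch of the core of an investment calculator. It assumes the standard compound-interest formula, future value = P(1 + r/n)^(nt); the function name and parameters are hypothetical, and a real calculator page would wrap this in a web form.

```python
def future_value(principal, annual_rate, years, compounds_per_year=1):
    """Compound-interest future value: P * (1 + r/n) ** (n * t).

    annual_rate is a decimal, so 0.05 means 5% per year.
    """
    if principal < 0 or years < 0 or compounds_per_year < 1:
        raise ValueError("principal and years must be >= 0; compounds_per_year >= 1")
    periodic_rate = annual_rate / compounds_per_year
    periods = compounds_per_year * years
    return principal * (1 + periodic_rate) ** periods


# 1,000 invested at 5% per year, compounded annually, for 10 years.
print(f"{future_value(1000, 0.05, 10):.2f}")  # 1628.89
```

The value of the asset comes less from the formula than from the presentation around it, such as clear inputs, sensible defaults, and an explanation of the result.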

Checklists Or Cheatsheets

These lists of steps or tasks to complete a specific project can come in the form of an online app, Excel template, or PDF.

Conclusion

Creating a linkable asset that publishers, bloggers, and general websites will leverage can reduce outreach time and even earn links without any outreach.

Although these pieces are time-consuming to create, they can reduce the overall time to find links.

An asset can be a complex research study or a simple Excel template to organize planning.

Next time you’re launching a link building campaign, create a piece that is easily linkable.

Featured Image: Marynchenko Oleksandr/Shutterstock