
How To Build A Diverse & Healthy Link Profile

Search is evolving at an incredible pace, and new features, formats, and even new search engines are popping up within the space.

Google’s algorithm still relies heavily on backlinks when ranking websites. If you want your website to be visible in search results, you must account for backlinks and your backlink profile.

And a healthy backlink profile is, above all, a diverse one.

In this guide, we’ll examine how to build and maintain a diverse backlink profile that powers your website’s search performance.

What Does A Healthy Backlink Profile Look Like?

As Google states in its guidelines, it primarily finds pages through links from other pages it has already crawled. In many cases, these are links from other websites to your pages, acquired through promotion or earned naturally over time.

In practice, a healthy backlink profile can be divided into three main areas: the distribution of link types, the mix of anchor text, and the ratio of followed to nofollowed links.

Let’s look at these areas and how they should look within a healthy backlink profile.

Distribution Of Link Types

One aspect of your backlink profile that needs to be diversified is link types.

A profile made up predominantly of one kind of link looks unnatural to Google, and it also suggests that you’re not diversifying your content strategy enough.

Some of the various link types you should see in your backlink profile include:

- Anchor text links.

- Image links.

- Redirect links.

- Canonical links.

Here is an example of the breakdown of link types at my company, Whatfix (via Semrush):

Most links should be anchor text links and image links, as these are the most common ways to link on the web, but you should see some of the other types of links as they are picked up naturally over time.

Mix Of Anchor Text

Next, ensure your backlink profile has an appropriate variety of anchor text.

Again, if you overoptimize for a specific type of anchor text, it will appear suspicious to search engines like Google and could have negative repercussions.

Here are the various types of anchor text you might find in your backlink profile:

- Branded anchor text – Anchor text that is your brand name or includes your brand name.

- Empty – Links that have no anchor text.

- Naked URLs – Anchor text that is a URL (e.g., www.website.com).

- Exact match keyword-rich anchor text – Anchor text that exactly matches the keyword the linked page targets (e.g., “blue shoes”).

- Partial match keyword-rich anchor text – Anchor text that partially or closely matches the keyword the linked page targets (e.g., “comfortable blue footwear options”).

- Generic anchor text – Anchor text such as “this website” or “here.”
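If you export your anchors from a backlink tool, a rough first pass at sorting them into the categories above can be automated. The sketch below is a simple rule-based heuristic, not how any SEO tool or search engine actually classifies anchors. The brand terms, generic phrases, and target keyword are hypothetical example values you would replace with your own, and anything the rules can’t place lands in an "other" bucket for manual review.

```python
import re

# Hypothetical example values: replace with your own brand terms and generic phrases.
BRAND_TERMS = ("whatfix",)
GENERIC_PHRASES = {"here", "click here", "this website", "this site", "read more", "website", "source"}

# Rough pattern for a bare URL or domain such as "www.website.com" or "https://site.com/page".
URL_PATTERN = re.compile(r"^(https?://)?(www\.)?[a-z0-9-]+(\.[a-z0-9-]+)+(/\S*)?$")


def classify_anchor(anchor: str, target_keyword: str, brand_terms=BRAND_TERMS) -> str:
    """Assign an anchor text to one of the categories above using simple rules."""
    text = " ".join(anchor.lower().split())  # normalize case and whitespace
    keyword = " ".join(target_keyword.lower().split())
    if not text:
        return "empty"
    if URL_PATTERN.match(text):
        return "naked_url"
    if any(term in text for term in brand_terms):
        return "branded"
    if text == keyword:
        return "exact_match"
    if text in GENERIC_PHRASES:
        return "generic"
    # Heuristic: sharing any word with the target keyword counts as a partial match.
    if set(keyword.split()) & set(re.findall(r"[a-z0-9]+", text)):
        return "partial_match"
    return "other"  # left for manual review


for anchor in ["Whatfix", "blue shoes", "comfortable blue footwear options", "here", "www.website.com", ""]:
    print(repr(anchor), "->", classify_anchor(anchor, "blue shoes"))
```

The one-shared-word rule for partial matches is deliberately loose; if your target keywords contain common words like "the" or "best," you would want to filter those out first.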

To maintain a healthy backlink profile, aim for a mix of anchor text within a similar range to this:

- Branded anchor text – 35-40%.

- Partial match keyword-rich anchor text – 15-20%.

- Generic anchor text – 10-15%.

- Exact match keyword-rich anchor text – 5-10%.

- Naked URLs – 5-10%.

- Empty – 3-5%.

This distribution represents a natural mix of anchor text. It is common for the largest share of anchors to be branded or partially branded because most sites that link to yours will default to your brand name when linking. It also makes sense that the next most common anchors are partial-match keywords or generic phrases, because these are natural choices within the context of a web page.

Exact-match anchor text is rare because it typically occurs naturally only when you are the best resource for a specific term and the linking site’s owner knows your page exists.
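Once you have counted how many of your anchors fall into each category (for example, from your backlink tool’s anchor text report), comparing them against the ranges above is simple arithmetic. A minimal sketch, assuming the counts are already tallied; the category names and the sample counts are hypothetical, and the ranges are treated as rough guidelines rather than hard limits.

```python
# Target ranges (in percent) from the guideline in this section.
TARGET_RANGES = {
    "branded": (35, 40),
    "partial_match": (15, 20),
    "generic": (10, 15),
    "exact_match": (5, 10),
    "naked_url": (5, 10),
    "empty": (3, 5),
}


def anchor_mix_report(counts: dict[str, int]) -> dict[str, tuple[float, str]]:
    """Return each category's share of all anchors and whether it falls within its target range."""
    total = sum(counts.values())  # includes uncategorized anchors, e.g. an "other" bucket
    if total == 0:
        raise ValueError("no anchors to analyze")
    report = {}
    for category, (low, high) in TARGET_RANGES.items():
        share = 100 * counts.get(category, 0) / total
        if share < low:
            status = "below range"
        elif share > high:
            status = "above range"
        else:
            status = "within range"
        report[category] = (round(share, 1), status)
    return report


# Hypothetical anchor counts for illustration only.
counts = {"branded": 380, "partial_match": 170, "generic": 120, "exact_match": 150,
          "naked_url": 80, "empty": 40, "other": 60}
for category, (share, status) in anchor_mix_report(counts).items():
    print(f"{category:<14} {share:>5}%  {status}")
```

With these made-up numbers, exact-match anchors make up 15% of the total, above the 5-10% range, which is exactly the kind of overoptimization this section warns about.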

Ratio Of Followed Vs. Nofollowed Backlinks

Lastly, you should monitor the ratio of followed vs. nofollowed links pointing to your website.

If you need a refresher on what nofollowed backlinks are or why someone might apply the nofollow tag to a link pointing to your site, check out Google’s guide on how to qualify outbound links to Google.

In principle, the nofollow attribute is meant for links the linking site doesn’t want to vouch for, such as paid links or links pointing to a site it doesn’t trust.

While it is not uncommon or suspicious to have some nofollow links (people misunderstand the purpose of the nofollow attribute all the time), a healthy backlink profile will have far more followed links.

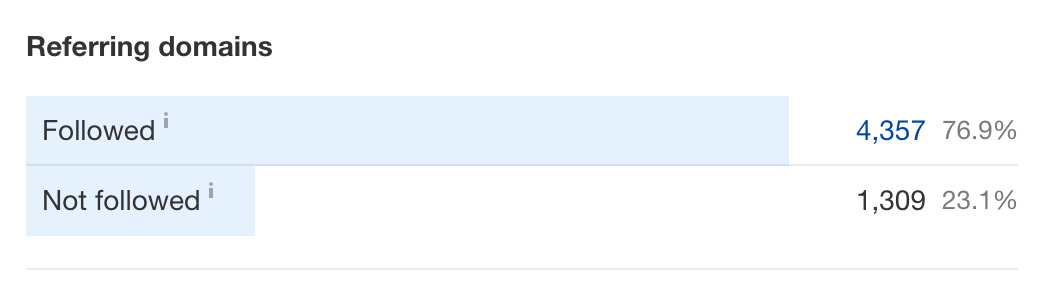

You should aim for a ratio of 80%:20% or 70%:30% in favor of followed links. For example, here is what the followed vs. nofollowed ratio looks like for my company’s backlink profile (according to Ahrefs):

Screenshot from Ahrefs, May 2024

You may also see links with other rel attributes, such as UGC or Sponsored.

The “UGC” attribute marks links in user-generated content, while the “Sponsored” attribute marks links from sponsored or paid sources. These attributes differ slightly from nofollow, but they work in essentially the same way, telling Google that the linking site isn’t vouching for these links. You can simply group these links in with nofollowed links when calculating your ratio.
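Because a link’s rel attribute is a space-separated list of values (for example, "sponsored nofollow"), the grouping described above can be applied by checking each link’s rel tokens. A minimal sketch, assuming you have a list of rel values from a backlink export; the sample values are hypothetical, and `None` stands for a link with no rel attribute.

```python
from typing import Iterable, Optional

# Per the guidance above, UGC and Sponsored links are grouped with nofollowed links.
NOT_FOLLOWED = {"nofollow", "ugc", "sponsored"}


def is_followed(rel: Optional[str]) -> bool:
    """A link counts as followed unless its rel attribute contains nofollow, ugc, or sponsored."""
    tokens = set((rel or "").lower().split())  # rel is a space-separated list of values
    return not (tokens & NOT_FOLLOWED)


def follow_ratio(rel_values: Iterable[Optional[str]]) -> tuple[float, float]:
    """Return (followed %, nofollowed %) for a list of rel attribute values."""
    rels = list(rel_values)
    if not rels:
        raise ValueError("no links to analyze")
    followed = sum(is_followed(rel) for rel in rels)
    followed_pct = 100 * followed / len(rels)
    return round(followed_pct, 1), round(100 - followed_pct, 1)


# Hypothetical rel values; None means the link had no rel attribute at all.
sample = [None, "", "noopener", "nofollow", "ugc", "sponsored nofollow",
          None, "noreferrer", None, None]
followed_pct, nofollowed_pct = follow_ratio(sample)
print(f"followed {followed_pct}% / nofollowed {nofollowed_pct}%")
print("at least 70% followed:", followed_pct >= 70)
```

Note that values like "noopener" or "noreferrer" don’t affect whether a link is followed, so those links stay in the followed column.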

Importance Of Diversifying Your Backlink Profile

So why is it important to diversify your backlink profile anyway? Well, there are three main reasons you should consider:

- Avoiding overoptimization.

- Diversifying traffic sources.

- Finding new audiences.

Let’s dive into each of these.

Avoiding Overoptimization

First and foremost, diversifying your backlink profile is the best way to protect yourself from overoptimization and the damaging penalties that can come with it.

As SEO pros, our job is to optimize websites to improve performance, but overoptimizing in any facet of our strategy – backlinks, keywords, structure, etc. – can result in penalties that limit visibility within search results.

In the previous section, we covered the elements of a healthy backlink profile. If you stray too far from that model, your site might look suspicious to search engines like Google, and you could be handed a manual or algorithmic penalty that suppresses your rankings in search.

Considering how regularly Google updates its search algorithm these days (and how little information surrounds those updates), you could see your performance tank and have no idea why.

This is why it’s so important to keep a watchful eye on your backlink profile and how it’s shaping up.

Diversifying Traffic Sources

Another reason to cultivate a diverse backlink profile is to ensure you’re diversifying your traffic sources.

Google penalties can come swiftly and often without warning. If you have all your eggs in the search basket when it comes to traffic, your site will suffer badly and may struggle to recover.

However, diversifying your traffic sources (search, social, email, etc.) will mitigate that risk – similar to a stock portfolio – as you’ll have other sources providing a steady flow of visitors if one source suddenly dips.

Part of building a diverse backlink profile is acquiring a diverse set of backlinks and backlink types, and this strategy will also help you find differing and varied sources of traffic.

Finding New Audiences

Finally, building a diverse backlink profile is essential, as doing so will also help you discover new audiences.

If you acquire links from the same handful of websites and platforms, you will struggle to expand your audience and build awareness for your website.

While it’s important to acquire links from sites that cater to your existing audience, you should also explore ways to build links that can tap into new audiences. The best way to do this is by casting a wide net with various link acquisition tactics and strategies.

A diverse backlink profile indicates a varied approach to SEO and marketing that will help bring new visitors and awareness to your site.

Building A Diverse Backlink Profile

Now that you know what a healthy backlink profile looks like and why it’s important to diversify, how do you build diversity into your site’s backlink profile?

This comes down to your link acquisition strategy and the types of backlinks you actively pursue. To guide your strategy, let’s break link building into three main categories:

- Foundational links.

- Content promotion.

- Community involvement.

Here’s how to approach each area.

Foundational Links

Foundational links represent those links that your website simply should have. These are opportunities where a backlink would exist if all sites were known to all site owners.

Some examples of foundational links include:

- Mentions – Websites that mention your brand in some way (brand name, product, employees, proprietary data, etc.) on their website but don’t link.

- Partners – Websites that belong to real-world partners or companies you connect with offline and should also connect (link) with online.

- Associations or groups – Websites for offline associations or groups you belong to where your site should be listed with a link.

- Sponsorships – Any events or organizations your company sponsors might have websites that could (and should) link to your site.

- Sites that link to competitors – If a website is linking to a competitor, there is a strong chance it would make sense for them to link to your site as well.

These link opportunities should set the foundation for your link acquisition efforts.

As the baseline for your link building strategy, you should start by exhausting these opportunities first to ensure you’re not missing highly relevant links to bolster your backlink profile.
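The last foundational category, sites that link to competitors, is often worked through as a “link gap” or “link intersect” analysis: export the referring domains for your site and a few competitors, then look for domains that link to several competitors but not to you. A minimal sketch, assuming you already have those exports as plain sets of domains; the domain names and the `min_competitors` threshold are hypothetical.

```python
from collections import Counter


def normalize(domain: str) -> str:
    """Lowercase a domain and drop a leading 'www.' so variants compare equal."""
    return domain.strip().lower().removeprefix("www.")


def link_gap(your_domains: set[str], competitor_domains: dict[str, set[str]],
             min_competitors: int = 2) -> list[tuple[str, int]]:
    """List referring domains that link to at least `min_competitors` competitors but not to you."""
    yours = {normalize(d) for d in your_domains}
    counts = Counter()
    for domains in competitor_domains.values():
        counts.update({normalize(d) for d in domains})  # count each domain once per competitor
    gap = [(d, n) for d, n in counts.items() if n >= min_competitors and d not in yours]
    return sorted(gap, key=lambda item: (-item[1], item[0]))  # most-shared domains first


# Hypothetical referring-domain exports for your site and three competitors.
yours = {"a.example", "www.b.example"}
competitors = {
    "competitor1.example": {"a.example", "c.example", "www.d.example"},
    "competitor2.example": {"C.example", "d.example", "e.example"},
    "competitor3.example": {"c.example", "f.example"},
}
print(link_gap(yours, competitors))
```

Here the result is `[('c.example', 3), ('d.example', 2)]`: domains linking to several competitors are usually the most relevant prospects, which is why the list is sorted by how many competitors each one links to.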

Content Promotion

Next, consider content promotion as a strategy for building a healthy, diverse backlink profile.

Content promotion is much more proactive than the foundational link acquisition mentioned above. Rather than capitalizing on an existing opportunity, you create the opportunity yourself by producing link-worthy content.

Some examples of content promotion for links are:

- Digital PR – Digital PR campaigns have numerous benefits and goals beyond link acquisition, but backlinks should be a primary KPI.

- Original research – Similar to digital PR, original research should focus on providing valuable data to your audience, but you should also make sure any citations or references to your research are correctly linked.

- Guest content – Whether regular columns or one-off contributions, providing guest content to websites is still a viable link acquisition strategy – when done right. The best way to gauge your guest content strategy is to ask yourself whether you would still write the content for a site without a guaranteed backlink, knowing you’d still build authority and get your message in front of a new audience.

- Original imagery – Along with research and data, if your company creates original imagery that offers unique value, you should promote those images and ask for citation links.

Content promotion is a viable avenue for building a healthy backlink profile as long as the content you’re promoting is worthy of links.

Community Involvement

Community involvement is the final piece of your link acquisition puzzle when building a diverse backlink profile.

After pursuing all foundational opportunities and manually promoting your content, you should ensure your brand is active and represented in all the spaces and communities where your audience engages.

In terms of backlinks, this could mean:

- Wikipedia links – Wikipedia gets over 4 billion monthly visits, so backlinks here can bring significant referral traffic to your site. However, acquiring these links is difficult as these pages are moderated closely, and your site will only be linked if it is legitimately a top resource on the web.

- Forums (Reddit, Quora, etc.) – Another great place to get backlinks that drive referral traffic is forums like Reddit and Quora. Again, these forums are strictly moderated, and earning link placements on these sites requires a page that delivers significant and unique value to a specific audience.

- Social platforms – Social media platforms and groups represent communities where your brand should be active and engaged. While these strategies are likely handled by other teams outside SEO and focus on different metrics, you should still be intentional about converting these interactions into links when or where possible.

- Offline events – While it may seem counterintuitive to think of offline events as a potential source for link acquisition, legitimate link opportunities exist here. After all, most businesses, brands, and people you interact with at these events also have websites, and networking can easily translate to online connections in the form of links.

While most of the link opportunities listed above will have the nofollow link attribute due to the nature of the sites associated with them, they are still valuable additions to your backlink profile as these are powerful, trusted domains.

These links help diversify your traffic sources by bringing substantial referral traffic, and that traffic is highly qualified as these communities share your audience.

How To Avoid Developing A Toxic Backlink Profile

Now that you’re familiar with the link building strategies that can help you cultivate a healthy, diverse backlink profile, let’s discuss what you should avoid.

As mentioned before, if you overoptimize one strategy or link, it can seem suspicious to search engines and cause your site to receive a penalty. So, how do you avoid filling your backlink profile with toxic links?

Remember The “Golden Rule” Of Link Building

One simple way to guide your link acquisition strategy and avoid running afoul of search engines like Google is to follow one “golden rule.”

That rule is to ask yourself: If search engines like Google didn’t exist, and the only way people could navigate the web was through backlinks, would you want your site to have a link on the prospective website?

Thinking this way strips away the tactical, SEO-focused parts of the equation and leaves only the human side of linking, where two sites are linked because it makes sense and makes the web easier to navigate.

Avoid Private Blog Networks (PBNs)

Another good rule is to avoid looping your site into private blog networks (PBNs). Of course, it’s not always obvious or easy to spot a PBN.

However, there are some common traits or red flags you can look for, such as:

- The person offering you a link placement mentions they have a list of domains they can share.

- The prospective linking site has little to no traffic and doesn’t appear to have human engagement (blog comments, social media followers, blog views, etc.).

- The website features thin content and little investment into user experience (UX) and design.

- The website covers generic topics and categories, catering to any and all audiences.

- Pages on the site feature numerous external links but few internal links.

- The prospective domain’s backlink profile features overoptimization in any of the previously discussed forms (a high density of exact-match anchor text, an abnormal ratio of nofollowed links, only one or two link types, etc.).

Again, diversification – in both tactics and strategies – is crucial to building a healthy backlink profile, but steering clear of obvious PBNs and remembering the “golden rule” of link building will go a long way toward keeping your profile free from toxicity.
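If you vet many prospects, it can help to record the red flags above as a simple checklist so each site is judged the same way. A minimal sketch, assuming you gather the facts about each prospect manually or from a backlink tool; the `Prospect` fields, the traffic threshold, and the use of “more external than internal links per page” as a proxy for the external-link red flag are all hypothetical choices, and only the exact-match part of the last bullet is covered.

```python
from dataclasses import dataclass

# Hypothetical thresholds; tune them to your niche.
LOW_TRAFFIC = 500          # estimated monthly organic visits
EXACT_MATCH_LIMIT = 10.0   # percent; upper end of the exact-match range discussed earlier


@dataclass
class Prospect:
    """Hypothetical facts gathered about a site offering a link placement."""
    domain: str
    offers_domain_list: bool
    monthly_traffic: int
    has_human_engagement: bool
    thin_content: bool
    generic_topics: bool
    external_links_per_page: float
    internal_links_per_page: float
    exact_match_anchor_pct: float


def pbn_red_flags(site: Prospect) -> list[str]:
    """Return the red flags from the checklist above that this prospect trips."""
    flags = []
    if site.offers_domain_list:
        flags.append("seller offers a list of domains")
    if site.monthly_traffic < LOW_TRAFFIC and not site.has_human_engagement:
        flags.append("little traffic and no sign of human engagement")
    if site.thin_content:
        flags.append("thin content and little UX investment")
    if site.generic_topics:
        flags.append("generic topics aimed at any audience")
    if site.external_links_per_page > site.internal_links_per_page:
        flags.append("external links outnumber internal links")
    if site.exact_match_anchor_pct > EXACT_MATCH_LIMIT:
        flags.append("over-optimized exact-match anchors")
    return flags


prospect = Prospect(domain="links-for-all.example", offers_domain_list=True, monthly_traffic=120,
                    has_human_engagement=False, thin_content=True, generic_topics=True,
                    external_links_per_page=25.0, internal_links_per_page=3.0,
                    exact_match_anchor_pct=42.0)
for flag in pbn_red_flags(prospect):
    print("-", flag)
```

The output is a prompt for human judgment rather than a verdict; a single flag on an otherwise legitimate site is not proof of a PBN.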

Evaluating Your Backlink Profile

As you work diligently to build and maintain a diverse, healthy backlink profile, you should also carve out time to evaluate it regularly from a more analytical perspective.

There are two main ways to evaluate the merit of your backlinks: using tools to analyze them and comparing your backlink profile to the wider competitive landscape.

Leverage Tools To Analyze Backlink Profile

There are a variety of third-party tools you can use to analyze your backlink profile.

These tools can provide helpful insights, such as the total number of backlinks and referring domains. You can use these tools to analyze your full profile, broken down by:

- Followed vs. nofollowed.

- Authority metrics (Domain Rating, Domain Authority, Authority Score, etc.).

- Backlink types.

- Location or country.

- Anchor text.

- Top-level domain types.

- And more.

You can also use these tools to track new incoming backlinks, as well as lost backlinks, to help you better understand how your backlink profile is growing.
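Most tools surface new and lost links for you, but if you keep periodic CSV exports, the comparison is a set difference between two snapshots. A minimal sketch, assuming each export has one row per backlink; the column names `source_url` and `target_url` are hypothetical placeholders to match against your tool’s actual export headers.

```python
import csv
from typing import Iterable


def load_backlinks(lines: Iterable[str], source_col: str = "source_url",
                   target_col: str = "target_url") -> set[tuple[str, str]]:
    """Read a CSV backlink export into a set of (linking page, linked page) pairs.

    The column names are hypothetical; match them to your tool's export headers.
    """
    return {(row[source_col].strip(), row[target_col].strip()) for row in csv.DictReader(lines)}


def diff_snapshots(previous: set[tuple[str, str]], current: set[tuple[str, str]]):
    """Return (new, lost) backlinks between two snapshots, each sorted for stable output."""
    new = sorted(current - previous)
    lost = sorted(previous - current)
    return new, lost


last_month = load_backlinks([
    "source_url,target_url",
    "https://a.example/post,https://yoursite.example/",
    "https://b.example/guide,https://yoursite.example/blog",
])
this_month = load_backlinks([
    "source_url,target_url",
    "https://b.example/guide,https://yoursite.example/blog",
    "https://c.example/review,https://yoursite.example/",
])
new, lost = diff_snapshots(last_month, this_month)
print("new:", new)
print("lost:", lost)
```

For real exports, pass an open file instead of a list, e.g. `with open("export.csv", newline="") as f: load_backlinks(f)`, since `csv.DictReader` accepts any iterable of lines.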

Ahrefs and Semrush, both referenced earlier in this guide, are two widely used tools for analyzing your backlink profile.

Many of these tools also have features that estimate how toxic or suspicious your profile might look to search engines, which can help you detect potential issues early.

Compare Your Backlink Profile To The Competitive Landscape

Lastly, you should compare your overall backlink profile to those of your competitors and those competing with your site in the search results.

Again, the previously mentioned tools can help with this analysis – as far as providing you with the raw numbers – but the key areas you should compare are:

- Total number of backlinks.

- Total number of referring domains.

- Breakdown of authority metrics of links (Domain Rating, Domain Authority, Authority Score, etc.).

- Authority metrics of competing domains.

- Link growth over the last two years.

Comparing your backlink profile to others within your competitive landscape will help you assess where your domain currently stands and provide insight into how far you must go if you’re lagging behind competitors.

It’s worth noting that search performance isn’t as simple as whoever has the most backlinks ranking best.

Still, these numbers are typically solid indicators of how search engines gauge the authority of your competitors’ domains, and you’ll likely find a correlation between strong backlink profiles and strong search performance.
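To turn the comparison above into numbers, you can line up each of your metrics against a benchmark drawn from your competitors. A minimal sketch, assuming you have copied the figures from your backlink tool; the `LinkProfile` fields, the sample values, the use of referring-domain growth as the measure of “link growth,” and the choice of the competitor median as the benchmark are all hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class LinkProfile:
    """Hypothetical snapshot of the numbers a backlink tool reports for one domain."""
    domain: str
    backlinks: int
    referring_domains: int
    authority: float                  # whichever score your tool uses (DR, DA, AS, etc.)
    referring_domains_2y_ago: int     # proxy for link growth; assumed to be nonzero

    @property
    def growth_pct(self) -> float:
        """Percent change in referring domains over the last two years."""
        return 100 * (self.referring_domains - self.referring_domains_2y_ago) / self.referring_domains_2y_ago


METRICS = ("backlinks", "referring_domains", "authority", "growth_pct")


def compare(yours: LinkProfile, competitors: list[LinkProfile]) -> dict[str, tuple[float, float]]:
    """Pair each of your metrics with the competitor median for the same metric."""
    return {
        metric: (getattr(yours, metric), median(getattr(c, metric) for c in competitors))
        for metric in METRICS
    }


you = LinkProfile("yoursite.example", 12_000, 900, 55, 600)
rivals = [
    LinkProfile("rival-a.example", 30_000, 1_500, 70, 1_000),
    LinkProfile("rival-b.example", 8_000, 700, 48, 700),
    LinkProfile("rival-c.example", 20_000, 1_200, 62, 800),
]
for metric, (mine, benchmark) in compare(you, rivals).items():
    print(f"{metric:<18} you: {mine:>10,.1f}  competitor median: {benchmark:>10,.1f}")
```

The median keeps one unusually large competitor from skewing the benchmark. As noted above, a gap in these numbers shows where you stand, not a guaranteed ranking outcome.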

Approach Link Building With A User-First Mindset

The search landscape continues to evolve at a breakneck pace, and we could see dramatic shifts in how people search within the next five years (or sooner).

However, at this time, search engines like Google still rely on backlinks as part of their ranking algorithms, and you need to cultivate a strong backlink profile to be visible in search.

Furthermore, if you follow the advice in this article as you build out your profile, you’ll acquire backlinks that benefit your site regardless of search algorithms, future-proofing your traffic sources.

Approach link acquisition like you would any other marketing endeavor – with a customer-first mindset – and over time, you’ll naturally build a healthy, diverse backlink profile.


Featured Image: Sammby/Shutterstock