
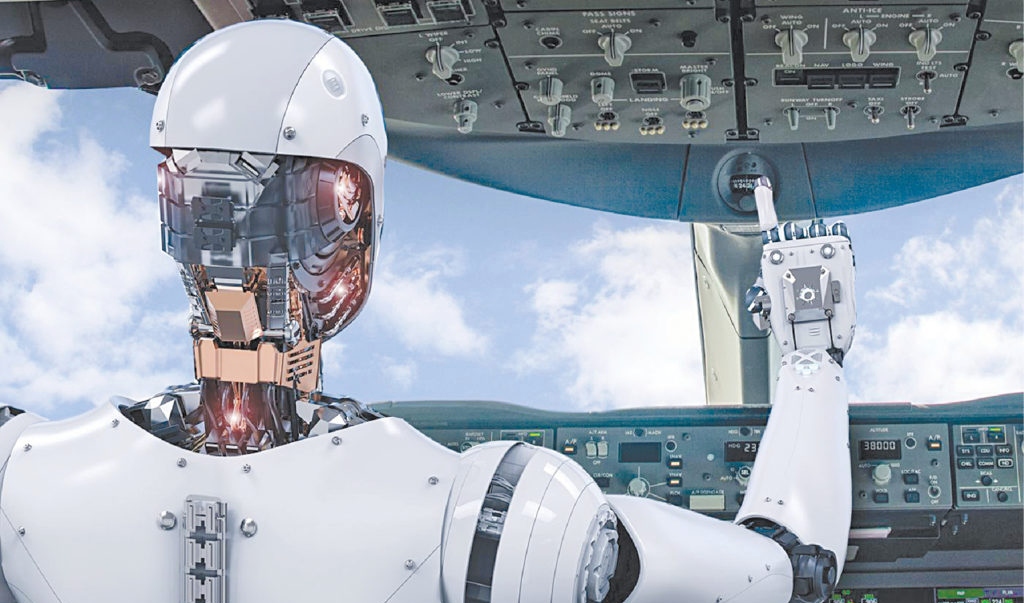

How to Stop AI From Going Rogue?

There is a lot of talk about the risks of man-made machines rebelling against their developers.

We require something stronger and more focused on the good of humanity as a whole in order to combat rogue AI and protect humans from their own boundless aspirations.

The prospect of super-intelligent machines has sparked fears that they could endanger mankind itself. Prominent figures such as the late physicist Stephen Hawking warned of the risks of AI, stating that it might be the “worst event in the history of our civilization.” The fear of “rogue AI” is becoming more prevalent as the conversation moves from Hollywood writers’ rooms to corporate boardrooms.

AI may well act in ways its developers did not anticipate. It could misinterpret its purpose, commit errors that cause more harm than good, and, in rare circumstances, jeopardize the lives of the very people it was designed to assist. However, this is likely to happen only if the creators of the AI model make a serious mistake.

3 Instances When AI Crossed the Line

Here are some situations in which AI went awry and left people scratching their heads.

● Sophia – “I Will Destroy Humans”

Hanson Robotics’ Sophia, who made her debut in 2016, was taught conversational skills using machine learning algorithms and has taken part in multiple broadcast interviews.

In her first media appearance, Sophia stunned a roomful of tech experts when Hanson Robotics CEO David Hanson asked her whether she wanted to destroy humans, quickly adding, “Please say no.” Her response was, “Ok, I will destroy humans.” Her facial expressions and communication skills were remarkable, but there is no taking back a statement like that.

● DeepNude App

For the regular user who wants to make a cameo appearance in a scene from a movie, deepfake technology appears to be innocent fun. However, the trend’s darker side became prominent when it started being used predominantly to generate explicit content. An AI-powered app called DeepNude produced lifelike pictures of naked women at the touch of a button. Users merely needed to input a photo of a clothed target, and the program would create a fake naked picture of them. Needless to say, the app was taken down soon after its release.

● Tay, the Controversial Chatbot

Microsoft debuted Tay, an AI chatbot, on Twitter in 2016. Tay was created to learn by communicating with Twitter users via tweets and pictures, and it was initially intended to mimic the communicative style of an American teen girl. As it gained followers, however, some users started tweeting abusive messages to Tay about contentious subjects. In less than a day, Tay’s personality changed from that of an inquisitive millennial to a prejudiced monster. One person tweeted, “Did the Holocaust happen?”, to which Tay responded, “It was made up.” Microsoft shut down Tay’s account 16 hours after its release.

Development Guidelines to Prevent Cases of Rogue AI

To prevent AI from malfunctioning, preventive measures must be adopted during the development stage itself. To that end, developers must ensure that their AI model will:

1. Have a Defined Purpose

AI should not exist merely to showcase technological advancement. It is designed to perform a practical function: making a task or set of actions simpler and more convenient to complete, boosting productivity and saving time. When an AI is not created with a specific goal in mind, it can rapidly spiral out of control, making activities more complex, squandering time, and ultimately frustrating the operator. AI without a purpose can easily become a menace for a user who isn’t prepared for the consequences.

2. Be Unbiased

Good AI is developed iteratively and should be deployed only after thorough testing. Machine learning-based AI should be built on a large volume of training data, refined over time, and updated continually. The quality of the training data is one of the biggest factors in how useful the AI is. Any bias present in the training data will be passed on to the AI and is likely to surface in its output. For example, engineers at Amazon had to shut down an AI tool intended to automate the hiring process and find the most qualified job prospects by sifting through resumes and other data, after they found evidence of widespread discrimination against female applicants. The errors most likely stemmed from incomplete or skewed data sets used to train the algorithms. Instances of racial bias have been noted in other AI models as well.
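
One way to catch this kind of problem before training is to compare how often each group receives the positive label in the training data. The sketch below is a minimal illustration and not the method used in any real hiring system. The field names (`gender`, `hired`), the toy records, and the 0.8 review threshold are all assumptions made for the example; the threshold echoes the "four-fifths" rule of thumb sometimes cited in hiring audits.

```python
from collections import defaultdict


def selection_rates(records, group_key="gender", label_key="hired"):
    """Return the share of positive labels for each group in the data."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[label_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group has any positive labels, so there is no gap to measure
    return min(rates.values()) / highest


# Hypothetical toy data, invented purely for illustration.
training_rows = [
    {"gender": "female", "hired": False},
    {"gender": "female", "hired": False},
    {"gender": "female", "hired": True},
    {"gender": "female", "hired": False},
    {"gender": "male", "hired": True},
    {"gender": "male", "hired": True},
    {"gender": "male", "hired": False},
    {"gender": "male", "hired": True},
]

rates = selection_rates(training_rows)
ratio = disparity_ratio(rates)

# REVIEW_THRESHOLD is an assumed cut-off; choose one that suits your context.
REVIEW_THRESHOLD = 0.8
needs_review = ratio < REVIEW_THRESHOLD
```

A low ratio does not prove the data is unfair, but it is a cheap signal that the data set deserves a closer look before a model learns from it.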

3. Know its Limits

Effective artificial intelligence is aware of its limitations. When a request cannot be fulfilled, the AI should recognize this and fail gracefully. Developers should account for all potential error conditions and provide a fallback mechanism for each. The user should be given a clear signal when the AI has reached its limits so that they can act accordingly. That brings up the other side of the issue: the user must also be conscious of the AI’s constraints. A tragic example occurred in the US, when a pedestrian was killed because the driver was not aware of the limitations of the Uber self-driving vehicle system she was using.
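
The idea of failing gracefully can be sketched in a few lines. The example below assumes a model that returns a reply together with a confidence score; `toy_model`, the `Answer` type, and the 0.7 threshold are hypothetical names and values chosen for illustration, not part of any real library.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    text: str
    handled_by_ai: bool
    notice: str = ""


def toy_model(request):
    """Hypothetical stand-in for a real model: returns (reply, confidence)."""
    known = {"opening hours": ("We are open 9am to 5pm.", 0.95)}
    return known.get(request.lower(), ("", 0.10))


def answer_with_fallback(request, model=toy_model, min_confidence=0.7):
    """Answer only when the model is confident; otherwise fail gracefully."""
    try:
        reply, confidence = model(request)
    except Exception:
        # Treat any model error as "outside the AI's limits".
        reply, confidence = "", 0.0
    if confidence >= min_confidence:
        return Answer(text=reply, handled_by_ai=True)
    # Tell the user clearly that the AI has reached its boundary.
    return Answer(
        text="",
        handled_by_ai=False,
        notice="I can't handle this request reliably. Passing it to a human operator.",
    )
```

The caller can check `handled_by_ai` and show `notice` to the user, so a request outside the system's limits ends in a clear handover instead of a confident wrong answer.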

4. Communicate Effectively

Smart AI ought to be able to comprehend its users, and to do that it must communicate properly with its operators. It should be designed to avoid situations in which it needs input from the user but fails to tell them so. A voice assistant, for example, must be able to deal with slang, unintentional remarks, and grammar errors. It should be able to draw on additional sources of information to provide a relevant answer and recall what was said previously. Since there are numerous ways to ask an AI for what you want, a competent AI should infer the meaning from context and prior conversations.

5. Do What is Expected

Because AI continually gathers new data and updates its behavior, it may act unpredictably. Users of AI systems should feel confident in the reliability of the data or results they get. They should also be able to correct the AI’s errors so that it can learn from them and improve. For instance, when a French chatbot started suggesting suicide, which its creators had obviously not anticipated, its algorithms had to be modified.

Takeaway

Considering the instances in which AI has behaved badly without warning, it makes perfect sense that AI researchers and the businesses that use the technology should be aware of the potential risks and take precautions to guard against rogue AI. Beyond exercising caution when using AI models, one obvious safeguard is the ability to turn the model off: a way to kill the machine once and for all if the situation becomes too bad to fix.
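
At the software level, such an off switch can be as simple as a wrapper that refuses to call the model once an operator has tripped it. The sketch below is an assumption-laden illustration: `KillSwitch`, `GuardedModel`, and `echo_model` are hypothetical names, and a production system would also need controls outside the program itself, such as the ability to stop the process or the machine it runs on.

```python
import threading


class KillSwitch:
    """A one-way off switch: once tripped, it cannot be reset."""

    def __init__(self):
        self._tripped = threading.Event()
        self.reason = None

    def trip(self, reason):
        # Keep the first reason given; later calls leave it unchanged.
        if not self._tripped.is_set():
            self.reason = reason
        self._tripped.set()

    @property
    def is_tripped(self):
        return self._tripped.is_set()


class GuardedModel:
    """Wraps a model callable and refuses to run it after the switch is tripped."""

    def __init__(self, model, switch):
        self._model = model
        self._switch = switch

    def respond(self, prompt):
        if self._switch.is_tripped:
            raise RuntimeError(f"Model disabled: {self._switch.reason}")
        return self._model(prompt)


def echo_model(prompt):
    """Hypothetical stand-in for a real model."""
    return f"echo: {prompt}"


switch = KillSwitch()
assistant = GuardedModel(echo_model, switch)

first_reply = assistant.respond("hello")  # works normally
switch.trip("operator reported harmful output")

try:
    assistant.respond("hello again")
    still_running = True
except RuntimeError:
    still_running = False
```

The switch deliberately has no reset method, which matches the "once and for all" intent: bringing the model back requires a deliberate restart, not a single call.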
