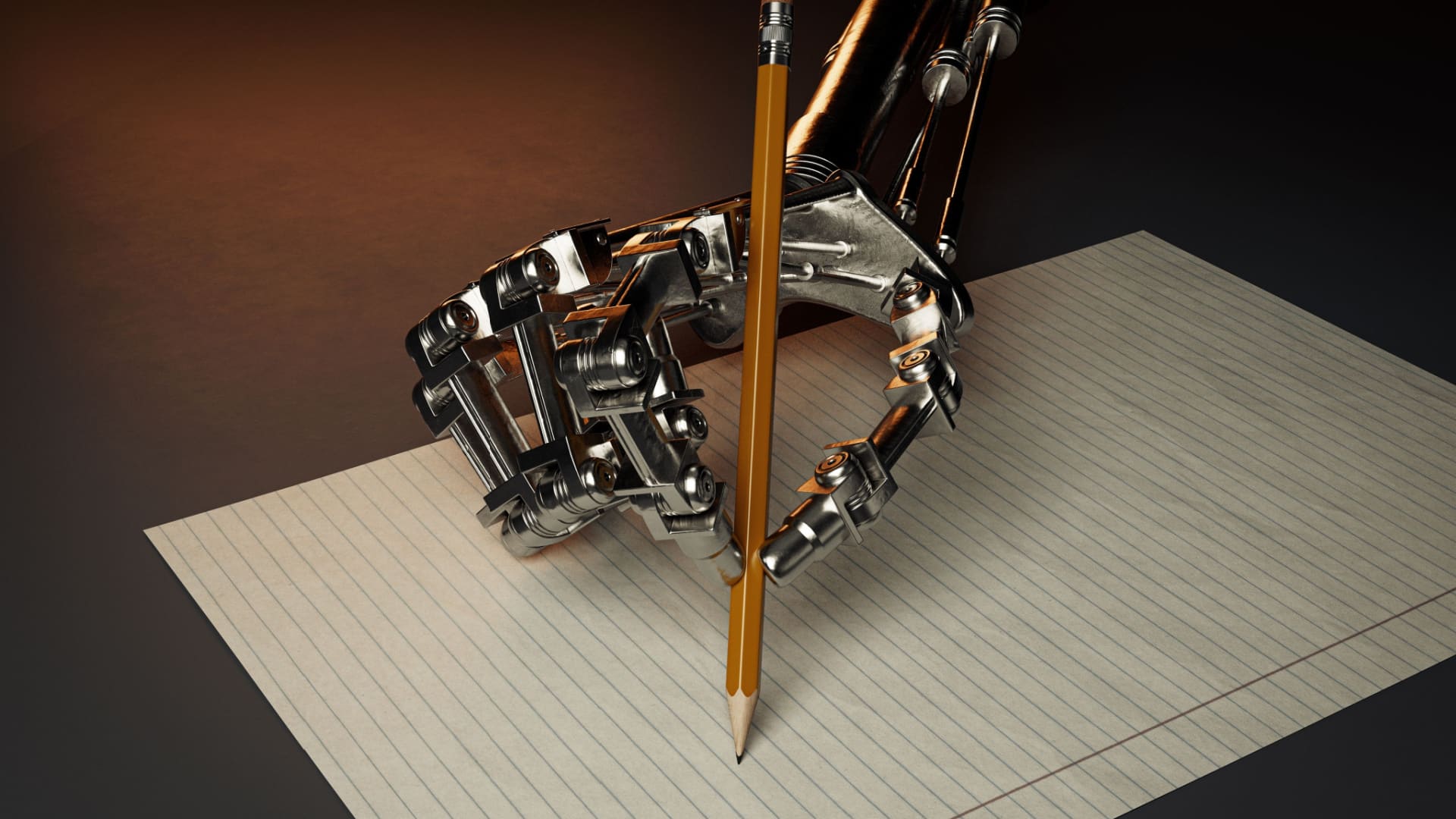

Last week Google announced it was changing its algorithm to rank content “by people, for people” higher than content written around SEO. But what about content written by machines for people? Has AI-generated content gotten to the point where it won’t hurt page rankings?

Google says what it calls “automatically generated content” is spam and a violation of its guidelines. This long-standing rule, written at a time when AI wasn’t as sophisticated, was primarily concerned with attempts to game search rankings. Since then, in informal discussions, officials at the company have indicated that they’re more interested in the quality of content than in who or what created it.

“I think Google is going to get it right,” says Nick Duncan, founder and developer of Contentbot.AI, an AI assistant for content creation. “And I hope that they do because we shouldn’t be using this technology to take over our job. It shouldn’t be so easy that you can spin up a 1,000-word article, not check it, not worry about it and then push it out to your blog.”

AI content generators invent facts

Contentbot, Jasper.AI, Copy.AI and Quillbot are just a few of the companies now providing AI-based services for content generation. Duncan says that as of now AI isn’t capable of generating good content on its own.

“You still need — and for the most part you probably will for a long time — a human in the loop with this technology because it’s absolutely terrible at creating facts,” he says. “We don’t allow people to use it in the medical, financial or legal field because it will just make stuff up.”

What these systems are best for is generating story ideas and copy for ads and emails, improving language, researching topics, creating outlines and paraphrasing.

Sound like an expert

Which isn’t to say that they can’t generate reasonable copy. Duncan asked Contentbot for something about ergonomic office furniture and how it can increase productivity.

Here’s what it came up with:

“Ergonomic furniture is designed to help increase comfort and productivity in the workplace. Many workers spend extended periods of time in the office and in most cases spend the majority of their day sitting and increasing. A number of companies are implementing ergonomic furniture.”

Duncan says, “I wouldn’t go so far as to say that it can start writing documentation but it can definitely make you sound like an expert in the field, if that’s what you’re after.”

Therein lies the problem, and not just for Google. Duncan says these systems can be used to do things like produce fake product reviews at scale. That means they can also be used for disinformation, an area where invented facts can be seen as a feature, not a bug.

Take action now

He believes companies need to be taking more action now to prevent that from happening.

“The ethical issue is that you can get a bunch of bad actors in the space,” he says. “There are content generators that can be used for black hat SEO techniques.”

One of the best protections is that OpenAI, whose system is the basis for all these content generation systems, is very careful about who can use its product. Duncan says he and his competitors are all concerned about misuse. Fortunately, AI can be a big help with that.

“We have automated tools that make sure you’re not writing…fake news, you’re not creating disinformation,” he says. “When our system identifies it, it sends them a warning and it can suspend them. It lets us know about what’s going on as well. If it falls into any sort of category that we don’t want them to write about – say diabetes – then it will issue a warning and we’ll be notified. And we can intervene. So, again, a human in the loop helping this AI process take place.”
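The workflow Duncan describes (flag restricted content, warn the user, suspend if needed, notify a person) can be illustrated with a minimal sketch. This is not Contentbot's implementation, which the article does not detail. The keyword matching, the `RESTRICTED_KEYWORDS` list, the `MAX_WARNINGS` threshold and the class names are all assumptions made for illustration; a production system would more likely use a trained classifier than a keyword list.

```python
from dataclasses import dataclass, field

# Hypothetical restricted topics; the article names only diabetes as an example.
RESTRICTED_KEYWORDS = {"medical": ["diabetes"]}
MAX_WARNINGS = 3  # assumed threshold; the article does not give one


@dataclass
class Account:
    name: str
    warnings: int = 0
    suspended: bool = False


@dataclass
class Moderator:
    # Flagged items waiting for a person to look at them.
    review_queue: list = field(default_factory=list)

    def check(self, account: Account, text: str) -> list:
        """Return the restricted categories found in text and update the account."""
        lowered = text.lower()
        hits = [
            category
            for category, words in RESTRICTED_KEYWORDS.items()
            if any(word in lowered for word in words)
        ]
        if hits:
            account.warnings += 1  # issue a warning
            if account.warnings >= MAX_WARNINGS:
                account.suspended = True  # repeated flags suspend the account
            # Notify a human, who can intervene or overturn the decision.
            self.review_queue.append((account.name, hits))
        return hits


moderator = Moderator()
user = Account("example-user")
flagged = moderator.check(user, "Five tips for managing diabetes")
print(flagged, user.warnings, user.suspended)
```

Every flagged request lands in `review_queue` regardless of whether the account is suspended, which mirrors the point that a person stays in the loop.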
