Ringing alarm bells, Biden campaign calls Facebook ‘foremost propagator’ of voting disinformation

In a new letter to Facebook's chief executive on the eve of the first presidential debate, the Biden campaign slammed the company for its failure to act on false claims about voting in the U.S. election.

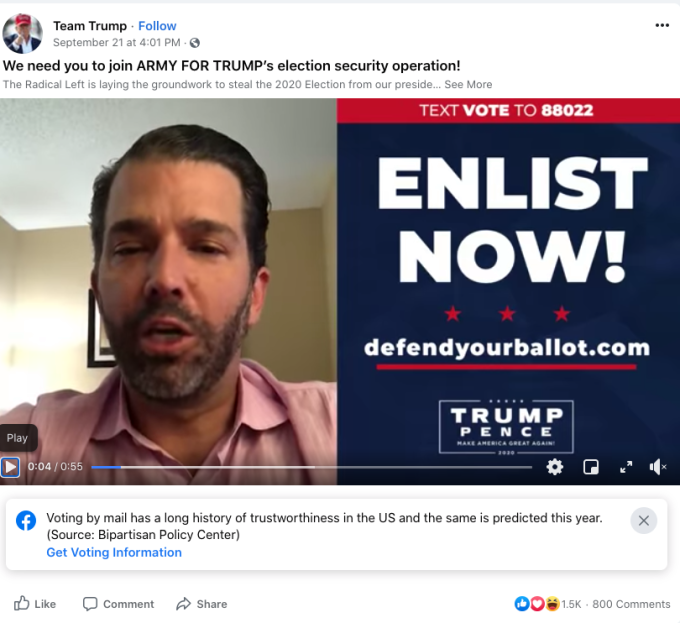

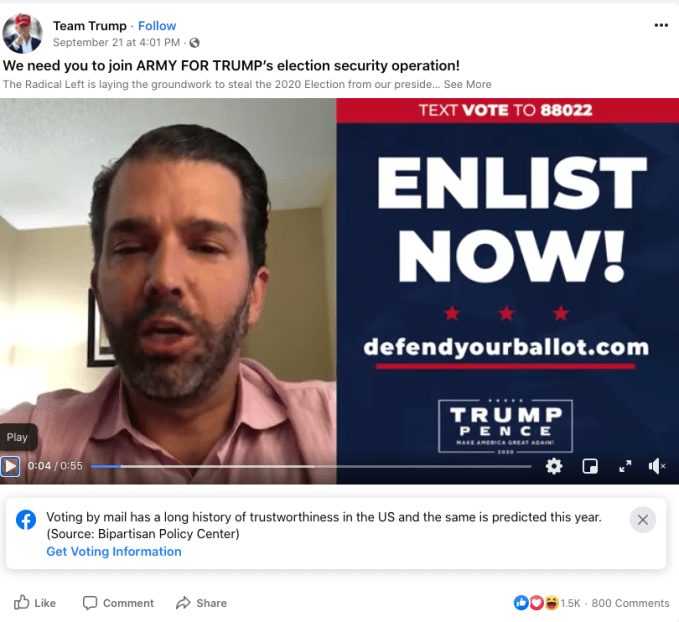

In the scathing letter, published by Axios, Biden Campaign Manager Jen O’Malley Dillon specifically singled out a troubling video post the Trump campaign shared to Facebook and Twitter last week.

Over the course of that video, the president’s son claims that his father’s political opponents “plan to add millions of fraudulent ballots that can cancel your vote and overturn the election” and calls on supporters to “enlist now” in an “army for Trump election security operation.” Those false claims appear to have inspired some Trump supporters, who plan to guard ballot drop-off sites and polling places — a form of voter intimidation that would likely constitute a federal crime.

When the Biden campaign (along with many others) flagged the video to Facebook, the company apparently said that the content would not be removed, pointing to its small, unobtrusive voting info labels that appear alongside all posts related to the 2020 U.S. election. The video remains up on Twitter with a similar label.

“We were assured that the label affixed to the video, buried on the top right corner of the screen where many viewers will miss it, should allay any concerns,” O’Malley Dillon wrote in the letter, addressed to Mark Zuckerberg.

“No company that considers itself a force for good in democracy, and that purports to take voter suppression seriously, would allow this dangerous claptrap to be spread to millions of people. Removing this video should have been the easiest of easy calls under your policies, yet it remains up today.”

In the letter, O’Malley Dillon also cites the president’s own repeated attempts to undermine national confidence in the 2020 election with unsubstantiated lies about the voting process, which is already under unique strain this year from the pandemic.

Rather than taking a strong approach to limit the reach of election-related disinformation from the president and his supporters, Facebook has largely remained hands-off. The platform is more comfortable touting its get-out-the-vote campaign and other politically neutral efforts to inform and mobilize voters. Facebook clearly hopes those measures will offset its current role in disseminating domestic disinformation from the president himself, but given the scope of what’s happening, and its lingering failures from 2016, that doesn’t look likely.

“As you say, ‘voting is voice.’ Facebook has committed to not allow that voice to be drowned out by a storm of disinformation, but has failed at every opportunity to follow through on that commitment,” O’Malley Dillon wrote, adding that the Biden campaign would “be calling out those failures” over the course of the remaining 36 days until the election.

TechCrunch
