5 Ways You Can Really Steal Organic Clicks from Industry Giants

The giants of fairy-tales have three things going for them: strength, resources, and infamy.

In a similar way, our industry giants often dominate the searches due to the strength of their team, the resources of a large budget, and their brand fame.

A household name, a large team of experts, and a budget to rival the plunder of a fantasy kingdom aren’t luxuries we all have as search marketers.

So how do you stand out from the crowd in a marketplace dominated by industry giants with resources out of your reach?

We are going to explore ways to win organic clicks and conversions away from the big, established players in your space while working with budgets a fraction of theirs.

In just five steps, I’m going to show you how you can prepare your website to bring down the giants.

1. Look for Their Weaknesses

The first step in competing against the dominant brands is assessing where they have weaknesses.

Content Gap Analysis

Start with a content audit. Using a tool like Ahrefs, you can see which keywords you are ranking for that they are not.

In Ahrefs’ “Content Gap” feature, enter your domain along with a couple of competitor sites that also appear in the top 5 organic search results, then compare them against the number one player in your industry.

This will show you which keywords you and your competitors are ranking for that the giant is not. This gives you an idea of where your competitive edge is.

For example, I have chosen the industry “wooden sheds” and entered two websites and the brand I am keen on improving.

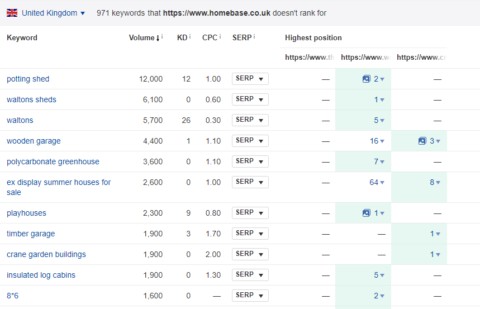

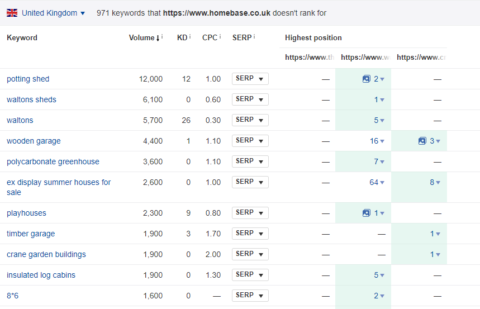

If the giant I want to take down is Homebase, a large home improvement and gardening store here in the UK, then I can see the keywords they are not ranking for but some or all of the smaller brands are.

This tells me that I can compete more easily for terms such as “potting shed” and “playhouses”, both of which have a high monthly search volume but are not something the giant is currently targeting.

Screenshot from Ahrefs’ “Content Gap” report
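The comparison behind a content gap report can be sketched in a few lines of Python. This is only an illustration of the idea, not how Ahrefs works internally: the function name `content_gap`, the site names, and the keyword lists are all hypothetical, and in practice the lists would come from whatever keyword export your tool provides.

```python
def content_gap(giant_keywords, competitor_keywords):
    """Return keywords that smaller sites rank for but the giant does not.

    giant_keywords: iterable of keywords the dominant site ranks for.
    competitor_keywords: dict mapping a site name to its ranking keywords.
    The result maps each gap keyword to the sorted list of sites ranking for it.
    """
    # Normalize case and whitespace so "Potting Shed" matches "potting shed".
    giant = {kw.strip().lower() for kw in giant_keywords}
    gap = {}
    for site, keywords in competitor_keywords.items():
        for kw in keywords:
            normalized = kw.strip().lower()
            if normalized not in giant:
                gap.setdefault(normalized, []).append(site)
    return {kw: sorted(set(sites)) for kw, sites in sorted(gap.items())}


# Hypothetical data for illustration only.
giant = ["wooden sheds", "garden sheds"]
smaller_sites = {
    "my-brand.example": ["wooden sheds", "potting shed"],
    "rival-a.example": ["Potting Shed", "playhouses"],
}
gaps = content_gap(giant, smaller_sites)
for keyword, sites in gaps.items():
    print(keyword, "->", ", ".join(sites))
```

Keywords that several smaller sites rank for, but the giant does not, are usually the most promising place to start.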

Content Format Gaps

The other aspect you should be looking at in your content gap analysis is the formats of content that are not being utilized by your heavyweight competitor.

Whatever your industry, there is always scope to go beyond the standard “text on a page” template for your site.

Diagrams, videos, and audio files all increase the ways your website can be found through search. You can easily use a crawler to search the code of a site for the indicators that it is using formats such as PDFs and even videos.

For more detail on how to use a web crawler and custom extraction to identify types of content on a page, such as YouTube iframes, take a look at Screaming Frog’s guide to web scraping and data extraction.
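If you would rather see what those indicators look like in code, here is a minimal sketch using only Python’s standard library. It assumes you already have a page’s HTML as a string, and the signals it checks (YouTube embed iframes, `<video>` and `<audio>` tags, links ending in `.pdf`) are my own simplified choices rather than an exhaustive list. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class FormatDetector(HTMLParser):
    """Collect simple signals that a page uses non-text content formats."""

    def __init__(self):
        super().__init__()
        self.formats = set()

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        # Attribute values can be None (e.g. <video controls>), so default to "".
        src = (attributes.get("src") or "").lower()
        href = (attributes.get("href") or "").lower()
        if tag == "iframe" and (
            "youtube.com/embed" in src or "youtube-nocookie.com/embed" in src
        ):
            self.formats.add("youtube_embed")
        elif tag == "video":
            self.formats.add("html5_video")
        elif tag == "audio":
            self.formats.add("html5_audio")
        elif tag == "a" and href.split("?")[0].endswith(".pdf"):
            # Ignore any query string when checking the file extension.
            self.formats.add("pdf_link")


def detect_formats(html):
    """Return a sorted list of content-format signals found in the HTML."""
    detector = FormatDetector()
    detector.feed(html)
    return sorted(detector.formats)


# Hypothetical page fragment for illustration.
sample = """
<p>Guide</p>
<iframe src="https://www.youtube.com/embed/abc123"></iframe>
<a href="/downloads/manual.pdf?v=2">Manual</a>
"""
found = detect_formats(sample)
print(found)
```

Running this across a competitor’s key pages and your own gives a quick view of which formats each of you is, and is not, using.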

Once you have an idea of what content your competitors are not using you can start to take advantage of that gap.

For instance, producing video guides that explain how to set up the technical products you are both selling, or audio explanations of complicated medical topics, could give you that edge in usability and conversion.

Make a Display of Strength

Improving how your content is displayed in the search results can be an easy shot to take against a behemoth competitor.

Oftentimes, due to the sheer volume of products or pages on a site, they rely on templated page titles and descriptions rather than having the time or facility to craft them all by hand. Use that to your advantage.

Writing a compelling meta description to encourage click-through might seem like a fundamental of SEO.

Up against the likes of Amazon, however, whose inventory is in the millions, it can win you the click even if you aren’t outranking them.

Mark up Your Unique Content

The best way to maximize the effectiveness of the content types you are using that your competitors aren’t is schema markup.

This will enable some search engines to pull through this information and display it in the SERPs in a more appealing way than a standard search result.

For example, if you choose to use videos on your site you can mark them up in such a way that Google can use them to populate the video carousel, a spot in the SERPs that is reserved purely for videos.

Even if your giant has great written copy that answers a user’s question, that copy cannot appear in the video carousel, whereas the video you created to answer the query visually can.
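As a rough illustration of what video markup looks like, the sketch below builds a schema.org `VideoObject` as JSON-LD and wraps it in a script tag ready to paste into a page. The helper name `video_json_ld` and all of the example values are hypothetical. I have included only a handful of common properties, so check the search engine’s current structured data documentation for what it actually requires.

```python
import json


def video_json_ld(name, description, thumbnail_url, upload_date, embed_url):
    """Build a JSON-LD script tag describing a video with schema.org VideoObject."""
    data = {
        "@context": "https://schema.org",
        "@type": "VideoObject",
        "name": name,
        "description": description,
        "thumbnailUrl": thumbnail_url,
        "uploadDate": upload_date,  # ISO 8601 date, e.g. "2019-08-01"
        "embedUrl": embed_url,
    }
    # Escape "</" so text such as "</script>" cannot close the tag early;
    # "\/" is a valid JSON escape for "/".
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values for illustration only.
snippet = video_json_ld(
    name="How to build a potting shed",
    description="A step-by-step video guide to assembling a potting shed.",
    thumbnail_url="https://example.com/thumbs/potting-shed.jpg",
    upload_date="2019-08-01",
    embed_url="https://www.youtube.com/embed/abc123",
)
print(snippet)
```

Generating the markup from your video data, rather than writing it by hand, makes it easier to keep consistent across every page that carries a video.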

Aim for Featured Snippets

The holy grail of search results, the featured snippet, evens the playing field when it comes to search rankings.

Even if your site is not ranking in first position for a search query, you are still eligible to appear in the “position 0” placement if your content best answers the user’s search.

Studying the search results related to your industry lets you see when featured snippets appear and what content is currently populating them.

Google’s John Mueller has shared some details on how to rank for featured snippets.

Use Your Other Resources

If you are lucky enough to have access to paid search accounts for your brand then make sure you are using the data gathered from them. Analyze the converting terms that are relevant to the campaign you are running.

Larger brands may well find their teams working in silos, or even outsourcing elements of their campaigns to different agencies which means the cross-channel insight is harder to come by. Use this to your advantage.
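One simple way to pull converting terms out of paid search data is to calculate a conversion rate per term and keep the ones that clear a threshold. The sketch below assumes you have exported rows with `term`, `clicks`, and `conversions` fields. Those field names, the thresholds, and the figures are all hypothetical, so adjust them to your own account and export format.

```python
def converting_terms(rows, min_clicks=50, min_rate=0.03):
    """Return (term, conversion_rate) pairs, best first.

    Terms with too few clicks are skipped because their rates are unreliable.
    """
    results = []
    for row in rows:
        clicks = row["clicks"]
        # Skip low-volume terms, and avoid dividing by zero.
        if clicks == 0 or clicks < min_clicks:
            continue
        rate = row["conversions"] / clicks
        if rate >= min_rate:
            results.append((row["term"], rate))
    return sorted(results, key=lambda item: item[1], reverse=True)


# Hypothetical paid search export for illustration only.
paid_search_rows = [
    {"term": "wooden sheds", "clicks": 1000, "conversions": 20},
    {"term": "potting shed", "clicks": 200, "conversions": 12},
    {"term": "playhouses", "clicks": 10, "conversions": 2},
]
for term, rate in converting_terms(paid_search_rows):
    print(f"{term}: {rate:.1%}")
```

The terms that survive this filter are good candidates to prioritize in your organic content, since you already have evidence that they convert.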

2. Be Quick & Nimble

Juggernauts are intimidating, but they are also slow. The benefit of a small company or agency is the speed with which you can pivot.

If a campaign is not effective, getting sign-off to learn from it and try something new is not as much of a bureaucracy-laced endeavor as with a large brand.

Quick to React to New Opportunities

Many enterprise businesses have several layers of authorization required to make the smallest change.

Being able to adapt quickly to a change in the market or capitalize on a new audience gives your SEO team the edge.

Keeping an eye on what is performing well on social media can help you to ride the wave of an emerging trend.

This kind of adaptation is often out of reach for larger brands who are beholden to strict marketing and content plans that cannot be deviated from easily.

Through your understanding of market trends and the ability to move swiftly, you can create content for digital PR purposes a lot quicker than a team that is on a strict content plan.

Make Changes Swiftly

An extensive development queue is frequently a barrier to getting changes implemented to a website quickly.

Often, there are other priority tasks your in-house or agency developers are focusing their time on. Multiply this delay by ten for an enterprise site.

Being able to talk to your developers and ask them about priorities is a huge benefit that comes from working in a smaller company.

You may still be outsourcing your development work, but chances are you have a direct dial to a member of their development team or your account manager rather than having to submit a request and escalate it through slower, more official channels.

Make sure you take advantage of this closer working relationship by:

- Discussing your needs with your development team.

- Educating them on the importance of SEO, if it’s an area they are not familiar with.

3. Identify Your Secret Weapon

Your secret weapon against strong competitors is likely going to come out of your ability to focus your time and efforts where they cannot.

Stay Local

In some instances, this could be within a local community.

If your business serves people from a physical location, you are far better placed to rank for queries with a local intent than a purely ecommerce site.

If your large competitors also have brick-and-mortar stores but not in a location near yours, then your niche will be your local area.

A small business in the heart of a community will be able to gain relevant local links from charities, sports clubs and community events in that area far more easily than a large multinational corporation.

If you have a handful of shops across a small area, you are more likely to be able to spend time building relationships with local contacts than a centralized SEO team for a company that has hundreds of locations to cover.

Highlight Skills & Expertise

Another great way of differentiating your client or your company from larger competitors is by using its staff to build authority for your site.

The CEO of a company with 100 employees is more likely to be open to working with you to secure local media coverage than one who oversees a 10,000-strong company and is not even resident in the country you are optimizing the site for.

Expertise within the brand you are trying to promote will be more accessible within a smaller organization than it would be within a massive one.

Get to know the experts within your brand and start looking for opportunities for them to contribute to digital PR efforts.

Data that has been produced through their research or their expert opinion on a topical subject will go far in promoting the website as a source of authoritative information within the industry.

Keep the Battle Small

One of the key points to remember in taking on a giant is that you are only going to be able to beat them in certain conditions.

For example, you have no hope of (or need for) beating Amazon in the SERPs for “cheap pillow cases” if you are a retailer of luxury perfume.

It is key to look at the few pages within their site which are actually competing against your website and identify how you can outrank those.

It may be that you can earn better, more relevant links to your product pages than your big competitor can purely because they have more products so their team’s time will be spread more thinly than yours.

Be Bold

One weakness larger brands have is very tight brand guidelines and sign-off procedures that stop innovation from occurring.

As a smaller brand, you have the opportunity to be bolder in your marketing.

Whether this takes the form of irreverent calls to action in your meta descriptions or taking a swipe at the competitor through a comparison article, you have the option to make an impact where a larger brand is wrapped in red tape.

4. Develop Your Battle Plan

The key to winning any fight in the SERPs is having a great strategy.

In the case of fighting industry giants, it is imperative that you develop a battle plan that capitalizes on the weaknesses we’ve already discussed.

Add the Value They Can’t

In many cases, this includes answering the queries they are not. This will likely be long-tail searches that require in-depth research.

A great source of material to start your long-tail strategy off is user forums.

Subreddits can be a gold mine of information on the sorts of questions users want answers to but cannot find an answer to online.

Through sites like Reddit, Quora and Answer The Public, it’s possible to build up a picture of what your target audience is interested in but doesn’t necessarily have access to.

Use this to create content that engages your target audience in a way that your competitors aren’t.

Bring the Fight to Your Battlefield

It might be that the players dominating the industry on Google are not as hot in other search engines, or indeed, are neglecting other channels altogether.

A key question to ask is, are you ranking in the right search engines?

For instance, if your content gap analysis showed that they lack video content, they may not be visible on YouTube at all.

Is this a channel that you can exploit further? Don’t fall into the trap of only fighting them on one front.

Consider more industry-specific search engines, like TripAdvisor. Is this a more level playing field for you to thrive in?

By looking outside of your primary search engine you can open up another line of attack that might not be where their efforts are focused.

5. If You Can’t Beat Them, Join Them

Another option is that if your industry behemoth is actually a marketplace or reseller, like Amazon, it may be a good move for your brand to start selling through them.

This sort of decision is usually outside the purview of a digital marketer, especially if you are working within an agency, rather than in-house.

However, it may be within your remit to make recommendations and use the data you are gathering as part of your reports to encourage this to be considered.

For brands that aren’t ecommerce, another option is to piggyback off the success of your industry competitor and look at partnering with them.

Can you write for their blog so you are getting traffic through referrals from their site and raising your profile with their audience?

For instance, sites in the UK targeting medical information terms such as “eczema treatment” will likely find themselves outranked by the National Health Service (NHS). However, the NHS site does link out to reputable, authoritative websites that may give more specialized advice than it does.

If you stop looking at links as only being there to boost your backlink profile and see them as avenues to raise your profile with your audience, then you could find traffic and engagement rising drastically.

Conclusion

It isn’t impossible to steal organic traffic from the big players in your industry. It’s just daunting.

Put together a robust plan of attack and you should be able to start chipping away at their organic traffic and building up your own.

Soon, you might find you start to level out in size.

Image Credits

All screenshots taken by author, August 2019
