How to Spot SEO Myths: 20 Common SEO Myths, Debunked

There’s a lot of advice going around about SEO.

Some of it is helpful but some of it will lead you astray if acted on.

The difficulty is knowing which is which.

It can be hard to identify what advice is accurate and based on fact, and what is just regurgitated from misquoted articles or poorly understood Google statements.

SEO myths abound.

You’ll hear them in the strangest places.

A client will tell you with confidence how they are suffering from a duplicate content penalty.

Your boss will chastise you for not keeping your page titles to 60 characters.

Sometimes the myths are obviously fake. Other times they can be harder to detect.

The Dangers of SEO Myths

The issue is, we simply don’t know exactly how the search engines work.

Due to this, a lot of what we do as SEOs ends up being trial and error and educated guesswork.

When you are learning about SEO, it can be difficult to test out all of the claims you are hearing.

That’s when the SEO myths begin to take hold.

Before you know it, you’re proudly telling your line manager that you’re planning to “BERT optimize” your website copy.

SEO myths can be busted a lot of the time with a pause and some consideration.

How, exactly, would Google be able to measure that?

Would that actually benefit the end-user in any way?

There is a danger in SEO of considering the search engines to be omnipotent, and because of this, wild myths about how they understand and measure our websites start to grow.

What Is An SEO Myth?

Before we debunk some common SEO myths, we should first understand what forms they take.

Untested Wisdom

Myths in SEO tend to take the form of handed-down wisdom that isn’t tested.

As a result, something that might well have no impact on driving qualified organic traffic to a site gets treated like it matters.

Minor Factors Blown out of Proportion

SEO myths might also be something that has a small impact on organic rankings or conversion but is given too much importance.

This might be a “tick box” exercise that is hailed as being a critical factor in SEO success, or simply an activity that might only cause your site to eke ahead if everything else with your competition was truly equal.

Outdated Advice

Myths can arise simply because what used to be effective in helping sites to rank and convert well no longer does but is still being advised.

It might be that something used to work really well.

Over time the algorithms have grown smarter.

The public is more averse to being marketed to.

Simply, what was once good advice is now defunct.

Google Being Misunderstood

Many times the start of a myth is Google itself.

Unfortunately, a slightly obscure or just not straightforward piece of advice from a Google representative gets misunderstood, and the industry runs away with it.

Before we know it, a new optimization service is being sold off the back of a flippant comment a Googler made in jest.

SEO myths can be based in fact, or perhaps these are more accurately SEO legends?

In the case of Google-born myths, it tends to be that the fact has been so distorted by the SEO industry’s interpretation of the statement that it no longer resembles useful information.

When Can Something Appear to Be a Myth

Sometimes an SEO technique can be written off as a myth by others purely because they have not experienced success from carrying out this activity for their own site.

It is important to remember that every website has its own industry, set of competitors, the technology powering it, and other factors that make it unique.

Blanket application of techniques to every website and expecting them to have the same outcome is naive.

Someone may not have had success with a technique when they have tried it in their highly competitive vertical.

It doesn’t mean it won’t help someone in a less competitive industry have success.

Causation & Correlation Being Confused

Sometimes SEO myths arise because of an inappropriate connection between an activity that was carried out and a rise in organic search performance.

If an SEO has seen a benefit from something they did, then it is natural that they would advise others to try the same.

Unfortunately, we’re not always great at separating causation and correlation.

Just because rankings or click-through rate increased at roughly the same time as you implemented a new tactic doesn’t mean it caused the increase.

There could be other factors at play.

Soon an SEO myth arises from an overeager SEO wanting to share what they incorrectly believe to be a golden ticket.

Steering Clear of SEO Myths

It can save you from experiencing headaches, lost revenue, and a whole lot of time if you learn to spot SEO myths and act accordingly.

Test

The key to not falling for SEO myths is making sure you can test advice whenever possible.

If you have been given the advice that structuring your page titles a certain way will help your pages rank better for their chosen keywords, then try it with one or two pages first.

This can help you to measure whether making a change across many pages will be worth the time before you commit to doing so.
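One way to make a small test like this more trustworthy is to compare the pages you changed against similar pages you left alone. The sketch below is a minimal illustration in Python, not a definitive method. The class and function names and all of the click and impression figures are made up for the example; in practice the numbers would come from an export of your own search performance data.

```python
from dataclasses import dataclass


@dataclass
class PagePeriod:
    """Clicks and impressions for one group of pages over one period."""
    clicks: int
    impressions: int

    @property
    def ctr(self) -> float:
        # Click-through rate; 0.0 when there were no impressions.
        return self.clicks / self.impressions if self.impressions else 0.0


def ctr_lift(before: PagePeriod, after: PagePeriod) -> float:
    """Change in CTR, in percentage points, between two periods."""
    return (after.ctr - before.ctr) * 100


def net_effect(test_before, test_after, control_before, control_after) -> float:
    """Lift on the changed pages minus lift on the untouched pages.

    Subtracting the control lift removes changes that affected every
    page (seasonality, a SERP layout test) from the estimate.
    """
    return ctr_lift(test_before, test_after) - ctr_lift(control_before, control_after)


# Hypothetical figures for illustration only.
test_before = PagePeriod(clicks=120, impressions=4000)
test_after = PagePeriod(clicks=180, impressions=4000)
control_before = PagePeriod(clicks=300, impressions=10000)
control_after = PagePeriod(clicks=400, impressions=10000)

effect = net_effect(test_before, test_after, control_before, control_after)
print(f"Raw lift on test pages: {ctr_lift(test_before, test_after):.1f} points")
print(f"Net of control pages:   {effect:.1f} points")
```

With these invented numbers, the test pages gained 1.5 percentage points of click-through rate, but the untouched pages gained 1.0 over the same period, so only about 0.5 points can plausibly be credited to the title change.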

Is Google Just Testing?

Sometimes there will be a big uproar in the SEO community because of changes in the way Google displays or orders search results.

These changes are often tested in the wild before they are rolled out to more search results.

Once a big change has been spotted by one or two SEOs, advice on how to optimize for it begins to spread.

Remember the favicons in the desktop search results?

The upset this caused in the SEO industry (and among Google users in general) was vast.

Suddenly articles sprang up about the importance of favicons in attracting users to your search result.

Whether favicons would impact click-through rate that much barely had time to be studied.

Because just like that, Google changed it back.

Before you jump for the latest SEO advice that is being spread around Twitter as a result of a change by Google, wait to see if it is going to hold.

It could be that the advice that appears sound now will quickly become a myth if Google rolls back changes.

20 Common SEO Myths

So now we know what causes and perpetuates SEO myths, let’s find out the truth behind some of the more common ones.

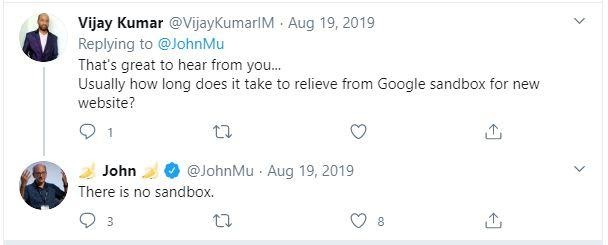

1. The Google Sandbox

It is a belief held by some SEOs that Google will automatically suppress new websites in the organic search results for a period of time before they are able to rank more freely.

It’s something that many SEOs will argue simply is not the case.

So who is right?

SEOs who have been around for many years will give you anecdotal evidence that would both support and detract from the idea of a sandbox.

The only guidance that has been given by Google on this appears to be in the form of tweets.

As already discussed, Google’s social media responses can often be misinterpreted.

Verdict: Officially? It’s a myth.

Unofficially – there does seem to be a period of time whilst Google tries to understand and rank the pages belonging to a new site.

This might mimic a sandbox.

2. Duplicate Content Penalty

This is a myth that I hear a lot. The idea is that if you have content on your website that is duplicated elsewhere on the web, Google will penalize you for it.

The key to understanding what is really going on here is knowing the difference between algorithmic suppression and manual action.

A manual action, the situation that can result in webpages being removed from Google’s index, will be actioned by a human at Google.

The website owner will be notified through Google Search Console.

An algorithmic suppression occurs when your page cannot rank well due to it being caught by a filter from an algorithm.

Chuck Price does a great job of explaining the difference between the two in this article that lays out all of the different manual actions available from Google.

Essentially, having copy that is taken from another webpage might mean you can’t outrank that other page.

The search engines may determine the original host of the copy is more relevant to the search query than yours.

As there is no benefit to having both in the search results, yours gets suppressed. This is not a penalty. This is the algorithm doing its job.

There are some content-related manual actions, as covered in Price’s article, but essentially copying one or two pages of someone else’s content is not going to trigger them.

It is, however, potentially going to land you in other trouble if you have no legal right to use that content. It also can detract from the value your website brings to the user.

Verdict: SEO myth

3. PPC Advertising Helps Rankings

This is a common myth. It’s also quite quick to debunk.

The idea is that Google will favor websites that spend money with it through pay-per-click advertising when ranking the organic search results.

This is simply false.

Google’s algorithm for ranking organic search results is completely separate from the one used to determine PPC ad placements.

Running a paid search advertising campaign through Google at the same time as carrying out SEO might benefit your site for other reasons, but it won’t directly benefit your ranking.

Verdict: SEO myth

4. Domain Age Is a Ranking Factor

This claim finds itself seated firmly in the “confusing causation and correlation” camp.

Because a website has been around for a long time and is ranking well, age must be a ranking factor.

Google has debunked this myth itself many times.

In fact, as recently as July 2019, Google Webmaster Trends Analyst John Mueller replied to a tweet suggesting that domain age was one of “200 signals of ranking,” saying, “No, domain age helps nothing.”

The truth behind this myth is that an older website has had more time to do things well.

For instance, a website that has been live and active for 10 years may well have acquired a high volume of relevant backlinks to its key pages.

A website that has been running for less than six months will be unlikely to compete with that.

The older website appears to be ranking better, and the conclusion is that age must be the determining factor.

Verdict: SEO myth

5. Tabbed Content Affects Rankings

This idea is one that has roots going back a long way.

The premise is that Google will not assign as much value to the content that is sitting behind a tab or accordion.

For example, text that is not viewable on the first load of a page.

Google has again debunked this myth as recently as March 31, 2020, but it has been a contentious idea amongst many SEOs for years.

In September 2018, Gary Illyes, Webmaster Trends Analyst at Google, answered a tweet thread about using tabs to display content.

His response:

“AFAIK, nothing’s changed here, Bill: we index the content, its weight is fully considered for ranking, but it might not get bolded in the snippets. It’s another, more technical question how that content is surfaced by the site. Indexing does have limitations.”

If the content is visible in the HTML, there is no reason to assume that it is being devalued just because it is not apparent to the user on the first load of the page.

This is not an example of cloaking, and Google can easily fetch the content.

As long as there is nothing else that is stopping the text from being viewed by Google, it should be weighted the same as copy that isn’t in tabs.
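A quick way to sanity-check this for your own pages is to confirm that the tabbed copy is present in the HTML source rather than being loaded only after a click. The following is a rough sketch using Python’s standard-library `html.parser`. The sample markup, the helper names, and the phrase being searched for are invented for the example, and it checks only the raw HTML you pass in, not what Google actually renders or indexes.

```python
from html.parser import HTMLParser


class TextCollector(HTMLParser):
    """Collects text nodes, ignoring the contents of script and style tags."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def text_in_html(html: str, phrase: str) -> bool:
    """True if the phrase appears in the text content of the HTML source."""
    parser = TextCollector()
    parser.feed(html)
    parser.close()
    # Collapse whitespace so line breaks in the markup do not hide a match.
    text = " ".join(" ".join(parser.chunks).split())
    return phrase.lower() in text.lower()


# Hypothetical markup: the second tab is hidden on first load.
SAMPLE_HTML = """
<div class="tabs">
  <div id="tab-1">Product overview</div>
  <div id="tab-2" hidden>Full delivery and returns policy</div>
</div>
"""

print(text_in_html(SAMPLE_HTML, "returns policy"))
```

If a check like this fails for copy that users can see after clicking a tab, the text is probably being fetched by script on demand, which is a different situation from content that is simply hidden on first load.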

Want more clarification on this?

Then check out Roger Montti’s post that puts this myth to bed.

Verdict: SEO myth

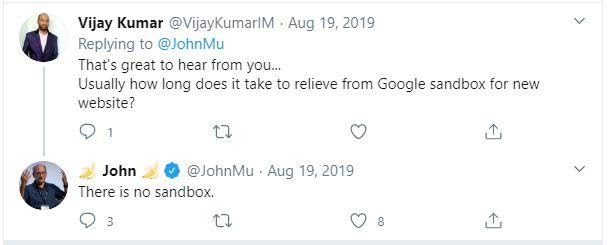

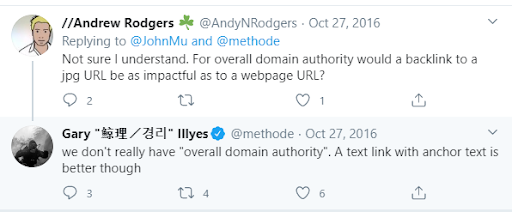

6. Google Uses Google Analytics Data in Rankings

This is a common fear amongst business owners.

They study their Google Analytics reports.

They feel their average sitewide bounce rate is too high, or their time on page is too low.

So they worry that Google will perceive their site to be low quality because of that.

They fear they won’t rank well because of it.

The myth is that Google uses the data in your Google Analytics account as part of its ranking algorithm.

It’s a myth that has been around for a long time.

Google’s Gary Illyes has again debunked this idea simply with, “We don’t use *anything* from Google analytics [sic] in the ‘algo.’”

If we think about this logically, using Google Analytics data as a ranking factor would be really hard to police.

For instance, using filters could manipulate data to make it seem like the site was performing in a way that it isn’t really.

What is good performance anyway?

High “time on page” might be good for some long-form content.

Low “time on page” could be understandable for shorter content.

Is either right or wrong?

Google would also need to understand the intricate ways in which each Google Analytics account had been configured.

Some might be excluding all known bots, and others might not.

Some might use custom dimensions and channel groupings, and others haven’t configured anything.

Using this data reliably would be extremely complicated to do.

Consider the hundreds of thousands of websites that use other analytics programs.

How would Google treat them?

Verdict: SEO myth

This myth is another case of correlation being mistaken for causation.

A high sitewide bounce rate might be indicative of a quality problem, or it might not be.

Low time on page could mean your site isn’t engaging, or it could mean your content is quickly digestible.

These metrics give you clues as to why you might not be ranking well; they aren’t the cause of it.

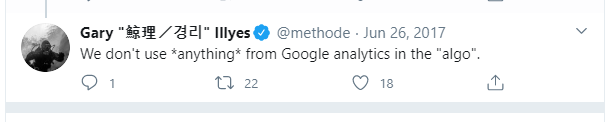

7. Google Cares About Domain Authority

PageRank is a link analysis algorithm used by Google to measure the importance of a webpage.

Google used to display a page’s PageRank score, a number up to 10, on its toolbar.

Google stopped updating the PageRank displayed in toolbars in 2013. In 2016 Google confirmed that the PageRank toolbar metric was not going to be used going forward.

In the absence of PageRank, many other third-party authority scores have been developed.

Commonly known ones are:

- Moz’s Domain Authority and Page Authority scores.

- Majestic’s Trust Flow and Citation Flow.

- Ahrefs’ Domain Rating and URL Rating.

These scores are used by some SEOs to determine the “value” of a page.

That calculation can never be an entirely accurate reflection of how a search engine values a page, however.

Commonly, SEOs will refer to the ranking power of a website often in conjunction with its backlink profile.

This too is known as the domain’s authority.

You can see where the confusion lies.

Google representatives have dispelled the notion of a domain authority metric used by them.

Gary Illyes once again debunked the myth with, “we don’t really have ‘overall domain authority.’”

Verdict: SEO myth

8. Longer Content Is Better

You will have definitely heard it said before that longer content ranks better.

More words on a page automatically make yours more rank-worthy than your competitor’s.

This is “wisdom” that is often shared around SEO forums with little evidence to substantiate it.

There are a lot of studies that have been released over the years that state facts about the top-ranking webpages, such as “on average pages in the top 10 positions in the SERPs have over 1,450 words on them.”

It would be quite easy for someone to take this information in isolation and assume it means that pages need approximately 1,500 words to rank on Page 1. That isn’t what the study is saying, however.

Unfortunately, this is an example of correlation, not necessarily causation.

Just because the top-ranking pages in a particular study happened to have more words on them than the pages ranking 11th and lower does not make word count a ranking factor.

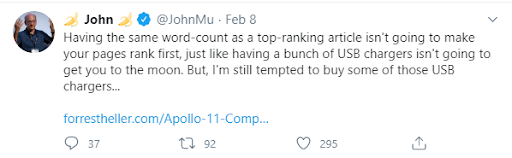

John Mueller of Google recently dispelled this myth:

Verdict: SEO myth

9. LSI Keywords Will Help You Rank

What exactly are LSI keywords?

LSI stands for “latent semantic indexing.”

It is a technique used in information retrieval that allows concepts within the text to be analyzed and relationships between them identified.

Words have nuances dependent on their context. The word “right” has a different connotation when paired with “left” than when it is paired with “wrong.”

Humans can quickly gauge concepts in text. It is harder for machines to do so.

The ability for machines to understand the context and linking between entities is fundamental to their understanding of concepts.

LSI is a huge step forward for a machine’s ability to understand text.

What it isn’t is synonyms.

Unfortunately, the field of LSI has been reduced by the SEO community to the belief that using words that are similar or linked thematically will boost rankings for words that aren’t expressly mentioned in the text.

It’s simply not true. Google has gone far beyond LSI in its understanding of text, for instance, with the introduction of BERT.

For more about what LSI is, and more importantly, what it isn’t, take a look at Clark Boyd’s article.

Verdict: SEO myth

10. SEO Takes 3 Months

It helps us get out of sticky conversations with our bosses or clients.

It leaves a lot of wiggle room if you aren’t getting the results you promised.

“SEO takes at least 3 months to have an effect.”

It is fair to say that there are some changes that will take time for the search engine bots to process.

There is then, of course, some time to see if those changes are having a positive or negative effect. Then more time might be needed to refine and tweak your work.

That doesn’t mean that any activity you carry out in the name of SEO is going to have no effect for three months. Day 90 of your work will not be when the ranking changes kick in.

There is a lot more to it.

If you are in a very low competition market, targeting niche terms, you might see ranking changes as soon as Google recrawls your page.

A competitive term could take much longer to see changes in rank.

A study by Ahrefs suggested that of the 2 million keywords they analyzed, the average age of pages ranking in position 10 of Google was 650 days. This study indicates that newer pages struggle to rank high.

However, there is more to SEO than ranking in the top 10 of Google.

For instance, a well-positioned Google My Business listing with great reviews can pay dividends for a company.

Your brand might find it easier to conquer the SERPs in Bing, Yandex, or Baidu.

A small tweak to a page title could see an improvement in click-through rates. That could be the same day if the search engine were to recrawl the page quickly.

Although it can take a long time to see first page rankings in Google, it is naïve of us to reduce SEO success just down to that.

Therefore, “SEO takes 3 months” simply isn’t accurate.

Verdict: SEO myth

11. Bounce Rate Is a Ranking Factor

Bounce rate is the percentage of visits to your website that result in no interactions beyond landing on the page. It is typically measured by a website’s analytics program such as Google Analytics.
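As a rough illustration of that definition, the sketch below computes a bounce rate from a list of sessions. The session structure (a count of further interactions after landing) is an assumption made for this example, not the data model of any particular analytics tool.

```python
from typing import Sequence


def bounce_rate(interactions_per_session: Sequence[int]) -> float:
    """Percentage of sessions with no interaction beyond landing on the page."""
    if not interactions_per_session:
        return 0.0
    bounces = sum(1 for n in interactions_per_session if n == 0)
    return 100 * bounces / len(interactions_per_session)


# Hypothetical sessions: number of further interactions after landing.
sessions = [0, 3, 0, 1, 0]
print(f"{bounce_rate(sessions):.0f}%")
```

Three of the five invented sessions count as bounces, giving 60%. The calculation says nothing about whether those three visitors found what they needed before leaving.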

Some SEOs have argued that bounce rate is a ranking factor because it is a measure of quality.

Unfortunately, it is not a good measure of quality.

There are many reasons why a visitor might land on a webpage and leave again without interacting further with the site. They may well have read all the information they needed to on that page and left the site to call the company and book an appointment. In that instance, the visitor bouncing has resulted in a lead for the company.

Although a visitor leaving a page having landed on it could be an indicator of poor quality content, it isn’t always. It, therefore, wouldn’t be reliable enough for a search engine to use as a measure of quality.

“Pogo-sticking,” or a visitor clicking on a search result and then returning to the SERPs, would be a more reliable indicator of the quality of the landing page. It would suggest that the content of the page was not what the user was after, so much so that they have returned to the search results to find another page or re-search.

John Mueller cleared this up in a Google Webmaster Hangout in July 2018 with:

“We try not to use signals like that when it comes to search. So that’s something where there are lots of reasons why users might go back and forth, or look at different things in the search results, or stay just briefly on a page and move back again. I think that’s really hard to refine and say, ‘well, we could turn this into a ranking factor.’”

Verdict: SEO myth

12. It’s All About Backlinks

Backlinks are important; there is little contention about that within the SEO community. However, exactly how important is still debated.

Some SEOs will tell you that backlinks are one of the many tactics that will influence rankings and not the most important. Others will tell you it’s the only real game-changer.

What we do know is that the effectiveness of links has changed over time. Back in the wild pre-Jagger days, link-building consisted of adding a link to your website wherever you could.

Forum comments, spun articles, and irrelevant directories were all good sources of links.

It was easy to build effective links.

It’s not so easy now. Google has continued to make changes to its algorithms that reward higher quality, more relevant links, and disregard or penalize “spammy” links.

However, the power of links to affect rankings is still great.

There will be some industries that are so immature in SEO that a site can rank well without investing in link-building, purely through the strength of their content and technical efficiency.

That’s not the case with most industries.

Relevant backlinks will, of course, help with ranking, but they need to go hand-in-hand with other optimizations.

Your website still needs to have relevant copy, and it must be crawlable.

Google’s John Mueller recently stated, “links are definitely not the most important SEO factor.”

If you want your traffic to actually do something when they hit your website, it’s definitely not all about backlinks.

Ranking is only one part of getting converting visitors to your site. The content and usability of the site are extremely important in user engagement.

Verdict: SEO myth

13. Keywords in URLs Are Very Important

Cram your URLs full of keywords. It’ll help.

Unfortunately, it’s not quite as powerful as that.

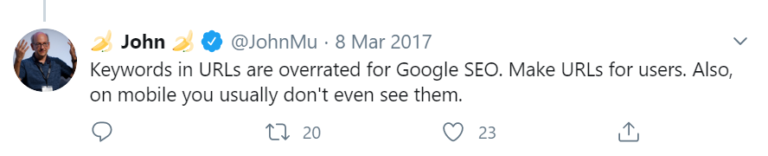

John Mueller has said several times that keywords in a URL are a very minor, lightweight ranking signal.

If you are looking to rewrite your URLs to include more keywords, you are likely to do more damage than good.

The process of redirecting URLs en masse should be undertaken only when necessary, as there is always a risk when restructuring a site.

For the sake of adding keywords to a URL? Not worth it.

Verdict: SEO myth

14. Website Migrations Are All About Redirects

It is something that is heard too often by SEOs. If you are migrating a website, all you need to do is remember to redirect any URLs that are changing.

If only this one were true.

In actuality, website migration is one of the most fraught and complicated procedures in SEO.

A website changing its layout, CMS, domain, and/or content can all be considered a website migration.

In each of those examples, there are several aspects that could affect how the search engines perceive the quality and relevance of the pages to their targeted keywords.

As a result of this, there are numerous checks and configurations that need to occur if the site is going to maintain its rankings and organic traffic.

Ensuring tracking hasn’t been lost. Maintaining the same content targeting. Making sure the search engines’ bots can still access the right pages.

All of this needs to be considered when a website is significantly changing.

Redirecting URLs that are changing is a very important part of website migration. It is in no way the only thing to be concerned about.
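Even the redirect part alone benefits from a systematic check. The sketch below, in Python, takes a hypothetical redirect map (old path to new path) and flags two common problems: old URLs with no redirect planned, and redirects that point at a URL which is itself redirected. The paths and the function name are invented for illustration, and the other migration checks mentioned above (tracking, content targeting, crawlability) would need their own checks.

```python
def audit_redirects(old_urls, redirect_map):
    """Return (missing, chained) for a planned redirect map.

    missing: old URLs with no redirect target at all.
    chained: old URLs whose target is itself redirected again.
    """
    missing = [url for url in old_urls if url not in redirect_map]
    chained = [
        url for url in old_urls
        if url in redirect_map and redirect_map[url] in redirect_map
    ]
    return missing, chained


# Hypothetical paths for illustration only.
old_urls = ["/shoes", "/boots", "/sale", "/about-us"]
redirect_map = {
    "/shoes": "/footwear/shoes",
    "/boots": "/sale",   # points at a URL that is also being redirected
    "/sale": "/offers",
}

missing, chained = audit_redirects(old_urls, redirect_map)
print("No redirect planned:", missing)
print("Redirect chains:", chained)
```

Here `/about-us` has no redirect planned and `/boots` would pass through `/sale` before reaching `/offers`, so both would be worth fixing before launch.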

Verdict: SEO myth

15. Well-Known Websites Will Always Outrank Unknown Websites

It stands to reason that a larger brand will have resources that smaller brands do not. As a result, more can be invested in SEO.

More exciting content pieces can be created, leading to a higher volume of backlinks acquired. The brand name alone can lend more credence to outreach attempts.

The real question is, does Google algorithmically or manually boost big brands because of their fame?

This one is a bit contentious.

Some people say that Google favors big brands. Google says otherwise.

In 2009, Google released an algorithm update named “Vince.” This update had a huge impact on how brands were treated in the SERPs.

Brands that were well-known offline saw ranking increases for broad competitive keywords.

It’s not necessarily time for smaller brands to throw in the towel.

The Vince update falls very much in line with other Google moves towards valuing authority and quality.

Big brands are often more authoritative on broad-level keywords than smaller contenders.

However, small brands can still win.

Long-tail keyword targeting, niche product lines, and local presence can all make smaller brands more relevant to a search result than established brands.

Yes, the odds are stacked in favor of big brands, but it’s not impossible to outrank them.

Verdict: Not entirely truth or myth

16. Your Page Needs to Include ‘Near Me’ to Rank Well for Local SEO

It’s understandable that this myth is still prevalent.

There is still a lot of focus on keyword search volumes in the SEO industry. Sometimes at the expense of considering user intent and how the search engines understand it.

When a searcher is looking for something with “local intent,” i.e., a place or service relevant to a physical location, the search engines will take this into consideration when returning results.

With Google, you will likely see the Google Maps results as well as the standard organic listings.

The Maps results are clearly centered around the location searched. However, so are the standard organic listings when the search query denotes local intent.

So why do “near me” searches confuse some?

A typical keyword research exercise might yield something like the following:

- pizza restaurant manhattan – 110 searches per month

- pizza restaurants in manhattan – 110 searches per month

- best pizza restaurant manhattan – 90 searches per month

- best pizza restaurants in manhattan – 90 searches per month

- best pizza restaurant in manhattan – 90 searches per month

- pizza restaurants near me – 90,500 searches per month

With search volume like that, you would think “pizza restaurants near me” would be the one to rank for, right?

It is likely, however, that people searching for “pizza restaurant manhattan” are in the Manhattan area or planning to travel there for pizza.

“pizza restaurants near me” has 90,500 searches per month across the USA. The likelihood is that the vast majority of those searchers are not looking for Manhattan pizzas.

Google knows this and, therefore, will use location detection and serve pizza restaurant results relevant to the searcher’s location.

Therefore, the “near me” element of the search becomes less about the keyword and more about the intent behind the keyword. Google will just consider it to be the location the searcher is in.

So, do you need to include “near me” in your content to rank for those “near me” searches?

No, you need to be relevant to the location the searcher is in.

Verdict: SEO myth

17. Better Content Equals Better Rankings

It’s prevalent in SEO forums and Twitter threads. The common complaint, “my competitor is ranking above me, but I have amazing content, and theirs is terrible.”

The cry is one of indignation. After all, shouldn’t the search engines be rewarding their site for their “amazing” content?

This is both a myth and, sometimes, a delusion.

The quality of content is a subjective consideration. If it is your own content, it’s harder still to be objective.

Perhaps in Google’s eyes, your content isn’t better than your competitors’ for the search terms you are looking to rank for.

Perhaps you don’t meet searcher intent as well as they do.

Maybe you have “over-optimized” your content and reduced its quality.

In some instances, better content will equal better rankings. In others, the technical performance of the site or its lack of local relevance may cause it to rank lower.

Content is one factor within the ranking algorithms.

Verdict: SEO myth

18. You Need to Blog Every Day

This is a frustrating myth because it is one that seems to have spread outside of the SEO industry.

Google loves frequent content. You should add new content or tweak existing content every day for “freshness.”

Where did this idea come from?

Google had an algorithm update in 2011 that rewards fresher results in the SERPs.

This is because, for some queries, the fresher the results, the greater the likelihood of accuracy.

For instance, search for “royal baby” in the UK in 2013, and you would be served news articles about Prince George. Search it again in 2015, and you would see pages about Princess Charlotte.

In 2018, you would see reports about Prince Louis at the top of the Google SERPs, and in 2019 it would be baby Archie.

If you were to search “royal baby” in 2019, shortly after the birth of Archie, then seeing news articles on Prince George would likely be unhelpful.

In this instance, Google discerns the user’s search intent and decides showing articles related to the newest UK royal baby would be better than showing an article that is arguably more rank-worthy due to authority, etc.

What this algorithm update doesn’t mean is that newer content will always outrank older content. Google decides if the “query deserves freshness” or not.

If it does, then the age of content becomes a more important ranking factor.

This means that if you are creating content purely to make sure it is newer than competitors’ content, you are not necessarily going to benefit.

If the query you are looking to rank for does not deserve freshness, e.g., “who is Prince William’s second child?”, a fact that will not change, then the age of content will not play a significant part in rankings.

If you are writing content every day thinking it is keeping your website fresh and, therefore, more rank-worthy, then you are likely wasting time.

It would be better to write well-considered, researched, and useful content pieces less frequently and reserve your resources for making those highly authoritative and shareable.

Verdict: SEO myth

19. You Can Optimize Copy Once & Then It’s Done

The phrase “SEO-optimized copy” is a common one in agency-land.

It’s used as a way to explain the process of creating copy that will be relevant to frequently searched queries.

The trouble with this is that it suggests that once you have written that copy, ensured it adequately answers searchers’ queries, you can move on.

Unfortunately, over time how searchers look for content might change. The keywords they use and the type of content they want could alter.

The search engines, too, may change what they feel is the most relevant answer to the query. Perhaps the intent behind the keyword is perceived differently.

The layout of the SERPs might alter, meaning videos are being shown at the top of the search results where previously it was just web page results.

If you look at a page only once and then don’t continue to update it and evolve it with user needs, then you risk falling behind.

Verdict: SEO myth

20. There Is a Right Way to Do SEO

This one is probably a myth in many industries, but it seems prevalent in the SEO one. There is a lot of gatekeeping in SEO social media, forums, and chats.

Unfortunately, it’s not that simple.

There are some core tenets that we know about SEO.

Usually, something is stated by a search engine representative that has been dissected, tested, and ultimately declared true.

The rest is a result of personal and collective trial and error, testing, and experience.

Processes are extremely valuable within SEO business functions, but they have to evolve and be applied appropriately.

Different websites within different industries will respond to changes in ways others would not. Altering a meta title so it is under 60 characters long might help the click-through rate for one page, and not for another.

Ultimately, we have to hold any SEO advice we’re given lightly before deciding whether it is right for the website we are working on.

Verdict: SEO myth

Conclusion

Some myths have their roots in logic, and others have no sense to them.

Now you know what to do when you hear an idea that you can’t say for certain is truth or myth.

Featured Image Credit: Paulo Bobita
