GenAI and the Future of Branding: The Crucial Role of the Knowledge Graph

The author’s views are entirely their own (excluding the unlikely event of hypnosis) and may not always reflect the views of Moz.

The one thing that brand managers, company owners, SEOs, and marketers have in common is the desire to have a very strong brand because it’s a win-win for everyone. Nowadays, from an SEO perspective, having a strong brand allows you to do more than just dominate the SERP — it also means you can be part of chatbot answers.

Generative AI (GenAI) is the technology behind chatbots such as Bard, Bing Chat, and ChatGPT, and it increasingly shapes search engines such as Bing and Google. GenAI is a type of artificial intelligence (AI) that can create content (text, audio, and video) at the click of a button. Both Bing and Google use GenAI to improve their search results, and each has a related chatbot (Bing Chat and Bard, respectively). Because search engines now use GenAI, brands need to start adapting their content to this technology or risk decreased online visibility and, ultimately, fewer conversions.

As the saying goes, all that glitters is not gold. GenAI technology comes with a pitfall – hallucinations. Hallucinations are a phenomenon in which generative AI models provide responses that look authentic but are, in fact, fabricated. Hallucinations are a big problem that affects anybody using this technology.

One solution to this problem comes from another technology called a ‘Knowledge Graph.’ A Knowledge Graph is a type of database that stores information in graph format and is used to represent knowledge in a way that is easy for machines to understand and process.

Before delving further into this issue, it’s imperative to understand from a user perspective whether investing time and energy as a brand in adapting to GenAI makes sense.

Should my brand adapt to Generative AI?

To understand how GenAI can influence brands, the first step is to understand in which circumstances people use search engines and when they use chatbots.

As mentioned, both options use GenAI, but search engines still leave some space for traditional results, while chatbots are entirely GenAI. At Pubcon, Fabrice Canel presented information to marketers on how people use chatbots and search engines.

The image below demonstrates that when people know exactly what they want, they will use a search engine, whereas when people sort of know what they want, they will use chatbots. Now, let’s go a step further and apply this knowledge to search intent. We can assume that when a user has a navigational query, they would use search engines (Google/Bing), and when they have a commercial investigation query, they would typically ask a chatbot.

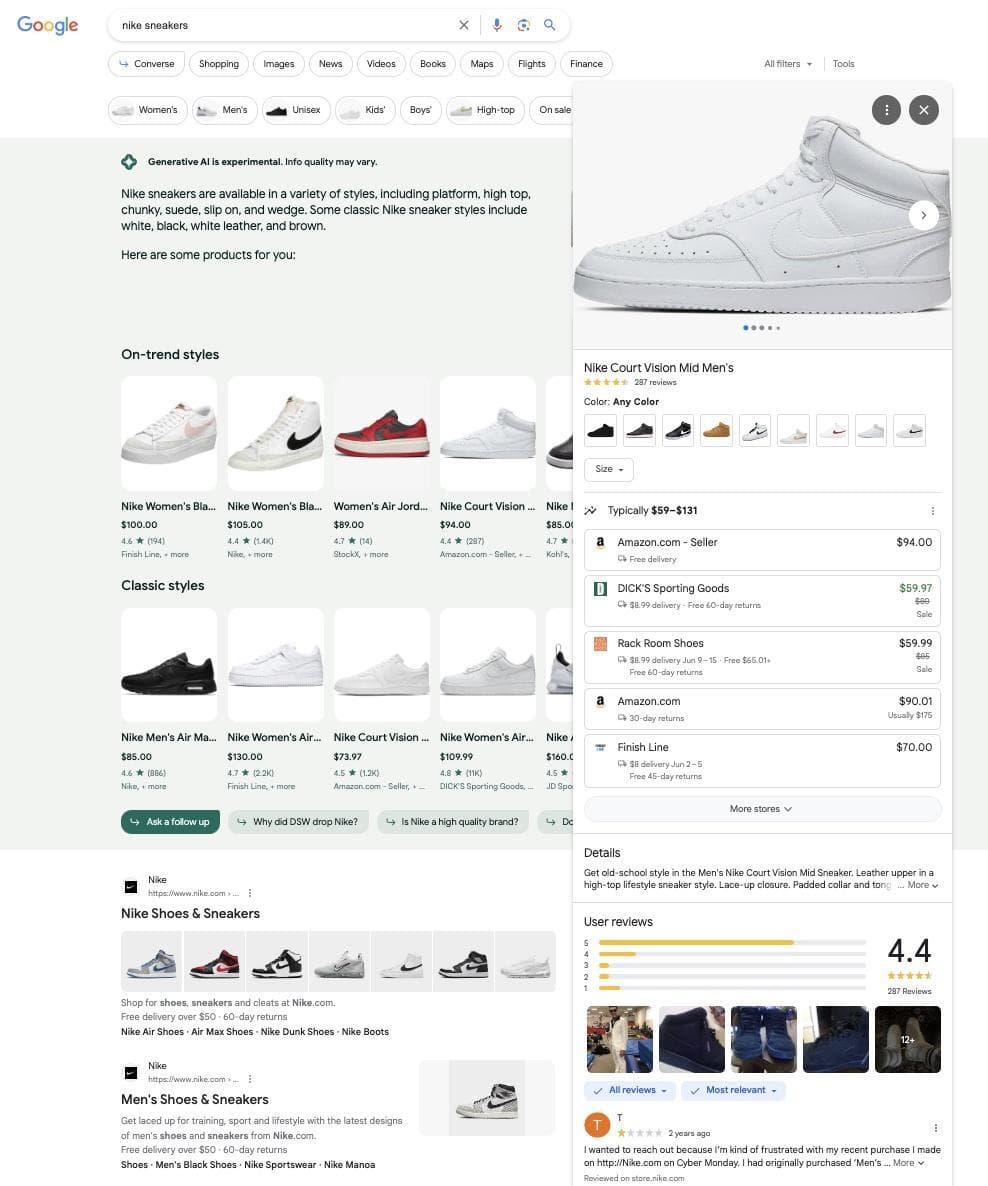

The information above comes with some significant consequences:

1. When users write a brand or product name into a search engine, you want your business to dominate the SERP. You want the complete package: GenAI experience (that pushes the user to the buying step of a funnel), your website ranking, a knowledge panel, a Twitter Card, maybe Wikipedia, top stories, videos, and everything else that can be on the SERP. Aleyda Solis on Twitter showed what the GenAI experience looks like for the term “nike sneakers”:

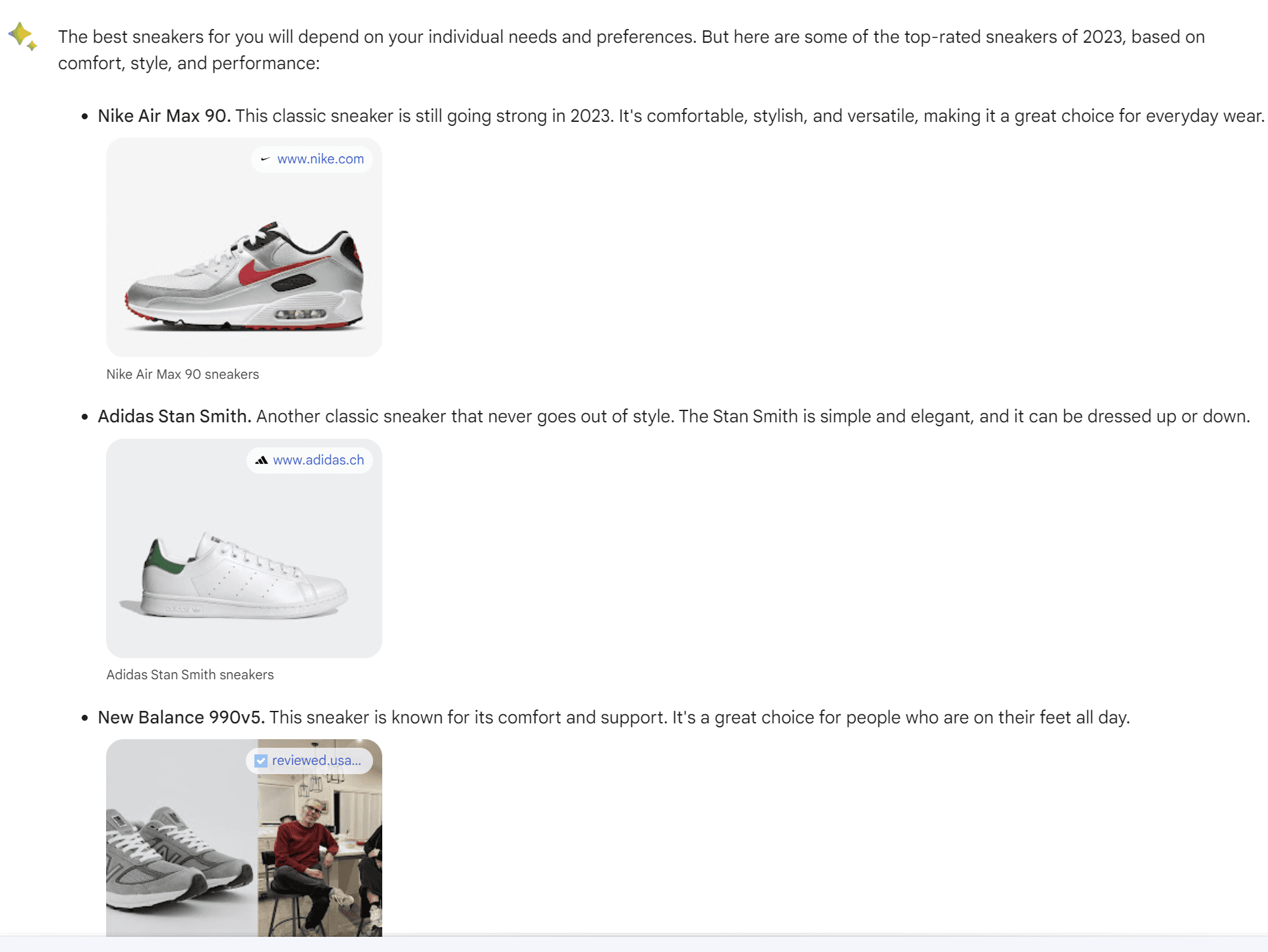

2. When users ask chatbots questions, you typically want your brand to be listed in the answers. For example, if you are Nike and a user goes to Bard and writes “best sneakers”, you will want your brand/product to be there.

3. When you ask a chatbot a question, related questions are suggested at the end of the original answer. Those questions are important to note, as they often help push users down your sales funnel or clarify questions about your product or brand. As a consequence, you want to be able to control the related questions that the chatbot proposes.

Now that we know why brands should make an effort to adapt, it’s time to look at the issues that this technology brings before diving into solutions and what brands should do to ensure success.

What are the pitfalls of Generative AI?

The academic paper Unifying Large Language Models and Knowledge Graphs: A Roadmap extensively explains the problems of GenAI. However, before starting, let’s clarify the difference between Generative AI, Large Language Models (LLMs), Bard (Google chatbot), and Language Models for Dialogue Applications (LaMDA).

LLMs are a type of GenAI model that predicts the “next word.” Bard is a specific LLM-based chatbot developed by Google, and LaMDA is an LLM that is specifically designed for dialogue applications.

To make it clear, Bard was based initially on LaMDA (now on PaLM), but that doesn’t mean that all of Bard’s answers were coming just from LaMDA. If you want to learn more about GenAI, you can take Google’s introductory course on Generative AI.

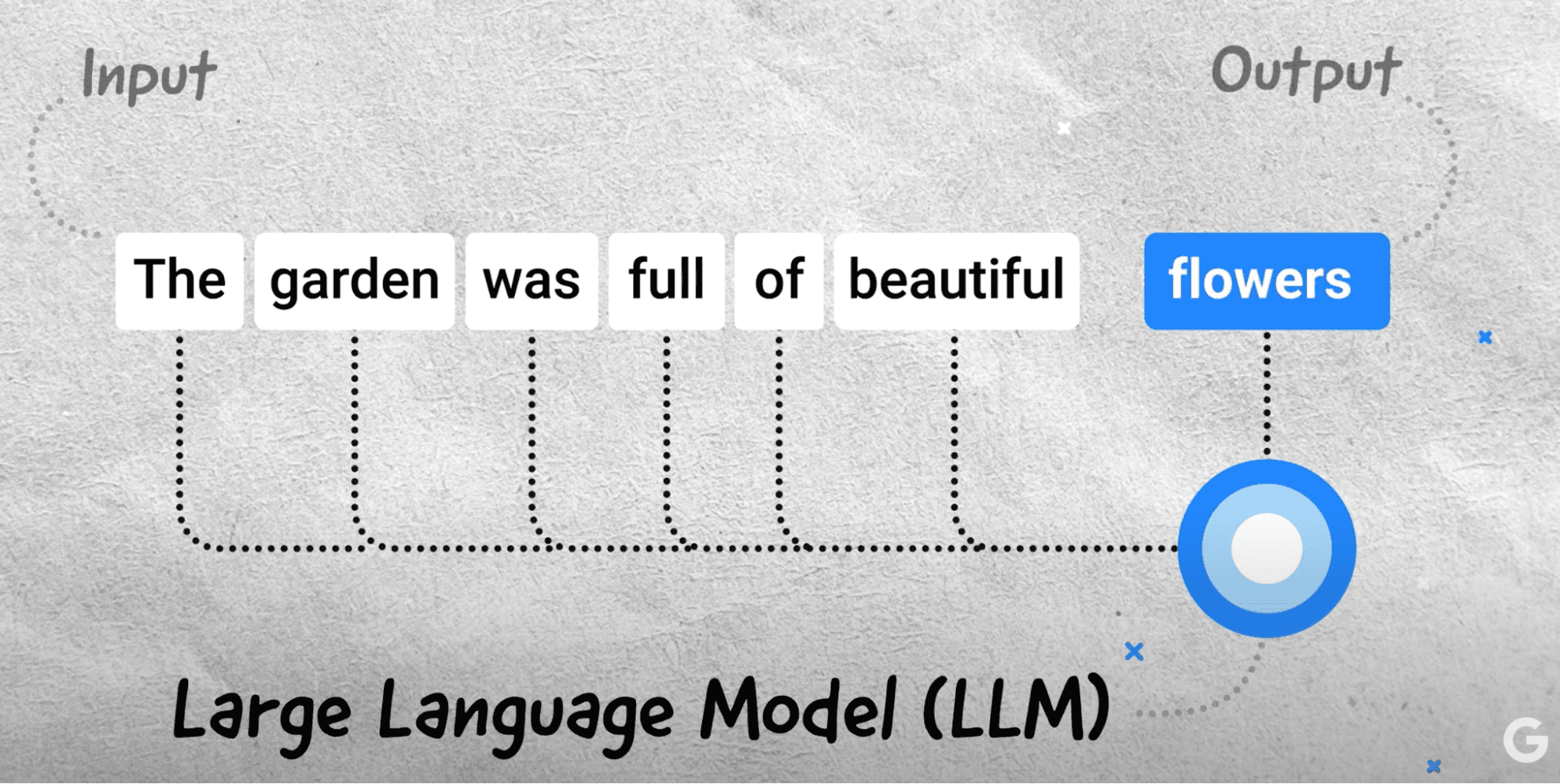

As explained in the previous paragraph, an LLM predicts the next word, and it does so based on probability. Let’s look at the image below, which shows an example from the Google video What are Large Language Models (LLMs)? Given the sentence written so far, the model estimates how likely each candidate is to be the next word. One option could have been “the garden was full of beautiful butterflies.” However, the model estimated that “flowers” had the highest probability, so it selected “flowers.”
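
The selection step described above can be sketched in a few lines of Python. This is only an illustration: the probabilities are hypothetical numbers made up for the example, and a real LLM scores every word in its vocabulary rather than four hand-picked candidates.

```python
# Hypothetical probabilities for the word that follows
# "The garden was full of beautiful"; the numbers are invented
# for illustration and do not come from any real model.
next_word_probs = {
    "flowers": 0.62,
    "butterflies": 0.21,
    "trees": 0.12,
    "cars": 0.05,
}


def predict_next_word(probs: dict[str, float]) -> str:
    """Return the candidate with the highest probability (a greedy choice)."""
    return max(probs, key=probs.get)


sentence = "The garden was full of beautiful"
print(sentence, predict_next_word(next_word_probs))
```

With these made-up numbers the function picks “flowers”, while “butterflies” remains a plausible but less likely continuation.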

Let’s come back to the main point here, the pitfall.

The pitfalls can be summarized in three points according to the paper Unifying Large Language Models and Knowledge Graphs: A Roadmap:

“Despite their success in many applications, LLMs have been criticized for their lack of factual knowledge.” What this means is that the machine can’t reliably recall facts. When it lacks a fact, it may invent an answer. This is a hallucination.

“As black-box models, LLMs are also criticized for lacking interpretability. LLMs represent knowledge implicitly in their parameters. It is difficult to interpret or validate the knowledge obtained by LLMs.” This means that, as humans, we don’t know how the machine arrived at a conclusion or decision because it relied on probability.

“LLMs trained on general corpus might not be able to generalize well to specific domains or new knowledge due to the lack of domain-specific knowledge or new training data.” If a machine is trained in the luxury domain, for example, it will not be adapted to the medical domain.

The repercussion of these problems for brands is that chatbots could invent information about your brand. They could say that a brand was rebranded, describe a product that the brand does not sell, and much more. As a result, it’s good practice to test chatbots with everything brand-related.

This is not just a problem for brands but also for Google and Bing, so they have to find a solution. The solution comes from the Knowledge Graph.

What is a Knowledge Graph?

One of the most famous Knowledge Graphs in SEO is the Google Knowledge Graph, which Google defines as: “Our database of billions of facts about people, places, and things. The Knowledge Graph allows us to answer factual questions such as ‘How tall is the Eiffel Tower?’ or ‘Where were the 2016 Summer Olympics held?’ Our goal with the Knowledge Graph is for our systems to discover and surface publicly known, factual information when it’s determined to be useful.”

The two key pieces of information to keep in mind in this definition are:

1. It’s a database

2. That stores factual information

This is precisely the opposite of GenAI, which generates answers from probabilities rather than looking up stored facts. Consequently, the way to address the previously mentioned problems, and especially hallucinations, is to use the Knowledge Graph to verify the information coming from GenAI.
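
To make the idea of verification concrete, here is a minimal sketch of checking a generated claim against stored facts. It assumes a toy fact store held in a Python dictionary. The `FACTS` store and the `verify` function are hypothetical names for this illustration and do not describe how Google or Bing implement it.

```python
# Toy fact store keyed by (entity, attribute). The single entry answers the
# Olympics question from Google's definition; the store itself is hypothetical.
FACTS = {
    ("2016 Summer Olympics", "location"): "Rio de Janeiro",
}


def verify(entity: str, attribute: str, generated_value: str) -> str:
    """Compare a generated claim with the stored fact, if one exists."""
    known = FACTS.get((entity, attribute))
    if known is None:
        return "unverifiable"  # nothing stored to check the claim against
    return "supported" if known == generated_value else "contradicted"


print(verify("2016 Summer Olympics", "location", "Rio de Janeiro"))
print(verify("2016 Summer Olympics", "location", "London"))
print(verify("Eiffel Tower", "height", "330 m"))
```

The third call shows the case that matters for brands: when the store holds no fact about an entity, a generated claim about it cannot be checked at all.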

This looks very easy in theory, but it’s not in practice because the two technologies are very different. However, the paper ‘LaMDA: Language Models for Dialog Applications’ suggests that Google is already doing this. If Google is doing this, we could also expect Bing to be doing the same.

The Knowledge Graph has therefore gained even more value for brands: if GenAI output is verified against the Knowledge Graph, you want your brand to be in it.

What a brand in the Knowledge Graph would look like

To be in the Knowledge Graph, a brand needs to be an entity. A machine is a machine; it can’t understand a brand as a human would. This is where the concept of entity comes in. We could simplify the concept by saying an entity is a name that has a number assigned to it and which can be read by the machine. For instance, I like luxury watches; I could spend hours just looking at them.

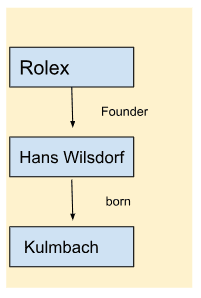

So let’s take a famous luxury watch brand that most of you probably know: Rolex. Rolex’s machine-readable ID for the Google Knowledge Graph is /m/023_fz. That means that when we go to a search engine and write the brand name “Rolex”, the machine transforms this into /m/023_fz.

Now that you understand what an entity is, let’s use a more technical definition given by Krisztian Balog in the book Entity-Oriented Search: “An entity is a uniquely identifiable object or thing, characterized by its name(s), type(s), attributes, and relationships to other entities.”

Let’s break down this definition using the Rolex example:

Unique identifier = This is the entity ID: /m/023_fz

Name = Rolex

Type = This makes reference to the semantic classification, in this case ‘Thing, Organization, Corporation.’

Attributes = These are the characteristics of the entity, such as when the company was founded, its headquarters, and more. In the case of Rolex, the company was founded in 1905 and is headquartered in Geneva.

All this information (and much more) related to Rolex will be stored in the Knowledge Graph. However, the magic part of the Knowledge Graph is the connections between entities.

For example, the founder of Rolex, Hans Wilsdorf, is also an entity, and he was born in Kulmbach, which is also an entity. So, now we can see some connections in the Knowledge Graph, and these connections go on and on. However, for our example, we will take just three entities: Rolex, Hans Wilsdorf, and Kulmbach. From these connections, we can see how important it is for a brand to become an entity and to provide the machine with all relevant information, which will be expanded on in the section “How can a brand maximize its chances of being on a chatbot or being part of the GenAI experience?”
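
The Rolex example can be sketched as a small data structure to show how the parts of an entity fit together. This is an illustration only: the `Entity` class, the predicate names `founded_by` and `born_in`, and the `reachable` helper are hypothetical, and the real Knowledge Graph schema is not public in this form. The sketch treats Hans Wilsdorf as the founder of Rolex.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    entity_id: str  # machine-readable identifier
    name: str
    types: list[str] = field(default_factory=list)
    attributes: dict[str, str] = field(default_factory=dict)


rolex = Entity(
    entity_id="/m/023_fz",  # the ID quoted in the article
    name="Rolex",
    types=["Thing", "Organization", "Corporation"],
    attributes={"founded": "1905", "headquarters": "Geneva"},
)

# Relationships stored as (subject, predicate, object) triples.
# The predicate names are hypothetical labels chosen for this sketch.
triples = [
    ("Rolex", "founded_by", "Hans Wilsdorf"),
    ("Hans Wilsdorf", "born_in", "Kulmbach"),
]


def reachable(start: str) -> list[str]:
    """Follow outgoing connections from `start`, in order of discovery."""
    seen: list[str] = []
    queue = [start]
    while queue:
        node = queue.pop(0)
        for subject, _predicate, obj in triples:
            if subject == node and obj not in seen:
                seen.append(obj)
                queue.append(obj)
    return seen


print(reachable("Rolex"))
```

Starting from Rolex, the traversal reaches Hans Wilsdorf and then Kulmbach, which is the chain of connections between the three entities.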

However, first let’s analyze LaMDA, the Google large language model formerly used in Bard, to understand how GenAI and the Knowledge Graph work together.

LaMDA and the Knowledge Graph

I recently spoke to Professor Shirui Pan from Griffith University, the lead professor on the paper “Unifying Large Language Models and Knowledge Graphs: A Roadmap,” and he confirmed that he also believes that Google is using the Knowledge Graph to verify information.

For instance, he pointed me to this sentence in the document LaMDA: Language Models for Dialog Applications:

“We demonstrate that fine-tuning with annotated data and enabling the model to consult external knowledge sources can lead to significant improvements towards the two key challenges of safety and factual grounding.”

I won’t go into detail about safety and grounding, but in short, safety means that the model respects human values, and grounding (which is the most important thing for brands) means that the model should consult external knowledge sources (an information retrieval system, a language translator, and a calculator).

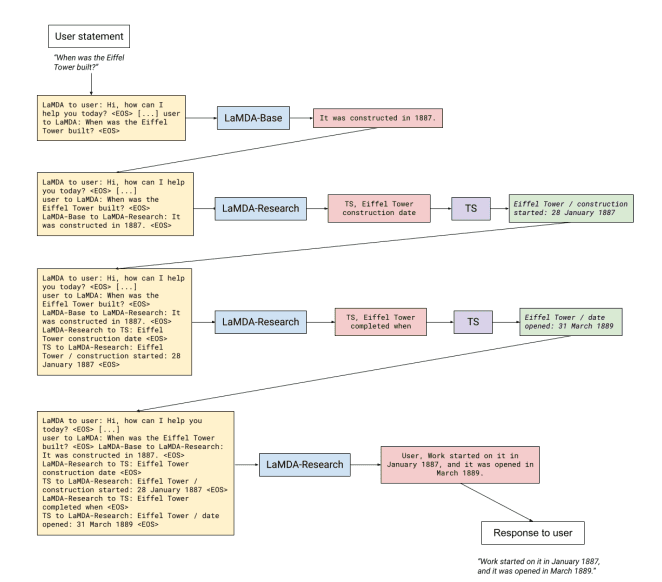

Below is an example of how the process works. In the image, the green box is the output from the information retrieval system tool. TS stands for toolset. Google created a toolset that expects a string (a sequence of characters) as input and outputs a number, a translation, or some kind of factual information. The paper LaMDA: Language Models for Dialog Applications gives some clarifying examples: the calculator takes “135+7721” and outputs a list containing [“7856”]. Similarly, the translator can take “Hello in French” and output [“Bonjour”]. Finally, the information retrieval system can take “How old is Rafael Nadal?” and output [“Rafael Nadal / Age / 35”]. The response “Rafael Nadal / Age / 35” is typical of what a Knowledge Graph returns. As a result, it’s reasonable to infer that Google uses its Knowledge Graph to verify the information.
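
The string-in, list-out behavior of the toolset can be mimicked with a small sketch. Everything here is a stand-in: the lookup tables replace the real translator and information retrieval system, the calculator handles only simple sums, and the function names are hypothetical. In this sketch every tool is tried on the same input and the outputs are concatenated, which is a design choice of the example rather than a confirmed description of Google’s implementation.

```python
import re

# Hypothetical lookup tables standing in for the real translator and the
# information retrieval system; entries are the examples quoted from the paper.
TRANSLATIONS = {"Hello in French": "Bonjour"}
RETRIEVAL = {"How old is Rafael Nadal?": "Rafael Nadal / Age / 35"}


def calculator(query: str) -> list[str]:
    """Handle only 'a+b' integer sums, a deliberate simplification."""
    match = re.fullmatch(r"\s*(\d+)\s*\+\s*(\d+)\s*", query)
    if match is None:
        return []
    return [str(int(match.group(1)) + int(match.group(2)))]


def translator(query: str) -> list[str]:
    return [TRANSLATIONS[query]] if query in TRANSLATIONS else []


def retrieval(query: str) -> list[str]:
    return [RETRIEVAL[query]] if query in RETRIEVAL else []


def toolset(query: str) -> list[str]:
    """Try every tool on the same string and concatenate their outputs."""
    results: list[str] = []
    for tool in (calculator, translator, retrieval):
        results.extend(tool(query))
    return results


print(toolset("135+7721"))
print(toolset("Hello in French"))
print(toolset("How old is Rafael Nadal?"))
```

A tool that cannot handle the input returns an empty list, so only the relevant tool contributes to the final answer.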

This brings me to the conclusion that I had already anticipated: being in the Knowledge Graph is becoming increasingly important for brands. Not only to have a rich SERP experience with a Knowledge Panel but also for new and emerging technologies. This gives Google and Bing yet another reason to present your brand instead of a competitor.

How can a brand maximize its chances of being part of a chatbot’s answers or being part of the GenAI experience?

In my opinion, one of the best approaches is to use the Kalicube process created by Jason Barnard, which is based on three steps: Understanding, Credibility, and Deliverability. I recently co-authored a white paper with Jason on content creation for GenAI; below is a summary of the three steps.

1. Understand your solution. This makes reference to becoming an entity and explaining to the machine who you are and what you do. As a brand, you need to make sure that Google or Bing have an understanding of your brand, including its identity, offerings, and target audience.

2. In the Kalicube process, credibility is another word for the more complex concept of E-E-A-T. This means that if you create content, you need to demonstrate Experience, Expertise, Authoritativeness, and Trustworthiness in the subject of the content piece.

A simple way of being perceived as more credible by a machine is by including data or information that can be verified on your website. For instance, if a brand has existed for 50 years, it could write on its website “We’ve been in business for 50 years.” This information is precious but needs to be verified by Google or Bing. Here is where external sources come in handy. In the Kalicube process, this is called corroborating the sources. For example, if you have a Wikipedia page with the date of founding of the company, this information can be verified. This can be applied to all contexts.

If we take an e-commerce business with client reviews on its website, and the client reviews are excellent, but there is nothing confirming this externally, then it’s a bit suspicious. But if the internal reviews match the ones on Trustpilot, for example, the brand gains credibility! So, the key to credibility is to provide information on your website first and then have that information corroborated externally.

The interesting part is that all this generates a cycle because by working on convincing search engines of your credibility both onsite and offsite, you will also convince your audience from the top to the bottom of your acquisition funnel.

3. The content you create needs to be deliverable. Deliverability aims to provide an excellent customer experience for each touchpoint of the buyer decision journey. This is primarily about producing targeted content in the correct format and secondly about the technical side of the website.

An excellent starting point is to use the Pedowitz Group’s Customer Journey model and produce content for each step. Let’s look at an example of a funnel on Bing Chat that, as a brand, you want to control.

A user could write: “Can I dive with luxury watches?” As we can see from the image below, a recommended follow-up question suggested by the chatbot is “Which are some good diving watches?”

If a user clicks on that question, they get a list of luxury diving watches. As you can imagine, if you sell diving watches, you want to be included on the list.

In a few clicks, the chatbot has brought a user from a general question to a potential list of watches that they could buy. As a brand, you need to produce content for all the touchpoints of the buyer decision journey and figure out the most effective way to produce this content, whether it’s in the form of FAQs, how-tos, white papers, blogs, or anything else.
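
The corroboration idea from step 2 can also be sketched in code. The example below is a simplified assumption of how agreement between a website and external sources might be checked. The `corroborate` function, the brand data, and the source names are all hypothetical and invented for illustration. It does not represent the Kalicube process or any search engine’s logic.

```python
def corroborate(site_claim: str, external_sources: dict[str, str]) -> dict:
    """Split external sources into those that agree or conflict with the claim."""
    agreeing = [name for name, value in external_sources.items() if value == site_claim]
    conflicting = [name for name, value in external_sources.items() if value != site_claim]
    return {
        # Corroborated only if at least one source agrees and none conflicts.
        "corroborated": bool(agreeing) and not conflicting,
        "agreeing": agreeing,
        "conflicting": conflicting,
    }


# Hypothetical brand data, invented purely for illustration.
site_founding_year = "1974"
sources = {"Wikipedia": "1974", "Trade directory": "1974"}

result = corroborate(site_founding_year, sources)
print(result["corroborated"], result["agreeing"])
```

A claim with no external source at all is treated as not corroborated, which mirrors the point that on-site information alone is not enough.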

GenAI is a powerful technology that comes with its strengths and weaknesses. One of the main challenges brands face when using this technology is hallucinations. As suggested by the paper LaMDA: Language Models for Dialog Applications, a possible solution to this problem is using Knowledge Graphs to verify GenAI outputs. For a brand, being in the Google Knowledge Graph is about much more than having a richer SERP. It also maximizes the brand’s chances of appearing in Google’s new GenAI experience and in chatbots, and it helps ensure that the answers about the brand are accurate.

This is why, from a brand perspective, being an entity and being understood by Google and Bing is a must and no longer a should!

In practice, this means having a machine-readable ID and feeding the machine with the right information about your brand and ecosystem. Remember the Rolex example, where we saw that Rolex’s machine-readable ID is /m/023_fz. This step is fundamental.