How to Hire a Link Building Agency: A Step-by-Step Guide

We all know that link building is an integral part of any SEO strategy. But acquiring top-notch links is not as easy as it looks. It takes a lot of experience, skill, and time to do it well. So what if you can’t manage link building yourself but still want the best results?

If you don’t have the experience to find high-quality link prospects or can’t spare hours doing outreach, now may be the time to hire a link building agency. A quality one can get the results you need without the headaches.

But with so many agencies out there claiming to be the best in the business, it can be challenging to know who’s legit. In this article, we will look at how to hire a link building agency, including the qualities and red flags to look out for.

Link building, when done well, is a full-time job. It takes skill to assess a site and come up with a strategy and roadmap, produce quality content that brands want to publish, and build long-lasting relationships with businesses and journalists.

Even if you have all the skills required to build exceptional links that move the needle, doing so takes a lot of time. If you’re already an SEO or a business owner, chances are you don’t have the time needed to land killer links.

A link building agency has a dedicated expert team set up specifically to build links for its clients on a full-time basis. A high-quality agency will take care of everything you need, from strategy and planning to execution, and keep you updated as the work progresses.

The best link building agencies combine expert writers, SEOs, PR executives, and experienced managers to deliver a seamless service. You get the results you need and simply check in with them for updates on your campaign.

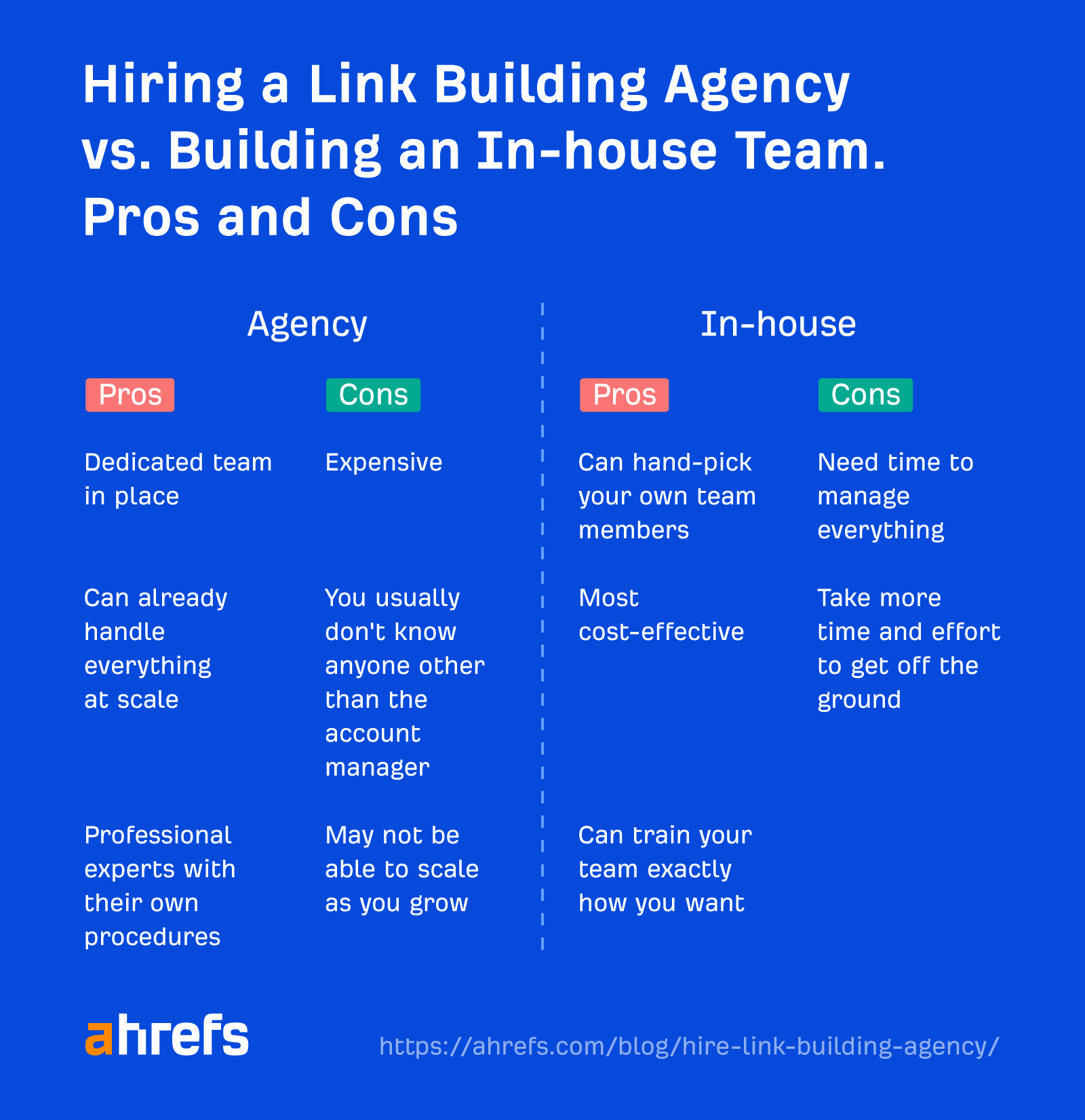

Outsourcing to an agency vs. building an in-house team

If you’re at the point where you know you need help with link building, you may want to take a moment to consider whether the best option is to hire a link building agency or build your own in-house team.

Obviously, hiring an agency has several benefits. You’re paying for years of experience, a coordinated team with established relationships, and proven systems. Especially in the short term, this makes link building easy (and one less thing to manage).

However, link building agencies have high business costs. To turn a profit, they usually charge higher prices, which often take up a large portion of your SEO budget. As a result, using an agency to build links at scale over the long term may not be the best option.

If you’re building up your own business, either as an independent SEO consultant or building digital assets like a niche site, it may be more beneficial (especially in the long term) to build your own in-house team.

For one, this is usually more cost-efficient. And if you have link building experience yourself and just need extra hands to help you scale, you can train an in-house team with your own standard operating procedures (SOPs) to work exactly how you want.

Now that we have established why you may need a link building agency, the question is, “How do you find a high-quality agency that will actually get results?”

It’s easy to pick up any old rubbish from Fiverr or a list from a LinkedIn outreach warrior. But acquiring backlinks from big businesses like HubSpot or Monday that actually move the needle takes more than a cold email from a random SEO “expert.”

To set you up for success, we’re going to look at the traits of a quality link building agency.

1. Great communication

Great communication is a key factor for success in any business. With link building agencies, responsiveness and transparency are strong indicators of quality.

Although you should always allow a reasonable amount of time for a busy agency to respond (keeping in mind that it may be in a different time zone from yours and have different working days), expecting a response within 24 hours on working days is standard.

An agency should also offer transparency into how it intends to work and what can be expected of it. It’s standard practice for a quality agency to have an onboarding process for new clients, walking you through exactly how things will go.

If an agency is reluctant to communicate or share its methods, timelines, etc., that should be a big red flag, and you’ll probably want to look elsewhere.

2. Creates realistic timelines and goals

Anyone who tells you they can acquire 762 links in 30 days probably sold snake oil in a former life.

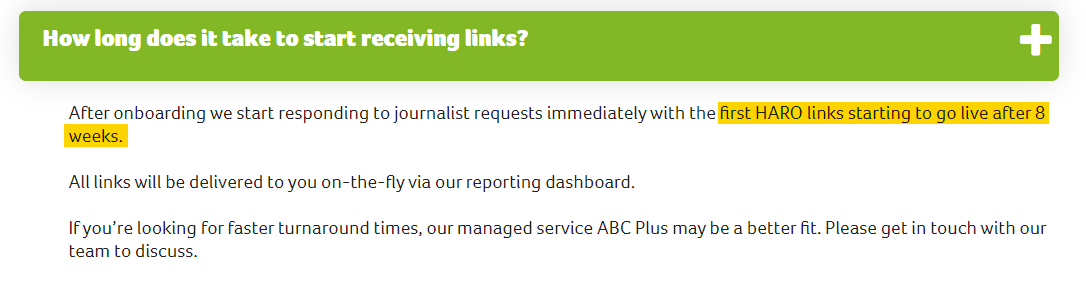

High-quality link building takes time and, depending on the link building services you choose, it may take months before you start seeing results.

Services like digital PR and HARO link building require time. After all, it takes a while to build relationships with journalists and to see placements as articles are published. It’s not an overnight process. A good agency will be up front about realistic timelines, and many state what to expect on their websites.

If an agency promises unrealistic numbers (either the number of links it can acquire or the time frame it expects), it is likely to use black-hat methods to acquire links or simply let you down.

3. Holds itself accountable

Here’s the thing about great link building: It’s not a one-size-fits-all solution!

Quality link building agencies must adapt their strategy on a client-by-client basis. In order to get the best results, they need to understand what your site needs and consider many factors, such as niche and business type.

Developing a client’s individual plan often involves trial and error, and occasionally things go wrong.

A long-term, successful partnership requires an agency that holds itself accountable for mistakes and explains how it will rectify the issue and get back on track.

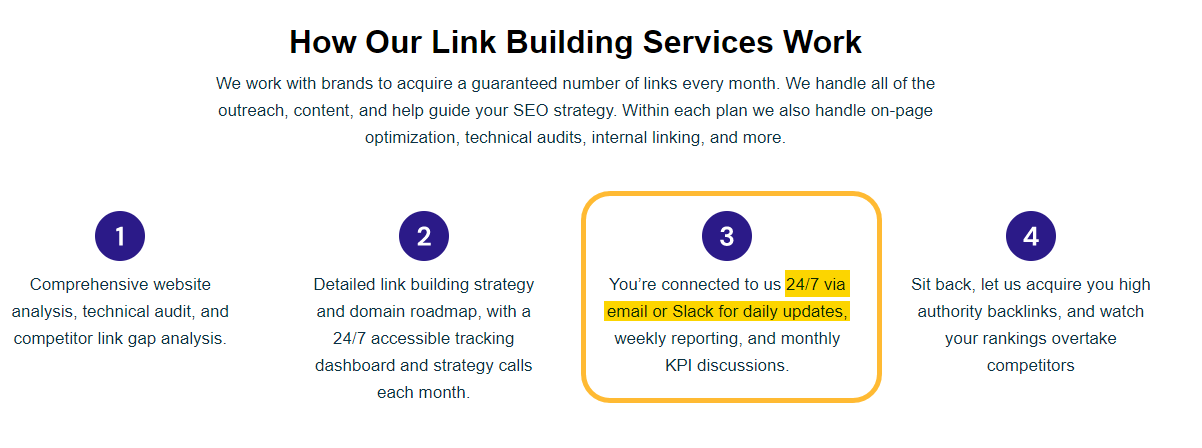

4. Continuously updates you with progress

Being updated on the progress of your account is essential, especially for link building services that take slightly longer to fulfill.

Regular updates reassure you that the work you paid for is being done and let you coordinate other parts of your SEO strategy and campaigns alongside ongoing link building efforts.

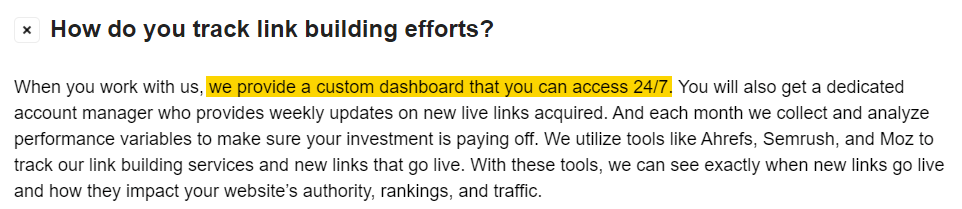

Most large, well-established agencies these days will have some form of client dashboard where you can monitor the ongoing progress of your campaigns. But even small agencies with limited staff should pre-arrange regular progress check-ins as part of the service.

5. Methods and tools

A professional link building agency will have specific methods for acquiring links (depending on the types of links) that it should be willing to share with you.

Although it may not want to share every insider secret that makes it more successful than its competitors, it should give you a brief overview of its process during client onboarding.

Agencies that are reluctant to share their processes are something to be wary of. Often, they work from a list of guest post providers or use techniques that can land you a Google penalty, like buying links.

A professional agency, by contrast, will use a range of tools to run competitor analyses, audit backlinks, find link prospects, liaise with journalists, run outreach campaigns, and track results. It should be happy to share which tools it uses and why.

6. Reputation

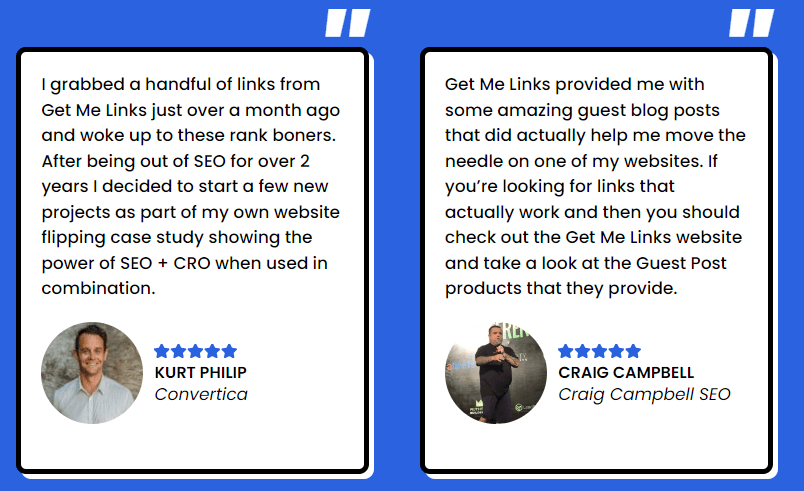

You should always be able to ask a prospective agency for testimonials from satisfied customers. In fact, most agencies list testimonials on their websites.

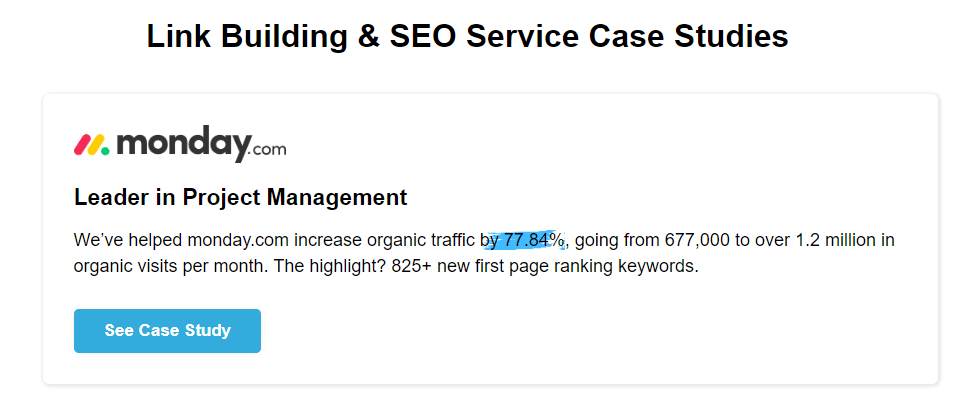

However, anyone can write a fake testimonial from an unknown Joe Bloggs about how fabulous they are. Recommendations from well-known industry professionals, by contrast, add a layer of confidence that an agency knows what it is doing and gets results.

Moreover, well-established link building agencies will have additional information available alongside testimonials, adding to their credibility. Examples include detailed case studies and any awards they have won. These are all things to look out for.

You can also go one step further than what an agency promotes on its website (let’s face it, that is always going to be positive). Do some digging and check out reviews on social media, Reddit, and even from previous employees on Glassdoor.

7. Pricing

I think it is important to say here that expensive doesn’t always mean better. Paying someone $300 an hour doesn’t necessarily mean you will get the best results possible.

There are high-quality agencies worldwide, and those based in countries where the costs of living and running a business are lower will often charge less than those in the U.K. or U.S.

With that said, there has to be a happy medium. Any high-quality link building agency has several unavoidable costs it needs to cover before even turning a profit, including:

- Professional tools.

- Outreach staff.

- Writers (for guest posts, for example).

- Other agency staff (account managers, administrators, etc.).

- General business costs (office space, utilities, and so on).

To function, an agency must cover all of these costs and still leave a margin for profit. Therefore, if an agency charges $20 per link, regardless of where it is based, you should consider that a red flag.

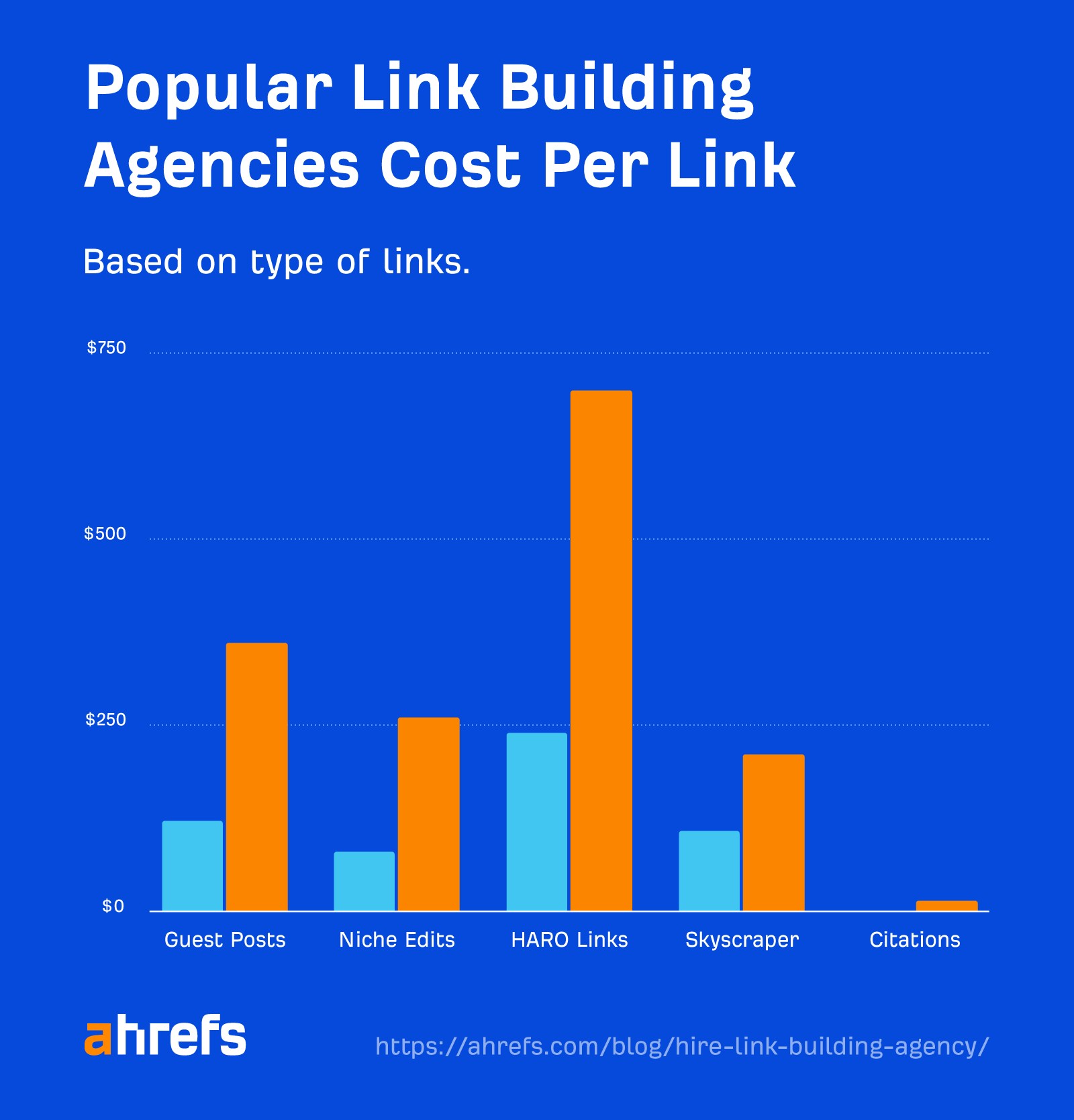

It’s also worth considering that the price (as well as scale and turnaround time) will vary depending on the types of links you want to acquire.

Link prices among the most popular agencies range from $1 per link (for local citations) to $700 per link (for HARO link building).

8. Ability to scale

If you are considering using a link building agency for the long term, you’re probably going to want to scale up the operation as you go forward. If the agency you’re using is a small four-person operation, it probably isn’t able to grow with you.

The ideal situation is to find an awesome link building agency that you can build a long-lasting relationship with. It should be one that knows your website and goals, so you won’t have to change providers every time you want to double down on your efforts.

Finding an agency that is already well established with a large team of staff is the best option if you are looking for long-term growth opportunities.

Final thoughts

Hiring a link building agency could be the secret sauce for SEO success for anyone who wants to build epic backlinks at scale. But finding the right agency can take time, effort, and, in some cases, trial and error.

Finding an agency that is professional, has open communication, and is invested in seeing you succeed is going to be key to building a long-lasting partnership.

Got questions? Ping me on Twitter.