TECHNOLOGY

Everything You Need to Know About Fog Computing

The rise of the Internet of Things (IoT) has brought with it a need for new computing paradigms that can support the large amounts of data generated by connected devices.

One such paradigm is fog computing, which extends cloud computing capabilities to the edge of the network, closer to where data is generated and consumed. This article will explore what fog computing is, how it works, its benefits and challenges, and real-world examples of its use.

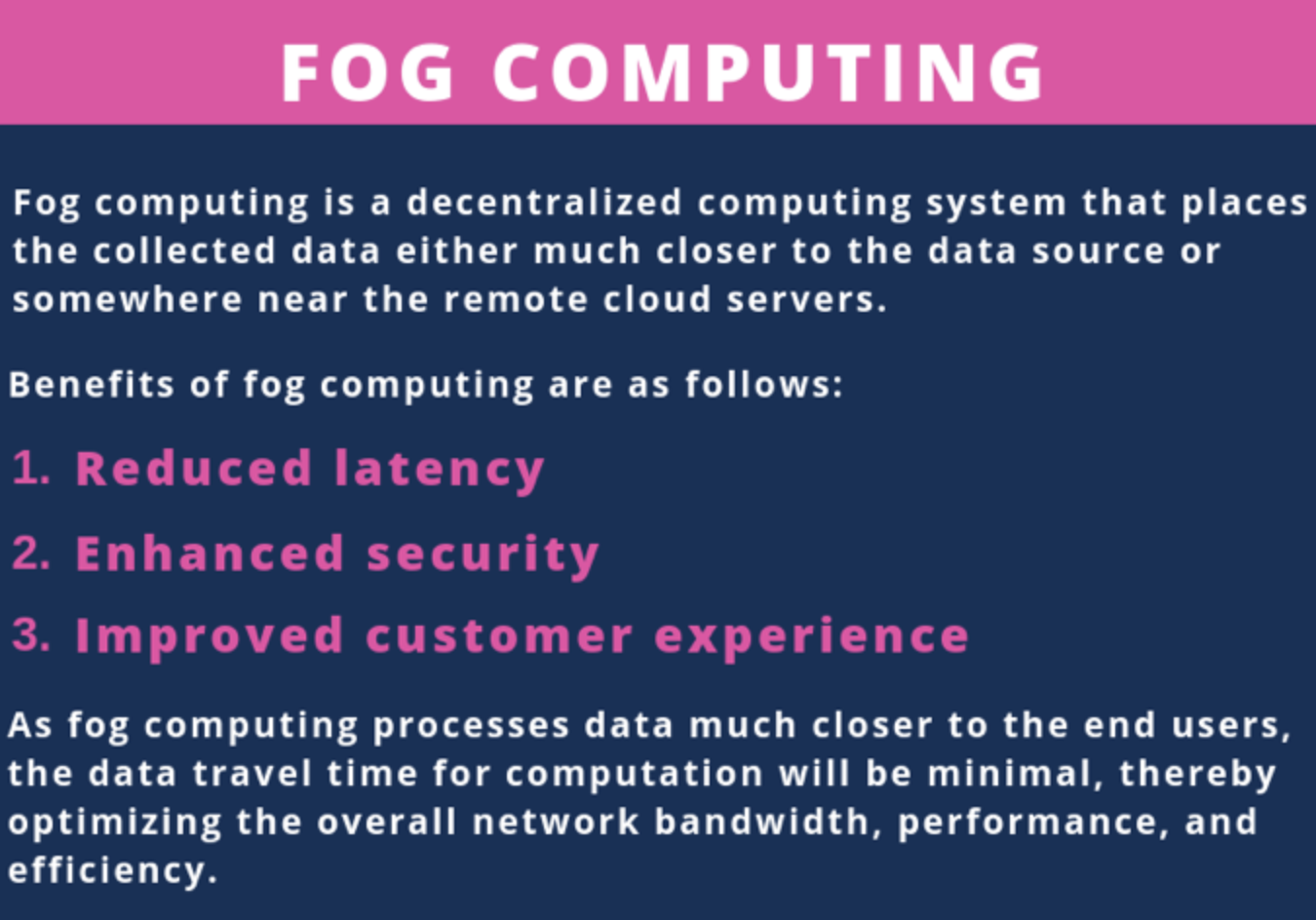

What is Fog Computing?

Fog computing is a distributed computing paradigm that brings computing resources closer to the edge of the network, reducing latency and improving the performance of internet of things (IoT) devices. In traditional cloud computing, data is generated by devices and transmitted to a remote data center or cloud for processing and storage. However, this approach can result in delays due to the distance between the device and the cloud, and the large amounts of data generated by IoT devices can also strain network bandwidth.

Fog computing addresses these challenges by extending cloud capabilities to the edge of the network, where data can be processed and analyzed closer to the source. This is achieved by deploying fog nodes, which are essentially mini data centers that can be located on the network edge or within the devices themselves. These fog nodes can perform data processing, storage, and analysis, as well as handle network management and security functions.

How Does Fog Computing Work?

Fog computing works by creating a distributed computing infrastructure that extends cloud capabilities to the edge of the network.

This infrastructure is made up of a network of fog nodes that can communicate with each other and with cloud data centers. Fog nodes can be located at the network edge, such as in an IoT gateway, or within devices themselves, such as a sensor or smart appliance.

When an IoT device generates data, it is transmitted to the nearest fog node for processing and analysis. The fog node can perform a range of functions, such as filtering, aggregation, and transformation of data, as well as running applications and services. The processed data can then be transmitted back to the device or sent to a cloud data center for further analysis and storage.
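The filter, aggregate, and transform steps described above can be sketched in a few lines of Python. This is a minimal illustration rather than a real fog platform API: the `Reading` type, the `process_at_fog_node` function, the valid temperature range, and the idea that the cloud side expects Fahrenheit are all assumptions made for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Reading:
    """One raw measurement from a (hypothetical) temperature sensor."""
    device_id: str
    celsius: float


def process_at_fog_node(readings, valid_range=(-40.0, 125.0)):
    """Filter, aggregate, and transform raw readings before forwarding them."""
    low, high = valid_range

    # Filtering: drop readings outside the plausible sensor range.
    valid = [r for r in readings if low <= r.celsius <= high]

    # Aggregation: group the surviving readings by device.
    by_device = {}
    for r in valid:
        by_device.setdefault(r.device_id, []).append(r.celsius)

    # Transformation: emit one summary per device, converted to the unit
    # the (assumed) cloud service expects.
    return {
        device: {
            "count": len(values),
            "mean_fahrenheit": round(mean(values) * 9 / 5 + 32, 1),
        }
        for device, values in by_device.items()
    }


readings = [
    Reading("sensor-a", 20.0),
    Reading("sensor-a", 22.0),
    Reading("sensor-a", 999.0),  # faulty spike, removed by the filter
    Reading("sensor-b", 30.0),
]
summary = process_at_fog_node(readings)
print(summary)
```

Only the small `summary` dictionary would need to travel on to the cloud data center; the four raw readings stay at the edge.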

Benefits of Fog Computing

Fog computing offers several benefits over traditional cloud computing, including:

- Reduced Latency: By processing data closer to the edge of the network, fog computing can significantly reduce the latency of data transmission, resulting in faster response times and improved performance of IoT devices.

- Improved Security: Fog computing can improve security by enabling data to be processed and analyzed closer to the source, reducing the risk of sensitive data being transmitted over the network to a remote data center or cloud.

- Scalability: The distributed nature of fog computing infrastructure allows for easy scalability and can support the growing number of IoT devices and the large amounts of data they generate.

- Cost Savings: By reducing the need for data transmission to remote data centers or clouds, fog computing can reduce network bandwidth costs and lower operational expenses.
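To make the bandwidth argument concrete, here is a rough back-of-the-envelope calculation in Python. Every number in it (1,000 devices, one 100-byte reading per second, one summary per minute) is hypothetical and chosen only for illustration; real savings depend on the workload, and actual costs depend on pricing that is not modelled here.

```python
def daily_upload_bytes(devices, readings_per_second, bytes_per_message, window_seconds=None):
    """Estimate the bytes sent upstream per day.

    With window_seconds=None every raw reading goes to the cloud.
    Otherwise a fog node sends one summary message per device per window.
    """
    seconds_per_day = 24 * 60 * 60
    if window_seconds is None:
        messages_per_device = readings_per_second * seconds_per_day
    else:
        messages_per_device = seconds_per_day // window_seconds
    return devices * messages_per_device * bytes_per_message


# Hypothetical fleet: 1,000 devices, one 100-byte reading per second each.
raw = daily_upload_bytes(devices=1_000, readings_per_second=1, bytes_per_message=100)
summarised = daily_upload_bytes(
    devices=1_000, readings_per_second=1, bytes_per_message=100, window_seconds=60
)
reduction = 1 - summarised / raw
print(f"{raw:,} -> {summarised:,} bytes/day ({reduction:.1%} less)")
```

Under these assumptions, summarising once a minute at the fog node cuts upstream traffic by a factor of 60.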

Applications of Fog Computing

Fog computing is being used in a variety of industries and applications, including:

- Healthcare: Fog computing is being used in healthcare to support remote monitoring and telemedicine applications. In a hospital setting, for example, fog nodes can be deployed in patient rooms to collect and analyze vital signs, such as heart rate and blood pressure, and transmit the data to healthcare professionals for remote monitoring.

- Industrial IoT: Fog computing is being used in industrial IoT applications to improve manufacturing efficiency, reduce downtime, and optimize supply chain management. In the oil and gas industry, for example, fog nodes have been deployed to collect and analyze data from sensors that monitor equipment performance and predict maintenance needs.

- Smart Cities: Fog computing is being used in smart cities to support a range of applications, such as traffic management, public safety, and environmental monitoring. In Barcelona, for example, fog nodes have been deployed throughout the city to collect and analyze data from sensors that monitor air quality, noise pollution, and traffic congestion.

- Autonomous Vehicles: Fog computing is being used in autonomous vehicles to support real-time decision-making and reduce the reliance on cloud connectivity. Fog nodes can be deployed in vehicles to process sensor data and make real-time decisions, such as detecting obstacles or identifying pedestrians.

Challenges of Fog Computing

Despite its many benefits, fog computing also poses several challenges, including:

- Security: The distributed nature of fog computing infrastructure can make it difficult to ensure the security of data and devices. Fog nodes can be vulnerable to cyberattacks, and there is a risk of data leakage or theft.

- Interoperability: Fog computing requires the integration of multiple technologies and devices, which can pose interoperability challenges. Different devices may use different communication protocols or data formats, making it difficult to exchange data between them.

- Complexity: The distributed nature of fog computing infrastructure can also make it more complex to manage and maintain. Fog nodes may require frequent updates and maintenance, and network management can become more challenging as the number of devices and nodes grows.
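One common way to soften the interoperability problem is to have the fog node translate each device's payload into a single shared record shape before doing anything else. The sketch below assumes two made-up payload formats (a JSON object in Celsius and a CSV line in Fahrenheit) and a made-up target schema; none of these come from a real device or standard.

```python
import csv
import io
import json


def normalise(payload: str, fmt: str) -> dict:
    """Convert a device payload into one common record shape.

    Both input formats and the output schema are hypothetical.
    """
    if fmt == "json":
        # Assumed shape: {"id": "...", "temp_c": 21.5}
        data = json.loads(payload)
        return {"device_id": data["id"], "celsius": float(data["temp_c"])}
    if fmt == "csv":
        # Assumed shape: device_id,temperature_in_fahrenheit
        device_id, fahrenheit = next(csv.reader(io.StringIO(payload)))
        celsius = round((float(fahrenheit) - 32) * 5 / 9, 2)
        return {"device_id": device_id, "celsius": celsius}
    raise ValueError(f"unsupported format: {fmt}")


records = [
    normalise('{"id": "thermo-1", "temp_c": 21.5}', "json"),
    normalise("thermo-2,70.7", "csv"),
]
print(records)
```

Once every payload has the same fields and units, downstream filtering and aggregation code no longer needs to know which vendor or protocol a reading came from.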

Future of Fog Computing

The future of fog computing looks bright, with many experts predicting that it will become an increasingly important computing paradigm in the years to come. Here are some of the key trends and developments that are likely to shape the future of fog computing:

- Expansion of the IoT: As the number of IoT devices continues to grow, the demand for fog computing is likely to increase. Fog computing offers a way to process and analyze the large amounts of data generated by these devices, without requiring all the data to be transmitted to the cloud.

- Integration with AI: Fog computing is well-suited for supporting AI applications, as it can provide the real-time processing and decision-making capabilities that are needed for many AI applications. As AI becomes more ubiquitous, we can expect to see greater integration of AI and fog computing.

- Increased focus on security: Security is a major concern in the IoT, and fog computing offers several advantages over cloud computing in terms of security. As the importance of security in the IoT continues to grow, we can expect to see greater focus on security in fog computing.

- Emergence of fog-to-fog communication: Fog computing is often viewed as a way to bridge the gap between cloud and edge computing, but it can also be used to enable communication between fog nodes. This could enable new applications and use cases that are not currently possible.

- Standardization efforts: Standardization efforts are already underway for fog computing, with organizations such as the OpenFog Consortium working to define standards and best practices. As these efforts mature, we can expect to see greater adoption of fog computing in a wider range of industries and applications.

Taken together, the expansion of the IoT, integration with AI, an increased focus on security, the emergence of fog-to-fog communication, and standardization efforts suggest that fog computing will become an increasingly important computing paradigm in the years to come.

Conclusion

Fog computing is an emerging computing paradigm that offers several benefits over traditional cloud computing, including reduced latency, improved security, scalability, and cost savings. It is being used in a variety of industries and applications, including smart cities, industrial IoT, healthcare, and autonomous vehicles. However, fog computing also poses several challenges, including security, interoperability, and complexity, which must be addressed to ensure its widespread adoption. As the number of IoT devices continues to grow, fog computing is likely to become an increasingly important computing paradigm for supporting the large amounts of data generated by these devices.

TECHNOLOGY

Next-gen chips, Amazon Q, and speedy S3

AWS re:Invent, which began on November 27 and runs to December 1, has had its usual plethora of announcements: a total of 21 at time of print.

Perhaps not surprisingly, given the huge potential impact of generative AI – ChatGPT officially turns one year old today – a lot of focus has been on the AI side for AWS’ announcements, including a major partnership inked with NVIDIA across infrastructure, software, and services.

Yet there has been plenty more announced at the Las Vegas jamboree besides. Here, CloudTech rounds up the best of the rest:

Next-generation chips

This was the other major AI-focused announcement at re:Invent: the launch of two new chips, AWS Graviton4 and AWS Trainium2, for training and running AI and machine learning (ML) models, among other customer workloads. Graviton4 shapes up against its predecessor with 30% better compute performance, 50% more cores and 75% more memory bandwidth, while Trainium2 delivers up to four times faster training than before and will be able to be deployed in EC2 UltraClusters of up to 100,000 chips.

The EC2 UltraClusters are designed to ‘deliver the highest performance, most energy efficient AI model training infrastructure in the cloud’, as AWS puts it. With them, customers will be able to train large language models in ‘a fraction of the time’ and with double the energy efficiency.

As ever, AWS points to customers who are already utilising these tools. Databricks, Epic and SAP are among the companies cited as using the new AWS-designed chips.

Zero-ETL integrations

AWS announced new Amazon Aurora PostgreSQL, Amazon DynamoDB, and Amazon Relational Database Service (Amazon RDS) for MySQL integrations with Amazon Redshift, AWS’ cloud data warehouse. The zero-ETL integrations – eliminating the need to build ETL (extract, transform, load) data pipelines – make it easier to connect and analyse transactional data across various relational and non-relational databases in Amazon Redshift.

A simple example of how zero-ETL helps is a hypothetical company that stores transactional data – time of transaction, items bought, where the transaction occurred – in a relational database, but uses a separate analytics tool to analyse data in a non-relational database. To connect the two, the company would previously have had to build ETL data pipelines, which are a time and money sink.
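For readers who have not built one, the sketch below shows the kind of hand-written ETL job that zero-ETL integrations are meant to make unnecessary. It does not use any AWS service or API: two in-memory SQLite databases stand in for the transactional store and the warehouse, and the table names, columns, and sample rows are all invented for illustration.

```python
import sqlite3

# Two in-memory databases stand in for the transactional store and the warehouse.
source = sqlite3.connect(":memory:")
warehouse = sqlite3.connect(":memory:")

source.execute(
    "CREATE TABLE transactions (sold_at TEXT, item TEXT, store TEXT, amount_cents INTEGER)"
)
source.executemany(
    "INSERT INTO transactions VALUES (?, ?, ?, ?)",
    [
        ("2023-11-27T09:00", "coffee", "london", 350),
        ("2023-11-27T09:05", "tea", "london", 250),
        ("2023-11-27T10:00", "coffee", "leeds", 300),
    ],
)
warehouse.execute("CREATE TABLE sales_by_store (store TEXT PRIMARY KEY, total_cents INTEGER)")

# Extract: read rows out of the transactional database.
rows = source.execute("SELECT store, amount_cents FROM transactions").fetchall()

# Transform: reshape them into the form the analytics side wants.
totals = {}
for store, amount_cents in rows:
    totals[store] = totals.get(store, 0) + amount_cents

# Load: write the reshaped rows into the warehouse table.
warehouse.executemany("INSERT INTO sales_by_store VALUES (?, ?)", sorted(totals.items()))
warehouse.commit()

result = warehouse.execute(
    "SELECT store, total_cents FROM sales_by_store ORDER BY store"
).fetchall()
print(result)
```

Even this toy version has three stages to schedule, monitor, and keep in step with schema changes, which is the overhead the article describes as a time and money sink.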

The latest integrations “build on AWS’s zero-ETL foundation… so customers can quickly and easily connect all of their data, no matter where it lives,” the company said.

Amazon S3 Express One Zone

AWS announced the general availability of Amazon S3 Express One Zone, a new storage class purpose-built for customers’ most frequently accessed data. Data access speed is up to 10 times faster and request costs up to 50% lower than standard S3. Companies can also opt to co-locate their Amazon S3 Express One Zone data in the same availability zone as their compute resources.

Companies and partners who are using Amazon S3 Express One Zone include ChaosSearch, Cloudera, and Pinterest.

Amazon Q

A new product, and an interesting pivot, again with generative AI at its core. Amazon Q was announced as a ‘new type of generative AI-powered assistant’ which can be tailored to a customer’s business. “Customers can get fast, relevant answers to pressing questions, generate content, and take actions – all informed by a customer’s information repositories, code, and enterprise systems,” AWS added. The service also can assist companies building on AWS, as well as companies using AWS applications for business intelligence, contact centres, and supply chain management.

Customers cited as early adopters include Accenture, BMW and Wunderkind.

Want to learn more about cybersecurity and the cloud from industry leaders? Check out Cyber Security & Cloud Expo taking place in Amsterdam, California, and London. Explore other upcoming enterprise technology events and webinars powered by TechForge here.

TECHNOLOGY

HCLTech and Cisco create collaborative hybrid workplaces

Digital comms specialist Cisco and global tech firm HCLTech have teamed up to launch Meeting-Rooms-as-a-Service (MRaaS).

Available on a subscription model, this solution modernises legacy meeting rooms and enables users to join meetings from any meeting solution provider using Webex devices.

The MRaaS solution helps enterprises simplify the design, implementation and maintenance of integrated meeting rooms, enabling seamless collaboration for their globally distributed hybrid workforces.

Rakshit Ghura, senior VP and Global head of digital workplace services, HCLTech, said: “MRaaS combines our consulting and managed services expertise with Cisco’s proficiency in Webex devices to change the way employees conceptualise, organise and interact in a collaborative environment for a modern hybrid work model.

“The common vision of our partnership is to elevate the collaboration experience at work and drive productivity through modern meeting rooms.”

Alexandra Zagury, VP of partner managed and as-a-Service Sales at Cisco, said: “Our partnership with HCLTech helps our clients transform their offices through cost-effective managed services that support the ongoing evolution of workspaces.

“As we reimagine the modern office, we are making it easier to support collaboration and productivity among workers, whether they are in the office or elsewhere.”

Cisco’s Webex collaboration devices harness the power of artificial intelligence to offer intuitive, seamless collaboration experiences, enabling meeting rooms with smart features such as meeting zones, intelligent people framing, optimised attendee audio and background noise removal, among others.


TECHNOLOGY

Canonical releases low-touch private cloud MicroCloud

Canonical has announced the general availability of MicroCloud, a low-touch, open source cloud solution. MicroCloud is part of Canonical’s growing cloud infrastructure portfolio.

It is purpose-built for scalable clusters and edge deployments for all types of enterprises. It is designed with simplicity, security and automation in mind, minimising the time and effort to both deploy and maintain it. Conveniently, enterprise support for MicroCloud is offered as part of Canonical’s Ubuntu Pro subscription, with several support tiers available, and priced per node.

MicroClouds are optimised for repeatable and reliable remote deployments. A single command initiates the orchestration and clustering of various components with minimal involvement by the user, resulting in a fully functional cloud within minutes. This simplified deployment process significantly reduces the barrier to entry, putting a production-grade cloud at everyone’s fingertips.

Juan Manuel Ventura, head of architectures & technologies at Spindox, said: “Cloud computing is not only about technology, it’s the beating heart of any modern industrial transformation, driving agility and innovation. Our mission is to provide our customers with the most effective ways to innovate and bring value; having a complexity-free cloud infrastructure is one important piece of that puzzle. With MicroCloud, the focus shifts away from struggling with cloud operations to solving real business challenges.”

In addition to seamless deployment, MicroCloud prioritises security and ease of maintenance. All MicroCloud components are built with strict confinement for increased security, with over-the-air transactional updates that preserve data and roll back on errors automatically. Upgrades to newer versions are handled automatically and without downtime, with the mechanisms to hold or schedule them as needed.

With this approach, MicroCloud caters to both on-premises clouds and edge deployments at remote locations, allowing organisations to use the same infrastructure primitives and services wherever they are needed. It is suitable for business-in-branch office locations or industrial use inside a factory, as well as distributed locations where the focus is on replicability and unattended operations.

Cedric Gegout, VP of product at Canonical, said: “As data becomes more distributed, the infrastructure has to follow. Cloud computing is now distributed, spanning across data centres, far and near edge computing appliances. MicroCloud is our answer to that.

“By packaging known infrastructure primitives in a portable and unattended way, we are delivering a simpler, more prescriptive cloud experience that makes zero-ops a reality for many industries.”

MicroCloud’s lightweight architecture makes it usable on both commodity and high-end hardware, with several ways to further reduce its footprint depending on your workload needs. In addition to the standard Ubuntu Server or Desktop, MicroClouds can be run on Ubuntu Core – a lightweight OS optimised for the edge. With Ubuntu Core, MicroClouds are a perfect solution for far-edge locations with limited computing capabilities. Users can choose to run their workloads using Kubernetes or via system containers. System containers based on LXD behave similarly to traditional VMs but consume fewer resources while providing bare-metal performance.

Coupled with Canonical’s Ubuntu Pro + Support subscription, MicroCloud users can benefit from an enterprise-grade open source cloud solution that is fully supported and offers better economics. An Ubuntu Pro subscription offers security maintenance for the broadest collection of open-source software available from a single vendor today. It covers over 30k packages with a consistent security maintenance commitment, and additional features such as kernel livepatch, systems management at scale, certified compliance and hardening profiles enabling easy adoption for enterprises. With per-node pricing and no hidden fees, customers can rest assured that their environment is secure and supported without the expensive price tag typically associated with cloud solutions.

