Unlocking Growth Through Enterprise SaaS SEO

Enterprise SaaS SEO is the practice of improving the visibility and rankings of an enterprise SaaS website in search engines. The goal is to attract people who are looking for solutions that your product can provide.

It works like this:

Let’s dive in.

There are many reasons SaaS companies should focus on SEO. These include:

- Credibility. With every new touchpoint, you have the opportunity to be seen as the expert and dominant offering. This helps with the perception of your brand and makes your product an easier choice.

- Growth. SEO brings you increased visibility and brand awareness.

- Revenue. Every touchpoint is a chance at a conversion or sale. You’ll increase customer lifetime value and reduce customer acquisition costs.

- Supports other marketing channels. For instance, you can use paid advertising to retarget an audience based on the content they consumed on your website.

Some common SEO challenges include:

- Long sales cycles. Enterprise SaaS products often have longer and more complex sales cycles. You need content to support users at each stage of their journey.

- Stiff competition. There’s a lot of money at stake, and your competitors are also making investments to improve their results.

- Complexity. Everything is more complex. The organization, the website, coordination between teams, globalization, etc.

- Getting buy-in for SEO. If the company you’re working with doesn’t see the value of SEO, you’ll lose resources and prioritization to whatever the company considers more important.

Here are a few examples of enterprise SaaS companies doing SEO well.

Ahrefs

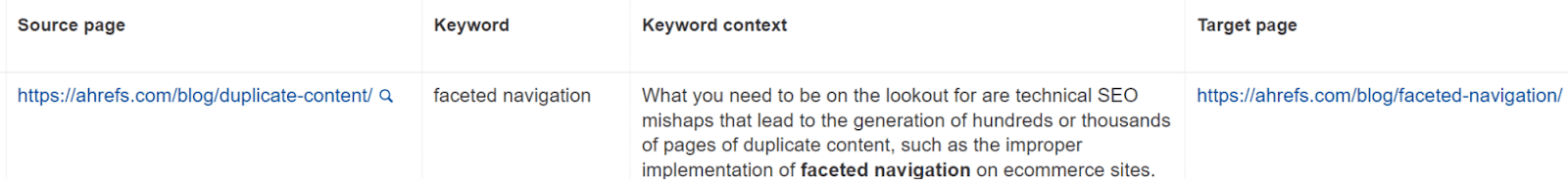

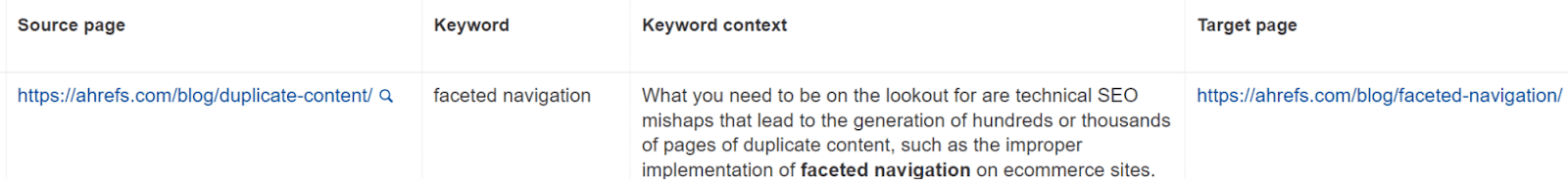

We are usually the example people use for product-led SEO. We combine top-of-funnel informational content with middle-of-funnel solution-aware content. Every blog and video teaches people what something is and shows how Ahrefs can help them with their tasks or fix their problems.

Here’s an example of how we incorporate this naturally into our content.

We also have free tools, data studies, programmatic plays that incorporate our data, content targeting specific verticals, and more.

Notion

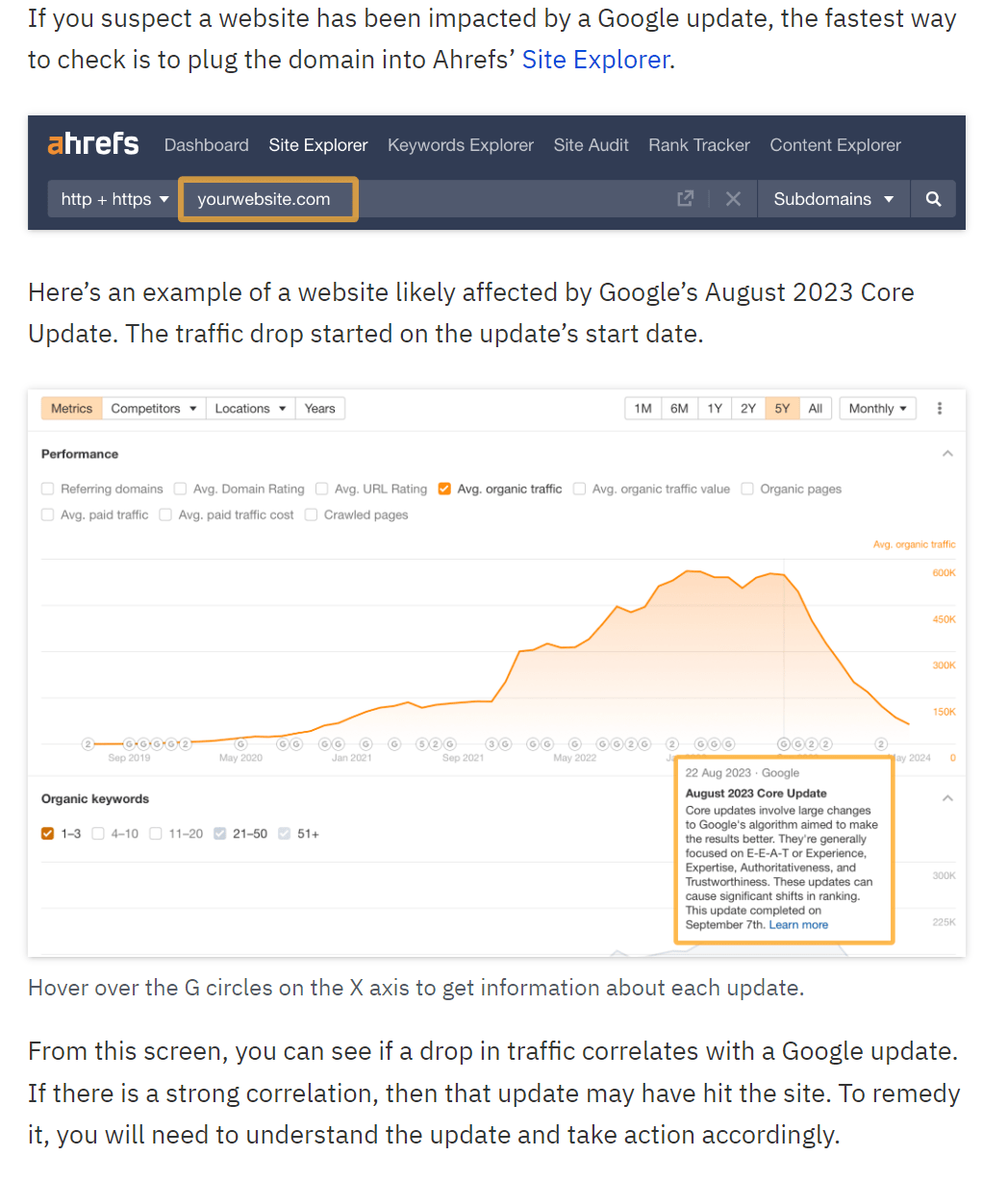

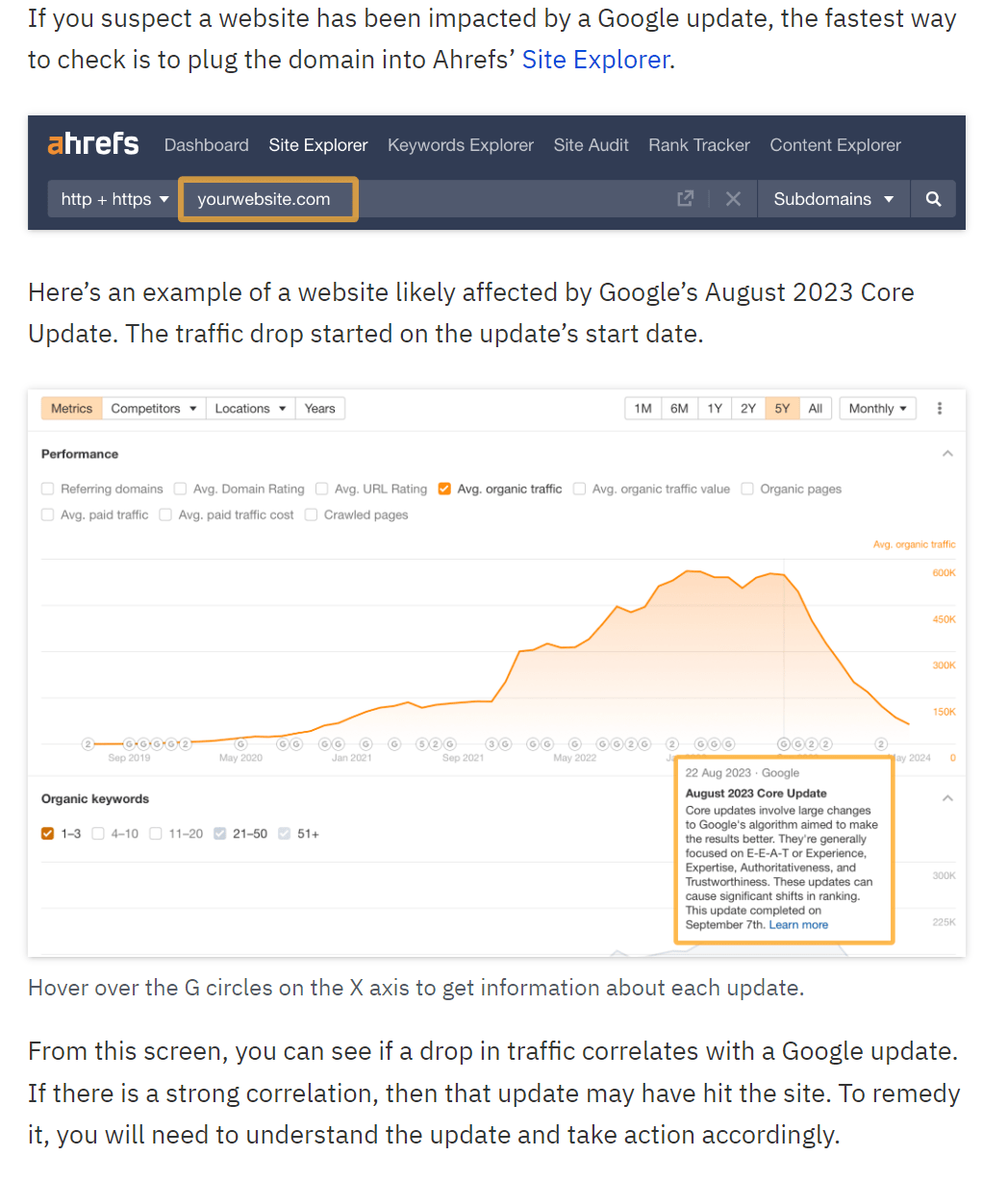

Their templates section is a perfect example of showcasing how to use the product for different purposes.

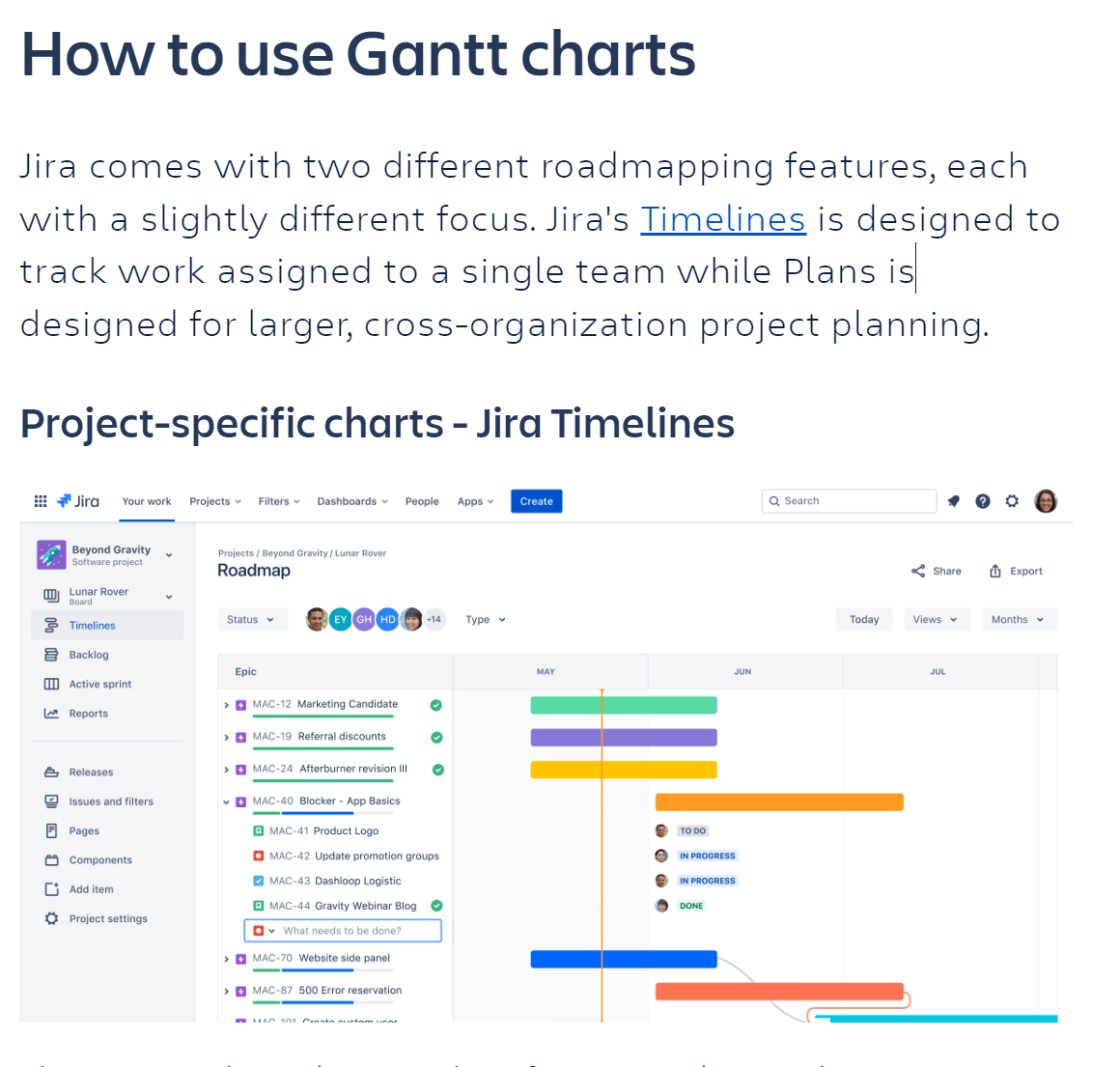

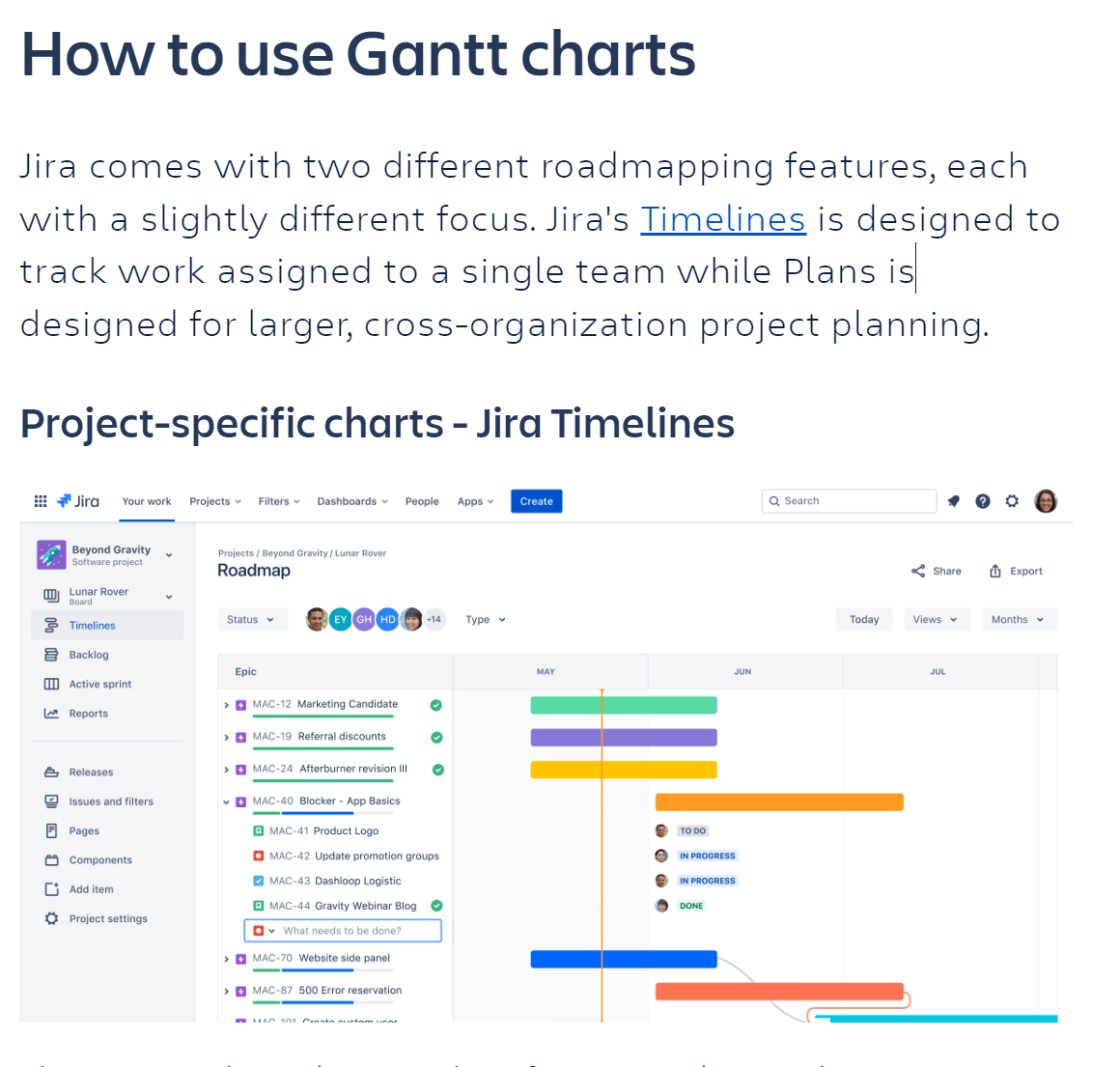

Atlassian

This is another company whose content is well-aligned with user needs and showcases the product.

Enterprise content marketing involves creating and sharing relevant content to attract, engage, and retain an organization’s target audience.

The highest ROI will be creating product-led content that helps the reader solve their problems using your product. This creates a natural path for new subscriptions and is the basis of our strategy at Ahrefs.

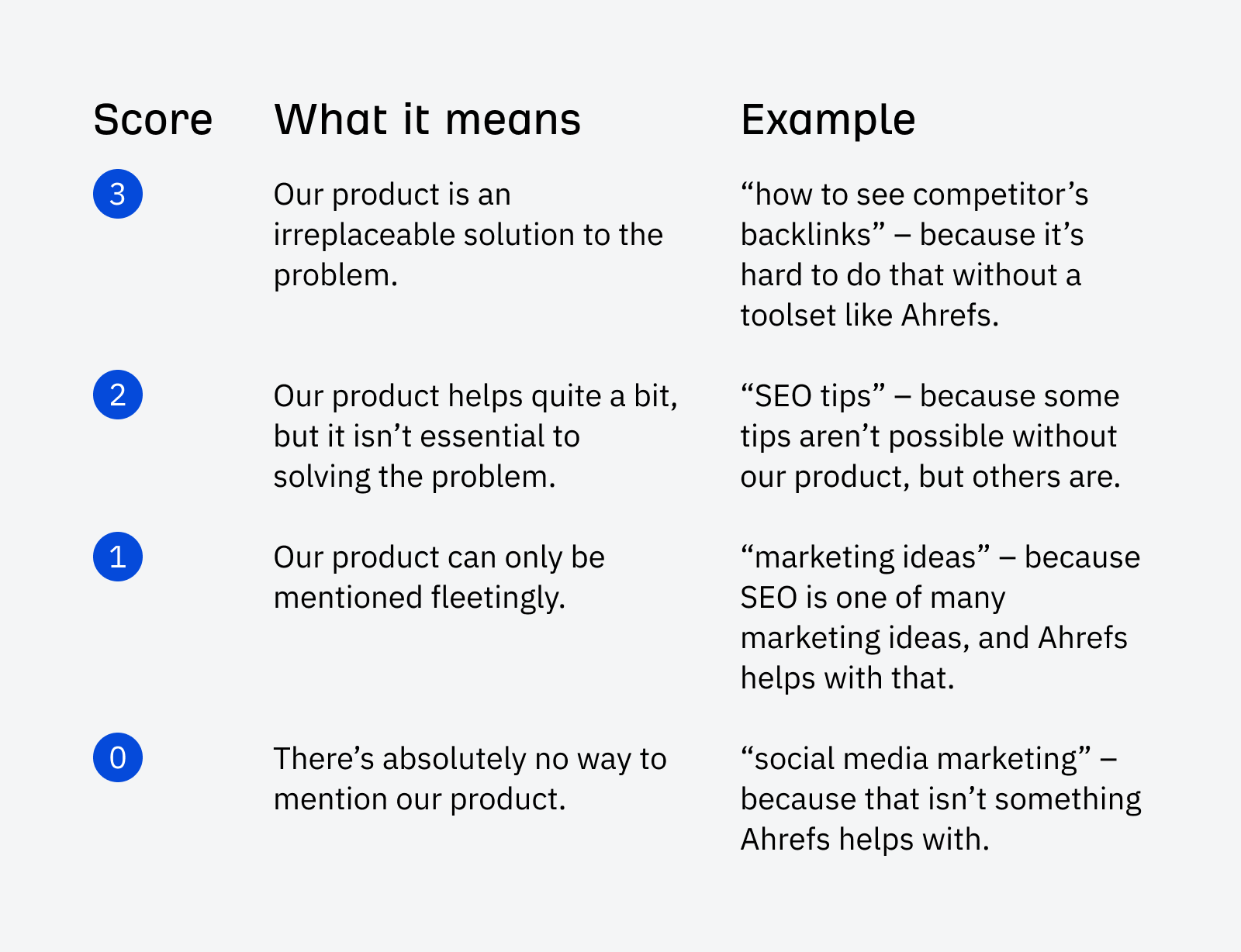

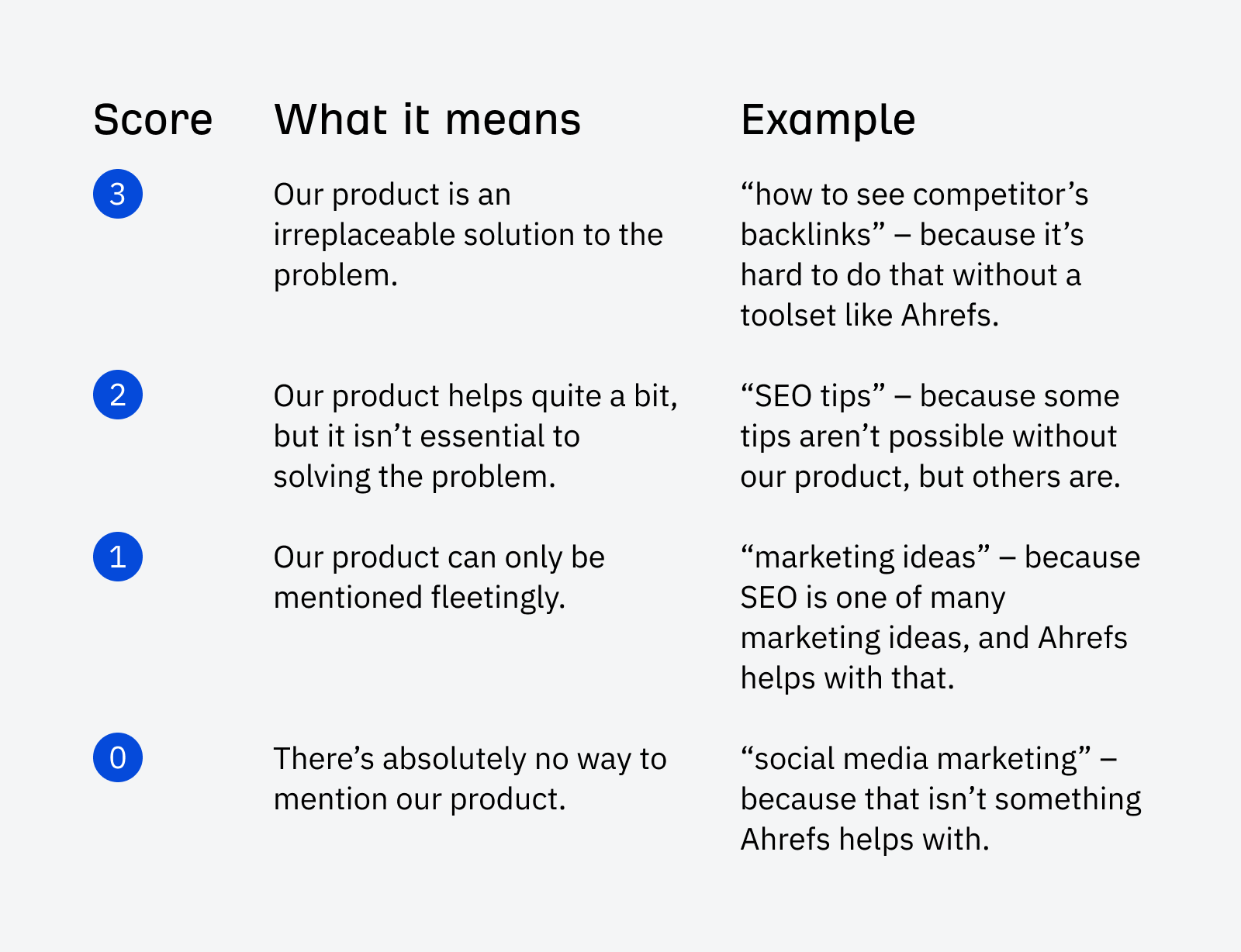

We give every article we’re planning to write for the blog a business potential score. This score is our estimate of how valuable it is to pitch our product for a given topic.

Making your content marketing successful takes a lot of work. Here are some things you can do.

Create new content

There are many different types of content you can create, but with limited resources, it’s usually best to start out with bottom-of-funnel transactional content and then move to informational content and videos. After you’ve got more resources, you can create things like virtual events, courses, e-books, case studies, white papers, podcasts, or even magazines or books.

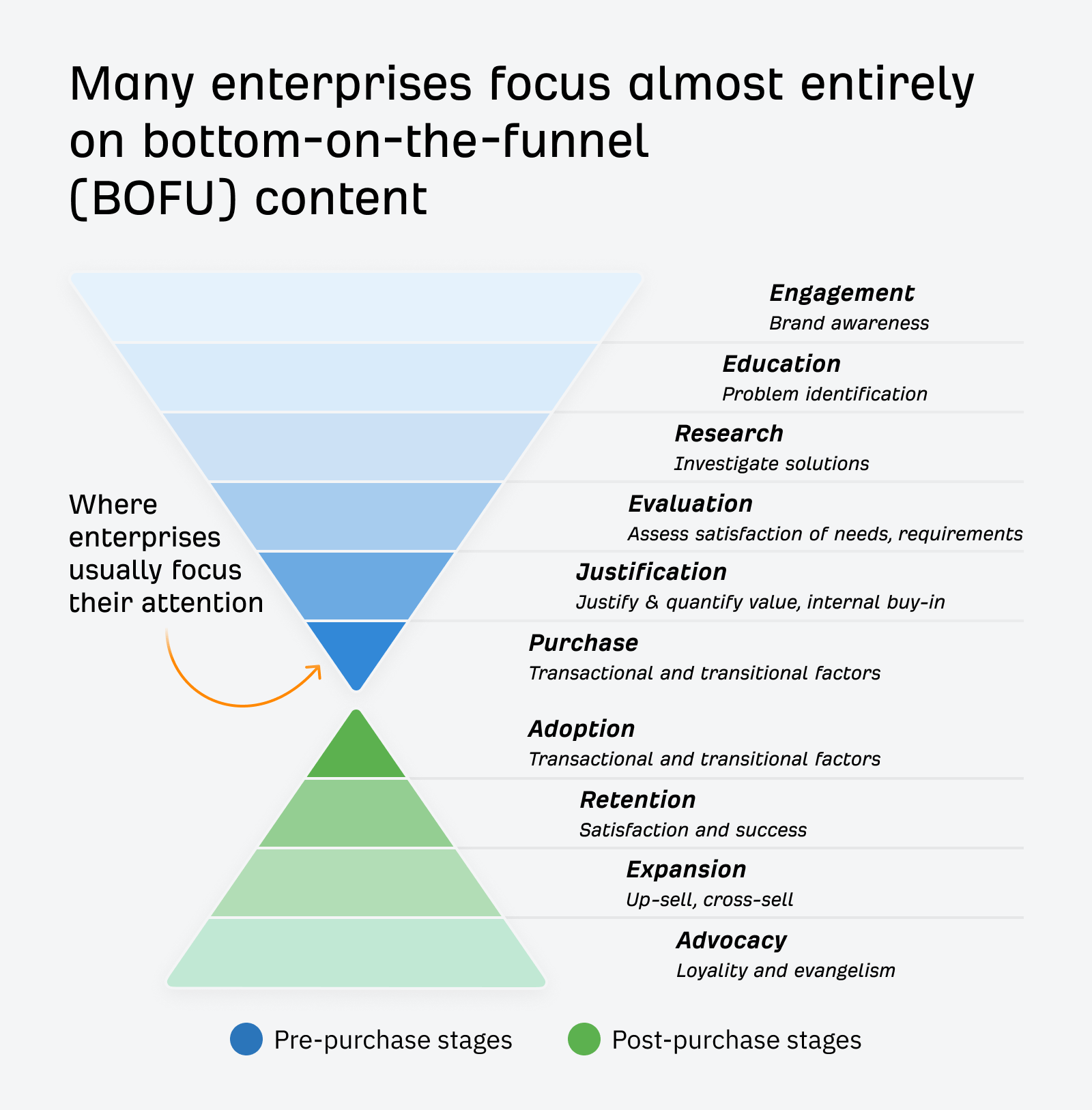

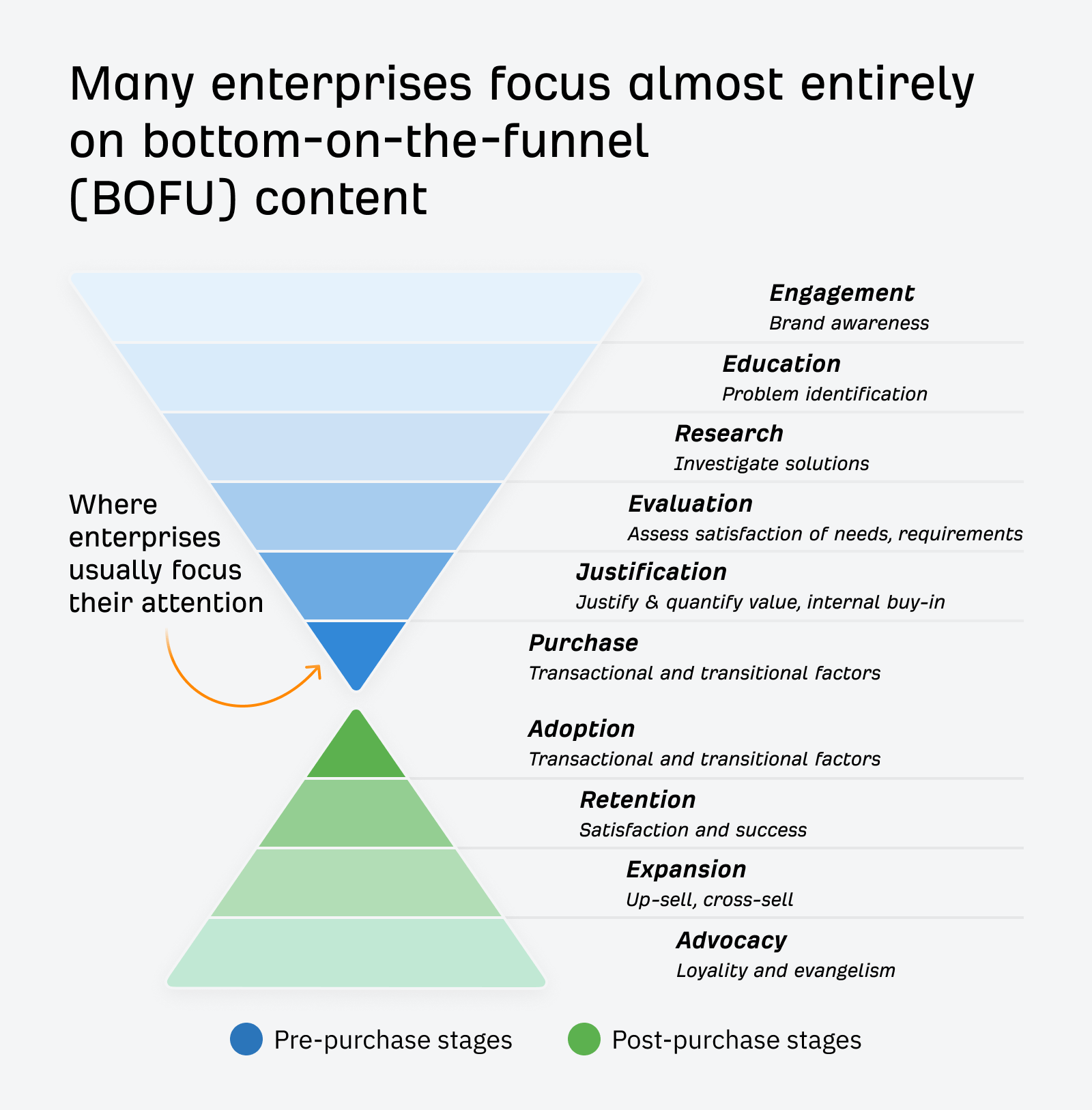

The sales process for enterprise companies is typically longer. Many companies want to skip top-of-the-funnel and informational content and focus more on the end-of-funnel traffic that converts. In doing so, they narrow their pipeline and give their competitors opportunities to be seen as experts instead of them.

Starting with bottom-of-the-funnel content makes sense, but eventually, you’ll want to create that top-of-the-funnel content and expand your pipeline.

When creating content, you have to find a process that works best for your company and content creators. That will change depending on who is creating your content.

What content should you create?

I like to start with my competitors’ top pages rather than starting research with a list of keywords. If you export and combine this data, you end up with a list of your competitors’ most successful content, and you can start with the content you know already works and is likely driving value to a competitor. I talk about my process for this in our article on how to create great content.

Every team I ever worked with, whether product-focused or marketing-focused, loved to see this data. You may want to keep track of your content creation in Google Sheets or Airtable.
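As a rough illustration of the export-and-combine step, here is a minimal Python sketch. It assumes each competitor export is a CSV with `URL`, `Top keyword`, and `Traffic` columns. Those column names and the sample rows are hypothetical, so adjust them to whatever your exports actually contain.

```python
import csv
import io

# Hypothetical exports; in practice these would be CSV files downloaded per competitor.
EXPORTS = {
    "competitor-a.com": (
        "URL,Top keyword,Traffic\n"
        "https://competitor-a.com/guide,seo guide,1200\n"
        "https://competitor-a.com/tools,free seo tools,800\n"
    ),
    "competitor-b.com": (
        "URL,Top keyword,Traffic\n"
        "https://competitor-b.com/blog/seo,seo guide,950\n"
    ),
}


def combine_top_pages(exports):
    """Merge per-competitor exports into one list sorted by estimated traffic."""
    rows = []
    for competitor, text in exports.items():
        for row in csv.DictReader(io.StringIO(text)):
            rows.append({
                "competitor": competitor,
                "url": row["URL"],
                "keyword": row["Top keyword"],
                "traffic": int(row["Traffic"]),
            })
    return sorted(rows, key=lambda r: r["traffic"], reverse=True)


combined = combine_top_pages(EXPORTS)
for page in combined:
    print(page["traffic"], page["keyword"], page["url"])
```

The combined list can then be pasted into whatever tracker you use for content planning.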

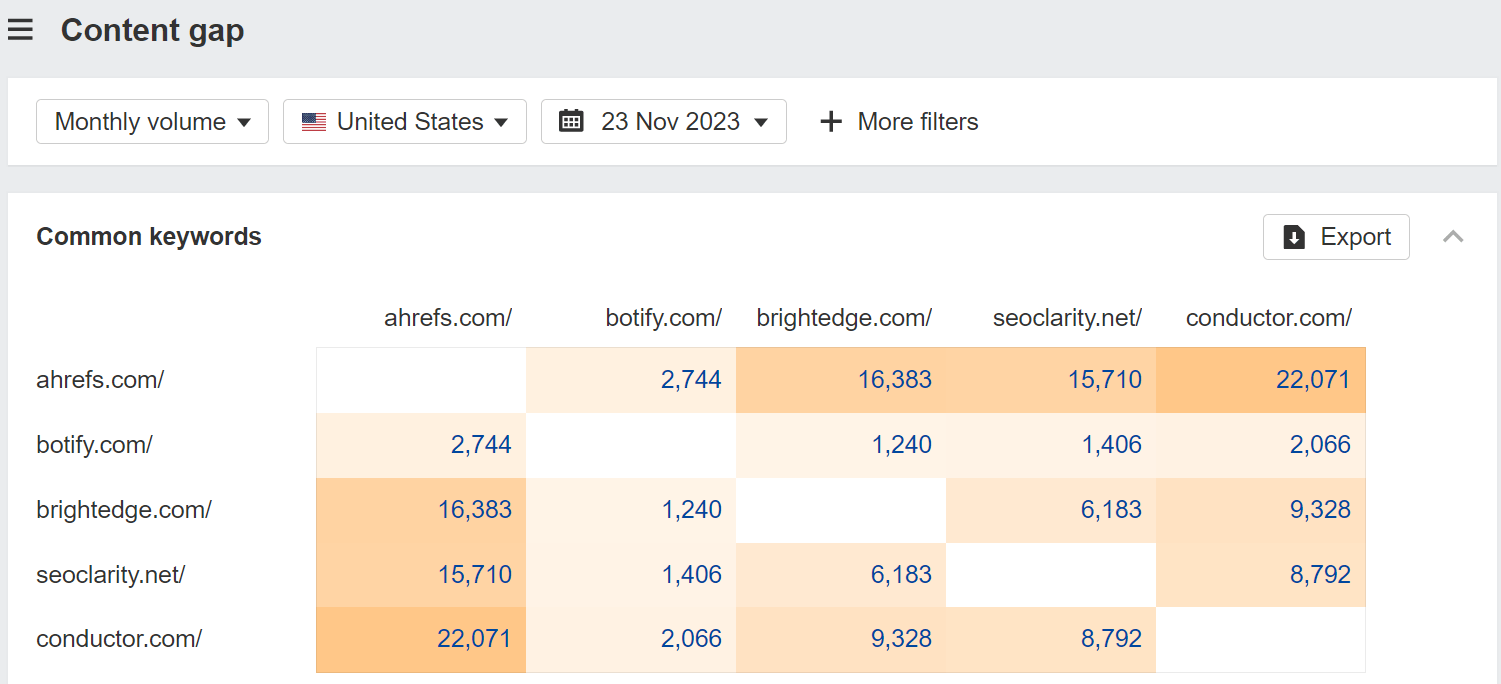

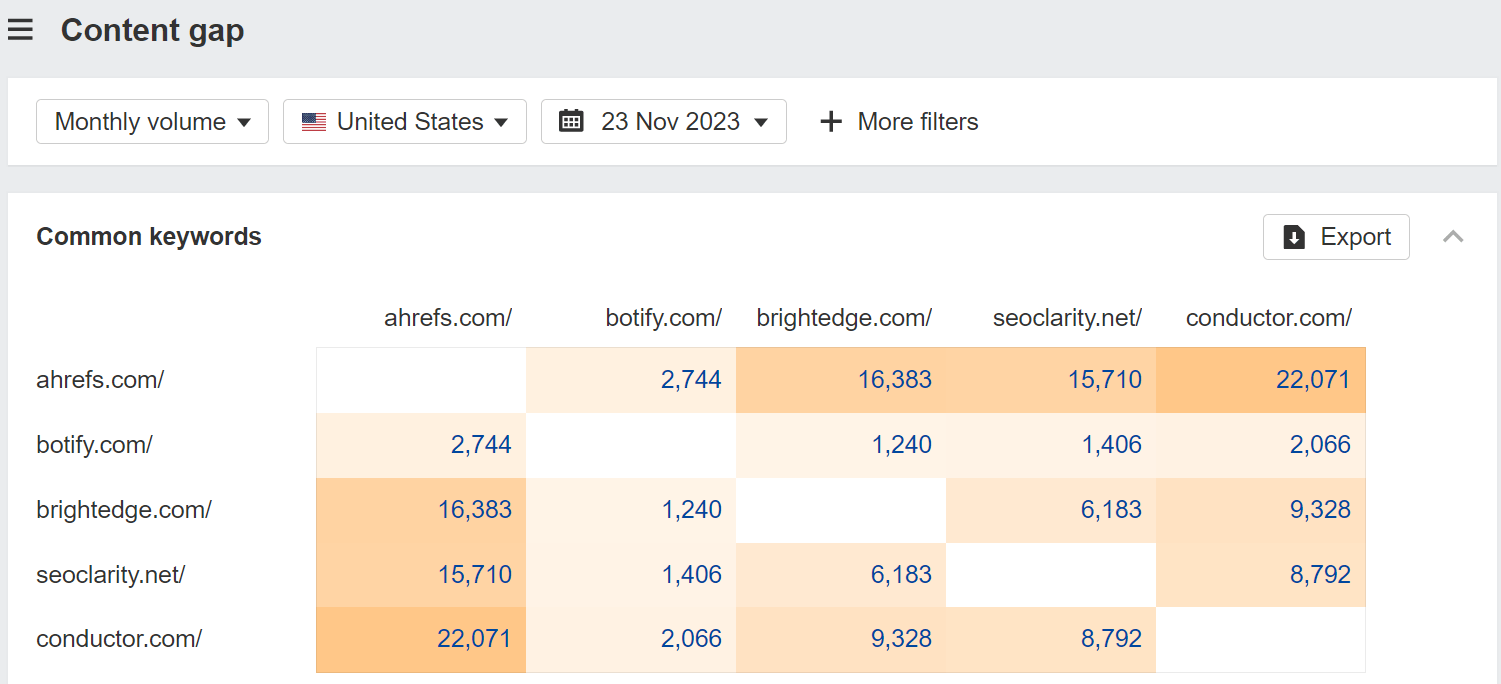

Alternatively, you can use the Content Gap tool to find these opportunities, but you may see some repeated opportunities because of similar keywords. We will soon update this to add clustering and help reduce this extra noise.

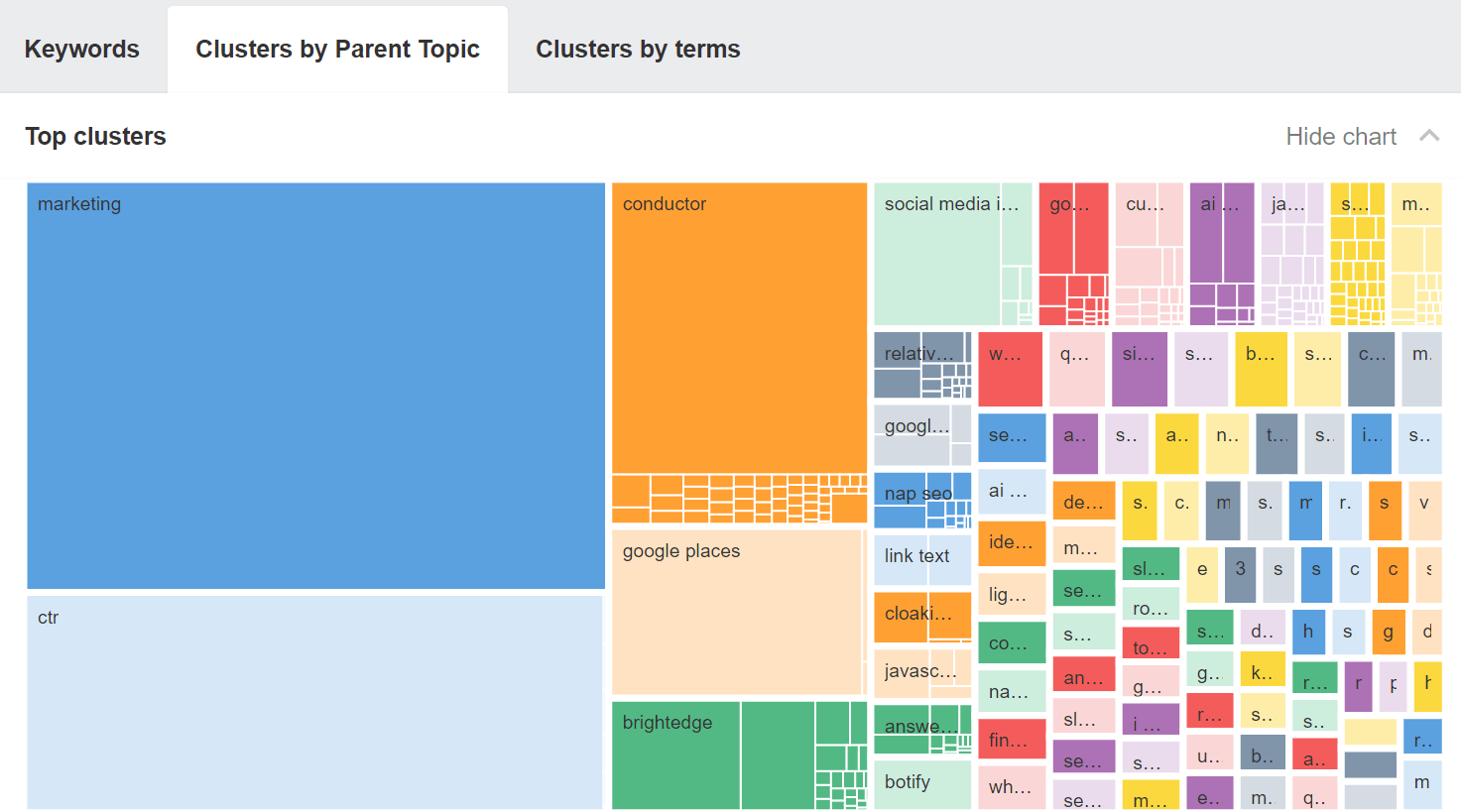

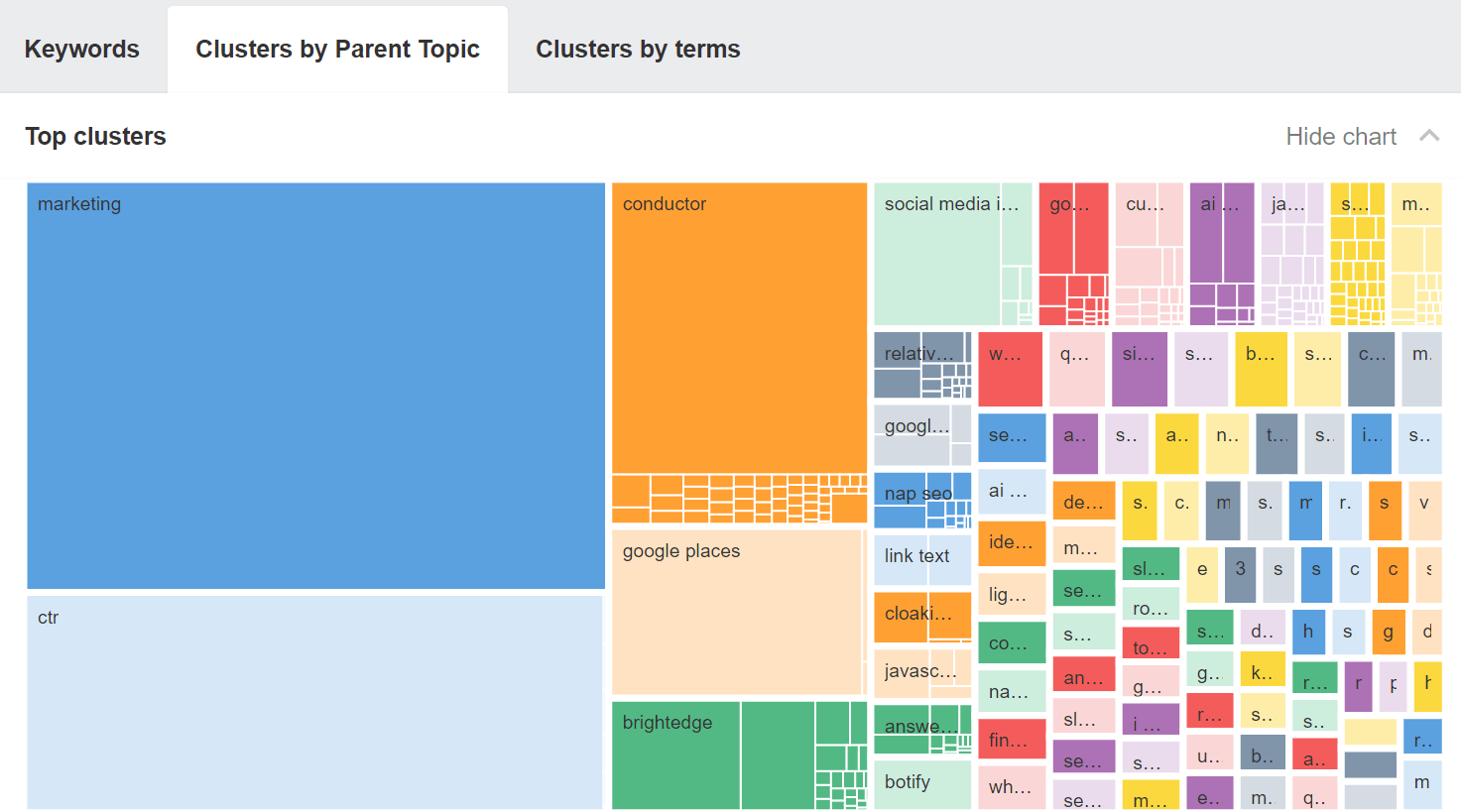

For now, you may want to export the keywords from the Content Gap tool, paste them into Keywords Explorer, and go to the “Clusters by Parent Topic” tab. This should give you actual content opportunities you may not be covering.

SEOs creating content

For SEOs writing the content, I recommend you talk to the experts or interview them to get their insights. They may have papers, presentations, podcasts, or webinars you can repurpose. The sales team is another great source of information. I’ll also look at what people search around a topic and what other pages cover.

A lot of organizations create copycat content, but that’s just more content that’s the same as what is already out there. This isn’t future-proof. I encourage you to do better. If you can put in a bit more work and add to the information that already exists, your content will be more successful.

Writers creating content

You likely have a team of people who create the content, and you may be able to empower them to do this process themselves.

One of the things that I liked to use with content teams was a card-sorting exercise. Take the data you’re looking at around what people search and what the top pages talk about, and put them on index cards.

Have your content writers organize this in a way that makes sense to them. They’re going to be grouping your data into topics and subtopics and coming up with the content sections or pages they should write.

This helps train people to do this task themselves, and there’s no right or wrong answer as to how it should be organized. You can also show how top pages cover this information as confirmation that it works. As long as you’re writing about what people are looking for, you’re likely to be successful.

Alternatively, your SEOs can provide writers with easy-to-digest outlines or content briefs that cover what should be talked about in the articles.

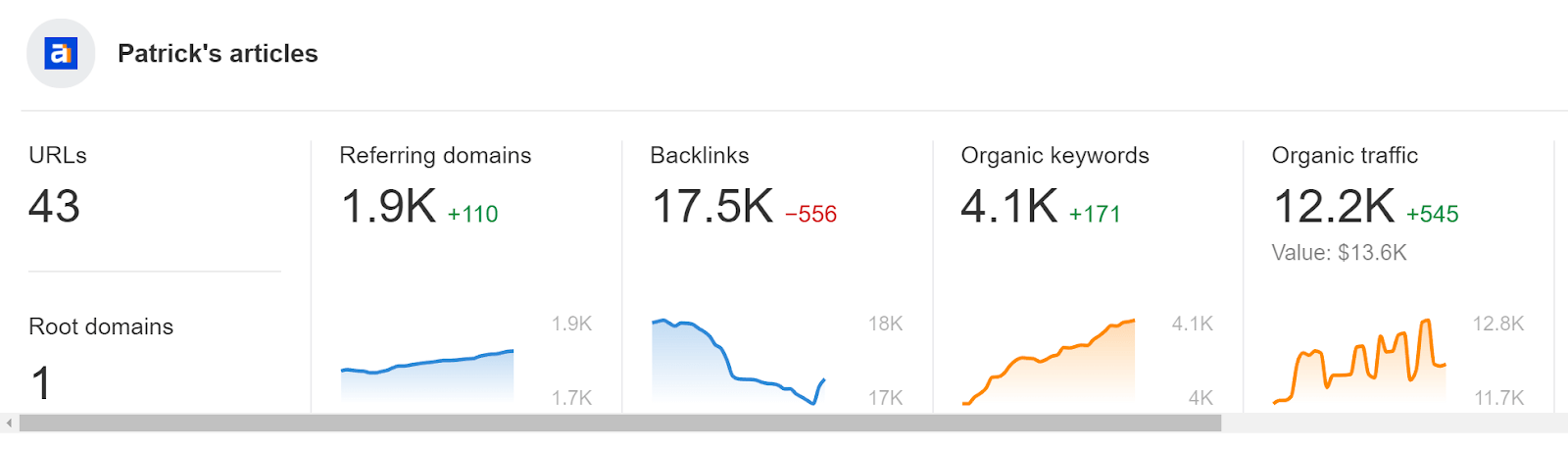

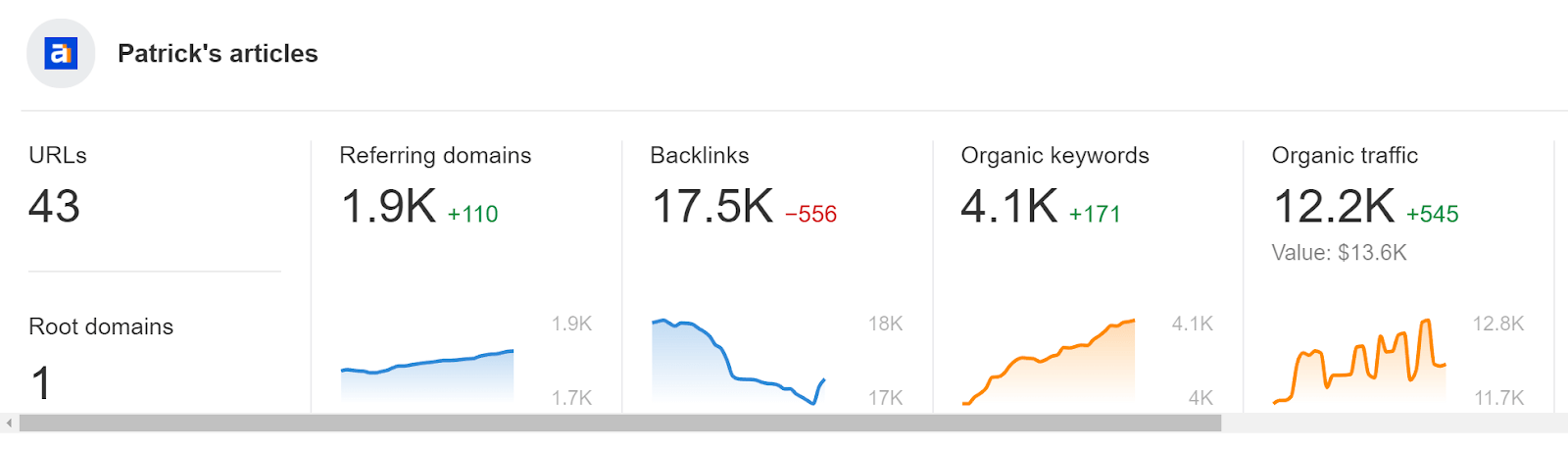

To see how each author or team is doing, you can create Portfolios. This will help identify star performers or writers or teams that might need some additional help.

Experts creating content

If your employees want to write content, you need to find a way to empower them to do so. These are your experts, and while the content they create may require some editing, the insights from these employees are valuable and may not be anywhere else.

If your experts don’t have the time to write content, another option is to interview them or have them review the content you create. Most people are usually happy to give quick insights verbally, which you can then use in your content.

Improve existing content

Making your existing content better can lead to quick wins. Here are some things you can look for.

Content with declining traffic

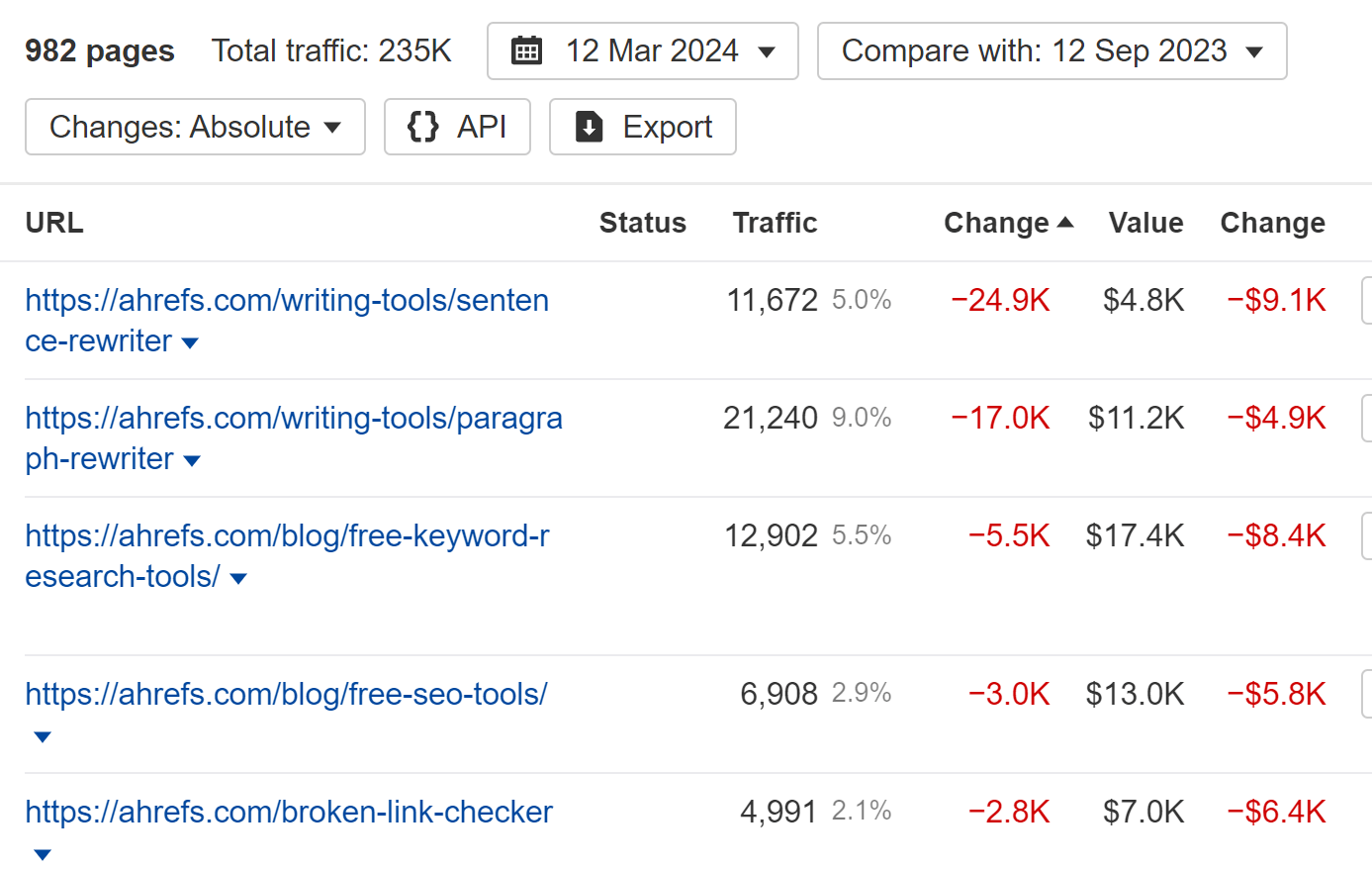

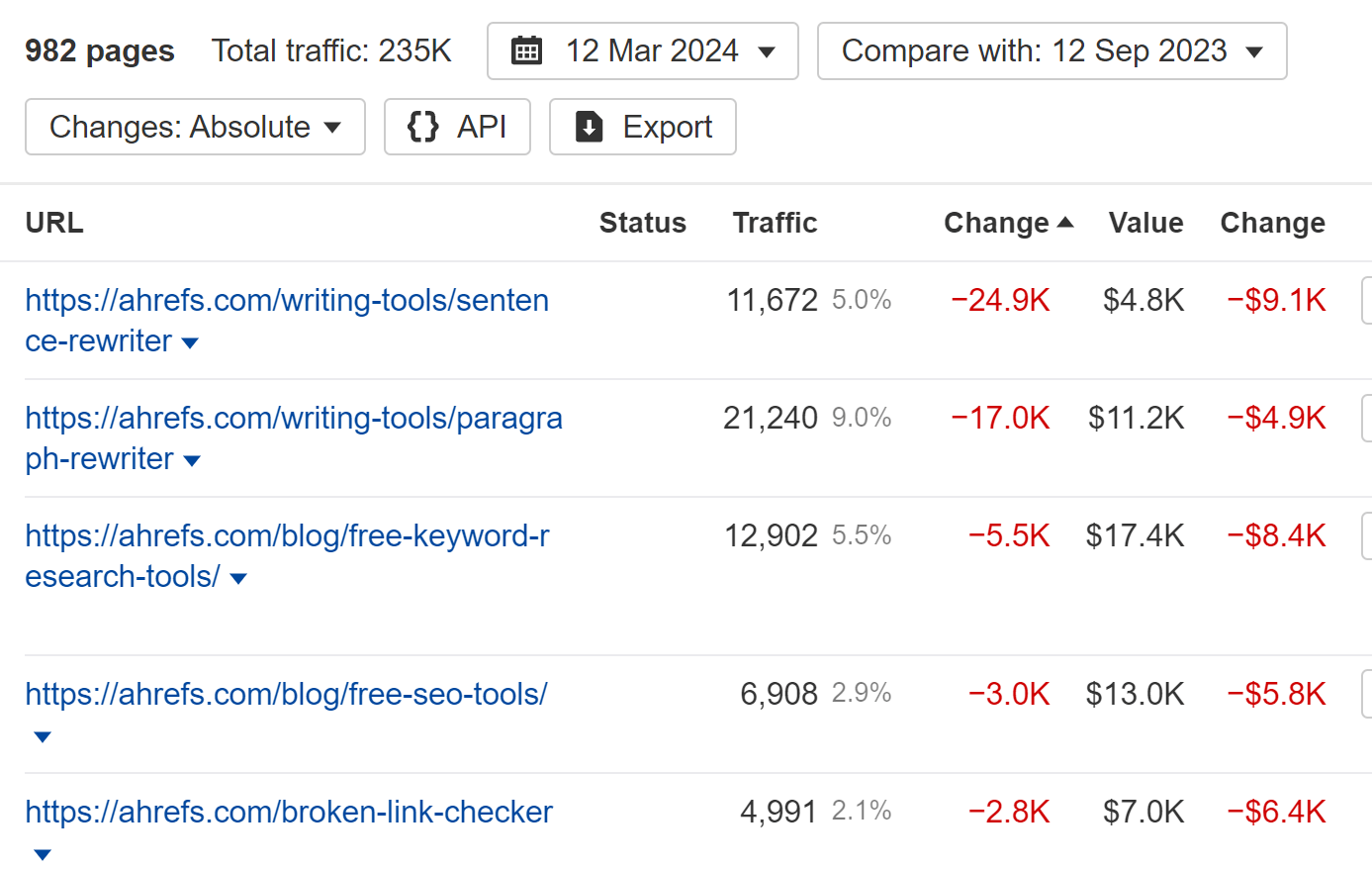

Apply a filter for “Traffic: Declined” in the Top pages report in Site Explorer and set your time period for the last six months or a year. Take a look at pages that lost traffic to see which ones are important to you and that you think you can improve.

Low-hanging fruit

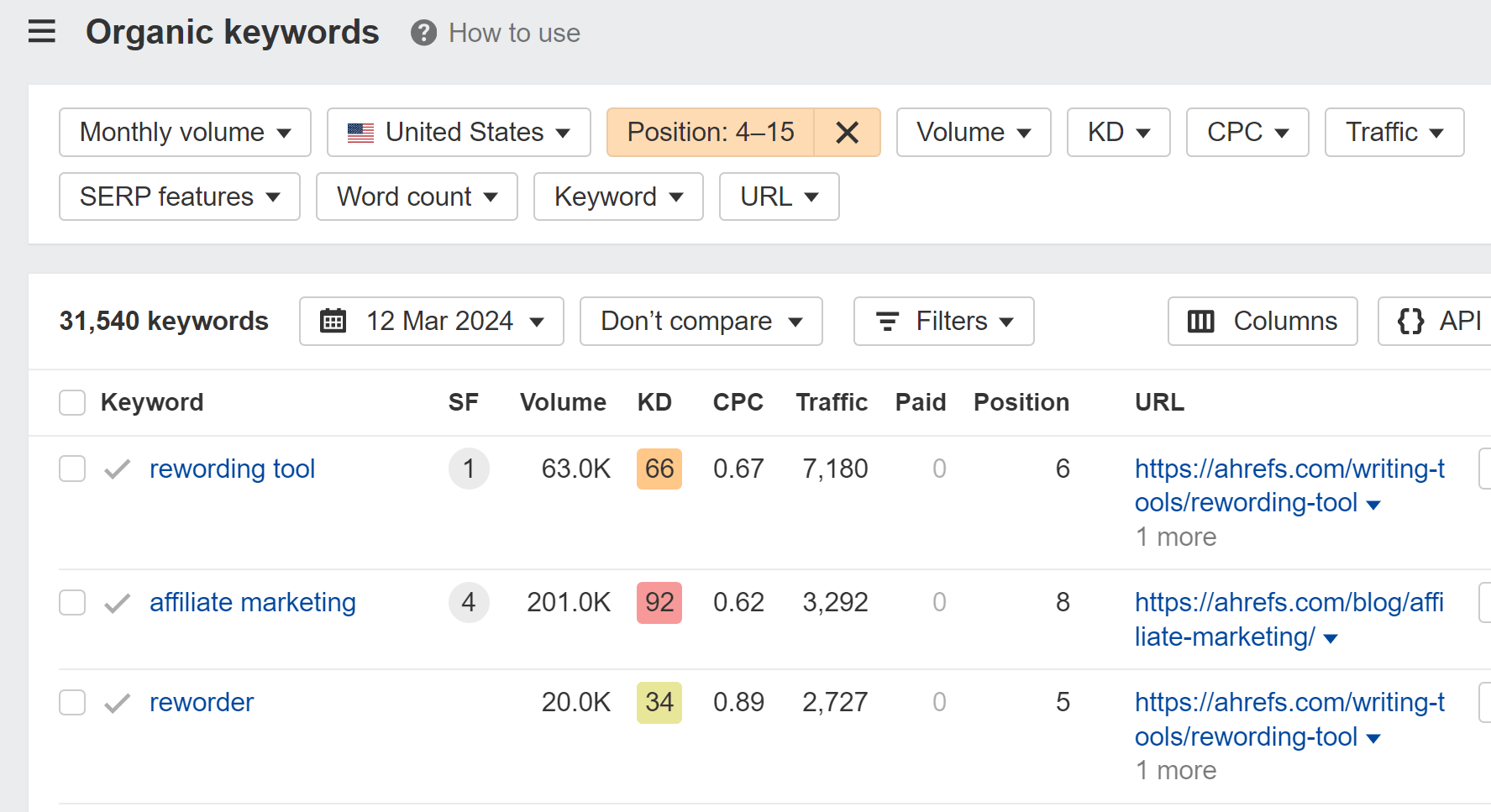

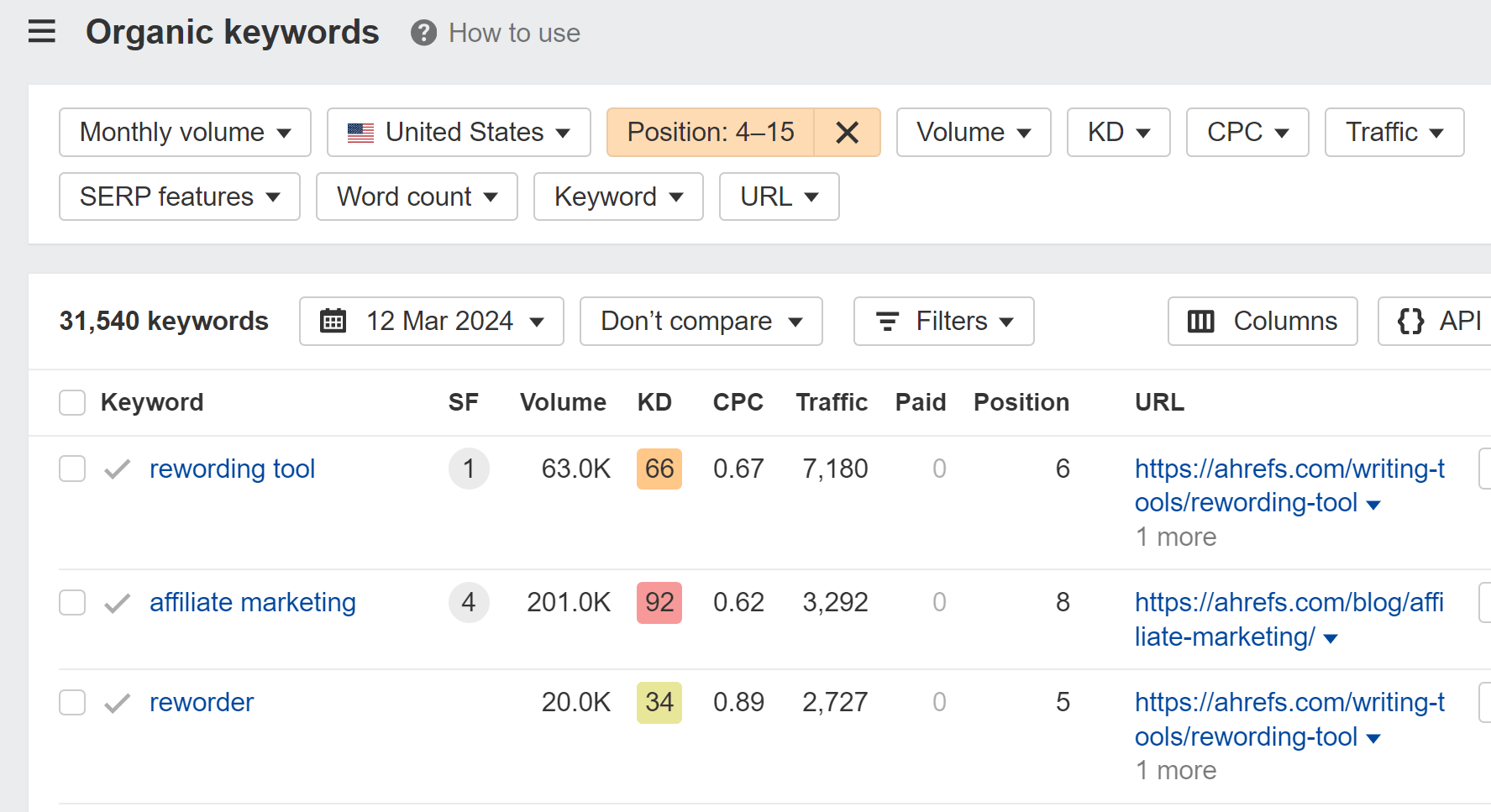

One common way to prioritize content improvements is to check for low-hanging opportunities, like pages ranking in positions 4-15 for their main keyword. You might be able to quickly improve these pages’ content to rank higher and get more traffic. Use Google Search Console or the Organic Keywords report in Site Explorer to find pages that fit the bill.
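If you export your rankings, the position filter is easy to reproduce yourself. The sketch below assumes a simple list of (page, main keyword, position) rows. The sample data and the `low_hanging` helper are made up for illustration.

```python
# Hypothetical rows: (page URL, main keyword, current position).
rankings = [
    ("/blog/seo-audit", "seo audit", 3),
    ("/blog/keyword-research", "keyword research", 7),
    ("/blog/link-building", "link building", 15),
    ("/blog/anchor-text", "anchor text", 22),
]


def low_hanging(rows, best=4, worst=15):
    """Return pages whose main keyword ranks between `best` and `worst`, inclusive."""
    hits = [r for r in rows if best <= r[2] <= worst]
    # Pages closest to the top are listed first.
    return sorted(hits, key=lambda r: r[2])


candidates = low_hanging(rankings)
for url, keyword, position in candidates:
    print(position, keyword, url)
```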

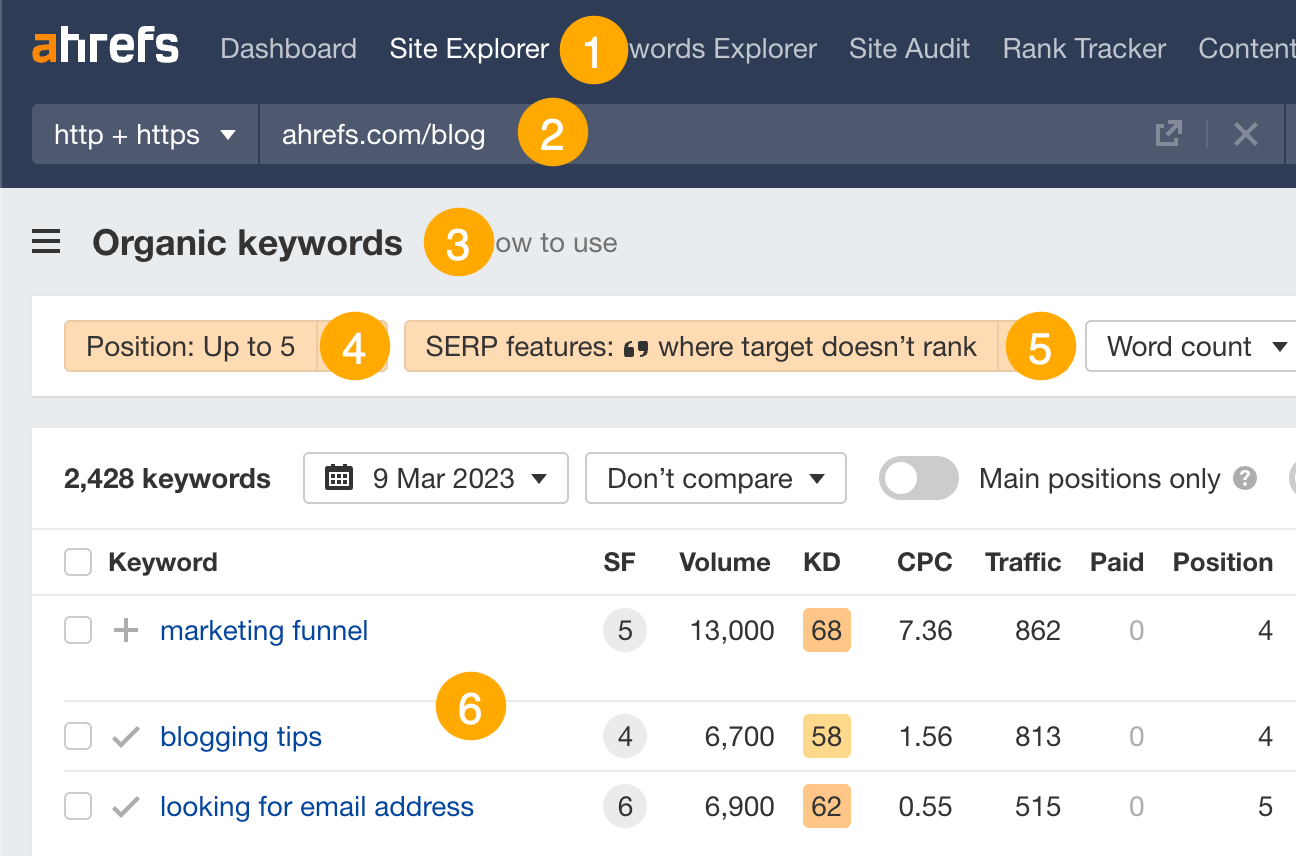

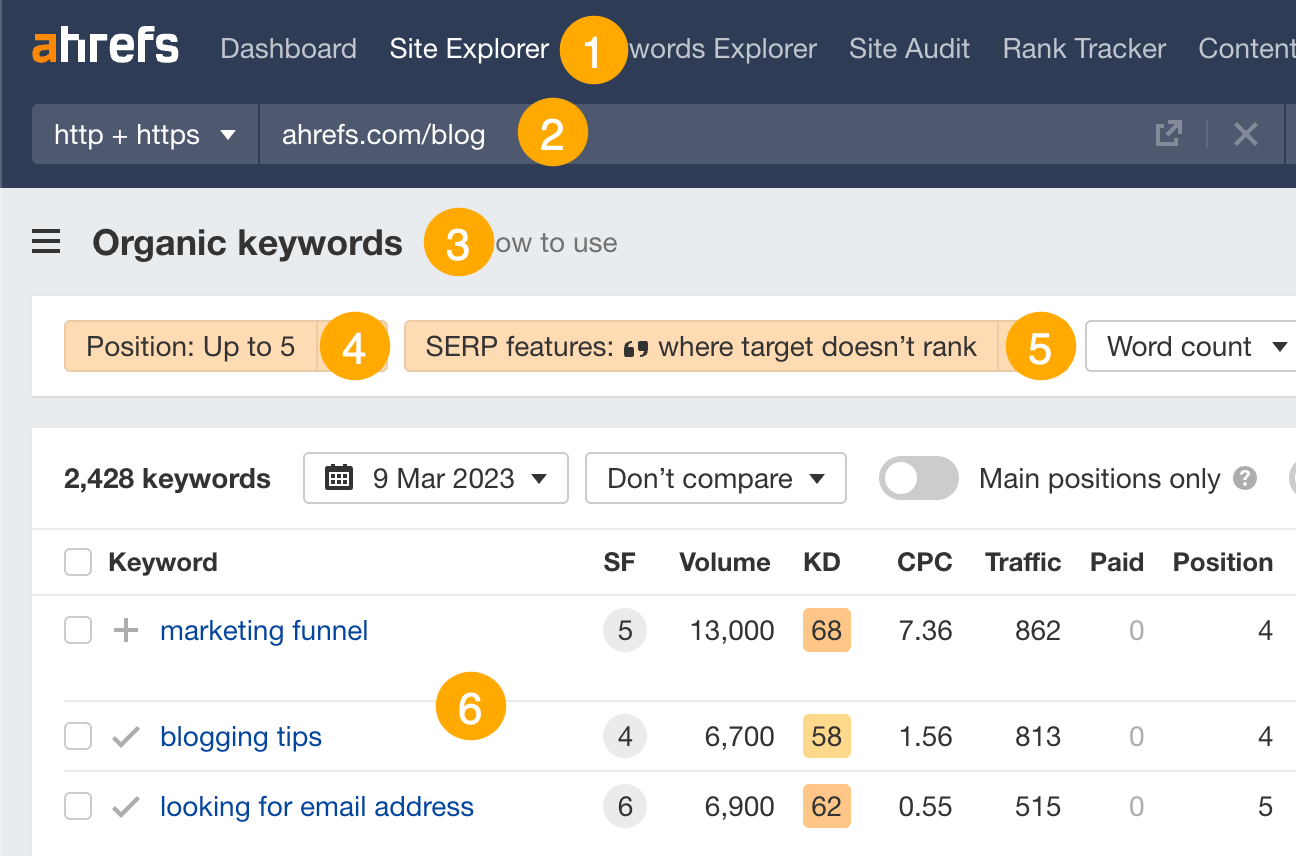

Optimize for featured snippets

For informational content, targeting featured snippets can skyrocket you to the top of the SERPs.

Here’s how to find the easiest opportunities:

- Go to Ahrefs’ Site Explorer

- Enter your domain

- Go to the Organic keywords report

- Filter for keywords in positions #1–5

- Filter for keywords that trigger featured snippets “where target doesn’t rank”

- Look for keywords where your page is missing the answer, then add it

This is arguably the most important section that you can write if you want to rank for informational queries. You can see what is already eligible for a snippet and the kind of things that these snippets mention, along with why one may be better than another. Now you just have to make something that’s better.

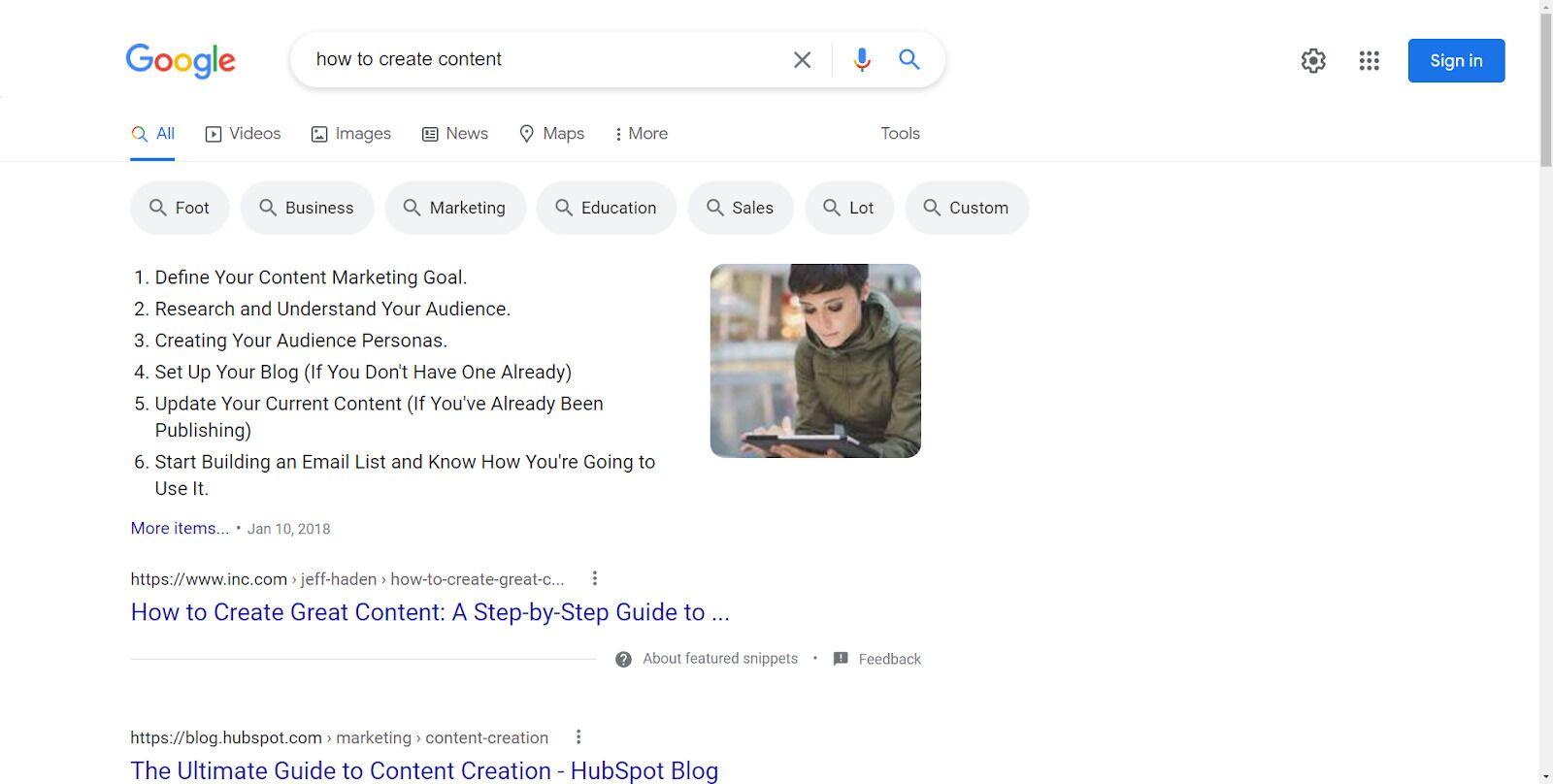

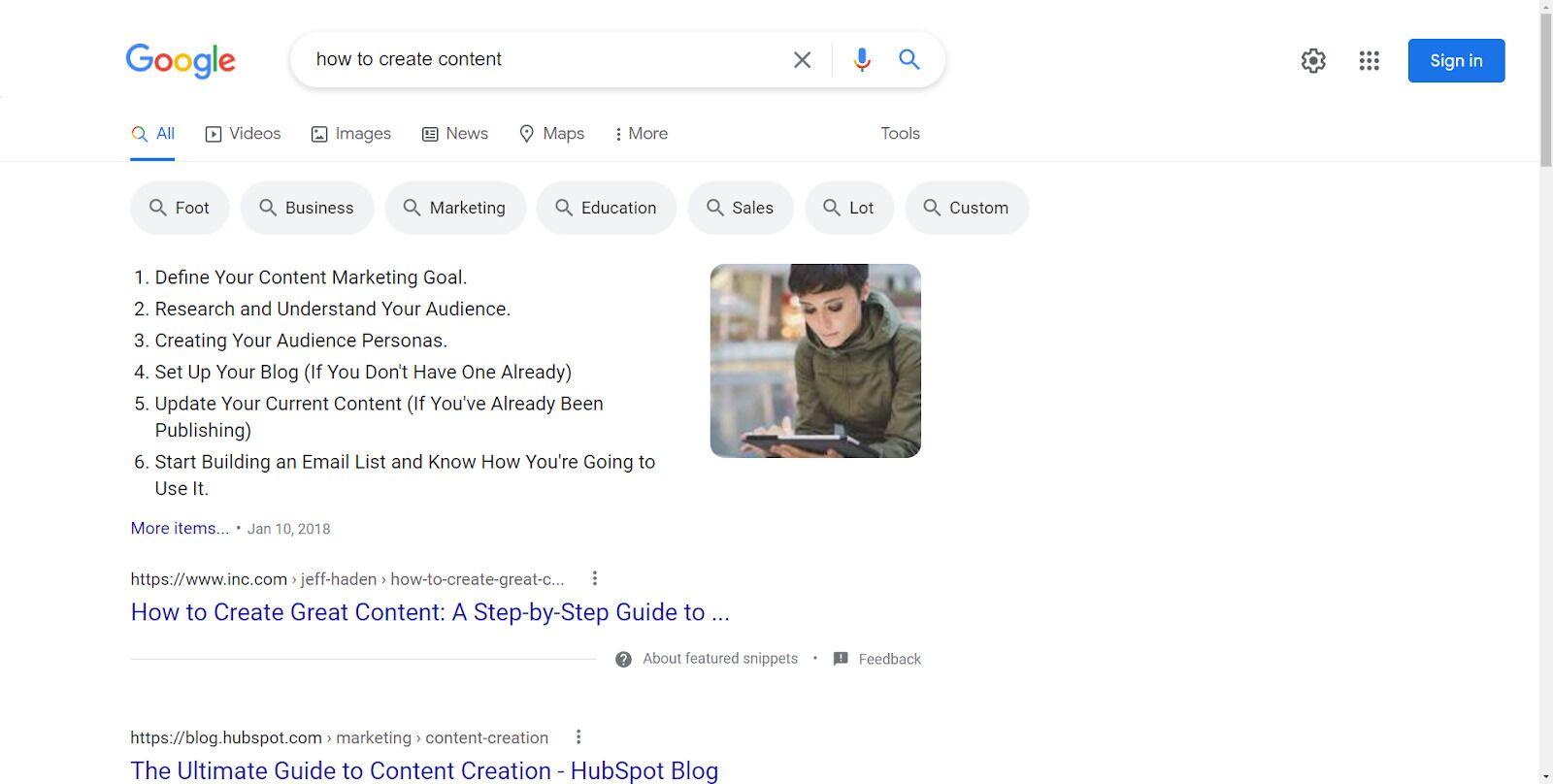

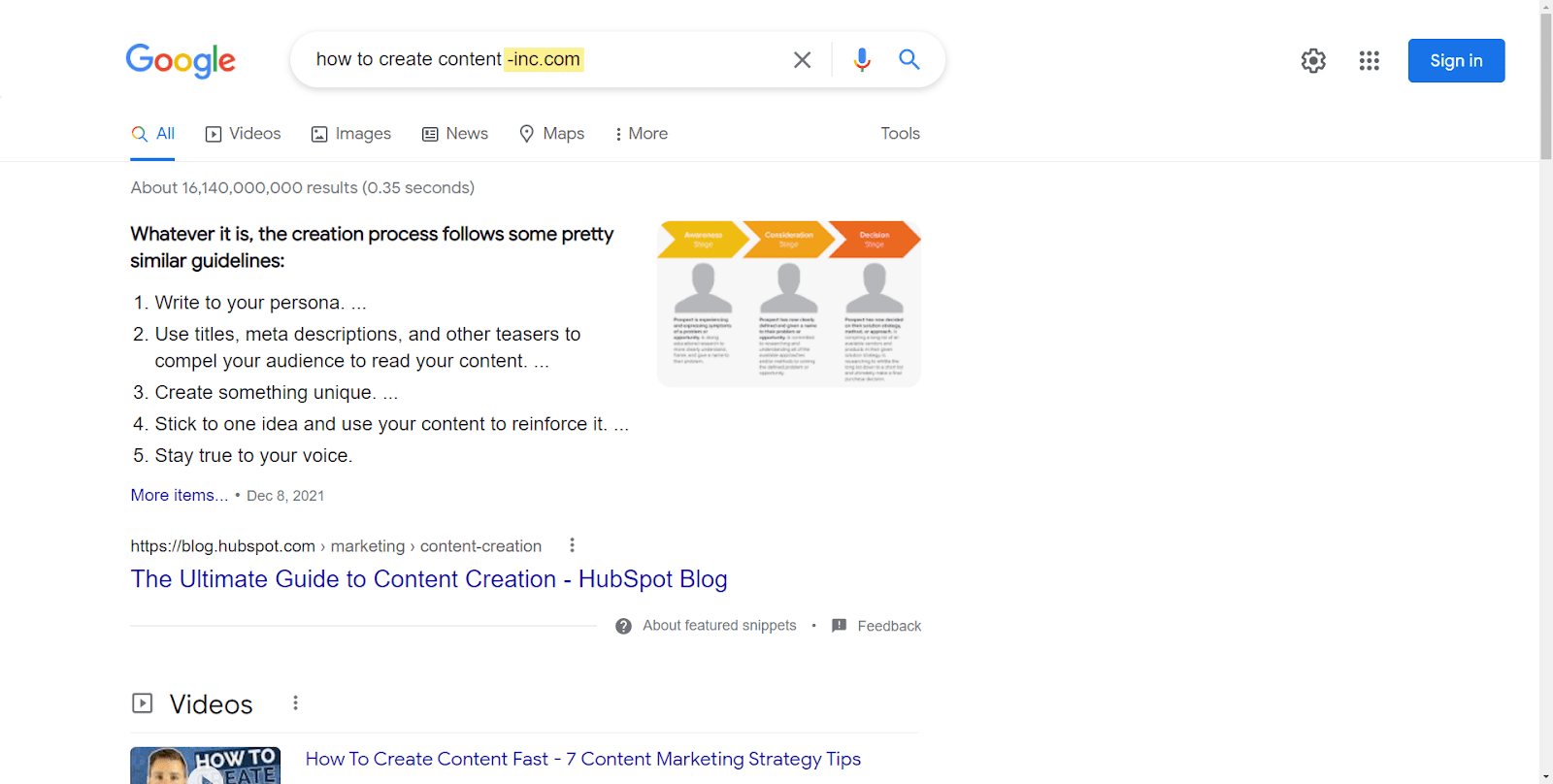

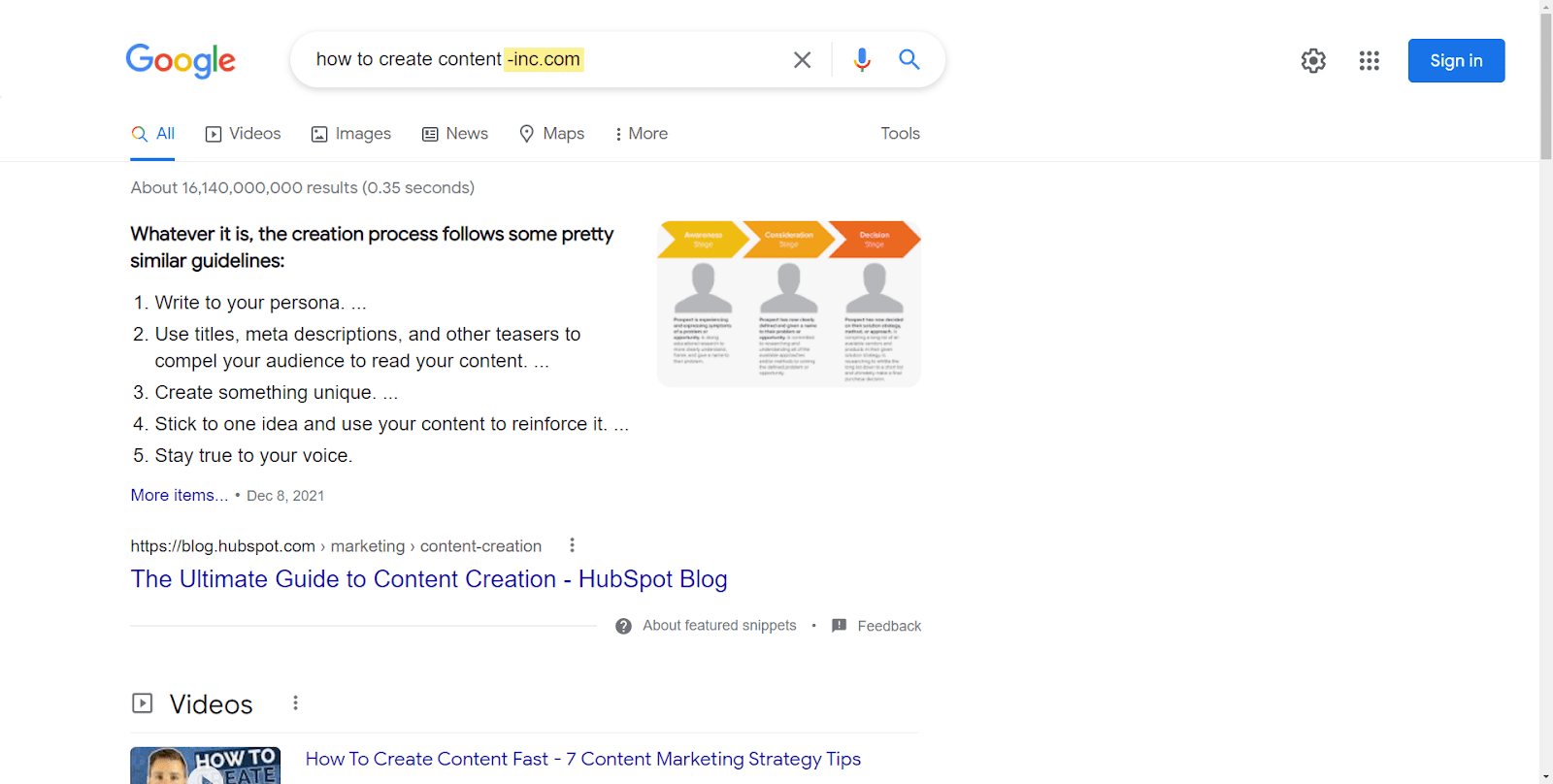

Here’s an example: For “how to create content,” the main snippet is from inc.com:

If you append “-inc.com” to your search, you’re removing this site from the results and can see the second eligible featured snippet from hubspot.com:

You can repeat this process, removing more sites from the results to see more eligible featured snippets. Also, you can glean insights into what it takes to get featured snippets and figure out why one may be considered better than another.

For some head terms that are more informational in nature, you may have to refine the query as “what is [head term]” for this to work.
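If you repeat the exclusion trick often, a small helper can build the queries for you. This is only a sketch: the function names are invented, and the URL builder assumes Google's usual `/search?q=` format.

```python
from urllib.parse import quote_plus


def snippet_query(query, excluded_sites):
    """Append a "-domain" exclusion for each site to the search query."""
    parts = [query] + [f"-{site}" for site in excluded_sites]
    return " ".join(parts)


def search_url(query, excluded_sites):
    # Assumes the standard https://www.google.com/search?q=... URL format.
    return "https://www.google.com/search?q=" + quote_plus(
        snippet_query(query, excluded_sites)
    )


print(snippet_query("how to create content", ["inc.com"]))
print(snippet_query("how to create content", ["inc.com", "hubspot.com"]))
```

Each time you find the next eligible snippet, add its domain to the list and run the query again.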

Translate successful content

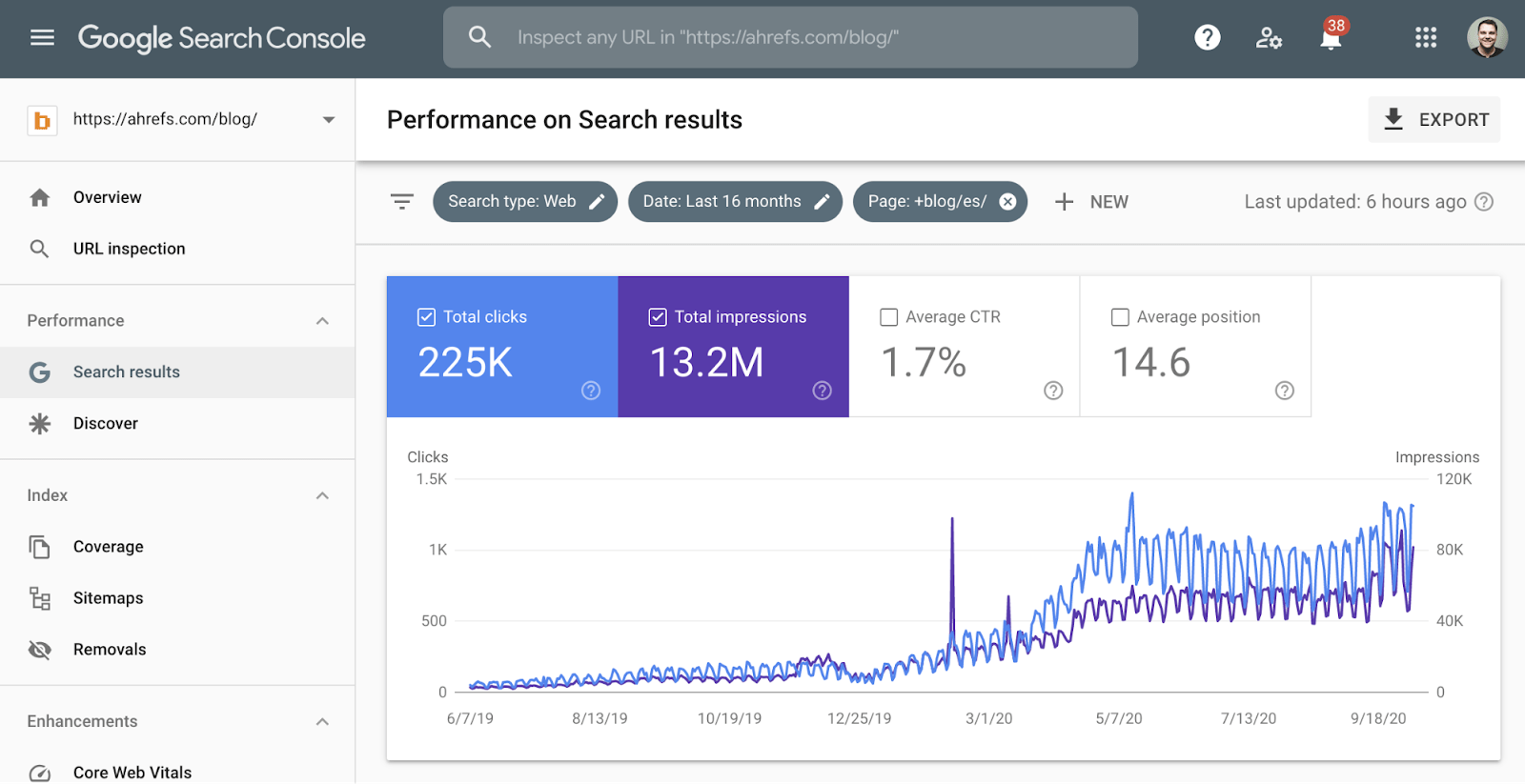

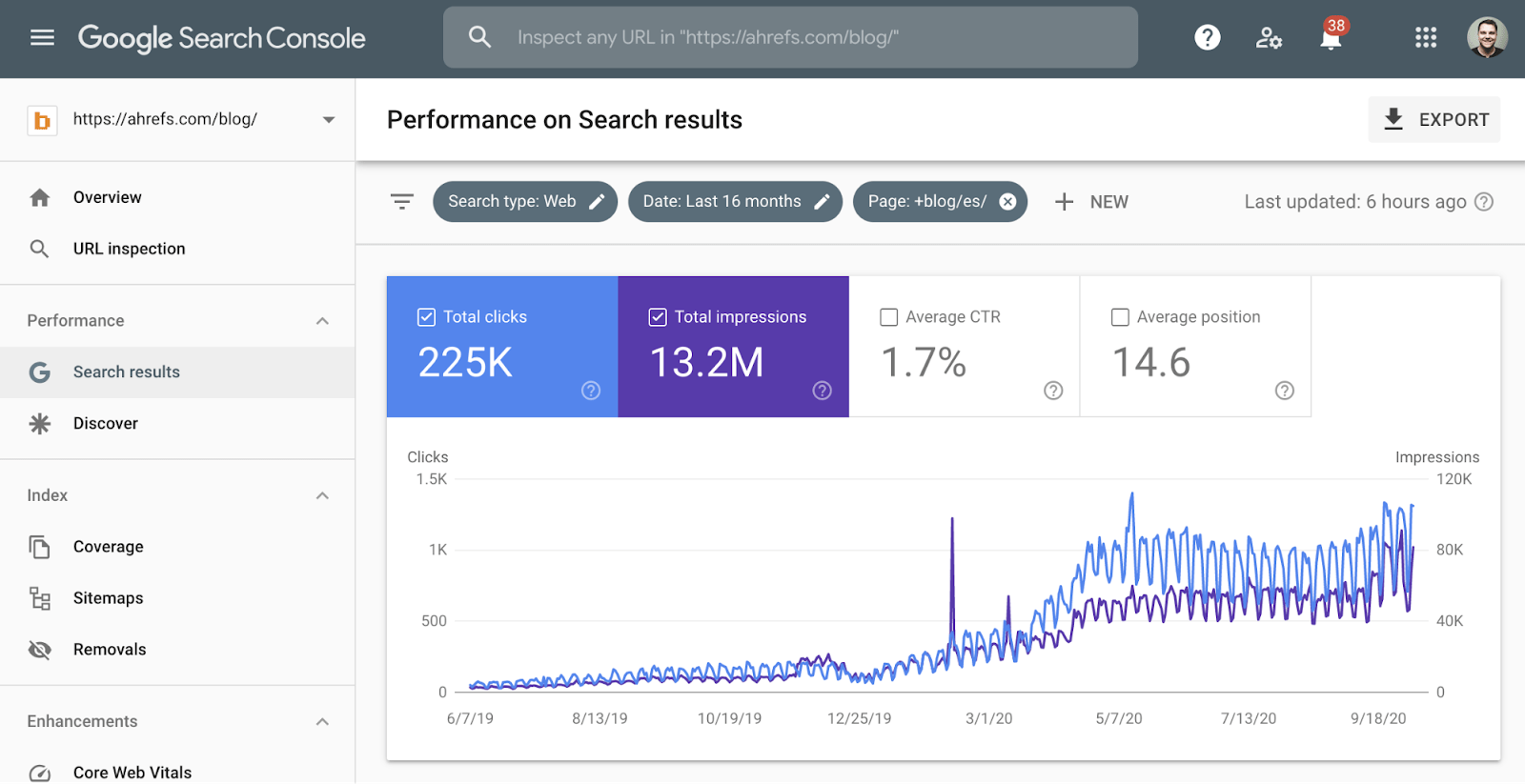

Most enterprise companies operate in many countries and in many different languages, and their enterprise SEO teams will have to work on international SEO. If you have content that’s working well in one language, it’s likely going to work well in another language as well. You should translate successful content for those other languages.

We’ve had success with this at Ahrefs despite allocating minimal resources to this process. It’s one of the areas where I expect massive growth as we start to focus on it more.

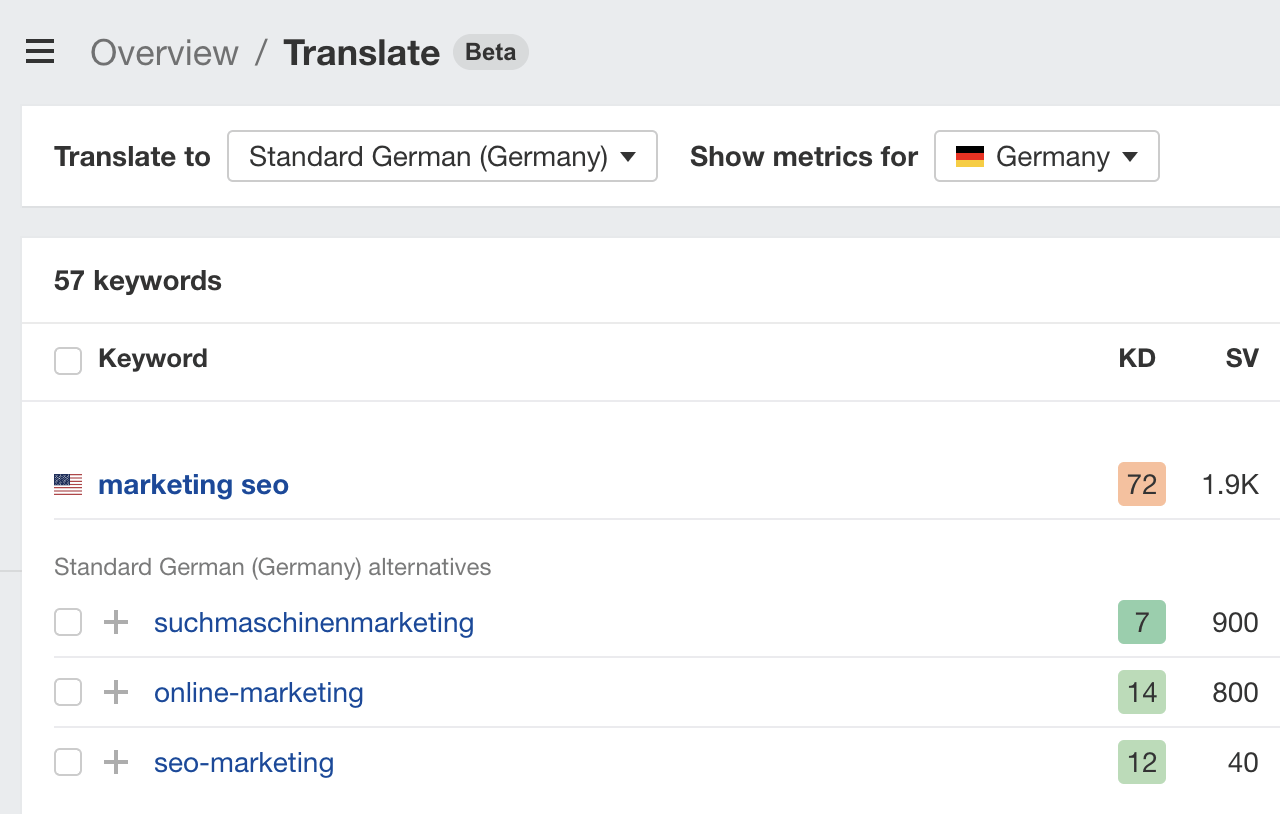

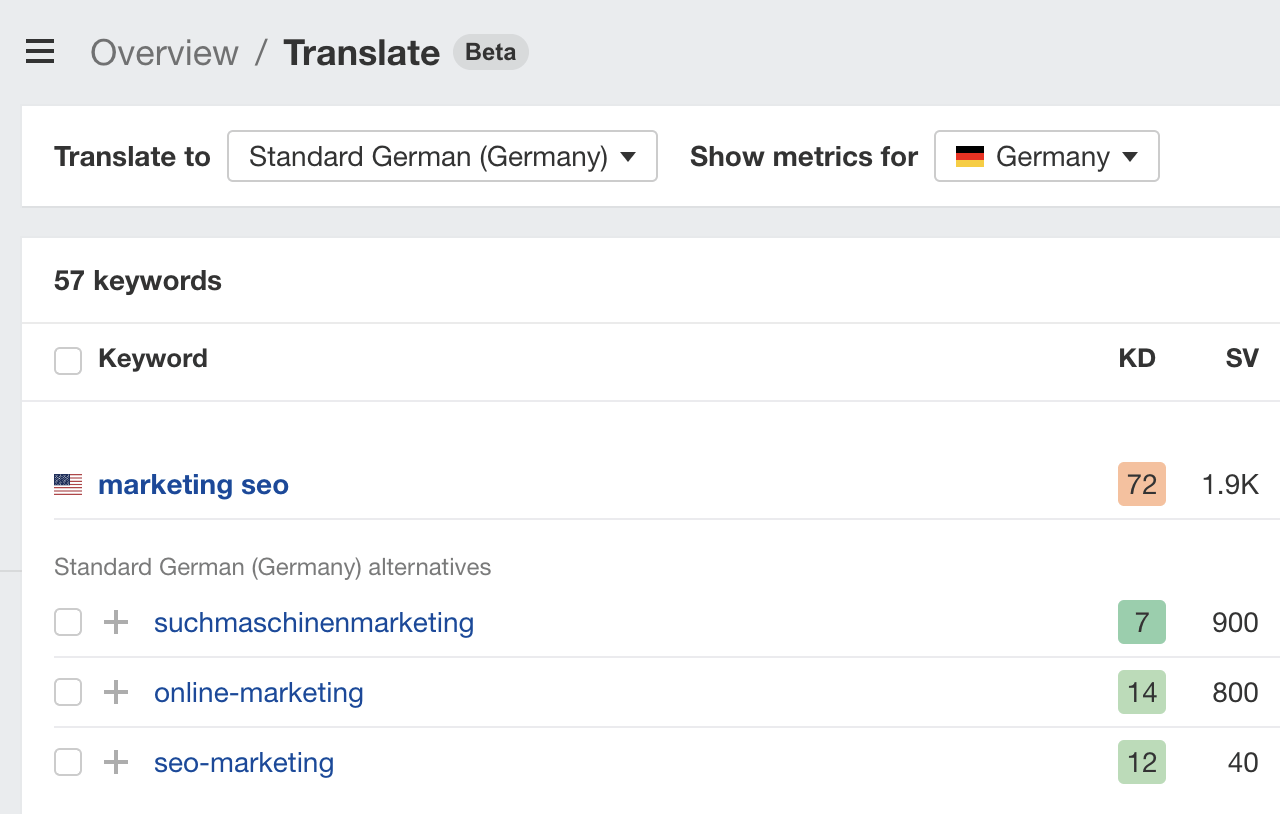

One new feature in Keywords Explorer that can help with this is the ability to translate and see metrics for keywords on a saved keyword list.

For example, we have a saved list of SEO keywords. In one click, I can translate those keywords into German and see metrics like search volume and Keyword Difficulty (KD) for their translations in Germany. This helps me to understand which topics have the highest search demand in other languages and markets.

Create branded content – sometimes

You’re probably going to run into content that is too brand-focused, too product-focused, or even too keyword-focused. People will ask you to rank for terms with pages they control that don’t align with search intent. A good example is someone wanting to “sprinkle some keywords” into their product page to rank for an informational term.

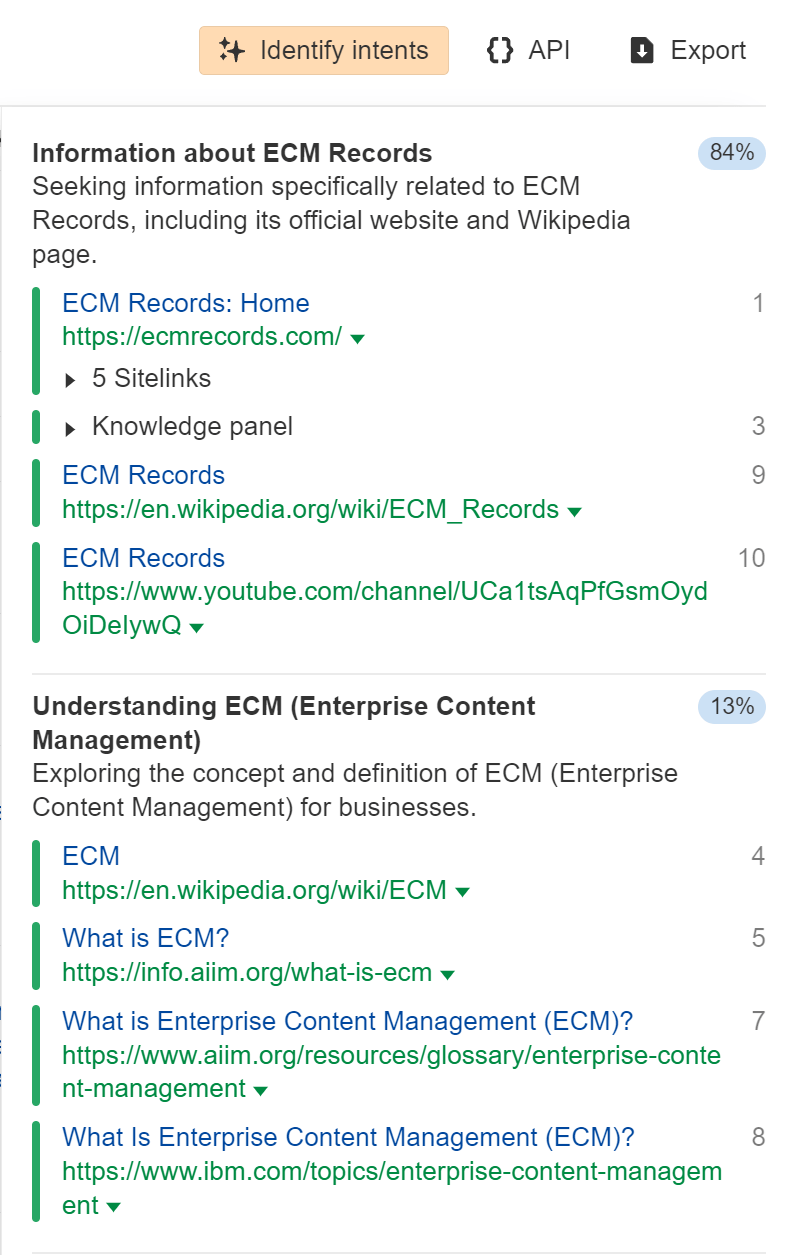

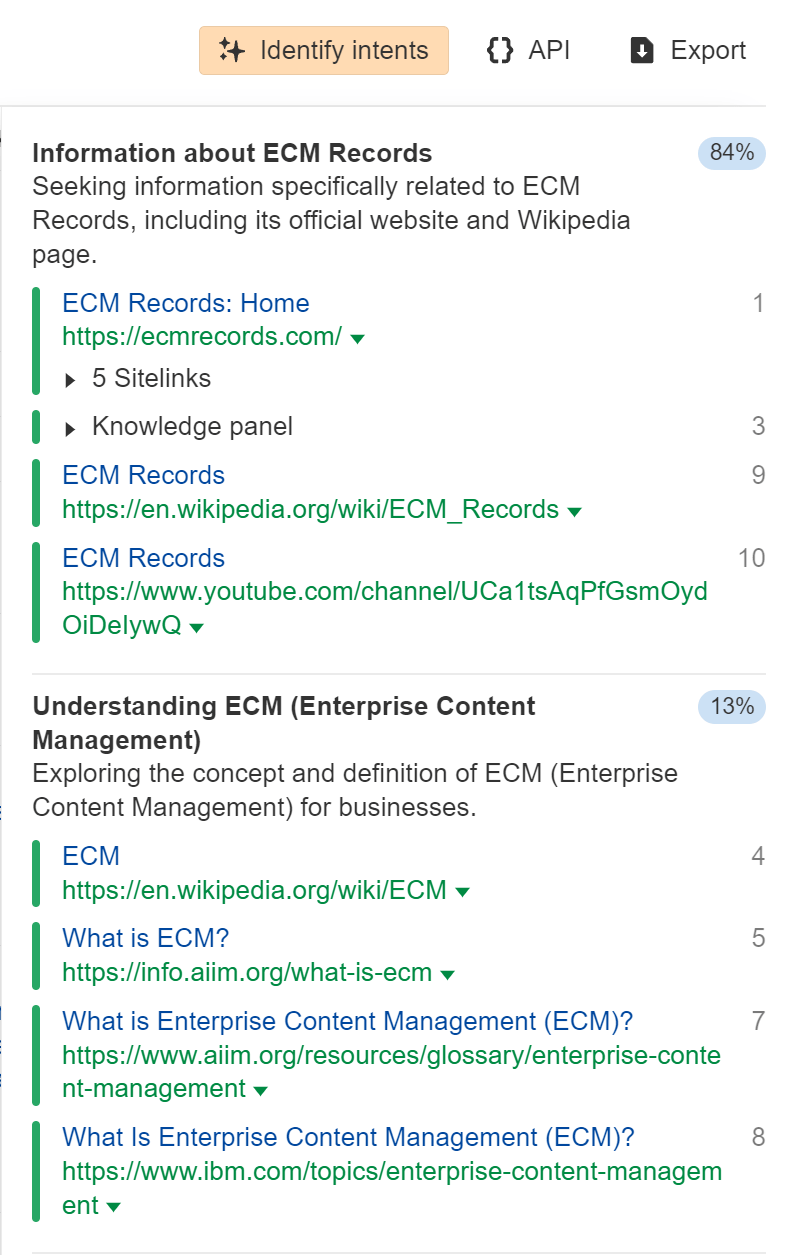

You can use the “Identify intents” feature in Keywords Explorer to show the main intent of each term and the percentage of traffic to each result type. A product page for “enterprise content management” isn’t likely to rank for this query as the main pages ranking are informational intent.

Sometimes, there may be one product page ranking for terms like this where you have a shot at ranking, but it’s usually the most popular product in that position.

There are times you may want to optimize and even create content for branded terms, but this shouldn’t be your usual strategy. Nor should you “sprinkle some keywords” into brand- or product-focused pages to try to rank for informational terms. These pages may be full of marketing or sales jargon and not have the content you actually need to rank.

Many enterprise websites get a lot of their overall traffic from branded searches, and they may not rank well for unbranded terms. Branded traffic is a good thing. It’s high-quality and converts well, but you should be getting it even without SEO help.

The exceptions to that may be for terms related to companies that were acquired or products that were renamed. You may still need content or documentation to help you keep traffic for those terms and direct people to new versions of the product.

Syndicate content

Content syndication is when one or more third-party sites republish a copy of content that originally appeared elsewhere. It frequently happens with news content, although, to be honest, any popular site is going to have scrapers, and enterprise sites may have a paid syndication strategy.

There are a lot of benefits to syndication, including increased reach. Check out our article on content syndication to learn more about it and how to follow best practices.

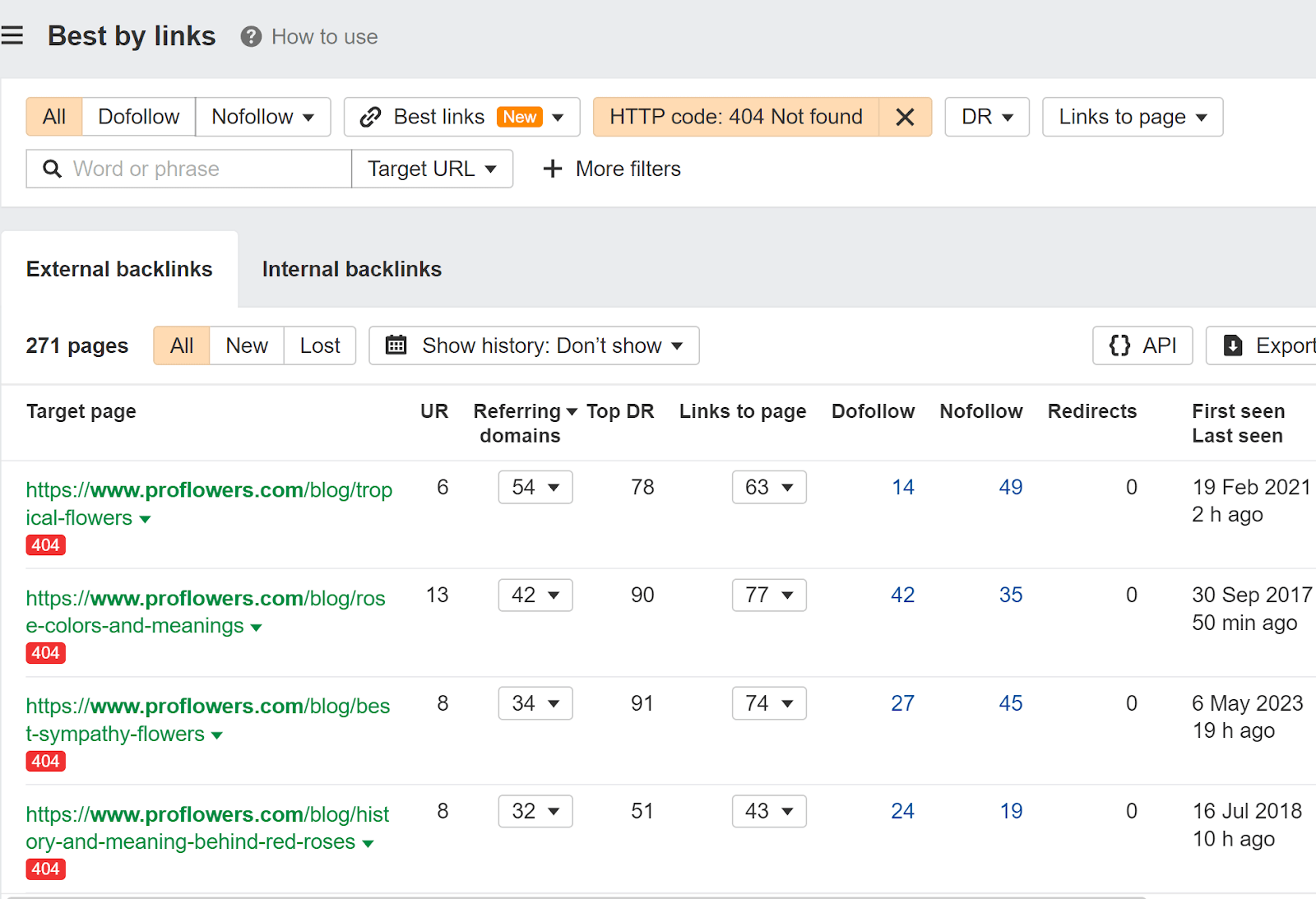

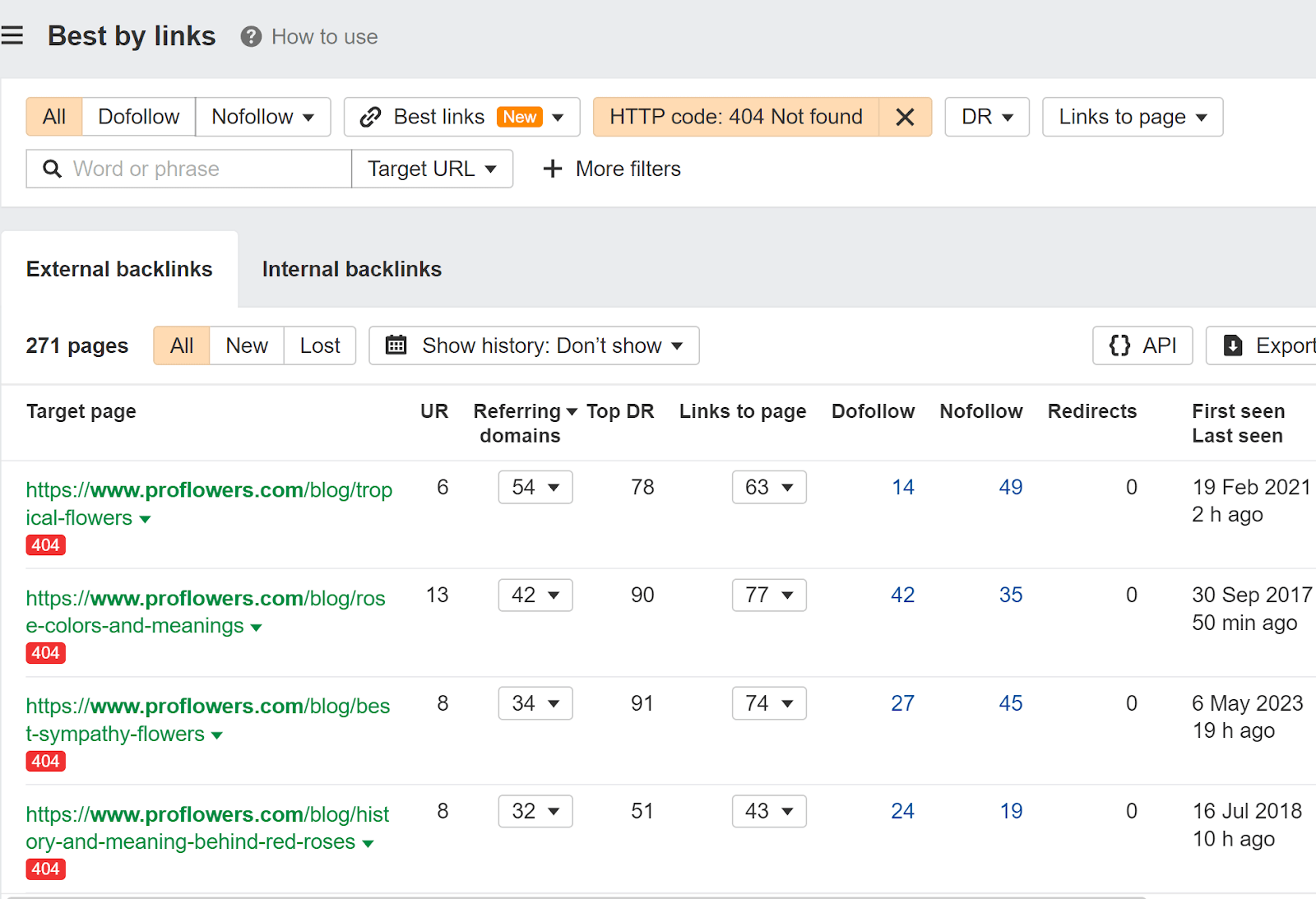

Redirect relevant old content

In many cases, your old URLs have links from other websites. If they’re not redirected to the current pages, then those links are lost and no longer count for your pages. It’s not too late to do these redirects, and you can quickly reclaim any lost value and help your content rank better.

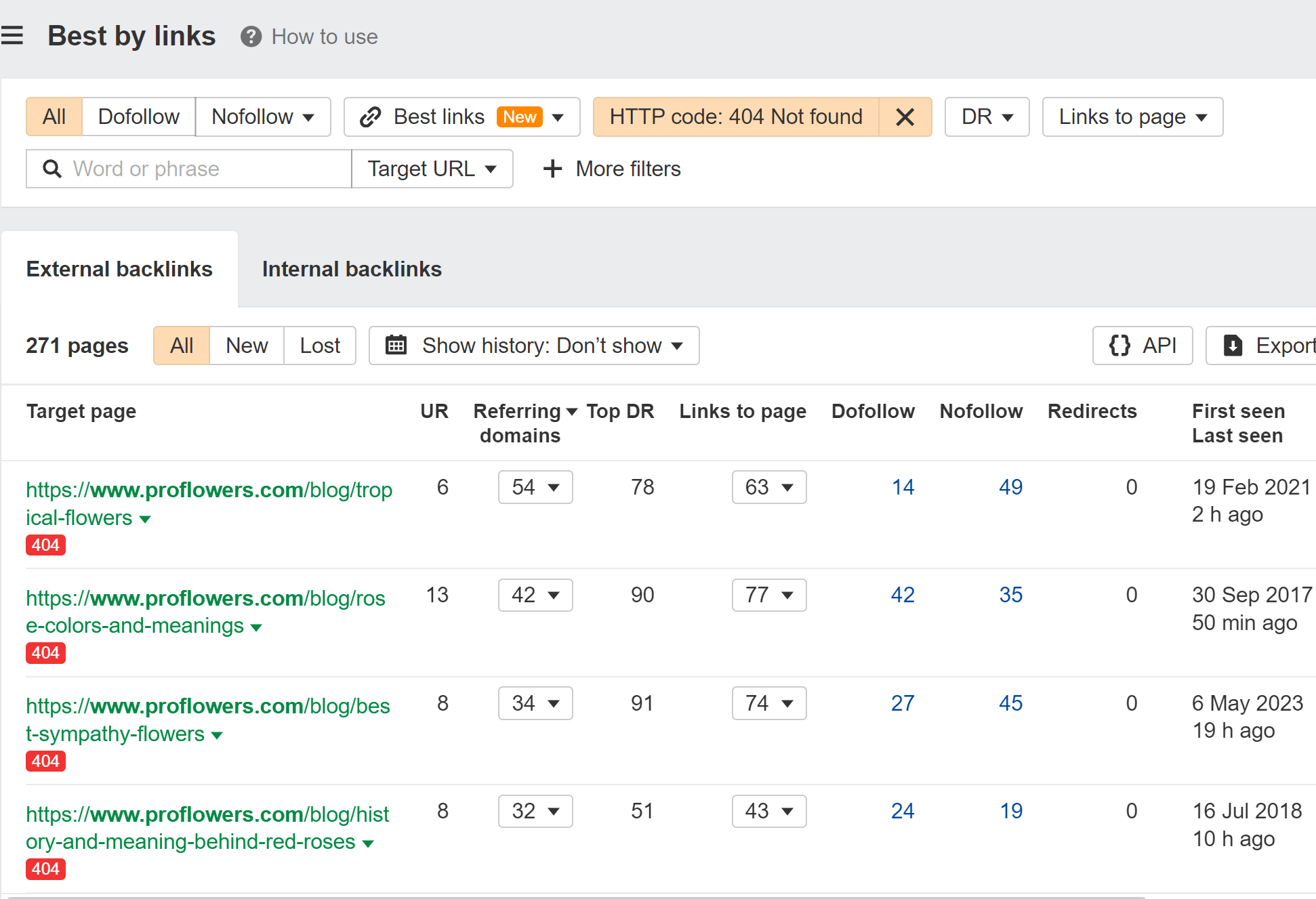

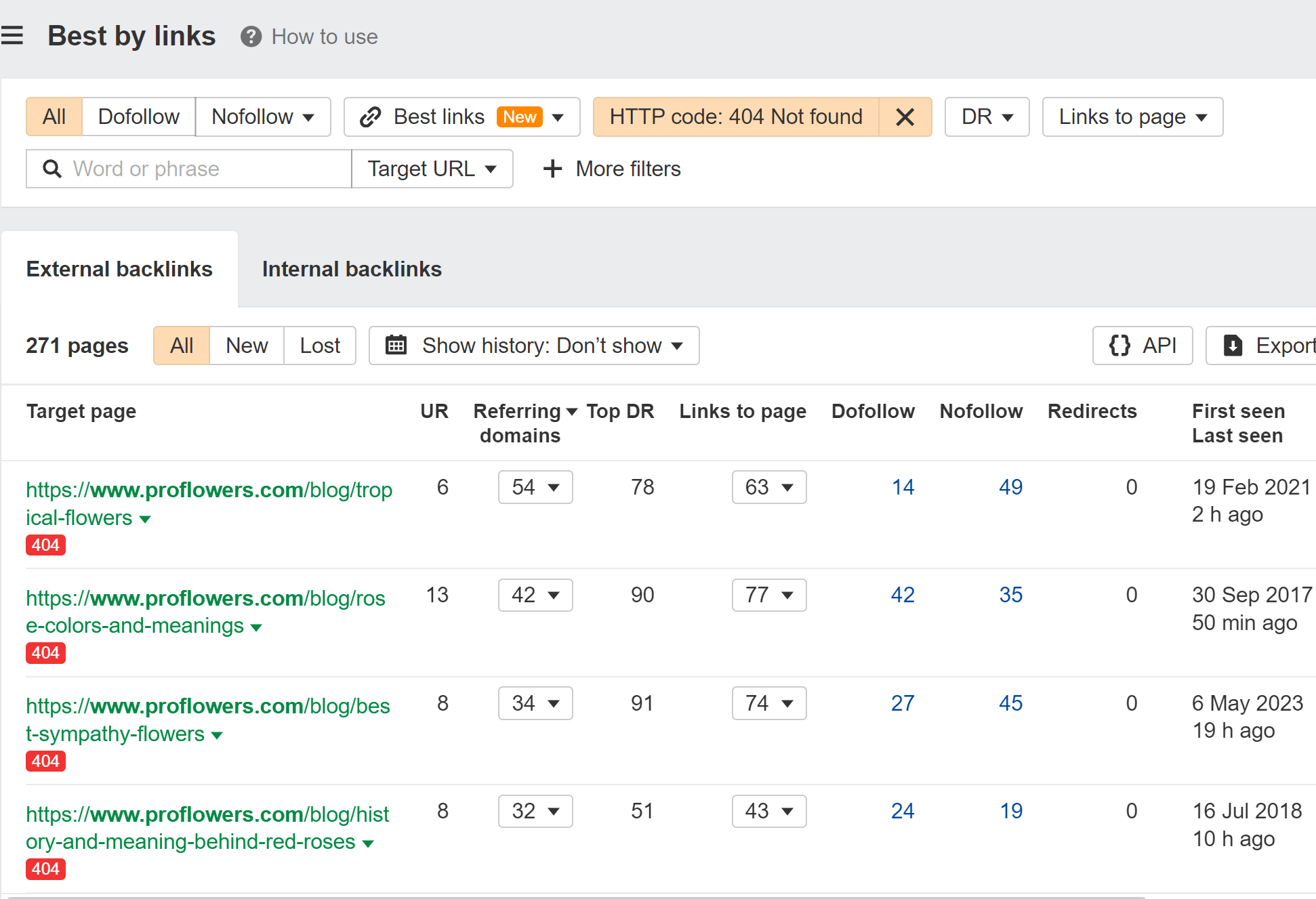

Here’s how to find those opportunities:

- Paste your domain into Site Explorer (also accessible for free in Ahrefs Webmaster Tools)

- Go to the Best by links report

- Add a “404 not found” HTTP response filter

I usually sort this by “Referring domains.”

Create “vs” pages

Content that compares you against competitors can be difficult to create in an enterprise environment because of all the legal hurdles. I still think it’s useful to push for these kinds of pages so that you can control the narrative.

There are ways you can do this without having giant tables comparing each feature. Those are always biased anyway. For instance, on our vs. page, we show what users think of us and talk about the quality of our data and unique features.

This page has done well for us, and I believe we will create more pages like it in the future.

Create free tools

If you can create free tools around your product or data, you can use them as a lead-gen tactic for your main products.

We use this strategy at Ahrefs, and some of our most trafficked pages are free tools. We even created a bunch of free writing tools, which we are starting to monetize.

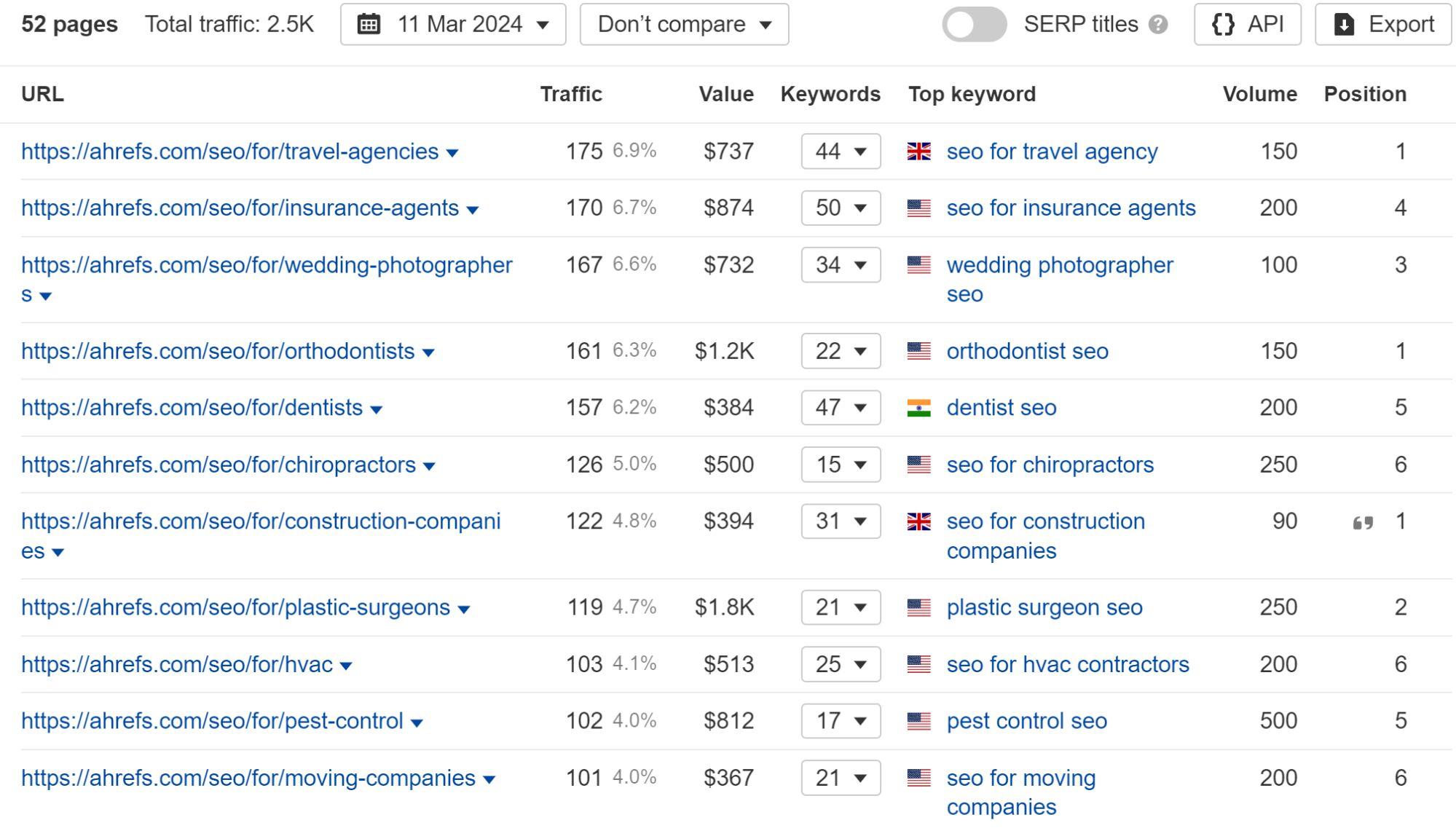

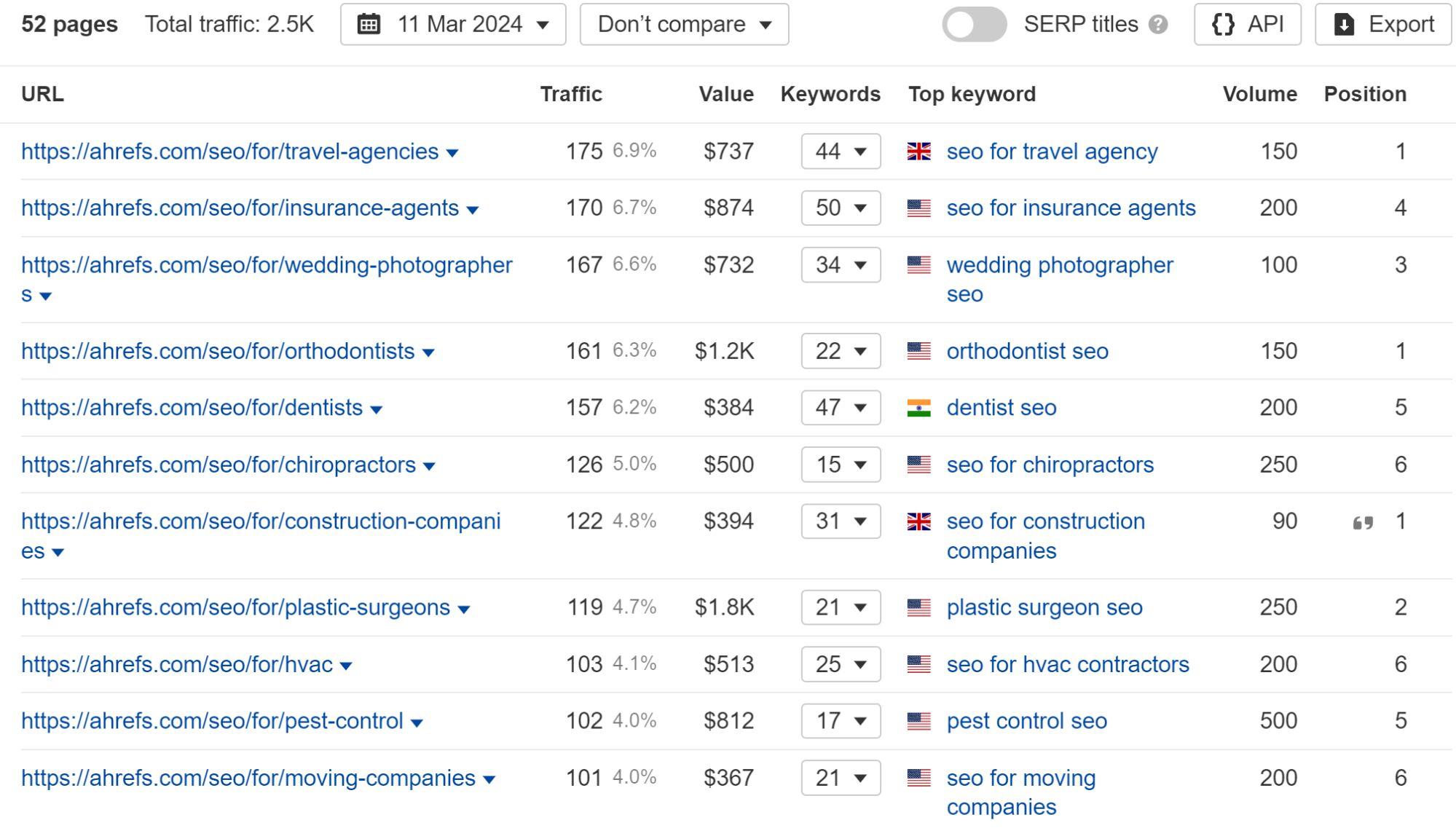

Create programmatic content

If you have the ability to create good pages programmatically using your data, it can be a great way to scale quickly.

We had some success with a small amount of effort by re-using components to create “SEO for x” pages, where x is different types of business. Most of these are ranking well already, but at some point I believe we will put in more effort and pull more data to make these pages even better.

We’re working on some additional programmatic plays that showcase our data even more, and I expect they will drive a lot of leads.

Create video content

Video content can work extremely well for businesses. Sam Oh drives tons of leads for Ahrefs.

Ahrefs’ YouTube channel has over 500k subscribers with fewer than 300 published videos. Many of those videos have over 1 million views!

In my past jobs, I’ve always treated videos the same way I would blog content and structured the talking points around the things people are searching for and want to know. This worked extremely well, even for industries where people were convinced that folks in the industry didn’t watch videos.

Enterprise link building is the process of acquiring links to an enterprise website with the goal of improving visibility and rankings in search engines.

Enterprise companies get a lot of links naturally. While they may have some challenges with link building, these companies also have a ton of opportunities because of who they are and how much money is at stake.

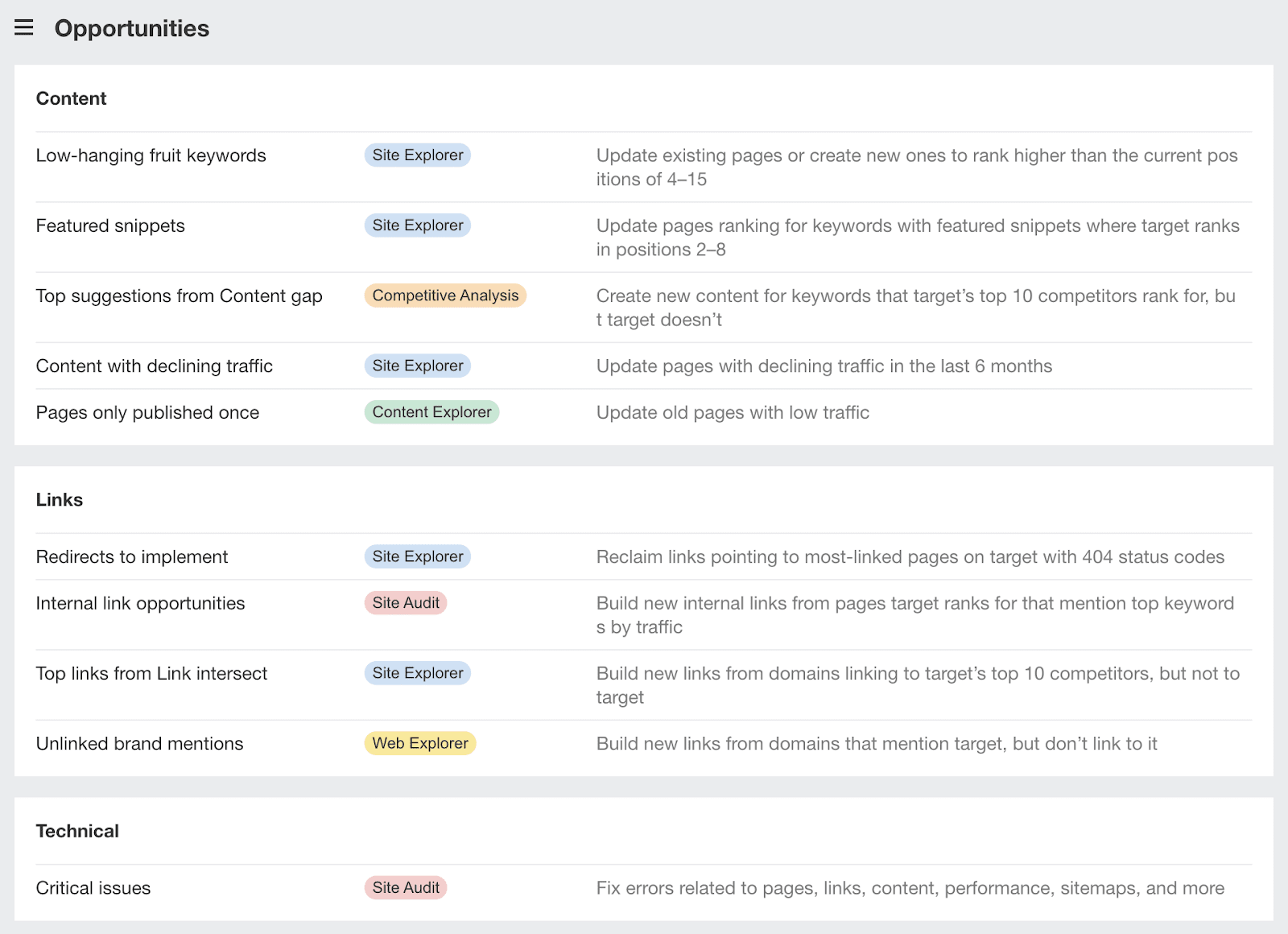

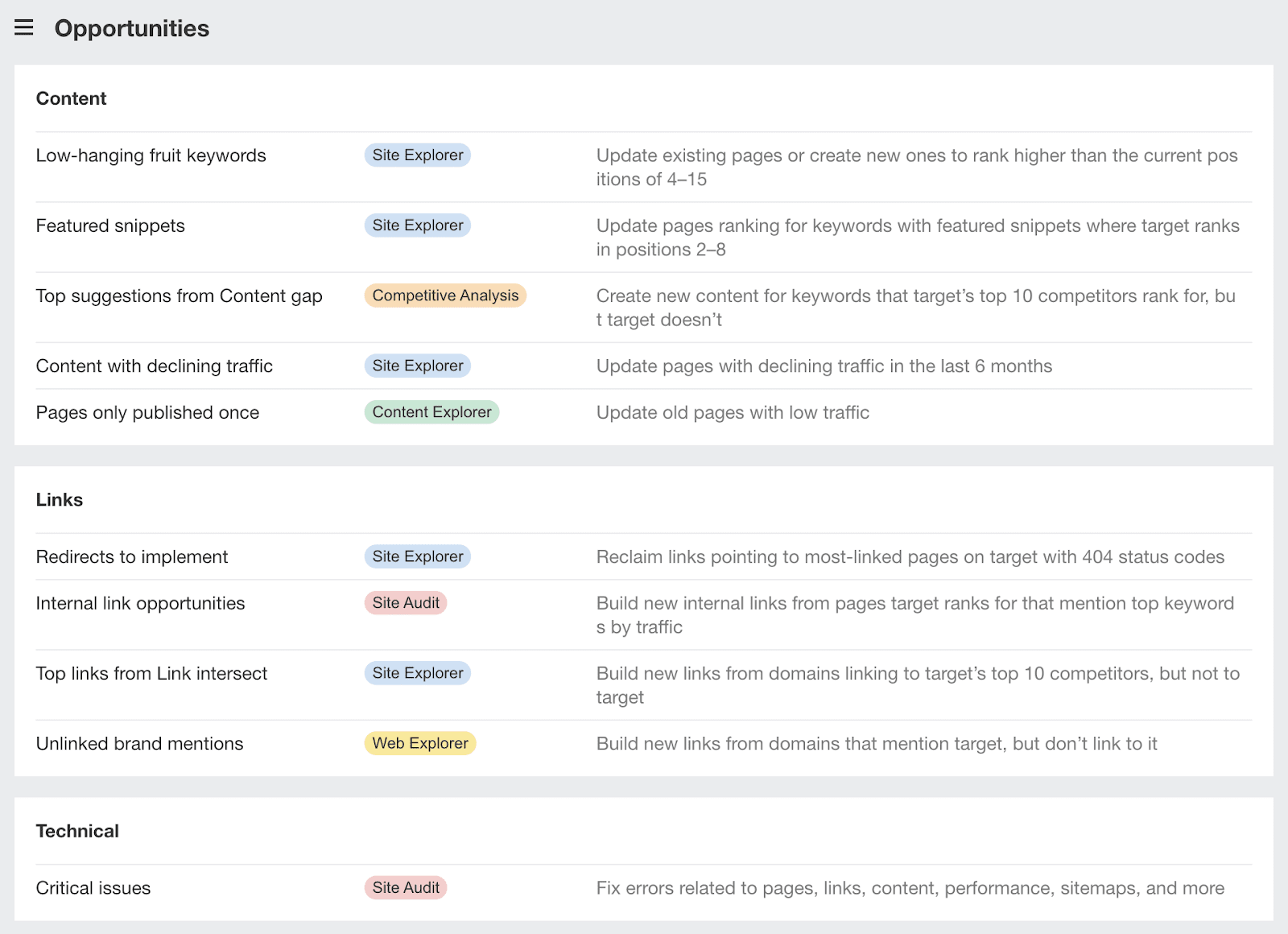

You have a lot of different options for link building in an enterprise environment. If you’re not sure where to start, I’d check out the Links section of the Opportunities report in Site Explorer. This report has shortcuts to other reports, with filters already applied, that help you with some common tasks.

Here are some of the things you might want to try.

Create linkable assets

In SEO, we use the terms “linkable asset” or “link bait” to refer to content that is strategically crafted to attract links. Such linkable assets can take on many different forms:

- Industry surveys

- Studies and research

- Online tools and calculators

- Awards and rankings

- How-to guides and tutorials

- Definitions and coined terms

- Infographics, GIFographics, and “Map-o-graphics”

You can also use any industry-famous employees or thought leaders you have to create interesting quotes that might be linked.

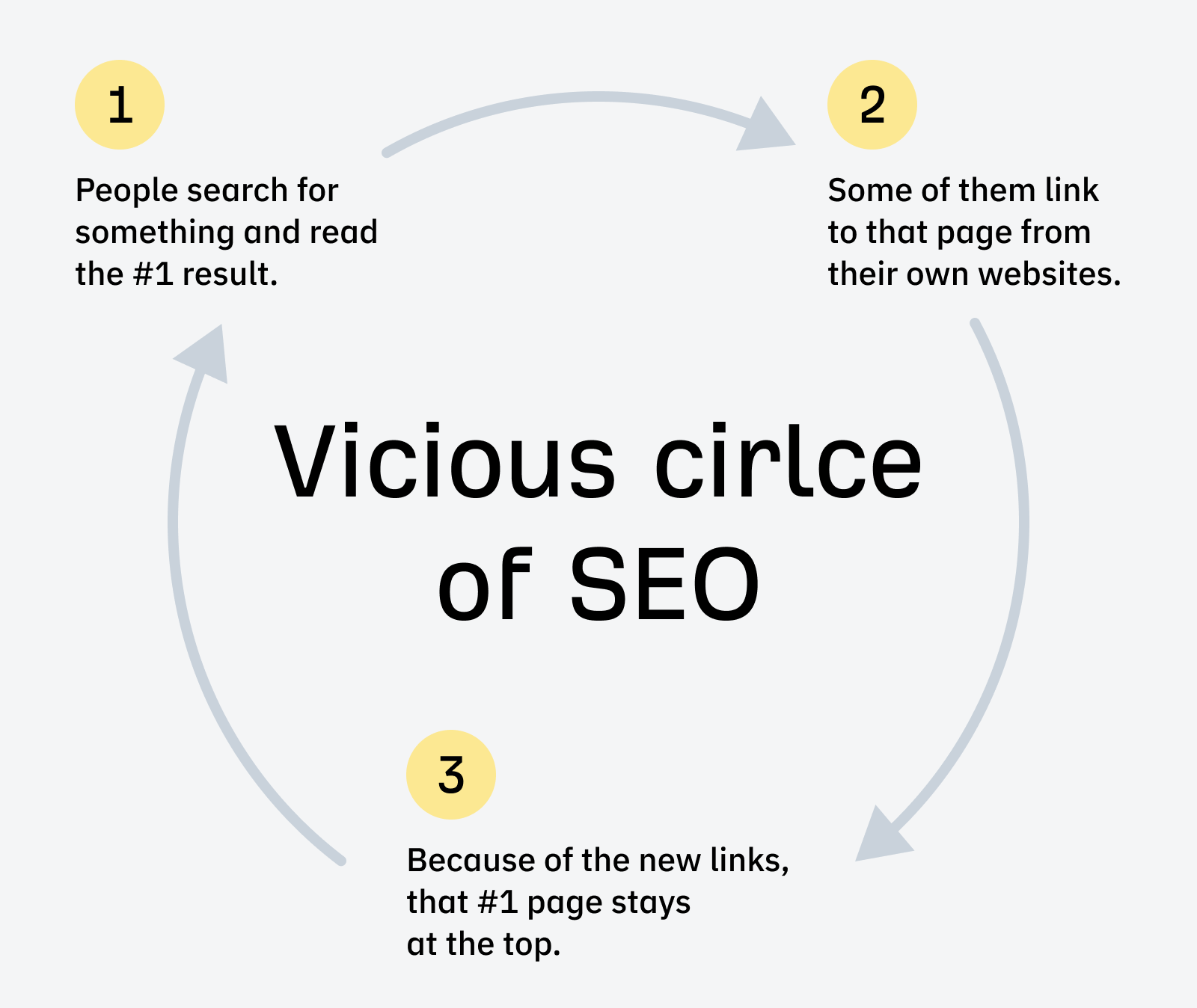

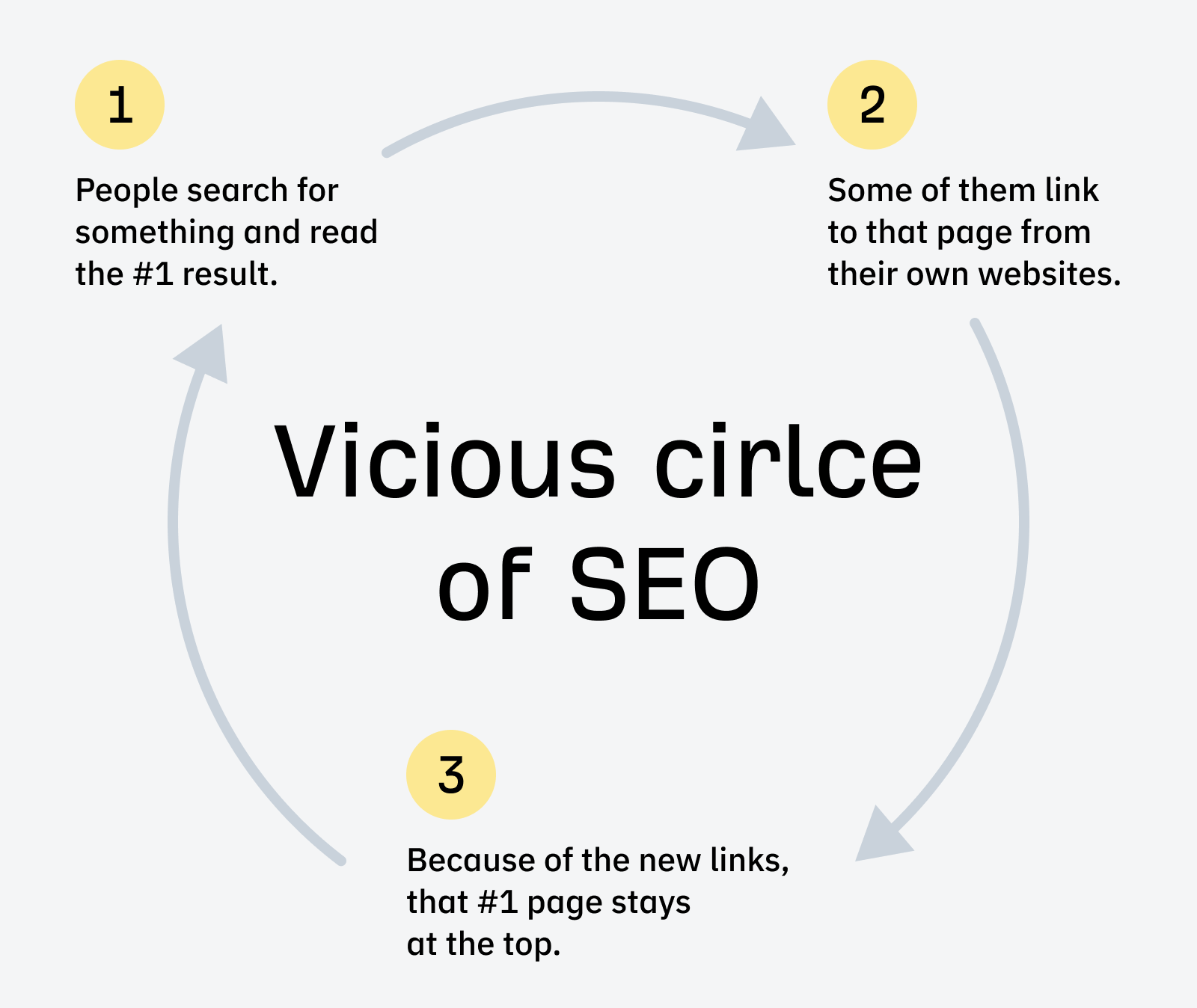

There’s also a phenomenon where high-ranking pages get linked to more over time. If your content is good enough to get you near the top, you’re more likely to get more links. Tim Soulo calls this the vicious circle of SEO.

For more ideas, check out our guide to enterprise content marketing.

Combine similar content to create a stronger page

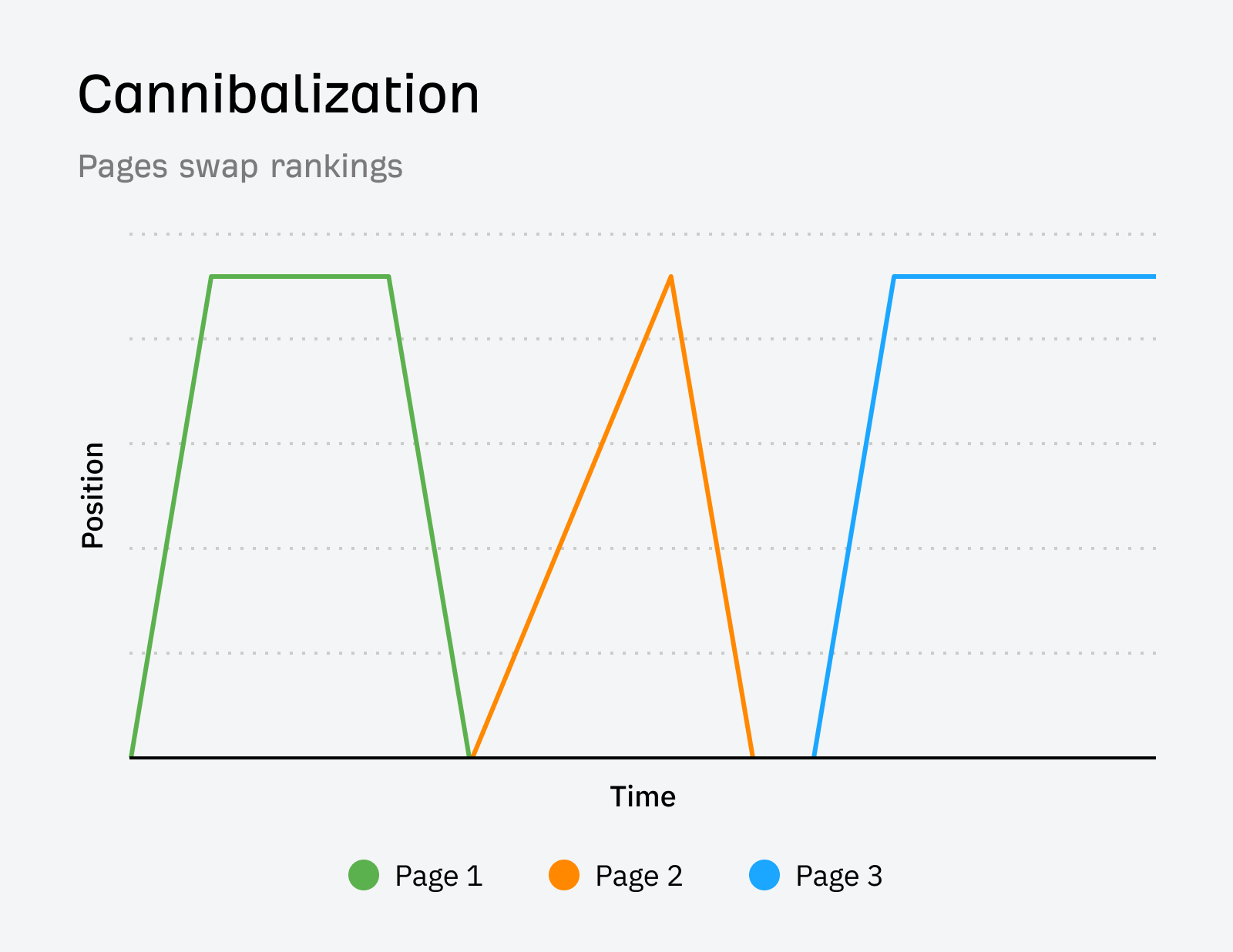

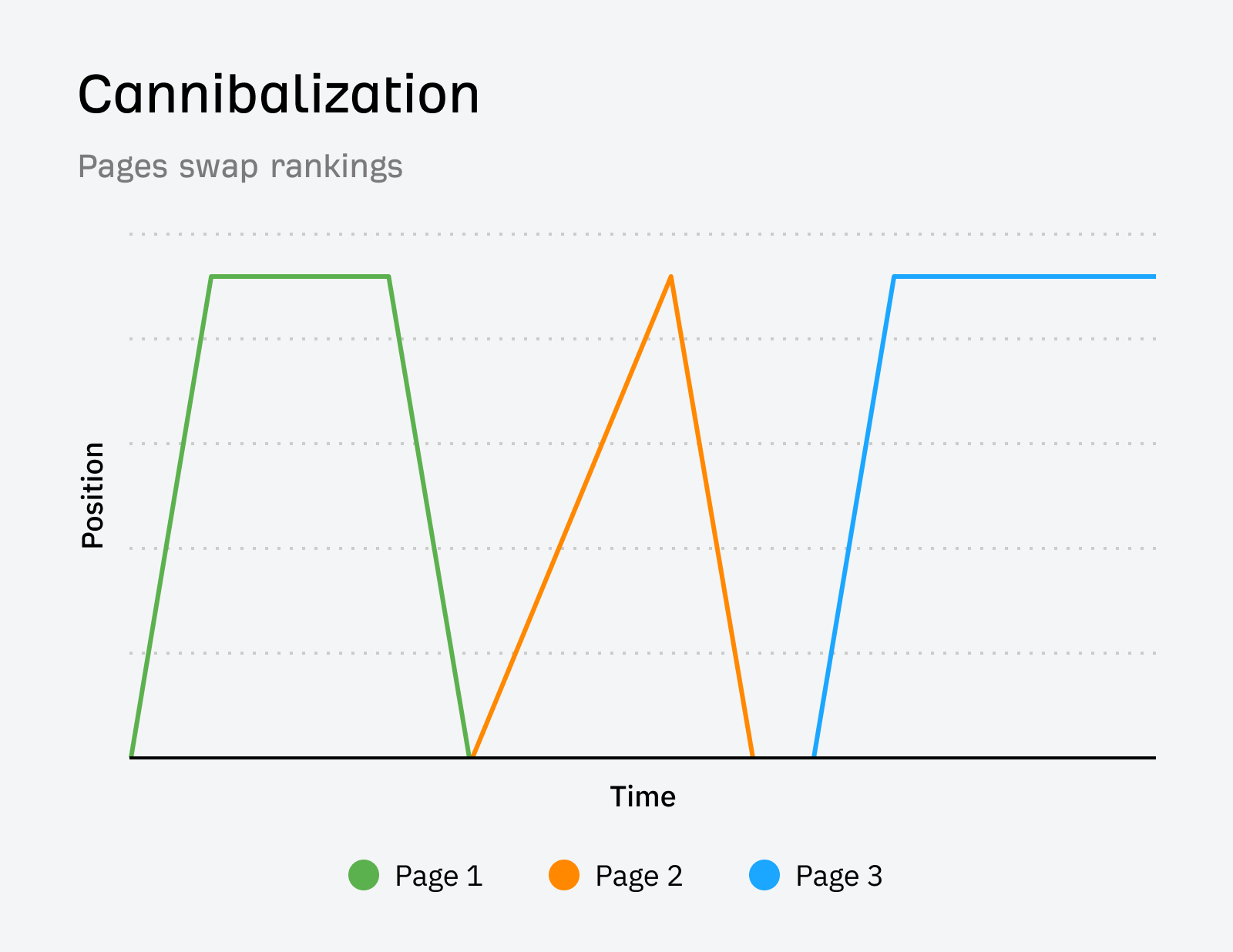

Keyword cannibalization is when a search engine consistently swaps rankings between multiple pages or when multiple pages rank simultaneously for the same keyword but are similar enough to be consolidated. Consolidating similar content into comprehensive guides or pillar pages can improve your chances of ranking and earning links.
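To get a first list of candidates, you can group a rankings export by keyword and look for keywords with more than one ranking URL. The sketch below uses made-up rows and a hypothetical `cannibalization_candidates` helper. It only surfaces candidates. Whether the pages are similar enough to consolidate is still a judgment call.

```python
from collections import defaultdict

# Hypothetical ranking rows: (keyword, ranking URL, position).
rows = [
    ("enterprise seo", "/blog/enterprise-seo", 4),
    ("enterprise seo", "/enterprise-seo-guide", 9),
    ("saas seo", "/blog/saas-seo", 2),
]


def cannibalization_candidates(rows):
    """Map each keyword to its ranking URLs when more than one URL ranks."""
    by_keyword = defaultdict(set)
    for keyword, url, _position in rows:
        by_keyword[keyword].add(url)
    return {kw: sorted(urls) for kw, urls in by_keyword.items() if len(urls) > 1}


candidates = cannibalization_candidates(rows)
for keyword, urls in candidates.items():
    print(keyword, urls)
```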

Promote your content

The more visibility your content gets, the more links you are likely to get naturally. Leverage those other teams I talked about earlier to promote your content on social and maybe paid media. Use influencer relationships to amplify your reach. Use your PR teams for potential media coverage.

Keep in mind that these other teams are busy and have their own priorities as well. Be selective on what you ask them to promote. If you ask for them to promote everything, they’re likely to promote nothing.

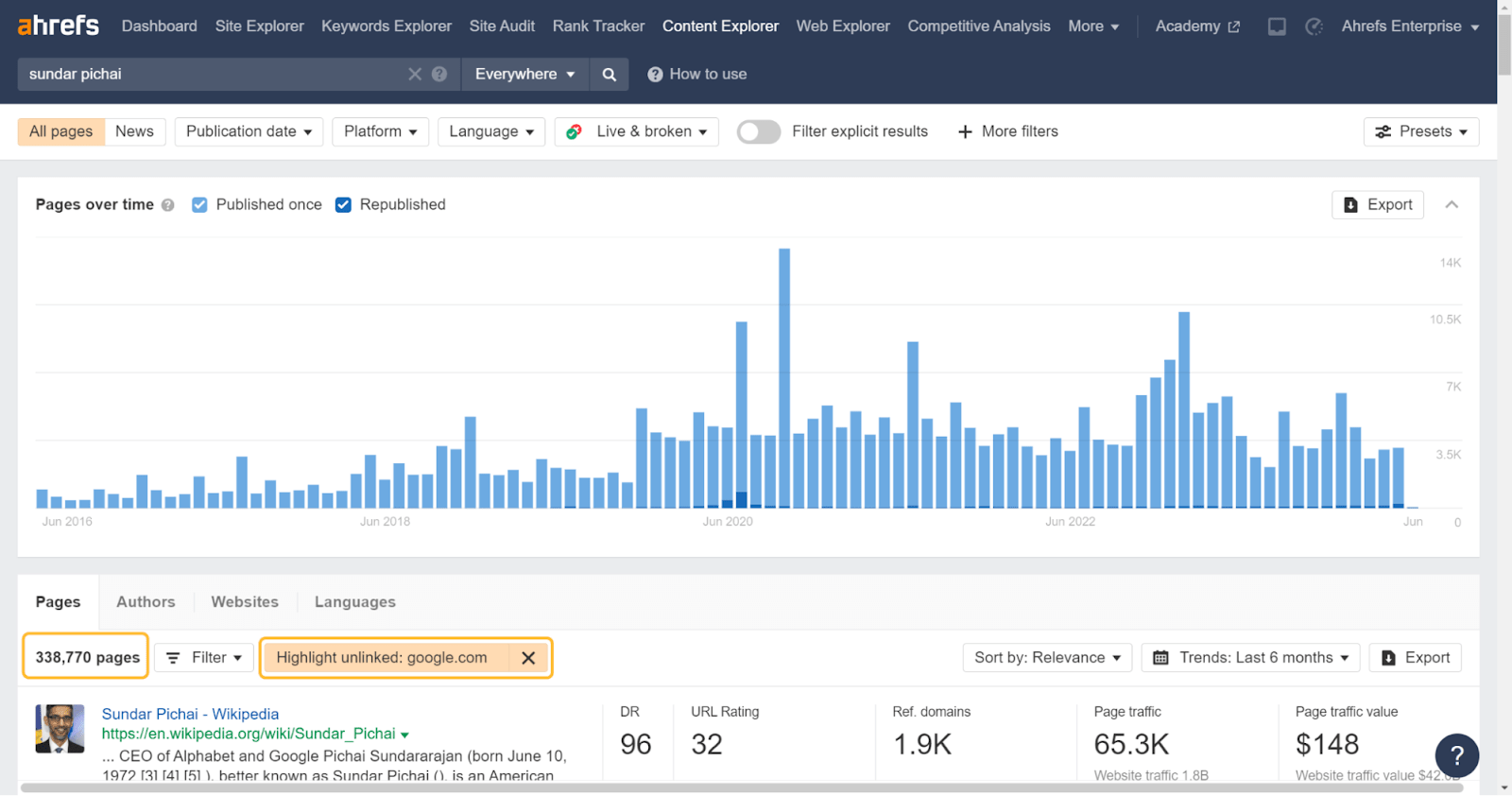

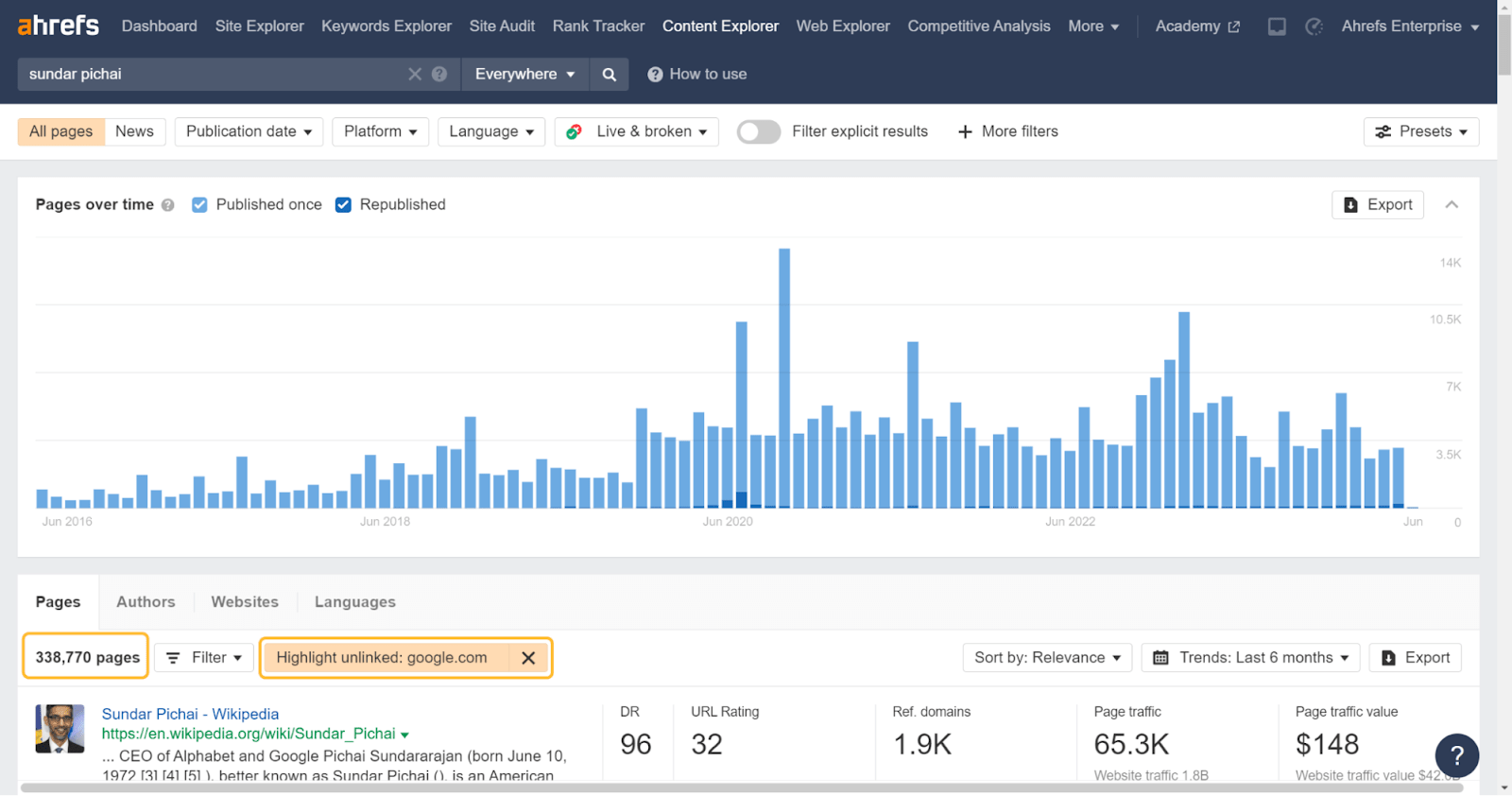

Go after unlinked brand mentions

Unlinked brand mentions are online mentions (citations) of your brand—or anything directly related to your brand—that do not link back to your site.

Enterprise companies tend to get talked about a fair bit, and each one of those mentions offers a chance to get a link. Even if there’s not initially a link, it doesn’t hurt to ask for one. You can use Content Explorer to find these mentions on the web and the built-in filter to highlight unlinked domains and home in on unlinked mentions.

You can also look for unlinked brand mentions of key employees, famous quotes of theirs, or statistics from your studies.
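If you already have the HTML of pages that mention you, checking whether a mention is unlinked is straightforward. Here is a minimal sketch using Python's standard `html.parser`. The `MentionChecker` class and `is_unlinked_mention` function are illustrative names, and the domain check is a simple substring match, so treat the results as a starting point for manual review.

```python
from html.parser import HTMLParser


class MentionChecker(HTMLParser):
    """Collect text nodes and link targets from an HTML page."""

    def __init__(self):
        super().__init__()
        self.text = []
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)

    def handle_data(self, data):
        self.text.append(data)


def is_unlinked_mention(html, brand, domain):
    """True when the page text mentions `brand` but no link contains `domain`."""
    parser = MentionChecker()
    parser.feed(html)
    mentioned = brand.lower() in " ".join(parser.text).lower()
    linked = any(domain in href.lower() for href in parser.hrefs)
    return mentioned and not linked


print(is_unlinked_mention("<p>We love Ahrefs.</p>", "Ahrefs", "ahrefs.com"))
```

The same check works for employee names, quotes, or statistic phrases: pass them in as the `brand` argument.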

Recover links with link reclamation

Sites, and the web in general, are always changing. We ran a study that found that ~two-thirds of links to pages on the web disappeared in the nine-year period we looked at.

In many cases, your old URLs have links from other websites. If they’re not redirected to the current pages, then those links are lost and may no longer count for your pages.

It’s not too late to do these redirects, and you can quickly reclaim any lost value and help your content rank better.

Here’s how to find those opportunities:

- Paste your domain into Site Explorer

- Go to the Best by links report

- Add a “404 not found” HTTP response filter

I usually sort this by “Referring domains.”

I even created a script to help you match redirects. Don’t be scared away; you just have to download a couple of files and upload them. The Colab notebook walks you through it and takes care of the heavy lifting for you.

While this script could be run periodically, if you’re constantly having to do redirects, I would recommend that you automate the implementation. You could pull data from the Ahrefs API and visits from your analytics into a system. Then, create logic like >3 RDs, >5 hits in a month, etc., and flag these to be redirected, suggest redirects, or even automatically redirect them.

If you had redirects in place for a year or more already, the value is likely already consolidated to the new pages. That’s what Google recommends, and it seemed to be true when we tested it. You could also add a flag for “was redirected” into the automation logic that checks if the page was previously redirected for a year to account for this.
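The flagging logic described above might look something like this minimal sketch. The `DeadUrl` structure, the field names, and the choice to combine the two thresholds with "or" are all assumptions for illustration. The thresholds themselves come from the example rules mentioned earlier.

```python
from dataclasses import dataclass


@dataclass
class DeadUrl:
    url: str
    referring_domains: int
    monthly_hits: int
    days_redirected: int = 0  # how long a redirect was previously in place


def should_redirect(page, min_rds=3, min_hits=5, consolidated_after_days=365):
    """Flag a 404 URL when it still has enough links or visits to matter."""
    if page.days_redirected >= consolidated_after_days:
        # "Was redirected" flag: value has likely already consolidated.
        return False
    # Assumption: either signal alone is enough to flag the URL.
    return page.referring_domains > min_rds or page.monthly_hits > min_hits


pages = [
    DeadUrl("/old-pricing", referring_domains=12, monthly_hits=40),
    DeadUrl("/old-blog-post", referring_domains=1, monthly_hits=2),
    DeadUrl("/legacy-product", referring_domains=30, monthly_hits=90, days_redirected=400),
]
flagged = [p.url for p in pages if should_redirect(p)]
print(flagged)
```

From here you could suggest a target for each flagged URL or feed the list into whatever system implements your redirects.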

Copy competitors’ links and strategies

There are a few different ways to do this. The usual recommendation for SEOs would be a link intersect report, which we have, but it’s pretty noisy for large sites.

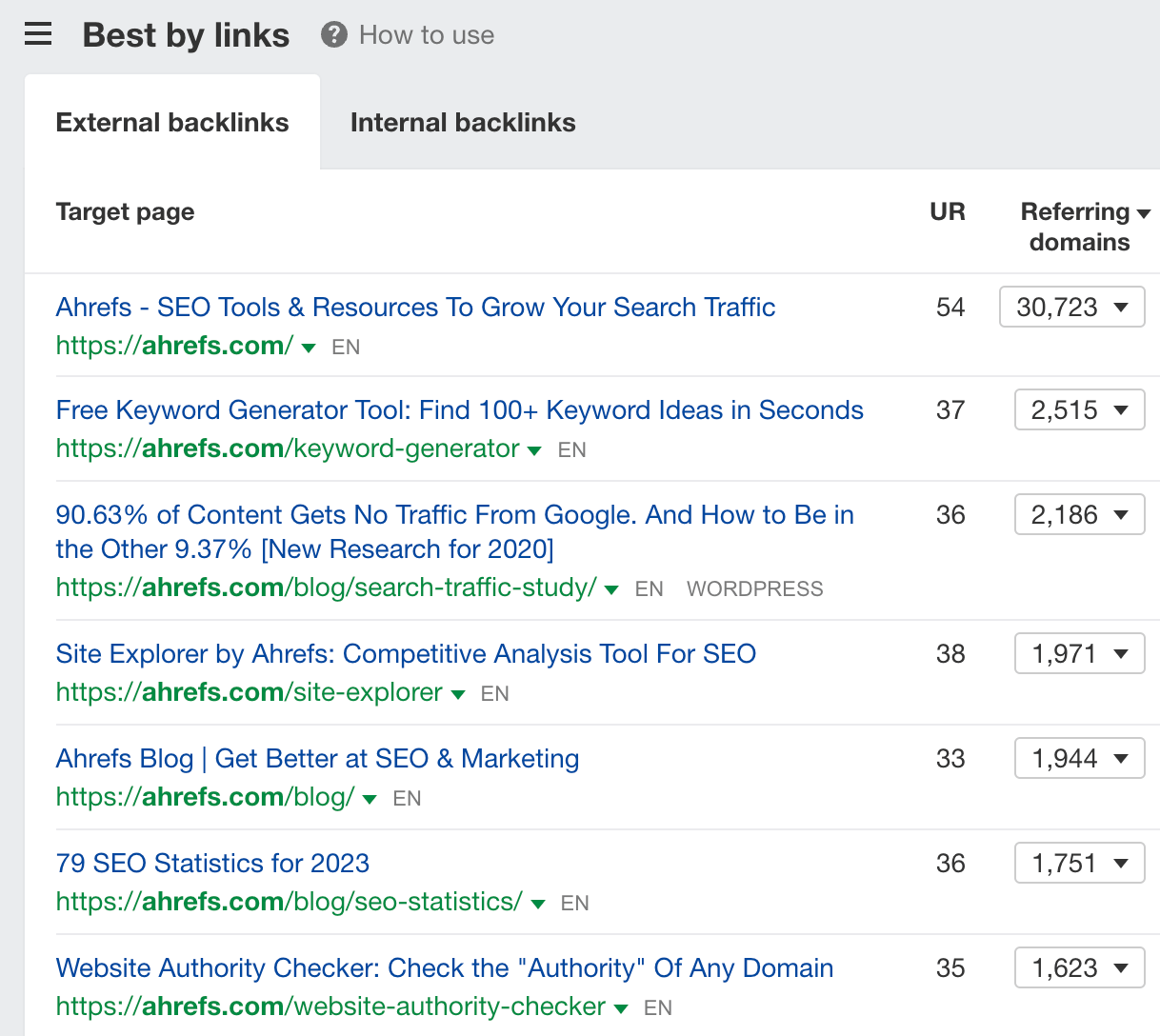

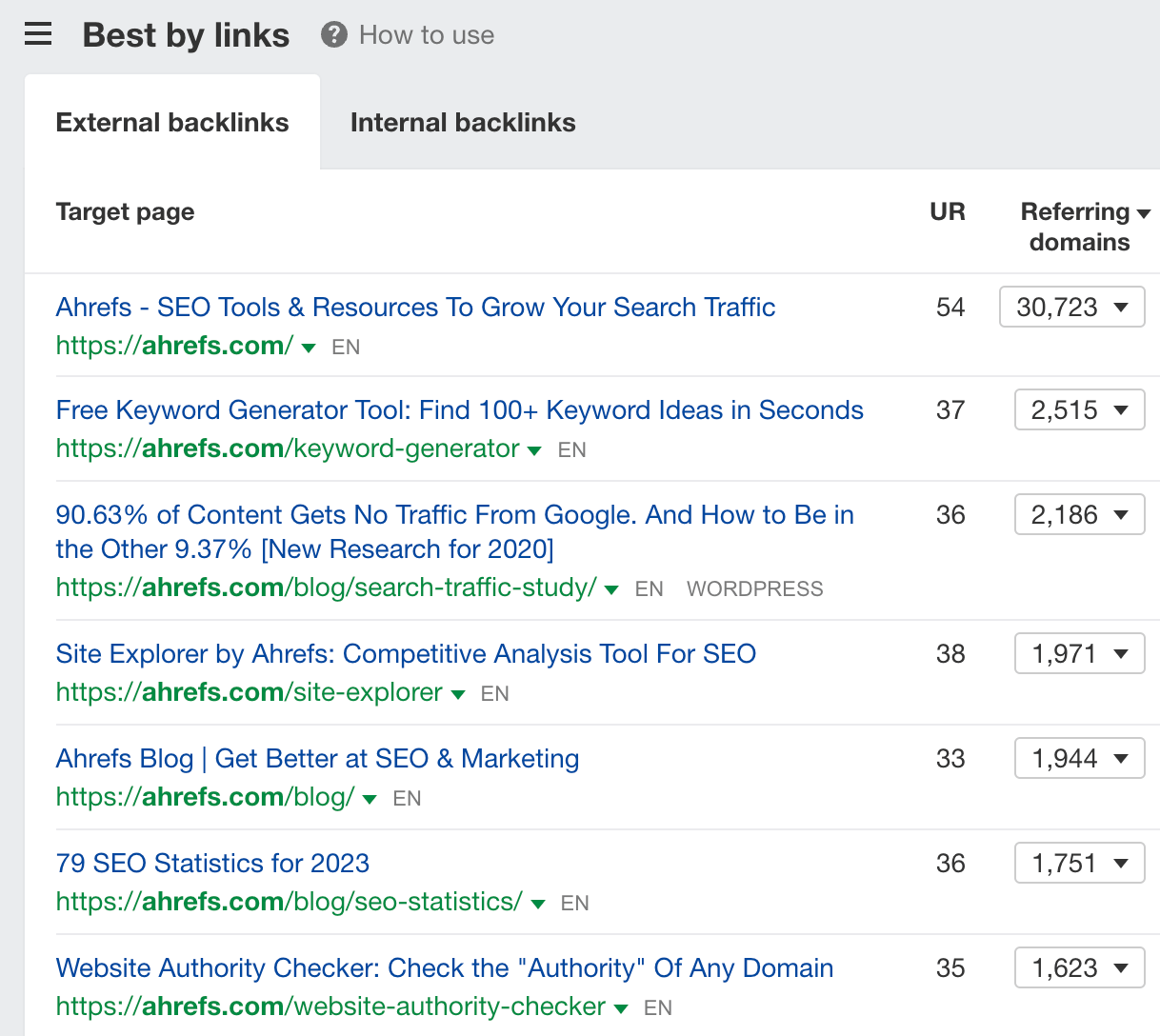

What I would recommend instead is the Best by links report in Site Explorer.

This is going to show you the most linked pages on a website. For us, that’s our homepage, some of our free tools, and our blog and data studies.

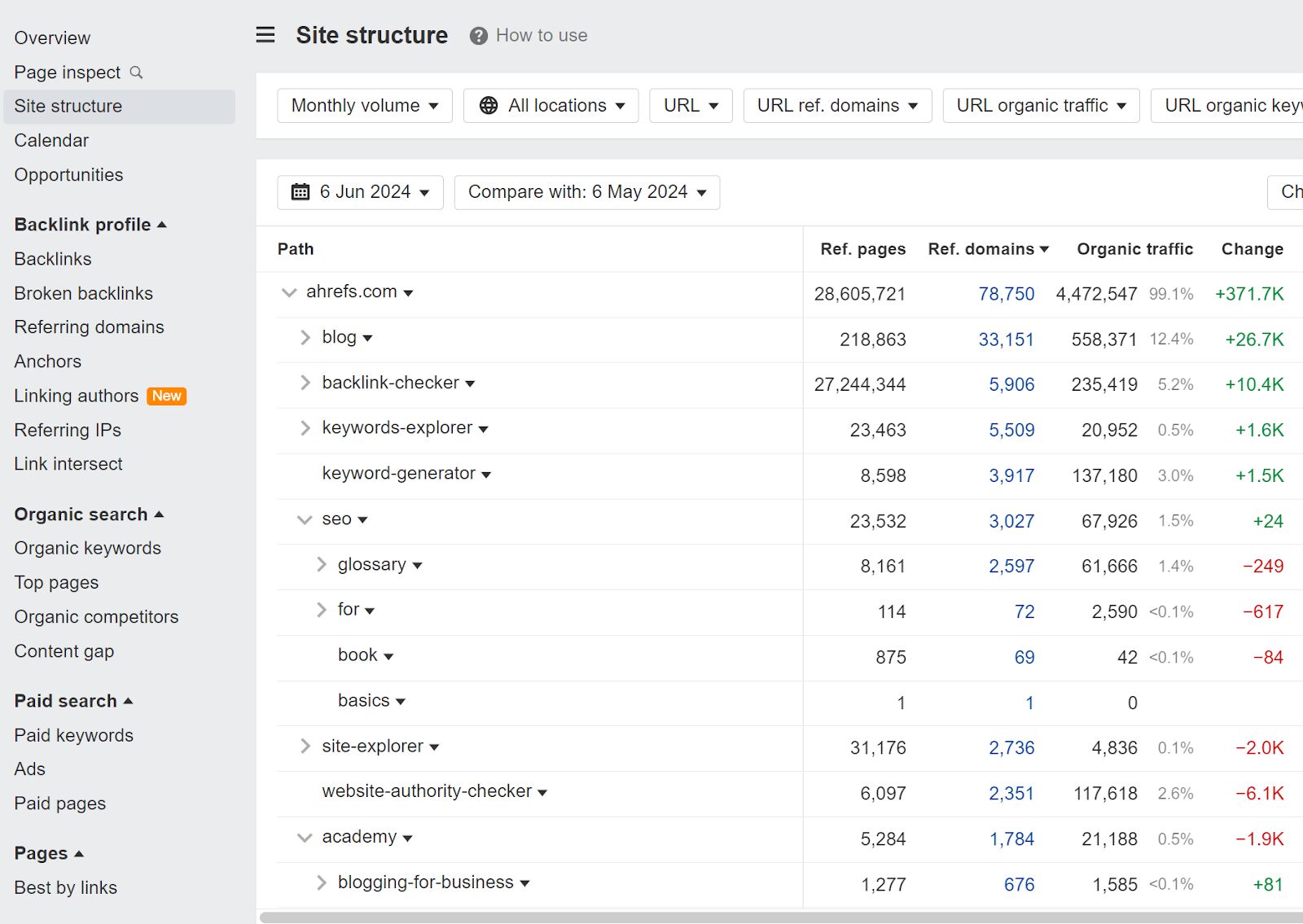

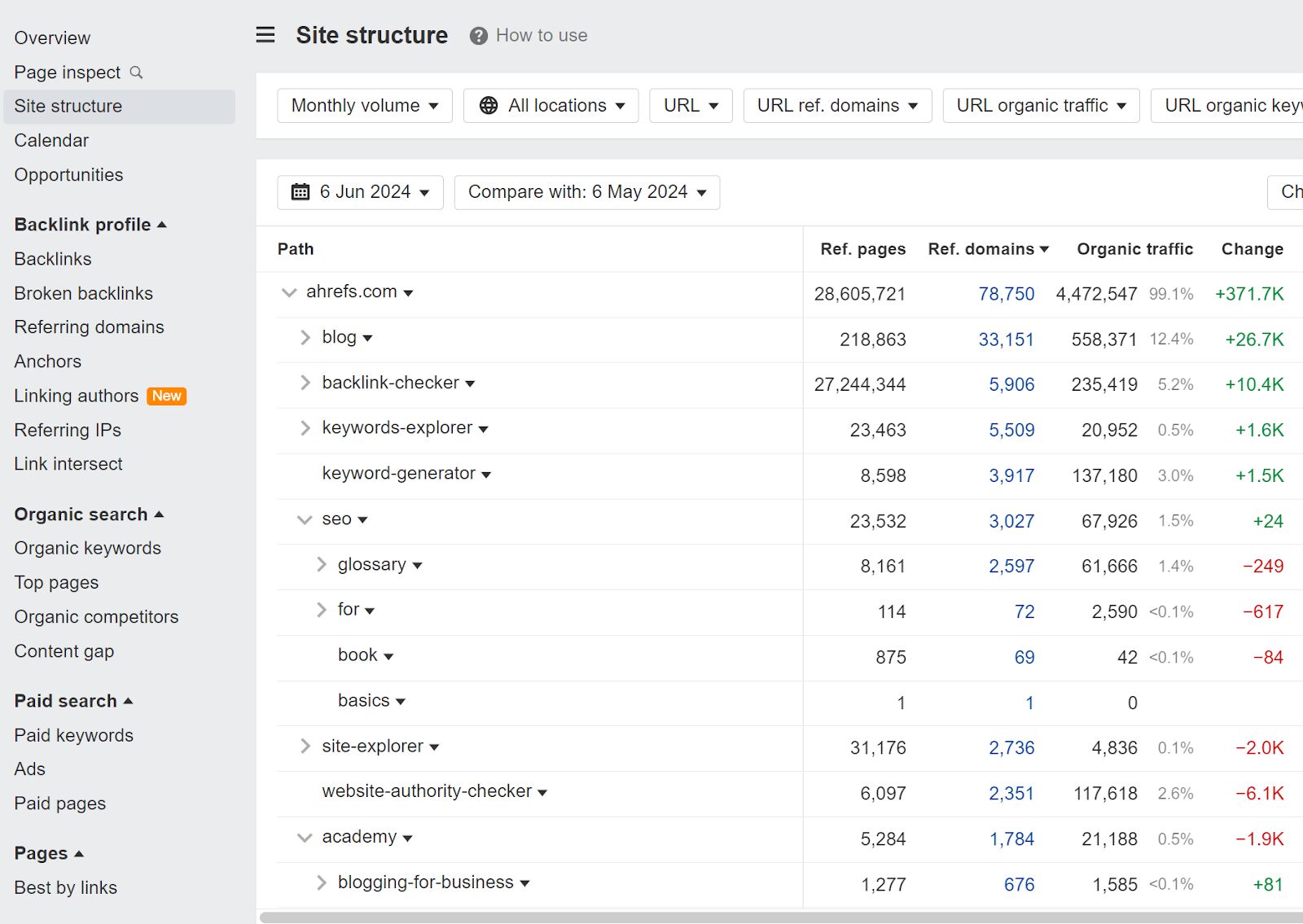

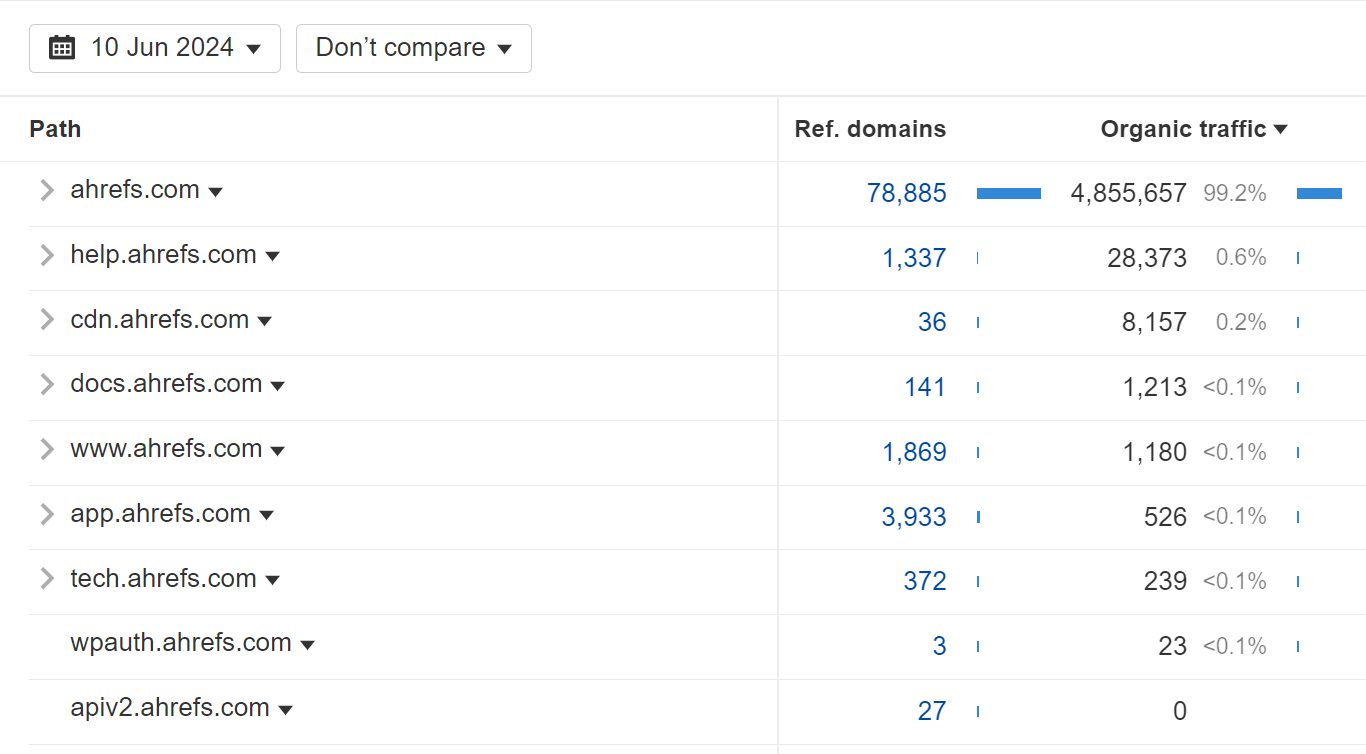

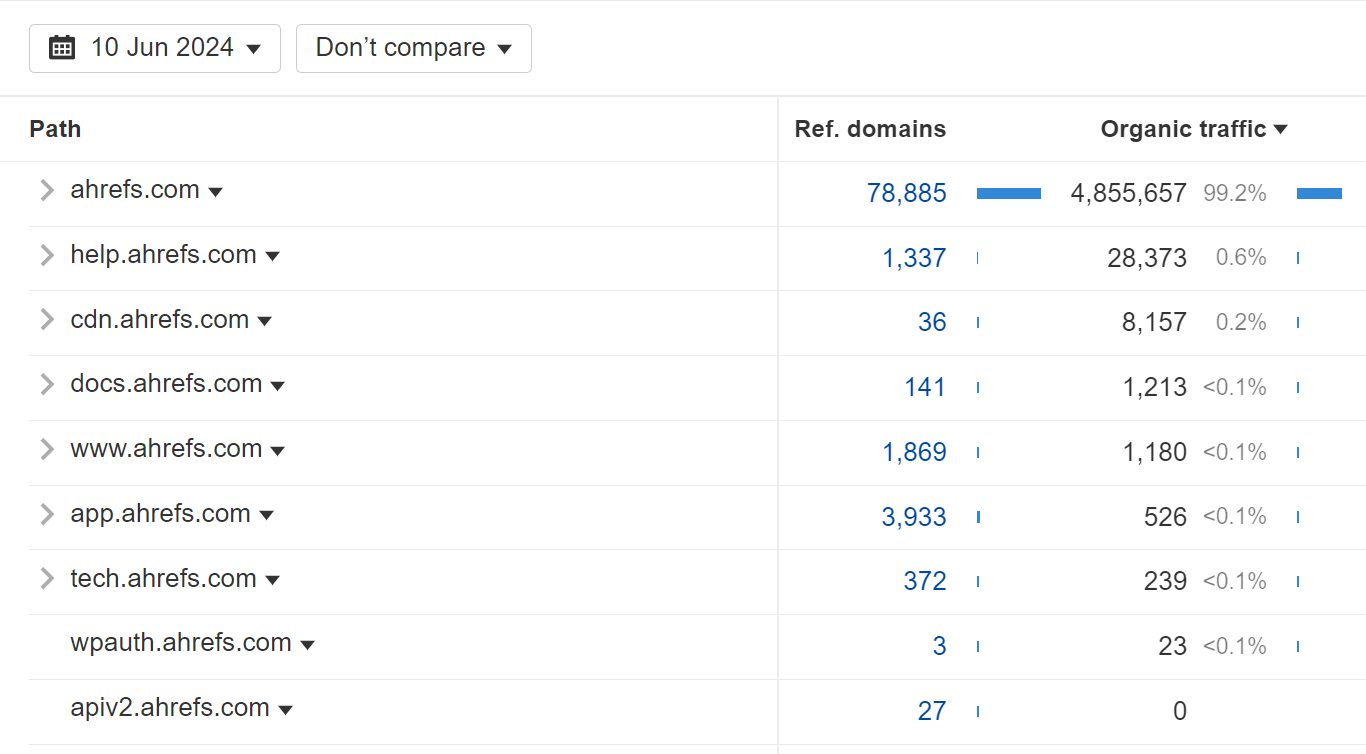

Another option is the Site Structure report in Site Explorer sorted by Referring domains or Referring pages.

This lets me quickly see that things like our blog, free tools, glossary, and training academy videos are all well-linked.

Build internal links

I’ve always found internal links to be a powerful way to help pages rank higher.

Even these links may be difficult to get in an enterprise environment. Sometimes different people are responsible for different sections of the website, which can make internal linking time-consuming. It may take meetings and a lot of follow-up to get the links added.

On top of the political hurdles, the process for internal linking can be a bit convoluted. You either have to know the site well and read through various pages looking for link opportunities, or you can follow a process that involves a lot of scraping and crawling to find opportunities.

At Ahrefs, we’ve made this simple, scalable, and accessible so anyone can find these opportunities. The easiest way to see internal link opportunities is with the Internal Link Opportunities report in Site Audit. We look at what your pages are ranking for and suggest links from other pages on your site that talk about those things.

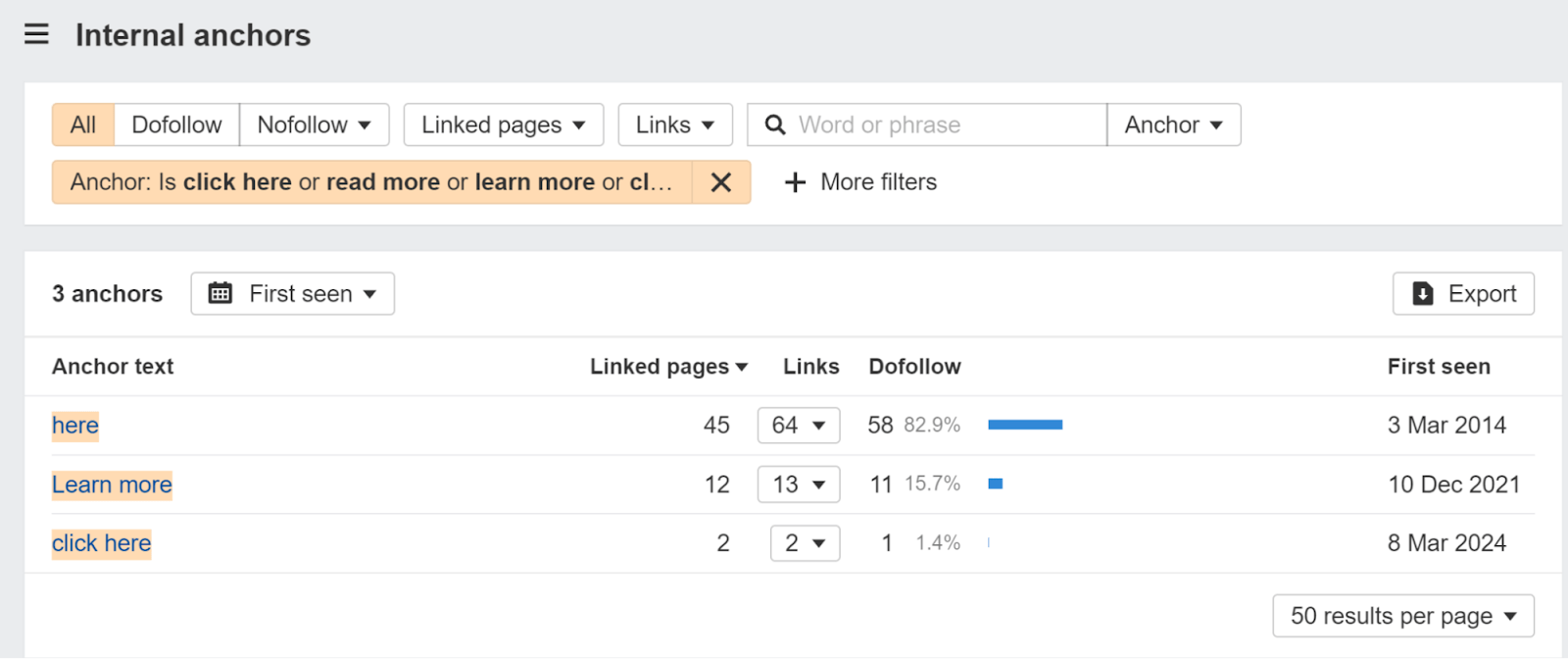

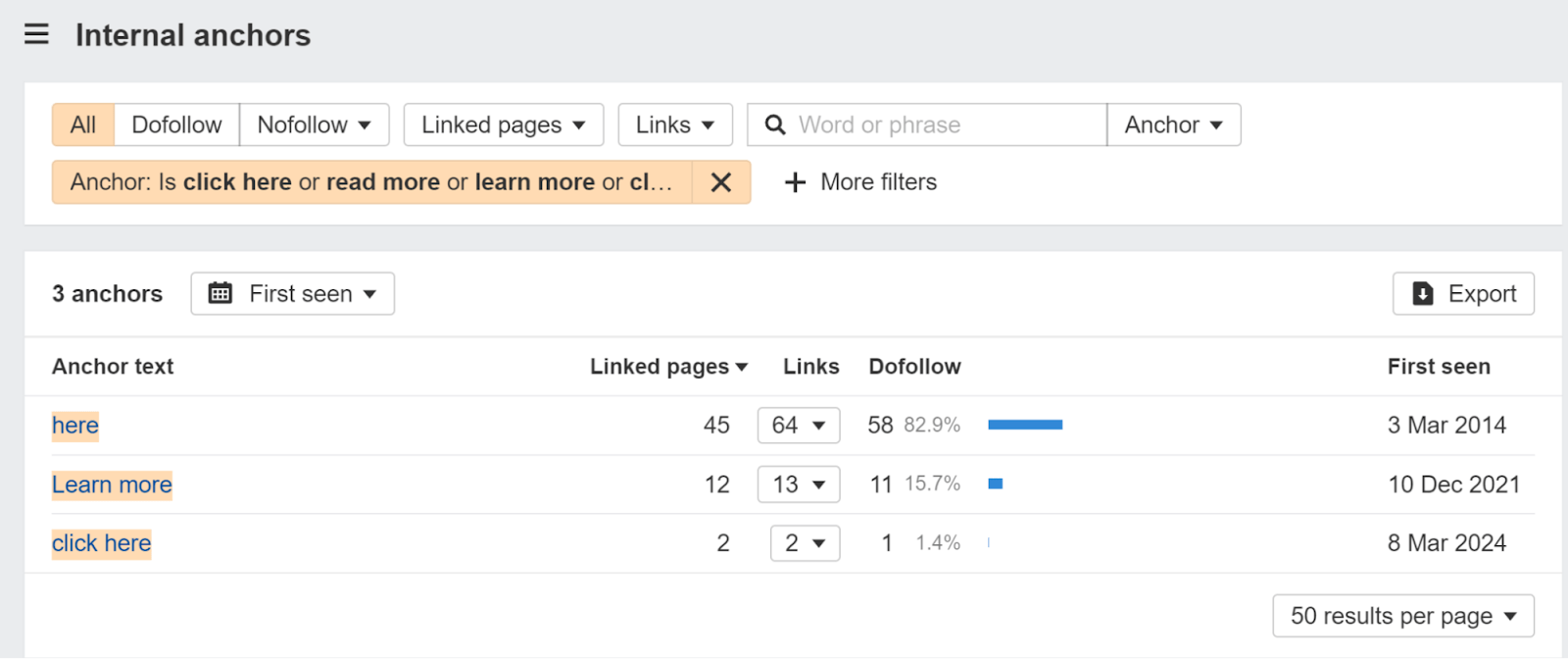

I’d also recommend watching out for opportunities to use better link anchor text. It’s common for page creators to overuse generic link anchor text such as ‘learn more,’ ‘read more,’ or ‘click here.’ You can look for usage of this kind of generic copy in the Internal anchors report in Site Explorer.
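If you want to spot-check a single page's HTML for generic anchors, a small parser is enough. This is a sketch using Python's standard `html.parser`. The `AnchorCollector` class, the `generic_links` function, and the list of generic phrases are illustrative, so extend the list to match your own site.

```python
from html.parser import HTMLParser

GENERIC_ANCHORS = {"learn more", "read more", "click here"}


class AnchorCollector(HTMLParser):
    """Collect (href, anchor text) pairs from an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            self.links.append((self._href, "".join(self._text).strip()))
            self._href = None


def generic_links(html):
    """Return links whose anchor text is one of the generic phrases."""
    parser = AnchorCollector()
    parser.feed(html)
    return [(href, text) for href, text in parser.links if text.lower() in GENERIC_ANCHORS]


page = (
    '<p><a href="/pricing">Click here</a> or see '
    '<a href="/blog/seo">our SEO guide</a>. <a href="/docs">Read more</a></p>'
)
print(generic_links(page))
```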

Build links from other websites you own

If your company owns multiple websites, you’ll want to add links between them where it makes sense. Ultimately, you may want to consolidate the content into one site, but that’s not always feasible. Even if it is, it may not happen within a reasonable timeframe, so you may want to add links between the sites in the meantime.

This can be abused and goes into a gray area, but for the most part, if you’re linking naturally to relevant pages, you’ll be fine.

Buy other companies’ websites

I wrote all about SEO for mergers and acquisitions. When you buy another company, you inherit their content and their links. This opens some nice options for consolidating content and links to stronger pages.

Enterprise technical SEO is the practice of optimizing an enterprise website to help search engines find, crawl, understand, and index your pages. It helps increase visibility and rankings in search engines.

Enterprise websites are where technical SEO shines. There’s so much money at stake. One mistake can keep millions of pages out of the index or remove an entire site from search results. One fix can potentially be worth millions in revenue.

Check out our guide to enterprise technical SEO where I talk about different types of crawl strategies, prioritization, submitting tickets, and some of the technical SEO projects below.

Check indexing

Priority – high

You probably have some pages indexed that shouldn’t be, and many pages noindexed that should be indexed. Canonicalization is another issue to check to make sure the version of a page you want indexed is the one that is indexed.

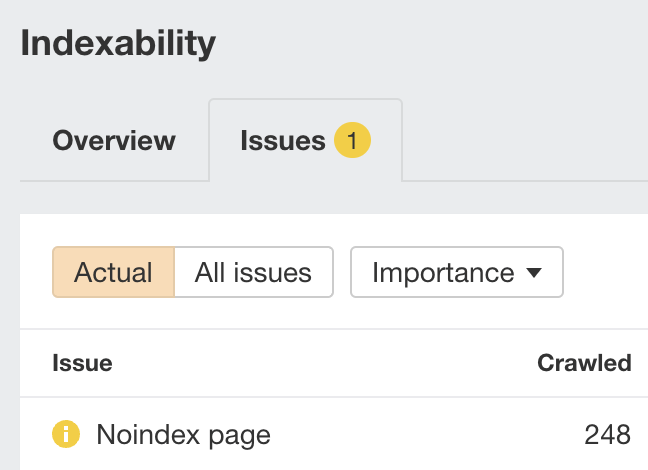

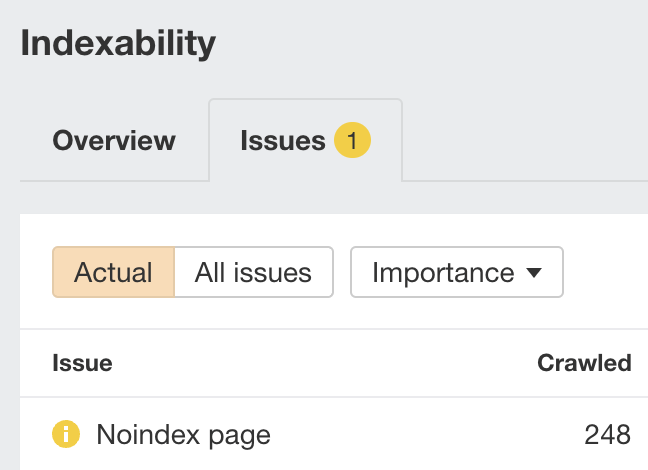

First, check the Indexability report in Site Audit for “Noindex page” warnings.

Google can’t index pages with this warning, so it’s worth checking they’re not pages you want indexed.

You can also check the Site Structure report in Site Explorer for any pages with organic traffic that shouldn’t have traffic.
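For a quick manual check of a single page, keep in mind that a noindex directive can sit in a robots meta tag or in an `X-Robots-Tag` HTTP header. The sketch below checks both from HTML and headers you have already fetched. The class and function names are illustrative, and it ignores crawler-specific meta tags such as `googlebot`.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect the content of <meta name="robots"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.directives.append((attrs.get("content") or "").lower())


def is_noindexed(html, headers=None):
    """True if a robots meta tag or X-Robots-Tag header contains noindex."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_value = value.lower()
    return any("noindex" in d for d in parser.directives) or "noindex" in header_value


print(is_noindexed('<meta name="robots" content="noindex, follow">'))
```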

Add schema markup

Priority – high

I’m a fan of schema markup as long as it gets you a search feature. Check out our guide to schema markup to see which ones you should be implementing. There are some cool tools now that can even suggest schema markup based on what is seen on the page.
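As an illustration of what schema markup looks like in practice, here is a minimal sketch that generates JSON-LD for a schema.org `SoftwareApplication`. The product name, category, and price are placeholders, and the `software_app_jsonld` helper is hypothetical. Check the search engine's structured data documentation for the properties a given search feature actually requires.

```python
import json


def software_app_jsonld(name, category, price, currency):
    """Build a schema.org SoftwareApplication object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "applicationCategory": category,
        "offers": {"@type": "Offer", "price": price, "priceCurrency": currency},
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
markup = software_app_jsonld("ExampleApp", "BusinessApplication", "0", "USD")
script_tag = f'<script type="application/ld+json">\n{markup}\n</script>'
print(script_tag)
```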

Fix Page Experience

Priority – medium

While many of these aren’t necessarily going to move the needle for SEO, they are good for users and how they experience your website, so they’re worth working on.

- Core Web Vitals. These measure how fast your pages load, how quickly they respond to input, and how visually stable they are.

- HTTPS. You want your pages to be secure. A surprising number of sites, >6%, redirect HTTPS to HTTP.

- Mobile-friendliness. Are your pages usable on mobile?

- Interstitials. You don’t want intrusive interstitials or those that take up a good chunk of the screen.

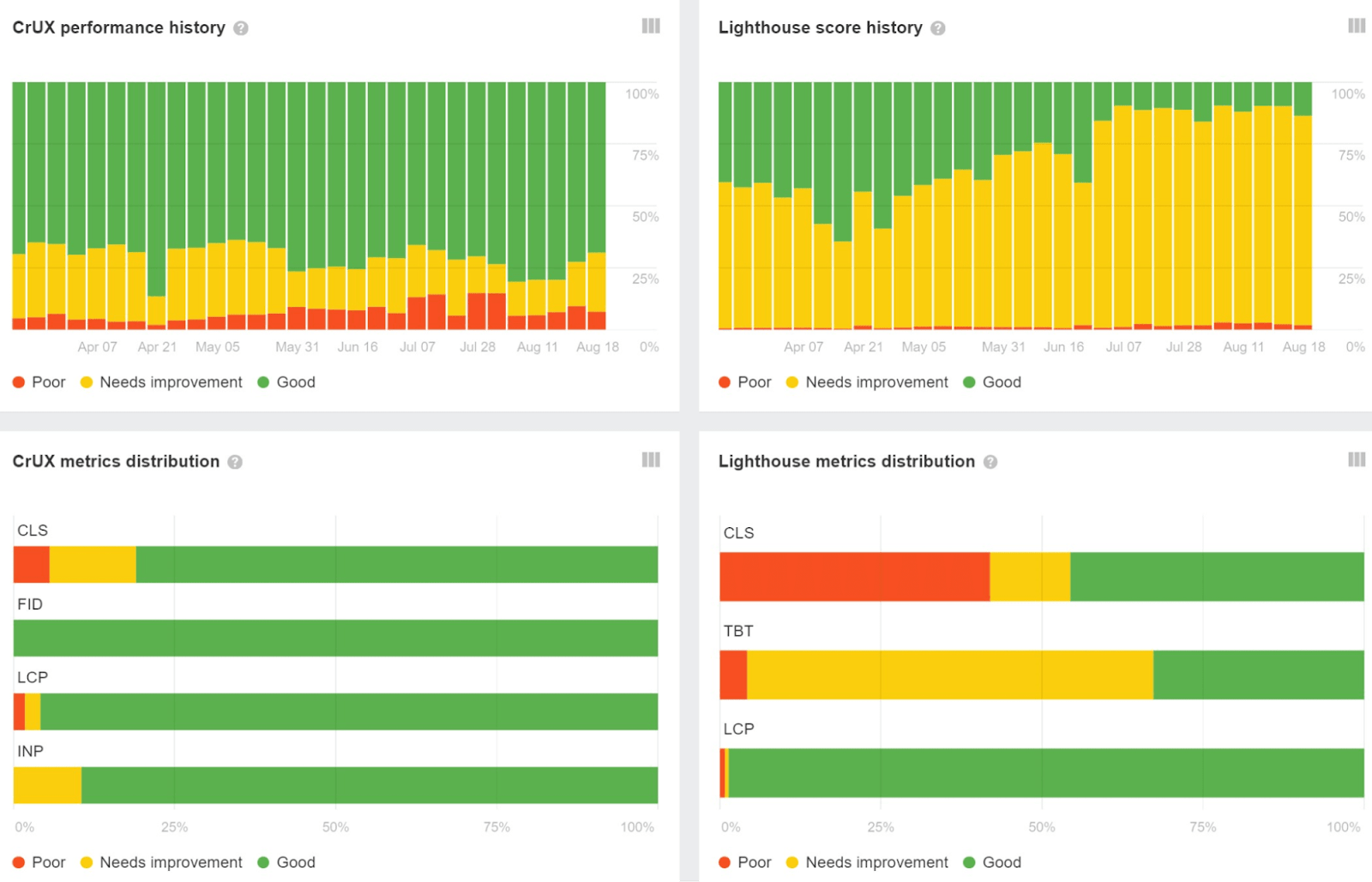

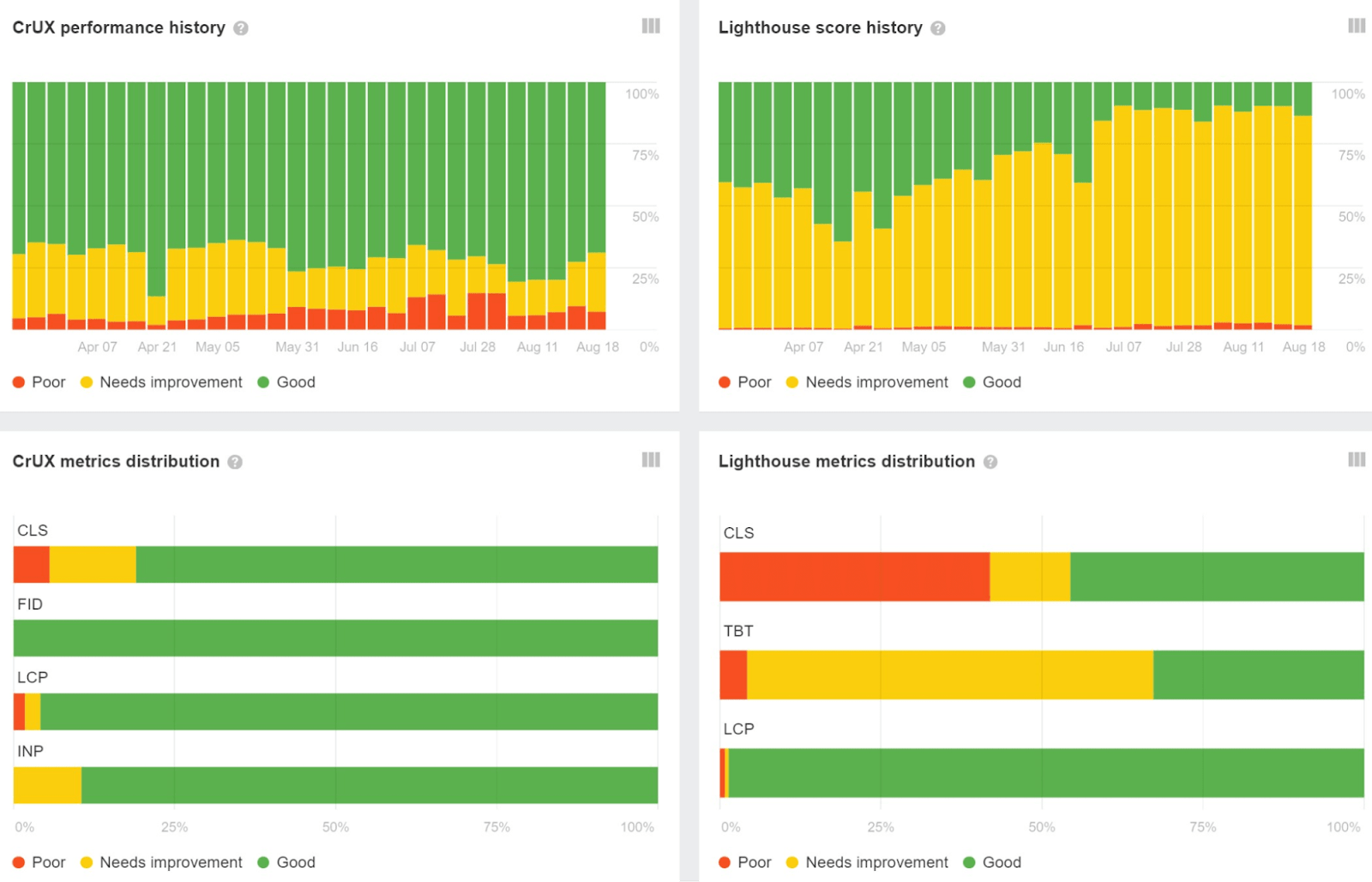

We cover most of these in Site Audit. For example, we pull PageSpeed Insights data so you get actual Chrome User Experience Report (CrUX) metrics for Core Web Vitals as well as Lighthouse metrics in Site Audit.

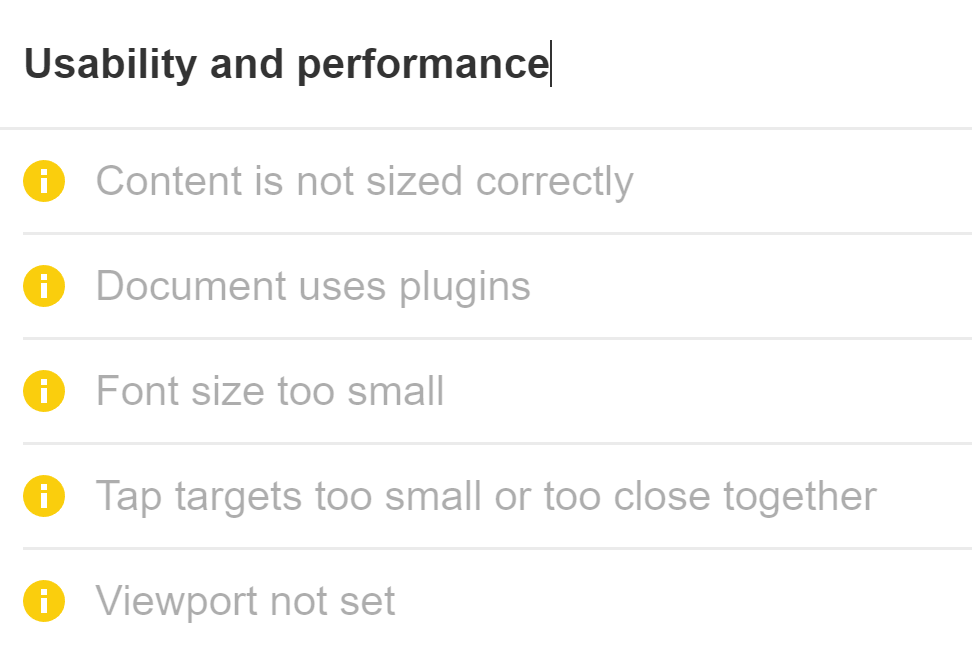

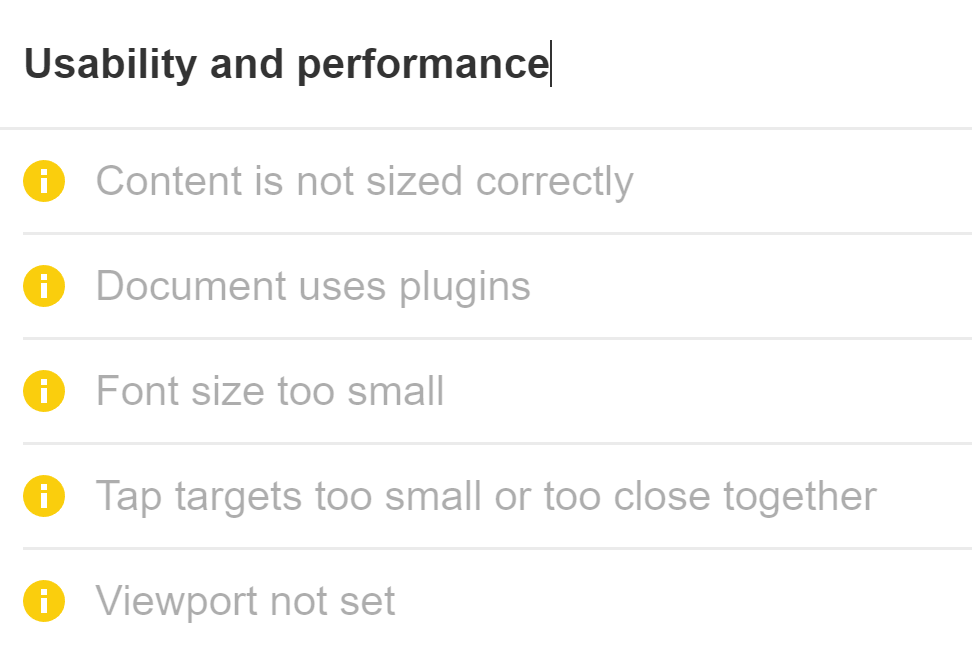

We also flag mobile SEO issues.

General website health and maintenance

Priority – low

These may not have much impact on SEO, but they can be an important consideration for user experience.

- Broken links. Find them and fix them.

- Redirect Chains. Google will follow up to 10 hops. I don’t worry until after five hops.

- Add sitemaps. I would make sure this is automated. If you are asked to manually create them, you can do it, but just know that if it’s manual, these will rarely be kept up-to-date. If you’re creating them based on crawled pages, then it’s likely all search engines can crawl them anyway.

You may want to check if any of the chains are too long. Look for this in the “Issues” tab in the Redirects report.
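If you have a redirect map exported from your server or CMS, counting hops yourself is simple. The sketch below follows a source-to-target mapping and stops at 10 hops or when it detects a loop. The `redirect_chain` function and the sample map are made up for illustration.

```python
def redirect_chain(start, redirects, max_hops=10):
    """Follow `redirects` (source -> target) from `start`; return the URLs visited."""
    chain = [start]
    while chain[-1] in redirects and len(chain) - 1 < max_hops:
        target = redirects[chain[-1]]
        looped = target in chain
        chain.append(target)
        if looped:
            break  # loop detected; stop instead of cycling forever
    return chain


# Hypothetical redirect map.
redirects = {"/a": "/b", "/b": "/c", "/c": "/d"}
chain = redirect_chain("/a", redirects)
hops = len(chain) - 1
print(chain, hops, "too long" if hops > 5 else "ok")
```

Where a chain is longer than you are comfortable with, point the first URL straight at the final destination.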

Hreflang

Priority depends on the site

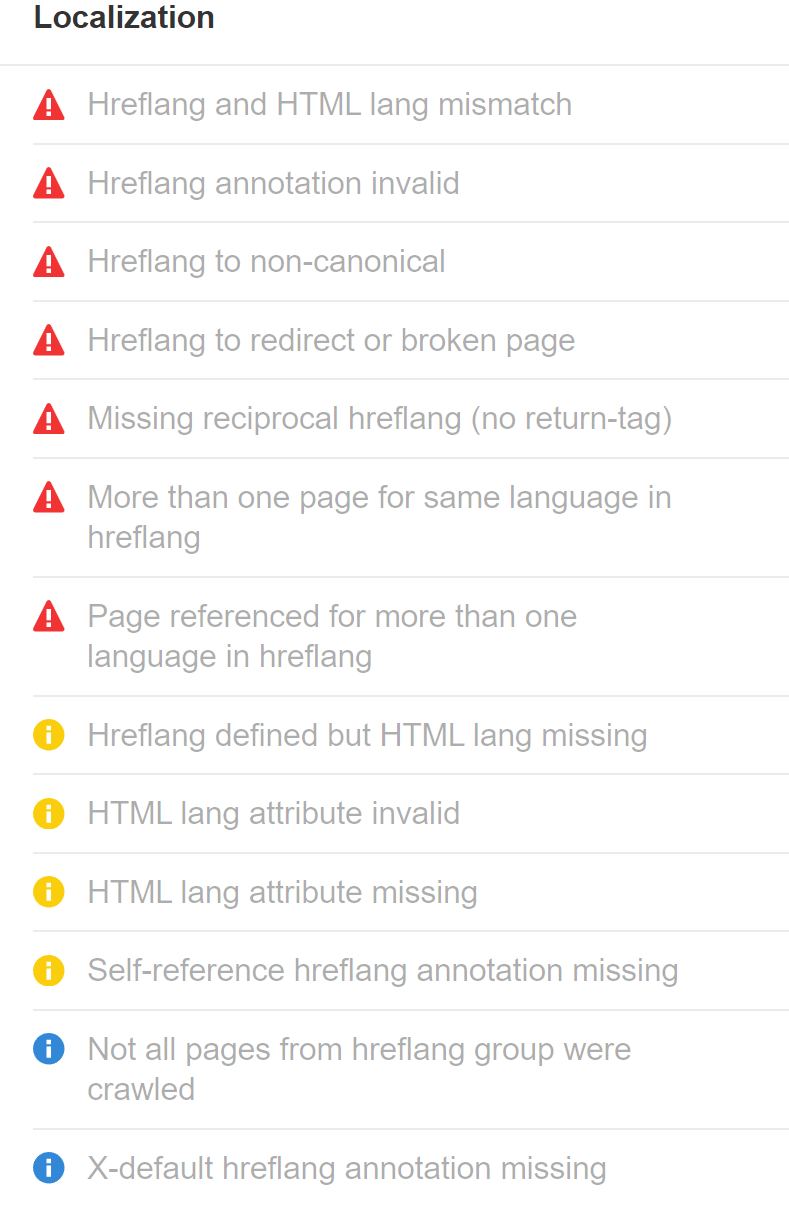

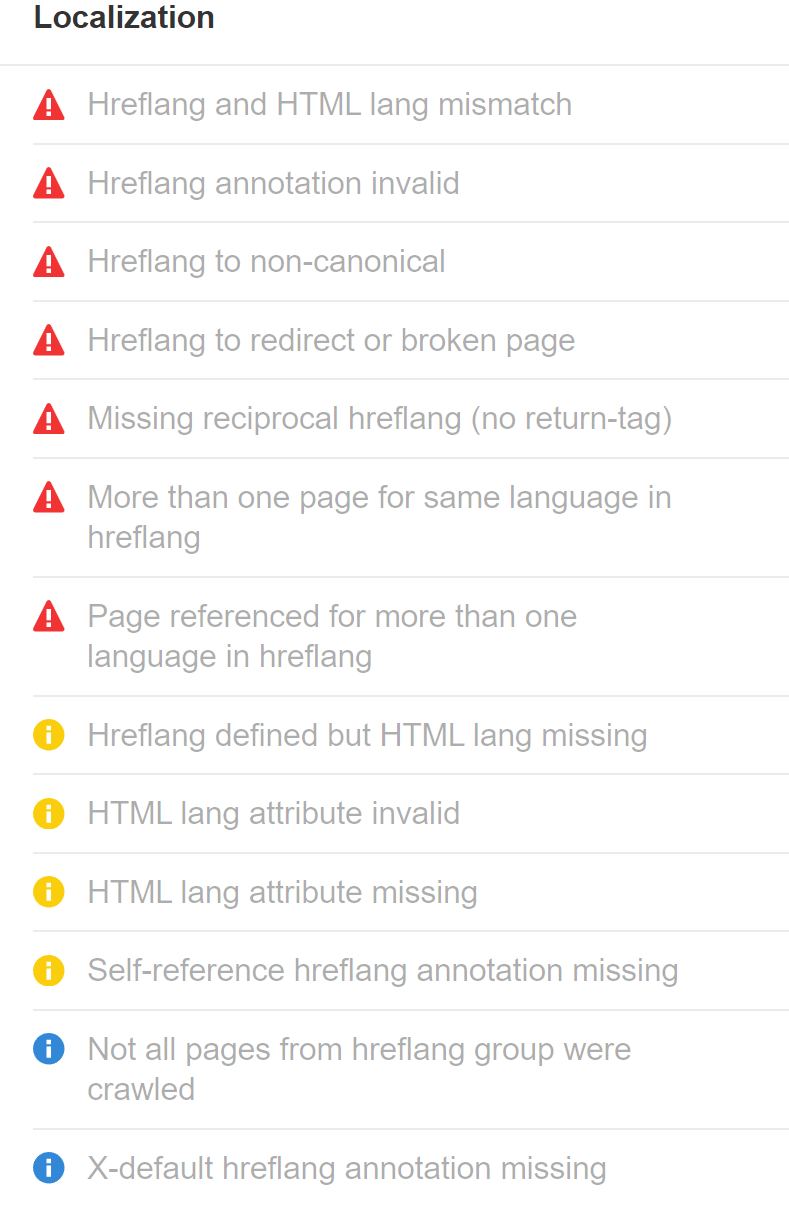

Hreflang helps show the right page to the right user in search. This can be crucial for enterprise companies to get right as the dropoff from bad pathing or annoying users can cost you a lot of money.

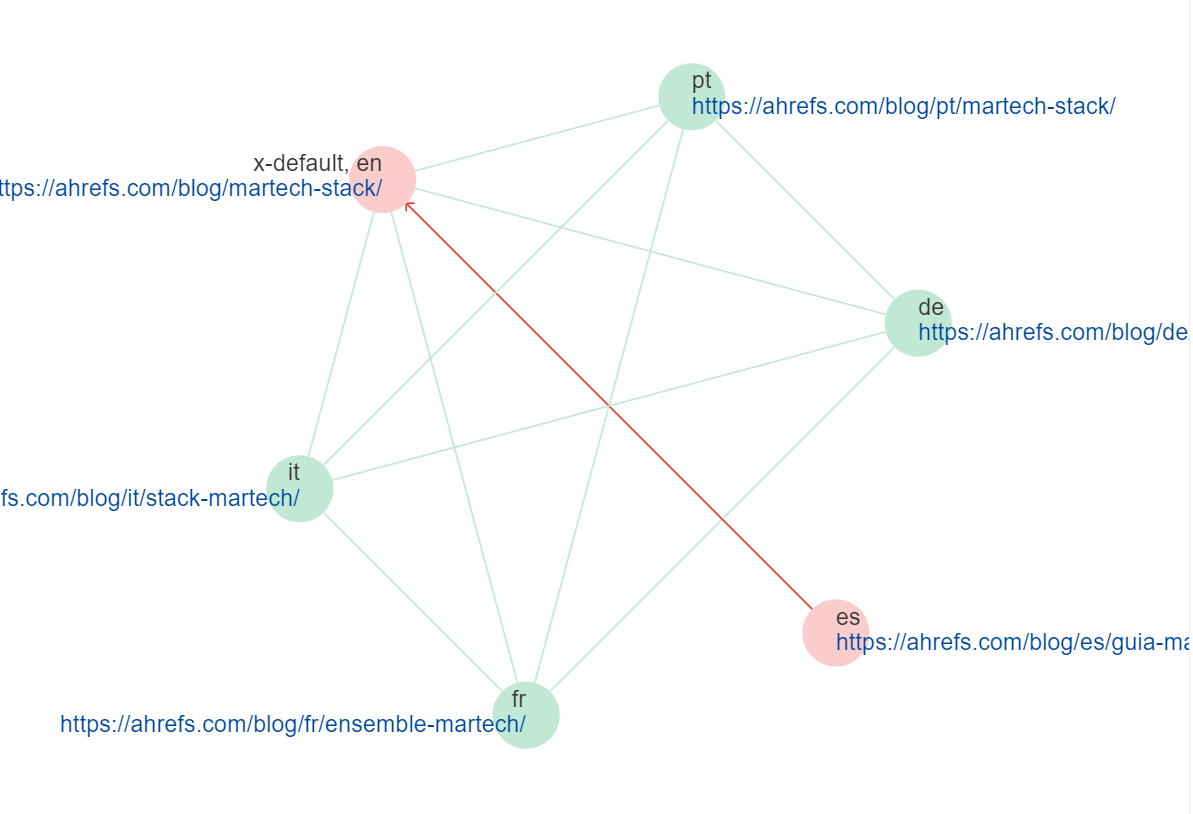

We flag a number of different hreflang issues in Site Audit.

There are also some nice visualizations to help you explain issues, like this first-of-its-kind hreflang cluster visualization. It shows and tells you what is broken, making it much easier to explain to stakeholders than the typical spreadsheet.
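One commonly cited hreflang problem is a missing return link: page A lists page B as an alternate, but page B doesn't list page A back. Here is a minimal sketch of that check, assuming you already have each page's hreflang annotations as a dictionary. The data structure, URLs, and function name are hypothetical.

```python
def missing_return_links(hreflang_map):
    """Find pages whose hreflang targets do not link back to them.

    `hreflang_map` maps each page URL to {language code: alternate URL}.
    """
    problems = []
    for page, alternates in hreflang_map.items():
        for lang, target in alternates.items():
            if target == page:
                continue  # self-reference, nothing to check
            back_links = hreflang_map.get(target, {})
            if page not in back_links.values():
                problems.append((page, lang, target))
    return problems


pages = {
    "https://example.com/en/": {
        "en": "https://example.com/en/",
        "de": "https://example.com/de/",
    },
    # The German page only references itself, so the return link is missing.
    "https://example.com/de/": {"de": "https://example.com/de/"},
}
print(missing_return_links(pages))
```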

Crawl budget

Priority depends on the site

Crawl budget can be a concern for larger sites with millions of pages or sites that are frequently updated. In general, if you have lots of pages not being crawled or updated as often as you’d like, then you may want to look into speeding up crawling.

E-commerce

Specialized task

Ecommerce SEO is important for any site selling products.

For enterprise sites, faceted navigation can be particularly tricky. Luckily we have a great guide on faceted navigation.

JavaScript

Specialized task

The bigger the site, the more likely you are to run into multiple tech stacks. Some of those may be JavaScript frameworks. These are relatively newer than CMSs and less understood by SEOs, so we have a guide on JavaScript SEO that covers many of the issues you’ll face, how to troubleshoot them, and how the rendering process works for Google.

Migrations

Specialized task

A website migration is any significant change to a website’s domain, URLs, hosting, platform, or design. Big companies like to change these things, and it creates havoc. Try to write standards that keep things consistent and minimize the impact of changes.

Mergers and acquisitions

Specialized task

Enterprise companies buy other companies all the time. When I worked in enterprise SEO, I felt like I was constantly doing one website merger project or another. There’s a lot that can go wrong and a lot of money on the line. Check out our guide on SEO for mergers and acquisitions for more info.

Log file analysis

Specialized task

I would typically consider this task firmly in the developer department, but it is something that technical SEOs may be asked to do at times. Logs can be expensive to store and analyze, and they contain personally identifiable information (PII) such as IP addresses. Many companies won’t give SEOs log file access. I’d say in 99.9% of cases, the Crawl stats report in Google Search Console will meet your needs instead of logs.
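If you do end up with log access, the basic analysis is counting crawler requests per URL and status code. The sketch below assumes logs in the common "combined" format and uses made-up sample lines with documentation-range IP addresses. It trusts the user agent string, which can be spoofed, so a real analysis would also verify that requests come from Google.

```python
import re
from collections import Counter

# Matches the request path, status code, and user agent in a combined-format log line.
LINE_RE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits(lines):
    """Count requests per (path, status) where the user agent claims to be Googlebot."""
    counts = Counter()
    for line in lines:
        match = LINE_RE.search(line)
        if match and "Googlebot" in match.group("agent"):
            counts[(match.group("path"), match.group("status"))] += 1
    return counts


GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample = [
    f'203.0.113.5 - - [10/Oct/2024:13:55:36 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'203.0.113.5 - - [10/Oct/2024:13:56:02 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '198.51.100.7 - - [10/Oct/2024:13:57:10 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]
hits = googlebot_hits(sample)
for (path, status), count in hits.items():
    print(path, status, count)
```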

Enterprise SEO metrics are key performance indicators (KPIs) used to measure the effectiveness of your SEO efforts. Monitoring these metrics helps you prove value and shows the success of your SEO program.

You’ll create a lot of different SEO reports for a lot of different people in an enterprise environment. Check out our guide on enterprise SEO reporting to see some of the reports you’ll want to create and the metrics to include in them for different people. It includes things like:

- How to equate SEO metrics to money

- Selling SEO by comparing against competitors

- Different SEO metrics to include

- Creating status or project reports

- Reporting on opportunities

Some popular enterprise SEO tools include:

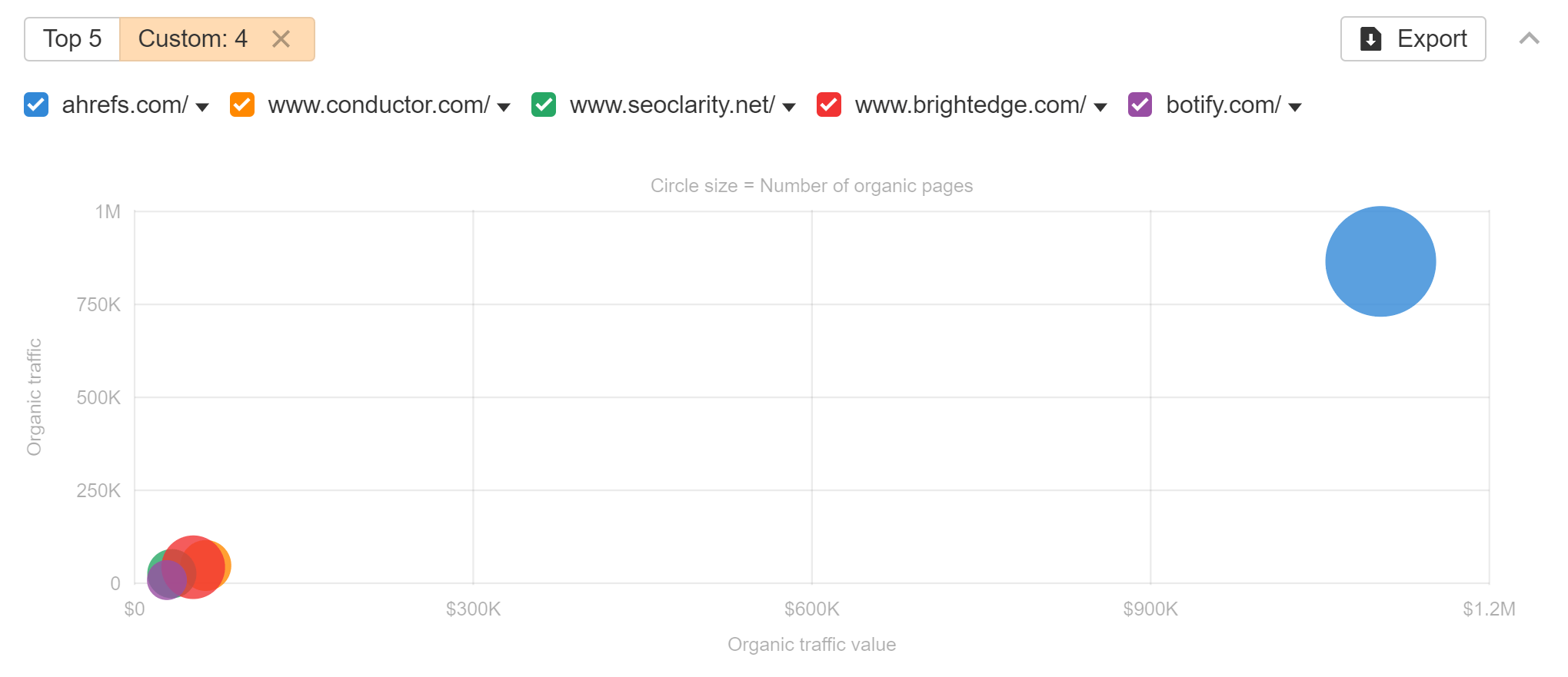

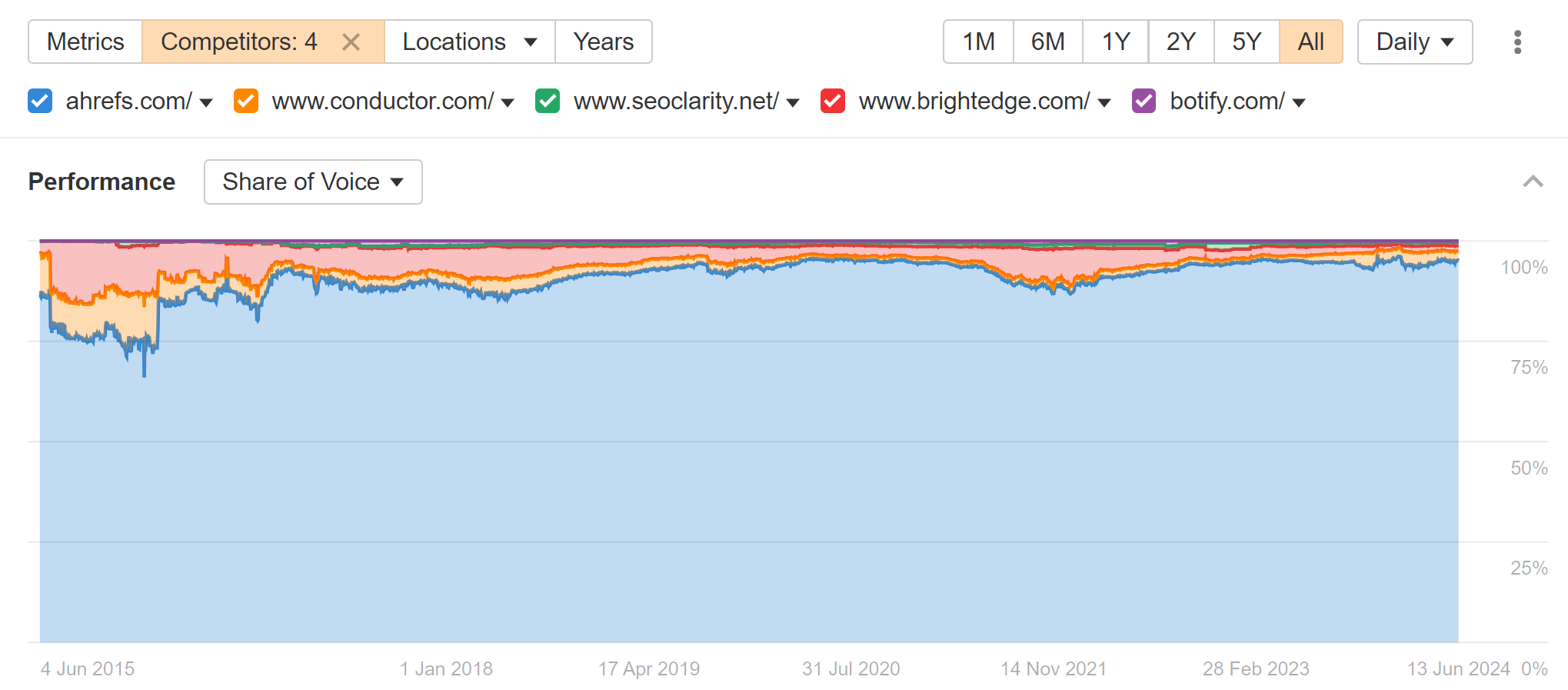

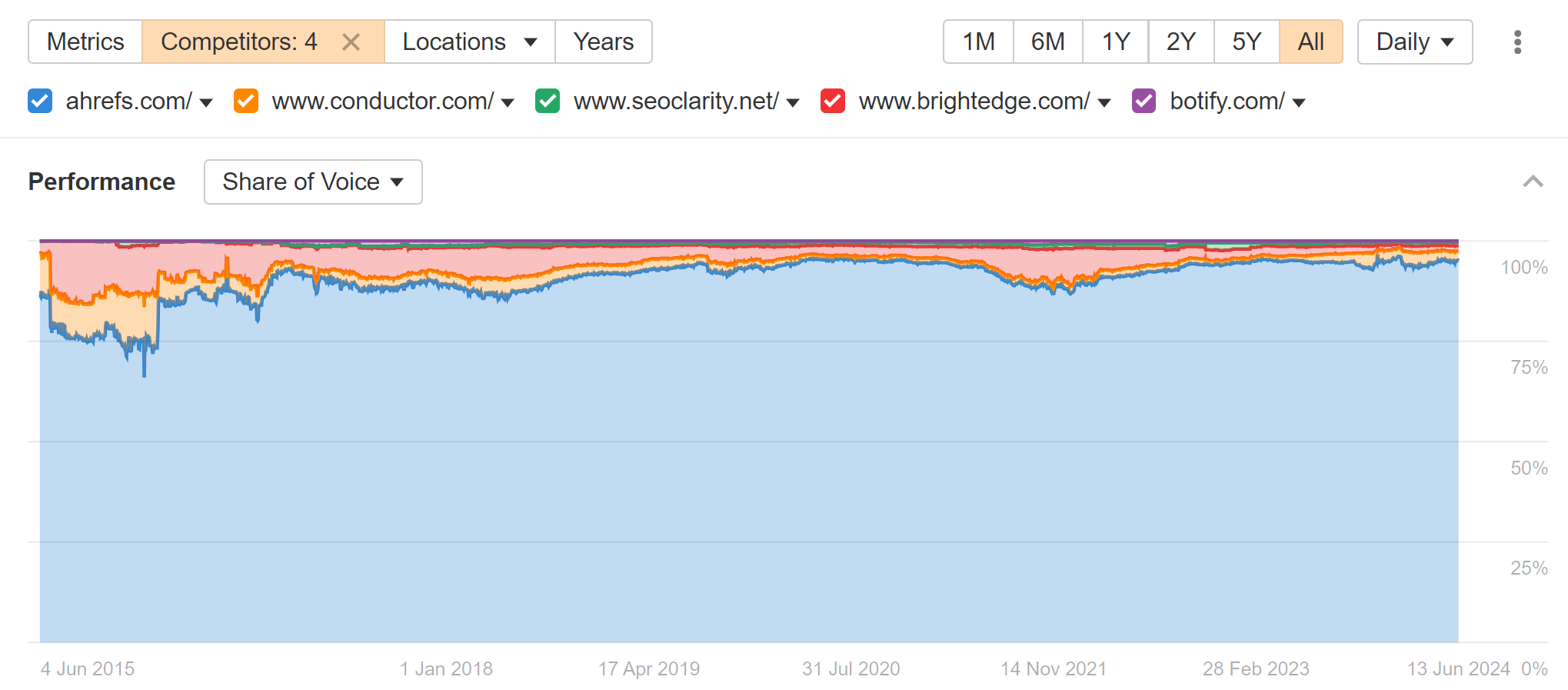

I’m obviously biased towards Ahrefs, but we’re really in a league of our own with 44% of the S&P 500 choosing us. Look how we compare to other enterprise SEO tools in the market.

And our organic search share of voice (SoV).

Check out our article on enterprise SEO tools to learn why you should choose us.

Final thoughts

There’s so much at stake in enterprise SEO and so many opportunities. When a company and its people finally get behind SEO, they can dominate an industry.

If you have any tips, enterprise SEO experiences you’d like to share, or questions, let me know on X or LinkedIn.