
3 Ways To Safely Embrace AI-Generated Content & Opportunities

This post was sponsored by SE Ranking. The opinions expressed in this article are the sponsor’s own.

Wondering how to best integrate AI into your marketing workflow?

Unsure which parts of your content creation strategy can be safely delegated to machine learning?

You’re not alone.

While 46% of business owners resort to AI to craft internal communications, 30% of them are concerned about misinformation AI can cause and 33% are worried that AI implementation could lead to a workforce reduction.

Still, the demand for high-quality content continues to grow, and at least 12% of businesses are turning to AI-powered tools to optimize their content writing process.

The SE Ranking team is no stranger to AI.

We’ve been actively integrating our AI-powered Content Marketing Tool into our team’s workflow to create compelling SEO copy that outperforms competitors in search engines.

Important note: we have no intention of replacing our human writers with AI. Instead, we use AI to create more content than we ever could without it. We hope to finally test the ideas we’ve had for a while but never had the resources to implement.

Let’s dive into how these AI tools have helped us every step of the way:

- AI Writer, which is based on GPT neural networks.

- Extensive content optimization capabilities.

But first, let’s share why 44% of businesses are using AI technologies now, and why 56% are not.

How Missing AI Opportunities Right Now Can Leave You Behind In The Near Future

The market for Generative AI is booming, with market size expected to reach $51.8 billion by 2028.

44% of respondents adopted AI content writing.

Myth

52% of experts believe that automation will displace people from their careers.

That fear is the main reason why people are refusing to try out new technologies.

Fact

AI writing offers numerous benefits to marketers, giving them access to high-quality content at scale and at low cost.

If you need to produce high volumes of content in a short amount of time, such as product description pages or blog posts, AI can help you get ahead without the need to raise capital.

The earlier you incorporate AI tools into your workflow, the faster you will gain a competitive advantage.

Now is the best time to learn how to capitalize on these tools, while everyone is learning at the same time.

Using content automation tools and AI is a skill, not a replacement for writers, and we will show you how to master it.

So, let’s see how automation with AI tools works during each step of the content writing process.

How To Automate Your Workflow With Content Writing Tools

The content creation process starts with topic research and then includes the following stages:

- Brief creation.

- Content writing.

- Content evaluation.

- Additional optimization.

Each of these steps is time-consuming and requires you to focus on different variables during the process.

Here’s how to automate your writing routine with the help of SE Ranking’s Content Marketing Tool.

1. Build Briefs Faster With An AI Tool

Estimated Time Savings: 20 minutes vs 2 hours

First things first, let’s talk about brief creation.

Step 1: Set Up Your Content Parameters

Start in the Content Editor.

Select your parameters, including your target keywords, which you can choose with the help of the Keyword Research Module.

Image created by SERanking.com, April 2023

Then, the software analyzes the top-ranked content on the selected topic.

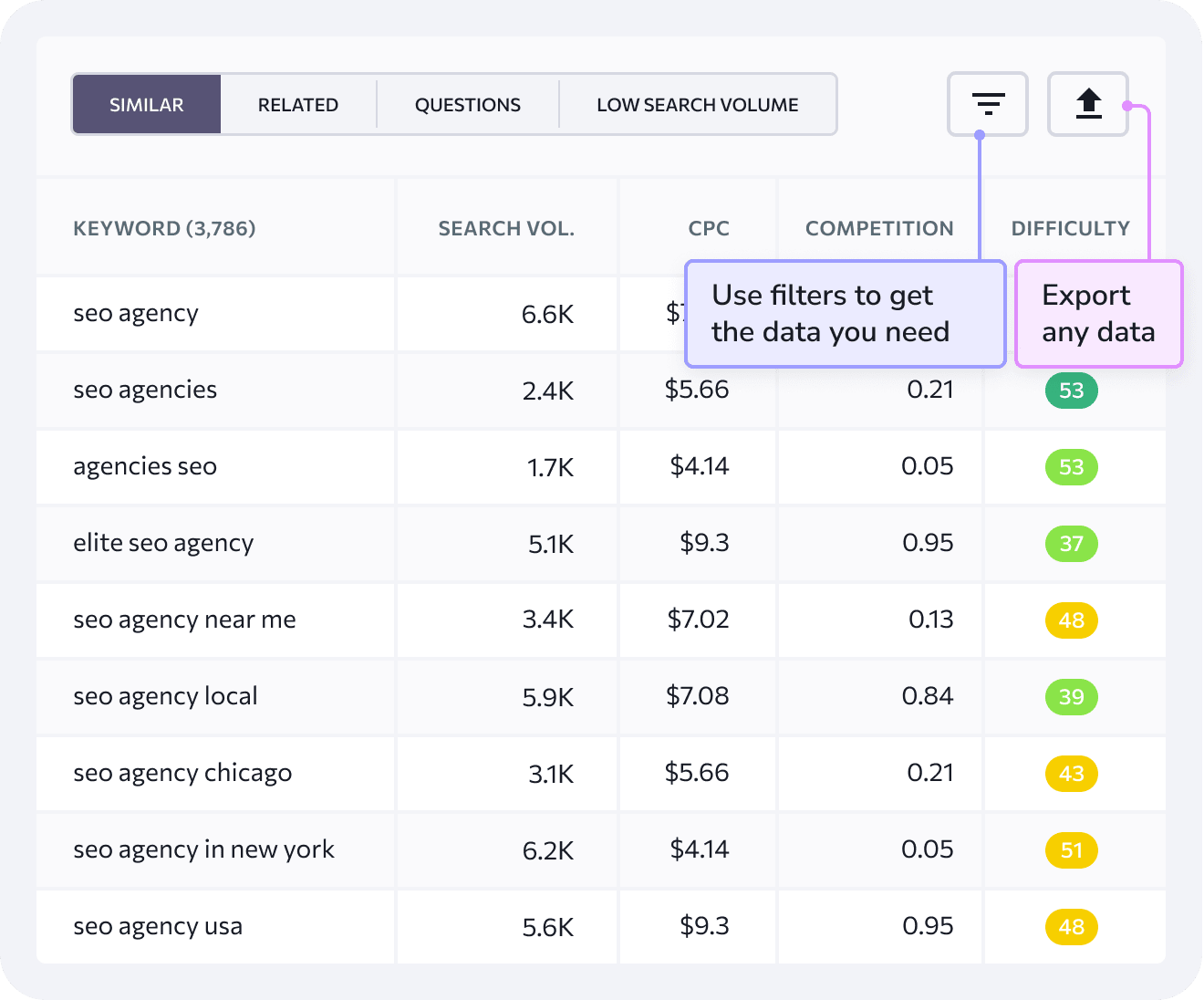

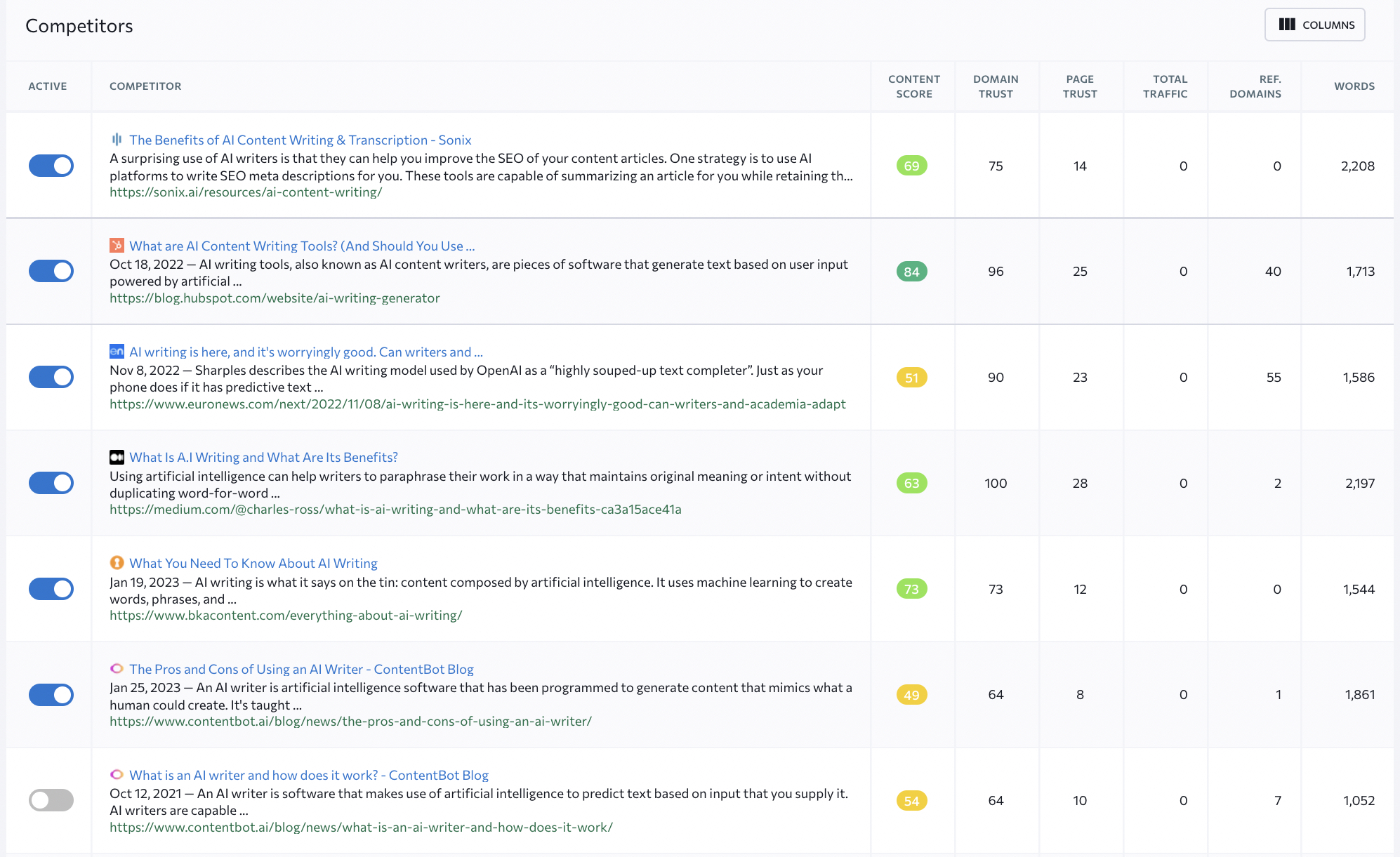

Step 2: Select Your Relevant Competitors For Automatic Analysis

To help inform your smart brief, choose all relevant competitors from the list based on:

- Total traffic.

- Domain trust score.

- Page trust scores.

- Total number of referring domains.

- Word count.

- Website visibility.

Image created by SERanking.com, May 2023

Depending on the pages you chose, the tool recommends:

- Content length.

- The number of media elements to use.

- Terms to use (and their placement).

All of these recommendations play a crucial role in helping web pages rank higher.
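To make the idea concrete, here is a minimal sketch of how length and media targets could be derived from the competitor pages you select. It is not SE Ranking’s actual method: the `CompetitorPage` record, the `recommend_targets` function, and the choice of the median are all assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import median


@dataclass
class CompetitorPage:
    """Hypothetical record for one selected competitor page."""
    url: str
    word_count: int
    media_count: int


def recommend_targets(pages: list[CompetitorPage]) -> dict[str, int]:
    """Suggest length and media targets as the median of the selected pages."""
    if not pages:
        raise ValueError("select at least one competitor page")
    return {
        "word_count": round(median(p.word_count for p in pages)),
        "media_count": round(median(p.media_count for p in pages)),
    }


# Made-up example pages; real values would come from your competitor list.
pages = [
    CompetitorPage("https://example.com/a", 1200, 3),
    CompetitorPage("https://example.com/b", 1800, 5),
    CompetitorPage("https://example.com/c", 1500, 4),
]
targets = recommend_targets(pages)
print(targets)
```

The median is used here instead of the mean so that one unusually long competitor page does not inflate the target.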

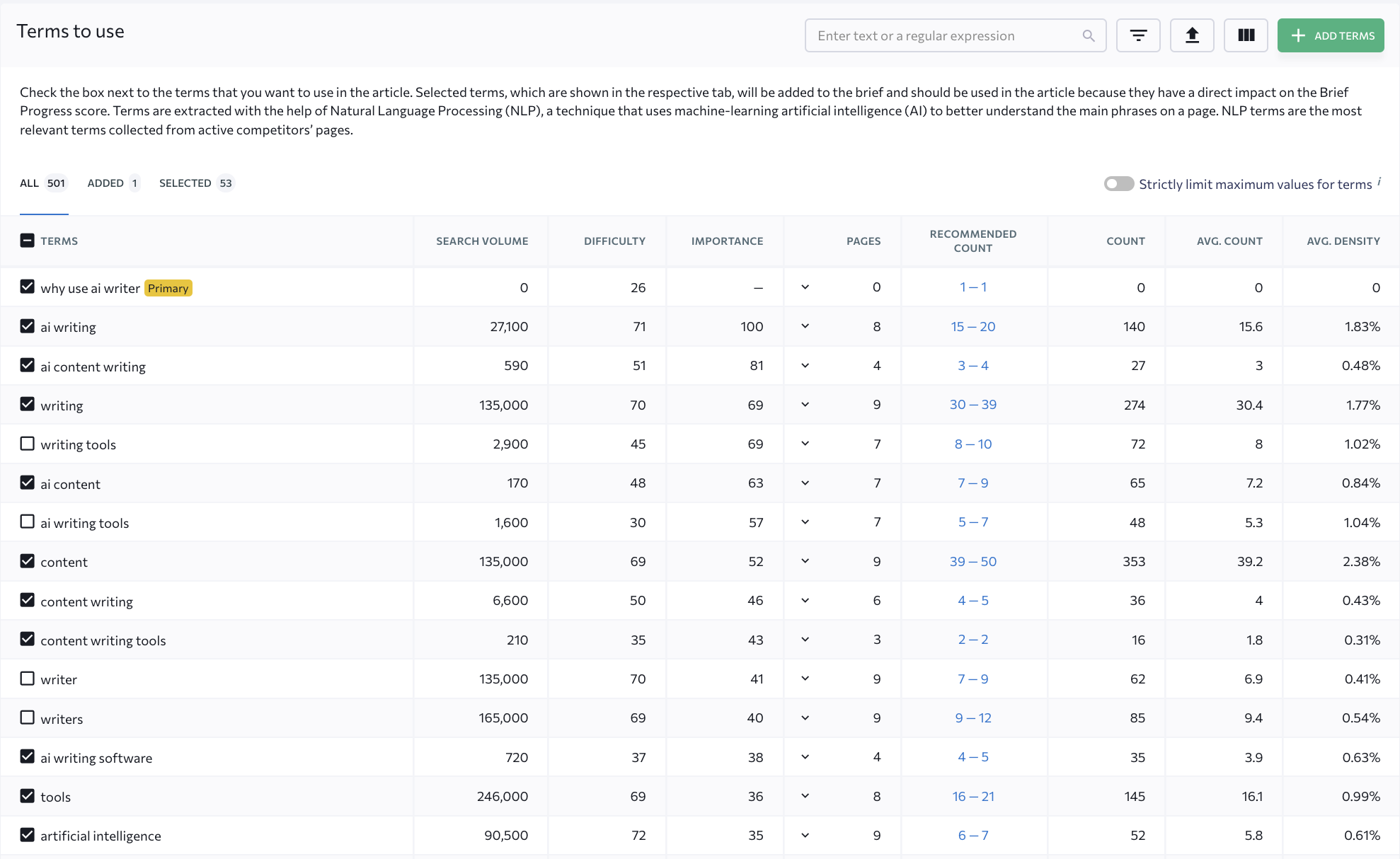

Step 3: Select Your Must-Have Keywords

This AI offers you a list of natural language processing (NLP) terms. NLP algorithms will look for these terms in your content to prove their relevance.

SE Ranking prioritizes terms based on how top-ranking pages use them in their texts and how well these pages perform in the SERP.

You can also go through the list and hand-pick the terms that you feel are the most appropriate.
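As a rough illustration of this kind of prioritization, the sketch below ranks candidate terms by how many top pages use them, giving more weight to higher-ranked pages. The `prioritize_terms` function and the 1/position weighting are assumptions for the example, not the formula SE Ranking uses.

```python
from __future__ import annotations

from collections import defaultdict


def prioritize_terms(
    pages: list[tuple[int, str]], candidates: list[str]
) -> list[tuple[str, float]]:
    """Rank candidate terms by rank-weighted usage.

    pages holds (SERP position, page text) pairs, with position 1 as the top result.
    A page at position p adds 1/p to the score of every candidate term it contains.
    """
    scores: dict[str, float] = defaultdict(float)
    for position, text in pages:
        lowered = text.lower()
        for term in candidates:
            if term.lower() in lowered:
                scores[term] += 1.0 / position
    # Highest score first; ties keep the original candidate order.
    return sorted(
        ((term, scores[term]) for term in candidates),
        key=lambda item: item[1],
        reverse=True,
    )


# Made-up page texts for illustration only.
pages = [
    (1, "AI writing tools speed up content briefs"),
    (2, "Content briefs and keyword research for SEO"),
    (3, "Keyword research basics"),
]
candidates = ["content briefs", "keyword research", "ai writing"]
ranked = prioritize_terms(pages, candidates)
for term, score in ranked:
    print(f"{term}: {score:.2f}")
```

You would still review the ranked list by hand, as described above, since a simple substring match cannot judge whether a term fits your angle on the topic.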

Image created by SERanking.com, May 2023

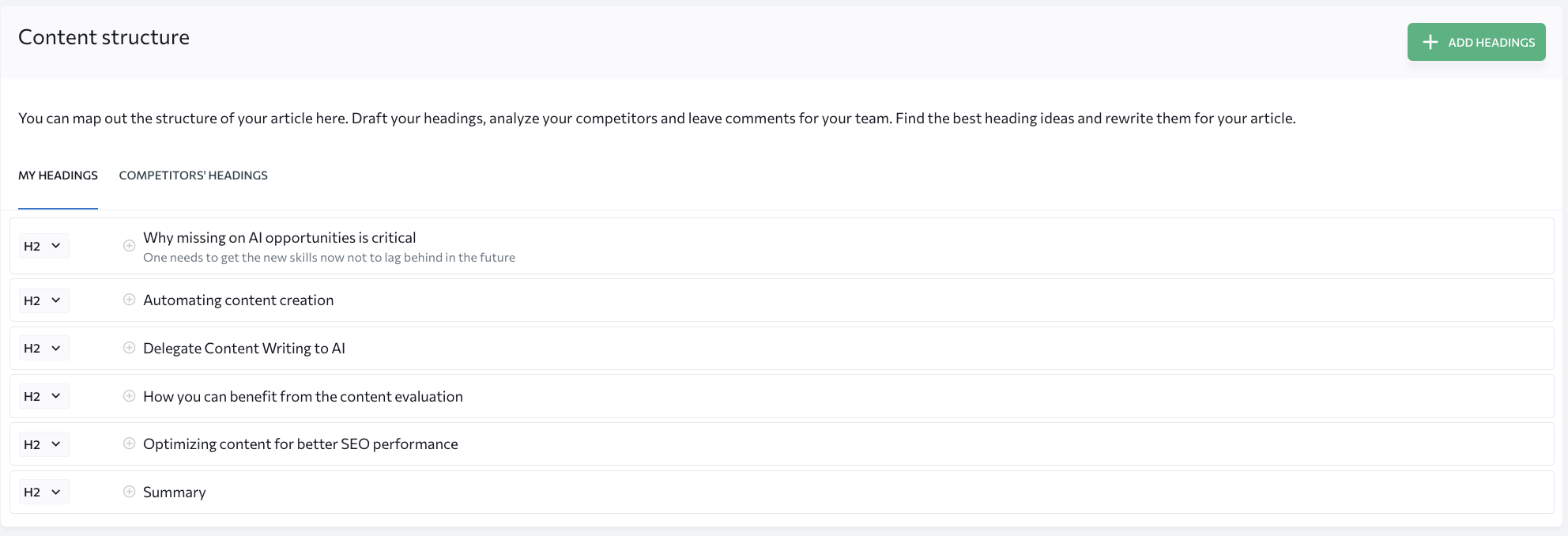

The next step is content structure.

Step 4: Confirm Content Outline Structure

SE Ranking’s Content Editor shows how competitors structure their texts.

While you can add your competitors’ H1-H6 headings to the SEO technical assignment, content writing best practice is to treat them only as a reference and build your own structure.

Image created by SERanking.com, May 2023

With AI owning the creation of a smart, data-driven content writing brief, you can save valuable time, allowing you to focus on ensuring your content is as strong as possible.

2. Delegate Content Writing To AI

Estimated Time Savings: 1 hour vs 3 hours (or more if you had writer’s block)

When the brief is all set, you can choose to pass most of the writing routine to the GPT-powered AI Writer within the Content Editor, as we did here.

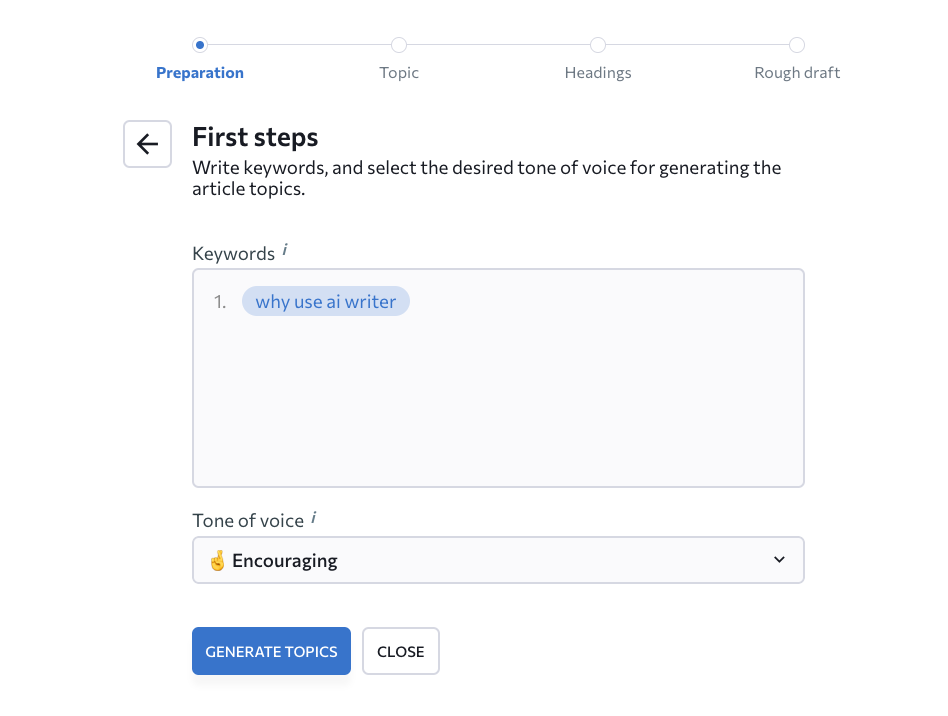

Step 1: Add Your Keywords From Your AI-Generated Content Brief

Once you transfer your terms from your brief, AI Writer will generate different types of content using your target keywords.

Image created by SERanking.com, May 2023

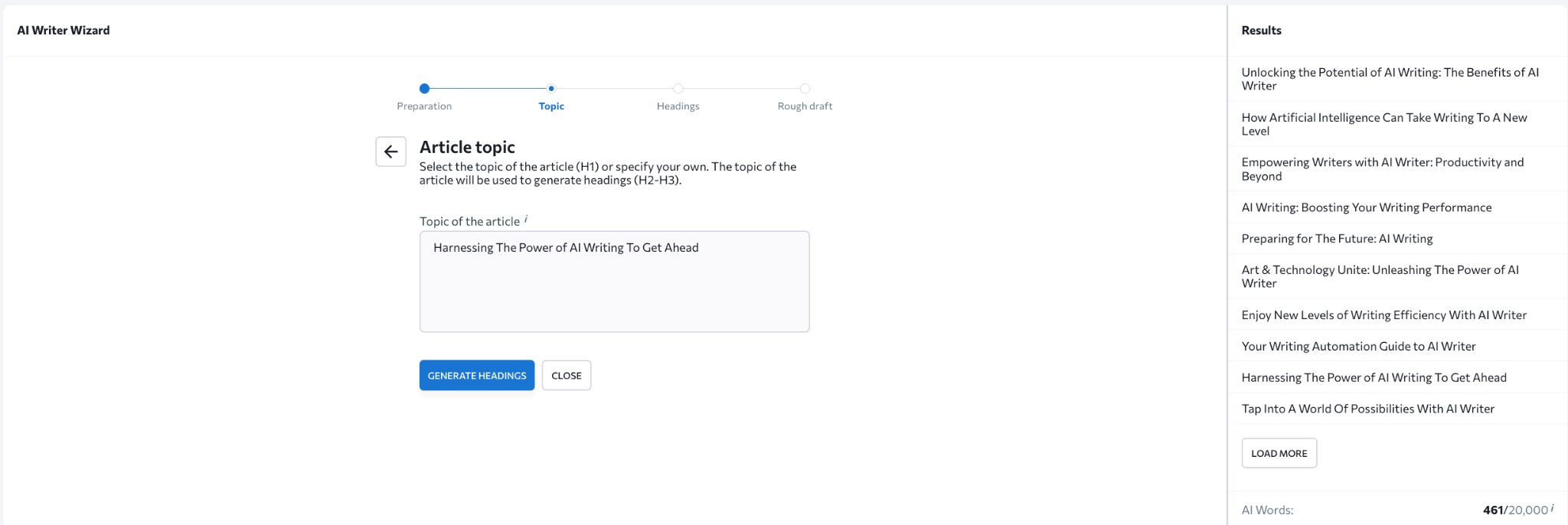

Step 2: Automatically Get A High-Engagement Headline & Rough Draft

Once you input your keywords and voice, you can use the writing assistant to generate:

- An intriguing headline.

- Text structure.

- A rough draft of your content piece.

Image created by SERanking.com, May 2023

Step 3: Quickly Edit A Near-Complete Rough Draft

In our example, we were surprised to find that we loved the first paragraph generated by the AI Writer.

However, we ultimately decided to put it in the Summary section, since what it wrote included the main things we wanted to emphasize in our text.

We also felt like changing one sentence in it.

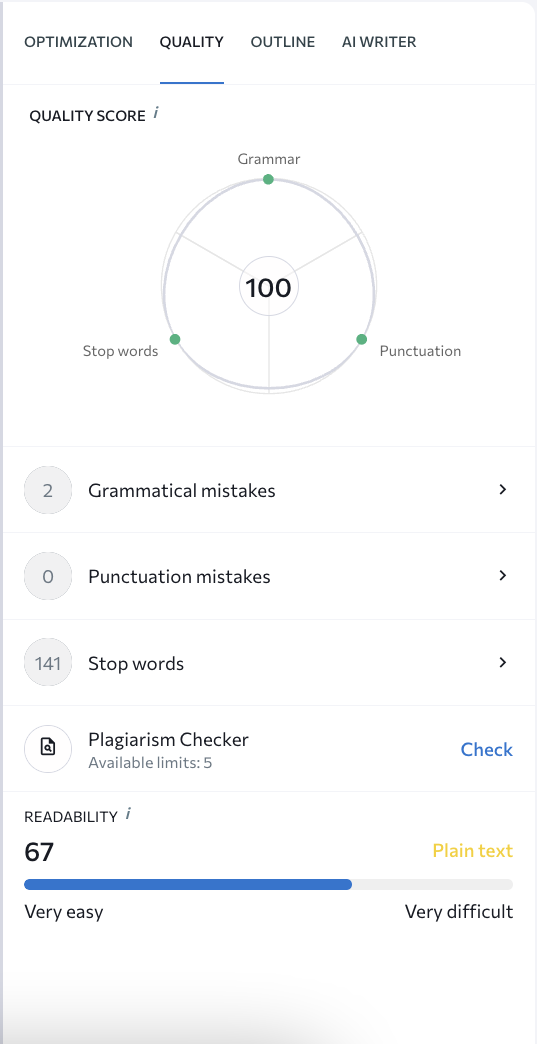

To do this, you just need to select a piece of text and ask the AI writing software to rephrase it:

Image created by SERanking.com, May 2023

Step 4: Evaluate The Content

Now that our first draft is finally ready, it’s time to do some editing and polishing.

To make it easy, AI can help you:

- Evaluate the draft’s content score.

- Check the text for grammar.

- Uncover spelling errors.

- Determine uniqueness.

This is to ensure that the piece is of high quality and can win our competitors’ traffic.

In our example, here’s how we used the Content Marketing tool for this purpose.

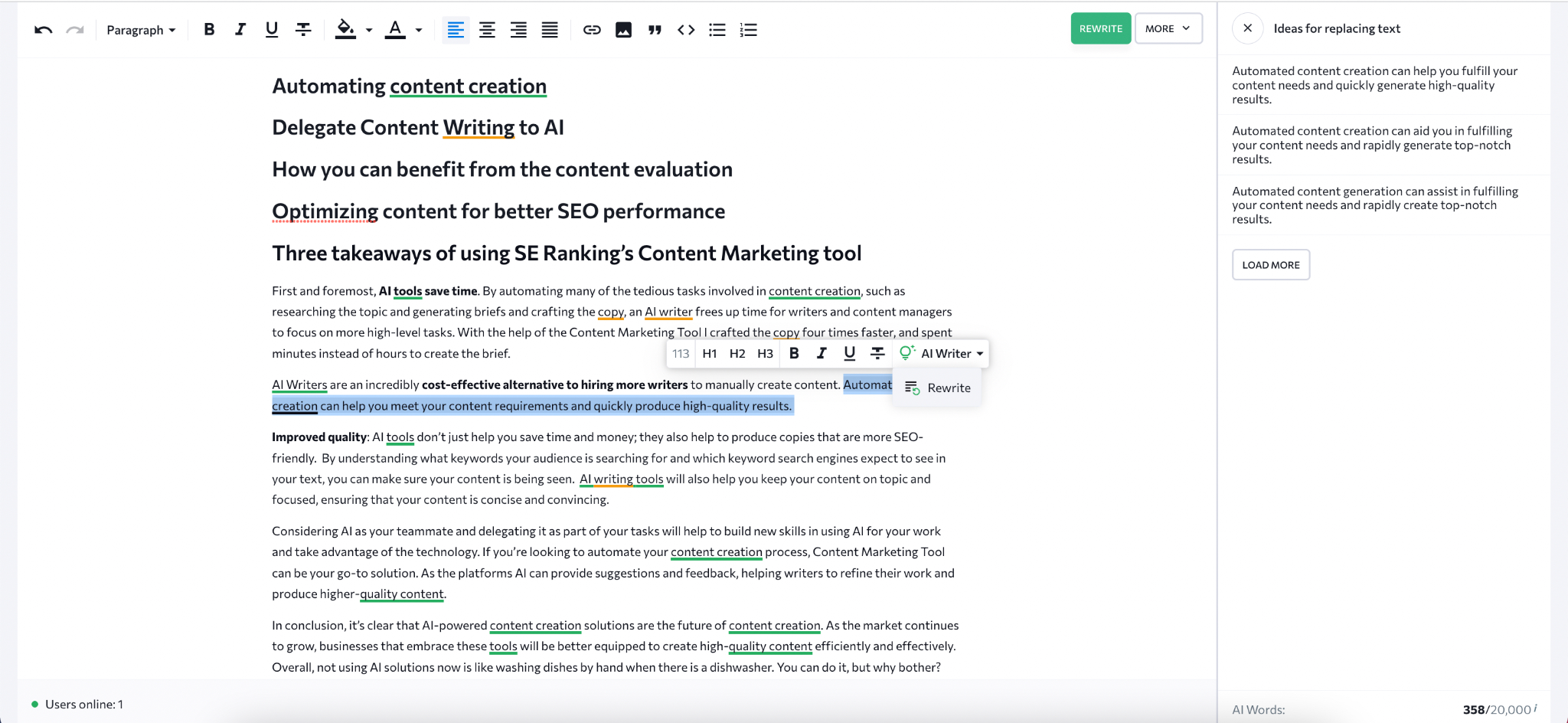

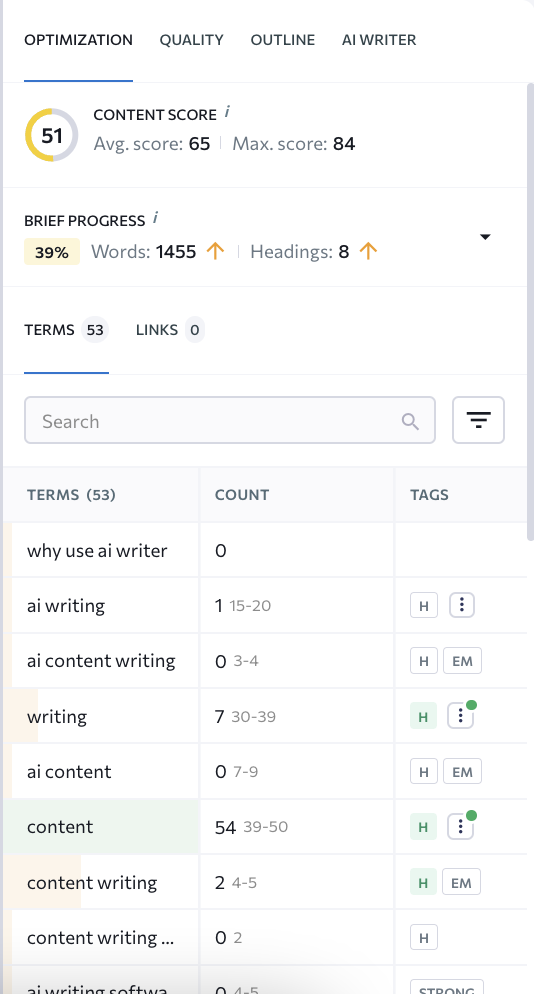

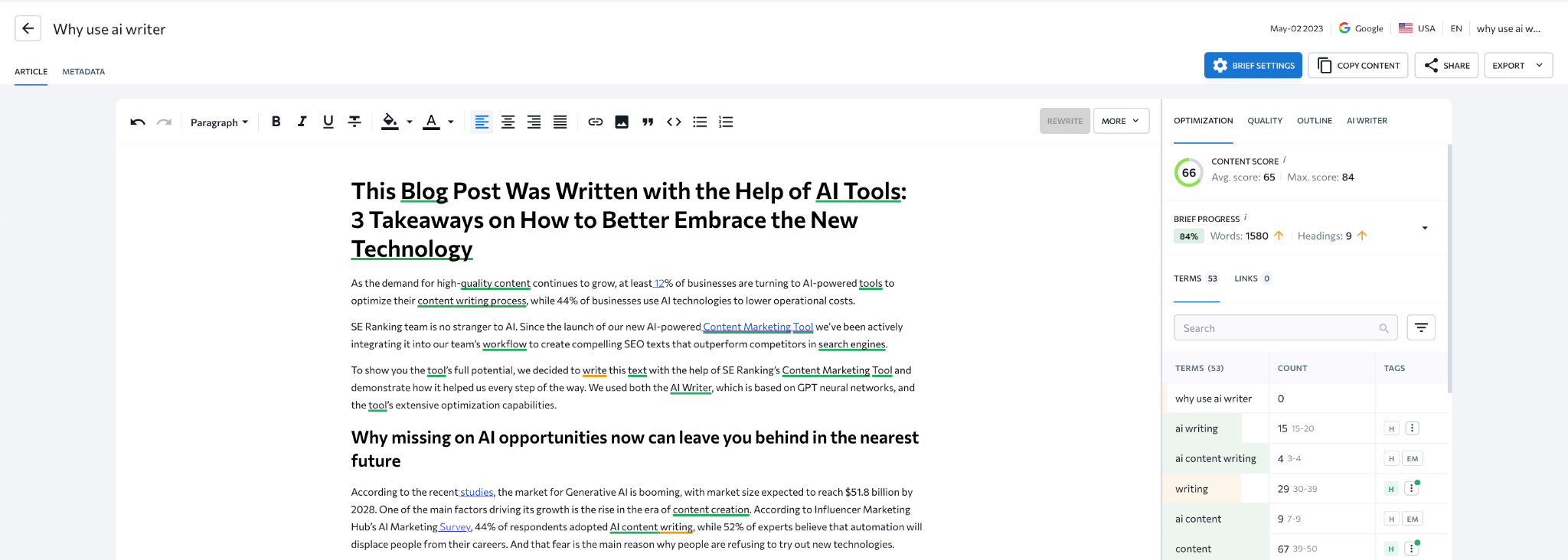

AI Content Score

SE Ranking’s Content Score offers valuable insights into how your content stacks up against the competition in terms of quality and impact.

It also provides hints on which adjustments can help your copy perform better. The tool also scans texts for common grammar and spelling errors to ensure that the content is professional and error-free.

Here are the results we got:

Image created by SERanking.com, May 2023

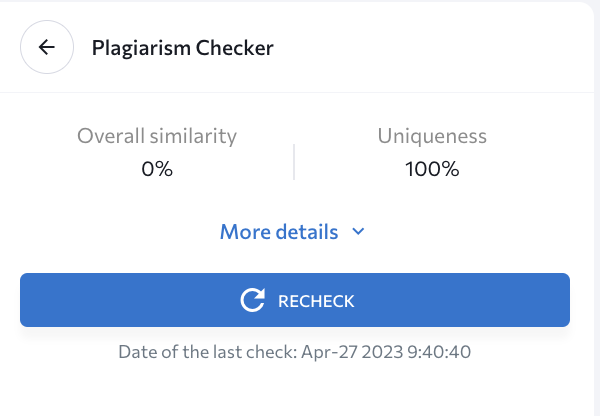

AI Plagiarism Checker

Another useful tool is the plagiarism checker.

Plagiarism is a serious issue that can damage the reputation of any website if not handled properly.

But with the help of this tool, you can check that every piece of content you’ve created is unique before you publish it.
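For intuition about what a uniqueness percentage can mean, here is a minimal sketch that compares overlapping three-word sequences (shingles) between a draft and known source texts. This is a generic technique, not a description of how SE Ranking’s plagiarism checker works; the function names and the shingle size are assumptions.

```python
from __future__ import annotations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping runs of `size` lowercase words."""
    words = text.lower().split()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def uniqueness(draft: str, sources: list[str], size: int = 3) -> float:
    """Share of the draft's shingles not found in any source (1.0 = fully unique)."""
    draft_shingles = shingles(draft, size)
    if not draft_shingles:
        return 1.0
    seen: set[tuple[str, ...]] = set()
    for source in sources:
        seen |= shingles(source, size)
    return len(draft_shingles - seen) / len(draft_shingles)


# Made-up texts for illustration only.
draft = "ai tools help teams write briefs faster"
source = "our ai tools help teams plan campaigns"
score = uniqueness(draft, [source])
print(f"{score:.0%} unique")
```

A real checker compares against a far larger index of published pages, so a score from a sketch like this only reflects the sources you feed it.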

Image created by SERanking.com, May 2023

Thankfully, that’s our overall uniqueness score. 100%! What a relief!

Step 5: Don’t Worry About Job Replacement

As you can see, AI does not relieve content creators of responsibility for the work they produce.

Your business and teams are still in charge of all the produced pieces, including text quality, trustworthiness, data, advice, and so on.

Whenever you use an AI writer, it’s important to fact-check and verify any information that the system provides you with. AI cannot determine which information is true and can generate misleading and inaccurate content.

3. Optimize Content For Better Search Performance

Estimated Time Savings: 30 minutes vs never getting a click from Google

You might have noticed in the screenshot above that the first draft of this text received a Content Score of 51.

Naturally, the score needs to be higher if we want our content piece to be visible in the highly competitive SERP environment.

Step 1: Follow The Automatically Generated Recommendations

Follow the tool’s recommendations to improve the score, which, in this case, include:

- Increasing the word count.

- Adding some images.

- Integrating suggested terms into the copy.

As a result, we managed to increase this text’s Content Score to 66.
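If you want to track the same three recommendations outside the tool, a simple checklist script can flag what a draft still lacks. The sketch below is an assumption-laden illustration: `check_draft` and the `brief` dictionary keys are hypothetical, and it does not compute SE Ranking’s Content Score.

```python
from __future__ import annotations

import re


def check_draft(text: str, image_count: int, brief: dict) -> list[str]:
    """Return the recommendations from the brief that the draft has not met yet."""
    issues = []
    words = len(re.findall(r"\b\w+\b", text))
    if words < brief["min_words"]:
        issues.append(f"add {brief['min_words'] - words} more words")
    if image_count < brief["min_images"]:
        issues.append(f"add {brief['min_images'] - image_count} more image(s)")
    lowered = text.lower()
    missing = [term for term in brief["terms"] if term.lower() not in lowered]
    if missing:
        issues.append("work in terms: " + ", ".join(missing))
    return issues


# Made-up brief and draft, kept tiny so the result is easy to follow.
brief = {
    "min_words": 8,
    "min_images": 2,
    "terms": ["content brief", "keyword research"],
}
draft = "A content brief keeps writers focused."
issues = check_draft(draft, image_count=1, brief=brief)
for issue in issues:
    print(issue)
```

An empty list means the draft meets every recommendation in the brief, which is a reasonable point to re-run the score in the tool itself.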

Image created by SERanking.com, May 2023

3 Benefits Of Using SE Ranking’s Content Marketing Tool

First and foremost, AI content tools save time.

By automating many of the tedious tasks involved in content creation, such as researching the topic, generating briefs, and crafting the copy, an AI writer frees up time for writers and content managers to focus on higher-level tasks.

With the help of the Content Marketing Tool, we crafted the copy four times faster and spent minutes instead of hours creating the brief.

AI Writers are an incredibly cost-effective alternative to hiring more content writers to manually create content. Automated content creation can help you fulfill your content needs and quickly generate high-quality results.

Improved quality: AI tools don’t just help you save time and money; they also help to produce copy that is more SEO-friendly. By understanding which keywords your audience is searching for, as well as the keywords that search engines expect to see in your text, you can make sure your content is being seen. AI writing tools also help you keep your content on topic and focused, ensuring that your content is concise and convincing.

Considering AI as your teammate and delegating some of your tasks to it will help you build new skills in using AI for your work and leverage the technology’s potential. If you’re looking to automate your content creation process, our Content Marketing Tool can be your go-to solution. The platform’s AI can also provide suggestions and feedback so that writers can refine their work and produce higher-quality content.

The fact of the matter is this: AI-powered content creation solutions are the future of content creation. As the market continues to grow, businesses that embrace AI tools will be better equipped to create high-quality content efficiently and effectively. At this point, not using AI solutions is like washing dishes by hand when there is a dishwasher. You can do it, but why bother?

Image Credits

Featured Image: Image by SE Ranking. Used with permission.
