
Chatbots And AI Search Engines Converge: Key Strategies For SEO

A lot is happening in the world of search right now, and for many, keeping pace with these changes can be overwhelming.

The rise of chatbots and AI assistants – like ChatGPT and its new model GPT-4o, along with Google’s rollout of AI Overviews and Search Generative Experience (SGE) – is blurring the lines between chatbots and search engines.

New AI-first entrants, such as Perplexity and You.com, also fragment the search space.

While this causes some confusion and requires marketers to pivot and optimize for multiple types of “engines,” it also gives SEO pros a new array of opportunities to optimize for both traditional and AI-driven search engines in a multisearch universe.

This evolution raises a broader question – perhaps for another day – about redefining what we call SEO to encompass terms like Artificial Intelligence Optimization (AIO) and Generative Engine Optimization (GEO).

Currently, every naming convention seems subject to change, which is something to consider as I write this article.

Either way, this evolution opens up tremendous opportunities for disruption in the overall search landscape.

What Is A Chatbot Or AI Assistant?

At the most basic level, chatbots use natural language processing (NLP) and large language models (LLMs) that are trained to extract data from online information, sources, and specific datasets. They then classify and fine-tune text and visual outputs based on a user’s prompt or question.

Chatbots are often used within specific applications or platforms, such as customer service websites, messaging apps, or ecommerce sites. They are designed to address specific queries or tasks within these defined contexts.

Right now, we see many crossovers between LLM-based chatbots and search engines. Rapid developments in these areas can cause confusion.

In this article, we’ll focus on the development of AI models in chatbots and their relation to search, using the terms chatbot and AI assistant more or less interchangeably.

The Evolution Of Chatbots And AI Models

Since ChatGPT emerged in November 2022, we’ve seen a significant boom in chatbots and AI assistants. Now, generative AI allows users to interact directly with AI and engage in human-like conversations to ask questions and complete various tasks.

For example, these AI tools can assist with SEO tasks, create content, compose emails, write essays, and even handle coding and programming tasks.

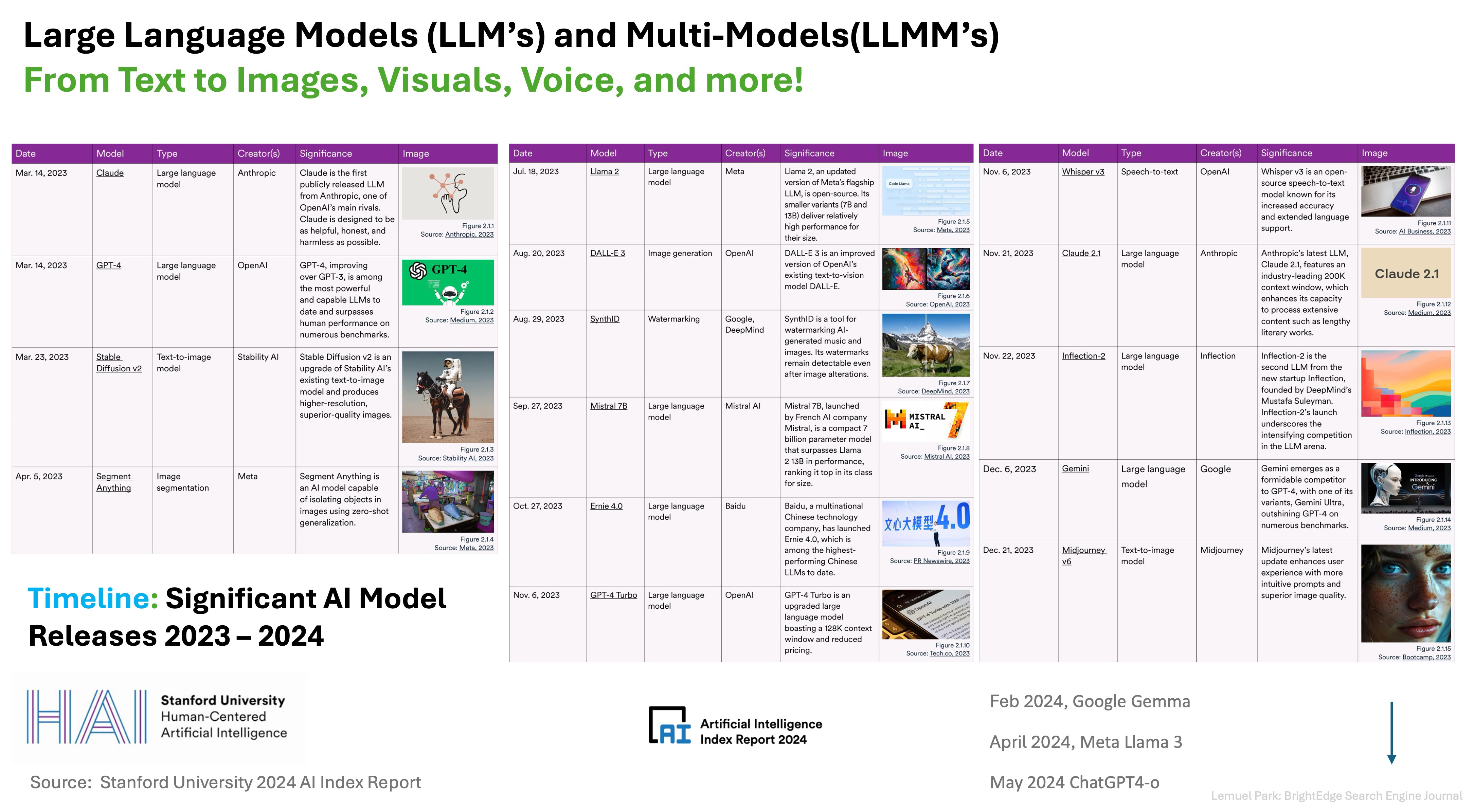

As they evolve, chatbots are becoming multimodal, built on multimodal large language models (MMLLMs) that extend their capabilities beyond text to include images, audio, and more.

Image from 2024 AI Index Report from Stanford University, May 2024

For those interested in digging deeper into these models, the 2024 AI Index Report from Stanford University is a great resource for SEJ readers.

While many chatbots and AI models serve similar purposes, they also have distinct applications and use cases, such as content creation, image generation, and voice recognition.

Here are a few examples with some interesting differentiators and points:

- ChatGPT: Conversational AI for research, ideation, text, image content, and more.

- Google Gemini and Gemma: Uses Google’s LLM to connect and find sources within Google.

- Microsoft Bing: Uses ChatGPT for conversational web search in Bing.

- Anthropic Claude: Various AI models for content generation, images, and coding.

- Stability AI: Suite of models and AI assistants for text, image, audio, and coding.

- Meta Llama 3: Utilizes Facebook’s social graph, its own Llama 3 model, and real-time data from Google.

- Microsoft’s Copilot: AI assistant for business creativity and productivity apps.

- Amazon LLM and CodeWhisperer: Enhances customer and employer experiences.

- Perplexity AI: Provides quick answers, sources of information, and citations.

Perplexity AI (which I will touch on later in this article) acts more like a search engine than many other chatbots and AI assistants.

Beyond their primary use cases, many companies are making their models available to a wider audience and broader ecosystems, allowing users to customize their own AI assistants.

For example, Amazon’s Bedrock enables AWS customers to use Anthropic and other LLMs, including Amazon’s own model, to create custom AI agents. Companies like Lonely Planet, Coda, and United Airlines are already using it.

On May 13, OpenAI launched its new flagship model, GPT-4o. The model combines several AI technologies, bringing together what OpenAI calls “text, vision, and audio.” OpenAI is also opening access through its application programming interface (API), allowing developers to build their own applications.

All of this convergence has a lot of people wondering.

What’s The Difference Between Chatbots And Search Engines?

The first thing to note is that both chatbots and search engines are designed to provide information.

Search engines and some chatbot models share many similarities, which means their definitions can blur, and the relationships between them converge and collide.

However, for now, and although this is changing, there is still a distinct difference between the two:

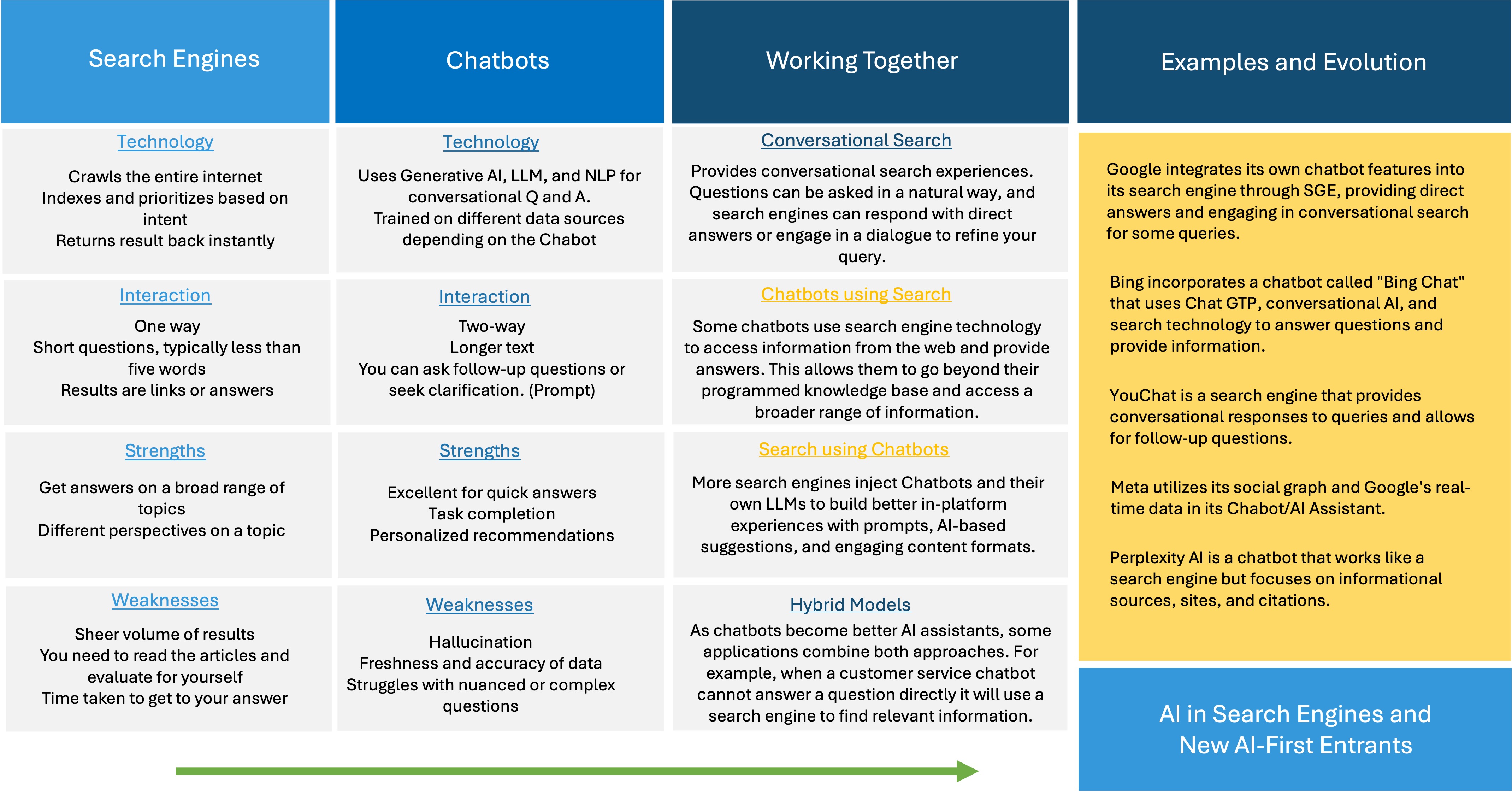

Search Engines

- Search engines are better for exploring a wide range of topics.

- They provide diverse perspectives from multiple sources.

Chatbots

- Chatbots are better for quick answers, task completion, and personalized interactions.

- They make the average searcher more efficient and much more effective at finding information.

Image from author, May 2024

As more overlays and overlaps occur, the definitions of what constitutes a chatbot, an AI assistant, and a search engine may need to be redefined.

How Chatbots And Search Engines Work Together

Conversational search is a key area where search engines increasingly integrate chatbot features to provide a more interactive search experience.

You can ask questions in natural language, and the search engine may respond with direct answers or engage in a dialogue to refine your query.

Chatbots and AI assistants often utilize search engine technology to access information from the web, enhancing their ability to provide accurate and comprehensive answers.

This integration allows chatbots to go beyond their programmed knowledge base and tap into a broader range of information.

Here are a few examples:

- Google: Integrates its own chatbot features into its search engine through SGE, providing direct answers and engaging in conversational search for some queries.

- Bing: Incorporates a chatbot called “Bing Chat” that uses ChatGPT, conversational AI, and search technology to answer questions and provide information.

- YouChat: A search engine that provides conversational responses to queries and allows for follow-up questions.

- Meta: Utilizes its social graph and Google’s real-time data in its chatbot/AI assistant.

- Perplexity AI: A chatbot that functions like a search engine, focusing on informational sources, sites, and citations.

These examples illustrate how the lines between chatbots and search engines are blurring. Thousands more instances show this convergence, highlighting the evolving landscape of digital search and AI.

How “Traditional” Search Engines Are Evolving As AI-First Entrants Arrive

The rise of generative AI and chatbots has caused significant upheaval in the traditional search space.

Traditional search engines are evolving into “answer engines.” This transformation is driven by the need to provide users with direct, conversational responses rather than just a list of links.

The line between chatbot engines and AI-led search engines is becoming increasingly blurred.

While AI in search is not a new concept, the introduction of generative AI and chatbots has necessitated a seismic shift in how search engines operate. For the first time, users can interact with AI in a conversational way, prompting giants like Google and Microsoft to adapt.

On May 14 at Google I/O, Google announced the rollout of AI Overviews as it integrates AI features into its search engine. It is also making upgrades to SGE.

The ultimate goal is to enhance its ability to provide direct answers and engage in conversational search. This evolution signifies Google’s commitment to maintaining its leadership in the search space by leveraging AI to meet user expectations.

In a recent Wired article titled “It’s the End of Google Search As We Know It,” Google Head of Search Liz Reid was clear that:

“AI Overviews like this won’t show up for every search result, even if the feature is now becoming more prevalent.”

As my co-founder, Jim Yu, states in the same article:

“The paradigm of search for the last 20 years has been that the search engine pulls a lot of information and gives you the links. Now the search engine does all the searches for you and summarizes the results and gives you a formative opinion.”

Beyond Google, we are seeing a rise in new, AI-driven search engines like Perplexity, You.com, and Brave, which act more like traditional search engines by providing informational sources, sites, and citations.

These platforms leverage generative AI to deliver comprehensive answers and facilitate follow-up questions, challenging the dominance of established players.

Meta is also entering the fray by utilizing its social graph and real-time data from Google in its AI assistant, further contributing to the convergence of search and AI technologies.

At the same time, according to Digiday, TikTok is starting to reward what it calls “search value.”

Going forward, it’s important to remember that people have diverse needs, and we turn to different platforms for specific purposes.

Just as we go to Amazon for products, Yelp for restaurant suggestions, and YouTube for videos, the rise of AI will only amplify this trend. Each search engine will find its niche, leveraging its strengths to cater to particular user requirements.

ChatGPT is an intriguing case that stands out not for its research capabilities but for its prowess in content creation. While it excels in crafting high-quality content, its research functionalities fall short.

Effective research relies on real-time data, which platforms like ChatGPT currently lack. As we move forward, we expect to see search engines specialize even further, each excelling in specific areas based on its unique strengths and features.

What Does It All Mean For Marketers?

This fast-moving landscape and the convergence of search and AI present both challenges and opportunities for marketers.

Optimizing for one engine is no longer sufficient; it’s essential to target multiple platforms – each with unique users, demographics, and intents.

Here’s how marketers can adapt and thrive in this dynamic environment.

Optimizing For Different Platforms

Google

- Strength: Dominates the traditional search space with a vast user base and comprehensive data sources.

- Tip: Focus on core technical SEO, including schema markup and mobile optimization. Google’s Search Generative Experience means direct answers are becoming more prevalent, so structured data and high-quality content are vital.

Perplexity AI

- Strength: Provides detailed citations and emphasizes source material, driving referral traffic back to original sites.

- Tip: Ensure your content is authoritative and well-cited. Being a reliable source will increase the likelihood of your site being referenced, which can drive traffic and enhance brand trust.

ChatGPT

- Strength: Excels in conversational AI, making it suitable for quick answers and personalized interactions.

- Tip: Create engaging, concise content that answers common questions directly. Utilize conversational language in your SEO strategy to match the style of ChatGPT interactions.

Key Strategies For Marketers

From optimizing technical SEO to harnessing the power of semantic understanding and creativity, these strategies provide a roadmap for success in the era of AI-driven search.

Core Technical SEO

Basics like site speed, mobile-friendliness, and proper schema markup remain crucial. Ensuring your site is technically sound helps all search engines index and rank your content effectively.
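As a rough illustration of what schema markup looks like in practice, here is a minimal Python sketch that builds a schema.org Article object as JSON-LD and wraps it in a script tag. The function names and all sample values (headline, author, date, URL) are hypothetical placeholders, and which properties a given search engine actually uses is not something this sketch can confirm.

```python
import json


def article_jsonld(headline, author, date_published, url):
    """Return a schema.org Article description as a JSON-LD string.

    All argument values are supplied by the caller; nothing here is
    specific to any one search engine.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,  # ISO 8601 date, e.g. 2024-05-20
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)


def as_script_tag(jsonld):
    """Wrap a JSON-LD string in the script tag used to embed it in HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical sample values, for illustration only.
    markup = article_jsonld(
        "Example headline",
        "Jane Doe",
        "2024-05-20",
        "https://example.com/post",
    )
    print(as_script_tag(markup))
```

The output can be pasted into a page template and checked with a structured data testing tool before it goes live.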

Semantic Understanding

Search engines and conversational AI are increasingly focused on semantic search. Optimize for natural language queries and long-tail keywords to match user intent more accurately.

Content And Creativity

High-quality, creative content is more important than ever. Unique, valuable content that engages users will stand out in both traditional and AI-driven search results.

Expanded Role Of SEO

SEO now encompasses content creation, branding, public relations, and AIO. Marketers who can adapt to these roles will be more successful in the evolving search landscape.

Be The Source That Gets Cited

Ensure your content is authoritative and well-researched. Being a primary source will increase the likelihood of citations that drive traffic and enhance credibility.

Get Predictive

Anticipate follow-up questions and provide comprehensive answers. This will not only improve user experience but also increase the chances of your content being highlighted in AI-driven search results.
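One way to make anticipated follow-up questions machine-readable is to publish them as schema.org FAQPage markup. The sketch below is an assumption about how a team might do that, not a guarantee that any engine will surface the answers; the function name and the sample question and answer text are hypothetical.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Placeholder follow-up questions a reader might ask next.
    follow_ups = [
        ("What is a chatbot?", "Placeholder answer text."),
        ("How is it different from a search engine?", "Placeholder answer text."),
    ]
    print(faq_jsonld(follow_ups))
```

Keeping the question list in one place also makes it easier to review whether the page really answers what a user is likely to ask next.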

Brand Authority

Focus on areas where your brand excels. AI search engines prioritize authoritative sources, so build and maintain your reputation in key areas to stay competitive.

The Best Content That Provides The Best Experience Wins

Ultimately, the quality of your content will determine your success. Invest in creating the best possible user experience, from engaging visuals to informative text.

Key Takeaways

Today, AI in search serves a dual purpose: It can power a standalone assistant-based application or be integrated into search engines for AI-led conversational experiences.

This fusion presents marketers with a unique opportunity to elevate their brands by creating accurate and authoritative content that positions them as trusted sources in their respective fields.

Ranking on the first page and being recognized as the go-to source cited by AI engines is no less important than it was 10 or 20 years ago, but it is far more difficult.

The good news is that whether it’s Google’s AI engine or newcomers like Perplexity, brands that establish themselves as authorities in their niche stand to benefit immensely.

Marketers need to embrace creativity and collaboration across omnichannel teams. Ensure that your website is visible and accessible to all types of engines, whether traditional or AI-driven.
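A quick way to sanity-check that accessibility is to test your robots.txt rules against the crawlers you care about. The sketch below uses Python’s standard urllib.robotparser on an in-memory sample; the crawler list and the sample rules are assumptions for illustration, so substitute the user-agent tokens each vendor currently documents and your own file.

```python
from urllib.robotparser import RobotFileParser

# Illustrative crawler tokens; confirm current names in each vendor's docs.
CRAWLERS = ["Googlebot", "Bingbot", "GPTBot", "PerplexityBot"]


def crawler_access(robots_txt, url, agents=CRAWLERS):
    """Return {agent: allowed} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks one AI crawler and allows the rest.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    report = crawler_access(SAMPLE, "https://example.com/blog/post")
    for agent, allowed in report.items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

A report like this makes an accidental block visible early, before a missing citation in an AI-driven result is the first sign of it.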

I’d like to leave you with a few questions to consider as you find your way forward in this complex environment. Pardon the pun, but no one has all the right answers yet.

- Are chatbots morphing into search engines?

- How do social platforms differentiate as younger generations look to them as search engines?

- How would you define a search engine?

- Who will win the race for user loyalty – traditional search engines infused with AI or new entrants built on generative AI from the beginning?

- How would you redefine your role as an SEO – are you AI first?

While you consider that, stay proactive and adaptable and position yourself and your company to leverage the diversity and complexity of the search ecosystem to your advantage. In a world of ChatGPT, chatbots, and AI in search, you’re not optimizing for one channel, such as Google or Bing.

Successful optimization in this multifaceted landscape calls for a holistic approach. It’s not about keyword rankings or click-through rates; it’s about unraveling the intricacies of each platform and adjusting your strategies accordingly.

This means optimizing your content for conversational search, tapping into the capabilities of AI to tailor user experiences, and seamlessly integrating across different channels and devices.

Leverage the strengths of each platform to amplify your message by use case and engage with your audience on a deeper level, and you’ll ultimately drive more meaningful results for your business.


Featured Image: Memory Stockphoto/Shutterstock