Here’s the Only SEO Guarantee That’s Not a Scam

SEO guarantees are one of the biggest tools agency sales teams use to turn prospects into clients. But they can often be scams or promise results that are achieved unethically.

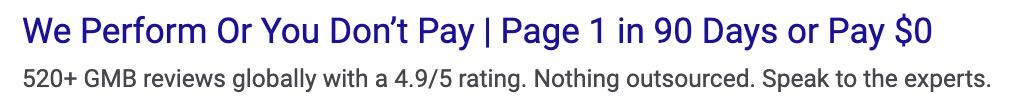

For instance, here’s a popular SEO guarantee:

And another possibly too-good-to-be-true guarantee:

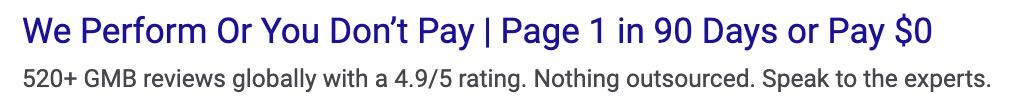

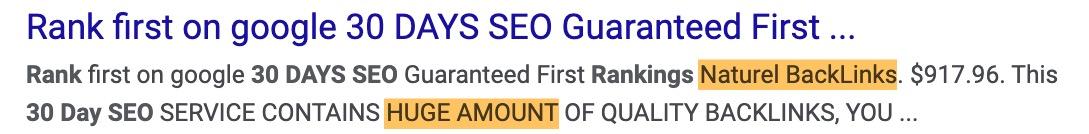

Not to mention this oh-so-safe and completely risk-free guarantee offering a “huge amount” of “naturel” backlinks 🙄

Sarcasm aside, I get it.

As a business owner, guarantees like this offer peace of mind that your limited marketing budget won’t be wasted. So, here’s everything you need to know to avoid the risky types of SEO guarantees that are nothing more than thinly veiled scams.

I’ll also share the only type of SEO performance guarantee that’s not a scam, so keep your eyes peeled.

In fact, it’s the only lawyer-approved SEO guarantee I’ve ever come across.

Although there is at least one legitimate option for guaranteed SEO services (which I share below), you really should avoid most of them like the plague. Here are four reasons why.

1. Rankings cannot be guaranteed, under any circumstances

If this article had a catchphrase it would be “what cannot be controlled, cannot be guaranteed.”

With paid ads, you can spend more to bump your ad to the top of search results instantly. But SEO rankings are earned, usually over a long period of time.

They are 100% at the discretion of the search engine and no SEO provider can predictably manipulate these. Nor can you throw more money at the problem. SEO simply doesn’t work this way.

Google warns business owners about this:

Anyone who guarantees rankings is, at best, over-confident in their ability or, at worst, straight-up lying to you.

2. Fast results often mean dangerous or unethical tactics are used

There are no shortcuts to earning SEO results legitimately. SEO is, and has always been, a long-term growth channel that compounds over time.

The most common way people earn short-term results is by using black hat SEO tactics.

These tactics are aimed at manipulating search algorithms to rank content higher. They also often violate search engine policies and can lead to penalties or your site being blacklisted altogether.

In my opinion, business owners who try to minimize their financial risk by using a low-cost, guaranteed SEO service are exposing themselves to far greater risks. Fear of losing money in the short term is a short-sighted way to think about SEO.

For instance, what’s worse?

Scenario A (no SEO guarantee)

You invest in an SEO service without a guarantee. The campaign runs at a loss initially since you don’t start seeing results until month 6. But by month 12, you’ve made your money back and also received a decent return.

Scenario B (with a guarantee)

You invest in an SEO service with a guarantee that you’ll rank on page 1 in 30 days, or you get your money back. Within 30 days you rank on the first page of Google for some keywords (i.e., the guarantee is met). But, a few months later your site is penalized, and you lose all your SEO performance.

Obviously, the answer is scenario B.

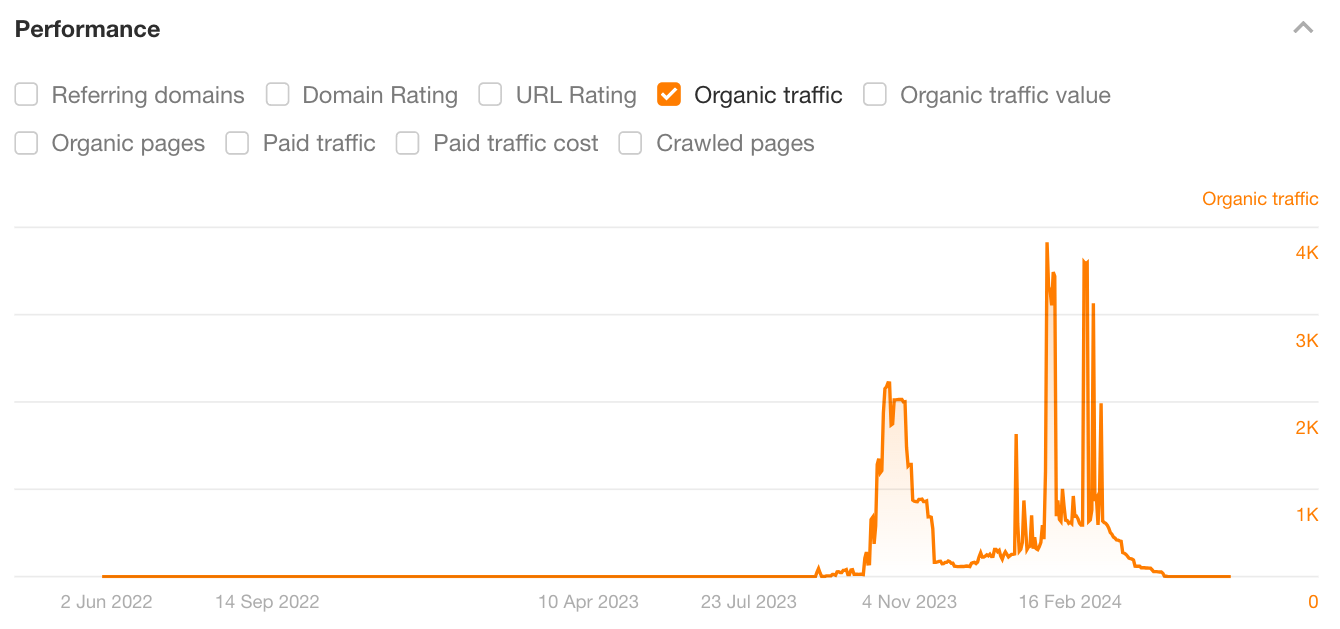

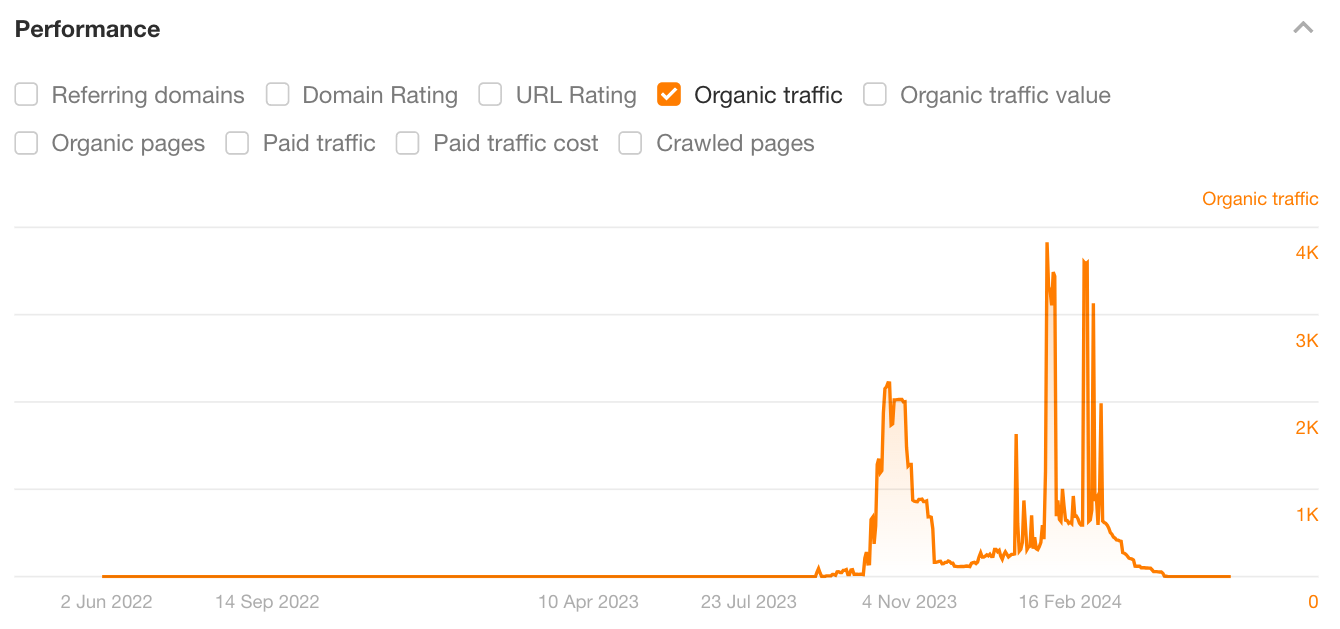

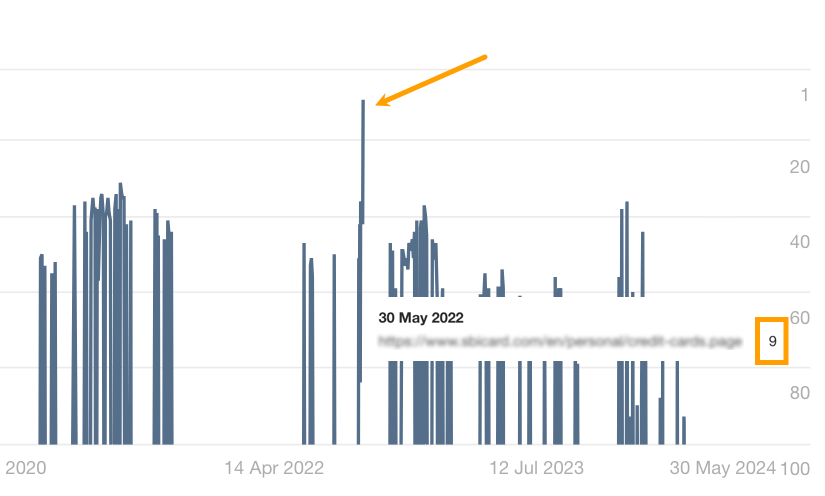

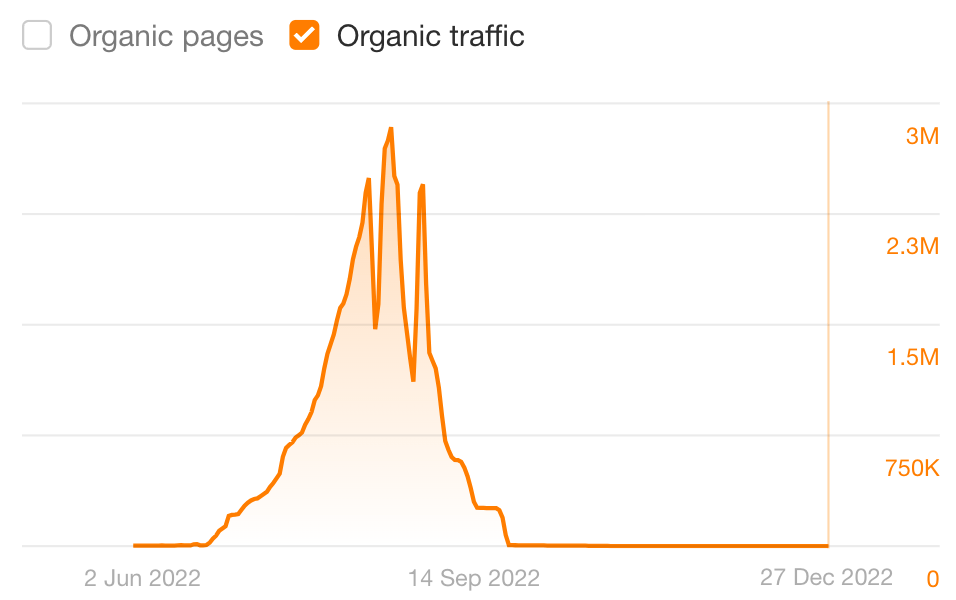

For example, here’s a site that experienced multiple traffic losses before it was eventually penalized in early 2024. It will be very difficult for this site to overcome the penalty and grow.

Most business owners don’t think this far ahead when shopping for SEO, so they often pass up the agencies that deliver results similar to scenario A.

In scenario B, since the guarantee has technically been met, there’s no chance you’ll be getting your money back or growing your business. It’s also likely that you’ll need to invest in rebuilding your website on a new domain that has not been penalized by search engines.

So when you hear about guaranteed SEO results being scams, this is why.

Learning how a service provider delivers their service can save you from far bigger risks and greater financial losses in the long run.

3. Guaranteed SEO results probably won’t deliver a return on investment

SEO, just like any other marketing channel, needs to provide a return on investment (ROI) to be effective. You put money in, and you’re supposed to get more money out when it’s done right.

While guaranteed SEO results sound great in theory, when you dig a little deeper it’s often the case that these results are based on:

- Keywords unrelated to your business

- Keywords with very little to no search volume

- Keywords with informational search intent (that won’t deliver leads)

In short, the types of keyword rankings that are guaranteed usually won’t lead to more conversions, can diminish your quality of leads, and won’t deliver an ROI.
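If a provider hands you a list of keywords they’re willing to guarantee, you can screen it against the three red flags above. Here’s a minimal sketch of that check. Everything in it is hypothetical: the `GuaranteedKeyword` fields, the `MIN_MONTHLY_SEARCHES` cut-off, and the sample keywords for an imaginary plumbing business. The search volume and intent labels would come from whichever keyword tool you use, and relevance is your own call.

```python
from dataclasses import dataclass

# Hypothetical cut-off; pick a number that makes sense for your market.
MIN_MONTHLY_SEARCHES = 50


@dataclass(frozen=True)
class GuaranteedKeyword:
    phrase: str
    monthly_searches: int  # estimate from whichever keyword tool you use
    intent: str            # "transactional", "commercial", or "informational"
    relevant: bool         # your own call: does it describe something you sell?


def red_flags(keyword: GuaranteedKeyword) -> list[str]:
    """Return the reasons a guaranteed keyword is unlikely to deliver an ROI."""
    flags = []
    if not keyword.relevant:
        flags.append("unrelated to the business")
    if keyword.monthly_searches < MIN_MONTHLY_SEARCHES:
        flags.append("little to no search volume")
    if keyword.intent == "informational":
        flags.append("informational intent")
    return flags


# Made-up keywords for an imaginary plumbing business.
guaranteed = [
    GuaranteedKeyword("emergency plumber austin", 900, "transactional", True),
    GuaranteedKeyword("best plumber on maple street austin", 0, "transactional", True),
    GuaranteedKeyword("how does a toilet flush", 4000, "informational", True),
    GuaranteedKeyword("cheap flights to austin", 12000, "transactional", False),
]

for kw in guaranteed:
    flags = red_flags(kw)
    print(f"{kw.phrase}: {', '.join(flags) if flags else 'no red flags'}")
```

If most of the guaranteed list comes back flagged, the guarantee is probably built on rankings that won’t move your business.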

The keywords that do grow your business are often more competitive and take longer to rank for. There’s simply no way to shortcut the process, so performance for these keywords likely won’t be guaranteed.

Another consideration is that most SEO guarantees only focus on vanity metrics. Vanity metrics sound good on paper, but they don’t grow your business or allow you to make better decisions.

Rankings won’t feed your family. Neither will traffic.

So, if you’re considering a guaranteed SEO service provider because you think it’s the best way to see a return on investment, think again, especially if they only guarantee vanity metrics.

4. Initial results do not guarantee continued success

SEO is not a cheap service.

Most business owners don’t even consider the biggest risk (until it’s too late): the financial implications of signing a long-term contract based on a short-term guarantee.

I’ve seen a lot go wrong in this scenario.

For instance, let’s say you sign a 12-month contract with a guarantee like “first page rankings in 30 days or you get your money back.”

If your service provider ranks your website for a single keyword, any keyword, on the first page of Google they’ve technically met the guarantee. And it doesn’t even matter how long your site holds this position. For instance, this page ranked on the first page of Google results for less than a day:

It also doesn’t matter what happens after the 31st day. The guarantee doesn’t cover you for any of the following after it’s been met:

- Performance plateau

- Performance losses

- Site penalties

You might even pay for the remaining eleven months without the service provider completing further work on your website.

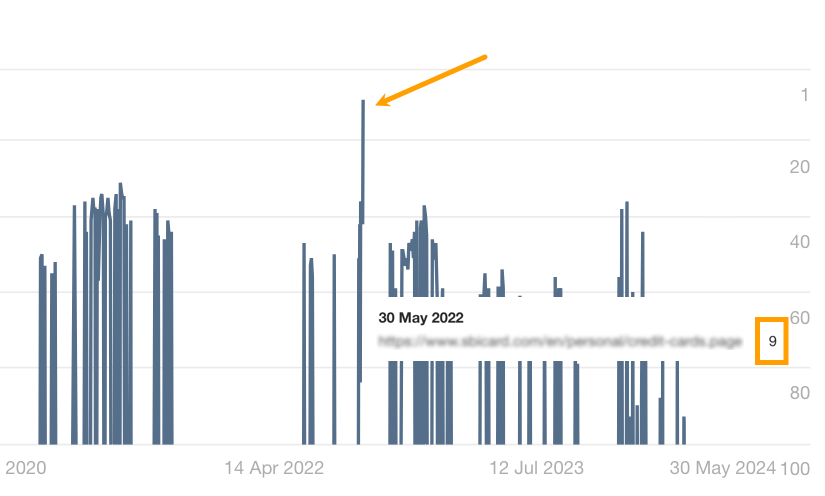

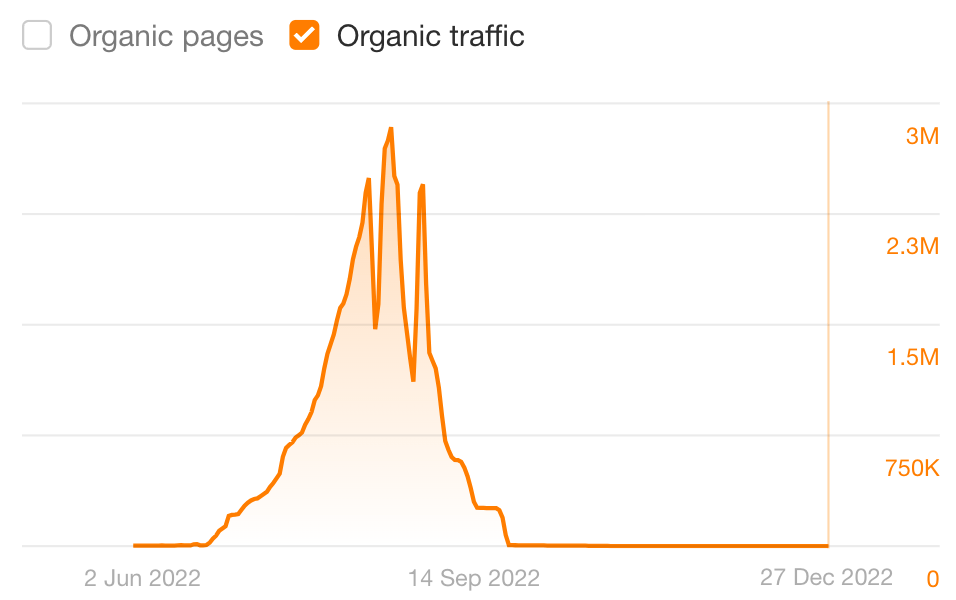

An extreme example of how things can go wrong is the website conch-house.com. This website scraped content from Amazon and published 6,000 posts a day. It was doing shady things to begin with. But, in the space of two months, it grew to over 6 million monthly users and just under $20,000 in revenue per day.

And during its third month in this peak performance period, it was penalized and blacklisted by Google.

Here’s what the traffic graph looked like:

Hypothetically, the results of this website would have met most SEO guarantees like:

- Rank #1 in 90 days or less

- First-page rankings in 30 days, guaranteed

- Explode your traffic in 60 days

It’s too bad it couldn’t sustain its performance in the long run due to the spammy tactics it used to grow.

TL;DR

Don’t sign a long-term contract on a short-term guarantee. There are too many loopholes and technicalities that don’t favor business owners.

If you choose an SEO provider that uses unethical tactics to grow your website quickly, you can also be exposed to far greater risks that completely sacrifice your future performance.

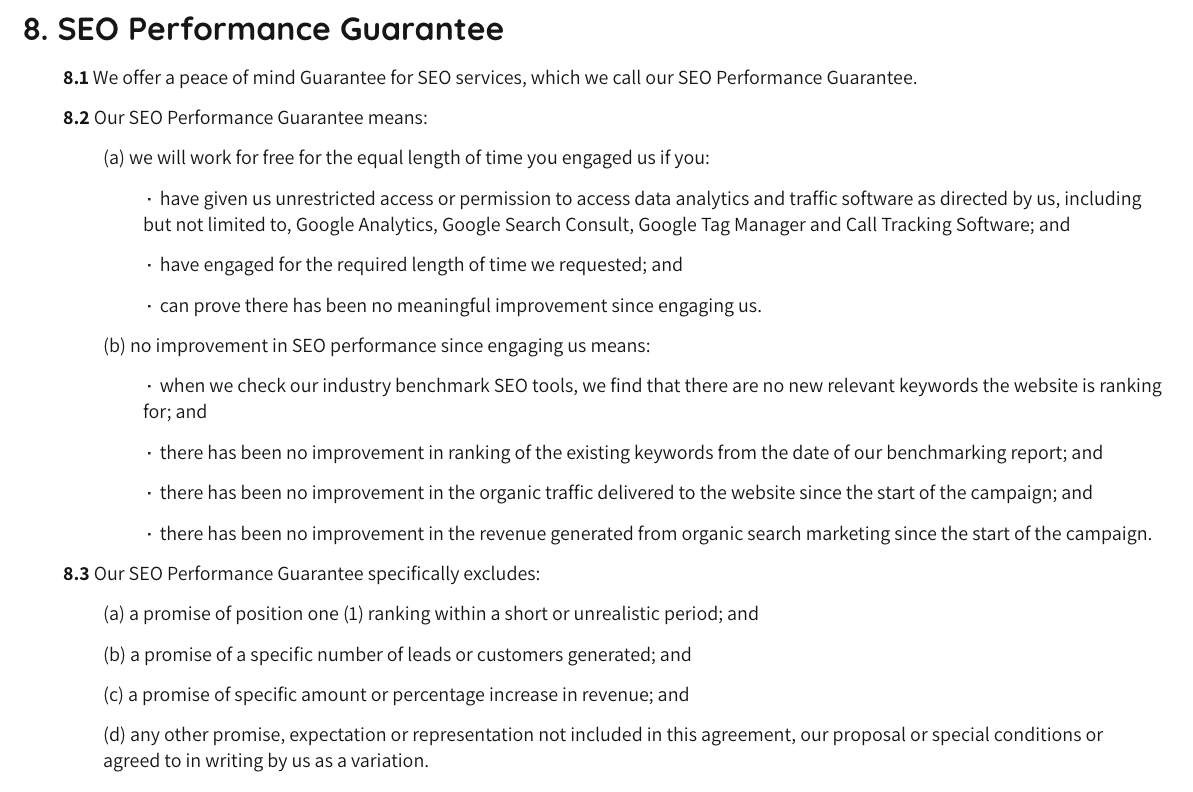

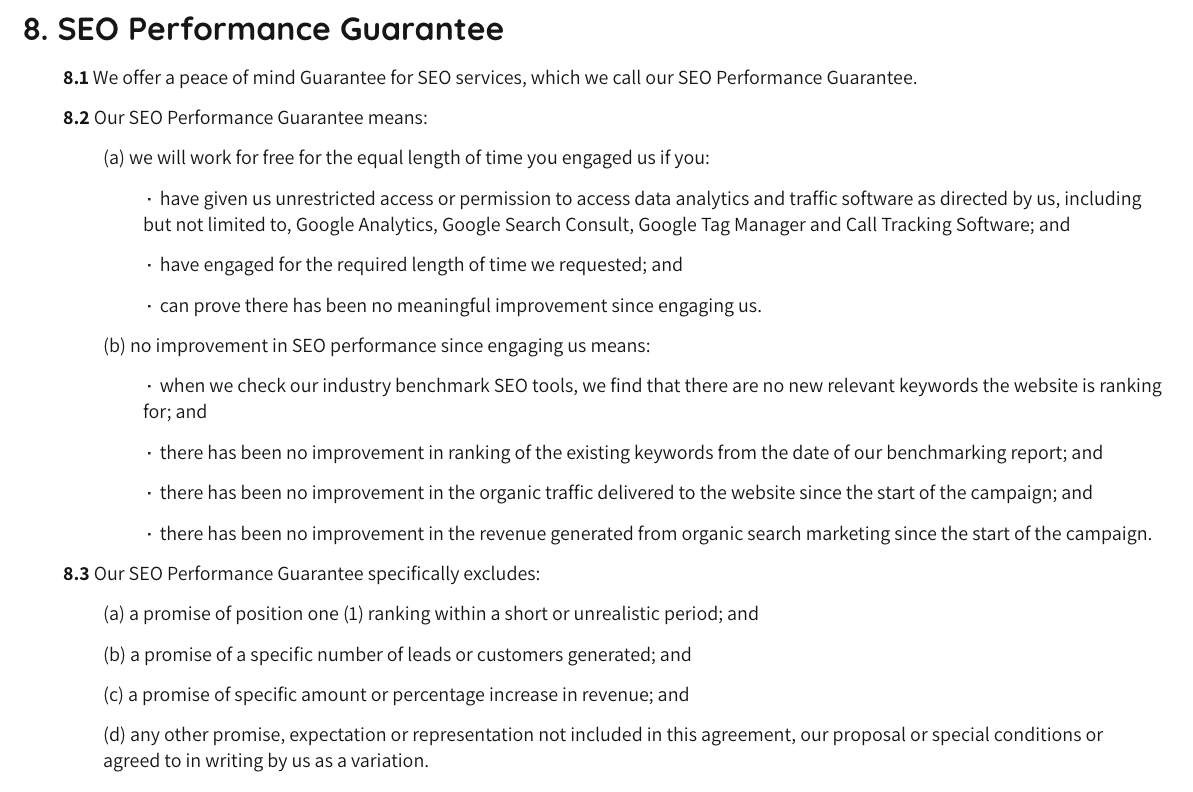

There’s only one type of SEO guarantee I’ve seen that’s legally sound and not a scam. I may be biased here because my lawyer drafted it for my consultancy.

As a service provider, I wanted to offer a “peace of mind guarantee” to my clients. But it’s difficult to do that ethically and with full transparency.

Here’s an example of what we settled on:

What does this type of guarantee offer that most others don’t?

- It holds the service provider accountable for delivering relevant results, or they work for free.

- It has long-term performance baked into it.

- It considers all metrics that indicate SEO performance, including organic revenue.

- It specifically excludes granular results based on vanity metrics.

- It clearly and transparently communicates what is and what isn’t covered.

- It isn’t based on loopholes or rankings for specific keywords with no business value.

- It has been written and approved by an SEO-savvy lawyer.

My favorite part is that it doesn’t assume that a service provider can control specific SEO results.

Rather, it implicitly acknowledges that rankings and organic traffic improvements will be byproducts of the services—as they should be.

Legitimate and trustworthy SEO service providers have the ability to take your business’ growth to the next level. Here’s what to look for when choosing a trustworthy SEO provider.

They focus on growing your business

A good SEO provider will align their strategies with your core business goals and measure SEO success according to the metrics that matter to you. These can include:

- Conversions

- Leads

- Sales

- Revenue

- Profit

- ROI

Unlike rankings and traffic, these are not vanity metrics.

They have a track record of delivering long-term results

A track record of success is often more important than a guarantee. An agency with many case studies of successful results or testimonials from happy clients is often more trustworthy than one offering a guarantee.

In particular, when evaluating an SEO provider’s results, look for:

- The number of success stories: More is obviously better!

- Long-term projects spanning multiple years: This is a great indicator of client happiness.

- Proof of ROI, revenue, or sales increases: Look for results that aren’t just vanity metrics.

- The average length of time until ROI was earned: Get a realistic sense of how long results take to achieve.

- Services they included: Get a feel for what’s included in their services and their methodology.
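To make “length of time until ROI was earned” concrete, here’s a rough sketch of how you could work out a break-even month from a case study’s numbers. The `break_even_month` helper and the monthly figures are invented for illustration (they loosely mirror scenario A, where results only start around month 6). It treats break-even as the first month where cumulative organic revenue catches up with cumulative SEO spend.

```python
from itertools import accumulate
from typing import Optional, Sequence


def break_even_month(
    monthly_spend: Sequence[float], monthly_revenue: Sequence[float]
) -> Optional[int]:
    """Return the first month (1-based) where cumulative revenue >= cumulative spend.

    Returns None if the campaign never breaks even in the period covered.
    """
    running_totals = zip(accumulate(monthly_spend), accumulate(monthly_revenue))
    for month, (spend_so_far, revenue_so_far) in enumerate(running_totals, start=1):
        if revenue_so_far >= spend_so_far:
            return month
    return None


# Hypothetical 12-month campaign: a flat monthly fee, no organic revenue
# for the first five months, then steady growth.
spend = [5000] * 12
revenue = [0, 0, 0, 0, 0, 4000, 8000, 12000, 15000, 18000, 20000, 22000]

print(break_even_month(spend, revenue))
```

With these made-up numbers, the campaign runs at a loss for most of the year and only pays for itself late on, which is the kind of timeline a realistic case study should be upfront about.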

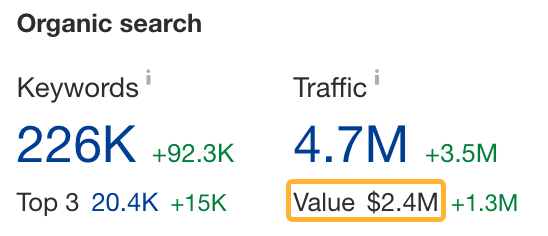

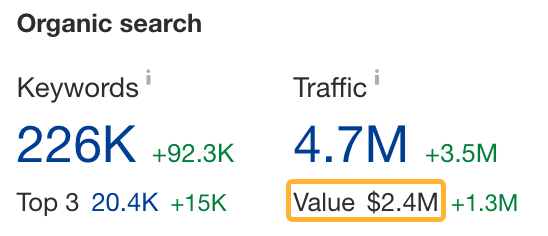

I also recommend going deeper than face value by verifying the performance claims in third-party tools. For example, you can use Ahrefs’ Site Explorer to verify:

- Organic traffic growth

- Traffic value growth

- Backlink growth

- Keyword ranking improvements

- Improved keyword breadth

You can also use more specific reports like Top Pages to check improvement over a specific period of time. For example, here’s a project where there’s a clear correlation between the addition of more content and organic traffic growth:

So if you’re reading a case study that mentions content creation, this is the graph you need to see to verify the performance claim is true.

They are transparent about what they do and how they’ll do it

It pays to educate yourself on black hat SEO tactics to avoid.

When chatting with SEO providers about how they approach their SEO services, pay attention to whether they mention things that sound spammy, like:

- Building a huge amount of links

- Having a network of blogs they can guest post on

- Creating a very large volume of posts in a short time frame

- Manipulating traffic signals to your content

Instead, choose an agency or service provider that uses a white hat approach. Check out our guide on white hat SEO to learn how to rank without breaking Google’s rules.

However, going beyond this, the best agencies will also offer clarity on monthly deliverables for things like the hours worked on a campaign, specific tasks completed, quality delivered, or their efficiency.

These things are all 100% within the SEO provider’s control and are also safer elements to include in a guarantee.

They track and report on performance regularly

Accountability is an essential part of any service. Many SEO agencies and service providers do not hold themselves accountable for delivering long-term results.

I recommend asking how often you’ll be receiving performance updates and reports. Some agencies have dashboards they can give you access to so you can check progress at any time. Others will hop on a call with you monthly or quarterly to discuss how things are going.

The most important thing here is that your SEO service provider reports on what you care about. Make sure they consider the actual growth impact they’re having on your business, not just the vanity metrics.

For example, my favorite metrics to report on in client meetings are ROI or organic revenue (if the tracking and attribution are properly set up).

| Month | SEO Spend | Organic Revenue | SEO ROI % | SEO ROI $ |
|---|---|---|---|---|
| Jan | $5,375 | $114,257 | 2126% | $21.26 |
| Feb | $5,375 | $344,430 | 6408% | $64.08 |
| Mar | $5,375 | $425,632 | 7919% | $79.19 |

These metrics show my clients how much money they’ve made for every dollar invested in my service.
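As a rough sketch, the figures in the table above can be reproduced by dividing organic revenue by SEO spend. The function names here are made up for illustration. The net ROI variant, which subtracts the spend before dividing, is included only for comparison; it always comes out exactly one dollar lower per dollar invested.

```python
# Figures copied from the table above: (month, SEO spend, organic revenue).
rows = [
    ("Jan", 5375, 114257),
    ("Feb", 5375, 344430),
    ("Mar", 5375, 425632),
]


def return_per_dollar(spend: float, revenue: float) -> float:
    """Organic revenue earned for every $1 of SEO spend (the table's 'SEO ROI $')."""
    return revenue / spend


def net_roi_percent(spend: float, revenue: float) -> float:
    """Stricter ROI: profit after subtracting the spend, as a percentage of spend."""
    return (revenue - spend) / spend * 100


for month, spend, revenue in rows:
    per_dollar = return_per_dollar(spend, revenue)
    print(
        f"{month}: ${per_dollar:.2f} per $1 spent "
        f"({per_dollar * 100:.0f}%), "
        f"net ROI {net_roi_percent(spend, revenue):.0f}%"
    )
```

Whichever version your provider reports, ask which formula they use so you can compare months (and agencies) on the same basis.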

As long as these numbers go up, there’s no need to worry about the minutiae of how many keywords you’re ranking for or which keywords changed rankings. That’s all a distraction from what matters most: growing your bottom line.

Note that as a business owner, you’ll need to share sales data with your SEO service provider if you want them to report on organic revenue and ROI. If you do not provide this data, the best they can report on is the metrics available in SEO tools like Ahrefs.

In particular, many agencies use the “organic value” metric as a substitute for ROI because it quantifies the dollar value of your SEO traffic.

Don’t be swindled: Track your SEO results for free with Ahrefs

Tracking your SEO performance can help expose SEO providers who may be trying to pull the wool over your eyes.

Whether you want to track the performance you were guaranteed or just keep tabs on general SEO visibility, Ahrefs Webmaster Tools can help. It’s a free tool allowing you to track:

- Technical fixes made

- Backlink growth

- Keyword performance

- Overall SEO growth

If you have any questions about SEO performance guarantees or how to avoid SEO scams, reach out to me on LinkedIn.
