
5 Trends to Know in SEO & Content Marketing

What’s trending in content marketing and SEO these days?

Let’s say this: Content is more important than ever.

More specifically, quality, media type, authenticity, and audience targeting all come into play if you want to win with readers and Google.

Ready to learn more?

These are the five content marketing and SEO trends you need to know.

1. Go Beyond (WAY Beyond) Superficial Content

We’re seeing brands leave superficial content behind in favor of blogs and articles that plumb the depths of a topic.

That means more and more blogs are comprehensive, thoroughly researched and – you guessed it – long.

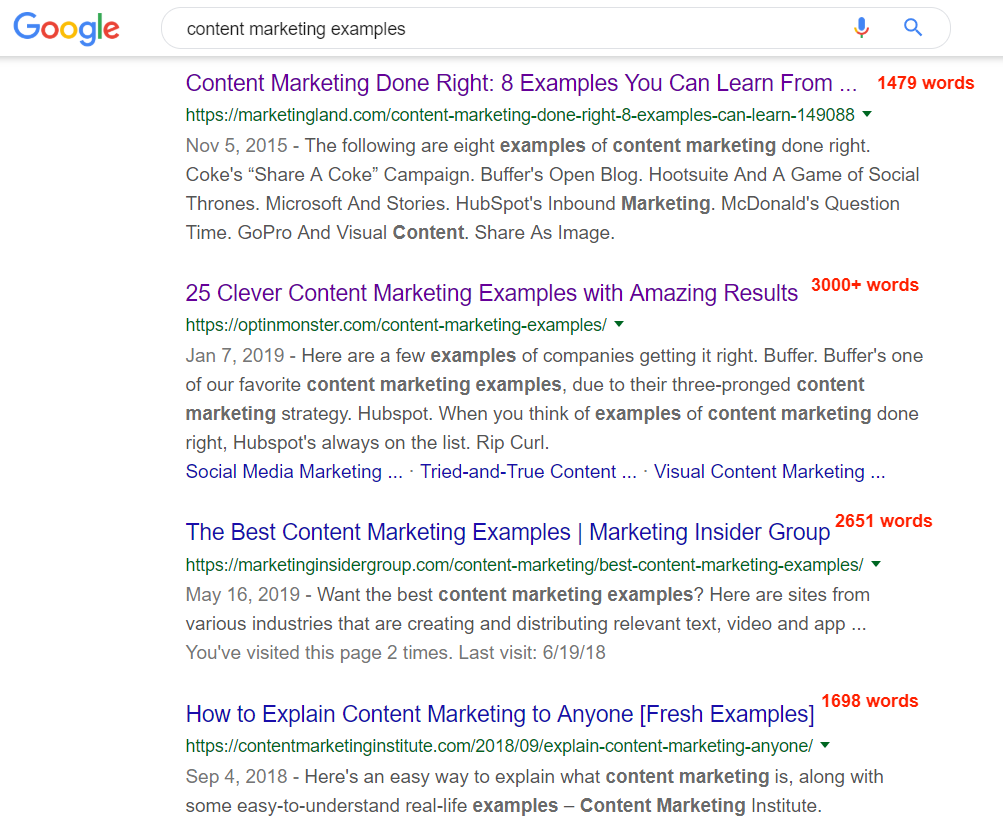

For instance, look at the SERP for the query [content marketing examples]. The top four results have an average length of 2,207 words.

Furthermore, all these results are chock-full of real-world examples, studies, statistics, and facts.

This research had to be accurately and carefully compiled, referenced, and cited.

This OptinMonster blog (result #2 and a featured snippet) is well-researched, meaning it includes lots of examples, links to sources, and screenshots. It also clocks in at over 3,000 words.

This is what is necessary to rank well with readers and search engines these days.
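If you want to check how your own drafts stack up against the pages that already rank, a quick word count comparison is one place to start. The sketch below is only an illustration: the function names and the 50% cutoff are assumptions, not an SEO rule, and you would paste in the text of the ranking pages and your draft yourself.

```python
from statistics import mean


def word_count(text: str) -> int:
    """Count whitespace-separated words in a piece of text."""
    return len(text.split())


def average_word_count(texts: list[str]) -> float:
    """Average word count across several texts (e.g. the top-ranking pages)."""
    if not texts:
        raise ValueError("need at least one text to average")
    return mean(word_count(t) for t in texts)


def is_thin_compared_to(draft: str, ranking_texts: list[str], ratio: float = 0.5) -> bool:
    """Flag a draft that is shorter than `ratio` times the average ranking page.

    The 0.5 default is an arbitrary, hypothetical cutoff for illustration.
    """
    return word_count(draft) < ratio * average_word_count(ranking_texts)
```

Length alone won't earn a ranking, so treat a flag from a check like this as a prompt to look at depth and research, not as a target to pad toward.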

Still publishing unplanned, unresearched, fly-by-the-seat-of-your-pants content?

Not going to work anymore. In fact, I could argue this method never worked in the first place.

Superficial content will get you superficial results, at best. There is rarely any value in content that skims the surface of a topic.

No value = no audience interest. No audience interest = no results.

2. Invest in Content Creation Processes

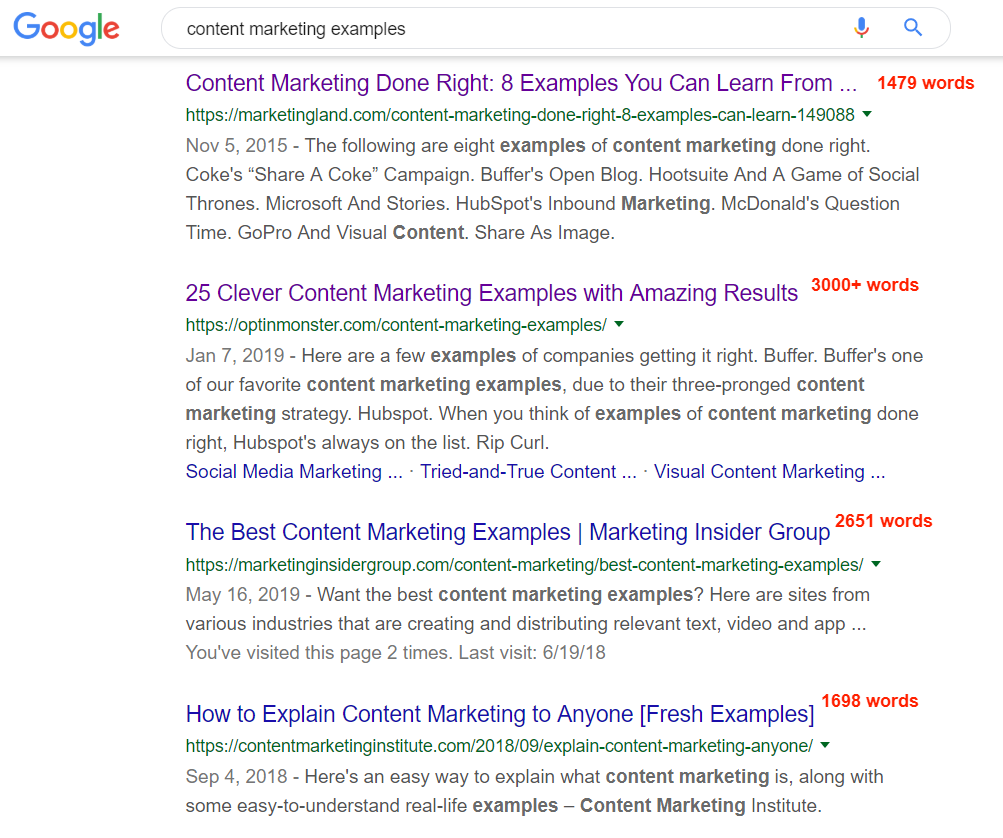

To create best-of-the-best content, more brands than ever are investing in content creation processes.

According to Content Marketing Institute’s 2019 B2C research, 56% of content marketers increased their spending on content creation within the last 12 months.

That might involve:

- Researching keywords and topics.

- Planning what the content will cover (and why).

- Writing the content.

- Optimizing.

- Editing.

- Creating graphics, pulling screenshots, or leveraging the written content into multimedia.

- Posting and promoting the content across multiple channels.

The growing interest in content creation is no surprise.

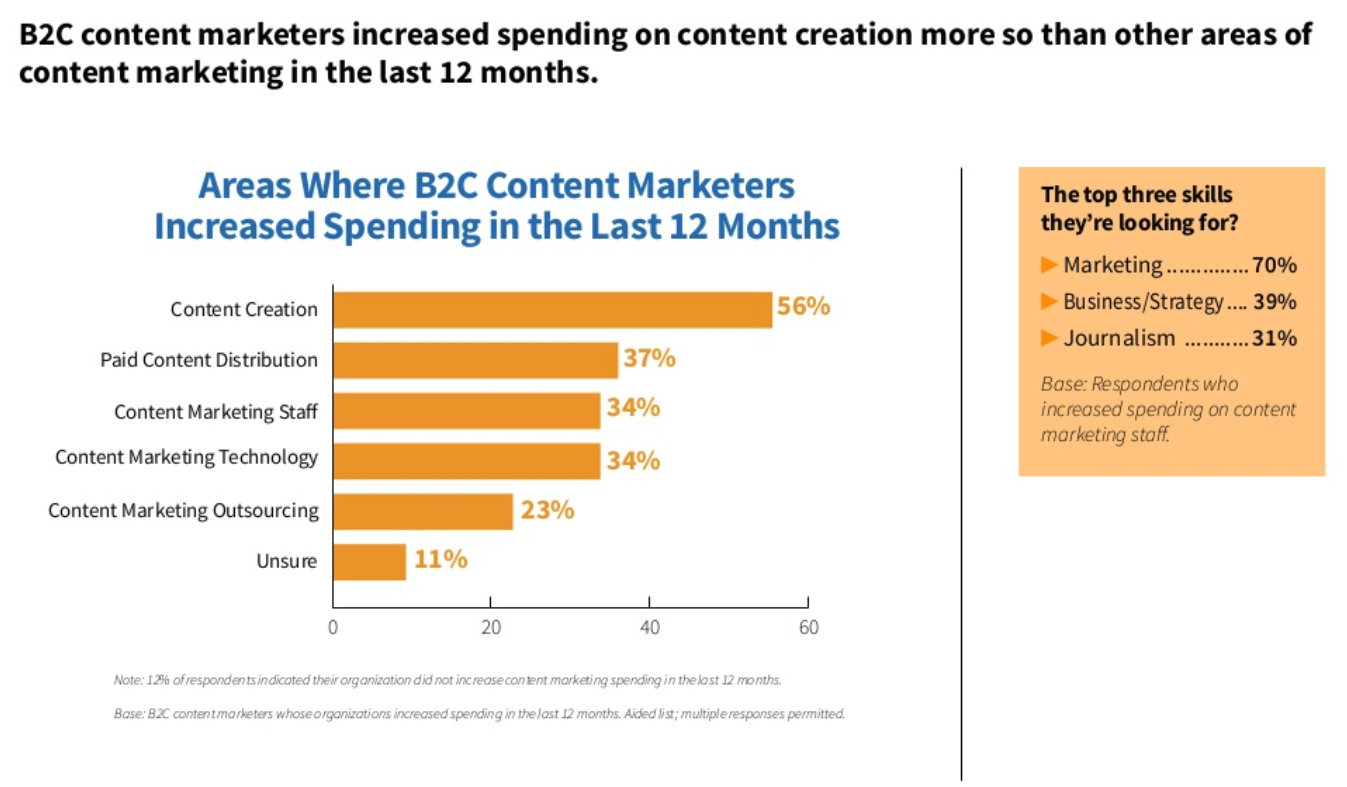

Content marketing has reached peak popularity over the last few years, according to Google Trends.

Most marketers want a piece of the promised land.

Investing more dollars in content creation processes makes sense:

How else can you create the type of value-packed, high-quality content that readers love and search engines rank?

If you don’t invest, you won’t have the resources to do it right.

End of story.

3. Use Content Personalization for Ultra-Targeted Content

Another widely adopted content marketing trend is content personalization.

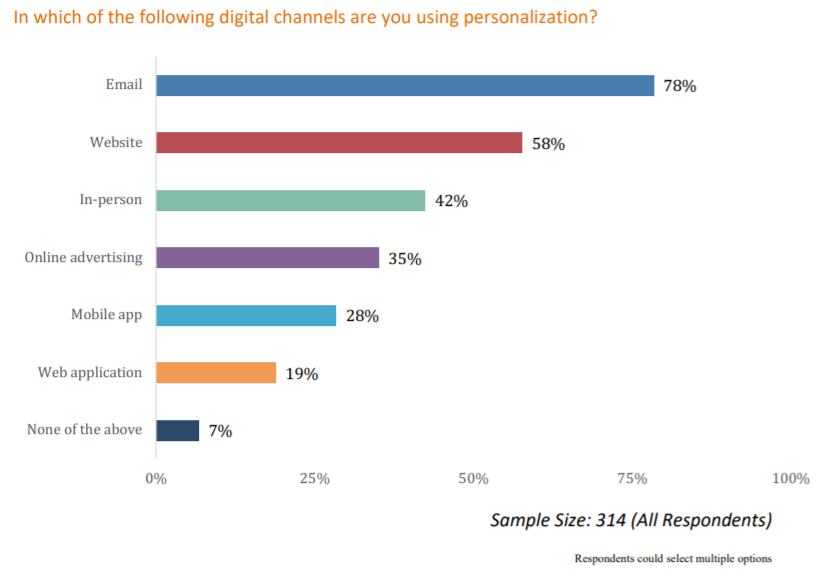

According to a survey from Evergage, 93% of marketers use personalization for at least one channel in their digital marketing strategy.

This practice focuses on tailoring the content your site serves to different users based on readily available personal data like demographics, preferences, and search/browsing history.

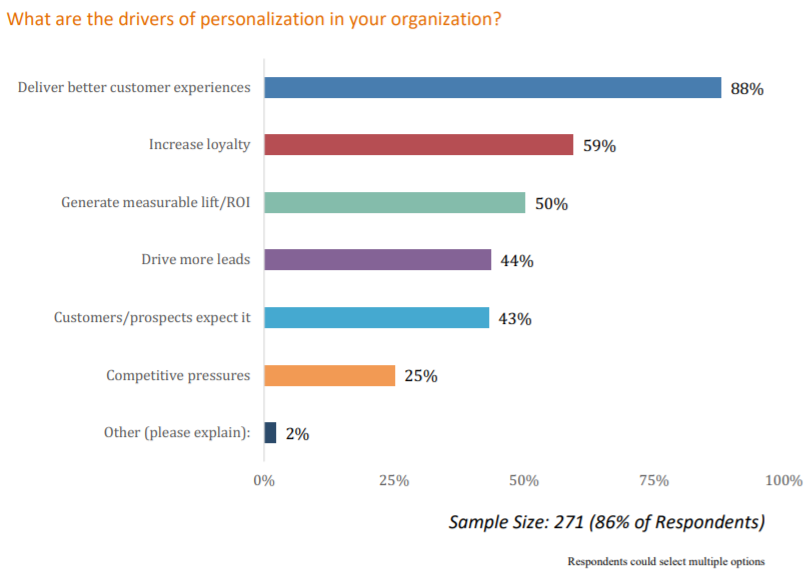

According to the Evergage survey, most marketers agree that this tactic:

- Helps deliver better customer experiences.

- Increases customer loyalty.

- Generates measurable ROI.

The point is, the content that speaks to one type of user won’t speak to another. A first-time visitor to your page has different needs than a visitor on their 20th session, for example.

Content personalization gives each of them slightly different pages filled with content that will appeal to their personal needs.

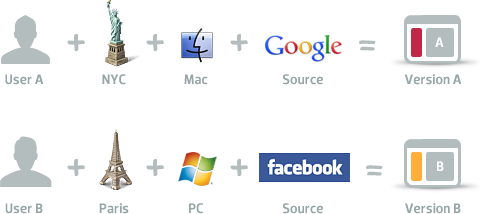

This chart from ConversionXL shows how two different versions of content are served to two different users.
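To make the idea concrete, here is a minimal sketch of how a site might pick a content variant based on how many sessions a visitor has had. Everything in it is hypothetical: the `Visitor` class, the variant names, the headlines, and the 20-session threshold are assumptions for illustration, not how any particular personalization tool works.

```python
from dataclasses import dataclass


@dataclass
class Visitor:
    # Hypothetical field: total sessions so far, including the current one.
    session_count: int


# Hypothetical headline variants, one per audience segment.
VARIANTS = {
    "new": "New here? Start with our beginner's guide",
    "returning": "Welcome back: here's what we've published since your last visit",
    "loyal": "Ready to go deeper? See our advanced resources",
}


def pick_variant(visitor: Visitor, loyal_threshold: int = 20) -> str:
    """Return the segment name for a visitor based on session count."""
    if visitor.session_count <= 1:
        return "new"
    if visitor.session_count >= loyal_threshold:
        return "loyal"
    return "returning"


def headline_for(visitor: Visitor) -> str:
    """Look up the headline to serve for this visitor's segment."""
    return VARIANTS[pick_variant(visitor)]
```

With this sketch, `headline_for(Visitor(session_count=1))` serves the beginner headline, while a visitor on their 20th session gets the advanced one. Real setups usually draw on more signals than session count, such as the demographics and browsing history mentioned above.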

4. Double Down on Building Customer Loyalty with Authenticity & Transparency

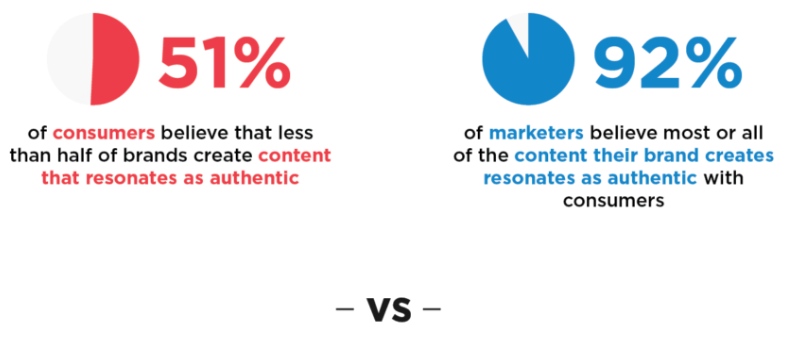

According to a 2019 Stackla survey, 90% of consumers agree that authenticity matters for the brands they like and support. Most marketers are aware of this trend and want to be that authentic voice people crave.

The problem? 92% of marketers think the content they create oozes authenticity, but 51% of consumers think fewer than half of all brands accomplish this.

However, when brands get authenticity right, the rewards are huge. A Cohn & Wolfe survey found almost 90% of consumers are willing to reward a brand’s authenticity by taking action:

- 52% said they would recommend the brand to other people.

- 49% would give the brand their loyalty.

If marketers are often mistaken about the authenticity in their content, how can they get past their overconfidence and reach what works?

Brands that understand what authenticity actually means will come out on top. Michael Fertik for Forbes defines it like this:

“Being authentic means being accountable and upholding your brand promise. It requires transparency and a dash of vulnerability. When a brand is authentic, consumers know it, appreciate it and prioritize their spending accordingly.”

For examples of authenticity in big-name brands, look at Amazon, Apple, Lego, and Intel – all of these appear in Cohn & Wolfe’s list of the 100 Most Authentic Brands ranked by consumers.

5. Expand Your Horizons Past Just Blogging

Blogging is a big deal in content marketing, but it’s not the end-all, be-all.

Blogging is awesome, don’t get me wrong.

My agency has seen 99% of our leads and sales come through our organic content rankings.

That said, we are living in a dynamic, multi-channel, multi-experience digital world.

If you only target people who read blogs and articles, you’re missing out on everyone who prefers video, audio, or a mix of formats.

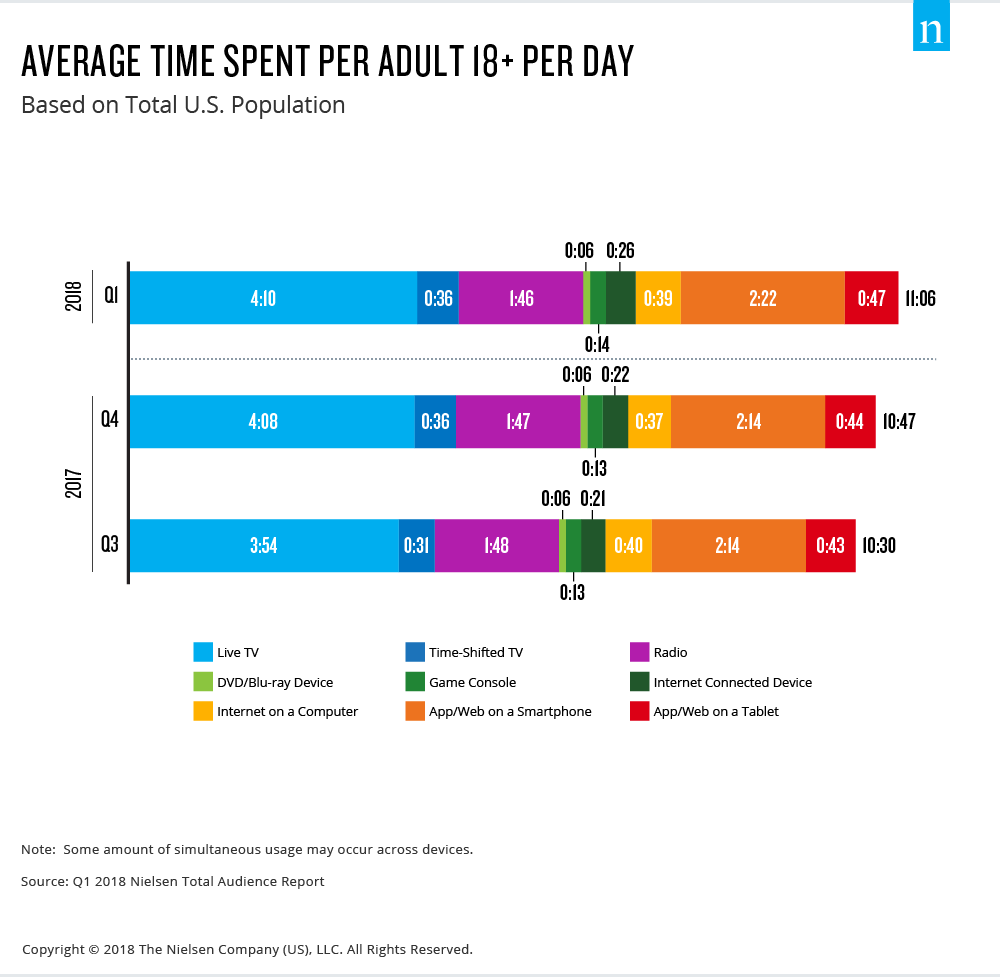

According to Nielsen, U.S. adults over age 18 now spend nearly half of their days – over 11 hours total – consuming content. (Over 6 of those hours are devoted to video content!)

They get their content everywhere: on live TV, time-shifted TV, the internet, smartphones, tablets, game consoles, radio, DVD/Blu-ray devices, and other internet-connected devices.

Most marketers are wise to this trend and are diving into the uncharted waters of YouTube, podcasts, webinars, and live streaming along with their blogging strategies.

If you’re still only blogging, it’s time to start thinking about adding on a YouTube channel, a podcast, or some other form of dynamic media to your content strategy.

For best results, go where your audience lives.

Trending Content & SEO Tactics Are Just the Beginning

As content marketing evolves, these trends are just the start of new shifts in the industry.

Over the last year alone, we have seen brands reprioritizing their audiences, refocusing on telling authentic stories, and shifting to include more channels in their content strategy.

Going forward, brands that invest in the right trends will see more growth overall. Are you ready?