10 Tips on How to Rock a Small PPC Budget

Many advertisers have a tight budget for pay-per-click (PPC) advertising, making it challenging to maximize results.

One of the first questions that often looms large is, “How much should we spend?” It’s a pivotal question, one that sets the stage for the entire PPC strategy.

Read on for tips to get started or further optimize budgets for your PPC program to maximize every dollar spent.

1. Set Expectations For The Account

With a smaller budget, managing expectations for the size and scope of the account will allow you to keep focus.

A very common question is: How much should our company spend on PPC?

To start, you must balance your company’s PPC budget with the cost, volume, and competition of keyword searches in your industry.

You’ll also want to implement a well-balanced PPC strategy with display and video formats to engage consumers.

First, determine your daily budget. For example, if the monthly budget is $2,000, the daily budget would be about $66 ($2,000 divided by 30 days) for the entire account.

The daily budget will also determine how many campaigns you can run at the same time in the account because that $66 will be divided up among all campaigns.

Be aware that Google Ads and Microsoft Ads may occasionally exceed the daily budget to maximize results. The overall monthly spend, however, should not exceed the daily budget multiplied by the number of days in the month.
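
The arithmetic above can be sketched in a few lines of Python. This is only an illustration: the $2,000 monthly budget and the rounded $66 daily figure come from the example, while the campaign names and the 75/25 split are assumptions made up for the sketch.

```python
def daily_budget(monthly_budget: float, days_in_month: int = 30) -> float:
    """Spread a monthly budget evenly across the days of the month."""
    return monthly_budget / days_in_month


def split_daily_budget(daily: float, shares: dict[str, float]) -> dict[str, float]:
    """Divide a daily budget among campaigns according to fractional shares."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("campaign shares must sum to 1.0")
    return {name: round(daily * share, 2) for name, share in shares.items()}


# $2,000 / 30 days is about $66.67, which the example rounds to $66.
daily = daily_budget(2000)

# Hypothetical campaigns and shares, chosen only for illustration.
per_campaign = split_daily_budget(
    66, {"search_leads": 0.75, "display_awareness": 0.25}
)

print(f"Daily budget: ${daily:.2f}")
print(per_campaign)
```

The more campaigns share the same daily amount, the thinner each slice becomes, which is why a small budget limits how many campaigns can run at once.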

Now that we know our daily budget, we can focus on prioritizing our goals.

2. Prioritize Goals

Advertisers often have multiple goals per account. A limited budget will also limit the number of campaigns – and the number of goals – you should focus on.

Some common goals include:

- Brand awareness.

- Leads.

- Sales.

- Repeat sales.

In the example below, the advertiser uses a small budget to promote a scholarship program.

They are using a combination of leads (search campaign) and awareness (display campaign) to divide up a daily budget of $82.

Screenshot from author, May 2024

The next several features can help you laser-focus campaigns to allocate your budget to where you need it most.

Remember, these settings will restrict traffic to the campaign. If you aren’t getting enough traffic, loosen or expand the settings.

3. Location Targeting

Location targeting is a core consideration in reaching the right audience and helps manage a small ad budget.

To maximize a limited budget, you should focus on only the essential target locations where your customers are located.

While that seems obvious, you should also consider how to refine that to direct the limited budget to core locations. For example:

- You can refine location targeting by states, cities, ZIP codes, or even a radius around your business.

- Choose target locations based on results.

- The smaller the geographic area, the less traffic you will get, so balance relevance with budget.

- Consider adding negative locations where you do not do business to prevent irrelevant clicks that use up precious budget.

If the reporting reveals targeted locations where campaigns are ineffective, consider removing those areas from targeting. You can also try a location bid modifier to reduce ad serving in those areas.

Screenshot by author from Google Ads, May 2024

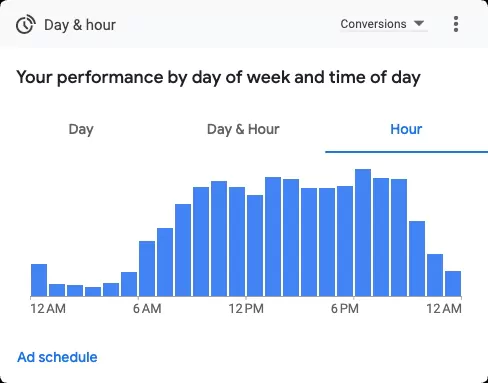

4. Ad Scheduling

Ad scheduling also helps to control budget by only running ads on certain days and at certain hours of the day.

With a smaller budget, it can help to limit ads to serve only during hours of business operation. You can choose to expand that a bit to accommodate time zones and for searchers doing research outside of business hours.

If you sell online, you are always open, but review reporting for hourly results over time to determine if there are hours of the day with a negative return on investment (ROI).

Limit running PPC ads if the reporting reveals hours of the day when campaigns are ineffective.
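
As a rough sketch of that review, the snippet below flags hours with a negative ROI. The report rows are invented for illustration, and the tuple layout (hour, cost, revenue) is an assumption rather than the export format of any ad platform.

```python
# Hypothetical hourly report rows: (hour_of_day, cost, revenue).
# All figures are made up for illustration.
hourly_report = [
    (2, 12.00, 0.00),
    (9, 30.00, 95.00),
    (14, 25.00, 60.00),
    (23, 18.00, 9.00),
]


def roi(cost: float, revenue: float) -> float:
    """Return on investment as a fraction: (revenue - cost) / cost."""
    return (revenue - cost) / cost


def hours_with_negative_roi(rows):
    """Return the hours whose revenue did not cover the ad cost."""
    return [
        hour
        for hour, cost, revenue in rows
        if cost > 0 and roi(cost, revenue) < 0
    ]


print(hours_with_negative_roi(hourly_report))
```

Hours that show up here consistently over a long enough period are candidates for exclusion from the ad schedule.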

Screenshot by author from Google Ads, May 2024

5. Set Negative Keywords

A well-planned negative keyword list is a golden tactic for controlling budgets.

The purpose is to prevent your ad from showing on keyword searches and websites that are not a good match for your business.

- Generate negative keywords proactively by brainstorming keyword concepts that may trigger ads erroneously.

- Review query reports to find irrelevant searches that have already led to clicks.

- Create negative keyword lists and apply them to the campaign.

- Repeat on a regular basis because ad trends are always evolving!
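
The query review step can be approximated with a simple filter. This is a sketch under stated assumptions: the search terms and negative keywords are hypothetical, and the word-level check is a simplification that does not reproduce how ad platforms apply negative match types.

```python
# Hypothetical negative keywords and search terms, invented for illustration.
negative_keywords = {"free", "jobs", "salary", "course"}

search_terms = [
    "business accounting software",
    "free accounting software download",
    "accounting jobs near me",
    "small business accounting software pricing",
]


def is_blocked(term: str, negatives: set[str]) -> bool:
    """True if any word in the search term appears on the negative list."""
    return any(word in negatives for word in term.lower().split())


# Terms that would have been filtered out by the negative list.
irrelevant = [term for term in search_terms if is_blocked(term, negative_keywords)]
print(irrelevant)
```

Running a check like this against each new query report makes it easier to spot terms that belong on the list before they consume more budget.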

6. Smart Bidding

Smart Bidding is a game-changer for efficient ad campaigns. Powered by Google AI, it automatically adjusts bids to serve ads to the right audience within budget.

The AI optimizes the bid for each auction, ideally maximizing conversions while staying within your budget constraints.

Smart bidding strategies available include:

- Maximize Conversions: Automatically adjust bids to generate as many conversions as possible for the budget.

- Target Return on Ad Spend (ROAS): This method predicts the value of potential conversions and adjusts bids in real time to maximize return.

- Target Cost Per Action (CPA): Advertisers set a target cost-per-action (CPA), and Google optimizes bids to get the most conversions within budget and the desired cost per action.
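
To make the two target metrics concrete, here is a minimal sketch of the underlying arithmetic: CPA is cost divided by conversions, and ROAS is conversion value divided by cost. The spend, conversion, and target figures are hypothetical, and the code only compares results to targets; it does not model how Smart Bidding sets bids.

```python
def cost_per_action(cost: float, conversions: int) -> float:
    """CPA: total ad cost divided by the number of conversions."""
    return cost / conversions


def return_on_ad_spend(conversion_value: float, cost: float) -> float:
    """ROAS: total conversion value divided by total ad cost."""
    return conversion_value / cost


# Hypothetical campaign results and targets, for illustration only.
cost = 500.0
conversions = 20
conversion_value = 2000.0
target_cpa = 30.0
target_roas = 3.0

cpa = cost_per_action(cost, conversions)
roas = return_on_ad_spend(conversion_value, cost)

print(f"CPA: ${cpa:.2f} (target ${target_cpa:.2f}), on target: {cpa <= target_cpa}")
print(f"ROAS: {roas:.1f} (target {target_roas:.1f}), on target: {roas >= target_roas}")
```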

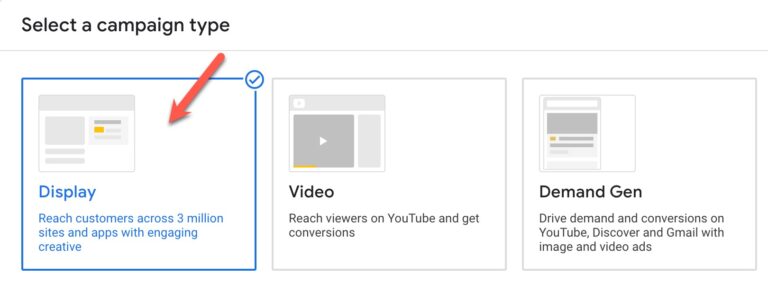

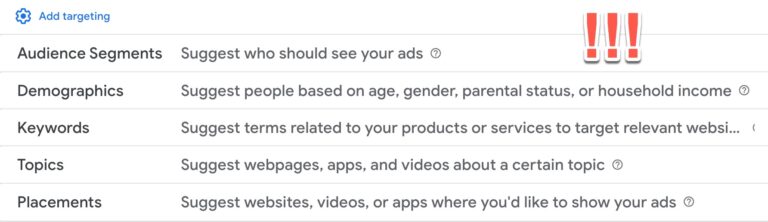

Screenshot by author from Google Ads, May 2024

7. Try Display Only Campaigns

For branding and awareness, a display campaign can expand your reach to a wider audience affordably.

Audience targeting is an art in itself, so review the best options for your budget, including topics, placements, demographics, and more.

Remarketing to your website visitors is a smart targeting strategy to include in your display campaigns to re-engage your audience based on their behavior on your website.

Let your ad performance reporting by placements, audiences, and more guide your optimizations toward the best fit for your business.

Screenshot by Lisa Raehsler from Google Ads, May 2024

8. Performance Max Campaigns

Performance Max (PMax) campaigns are available in Google Ads and Microsoft Ads.

In short, automation is used to maximize conversion results by serving ads across channels and with automated ad formats.

This campaign type can be useful for limited budgets because it uses AI to create assets and select channels and audiences in a single campaign, so you do not have to divide the budget among multiple campaign types.

Since the success of the PMax campaign depends on the use of conversion data, that data will need to be available and reliable.

9. Target Less Competitive Keywords

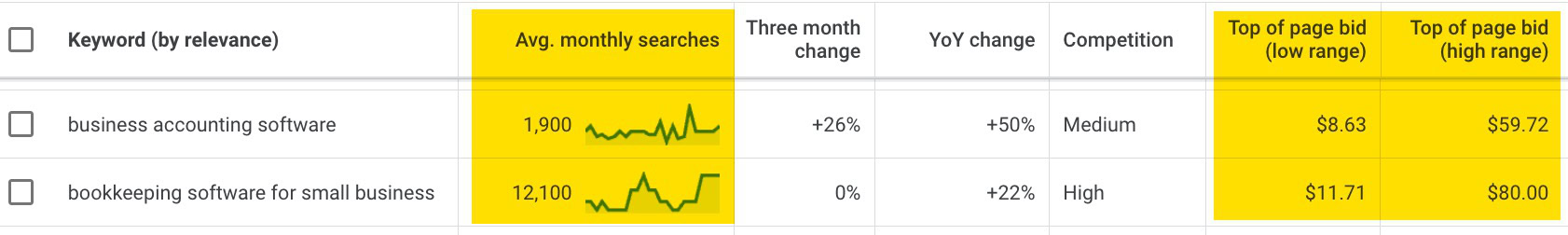

Some keywords can have very high cost-per-click (CPC) in a competitive market. Research keywords to compete effectively on a smaller budget.

Use your analytics account to discover organic searches leading to your website, check Google autocomplete, and use tools like Google Keyword Planner in the Google Ads account to compare keywords and get estimates.

In this example, a keyword such as “business accounting software” potentially has a lower CPC but also lower volume.

Ideally, you would test both keywords to see how they perform in a live campaign scenario.
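
The trade-off between CPC and volume can be roughed out before testing. In the sketch below, every number is an assumption: the CPCs and available click volumes are invented, and the unnamed "higher-volume keyword" stands in for whichever broader term you are comparing against.

```python
import math


def estimated_daily_clicks(daily_budget: float, avg_cpc: float, available_clicks: int) -> int:
    """Clicks the budget can buy at the average CPC, capped by available volume."""
    affordable = math.floor(daily_budget / avg_cpc)
    return min(affordable, available_clicks)


# Hypothetical estimates: (average CPC, clicks available per day).
keywords = {
    "higher-volume keyword": (12.00, 400),
    "business accounting software": (8.00, 6),
}

daily_budget = 66  # the daily figure from the budget example earlier in the article

for keyword, (avg_cpc, available) in keywords.items():
    clicks = estimated_daily_clicks(daily_budget, avg_cpc, available)
    print(f"{keyword}: about {clicks} clicks per day")
```

With these made-up numbers, the cheaper keyword is limited by volume rather than budget, which is the kind of pattern a live test would confirm or rule out.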

Screenshot by author from Google Ads, May 2024

10. Manage Costly Keywords

High volume and competitive keywords can get expensive and put a real dent in the budget.

In addition to the tip above, if a keyword is both high volume and high cost, consider moving it into its own campaign so you can monitor it and, if needed, set more restrictive targeting and a separate budget.

Levers that can affect the cost of these keywords include experimenting with match types and applying any of the other tips in this article. Also explore writing more relevant ad copy for these costly keywords to improve quality.

Every Click Counts

As you navigate these strategies, you will see that managing a PPC account with a limited budget isn’t just about monetary constraints.

Rocking a small PPC budget involves strategic campaign management, data-driven decisions, and ongoing optimizations.

In the dynamic landscape of paid search advertising, every click counts, and with the right approach, every click can translate into meaningful results.

Featured Image: bluefish_ds/Shutterstock
