
12 Reasons Your Website Can Have A High Bounce Rate

“Why do I have such a high bounce rate?”

It’s a question you’ll encounter on Twitter, Reddit, and your favorite digital marketing Facebook group.

It’s a question you may have even asked yourself. Heck, it could be the question that brought you to this article.

Whatever brought you here, rest assured: There is no “perfect” bounce rate.

But you don’t necessarily want one that’s too high.

Read on as we dig into what may be causing your high bounce rate and what you can do to fix it.

What Is A Bounce Rate?

As a refresher, Google refers to a “bounce” as “a single-page session on your site.”

Bounce rate refers to the percentage of sessions in which a visitor leaves your website (or “bounces” back to the search results or referring website) after viewing only one page on your site.

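A bounce can even be recorded when a user leaves a page open for more than 30 minutes without interacting. Universal Analytics ends a session after 30 minutes of inactivity by default, so that single pageview becomes a session of its own.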
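To see how a single-page session and that 30-minute timeout interact, here’s a minimal Python sketch. It assumes a hypothetical list of one visitor’s pageview timestamps (in seconds), starts a new session whenever the gap between pageviews exceeds 30 minutes, and counts sessions with exactly one pageview as bounces. Real analytics tools apply more session rules than this, so treat it as an illustration of the definition, not a reimplementation of Google Analytics.

```python
from typing import List

SESSION_TIMEOUT_SECONDS = 30 * 60  # Universal Analytics' default inactivity timeout


def split_into_sessions(hit_times: List[float]) -> List[List[float]]:
    """Group one visitor's pageview timestamps (in seconds) into sessions."""
    sessions: List[List[float]] = []
    for t in sorted(hit_times):
        # Continue the current session unless the visitor was idle too long.
        if sessions and t - sessions[-1][-1] <= SESSION_TIMEOUT_SECONDS:
            sessions[-1].append(t)
        else:
            sessions.append([t])
    return sessions


def bounce_rate(sessions: List[List[float]]) -> float:
    """Share of sessions that contain exactly one pageview."""
    if not sessions:
        return 0.0
    bounces = sum(1 for s in sessions if len(s) == 1)
    return bounces / len(sessions)


# A visitor views page A, idles for 31 minutes, then views page B:
# that's two single-page sessions, so both count as bounces.
sessions = split_into_sessions([0, 31 * 60])
print(len(sessions), bounce_rate(sessions))  # 2 1.0
```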

So, what is a high bounce rate, and why is it bad?

Well, “high bounce rate” is a relative term that depends on your company’s goals and what kind of site you have.

Low bounce rates can be a problem, too.

Data from Semrush suggests the average bounce rate ranges from 41% to 55%, with a range of 26% to 40% being optimal, and anything above 46% is considered “high.”

This aligns well with data from an earlier RocketFuel study, which found that most websites will see bounce rates between 26% and 70%.

Based on the data they gathered, RocketFuel provided a bounce rate grading system of sorts:

- 25% or lower: Something is probably broken.

- 26-40%: Excellent.

- 41-55%: Average.

- 56-70%: Higher than normal, but could make sense depending on the website.

- 70% or higher: Bad and/or something is probably broken.

How To Find Your Bounce Rate In Google Analytics

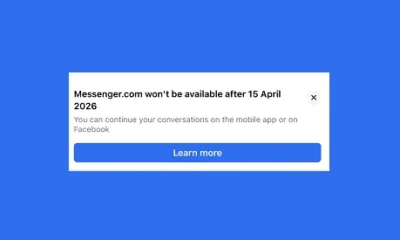

In Google Analytics 4, Google seems to have done away with bounce rate as we know it (more on this in a bit).

In Universal Analytics, you can find the overall bounce rate for your site in the Audience Overview tab.

Screenshot from Google Analytics UA, September 2022

You can find your bounce rate for individual channels and pages in the behavior column of most views in Google Analytics.

Screenshot from Google Analytics UA, September 2022

However, most organizations are currently transitioning to Google Analytics 4, affectionately known as GA4.

If your organization is in that boat, you may be wondering, “Where did the bounce rate go?”

Your eyes aren’t tricking you; Google indeed removed the bounce rate. Or, rather, they replaced it with a new and improved metric called “engagement rate.”

In GA4, you can find your site’s engagement rate by navigating to Acquisition > User acquisition or Acquisition > Traffic acquisition.

Engagement rate fixes some of the pitfalls that plagued bounce rate as a metric. For one, it includes sessions where a visitor converted or spent at least 10 seconds on the page, even if they did not visit any other pages – two types of sessions that were not factored in previously.

As a result, your bounce rate should be lower in GA4 (once you do a little bit of math, that is).

To calculate your new bounce rate, you simply subtract your engagement rate from 100%.

Screenshot from Google Analytics 4, September 2022

While bounce rate is an important metric, I’m happy to see Google made this change.

Instead of focusing on the negative, it encourages us to focus on the positive: How many people are engaged with your site.

Plus, it’s a more accurate and relevant metric now.

In GA4, a session counts as “engaged” if the visitor viewed two or more pages, spent at least 10 seconds on your site, or converted.
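Here’s a minimal Python sketch of that rule and the “subtract from 100%” math, assuming you already have per-session counts of pageviews, duration, and whether a conversion fired (the `Session` type and sample data are hypothetical). It uses the 10-second threshold described above; GA4 lets you adjust that engagement timer, so check your own property’s setting.

```python
from dataclasses import dataclass
from typing import List

ENGAGED_SECONDS = 10  # engagement timer described above; adjustable in GA4


@dataclass
class Session:
    pageviews: int
    duration_seconds: float
    converted: bool


def is_engaged(s: Session) -> bool:
    """Engaged if 2+ pageviews, at least ENGAGED_SECONDS, or a conversion."""
    return s.pageviews >= 2 or s.duration_seconds >= ENGAGED_SECONDS or s.converted


def engagement_rate(sessions: List[Session]) -> float:
    if not sessions:
        return 0.0
    return sum(is_engaged(s) for s in sessions) / len(sessions)


def ga4_bounce_rate(sessions: List[Session]) -> float:
    # The "little bit of math": bounce rate = 100% - engagement rate.
    if not sessions:
        return 0.0
    return 1.0 - engagement_rate(sessions)


sample = [
    Session(pageviews=1, duration_seconds=4, converted=False),   # bounce
    Session(pageviews=1, duration_seconds=45, converted=False),  # engaged (time)
    Session(pageviews=3, duration_seconds=8, converted=False),   # engaged (pages)
    Session(pageviews=1, duration_seconds=3, converted=True),    # engaged (conversion)
]
print(engagement_rate(sample), ga4_bounce_rate(sample))  # 0.75 0.25
```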

Now, let’s get back to what you came here for: Why your bounce rate is high and what you can do about it.

Possible Explanations For A High Bounce Rate

Below are 12 common causes of a high bounce rate, followed by five ways you can fix it.

1. Slow-To-Load Page

Google has a renewed focus on site speed, especially as a part of the Core Web Vitals initiative.

A slow-to-load page can be a huge problem for bounce rates.

Site speed has long been part of Google’s ranking algorithm; Google announced it as a ranking signal back in 2010.

Google wants to promote content that provides a positive experience for users, and they recognize that a slow site can provide a poor experience.

Users want the facts fast – this is part of the reason Google has put so much work into featured snippets.

If your page takes longer than a few seconds to load, your visitors may get fed up and leave.

Fixing site speed is a lifelong journey for most SEO and marketing pros.

But the upside is that with each incremental fix, you should see an incremental boost in speed.

Review your page speed (overall and for individual pages) using tools like:

- Google PageSpeed Insights.

- Google Search Console Core Web Vitals report.

- Lighthouse reports.

- Pingdom.

- GTmetrix.

They’ll offer you recommendations specific to your site, such as compressing your images, reducing third-party scripts, and leveraging browser caching.
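If you’d rather pull these numbers programmatically, the PageSpeed Insights API (v5) returns the Lighthouse report as JSON. The sketch below builds a request URL and extracts the performance score and Largest Contentful Paint. It assumes the response fields `lighthouseResult.categories.performance.score` and `lighthouseResult.audits["largest-contentful-paint"].numericValue` (in milliseconds); double-check them against the current API reference, and add an API key if you plan to run many requests.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url: str, strategy: str = "mobile") -> str:
    """Build a PageSpeed Insights API request URL for one page."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def summarize(report: dict) -> dict:
    """Pull the headline numbers out of a PSI JSON response."""
    lighthouse = report["lighthouseResult"]
    score = lighthouse["categories"]["performance"]["score"]  # 0.0 to 1.0
    lcp_ms = lighthouse["audits"]["largest-contentful-paint"]["numericValue"]
    return {"performance_score": round(score * 100), "lcp_seconds": lcp_ms / 1000}


def fetch_summary(page_url: str) -> dict:
    """Call the API (network access required) and summarize the result."""
    with urlopen(psi_request_url(page_url), timeout=120) as response:
        return summarize(json.load(response))


# print(fetch_summary("https://example.com/"))  # needs network access
```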

2. Self-Sufficient Content*

Sometimes your content is efficient enough that people can quickly get what they need and bounce!

This can be a wonderful thing.

Perhaps you’ve achieved the content marketer’s dream and created awesome content that wholly consumed your visitors for a handful of minutes of their lives.

Or perhaps you have a landing page that only requires the user to complete a short lead form.

To determine whether a high bounce rate is nothing to worry about, look at the Avg. Time on Page and Avg. Session Duration metrics in Google Analytics.

You can also conduct user experience testing and A/B testing to see if the high bounce rate is a problem.

If the user is spending a couple of minutes or more on the page, that sends a positive signal to Google that they found your page highly relevant to their search query.

If you want to rank for that particular search query, that kind of user intent is gold.

If the user is spending less than a minute on the page (which may be the case with a properly optimized landing page with a quick-hit CTA form), consider enticing the reader to read some of your related blog posts after filling out the form.

*This is an example where GA4’s engagement rate may be a superior metric to UA’s bounce rate. In GA4, this type of session would not count as a bounce and would instead count as “engaged.”

3. Disproportionate Contribution By A Few Pages

If we expand on the example from the previous section, you may have a few pages on your site that are contributing disproportionately to the overall bounce rate for your site.

Google is savvy at recognizing the difference between these types of pages.

If your single CTA landing pages reasonably satisfy user intent and cause them to bounce quickly after taking an action, but your longer-form content pages have a lower bounce rate, you’re probably good to go.

However, you’ll want to dig in to confirm that this is the case, or to discover whether some of these high-bounce pages are driving users away when they shouldn’t be.

Open up Google Analytics. Go to Behavior > Site Content > Landing Pages, and sort by Bounce Rate.

Consider adding an advanced filter to remove pages that might skew the results.

For example, it’s not necessarily helpful to agonize over the one Twitter share with five visits that have all your social UTM parameters tacked onto the end of the URL.

My rule of thumb is to determine a minimum threshold of volume that is significant for the page.

Choose what makes sense for your site, whether it’s 100 visits or 1,000 visits, and then click on Advanced and filter for Sessions greater than that.

In GA4, navigate to Acquisition > User acquisition or Acquisition > Traffic acquisition. From there, click on “Add filter +” underneath the report title.

Create a filter by selecting “Session default channel grouping” (or “Session medium” or “Session source / medium” etc.). Then check the box for “Organic Search” in the Dimension values menu.

Click the blue Apply button. Once you’re back in the report, click on the blue plus sign to open up a new menu.

Navigate to Page/screen and select Landing page.
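If you export the landing page report instead of filtering in the interface, the same filtering (keep one channel, drop pages below your volume threshold, then sort by bounce rate) is easy to script. This is a hypothetical Python sketch: the `LandingPageRow` fields stand in for whatever columns your export has. For a GA4 export, you could fill `bounces` with sessions minus engaged sessions.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass
class LandingPageRow:
    landing_page: str
    channel: str
    sessions: int
    bounces: int

    @property
    def bounce_rate(self) -> float:
        return self.bounces / self.sessions if self.sessions else 0.0


def worst_landing_pages(
    rows: Iterable[LandingPageRow],
    min_sessions: int,
    channel: Optional[str] = None,
) -> List[LandingPageRow]:
    """Keep pages above the volume threshold (optionally one channel), worst first."""
    kept = [
        r for r in rows
        if r.sessions > min_sessions and (channel is None or r.channel == channel)
    ]
    return sorted(kept, key=lambda r: r.bounce_rate, reverse=True)


rows = [
    LandingPageRow("/guide", "Organic Search", 1200, 480),
    LandingPageRow("/signup", "Organic Search", 900, 720),
    LandingPageRow("/pricing", "Organic Search", 150, 30),
    LandingPageRow("/?utm_source=twitter", "Organic Social", 5, 5),
]
for r in worst_landing_pages(rows, min_sessions=100, channel="Organic Search"):
    print(r.landing_page, f"{r.bounce_rate:.0%}")
# /signup 80%
# /guide 40%
# /pricing 20%
```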

4. Misleading Title Tag And/Or Meta Description

Ask yourself: Is the content of your page accurately summarized by your title tag and meta description?

If not, visitors may enter your site thinking your content is about one thing, only to find that it isn’t, and then bounce back to where they came from.

Whether it was an innocent mistake or you were trying to game the system by optimizing for keyword clickbait (shame on you!), this is, fortunately, simple enough to fix.

Either review the content of your page and adjust the title tag and meta description accordingly, or rewrite the content to address the search queries you want to attract visitors for.

You can also search for your page’s most common queries to see which snippet Google shows for them. Google sometimes rewrites meta descriptions, and if its version is worse than yours, consider revising your meta description so it more closely matches both the page and the queries you care about.
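A quick script can at least flag pages whose title tag or meta description is missing or likely to be truncated in search results. This Python sketch uses the standard library’s `html.parser`; the 60- and 160-character limits are common rules of thumb (assumptions, since Google doesn’t publish a fixed character limit). It can’t tell you whether the snippet accurately describes the page; that part still needs a human read.

```python
from html.parser import HTMLParser
from typing import List, Optional

TITLE_MAX_CHARS = 60         # rule of thumb, not an official Google limit
DESCRIPTION_MAX_CHARS = 160  # rule of thumb, not an official Google limit


class SnippetParser(HTMLParser):
    """Collects the <title> text and the meta description's content."""

    def __init__(self) -> None:
        super().__init__()
        self.title: Optional[str] = None
        self.description: Optional[str] = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
            self.title = ""
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = (attributes.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def snippet_issues(html: str) -> List[str]:
    """List missing or overlong title/description problems for one page."""
    parser = SnippetParser()
    parser.feed(html)
    parser.close()
    issues = []
    title = (parser.title or "").strip()
    if not title:
        issues.append("missing title tag")
    elif len(title) > TITLE_MAX_CHARS:
        issues.append(f"title is {len(title)} characters")
    if not parser.description:
        issues.append("missing meta description")
    elif len(parser.description) > DESCRIPTION_MAX_CHARS:
        issues.append(f"meta description is {len(parser.description)} characters")
    return issues


page = "<html><head><title>Guide To Adopting A Puppy</title></head></html>"
print(snippet_issues(page))  # ['missing meta description']
```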

5. Blank Page Or Technical Error

If your bounce rate is exceptionally high and you see that people are spending less than a few seconds on the page, it’s likely your page is blank, returning a 404, or otherwise not loading properly.

Take a look at the page from your audience’s most popular browser and device configurations (e.g., Safari on desktop and mobile, Chrome on mobile, etc.) to replicate their experience.

You can also check the Page indexing report (formerly called Coverage) in Search Console to discover the issue from Google’s perspective.

Correct the issue yourself or talk to someone who can – an issue like this can cause Google to drop your page from the search results in a hurry.
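To triage many URLs before checking them by hand, you could script a first pass. This Python sketch fetches a page with the standard library and flags error status codes or suspiciously tiny responses. The 512-byte “blank” threshold and the User-Agent string are hypothetical values, and because the script doesn’t run JavaScript, it can’t catch pages that only break when rendered in a browser.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen

MIN_BODY_BYTES = 512  # hypothetical threshold for a "suspiciously empty" page


def classify_response(status: int, body: bytes) -> str:
    """Rough label for a fetched page based on status code and body size."""
    if status >= 500:
        return "server error"
    if status in (404, 410):
        return "not found"
    if status >= 400:
        return "client error"
    if len(body.strip()) < MIN_BODY_BYTES:
        return "possibly blank"
    return "ok"


def check_page(url: str) -> str:
    """Fetch a URL (redirects are followed) and classify the final response."""
    request = Request(url, headers={"User-Agent": "bounce-check-sketch/0.1"})
    try:
        with urlopen(request, timeout=30) as response:
            return classify_response(response.status, response.read())
    except HTTPError as err:  # 4xx/5xx responses raise HTTPError
        return classify_response(err.code, err.read())
    except OSError:
        return "unreachable"  # DNS failure, refused connection, timeout, etc.


print(classify_response(200, b""))  # possibly blank
# print(check_page("https://example.com/"))  # needs network access
```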

6. Bad Link From Another Website

You could be doing everything perfectly on your end to achieve a normal or low bounce rate from organic search results and still have a high bounce rate from your referral traffic.

The referring site could be sending you unqualified visitors, or the anchor text and context for the link could be misleading.

Sometimes this is a result of sloppy copywriting.

The writer or publisher linked to your site in the wrong part of the copy or didn’t mean to link to your site at all.

Reach out to the author of the article first. If they don’t respond or they can’t update the article after publishing, then you can escalate the issue to the site’s editor or webmaster.

Politely ask them to remove the link to your site – or update the context, whichever makes sense.


Unfortunately, the referring website may be trying to sabotage you with some negative SEO tactics out of spite or just for fun.

For example, they may have linked to your “Guide To Adopting A Puppy” with the anchor text of FREE GET RICH QUICK SCHEME.

You should still reach out and politely ask them to remove the link, but if needed, you’ll want to update your disavow file in Search Console.

Disavowing the link won’t reduce your bounce rate, but it will tell Google not to take that site’s link into account when it comes to determining the quality and relevance of your site.
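The disavow file itself is plain text: one entry per line, either a full URL or a whole domain prefixed with `domain:`, with lines starting with `#` treated as comments. If you maintain the list over time, a small helper like this hypothetical Python function keeps it deduplicated and sorted; the domains and URLs in the example are placeholders. Google expects the file to be UTF-8 or 7-bit ASCII text, so write it out with UTF-8 encoding before uploading it.

```python
from typing import Iterable, List


def build_disavow_file(domains: Iterable[str], urls: Iterable[str], note: str = "") -> str:
    """Return disavow-file text: optional comment, then domain: lines, then URLs."""
    lines: List[str] = []
    if note:
        lines.append(f"# {note}")
    lines += [f"domain:{d}" for d in sorted(set(domains))]
    lines += sorted(set(urls))
    return "\n".join(lines) + "\n"


text = build_disavow_file(
    domains=["spammy-links.example"],
    urls=["https://other-site.example/puppy-scheme.html"],
    note="Removal requested; no response",
)
print(text, end="")
```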

7. Affiliate Landing Page Or Single-Page Site*

If you’re an affiliate, the whole point of your page may be to deliberately send people away from your website to the merchant’s site.

In these instances, you’re doing the job right if the page has a higher bounce rate.

A similar scenario would be if you have a single-page website, such as a landing page for your ebook or a simple portfolio site.

It’s common for sites like these to have a very high bounce rate since there’s nowhere else to go.

Remember that Google can usually tell when a website is doing a good job satisfying user intent even if the user’s query is answered super quickly (sites like WhatIsMyScreenResolution.com come to mind).

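If you’d like, you can adjust how bounces are counted so the metric better reflects your website’s goals. In Universal Analytics, for example, sending an interaction event when a visitor clicks your affiliate link means that session no longer counts as a bounce.

For single-page apps (SPAs), you can send virtual pageviews as visitors move between views, so different parts of the page are recorded as different pages and the bounce rate better reflects the user experience.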

*This is another example where GA4’s engagement rate may be a superior metric to UA’s bounce rate. If you’ve set it up so that a click on your affiliate link is considered a conversion event, this type of session would not count as a bounce and would instead count as “engaged.”

8. Low-Quality Or Underoptimized Content

Visitors may be bouncing from your website because your content is just plain bad.

Take a long, hard look at your page and have your most judgmental and honest colleague or friend review it.

(Ideally, this person either has a background in content marketing or copywriting, or they fall into your target audience).

One possibility is that your content is great, but you just haven’t optimized it for online reading – or for the audience that you’re targeting.

- Are you writing in simple sentences (think high school students versus PhDs)?

- Is it easily scannable with lots of header tags?

- Does it cleanly answer questions?

- Have you included images to break up the copy and make it easy on the eyes?
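To put a number on the first question, you can measure average sentence length and flag long sentences. This Python sketch splits sentences naively on `.`, `!`, and `?` (so abbreviations like “e.g.” will throw it off), and the 25-word cutoff is a hypothetical threshold to tune for your audience.

```python
import re
from typing import List, Tuple

LONG_SENTENCE_WORDS = 25  # hypothetical cutoff; tune it for your audience


def sentence_stats(text: str) -> Tuple[float, List[str]]:
    """Return average words per sentence and any sentences over the cutoff."""
    # Naive split: a sentence ends at ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    if not sentences:
        return 0.0, []
    word_counts = [len(s.split()) for s in sentences]
    average = sum(word_counts) / len(sentences)
    too_long = [s for s, n in zip(sentences, word_counts) if n > LONG_SENTENCE_WORDS]
    return average, too_long


print(sentence_stats("Short one. This is a second sentence!"))  # (3.5, [])
```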

Writing for the web is different from writing for offline publications.

Brush up your online copywriting skills to increase the time people spend reading your content.

The other possibility is that your content is poorly written overall or simply isn’t something your audience cares about.

Consider hiring a freelance copywriter (like me!) or content strategist who can help you transform your ideas into powerful content that converts.

9. Bad Or Obnoxious UX

Are you bombarding people with ads, pop-up surveys, and email subscribe buttons?

CTA-heavy features like these may be irresistible to the marketing and sales team, but using too many of them can make a visitor run for the hills.

Google’s Core Web Vitals are all about user experience – not only are they ranking factors, but they impact your site visitors’ happiness, too.

Is your site confusing to navigate?

Perhaps your visitors are looking to explore more, but your blog is missing a search box, or the menu items are difficult to click on a smartphone.

As online marketers, we know our websites in and out.

It’s easy to forget that what seems intuitive to us is anything but to our audience.

Make sure you’re avoiding common design mistakes, and have a web or UX designer review the site and let you know if anything pops out to them as problematic.

10. The Page Isn’t Mobile-Friendly

While SEOs know it’s important to have a mobile-friendly website, the practice isn’t always followed in the real world.

Google announced its switch to mobile-first indexing way back in 2017, but many websites today still wouldn’t be considered mobile-friendly.

Websites that haven’t been optimized for mobile don’t look good on mobile devices, and they often load slowly, too.

That’s a recipe for a high bounce rate.

Even if your website was implemented using responsive design principles, it’s still possible that the live page doesn’t read as mobile-friendly to the user.

Sometimes, when a page gets squeezed into a mobile format, it causes some of the key information to move below the fold.

Now, instead of seeing a headline that matches what they saw in search, mobile users only see your site’s navigation menu.

Concluding that the page doesn’t offer what they need, they bounce back to Google.

If you see a page with a high bounce rate and no glaring issues immediately jump out to you, test it on your mobile phone.

You can also check for mobile issues in Google Search Console and Lighthouse.
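One quick technical check: responsive pages normally include a viewport meta tag such as `<meta name="viewport" content="width=device-width, initial-scale=1">`. This Python sketch checks for it with the standard library’s `html.parser`. Passing the check is necessary but not sufficient, since a page can still push key content below the fold, so keep testing on a real phone.

```python
from html.parser import HTMLParser
from typing import Optional


class ViewportParser(HTMLParser):
    """Records the content of the first <meta name="viewport"> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.viewport: Optional[str] = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if (
            tag == "meta"
            and self.viewport is None
            and (attributes.get("name") or "").lower() == "viewport"
        ):
            self.viewport = attributes.get("content") or ""


def has_responsive_viewport(html: str) -> bool:
    """True if the page declares a viewport that tracks the device width."""
    parser = ViewportParser()
    parser.feed(html)
    parser.close()
    if parser.viewport is None:
        return False
    settings = parser.viewport.replace(" ", "").lower()
    return "width=device-width" in settings


print(has_responsive_viewport('<meta name="viewport" content="width=device-width, initial-scale=1">'))  # True
```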

11. Content Depth*

Google can give people quick answers through featured snippets and knowledge panels; you can give people deep, interesting, interconnected content that’s a step beyond that.

Make sure your content compels people to click to explore other pages on your site if it makes sense.

Provide interesting, relevant internal links, and give them a reason to stay.
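To audit how many internal links a page actually offers, you can parse its HTML and keep the links that point to other pages on the same host. In this Python sketch, “internal” is assumed to mean an exact host match (so `www.example.com` and `example.com` count as different sites), and same-page anchors, `mailto:` links, and similar are skipped.

```python
from html.parser import HTMLParser
from typing import List
from urllib.parse import urldefrag, urljoin, urlparse


class LinkParser(HTMLParser):
    """Collects the href of every <a> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html: str, page_url: str) -> List[str]:
    """Absolute URLs of links pointing to other pages on the same host."""
    parser = LinkParser()
    parser.feed(html)
    parser.close()
    host = urlparse(page_url).netloc
    this_page = urldefrag(page_url).url
    found = []
    for href in parser.hrefs:
        absolute = urldefrag(urljoin(page_url, href)).url
        target = urlparse(absolute)
        if target.scheme not in ("http", "https") or target.netloc != host:
            continue  # external site, mailto:, tel:, javascript:, etc.
        if absolute == this_page:
            continue  # same-page anchor such as "#faq"
        found.append(absolute)
    return found


html_doc = (
    '<a href="/puppy-training">Training tips</a>'
    '<a href="https://other-site.example/">Elsewhere</a>'
    '<a href="#faq">FAQ</a>'
    '<a href="mailto:hello@example.com">Email us</a>'
)
print(internal_links(html_doc, "https://example.com/blog/adopt-a-puppy"))
# ['https://example.com/puppy-training']
```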

And for the crowd that wants the quick answer, give them a TL;DR summary at the top.

*This is another example where GA4’s engagement rate may be a superior metric to UA’s bounce rate. If your content is deeply engrossing, people will keep reading after the 10-second mark, leading GA4 to count their session as “engaged” instead of a bounce.

12. Asking For Too Much

Don’t ask someone for their credit card number, Social Security number, grandmother’s pension, and children’s names right off the bat (or ever, in some of those examples) – your user doesn’t trust you yet.

People are ready to be suspicious, considering how many scam websites are out there.

Being presented with a big pop-up asking for info will cause a lot of people to bounce immediately.

Your job is to build trust with your visitors.

Do so, and you’ll both be happier. Your visitor will feel like they can trust you, and you’ll have a lower bounce rate.

And if it makes users happy, Google likes it.

Pro Tips For Reducing Your Bounce Rate

Regardless of the reason behind your high bounce rate, here’s a summary of best practices you can implement to bring it down.

Make Sure Your Content Lives Up To The Hype

Think of your title tag and meta description together as your website’s virtual billboard on Google.

Whatever you’re advertising in the SERPs, your content needs to match.

Don’t call your page an “ultimate guide” if it’s a short post with three tips.

Don’t claim to be the “best” vacuum if your user reviews show a three-star rating.

You get the idea.

Also, make your content readable:

- Break up your text with lots of white space.

- Add supporting images.

- Use short sentences.

- Spellcheck is your friend.

- Use a good, clean design.

- Don’t bombard visitors with too many ads.

Keep Critical Elements Above The Fold

Sometimes, your content matches what you advertise in your title tag and meta description. It’s just that your visitors can’t tell at first glance.

When people arrive on a website, they form an immediate first impression.

You want that first impression to validate whatever they thought they were going to see when they arrived.

A prominent H1 should match the title they read on Google.

If it’s an ecommerce site, a photo should match the product description they saw on Google.

Also, make sure these elements aren’t obscured by pop-ups or advertisements.
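To spot-check that match across many pages, you could compare the words in a page’s title tag with the words in its first H1. This hypothetical Python sketch reports the share of the title’s words that also appear in the H1; brand suffixes such as “| Example” will lower the score, so any pass/fail threshold you pick is a judgment call.

```python
import re
from html.parser import HTMLParser
from typing import Optional, Set


class HeadingParser(HTMLParser):
    """Captures the <title> text and the text of the first <h1>."""

    def __init__(self) -> None:
        super().__init__()
        self.title = ""
        self.h1 = ""
        self._current: Optional[str] = None
        self._h1_done = False

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._current = "title"
        elif tag == "h1" and not self._h1_done:
            self._current = "h1"

    def handle_endtag(self, tag):
        if tag == "h1" and self._current == "h1":
            self._h1_done = True  # ignore any later <h1> elements
        if tag in ("title", "h1"):
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current == "h1":
            self.h1 += data


def words(text: str) -> Set[str]:
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def title_words_in_h1(html: str) -> float:
    """Share of the title's distinct words that also appear in the first H1."""
    parser = HeadingParser()
    parser.feed(html)
    parser.close()
    title_words = words(parser.title)
    if not title_words:
        return 0.0
    return len(title_words & words(parser.h1)) / len(title_words)


page = "<title>Guide To Adopting A Puppy | Example</title><h1>Guide To Adopting A Puppy</h1>"
print(round(title_words_in_h1(page), 2))  # 0.83
```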

Speed Up Your Site

When it comes to SEO, faster is always better.

Keeping up with site speed is a task that should remain firmly stuck at the top of your SEO to-do list.

There will always be new ways to compress, optimize, and otherwise accelerate load time. For now, make sure to:

- Compress all images before uploading them to your site, and don’t serve them at a larger size than they’re displayed.

- Review any external or load-heavy scripts, stylesheets, and plugins. Remove the ones you don’t need, and for the ones you do need, see if there’s a faster option.

- Tackle the basics: Use a CDN, minify JavaScript and CSS, and set up browser caching.

- Check Lighthouse for more suggestions.
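For the image-size tip, the arithmetic is simple: scale the image down to its largest display width multiplied by the screen’s pixel density, and never scale it up. This Python sketch assumes a device pixel ratio of 2 so images stay sharp on high-density screens; the actual resizing and compression still happen in your image editor, build tool, or CMS.

```python
import math
from typing import Tuple


def target_dimensions(
    intrinsic_w: int, intrinsic_h: int, display_w: int, pixel_ratio: float = 2.0
) -> Tuple[int, int]:
    """Largest size worth serving for an image shown display_w CSS pixels wide."""
    max_w = math.ceil(display_w * pixel_ratio)
    if intrinsic_w <= max_w:
        return intrinsic_w, intrinsic_h  # already small enough; never upscale
    scale = max_w / intrinsic_w
    return max_w, round(intrinsic_h * scale)  # keep the aspect ratio


# A 4000x3000 camera photo displayed 800 CSS pixels wide:
print(target_dimensions(4000, 3000, 800))  # (1600, 1200)
```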

Minimize Non-Essential Elements

Don’t bombard your visitors with pop-up ads, in-line promotions, and other content they don’t care about.

Visual overwhelm can cause visitors to bounce.

What CTA is the most important for the page?

Highlight it compellingly.

Delegate everything else to your sidebar or footer.

Edit, edit, edit!

Help People Get Where They Want To Be Faster

Want to encourage people to browse more of your site?

Make it easy for them.

- Leverage on-site search with predictive search, helpful filters, and an optimized “no results found” page.

- Rework your navigation menu and A/B test how complex vs. simple drop-down menus affect your bounce rate.

- Include a Table of Contents in your long-form articles with anchor links taking people straight to the section they want to read.
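Anchor links only work if each heading has a matching `id`. Many CMSs generate these for you; if yours doesn’t, this Python sketch shows one way to build the list, using a hypothetical slug rule (lowercase, strip punctuation, hyphenate spaces, add a numeric suffix to duplicate headings). The headings on the page need to carry the same ids for the links to land.

```python
import html
import re
from typing import Dict, List


def slugify(heading: str) -> str:
    """Lowercase, drop punctuation, and join words with hyphens."""
    slug = re.sub(r"[^a-z0-9\s-]", "", heading.lower())
    return re.sub(r"[\s-]+", "-", slug).strip("-")


def table_of_contents(headings: List[str]) -> str:
    """Build an HTML list of anchor links; duplicate headings get -1, -2, ..."""
    seen: Dict[str, int] = {}
    items = []
    for heading in headings:
        base = slugify(heading) or "section"
        count = seen.get(base, 0)
        seen[base] = count + 1
        anchor = base if count == 0 else f"{base}-{count}"
        items.append(f'<li><a href="#{anchor}">{html.escape(heading)}</a></li>')
    return "<ul>\n" + "\n".join(items) + "\n</ul>"


print(table_of_contents(["What Is A Bounce Rate?", "Speed Up Your Site"]))
```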

Conclusion

Remember: Bounce rates are just one metric.

A high bounce rate doesn’t mean the end of the world.

Some well-designed, effective webpages have high bounce rates – and that’s okay.

Bounce rates can be a measure of how well your site is performing, but it’s good to keep them in context.

Hopefully, this article helped you diagnose what’s causing your high bounce rate, and you have a good idea of how to fix it.

Not sure where to start?

Make your site useful, user-focused, and fast – good sites attract good users.


Featured Image: Cagkan Sayin/Shutterstock
